Bridging the Gap: Integrating AI Models with Expert Knowledge for Enhanced Efficacy and Safety Assessment

Abstract

This article explores the critical integration of data-driven artificial intelligence (AI) models with human expert knowledge in pharmaceutical efficacy and safety (ES) assessment. Aimed at researchers and drug development professionals, it covers the foundational rationale for this synergy, detailing how AI technologies like machine learning and knowledge graphs are revolutionizing target validation and compound screening. The content provides a methodological framework for implementation, addresses common data integration and validation challenges with practical solutions, and presents comparative analyses demonstrating how hybrid approaches outperform purely computational or knowledge-driven methods. By synthesizing evidence from recent studies and applications, including drug repositioning successes, this article serves as a comprehensive guide for leveraging integrated strategies to accelerate drug discovery, improve predictive accuracy, and reduce late-stage attrition.

The Synergy of Silicon and Cortex: Why Integrated ES Assessment is the Future of Drug Discovery

The biopharmaceutical industry is navigating an era of unprecedented scientific opportunity alongside immense economic and operational challenges. Drug development remains a marathon of cost, complexity, and uncertainty, often consuming over 7 years and billions of dollars from discovery to market, with only a small fraction of candidates entering trials ever achieving approval [1]. The core dilemma is threefold: soaring costs are straining R&D budgets, astronomical failure rates are worsening, and a flood of data is paradoxically slowing progress rather than accelerating it. In 2024, the success rate for Phase 1 drugs plummeted to just 6.7%, a significant decline from 10% a decade ago, highlighting a critical productivity crisis [2]. This guide examines the evidence behind these challenges and objectively compares emerging solutions, particularly AI-driven platforms and integrated knowledge systems, that aim to redefine the modern R&D playbook.

Quantitative Analysis of the Development Landscape

The economic and operational pressures on drug development are quantifiable and severe. The following tables summarize key performance metrics and data-related challenges identified in recent industry analyses.

Table 1: Key Performance Metrics in Drug Development (2024-2025)

| Metric | Current Industry Average | Trend & Context |
|---|---|---|
| Phase 1 Success Rate | 6.7% (2024) | Down from 10% a decade ago [2]. |
| Average Development Timeline | Over 7 years | Varies by therapeutic area and modality [1]. |
| R&D Margin of Total Revenue | 29% (2024) | Projected to fall to 21% by 2030 [2]. |
| Internal Rate of Return (IRR) for R&D | 4.1% | Well below the cost of capital, indicating lower productivity [2]. |
| Data Points in a Single Phase 3 Trial | Nearly 6 million | Contributing to significant patient and site burden [3]. |

Table 2: The Data Overload Challenge in Clinical Trials

| Challenge | Impact on Trials | Supporting Data |
|---|---|---|
| Non-Essential Data Collection | Slows enrollment, increases burden | ~30% of data collected is "non-core" or "non-essential" [3]. |
| Patient Questionnaire Burden | Contributes to patient dropout | Some patients fill out three questionnaires per day [3]. |
| Protocol Complexity | Extends trial timelines | A 10% rise in complexity can increase study timelines by nearly one-third [1]. |

Experimental Protocols for Modern R&D Solutions

AI-Driven Molecule Design and Optimization

Objective: To leverage artificial intelligence (AI) and machine learning (ML) for the rapid design and optimization of novel therapeutic small molecules with improved efficacy and developmental properties.

Methodology:

- Platforms: This protocol utilizes leading AI-driven drug discovery platforms such as Exscientia's "Centaur Chemist" or Insilico Medicine's generative models [4].

- Data Integration: Models are trained on vast, structured chemical libraries encompassing known compounds, their synthetic pathways, and associated experimental data on potency, selectivity, and ADME (Absorption, Distribution, Metabolism, and Excretion) properties [4].

- Iterative Design Loop: The core of the protocol is an iterative "design-make-test-analyze" cycle:

  - AI Design: Generative AI algorithms propose novel molecular structures that satisfy a pre-defined Target Product Profile (TPP).

  - Robotic Synthesis: Selected candidate molecules are synthesized, often using automated, robotics-mediated laboratories (e.g., Exscientia's "AutomationStudio") [4].

  - High-Throughput Screening: Synthesized compounds are tested in relevant in vitro or ex vivo assays (e.g., using patient-derived tissue samples) to generate new biological activity data [4].

  - Model Refinement: New experimental data is fed back into the AI models to refine their predictive accuracy and guide the next design cycle [4] [5].

- Validation: The final candidate molecule is evaluated in standardized preclinical models to confirm its therapeutic potential before IND (Investigational New Drug) submission.
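
The design-make-test-analyze cycle above can be sketched in a few lines of Python. This is a toy illustration under stated assumptions, not any vendor's platform: each "molecule" is reduced to a single hypothetical descriptor in [0, 1], `assay` stands in for wet-lab screening, and `predict` is a deliberately simple nearest-neighbour surrogate in place of a trained model. All function names and constants are invented for the example.

```python
import random


def assay(x: float) -> float:
    """Stand-in for a wet-lab potency assay (hypothetical): peak activity at x = 0.7."""
    return 1.0 - (x - 0.7) ** 2


def predict(x: float, tested: dict[float, float]) -> float:
    """Toy surrogate model: reuse the potency of the nearest already-tested compound."""
    nearest = min(tested, key=lambda t: abs(t - x))
    return tested[nearest]


def run_dmta(cycles: int = 5, batch: int = 3, seed: int = 0) -> tuple[float, float]:
    rng = random.Random(seed)
    # Seed data: a few compounds with known assay results.
    tested = {x: assay(x) for x in (0.1, 0.5, 0.9)}
    for _ in range(cycles):
        # Design: propose candidates near the best compound found so far.
        best_x = max(tested, key=tested.get)
        candidates = [
            min(1.0, max(0.0, best_x + rng.uniform(-0.2, 0.2))) for _ in range(20)
        ]
        # Rank the proposals with the surrogate and keep a small batch.
        ranked = sorted(candidates, key=lambda c: predict(c, tested), reverse=True)
        # Make and test: "synthesize" the batch and record assay results.
        for x in ranked[:batch]:
            tested[x] = assay(x)
        # Analyze: the surrogate reads `tested`, so new data informs the next cycle.
    best_x = max(tested, key=tested.get)
    return best_x, tested[best_x]


best_x, best_potency = run_dmta()
print(f"best descriptor={best_x:.3f}, potency={best_potency:.3f}")
```

Because the tested set only grows, the best potency after the loop can never be worse than the best seed compound; how quickly it improves depends entirely on the quality of the surrogate, which is the part a real platform would replace with a trained model.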

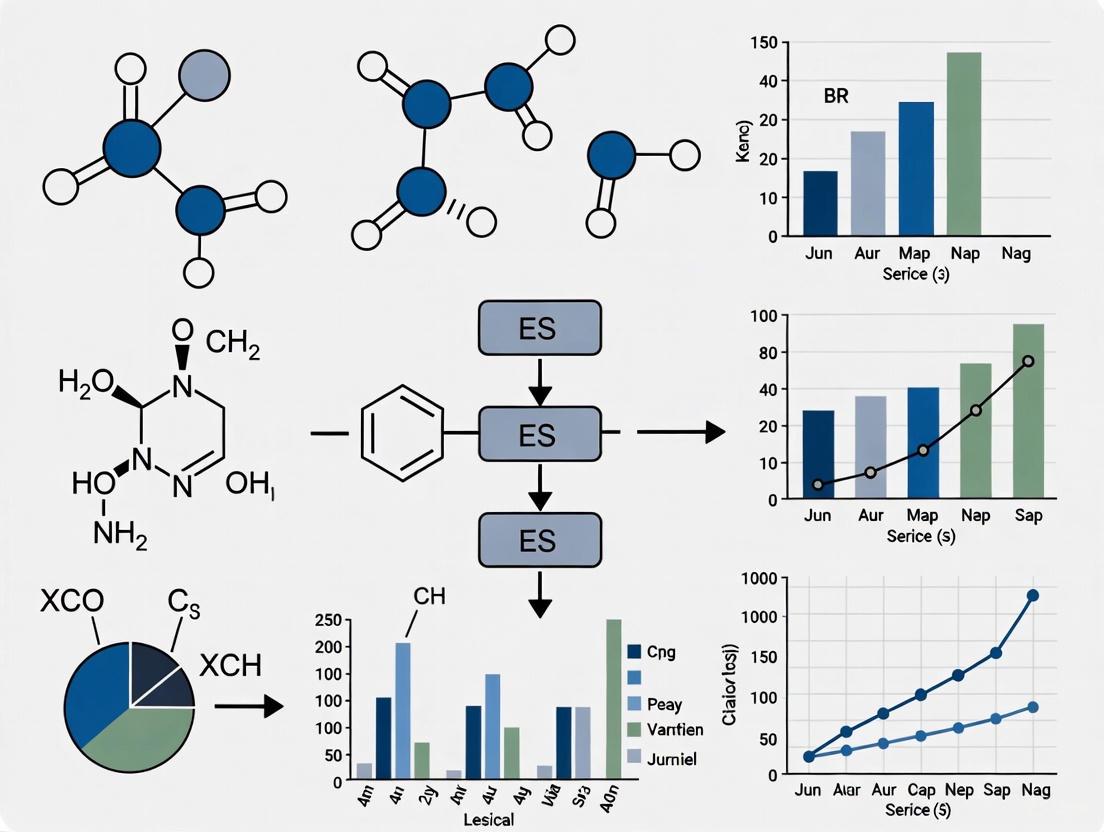

Key Workflow Diagram:

The "Lab in the Loop" for AI-First Research

Objective: To create a tightly integrated, iterative research cycle where AI models guide experimental design, and wet-lab data continuously refines the AI, dramatically accelerating hypothesis testing and discovery.

Methodology:

- Infrastructure: Establishment of a cloud-native computational infrastructure (e.g., on AWS) connected to automated wet-lab facilities [5].

- Data Foundation: Aggregation of high-quality, FAIR (Findable, Accessible, Interoperable, Reusable) data from decades of internal research, public databases, and real-time experimental outputs [5].

- AI Model Training: Development of specialized AI models, including Biological Foundation Models (BioFMs) like ESM-2 for protein structure or other models for binding affinity prediction (e.g., AffinityNet) [5].

- Iterative Workflow:

  - Dry Lab Prediction: AI models analyze the integrated data to generate specific, testable hypotheses (e.g., a novel drug target or an optimized compound).

  - Wet Lab Validation: Automated laboratory systems execute experiments to test these hypotheses, generating high-fidelity results.

  - Data Ingestion & Model Retraining: The new experimental results are automatically ingested into the data platform and used to retrain and improve the AI models, closing the loop [5].

- Agentic AI Assistance: Implementation of AI research agents (e.g., Genentech's gRED Research Agent on Amazon Bedrock) to automate literature reviews and data retrieval, saving thousands of hours of manual effort [5].

Integrating Expert Knowledge with Machine Learning in a Hybrid System

Objective: To develop a hybrid expert system that integrates formalized domain expert knowledge with data-driven machine learning models to improve diagnostic accuracy and decision-making, particularly in complex, data-scarce scenarios.

Methodology:

- Knowledge Acquisition: Expert knowledge is systematically gathered through interviews, clinical guidelines, and prescriptions from domain specialists (e.g., clinicians) [6]. This knowledge often includes diagnostic rules, constraints, and operational heuristics.

- Knowledge Encoding: The acquired knowledge is formally encoded into a logical, machine-readable knowledge base. This can be done using symbolic AI languages like Prolog or within a knowledge graph [6].

- Machine Learning Model Development: Concurrently, predictive ML models (e.g., Random Forest, Support Vector Machine) are trained on relevant datasets, which can include both public data and real-world patient data [6]. Feature selection (e.g., using decision trees, SHAP) is critical for model performance and interpretability.

- System Integration: A hybrid framework is built to integrate the statistical predictions of the ML models with the rule-based logic of the knowledge base. The system is designed to present a unified recommendation, such as a diagnosis or treatment plan [6].

- Evaluation and Refinement: The hybrid system is rigorously evaluated by medical professionals against pure data-driven models and human decision-making to confirm its effectiveness as a decision-support tool [6].
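
One way to picture the integration step is a thin layer in which encoded expert rules can override or confirm a statistical prediction. The sketch below is a minimal illustration under assumptions: `ml_risk` is a stand-in logistic score with made-up weights rather than a trained Random Forest or SVM, and the single rule, its threshold, and all field names are hypothetical and not clinical guidance.

```python
from dataclasses import dataclass
from math import exp
from typing import Callable


@dataclass
class Rule:
    """One encoded piece of expert knowledge (hypothetical example)."""
    name: str
    applies: Callable[[dict], bool]


def ml_risk(patient: dict) -> float:
    """Stand-in for a trained classifier: logistic score with made-up weights."""
    z = -4.0 + 0.04 * patient["age"] + 1.2 * patient["biomarker"]
    return 1.0 / (1.0 + exp(-z))


# Knowledge base: rules that force a high-risk label regardless of the ML score.
RULES = [Rule("renal_function_below_cutoff", lambda p: p["egfr"] < 30)]


def assess(patient: dict, threshold: float = 0.5) -> dict:
    """Combine rule-based logic with the ML probability into one recommendation."""
    fired = [rule.name for rule in RULES if rule.applies(patient)]
    probability = ml_risk(patient)
    if fired:
        # Expert rules take precedence and are reported as the reason.
        return {"risk": "high", "ml_probability": probability, "reason": fired}
    label = "high" if probability >= threshold else "low"
    return {"risk": label, "ml_probability": probability, "reason": ["ml_model"]}


low = assess({"age": 40, "biomarker": 0.5, "egfr": 90})
rule_hit = assess({"age": 40, "biomarker": 0.5, "egfr": 20})
ml_hit = assess({"age": 70, "biomarker": 2.0, "egfr": 90})
print(low["risk"], rule_hit["reason"], ml_hit["risk"])
```

Returning the reason alongside the label keeps the recommendation auditable: reviewers can see whether a decision came from the knowledge base or from the model. Letting rules take strict precedence is only one possible design; a weighted combination is an equally valid choice.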

The Scientist's Toolkit: Key Research Reagent Solutions

The following table details essential materials and digital tools used in the advanced experimental protocols described above.

Table 3: Key Research Reagent Solutions for Modern Drug Development

| Item / Solution | Function in R&D | Application Example |
|---|---|---|
| Generative AI Platforms (e.g., Exscientia, Insilico) | Accelerates novel molecular design by exploring vast chemical spaces. | Designing small-molecule drug candidates with optimized properties in silico [4]. |
| Biological Foundation Models (e.g., ESM-2) | Provides pre-trained deep learning models on biological sequences for protein structure/function prediction. | Genome-wide assessment of protein druggability and binding affinity prediction [5]. |
| Robotic Synthesis & Automation Labs | Automates the synthesis and purification of AI-designed molecules, increasing throughput. | Closing the "make-test" loop in the AI-driven design cycle (e.g., Exscientia's AutomationStudio) [4]. |
| Federated Learning Platforms (e.g., Apheris) | Enables collaborative AI model training across institutions without sharing confidential data. | The AI Structural Biology (AISB) consortium pooling proprietary data to improve predictive models [5]. |
| Real-World Evidence (RWE) Data | Provides insights from electronic health records, claims data, and registries on patient outcomes in routine care. | Informing trial recruitment strategies and generating post-market evidence for payers [1]. |
| Digital Twin Technology | Creates virtual replicas of patients or biological processes to simulate drug effects in silico. | Testing novel drug candidates during early development phases to predict efficacy and speed up trials [7]. |

Comparative Analysis of Leading AI-Driven Discovery Platforms

The landscape of AI in drug discovery is rapidly evolving, with several platforms successfully advancing candidates into clinical trials. The table below provides a comparative overview of selected leading platforms based on their public track records as of 2025.

Table 4: Comparison of Selected AI-Driven Drug Discovery Platforms (2025 Landscape)

| Platform / Company | Core AI Approach | Reported Efficiency Gains | Clinical-Stage Pipeline (Examples) |
|---|---|---|---|
| Exscientia | Generative AI for small-molecule design; "Centaur Chemist" approach. | ~70% faster design cycles; 10x fewer compounds synthesized in one program (136 compounds vs. industry norm of thousands) [4]. | CDK7 inhibitor (GTAEXS-617), LSD1 inhibitor (EXS-74539); Multiple candidates in Phase I/II [4]. |
| Insilico Medicine | Generative AI for target discovery and molecule design. | Progress from target discovery to Phase I trials in 18 months for idiopathic pulmonary fibrosis drug [4]. | IPF drug candidate in Phase I; Other undisclosed programs in development [4]. |
| Recursion | High-throughput phenotypic screening with AI-driven data analysis. | Leverages its massive "Recursion OS" and phenomics dataset for target identification. | Multiple internal and partnered programs in oncology, neurology, and rare diseases [4]. |
| BenevolentAI | Knowledge-graph-driven target discovery and prioritization. | Uses AI to mine scientific literature and data to generate novel target hypotheses. | Several programs in clinical and preclinical stages [4]. |
| Schrödinger | Physics-based computational platform for molecular modeling. | Combines first-principles physics with machine learning for precise molecule design. | Partners with multiple pharma companies and has co-developed clinical-stage assets [4]. |

Discussion: Integrating Knowledge Systems for a Resilient Future

The modern drug development dilemma is a systems problem that requires a systems solution. The evidence indicates that overcoming the triad of high costs, high failure rates, and data overload will not come from simply collecting more data, but from smarter data connections and the integration of diverse knowledge systems [8].

The promise of AI is tangible, with platforms demonstrating they can compress early-stage timelines from years to months and drastically reduce the number of compounds needed for screening [4]. However, the ultimate test—whether these AI-designed drugs achieve superior clinical success rates—remains ongoing, as most are still in early-phase trials [4]. The most effective applications of AI appear to be those that integrate it into a "lab in the loop," creating a self-improving cycle of computational prediction and experimental validation [5].

Furthermore, the principle of integrating different knowledge systems, a concept validated in fields like climate vulnerability assessment for fisheries, offers a powerful framework for drug development [9]. Relying solely on one form of knowledge—be it purely data-driven or purely expert-driven—risks introducing biases and blind spots. The future of resilient and productive R&D lies in hybrid systems that formally combine the pattern-recognition power of machine learning with the deep, contextual, and causal understanding of human experts [6]. This approach, combined with strategic data collection that prioritizes essential over extraneous information, is the most promising path to breaking the modern development dilemma [3].

The integration of artificial intelligence and machine learning (AI/ML) into scientific research, particularly efficacy and safety (ES) assessment in drug development, represents a paradigm shift in how we generate and interpret data. This comparison guide objectively analyzes the capabilities of AI/ML models against human expert knowledge, framing the discussion within the broader thesis of integrating scientific models with expert knowledge. As AI/ML adoption accelerates across research domains, understanding the respective strengths, limitations, and optimal collaboration frameworks between computational and human intelligence becomes critical for advancing scientific discovery. This guide provides a structured comparison based on current empirical evidence, detailing performance metrics, experimental methodologies, and practical implementation strategies to inform researchers, scientists, and drug development professionals in their approach to leveraging both technological and human assets.

Quantitative Performance Comparison

Direct comparisons between AI/ML systems and human experts reveal a nuanced performance landscape, where the superiority of either approach is often task-dependent. The tables below summarize key quantitative findings from rigorous evaluations across multiple domains.

Table 1: Performance Comparison of AI/ML vs. Human Experts in Healthcare Domains

| Domain / Task | AI/ML Performance Metric | Human Expert Performance Metric | Key Finding | Source |
|---|---|---|---|---|
| Medical Imaging (Disease Detection) | Pooled Sensitivity: 87.0%, Specificity: 92.5% | Pooled Sensitivity: 86.4%, Specificity: 90.5% | AI performance was on par with healthcare professionals. | [10] |
| Neurosurgical Outcome Prediction | Median Accuracy: 94.5%, Median AUROC: 0.83 | N/A | AI/ML predictions were significantly better than logistic regression and demonstrated superior performance compared to clinical experts. | [10] |
| Depression Therapeutic Outcomes | Overall Prediction Accuracy: 0.82 (95% CI [0.77, 0.87]) | N/A | Models using multiple data types achieved significantly higher accuracy (pooled proportion 0.93). | [10] |
| Suicidal Behavior Prediction | Risk Classification Accuracy: >90% | N/A | AI/ML demonstrated high predictive capability. | [10] |
| Chest Pain Diagnosis (ED) | High accuracy for AMI diagnosis and mortality prediction. | N/A | AI outperformed existing risk scores in all cases and physicians in 3 out of 4 cases. | [10] |
| Predictive Modeling (Meta-Analysis) | Substantially more accurate in 33-47% of studies. | Substantially more accurate in 6-16% of studies. | Automated decision-making was equal or superior to humans in 84-94% of 136 studies. | [10] |
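
Sensitivity and specificity, the metrics reported for medical imaging above, are both derived from the same confusion-matrix counts. The following sketch shows the calculation on a small made-up set of labels; the data and the function name are illustrative assumptions and are unrelated to the pooled figures in the table.

```python
def sensitivity_specificity(y_true: list[int], y_pred: list[int]) -> tuple[float, float]:
    """Return (sensitivity, specificity) for binary labels where 1 means disease present."""
    tp = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 1)
    fn = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 0)
    tn = sum(1 for t, p in zip(y_true, y_pred) if t == 0 and p == 0)
    fp = sum(1 for t, p in zip(y_true, y_pred) if t == 0 and p == 1)
    # Sensitivity: share of true cases detected. Specificity: share of non-cases cleared.
    return tp / (tp + fn), tn / (tn + fp)


# Made-up reference labels and reader calls for ten cases.
truth = [1, 1, 1, 1, 0, 0, 0, 0, 0, 0]
reader = [1, 1, 1, 0, 0, 0, 0, 0, 0, 1]

sens, spec = sensitivity_specificity(truth, reader)
print(f"sensitivity={sens:.2f}, specificity={spec:.2f}")
```

Applying the same function to the AI output and to each human reader on identical cases gives directly comparable numbers.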

Table 2: AI/ML Performance in Drug Discovery and Development

| Application Area | AI/ML Technology | Reported Outcome / Advantage | Source |
|---|---|---|---|
| Efficacy & Toxicity Prediction | Deep Learning (DL) | Demonstrated significant predictivity over traditional ML for ADMET data sets. | [11] |
| Virtual Screening | DeepVS (Docking System) | Showed exceptional performance screening 95,000 decoys against 40 receptors and 2,950 ligands. | [12] |
| Novel Compound Design | Deep Learning / Multi-objective Algorithms | Enables rapid, efficient design of novel drug candidates with specific properties like solubility and activity. | [12] [13] |
| Antibiotic Discovery | Machine Learning | Identified powerful antibiotics from a pool of over 100 million molecules. | [12] |
| Overall Impact | Various AI/ML | Accelerates discovery, shortens development timelines, and reduces costs. | [13] [11] |

Experimental Protocols for Human-AI Comparison

Rigorous comparison of human and machine performance requires carefully controlled methodologies to ensure fair and meaningful results. The following protocols are synthesized from best practices in the field.

Guiding Principles for Experimental Design

A robust framework for comparing human and algorithm performance is built on three core principles [14]:

- Account for Cognitive Differences: The experimental design must acknowledge that humans and algorithms process information fundamentally differently. For instance, human memory is inferential and context-dependent, while algorithms can process vast, multi-dimensional data spaces without fatigue [10] [14]. The task should be designed to avoid unfairly advantaging or disadvantaging either party based on these inherent cognitive disparities.

- Match Trials and Conditions: To ensure a direct comparison, the same stimuli, trial sequences, and environmental conditions used to evaluate the AI/ML model must be used in the human evaluation. This eliminates confounding variables and allows for a clear, one-to-one performance comparison [14].

- Adopt Best Practices from Psychology Research: Studies involving human participants should adhere to established ethical review protocols, recruit a sufficiently large and representative subject pool, control for potential performance strategies (e.g., memorization), and collect supplementary subjective data (e.g., on task difficulty) to enrich the analysis and improve replicability [14].
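
When the AI and the human experts are scored on the same matched trials, a paired test is a natural way to analyze the results. The sketch below uses an exact McNemar test, which is a choice made here for illustration and is not prescribed by [14]; the correctness vectors are made up, and the function name is hypothetical.

```python
from math import comb


def mcnemar_exact(ai_correct: list[bool], human_correct: list[bool]) -> tuple[int, int, float]:
    """Exact two-sided McNemar test on paired per-trial correctness.

    Returns (b, c, p_value) where b counts trials only the AI got right
    and c counts trials only the human got right.
    """
    b = sum(a and not h for a, h in zip(ai_correct, human_correct))
    c = sum(h and not a for a, h in zip(ai_correct, human_correct))
    n = b + c
    if n == 0:
        return b, c, 1.0  # no discordant trials, so no evidence of a difference
    # Under the null hypothesis, discordant trials split 50/50 (binomial with p = 0.5).
    tail = sum(comb(n, k) for k in range(min(b, c) + 1)) / 2 ** n
    return b, c, min(1.0, 2 * tail)


# Ten matched trials (made-up outcomes): True means the answer was correct.
ai = [True] * 8 + [False] * 2
human = [True] * 2 + [False] * 6 + [True] * 2

b, c, p_value = mcnemar_exact(ai, human)
print(f"AI-only correct={b}, human-only correct={c}, p={p_value:.3f}")
```

Only the discordant trials carry information in this test, which is why matching stimuli and conditions matters: without one-to-one pairing the counts `b` and `c` cannot be formed at all.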

A Standardized Workflow for Comparative Evaluation

The following diagram outlines a generalized experimental workflow for conducting a rigorous human-AI comparison study, incorporating the principles above.

Figure 1: Workflow for rigorous human-AI comparison studies.

Case Study: Predictability in a Real-Effort Task

A 2023 study empirically compared the predictability of AI versus human performance using a computerized "lunar lander" game [15]. The methodology was as follows:

- Task: Participants were asked to guess whether landings of a spaceship, performed by either AI or humans, would succeed.

- Stimuli: The same landing scenarios were used for both AI and human operators.

- Measurement: Researchers measured participants' ability to predict success/failure for both AI and human-led landings.

- Finding: The study concluded that humans were worse at predicting AI performance than at predicting human performance. Participants often underestimated the differences in AI's relative predictability and at times overestimated their own prediction skills, raising concerns about the human ability to effectively control AI in certain contexts [15].

The Integrated Workflow: Human-AI Collaboration

The most effective research models do not pit human against machine, but rather integrate them into a synergistic workflow. The "Human with Machine" paradigm leverages the unique strengths of both to achieve outcomes superior to either alone [10].

The Scientist's Toolkit: Key Reagent Solutions

In AI-driven research, the "reagents" are both digital and material. The following table details essential components for a modern research workflow integrating AI/ML.

Table 3: Essential Reagents for an AI-Integrated Research Workflow

| Category / Item | Function in Research Workflow | Example Applications | Source |
|---|---|---|---|
| Computational & Data Resources | | | |
| Public Chemical/Drug Databases (PubChem, ChemBank, DrugBank) | Provides vast, structured virtual chemical spaces for AI training and virtual screening. | Identifying hit and lead compounds; drug repurposing. | [11] |
| High-Quality, Curated Datasets | Serves as the foundational fuel for training robust and accurate AI/ML models. | Predicting drug efficacy/toxicity; patient outcome prediction. | [12] [16] |
| AI-Driven Analytical Platforms (e.g., E-VAI) | Uses ML algorithms to analyze market, competitor, and stakeholder data to inform strategic decisions. | Resource allocation; sales forecasting; market analysis. | [11] |
| Specialized AI/ML Models & Tools | | | |
| Reasoning Models (e.g., OpenAI o1, IBM Granite) | Provides enhanced logical decision-making for complex, multi-step problems by increasing "test-time" compute. | Solving highly technical math and coding problems; complex data interpretation. | [17] |
| Deep Learning Models (e.g., CNNs, RNNs) | Excels at pattern recognition in complex data structures, such as images, sequences, and dynamic systems. | Medical image analysis (CNNs); biological system modeling (RNNs). | [11] |
| AlphaFold Platform | Revolutionizes structural biology by predicting the 3D structures of proteins from their amino acid sequences. | Target validation; understanding protein function in disease. | [12] |
| Operational & Infrastructure Support | | | |
| AI Agents | Systems capable of planning and executing multi-step workflows autonomously or with minimal human intervention. | IT service-desk management; deep research in knowledge management; automated network operations. | [18] [16] |
| Hybrid Analytics (AI + Traditional) | Combines robust statistical methods with AI to handle complex, multivariate problems and unstructured data. | Network anomaly detection; root-cause analysis in complex systems. | [16] |

The "Human-in-the-Loop" Operational Model

Successful integration requires a structured model that defines the roles of humans and AI. High-performing organizations are nearly three times more likely to fundamentally redesign individual workflows to incorporate AI, which is a key success factor for capturing enterprise-level value [18]. The following diagram illustrates this collaborative framework.

Figure 2: A collaborative human-AI framework for research.

The comparison between AI/ML models and human expert knowledge reveals a landscape of complementarity rather than outright replacement. AI/ML demonstrates superior capabilities in processing vast chemical and biological spaces, identifying complex patterns in high-dimensional data, and executing rapid, tireless virtual screening and prediction tasks [12] [10] [11]. Human experts, conversely, provide irreplaceable strategic oversight, contextual understanding, ethical judgment, and creative problem-framing abilities [10] [14] [16]. The most successful organizations in ES assessment and drug development will be those that strategically redesign workflows to foster a synergistic "Human with Machine" partnership [18] [10]. This integration, which leverages the computational power of AI and the nuanced intelligence of human experts, is poised to accelerate scientific discovery and improve the efficacy of research outcomes.

The integration of diverse data sources and knowledge types is fundamentally enhancing the predictive accuracy of environmental science (ES) assessment models. Moving beyond purely data-driven algorithms, researchers are increasingly combining scientific models with experiential expert knowledge, leading to more robust, reliable, and actionable predictions. This guide objectively compares the performance of integrated models against traditional, non-integrated approaches, providing a detailed analysis of the methodologies, quantitative benefits, and essential tools driving this transformative shift in research.

In the realm of Environmental Science assessment, the limitations of isolated, single-discipline models are increasingly apparent. Complex challenges such as ecosystem management and climate forecasting require a synthesis of perspectives. Integration is emerging not as a buzzword, but as a core methodology that significantly boosts the predictive power of scientific models [19]. This process involves the deliberate combination of scientific data—from sensors, satellites, and structured databases—with the nuanced, context-rich expert knowledge of stakeholders, from field researchers to local practitioners [20] [21].

The central thesis of modern predictive analytics is that this fusion creates a more complete picture of the systems under study. While advanced algorithms can detect patterns in vast datasets, they often lack the contextual understanding to interpret these patterns correctly or to account for "black swan" events not present in the historical data. Integrated models bridge this gap, leading to tangible improvements in predictive accuracy, which is defined as the success of a model in forecasting future outcomes based on past and current data [22]. The following sections provide the experimental data and methodological details to substantiate this claim.

Comparative Performance Data: Integrated vs. Traditional Models

Empirical evidence from various sectors demonstrates that integrated predictive models consistently outperform their traditional counterparts. The table below summarizes key performance metrics from documented case studies.

Table 1: Comparative Performance of Integrated vs. Traditional Predictive Models

| Sector/Application | Model Type | Key Performance Metric | Result | Source/Context |
|---|---|---|---|---|
| Aviation (Predictive Maintenance) | Integrated (Real-time sensor data + engineering expertise) | Maintenance-related cancellations | About 99% reduction (from 5,600 in 2010 to 55 in 2018) [23] | Delta Air Lines case study [23] |
| Finance (Fraud Detection) | Integrated (Behavioral analytics + human oversight) | Fraud losses | Up to 67% reduction [24] | UK banking industry example [24] |
| Personality Research | Nuanced Assessment (Multi-faceted traits + contextual data) | Explanatory and Predictive Power | Significant improvement vs. broad-domain models [25] | Psychological assessment research [25] |
| Healthcare (Patient Risk) | Integrated (Patient data + clinical knowledge) | Patient Outcomes & Costs | Improved outcomes & reduced long-term costs [24] | NHS and private provider applications [24] |

The data indicates that integrated models excel particularly in complex, real-world environments. For instance, in aviation, a purely data-driven model might flag an anomaly based on sensor readings, but the integration of engineering expertise allows for a more accurate diagnosis and decision, leading to a dramatic reduction in operational disruptions [23]. Similarly, in fraud detection, behavioral analytics models are powerful, but their accuracy is significantly enhanced when their outputs are calibrated and validated by human experts who understand nuanced criminal behaviors [24].

Experimental Protocols for Knowledge Integration

The superior performance of integrated models is not automatic; it relies on structured methodologies that facilitate the synthesis of different knowledge types. The following are key experimental protocols cited in recent research.

The Transdisciplinary Scenario Building Method

This method is designed to integrate scientific and experiential knowledge to explore future environmental scenarios [20] [21].

- Objective: To co-create plausible future scenarios for environmental assessment by integrating quantitative scientific data with qualitative stakeholder knowledge.

- Procedure:

  - Participant Selection: Assemble a diverse group including scientists from at least three relevant disciplines (e.g., ecology, climatology, economics) and stakeholders with experiential knowledge (e.g., farmers, supply chain managers, conservationists) [20] [21].

  - Structured Elicitation: Use facilitated workshops to systematically elicit knowledge from all participants. Scientists provide data-driven projections (e.g., climate models, species population trends), while stakeholders contribute on-the-ground observations, practical constraints, and societal values.

  - Co-creation: Guide the group in combining these inputs to build a set of coherent, internally consistent narrative scenarios. This involves iterative discussion, challenge of assumptions, and negotiation of differing perspectives.

  - Validation: The scenarios are assessed for their credibility (scientific plausibility), salience (relevance to decision-makers), and legitimacy (perceived as fair and unbiased by all parties) [20].

- Outcome Measurement: The success of knowledge integration in this process can be empirically measured using a novel 25-item scale that evaluates both socio-emotional (e.g., trust, respect) and cognitive-communicative (e.g., shared language, knowledge synthesis) factors [20] [21].

The Nuanced Assessment Protocol

Originating in personality psychology, this protocol has direct relevance for ES research where expert judgments are often encoded in models [25].

- Objective: To improve the predictive and explanatory accuracy of assessments by moving beyond broad categories to measure specific, fine-grained "nuances."

- Procedure:

  - Deconstruction: Break down a broad expert judgment (e.g., "ecosystem resilience") into its constituent, measurable nuances (e.g., "soil microbial diversity," "canopy cover density," "seed dispersal rate").

  - Independent Measurement: Quantify each nuance using specific, validated tools, which could range from lab assays and sensor data to highly specific survey questions posed to domain experts.

  - Statistical Integration: Use statistical models (e.g., machine learning algorithms, structural equation models) to reassemble these nuanced measurements into a composite predictive score. The model learns the optimal weight for each nuance in predicting the target outcome.

- Outcome Measurement: Predictive accuracy is measured using standard metrics (e.g., R-squared, Mean Absolute Error for regression; Accuracy, F1-score for classification) and compared against models that use only broad, aggregate assessments [22] [25].
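
The statistical integration and outcome measurement steps can be illustrated with a small regression comparison. The sketch below is an assumption-laden toy: the two "nuances", the outcome, and the equal-weight broad score are synthetic, noise-free numbers invented for the example, and ordinary least squares stands in for whatever model a study would actually use.

```python
def fit_least_squares(rows: list[list[float]], y: list[float]) -> list[float]:
    """Ordinary least squares via the normal equations (adequate for a few features)."""
    X = [[1.0, *r] for r in rows]  # prepend an intercept column
    k = len(X[0])
    # Augmented matrix [X^T X | X^T y], solved by Gauss-Jordan elimination.
    A = [
        [sum(x[i] * x[j] for x in X) for j in range(k)]
        + [sum(x[i] * t for x, t in zip(X, y))]
        for i in range(k)
    ]
    for col in range(k):
        pivot = max(range(col, k), key=lambda r: abs(A[r][col]))
        A[col], A[pivot] = A[pivot], A[col]
        for r in range(k):
            if r != col:
                factor = A[r][col] / A[col][col]
                A[r] = [a - factor * b for a, b in zip(A[r], A[col])]
    return [A[i][k] / A[i][i] for i in range(k)]


def predict(weights: list[float], row: list[float]) -> float:
    return weights[0] + sum(w * v for w, v in zip(weights[1:], row))


def r_squared(y: list[float], y_hat: list[float]) -> float:
    mean_y = sum(y) / len(y)
    ss_res = sum((t - p) ** 2 for t, p in zip(y, y_hat))
    ss_tot = sum((t - mean_y) ** 2 for t in y)
    return 1.0 - ss_res / ss_tot


# Synthetic data: two nuances per site, and an outcome that weights them differently.
nuances = [[1, 2], [2, 1], [3, 4], [4, 3], [5, 6], [6, 5]]
outcome = [2 * a - b for a, b in nuances]
# Broad assessment: a single equal-weight aggregate of the same nuances.
broad = [[(a + b) / 2] for a, b in nuances]

w_nuanced = fit_least_squares(nuances, outcome)
w_broad = fit_least_squares(broad, outcome)
r2_nuanced = r_squared(outcome, [predict(w_nuanced, r) for r in nuances])
r2_broad = r_squared(outcome, [predict(w_broad, r) for r in broad])
print(f"R^2 nuanced={r2_nuanced:.3f}, R^2 broad={r2_broad:.3f}")
```

Because the synthetic outcome depends on the two nuances with different weights, the aggregate score discards information that the nuanced model can recover. Real data would include noise, so the comparison should be made on held-out data rather than on the training set as done here for brevity.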

Visualization of Integrated Model Workflows

The following diagram illustrates the logical flow and iterative nature of a transdisciplinary knowledge integration process, as used in scenario building and similar methods.

Diagram 1: Transdisciplinary Knowledge Integration Process

The Researcher's Toolkit for Integrated Modeling

Building and validating integrated models requires a specific suite of methodological tools and reagents. The following table details key components of a modern research toolkit for this purpose.

Table 2: Essential Research Reagent Solutions for Integrated Predictive Modeling

| Tool/Reagent | Type | Primary Function in Integration | Key Considerations |

|---|---|---|---|

| Transdisciplinary Methods (TdM) | Methodological Framework | Purposeful tools (e.g., scenario building, serious games) to systematically collect and organize knowledge from diverse actors [20]. | Suitability depends on research question complexity, participant dynamics, and project goals [20]. |

| Knowledge Integration Scale | Evaluation Metric | A 25-item scale to empirically measure a method's contribution to knowledge integration across socio-emotional and cognitive-communicative dimensions [20] [21]. | Provides standardized instrument for comparative method analysis, addressing a prior research gap [20]. |

| Ensemble Learning Algorithms | Computational Tool | Methods like bagging, boosting, and stacking that combine multiple models to maximize predictive accuracy and stability [22]. | Effective when individual models are accurate and diverse; balances bias-variance tradeoff [22]. |

| Explainable AI (XAI) Methods | Software Tool | Techniques like LIME and SHAP that reveal how a model makes decisions, illustrating the contribution of each input feature [22] [24]. | Critical for transparency, debugging, and building trust with stakeholders; often required for regulatory compliance [24]. |

| Hyperparameter Optimization | Computational Process | Automated search methods (e.g., grid search, random search), typically scored with cross-validation, to find the best hyperparameter values for a machine learning algorithm [22]. | Essential for maximizing model performance on a specific dataset; requires significant computational resources. |

The evidence from across sectors is clear: the integration of scientific models with expert knowledge is a powerful mechanism for enhancing predictive accuracy in ES assessment. This is not a marginal improvement but a fundamental shift, enabling models to be more robust, context-aware, and trustworthy. As the field advances, the conscious selection and evaluation of integration methods, supported by the toolkit and protocols outlined above, will be paramount for researchers aiming to generate predictions that are not only statistically sound but also genuinely useful for solving complex real-world problems.

The integration of scientific models with expert knowledge represents a frontier in efficacy and safety (ES) assessment research, particularly in drug development. This guide objectively compares three core methodologies (knowledge graphs, deep learning, and expert elicitation), focusing on their performance, protocols, and complementary strengths. As computational methods become more sophisticated, understanding how to leverage structured knowledge, artificial intelligence, and human judgement is crucial for robust scientific assessment. We present experimental data, detailed methodologies, and practical frameworks to guide researchers in selecting and combining these approaches for enhanced ES outcomes.

The table below summarizes the core characteristics, strengths, and limitations of the three key technologies in the context of scientific research and ES assessment.

Table 1: Core Technology Comparison for ES Assessment Research

| Technology | Primary Function | Key Strengths | Common Limitations |

|---|---|---|---|

| Knowledge Graphs (KGs) [26] [27] [28] | Structured representation of entities and relationships. | Superior interconnection & data integration; Enhanced explainability & visualization; Facilitates knowledge reasoning & completion. | Prone to incompleteness; Handling noise in data; Manual construction can be costly. |

| Deep Learning (DL) [29] [28] [30] | Automated pattern recognition and prediction from data. | High accuracy in specific tasks (e.g., recommendation, prediction); Handles complex, multimodal data (audio, video, text). | Operates as a "black box" with low explainability; Requires large volumes of data; Struggles with reasoning on new concepts. |

| Expert Elicitation [31] [32] [33] | Systematic formalization of human expert judgement. | Invaluable where data is limited (e.g., rare diseases); Quantifies uncertainty and clinical plausibility. | Lacks a formal framework; Results can be optimistic/pessimistic vs. real-world data; Potential for between-expert variation. |

Methodologies and Experimental Protocols

Knowledge Graph Construction and Completion

The construction of a knowledge graph is a multi-stage process that has been revolutionized by Large Language Models (LLMs) [34].

Figure 1: Workflow for LLM-Powered Knowledge Graph Construction

Detailed Protocol:

- Data Preprocessing: Clean and normalize input text, then chunk it into manageable segments [34].

- Ontology Definition: Define the schema, including entity types (e.g., `Person`, `Organization`) and relation types (e.g., `WORKS_AT`, `PRODUCES`) [34].

- LLM-Powered Triple Extraction: Use a generative LLM (e.g., GPT-4, Claude) with few-shot prompting to extract `(subject, predicate, object)` triples directly from the text chunks. This approach reframes extraction as a generation task, bypassing the need for multiple trained models [34].

- Graph Construction & Enrichment: Map the extracted triples into a graph database. A knowledge completion model, such as an improved Graph Attention Network (GAT), can then be applied to predict missing links and enhance the graph's completeness [28].

- Querying: The resulting knowledge graph can be queried using natural language or structured query languages like Cypher [34].
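The graph-construction and querying steps can be illustrated with a minimal in-memory sketch. It assumes the LLM extraction step has already returned typed triples. The example triples, the `Product` entity type, and the helper names `build_graph` and `objects_of` are hypothetical, and a plain dictionary stands in for a real graph database.

```python
from collections import defaultdict

# Hypothetical ontology: allowed entity and relation types.
ENTITY_TYPES = {"Person", "Organization", "Product"}
RELATION_TYPES = {"WORKS_AT", "PRODUCES"}

# Triples as an extraction step might return them (made-up examples),
# each as (subject, subject_type, predicate, object, object_type).
extracted = [
    ("Alice", "Person", "WORKS_AT", "Acme", "Organization"),
    ("Acme", "Organization", "PRODUCES", "Widget", "Product"),
    ("Alice", "Person", "LIKES", "Widget", "Product"),  # relation not in schema
]

def build_graph(triples):
    """Keep schema-conforming triples and index them by subject."""
    graph = defaultdict(list)
    rejected = []
    for subj, s_type, pred, obj, o_type in triples:
        if pred in RELATION_TYPES and {s_type, o_type} <= ENTITY_TYPES:
            graph[subj].append((pred, obj))
        else:
            rejected.append((subj, pred, obj))
    return graph, rejected

def objects_of(graph, subject, predicate):
    """Return all objects linked to `subject` by `predicate`."""
    return [obj for pred, obj in graph.get(subject, []) if pred == predicate]

graph, rejected = build_graph(extracted)
employers = objects_of(graph, "Alice", "WORKS_AT")
```

Validating triples against the ontology before loading is one simple way to keep generation errors out of the graph. Rejected triples can be logged for expert review rather than silently dropped.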

Deep Learning for Feature Fusion and Prediction

Deep learning models excel at integrating diverse data types. A common application is a hybrid model for personalized recommendations, as seen in music learning platforms, which can be adapted for scientific resource recommendation [30].

Figure 2: Deep Learning Model for Multimodal Data Fusion

Detailed Protocol:

- Feature Extraction:

- Audio/Visual Data: Processed using Convolutional Neural Networks (CNNs) to extract spatial and spectral features [30].

- Sequential Data: Processed using Long Short-Term Memory (LSTM) networks to model temporal dependencies [30].

- User/Behavioral Data: Encoded using multi-layer perceptrons (MLPs) [30].

- Knowledge Graph Fusion: Integrate structured knowledge from a domain-specific KG (e.g., drug mechanisms, disease pathways) into the feature representation. This provides rich semantic context [30].

- Feature Fusion: The extracted and KG-enhanced feature vectors are concatenated or combined in a fusion layer [30].

- Model Training & Prediction: The fused feature vector is fed into a final predictive layer (e.g., a softmax classifier for recommendation). A self-attention mechanism can be incorporated to help the model focus on the most relevant features [30].
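The fusion and prediction steps can be sketched without a deep learning framework. The snippet below is an assumption-laden toy: the per-modality feature vectors stand in for encoder outputs, the weights are untrained illustrative numbers, and fusion is done by plain concatenation (the self-attention step is omitted).

```python
import math

def softmax(logits):
    """Numerically stable softmax over a list of floats."""
    peak = max(logits)
    exps = [math.exp(z - peak) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]

def fuse(*feature_vectors):
    """Fusion by concatenation: join per-modality vectors end to end."""
    return [x for vec in feature_vectors for x in vec]

def linear(weights, bias, x):
    """One dense layer: `weights` holds one row per output class."""
    return [sum(w * xi for w, xi in zip(row, x)) + b
            for row, b in zip(weights, bias)]

# Hypothetical outputs of the upstream encoders (CNN, LSTM, MLP, KG embedding).
cnn_feat, lstm_feat, mlp_feat, kg_feat = [0.2, 0.7], [0.5], [0.1, 0.9], [1.0]

fused = fuse(cnn_feat, lstm_feat, mlp_feat, kg_feat)

# Illustrative, untrained weights for a two-class predictive layer.
W = [[0.5, -0.2, 0.1, 0.0, 0.3, 0.4],
     [-0.1, 0.3, 0.2, 0.6, -0.4, 0.1]]
b = [0.0, 0.1]

probs = softmax(linear(W, b, fused))
```

In a real model the encoders, the fusion layer, and the classifier would be trained jointly, and the width of the fused vector would be set by the encoder output sizes rather than chosen by hand.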

Structured Expert Elicitation

Expert elicitation is a formal process to quantify expert judgement and uncertainty, crucial for parameters where empirical data is scarce [32] [33].

Figure 3: Structured Expert Elicitation Workflow

Detailed Protocol (following the MRC framework) [32]:

- Planning: Define the target parameter (e.g., treatment effect, long-term survival) and plan the exercise.

- Expert Selection: Aim for 5-10 experts from diverse geographies and settings to mitigate bias [32].

- Training: Conduct online or in-person training sessions to calibrate experts and explain the process.

- Judgement Elicitation: Use specific methods to gather quantitative judgements. The roulette method is one common approach, where experts distribute "chips" across a grid representing possible values to express their beliefs about probabilities [32]. Other methods include the SHeffield ELicitation Framework (SHELF) [33].

- Mathematical Aggregation: Combine individual judgements into a single prior distribution. Linear opinion pooling is a documented method for this, which averages the probability distributions provided by each expert [32].
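The elicitation and aggregation steps reduce to simple arithmetic, sketched below. The number of experts, the bins, and the chip allocations are invented for illustration, and equal expert weights are an assumption; the function names are hypothetical.

```python
def chips_to_probabilities(chips):
    """Roulette method: an expert's chips per bin become a probability per bin."""
    total = sum(chips)
    if total == 0:
        raise ValueError("expert placed no chips")
    return [c / total for c in chips]

def linear_opinion_pool(distributions, weights=None):
    """Weighted average of expert distributions defined over the same bins."""
    n = len(distributions)
    if weights is None:
        weights = [1.0 / n] * n  # equal weights (an assumption)
    if abs(sum(weights) - 1.0) > 1e-9:
        raise ValueError("weights must sum to 1")
    n_bins = len(distributions[0])
    return [
        sum(w * dist[i] for w, dist in zip(weights, distributions))
        for i in range(n_bins)
    ]

# Hypothetical exercise: 3 experts, 4 bins of the target parameter, 10 chips each.
expert_chips = [
    [1, 4, 4, 1],
    [0, 2, 6, 2],
    [2, 5, 3, 0],
]
expert_dists = [chips_to_probabilities(c) for c in expert_chips]
pooled = linear_opinion_pool(expert_dists)
```

Because each expert's distribution sums to 1 and the weights sum to 1, the pooled result is itself a valid probability distribution that can serve as a prior. Unequal weights could be passed in if experts are scored on calibration questions.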

Experimental Data and Performance Benchmarks

Quantitative Performance in Applied Research

The following table compiles key performance metrics from experimental studies across different application domains, demonstrating the measurable impact of these technologies.

Table 2: Experimental Performance Metrics from Applied Research

| Technology | Application Domain | Key Performance Metric | Reported Result | Citation |

|---|---|---|---|---|

| LLM-Powered KG | General Information Extraction | Hallucination Reduction | 90% reduction vs. traditional RAG | [34] |

| KG + DL | Digital Cultural Heritage | Knowledge Extraction Accuracy | Effective automatic extraction from fragmented data | [28] |

| DL + KG | Online Music Learning | Recommendation Accuracy (under TOP-K) | Reached 0.90, exceeding collaborative filtering & content-based methods | [30] |

| Expert Elicitation | Renal Cell Carcinoma (RCC) | Question Response Rate | 95% from 9 participating experts | [32] |

| Expert Elicitation | Clinical Trials (General) | Methodological Standardization | No formal framework identified; 6 elicitation & 10 aggregation methods in use | [31] [33] |

The Researcher's Toolkit: Essential Reagents and Solutions

This table details key software tools and methodological frameworks essential for implementing the discussed technologies.

Table 3: Essential "Research Reagent Solutions" for Implementation

| Category | Tool / Framework Name | Primary Function | Key Features / Use Case | Citation |

|---|---|---|---|---|

| KG Construction | FalkorDB GraphRAG SDK | Graph-native RAG at scale | Production-grade SDK; multi-LLM support; proven hallucination reduction. | [34] |

| KG Construction | Cognee | Cognitive memory layer for AI | Hybrid graph+vector memory; 30+ data connectors; modular pipeline. | [34] |

| Expert Elicitation | SHeffield ELicitation Framework (SHELF) | Structured expert judgement protocol | Provides documents, templates, and software for formal elicitation. | [33] |

| Expert Elicitation | Structured Expert Elicitation Resources (STEER) | Repository for elicitation materials | Aligned with MRC protocol; provides R code and survey examples. | [32] |

| DL & KG Integration | Convolutional Neural Networks (CNN) | Feature extraction from grid-like data | Extracts spatial/spectral features from images, audio, etc. | [30] |

| DL & KG Integration | Long Short-Term Memory (LSTM) | Modeling temporal sequences | Captures time-dependent patterns in user behavior or text. | [30] |

Integrated Workflow for ES Assessment

The true power of these methodologies is realized through integration. The following diagram illustrates a potential workflow for an ES assessment study that synergistically combines knowledge graphs, deep learning, and expert elicitation.

Figure 4: Integrated ES Assessment Research Workflow

This integrated approach allows for the creation of a dynamic evidence ecosystem. The knowledge graph provides a structured, explainable backbone of current scientific knowledge. Deep learning models can identify complex, non-obvious patterns within the data stored in and enriched by the KG. Finally, expert elicitation provides a mechanism to formally incorporate domain expertise, particularly for quantifying uncertainty in areas with sparse data, thereby creating more robust and clinically plausible assessments.

A central challenge in modern drug development is that candidates that appear safe in preclinical models frequently prove unsafe in human patients. This translational gap, largely caused by biological differences between species, leads to high attrition rates in clinical trials and occasional post-marketing withdrawals due to severe adverse events (SAEs) [35]. Traditional toxicity prediction methods have primarily relied on chemical property analysis but often overlook these critical inter-species physiological differences [35] [36]. This comparative analysis examines the evolution of toxicity prediction approaches, from conventional chemical-based methods to a novel Genotype-Phenotype Difference (GPD) framework, evaluating their performance, methodologies, and practical applications in contemporary drug development pipelines. The integration of biologically-grounded machine learning models with domain-specific expert knowledge represents a paradigm shift in early safety assessment, offering the potential to significantly improve patient safety and reduce development costs [37].

Comparative Analysis of Toxicity Prediction Approaches

Traditional Chemical-Based Methods

Conventional toxicity prediction has predominantly utilized chemical structure-based features and drug-likeness rules (e.g., Lipinski, Veber) [35]. These methods employ machine learning algorithms trained on large chemical databases to identify structural motifs associated with toxic outcomes. While valuable for initial screening, these approaches fundamentally lack biological context, as they do not account for species-specific differences in drug target interactions [36]. This limitation is particularly evident for organ-specific toxicities like neurotoxicity and cardiotoxicity, where biological context is crucial for accurate prediction [35]. The primary strength of chemical-based methods lies in their applicability during early discovery phases when only compound structures are available, but their inability to model complex biological interactions results in limited predictive accuracy for human-specific adverse events [35].

The GPD-Based Framework: A Biologically-Grounded Approach

The Genotype-Phenotype Difference (GPD) framework represents a significant advancement in toxicity prediction by directly addressing biological differences between preclinical models and humans [35] [37]. This approach incorporates inter-species variations in how genetic perturbations manifest as phenotypic effects, focusing on three key biological contexts:

- Gene Essentiality: Differences in how critical specific genes are for cellular survival between model systems and humans [35].

- Tissue Expression Profiles: Variations in where and when drug target genes are expressed across tissues and species [35].

- Network Connectivity: Divergence in protein-protein interaction networks and biological pathway architectures [35].

By quantifying these differences for drug targets, the GPD framework provides a biologically grounded foundation for predicting human-specific toxicities that conventional chemical methods often miss [35].

Performance Comparison: Quantitative Metrics

The table below summarizes the performance differences between chemical-based and GPD-based toxicity prediction approaches, based on validation using 434 risky and 790 approved drugs [35]:

Table 1: Performance comparison between chemical-based and GPD-based toxicity prediction models

| Prediction Model | AUROC | AUPRC | Key Strengths | Major Limitations |

|---|---|---|---|---|

| Chemical-Based Model | 0.50 | 0.35 | Rapid screening; no biological data required; established workflows | Poor human translatability; misses biological mechanisms; limited for neuro/cardiotoxicity |

| GPD-Based Model | 0.75 | 0.63 | Captures species differences; explains biological mechanisms; superior for neuro/cardiotoxicity | Requires multiple data types; complex implementation; dependent on genomic data quality |

The GPD-based model shows particularly improved predictive accuracy for toxicity endpoints that frequently lead to clinical failures, such as neurotoxicity and cardiovascular toxicity, which models relying on chemical properties alone tend to overlook [35]. In chronological validation experiments, the GPD framework correctly predicted 95% of drugs later withdrawn from the market when trained only on data available prior to 1991, demonstrating its practical utility in real-world drug development settings [37].

Experimental Protocols and Methodologies

GPD Framework Implementation Workflow

The experimental protocol for implementing the GPD-based toxicity prediction framework involves a systematic, multi-stage process:

Figure 1: GPD framework implementation workflow showing the multi-stage process from data collection to model deployment.

Data Curation and Preprocessing Protocols

Drug Dataset Compilation: The training dataset incorporated drugs from two primary categories: (1) risky drugs (n=434), comprising those that failed in clinical trials due to safety issues (sourced from Gayvert et al. and the ClinTox database) and those with post-marketing safety issues (withdrawn drugs or those carrying boxed warnings); and (2) approved drugs (n=790) with no reported SAEs, drawn from the ChEMBL database (version 32) and excluding anticancer drugs due to their distinct toxicity tolerance profiles [35]. To minimize chemical structure bias, drugs with near-duplicate structures (Tanimoto similarity coefficient ≥0.85) were removed using STITCH IDs and chemical fingerprints generated via RDKit [35].
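The structural de-duplication step can be sketched as follows. The Tanimoto coefficient itself is standard (shared on-bits divided by the union of on-bits). Everything else here is an assumption for illustration: fingerprints are represented as plain Python sets of on-bit indices rather than RDKit objects, the drug names are made up, and the greedy keep-first ordering is one possible strategy that the source does not specify.

```python
def tanimoto(fp_a, fp_b):
    """Tanimoto coefficient of two fingerprints given as sets of on-bit indices."""
    if not fp_a and not fp_b:
        return 1.0
    shared = len(fp_a & fp_b)
    return shared / (len(fp_a) + len(fp_b) - shared)

def drop_near_duplicates(fingerprints, threshold=0.85):
    """Greedy filter: keep a drug only if it is below the threshold vs. all kept drugs."""
    kept = []
    for name, fp in fingerprints.items():
        if all(tanimoto(fp, fingerprints[k]) < threshold for k in kept):
            kept.append(name)
    return kept

# Made-up fingerprints; real ones would come from a cheminformatics toolkit.
fingerprints = {
    "drug_a": set(range(0, 20)),
    "drug_b": set(range(0, 19)),   # 19 shared bits, union of 20 -> 0.95 vs drug_a
    "drug_c": set(range(10, 30)),  # 10 shared bits, union of 30 -> 0.33 vs drug_a
}
kept = drop_near_duplicates(fingerprints)
```

Here `drug_b` is dropped as a near-duplicate of `drug_a`, while `drug_c` is retained. With a greedy filter the result depends on iteration order, so a fixed, documented ordering helps reproducibility.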

GPD Feature Calculation: For each drug target, three core GPD features were quantified: (1) Essentiality GPD: Difference in gene essentiality scores between human and model organism cell lines; (2) Expression GPD: Discrepancy in tissue-specific expression patterns across key organs; (3) Network GPD: Differential connectivity metrics within protein-protein interaction networks [35]. These features were integrated with traditional chemical descriptors to create a comprehensive feature set for model training.
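As a rough sketch of what such difference features might look like in code, the snippet below computes one value per biological context for a single target. The exact formulas used in [35] are not given here, so the signed difference for essentiality and network degree, the mean absolute difference for expression, the `TargetProfile` fields, and all numeric values are assumptions for illustration only.

```python
from dataclasses import dataclass

@dataclass
class TargetProfile:
    """Per-species measurements for one drug target (all fields hypothetical)."""
    essentiality: float       # e.g., a gene-essentiality score
    tissue_expression: dict   # tissue name -> expression level
    network_degree: int       # number of interaction partners

def gpd_features(human, model):
    """Return one difference-style feature per biological context."""
    tissues = human.tissue_expression.keys() & model.tissue_expression.keys()
    if not tissues:
        raise ValueError("no tissues measured in both species")
    expression_gap = sum(
        abs(human.tissue_expression[t] - model.tissue_expression[t])
        for t in tissues
    ) / len(tissues)
    return {
        "essentiality_gpd": human.essentiality - model.essentiality,
        "expression_gpd": expression_gap,
        "network_gpd": human.network_degree - model.network_degree,
    }

# Invented values for a single target in human and in a model organism.
human = TargetProfile(0.9, {"heart": 8.0, "brain": 2.0}, 12)
mouse = TargetProfile(0.2, {"heart": 1.0, "brain": 2.5}, 7)
features = gpd_features(human, mouse)
```

A drug with several targets would need these per-target values aggregated (for example, by taking the maximum or mean) before being joined with chemical descriptors; that aggregation choice is likewise left open here.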

Model Training and Evaluation Framework

The machine learning implementation used a Random Forest algorithm, chosen for its robustness with heterogeneous feature types and its ability to handle complex interactions [35]. The model was trained using stratified k-fold cross-validation, which preserves the ratio of risky to approved drugs in each fold and so accounts for class imbalance. Performance was evaluated using area under the receiver operating characteristic curve (AUROC) and area under the precision-recall curve (AUPRC), with emphasis on AUPRC given the imbalanced nature of toxicity datasets [35]. Benchmarking against state-of-the-art chemical structure-based models included chronological validation to assess real-world predictive capability for future drug withdrawals [35].
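To make the two evaluation metrics concrete, the following dependency-free sketch computes AUROC as a pairwise ranking probability and AUPRC as average precision. The labels and scores are made-up toy values, and the average-precision helper assumes no tied scores; in practice a library implementation would normally be used.

```python
def auroc(labels, scores):
    """Probability that a random positive outranks a random negative (ties count 0.5)."""
    pos = [s for y, s in zip(labels, scores) if y == 1]
    neg = [s for y, s in zip(labels, scores) if y == 0]
    wins = sum(1.0 if p > n else 0.5 if p == n else 0.0 for p in pos for n in neg)
    return wins / (len(pos) * len(neg))

def average_precision(labels, scores):
    """Step-wise area under the precision-recall curve (assumes no tied scores)."""
    ranked = sorted(zip(scores, labels), reverse=True)
    hits, total = 0, 0.0
    for rank, (_, y) in enumerate(ranked, start=1):
        if y == 1:
            hits += 1
            total += hits / rank  # precision at this positive's rank
    return total / hits

# Toy predictions: 1 = risky drug, 0 = approved drug (values are made up).
labels = [1, 0, 1, 0]
scores = [0.9, 0.8, 0.7, 0.1]

roc = auroc(labels, scores)             # 0.75
pr = average_precision(labels, scores)  # 5/6, about 0.83
```

For reading reported results, it helps to know the chance baselines: a random classifier scores an AUROC of 0.5 and an AUPRC close to the positive-class prevalence, which for 434 risky drugs out of 1,224 is about 0.35.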

Successful implementation of advanced toxicity prediction frameworks requires specialized computational resources and biological datasets. The following table catalogs key research reagents and their applications in GPD-based toxicity assessment:

Table 2: Essential research reagents and resources for GPD-based toxicity prediction

| Resource Category | Specific Examples | Application in Toxicity Assessment | Access Considerations |

|---|---|---|---|

| Drug Toxicity Databases | ChEMBL [36], ClinTox [36], DILIrank [36], SIDER [36] | Provides curated drug toxicity labels for model training | Publicly available, requires standardized data formatting |

| Biological Databases | Tox21 [36], ToxCast [36], GTEx, DepMap | Source for gene essentiality, expression, and network data | Some restricted access, ethical approvals needed |

| Cheminformatics Tools | RDKit, Extended-Connectivity Fingerprints (ECFP4) [35] | Chemical structure standardization and descriptor calculation | Open-source tools available |

| Machine Learning Frameworks | Scikit-learn, XGBoost, Graph Neural Networks [36] | Model implementation, training, and validation | Open-source with specialized hardware requirements |

| Model Interpretation Tools | SHAP, attention-based visualizations [36] | Explainable AI for model decision transparency | Integrated within major ML frameworks |

Biological Mechanisms and Pathway Visualization

The GPD framework operates on the fundamental principle that evolutionary divergence between species creates differences in genotype-phenotype relationships, which manifest as differential toxicological responses to drug interventions. The following diagram illustrates the key biological contexts and pathways through which these differences arise:

Figure 2: GPD mechanisms and toxicity pathways across biological contexts.

The diagram illustrates three primary mechanisms through which genotype-phenotype differences manifest as differential toxicity: (1) Gene Essentiality Context: A drug target essential in humans but redundant in model organisms may show no toxicity in preclinical studies but cause cellular toxicity in humans; (2) Tissue Expression Context: Differential expression patterns of drug targets in specific tissues (e.g., heart, brain) between species can result in organ-specific toxicity appearing only in humans; (3) Network Connectivity Context: Divergent protein-protein interaction networks can cause identical drug-target interactions to propagate through different biological pathways, leading to unexpected adverse outcomes in humans [35].

The evolution from chemical-based to biology-informed toxicity prediction represents a significant advancement in drug safety assessment. The GPD framework demonstrates that incorporating species-specific biological differences substantially improves prediction accuracy for human-relevant toxicities, particularly for challenging endpoints like neurotoxicity and cardiotoxicity [35]. This approach bridges the critical translational gap between preclinical models and clinical outcomes by addressing the fundamental biological reasons for species differences in drug responses [37].

For the research community, successful implementation of these advanced prediction frameworks requires thoughtful integration of computational approaches with domain expertise. The GPD model's performance, achieving an AUROC of 0.75 compared to 0.50 for conventional methods, underscores the value of biologically-grounded features [35]. Furthermore, the framework's practical utility is demonstrated by its correct prediction of 95% of later-withdrawn drugs in chronological validation [37]. As drug-target annotations and functional genomics datasets continue to expand, GPD-based and similar biology-informed frameworks are poised to play an increasingly pivotal role in building more effective, human-relevant toxicity assessment models, ultimately contributing to safer therapeutic development and improved patient outcomes [35].

From Theory to Therapy: A Methodological Framework for Integrating Models and Expertise

In modern ES assessment and drug development research, the ability to integrate and analyze complex, multi-scale data is paramount. The exponential growth of scientific data, from high-throughput sequencing to real-time environmental monitoring, necessitates a new paradigm for data management. Unified data ecosystems provide the architectural foundation for seamlessly integrating disparate scientific models and leveraging expert knowledge. These ecosystems are not merely IT infrastructure; they are strategic platforms that enable cross-domain knowledge synthesis, accelerate the pace of discovery, and ensure regulatory compliance through robust governance.

The shift toward these integrated architectures represents a fundamental evolution in scientific data management. Where traditional approaches often resulted in isolated data silos and fragmented analytical capabilities, unified ecosystems establish a coherent framework for managing the entire data lifecycle. For research organizations, this translates to enhanced collaboration capabilities, improved data reproducibility, and more effective utilization of artificial intelligence and machine learning technologies. The 2025 landscape is defined by architectures specifically designed to handle the velocity, variety, and volume of scientific data while maintaining the integrity and context essential for rigorous research [38] [39].

Comparative Analysis of Data Integration Architectures

Selecting the appropriate data architecture is foundational to building an effective unified data ecosystem. The table below provides a comparative analysis of prominent architectural patterns, evaluating their suitability for scientific research applications, particularly in ES assessment and pharmaceutical development.

Table 1: Comparison of Data Integration Architectures for Scientific Research

| Architecture | Core Principles | Advantages for Research | Implementation Challenges | Use Case Alignment |

|---|---|---|---|---|

| Data Mesh | Domain-oriented decentralization; Data-as-a-Product | Empowers domain experts (e.g., toxicologists); improves data accessibility and ownership | Complex governance coordination; cultural shift toward data product thinking | Large, multidisciplinary research organizations with specialized domains |

| Data Fabric | Unified metadata layer; Automated orchestration | Provides consistent semantics across disparate data sources; enhances data discovery | Significant upfront investment in metadata management | Integrating legacy systems and diverse data modalities (e.g., -omics, clinical) |

| Medallion Architecture | Layered data refinement (Bronze→Silver→Gold) | Ensures progressive data quality improvement; clear audit trail for regulatory compliance | Can create latency for real-time analysis needs | Foundational structure for research data lakes and AI/ML readiness |

| Business-Driven Data Vault | Hub-and-spoke modeling around business concepts | Adaptable to changing research requirements; preserves historical data context | Requires specialized modeling expertise; complex query patterns | Longitudinal studies and research programs with evolving protocols |

| Event-Driven Architecture (EDA) | Real-time data flow through events/streams | Enables immediate response to experimental results or monitoring data | New operational paradigm for batch-oriented research teams | Real-time laboratory instrumentation and environmental sensing networks |

Each architectural pattern offers distinct advantages for specific research contexts. The Medallion Architecture, popularized by Databricks, provides a clear path from raw, ingested data to refined, business-ready datasets through its Bronze (raw), Silver (refined), and Gold (business-ready) layers. When combined with model-driven design tools, this architecture ensures that structure and semantics are maintained throughout the refinement process, which is crucial for scientific reproducibility [39].
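The Bronze-to-Gold refinement can be illustrated with a small in-memory sketch. The assay records, field names, and validation rules below are hypothetical, and plain Python lists stand in for the tables a lakehouse platform would manage.

```python
from statistics import mean

# Bronze: raw records as ingested (hypothetical assay readings).
bronze = [
    {"compound": " cmpd-1 ", "ic50_nm": "12.5"},
    {"compound": "CMPD-1", "ic50_nm": "13.5"},
    {"compound": "cmpd-2", "ic50_nm": "n/a"},  # unparseable value
    {"compound": "cmpd-3", "ic50_nm": "-4"},   # physically implausible
]

def to_silver(records):
    """Silver: normalize identifiers, parse numbers, and quarantine bad rows."""
    clean, quarantined = [], []
    for rec in records:
        try:
            value = float(rec["ic50_nm"])
        except ValueError:
            quarantined.append(rec)
            continue
        if value <= 0:
            quarantined.append(rec)
            continue
        clean.append({"compound": rec["compound"].strip().upper(), "ic50_nm": value})
    return clean, quarantined

def to_gold(silver):
    """Gold: one analysis-ready row per compound (mean IC50 across replicates)."""
    grouped = {}
    for rec in silver:
        grouped.setdefault(rec["compound"], []).append(rec["ic50_nm"])
    return {compound: mean(values) for compound, values in grouped.items()}

silver, quarantined = to_silver(bronze)
gold = to_gold(silver)
```

Keeping the Bronze records untouched and routing failures to a quarantine list, rather than deleting them, is what provides the audit trail: every Gold value can be traced back to the raw rows that produced it.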

Data Mesh has gained significant traction in 2025 as organizations seek to scale their data operations while maintaining domain-specific expertise. This approach promotes domain ownership and decentralized data management, allowing research teams in areas like genomics, toxicology, or clinical research to maintain control over their data products while adhering to federated governance standards. The core value proposition for scientific organizations is balancing specialist knowledge with enterprise-wide interoperability [39].

The AI Revolution in Scientific Data Processing

Advanced AI Capabilities for Scientific Discovery

Artificial intelligence has transitioned from an auxiliary tool to a core component of the scientific data ecosystem. Recent advancements demonstrate AI's growing capability to not just analyze data but to generate novel scientific insights and methodologies.

Table 2: AI Performance in Scientific Prediction and Discovery Tasks

| AI System / Capability | Scientific Domain | Performance Metric | Comparative Benchmark | Implications for Research |

|---|---|---|---|---|

| BrainGPT | Neuroscience | ~81% accuracy in predicting experimental outcomes | Surpassed human experts (63.4% accuracy) on BrainBench benchmark | Potential for hypothesis generation and experimental design optimization |

| Google Empirical Software System | Genomics (scRNA-seq) | 14% overall improvement in batch integration | Outperformed best published method (ComBat) on OpenProblems benchmark | Automates development of novel analysis methods beyond human conception |

| Scientific LLMs | Multi-disciplinary | State-of-the-art in specialized benchmarks (e.g., ScienceQA, MMLU-Pro) | Excel in process- and discovery-oriented evaluations | Foundation for cross-domain knowledge integration and literature synthesis |

| AI Agents | Drug Discovery | Execute end-to-end scientific workflows autonomously | Coordinate multiple specialized agents for complex tasks | Accelerates target identification and validation cycles |

Large Language Models specifically trained on scientific literature (Sci-LLMs) have demonstrated remarkable capabilities in predicting experimental outcomes. In a landmark 2025 study published in Nature Human Behaviour, LLMs significantly surpassed human experts in predicting neuroscience results. The study created "BrainBench," a forward-looking benchmark where both AI and human experts selected between original and altered scientific abstracts. LLMs achieved an average accuracy of 81.4% compared to 63.4% for human domain experts [40]. This capability stems from the models' ability to integrate information across the entire abstract, including methodological details, rather than relying solely on results sections [40].

The architecture of these AI systems enables their scientific prowess: Sci-LLMs process heterogeneous scientific data through specialized tokenization and reasoning frameworks.

Autonomous AI Systems for Empirical Software Generation

Beyond predictive capabilities, AI systems now demonstrate proficiency in generating novel scientific software. Google Research recently developed an AI system that creates "empirical software" designed to maximize predefined quality scores for scientific tasks. This system uses tree search strategies to generate, implement, and validate thousands of code variants, identifying high-performance solutions that often exceed human-designed approaches [41].

In genomics, this system discovered 40 novel methods for single-cell RNA sequencing data integration, with the highest-scoring solution achieving a 14% overall improvement over the best published method (ComBat) [41]. Similarly, in public health, the system generated 14 models that outperformed the official COVID-19 Forecast Hub Ensemble for predicting U.S. COVID-19 hospitalizations [41]. These demonstrations highlight a fundamental shift toward AI-driven methodological innovation in scientific computing.

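The workflow of these autonomous discovery systems follows a structured iterative process: the system generates candidate code variants, implements and scores each against the predefined quality metric, and uses tree search to decide which candidates to refine further [41].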

Implementation Framework for Scientific Research Organizations

Foundational Components and Research Reagents

Building a unified data ecosystem requires both technical infrastructure and specialized analytical components. The table below details essential "research reagents" – core solutions and platforms that form the building blocks of a modern scientific data architecture.

Table 3: Essential Research Reagent Solutions for Unified Data Ecosystems

| Component Category | Specific Solutions / Platforms | Core Function | Research Application Examples |

|---|---|---|---|

| Streaming Data Platforms | Apache Kafka, Amazon Kinesis | Real-time data ingestion and processing | Continuous environmental monitoring; high-frequency laboratory instrumentation |

| AI/ML Modeling Platforms | ER/Studio with ERbert AI Assistant | Convert natural language requirements into structured data models | Protocol standardization; metadata management for regulatory compliance |

| Scientific LLMs | BrainGPT, SciGLM, NatureLM | Domain-specific prediction and hypothesis generation | Literature-based discovery; experimental outcome prediction; research gap identification |

| Data Pipeline Tools | Modern ELT platforms (26.8% CAGR) | Cloud-native data transformation and movement | Multi-omics data integration; clinical trial data management |

| Privacy-Enhancing Technologies | Differential privacy, Homomorphic encryption | Secure analysis of sensitive data | Protected health information (PHI) analysis; collaborative research on proprietary data |

| Metadata Management | Data catalog solutions | Automated data discovery and lineage tracking | Reproducibility frameworks; data provenance for publication |

The market for these components shows explosive growth, with the streaming analytics market projected to reach $128.4 billion by 2030 (28.3% CAGR) and data pipeline tools growing at 26.8% CAGR versus traditional ETL's 17.1% [42]. This growth reflects the critical importance of these technologies in managing the increasing volume and velocity of scientific data.

Implementation Methodology and Experimental Protocol

Successful implementation of a unified data ecosystem follows a structured methodology that aligns technical capabilities with research objectives. The protocol below outlines a proven approach for scientific organizations:

Phase 1: Architecture Definition and Governance Framework

- Conduct a comprehensive audit of existing data assets, analytical workflows, and integration points

- Define domain boundaries and data product ownership following Data Mesh principles

- Establish federated computational governance with representatives from each research domain

- Implement business-driven Data Vault modeling to create a scalable integration layer

Phase 2: Core Platform Implementation and Data Product Development

- Deploy event-driven architecture using platforms like Apache Kafka (used by 40%+ of Fortune 500 companies) [42]

- Implement Medallion architecture layers with clear quality gates between Bronze, Silver, and Gold zones

- Develop initial data products focusing on high-impact research assets (e.g., compound libraries, clinical trial data, environmental exposure data)

- Establish automated data quality checks integrated into CI/CD pipelines
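A quality gate between Medallion zones can be sketched as a set of named rules applied to each record, with promotion blocked when too few records pass. The sketch below is illustrative only: the record fields (`compound_id`, `ic50_nM`), the rule set, and the `min_pass_rate` threshold are hypothetical choices, not part of any specific platform.

```python
from dataclasses import dataclass
from typing import Callable

# Hypothetical record shape: a plain dict per assay measurement.
Record = dict


@dataclass
class Rule:
    """A named validation rule applied to a single record."""
    name: str
    check: Callable[[Record], bool]


def quality_gate(records, rules, min_pass_rate=0.95):
    """Split a batch into passed and rejected records and decide on promotion.

    Returns (passed, rejected, promote), where rejected pairs each failing
    record with the names of the rules it violated.
    """
    passed, rejected = [], []
    for rec in records:
        failures = [rule.name for rule in rules if not rule.check(rec)]
        if failures:
            rejected.append((rec, failures))
        else:
            passed.append(rec)
    pass_rate = len(passed) / len(records) if records else 0.0
    return passed, rejected, pass_rate >= min_pass_rate


# Illustrative Bronze -> Silver rules (assumed, not prescribed by the source).
SILVER_RULES = [
    Rule("has_compound_id", lambda r: bool(r.get("compound_id"))),
    Rule(
        "ic50_positive",
        lambda r: isinstance(r.get("ic50_nM"), (int, float)) and r["ic50_nM"] > 0,
    ),
]

bronze_batch = [
    {"compound_id": "CMPD-001", "ic50_nM": 12.5},
    {"compound_id": "CMPD-002", "ic50_nM": -3.0},  # fails ic50_positive
    {"compound_id": "", "ic50_nM": 40.0},          # fails has_compound_id
    {"compound_id": "CMPD-004", "ic50_nM": 7.1},
]

silver, quarantined, promote = quality_gate(
    bronze_batch, SILVER_RULES, min_pass_rate=0.5
)
```

Keeping the rejected records together with the violated rule names makes it possible to run the same function as a CI/CD check and to route failures to a quarantine table instead of silently dropping them.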

Phase 3: AI Integration and Advanced Analytics Enablement

- Integrate Scientific LLMs for literature mining and hypothesis generation

- Implement AI-assisted modeling tools to accelerate data product development

- Deploy privacy-enhancing technologies for sensitive data analysis

- Establish MLOps pipelines for model governance and reproducibility
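As one concrete instance of a privacy-enhancing technology, the Laplace mechanism adds calibrated noise to a count so that the released value satisfies epsilon-differential privacy. The following is a minimal sketch using only the standard library; the `patients` data, the `adverse_event` field, and the chosen `epsilon` are made up for illustration, and a production system would rely on a vetted differential privacy library rather than hand-rolled sampling.

```python
import random


def laplace_noise(scale, rng):
    """Draw Laplace(0, scale) noise as the difference of two exponential draws."""
    return rng.expovariate(1.0 / scale) - rng.expovariate(1.0 / scale)


def private_count(records, predicate, epsilon, rng):
    """Return a noisy count of records matching predicate.

    A counting query has sensitivity 1 (adding or removing one record changes
    the count by at most 1), so the Laplace scale is 1 / epsilon.
    """
    if epsilon <= 0:
        raise ValueError("epsilon must be positive")
    true_count = sum(1 for rec in records if predicate(rec))
    sensitivity = 1.0
    return true_count + laplace_noise(sensitivity / epsilon, rng)


# Hypothetical cohort: 60 patients, 20 of whom have the flagged event.
rng = random.Random(0)
patients = [{"age": a, "adverse_event": a % 3 == 0} for a in range(30, 90)]
noisy = private_count(patients, lambda p: p["adverse_event"], epsilon=1.0, rng=rng)
```

Smaller values of `epsilon` give stronger privacy but noisier answers, which is the trade-off a governance group would need to set per data product.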

Phase 4: Ecosystem Expansion and Optimization

- Scale successful data products across additional research domains

- Implement advanced capabilities like synthetic data generation for model training

- Establish continuous improvement processes based on researcher feedback and usage metrics

This methodology enables organizations to systematically address implementation challenges while delivering incremental value. The strategic incorporation of AI throughout the ecosystem transforms how research is conducted, moving from reactive analysis to proactive discovery.

Unified data ecosystems represent the foundational infrastructure for next-generation scientific discovery. By architecting integrated platforms that combine domain expertise with advanced AI capabilities, research organizations can dramatically accelerate the pace of discovery while maintaining rigorous standards for data quality and reproducibility. The architectures, technologies, and methodologies described in this guide provide a roadmap for building ecosystems that are not just technically sophisticated but fundamentally aligned with the processes of scientific innovation.

As these ecosystems mature, they enable new paradigms of research where AI systems and human experts collaborate in a continuous cycle of hypothesis generation, experimental validation, and knowledge integration. This partnership between human intuition and machine scale holds the potential to address increasingly complex scientific challenges, from developing personalized therapeutics to understanding and mitigating environmental health risks. The organizations that successfully architect these foundations today will lead the scientific discoveries of tomorrow.

Leveraging Knowledge Graph Embedding for Multi-Relational Data Synthesis

Knowledge Graph Embedding (KGE) has emerged as a transformative technology for representing structured knowledge in a continuous vector space, enabling sophisticated reasoning and data synthesis across multi-relational datasets. For efficacy and safety (ES) assessment research, where integrating diverse scientific models with expert knowledge is paramount, KGE provides a powerful framework for synthesizing heterogeneous data sources into coherent analytical insights. By capturing complex relationships between entities, from chemical compounds and biological processes to ecosystem interactions, KGE models facilitate the prediction of missing links and generate novel hypotheses about environmental systems. This capability is particularly valuable for drug development professionals and researchers working at the intersection of environmental and biomedical sciences, where understanding complex interactions can accelerate discovery while assessing environmental impact.

The fundamental challenge in multi-relational data synthesis lies in effectively representing entities and their relationships in ways that preserve semantic meaning while enabling computational inference. Traditional approaches often struggle with the inherent complexity, sparsity, and scale of real-world knowledge graphs, especially in scientific domains where relationships follow distinct patterns and hierarchies. This article provides a comprehensive comparison of contemporary KGE methodologies, evaluating their performance across standard benchmarks and specialized domains like drug discovery and microbial ecology, with particular relevance to ES assessment frameworks that require integration of diverse scientific models.

Performance Comparison of KGE Approaches

Quantitative Benchmark Results

Table 1: Performance comparison of KGE models on standard benchmark datasets

| Model | FB15k-237 (MRR) | WN18RR (MRR) | YAGO3-10 (MRR) | Drug Repositioning (AUC) | Specialized Capabilities |
|---|---|---|---|---|---|
| SectorE | 0.380 | 0.480 | 0.570 | - | Semantic hierarchy modeling |
| Ne_AnKGE | 0.370 | 0.470 | - | - | Negative sample analogical reasoning |
| UKEDR | - | - | - | 0.950 | Cold-start scenario handling |
| LukePi | - | - | - | 0.910 | Biomedical interaction prediction |
| AnKGE | 0.350 | 0.450 | - | - | Positive sample analogical reasoning |
| TransE | 0.294 | 0.226 | - | - | Translation-based modeling |
| RotatE | 0.338 | 0.476 | - | - | Complex relation modeling |

Note: MRR = Mean Reciprocal Rank, AUC = Area Under Curve. Dashes indicate values not reported in the cited sources. SectorE, Ne_AnKGE, and UKEDR represent contemporary state-of-the-art approaches. [43] [44] [45]
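For readers less familiar with the ranking metrics in Table 1, the sketch below shows how MRR and Hits@N are typically computed in link prediction: each test triple yields the rank of the correct entity among all candidates, and the metrics summarize those ranks. The function names and the example ranks are illustrative; the tie handling (counting only strictly higher scores) is one common convention and an assumption here, since published evaluations differ on this point.

```python
def rank_of_true(scores, true_index):
    """Rank of the correct candidate (1 = best), counting strictly higher scores."""
    true_score = scores[true_index]
    return 1 + sum(1 for s in scores if s > true_score)


def mean_reciprocal_rank(ranks):
    """Average of 1/rank over all test triples; 1.0 means always ranked first."""
    return sum(1.0 / r for r in ranks) / len(ranks)


def hits_at_n(ranks, n):
    """Fraction of test triples whose correct entity is ranked within the top n."""
    return sum(1 for r in ranks if r <= n) / len(ranks)


# Made-up ranks for four test triples.
ranks = [1, 2, 4, 10]
mrr = mean_reciprocal_rank(ranks)   # (1 + 1/2 + 1/4 + 1/10) / 4
hits3 = hits_at_n(ranks, 3)
hits10 = hits_at_n(ranks, 10)

# Made-up candidate scores: the true entity (index 2) is beaten by one candidate.
example_rank = rank_of_true([0.1, 0.9, 0.7, 0.3], true_index=2)
```

Because MRR weights the top positions heavily, a model can improve MRR noticeably by moving a few correct answers from rank 2 to rank 1 even when Hits@10 barely changes.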

Domain-Specific Performance

Table 2: Performance of KGE models in specialized application domains

| Model | Application Domain | Key Metric | Performance | Data Challenges Addressed |
|---|---|---|---|---|
| UKEDR | Drug repositioning | AUC | 0.950 | Cold start, data imbalance |
| LukePi | Biomedical pairwise interactions | Accuracy | Outperforms 22 baselines | Low-data scenarios, distribution shifts |
| Microbial KGE | Microbial interactions | Prediction accuracy | Effective with minimal features | Missing culture data |
| SectorE | General KG completion | MRR (WN18RR) | 0.480 | Semantic hierarchies |
| Ne_AnKGE | General KG completion | MRR (FB15k-237) | 0.370 | Data imbalance, missing triples |

The evaluation results suggest that specialized KGE models perform strongly in their respective domains: UKEDR reaches an AUC of 0.950 in drug repositioning by handling cold-start scenarios through semantic similarity-driven embeddings. Because MRR and AUC are measured on different tasks and datasets, the figures are not directly comparable across rows. [44] [46] [47]
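The AUC values reported for drug repositioning can be read as the probability that a randomly chosen true drug-disease association is scored above a randomly chosen non-association. A minimal sketch of that pairwise definition follows; the labels and scores are invented for illustration, and the quadratic loop is meant for clarity rather than efficiency.

```python
def roc_auc(labels, scores):
    """Area under the ROC curve via pairwise comparison of positives and negatives.

    Ties between a positive and a negative score count as half a win.
    """
    pos = [s for y, s in zip(labels, scores) if y == 1]
    neg = [s for y, s in zip(labels, scores) if y == 0]
    if not pos or not neg:
        raise ValueError("need at least one positive and one negative example")
    wins = sum((p > n) + 0.5 * (p == n) for p in pos for n in neg)
    return wins / (len(pos) * len(neg))


# Made-up predictions: 1 = known association, 0 = no known association.
labels = [1, 1, 0, 0, 1, 0]
scores = [0.9, 0.8, 0.7, 0.3, 0.6, 0.6]
auc = roc_auc(labels, scores)
```

An AUC of 0.5 corresponds to random ordering and 1.0 to a perfect separation of associations from non-associations.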

Experimental Protocols and Methodologies

SectorE: Annular Sector Embeddings

SectorE introduces a novel geometric approach to knowledge graph embedding by representing relations as annular sectors in polar coordinates, effectively capturing semantic hierarchies and complex relational patterns. The methodology employs:

Coordinate System Transformation: Entities are embedded as points in polar coordinates, with each entity represented by both modulus and phase components that capture different aspects of semantic meaning.

Relation Modeling: Relations are represented as annular sectors defined by modulus intervals and phase ranges, allowing the model to capture hierarchical properties where head entities correspond to smaller moduli and tail entities to larger moduli.

Scoring Function: The model uses a distance-based scoring function that measures how well entities fit within their corresponding relational sectors, considering both radial and angular components.

The experimental protocol for SectorE involves training on standard knowledge graph completion benchmarks (FB15k-237, WN18RR, YAGO3-10) using negative sampling, with evaluation based on standard information retrieval metrics including Mean Reciprocal Rank (MRR) and Hits@N. The model demonstrates competitive performance, particularly in capturing semantic hierarchies that are prevalent in scientific knowledge graphs. [43]
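The idea of scoring an entity by how well it fits inside an annular sector can be illustrated with a simple distance: zero when the point lies inside the modulus interval and phase range, and growing with the radial and angular gaps otherwise. This is a simplified sketch and not the published SectorE scoring function; the per-dimension tuple layout, the weights `w_radial` and `w_angular`, and the additive combination are all assumptions made for illustration.

```python
import math


def radial_gap(modulus, lo, hi):
    """Distance from a modulus to the interval [lo, hi]; 0 if inside."""
    return max(lo - modulus, 0.0, modulus - hi)


def angular_gap(theta, center, half_width):
    """Angular distance from theta to the phase range around center; 0 if inside."""
    # Wrap the difference into [-pi, pi) before taking the absolute value.
    diff = abs((theta - center + math.pi) % (2 * math.pi) - math.pi)
    return max(diff - half_width, 0.0)


def sector_score(entity, sector, w_radial=1.0, w_angular=1.0):
    """Negative distance of an entity to a relation's sectors, summed over dimensions.

    entity: list of (modulus, phase) pairs, one per embedding dimension.
    sector: list of (mod_lo, mod_hi, phase_center, phase_half_width) tuples.
    """
    total = 0.0
    for (m, theta), (lo, hi, center, half_width) in zip(entity, sector):
        total += w_radial * radial_gap(m, lo, hi)
        total += w_angular * angular_gap(theta, center, half_width)
    return -total


# One-dimensional toy example (hypothetical values).
relation_sector = [(1.0, 2.0, 0.0, math.pi / 4)]
inside = [(1.5, 0.1)]        # within both the modulus interval and phase range
outside = [(2.5, math.pi)]   # too large a modulus and opposite phase

score_inside = sector_score(inside, relation_sector)
score_outside = sector_score(outside, relation_sector)
```

Under this sketch, a higher (less negative) score means a better fit, which matches the usual convention for distance-based KGE scoring functions.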

UKEDR: Unified Knowledge-Enhanced Framework

The UKEDR framework employs a sophisticated methodology for drug repositioning that systematically integrates knowledge graph embedding with pre-training strategies and recommendation systems:

Feature Extraction Pipeline: Implements a dual-stream architecture with DisBERT (a BioBERT model fine-tuned on 400,000 disease descriptions) for disease representation and CReSS for drug feature extraction from molecular SMILES and carbon spectral data.

Knowledge Graph Embedding: Utilizes PairRE as the base KGE model due to its exceptional scalability, representing relations with paired vectors to enable adaptive adjustment to complex relationships.

Attentional Factorization Machines: Implements AFM recommendation systems that capture complex feature interactions through attention mechanisms, moving beyond traditional dot products for modeling drug-disease associations.

The experimental validation involves comprehensive testing on three benchmark datasets (RepoAPP and others) with particular emphasis on cold-start scenarios where drugs or diseases are entirely absent from the training knowledge graph. The framework demonstrates a 39.3% improvement in AUC over the next-best model in predicting clinical trial outcomes from approved drug data. [44]
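The paired-vector idea behind PairRE can be sketched in a few lines. To the best of our understanding of the published PairRE formulation, each relation carries two vectors, one scaling the normalized head embedding and one scaling the normalized tail embedding, and the score is the negative L1 distance between the two scaled vectors. The embeddings below are toy values, and the snippet does not reflect how UKEDR wires PairRE into its pipeline.

```python
import math


def l2_normalize(vec):
    """Scale a vector to unit L2 norm."""
    norm = math.sqrt(sum(x * x for x in vec))
    return [x / norm for x in vec]


def pairre_score(head, rel_head, rel_tail, tail):
    """Negative L1 distance between relation-scaled head and tail embeddings.

    head, tail: entity embeddings (normalized inside the function).
    rel_head, rel_tail: the two relation vectors applied element-wise.
    """
    h = l2_normalize(head)
    t = l2_normalize(tail)
    return -sum(
        abs(hi * rh - ti * rt)
        for hi, rh, ti, rt in zip(h, rel_head, t, rel_tail)
    )


# Toy 2-dimensional example: this relation maps the drug exactly onto the disease.
drug = [3.0, 4.0]        # normalizes to [0.6, 0.8]
disease = [4.0, 3.0]     # normalizes to [0.8, 0.6]
good_fit = pairre_score(drug, [4.0, 3.0], [3.0, 4.0], disease)
poor_fit = pairre_score(drug, [1.0, 1.0], [1.0, 1.0], disease)
```

Having separate head-side and tail-side vectors is what lets the relation rescale each side independently, which is the "adaptive adjustment to complex relationships" referred to above.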

Ne_AnKGE: Negative Sample Analogical Reasoning

Ne_AnKGE addresses the limitations of analogical reasoning in knowledge graphs through a novel negative sampling approach:

Negative Entity Analogy Retrieval: Implements a sophisticated method to extract high-quality negative entity analogy objects from randomly sampled negative entities, constructing more effective training samples.

Projection Matrix Training: Uses selected negative analogical objects as supervision signals to train a projection matrix that maps original entity embeddings to their corresponding analogical embeddings.

Model Fusion: Integrates TransE and RotatE models enhanced through negative sample analogical reasoning, with flexible weight adjustment to adapt to diverse knowledge graph structures.

The experimental protocol involves extensive link prediction experiments on the FB15k-237 and WN18RR datasets, with ablation studies confirming the contribution of negative analogical reasoning to performance improvements. The approach demonstrates particular effectiveness in scenarios with data imbalance where similar positive instances are scarce. [45]
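The two base models named in the fusion step have compact scoring functions: TransE treats a relation as a translation (head + relation should land near tail), while RotatE treats it as a rotation in the complex plane. The sketch below implements both with L1 distance and combines them with a single weight; the weighted-sum fusion and the `weight` parameter are assumptions for illustration and do not reproduce the analogical-reasoning component or the exact fusion rule of Ne_AnKGE.

```python
import cmath
import math


def transe_score(head, relation, tail):
    """TransE: negative L1 distance between (head + relation) and tail."""
    return -sum(abs(h + r - t) for h, r, t in zip(head, relation, tail))


def rotate_score(head, phases, tail):
    """RotatE: rotate each complex head coordinate by a phase, compare to tail.

    phases holds one rotation angle per dimension, so every relation element
    has modulus 1 in the complex plane.
    """
    return -sum(
        abs(h * cmath.exp(1j * p) - t)
        for h, p, t in zip(head, phases, tail)
    )


def fused_score(transe, rotate, weight):
    """Weighted combination of the two model scores (illustrative assumption)."""
    return weight * transe + (1.0 - weight) * rotate


# Toy examples with hypothetical embeddings.
perfect_translation = transe_score([1.0, 2.0], [1.0, -1.0], [2.0, 1.0])
bad_translation = transe_score([0.0, 0.0], [1.0, 1.0], [0.0, 0.0])

quarter_turn = rotate_score([1 + 0j], [math.pi / 2], [1j])
no_turn = rotate_score([1 + 0j], [0.0], [-1 + 0j])

combined = fused_score(bad_translation, no_turn, weight=0.25)
```

In practice the two models produce scores on different scales, so a fusion weight would normally be tuned on validation data per dataset rather than fixed in advance.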

Visualization of KGE Approaches

SectorE Model Architecture

SectorE Architecture Diagram: Illustrates how SectorE represents relations as annular sectors in polar coordinates to capture semantic hierarchies, with entities embedded as points within these sectors based on modulus and phase components. [43]

UKEDR Framework for Drug Repositioning

UKEDR Framework Diagram: Shows the integrated framework of UKEDR, highlighting how knowledge graph embedding combines with pre-training strategies and recommendation systems to address cold-start problems in drug repositioning. [44]

Negative Sample Analogical Reasoning

Ne_AnKGE Methodology Diagram: Depicts the negative sample analogical reasoning process where negative entities serve as supervision signals for generating analogical embeddings that enhance link prediction capabilities. [45]

Research Reagent Solutions

Table 3: Essential research reagents and computational resources for KGE experimentation

| Resource Name | Type | Primary Function | Application Context |
|---|---|---|---|
| FB15k-237 | Benchmark Dataset | Standard evaluation of KGE models | General knowledge graph completion |
| WN18RR | Benchmark Dataset | Lexical knowledge evaluation | Semantic relationship modeling |
| YAGO3-10 | Benchmark Dataset | Large-scale KG evaluation | Scalability testing |
| RepoAPP | Specialized Dataset | Drug repositioning benchmarks | Biomedical application validation |
| DisBERT | Computational Tool | Disease representation learning | Text-based feature extraction |
| CReSS | Computational Tool | Drug feature extraction | Molecular structure representation |
| PairRE | KGE Algorithm | Base embedding model | Relation representation learning |
| AFM Recommendation | Algorithm | Feature interaction modeling | Drug-disease association prediction |

These research reagents represent essential resources for reproducing and extending KGE research, particularly for multi-relational data synthesis in scientific domains. The benchmark datasets provide standardized evaluation environments, while specialized tools like DisBERT and CReSS enable domain-specific applications in biomedical and environmental research. [43] [44]