Bridging the Gap: Data-Driven Models vs. Stakeholder Perceptions in Ecosystem Services Evaluation

Abstract

This article explores the critical comparison between data-driven modeling and stakeholder-based evaluations in ecosystem services (ES) assessment. As ES research becomes increasingly vital for sustainable management and policy, understanding the synergies and disparities between quantitative models and human perception is essential. We examine the foundational principles of both approaches, detail their methodological applications, and analyze empirical evidence highlighting significant mismatches—such as stakeholders overestimating ES potential by an average of 32.8%. The article provides a framework for troubleshooting integration challenges and validates methods for reconciling these perspectives. Aimed at researchers, scientists, and environmental professionals, this review synthesizes current knowledge to advocate for hybrid strategies that combine scientific rigor with local expertise for more effective and inclusive environmental decision-making.

Understanding the Divide: Core Concepts in ES Evaluation

Defining Data-Driven and Stakeholder-Based ES Assessments

Ecosystem Services (ES) assessments are critical for understanding the benefits that ecosystems provide to humans, supporting sustainable ecosystem management and policy development [1]. Within this field, two distinct methodological paradigms have emerged: data-driven spatial modeling and stakeholder-based evaluation. Data-driven approaches rely on computational models and quantitative analysis of biophysical data to estimate ES production, while stakeholder-based methods incorporate expert knowledge and perceptions to evaluate ES potential. These approaches offer complementary strengths and limitations, making their comparative analysis essential for researchers and practitioners designing evaluation frameworks. This guide provides a systematic comparison of both methodologies, supported by experimental data and detailed protocols from recent research.

Core Methodologies and Experimental Protocols

Data-Driven Spatial Modeling Approach

Data-driven ES assessments utilize computational models and geospatial analysis to quantify ecosystem services based on land cover data and other environmental parameters.

Experimental Protocol: The ASEBIO Index Methodology

A representative data-driven methodology is the ASEBIO (Assessment of Ecosystem Services and Biodiversity) index, developed for assessing ES in mainland Portugal over a 28-year period (1990-2018) [1]. The protocol involves these key stages:

ES Indicator Selection and Calculation: Researchers selected eight multi-temporal ES indicators for calculation: climate regulation, water purification, habitat quality, drought regulation, recreation, food provisioning, erosion prevention, and pollination. These were computed using spatial modeling approaches based on CORINE Land Cover data for the reference years 1990, 2000, 2006, 2012, and 2018 [1].

Spatial Modeling Integration: The eight ES indicators were integrated into a composite ASEBIO index using a multi-criteria evaluation method. This index depicts the combined ES potential based on land cover characteristics [1].

Temporal Change Analysis: The spatiotemporal changes of ES were analyzed by comparing model outputs across the five reference periods, identifying trade-offs and changes in relation to documented land use changes [1].
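The integration stage can be illustrated with a minimal sketch, assuming the multi-criteria evaluation is a weighted linear combination of min-max normalized indicators. The source does not spell out the exact aggregation rule, so the function names, the indicator values, and the equal weights below are all hypothetical.

```python
def min_max(values):
    """Rescale a list of raw indicator values to the 0-1 range."""
    lo, hi = min(values), max(values)
    if hi == lo:
        return [0.0 for _ in values]
    return [(v - lo) / (hi - lo) for v in values]


def composite_index(indicators, weights):
    """Weighted linear combination of normalized indicators, per spatial unit.

    indicators: dict name -> list of raw values (one per spatial unit)
    weights:    dict name -> non-negative weight (normalized internally)
    """
    total = sum(weights.values())
    normalized = {name: min_max(vals) for name, vals in indicators.items()}
    n_units = len(next(iter(indicators.values())))
    return [
        sum(weights[name] / total * normalized[name][i] for name in indicators)
        for i in range(n_units)
    ]


# Hypothetical raw values for three spatial units (not data from the study).
indicators = {
    "climate_regulation": [12.0, 30.0, 21.0],
    "habitat_quality": [0.2, 0.8, 0.5],
    "erosion_prevention": [5.0, 9.0, 7.0],
}
weights = {name: 1.0 for name in indicators}  # equal weights as a placeholder

index = composite_index(indicators, weights)
```

Running the same function on each reference year's indicator layers would give index values that can be compared across years, which is the idea behind the temporal change analysis.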

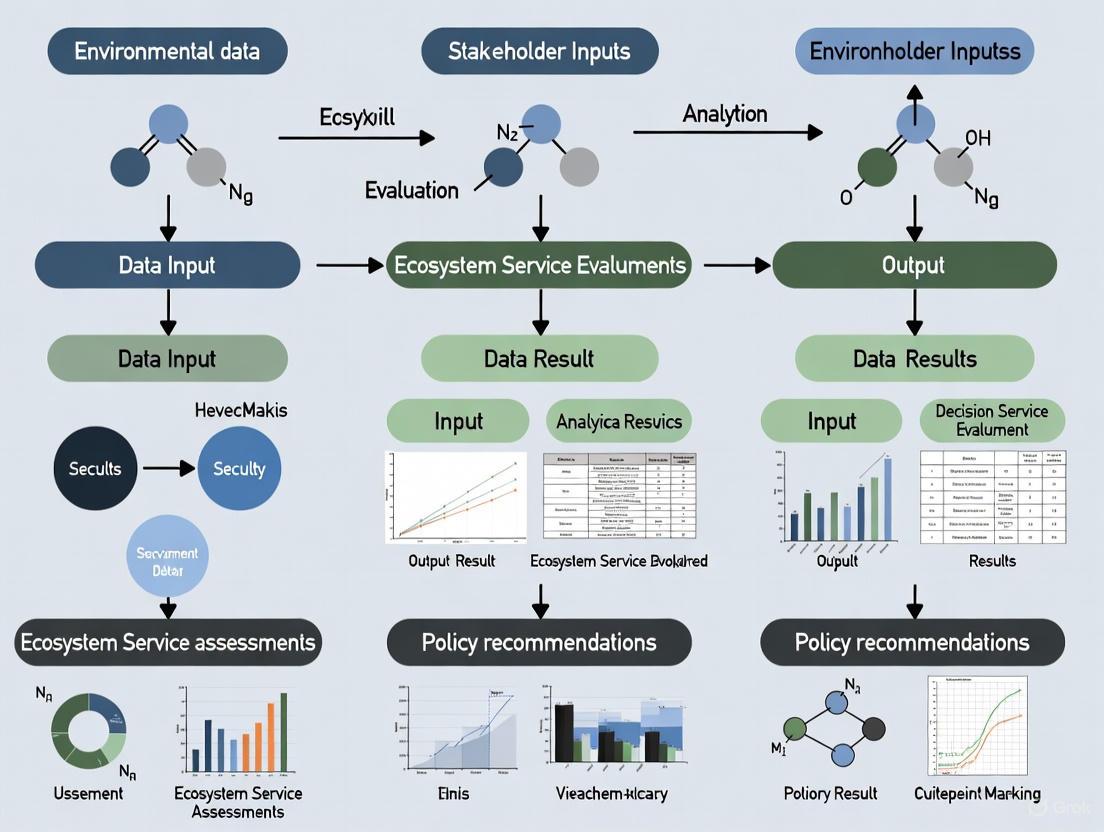

Figure 1: Data-Driven ES Assessment Workflow

Stakeholder-Based Perception Approach

Stakeholder-based evaluations capture human perceptions and expert knowledge through structured engagement processes to assess ES potential.

Experimental Protocol: Stakeholder Perception Assessment

The comparative study in Portugal implemented this stakeholder-based approach through the following methodology [1]:

Stakeholder Recruitment: Engagement of diverse stakeholders from various sectors of society involved in ecosystem management.

Analytical Hierarchy Process (AHP): Implementation of a multi-criteria evaluation method with weights defined by stakeholders through the AHP. This structured technique organizes and analyzes complex decisions by quantifying the relative importance of each ES indicator [1].

Matrix-Based Valuation: Development of a matrix-based methodology reflecting stakeholders' ES perceptions and valuations for specific land cover classes for the reference year 2018 [1].

Comparative Analysis: Quantitative comparison between stakeholder perceptions and model-based ASEBIO index results to identify disparities and alignments [1].
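As a rough illustration of the AHP step, the sketch below derives priority weights from a pairwise comparison matrix by power iteration on the principal eigenvector and reports Saaty's consistency ratio. The three services and the pairwise judgments are hypothetical, and the study's actual questionnaire and aggregation across stakeholders are not reproduced here.

```python
# Saaty's random consistency index for matrices of size n.
RANDOM_INDEX = {1: 0.0, 2: 0.0, 3: 0.58, 4: 0.90, 5: 1.12,
                6: 1.24, 7: 1.32, 8: 1.41, 9: 1.45, 10: 1.49}


def ahp_weights(matrix, iterations=100):
    """Return (weights, consistency_ratio) for a reciprocal comparison matrix."""
    n = len(matrix)
    w = [1.0 / n] * n
    # Power iteration converges to the principal eigenvector.
    for _ in range(iterations):
        w_new = [sum(matrix[i][j] * w[j] for j in range(n)) for i in range(n)]
        s = sum(w_new)
        w = [x / s for x in w_new]
    if n < 3:
        return w, 0.0  # 1x1 and 2x2 matrices are always consistent
    # Estimate lambda_max as the mean of (A w)_i / w_i.
    aw = [sum(matrix[i][j] * w[j] for j in range(n)) for i in range(n)]
    lambda_max = sum(aw[i] / w[i] for i in range(n)) / n
    consistency_index = (lambda_max - n) / (n - 1)
    return w, consistency_index / RANDOM_INDEX[n]


# Hypothetical judgments: entry [i][j] says how much more important
# service i is than service j on Saaty's 1-9 scale.
services = ["water_purification", "habitat_quality", "recreation"]
judgments = [
    [1.0, 3.0, 5.0],
    [1 / 3, 1.0, 2.0],
    [1 / 5, 1 / 2, 1.0],
]

weights, cr = ahp_weights(judgments)
```

A consistency ratio below 0.1 is the conventional threshold for accepting a set of judgments; larger values usually prompt a review of the pairwise comparisons.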

Figure 2: Stakeholder-Based ES Assessment Workflow

Comparative Performance Analysis

Quantitative Results Comparison

The comparative assessment of both approaches revealed significant differences in ES potential estimates, with stakeholder-based assessments consistently yielding higher valuations across all ecosystem services.

Table 1: Comparative ES Potential Assessment (2018)

| Ecosystem Service | Data-Driven Model Results | Stakeholder Perception Results | Deviation | Alignment Level |
|---|---|---|---|---|
| Drought Regulation | Low to Moderate Potential | Significantly Higher Potential | Highest Contrast | Low Alignment |
| Erosion Prevention | Low to Moderate Potential | Significantly Higher Potential | High Contrast | Low Alignment |
| Climate Regulation | Declining Potential | Higher Potential | Moderate Contrast | Moderate Alignment |
| Habitat Quality | Mostly Stable Potential | Higher Potential | Moderate Contrast | Moderate Alignment |
| Pollination | Mostly Stable Potential | Higher Potential | Moderate Contrast | Moderate Alignment |
| Water Purification | Consistently High Potential | Slightly Higher Potential | Lower Contrast | High Alignment |
| Food Production | Mostly Stable Potential | Slightly Higher Potential | Lower Contrast | High Alignment |
| Recreation | Improved Potential | Slightly Higher Potential | Lower Contrast | High Alignment |
| Overall Average | Baseline | 32.8% Higher on Average | Significant Mismatch | Variable Alignment |

The overall deviation shows that stakeholder estimates were 32.8% higher on average than model-based results across all ecosystem services assessed [1].
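An average overestimation figure of this kind can be computed as the mean relative difference between stakeholder and model scores. The sketch below assumes that definition, which the source does not confirm, and uses hypothetical scores rather than the study's values.

```python
def mean_percent_deviation(model, stakeholder):
    """Per-service and mean percentage by which stakeholder scores exceed model scores."""
    per_service = {
        name: (stakeholder[name] - model[name]) / model[name] * 100.0
        for name in model
    }
    return per_service, sum(per_service.values()) / len(per_service)


# Hypothetical ES potential scores on a 0-1 scale (not the study's data).
model_scores = {
    "drought_regulation": 0.20,
    "water_purification": 0.50,
    "food_production": 0.40,
}
stakeholder_scores = {
    "drought_regulation": 0.30,
    "water_purification": 0.55,
    "food_production": 0.44,
}

per_service, mean_deviation = mean_percent_deviation(model_scores, stakeholder_scores)
```

With these placeholder inputs the drought regulation score deviates far more than the other two, mirroring the qualitative pattern in Table 1 where regulating services show the largest contrast.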

Spatial and Temporal Pattern Analysis

The data-driven approach revealed distinct spatial and temporal patterns in ES potential across mainland Portugal between 1990 and 2018:

- Climate Regulation: Notable decline in Alentejo Central with improvement in Alto Minho [1]

- Water Purification: Improvement in 10 out of 23 northern regions, with declines in interior and southern regions [1]

- Habitat Quality: Increased in northern regions but declined in Lisbon metropolitan area and Alentejo Central [1]

- Drought Regulation: Showed the largest improvement, especially in central and southern regions [1]

- Metropolitan Areas: Lisbon and Porto showed minimal improvements, with Lisbon experiencing declines in six ES indicators [1]

The ASEBIO index values remained relatively stable over the timeline (0.33-0.35), with median values increasing from 0.27 in 1990 to 0.43 in 2018 [1].

Land Cover Contribution Analysis

The data-driven approach enabled precise quantification of how different land cover classes contribute to overall ES potential:

Table 2: Land Cover Contribution to ASEBIO Index (2018)

| Land Cover Class | Category | Contribution Level |
|---|---|---|
| Port Areas (1.2.3) | Artificial Surfaces | Lowest Contribution |
| Road and Rail Networks (1.2.2) | Artificial Surfaces | Moderate Contribution |
| Green Urban Areas (1.4.1) | Artificial Surfaces | Moderate Contribution |
| Rice Fields (2.1.3) | Agricultural Areas | Low Contribution |
| Agricultural with Natural Vegetation (2.4.3) | Agricultural Areas | High Contribution |
| Agro-forestry Areas (2.4.4) | Agricultural Areas | High Contribution |
| Moors and Heathland (3.2.2) | Forest & Seminatural | Highest Contribution |
| Average Forest & Seminatural Areas | Forest & Seminatural | Main Contributors |

Forest and seminatural areas were identified as the primary contributors to the ASEBIO index, with moors and heathland (3.2.2) exhibiting the highest values [1].

Research Toolkit: Essential Materials and Solutions

Table 3: ES Assessment Research Toolkit

| Research Tool | Function/Purpose | Application Context |
|---|---|---|
| CORINE Land Cover Data | Provides standardized land cover classification | Fundamental input for spatial modeling of ES indicators |
| InVEST Software | Spatial modeling tool for estimating ES and tradeoffs | Calculating ES indicators; planning and research applications |
| Analytical Hierarchy Process (AHP) | Multi-criteria decision making method with stakeholder-defined weights | Integrating stakeholder perceptions into ES valuation |
| GIS (Geographic Information Systems) | Visualization and spatial analysis of ES data | Essential for spatial assessment and mapping ES distribution |
| Constraint Programming Technique | Identifies all possible solutions to constraint satisfaction problems | Searching for admissible changes in product design to improve sustainability [2] |
| Cumulative Belief Rule-Based System (CBRBS) | Data-driven rule-base approach for forecasting environmental trends | Carbon emission trend forecasting with environmental regulation [3] |

Integrated Assessment Framework

The comparative analysis demonstrates that neither data-driven nor stakeholder-based approaches alone provide a complete ES assessment picture. An integrated framework that combines both methodologies offers the most comprehensive approach for ecosystem management and policy development.

The significant mismatch (32.8% average difference) identified between scientific models and human perceptions highlights the necessity of integrative strategies that incorporate both data-driven models and expert knowledge [1]. Such integrated approaches can help bridge the gap between quantitative models and human values, resulting in more balanced and inclusive decision-making processes for sustainable land-use planning [1].

The Critical Need for Integrated ES Evaluation in Policy and Management

Ecosystem services (ES) are the benefits humans derive from nature, crucial for sustaining well-being and economic activity [1]. The central challenge in modern ES evaluation lies in reconciling two distinct paradigms: data-driven spatial models and participatory stakeholder-based assessments [1]. This guide objectively compares these methodological "alternatives," framing them not as rivals but as complementary components for robust environmental decision-making.

Comparative Performance of ES Evaluation Approaches

The table below summarizes the core characteristics, performance data, and optimal applications of model-driven and stakeholder-driven evaluation methods.

| Feature | Data-Driven Models (e.g., InVEST, RUSLE) | Stakeholder-Based Assessments (e.g., Perceptual Surveys, AHP) |
|---|---|---|
| Core Principle | Quantifies ES via biophysical algorithms and spatial data [4] [1]. | Elicits values, preferences, and knowledge through structured engagement [5] [1]. |
| Primary Output | Spatially-explicit maps of ES indicators (e.g., water yield, carbon storage) [4] [1]. | Utility functions, trade-off preferences, and weights of ES importance [5] [1]. |
| Key Strength | Objectively identifies spatio-temporal trends and biophysical trade-offs [4]. | Captures social demand, local knowledge, and context-specific values [5]. |
| Key Limitation | May miss locally relevant, non-material services (e.g., cultural services) [5]. | Perceptions can systematically overestimate or misrepresent biophysical potential [1]. |
| Performance Gap | Quantitative Discrepancy: In Portugal, stakeholder-perceived ES potential was 32.8% higher on average than model outputs [1]. | Perceptual Bias: Largest contrasts were for regulating services (drought regulation, erosion prevention); smallest for provisioning services (food production) [1]. |
| Best Applications | National-scale tracking, baseline assessments, scenario forecasting [4]. | Local and regional planning, conflict resolution, understanding utility and trade-offs [5]. |

Detailed Experimental Protocols for ES Evaluation

Protocol 1: Spatial Modeling of Ecosystem Services

This protocol employs biophysical models to generate quantitative, map-based ES assessments [4] [1].

- Model Selection: Choose specialized models for target ES.

- Data Preparation: Collect primary input data. This typically includes:

  - Land Use/Land Cover (LULC) maps (e.g., from CORINE) for all study years [1] [4].

  - Climatic data (precipitation, evapotranspiration) from meteorological stations or reanalysis products [4].

  - Soil data (depth, texture) from soil databases [4].

  - Topographic data (slope, length) from Digital Elevation Models (DEMs) [4].

- Model Execution & Validation: Run models for each time point. Validate outputs using field-measured data where available, or through cross-comparison with established regional studies [4].

- Integration and Index Calculation: To create a unified score (e.g., an Integrated ES Index), use Principal Component Analysis (PCA). PCA objectively determines weights based on the contribution of each ES to total variance, avoiding subjective weighting [4].
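The PCA-based weighting in the last step can be sketched as follows. This is one common variant, assumed here for illustration: indicators are standardized, components are retained until a cumulative variance threshold is reached, and each indicator's weight is the sum of its absolute loadings scaled by the explained variance of each retained component. The threshold of 0.85, the function name, and the random input data are assumptions, not details from the cited protocol.

```python
import numpy as np


def pca_weights(X, variance_threshold=0.85):
    """Derive indicator weights from PCA loadings.

    X: array of shape (n_units, n_indicators) with raw ES indicator values.
    Returns an array of non-negative weights that sums to 1.
    """
    Z = (X - X.mean(axis=0)) / X.std(axis=0)        # standardize each indicator
    corr = np.corrcoef(Z, rowvar=False)             # indicator correlation matrix
    eigvals, eigvecs = np.linalg.eigh(corr)         # eigh returns ascending order
    order = np.argsort(eigvals)[::-1]
    eigvals, eigvecs = eigvals[order], eigvecs[:, order]
    ratio = eigvals / eigvals.sum()                 # explained variance ratio
    k = int(np.searchsorted(np.cumsum(ratio), variance_threshold)) + 1
    k = min(k, ratio.size)
    raw = np.abs(eigvecs[:, :k]) @ ratio[:k]        # variance-weighted |loadings|
    return raw / raw.sum()


# Hypothetical data: 50 spatial units, 4 ES indicators.
rng = np.random.default_rng(0)
X = rng.random((50, 4))

weights = pca_weights(X)

# Integrated index: weighted sum of min-max normalized indicators.
X_norm = (X - X.min(axis=0)) / (X.max(axis=0) - X.min(axis=0))
integrated_index = X_norm @ weights
```

Because the weights come from the data's own variance structure, they change whenever the study area or the set of indicators changes, which is worth keeping in mind when comparing indices across studies.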

Protocol 2: Stakeholder Elicitation

This protocol quantifies how stakeholders perceive ES supply and value different service trade-offs [5] [1].

- Stakeholder Typology Identification: Categorize stakeholder groups relevant to the management context (e.g., farmers, conservationists, policy-makers, local communities) [5].

- Structured Engagement Design:

  - Production Possibility Frontier (PPF) Elicitation: Present stakeholders with graphs depicting different trade-off curves (e.g., between agricultural intensity and freshwater ecological health). Ask them to select the shape that best matches their understanding of the system and to draw confidence intervals [5].

  - Utility Function Elicitation: Using the selected PPF, ask stakeholders to mark their preferred point along the curve, representing their optimal trade-off [5].

  - Analytical Hierarchy Process (AHP): Guide stakeholders through pairwise comparisons of different ES to derive weighted priority scores for each service [1].

- Data Synthesis: Analyze responses to quantify mean perceived PPF shapes, confidence intervals, and utility maxima across and within stakeholder groups. Use AHP results to create stakeholder-weighted ES potential maps [5] [1].

- Comparison with Models: Statistically compare stakeholder-derived ES potential scores (e.g., from AHP) with model-generated scores for the same land cover types to identify significant mismatches [1].
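The final comparison step can be sketched with a paired test on per-land-cover scores. The source does not name a specific test, so the exact sign-flip permutation test below is an assumption chosen because it needs no distributional assumptions and works with a small number of land cover classes. All scores are hypothetical.

```python
from itertools import product


def paired_sign_flip_test(model, stakeholder):
    """Exact two-sided sign-flip permutation test on paired differences.

    Returns (mean_difference, p_value). Under the null hypothesis that the
    two methods agree, each paired difference is equally likely to be
    positive or negative, so all sign assignments are enumerated.
    """
    diffs = [s - m for m, s in zip(model, stakeholder)]
    n = len(diffs)
    observed = abs(sum(diffs) / n)
    extreme = 0
    total = 0
    for signs in product((1, -1), repeat=n):
        permuted = abs(sum(sign * d for sign, d in zip(signs, diffs)) / n)
        total += 1
        if permuted >= observed - 1e-12:  # tolerance for floating-point ties
            extreme += 1
    return sum(diffs) / n, extreme / total


# Hypothetical ES potential scores for eight land cover classes.
model_scores = [0.10, 0.25, 0.30, 0.45, 0.50, 0.55, 0.60, 0.70]
stakeholder_scores = [0.20, 0.35, 0.38, 0.50, 0.62, 0.66, 0.70, 0.78]

mean_diff, p_value = paired_sign_flip_test(model_scores, stakeholder_scores)
```

Exhaustive enumeration grows as 2^n, so for more than roughly 20 paired classes a random sample of sign assignments would be the practical substitute.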

Workflow Visualization for Integrated ES Evaluation

The following diagram illustrates a synergistic workflow that integrates both model-driven and stakeholder-driven approaches to achieve a more holistic and policy-relevant assessment.

The table below lists key "research reagents"—critical datasets, models, and engagement tools—for conducting integrated ES evaluations.

| Tool/Resource | Function/Description | Relevant Protocol |
|---|---|---|
| InVEST (Integrated Valuation of Ecosystem Services and Tradeoffs) | A suite of spatial models for mapping and valuing multiple ES (e.g., water yield, carbon, habitat) [1]. | Spatial Modeling |
| RUSLE (Revised Universal Soil Loss Equation) | An empirical model for estimating annual soil loss due to water erosion [4]. | Spatial Modeling |
| CORINE Land Cover Maps | Standardized, Europe-wide LULC data; a primary input for many ES models [1]. | Spatial Modeling |
| Production Possibility Frontier (PPF) | An economic concept used to visualize and elicit stakeholder understanding of trade-offs between two competing ES [5]. | Stakeholder Elicitation |
| Analytical Hierarchy Process (AHP) | A multi-criteria decision-making method to derive stakeholder-based weightings for the importance of different ES [1]. | Stakeholder Elicitation |
| Principal Component Analysis (PCA) | A statistical technique used to objectively integrate multiple ES metrics into a single composite index (e.g., IESI) [4]. | Spatial Modeling, Integration |
| Optimal Parameter-based Geographical Detector (OPGD) Model | A statistical method to identify key driving factors (e.g., NDVI, slope) behind the spatial heterogeneity of ES [4]. | Spatial Modeling, Analysis |

The quantitative assessment of complex systems, whether in neurobiology, drug development, or ecosystem management, increasingly relies on two distinct yet complementary approaches: data-driven computational models and human perceptual judgment. Data-driven models utilize mathematical formalizations and physical properties to simulate biological systems, generating reproducible, quantitative predictions [6]. In parallel, stakeholder-based evaluations incorporate expert knowledge, contextual understanding, and perceptual reasoning to assess system behavior and outcomes. This comparative guide explores the theoretical basis of biophysical models and human perception across multiple scientific domains, examining their relative strengths, limitations, and appropriate contexts of use.

The tension between these approaches represents a fundamental challenge in scientific inference. As revealed in ecosystem services research, significant mismatches can occur between model-generated indicators and stakeholder perceptions, with stakeholders rating ES potential an average of 32.8% higher than model-based estimates across multiple ecosystem service indicators [7]. Similarly, in visual perception and drug development, the choice between simulation-based inference and human intuition carries profound implications for predictive accuracy, resource allocation, and ultimate success. This guide objectively compares the performance of biophysical modeling approaches against human perceptual assessments through experimental data, methodological protocols, and cross-domain analysis.

Theoretical Frameworks and Computational Approaches

Foundations of Biophysical Modeling

Biophysical modeling represents a class of computational approaches that simulate biological systems using mathematical formalizations of their physical properties. These models enable researchers to predict the influence of biological and physical factors on complex systems, bridging multiple scales from molecular interactions to whole-organism dynamics [6]. The fundamental strength of biophysical models lies in their ability to systematically integrate diverse experimental data, provide mechanistic explanations for observed phenomena, and generate testable hypotheses through in silico experimentation.

In neuroscience, detailed biophysical models of human cortical pyramidal neurons have uncovered how morpho-electrical properties shape signal processing, revealing mechanisms behind action potential generation, dendritic signaling, and information processing capabilities [8]. Similarly, in drug development, Model-Informed Drug Development (MIDD) employs quantitative frameworks to predict drug behavior, optimizing development from discovery through post-market surveillance [9]. These approaches share a common mathematical foundation in representing physical systems through formal computational structures that can be validated against experimental data.

The Role of Human Perception and Expert Judgment

Human perception and expert judgment provide an alternative framework for assessing complex systems, leveraging pattern recognition, contextual understanding, and intuitive reasoning that may elude purely algorithmic approaches. In ecosystem services assessment, stakeholder evaluations incorporate localized knowledge, value judgments, and qualitative assessments that complement purely quantitative models [10]. The perceptual system itself represents an evolved biological model that excels at certain types of inference, particularly in dealing with soft objects and complex physical interactions where explicit simulation may be computationally prohibitive [11].

Research in visual perception demonstrates that human observers excel at perceiving properties of soft objects like stiffness and mass, employing "intuitive physics" that combines sensory input with internal simulations of physical processes [11]. This perceptual capability suggests that human judgment embodies sophisticated inferential processes that can complement or validate computational approaches, particularly in domains characterized by high uncertainty, missing data, or complex contextual factors.

Comparative Analysis: Performance Across Domains

Ecosystem Services Evaluation

Ecosystem services assessment provides a revealing test case for comparing data-driven models with stakeholder perceptions. A comprehensive study in mainland Portugal evaluated eight ecosystem service indicators—including climate regulation, water purification, habitat quality, drought regulation, recreation, food production, erosion prevention, and pollination—using both spatial modeling approaches and stakeholder assessments through an Analytical Hierarchy Process [7].

Table 1: Ecosystem Services Assessment: Models vs. Stakeholder Perception

| Ecosystem Service | Stakeholder Overestimation | Alignment Between Approaches |
|---|---|---|
| Drought Regulation | Highest contrast | Lowest alignment |
| Erosion Prevention | High contrast | Low alignment |
| Water Purification | Close alignment | Highest alignment |
| Food Production | Close alignment | High alignment |
| Recreation | Close alignment | High alignment |
| Climate Regulation | Moderate contrast | Moderate alignment |
| Habitat Quality | Moderate contrast | Moderate alignment |
| Pollination | Moderate contrast | Moderate alignment |

The research demonstrated that all selected ecosystem services were overestimated by stakeholders compared to model-based assessments, with an average overestimation of 32.8% [7]. The greatest disparities occurred for drought regulation and erosion prevention, while water purification, food production, and recreation showed the closest alignment between modeling and perception. This pattern suggests that stakeholders may excel at assessing more tangible, directly observable services while struggling with complex processes involving hidden variables and nonlinear dynamics.

Neuroscience and Cellular Biophysics

In neuroscience, biophysical models have generated fundamental insights into human neuronal function that would be difficult to obtain through perceptual approaches alone. Detailed modeling of human layer 2/3 pyramidal neurons (HL2/3 PNs) has provided mechanistic explanations for four key experimental observations: (1) the steep "kinky" somatic/axonal action potentials; (2) accelerated propagation speed of excitatory postsynaptic potentials in dendrites; (3) the ability to reliably track high-frequency input modulations; and (4) effective transfer of theta frequencies from dendrite to soma [8].

Table 2: Biophysical Insights from Human Neuron Modeling

| Experimental Observation | Biophysical Mechanism | Computational Implication |
|---|---|---|
| Steep "kinky" action potentials | Large dendritic load on soma and axon initial segment | Enhanced temporal precision in spike initiation |
| Accelerated EPSP propagation | Morphology-dependent passive cable properties | Faster dendritic integration |
| High-frequency tracking capability | Reduced axonal initial segment load | Improved encoding of temporal patterns |
| Theta frequency transfer | Prominent h-channels in human dendrites | Rhythm-based processing advantages |

These biophysical insights reveal how human cortical neurons achieve their enhanced computational capabilities, including highly compartmentalized processing, sophisticated nonlinear operations through specialized dendritic currents, and increased capacity for parallel processing [8]. The models demonstrate how distinct human neuronal properties likely support advanced cognitive capabilities, including language, foresight, and creativity.

Drug Development and Toxicity Prediction

The pharmaceutical industry represents a domain where both modeling and expert judgment play crucial roles, with Model-Informed Drug Development (MIDD) increasingly complementing human decision-making. MIDD employs quantitative tools including Quantitative Structure-Activity Relationship (QSAR), Physiologically Based Pharmacokinetic (PBPK) modeling, semi-mechanistic PK/PD, population pharmacokinetics, exposure-response analysis, and quantitative systems pharmacology [9].

Table 3: Model-Informed Drug Development Tools and Applications

| MIDD Tool | Description | Primary Application Stage |
|---|---|---|
| QSAR | Computational modeling predicting biological activity from chemical structure | Early discovery, lead optimization |
| PBPK | Mechanistic modeling of physiology-drug interplay | Preclinical prediction, clinical trial design |
| Semi-mechanistic PK/PD | Hybrid approach characterizing drug pharmacokinetics and pharmacodynamics | Preclinical to clinical translation |
| PPK | Modeling variability in drug exposure among populations | Clinical development, dosage optimization |
| QSP/T | Integrative modeling combining systems biology and pharmacology | Target identification, toxicity prediction |
| AI/ML in MIDD | Data-driven prediction of molecular behavior and clinical outcomes | Throughout development pipeline |

The "fit-for-purpose" application of these tools across drug development stages has demonstrated significant efficiency improvements, including compressed discovery timelines from years to months and reduced clinical trial costs through optimized design [9]. For example, AI-driven drug discovery platforms have advanced candidates to Phase I trials in approximately 18 months compared to the typical 5-year timeline, while achieving clinical candidates with 10x fewer synthesized compounds in some cases [12].

Experimental Protocols and Methodologies

Biophysical Model Development Workflow

The development of biophysically detailed models follows a systematic methodology that integrates experimental data with computational frameworks. A representative protocol for constructing a Hodgkin-Huxley-based model of C. elegans body-wall muscle cells illustrates this process [13]:

Figure 1: Biophysical Model Development Workflow

Experimental Phase: Voltage clamp and mutant experiments identify key ion channels and characterize their dynamics. For C. elegans muscle cells, this involved identifying L-type voltage-gated calcium channels (EGL-19) and potassium channels (SHK-1, SLO-2) as crucial for action potential generation [13]. Researchers conduct electrophysiological recordings using whole-cell patch clamp configurations with fire-polished borosilicate pipettes (resistance 4-6 MΩ), digitizing data at 10-20 kHz with 2.6 kHz filtering.

Model Construction Phase: Experimental data informs the development of Hodgkin-Huxley-based models for individual ion channels, which are subsequently integrated to simulate overall cellular electrical activity. The C. elegans muscle model incorporated detailed current dynamics for each channel based on voltage clamp data [13].

Parameter Estimation Phase: Simulation-based inference methods with parallel sampling determine free parameters by fitting model responses to experimental data under specific stimuli. Bayesian frameworks efficiently explore high-dimensional parameter spaces, identifying regions consistent with experimental observations while quantifying parameter uncertainty [13].

Validation and Application Phase: The validated model predicts cellular responses under novel conditions, including various current stimuli and genetic manipulations, enabling investigation of system properties like optimal response frequencies and functional implications [13].
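To make the structure of a Hodgkin-Huxley-based model concrete, here is a minimal sketch of the classic squid-axon formulation with textbook sodium, potassium, and leak parameters, integrated by forward Euler. It is not the C. elegans muscle model from [13], which is built from calcium and potassium channel models whose equations and fitted parameters are not given in this article. Only the general pattern carries over: gating variables governed by voltage-dependent rates, summed into a membrane current equation.

```python
import math

# Textbook squid-axon constants (modern sign convention, rest near -65 mV).
# These are NOT the C. elegans muscle parameters from the cited study.
C_M = 1.0                                # membrane capacitance, uF/cm^2
G_NA, G_K, G_L = 120.0, 36.0, 0.3        # maximal conductances, mS/cm^2
E_NA, E_K, E_L = 50.0, -77.0, -54.387    # reversal potentials, mV


def _ratio(x, y):
    """Evaluate x / (1 - exp(-x / y)), handling the removable singularity at x = 0."""
    if abs(x / y) < 1e-6:
        return y * (1.0 + x / (2.0 * y))
    return x / (1.0 - math.exp(-x / y))


def rates(v):
    """Voltage-dependent opening (alpha) and closing (beta) rates in 1/ms."""
    return {
        "m": (0.1 * _ratio(v + 40.0, 10.0), 4.0 * math.exp(-(v + 65.0) / 18.0)),
        "h": (0.07 * math.exp(-(v + 65.0) / 20.0),
              1.0 / (1.0 + math.exp(-(v + 35.0) / 10.0))),
        "n": (0.01 * _ratio(v + 55.0, 10.0), 0.125 * math.exp(-(v + 65.0) / 80.0)),
    }


def simulate(i_ext, t_max=50.0, dt=0.01, v0=-65.0):
    """Forward-Euler integration; returns the membrane potential trace in mV."""
    v = v0
    # Start each gating variable at its steady state for the initial voltage.
    gates = {k: a / (a + b) for k, (a, b) in rates(v).items()}
    trace = []
    for _ in range(int(t_max / dt)):
        m, h, n = gates["m"], gates["h"], gates["n"]
        i_ion = (G_NA * m**3 * h * (v - E_NA)
                 + G_K * n**4 * (v - E_K)
                 + G_L * (v - E_L))
        for k, (a, b) in rates(v).items():
            gates[k] += dt * (a * (1.0 - gates[k]) - b * gates[k])
        v += dt * (i_ext - i_ion) / C_M
        trace.append(v)
    return trace


spiking = simulate(i_ext=10.0)   # injected current in uA/cm^2
resting = simulate(i_ext=0.0)    # no injected current
```

In a parameter estimation workflow like the one described above, the conductances and rate constants would be the free parameters, and traces such as `spiking` would be compared against recorded responses.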

Human Perception Assessment Protocols

Assessing human perceptual capabilities employs rigorous psychophysical methods that quantify performance under controlled conditions. A representative protocol for evaluating soft object perception illustrates this approach [11]:

Stimulus Generation: Researchers create controlled animations of soft objects (e.g., cloths) undergoing naturalistic transformations across different scene configurations, including "ramp" (solid object colliding with hanging cloth), "drape" (cloth falling on a frame), "rotate" (cloth spinning with table), and "wind" (cloth blowing in wind) [11]. Each animation embodies specific physical parameters (e.g., five stiffness levels, four mass levels) through physics-based simulation.

Experimental Design: Participants perform matching tasks where they identify which of two test animations matches a target animation on a specific property (stiffness or mass). All animations display simultaneously and replay automatically until response, ensuring adequate viewing time [11].

Model Comparison: Human performance is compared against computational models including deep neural networks (bottom-up feature extraction) and simulation-based models (intuitive physics approaches). Models are performance-calibrated to ensure comparable overall accuracy, enabling focused comparison of error patterns rather than raw performance [11].

Data Analysis: Researchers quantify accuracy, error patterns, and specific failure modes across conditions, assessing which computational framework best predicts human perceptual patterns including both successes and characteristic failures.

Signaling Pathways and Computational Mechanisms

Ion Channel Dynamics in Cellular Excitability

Biophysical models of cellular electrical activity rely on precise characterization of ion channel dynamics and their interactions. The C. elegans body-wall muscle cell model exemplifies how multiple conductances integrate to generate action potentials and rhythmic activity [13]:

Figure 2: Ion Channel Dynamics in C. Elegans Muscle Cells

This integrated mechanism reveals how calcium-mediated action potentials emerge in nematode muscle cells despite the absence of voltage-gated sodium channels, with the model predicting an optimal response frequency of 3-10 Hz corresponding to burst firing rather than regular firing modes [13]. The balance between depolarizing calcium currents and repolarizing potassium currents creates rhythmic activity patterns essential for locomotion.

Dendritic Computation in Human Cortical Neurons

Human cortical pyramidal neurons employ sophisticated dendritic mechanisms that enhance their computational capabilities beyond typical model systems. Biophysical modeling reveals specialized signaling pathways in human L2/3 pyramidal neurons [8]:

Enhanced Backpropagation and Theta Transfer: Prominent h-channels in human dendrites facilitate theta-frequency (4-8 Hz) input transfer from dendrites to soma, enabling rhythm-based processing potentially relevant to memory and cognitive functions. Power spectrum analysis demonstrates significantly enhanced somatic response to theta inputs when h-channels are incorporated in models [8].

Dendritic Compartmentalization and Nonlinear Processing: Human neurons exhibit increased functional compartmentalization, with dendrites capable of local nonlinear transformations through specialized currents. This architecture supports parallel processing and enables sophisticated computations including XOR operations at the single-cell level [8].

Accelerated Synaptic Integration: Morphological adaptations reduce the functional distance between distal synapses and the soma, accelerating EPSP propagation through optimized cable properties. This allows human neurons to maintain rapid integration despite their physical size [8].

High-Frequency Tracking: Reduced axonal initial segment load enables reliable tracking of high-frequency input modulations (up to 200 Hz), enhancing temporal coding capabilities potentially relevant for speech processing and other rapid temporal patterns [8].

Table 4: Essential Research Tools for Biophysical Modeling and Perception Studies

| Resource Category | Specific Tools/Platforms | Function and Application |
|---|---|---|
| Simulation Platforms | NEURON, GENESIS, Brian | Simulating neuronal dynamics and networks |
| Molecular Modeling | Schrödinger, AutoDock | Predicting molecular interactions and drug-target binding |
| AI-Driven Discovery | Exscientia, Insilico Medicine, Recursion | Accelerating target identification and compound optimization |
| Ecosystem Services Modeling | InVEST, ARIES | Mapping and quantifying ecosystem service provision |
| Electrophysiology | MultiClamp amplifier, Clampex software | Recording cellular electrical activity with high temporal resolution |
| Imaging and Microscopy | Two-photon microscopy, 3D laser scanning | Visualizing cellular structure and dynamic processes |
| Psychophysical Testing | Custom MATLAB/Python scripts, PsychoPy | Quantifying human perceptual capabilities under controlled conditions |

Emerging Technologies and Methodologies

Federated Learning and Privacy-Preserving AI: Secure collaborative platforms enable multi-institutional model development without sharing sensitive data, using approaches like federated learning and Trusted Research Environments (TREs) [14]. These technologies address data privacy concerns while leveraging diverse datasets for improved model performance.

Probabilistic Programming for Perception Modeling: Advanced probabilistic programming frameworks enable implementation of simulation-based cognitive models that incorporate intuitive physics, such as the Woven model for soft object perception [11]. These approaches formally represent uncertainty and infer underlying physical properties from sensory data.

High-Throughput Electrophysiology: Automated patch clamp systems and multi-electrode arrays enable large-scale characterization of electrical properties across cell types and conditions, generating comprehensive datasets for model parameterization and validation [13].

Multi-modal Data Integration: Platforms combining transcriptomic, morphological, electrophysiological, and connectomic data enable development of increasingly comprehensive models, such as the NextBrain atlas providing probabilistic mapping of 333 human brain regions [6].

The comparative analysis of biophysical models and human perception reveals a complex landscape where each approach offers distinct advantages depending on context, domain, and specific scientific question. Data-driven models excel in reproducibility, scalability, and mechanistic insight, while human perception provides robust inference under uncertainty, contextual integration, and intuitive pattern recognition.

The most promising path forward involves integrative strategies that leverage the strengths of both approaches while mitigating their respective limitations. In ecosystem services, combining spatial modeling with stakeholder input creates more balanced decision-making [7]. In drug development, the "fit-for-purpose" application of modeling tools within expert-driven frameworks optimizes development efficiency [9]. In neuroscience, biophysical models generate testable hypotheses about human neuronal function that can be validated through experimental investigation [8].

This synthesis suggests that the fundamental theoretical basis for scientific advancement lies not in choosing between modeling and perception, but in developing frameworks for their appropriate integration—creating a dialogue between data-driven prediction and human understanding that advances our capacity to address complex scientific challenges.

Ecosystem services (ES), defined as the direct and indirect contributions of ecosystems to human well-being, are fundamental to economic and social stability [15]. The conceptual framework for these services was significantly advanced by the United Nations' Millennium Ecosystem Assessment (MA), which categorized them into four primary types: provisioning, regulating, cultural, and supporting services [16]. This classification system provides a vital lens for understanding the multifaceted benefits that nature provides, from the food we eat to the climate regulation that makes our planet habitable. As anthropogenic pressures on the environment intensify, the accurate assessment of these services has become imperative for sustainable ecosystem management [1] [15].

A central challenge in modern ES research lies in the methodological approaches used for evaluation. Currently, a significant disparity exists between data-driven spatial models that quantify ES using biophysical data and stakeholder-based assessments that capture local knowledge and perceptions of ES value [1]. Scientific literature highlights a growing recognition that involving stakeholders from various sectors is necessary for a comprehensive understanding of ES, even when their perceptions diverge from model-based outputs [1]. This guide provides a comparative analysis of these two evaluation paradigms, offering researchers a framework to select appropriate methodologies based on their specific research objectives, spatial scales, and the types of ecosystem services under investigation.

Categorizing Ecosystem Services

The Millennium Ecosystem Assessment's classification system offers a standardized structure for understanding and comparing different ecosystem services. The table below details the four categories, their specific benefits, and examples.

Table 1: Categories of Ecosystem Services as Defined by the Millennium Ecosystem Assessment

| Service Category | Description | Specific Benefits | Examples |
|---|---|---|---|
| Provisioning Services | Tangible products obtained from ecosystems [16] [15]. | Food, water, and material provision. | Fruits, vegetables, timber, fish, livestock, natural gas, oils, medicinal resources [16] [15]. |
| Regulating Services | Benefits from the regulation of natural ecosystem processes [16] [15]. | Climate regulation, hazard mitigation, and pollination. | Air and water purification, climate regulation, erosion and flood control, pollination [16] [15]. |
| Cultural Services | Non-material benefits obtained from ecosystems [16] [15]. | Intellectual, spiritual, and recreational enrichment. | Recreational opportunities, aesthetic enjoyment, cultural heritage, scientific discovery [16]. |
| Supporting Services | Fundamental natural processes necessary for the production of all other ES [16]. | Sustains basic life forms and ecosystem functionality. | Photosynthesis, nutrient cycling, soil formation, water cycle [16]. |

Comparative Analysis: Data-Driven Models vs. Stakeholder Perceptions

Quantitative Comparison of Assessment Outcomes

A 2024 national-scale study in Portugal provides compelling experimental data for directly comparing model-based and stakeholder-based ES evaluations. The research calculated eight multi-temporal ES indicators using a spatial modeling approach and integrated them into a novel ASEBIO index, which was then contrasted against the ES potential perceived by stakeholders for the same region [1].

Table 2: Modeled vs. Perceived Ecosystem Service Potential in Mainland Portugal (2018)

| Ecosystem Service | Stakeholder Perception vs. Model Discrepancy | Alignment Classification |
|---|---|---|
| Drought Regulation | High contrast (among the highest overestimations by stakeholders) | Low Alignment |
| Erosion Prevention | High contrast (among the highest overestimations by stakeholders) | Low Alignment |
| Water Purification | Closely aligned | High Alignment |
| Food Production | Closely aligned | High Alignment |
| Recreation | Closely aligned | High Alignment |
| Climate Regulation | Overestimated by stakeholders | Moderate Alignment |
| Habitat Quality | Overestimated by stakeholders | Moderate Alignment |
| Pollination | Overestimated by stakeholders | Moderate Alignment |
| Overall Average | Stakeholder estimates 32.8% higher on average | Moderate Disparity |

Experimental Protocols for Ecosystem Service Assessment

Data-Driven Spatial Modeling Protocol

The methodology for the data-driven approach, as implemented in the Portuguese case study, involves a multi-step spatial analysis process [1].

- Step 1: Land Cover Data Acquisition. The process begins with acquiring spatial land cover data. The Portuguese study utilized CORINE Land Cover maps for the reference years 1990, 2000, 2006, 2012, and 2018 to track changes over a 28-year period [1].

- Step 2: Biophysical Modeling of ES Indicators. Researchers then calculate specific ES indicators using spatial modeling tools. Studies often employ software like the Integrated Valuation of Ecosystem Services and Tradeoffs (InVEST), a widely used spatial modeling tool that estimates ES based on land cover and other biophysical data [1].

- Step 3: Multi-Criteria Index Integration. The individual ES indicators are integrated into a composite index. The Portuguese study developed the ASEBIO (Assessment of Ecosystem Services and Biodiversity) index, which combines the eight ES indicators using a multi-criteria evaluation method [1].

- Step 4: Spatial and Temporal Analysis. The final step involves analyzing the resulting index to understand spatiotemporal changes, trade-offs, and synergies between different ecosystem services across the landscape [1].
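The index integration in Step 3 can be sketched as a weighted linear combination of normalized indicators. This is a minimal illustration under stated assumptions, not the ASEBIO implementation: the indicator names, the values, the weights, and the choice of min-max normalization are all hypothetical and are not taken from the study.

```python
def min_max_normalize(values):
    """Rescale a list of numbers to [0, 1]; a constant list maps to all zeros."""
    lo, hi = min(values), max(values)
    if hi == lo:
        return [0.0 for _ in values]
    return [(v - lo) / (hi - lo) for v in values]


def composite_index(indicators, weights):
    """Weighted linear combination of normalized indicators.

    indicators: dict mapping indicator name -> list of raw values,
                one value per spatial unit (same length for all indicators)
    weights:    dict mapping indicator name -> non-negative weight;
                weights are rescaled here so that they sum to 1
    Returns one index value per spatial unit.
    """
    total_weight = sum(weights.values())
    n_units = len(next(iter(indicators.values())))
    index = [0.0] * n_units
    for name, raw in indicators.items():
        normalized = min_max_normalize(raw)
        w = weights[name] / total_weight
        for i, value in enumerate(normalized):
            index[i] += w * value
    return index


# Hypothetical values for three spatial units (assumed, not from the study).
indicators = {
    "food_production": [10.0, 20.0, 30.0],
    "recreation": [5.0, 5.0, 15.0],
}
# Hypothetical stakeholder-derived weights (assumed).
weights = {"food_production": 0.75, "recreation": 0.25}

index = composite_index(indicators, weights)
print(index)
```

Normalizing before weighting keeps indicators measured in different units comparable. Other multi-criteria schemes would change the result, so the normalization step should be reported alongside any index of this kind.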

Stakeholder Perception Assessment Protocol

The stakeholder-based evaluation employs social science methodologies to capture expert and local knowledge [1].

- Step 1: Stakeholder Identification and Recruitment. Identify and recruit a diverse group of stakeholders from various sectors of society relevant to ecosystem management. This ensures a comprehensive understanding of ES values [1].

- Step 2: Matrix-Based Valuation. Elicit stakeholders' perceptions of the ES supply potential for different land cover types. This is often done using a matrix-based methodology where stakeholders assign values [1].

- Step 3: Analytical Hierarchy Process (AHP). Implement a structured technique for organizing and analyzing complex decisions. Stakeholders use the AHP to define weights that reflect the relative importance of each ecosystem service's supply potential, which are then used in models like the ASEBIO index [1].

- Step 4: Qualitative Analysis (Q Methodology). In some studies, employ Q methodology, a mixed-methods approach used to systematically study human subjectivity, such as perspectives on landscape services or ES demand [17].
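The AHP weighting in Step 3 can be illustrated with a small pairwise comparison matrix. The sketch below uses the row geometric mean approximation of the priority vector rather than the principal eigenvector, and it assumes three hypothetical services with invented judgments. It does not reproduce the weights elicited in the Portuguese study.

```python
import math

# Saaty's random consistency index for matrix sizes 1 to 9.
RANDOM_INDEX = {1: 0.0, 2: 0.0, 3: 0.58, 4: 0.90, 5: 1.12,
                6: 1.24, 7: 1.32, 8: 1.41, 9: 1.45}


def ahp_weights(matrix):
    """Approximate AHP priority weights using row geometric means."""
    n = len(matrix)
    geo_means = [math.prod(row) ** (1.0 / n) for row in matrix]
    total = sum(geo_means)
    return [g / total for g in geo_means]


def consistency_ratio(matrix, weights):
    """Consistency ratio (CR); values below about 0.1 are usually accepted."""
    n = len(matrix)
    if n < 3:
        return 0.0  # matrices of size 1 or 2 are always consistent
    # Estimate lambda_max as the mean of (A w)_i / w_i.
    aw = [sum(matrix[i][j] * weights[j] for j in range(n)) for i in range(n)]
    lambda_max = sum(aw[i] / weights[i] for i in range(n)) / n
    consistency_index = (lambda_max - n) / (n - 1)
    return consistency_index / RANDOM_INDEX[n]


# Hypothetical judgments: entry [i][j] states how much more important
# service i is than service j (reciprocal matrix, assumed values).
services = ["water_purification", "food_production", "recreation"]
pairwise = [
    [1.0, 2.0, 4.0],
    [0.5, 1.0, 2.0],
    [0.25, 0.5, 1.0],
]

weights = ahp_weights(pairwise)
cr = consistency_ratio(pairwise, weights)
for name, w in zip(services, weights):
    print(f"{name}: {w:.3f}")
print(f"consistency ratio: {cr:.3f}")
```

The example matrix is perfectly consistent, so the consistency ratio is effectively zero. Real stakeholder judgments are rarely this tidy, which is why the ratio is worth checking before the weights are passed on to a composite index.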

Figure 1: Workflow for Integrated ES Assessment Combining Data-Driven and Stakeholder-Based Methods.

The Researcher's Toolkit for Ecosystem Service Assessment

Table 3: Essential Research Tools and Reagents for Ecosystem Services Assessment

| Tool/Reagent | Type/Platform | Primary Function in ES Research |
|---|---|---|
| InVEST | Software Suite (Integrated Valuation of Ecosystem Services and Tradeoffs) | Spatial modeling to estimate and map ecosystem services based on land cover data [1]. |
| ARIES | Modeling Platform (Artificial Intelligence for Ecosystem Services) | Data-driven, probabilistic modeling of ecosystem services using artificial intelligence [18]. |
| CORINE Land Cover | Spatial Data | Provides standardized land cover maps for tracking changes in ecosystem service potential over time [1]. |
| Analytical Hierarchy Process (AHP) | Methodological Framework | Structured technique for capturing stakeholder-derived weights for the relative importance of different ES [1]. |
| Q Methodology | Social Science Approach | Systematic study of human subjectivity to understand stakeholder perspectives and socio-cultural values of ES [17]. |
| Viz Palette | Accessibility Tool | Online tool to test color palettes in data visualizations for accessibility to audiences with color vision deficiencies [19]. |

Implications for Research and Decision-Making

The comparative analysis reveals that the choice between data-driven models and stakeholder-based assessments is not merely technical but fundamentally shapes ES valuation outcomes. The 32.8% average overestimation by stakeholders, with particularly high contrasts for regulating services like drought regulation and erosion prevention, underscores a critical communication gap between scientific quantification and human perception [1]. This disparity highlights the risk of relying exclusively on a single methodology, as policymaking based solely on models may not reflect stakeholder values, while planning based only on perceptions may overlook biophysical realities.

Integrative assessment strategies that combine scientific modeling with expert knowledge are essential for effective ES management and land-use planning [1]. The workflows and tools detailed in this guide provide a pathway for researchers to bridge this gap, fostering more balanced and inclusive environmental decision-making. Future research should focus on standardizing these integrative protocols across different geographical and cultural contexts to advance the field of ecosystem service valuation.

Figure 2: Relationship Between Data-Driven and Stakeholder-Based ES Assessment Approaches.

Global Trends and the Rising Importance of ES in Sustainability Agendas

Ecosystem services (ES), the benefits humans derive from nature, are fundamental to sustainable development and human well-being. The evaluation of these services is critical for informed decision-making, yet the field is characterized by two distinct, and often divergent, methodological approaches: data-driven spatial modeling and stakeholder-based perception studies. Data-driven approaches rely on biophysical data and computational models to quantify ES, offering objectivity and reproducibility. In contrast, stakeholder-based methods capture the perceptions, values, and knowledge of people, providing crucial context and revealing perceived importance that models might miss. Framed within a broader thesis on the merits and limitations of these two paradigms, this guide objectively compares their performance, supported by experimental data. This comparison is particularly relevant for researchers and drug development professionals who routinely navigate the complex interplay between quantitative data and human-centric evidence in their fields, such as in the integration of randomized controlled trials (RCTs) and real-world evidence (RWE) [20] [21]. Understanding how to balance these approaches is key to developing robust sustainability agendas and effectively managing environmental risks and opportunities.

Quantitative Comparison: Models vs. Stakeholder Perceptions

A 2024 national-scale study in Portugal provides a direct, quantitative comparison of data-driven models and stakeholder perceptions for evaluating ecosystem services. The research calculated eight ES indicators over a 28-year period and integrated them into a novel index (ASEBIO) using a multi-criteria evaluation method with weights defined by stakeholders. This was then compared against a matrix-based methodology reflecting stakeholders' direct perceptions of ES potential for the year 2018 [1].

Table 1: Measured Disparities Between Modeled and Perceived Ecosystem Service Potential [1]

| Ecosystem Service Indicator | Average Disparity (Stakeholder Perception vs. Model) | Alignment Classification |
|---|---|---|
| Drought Regulation | Significant Overestimation | Lowest Alignment |
| Erosion Prevention | Significant Overestimation | Low Alignment |
| Climate Regulation | Notable Overestimation | Moderate Alignment |
| Habitat Quality | Notable Overestimation | Moderate Alignment |
| Pollination | Notable Overestimation | Moderate Alignment |
| Water Purification | Closer Alignment | High Alignment |
| Food Production | Closer Alignment | High Alignment |
| Recreation | Closer Alignment | High Alignment |
| All Services (Average) | 32.8% Overestimation by Stakeholders | Moderate Misalignment |

The results, summarized in Table 1, reveal a significant mismatch, with stakeholder estimates being 32.8% higher on average than model-based calculations [1]. All selected ES were overestimated by stakeholders, but the degree of misalignment varied. Services like drought regulation and erosion prevention showed the highest contrasts, while water purification, food production, and recreation were more closely aligned. This demonstrates that the choice of assessment method can substantially influence the perceived state of ecosystem services, with direct implications for prioritization and resource allocation in sustainability strategies.
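A disparity of this kind can be expressed as a relative difference between perceived and modeled scores. The sketch below is one plausible formulation, not the study's documented calculation. The text above does not state how the 32.8% figure was derived, and the service scores used here are hypothetical values on an assumed common 0 to 1 scale.

```python
def percent_overestimation(perceived, modeled):
    """Relative difference of the perceived score from the modeled score, in percent.

    Positive values mean stakeholders rated the service higher than the model.
    """
    if modeled == 0:
        raise ValueError("modeled value must be non-zero")
    return (perceived - modeled) / modeled * 100.0


# Hypothetical (modeled, perceived) scores; not data from the study.
scores = {
    "drought_regulation": (0.40, 0.70),
    "food_production": (0.50, 0.55),
}

per_service = {
    name: percent_overestimation(perceived, modeled)
    for name, (modeled, perceived) in scores.items()
}
average = sum(per_service.values()) / len(per_service)

for name, value in per_service.items():
    print(f"{name}: {value:+.1f}%")
print(f"average: {average:+.1f}%")
```

Averaging per-service percentages is only one option. Comparing the means of the two sets of scores would give a different number, so the aggregation rule needs to be stated whenever such a figure is reported.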

Experimental Protocols in Ecosystem Services Research

Data-Driven Spatial Modeling Protocol

The data-driven approach exemplified in the Portuguese study follows a rigorous, replicable protocol for quantifying ES [1].

- Step 1: Land Cover Data Acquisition and Processing: The foundation of the model is land cover cartography. The study used CORINE Land Cover data for the reference years 1990, 2000, 2006, 2012, and 2018. This data was classified and processed to create a consistent spatial timeline of landscape changes.

- Step 2: Calculation of ES Indicators: Eight distinct ES indicators were calculated using spatial modeling approaches. This involves applying algorithms and transfer functions that relate land cover classes and other biophysical data (e.g., soil type, rainfall) to the supply of specific ecosystem services. The study did not specify the exact software used, but tools like the InVEST (Integrated Valuation of Ecosystem Services and Tradeoffs) software are widely used for such applications [1].

- Step 3: Integration into a Composite Index (ASEBIO): The individual ES indicators were integrated into a single composite index. A multi-criteria evaluation method, the Analytical Hierarchy Process (AHP), was used. In this step, stakeholders were engaged to define weights reflecting the relative importance of each ecosystem service.

- Step 4: Spatiotemporal Analysis and Validation: The resulting index values were analyzed across space and time to identify trends, trade-offs, and synergies between services. Model outputs were validated against known ecological patterns and through statistical analysis of the distributions.
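The core idea of Steps 1, 2, and 4, relating land cover classes to service supply and tracking the result across reference years, can be sketched with a simple lookup table. The class names, supply scores, and 2x2 grids below are hypothetical. Dedicated tools such as InVEST use far richer biophysical inputs than a single score per class.

```python
# Hypothetical lookup: land cover class -> supply score for one service (0 to 1).
SUPPLY_SCORE = {"forest": 0.9, "cropland": 0.4, "urban": 0.1}


def mean_supply(land_cover_grid):
    """Mean supply score over all cells of a 2D grid of land cover class names."""
    cells = [land_class for row in land_cover_grid for land_class in row]
    return sum(SUPPLY_SCORE[land_class] for land_class in cells) / len(cells)


# Toy grids for two of the reference years (assumed layouts, not real data).
grids = {
    1990: [["forest", "forest"], ["cropland", "cropland"]],
    2018: [["forest", "urban"], ["cropland", "urban"]],
}

trend = {year: mean_supply(grid) for year, grid in grids.items()}
change = trend[2018] - trend[1990]

for year, value in sorted(trend.items()):
    print(f"{year}: {value:.3f}")
print(f"change 1990 to 2018: {change:+.3f}")
```

In this toy case, converting forest and cropland cells to urban land lowers the mean supply score, which is the type of land cover driven trend the spatiotemporal analysis is meant to detect.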

Stakeholder Perception Assessment Protocol

The stakeholder-based approach aims to capture human perspectives and values, which are not inherently present in geospatial data [1] [22].

- Step 1: Stakeholder Identification and Recruitment: A diverse group of stakeholders is selected, representing various sectors of society that interact with or are affected by the ecosystem (e.g., local residents, farmers, policymakers, NGO representatives).

- Step 2: Perception Elicitation: Stakeholders' perceptions of ecosystem service potential are collected. This can be done through structured surveys, interviews, or participatory workshops. A common method is the use of a matrix-based methodology, where stakeholders assign scores to different land cover types based on their perceived capacity to provide various ecosystem services.

- Step 3: Data Aggregation and Analysis: The individual scores from multiple stakeholders are aggregated to create a composite perception-based assessment. This can involve calculating averages or medians, and analyzing the data for consensus or divergence among different stakeholder groups.

- Step 4: Comparison with Biophysical Models: The final step, as performed in the Portuguese study, is to systematically compare the perception-based outputs with the results from the data-driven spatial models to identify and quantify disparities.
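The aggregation in Step 3 can be sketched as follows. The example assumes each stakeholder fills in a matrix of scores on a hypothetical 0 to 5 scale, keyed by land cover type and service. The scale, the names, and the responses are illustrative assumptions rather than the study's instrument.

```python
import statistics


def aggregate_scores(responses):
    """Aggregate per-stakeholder scores for each (land cover, service) cell.

    responses: list of dicts, each mapping (land_cover, service) -> score
    Returns a dict mapping each cell to its mean, median, and spread
    (population standard deviation, used here as a simple divergence measure).
    """
    cells = {}
    for response in responses:
        for cell, score in response.items():
            cells.setdefault(cell, []).append(score)
    return {
        cell: {
            "mean": statistics.mean(scores),
            "median": statistics.median(scores),
            "spread": statistics.pstdev(scores),
        }
        for cell, scores in cells.items()
    }


# Three hypothetical stakeholder responses (assumed values).
responses = [
    {("forest", "erosion_prevention"): 5, ("cropland", "erosion_prevention"): 2},
    {("forest", "erosion_prevention"): 4, ("cropland", "erosion_prevention"): 2},
    {("forest", "erosion_prevention"): 3, ("cropland", "erosion_prevention"): 2},
]

summary = aggregate_scores(responses)
for cell, stats in summary.items():
    print(cell, stats)
```

A spread of zero indicates full consensus on a cell, while a large spread flags cells where stakeholder groups disagree and where a single average may hide meaningful divergence.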

The Scientist's Toolkit: Key Reagents for ES Assessment

Table 2: Essential Research Reagents for Ecosystem Services Evaluation

| Research Reagent / Tool | Function in ES Assessment |
|---|---|
| CORINE Land Cover Data | Provides standardized, time-series spatial data on land use and land cover, serving as the foundational input for most spatial models of ES supply [1]. |
| InVEST Software | A suite of open-source, spatial models used to map and value ecosystem services. It helps quantify the biophysical supply of services and their economic value [1]. |
| Analytical Hierarchy Process (AHP) | A structured multi-criteria decision-making technique used to integrate stakeholder preferences by deriving weightings for different ES, enabling their integration into a composite index [1]. |
| Stakeholder Perception Matrix | A survey-based tool (often a table) used to systematically capture stakeholders' perceptions of the potential of different land cover types to deliver various ecosystem services [1] [22]. |
| Real-World Evidence (RWE) | In the context of drug development, RWE provides insights from data outside controlled trials, analogous to stakeholder perceptions in ES by offering a real-world, human-centric perspective that complements controlled experimental data [20] [21]. |
| Causal Machine Learning (CML) | Advanced analytical methods that combine machine learning with causal inference principles. In drug development, CML helps estimate treatment effects from RWD, similar to how advanced spatial statistics can infer causal links between land use and ES in complex models [20]. |

Global Trends Integrating Data and Stakeholder Perspectives

The global sustainability agenda in 2025 is increasingly demanding a reconciliation of data-driven and stakeholder-based approaches. Several key trends highlight this integration:

- Regulatory Pressure for Standardized Disclosure: The EU's Corporate Sustainability Reporting Directive (CSRD) is forcing companies to collect and disclose standardized sustainability data, placing a spotlight on metrics related to environmental impacts and dependencies, including ecosystem services [23] [24] [25]. This creates a direct need for robust, data-driven assessment methods.

- Supply Chain Resilience and Nature Risks: Companies are increasingly focusing on sustainability challenges across their supply chains, driven by climate shocks, extreme weather, and new due diligence directives like the CSDDD [23] [25]. Assessing these risks requires both spatial models to identify physical threats (e.g., drought risk to key commodities) and stakeholder engagement to understand social vulnerabilities and local impacts.

- The Rise of Green Innovation and AI: Sustainability is increasingly seen as a driver of innovation and growth. Artificial intelligence (AI) and big data analytics are being deployed to enhance the accuracy of ESG reporting and optimize resource use [23] [24]. Furthermore, causal machine learning techniques, pioneered in drug development, are emerging as powerful tools to derive more robust causal insights from complex observational data in sustainability science [20].

The parallel with drug development is instructive. Just as the field is moving beyond a sole reliance on Randomized Controlled Trials (RCTs) to incorporate Real-World Evidence (RWE) for a more comprehensive understanding of drug effects [20] [21], ecosystem services research must move beyond a false dichotomy between models and perceptions. The future lies in integrative strategies that leverage the objectivity and scalability of data-driven models with the contextual richness and value-laden insights of stakeholder perspectives [1] [22]. This will result in more balanced, inclusive, and effective decision-making for sustainable ecosystem management.

Tools of the Trade: Techniques for Quantifying and Qualifying ES

Ecosystem services (ES)—the benefits that humans derive from nature—are fundamental to human well-being and the global economy [1]. Accurately mapping and assessing these services is imperative for sustainable ecosystem management and informed policy decisions, particularly as they face increasing threats from anthropogenic pressure and land cover changes [1]. Within this field, a critical methodological divide exists, pitting data-driven modeling approaches against stakeholder-based evaluations. Data-driven approaches rely on quantitative spatial models and remote sensing data to produce objective, replicable maps of ES potential. In contrast, stakeholder-based methods incorporate expert and local knowledge to capture the perceived value and importance of ES, which may not always align with biophysical models [1] [26]. This guide provides a comparative analysis of these paradigms, using the novel ASEBIO index as a central example of an integrated model, to equip researchers and professionals with the knowledge to select and apply the most appropriate methodologies for their work.

Comparative Analysis: Data-Driven Models versus Stakeholder Perceptions

A landmark national-scale study in Portugal directly compared these approaches by calculating eight multi-temporal ES indicators using a spatial modeling approach, which were then integrated into the ASEBIO index. This index was subsequently contrasted against the ES potential perceived by stakeholders for the same region [1]. The following table summarizes the core quantitative findings of this comparison.

Table 1: Quantitative Comparison of Modeled vs. Perceived Ecosystem Service Potential in Portugal [1]

| Ecosystem Service | Modeled Potential (ASEBIO Index) | Stakeholder Perception | Alignment/Contrast |
|---|---|---|---|
| Overall Average | Based on spatial models & land cover | 32.8% higher on average | Significant mismatch |
| Drought Regulation | Modeled value | Greatly overestimated | Highest contrast |
| Erosion Prevention | Modeled value | Greatly overestimated | Highest contrast |
| Water Purification | Consistently high potential | Closely aligned | High alignment |
| Food Production | Mostly stable, slight declines | Closely aligned | High alignment |
| Recreation | Improved over time | Closely aligned | High alignment |
| Climate Regulation | Declined over time | Overestimated | Moderate misalignment |
| Habitat Quality | Mostly stable | Overestimated | Moderate misalignment |

The experimental protocol for this comparison involved several key stages. First, researchers calculated eight ES indicators for mainland Portugal for the years 1990, 2000, 2006, 2012, and 2018 using a spatial modeling approach based on CORINE Land Cover data [1]. These indicators were then integrated into the ASEBIO (Assessment of Ecosystem Services and Biodiversity) index. The ASEBIO index employs a multi-criteria evaluation method, with weights for each service defined by stakeholders through an Analytical Hierarchy Process (AHP), a structured technique for organizing and analyzing complex decisions [1]. Finally, the composite ASEBIO index for 2018 was quantitatively compared against a separate matrix-based assessment that reflected stakeholders' raw perceptions of ES potential, revealing the disparities summarized in Table 1.

The workflow below illustrates the experimental process and the point of comparison between the two paradigms.

Advanced Spatial Modeling Tools and Techniques

The scientific toolkit for data-driven ES assessment includes a variety of spatial modeling platforms, each with distinct strengths and applications. The following table catalogs key tools and datasets referenced in the literature.

Table 2: Research Reagent Solutions for Ecosystem Services Modeling

| Tool / Dataset Name | Type | Primary Function | Key Features / Applications |
|---|---|---|---|
| InVEST | Software Suite | Integrated ES and tradeoff analysis | Widely used for planning & research; models multiple ES (e.g., carbon, water yield) [1] [27]. |
| SWAT | Hydrological Model | Watershed-scale water resource simulation | Assesses impacts of land use & climate on water provision, sediment, and nutrients [28]. |
| ARIES | Modeling Platform | Rapid ES assessment and valuation | Used for creating global ensembles of ES models like carbon storage [27]. |
| Co$ting Nature | Web Tool | ES mapping and policy analysis | Models ES provision for conservation planning; used in global model ensembles [27]. |
| CORINE Land Cover | Spatial Dataset | Land use/land cover inventory | Foundational input for spatial models; used in ASEBIO index calculation [1]. |
| LUCI | Spatial Tool | ES modeling for landscapes | Compared against InVEST and NC-Model for assessing urban ES [29]. |

Addressing Uncertainty with Model Ensembles

A significant challenge in data-driven modeling is the "certainty gap"—the lack of knowledge about model accuracy. Different models can produce variable projections, making it difficult for practitioners to know which to trust [27]. To address this, researchers are increasingly using model ensembles, which combine the outputs of multiple models.

Global studies have demonstrated that ensembles of models for ES like water supply, carbon storage, and fuelwood production are consistently 2% to 14% more accurate than an individual model chosen at random [27]. This approach distributes accuracy more equitably across the globe, ensuring that data-poor regions do not suffer an "accuracy penalty" [27]. Furthermore, the variation among models in an ensemble can itself serve as a useful indicator of uncertainty when validation data are absent [27].
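The ensemble idea can be illustrated with a per-location median across models, using the spread among models as an uncertainty indicator. This is a minimal sketch: the model names and predictions are hypothetical, and the median is an assumed combination rule, since the text above does not specify how the cited ensembles were built.

```python
import statistics


def ensemble_summary(model_outputs):
    """Per-location ensemble median and spread across models.

    model_outputs: dict mapping model name -> list of predictions,
                   one per location (same length and order for all models)
    Returns (medians, spreads), where spread is the population standard
    deviation across models at each location.
    """
    per_location = list(zip(*model_outputs.values()))
    medians = [statistics.median(values) for values in per_location]
    spreads = [statistics.pstdev(values) for values in per_location]
    return medians, spreads


# Hypothetical predictions for two locations (e.g., carbon storage, arbitrary units).
model_outputs = {
    "model_a": [10.0, 50.0],
    "model_b": [12.0, 20.0],
    "model_c": [11.0, 80.0],
}

medians, spreads = ensemble_summary(model_outputs)
for i, (median, spread) in enumerate(zip(medians, spreads)):
    print(f"location {i}: median={median:.1f}, spread={spread:.2f}")
```

The models broadly agree at the first location and diverge at the second, so the second estimate would be treated with more caution even without validation data.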

Methodological Protocols for Integrated Assessment

The following workflow details the protocol for creating an integrated assessment like the ASEBIO index, which combines data-driven and stakeholder elements.

Step 1: Data Acquisition and Spatial Modeling. This foundational step involves gathering input data, such as land cover maps (e.g., CORINE) and remote sensing imagery. Subsequently, spatial models like InVEST or SWAT are run to calculate biophysical indicators for multiple ecosystem services across the study area and over a defined time period [1].

Step 2: Stakeholder Engagement and Weighting. A diverse group of stakeholders is selected to participate in surveys and structured decision-making processes like the Analytical Hierarchy Process (AHP). The AHP is used to elicit stakeholders' preferences and derive relative weights for each ecosystem service, reflecting their perceived importance [1] [26].

Step 3: Multi-Criteria Synthesis. The modeled ES indicators from Step 1 and the stakeholder-derived weights from Step 2 are integrated using a Multi-Criteria Evaluation (MCE) method. This synthesis produces a composite index, such as ASEBIO, which represents the integrated ES potential of the landscape [1].

Step 4: Validation and Uncertainty Analysis. The final composite index is validated against independent data or, as in the Portuguese study, directly compared against raw stakeholder perceptions to identify mismatches and quantify uncertainty. This step is crucial for interpreting the results and understanding the limitations of the assessment [1] [27].

The comparison between data-driven models and stakeholder-based evaluations reveals that neither approach is superior; rather, they offer complementary insights. Data-driven models provide objective, spatially explicit baselines and can track changes over time, but may overlook services that are culturally important or difficult to quantify. Stakeholder perceptions capture the lived experience and value systems of people, but can be influenced by cognitive biases and may over- or underestimate actual biophysical potential [1] [26].

The choice between methodologies should be guided by the project's goal:

- Use data-driven models for objective mapping, monitoring temporal trends, and identifying biophysical trade-offs between ES.

- Use stakeholder-based evaluations to understand perceived value, prioritize actions for social license, and resolve conflicts in management.

- Integrate both approaches, as demonstrated by the ASEBIO index, when the objective is to create inclusive, legitimate, and comprehensive management plans that are robust both scientifically and socially.

The emerging practice of using model ensembles and openly sharing ES data helps bridge the "capacity gap" and "certainty gap," particularly in data-poor regions [27]. By understanding the strengths and limitations of each paradigm, researchers and practitioners can more effectively leverage these tools to support sustainable ecosystem management and policy.

In the field of ecosystem services (ES) evaluation and environmental decision-making, a fundamental tension exists between quantitative, model-driven approaches and qualitative, stakeholder-based assessments. While spatial models provide replicable, data-rich insights into ES potential, they often fail to capture the nuanced perceptions and values that stakeholders assign to these services [1]. This methodological divide presents a critical challenge for researchers, scientists, and environmental professionals who must integrate technical data with human perspectives to formulate effective, sustainable policies. The pursuit of a purely objective, data-driven evaluation often overlooks the complex socio-ecological contexts that ultimately determine policy acceptance and implementation success.

Two methodological approaches have emerged as central to bridging this divide: traditional Participatory Workshops and the structured multi-criteria decision-making framework of the Analytical Hierarchy Process (AHP). Participatory Workshops offer a platform for inclusive dialogue and knowledge sharing, directly capturing stakeholder values and perceptions. Conversely, AHP provides a mathematical foundation for integrating diverse perspectives through pairwise comparisons, transforming subjective judgments into quantifiable priorities [30] [31]. A recent national-scale study in Portugal highlighted the critical nature of this integration, revealing a significant 32.8% average overestimation of ecosystem service potential by stakeholders compared to spatial models, with particularly stark contrasts in drought regulation and erosion prevention [1]. This demonstrates the tangible consequences of methodological selection on research outcomes and subsequent decision-making.

Methodological Comparison: Participatory Workshops versus AHP

The following table provides a structured comparison of these two stakeholder engagement methods, highlighting their distinct characteristics, applications, and limitations.

Table 1: Comparative Analysis of Participatory Workshops and the Analytical Hierarchy Process

| Feature | Participatory Workshops | Analytical Hierarchy Process (AHP) |
|---|---|---|
| Core Approach | Collaborative, open-ended discussion and knowledge sharing [32]. | Structured, mathematical pairwise comparisons of criteria and alternatives [30] [33]. |
| Primary Strength | Fosters inclusive dialogue, builds relationships, and captures rich qualitative context [32]. | Provides quantitative prioritization, reduces cognitive bias, and ensures decision traceability [30] [31]. |
| Key Limitation | Susceptible to dominance by vocal stakeholders; outcomes can be subjective and difficult to quantify [32]. | Can be perceived as complex; relies on the consistent judgment of participants during comparisons [30]. |
| Output | Qualitative insights, shared understanding, and identified areas of agreement or conflict [32]. | Quantified weightings for criteria and alternatives, and a ranked list of priorities [30] [1]. |
| Ideal Application Context | Exploring complex problems, building stakeholder consensus, and scoping issues in early project phases [32]. | Selecting and prioritizing projects, evaluating policy alternatives, and integrating diverse expert opinions [30] [1]. |
| Handling of Conflict | Through facilitated discussion and negotiation [32]. | Through mathematical aggregation of judgments and consistency measurement [31]. |

Workflow and Process

The fundamental difference between these methods becomes evident in their operational workflows. The diagram below illustrates the typical stages involved in each process, from initial planning to final outcome.

Experimental Protocols and Case Study Applications

Protocol for a Hybrid AHP-Participatory Study

The following workflow details a specific protocol for integrating AHP with participatory elements, as demonstrated in a national ecosystem services assessment [1]. This hybrid approach is designed to leverage the strengths of both methods.

Case Study: The Portugal National Ecosystem Services Assessment

A seminal 2024 study in Portugal provides a robust experimental framework for comparing model-driven and stakeholder-based evaluations [1]. The study calculated eight ecosystem service indicators (e.g., climate regulation, water purification, habitat quality) for mainland Portugal from 1990 to 2018 using spatial modeling based on CORINE Land Cover data. Concurrently, stakeholders representing various sectors were engaged to assign relative importance weights to these ES indicators using the AHP method. These weights were used to create a novel composite index—the ASEBIO (Assessment of Ecosystem Services and Biodiversity) index.

The key experimental findings from this comparative assessment are summarized in the table below.

Table 2: Key Experimental Results from the Portugal ES Assessment Case Study [1]

| Experimental Metric | Finding | Implication |
|---|---|---|
| Average Discrepancy | Stakeholder perceptions overestimated ES potential by 32.8% on average compared to spatial models. | Highlights a significant optimism bias in human perception that must be accounted for in policy. |
| Highest Contrast ES | Drought regulation and erosion prevention showed the largest model-perception gaps. | Suggests stakeholders may undervalue the complexity of modeling these specific services. |
| Most Aligned ES | Water purification, food production, and recreation were most closely aligned. | Indicates services with more direct human experience are better understood by stakeholders. |
| Primary Contributors | Water purification and recreation were the largest contributors to the final ASEBIO index. | Demonstrates how AHP quantifies stakeholder values, shifting focus from pure biophysical output. |
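
A model-perception gap of the kind reported above can be computed in several ways, and the source does not state the exact formula behind the 32.8% figure. The sketch below assumes one plausible definition, the mean of per-service relative differences, with both scores on the same scale. The function names and all scores are hypothetical and are not data from the Portuguese study.

```python
def percent_overestimation(perceived, modeled):
    """Relative difference in percent: 100 * (perceived - modeled) / modeled."""
    if modeled == 0:
        raise ValueError("modeled value must be non-zero")
    return 100.0 * (perceived - modeled) / modeled


def mean_overestimation(perceived, modeled):
    """Per-service gaps and their unweighted mean, over services present in both."""
    services = sorted(set(perceived) & set(modeled))
    if not services:
        raise ValueError("no services in common")
    gaps = {s: percent_overestimation(perceived[s], modeled[s]) for s in services}
    return gaps, sum(gaps.values()) / len(gaps)


# Hypothetical scores on a common 0-1 scale (illustrative only).
modeled_scores = {"drought_regulation": 0.4, "water_purification": 0.5}
perceived_scores = {"drought_regulation": 0.6, "water_purification": 0.6}

gaps, average_gap = mean_overestimation(perceived_scores, modeled_scores)
for service, gap in gaps.items():
    print(f"{service}: {gap:+.1f}%")
print(f"mean: {average_gap:+.1f}%")
```

A positive value means stakeholders rated the service higher than the model did. Other definitions, such as differences in absolute score points, would give different numbers, so the definition should be reported alongside any such figure.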

The Scientist's Toolkit: Essential Reagents and Materials

Successful implementation of these stakeholder engagement methods requires a suite of conceptual and practical tools. The following table outlines the key "research reagents" essential for conducting robust Participatory Workshop and AHP studies.

Table 3: Essential Research Reagents and Materials for Stakeholder Engagement Studies

| Item/Tool | Function/Description | Application Context |
|---|---|---|
| Saaty's 1-9 Comparison Scale | A fundamental scale for pairwise comparisons, converting subjective preferences into numerical values (1 = equal importance, 9 = extreme importance) [30]. | Core to AHP for building pairwise comparison matrices and deriving weights. |
| Pairwise Comparison Matrix (PCM) | A positive, reciprocal matrix (a_ij = 1/a_ji) where elements represent the relative importance of one item over another [31]. | Used in AHP to structure evaluator judgments. The core input for mathematical prioritization. |
| Consistency Ratio (CR) | A metric to measure the logical coherence of judgments in a PCM. A CR < 0.1 is generally considered acceptable, indicating that the evaluator's judgments are reasonably consistent with one another [31]. | A critical quality control check in AHP to identify and exclude inconsistent evaluations from analysis. |
| Stakeholder Engagement Assessment Matrix | A framework (often a table) to map stakeholders according to their current vs. desired level of engagement (e.g., Unaware, Resistant, Neutral, Supportive, Leading) [32]. | Used in participatory approaches for planning and monitoring engagement strategies, though it can be oversimplified [32]. |
| Interest/Influence/Impact (III) Mapping | A multi-criteria stakeholder analysis method that assesses stakeholders based on their interest in, influence over, and impact from a project [32]. | A robust alternative to simple engagement matrices for prioritizing stakeholders in participatory processes. |
| Sentiment Analysis Tools | AI-driven software that automatically analyzes stakeholder comments, emails, and transcripts to determine positive, negative, or neutral sentiment [32]. | Provides a quantitative measure of stakeholder perception and support, complementing qualitative workshop data. |
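
To make the PCM and CR entries above concrete, the following is a minimal sketch of how weights and a consistency ratio can be derived from a single pairwise comparison matrix. It assumes the principal-eigenvector method, approximated here by power iteration, and uses Saaty's commonly tabulated random index values. The function names and the example matrix are hypothetical, and the sketch does not reproduce the procedure of any cited study.

```python
# Saaty's commonly tabulated random index (RI) values by matrix size.
RANDOM_INDEX = {1: 0.0, 2: 0.0, 3: 0.58, 4: 0.90, 5: 1.12,
                6: 1.24, 7: 1.32, 8: 1.41, 9: 1.45, 10: 1.49}


def _mat_vec(matrix, vector):
    """Multiply a square matrix (list of rows) by a vector."""
    return [sum(a * v for a, v in zip(row, vector)) for row in matrix]


def ahp_weights(pcm, iterations=100):
    """Approximate the principal eigenvector of a PCM by power iteration.

    Returns (weights summing to 1, estimate of the principal eigenvalue).
    """
    n = len(pcm)
    weights = [1.0 / n] * n
    for _ in range(iterations):
        product = _mat_vec(pcm, weights)
        total = sum(product)
        weights = [p / total for p in product]
    product = _mat_vec(pcm, weights)
    lambda_max = sum(p / w for p, w in zip(product, weights)) / n
    return weights, lambda_max


def consistency_ratio(lambda_max, n):
    """CR = CI / RI, where CI = (lambda_max - n) / (n - 1)."""
    if n < 3:
        return 0.0  # 1x1 and 2x2 reciprocal matrices are always consistent
    consistency_index = (lambda_max - n) / (n - 1)
    return consistency_index / RANDOM_INDEX[n]


# Hypothetical judgments on Saaty's 1-9 scale for three services:
# service A vs B = 3, A vs C = 5, B vs C = 3 (reciprocals fill the lower half).
pcm = [
    [1.0, 3.0, 5.0],
    [1 / 3, 1.0, 3.0],
    [1 / 5, 1 / 3, 1.0],
]

weights, lambda_max = ahp_weights(pcm)
cr = consistency_ratio(lambda_max, len(pcm))
print([round(w, 3) for w in weights], round(cr, 3))
```

In a group setting, each stakeholder's matrix would typically be checked against the CR threshold before the judgments are aggregated. How the aggregation is done varies between studies and is not specified in the source.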

The comparative analysis reveals that Participatory Workshops and the Analytical Hierarchy Process are not mutually exclusive but are, in fact, highly complementary. The Portuguese case study demonstrates that an integrated strategy, which combines scientific modeling with structured stakeholder knowledge, is essential for effective ecosystem assessments and land-use planning [1]. While models provide critical, data-driven baselines, AHP offers a rigorous mechanism to incorporate human values and preferences into the final decision-making matrix. Participatory workshops remain vital for fostering dialogue, building trust, and providing the qualitative context that purely numerical approaches lack.

For researchers and scientists, the key takeaway is that the choice between a data-driven and a stakeholder-based evaluation is a false dichotomy. The most robust and actionable outcomes emerge from methodologies that strategically blend both paradigms, using AHP to quantitatively structure stakeholder input and participatory techniques to ensure the process remains inclusive and contextually grounded. This hybrid approach promises to bridge the perception gaps identified in research and pave the way for more balanced, legitimate, and ultimately successful environmental and development decisions.

The Role of Land Cover Data (e.g., CORINE) in Spatial Modeling

Spatial modeling of ecosystem services (ES) is fundamental to environmental monitoring, climate change assessment, and sustainable land use planning [34]. The CORINE (Coordination of Information on the Environment) Land Cover (CLC) program, a flagship component of the European Union's Copernicus Land Monitoring Service, has provided standardized, pan-European land cover inventories for over three decades [34]. With 44 thematic classes and regular updates every six years, CLC offers a consistent dataset for transnational environmental analysis [34]. However, a critical scholarly debate examines the comparative effectiveness of purely data-driven spatial models versus approaches that integrate stakeholder-based evaluations. This guide explores the role of CLC within this context, objectively comparing its performance with other data sources and methodologies to illuminate its strengths and limitations in spatial modeling applications.

CORINE Land Cover: Technical Specifications and Applications

The CORINE Land Cover program was initiated in the 1980s to address the challenge of inconsistent and incomparable national land cover maps across European borders [34]. Its primary objective was to enable continental-scale environmental monitoring through a harmonized methodology.

Table 1: Key Technical Specifications of CORINE Land Cover

| Feature | Specification |
|---|---|
| Thematic Classes | 44 classes, ranging from broad forested areas to individual vineyards [34] |
| Update Frequency | Every 6 years (most recent update in 2018) [34] |
| Spatial Coverage | Pan-European [34] |
| Time Series | Available for 1990, 2000, 2006, 2012, and 2018 [35] [7] |
| Primary Applications | Environmental monitoring, land use planning, climate change assessments, emergency management [34] |

CLC data serves as a critical input for spatial models predicting various ecosystem phenomena. For instance, one study of Thessaloniki, Greece, used CLC alongside Landsat 8 time series data and vegetation indices to analyze the correlation between land cover types and Land Surface Temperature (LST) trends [36]. The research identified a gradual increase in average surface temperature, particularly in 2022 and 2023, with mean annual LST values reaching 26.07°C and 27.09°C, respectively [36]. This demonstrates CLC's utility in quantifying and modeling urban climate dynamics.

Comparative Performance: Data-Driven Models vs. Stakeholder-Based Evaluations

A central thesis in contemporary ecosystem services research concerns the relative value of data-driven spatial models versus evaluations based on local stakeholder knowledge. A national-scale study in Portugal offers compelling experimental data for this comparison, revealing significant disparities between these approaches.

The research calculated eight multi-temporal ES indicators using a spatial modeling approach based on CORINE Land Cover and other data, integrating them into a novel ASEBIO index (Assessment of Ecosystem Services and Biodiversity) [7]. This data-driven index was then compared against stakeholders' perceptions of ES potential for the year 2018 [7].

Table 2: Modeled vs. Perceived Ecosystem Service Potential in Portugal [7]

| Ecosystem Service | Stakeholder Overestimation (Average) |
|---|---|
| All Selected ES | 32.8% higher on average |
| Drought Regulation | Highest contrast (specific value not provided) |
| Erosion Prevention | Highest contrast (specific value not provided) |
| Water Purification | Most closely aligned |
| Food Production | Most closely aligned |
| Recreation | Most closely aligned |

| Recreation | Most closely aligned |