Bridging the Gap: A Comprehensive Analysis of Ecosystem Service Models and Stakeholder Perceptions


Abstract

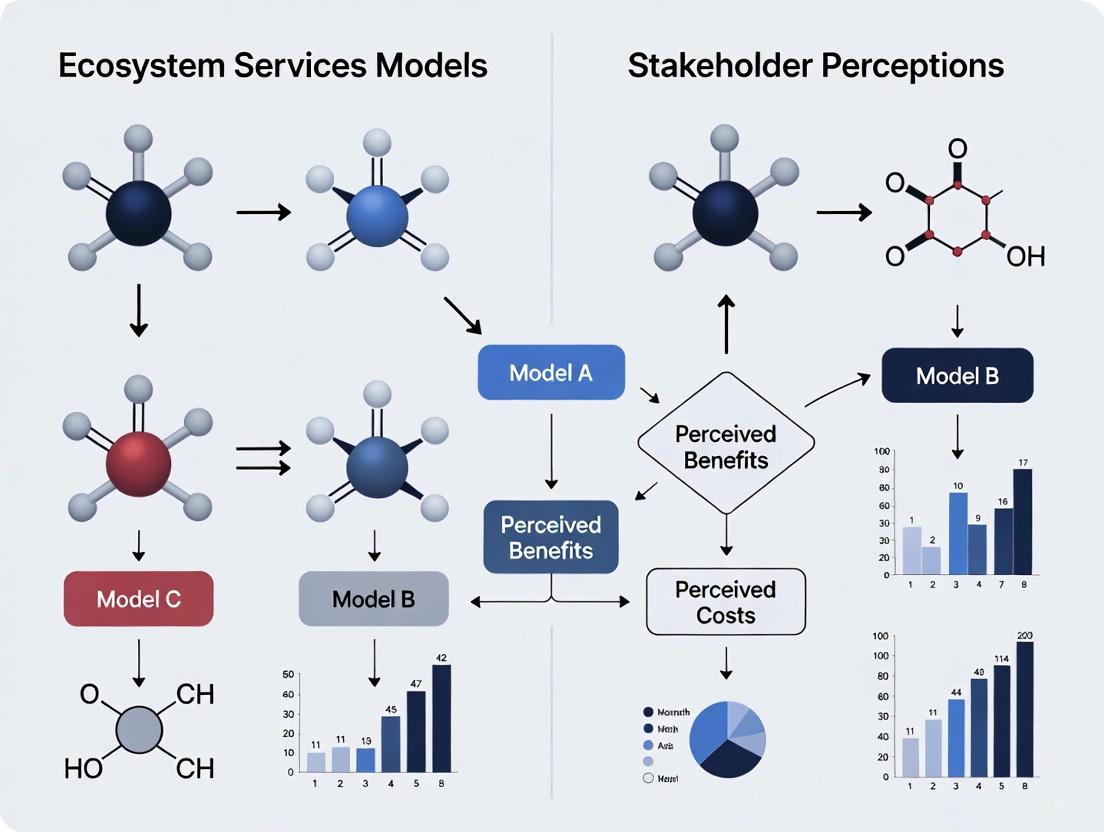

This article synthesizes current research comparing data-driven ecosystem service (ES) models with stakeholder perceptions. It explores the theoretical foundations of their divergence, examines methods for quantifying both model outputs and perceptions, addresses key challenges such as validation and uncertainty, and presents empirical evidence from comparative studies. These studies reveal a significant mismatch that reflects differing knowledge systems and priorities: in a national-scale assessment of mainland Portugal, stakeholders rated ES potential 32.8% higher than models on average. The article concludes that integrative frameworks, which combine model ensembles with participatory engagement, are essential for credible, salient, and legitimate environmental decision-making. This synthesis is intended for researchers and practitioners working to improve ecosystem management and policy.

The Science and the Social: Unpacking the Foundation of Ecosystem Service Assessments

Ecosystem Services (ES) are crucial for human well-being and the global economy. The mapping and assessment of these services are imperative for sustainable ecosystem management and informed policy decisions, such as those related to the United Nations Sustainable Development Goals [1]. However, two distinct methodologies dominate ES research: data-driven spatial modeling and assessments based on stakeholder perceptions. A growing body of evidence reveals a significant divide between the outputs of scientific models and the values and perceptions held by local stakeholders [1] [2]. Understanding this divide is critical for researchers and professionals aiming to design effective environmental management and restoration strategies, as failing to consider plural values can create conflicts and result in policy outcomes lacking stakeholder support [2] [3]. These Application Notes provide a structured comparison of these approaches, detailed experimental protocols, and essential tools for conducting comparative research.

Quantitative Data Comparison: Models vs. Perception

A 2024 national-scale study in Portugal provided a direct quantitative comparison between model-based ES potential and stakeholders' perceptions, revealing systematic disparities [1]. The research developed a composite index (ASEBIO) from eight modeled ES indicators and compared it against a matrix-based methodology reflecting stakeholder perceptions for the year 2018.

Table 1: Quantitative Disparities Between Modeled and Perceived Ecosystem Service Potential [1]

| Ecosystem Service Indicator | Average Contrast (Stakeholder Perception vs. Model) | Alignment Category |
|---|---|---|
| Drought Regulation | Highest contrast | Low alignment |
| Erosion Prevention | Highest contrast | Low alignment |
| Climate Regulation | High contrast | Low alignment |
| Habitat Quality | High contrast | Low alignment |
| Pollination | Moderate contrast | Moderate alignment |
| Food Production | Low contrast | High alignment |
| Recreation | Low contrast | High alignment |
| Water Purification | Low contrast | High alignment |
| Overall Average | Stakeholder estimates 32.8% higher | - |

The results show that stakeholders rated the potential of every selected service higher than the model outputs did, by 32.8% on average [1]. The largest contrasts were observed in regulating services such as drought regulation and erosion prevention.
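To make the headline figure concrete, the sketch below shows one way a per-service and overall contrast could be computed, assuming both assessments are expressed on a shared 0 to 5 scale. The scores are hypothetical placeholders, not data from [1], and defining contrast as (perceived minus modelled) divided by modelled is an assumption; the study's exact formula may differ.

```python
from statistics import mean

# Hypothetical mean scores per service on a shared 0-5 scale (NOT values from [1]).
model_scores = {
    "drought_regulation": 1.8,
    "erosion_prevention": 2.0,
    "water_purification": 3.5,
    "food_production": 3.0,
    "recreation": 2.9,
}
perceived_scores = {
    "drought_regulation": 3.1,
    "erosion_prevention": 3.2,
    "water_purification": 3.8,
    "food_production": 3.3,
    "recreation": 3.1,
}


def percent_contrast(perceived: float, modelled: float) -> float:
    """Relative difference of the perceived score over the modelled score, in percent."""
    if modelled == 0:
        raise ValueError("modelled score must be non-zero")
    return (perceived - modelled) / modelled * 100.0


def contrast_table(perceived: dict, modelled: dict) -> list:
    """Return (service, contrast %) pairs sorted from largest to smallest contrast."""
    rows = [(s, percent_contrast(perceived[s], modelled[s])) for s in modelled]
    return sorted(rows, key=lambda row: row[1], reverse=True)


rows = contrast_table(perceived_scores, model_scores)
for service, pct in rows:
    print(f"{service:<20} {pct:+6.1f}%")
print(f"{'overall average':<20} {mean(p for _, p in rows):+6.1f}%")
```

Averaging per-service percentages (as here) and comparing summed scores generally give different overall figures, so a comparison should state which one it reports.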

Experimental Protocols for Comparative Research

Protocol 1: Spatial Modeling of Ecosystem Services

This protocol outlines the methodology for calculating multi-temporal ES indicators and a composite index, as applied in the Portuguese case study [1].

- Objective: To quantify and map the spatiotemporal changes of multiple ecosystem services using a spatial modeling approach.

- Workflow:

  - Land Cover Data Collection: Acquire multi-temporal land cover data (e.g., CORINE Land Cover) for the reference years (e.g., 1990, 2000, 2006, 2012, 2018) [1].

  - ES Indicator Selection and Modeling: Select relevant ES indicators. Calculate each indicator using spatial modeling tools (e.g., the InVEST software suite) and ancillary data. The Portuguese study modeled eight indicators: climate regulation, water purification, habitat quality, drought regulation, recreation, food production, erosion prevention, and pollination [1].

  - Index Composition (ASEBIO): Integrate the individual ES indicators into a novel composite index.

    - Multi-Criteria Evaluation: Use a method like the Analytical Hierarchy Process (AHP) to define weights for each ES indicator. These weights should be defined by stakeholders to reflect the relative importance of each service's supply potential [1].

    - Spatial Calculation: Compute the final ASEBIO index value for each land unit based on the weighted ES indicators.

- Output: A series of maps and statistical data depicting the spatiotemporal changes of individual ES and the composite index from 1990 to 2018.
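The index-composition step can be sketched as a weighted linear combination of normalized indicators. This is a minimal illustration, assuming min-max normalization to the 0 to 1 range and weights that already sum to 1 (for example, from AHP); the indicator values, weights, and land-unit names are hypothetical, and the actual ASEBIO computation in [1] may use a different normalization or aggregation rule.

```python
# Hypothetical raw indicator values per land unit (units differ per indicator).
raw = {
    "unit_A": {"climate_regulation": 120.0, "water_purification": 0.8, "recreation": 15.0},
    "unit_B": {"climate_regulation": 60.0, "water_purification": 0.5, "recreation": 40.0},
    "unit_C": {"climate_regulation": 90.0, "water_purification": 0.2, "recreation": 25.0},
}
# Hypothetical stakeholder-derived weights (e.g., from AHP); they must sum to 1.
weights = {"climate_regulation": 0.5, "water_purification": 0.3, "recreation": 0.2}


def min_max_normalize(values: dict) -> dict:
    """Rescale a {unit: value} mapping to the 0-1 range."""
    lo, hi = min(values.values()), max(values.values())
    span = hi - lo
    return {k: (v - lo) / span if span else 0.0 for k, v in values.items()}


def composite_index(raw: dict, weights: dict) -> dict:
    """Weighted sum of min-max normalized indicators for each land unit."""
    if abs(sum(weights.values()) - 1.0) > 1e-9:
        raise ValueError("weights must sum to 1")
    normalized = {
        ind: min_max_normalize({unit: vals[ind] for unit, vals in raw.items()})
        for ind in weights
    }
    return {
        unit: sum(weights[ind] * normalized[ind][unit] for ind in weights)
        for unit in raw
    }


for unit, score in composite_index(raw, weights).items():
    print(unit, round(score, 3))
```

Min-max normalization makes indicators with different units comparable, but it is sensitive to extreme values; a multi-year series would need a fixed reference range so that index values remain comparable across years.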

Protocol 2: Eliciting Stakeholder Perceptions via Deliberative Valuation

This protocol is based on the Deliberative Multicriteria Evaluation (DMCE) method used in studies in Mexico and Massachusetts, which formalizes community involvement and helps bridge the gap between individual and shared social values [2] [3].

- Objective: To identify which ES are perceived by different stakeholder groups and elicit their shared social values through a structured deliberative process.

- Workflow:

  - Stakeholder Identification and Recruitment: Identify and recruit stakeholders from various sectors of society (e.g., local residents, farmers, government officials, NGO representatives) ensuring representation of different professions and backgrounds [2] [3].

  - Deliberative Workshops: Conduct virtual or in-person workshops.

    - Individual Surveys: Begin with individual surveys to capture pre-deliberation values based on personal experience and knowledge [3].

    - Group Deliberation: Facilitate in-depth discussions among participants about ES, allowing for social learning and challenging of individual values. Record and transcribe these deliberations for qualitative analysis [3].

    - Swing Weighting Method: Employ the "swing" weighting method within the DMCE framework. This method allows stakeholders to evaluate and assign importance to different ES, which may have different measurement units, in relation to each other [3].

- Data Analysis:

  - Quantitative Analysis: Analyze individual survey results and the group's collective preferences (shared social values) derived from the swing weighting [3].

  - Qualitative Analysis: Perform applied thematic and co-occurrence analysis on the deliberation transcripts to identify key themes and reasoning behind the values [3].

- Output: Quantitative data on ES prioritization (both individual and group-level) and rich qualitative data explaining the reasoning behind the valuations.
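The swing-weighting step can be illustrated with the common conversion from swing points to weights: the most important worst-to-best swing is anchored at 100 points, other swings are rated relative to it, and weights are points divided by their total. The services, points, and the simple averaging of individual weights are hypothetical; in DMCE [3] the shared group values emerge through deliberation rather than arithmetic averaging, so the mean here only serves as a pre-deliberation reference.

```python
from statistics import mean


def swing_weights(points: dict) -> dict:
    """Convert swing points (most important swing = 100) into weights summing to 1."""
    if max(points.values()) != 100:
        raise ValueError("the most important swing should be anchored at 100 points")
    if any(p < 0 or p > 100 for p in points.values()):
        raise ValueError("swing points must lie between 0 and 100")
    total = sum(points.values())
    return {service: p / total for service, p in points.items()}


# Hypothetical individual survey responses (pre-deliberation).
individual_points = [
    {"water_supply": 100, "food": 80, "recreation": 20},
    {"water_supply": 60, "food": 100, "recreation": 40},
]
# Hypothetical points agreed by the group after deliberation.
group_points = {"water_supply": 100, "food": 70, "recreation": 30}

individual_weights = [swing_weights(p) for p in individual_points]
mean_individual = {
    s: mean(w[s] for w in individual_weights) for s in group_points
}
group_weights = swing_weights(group_points)

for s in group_points:
    print(f"{s:<14} individual mean={mean_individual[s]:.2f}  group={group_weights[s]:.2f}")
```

Comparing the two columns is one simple way to show how deliberation shifted priorities, complementing the qualitative analysis of the transcripts.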

The Scientist's Toolkit: Key Research Reagents & Solutions

Table 2: Essential Materials and Tools for ES Comparison Research

| Item Name | Function/Benefit |
|---|---|
| InVEST (Integrated Valuation of Ecosystem Services and Tradeoffs) | A suite of spatial models used to map and value ecosystem services, widely used for planning and research applications [1]. |
| CORINE Land Cover (Coordination of Information on the Environment) | Provides consistent multi-temporal land cover maps for European countries, essential for analyzing land use changes and their impact on ES [1]. |
| Analytical Hierarchy Process (AHP) | A multi-criteria evaluation method used to define the relative weights of different ES indicators based on stakeholder input for composite index creation [1]. |
| Deliberative Multicriteria Evaluation (DMCE) | A framework that combines structured decision-making with deliberation to elicit shared social values for ES, incorporating local knowledge [3]. |
| "Swing" Weighting Method | A technique used within DMCE that is intuitive for participants and allows for the evaluation of ES with different measurement units [3]. |
| APCA Contrast Calculator (Accessible Perceptual Contrast Algorithm) | A next-generation tool for ensuring color contrast accessibility in data visualizations, critical for creating inclusive charts and graphs for publications and presentations [4]. |

Discussion & Integration Strategies

The quantitative and methodological disparities highlighted above underscore a critical challenge in ES research. A mismatch of this size, with stakeholder estimates 32.8% higher than model outputs on average, indicates that model-based assessments and human perception capture different aspects of reality [1]. Models are grounded in biophysical data but can overlook socio-cultural dimensions, while perceptions are shaped by personal experience, cultural values, and collective discourse, potentially leading to overestimations or differing priorities [1] [2].

A key unsolved issue in ES modeling is the definition of "service sheds"—the appropriate spatial and temporal context for quantifying a service—which, if not properly accounted for, can lead to misleading estimates [5]. Furthermore, the validation of ES models remains a largely overlooked step, raising questions about the credibility of their outcomes and the need for frameworks that include validation against raw field or sensing data [6].

To bridge this divide, integrative strategies are essential. Combining the DMCE protocol with the spatial modeling protocol allows researchers to produce ES assessments that are both scientifically robust and socially legitimate. Presenting results both quantitatively and qualitatively, as outlined in the protocols, helps reduce the disconnect between researchers and policymakers by providing more useful and tangible information for decision-making [3]. This integrated approach helps ensure that both data-driven models and human perspectives are considered, supporting more balanced, inclusive, and effective ecosystem management and policy.

The Critical Role of Integrating Knowledge Systems in ES Management

Ecosystem Services (ES) management has traditionally relied heavily on quantitative, model-driven assessments. However, a growing body of evidence reveals that disconnects between scientific models and the knowledge and priorities of local stakeholders can compromise the effectiveness and sustainability of conservation outcomes [1] [7]. This document outlines application notes and protocols for the systematic integration of diverse knowledge systems—specifically scientific data and Indigenous and Local Knowledge (ILK)—into ES research and management. Grounded in a thesis that compares ES models with stakeholder perceptions, these guidelines are designed to help researchers and practitioners generate more holistic, legitimate, and contextually relevant evidence for decision-making.

Background and Rationale

The Evidence Gap: Models versus Perceptions

Recent research underscores a significant misalignment between model-based ES assessments and stakeholder perceptions. A 2024 national-scale study in Portugal quantified this gap, finding that stakeholders' valuations of ES potential were, on average, 32.8% higher than model-based calculations [1]. The disparity varied by service; while drought regulation and erosion prevention showed the highest contrasts, services like water purification, food production, and recreation were more closely aligned [1]. This mismatch highlights that biophysical models alone are insufficient for capturing the full spectrum of values and benefits that ecosystems provide to people.

The Value of Indigenous and Local Knowledge (ILK)

ILK represents a cumulative body of knowledge, practice, and belief about the relationship of living beings with each other and their environment [8]. Integrating ILK is crucial for several reasons:

- Contextual Understanding: Local communities possess deep, historically rooted knowledge of ecosystem dynamics and functions [8].

- Revealing Divergent Priorities: Studies consistently show that communities grounded in Traditional Ecological Knowledge (TEK) often prioritize tangible provisioning and cultural services (e.g., food, raw materials), whereas experts and models tend to emphasize regulating services (e.g., carbon sequestration, hazard regulation) [7].

- Ethical Imperative and Equity: Including local perspectives is a step toward overcoming power dichotomies and epistemological asymmetry between Western science and other knowledge systems [8].

Table 1: Comparative Analysis of Model-Based and Stakeholder-Perceived ES Potential

| Ecosystem Service | Model-Based Potential | Stakeholder-Perceived Potential | Approximate Disparity |
|---|---|---|---|
| Drought Regulation | Lower | Higher | Highest Contrast |
| Erosion Prevention | Lower | Higher | Highest Contrast |
| Water Purification | High | High | Closely Aligned |
| Food Production | Stable | High | Closely Aligned |
| Recreation | Improved | High | Closely Aligned |
| Overall Average | - | - | +32.8% [1] |

Application Notes: A Framework for Integration

A successful integration process is cyclical and adaptive, ensuring mutual learning and validation. The following framework synthesizes best practices from methodological research [8] [9].

Foundational Principles for Co-Production of Knowledge

- Post-Normal Science Perspective: Acknowledge that ES management operates in contexts characterized by complexity, high stakes, and uncertainty, necessitating an "extended peer community" that includes local stakeholders [8].

- Ethnoecology: Draw from this discipline to revalue cultures and forms of natural resource appropriation [8].

- Iterative Validation: The process must be cyclical, involving constant data validation and agreement-making with communities, rather than a linear, extractive exercise [8].

A Cyclical Integration Workflow

The integration of knowledge systems is not a linear process but a continuous cycle of engagement, analysis, and validation. The following workflow, adapted from methodologies in socio-ecological research, outlines the key phases [8].

Diagram 1: Cyclical Workflow for Knowledge Integration. This process emphasizes reciprocity and adaptive learning between researchers and communities [8].

Experimental Protocols

This section provides detailed, actionable protocols for implementing the key stages of the integration workflow.

Protocol 1: Trust Building and Scoping (Stage 0)

Objective: To establish mutual trust, define common goals, and gain a preliminary understanding of the socio-ecological context.

Steps:

- Initial Contact: Identify and meet with key informants and community leaders to introduce the research objectives.

- Community Meetings: Hold open meetings to discuss the study's purpose, potential benefits, and commitments required from all parties.

- Preliminary Agreements: Collaboratively define the geographical area of study, main themes of interest, and the role of the community in the process.

- Ethical Considerations: Obtain collective and individual informed consent for participation, ensuring transparency about data use and ownership.

Key Outputs: Memorandum of understanding, defined study area, list of key informants.

Protocol 2: Multi-Tool Data Collection (Stage 1)

Objective: To gather rich qualitative and quantitative data on ES from both individual and group perspectives.

Methods should be applied interdependently, with each tool building on the previous one [8].

Table 2: Suite of Methods for Socio-Cultural ES Assessment

| Method | Level of Application | Key Function | Procedure Notes |
|---|---|---|---|
| Semi-Structured Interviews [8] | Individual | Explore individual perceptions, experiences, and relationships with the socio-ecosystem. | Conduct as conversations in homes; use evenly suspended attention and free association. Cover topics: way of life, productive activities, socio-environmental problems. |
| Participatory Mapping [8] | Group | Visualize collective spatial knowledge and strengthen bonds between participants. | Produce maps with local actors to represent their territory. A moment of collective exchange to understand spatial relationships and values. |
| "Walking in the Woods" / Field Transects [8] | Individual/Group | Ground-truth information and elicit knowledge in context. | Walk with community members through different ecosystems, discussing uses, names, and changes in vegetation and landscapes. |
| Structured Priority Surveys [7] | Individual/Group | Quantify perceptions and priorities for different ES across land uses. | Use a two-step design: 1) Assess perception/use level (e.g., 4-point scale). 2) For services with ≥50% recognition, conduct a 100-point allocation task to evaluate relative importance. |
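The two-step design in the last row can be sketched as follows. This assumes that step 1 codes the 4-point scale as 0 (not perceived) to 3 (high) and counts any level above 0 as recognition, and that step 2 asks each respondent to allocate exactly 100 points across the retained services; both codings, the services, and all responses are hypothetical illustrations rather than the instrument used in [7].

```python
from statistics import mean

# Step 1 (hypothetical): perception/use level, 0 = not perceived ... 3 = high.
step1 = [
    {"food": 3, "raw_materials": 2, "carbon_sequestration": 0, "hazard_regulation": 1},
    {"food": 3, "raw_materials": 3, "carbon_sequestration": 0, "hazard_regulation": 0},
    {"food": 2, "raw_materials": 1, "carbon_sequestration": 1, "hazard_regulation": 0},
    {"food": 3, "raw_materials": 0, "carbon_sequestration": 0, "hazard_regulation": 2},
]


def recognized_services(responses: list, threshold: float = 0.5) -> list:
    """Services that at least `threshold` of respondents rate above 0."""
    services = responses[0].keys()
    return [
        s for s in services
        if sum(r[s] > 0 for r in responses) / len(responses) >= threshold
    ]


def mean_allocation(allocations: list) -> dict:
    """Mean points per service; each respondent must allocate exactly 100 points."""
    for a in allocations:
        if sum(a.values()) != 100:
            raise ValueError(f"allocation does not sum to 100: {a}")
    return {s: mean(a[s] for a in allocations) for s in allocations[0]}


retained = recognized_services(step1)
print("retained for step 2:", retained)

# Step 2 (hypothetical): 100-point allocations over the retained services, by group.
community = [{"food": 60, "raw_materials": 30, "hazard_regulation": 10},
             {"food": 50, "raw_materials": 40, "hazard_regulation": 10}]
experts = [{"food": 30, "raw_materials": 20, "hazard_regulation": 50}]
print("community:", mean_allocation(community))
print("experts:  ", mean_allocation(experts))
```

Filtering before the allocation task keeps respondents from spreading points over services they do not recognize, which would otherwise dilute the relative-importance scores.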

Protocol 3: Data Systematization and Analysis (Stage 2)

Objective: To synthesize and analyze the collected data, integrating qualitative narratives with quantitative model outputs.

Steps:

- Qualitative Data Processing: Transcribe interviews and field notes. Use coding techniques (e.g., thematic analysis) to identify key ES, values, and concerns.

- Quantitative Data Analysis: Analyze survey data (e.g., point allocations) to produce descriptive statistics and compare priorities between stakeholder groups (e.g., community vs. experts) and land uses.

- Spatial Data Integration: Georeference participatory maps and integrate them with scientific spatial data (e.g., land cover maps, InVEST model outputs) in a GIS environment.

- Comparative Analysis: Juxtapose the ILK-derived ES priorities and values with the outputs from biophysical and spatial ES models to identify alignments, trade-offs, and gaps.
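For the qualitative processing step, a simple code frequency and co-occurrence count can help surface themes that tend to be discussed together. The sketch below assumes each transcript segment has already been coded with a set of theme labels; the segments and code names are hypothetical.

```python
from collections import Counter
from itertools import combinations

# Hypothetical coded segments: each set holds the theme codes applied to one segment.
segments = [
    {"food", "livelihood", "land_tenure"},
    {"food", "livelihood"},
    {"flooding", "hazard_regulation", "livelihood"},
    {"sacred_sites", "cultural_identity"},
    {"food", "cultural_identity"},
]


def code_frequencies(segments: list) -> Counter:
    """Number of segments in which each code appears."""
    return Counter(code for seg in segments for code in seg)


def co_occurrences(segments: list) -> Counter:
    """Number of segments in which each unordered pair of codes appears together."""
    pairs = Counter()
    for seg in segments:
        # sorted() gives each pair a fixed order, so (a, b) and (b, a) are counted together
        pairs.update(combinations(sorted(seg), 2))
    return pairs


print(code_frequencies(segments).most_common(3))
for (a, b), n in co_occurrences(segments).most_common(3):
    print(f"{a} + {b}: {n}")
```

Frequent pairs (for example, a provisioning service coded alongside livelihood concerns) point to reasoning that can then be juxtaposed with model outputs in the comparative analysis.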

Protocol 4: Validation and Working Agreements (Stage 3)

Objective: To validate the interpreted results with the community and collaboratively define pathways for action.

Steps:

- Validation Workshops: Return to the community to present the systematized results (e.g., through maps, simple graphs, and narratives) and check for accuracy and interpretation.

- Deliberative Dialogue: Facilitate discussions on the implications of the findings, particularly the identified gaps between model outputs and local perceptions.

- Co-Develop Recommendations: Work with stakeholders to translate the integrated knowledge into management recommendations, policy briefs, or community action plans.

- Establish Long-Term Feedback Mechanisms: Create channels for ongoing communication and monitoring.

This section details essential tools and frameworks for conducting integrated ES assessments.

Table 3: Research Reagent Solutions for Integrated ES Assessments

| Tool / Resource | Type | Primary Function | Relevance to Integration |
|---|---|---|---|
| InVEST [1] | Spatial Modelling Suite | Models biophysical and economic production of ES (e.g., carbon storage, erosion control). | Provides the scientific, data-driven baseline for ES supply that can be compared with perceived ES values. |
| Semi-Structured Interview Guide [8] | Qualitative Instrument | Elicits narratives on way of life, resource use, and environmental change. | Captures ILK and contextualizes quantitative data. The cornerstone of socio-cultural assessment. |
| Participatory Mapping Kit [8] | Spatial Tool | Engages stakeholders in producing maps of their territory, resources, and values. | Makes local spatial knowledge explicit, allowing it to be visualized and integrated into GIS. |
| Analytical Hierarchy Process (AHP) [1] | Multi-Criteria Analysis | Structures stakeholder preferences by weighting the relative importance of different ES. | Quantifies and incorporates stakeholder priorities into a composite index (e.g., ASEBIO index [1]). |
| Final Ecosystem Goods and Services (FEGS) Scoping Tool [10] | Classification Framework | Provides a structured process for identifying stakeholders and the ES benefits relevant to them. | Ensures a comprehensive and inclusive scoping of ES, preventing the omission of locally important services. |

Integrated Data Visualization and Decision Support

The ultimate goal of integration is to produce synthesized knowledge that is accessible and useful for decision-makers. Comparative analysis, as conducted in Portugal, can be powerful for highlighting gaps and synergies [1]. The following diagram illustrates a generalized analytical framework for comparing model outputs with stakeholder perceptions.

Diagram 2: Framework for Comparative Analysis of ES Models and Perceptions. This pathway guides the synthesis of quantitative and qualitative knowledge for robust decision-making [1].

In the assessment and management of complex social-ecological systems, such as fisheries or woodland biodiversity, two distinct forms of knowledge are increasingly recognized as essential: data-driven objectivity and contextualized local knowledge. Data-driven objectivity relies on quantitative scientific information generated through formalized processes like monitoring programs, retrospective assessments, and predictive models [11]. Conversely, contextualized local knowledge encompasses the ecological or socioeconomic understanding held by place-based communities and stakeholders, derived from on-the-ground observations, intergenerational experience, and personal perceptions [11]. The integration of these knowledge systems is seen as best practice for decision-making in fields like biodiversity management and ecosystem service assessment, though it presents significant challenges when predictions from these different viewpoints do not align [12]. This document outlines application notes and protocols for researchers aiming to compare and integrate these knowledge forms within ecosystem services and environmental management research.

Application Notes: Comparative Frameworks and Tensions

Table 1: Comparison of Knowledge Types in Environmental Research

| Characteristic | Data-Driven (Scientific) Knowledge | Contextualized Local Knowledge | Institutional Expert Knowledge |
|---|---|---|---|
| Primary Source | Scientific monitoring, sensor data, models, species distribution data [11] | On-the-water observations, intergenerational experience, personal perceptions [11] | Management/research experience, colleague communication, personal knowledge [11] |
| Typical Form | Quantitative, numerical | Qualitative, narrative, experiential | Often a blend of quantitative and qualitative |
| Key Strength | Provides robust hindcasts/forecasts; generalizable [11] | Offers long historical baselines; rich socio-ecological context [11] | Domain-specific understanding; credible for policy legitimization [11] |
| Inherent Challenge | Scale mismatch with management; demands long-term monitoring [11] | May be perceived as anecdotal; difficult to standardize [11] | Judgment adequacy for complex challenges [11] |
| Example in Practice | Species-specific exposure studies; biomass harvest models [11] | Fishers' perceived impacts of climate change [11] | Expert elicitation to rank species' relative vulnerability [11] |

Documented Tensions and Synergies in Research Outcomes

Comparative studies consistently reveal tensions between the outcomes of data-driven models and stakeholder-based evaluations. In a Portuguese study on ecosystem services (ES), a significant mismatch was found between ES potential calculated via spatial models and the potential perceived by stakeholders; stakeholder estimates were, on average, 32.8% higher [1]. The degree of contrast also varied by service type. Discrepancies in climate vulnerability assessments (CVAs) for fisheries have been attributed to several factors, including [11]:

- Varying levels of individual familiarity, expertise, and research efforts across species.

- Divergences in the use of assessment indicators and scoring criteria.

- Data and knowledge gaps related to species' biological traits and fisheries socioeconomics.

- Uncertainties stemming from data quality and knowledge confidence.

Despite these tensions, synergies are evident. In woodland management, stakeholder predictions and biodiversity data models showed general similarities in ranking the performance of different management scenarios, though important differences remained [12]. This underscores that these knowledge systems are not mutually exclusive but can provide complementary insights.

Experimental Protocols for Comparative Research

Protocol 1: Integrated Climate Vulnerability Assessment (CVA)

This protocol is adapted from methodologies used in fisheries social-ecological systems [11].

Objective: To assess and compare climate vulnerability findings derived from scientific data, institutional expert knowledge, and local fishermen's knowledge.

Detailed Methodology:

Phase 1: Desktop Research and Data Compilation (Data-Driven Approach)

- Action: Compile and pre-process existing scientific data. This includes species biological trait data (e.g., maximum body length, thermal safety margin), long-term fisheries monitoring data (biomass, harvest), and oceanographic data from models or remote sensing [11].

- Output: A standardized dataset for input into a pre-defined CVA framework. This may involve calculating quantitative vulnerability scores based on exposure, sensitivity, and adaptive capacity indicators.
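As one illustration of turning trait and exposure data into relative scores, the sketch below uses a deliberately simple, hypothetical scheme: indicators are scored from 1 (low) to 4 (very high), averaged into exposure, sensitivity, and adaptive-capacity components, and combined as exposure + sensitivity - adaptive capacity. This additive rule is an assumption for illustration only; published CVA frameworks combine components in different ways (for example, through a scoring matrix), so the framework actually adopted should define the formula.

```python
from statistics import mean

# Hypothetical indicator scores on a 1 (low) to 4 (very high) scale per species.
species_scores = {
    "species_A": {"exposure": [4, 3, 4], "sensitivity": [3, 3], "adaptive_capacity": [1, 2]},
    "species_B": {"exposure": [2, 2, 3], "sensitivity": [2, 1], "adaptive_capacity": [3, 3]},
    "species_C": {"exposure": [3, 4, 3], "sensitivity": [4, 3], "adaptive_capacity": [2, 2]},
}


def vulnerability(components: dict) -> float:
    """Illustrative additive rule: mean exposure + mean sensitivity - mean adaptive capacity."""
    return (
        mean(components["exposure"])
        + mean(components["sensitivity"])
        - mean(components["adaptive_capacity"])
    )


ranking = sorted(species_scores, key=lambda sp: vulnerability(species_scores[sp]), reverse=True)
for rank, sp in enumerate(ranking, start=1):
    print(rank, sp, round(vulnerability(species_scores[sp]), 2))
```

Keeping the component means alongside the final score makes it easier to trace, in Phase 4, whether a discrepancy with expert or local rankings stems from exposure, sensitivity, or adaptive capacity.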

Phase 2: Expert Knowledge Elicitation

- Action: Conduct structured surveys or workshops with a diverse group of institutional experts (e.g., managers, policy-makers, researchers, NGO representatives).

- Procedure: Use deliberative discussions and repeated scoring techniques [12]. Experts are asked to rank or score species' relative vulnerability based on the same assessment framework used in Phase 1, drawing on their own management or research experience.

- Output: A set of expert-derived vulnerability scores and qualitative justifications.

Phase 3: Local Knowledge Collection

- Action: Perform in-depth, semi-structured interviews with local fishermen and community members.

- Procedure: Use open-ended questions to gather perceptions about observed climate change impacts, changes in species abundance and distribution, and the socioeconomic vulnerability of their livelihoods [11]. This is a bottom-up, participatory approach.

- Output: Qualitative and, where possible, quantifiable data on perceived vulnerabilities.

Phase 4: Data Analysis and Triangulation

- Action: Systematically compare the results from the three phases.

- Procedure:

  - Identify areas of convergence and divergence in vulnerability rankings.

  - Analyze the root causes of discrepancies (e.g., differing indicators, data gaps, perception biases) [11].

  - Integrate findings to create a composite vulnerability assessment that leverages the strengths of each knowledge form.
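Convergence and divergence between the three rankings can be summarized with a rank correlation. The sketch below is a minimal illustration, assuming each source ranks the same species (1 = most vulnerable) without ties, so the closed-form Spearman formula applies; it also lists species whose ranks differ by at least a chosen number of positions. The rankings and the threshold of 2 are hypothetical.

```python
from itertools import combinations

# Hypothetical vulnerability ranks (1 = most vulnerable) from the three knowledge sources.
ranks = {
    "data_driven": {"sp1": 1, "sp2": 2, "sp3": 3, "sp4": 4, "sp5": 5},
    "experts":     {"sp1": 2, "sp2": 1, "sp3": 3, "sp4": 5, "sp5": 4},
    "fishers":     {"sp1": 4, "sp2": 1, "sp3": 2, "sp4": 5, "sp5": 3},
}


def spearman_rho(a: dict, b: dict) -> float:
    """Spearman rank correlation for two untied rankings of the same items."""
    n = len(a)
    d_squared = sum((a[k] - b[k]) ** 2 for k in a)
    return 1 - 6 * d_squared / (n * (n ** 2 - 1))


def divergent_items(a: dict, b: dict, threshold: int = 2) -> list:
    """Items whose rank differs by at least `threshold` positions between sources."""
    return [k for k in a if abs(a[k] - b[k]) >= threshold]


for s1, s2 in combinations(ranks, 2):
    rho = spearman_rho(ranks[s1], ranks[s2])
    print(f"{s1} vs {s2}: rho={rho:.2f}, divergent={divergent_items(ranks[s1], ranks[s2])}")
```

The divergent species are natural starting points for the root-cause analysis in the second step, since a single coefficient can hide large disagreements on a few species.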

Protocol 2: Ecosystem Services (ES) Modeling vs. Perception Assessment

This protocol is designed to compare model-based ES potential with stakeholder perceptions at a national or regional scale [1].

Objective: To quantify and compare ecosystem service potential as generated by spatial models and as perceived by stakeholders.

Detailed Methodology:

Spatial ES Modeling Track

- Action: Calculate multiple multi-temporal ES indicators (e.g., climate regulation, habitat quality, drought regulation, recreation) using a spatial modelling approach like InVEST software [1].

- Input Data: Land cover cartography (e.g., CORINE Land Cover) over a defined time period.

- Index Development: Integrate the individual ES indicators into a novel composite index (e.g., the ASEBIO index - Assessment of Ecosystem Services and Biodiversity) using a multi-criteria evaluation method.

Stakeholder Perception Track

- Action: Elicit stakeholders' perceptions of ES supply potential.

- Stakeholder Recruitment: Engage a diverse group of stakeholders from various sectors of society through workshops.

- Procedure: Use an Analytical Hierarchy Process (AHP) to allow stakeholders to assign weights reflecting the relative importance of each ecosystem service [1]. Additionally, use a matrix-based methodology where stakeholders provide their valuation of ES potential for different land cover classes.
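The AHP weighting step can be sketched as follows: stakeholders fill a reciprocal pairwise comparison matrix on Saaty's 1 to 9 scale, weights are taken from its principal eigenvector (approximated here by power iteration), and a consistency ratio (CR) checks whether the judgments are coherent, with CR below 0.10 as the usual rule of thumb. The three services and the judgments are hypothetical, and this is not necessarily how [1] computed its weights.

```python
# Saaty's random consistency index for matrix sizes 1-10.
RANDOM_INDEX = {1: 0.0, 2: 0.0, 3: 0.58, 4: 0.90, 5: 1.12,
                6: 1.24, 7: 1.32, 8: 1.41, 9: 1.45, 10: 1.49}


def ahp_weights(matrix: list, iterations: int = 100) -> list:
    """Principal-eigenvector weights of a positive reciprocal matrix via power iteration."""
    n = len(matrix)
    w = [1.0 / n] * n
    for _ in range(iterations):
        product = [sum(matrix[i][j] * w[j] for j in range(n)) for i in range(n)]
        total = sum(product)
        w = [x / total for x in product]
    return w


def consistency_ratio(matrix: list, w: list) -> float:
    """Saaty's CR = CI / RI, with CI = (lambda_max - n) / (n - 1)."""
    n = len(matrix)
    if n < 3:
        return 0.0  # 1x1 and 2x2 reciprocal matrices are always consistent
    aw = [sum(matrix[i][j] * w[j] for j in range(n)) for i in range(n)]
    lambda_max = sum(aw[i] / w[i] for i in range(n)) / n
    ci = (lambda_max - n) / (n - 1)
    return ci / RANDOM_INDEX[n]


# Hypothetical judgments; matrix[i][j] = how much more important service i is than service j.
services = ["water_purification", "erosion_prevention", "recreation"]
judgments = [
    [1.0, 3.0, 5.0],
    [1 / 3, 1.0, 3.0],
    [1 / 5, 1 / 3, 1.0],
]
weights = ahp_weights(judgments)
for name, w in zip(services, weights):
    print(f"{name:<20} {w:.3f}")
print(f"CR = {consistency_ratio(judgments, weights):.3f}")
```

If a stakeholder's matrix exceeds the CR threshold, a common practice is to return the inconsistent comparisons to that participant for revision rather than silently averaging them into the group weights.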

Comparison and Analysis

- Action: Quantitatively and spatially compare the model-based composite index (ASEBIO) with the matrix of stakeholder-perceived ES potential for a reference year.

- Output: Calculation of the average percentage difference between model and perception results, and identification of which specific ES show the highest and lowest alignment [1].
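The matrix-based perception track and the spatial comparison can be sketched together: a land-cover-by-service matrix of stakeholder scores is looked up cell by cell over a classified land-cover grid, and the result is differenced against a modelled grid for the same service. The class names, scores, and grids are hypothetical, and plain nested lists stand in for rasters; a production workflow would typically use GIS or raster tooling.

```python
# Hypothetical stakeholder matrix: perceived potential (0-5) of one service per land-cover class.
perceived_matrix = {
    "forest": 4.5,
    "agriculture": 2.0,
    "urban": 0.5,
    "water": 3.0,
}

# Hypothetical 3x3 land-cover grid and a modelled potential grid on the same 0-5 scale.
land_cover = [
    ["forest", "forest", "agriculture"],
    ["forest", "urban", "agriculture"],
    ["water", "urban", "agriculture"],
]
modelled = [
    [3.8, 3.5, 1.2],
    [4.0, 0.3, 1.5],
    [2.1, 0.2, 1.0],
]


def perceived_grid(land_cover: list, matrix: dict) -> list:
    """Map each land-cover cell to the stakeholders' score for that class."""
    return [[matrix[cls] for cls in row] for row in land_cover]


def difference_grid(a: list, b: list) -> list:
    """Cell-wise difference a - b for two equally shaped grids."""
    return [[x - y for x, y in zip(ra, rb)] for ra, rb in zip(a, b)]


perceived = perceived_grid(land_cover, perceived_matrix)
diff = difference_grid(perceived, modelled)
cells = [v for row in diff for v in row]
print("mean perceived - modelled:", round(sum(cells) / len(cells), 2))
print("cells where perception exceeds the model:", sum(v > 0 for v in cells), "of", len(cells))
```

Mapping the difference grid, rather than reporting only its mean, shows where in the landscape the two assessments diverge most, which is useful input for the deliberative discussion of gaps.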

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 2: Key Reagents and Tools for Knowledge Integration Research

| Item/Tool | Function in Research | Application Context |
|---|---|---|
| Spatial Modelling Software (e.g., InVEST) | A suite of models used to map and value ecosystem services based on land/sea use data. Quantifies ES indicators for comparison with perceptions [1]. | Ecosystem Services Assessment |
| Analytical Hierarchy Process (AHP) | A structured multi-criteria decision-making technique. Used to derive stakeholder-defined weights for the importance of different ES or vulnerability indicators [1]. | Stakeholder Elicitation, Index Creation |
| CORINE Land Cover Data | A standardized geographic land cover inventory. Serves as a primary spatial data input for modelling ES potential and understanding land use changes [1]. | Spatial Analysis, ES Modelling |
| Structured Survey Instruments | Standardized questionnaires for expert elicitation. Ensures consistent and comparable data collection on vulnerability scores and rankings across all expert participants [11]. | Expert Knowledge Elicitation |
| Semi-Structured Interview Guides | Flexible interview protocols with open-ended questions. Allows for the collection of rich, contextualized local knowledge while maintaining a focus on core research themes [11]. | Local Knowledge Collection |
| Design Suitability Score (DSS) Framework | An evaluation framework combining AI metrics and stakeholder validation to quantitatively assess the alignment of designs or models with community values [13]. | Model/Design Validation, Co-Design Processes |

Stakeholder heterogeneity represents a critical factor in environmental management and ecosystem service (ES) assessment. The divergence in perceptions, priorities, and knowledge systems between local communities and expert stakeholders significantly influences conservation outcomes, policy relevance, and sustainable management practices [7]. Integrating these varied perspectives presents both a challenge and necessity for developing balanced, inclusive, and effective environmental governance frameworks. This document outlines practical protocols and applications for researching and integrating diverse stakeholder viewpoints within ecosystem services research, providing methodologies to systematically capture, analyze, and reconcile differing perceptions between local communities and experts.

Quantitative Data on Stakeholder Perceptions and Priorities

Table 1: Documented Gaps Between Expert and Community Ecosystem Service Priorities

| Ecosystem Service Category | Community Priority Level | Expert Priority Level | Documented Discrepancy | Study Context |
|---|---|---|---|---|
| Food Production (Provisioning) | High [7] | Moderate | Communities prioritize tangible provisioning services [7] | Rural Laos (Bamboo forest, rice paddy, teak plantation) |
| Raw Materials (Provisioning) | High [7] | Moderate | Communities prioritize tangible provisioning services [7] | Rural Laos (Bamboo forest, rice paddy, teak plantation) |
| Carbon Sequestration (Regulating) | Low [7] | High | Experts emphasize regulating services [7] | Rural Laos (Bamboo forest, rice paddy, teak plantation) |
| Hazard Regulation (Regulating) | Low [7] | High | Experts emphasize regulating services [7] | Rural Laos (Bamboo forest, rice paddy, teak plantation) |
| Biodiversity/Habitat (Habitat) | Low [7] | High | Experts emphasize habitat services [7] | Rural Laos (Bamboo forest, rice paddy, teak plantation) |
| Drought Regulation | Not Specified | Not Specified | Stakeholder estimates 32.8% higher than models on average; drought regulation showed one of the highest contrasts [1] | Mainland Portugal (National ES assessment) |
| Erosion Prevention | Not Specified | Not Specified | Stakeholder estimates 32.8% higher than models on average; erosion prevention showed one of the highest contrasts [1] | Mainland Portugal (National ES assessment) |
| Water Purification | Not Specified | Not Specified | One of the most closely aligned services between stakeholders and models [1] | Mainland Portugal (National ES assessment) |
| Recreation | Not Specified | Not Specified | One of the most closely aligned services between stakeholders and models [1] | Mainland Portugal (National ES assessment) |

Table 2: Modeled vs. Perceived Ecosystem Service Potential (Portugal Case Study)

| Assessment Method | Overall ES Potential Estimate | Key Findings on Specific ES | Temporal Coverage |
|---|---|---|---|
| Spatial Modelling (ASEBIO Index) | Quantitative index based on land cover and stakeholder-derived weights [1] | Water purification was the highest contributor to the index; climate regulation was the lowest contributor in recent years [1] | 1990–2018 |
| Stakeholder Valuation | 32.8% higher on average than model outputs [1] | All selected ES were overestimated by stakeholders compared to models; largest contrasts in drought and erosion regulation [1] | 2018 |
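
The headline comparison in Table 2 reduces to a per-service relative difference averaged across services. The sketch below assumes that modelled and stakeholder scores have already been placed on a common 0-1 scale and defines the contrast as (perceived - modelled) / modelled; the exact formula used in [1] is not given here, and all service values are hypothetical, not the Portugal results.

```python
# Hypothetical scores on a shared 0-1 scale; NOT the values reported in [1].
model = {"drought_regulation": 0.40, "erosion_prevention": 0.45,
         "water_purification": 0.70, "recreation": 0.60}
stakeholder = {"drought_regulation": 0.64, "erosion_prevention": 0.68,
               "water_purification": 0.77, "recreation": 0.63}


def relative_differences(perceived, modelled):
    """Percentage by which the perceived score exceeds the modelled score, per service."""
    return {es: 100.0 * (perceived[es] - modelled[es]) / modelled[es]
            for es in modelled}


diffs = relative_differences(stakeholder, model)
mean_diff = sum(diffs.values()) / len(diffs)      # average contrast across services
# Services ordered from largest to smallest contrast.
ranked = sorted(diffs.items(), key=lambda kv: kv[1], reverse=True)
```

With these made-up numbers the mean contrast is about 31.5%, drought regulation shows the largest gap and recreation the smallest, mirroring the kind of pattern summarized in Tables 1 and 2.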

Experimental Protocols for Eliciting Stakeholder Perceptions

Two-Step Perception and Priority Survey

This protocol, adapted from research in rural Laos, is designed for comparative analysis of stakeholder groups across different land-use types [7].

- Objective: To identify which Ecosystem Services are recognized by stakeholders and then determine their relative importance.

- Applicability: Useful in data-scarce environments and for comparing perceptions across multiple, distinct stakeholder groups (e.g., community members vs. experts).

- Materials:

  - Structured, interviewer-assisted paper questionnaire.

  - List of pre-defined ES items (e.g., 15 items across provisioning, regulating, cultural, and habitat categories).

  - Translated and back-translated questionnaires in the local language and English.

- Procedure:

  - Step 1 - Perception Assessment:

    - For each land-use type, present respondents with the list of ES items.

    - Ask: “In your opinion, what is the level of use of [land-use type] around the village for [ES item]?”

    - Record responses on a four-point scale (1 = no use; 4 = high use).

    - Data Filtering: Classify responses of 3 or 4 as "high use." An ES item proceeds to Step 2 only if it is rated as "high use" by ≥50% of respondents [7].

  - Step 2 - Priority Evaluation:

    - Present respondents only with the ES items filtered from Step 1.

    - Instruct respondents to allocate a total of 100 points across these items in 10-point increments, reflecting their relative importance.

    - Interviewers must provide detailed instructions and use repeated read-backs and probing questions to ensure understanding and data quality [7].

- Data Analysis:

  - Calculate mean priority scores for each ES item by stakeholder group (community vs. expert) and land-use type.

  - Use statistical tests (e.g., t-tests) to identify significant differences in priority allocations between groups.
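
The Step 1 filtering rule and the between-group comparison can be sketched with NumPy. All ratings and allocations below are hypothetical. Welch's t statistic is used here as one reasonable form of t-test (it does not assume equal group variances); p-values could be obtained from `scipy.stats.ttest_ind(..., equal_var=False)` if SciPy is available. Because 100-point allocations are compositional (each row must sum to 100), per-item tests are best treated as a first look.

```python
import numpy as np

# Hypothetical Step 1 ratings: rows = respondents, columns = ES items,
# on the 4-point scale (1 = no use ... 4 = high use).
items = ["rice", "timber", "carbon", "flood_control"]
step1 = np.array([
    [4, 3, 1, 2],
    [4, 4, 2, 3],
    [3, 2, 1, 3],
    [4, 3, 2, 1],
])

# "High use" = rating of 3 or 4; keep items rated high use by >= 50% of respondents.
share_high = (step1 >= 3).mean(axis=0)
retained = [item for item, share in zip(items, share_high) if share >= 0.5]

# Hypothetical Step 2 allocations over the retained items, by stakeholder group.
community = np.array([[60, 30, 10], [50, 40, 10], [70, 20, 10]])
experts = np.array([[30, 30, 40], [20, 40, 40], [30, 20, 50]])


def check_allocations(a):
    """Each respondent must spend exactly 100 points in 10-point increments."""
    assert np.all(a.sum(axis=1) == 100), "rows must sum to 100"
    assert np.all(a % 10 == 0), "allocations must be multiples of 10"


def welch_t(x, y):
    """Welch's t statistic for two independent samples with unequal variances."""
    vx, vy = x.var(ddof=1), y.var(ddof=1)
    return (x.mean() - y.mean()) / np.sqrt(vx / len(x) + vy / len(y))


for group in (community, experts):
    check_allocations(group)

mean_priority = {"community": community.mean(axis=0), "expert": experts.mean(axis=0)}
t_stats = {item: welch_t(community[:, j], experts[:, j]) for j, item in enumerate(retained)}
```

In this toy data, carbon is dropped in Step 1 (no respondent rated it 3 or 4), and the largest group difference in Step 2 appears for flood control, which experts weight far more heavily than community members.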

Deliberative Workshop and Scenario Scoring

This protocol, based on woodland management studies, uses group discussion and scenario planning to elicit nuanced stakeholder judgments [12].

- Objective: To gather contextualized stakeholder knowledge through deliberative discussions and reach a collective evaluation of different management scenarios.

- Applicability: Effective for integrating local, place-based knowledge with scientific predictions and for exploring trade-offs in future management.

- Materials:

  - Defined scenarios (e.g., "Biodiversity Conservation," "People Engagement," "Low Budget") [12].

  - Scoring sheets for individual and group use.

  - Facilitator guides for managing discussions.

- Procedure:

  - Preparation: Develop 3-4 distinct management scenarios with clear goals and implications.

  - Workshop Execution:

    - Bring stakeholders together in a workshop setting.

    - Present each scenario in detail.

    - Facilitate deliberative discussions where stakeholders debate the potential impacts of each scenario on predefined proxies (e.g., spring flowers, weed species) [12].

  - Repeated Scoring: Ask stakeholders to individually score the effects of each scenario both before and after group discussions. This allows observation of how deliberation influences perception.

  - Data Consolidation: Aggregate scores to rank scenarios and identify consensus views or persistent disagreements.

- Data Analysis:

  - Rank scenarios based on average scores.

  - Analyze the shift in individual scores pre- and post-deliberation to understand the impact of group discussion.
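
The ranking and pre/post comparison can be computed directly from the two rounds of individual scores. The sketch assumes a 1-5 scoring scale and four participants, both hypothetical (the scale used in [12] is not specified here); a drop in the standard deviation of individual scores after discussion is used as a simple, illustrative signal of convergence, not a formal consensus test.

```python
import numpy as np

scenarios = ["Biodiversity Conservation", "People Engagement", "Low Budget"]

# Hypothetical individual scores (rows = participants, columns = scenarios) on an
# assumed 1-5 scale, collected before and after the group discussion.
pre = np.array([[4, 3, 2], [5, 2, 2], [3, 4, 3], [4, 3, 1]])
post = np.array([[4, 4, 2], [4, 3, 2], [4, 4, 2], [4, 4, 1]])

# Rank scenarios by mean post-discussion score (highest first).
mean_post = post.mean(axis=0)
ranking = [scenarios[i] for i in np.argsort(-mean_post)]

# Average per-participant change for each scenario.
mean_shift = (post - pre).mean(axis=0)

# Spread of individual scores before vs after: a drop hints at convergence.
spread_pre = pre.std(axis=0, ddof=1)
spread_post = post.std(axis=0, ddof=1)
converged = [s for s, before, after in zip(scenarios, spread_pre, spread_post) if after < before]
```

Keeping the shift (direction of opinion change) separate from the spread (degree of agreement) helps distinguish a group that moved together from one that merely averaged out.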

Analytical Hierarchy Process (AHP) for Weighting ES

This protocol, used in the Portugal study and in research on mulberry-dyke systems, employs a structured multi-criteria decision-making technique to derive the relative importance of ES from stakeholder input [1] [14].

- Objective: To quantitatively determine stakeholder-defined weights for multiple ecosystem services in a way that ensures consistency in judgment.

- Applicability: Ideal for integrating diverse stakeholder perspectives into composite indices (e.g., the ASEBIO index) or for spatial optimization where trade-offs must be explicitly weighted [1].

- Materials:

  - AHP pairwise comparison survey.

  - Software for AHP calculation and consistency ratio checks (e.g., Expert Choice or R/Python packages).

- Procedure:

  - Structure the Problem: Define the goal (e.g., "Assess overall ES potential") and list the relevant ES criteria.

  - Pairwise Comparisons: Present stakeholders with a matrix in which they compare each pair of ES criteria, indicating which is more important and to what degree on the standard 1-9 scale (1 = equal importance, 9 = extreme importance of one criterion over the other).

  - Data Collection: Conduct surveys with different stakeholder groups (e.g., community members, policymakers, scientists) separately to capture heterogeneous preferences [14].

- Data Analysis:

  - Compute the principal eigenvector of the pairwise comparison matrix to derive the priority weights for each ES.

  - Calculate a consistency ratio (CR) to check that each stakeholder's judgments are logically coherent. A CR < 0.1 is generally acceptable.

  - Compare the derived weight sets from different stakeholder groups.
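
The eigenvector and consistency steps can be sketched in a few lines of NumPy as an alternative to dedicated AHP software. The random index values are Saaty's commonly cited table; the criteria names and judgements in the usage lines are hypothetical.

```python
import numpy as np

# Saaty's random consistency index (RI) by matrix size n.
RANDOM_INDEX = {1: 0.0, 2: 0.0, 3: 0.58, 4: 0.90, 5: 1.12,
                6: 1.24, 7: 1.32, 8: 1.41, 9: 1.45, 10: 1.49}


def ahp_weights(matrix):
    """Return (weights, consistency_ratio) for a reciprocal pairwise comparison matrix."""
    a = np.asarray(matrix, dtype=float)
    n = a.shape[0]
    if not np.allclose(a * a.T, 1.0):
        raise ValueError("matrix must be reciprocal: a[i, j] == 1 / a[j, i]")
    eigvals, eigvecs = np.linalg.eig(a)
    k = int(np.argmax(eigvals.real))          # index of the principal eigenvalue
    lambda_max = eigvals[k].real
    w = np.abs(eigvecs[:, k].real)
    w = w / w.sum()                           # normalise so weights sum to 1
    ci = (lambda_max - n) / (n - 1) if n > 1 else 0.0
    cr = ci / RANDOM_INDEX[n] if RANDOM_INDEX[n] > 0 else 0.0
    return w, cr


# Hypothetical judgements on the 1-9 scale for three ES criteria.
criteria = ["water_purification", "erosion_prevention", "recreation"]
judgements = [[1, 3, 5],
              [1 / 3, 1, 3],
              [1 / 5, 1 / 3, 1]]
weights, cr = ahp_weights(judgements)
acceptable = cr < 0.1
```

Running the function separately on each stakeholder group's matrix gives the weight sets to compare in the final analysis step; matrices with CR of 0.1 or more would typically be returned to the respondent for revision.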

Visualization of Research Workflows

Stakeholder Heterogeneity Research Workflow

Two-Step Survey Methodology for ES Perception

The Scientist's Toolkit: Key Research Reagents and Materials

Table 3: Essential Materials for Stakeholder Heterogeneity Research

| Item Name | Function/Application | Specifications/Examples |
|---|---|---|
| Structured Questionnaire | Core instrument for quantitative data collection on ES perceptions and priorities. | Must be translated and back-translated. Includes sections for demographic data, perception scales (e.g., 4-point), and priority allocation tasks (100-point method) [7]. |
| Land Cover Maps (CORINE) | Base spatial data for modeling ecosystem service potential and relating outputs to stakeholder perceptions. | Used in spatial modeling (e.g., ASEBIO index) to quantify ES supply and changes over time (1990-2018) [1]. |
| Spatial ES Models (InVEST) | Software suite for mapping and valuing ecosystem services to produce biophysical models for comparison with stakeholder views. | Models used to quantify ES like habitat quality, carbon storage, and erosion prevention for comparison with stakeholder perceptions [14]. |
| Analytical Hierarchy Process (AHP) Survey | Tool to derive quantitative weights for different ES from stakeholders via pairwise comparisons. | Used to integrate stakeholder preferences into composite indices, ensuring their values directly influence model outcomes [1] [14]. |
| Management Scenarios | Descriptive frameworks used in workshops to elicit stakeholder evaluations of future options and trade-offs. | Scenarios such as "Biodiversity Conservation," "People Engagement," and "Low Budget" are presented for stakeholder scoring [12]. |
| Predefined ES List | A standardized catalog of ecosystem services that ensures consistency across survey and workshop materials. | Typically includes 15-20 items across provisioning, regulating, cultural, and habitat service categories, validated by expert panels [7]. |

From Theory to Practice: Methods for Modeling and Eliciting Perceptions

Ecosystem Services (ES) models are computational tools that translate ecological and socioeconomic data into quantitative assessments of nature's benefits to people [15]. The mapping and valuation of these services are critically important for sustainable development, environmental planning, and nature-based decision-making processes [15]. This guide provides a detailed examination of three prominent ES modeling platforms—InVEST, ARIES, and Co$ting Nature—which enable researchers to spatially quantify and value natural capital and its associated services. These platforms help balance environmental and economic goals by allowing decision-makers to assess quantified tradeoffs among alternative management choices [16] [17]. Understanding their technical specifications, application methodologies, and appropriate use contexts is essential for researchers conducting comparative analyses of ecosystem services models and stakeholder perceptions.

Table 1: Core Characteristics of Featured Ecosystem Service Modeling Platforms

| Feature | InVEST | ARIES | Co$ting Nature |
|---|---|---|---|
| Primary Approach | Production functions [16] [17] | AI-assisted, semantic modeling [18] [19] | Pre-processed global data with spatial models [20] [21] |
| Key Differentiator | Modular, multi-service suite [16] | Models service supply, demand, and flow [19] | Focuses on "costing" (opportunity cost) over "valuing" [20] [21] |
| User Interface | Standalone application (Workbench) [16] | Web-based platform (k.Explorer) [18] [19] | Web-based Policy Support System (PSS) [20] |
| Ease of Use | Requires basic-to-intermediate GIS skills [16] | Varies by version; k.Explorer aims for non-technical users [19] [21] | Simple application with global data; complex with custom data [20] |
| Global Default Data | Limited [17] | Available [19] | Extensive (140+ input maps) [20] [21] |

InVEST (Integrated Valuation of Ecosystem Services and Tradeoffs)

Developed by the Natural Capital Project, InVEST is a suite of free, open-source, spatially explicit models used to map and value the goods and services from nature that sustain human life [16] [17]. It employs a production function approach that defines how changes in an ecosystem's structure and function affect the flows and values of ecosystem services across a landscape or seascape [16] [17]. The toolset includes distinct models for terrestrial, freshwater, marine, and coastal ecosystems, and it returns results in either biophysical or economic terms [16] [22]. Its modular design allows users to select only services of interest without running a full suite of models [16].
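As an illustration of the production function idea, InVEST's Carbon Storage and Sequestration model assigns each land-cover class a carbon density for four pools (aboveground biomass, belowground biomass, soil, and dead organic matter) and sums them over the landscape. The NumPy sketch below only mimics that logic; it does not call InVEST, and the class codes, densities, and pixel size are hypothetical.

```python
import numpy as np

# Hypothetical biophysical table: carbon density (Mg C per ha) for each pool,
# keyed by land-cover code.
BIOPHYSICAL = {
    # code: (above, below, soil, dead)
    1: (120.0, 30.0, 90.0, 10.0),   # forest
    2: (5.0, 2.0, 60.0, 0.0),       # cropland
    3: (0.0, 0.0, 20.0, 0.0),       # urban
}


def carbon_stock(lulc, pixel_area_ha):
    """Total carbon (Mg C) for a land-cover raster given as a 2-D integer array.

    Codes missing from BIOPHYSICAL contribute zero carbon.
    """
    density = np.zeros(lulc.shape, dtype=float)
    for code, pools in BIOPHYSICAL.items():
        density[lulc == code] = sum(pools)    # Mg C per ha for this class
    return float(density.sum() * pixel_area_ha)


current = np.array([[1, 1], [2, 3]])
scenario = np.array([[1, 2], [2, 3]])         # one forest pixel converted to cropland
pixel_ha = 0.09                               # a 30 m x 30 m pixel
change = carbon_stock(scenario, pixel_ha) - carbon_stock(current, pixel_ha)
```

Here the single conversion changes the stock by (67 - 250) Mg C/ha x 0.09 ha = -16.47 Mg C, which is how a production function links a change in ecosystem structure (land cover) to a change in service provision.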

ARIES (ARtificial Intelligence for Environment & Sustainability)

ARIES is an open-source, collaborative platform powered by k.LAB technology, which uses artificial intelligence and semantic modeling to rapidly assess ecosystem services [18] [19]. Its key innovation is the use of the semantic web paradigm to automatically select and link the best available models and data for a user's specific query and geographic context [19]. ARIES gives equal emphasis to ecosystem service supply, demand, and flow, allowing it to quantify actual service provision and use by society, rather than just potential benefits [19]. It is designed to be a FAIR (Findable, Accessible, Interoperable, Reusable) knowledge commons, supporting applications from urban to global scales [18].

Co$ting Nature

Co$ting Nature is a web-based spatial policy support system focused on natural capital accounting and analyzing the ecosystem services provided by natural environments [20] [21]. Its philosophy centers on "costing nature"—understanding the resource allocation and opportunity costs of protecting nature to produce ecosystem services—rather than merely valuing it in monetary terms [20]. A significant feature is its extensive library of pre-processed global datasets, which allows users to run initial assessments in approximately 30 minutes without specialized GIS skills, though incorporating custom data requires greater technical capacity [20] [21]. It models 18 ecosystem services and incorporates pressures, threats, and conservation priorities [20] [21].

Table 2: Technical Specifications and Resource Requirements

| Specification | InVEST | ARIES | Co$ting Nature |
|---|---|---|---|
| Access Model | Downloadable standalone software [16] | Web-based platform (k.Explorer) [18] [19] | Web-based platform (PSS) [20] |
| Cost | Free, open-source [16] | Free, open-source [18] | Free for non-commercial use [20] |
| Primary Inputs | Predominantly GIS/map data; information tables [17] | Spreadsheets, databases, maps; global maps available online [19] | Global spatial data at 1 km² or 1 ha; user-uploaded data [21] |
| Key Outputs | Maps; quantitative ES data; tables/statistics [17] | Maps; quantitative data on ES and environmental assets [19] | Maps; GIS databases; quantitative ES data; economic assessments [21] |
| GIS Dependency | Required for data prep and viewing results [16] [17] | Not specified | Not required for basic applications [20] |
| Spatial Resolution | Flexible (local to global) [16] | User-definable [19] | 1 km² to 1 ha (10 m licensed) [20] |
| Developer | Natural Capital Project (Stanford University, WWF, TNC) [17] | Basque Centre for Climate Change (BC3) [19] | King's College London, AmbioTEK, UNEP-WCMC [20] |

Experimental Protocols and Application Workflows

Protocol: Coastal Flood Risk Assessment Using Co$ting Nature and Suitability Modeling

This protocol is adapted from a study that combined Co$ting Nature outputs with suitability modeling to identify priority areas for Nature-Based Solutions (NBS) in coastal Texas [23].

1. Research Question and Scope Definition

- Objective: Identify coastal areas with high flood risk and low ecosystem service provision to prioritize implementation of nature-based solutions for flood mitigation [23].

- Study Area: Define the geographic boundary (e.g., the Houston-Galveston Metropolitan Statistical Area coastline) [23].

2. Data Acquisition and Preparation

- Primary Data Sources:

- Data Integration:

  - Spatially join multiple Co$ting Nature tiles in a GIS environment (e.g., ArcGIS Pro) if the study area spans multiple tiles [23].

  - Harmonize all spatial data to a common coordinate system and resolution.

3. Co$ting Nature Analysis

- Baseline Assessment: Run the Co$ting Nature model to calculate baseline (current) provision of relevant ecosystem services, particularly flood regulation [23].

- Threat and Priority Mapping: Generate maps of conservation priority, ecosystem service provisions, and relative threats from the model outputs [23].

4. Suitability Modeling

- Criterion Identification: Define criteria for NBS implementation based on Co$ting Nature outputs (e.g., low current ecosystem service provision, high conservation priority, high threat levels) [23].

- Suitability Analysis: Conduct a suitability model in GIS software, using the Co$ting Nature outputs as input layers to synthesize areas with the greatest need for NBS [23].

- Flood Risk Overlay: Overlay the suitability output with current flood risk data to identify vulnerable coastal areas that are both high-risk and low-service [23].
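
The suitability and overlay steps amount to a weighted overlay of co-registered rasters. The sketch below uses small NumPy arrays in place of exported Co$ting Nature layers; the criterion weights, the 0.7 threshold, and all cell values are hypothetical and would in practice come from the study design or stakeholder input, not from [23].

```python
import numpy as np


def minmax(layer):
    """Rescale a raster to 0-1 so layers in different units can be combined."""
    lo, hi = np.nanmin(layer), np.nanmax(layer)
    return (layer - lo) / (hi - lo) if hi > lo else np.zeros_like(layer, dtype=float)


# Hypothetical co-registered rasters (same grid) standing in for model outputs.
es_provision = np.array([[0.9, 0.2], [0.4, 0.1]])
conservation_priority = np.array([[0.3, 0.8], [0.5, 0.9]])
threat = np.array([[0.2, 0.7], [0.6, 0.8]])
flood_risk = np.array([[0.1, 0.9], [0.3, 0.8]])   # assumed already on a 0-1 scale

# Criteria from the protocol: LOW provision, HIGH priority, HIGH threat.
weights = {"need": 0.4, "priority": 0.3, "threat": 0.3}   # illustrative weights
suitability = (weights["need"] * (1 - minmax(es_provision))
               + weights["priority"] * minmax(conservation_priority)
               + weights["threat"] * minmax(threat))

# Overlay: flag cells that are both highly suitable for NBS and at high flood risk.
THRESHOLD = 0.7                                    # hypothetical cut-off
candidate = (suitability >= THRESHOLD) & (flood_risk >= THRESHOLD)
```

Inverting the provision layer (1 - provision) is what turns "low current service" into a positive suitability signal; changing the weights is the natural place to test how sensitive the priority areas are to stakeholder preferences.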

5. Validation and Interpretation

- Ground Truthing: Where possible, verify model outcomes with field observations or higher-resolution local data [20].

- Policy Application: Interpret results to inform conservation priorities, target communities with high flood risk and low ecological services, and develop policies for green infrastructure implementation [23].

Protocol: Comparative Assessment of Multiple ES Models

This protocol provides a framework for comparing outputs, data requirements, and usability of InVEST, ARIES, and Co$ting Nature for a single case study, as referenced in global and Turkey-specific reviews [15].

1. Experimental Design

- Objective: Systematically compare the process, resource requirements, and results of multiple ecosystem service models applied to the same geographic context [15].

- Model Selection: Select InVEST, ARIES, and Co$ting Nature for their differing methodological approaches [15].

- Focal Ecosystem Services: Choose 2-3 comparable services (e.g., carbon sequestration, water provisioning, flood regulation) available across all platforms [15].

2. Implementation Phase

- Parallel Modeling: Apply each tool to model the selected ES in the same study area.

- Data Logging: Meticulously document for each tool:

- Output Generation: Produce maps and quantitative values (biophysical or economic) for each service from each model [17] [19] [21].

3. Analysis and Comparison

- Spatial Correlation: Analyze the degree of spatial concordance in ES hotspot maps generated by the different tools [15].

- Quantitative Comparison: Compare the absolute and relative values of ES provision estimated by each model.

- Usability Assessment: Evaluate tools based on required expertise, time investment, and transparency of assumptions [15].
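
Two common ways to quantify concordance between maps are a rank correlation across cells and the overlap of hotspot cells. The NumPy sketch below implements Spearman's rho (Pearson correlation of average ranks) and a Jaccard index of the top 20% of cells; the two maps are hypothetical flattened rasters assumed to share the same grid, and the 20% hotspot cut-off is an illustrative choice, not one prescribed by [15].

```python
import numpy as np


def average_ranks(x):
    """Ranks 1..n, with tied values given their average rank (as in Spearman's rho)."""
    x = np.asarray(x, dtype=float)
    order = np.argsort(x, kind="mergesort")
    ranks = np.empty(len(x))
    ranks[order] = np.arange(1, len(x) + 1)
    for value in np.unique(x):                 # average the ranks of tied values
        tied = x == value
        ranks[tied] = ranks[tied].mean()
    return ranks


def spearman(a, b):
    """Spearman's rank correlation between two maps on the same grid."""
    ra, rb = average_ranks(np.ravel(a)), average_ranks(np.ravel(b))
    return float(np.corrcoef(ra, rb)[0, 1])


def hotspot_overlap(a, b, top=0.2):
    """Jaccard overlap of the top `top` fraction of cells in two maps."""
    a, b = np.ravel(a), np.ravel(b)
    ha = a >= np.quantile(a, 1 - top)
    hb = b >= np.quantile(b, 1 - top)
    return float((ha & hb).sum() / (ha | hb).sum())


# Hypothetical flattened outputs for the same service from two platforms.
invest_map = np.array([0.1, 0.4, 0.35, 0.8, 0.9, 0.2, 0.5, 0.05, 0.7, 0.6])
costing_map = np.array([0.2, 0.3, 0.4, 0.7, 0.95, 0.1, 0.6, 0.0, 0.8, 0.5])
rho = spearman(invest_map, costing_map)
overlap = hotspot_overlap(invest_map, costing_map)
```

In this toy case rho is about 0.95 while only one of three hotspot cells is shared (Jaccard = 1/3), a reminder that a high overall correlation does not guarantee that two tools agree on where the priority areas are.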

Figure 1: Workflow for comparative assessment of multiple ES modeling platforms, illustrating the sequential stages from research definition through to final synthesis.

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Research Reagents for Ecosystem Services Modeling

| Research Reagent | Function | Platform Application Examples |
|---|---|---|
| Spatial Data (Land Use/Land Cover) | Serves as primary input representing ecosystem structure; determines service supply potential. | Required by all three platforms [15] [17] [21] |
| Digital Elevation Model (DEM) | Provides topographic data crucial for hydrologic services (e.g., water yield, flood regulation) and sediment transport. | Core input for InVEST hydrology models; part of Co$ting Nature's base data [17] [23] |
| Biophysical Tables | CSV files that parameterize ecosystem service production functions by linking land cover to service output. | Critical for InVEST models to define parameters like carbon storage per land cover type [17] |
| Global Satellite Data Products | Pre-processed, globally consistent datasets (e.g., climate, soil, vegetation indices) that enable rapid initial assessments. | Foundation of Co$ting Nature's global analyses; available in ARIES by default [19] [20] [21] |
| Economic Valuation Parameters | Data for translating biophysical service flows into monetary values (e.g., shadow prices, damage costs). | Used in InVEST for economic outputs; available in Co$ting Nature V3 [17] [21] |
| Semantic Model Ontologies | Formal representations of knowledge that define concepts and relationships between ecological and socioeconomic entities. | Core component of ARIES enabling AI-assisted model and data integration [18] [19] |

Figure 2: Decision pathway for selecting an appropriate ecosystem services modeling platform based on research objectives, available resources, and technical constraints.

InVEST, ARIES, and Co$ting Nature represent three sophisticated but philosophically distinct approaches to ecosystem service modeling. InVEST offers a structured, modular framework ideal for scenario analysis where ecological processes are well-understood and GIS capacity is available [16] [17]. ARIES leverages artificial intelligence to automate model and data integration, potentially increasing efficiency and reproducibility for users comfortable with its semantic framework [18] [19]. Co$ting Nature supports rapid assessments using its extensive pre-loaded global datasets, making it particularly valuable for initial screenings and conservation priority setting [20] [21]. For researchers conducting comparative studies of ES models and stakeholder perceptions, understanding these technical differences, resource requirements, and appropriate application contexts is fundamental to designing robust experiments and interpreting results critically. The ongoing development of these platforms, particularly towards better integration of global datasets and improved user accessibility, promises to further enhance their utility in both research and policy domains [15] [17].

Integrating stakeholder perceptions with biophysical ecosystem services (ES) models is critical for developing environmental management strategies that are both scientifically robust and socially relevant. Stakeholder perspectives provide essential context, reveal potential conflicts, and help prioritize ecosystem services that might otherwise be overlooked in purely technical assessments [24] [6]. This integration is particularly vital in the context of ecosystem services models, where comparative research between modeled data and human perceptions can validate findings and uncover discrepancies [6]. The following sections provide application notes and detailed protocols for eliciting, quantifying, and integrating these intangible stakeholder priorities into formal research frameworks.

Application Notes: Core Techniques and Their Rationale

Online Stakeholder Engagement Platforms (OSEPs)

Application Note: OSEPs provide a flexible, anonymous environment for engaging geographically and linguistically diverse stakeholders, which is particularly advantageous for discussing potentially polarizing topics in technology and environmental management.

- Key Advantages: These platforms overcome limitations of traditional methods by enabling asynchronous participation, maintaining participant anonymity to reduce power dynamics and encourage open dialogue, and facilitating interactive group discussions through digital diaries and message boards [25]. This is especially valuable for engaging stakeholders across the drug development pipeline or in transnational environmental assessments where in-person gatherings are impractical.

- Integration with ES Models: The qualitative data gathered through OSEPs can be systematically coded and transformed into quantifiable weights for use in multi-criteria decision analysis (MCDA) frameworks, directly linking stakeholder preferences with technical model outputs [25].
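
One simple way to turn coded discussion data into MCDA weights is to normalize how often each theme is raised and then apply a weighted sum to normalized model outputs. Both the counting rule and the weighted-sum aggregation are assumptions made for illustration (they are not prescribed by [25]), and the theme counts and option scores are hypothetical.

```python
# Hypothetical coded OSEP data: number of participants whose posts were coded
# as prioritizing each ES theme.
theme_counts = {"water_supply": 18, "flood_protection": 12, "recreation": 6}
total = sum(theme_counts.values())
weights = {es: n / total for es, n in theme_counts.items()}   # weights sum to 1

# Hypothetical model outputs for two management options, already rescaled to 0-1.
options = {
    "restore_wetland": {"water_supply": 0.6, "flood_protection": 0.9, "recreation": 0.7},
    "expand_forestry": {"water_supply": 0.8, "flood_protection": 0.4, "recreation": 0.3},
}

# Weighted-sum MCDA: score = sum over services of weight * normalized model output.
scores = {name: sum(weights[es] * values[es] for es in weights)
          for name, values in options.items()}
best = max(scores, key=scores.get)
```

A weighted sum is fully compensatory (a high score on one service can offset a low score on another); if stakeholders reject that assumption, an outranking or threshold-based MCDA method would be a better fit.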

Machine Learning-Driven Analysis

Application Note: Machine learning techniques can identify non-linear relationships and key drivers within complex datasets of stakeholder perceptions, moving beyond the limitations of traditional statistical methods.

- Technical Basis: Algorithms such as gradient boosting models excel at processing complex datasets to uncover key patterns and drivers that influence perceptions and priorities [24]. This approach is particularly effective for analyzing large-scale survey data or identifying patterns in open-ended responses that would be difficult to process manually.

- Scenario Planning: Once key drivers are identified, machine learning can inform the design of future scenarios (e.g., natural development, planning-oriented, ecological priority) to model how changes in these drivers might affect stakeholder perceptions and ecosystem service delivery [24].
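
To show how boosting surfaces key drivers, the sketch below implements least-squares gradient boosting with one-split trees (stumps) in NumPy and credits each split's error reduction to its feature. In practice a library implementation such as scikit-learn's `GradientBoostingRegressor` (with its `feature_importances_` attribute) would normally be used; the data here are synthetic, and the driver names are hypothetical.

```python
import numpy as np


def fit_stump(X, r):
    """Find the single split (feature j, threshold t) that best fits residuals r."""
    base_sse = ((r - r.mean()) ** 2).sum()
    best = None
    for j in range(X.shape[1]):
        for t in np.unique(X[:, j])[:-1]:          # keep both sides non-empty
            left = X[:, j] <= t
            rl, rr = r[left], r[~left]
            sse = ((rl - rl.mean()) ** 2).sum() + ((rr - rr.mean()) ** 2).sum()
            if best is None or sse < best[0]:
                best = (sse, j, t, rl.mean(), rr.mean())
    sse, j, t, left_value, right_value = best
    return j, t, left_value, right_value, base_sse - sse   # last item = gain


def gradient_boost(X, y, n_rounds=50, learning_rate=0.1):
    """Squared-loss gradient boosting with stumps; returns predictions and importances."""
    pred = np.full(len(y), y.mean())
    importance = np.zeros(X.shape[1])
    for _ in range(n_rounds):
        residual = y - pred                         # negative gradient of squared loss
        j, t, lv, rv, gain = fit_stump(X, residual)
        pred += learning_rate * np.where(X[:, j] <= t, lv, rv)
        importance[j] += gain
    return pred, importance / importance.sum()


# Synthetic data: a perception score driven non-linearly by the first driver.
rng = np.random.default_rng(0)
drivers = ["distance_to_forest", "household_income", "respondent_age"]
X = rng.uniform(0.0, 1.0, size=(150, 3))
y = 2.0 * np.sin(3.0 * X[:, 0]) + 0.2 * X[:, 1] + rng.normal(0.0, 0.1, size=150)

pred, importance = gradient_boost(X, y)
top_driver = drivers[int(np.argmax(importance))]
```

Because each stump can split anywhere on a driver's range, the model captures the rise-then-fall effect of the first driver without it being specified in advance, which is the kind of non-linear relationship the note above refers to.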

Integrated Quantitative-Qualitative Data Frameworks

Application Note: Presenting quantitative and qualitative data together provides a cohesive narrative that conveys both measurable trends and the underlying context, creating more compelling and actionable evidence for decision-makers.

- Complementary Roles: Quantitative data (the "what") provides objective metrics and statistical evidence, while qualitative data (the "why" and "how") offers explanatory context, hypotheses, and nuanced understanding [26]. In ecosystem services research, this might involve pairing spatially explicit model outputs from tools like InVEST with narrative data from stakeholder interviews about why certain services are valued.

- Validation Function: Qualitative data can provide crucial contextual validation for quantitative model outputs, helping researchers understand when and why stakeholder perceptions may diverge from biophysical measurements of the same ecosystem services [6].

Experimental Protocols

Protocol: Implementing an Online Stakeholder Engagement Platform

Objective: To systematically elicit, capture, and analyze stakeholder perceptions using a structured online platform.

Table 1: Key Implementation Steps for Online Stakeholder Engagement

| Step | Description | Key Considerations |
|---|---|---|
| Platform Selection | Select an OSEP (e.g., CMNTY, EngagementHQ) with functionality for surveys, discussion forums, and anonymous interaction. | Ensure the platform complies with data protection regulations (e.g., GDPR, HIPAA). |
| Stakeholder Identification & Recruitment | Identify candidates through professional networks, literature reviews, and conference participant lists [25]. | Target diverse affiliations: government, NGOs, industry, academia, and community representatives. |
| IRB Approval | Develop and submit all study procedures, including consent forms and data management plans, to the Institutional Review Board [25]. | Approval is mandatory for research involving human subjects. |
| Platform Development & Testing | Create a series of structured surveys and moderated discussion forums focused on the specific topic [25]. | Pilot-test the platform to ensure usability and clarity of questions. |
| Data Collection | Participants engage with platform activities over a defined period (e.g., 2-4 weeks). | Allow asynchronous participation while maintaining moderator presence. |
| Data Analysis | Employ mixed-methods: quantitative analysis of survey responses and thematic analysis of discussion forum transcripts [26]. | Use coding frameworks to identify recurring themes and patterns. |

Protocol for Multi-Scenario Ecosystem Service Assessment with Stakeholder Input

Objective: To quantitatively assess ecosystem services under different future scenarios that reflect stakeholder priorities.

Table 2: Phases of Multi-Scenario Ecosystem Service Assessment

| Phase | Core Activities | Tools & Methods |
|---|---|---|
| 1. Baseline ES Assessment | Quantify individual services (water yield, carbon storage, habitat quality, soil conservation) for past and current conditions [24]. | InVEST model; comprehensive ES index to assess overall ecological service capacity [24]. |
| 2. Driver Identification | Identify key social-ecological drivers influencing ES using machine learning models [24]. | Gradient boosting models; analysis of land use, vegetation cover, and socio-economic data [24]. |
| 3. Scenario Co-Design | Develop future scenarios (e.g., natural development, planning-oriented, ecological priority) based on stakeholder input and driver analysis [24]. | Stakeholder workshops; OSEPs; participatory mapping. |
| 4. Land Use Projection | Project land use changes to a target year (e.g., 2035) under each scenario [24]. | PLUS model for simulating complex land-use dynamics at fine spatial scales [24]. |
| 5. Future ES Assessment | Evaluate various ecosystem services based on simulated land use for each scenario [24]. | InVEST model; trade-off and synergy analysis. |
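
The trade-off and synergy step is often summarized with pairwise correlations between services across spatial units, and a composite index can be formed from normalized services. The sketch below assumes equal weights for the index and reads the sign of Pearson's r as synergy or trade-off; the per-cell values are hypothetical stand-ins for InVEST outputs, and the exact index definition used in [24] may differ. Published studies typically also test significance or use rank correlations.

```python
from itertools import combinations

import numpy as np


def minmax(values):
    """Rescale to 0-1 so services measured in different units can be averaged."""
    v = np.asarray(values, dtype=float)
    return (v - v.min()) / (v.max() - v.min())


# Hypothetical per-cell outputs for one scenario (5 cells); units are illustrative.
services = {
    "water_yield": np.array([300, 420, 510, 280, 350]),       # mm
    "carbon_storage": np.array([90, 60, 40, 110, 80]),        # Mg C/ha
    "habitat_quality": np.array([0.8, 0.5, 0.3, 0.9, 0.7]),   # 0-1 index
}

# Composite ES index: unweighted mean of normalized services (equal weights assumed).
es_index = np.mean([minmax(v) for v in services.values()], axis=0)

# Pairwise Pearson correlations: r > 0 read as synergy, r < 0 as trade-off.
relations = {}
for a, b in combinations(services, 2):
    r = float(np.corrcoef(services[a], services[b])[0, 1])
    relations[(a, b)] = ("synergy" if r > 0 else "trade-off", round(r, 2))
```

Repeating the same calculation on each scenario's simulated outputs shows whether a scenario strengthens or weakens particular trade-offs, which is the comparison stakeholders usually care about.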

Visualization of Methodological Frameworks

Workflow for Integrated Stakeholder and Ecosystem Services Research

The following diagram illustrates the sequential relationship between stakeholder perception elicitation, data analysis, and ecosystem services modeling, culminating in decision support.

Quantitative and Qualitative Data Integration Workflow

This diagram details the process of collecting, analyzing, and integrating different data types to form a cohesive evidence base.

The Scientist's Toolkit: Essential Research Reagents & Solutions

Table 3: Key Research Reagents and Solutions for Eliciting and Analyzing Stakeholder Perceptions

| Tool/Reagent | Type/Category | Primary Function | Application Context |
|---|---|---|---|
| Online Stakeholder Engagement Platform (OSEP) | Software Platform | Provides a structured digital environment for engaging geographically diverse stakeholders asynchronously and anonymously [25]. | Eliciting perceptions on complex or potentially polarizing topics (e.g., novel technologies, land use change). |
| InVEST Model | Biophysical Modeling Suite | Quantifies and maps the biophysical supply and economic value of ecosystem services under different scenarios [24]. | Providing quantitative ES data for comparison with stakeholder perceptions; modeling outcomes of different management options. |
| PLUS Model | Land Use Simulation Tool | Projects land use changes by simulating the interplay between human activities and natural systems under different scenarios [24]. | Visualizing potential future landscapes based on stakeholder-driven scenarios. |
| Machine Learning Algorithms (e.g., Gradient Boosting) | Data Analysis Tool | Identifies non-linear relationships and key drivers within complex datasets of stakeholder perceptions and ecosystem services [24]. | Analyzing large-scale survey data; pinpointing the most significant factors influencing perceptions. |
| RAG Status Indicators | Visualization & Reporting Tool | Uses color-coded status (Red, Amber, Green) to visually demonstrate progress or priority levels in reports [26]. | Communicating research priorities or agreement levels distilled from stakeholder data to decision-makers. |
| Multi-Criteria Decision Analysis (MCDA) | Analytical Framework | Systematically incorporates and weighs different stakeholder views, perceptions, and preferences in a transparent structure [25]. | Integrating diverse stakeholder priorities with technical data to support collaborative decision-making. |
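
Translating stakeholder agreement into RAG indicators requires explicit, documented thresholds. The function below is a minimal sketch; the 0.7 and 0.4 cut-offs and the example priorities are hypothetical and should be agreed with the study team rather than taken from [26].

```python
def rag_status(agreement, green_at=0.7, amber_at=0.4):
    """Map a 0-1 agreement share to a Red/Amber/Green label (thresholds are assumptions)."""
    if not 0.0 <= agreement <= 1.0:
        raise ValueError("agreement must be a proportion between 0 and 1")
    if agreement >= green_at:
        return "Green"
    if agreement >= amber_at:
        return "Amber"
    return "Red"


# Hypothetical share of participants endorsing each priority.
priorities = {"protect water quality": 0.82, "expand recreation": 0.55, "increase timber": 0.25}
report = {priority: rag_status(share) for priority, share in priorities.items()}
```

Reporting the underlying share next to each color keeps the indicator transparent when a value sits close to a threshold.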

The Analytical Hierarchy Process (AHP) is a multi-criteria decision analysis (MCDA) method developed by Thomas Saaty in the 1970s that helps individuals and organizations rank alternatives through pairwise comparisons [27]. For ecosystem services research, AHP provides a structured framework to balance the competing demands of technical suitability, stakeholder involvement, and sustainability considerations [28]. This methodology is particularly valuable in complex environmental decision-making contexts such as forest management, where it enables the integration of scientific data with socio-economic values and political considerations [29] [28]. By breaking down complex decisions into a hierarchical structure, AHP facilitates a systematic approach to prioritizing ecosystem services and spatially stratifying management interventions.

The fundamental strength of AHP in ecosystem services assessment lies in its ability to combine quantitative data with qualitative stakeholder judgments. Recent applications in forest ecosystems demonstrate how AHP can capture both scientific foundations and perspectives of various sectors through a stratification model to determine ecosystem service provisions [28]. This integration is crucial for developing management strategies that are not only ecologically sound but also socially acceptable and economically viable, ultimately supporting more sustainable environmental governance.

Fundamental Principles and Workflow of AHP

Core Mathematical Foundation

AHP operates on the principle of decomposing complex decisions into a hierarchical structure and using pairwise comparisons to derive priority scales [27]. The method requires decision-makers to compare elements pairwise at each level of the hierarchy with respect to their parent element at the next higher level. These comparisons are made using a fundamental 1-9 ratio scale where 1 represents equal importance between two elements and 9 represents extreme importance of one element over another [27]. The pairwise comparisons are organized into a reciprocal matrix, and the principal eigenvector of this matrix is computed to derive the priority weights for each element.

AHP also includes a consistency measure to check the logical coherence of the judgments. The Consistency Ratio (CR) indicates how well transitive relationships hold across all comparisons: for example, if A is judged twice as important as B, and B twice as important as C, then A should be judged about four times as important as C. A CR of 0.10 or less is generally considered acceptable, indicating that the pairwise comparisons are consistent enough to yield meaningful results. In this way, subjective judgments are translated into quantitative priorities together with an explicit check on their internal consistency.
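The matrix properties and the consistency check described above can be stated compactly. Here A = (a_ij) is the n-by-n comparison matrix and w is the priority vector; the second line is the standard result that a perfectly consistent matrix has λmax = n, which is why the consistency index is built from the amount by which λmax exceeds n:

$$
a_{ii} = 1, \qquad a_{ji} = \frac{1}{a_{ij}}, \qquad A\mathbf{w} = \lambda_{\max}\mathbf{w}, \qquad \sum_{i=1}^{n} w_i = 1
$$

$$
a_{ij} = \frac{w_i}{w_j} \ \text{for all } i, j \quad \Longrightarrow \quad a_{ik} = a_{ij}\,a_{jk} \quad \text{and} \quad \lambda_{\max} = n
$$

$$
\lambda_{\max} \ge n \ \text{for every positive reciprocal matrix}, \qquad \mathrm{CI} = \frac{\lambda_{\max} - n}{n - 1}
$$

CI is therefore zero when all judgments are perfectly transitive and grows as they become less coherent; dividing it by the random index RI, the average CI of randomly generated reciprocal matrices of the same size, gives the CR.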

Hierarchical Decision Structure

The first step in implementing AHP involves structuring the decision problem into a hierarchical model comprising at least three levels [27]:

- Level 1: The overarching decision-making goal

- Level 2: Criteria (and potentially sub-criteria) for evaluating alternatives

- Level 3: The alternatives being considered

For ecosystem services evaluation, this hierarchy typically positions "Sustainable Ecosystem Management" as the goal, with criteria representing different ecosystem services (biodiversity conservation, water protection, timber production, etc.), and spatial units or management scenarios as alternatives [28].
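A minimal sketch of such a three-level hierarchy, assuming the local priorities at each level have already been derived from pairwise comparisons. The spatial units and all numbers are hypothetical placeholders, not results from [28]. The function combines the levels by the usual weighted-sum synthesis: an alternative's global priority is the sum, over all criteria, of the criterion weight times the alternative's local priority under that criterion.

```python
# Hypothetical three-level hierarchy: goal -> criteria -> alternatives.
# All weights are illustrative placeholders, not study results.
GOAL = "Sustainable Ecosystem Management"

# Local priorities of each criterion (ecosystem service) with respect to the goal.
criteria_weights = {
    "Biodiversity conservation": 0.5,
    "Water protection": 0.3,
    "Timber production": 0.2,
}

# Local priorities of each alternative (hypothetical spatial units) under each criterion.
alternative_weights = {
    "Biodiversity conservation": {"Unit A": 0.6, "Unit B": 0.3, "Unit C": 0.1},
    "Water protection": {"Unit A": 0.2, "Unit B": 0.5, "Unit C": 0.3},
    "Timber production": {"Unit A": 0.1, "Unit B": 0.2, "Unit C": 0.7},
}


def global_priorities(criteria_weights, alternative_weights):
    """Weight each alternative's local priority by its criterion weight and sum."""
    totals = {}
    for criterion, c_weight in criteria_weights.items():
        for alternative, a_weight in alternative_weights[criterion].items():
            totals[alternative] = totals.get(alternative, 0.0) + c_weight * a_weight
    return totals


priorities = global_priorities(criteria_weights, alternative_weights)
for unit, score in sorted(priorities.items(), key=lambda kv: -kv[1]):
    print(f"{unit}: {score:.3f}")
```

With these illustrative inputs Unit A scores 0.38, Unit B 0.34, and Unit C 0.28; because the priorities at each level sum to 1, the global priorities also sum to 1.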

Table: Fundamental AHP Ratio Scale for Pairwise Comparisons

| Intensity of Importance | Definition | Explanation |
|---|---|---|
| 1 | Equal importance | Two activities contribute equally to the objective |
| 3 | Moderate importance | Experience and judgment slightly favor one activity over another |
| 5 | Strong importance | Experience and judgment strongly favor one activity over another |
| 7 | Very strong importance | An activity is strongly favored and its dominance demonstrated in practice |
| 9 | Extreme importance | The evidence favoring one activity over another is of the highest possible order of affirmation |
| 2, 4, 6, 8 | Intermediate values | Used when compromise is needed |
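When responses are coded for analysis, each judgment on this scale becomes one matrix entry. The sketch below assumes a questionnaire that records an integer intensity from 1 to 9 together with which element was favored; the function names are hypothetical. Exact fractions are used so that reciprocals such as 1/3 are stored without rounding.

```python
from fractions import Fraction

# Verbal anchors of the fundamental scale; 2, 4, 6 and 8 are intermediate values.
SCALE_LABELS = {
    1: "Equal importance",
    3: "Moderate importance",
    5: "Strong importance",
    7: "Very strong importance",
    9: "Extreme importance",
}


def describe(intensity):
    """Return the verbal definition for a scale value."""
    return SCALE_LABELS.get(intensity, "Intermediate value")


def to_matrix_entry(intensity, row_element_favored=True):
    """Convert one judgment into the matrix entry a_ij.

    The intensity itself is used when the row element is favored;
    its reciprocal (1/2 to 1/9) is used when the column element is favored.
    """
    if isinstance(intensity, bool) or not isinstance(intensity, int) or not 1 <= intensity <= 9:
        raise ValueError("intensity must be an integer from 1 to 9")
    value = Fraction(intensity)
    return value if row_element_favored else 1 / value


print(to_matrix_entry(5), describe(5))
print(to_matrix_entry(3, row_element_favored=False), describe(3))
```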

Application Notes: AHP for Ecosystem Services Stratification

Case Study Implementation

A recent study in Turkey's Yalnızçam forest area demonstrated the application of AHP to the spatial stratification of ecosystem services [28]. The researchers combined the Delphi technique with AHP to capture both scientific grounding and the perspectives of various sectors. The iterative framework included ecosystem service identification and prioritization steps, culminating in the spatial stratification of forest stands using geographic information systems (GIS). The results revealed a primary focus on biodiversity conservation (78.5%) and water protection (13.3%), with minimal provision for timber production (7.9%) and soil protection (0.04%), and none for climate regulation, eco-tourism, or non-wood forest products [28].

This approach enabled a more efficient spatial zoning strategy that balanced technical and socio-cultural factors and streamlined the decision-making on which sustainable forest management depends. Integrating AHP with spatial analysis tools such as GIS is a powerful methodological advance for translating stakeholder-derived priorities into concrete spatial management recommendations.

Protocol: Structured AHP Implementation for Ecosystem Services

Phase 1: Problem Structuring and Hierarchy Development

- Stakeholder Identification and Engagement: Identify all relevant stakeholder groups (scientists, policymakers, local communities, industry representatives) who will participate in the pairwise comparisons.

- Ecosystem Services Selection: Define the comprehensive set of ecosystem services to be evaluated, ensuring they represent the full range of provisioning, regulating, cultural, and supporting services relevant to the specific context.

- Hierarchical Model Construction: Develop a decision hierarchy with the goal at the top level, ecosystem service categories and sub-categories at intermediate levels, and management alternatives or spatial units at the bottom level.

Phase 2: Data Collection through Pairwise Comparisons

- Structured Questionnaire Development: Create questionnaires for pairwise comparisons using the 1-9 ratio scale.

- Stakeholder Workshops: Conduct facilitated workshops to guide stakeholders through the pairwise comparison process, ensuring understanding of the scale and concepts.

- Data Collection: Collect completed pairwise comparison matrices from all stakeholder participants.
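The size of the questionnaire follows directly from the hierarchy: a matrix with n elements needs only the n(n - 1)/2 comparisons above the diagonal, because the diagonal is fixed at 1 and the lower triangle follows from reciprocity. A minimal sketch that generates those questions, using the question wording from the pairwise comparison protocol below and a hypothetical helper name:

```python
from itertools import combinations


def pairwise_questions(parent, elements):
    """Return one question per unordered pair of elements (upper triangle only)."""
    return [
        f"With respect to {parent}, how much more important is {a} than {b}?"
        for a, b in combinations(elements, 2)
    ]


services = [
    "Biodiversity Conservation",
    "Water Protection",
    "Timber Production",
    "Soil Protection",
]
questions = pairwise_questions("Sustainable Ecosystem Management", services)
print(len(questions))  # 4 * 3 / 2 = 6
for question in questions:
    print(question)
```

In a workshop the respondent would also indicate which element of each pair is favored, so every question yields either an intensity or its reciprocal.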

Phase 3: Data Analysis and Priority Derivation

- Matrix Computation: For each stakeholder or stakeholder group, compute the priority weights from the pairwise comparison matrices using the eigenvalue method [27].

- Consistency Verification: Calculate consistency ratios for each set of comparisons, flagging inconsistent responses for potential review.

- Priority Aggregation: Aggregate results across stakeholders and stakeholder groups using an appropriate technique, such as the geometric mean of individual judgments or a weighted average of individual priorities based on stakeholder importance.
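The geometric-mean option can be applied to the judgments themselves before any priorities are computed. The sketch below, with two hypothetical stakeholders comparing three services, takes the element-wise geometric mean of the individual matrices; unlike an arithmetic mean, this keeps the aggregated matrix reciprocal (a_ji = 1/a_ij). Weighting by stakeholder importance would replace the equal exponent 1/k with group-specific weights, which is not shown here.

```python
from math import prod


def aggregate_judgments(matrices):
    """Combine individual pairwise matrices by the element-wise geometric mean."""
    k = len(matrices)
    n = len(matrices[0])
    return [
        [prod(m[i][j] for m in matrices) ** (1 / k) for j in range(n)]
        for i in range(n)
    ]


# Two hypothetical stakeholders comparing the same three services.
stakeholder_1 = [[1, 3, 5], [1 / 3, 1, 3], [1 / 5, 1 / 3, 1]]
stakeholder_2 = [[1, 1 / 3, 3], [3, 1, 5], [1 / 3, 1 / 5, 1]]

group = aggregate_judgments([stakeholder_1, stakeholder_2])
for row in group:
    print([round(x, 3) for x in row])
```

The two stakeholders disagree in direction on the first pair (3 versus 1/3), so the group judgment for that pair becomes 1, that is, equal importance.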

Phase 4: Integration with Spatial Planning

- Spatial Data Collection: Gather spatial data layers representing each ecosystem service (species distribution maps, water quality data, recreation potential, etc.).

- Priority Mapping: Create composite maps by weighting spatial layers according to the derived AHP priorities.

- Management Zoning: Delineate spatial management zones based on the dominant ecosystem service priorities identified through the AHP process.
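One simple way to implement the priority mapping and zoning steps is a weighted linear combination of normalized service layers, followed by a "dominant weighted service" rule for each cell. The sketch below uses plain nested lists as a stand-in for co-registered raster layers; the 2 x 3 grid, layer values, and weights are all hypothetical, and a real study would work with GIS rasters and may use other zoning rules.

```python
# Hypothetical service layers, each normalized to 0-1 on the same 2 x 3 grid of cells.
layers = {
    "Biodiversity Conservation": [[0.9, 0.2, 0.2], [0.1, 0.8, 0.3]],
    "Water Protection": [[0.3, 0.9, 0.2], [0.6, 0.1, 0.2]],
    "Timber Production": [[0.1, 0.3, 0.9], [0.2, 0.2, 0.7]],
}
# Illustrative AHP priority weights (sum to 1); not taken from any study.
weights = {
    "Biodiversity Conservation": 0.5,
    "Water Protection": 0.3,
    "Timber Production": 0.2,
}


def grid_shape(layers):
    """Rows and columns of the (shared) grid."""
    first = next(iter(layers.values()))
    return len(first), len(first[0])


def composite_map(layers, weights):
    """Weighted sum of all service layers, cell by cell."""
    rows, cols = grid_shape(layers)
    return [
        [sum(weights[s] * layers[s][r][c] for s in layers) for c in range(cols)]
        for r in range(rows)
    ]


def zone_map(layers, weights):
    """Label each cell with the service whose weighted value is largest."""
    rows, cols = grid_shape(layers)
    return [
        [max(layers, key=lambda s: weights[s] * layers[s][r][c]) for c in range(cols)]
        for r in range(rows)
    ]


composite = composite_map(layers, weights)
zones = zone_map(layers, weights)
for score_row, zone_row in zip(composite, zones):
    print([round(x, 2) for x in score_row], zone_row)
```

Timber Production dominates only the cell where its layer value is high enough to overcome its smaller weight, which illustrates how the AHP weights and the biophysical layers jointly drive the zoning.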

Experimental Protocols and Methodologies

Detailed Pairwise Comparison Protocol

The pairwise comparison process represents the core data collection methodology in AHP. The following protocol ensures rigorous implementation:

- Matrix Preparation: Create a pairwise comparison matrix for each parent element in the hierarchy, listing that element's children in both the rows and the columns [27].

- Comparison Execution: For each cell in the matrix, pose the question: "With respect to [parent element], how much more important is the element in the row than the element in the column?"

- Scale Application: Use the fundamental 1-9 ratio scale to record responses, with reciprocals (1/2 to 1/9) used when the column element is more important than the row element [27].

- Diagonal Entries: All diagonal elements are automatically 1 (each element compared with itself).

- Reciprocal Enforcement: Ensure that if element A is rated X times as important as element B, then element B is recorded as 1/X times as important as element A.
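The diagonal and reciprocity rules mean that only the upper-triangle judgments need to be stored. A minimal sketch, with a hypothetical function name, that rebuilds the full matrix from those judgments; fed with the upper-triangle values of the example forest matrix in the next table, it reproduces that table's lower triangle. The length check is a simple count and does not catch a pair entered in both orientations.

```python
from fractions import Fraction


def reciprocal_matrix(elements, upper):
    """Build a full pairwise comparison matrix from upper-triangle judgments.

    `upper` maps (row_element, column_element) to a_ij. Diagonal entries
    are 1 and each mirrored entry is set to 1 / a_ij.
    """
    n = len(elements)
    expected = n * (n - 1) // 2
    if len(upper) != expected:
        raise ValueError(f"expected {expected} judgments, got {len(upper)}")
    index = {name: k for k, name in enumerate(elements)}
    matrix = [[Fraction(1)] * n for _ in range(n)]
    for (row, col), value in upper.items():
        i, j = index[row], index[col]
        if i == j:
            raise ValueError("an element cannot be compared with itself")
        matrix[i][j] = Fraction(value)
        matrix[j][i] = 1 / Fraction(value)
    return matrix


services = ["Biodiversity Conservation", "Water Protection", "Timber Production", "Soil Protection"]
judgments = {
    ("Biodiversity Conservation", "Water Protection"): 5,
    ("Biodiversity Conservation", "Timber Production"): 7,
    ("Biodiversity Conservation", "Soil Protection"): 9,
    ("Water Protection", "Timber Production"): 3,
    ("Water Protection", "Soil Protection"): 5,
    ("Timber Production", "Soil Protection"): 3,
}
matrix = reciprocal_matrix(services, judgments)
for name, row in zip(services, matrix):
    print(name, [str(x) for x in row])
```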

Table: Example Pairwise Comparison Matrix for Forest Ecosystem Services

| | Biodiversity Conservation | Water Protection | Timber Production | Soil Protection |
|---|---|---|---|---|
| Biodiversity Conservation | 1 | 5 | 7 | 9 |
| Water Protection | 1/5 | 1 | 3 | 5 |
| Timber Production | 1/7 | 1/3 | 1 | 3 |
| Soil Protection | 1/9 | 1/5 | 1/3 | 1 |

Priority Calculation Methodology

The calculation of priority weights from pairwise comparison matrices follows this protocol:

- Matrix Squaring: Square the pairwise comparison matrix (multiply the matrix by itself) [27].

- Row Sum Calculation: Sum each row of the squared matrix to obtain row totals.

- Priority Vector Derivation: Sum all row totals, then divide each row total by this sum to obtain the initial priority vector.

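- Iteration: Repeat the squaring, row-sum, and normalization steps on the resulting matrix until the priority vector stabilizes (no significant change to three or four decimal places) [27].

- Consistency Index Calculation: Compute the consistency index (CI) using the formula CI = (λmax - n)/(n - 1), where n is the matrix size and λmax is the principal eigenvalue. λmax can be estimated by multiplying the original matrix A by the priority vector w and averaging the ratios (Aw)i / wi over all rows.

- Consistency Ratio Determination: Calculate CR = CI/RI, where RI is the random index for the given matrix size, i.e., the average CI of randomly generated reciprocal matrices (for example, RI = 0.90 for n = 4). A CR ≤ 0.10 indicates acceptable consistency.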
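The protocol above can be sketched in plain Python for the example forest matrix. Two details are additions for numerical practicality rather than part of the protocol as stated: the squared matrix is rescaled at each step, which avoids floating-point overflow and does not change the normalized row sums, and λmax is estimated by averaging (Aw)i / wi. The random index values are the commonly cited ones attributed to Saaty and are included here as an assumption.

```python
# Example forest matrix (rows/columns: biodiversity, water, timber, soil).
A = [
    [1, 5, 7, 9],
    [1 / 5, 1, 3, 5],
    [1 / 7, 1 / 3, 1, 3],
    [1 / 9, 1 / 5, 1 / 3, 1],
]

# Commonly cited random index (RI) values by matrix size n.
RANDOM_INDEX = {3: 0.58, 4: 0.90, 5: 1.12, 6: 1.24, 7: 1.32, 8: 1.41, 9: 1.45, 10: 1.49}


def matmul(X, Y):
    """Plain matrix product X @ Y."""
    return [
        [sum(X[i][k] * Y[k][j] for k in range(len(Y))) for j in range(len(Y[0]))]
        for i in range(len(X))
    ]


def normalized_row_sums(M):
    """Row totals divided by the grand total."""
    sums = [sum(row) for row in M]
    total = sum(sums)
    return [s / total for s in sums]


def priority_vector(A, tol=1e-6, max_iter=50):
    """Repeatedly square A until the normalized row sums stop changing."""
    M = A
    previous = normalized_row_sums(M)
    for _ in range(max_iter):
        M = matmul(M, M)
        scale = sum(sum(row) for row in M)  # rescale to avoid overflow
        M = [[x / scale for x in row] for row in M]
        current = normalized_row_sums(M)
        if max(abs(c - p) for c, p in zip(current, previous)) < tol:
            break
        previous = current
    return current


def consistency(A, w):
    """Return (lambda_max, CI, CR) for matrix A and priority vector w."""
    n = len(A)
    if n <= 2:
        return float(n), 0.0, 0.0  # 1x1 and 2x2 reciprocal matrices are always consistent
    Aw = [sum(A[i][j] * w[j] for j in range(n)) for i in range(n)]
    lambda_max = sum(Aw[i] / w[i] for i in range(n)) / n
    ci = (lambda_max - n) / (n - 1)
    return lambda_max, ci, ci / RANDOM_INDEX[n]


w = priority_vector(A)
lambda_max, ci, cr = consistency(A, w)
print("weights:", [round(x, 3) for x in w])
print(f"lambda_max={lambda_max:.3f}  CI={ci:.3f}  CR={cr:.3f}")
```

For this matrix roughly two thirds of the weight goes to biodiversity conservation, followed by water, timber, and soil protection, and the CR falls below 0.10, so these judgments would pass the usual threshold.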

Data Presentation and Visualization

Structured Data Tables for Results Communication

Effective presentation of AHP results requires clear tabular formats that enable easy comparison of priorities across stakeholder groups and scenarios. The following table structure is recommended for ecosystem services applications:

Table: Ecosystem Services Priority Weights from AHP Analysis (Yalnızçam Case Study) [28]

| Ecosystem Service | Priority Weight | Stratification Percentage | Dominant Stakeholder Perspective |
|---|---|---|---|
| Biodiversity Conservation | 0.425 | 78.5% | Ecological Integrity |
| Water Protection | 0.215 | 13.3% | Public Health & Safety |
| Timber Production | 0.185 | 7.9% | Economic Sustainability |
| Soil Protection | 0.095 | 0.04% | Long-term Productivity |
| Climate Regulation | 0.045 | 0% | Global Environmental Values |
| Eco-tourism | 0.025 | 0% | Recreational & Cultural Values |
| Non-wood Forest Products | 0.010 | 0% | Local Livelihoods |

AHP Workflow and Hierarchy Visualization

Figure: AHP Implementation Workflow

Figure: Ecosystem Services Decision Hierarchy

The Scientist's Toolkit: Essential Research Reagents and Materials

Methodological and Analytical Tools

Table: Essential Research Reagents and Solutions for AHP Implementation

| Tool/Resource | Function | Application Context |
|---|---|---|
| Pairwise Comparison Survey Instrument | Standardized data collection format for stakeholder judgments | Eliciting consistent preference ratings across all hierarchy elements |
| AHP Calculation Software (Expert Choice, Super Decisions, R packages) | Matrix computation and priority weight derivation | Performing eigenvalue calculations and consistency verification |
| Consistency Validation Protocol | Quality control for stakeholder responses | Identifying and addressing logically inconsistent judgments |
| Stakeholder Analysis Framework | Classification and weighting of different stakeholder groups | Ensuring representative inclusion of diverse perspectives |
| Spatial Analysis Tools (GIS) | Mapping and visualization of AHP results | Translating priority weights into spatial management zones |
| Sensitivity Analysis Protocol | Testing robustness of results to judgment variations | Assessing stability of priorities under different scenarios |

Advanced Considerations and Methodological Refinements

Recent research in multi-criteria decision making has highlighted approaches that go "beyond consistency" to address the challenges of intransitive preferences in real-world applications [29]. The skew-symmetric bilinear representation offers an alternative mathematical framework for modeling situations where stakeholder preferences may not be perfectly consistent, yet still contain valuable information for decision-making [29]. This is particularly relevant in complex ecosystem services evaluations where different stakeholder groups may have fundamentally different value systems that lead to apparently inconsistent preference structures.

For researchers applying AHP in contested environmental decision contexts, it is advisable to supplement traditional consistency measures with qualitative analysis of the underlying reasons for inconsistency. This may involve facilitated discussions with stakeholders to explore the value tensions that manifest as mathematical inconsistencies in the pairwise comparison matrices, potentially leading to richer insights and more nuanced management recommendations.
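One way to prepare such a discussion is to point a stakeholder to the judgment that departs most from the weights implied by their whole matrix. The sketch below is one common heuristic, not a method from the cited sources: it derives weights with the row geometric mean (a simple alternative to the eigenvector method) and, for each judgment a_ij, measures |ln(a_ij · w_j / w_i)|, which is zero when the judgment agrees exactly with the implied ratio w_i / w_j. The 4 x 4 matrix is hypothetical and deliberately contains one contradictory entry.

```python
from math import log, prod


def geometric_mean_weights(A):
    """Priority weights from the normalized geometric mean of each row."""
    n = len(A)
    g = [prod(row) ** (1 / n) for row in A]
    total = sum(g)
    return [x / total for x in g]


def most_inconsistent_judgment(A, labels):
    """Find the upper-triangle judgment furthest from the implied ratio w_i / w_j."""
    w = geometric_mean_weights(A)
    n = len(A)
    worst = None
    for i in range(n):
        for j in range(i + 1, n):
            implied = w[i] / w[j]
            deviation = abs(log(A[i][j] / implied))
            if worst is None or deviation > worst["log_deviation"]:
                worst = {
                    "pair": (labels[i], labels[j]),
                    "stated": A[i][j],
                    "implied": implied,
                    "log_deviation": deviation,
                }
    return worst


labels = ["Biodiversity", "Water", "Timber", "Soil"]
# Hypothetical judgments: consistent except Water vs Soil, entered as 1/4
# instead of the 4 implied by Water = 2 x Timber and Timber = 2 x Soil.
A = [
    [1, 2, 4, 8],
    [1 / 2, 1, 2, 1 / 4],
    [1 / 4, 1 / 2, 1, 2],
    [1 / 8, 4, 1 / 2, 1],
]
print(most_inconsistent_judgment(A, labels))
```

Here the flagged pair is Water versus Soil: the stated 1/4 conflicts with the parity implied by the rest of the matrix, which gives facilitators a concrete question about the value tension behind that answer.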

Data Management and Structural Considerations

Proper data structuring is essential for effective AHP implementation. Research data should be organized in a structured format with clear rows and columns, where each row represents a single record and each column represents an attribute or variable [30] [31]. This tabular structure enables efficient computation of priority weights and consistency ratios. For ecosystem services applications, it is critical to define the granularity of the data clearly, that is, what each record represents (e.g., individual stakeholder responses, aggregated group preferences, or spatial management units) [30].
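As an illustration of judgment-level granularity, the sketch below stores each record as one pairwise judgment by one stakeholder, with the stakeholder group kept as an attribute for later aggregation. The field names and records are hypothetical. Because each stakeholder's matrix needs every element pair exactly once, a simple completeness check can run directly on this structure before any weights are computed.

```python
from collections import defaultdict
from itertools import combinations

ELEMENTS = ["Biodiversity", "Water", "Timber"]

# Hypothetical long-format table: one record = one judgment by one stakeholder.
records = [
    {"stakeholder": "S1", "group": "Forestry", "row": "Biodiversity", "col": "Water", "value": 3.0},
    {"stakeholder": "S1", "group": "Forestry", "row": "Biodiversity", "col": "Timber", "value": 5.0},
    {"stakeholder": "S1", "group": "Forestry", "row": "Water", "col": "Timber", "value": 2.0},
    {"stakeholder": "S2", "group": "Community", "row": "Biodiversity", "col": "Water", "value": 1 / 3},
    {"stakeholder": "S2", "group": "Community", "row": "Water", "col": "Timber", "value": 4.0},
]


def missing_judgments(records, elements):
    """Report, per stakeholder, the element pairs that have no recorded judgment."""
    required = set(combinations(elements, 2))
    seen = defaultdict(set)
    for rec in records:
        pair = (rec["row"], rec["col"])
        # Accept either orientation, since a_ji = 1 / a_ij.
        if pair not in required:
            pair = pair[::-1]
        seen[rec["stakeholder"]].add(pair)
    return {s: sorted(required - pairs) for s, pairs in seen.items()}


print(missing_judgments(records, ELEMENTS))
# {'S1': [], 'S2': [('Biodiversity', 'Timber')]}
```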

When integrating AHP with spatial decision support systems, researchers should maintain clear documentation of data sources, transformation methods, and weighting procedures. This ensures transparency and reproducibility in how stakeholder-derived preference weights are translated into spatial management recommendations. The structured data format also facilitates sensitivity analysis by enabling systematic variation of input parameters to test the robustness of resulting priorities and management zones.
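A minimal one-at-a-time sensitivity analysis, assuming a single criterion weight is shifted while the others are rescaled proportionally so that the weights still sum to 1. The weights, spatial units, and local priorities are hypothetical; the sketch asks whether the ranking of alternatives survives each perturbation.

```python
# Illustrative criterion weights and local priorities of three hypothetical spatial units.
weights = {"Biodiversity": 0.5, "Water": 0.3, "Timber": 0.2}
local = {
    "Biodiversity": {"Unit A": 0.6, "Unit B": 0.3, "Unit C": 0.1},
    "Water": {"Unit A": 0.2, "Unit B": 0.5, "Unit C": 0.3},
    "Timber": {"Unit A": 0.1, "Unit B": 0.2, "Unit C": 0.7},
}


def perturb(weights, name, delta):
    """Shift one weight by delta and rescale the rest so the total stays 1."""
    new = weights[name] + delta
    rest = 1.0 - weights[name]
    if not 0.0 <= new <= 1.0 or rest <= 0.0:
        raise ValueError("perturbation leaves the valid weight range")
    return {k: new if k == name else v * (1.0 - new) / rest for k, v in weights.items()}


def ranking(weights, local):
    """Alternatives ordered by weighted-sum global priority, highest first."""
    units = next(iter(local.values()))
    scores = {u: sum(weights[c] * local[c][u] for c in weights) for u in units}
    return sorted(scores, key=scores.get, reverse=True)


for delta in (-0.2, -0.1, 0.0, 0.1, 0.2):
    print(f"Biodiversity {delta:+.1f}:", ranking(perturb(weights, "Biodiversity", delta), local))
```

With these inputs, lowering the biodiversity weight by 0.1 already moves Unit B ahead of Unit A, a signal that the resulting zoning depends strongly on that judgment and deserves closer scrutiny with stakeholders.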