Bridging the Gap: A Comparative Analysis of Ecosystem Services Models and Stakeholder Perceptions for Better Environmental Decision-Making

Abstract

This article synthesizes current research on the critical comparison between data-driven ecosystem services (ES) models and stakeholder perceptions. It explores the foundational reasons for the divergence between these knowledge systems, reviews methodological approaches for their integration, identifies common challenges in implementation, and assesses validation techniques. Aimed at researchers and environmental professionals, this review provides a comprehensive framework for reconciling scientific models with human perspectives to enhance the reliability and applicability of ES assessments in policy and management, with illustrative parallels for biomedical research contexts.

The Great Divide: Understanding the Fundamental Gap Between Models and Human Perception

Ecosystem services (ES) frameworks are vital tools in international environmental policy, informing initiatives from the Sustainable Development Goals to the Convention on Biological Diversity [1]. However, the effective implementation of these frameworks depends on understanding how different stakeholders perceive and value ecosystem services within their specific socio-ecological contexts. This comparative guide analyzes two distinct case studies—from rural Laos to coastal Portugal—that document significant divergence in stakeholder perceptions and methodological approaches. This analysis provides researchers and policymakers with experimental protocols, quantitative data, and visualization tools essential for navigating the complexities of ecosystem service assessment across different geographical and cultural settings.

Sangthong District, Laos: Multi-Land Use Ecosystem Service Assessment

Experimental Objectives and Design: This study employed a sequential, two-step survey methodology to examine ES perceptions and priorities across three land-use systems in a rural Southeast Asian context [1]. The research aimed to: (RQ1) assess how ES are perceived and prioritized within bamboo forests, rice paddies, and teak plantations; (RQ2) identify significant differences between community members and expert groups; and (RQ3) analyze how differentiation patterns manifest across land uses [1].

Participant Selection and Stratification: Researchers classified respondents into community and expert groups. The community group comprised residents directly or indirectly affected by the targeted land uses, while the expert group included personnel from public institutions and academia in forestry, agriculture, and environment fields [1]. The study recruited 500 community members and 30 experts from five villages in Sangthong District, approximately 55 km northwest of Vientiane Capital, Laos [1]. Sampling accounted for village distribution, gender, age, and education levels to ensure representative data collection.

Land Use and Ecosystem Service Selection: Three land-use types were selected based on their socioeconomic importance: bamboo forests (traditional NTFP-based livelihoods), rice paddies (agroforestry interface), and teak plantations (commercial forestry) [1]. Through preliminary interviews, translation/back-translation processes, and expert panel review, researchers identified 15 ecosystem services across four categories: six provisioning, five regulating, two cultural, and two habitat services [1].

Ria de Aveiro, Portugal: Coastal Lagoon Decadal Change Analysis

Research Objectives and Temporal Framework: This study employed a qualitative focus group methodology to examine stakeholder views on decadal changes in the Ria de Aveiro coastal lagoon ecosystem on Portugal's Atlantic coast [2]. The research aimed to document evolving perceptions, identify persistent and emerging challenges, and analyze how local knowledge at ecological, social, political, and economic levels has changed over a ten-year period, potentially influencing community support for lagoon governance [2].

Participant Recruitment and Focus Group Structure: The study organized seven focus groups with 42 stakeholders from coastal parishes to maintain identical geographical representation with research conducted a decade earlier [2]. Participants represented diverse groups interested in or affected by management options in the lagoon system, including local residents, hunters, fishermen, and academic researchers [2]. All participants were required to have lived in the region for at least the ten years covered by the study, ensuring deep contextual knowledge of ecosystem changes [2].

Data Collection and Spatial Analysis: Focus groups followed a semi-structured format with a common discussion script to enable cross-group comparisons while allowing discussions to flow according to participants' experiences [2]. Discussions were complemented with spatialization of both areas of concern and areas considered positive or beneficial, supported by maps of Ria de Aveiro to enhance data specificity and contextual relevance [2].

Experimental Protocols and Methodological Frameworks

Two-Step Survey Methodology: Laos Case Study

The Laos study implemented a structured two-phase approach to data collection conducted over six weeks between November and December 2020 [1]:

Step 1: Perception Assessment and ES Selection

- Instrumentation: Structured, interviewer-assisted paper questionnaires translated into Lao and back-translated to English for accuracy [1].

- Administration: Face-to-face interviews conducted over three weeks (10-30 November 2020) by trained interviewers with prior ES field experience [1].

- Measurement: Assessed current use level of ES for each land-use type on a four-point scale (1 = no use; 4 = high use) [1].

- Analysis Protocol: Responses of 1 or 2 were classified as low use; 3 or 4 as high use. Ecosystem services rated as high use by ≥50% of respondents advanced to Step 2 for priority assessment [1].
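
The Step 1 screening rule described above can be sketched in Python. This is a minimal illustration rather than the study's own code: the function name `select_services`, the two constants, and the example ratings are assumptions introduced here, and the sketch assumes the ≥50% threshold is computed over all respondents who rated a given service.

```python
HIGH_USE_MIN = 3        # ratings of 3 or 4 count as "high use"
ADVANCE_SHARE = 0.50    # share of respondents needed to advance to Step 2


def select_services(ratings):
    """Return the services rated high use by at least half of respondents.

    `ratings` maps a service name to the list of 1-4 ratings it received.
    """
    selected = []
    for service, scores in ratings.items():
        if not scores:
            continue  # no responses, so nothing to evaluate
        if any(s not in (1, 2, 3, 4) for s in scores):
            raise ValueError(f"rating outside the 1-4 scale for {service!r}")
        high_share = sum(s >= HIGH_USE_MIN for s in scores) / len(scores)
        if high_share >= ADVANCE_SHARE:
            selected.append(service)
    return selected


# Hypothetical ratings for one land-use type (not data from the study).
example_ratings = {
    "food": [4, 3, 3, 2],            # 3 of 4 respondents report high use
    "raw materials": [3, 2, 1, 4],   # exactly half report high use
    "recreation": [1, 2, 2, 3],      # 1 of 4 respondents reports high use
}
print(select_services(example_ratings))
```

With these made-up ratings, "food" and "raw materials" meet the threshold and "recreation" does not. Whether a share of exactly 50% should advance follows from the "≥50%" wording in the protocol.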

Step 2: Priority Evaluation

- Instrumentation: Priority allocation tasks using 100-point distribution in 10-point increments for ES selected in Step 1 [1].

- Administration: Interviews conducted over three weeks in December 2020 with the same respondents as Step 1 [1].

- Quality Assurance: Interviewers provided detailed instructions on allocation methods using sample questionnaires, employed repeated read-backs, verbal reviews, and probing questions to identify and correct biases or misunderstandings [1].
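
The allocation rules in Step 2 (100 points, distributed in 10-point increments) lend themselves to a simple consistency check of the kind interviewers might apply during read-backs. The sketch below is an assumption-laden illustration: `validate_allocation` is a hypothetical helper, and it assumes that assigning zero points to a service is allowed, which the source does not state.

```python
def validate_allocation(allocation, total=100, step=10):
    """Return a list of problems found in one respondent's allocation.

    `allocation` maps each service to the points a respondent gave it.
    An empty list means the allocation follows the task rules.
    """
    problems = []
    for service, points in allocation.items():
        if points < 0:
            problems.append(f"{service}: negative points")
        elif points % step != 0:
            problems.append(f"{service}: {points} is not a multiple of {step}")
    allocated = sum(allocation.values())
    if allocated != total:
        problems.append(f"points sum to {allocated}, expected {total}")
    return problems


# Hypothetical responses (not data from the study).
valid = {"food": 50, "raw materials": 30, "freshwater": 20}
invalid = {"food": 55, "raw materials": 30, "freshwater": 20}

print(validate_allocation(valid))
print(validate_allocation(invalid))
```

Returning a list of problems instead of raising an error keeps the check usable in the field, where an interviewer would want to see every issue at once and correct it with the respondent.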

Field Administration and Quality Control: The research team implemented rigorous quality assurance measures including a three-day training session for interviewers on ES concepts and terminology (4-6 November 2020) [1]. The operational procedure included repeated field validations and verbal reviews to enhance data accuracy and reliability in a data-scarce environment [1].

Focus Group Methodology: Portugal Case Study

The Portugal study employed a qualitative, participatory approach to capture decadal changes in stakeholder perceptions [2]:

Focus Group Composition and Structure:

- Seven focus groups were structured to mirror the geographical representation of research conducted a decade earlier, with specific groups including: FG1—Union of Parishes of Glória and Vera Cruz; FG2—University of Aveiro; FG3—São Jacinto Parish; FG4—Gafanha da Nazaré Parish; FG5—Torreira Parish; FG6—Murtosa Parish; and FG7—Hunters and Fishermen's Association of Avanca [2].

- Sessions were organized with support from the president of each parish, with participants required to provide free and informed consent and authorize audio recording of sessions [2].

Data Collection Protocol:

- Focus groups followed a semi-structured format with a common discussion script to enable cross-group comparisons while allowing organic discussion flow based on participant experiences [2].

- Discussions specifically addressed changes over the previous decade, including positive developments, persistent concerns, and emerging challenges in the Ria de Aveiro ecosystem [2].

- Spatial mapping exercises complemented discussions, allowing participants to identify specific areas of concern and positive developments within the lagoon system [2].

Results and Data Presentation

Quantitative Data: Laos Case Study Findings

Table 1: Ecosystem Service Perception and Priority Allocation in Laos Land-Use Systems

| Land Use Type | Stakeholder Group | Primary ES Priorities | Priority Allocation Range | Perception Threshold |
|---|---|---|---|---|
| Bamboo Forest | Community Members | Raw Materials, Freshwater | 60-70 points (combined) | ≥50% high use recognition |
| Bamboo Forest | Experts | Regulating Services, Habitat Provision | 55-65 points (combined) | ≥50% high use recognition |
| Rice Paddy | Community Members | Food Provision, Medicinal Resources | 65-75 points (combined) | ≥50% high use recognition |
| Rice Paddy | Experts | Climate Regulation, Biodiversity | 60-70 points (combined) | ≥50% high use recognition |
| Teak Plantation | Community Members | Timber/Bioenergy, Cultural Services | 55-65 points (combined) | ≥50% high use recognition |
| Teak Plantation | Experts | Carbon Sequestration, Hazard Regulation | 60-70 points (combined) | ≥50% high use recognition |

Table 2: Stakeholder Characteristics and Sample Distribution in Laos Study

| Characteristic | Community Group | Expert Group | Total Sample |
|---|---|---|---|
| Sample Size | 500 participants | 30 participants | 530 participants |
| Gender Distribution | Approximately 45% female, 55% male (across villages) | Not specified | Not specified |
| Education Levels | Varied, with limited formal education in some villages | Advanced degrees in relevant fields | Mixed educational backgrounds |
| Data Collection Period | November-December 2020 | November-December 2020 | 6-week field study |

The data revealed systematic divergence in priorities rooted in differing knowledge systems. Community members, grounded in traditional ecological knowledge (TEK), prioritized tangible provisioning and cultural services (e.g., food and raw materials), while experts emphasized regulating services (e.g., carbon sequestration and hazard regulation) and habitat services (e.g., biodiversity and habitat provision) [1]. Distinct "ES bundles" emerged by land use: bamboo (raw materials and freshwater), rice (food and medicine), and teak (timber/bioenergy and regulating services) [1].

Qualitative Data: Portugal Case Study Findings

Table 3: Decadal Changes in Stakeholder Perceptions of Ria de Aveiro Coastal Lagoon

| Change Category | Specific Findings | Stakeholder Consensus Level | Temporal Pattern |
|---|---|---|---|
| Positive Developments | Increased environmental awareness, Improved environmental status, Decreased illegal fishing | High agreement across focus groups | Progressive improvement over decade |
| Persistent Concerns | Lack of efficient management body, Hydrodynamic regime pressures, Native species disappearance | High agreement across focus groups | Consistent concern over decade |
| Emerging Challenges | Invasive alien species increase, Abandonment of traditional activities, Salt pan degradation | Moderate to high agreement | Worsening over recent years |
| Management Gaps | Insufficient stakeholder integration, Limited transdisciplinary approaches, Power imbalances in decision-making | High agreement among researchers and community representatives | Persistent structural issue |

The study identified three key positive changes: increased environmental awareness, a positive trajectory in the environmental status of Ria de Aveiro, and a decrease in illegal fishing activities [2]. Persistent and emerging concerns included the lack of an efficient management body for Ria de Aveiro, pressures related to changes in the hydrodynamic regime of the lagoon, the disappearance of native species, the increase in invasive alien species, the abandonment of traditional activities, and the degradation and lack of maintenance of salt pans [2].

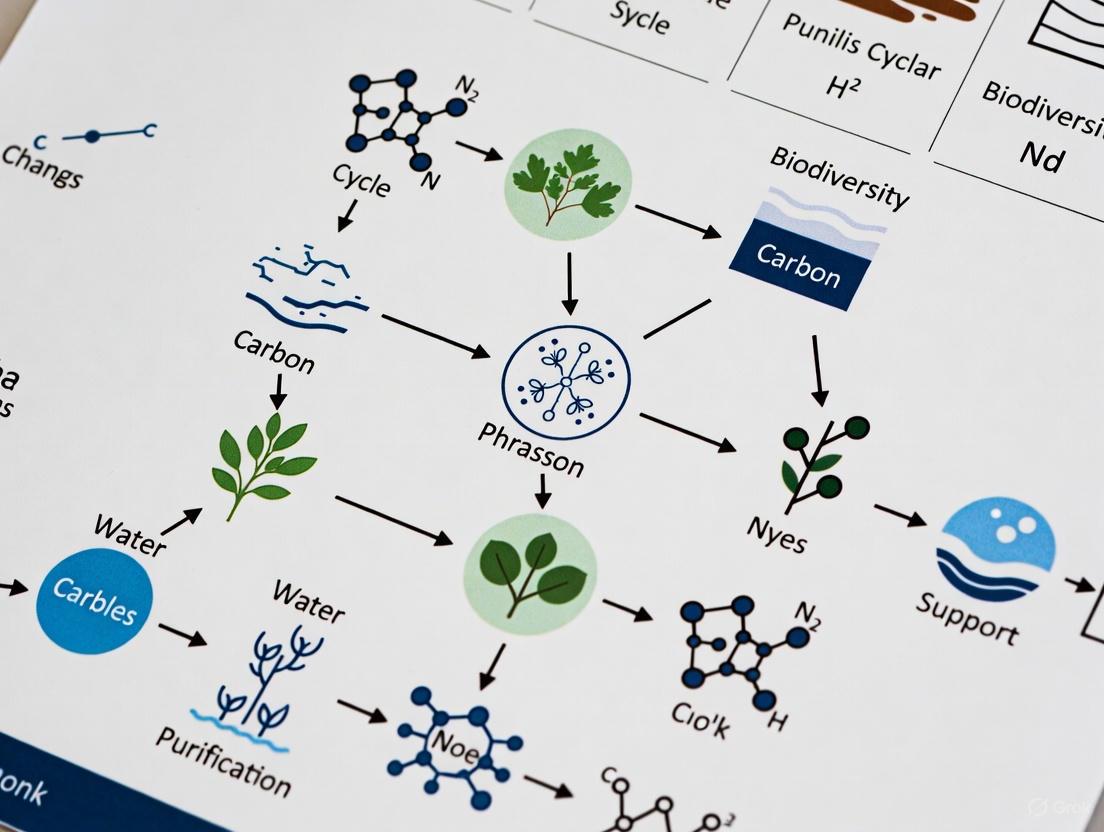

Visualization of Research Methodologies

Laos Study Research Workflow

[Figure: Laos research methodology flow]

Portugal Study Research Workflow

[Figure: Portugal research methodology flow]

Stakeholder Divergence Patterns

[Figure: Stakeholder divergence patterns]

Essential Research Materials and Instruments

Table 4: Essential Research Materials for Ecosystem Services Perception Studies

| Material/Instrument | Application Context | Function and Specification |
|---|---|---|
| Structured Questionnaires | Laos Case Study | Bilingual instruments (Lao/English) with back-translation validation for cross-cultural reliability |
| Four-Point Perception Scale | Laos Case Study | Standardized measurement: 1 = no use to 4 = high use with predetermined ≥50% threshold for advancement |
| 100-Point Allocation System | Laos Case Study | Quantitative priority assessment with 10-point increments for relative importance weighting |
| Focus Group Discussion Guides | Portugal Case Study | Semi-structured scripts with spatial mapping components for geographical reference |
| Audio Recording Equipment | Portugal Case Study | Documentation of verbal responses for qualitative analysis and thematic coding |
| GIS Mapping Resources | Both Studies | Spatial representation of land use changes and stakeholder-identified areas of concern |
| Training Manuals for Interviewers | Laos Case Study | Standardized protocols for ES concept explanation and data collection procedures |

Discussion and Comparative Analysis

The case studies from Laos and Portugal demonstrate remarkable convergence in documenting stakeholder divergence despite their geographical and contextual differences. Both studies reveal systematic patterns of perception gaps between local communities and expert groups, though manifested through different methodological approaches and research designs.

The Laos study provides a quantitative framework for assessing perception-priority gaps across multiple land-use systems, revealing that divergence is not merely a binary community-expert divide but varies significantly across different ecosystem types [1]. The finding that communities prioritized tangible provisioning services while experts emphasized regulating services reflects fundamental differences in knowledge systems and immediate needs [1].

The Portugal study offers longitudinal insights into how these perception gaps persist or evolve over time, highlighting the challenges of integrated ecosystem management when local knowledge is not fully incorporated into governance structures [2]. The documented abandonment of traditional activities and the persistent management gaps, despite increased environmental awareness, suggest that perception convergence alone is insufficient without institutional mechanisms for knowledge integration.

Both studies underscore the critical importance of methodological choices in documenting divergence. The Laos approach, with its standardized thresholds and quantitative allocation tasks, enables precise comparison across stakeholder groups and land uses [1]. The Portugal methodology, with its qualitative focus and temporal dimension, captures the evolving nature of stakeholder perceptions and the complex socio-ecological dynamics influencing them [2].

These case studies provide robust evidence that stakeholder divergence in ecosystem service perception is not merely an academic concern but has real-world implications for environmental management and policy effectiveness. The consistent findings across diverse contexts suggest that this divergence represents a fundamental challenge in ecosystem governance rather than a context-specific anomaly.

For researchers and practitioners, these studies highlight the necessity of developing more sophisticated methodological frameworks that can capture the multidimensional nature of stakeholder perceptions across different spatial and temporal scales. The experimental protocols and visualization tools presented in this comparison guide provide a foundation for such work, offering replicable approaches for documenting and analyzing perception gaps.

Future research in this field should focus on integrating quantitative and qualitative approaches, developing longitudinal tracking systems for perception evolution, and creating more effective knowledge co-production frameworks that bridge the documented gaps between community and expert perspectives. Only through such integrated approaches can ecosystem service frameworks fully deliver on their potential to support sustainable environmental management that respects both ecological integrity and human wellbeing.

In environmental research, particularly in ecosystem services and stakeholder perceptions, two distinct forms of knowledge production compete and complement one another: scientific generalization and contextualized local knowledge. Scientific generalization seeks to identify universal patterns and principles that can be applied across diverse contexts through standardized methodologies and replicable procedures [3]. It operates on the principle that knowledge should be transferable beyond the specific conditions of its generation, relying on representative sampling, statistical analysis, and controlled experimentation to produce findings considered generalizable across populations and settings [4] [5]. In contrast, contextualized local knowledge represents the specialized, place-based understanding possessed by communities regarding the environmental conditions, social structures, resource dynamics, and historical practices of a specific geographic area [6] [7]. This knowledge is often rooted in cultural traditions, historical experiences, and day-to-day interactions with the local ecosystem, making it inherently specific, experiential, and difficult to codify or transfer without losing essential context [7].

The tension and synergy between these knowledge systems are particularly salient in fisheries management and ecosystem services assessment, where climate change vulnerabilities demand both broad predictive capacity and localized understanding. As research in Chinese fisheries demonstrates, the complementary nature of these diverse knowledge systems is increasingly recognized as essential for addressing complex environmental challenges [8].

Theoretical Foundations and Epistemological Frameworks

Principles of Scientific Generalization

Scientific generalization operates through systematic approaches that produce knowledge meeting four key criteria: reliability (reproducible results), precision (clearly defined concepts), falsifiability (testable hypotheses), and parsimony (preference for simpler explanations) [3]. The process relies on methodological rigor to ensure that findings from studied samples can be extended to broader populations, with generalizability determined by how representative the sample is of the target population—a characteristic known as external validity [4].

In quantitative research, statistical generalization enables researchers to develop general knowledge that applies to all units of a population while studying only a subset of these units [4]. This requires samples that accurately mirror characteristics of the population and sufficient sample sizes to yield statistically significant results [4] [5]. The potential outcomes framework in epidemiology further formalizes this approach, specifying identification conditions sufficient for generalizability, including conditional exchangeability, positivity, consistent treatment versions, no interference, and no measurement error [5].

Nature of Contextualized Local Knowledge

Contextualized local knowledge encompasses the understanding, skills, and insights that people in a specific community possess about their environment and social practices [7]. This knowledge is dynamic and evolves as communities adapt to changing environmental, social, and economic conditions [7]. Unlike scientific knowledge, which seeks to isolate variables, local knowledge embraces complexity and interconnectedness, recognizing the multifaceted relationships between living and physical entities within specific cultural and historical contexts [8] [6].

In fisheries research, local knowledge is often categorized as either institutional expert knowledge (held by fisheries experts, managers, and researchers, and accumulated through professional experience) or local fishermen's knowledge (derived from place-based fishing communities through on-the-water observations and intergenerational experience) [8]. Both forms complement scientific data: they address gaps in biological, socioeconomic, and management information and offer long historical baselines that may exceed scientific monitoring records [8].

Methodological Approaches and Experimental Protocols

Data Collection and Validation Methods

Table 1: Methodological Comparison of Knowledge Systems

| Methodological Aspect | Scientific Generalization | Contextualized Local Knowledge |
|---|---|---|
| Primary Data Sources | Standardized monitoring programs, sensor networks, controlled experiments, historical datasets | Personal observations, oral histories, traditional practices, intergenerational knowledge transfer |
| Sampling Approach | Probability sampling seeking statistical representativeness [4] | Purposive sampling of knowledgeable informants, community elders |
| Validation Methods | Statistical significance testing, peer review, replication studies | Triangulation across sources, community consensus, historical consistency |
| Temporal Framework | Discrete study periods, standardized intervals | Lifelong experience, intergenerational perspectives, cyclical time |
| Documentation Format | Quantitative datasets, published papers, technical reports | Stories, practices, rituals, place names, informal sharing |

Climate Vulnerability Assessment Protocol

Research on fisheries climate vulnerability provides a robust experimental protocol for comparing knowledge systems [8]. The methodology involves three parallel assessment approaches:

Desktop Scientific Research: Compiling and analyzing quantitative scientific data on species distribution, biological traits, climate exposure, and sensitivity indicators from existing literature and monitoring programs.

Expert Knowledge Surveys: Administering structured surveys to fisheries experts (managers, policy-makers, researchers, NGO representatives) to assess ecological and socioeconomic vulnerability based on professional experience and institutional knowledge.

Local Fishermen Interviews: Conducting semi-structured interviews with fishermen to document place-based observations, historical changes, and perceived vulnerabilities derived from direct interaction with marine ecosystems.

Each approach produces vulnerability scores for specific species and social components, which are then compared to identify points of convergence and divergence. The protocol systematically documents sources of discrepancy, including variations in familiarity with specific species, differences in assessment indicators, data and knowledge gaps, and uncertainties stemming from data quality and knowledge confidence [8].
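
The comparison step described above can be sketched as a small routine that flags where the three assessment approaches agree or disagree for each species. Everything specific in this sketch is an assumption made for illustration: the ordinal 1-4 score scale, the one-point `tolerance`, the source labels, the species names, and the function `compare_sources` are not taken from the cited protocol [8].

```python
def compare_sources(scores, expected_sources, tolerance=1):
    """Label each species as convergent or divergent across knowledge sources.

    `scores` maps species -> {source name -> ordinal vulnerability score}.
    A species is "convergent" when the gap between its highest and lowest
    score is within `tolerance`. Sources with no score are listed as missing,
    which makes data and knowledge gaps visible alongside disagreements.
    """
    report = {}
    for species, by_source in scores.items():
        values = list(by_source.values())
        missing = [s for s in expected_sources if s not in by_source]
        if len(values) < 2:
            spread, status = None, "insufficient data"
        else:
            spread = max(values) - min(values)
            status = "convergent" if spread <= tolerance else "divergent"
        report[species] = {"status": status, "spread": spread, "missing": missing}
    return report


# Hypothetical scores on an assumed 1 (low) to 4 (high) vulnerability scale.
SOURCES = ["desktop", "expert", "fishermen"]
example_scores = {
    "species A": {"desktop": 3, "expert": 3, "fishermen": 4},
    "species B": {"desktop": 1, "expert": 2, "fishermen": 4},
    "species C": {"desktop": 2, "expert": 2},  # no fishermen interviews
}

for species, result in compare_sources(example_scores, SOURCES).items():
    print(species, result)
```

Reporting missing sources separately from divergence mirrors the distinction the protocol draws between disagreement among knowledge systems and simple gaps in data or familiarity.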

Comparative Analysis: Strengths and Limitations

Performance Across Assessment Criteria

Table 2: Comparative Performance of Knowledge Systems in Environmental Assessments

| Assessment Criterion | Scientific Generalization | Contextualized Local Knowledge |
|---|---|---|
| Spatial Scalability | High - designed for broad application [4] [5] | Low - inherently place-specific [6] [7] |
| Temporal Depth | Limited to recorded data periods | Potentially centuries through intergenerational transfer |
| Contextual Sensitivity | Low - seeks to control for context | High - embedded in local context [6] |
| Implementation Cost | High - requires specialized equipment and personnel | Lower - utilizes existing community expertise |
| Adaptive Capacity | Slow - requires new studies and validation | Rapid - evolves with changing conditions [7] |
| Predictive Accuracy | Variable - strong for linear systems, weaker for complex systems | Strong for familiar local conditions, weaker for novel changes |
| Cultural Relevance | Neutral - aims for objectivity | High - integrated with cultural values and practices [7] |

Integration Challenges and Synergies

The integration of knowledge systems presents both challenges and opportunities. Research reveals that data-driven and knowledge-driven approaches can yield different results in climate vulnerability assessments, with discrepancies arising from multiple factors [8]. These include varying levels of individual familiarity with specific species, divergences in assessment indicators and scoring criteria, data and knowledge gaps regarding species biological traits and fisheries socioeconomics, and uncertainties stemming from data quality and knowledge confidence [8].

However, when effectively integrated, these knowledge systems create powerful synergies. Scientific data provides broad-scale patterns and mechanistic understanding, while local knowledge offers fine-grained contextual understanding, historical depth, and culturally appropriate management insights [8] [6]. This complementarity is particularly valuable in contexts where scientific data is limited or where management interventions require community acceptance and participation to succeed [7].

Visualization of Knowledge System Interactions

[Figure: Knowledge systems integration workflow]

Essential Research Materials

Table 3: Essential Research Materials for Knowledge Systems Integration

| Research Material | Primary Function | Knowledge System Application |
|---|---|---|
| Standardized Survey Instruments | Enable systematic data collection across diverse respondents | Facilitates comparison between scientific metrics and local perceptions [8] |
| Spatial Mapping Tools | Document and visualize geographic knowledge | Integrates scientific spatial data with local place-based knowledge |
| Structured Interview Protocols | Ensure consistent qualitative data collection | Captures local knowledge while maintaining research comparability [8] |
| Climate Vulnerability Framework | Provide standardized assessment structure | Enables parallel evaluation using different knowledge sources [8] |
| Statistical Analysis Software | Analyze quantitative datasets | Tests correlations between scientific measurements and local observations |
| Cultural Domain Analysis Tools | Identify shared knowledge structures within communities | Documents organization of local ecological knowledge |
| Participatory Mapping Materials | Engage communities in spatial knowledge documentation | Bridges technical cartography with local spatial understanding |

The comparison between scientific generalization and contextualized local knowledge reveals a fundamental complementarity rather than opposition. While scientific generalization provides powerful tools for identifying broad patterns and developing predictive models, contextualized local knowledge offers essential insights into specific contexts, historical dynamics, and culturally appropriate implementation [8] [7]. Research in fisheries climate vulnerability demonstrates that both knowledge systems have distinct strengths and limitations, with their integration offering the most promising path toward effective environmental management [8].

Future research should develop more sophisticated methodologies for knowledge integration, particularly addressing challenges of scale translation, validation frameworks for local knowledge, and institutional mechanisms for equitable knowledge co-production. Such approaches are essential for addressing complex socio-ecological challenges where both general principles and contextual specificity are required for effective intervention.

Ecosystem services (ES) are the benefits people obtain from ecosystems, a concept formally defined by the Millennium Ecosystem Assessment (MA) and central to environmental policy and sustainable development [9] [10]. The MA classifies these services into four interconnected categories: provisioning services (tangible goods like food, water, and timber); regulating services (benefits from the regulation of natural processes such as climate regulation and water purification); cultural services (non-material benefits like recreation and spiritual experiences); and supporting services (underlying processes like nutrient cycling necessary for producing all other services) [9] [10] [11]. This classification provides a critical framework for understanding how human well-being depends on natural systems.

However, a uniform valuation of these services does not exist across different segments of society. Stakeholder perceptions of ES importance vary dramatically, creating a fundamental divergence in conservation and land-use priorities [1] [12]. Research increasingly shows that these perceptions split along a clear fault line: local communities often prioritize tangible provisioning and cultural services directly linked to their livelihoods and daily lives, while scientific experts and policymakers typically emphasize regulating and habitat services with broader, long-term regional or global benefits [1]. This article compares these divergent priorities through the lens of empirical studies, detailing the methodologies, data, and implications for ecosystem service models and management.

Experimental Evidence of Divergent Priorities

Case Study in Rural Laos: Community vs. Expert Priorities

A 2025 study in Sangthong District, Laos, provides a quantitative comparison of ES priorities between rural community members and experts across three land-use types: bamboo forests, rice paddies, and teak plantations [1].

Experimental Protocol:

- Objective: To identify and compare ES perceptions and priorities between community members (n=500) and experts (n=30) [1].

- Methodology: A two-step, structured, interviewer-assisted paper questionnaire was used [1].

- Perception Assessment (Step 1): Respondents rated their use level of 15 pre-identified ES for each land-use type on a four-point scale. Services rated as "high use" by ≥50% of respondents advanced to the next step [1].

- Priority Evaluation (Step 2): Respondents allocated 100 points across the ES selected in Step 1 to indicate their relative importance [1].

- Data Analysis: Mean priority scores were calculated for each service by stakeholder group and land-use type. The study employed statistical analysis to confirm the significance of differences between groups [1].
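
The source does not name the statistical test used to compare groups. As one possible way to check whether a difference in mean priority points between community members and experts is larger than chance would produce, the sketch below swaps in a two-sided permutation test on the difference in means. The function `permutation_test`, its parameters, and the point allocations are all hypothetical and are not drawn from the study.

```python
import random
from statistics import mean


def permutation_test(group_a, group_b, n_resamples=2000, seed=0):
    """Estimate a two-sided p-value for the difference in group means.

    Group labels are shuffled repeatedly; the p-value is the share of
    shuffles whose absolute mean difference is at least as large as the
    observed one.
    """
    rng = random.Random(seed)  # fixed seed so the sketch is reproducible
    observed = abs(mean(group_a) - mean(group_b))
    pooled = list(group_a) + list(group_b)
    n_a = len(group_a)
    extreme = 0
    for _ in range(n_resamples):
        rng.shuffle(pooled)
        diff = abs(mean(pooled[:n_a]) - mean(pooled[n_a:]))
        if diff >= observed:
            extreme += 1
    # The add-one correction keeps the estimate strictly above zero.
    return (extreme + 1) / (n_resamples + 1)


# Hypothetical points allocated to one service (not data from the study).
community_points = [30, 40, 30, 20, 30, 40, 30, 20]
expert_points = [10, 10, 20, 10, 0, 10]

p_value = permutation_test(community_points, expert_points)
print(f"estimated p-value: {p_value:.4f}")
```

A permutation test is used here because allocations in 10-point steps are discrete and bounded, so it avoids distributional assumptions. With very unequal group sizes such as 500 and 30, any test should be read alongside the size of the difference, not the p-value alone.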

Key Findings: The research revealed a systematic divergence in priorities rooted in differing knowledge systems and immediate needs. The results are summarized in Table 1 below.

Table 1: Priority Scores for Ecosystem Services in Rural Laos by Stakeholder Group [1]

| Ecosystem Service Category | Specific Service | Community Priority Score (Mean Points) | Expert Priority Score (Mean Points) |
|---|---|---|---|
| Provisioning Services | Food | 28.2 | 12.5 |
| Provisioning Services | Raw Materials | 25.1 | 11.8 |
| Provisioning Services | Fresh Water | 18.5 | 14.3 |
| Regulating Services | Carbon Sequestration | 4.3 | 22.7 |
| Regulating Services | Hazard Regulation | 5.1 | 19.4 |
| Regulating Services | Water Purification | 7.2 | 16.1 |
| Habitat Services | Biodiversity / Habitat Provision | 3.8 | 18.2 |

The data show that communities, grounded in traditional ecological knowledge, allocated over 70% of their total points to provisioning services such as food and raw materials. In contrast, experts assigned over 55% of their points to regulating and habitat services, such as carbon sequestration and biodiversity preservation [1]. The study also identified distinct "ES bundles" for each land-use type, reinforcing that priorities are context-dependent [1].

Case Study in Arid Northwest China: Pastoralist vs. Agriculturalist Perceptions

A 2024 study in the arid desert region of Northwest China further illuminates how livelihood strategies shape ES perceptions, even within local communities.

Experimental Protocol:

- Objective: To investigate how local residents with different livelihoods identify, perceive, and value desert ES, and to assess the impact of land-use change [12].

- Methodology: The study utilized social science research methods, primarily in-depth surveys and interviews, conducted over multiple field seasons (2021-2023) [12].

- Participants: Respondents (n=128) were categorized into two groups based on livelihood strategy: a pastoralist group (PPG) and an agriculturalist group (APG).

Key Findings: While both groups in this arid environment identified water as their top priority, their perceptions of how land-use change impacted ES availability diverged significantly, as shown in Table 2.

Table 2: Perceived Changes in Ecosystem Service Availability Following Land-Use Change in Northwest China [12]

| Ecosystem Service | Livelihood Group | Percentage Reporting Significant Decrease |

|---|---|---|

| Herbs | Pastoralist (PPG) | 78.7% |

| Herbs | Agriculturalist (APG) | 62.7% |

| Water | Pastoralist (PPG) | 55.7% |

| Water | Agriculturalist (APG) | Not a top-reported concern |

| Fodder | Pastoralist (PPG) | 50.8% |

| Fodder | Agriculturalist (APG) | Not a top-reported concern |

| Sense of Belonging | Agriculturalist (APG) | 37.3% |

| Sense of Belonging | Pastoralist (PPG) | Not a top-reported concern |

| Link to Ancestors | Agriculturalist (APG) | 32.8% |

| Link to Ancestors | Pastoralist (PPG) | Not a top-reported concern |

The PPG, whose livelihood was directly tied to the native grassland ecosystem, reported drastic declines in key provisioning services (herbs, fodder) and water [12]. The APG, while also noting a decline in herbs, reported greater losses in cultural services (sense of belonging, link to ancestors), reflecting the social and cultural disruption experienced during their relocation to agricultural settlements [12]. This highlights that even within local communities, priorities are not monolithic and are finely tuned to specific livelihood dependencies.

Visualizing Research Workflows and Priority Divergence

Stakeholder Perception Research Workflow

The following diagram illustrates the generalized experimental protocol used in the cited studies to quantify and compare stakeholder priorities.

Conceptual Model of Divergent Priority Formation

This diagram maps the logical relationship between stakeholder characteristics, their primary valuation focus, and the resulting ecosystem service priorities.

The Scientist's Toolkit: Key Methodologies for ES Perception Research

Research into stakeholder perceptions of ecosystem services relies on a suite of methodological tools adapted from the social sciences. The following table details essential "research reagents" and their functions in this field.

Table 3: Essential Methodological Tools for Ecosystem Services Perception Research

| Research Tool | Function & Application | Key Characteristics |

|---|---|---|

| Structured Surveys & Questionnaires | Primary instrument for quantitative data collection on ES use and preferences [1] [12]. | Enables standardization, statistical analysis, and comparison across large, diverse stakeholder groups. |

| Semi-Structured Interviews | In-depth, qualitative exploration of ES values, trade-offs, and context [12]. | Provides rich, narrative data and reveals underlying reasons for preferences that surveys may miss. |

| Participant Observation | Immersive field method to understand the role of ES in daily life and culture [12]. | Builds trust and generates contextual data on the practical use and management of ES. |

| Priority Allocation Task (e.g., 100-Point) | Technique to force-rank the relative importance of different ES [1]. | Quantifies preferences and makes trade-offs explicit, preventing respondents from rating all services as "important." |

| Perception Thresholds (e.g., ≥50% Use) | A filtering mechanism to identify locally relevant ES for further study [1]. | Increases research efficiency and relevance by focusing on the services stakeholders actually interact with. |

Discussion and Implications for Research and Policy

The empirical evidence consistently demonstrates that the divergence in ES priorities is not random but is systematically linked to human needs, knowledge systems, and livelihood dependencies [1] [12]. Local community priorities are shaped by Traditional Ecological Knowledge (TEK) and direct reliance on ecosystems for subsistence, leading to a focus on provisioning services [1]. In contrast, expert priorities are informed by formal scientific models that emphasize global challenges like climate change and biodiversity loss, elevating the importance of regulating and habitat services [1].

This divergence has profound implications. When policymaking relies solely on expert assessment, it risks undervaluing the services most critical to local populations, potentially leading to management failures, social inequity, and unintended negative consequences for human well-being [12]. The Laos study concluded that a policy transition from single-objective management toward optimizing landscape-level ES portfolios is necessary [1]. This requires institutionalizing participatory co-management that formally integrates local knowledge with scientific expertise [1].

Future research must continue to embrace interdisciplinary methods, bridging ecological science with social science methodologies to fully capture the complex relationships in social-ecological systems [13] [12]. By acknowledging and formally quantifying these divergent priorities, researchers and policymakers can develop more resilient, inclusive, and effective strategies for managing the planet's vital ecosystems.

The Implications of Misalignment for Policy Uptake and Conservation Outcomes

The effective translation of environmental research into conservation policy is often hampered by a fundamental challenge: misalignment between quantitative ecosystem services (ES) models and the perceptions of stakeholders. This misalignment represents a significant barrier to policy uptake, as divergent perspectives on ecosystem value can undermine consensus and stall decisive conservation action. When the data-driven outputs of scientific models do not resonate with the lived experiences and localized knowledge of key stakeholders, including local communities, policymakers, and resource managers, even the most robust scientific evidence may fail to inform effective environmental governance [14].

This comparative guide objectively examines the implications of this misalignment for conservation outcomes, focusing specifically on the disconnect between modeled ES assessments and stakeholder valuations. The guide is structured within the context of a broader thesis comparing ES models and stakeholder perceptions research, presenting empirical evidence of divergence, detailing methodological protocols for assessing such misalignment, and proposing integrative frameworks to bridge these gaps. For researchers, scientists, and environmental professionals, understanding these disconnects is not merely academic—it is essential for designing conservation strategies that are both scientifically sound and socially legitimate, thereby enhancing their implementation success and long-term effectiveness [15].

Theoretical Framework: Understanding Policy Misalignment

Policy misalignment in environmental contexts occurs when different policies, strategies, or assessments work at cross-purposes rather than in concert, ultimately hindering the achievement of sustainability goals [16] [17]. This conceptual framework can be categorized into three distinct levels, each with profound implications for conservation:

Intra-organizational Misalignment: This occurs within a single organization or research team when departmental objectives or methodological approaches conflict. For instance, a scientific team prioritizing publication in high-impact journals might utilize complex ES models that are intentionally abstracted from local contexts to establish generalizable principles. This can inadvertently create tension with the same organization's knowledge translation unit, which requires simplified, accessible findings for stakeholder engagement and policy advocacy [16].

Inter-sectoral Misalignment: This form of misalignment arises between different sectors, such as when environmental conservation objectives clash with agricultural or economic development priorities. A prime example can be observed when agricultural subsidies designed to boost food production inadvertently lead to increased fertilizer runoff, thereby harming aquatic ecosystems that environmental regulations aim to protect [16]. This creates a fundamental contradiction where one sector's policy success directly undermines another's.

Knowledge System Misalignment: Particularly relevant to ES assessment, this occurs when policies or models grounded in one knowledge system conflict with those based on another. The disregard of traditional ecological knowledge in favor of purely techno-scientific approaches in conservation policy often leads to ineffective outcomes, creating a form of epistemological misalignment [16]. This is not merely a technical discrepancy but reflects deeper conflicts over how knowledge is validated and which forms are privileged in policy formulation.

Understanding these layered misalignments provides the necessary foundation for analyzing the specific disconnects between ES modeling and stakeholder perceptions documented in empirical research.

Empirical Evidence: Quantifying the Model-Stakeholder Divide

Recent research provides quantitative evidence of significant disparities between modeled ecosystem services data and stakeholder perceptions. A 2024 national-scale study in Portugal directly compared eight multi-temporal ES indicators derived from spatial modeling against stakeholders' perceptions of ES potential [14].

Comparative Analysis of Modeled vs. Perceived Ecosystem Services

Table 1: Discrepancies between Modeled Ecosystem Services and Stakeholder Perceptions in Portugal

| Ecosystem Service | Stakeholder Overestimation | Alignment Level | Key Findings from Spatial Models (1990-2018) |

|---|---|---|---|

| Drought Regulation | Highest contrast | Low | Showed largest improvement, especially in central/southern regions |

| Erosion Prevention | High contrast | Low | Wide range of values but very low potential in 1990 |

| Climate Regulation | Moderate | Medium | Potential declined over the study period |

| Pollination | Moderate | Medium | Remained mostly stable with slight declines |

| Habitat Quality | Moderate | Medium | Remained stable; increased in north, declined in metropolitan areas |

| Recreation | Lower overestimation | High | Improved overall; closely aligned with models |

| Water Purification | Lower overestimation | High | Consistently showed high potential throughout years |

| Food Production | Lower overestimation | High | Decreased in Algarve, improved in interior regions |

The Portuguese study revealed that stakeholder estimates were 32.8% higher on average than model-based assessments across all eight ecosystem services evaluated [14]. This substantial discrepancy highlights a fundamental perceptual gap that could significantly impact conservation planning and policy acceptance. The misalignment was not uniform across all services; the largest contrasts emerged for regulating services like drought regulation and erosion prevention, which involve complex biophysical processes that may be less directly observable to stakeholders. In contrast, provisioning services (food production) and cultural services (recreation) showed closer alignment, likely because these are more immediately tangible and measurable in daily life [14].

Geospatial analysis further revealed that metropolitan areas like Lisbon and Porto showed minimal improvements in ES indicators according to models, with Lisbon experiencing declines in six of eight ES indicators [14]. This urban-rural divergence in ecosystem service trajectories presents additional complications for policy development, as stakeholders in different regions may experience vastly different environmental realities, further exacerbating perceptual gaps.

Stakeholder Prioritization Versus Modeled Outcomes

Another dimension of misalignment emerges in how different stakeholder groups prioritize ecosystem services based on their values and interests. Research on woodland management scenarios demonstrates how divergent priorities can lead to conflicting conservation approaches [15].

Table 2: Stakeholder Preferences in Woodland Management Scenarios

| Management Scenario | Stakeholder Ranking | Model-Based Ranking (Spring Flowers) | Model-Based Ranking (Weed Control) | Key Characteristics |

|---|---|---|---|---|

| Biodiversity Conservation | Highest | Highest | Highest | Main goal: improving habitats and species conservation |

| Management Plan | Second | Substantially lower | Second | Based on current goals for site management |

| People Engagement | Third | Second | Lower | Encourages use of woodland and its resources |

| Low Budget | Consistently much lower | Much lower | Much lower | Resources constrained to keeping site safe for access |

This comparative analysis reveals both convergence and divergence in evaluation criteria. While stakeholders and models agreed on prioritizing biodiversity-focused management over low-budget approaches, they disagreed on intermediate scenarios. The "People Engagement" scenario, which encourages use of woodland resources, was ranked lower by models for weed control but higher for spring flowers, demonstrating how different evaluation metrics can yield substantially different policy recommendations [15]. This underscores the contextual nature of conservation evaluations and the importance of selecting appropriate success metrics that reflect both ecological integrity and human values.
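
One way to put a number on this agreement is a rank correlation between the stakeholder ordering and each model-based ordering. The sketch below uses Spearman's rho for tie-free rankings. The integer ranks are an assumed reading of the qualitative labels in the woodland table ("Highest", "Second", "Lower", and so on), not values reported in [15].

```python
def spearman_rho(rank_a, rank_b):
    """Spearman rank correlation for two tie-free rankings of the same items."""
    if set(rank_a) != set(rank_b):
        raise ValueError("both rankings must cover the same scenarios")
    n = len(rank_a)
    d_squared = sum((rank_a[k] - rank_b[k]) ** 2 for k in rank_a)
    return 1 - 6 * d_squared / (n * (n ** 2 - 1))

# Assumed ordinal ranks (1 = most preferred), read off the qualitative table.
stakeholders = {
    "biodiversity": 1, "management_plan": 2,
    "people_engagement": 3, "low_budget": 4,
}
model_spring_flowers = {
    "biodiversity": 1, "people_engagement": 2,
    "management_plan": 3, "low_budget": 4,
}
model_weed_control = {
    "biodiversity": 1, "management_plan": 2,
    "people_engagement": 3, "low_budget": 4,
}

rho_flowers = spearman_rho(stakeholders, model_spring_flowers)
rho_weeds = spearman_rho(stakeholders, model_weed_control)
print(rho_flowers, rho_weeds)
```

Under these assumed ranks, the weed-control metric reproduces the stakeholder ordering exactly, while the spring-flower metric swaps the two intermediate scenarios. With only four scenarios the coefficient is a descriptive summary, not a basis for significance testing.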

Methodological Protocols: Assessing Misalignment

To systematically investigate misalignment between ES models and stakeholder perceptions, researchers require robust methodological protocols. The following section details standardized approaches for generating comparable data across these different knowledge domains.

Spatial Modeling of Ecosystem Services

Table 3: Experimental Protocol for Ecosystem Services Modeling

| Research Phase | Methodology | Data Sources | Output Metrics |

|---|---|---|---|

| Land Cover Analysis | Multi-temporal analysis of CORINE Land Cover data (1990, 2000, 2006, 2012, 2018) | Satellite imagery, land cover classifications | Land cover change trajectories, spatial patterns |

| ES Indicator Modeling | Spatial modeling using GIS-based approaches; InVEST software for specific services | Land cover data, climate data, soil data, topography | Eight ES indicators: climate regulation, drought regulation, erosion prevention, etc. |

| ES Index Development | Multi-criteria evaluation with Analytical Hierarchy Process (AHP) weighting | Modeled ES indicators, stakeholder-derived weights | Composite ASEBIO index (0-1 scale) |

| Validation | Statistical analysis of temporal changes (ANOVA); cross-comparison with independent datasets | Time series data, ground truthing where available | Significance testing (F = 1.584, P < 0.001 for ES indicators) |

The spatial modeling approach requires processing land cover data through specialized software such as InVEST (Integrated Valuation of Ecosystem Services and Tradeoffs), which provides spatially explicit models for quantifying ES [14]. This process involves mapping ES indicators across multiple time periods to capture temporal dynamics and trade-offs. Statistical analysis, including ANOVA tests, should be employed to verify the significance of observed changes over time, with the Portuguese study reporting significant differences across all periods (F = 1.584, P < 0.001) [14]. The final output is typically a composite index such as ASEBIO, which integrates multiple ES indicators into a single measure of ecosystem service potential, facilitating broader comparisons and trend analysis [14].
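
For readers unfamiliar with the test, the following sketch shows how a one-way ANOVA F statistic could be computed for an ES indicator sampled in several mapping years. It uses only the standard library. The indicator values and the grouping by year are invented for illustration and are not taken from the Portuguese dataset.

```python
from statistics import mean

def one_way_anova_f(groups):
    """Return (F, df_between, df_within) for a one-way ANOVA."""
    k = len(groups)
    n_total = sum(len(g) for g in groups)
    grand_mean = mean(x for g in groups for x in g)
    # Variation of group means around the grand mean.
    ss_between = sum(len(g) * (mean(g) - grand_mean) ** 2 for g in groups)
    # Variation of observations around their own group mean.
    ss_within = sum((x - mean(g)) ** 2 for g in groups for x in g)
    df_between, df_within = k - 1, n_total - k
    f_stat = (ss_between / df_between) / (ss_within / df_within)
    return f_stat, df_between, df_within

# Hypothetical indicator values (0-1 scale) for three mapping years.
indicator_by_year = [
    [0.2, 0.3, 0.4],  # e.g. earliest period
    [0.3, 0.4, 0.5],  # intermediate period
    [0.5, 0.6, 0.7],  # latest period
]
f_stat, df_b, df_w = one_way_anova_f(indicator_by_year)
print(f_stat, df_b, df_w)
```

Converting F to a p-value requires the F distribution. In practice a statistics library routine such as `scipy.stats.f_oneway` returns both values directly.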

Table 4: Experimental Protocol for Stakeholder Perception Assessment

| Research Phase | Methodology | Participant Selection | Data Collection Format |

|---|---|---|---|

| Workshop Design | Structured workshops with deliberative discussions | Diverse stakeholders, e.g., local communities, policymakers, resource managers | In-person or virtual facilitated sessions |

| Scenario Evaluation | Repeated scoring of scenario effects on ES potential | Purposive sampling to ensure relevant expertise | Ranking exercises using standardized scorecards |

| Perception Elicitation | Matrix-based methodology for ES potential assessment | Cross-sectoral representation | Individual and group assessment components |

| Priority Weighting | Analytical Hierarchy Process (AHP) | Sufficient sample size for statistical power | Pairwise comparisons of ES importance |

Stakeholder elicitation employs structured workshops featuring deliberative discussions and repeated scoring of scenario effects [15] [14]. The process should incorporate the Analytical Hierarchy Process (AHP), a multi-criteria decision-making method that enables stakeholders to assign relative weights to different ecosystem services through pairwise comparisons [14]. This systematic approach transforms qualitative preferences into quantitative weights that can be directly integrated with modeling results. Effective facilitation is crucial to minimize groupthink and power dynamics that might distort authentic perceptions, with techniques including anonymous initial scoring, breakout groups, and structured plenary discussions to capture diverse perspectives [15].
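
As a rough illustration of how pairwise comparisons become weights, the sketch below derives AHP priorities with the row geometric-mean approximation and checks them with Saaty's consistency ratio. The three services and the comparison matrix are hypothetical. The cited studies may have used the principal-eigenvector method or dedicated software instead.

```python
import math

# Saaty's random consistency index for comparison matrices of order 1 to 9.
RANDOM_INDEX = {1: 0.0, 2: 0.0, 3: 0.58, 4: 0.90, 5: 1.12,
                6: 1.24, 7: 1.32, 8: 1.41, 9: 1.45}

def ahp_weights(matrix):
    """Approximate AHP priority weights via the row geometric-mean method."""
    n = len(matrix)
    geo_means = [math.prod(row) ** (1 / n) for row in matrix]
    total = sum(geo_means)
    return [g / total for g in geo_means]

def consistency_ratio(matrix, weights):
    """Estimate lambda_max from A*w and return CR = CI / RI."""
    n = len(matrix)
    if n < 3:
        return 0.0  # 1x1 and 2x2 reciprocal matrices are always consistent
    aw = [sum(a * w for a, w in zip(row, weights)) for row in matrix]
    lambda_max = sum(v / w for v, w in zip(aw, weights)) / n
    ci = (lambda_max - n) / (n - 1)
    return ci / RANDOM_INDEX[n]

# Hypothetical reciprocal judgments: entry [i][j] states how much more
# important service i is than service j.
services = ["water_purification", "food_production", "recreation"]
pairwise = [
    [1.0, 2.0, 6.0],
    [1 / 2, 1.0, 3.0],
    [1 / 6, 1 / 3, 1.0],
]
weights = ahp_weights(pairwise)
cr = consistency_ratio(pairwise, weights)
print(dict(zip(services, weights)), cr)
```

A consistency ratio below roughly 0.10 is the conventional threshold for accepting a set of judgments. The toy matrix here is perfectly consistent, so its ratio is effectively zero, which real stakeholder responses rarely achieve.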

Comparative Analysis Framework

The critical phase of analysis involves directly comparing modeled ES assessments with stakeholder perceptions. This requires spatial aggregation of model outputs to match the scale of stakeholder assessments, statistical testing of differences (e.g., paired t-tests to evaluate the significance of perceptual gaps), and regression analysis to identify factors explaining variation in alignment across services and regions [14]. The 32.8% average overestimation by stakeholders reported in the Portuguese study exemplifies the quantitative metrics that can emerge from this rigorous comparative approach [14].
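
A minimal sketch of the first two steps, assuming perceived and modeled values have already been aggregated to the same units and the same 0-1 scale, might look as follows. The numbers are invented. The source does not state exactly how the 32.8% average was computed, so the mean of per-service relative differences used here is only one plausible definition.

```python
from math import sqrt
from statistics import mean, stdev

def mean_percent_overestimation(perceived, modeled):
    """Average of per-service relative differences, in percent."""
    if len(perceived) != len(modeled):
        raise ValueError("inputs must be paired")
    return mean(100 * (p - m) / m for p, m in zip(perceived, modeled))

def paired_t_statistic(perceived, modeled):
    """t statistic for the mean paired difference (df = n - 1)."""
    if len(perceived) != len(modeled):
        raise ValueError("inputs must be paired")
    diffs = [p - m for p, m in zip(perceived, modeled)]
    return mean(diffs) / (stdev(diffs) / sqrt(len(diffs)))

# Hypothetical ES potential scores for four services.
modeled = [0.40, 0.50, 0.60, 0.80]
perceived = [0.60, 0.65, 0.72, 0.84]

gap_pct = mean_percent_overestimation(perceived, modeled)
t_stat = paired_t_statistic(perceived, modeled)
print(round(gap_pct, 2), round(t_stat, 2))
```

The p-value for the t statistic comes from the t distribution with n - 1 degrees of freedom. A library routine such as `scipy.stats.ttest_rel` reports the statistic and p-value together.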

Visualizing Misalignment: Conceptual Framework and Pathways

The following diagram illustrates the conceptual framework of policy misalignment in conservation, highlighting the disconnect between modeling and stakeholder perspectives and its implications for conservation outcomes.

Diagram 1: Policy Misalignment Framework in Conservation

This visualization captures the fundamental disconnect between modeled ecosystem assessments and stakeholder perceptions, which generates policy misalignment. This misalignment creates implementation barriers that ultimately compromise conservation outcomes. The pathway to resolving this issue lies in developing integrative approaches that incorporate both data-driven models and localized stakeholder knowledge, leading to enhanced policy uptake and improved conservation results.

Table 5: Research Reagent Solutions for Misalignment Studies

| Research Tool | Function | Application Context | Representative Examples |

|---|---|---|---|

| InVEST Software | Spatial modeling of ecosystem services | Quantifying ES indicators across landscapes | Habitat Quality, Carbon Storage, Nutrient Delivery Ratio models [14] |

| CORINE Land Cover | Standardized land cover classification | Land use change analysis and ES mapping | European Land Cover database (1990, 2000, 2006, 2012, 2018) [14] |

| AHP Framework | Multi-criteria decision analysis | Eliciting and weighting stakeholder preferences | Priority weighting of ES indicators [14] |

| Stakeholder Workshop Kits | Structured facilitation materials | Eliciting perceptions through deliberative processes | Scenario descriptions, scoring sheets, discussion guides [15] |

| GIS Platforms | Spatial data analysis and visualization | Mapping ES indicators and perceptual disparities | ArcGIS, QGIS with spatial analysis extensions [14] |

| Statistical Packages | Quantitative analysis of misalignment | Testing significance of model-stakeholder differences | R, SPSS, Python (pandas, scikit-learn) [18] |

This toolkit provides researchers with essential resources for designing comprehensive studies on model-stakeholder misalignment. The InVEST software suite offers standardized, spatially explicit models for quantifying ecosystem services, while the Analytical Hierarchy Process (AHP) provides a rigorous methodology for capturing stakeholder preferences in a structured, quantifiable format [14]. Complementary tools like standardized land cover data and statistical packages enable the integration and analysis of these different knowledge types to identify, quantify, and ultimately address critical misalignments that hinder conservation effectiveness.

The empirical evidence and methodological protocols presented in this comparison guide demonstrate that misalignment between ecosystem services models and stakeholder perceptions is not merely an academic concern but a fundamental barrier to effective conservation policy and practice. The significant disparities quantified in recent research—with stakeholder estimates exceeding model-based assessments by nearly a third on average—highlight the critical need for more integrative approaches that bridge scientific modeling and human perspectives [14].

Addressing this misalignment requires moving beyond technical fixes toward transformative approaches in conservation science and policy. This includes breaking down institutional silos through enhanced interdisciplinary collaboration, adopting longer-term and more integrated planning frameworks, and establishing robust processes for stakeholder engagement that genuinely incorporate diverse knowledge systems and values [16]. The methodological protocols and research tools outlined in this guide provide a foundation for such integrative work, enabling researchers and conservation professionals to systematically identify, analyze, and address the critical disconnects that compromise conservation outcomes.

Ultimately, conservation strategies that successfully integrate data-driven models with stakeholder perspectives are not just more inclusive—they are more scientifically robust, politically legitimate, and practically effective. By embracing both quantitative precision and contextual wisdom, the conservation community can develop policies that better reflect ecological realities while earning the support necessary for successful implementation, thereby enhancing both policy uptake and conservation outcomes in an increasingly complex world.

Integrating Knowledge Systems: Tools and Techniques for Bridging Model-Stakeholder Gaps

This guide provides an objective comparison of two core structured participatory frameworks, deliberative workshops and surveys, within ecosystem services (ES) research. These methods are pivotal for integrating diverse stakeholder perceptions with quantitative modeling data, an integration essential for sustainable environmental management. The critical need for such frameworks is highlighted by research showing a significant 32.8% average overestimation of ecosystem service potential in stakeholder perceptions compared to spatial models [19]. This discrepancy underscores the importance of method selection for generating balanced, inclusive, and actionable data for decision-making in fields ranging from ecological science to public policy.

Comparative Analysis: Deliberative Workshops vs. Surveys

The table below summarizes the core characteristics, strengths, and limitations of deliberative workshops and surveys, providing a basis for methodological selection.

Table 1: Core Methodological Comparison

| Feature | Deliberative Workshops | Structured Surveys |

|---|---|---|

| Core Approach | Facilitated, interactive group discussions aiming for consensus or deep understanding of diverse views [20]. | Standardized questionnaires administered to individuals for quantitative data collection [19]. |

| Primary Data Output | Qualitative data (transcripts, facilitator notes), identified themes, co-created solutions [21]. | Primarily quantitative data (ratings, scores, rankings) suitable for statistical analysis [19]. |

| Interaction Level | High; dynamic and iterative interaction among participants and facilitators [20]. | Low to none; individual response without group interaction. |

| Key Strength | Generates rich, contextual insights, fosters social learning, and can build collective solutions [21] [20]. | Efficiently collects data from large samples, generalizable results, minimizes facilitator bias [19]. |

| Key Limitation | Resource-intensive (time, cost, facilitation), smaller sample sizes, potential for dominance by vocal participants [20]. | Limited depth on complex issues, cannot capture group dynamics or the reasoning behind preferences [19]. |

| Ideal Application | Exploring complex, value-laden issues; conflict resolution; co-designing policies or management strategies [21]. | Gauging the distribution of opinions, preferences, or perceptions across a large population [19]. |

Quantitative Data from Comparative Studies

Empirical studies directly comparing model data with stakeholder perceptions reveal measurable discrepancies and alignments. The following table summarizes key findings from a national-scale study in Portugal.

Table 2: Quantitative Discrepancies Between Modeled and Perceived Ecosystem Service Potential [19]

| Ecosystem Service Indicator | Stakeholder Overestimation vs. Models | Notes on Alignment |

|---|---|---|

| Drought Regulation | Highest Contrast | Largest perceptual gap. |

| Erosion Prevention | High Contrast | Among the highest disparities. |

| Climate Regulation | High Contrast | Among the lowest contributors to the integrated ASEBIO index. |

| Water Purification | Closely Aligned | Also the highest contributor to the integrated ASEBIO index. |

| Food Production | Closely Aligned | Relatively strong alignment between models and perception. |

| Recreation | Closely Aligned | Perceived potential doubled in one decade, becoming a major index contributor. |

| Average of All ES | 32.8% Higher | Stakeholder estimates were, on average, nearly a third higher than model outputs. |

Detailed Experimental Protocols

Implementing these frameworks rigorously is critical for generating reliable and comparable data. Below are detailed protocols for key experiments and applications cited in this guide.

Protocol for a Participatory Deliberative Workshop

This protocol is adapted from case studies on mini-publics and participatory housing, focusing on structured facilitation to achieve deliberative goals [20] [21].

Table 3: Phased Protocol for Deliberative Workshops

| Phase | Key Activities | Tools & Reagents |

|---|---|---|

| 1. Preparation & Recruitment | Define deliberative goal (e.g., consensus, problem identification). Recruit a diverse, representative group of stakeholders. Prepare briefing materials. | Stakeholder Map, Recruitment Screeners, Information Booklets. |

| 2. Facilitation & Interaction | Facilitator(s) guide discussion using structured exercises. Encourage equal participation, ensure all voices are heard, and manage group dynamics. | Trained Facilitators, Discussion Guide, Dynamic Facilitation Techniques [20], Recording Equipment. |

| 3. Data Synthesis & Analysis | Transcribe discussions. Code transcripts for themes, arguments, and consensus points. Analyze the quality of deliberation and outcomes. | Qualitative Data Analysis Software (e.g., NVivo), Coding Framework, Thematic Analysis. |

Protocol for Integrating Surveys and Spatial Models (ASEBIO Index)

This protocol details the methodology for creating a composite ES index by combining modeled data with stakeholder-weighted surveys, as demonstrated in the Portugal study [19].

Table 4: Protocol for Integrated Modeling-Survey Approach

| Phase | Key Activities | Tools & Reagents |

|---|---|---|

| 1. Spatial Modeling of ES | Calculate multi-temporal ES indicators using GIS and spatial models (e.g., InVEST). Use land cover data (e.g., CORINE) as a primary input. | GIS Software (e.g., ArcGIS, QGIS), Spatial Models (e.g., InVEST), Land Cover Maps. |

| 2. Stakeholder Weighting via Survey | Engage stakeholders through an Analytical Hierarchy Process (AHP) survey. Elicit weights reflecting the relative importance of each ES. | Structured AHP Survey, Survey Platform (e.g., Google Forms, LimeSurvey), Stakeholder Panel. |

| 3. Data Integration & Index Creation | Integrate modeled ES data with stakeholder-derived weights using a multi-criteria evaluation method (e.g., weighted linear combination). | Multi-Criteria Decision Analysis (MCDA) Software/Code (e.g., in R or Python), Data Integration Framework. |
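
Phase 3 of the protocol can be illustrated with a small weighted linear combination. This is a sketch under stated assumptions, not the published ASEBIO procedure: the min-max rescaling, the indicator names, the weights, and the three spatial units are all hypothetical.

```python
def min_max_normalize(values):
    """Rescale raw indicator values to the 0-1 range."""
    lo, hi = min(values), max(values)
    if hi == lo:
        return [0.0 for _ in values]  # no variation to rescale
    return [(v - lo) / (hi - lo) for v in values]

def weighted_linear_combination(indicators, weights):
    """Combine normalized indicators into one composite score per spatial unit.

    `indicators` maps indicator name -> list of 0-1 values (one per unit);
    `weights` maps indicator name -> stakeholder-derived weight (sum = 1).
    """
    if abs(sum(weights.values()) - 1.0) > 1e-9:
        raise ValueError("weights must sum to 1")
    n_units = len(next(iter(indicators.values())))
    return [
        sum(weights[name] * indicators[name][i] for name in indicators)
        for i in range(n_units)
    ]

# Hypothetical raw model outputs for three spatial units.
raw = {
    "water_purification": [10.0, 20.0, 30.0],
    "recreation": [5.0, 5.0, 10.0],
}
normalized = {name: min_max_normalize(vals) for name, vals in raw.items()}

# Hypothetical weights, e.g. taken from an AHP survey.
weights = {"water_purification": 0.6, "recreation": 0.4}

composite = weighted_linear_combination(normalized, weights)
print(composite)
```

Because the weights sum to one and each indicator is rescaled to 0-1, the composite score also falls on a 0-1 scale, which matches the range reported for the ASEBIO index.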

Workflow and Logical Relationship Diagrams

The following diagram illustrates the logical workflow for a comparative study that integrates both surveys and deliberative workshops with scientific modeling, leading to more holistic decision-making.

Comparative Research Workflow

This diagram maps the logical sequence for designing a multi-method assessment of participatory frameworks, from defining the research objective to informing decisions [19] [20].

The diagram below details the internal structure and flow of a deliberative workshop, highlighting the facilitator's role in guiding the process toward its goals.

Deliberative Workshop Process

This chart breaks down the deliberative workshop process, showing how facilitator interventions manage group interaction to achieve specific deliberative outcomes [20].

The Researcher's Toolkit

This section details essential materials and methodological solutions for implementing the frameworks discussed.

Table 5: Essential Research Reagents & Solutions

| Item | Function in Participatory Research |

|---|---|

| Trained Facilitators | Professionals who guide deliberative processes, ensure inclusive participation, manage group dynamics, and steer discussions toward the defined goal without imposing content [20]. |

| Analytical Hierarchy Process (AHP) | A structured multi-criteria decision-making technique used in surveys to elicit stakeholder preferences and derive weighted priorities for different ecosystem services [19]. |

| Spatial Modeling Software (e.g., InVEST) | A suite of open-source models used to map and value ecosystem services, providing quantitative, data-driven indicators for comparison with stakeholder perceptions [19]. |

| Dynamic Facilitation Method | An involved facilitation approach where the facilitator actively works to minimize internal exclusion, enable diversity of thought, and help the group navigate complex topics toward consensus [20]. |

| Stakeholder Perception Matrix | A matrix-based methodology, often using a Likert scale, to capture stakeholders' perceived potential of ecosystem services for different land cover classes, allowing for systematic comparison with models [19]. |

Multi-Criteria Decision Making (MCDM) provides a structured framework for evaluating complex alternatives characterized by multiple, often conflicting criteria. Within this field, the Analytic Hierarchy Process (AHP) has emerged as a particularly powerful and widely adopted technique for integrating quantitative data with qualitative stakeholder judgments [22]. AHP operates by decomposing a decision problem into a hierarchical structure, facilitating systematic pairwise comparisons between elements to derive precise priority weights [23]. This methodological rigor enables researchers to transform subjective stakeholder preferences into mathematically sound weightings, thereby bridging the gap between technical modeling and human-centered valuation.

The integration of AHP with other MCDM techniques creates sophisticated hybrid frameworks capable of balancing technical precision with social acceptance. These approaches are especially valuable in fields like ecosystem services management and pharmaceutical regulation, where decisions must simultaneously consider scientific evidence, economic feasibility, and diverse societal values. This comparative guide examines the implementation, performance, and practical applications of these integrated methodologies, providing researchers with objective data to inform their analytical choices.

Comparative Analysis of MCDM Methodologies and Applications

Table 1: Comparative analysis of MCDM methodologies and their applications

| Methodology | Key Features | Stakeholder Integration Approach | Application Contexts | Data Requirements |
|---|---|---|---|---|
| AHP-TOPSIS Hybrid | Decomposes decision hierarchy, ranks alternatives by similarity to ideal solution | Explicitly incorporates weights from expert and resident stakeholders | Urban redevelopment [23], Ecosystem services mapping [24] | Pairwise comparisons, performance matrices |
| Skew-Symmetric Bilinear Model | Handles intransitive preferences beyond standard consistency | Captures complex and potentially inconsistent human judgments | Industrial decision-making [25] | Preference data with potential inconsistencies |
| Stakeholder Consultation Frameworks | Qualitative analysis of preferences through interviews and focus groups | Gathers in-depth perspectives through semi-structured interviews | Pharmaceutical pricing [26], Opioid settlement planning [27] | Interview transcripts, thematic analysis |
| AHP-Weighted Spatial Modeling | Combines priority weights with geospatial data and capacity matrices | Uses expert judgment to weight spatial indicators | Landscape planning [24], Water ecosystem services [28] | Land use/cover maps, expert surveys |

Table 2: Quantitative results from AHP-TOPSIS implementation in residential redevelopment [23]

| Stakeholder Perspective | Top Priority Domain | Weight Assigned | Preferred Case | TOPSIS Score |
|---|---|---|---|---|
| Expert/Supply-Side | Project Feasibility | 32.5% | Seoul A District | 0.58 |
| Resident/Demand-Side | Residential Conditions | 28.7% | Gyeonggi D District | 0.69 |
| Combined Evaluation | Legal/Institutional Reforms | 24.2% | Gyeonggi D District | 0.63 |

The comparative analysis reveals how different MCDM approaches balance technical precision with stakeholder integration. The AHP-TOPSIS hybrid framework is particularly well suited to contexts that require explicit comparison of diverse stakeholder perspectives, as in the Korean public housing redevelopment study, where experts gave their highest weight to project feasibility (32.5%) while residents gave theirs to residential conditions (28.7%) [23]. This divergence highlights the importance of incorporating both technical and experiential knowledge in public policy decisions.

For complex decision environments where stakeholder preferences may not follow perfect consistency, advanced approaches like the skew-symmetric bilinear representation offer valuable alternatives to traditional AHP. These methods can handle intransitive preferences that sometimes characterize real-world human judgments, moving "beyond consistency" to better capture the complexity of stakeholder valuations in industrial and environmental applications [25].

Experimental Protocols and Implementation Methodologies

AHP-TOPSIS Hybrid Framework for Urban Redevelopment

The implementation of hybrid AHP-TOPSIS frameworks follows a structured, multi-stage protocol. In the Korean residential redevelopment study, researchers first conducted Focus Group Interviews (FGIs) with professionals from public, private, and academic sectors to identify 25 key planning elements, subsequently categorized into five domains: legal/institutional reforms, project feasibility, residential conditions, social integration, and complex design [23].

The AHP phase employed pairwise comparison surveys administered to 30 experts and 130 residents, with consistency ratios calculated to ensure judgment reliability. The resulting priority weights were then integrated into the TOPSIS method to evaluate four real-world redevelopment cases based on their relative similarity to ideal solutions. This methodology enabled direct comparison of supply-side (expert) and demand-side (resident) preferences, revealing significant divergence in priorities that informed context-sensitive planning recommendations [23].
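The TOPSIS stage described above can be sketched as follows. This is a minimal illustration of the standard TOPSIS steps (vector normalization, weighting, distances to the ideal and anti-ideal points), not the cited study's implementation. The performance matrix, the weights, and the assumption that all criteria are benefit criteria are hypothetical placeholders.

```python
import math


def topsis(performance, weights, benefit):
    """Rank alternatives by relative closeness to the ideal solution.

    performance: one row per alternative, one column per criterion.
    weights:     criterion weights (for example, from an AHP survey).
    benefit:     True where larger is better, False for cost criteria.
    Assumes no criterion column is all zeros and alternatives are not all identical.
    """
    n_crit = len(weights)
    # Vector-normalize each criterion column, then apply the weights.
    norms = [math.sqrt(sum(row[j] ** 2 for row in performance)) for j in range(n_crit)]
    v = [[weights[j] * row[j] / norms[j] for j in range(n_crit)] for row in performance]
    columns = list(zip(*v))
    ideal = [max(col) if benefit[j] else min(col) for j, col in enumerate(columns)]
    anti = [min(col) if benefit[j] else max(col) for j, col in enumerate(columns)]
    scores = []
    for row in v:
        d_pos = math.dist(row, ideal)  # distance to the ideal point
        d_neg = math.dist(row, anti)   # distance to the anti-ideal point
        scores.append(d_neg / (d_pos + d_neg))
    return scores


# Hypothetical scores for three redevelopment cases on three criteria.
cases = [[7, 9, 6], [8, 6, 8], [6, 7, 9]]
criterion_weights = [0.5, 0.3, 0.2]
closeness = topsis(cases, criterion_weights, [True, True, True])
print([round(s, 3) for s in closeness])
```

Running the same performance matrix with expert-derived and resident-derived weight vectors is one way to reproduce the kind of supply-side versus demand-side comparison the study reports.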

Stakeholder Consultation and Qualitative Analysis

In pharmaceutical pricing research, a different methodological approach employed semi-structured interviews with 16 key stakeholders guided by Walt and Gilson's Health Policy Triangle framework [26]. The protocol used purposive sampling to ensure representation across pharmacists, general practitioners, pharmaceutical representatives, academic researchers, policy advisors, policymakers, and the general public.

The qualitative data analysis followed a deductive approach using framework analysis, with data coded and categorized according to the predetermined policy dimensions of content, context, process, and actors. This methodology enabled researchers to identify not only consensus positions but also nuanced concerns about potential cost transfers and impacts on pharmaceutical innovation, providing policymakers with anticipatory insights before policy implementation [26].

Diagram: AHP-TOPSIS hybrid methodology workflow integrating stakeholder preferences

Ecosystem Services Mapping with AHP-Weighted Indicators

In Tuscan landscape planning, researchers developed an innovative AHP-based protocol for mapping and bundling ecosystem services (ES). The method integrated a standard land use/land cover (LULC) map with additional open-source territorial data using AHP to weight multiple spatial indicators [24]. This approach addressed limitations of simple LULC capacity matrices by incorporating supplementary environmental, socio-economic, and geographical data through multi-criteria analysis.

The experimental protocol involved defining five key ES bundles, then applying AHP to determine relative weights for various spatial indicators reflecting soil conditions, ecosystem properties, and topological features. The resulting composite maps enabled identification of spatial synergies and trade-offs between different ES, providing a decision support system (DSS) for regional planners seeking to enhance multifunctional landscapes while avoiding sectoral policy conflicts [24].
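As a rough illustration of how AHP weights can be combined with spatial indicators, the sketch below applies a weighted linear combination to min-max normalized raster layers. The combination rule is an assumption made for illustration; the source does not specify how the Tuscan study aggregated its indicators. The layer names, cell values, and weights are all hypothetical, and tiny nested lists stand in for real rasters.

```python
def normalize(layer):
    """Min-max scale a 2D grid to [0, 1]; a constant layer maps to 0."""
    flat = [v for row in layer for v in row]
    lo, hi = min(flat), max(flat)
    span = hi - lo
    return [[(v - lo) / span if span else 0.0 for v in row] for row in layer]


def weighted_overlay(layers, weights):
    """Combine same-shaped indicator grids into one composite grid."""
    names = list(layers)
    norm = {k: normalize(layers[k]) for k in names}
    rows, cols = len(norm[names[0]]), len(norm[names[0]][0])
    return [
        [sum(weights[k] * norm[k][r][c] for k in names) for c in range(cols)]
        for r in range(rows)
    ]


# Hypothetical 2x2 indicator layers (treated here as "higher is better").
layers = {
    "soil_quality": [[0.2, 0.8], [0.5, 1.0]],
    "canopy_cover": [[10, 40], [70, 90]],
    "landscape_connectivity": [[5, 15], [25, 35]],
}
# Hypothetical AHP-derived weights that sum to 1.
weights = {"soil_quality": 0.5, "canopy_cover": 0.3, "landscape_connectivity": 0.2}

composite = weighted_overlay(layers, weights)
for row in composite:
    print([round(v, 3) for v in row])
```

Indicators for which lower values are preferable would need to be inverted before weighting, and a production workflow would read georeferenced rasters rather than nested lists.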

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 3: Essential research reagents for MCDM-stakeholder integration studies

| Research Reagent | Function/Application | Implementation Example |
|---|---|---|
| Pairwise Comparison Surveys | Elicits relative importance of criteria through structured judgments | 9-point Saaty scale administered to experts and residents [23] |
| Consistency Ratio (CR) | Validates logical coherence of pairwise comparison judgments | CR < 0.1 threshold for acceptable judgment consistency [23] |
| Focus Group Interview (FGI) Protocols | Identifies key decision criteria through structured group discussions | Professional FGIs identifying 25 planning elements across 5 domains [23] |
| Stakeholder Sampling Frames | Ensures representative inclusion of relevant stakeholder categories | Purposive sampling of 16 stakeholders across 7 categories [26] |
| Semi-Structured Interview Guides | Collects in-depth qualitative data on preferences and concerns | Interview guides based on Health Policy Triangle framework [26] |
| Land Use/Land Cover (LULC) Maps | Provides baseline spatial data for ecosystem services assessment | LULC maps combined with capacity matrices for ES mapping [24] |
| Thematic Analysis Frameworks | Systematically analyzes qualitative interview data | Framework analysis using Context, Process, Content, Actors dimensions [26] |

The comparative analysis indicates that hybrid MCDM approaches, particularly AHP integrated with techniques such as TOPSIS, provide robust methodological frameworks for balancing technical modeling precision with nuanced stakeholder valuations. The experimental data show that these methods can surface significant divergences between expert and lay stakeholder priorities: in the housing redevelopment study, experts assigned their highest weight (32.5%) to project feasibility, whereas residents assigned theirs (28.7%) to residential conditions [23].

These methodological insights have profound implications for ecosystem services management and pharmaceutical regulation, where decisions must harmonize scientific evidence with social acceptance. Future research should explore dynamic AHP applications capable of adapting to evolving stakeholder preferences, particularly in rapidly changing environmental and health policy contexts. The continued refinement of these integrated decision-support frameworks promises to enhance both the technical quality and democratic legitimacy of complex public policy decisions.

Sequential assessment designs represent a sophisticated class of methodological frameworks that enable researchers to evaluate interventions, products, or concepts through structured, multi-stage processes. These designs provide formal mechanisms for monitoring accumulating data and making pre-specified modifications to study parameters without compromising statistical integrity. The fundamental strength of sequential approaches lies in their ability to incorporate interim analyses, allowing researchers to stop trials early for efficacy or futility, adjust sample sizes based on emerging trends, or reallocate resources to more promising interventions [29]. In both clinical development and ecosystem services research, these designs offer a rigorous yet flexible alternative to traditional fixed-sample studies, particularly valuable when dealing with uncertainty about effect sizes or when ethical and economic considerations demand efficient resource utilization.

The conceptual foundation of sequential assessment bridges seemingly disparate fields—from pharmaceutical trials to environmental valuation—through shared statistical principles. At its core, sequential methodology addresses the universal challenge of drawing valid inferences from data examined multiple times throughout its collection. The phenomenon of "peeking" at interim results, if done without proper statistical correction, inflates false positive rates beyond nominal levels [30]. Sequential designs formally solve this problem through pre-specified stopping rules and error-spending functions, thus enabling legitimate monitoring while controlling type I error rates. This statistical rigor makes sequential approaches particularly valuable for comparative assessment frameworks where multiple alternatives must be evaluated against common benchmarks.
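The inflation caused by uncorrected peeking can be illustrated with a small Monte Carlo sketch. It simulates data under a true null hypothesis (standard normal observations, known variance) and compares a naive procedure that tests at every interim look against a single test at the final look. The number of looks, batch size, and simulation count are arbitrary illustrative choices, not values from the cited work.

```python
import math
import random
from statistics import NormalDist


def simulate_peeking(n_sims=2000, looks=5, batch=20, alpha=0.05, seed=0):
    """Return (naive_rate, final_only_rate) of false positives under the null."""
    rng = random.Random(seed)
    z_crit = NormalDist().inv_cdf(1 - alpha / 2)  # two-sided critical value
    naive_rejections = 0
    final_only_rejections = 0
    for _sim in range(n_sims):
        total, n = 0.0, 0
        rejected_at_any_look = False
        z = 0.0
        for _look in range(looks):
            for _obs in range(batch):
                total += rng.gauss(0.0, 1.0)  # null is true: mean 0, sd 1
            n += batch
            z = total / math.sqrt(n)  # z-statistic on all data so far
            if abs(z) > z_crit:
                rejected_at_any_look = True
        naive_rejections += rejected_at_any_look
        final_only_rejections += abs(z) > z_crit  # test only at the last look
    return naive_rejections / n_sims, final_only_rejections / n_sims


naive_rate, final_only_rate = simulate_peeking()
print(f"reject at any look: {naive_rate:.3f}, final look only: {final_only_rate:.3f}")
```

Because the final look is one of the interim looks, the naive rate can never fall below the single-test rate; in practice it sits well above the nominal level, which is the problem that stopping boundaries are designed to correct.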

Foundational Designs and Statistical Frameworks

Group Sequential Designs

Group Sequential Tests (GST) represent one of the most established methodological approaches in sequential analysis. In this design, interim analyses are conducted after batches (groups) of data become available, with stopping boundaries determined to preserve the overall type I error rate. The GST framework exploits the known correlation structure between interim test statistics to optimally account for repeated testing [30]. A key advantage of this approach is its flexibility through alpha-spending functions, which allow researchers to specify how the significance level is allocated across interim analyses. Alpha can be spent arbitrarily over the planned peeking times, and unused alpha can be preserved for later analyses if a scheduled interim analysis is skipped [30]. This flexibility makes GST particularly suitable for long-term studies where the timing or number of interim analyses may need adaptation.
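To show what an alpha-spending function looks like in practice, the sketch below implements two widely used Lan-DeMets forms, an O'Brien-Fleming-type and a Pocock-type function, and computes how much alpha each releases at a set of information fractions. The choice of these two functions and the four equally spaced looks are illustrative assumptions; the source does not prescribe a particular spending function. The code returns cumulative and incremental alpha only. Converting those into critical values requires the joint distribution of the interim statistics and is not attempted here.

```python
import math
from statistics import NormalDist

_STD_NORMAL = NormalDist()


def obf_spending(t, alpha=0.05):
    """O'Brien-Fleming-type spending: 2 - 2 * Phi(z_{alpha/2} / sqrt(t)), 0 < t <= 1."""
    z = _STD_NORMAL.inv_cdf(1 - alpha / 2)
    return 2.0 * (1.0 - _STD_NORMAL.cdf(z / math.sqrt(t)))


def pocock_spending(t, alpha=0.05):
    """Pocock-type spending: alpha * ln(1 + (e - 1) * t), 0 <= t <= 1."""
    return alpha * math.log(1.0 + (math.e - 1.0) * t)


def incremental_alpha(spend, fractions, alpha=0.05):
    """Alpha newly released at each look, given cumulative information fractions."""
    cumulative = [spend(t, alpha) for t in fractions]
    return [c - p for c, p in zip(cumulative, [0.0] + cumulative[:-1])]


# Hypothetical schedule: four looks at equal information increments.
looks = [0.25, 0.50, 0.75, 1.00]
for name, fn in [("OBF-type", obf_spending), ("Pocock-type", pocock_spending)]:
    print(name, [round(a, 5) for a in incremental_alpha(fn, looks)])
```

Dropping an entry from `looks` models a skipped interim analysis: the alpha that would have been released at that look is folded into the increment at the next one, so the total spent at the final analysis is unchanged.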

The statistical properties of GST require careful planning regarding maximum sample size. If researchers observe fewer participants than expected, the test becomes conservative, with a true false positive rate lower than intended. Conversely, if enrollment continues beyond the planned sample size, the false positive rate becomes inflated [30]. This dependency on accurate sample size projection represents a limitation in environments with high uncertainty. Additionally, the numerical computation of critical values becomes increasingly complex as the number of interim analyses grows, making GST impractical for streaming data scenarios with hundreds or thousands of analyses. Despite these limitations, GST remains widely valued for its direct connection to traditional statistical tests and relatively straightforward interpretability for stakeholders familiar with conventional hypothesis testing.

Always Valid Inference Methods