Bridging Data and Ecosystems: A Guide to Matching Sensor Data with Statistical Models in Ecology

Abstract

This article provides a comprehensive framework for ecologists and environmental scientists on integrating sensor data with statistical spatial models. It covers foundational concepts, practical methodologies for hybrid modeling, solutions to common challenges like spatial autocorrelation and data imbalance, and robust validation techniques. By synthesizing recent advances, this guide aims to enhance the reliability and predictive power of ecological models to support informed environmental management and decision-making.

The Foundations of Ecological Sensing and Spatial Statistics

The Growing Role of Sensor Networks in Ecological Observation

Ecological observatory networks represent a paradigm shift in how scientists collect and analyze environmental data. These systems, composed of integrated sensor arrays, field researchers, and centralized databases, provide standardized, long-term, and continental-scale measurements of abiotic and biotic conditions. Their primary mission is to collect open-access data to understand how ecosystems respond to environmental change, addressing grand challenges in environmental science. Networks like the US-based National Ecological Observatory Network (NEON) collect data across 81 terrestrial and aquatic sites, employing both automated sensors and traditional field methods to capture ecological phenomena across multiple temporal and spatial scales. This infrastructure provides an unprecedented opportunity for organismal biologists, ecologists, and researchers to study range expansions, disease epidemics, invasive species colonization, and physiological variation among individual organisms.

The integration of sophisticated sensor technologies with advanced statistical models has created new frontiers in ecological research. Where models historically operated in data-scarce environments, they now face an explosion of information from diverse sensor platforms—ranging from bespoke environmental sensors to mainstream personal devices. This shift enables researchers to move beyond simple data collection toward generating meaningful information about complex ecological processes. The convergence of sensor data with statistical modeling represents a critical advancement for understanding species-habitat associations, ecosystem changes, and biodiversity preservation in the face of rapid anthropogenic change.

Table 1: Spatial and Temporal Scales of Data Collection in the National Ecological Observatory Network (NEON)

| Data Type | Spatial Scale | Temporal Scale | Collection Method |
|---|---|---|---|
| Airborne Remote Sensing | Continental (81 sites across US) | Annual | Airborne observation platforms |
| Organismal Sampling | Site-specific (multiple plots per site) | Weekly/Monthly during growing season | Field technicians |
| Environmental Measurements | Tower-based at site center | Year-round, 1-minute averages | Automated sensors |
| Biological Specimens | Continental scale | Continuous | Biorepository archiving |

Table 2: Statistical Models for Analyzing Sensor-Derived Ecological Data

| Model Type | Data Requirements | Primary Ecological Questions | Key Advantages |
|---|---|---|---|
| Resource Selection Function (RSF) | Animal location data, environmental covariates | Habitat preference at species/home range scale | Ease of implementation; broad-scale patterns |
| Step-Selection Function (SSF) | High-frequency movement data | Movement and habitat selection at fine scale | Accounts for movement constraints and autocorrelation |
| Hidden Markov Models (HMM) | High-temporal resolution behavioral data | Discrete behavioral states and their environmental drivers | Reveals behavior-specific habitat relationships |
| Inhomogeneous Poisson Point Process (IPP) | Spatial point patterns | Density of animal locations across geographical space | Direct modeling of spatial intensity |

Statistical Integration Protocols

Resource Selection Function Implementation

Objective: To quantify habitat selection by comparing environmental conditions at locations used by animals versus available locations within their home range.

Materials and Equipment:

- Animal tracking data (GPS coordinates with timestamps)

- Environmental covariate layers (GIS data)

- R statistical software with the amt package

- Home range estimation tools (e.g., minimum convex polygon)

Procedure:

- Data Preparation: Import animal tracking data and environmental covariate rasters into R. Ensure consistent coordinate reference systems.

- Home Range Delineation: Calculate the minimum convex polygon (MCP) or utilization distribution from observed locations to define availability.

- Point Generation: Randomly generate available points within the MCP (typically 3-10 times more available points than used points).

- Covariate Extraction: Extract environmental covariate values (e.g., vegetation index, elevation, prey diversity) at both used and available locations.

- Model Fitting: Implement logistic regression with used/available as binary response variable and environmental covariates as predictors:

Pr(y_i = 1|x_i) = exp(β₁x₁,i + β₂x₂,i + ... + βₖxₖ,i) / (1 + exp(β₁x₁,i + β₂x₂,i + ... + βₖxₖ,i))

where y_i = 1 for used locations and 0 for available locations.

- Model Validation: Assess model performance using k-fold cross-validation and calculate area under the receiver operating characteristic curve.

Interpretation: Positive selection coefficients (β) indicate preference for a habitat feature, while negative coefficients indicate avoidance. The exponential form of the RSF, w(x) = exp(β₁x₁ + β₂x₂ + ... + βₖxₖ), represents the relative probability of selection.
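
As a minimal sketch of the logistic form and the exponential RSF above, the following Python snippet evaluates both for a pair of sites. The coefficient values, the two covariates, and all function names are illustrative assumptions rather than estimates from any study; in practice the coefficients would come from the fitted logistic regression.

```python
import math

def linear_predictor(beta, x):
    """Sum of beta_k * x_k (no intercept, matching the formula above)."""
    return sum(b * v for b, v in zip(beta, x))

def use_probability(beta, x):
    """Logistic probability that a location is 'used' (y = 1)."""
    eta = linear_predictor(beta, x)
    return math.exp(eta) / (1.0 + math.exp(eta))

def rsf(beta, x):
    """Exponential RSF w(x) = exp(beta . x), a relative selection score."""
    return math.exp(linear_predictor(beta, x))

# Hypothetical coefficients for (vegetation index, elevation in km).
beta = [1.2, -0.5]
site_a = [0.8, 1.0]   # greener, lower site
site_b = [0.3, 2.0]   # sparser, higher site

# Ratio of RSF scores: how many times more likely site_a is to be
# selected than site_b if both were equally available.
relative_strength = rsf(beta, site_a) / rsf(beta, site_b)
print(f"site_a is selected about {relative_strength:.2f}x as often as site_b")
```

Because w(x) is only a relative score, ratios between sites are the quantity to interpret, not the absolute values.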

Step-Selection Function Framework

Objective: To model habitat selection while accounting for movement constraints and temporal autocorrelation in animal tracking data.

Materials and Equipment:

- High-frequency animal movement data (regular time intervals)

- Environmental covariate layers

- R software with the amt package

- Computational resources for handling large datasets

Procedure:

- Data Structuring: Define observed steps (consecutive locations) and calculate step lengths and turning angles.

- Control Step Generation: For each observed step, generate random available steps from the empirical distributions of step lengths and turning angles.

- Covariate Integration: Extract environmental conditions at the end point of each observed and available step.

- Model Implementation: Fit conditional logistic regression models stratified by each observed step with its associated available steps.

- Integrated SSF: For more sophisticated applications, simultaneously estimate movement parameters and selection coefficients using a likelihood approach that integrates movement kernels with selection functions.

Interpretation: SSF coefficients indicate habitat selection while moving, after accounting for intrinsic movement constraints. This method provides finer-scale understanding of habitat selection during movement phases.
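
The data structuring and control step generation described above can be sketched in Python as follows. This is a simplified illustration that resamples the observed step lengths and turning angles directly (one way of drawing from their empirical distributions); the function names and the toy track are assumptions, and the conditional logistic regression itself is not shown.

```python
import math
import random

def steps_from_track(track):
    """Return (step_lengths, turning_angles) for a list of (x, y) fixes."""
    lengths, headings = [], []
    for (x0, y0), (x1, y1) in zip(track, track[1:]):
        lengths.append(math.hypot(x1 - x0, y1 - y0))
        headings.append(math.atan2(y1 - y0, x1 - x0))
    # Turning angle = change in heading, wrapped to (-pi, pi].
    turns = []
    for h0, h1 in zip(headings, headings[1:]):
        d = h1 - h0
        turns.append(math.atan2(math.sin(d), math.cos(d)))
    return lengths, turns

def control_steps(start, heading, lengths, turns, n, rng):
    """Draw n available end points by resampling observed lengths and turns."""
    ends = []
    for _ in range(n):
        length = rng.choice(lengths)
        new_heading = heading + rng.choice(turns)
        ends.append((start[0] + length * math.cos(new_heading),
                     start[1] + length * math.sin(new_heading)))
    return ends

# Toy track in projected coordinates (metres); values are made up.
track = [(0, 0), (3, 4), (3, 9), (8, 9)]
lengths, turns = steps_from_track(track)

rng = random.Random(1)  # fixed seed so the sketch is reproducible
# Ten control steps starting at the second fix, given the incoming heading.
available = control_steps(track[1], math.atan2(4, 3), lengths, turns, 10, rng)
```

Each observed step and its control steps would then form one stratum in the conditional logistic regression, with covariates extracted at the end points.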

Hidden Markov Model Application

Objective: To identify latent behavioral states from movement data and link state transitions to environmental conditions.

Materials and Equipment:

- High-temporal resolution sensor data (GPS, accelerometers)

- Environmental covariate data

- R software with the momentuHMM package

- Computational resources for numerical optimization

Procedure:

- Data Preparation: Process movement data to calculate step lengths and turning angles between consecutive observations.

- Data Exploration: Examine distributions of movement parameters to inform initial state distributions.

- Model Specification: Define number of behavioral states (typically 2-3) and initial parameter estimates for state-dependent distributions.

- Covariate Integration: Include environmental covariates on transition probabilities between states using multinomial logit links:

γ_{ij}^{(t)} = Pr(S_t = j | S_{t-1} = i) = exp(α_{ij} + β_{ij} x_t) / Σ_k exp(α_{ik} + β_{ik} x_t)

where γ_{ij}^{(t)} is the transition probability from state i to state j at time t.

- Model Fitting: Estimate parameters using numerical maximum likelihood methods, typically expectation-maximization or direct numerical optimization.

- State Decoding: Use the Viterbi algorithm to determine the most likely sequence of behavioral states.

- Model Selection: Compare models with different numbers of states using AIC or cross-validation.

Interpretation: HMMs reveal how animals change behaviors in response to environmental conditions, providing mechanistic understanding of habitat selection processes.
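
To make the covariate-dependent transition probabilities and the state decoding step concrete, here is a small pure-Python sketch of the multinomial logit link and the Viterbi recursion. It is not the momentuHMM implementation; the parameter values, the two-state setup, and the emission probabilities are hypothetical, and the diagonal coefficients are fixed at zero as a common identifiability convention.

```python
import math

def transition_matrix(alpha, beta, x_t):
    """Row-wise multinomial logit: gamma[i][j] is proportional to
    exp(alpha[i][j] + beta[i][j] * x_t), normalised over j."""
    gamma = []
    for a_row, b_row in zip(alpha, beta):
        w = [math.exp(a + b * x_t) for a, b in zip(a_row, b_row)]
        total = sum(w)
        gamma.append([v / total for v in w])
    return gamma

def viterbi(log_emis, gammas, log_init):
    """Most likely state path.
    log_emis[t][j]: log density of observation t under state j.
    gammas[t]: transition matrix used to move from time t-1 to t (t >= 1).
    """
    n_states = len(log_init)
    score = [log_init[j] + log_emis[0][j] for j in range(n_states)]
    back = []
    for t in range(1, len(log_emis)):
        new_score, pointers = [], []
        for j in range(n_states):
            cand = [score[i] + math.log(gammas[t][i][j]) for i in range(n_states)]
            best = max(range(n_states), key=lambda i: cand[i])
            pointers.append(best)
            new_score.append(cand[best] + log_emis[t][j])
        score = new_score
        back.append(pointers)
    # Trace back from the best final state.
    path = [max(range(n_states), key=lambda j: score[j])]
    for pointers in reversed(back):
        path.append(pointers[path[-1]])
    return path[::-1]

# Hypothetical two-state model: 0 = resting, 1 = travelling.
alpha = [[0.0, -2.0], [-2.0, 0.0]]
beta = [[0.0, 1.0], [0.0, 0.0]]      # covariate raises the 0 -> 1 switch rate
x = [0.0, 0.0, 3.0, 3.0]             # covariate value at each time step
gammas = [transition_matrix(alpha, beta, v) for v in x]

emis = [[0.9, 0.1], [0.8, 0.2], [0.2, 0.8], [0.1, 0.9]]  # made-up densities
log_emis = [[math.log(p) for p in row] for row in emis]
path = viterbi(log_emis, gammas, [math.log(0.5), math.log(0.5)])
```

In this toy example the decoded sequence switches from resting to travelling once the covariate increases, which is the kind of behavior-environment link the protocol aims to estimate.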

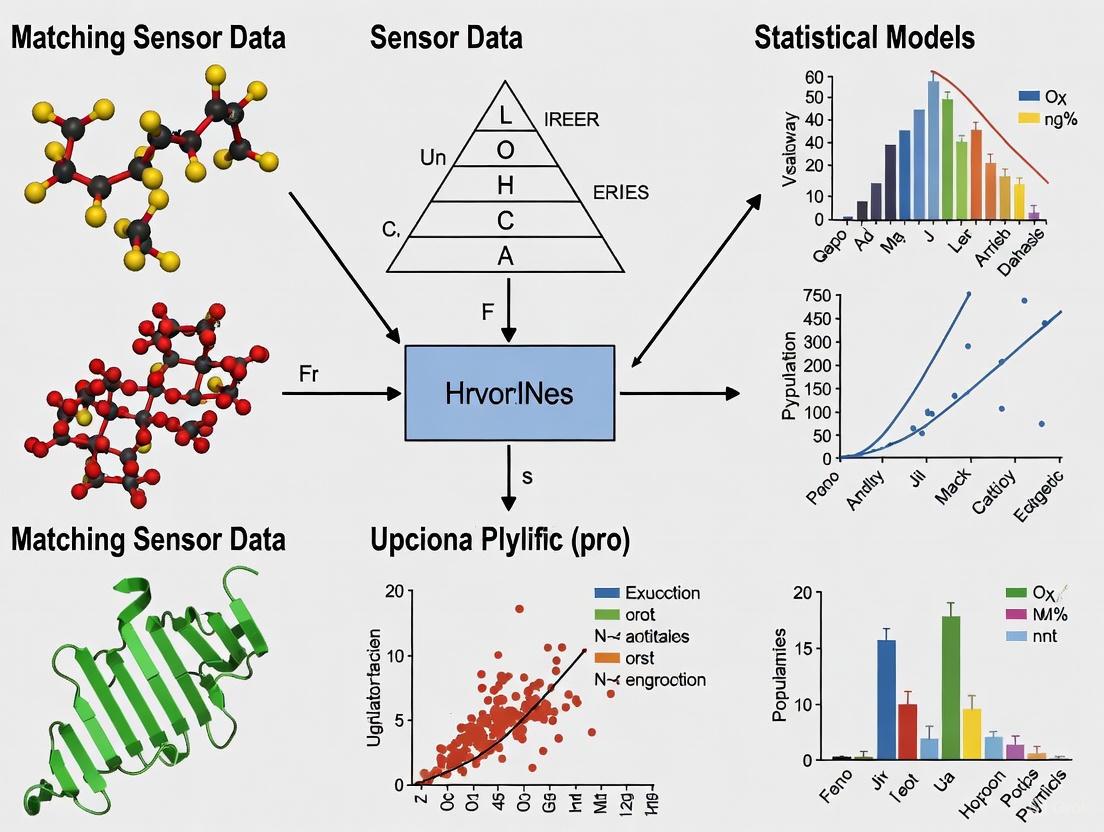

Workflow Visualization

Ecological Data Analysis Workflow: This diagram illustrates the integrated pipeline from sensor data collection through statistical modeling to conservation applications, highlighting decision points for model selection based on data characteristics and research questions.

The Researcher's Toolkit

Table 3: Essential Research Reagents and Solutions for Ecological Sensor Networks

| Tool/Category | Specific Examples | Function in Ecological Research |
|---|---|---|
| Sensor Platforms | NEON instrument towers, Aquatic sensors, Animal-borne biologgers | Collect standardized abiotic and biotic measurements across ecosystems |
| Statistical Software | R packages: amt, momentuHMM, move | Implement specialized models for movement analysis and habitat selection |
| Data Sources | NEON Data Portal, Biorepository specimens, Assignable Assets | Provide open-access ecological data and samples for extended research |
| Environmental Covariates | Vegetation indices, Climate data, Topography, Prey diversity maps | Represent habitat features in statistical models of species distribution |
| Modeling Approaches | RSF, SSF, HMM, Integrated Step-Selection Analysis | Quantify species-habitat relationships across spatial and behavioral scales |
| Visualization Tools | Satellite imagery, Interactive maps, Geometric coverage tools | Communicate results and identify coverage gaps in sensor networks |

Advanced Integration Protocols

Sensor Network Coverage Optimization

Objective: To quantify and visualize detection coverage areas in wireless sensor networks for ecological monitoring.

Materials and Equipment:

- Range-free sensors with known coordinates

- Satellite imagery and GIS platforms

- Python programming environment with geospatial libraries

- Pre-existing transmitter location data

Procedure:

- Area Definition: Define the Area of Interest (AoI) and project it onto a global coordinate system.

- Sensor Deployment: Create sensor objects with specified locations, ranges, and angular detection parameters.

- Triangulation Zones: Calculate areas covered by at least three sensors simultaneously to enable accurate localization.

- Coverage Gap Analysis: Identify "blind" zones where existing transmitters obstruct sensor detection capabilities.

- Visualization: Implement interactive satellite maps for dynamic exploration of coverage areas.

- Network Optimization: Adjust sensor parameters and placement to maximize triangulated coverage while minimizing gaps.

Interpretation: This protocol enables researchers to design effective sensor networks prior to field deployment, ensuring adequate spatial coverage for detecting and localizing ecological phenomena of interest.
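
A rough sketch of the triangulation-zone calculation is shown below. It assumes omnidirectional sensors with circular ranges on a flat, projected plane and estimates coverage on a regular grid; angular detection limits and obstruction by transmitters are deliberately left out. The sensor positions, ranges, and function names are hypothetical.

```python
import math

def covering_count(point, sensors):
    """Number of sensors whose circular range contains the point."""
    px, py = point
    return sum(1 for (sx, sy, r) in sensors
               if math.hypot(px - sx, py - sy) <= r)

def coverage_fractions(sensors, xmin, ymin, xmax, ymax, step):
    """Fractions of grid points seen by >= 1 and by >= 3 sensors."""
    total = detected = triangulated = 0
    y = ymin
    while y <= ymax:
        x = xmin
        while x <= xmax:
            k = covering_count((x, y), sensors)
            total += 1
            detected += k >= 1        # at least one sensor: detection only
            triangulated += k >= 3    # three or more: localization possible
            x += step
        y += step
    return detected / total, triangulated / total

# Hypothetical layout: (x, y, range) in metres within a 100 m x 80 m AoI.
sensors = [(0, 0, 60), (100, 0, 60), (50, 80, 60)]
detected, triangulated = coverage_fractions(sensors, 0, 0, 100, 80, 5)
print(f"detected: {detected:.0%}, triangulated: {triangulated:.0%}")
```

Rerunning the calculation for candidate layouts gives a simple objective for the network optimization step: maximize the triangulated fraction while keeping the undetected fraction small.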

Integrated Model-Sensor Calibration

Objective: To harness 'Big Data' from sensor networks while addressing challenges of data quality, scale, and integration.

Materials and Equipment:

- Heterogeneous sensor networks (traditional monitors, low-cost sensors, citizen science data)

- Cloud computing infrastructure

- Data fusion and assimilation algorithms

- Quality assurance/quality control (QA/QC) protocols

Procedure:

- Data Quality Framework: Implement tiered QA/QC processes responsive to heterogeneous sensor data characteristics.

- Model-Informed Sensor Placement: Use sensitivity analysis of existing models to identify areas where additional sensor data would most reduce uncertainty.

- Multi-Scale Data Integration: Develop hierarchical modeling approaches that integrate data from different sensor types and spatiotemporal scales.

- Uncertainty Quantification: Propagate measurement errors through modeling pipelines to quantify confidence in ecological predictions.

- Stakeholder Communication: Co-develop visualization tools that effectively communicate integrated model-sensor results to diverse audiences.

Interpretation: This integrated approach moves beyond raw data collection to generate meaningful ecological information, supporting more effective conservation planning and policy decisions.
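
The uncertainty quantification step can be illustrated with a simple Monte Carlo sketch: a sensor reading is perturbed according to its assumed measurement error and pushed through a model many times. The response curve, the error magnitudes, and the function names below are invented for illustration, and a normal error model is assumed.

```python
import random
import statistics

def propagate(model, reading, sensor_sd, n_draws, rng):
    """Monte Carlo propagation: perturb the reading, rerun the model."""
    draws = [model(rng.gauss(reading, sensor_sd)) for _ in range(n_draws)]
    draws.sort()
    lower = draws[int(0.025 * n_draws)]        # approx. 2.5th percentile
    upper = draws[int(0.975 * n_draws) - 1]    # approx. 97.5th percentile
    return statistics.mean(draws), (lower, upper)

def activity_index(temp_c):
    """Hypothetical response curve peaking at 20 degrees C."""
    return max(0.0, 1.0 - ((temp_c - 20.0) / 15.0) ** 2)

rng = random.Random(42)  # fixed seed for reproducibility
# Same reading from a low-cost sensor (sd = 2.0) and a reference monitor (sd = 0.2).
mean_low_cost, ci_low_cost = propagate(activity_index, 26.0, 2.0, 5000, rng)
mean_reference, ci_reference = propagate(activity_index, 26.0, 0.2, 5000, rng)
```

Comparing the two intervals shows how much of the prediction uncertainty is attributable to sensor quality alone, which can inform the tiered QA/QC framework.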

Spatial autocorrelation, a fundamental concept in spatial statistics, describes the degree to which similar values or states of a variable are clustered together in space. It is a critical consideration for ecological research, as data collected from nearby locations are often more similar than data collected from distant locations, violating the assumption of independence underlying many traditional statistical models. Effectively matching sensor-derived data to appropriate statistical models requires a deep understanding of how to measure, account for, and leverage spatial autocorrelation. This document outlines the core principles, applications, and experimental protocols for handling spatial autocorrelation within the context of modern ecological research, with a specific focus on integrating diverse data streams from remote sensing and other sensor technologies.
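
As a concrete example of measuring spatial autocorrelation, the sketch below computes global Moran's I with binary distance-band weights (two locations are neighbors if they lie within a chosen distance). The weighting scheme, the toy grids, and the function name are assumptions made for illustration; no significance test is included.

```python
import math

def morans_i(coords, values, max_dist):
    """Global Moran's I with binary weights: w_ij = 1 if 0 < d_ij <= max_dist."""
    n = len(values)
    mean = sum(values) / n
    dev = [v - mean for v in values]
    num = 0.0     # sum over neighbor pairs of dev_i * dev_j
    w_sum = 0.0   # total weight (number of directed neighbor pairs)
    for i in range(n):
        for j in range(n):
            if i == j:
                continue
            d = math.hypot(coords[i][0] - coords[j][0],
                           coords[i][1] - coords[j][1])
            if d <= max_dist:
                num += dev[i] * dev[j]
                w_sum += 1.0
    denom = sum(d * d for d in dev)
    return (n / w_sum) * (num / denom)

# 4 x 4 grid of unit-spaced cells.
coords = [(x, y) for x in range(4) for y in range(4)]
clustered = [1.0 if x < 2 else 0.0 for x, y in coords]   # two homogeneous halves
checkerboard = [float((x + y) % 2) for x, y in coords]   # alternating values

i_clustered = morans_i(coords, clustered, 1.0)      # positive: similar neighbors
i_checker = morans_i(coords, checkerboard, 1.0)     # negative: dissimilar neighbors
```

Values near +1 indicate clustering, values near -1 indicate a dispersed or alternating pattern, and values near 0 indicate no spatial structure at the chosen distance.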

Foundational Concepts and Recent Trends

Statistical ecology has evolved to embrace complex data structures, with hierarchical models serving as a key framework for separating ecological processes from observation processes [1]. An analysis of International Statistical Ecology Conference (ISEC) abstracts from 2008 to 2022 reveals that research on species distribution models, occupancy models, and animal movement has become increasingly prevalent [1]. This trend coincides with the proliferation of new data sources, such as automated recorders and remote sensing techniques, which provide high-resolution, spatially referenced data at unprecedented scales [1]. A central challenge, and a key topic at ISEC, is data integration—the fusion of these diverse data streams to achieve a more robust understanding of ecological systems [1].

Spatial autocorrelation plays a dual role in this context. It can be a nuisance that inflates Type I errors and biases parameter estimates if ignored, but it can also be an informative source of signal that reveals underlying ecological processes [2]. For instance, the spatial structure of environmental variables like plant water stress can drive patterns in phenomena like wildfire burn severity [2].

Table 1: Key Statistical Schools of Thought in Spatial Ecology.

| School of Thought | Core Principle | Typical Applications | Common Software/Tools |
|---|---|---|---|
| Frequentist Mixed Models | Accounts for fixed and random effects to handle structured data and non-independence. | Population abundance, species distributions, resource selection. | lme4 (R), MixedModels.jl (Julia) [3] |
| Bayesian Hierarchical Models (BHM) | Explicitly models data, process, and parameters; ideal for integrating data and propagating uncertainty. | Complex system integration, animal movement, population dynamics. | brms, Stan (R/Python/Julia) [3] |
| Machine Learning (ML) | Data-driven, non-parametric approach for identifying complex, non-linear relationships. | Pattern recognition (e.g., species ID), prediction (e.g., wildfire risk). | Random Forests, cito (R) [4] [2] |
| Geostatistical Models | Directly incorporates spatial correlation via variograms and kriging. | Interpolation and prediction of continuous spatial fields (e.g., soil properties). | spmodel (R) [4], gstat (R) |

Recent applied studies demonstrate the critical importance of accounting for spatial autocorrelation in ecological forecasting and spatial planning. The following table synthesizes quantitative findings from research in wildfire prediction and marine aquaculture, highlighting the role of spatial autocorrelation analysis.

Table 2: Quantitative Findings from Spatial Autocorrelation Applications.

| Study & Domain | Primary Goal | Key Predictors/Variables | Spatial Autocorrelation Method | Key Quantitative Result |
|---|---|---|---|---|
| Wildfire Prediction [2] | Predict burn severity (dNBR) 1 week pre-ignition at 70 m resolution. | ECOSTRESS (ET, ESI), topography, weather. | Sample spacing increase; Principal Coordinates of Neighbor Matrices (PCNM). | Model R² = 0.77 with all predictors. Accuracy declined with increased sample spacing but was robust, indicating capture of fine-scale processes. |
| Marine Aquaculture Siting [5] | Identify suitable locations for mussel longline farming. | Chlorophyll-a, sea surface temperature, current speed. | Local Indicators of Spatial Association (LISA); Incremental Spatial Autocorrelation (Moran's I). | 17% of the study area was statistically identified as "highly suitable." Moran's I used to set thresholds for oceanographic variables in planning tools. |
| Evolutionary Ecology Simulation [6] | Explore how landscape structure affects evolution of niche optima, tolerance, and dispersal. | Fractal-generated temperature (T) and habitat (H) attributes. | Landscapes generated with controlled Hurst index (H=0: random; H=1: highly autocorrelated). | Compositional heterogeneity had the strongest influence on traits; spatial autocorrelation played a mediating role. Dispersal frequency and distance evolved independently. |

Experimental Protocols

Protocol: Assessing Spatial Autocorrelation in a Fine-Scale Wildfire Prediction Model

This protocol is adapted from the random forest modeling approach used to predict burn severity in New Mexico, USA [2].

1. Problem Definition & Data Collection:

- Objective: Build a model to predict continuous burn severity (dNBR) at a fine spatial resolution (e.g., 70 m) one week before a wildfire occurs.

- Response Variable: Differenced Normalized Burn Ratio (dNBR) from post-fire satellite imagery (e.g., Landsat).

- Predictor Variables:

- Fuel Flammability: Evapotranspiration (ET) and Evaporative Stress Index (ESI) from ECOSTRESS sensor (or similar) one week prior to fire.

- Topography: Elevation, slope, aspect (from a Digital Elevation Model).

- Weather: Historical data on temperature, vapor pressure deficit, wind speed.

2. Data Preprocessing & Spatial Alignment:

- Resample all raster datasets to a common resolution and projection.

- Extract values for all predictors and the response at each pixel location within the fire perimeters.

3. Model Fitting & Baseline Assessment:

- Implement a Random Forest regression model using the collected dataset.

- Use a standard random forest implementation (e.g., randomForest in R or scikit-learn in Python).

- Perform standard cross-validation to establish a baseline performance (e.g., R²).

4. Spatial Autocorrelation Assessment & Validation:

- Increased Sample Spacing: Systematically increase the distance between training data points (pixels) and refit the model. A significant drop in performance suggests the baseline model was over-reliant on short-range spatial autocorrelation.

- Spatial Predictors: Introduce explicit spatial structure predictors, such as the Principal Coordinates of Neighbor Matrices (PCNM), into the model. Compare feature importance and model performance with and without these spatial terms.

- Spatial Cross-Validation: Partition data by spatial blocks or fires (e.g., train on half the fires, predict on the other half) instead of randomly. This tests the model's ability to generalize to new geographic areas.

5. Interpretation & Reporting:

- Report model performance metrics from both random and spatial cross-validation.

- Discuss the relative importance of spatial predictors versus environmental predictors.

- Use the final, validated model to generate predictive maps of burn severity.
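
The sample-spacing and spatial cross-validation checks in step 4 can be sketched without any modeling library. The snippet below assigns pixels to square spatial blocks, builds folds that never split a block between training and test sets, and thins pixels to a coarser spacing. Block size, grid-based thinning, and all function names are illustrative assumptions; the random forest fitting itself is omitted.

```python
def block_id(x, y, block_size):
    """Index of the square block containing the point (x, y)."""
    return (int(x // block_size), int(y // block_size))

def spatial_block_folds(coords, block_size, n_folds):
    """Yield (train_idx, test_idx) so that no block is split across both."""
    blocks = sorted({block_id(x, y, block_size) for x, y in coords})
    fold_of_block = {b: k % n_folds for k, b in enumerate(blocks)}
    for fold in range(n_folds):
        test = [i for i, (x, y) in enumerate(coords)
                if fold_of_block[block_id(x, y, block_size)] == fold]
        test_set = set(test)
        train = [i for i in range(len(coords)) if i not in test_set]
        yield train, test

def thin_by_spacing(coords, spacing):
    """Keep one point per spacing-by-spacing cell (simple grid-based thinning)."""
    seen, kept = set(), []
    for i, (x, y) in enumerate(coords):
        cell = block_id(x, y, spacing)
        if cell not in seen:
            seen.add(cell)
            kept.append(i)
    return kept

# Hypothetical 20 x 20 grid of 70 m pixels inside a fire perimeter.
coords = [(70 * i, 70 * j) for i in range(20) for j in range(20)]
folds = list(spatial_block_folds(coords, 700, 4))   # four 700 m blocks
thinned = thin_by_spacing(coords, 210)              # roughly 210 m spacing
```

Fitting the model on each training set and scoring it on the matching test set gives the spatial cross-validation estimate; refitting on progressively thinner subsets gives the sample-spacing curve.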

Protocol: Incorporating Spatial Autocorrelation in Marine Aquaculture Planning

This protocol outlines the use of spatial autocorrelation analysis for objective marine spatial planning, as demonstrated in the northeast United States [5].

1. Define Suitability Criteria:

- Identify key environmental variables for the target species (e.g., for mussels: chlorophyll-a concentration, sea surface temperature, current speed). Acquire spatially continuous data layers for each.

2. Conduct Relative Site Suitability Analysis (a variant of multi-criteria decision analysis, MCDA):

- Standardize and weight each environmental variable based on biological knowledge.

- Combine them into a single composite "suitability score" for each location in the study area.

3. Apply Local Indicator of Spatial Association (LISA) Analysis:

- Perform a LISA analysis (e.g., using Local Moran's I) on the composite suitability score.

- This analysis will statistically identify significant spatial clusters of high and low values.

- Output: A map classifying areas into "High-High" (hot spots: highly suitable areas surrounded by other highly suitable areas), "Low-Low" (cold spots), and other categories.

4. Define Management-Relevant Zones:

- Use the statistically significant "High-High" clusters from the LISA analysis to objectively define the areas reported as "highly suitable." This removes subjectivity from the final site selection.

5. (Optional) Determine Characteristic Spatial Scales:

- For key oceanographic variables, perform an Incremental Spatial Autocorrelation analysis (Global Moran's I).

- This analysis calculates Moran's I for a series of increasing distance intervals. The peaks in the resulting plot indicate the distance thresholds at which spatial processes are most pronounced.

- These distance thresholds can be incorporated into planning tools (like OceanReports) to define the maximum area for which summary statistics are most representative.
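
The LISA step can be illustrated with a bare-bones Local Moran's I calculation that labels each location by quadrant. This sketch assumes row-standardized binary weights within a distance band and does not include the permutation test needed to decide which clusters are statistically significant; the suitability scores and function name are hypothetical.

```python
import math

def local_moran(coords, values, max_dist):
    """Local Moran's I and quadrant label for each location.
    Uses row-standardised binary weights within max_dist (an assumption).
    """
    n = len(values)
    mean = sum(values) / n
    dev = [v - mean for v in values]
    m2 = sum(d * d for d in dev) / n          # variance of the scores
    results = []
    for i in range(n):
        nbrs = [j for j in range(n) if j != i and
                math.hypot(coords[i][0] - coords[j][0],
                           coords[i][1] - coords[j][1]) <= max_dist]
        # Spatial lag: mean deviation of the neighbors.
        lag = sum(dev[j] for j in nbrs) / len(nbrs) if nbrs else 0.0
        local_i = dev[i] * lag / m2
        if dev[i] >= 0:
            label = "High-High" if lag >= 0 else "High-Low"
        else:
            label = "Low-Low" if lag < 0 else "Low-High"
        results.append((local_i, label))
    return results

# Hypothetical composite suitability scores along a six-cell transect.
coords = [(k, 0) for k in range(6)]
scores = [0.9, 0.8, 0.7, 0.3, 0.2, 0.1]
lisa = local_moran(coords, scores, 1.0)
labels = [label for _, label in lisa]
```

In a full analysis, only locations whose local statistic passes a significance test would be retained as "High-High" hot spots for zoning.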

Workflow Visualization

Figure 1: A generalized workflow for ecological data analysis that incorporates checks for spatial autocorrelation (SAC) at critical stages to ensure model robustness.

The Scientist's Toolkit: Research Reagent Solutions

This table details key "research reagents"—the essential data, software, and conceptual tools—required for conducting robust spatial autocorrelation analysis in ecology.

Table 3: Essential Tools for Spatial Autocorrelation Analysis.

| Tool / Reagent | Type | Function / Application | Example / Source |
|---|---|---|---|
| Spatially Explicit Sensor Data | Data | Provides the foundational, georeferenced observations for analysis. | ECOSTRESS (ET, ESI) [2], Movebank animal tracking data [4], acoustic recorder data [1]. |
| R Statistical Environment | Software | Primary platform for statistical ecology; hosts a comprehensive suite of spatial analysis packages. | Core R with packages like spmodel, unmarked, ctmm, brms, randomForest [4] [3] [2]. |
| Hierarchical Model Formulation | Conceptual Framework | Allows separation of ecological process from observation process, crucial for modeling complex dependencies. | State-space models, occupancy models, integrated population models [1]. |
| Spatial Autocorrelation Metrics | Analytical Tool | Quantifies the degree and scale of spatial dependence in data. | Global & Local Moran's I [5], variograms, Mantel test. |
| Fractal Landscape Generators | Modeling Tool | Creates simulated environments with controlled spatial structure for theoretical studies and simulation. | Algorithm from Saupe (1988) as used in [6]. |
| Spatial Cross-Validation | Validation Protocol | Tests model generalizability by holding out spatially contiguous blocks of data, guarding against overly optimistic accuracy estimates. | Block Cross-Validation, Leave-One-Location-Out [2]. |

Modern ecology relies on high-resolution, multidimensional data to understand ecosystem dynamics amidst global change and biodiversity declines [7]. The sensor-to-model pipeline represents a paradigm shift, moving from traditional, labor-intensive surveys to integrated systems that automate data collection, processing, and analysis [7]. This pipeline enables researchers to capture complex biotic metrics—including species behaviors, traits, abundances, and distributions—at spatiotemporal scales previously impossible to achieve [7]. The core of this approach lies in matching rich sensor-derived data with appropriate statistical models to extract meaningful ecological patterns and predictions.

These automated frameworks combine networked sensor arrays with artificial intelligence to transform raw environmental data into actionable ecological knowledge. This process is fundamental for predicting population collapses, designing conservation strategies, and understanding the mechanisms driving ecosystem function [7]. The integration of sensing technologies and modeling is particularly valuable in precision agriculture and animal welfare, where data fusion techniques help interpret complex data streams representing diverse phenomena [8].

The Automated Monitoring Workflow

The sensor-to-model pipeline involves a sequential workflow that transforms raw environmental data into ecological understanding. This process begins with automated data collection, progresses through computational analysis, and culminates in ecological pattern quantification.

Workflow Diagram

Data Collection Technologies

Ecological monitoring employs diverse sensor technologies to automatically record environmental and biological data. These sensors can be categorized by their operating principle and the type of data they capture.

Table 1: Ecological Sensor Technologies and Their Applications

| Sensor Category | Specific Technologies | Collected Data | Ecological Applications |
|---|---|---|---|
| Acoustic Wave Recorders | Microphones, Hydrophones, Geophones | Soundscapes, Vocalizations, Vibrations | Detecting sound-producing animals, identifying species, monitoring behavior [7] |
| Electromagnetic Wave Recorders | Camera traps, Optical sensors, LiDAR, Radar systems | Images, Videos, 3D structural data | Counting individuals, tracking movements, measuring morphological traits [7] |
| Chemical Recorders | Environmental DNA sequencers, Soil sensors | Chemical signatures, DNA sequences | Detecting species presence, assessing soil quality, monitoring pollutants [7] [8] |
| Environmental Parameter Sensors | Thermistors, Hygrometers, pH sensors | Temperature, Humidity, pH, Light levels | Correlating environmental conditions with biological patterns [7] [8] |

Data Processing and Feature Extraction

Raw sensor data requires sophisticated computational processing to extract meaningful ecological information. This stage employs artificial intelligence, particularly computer vision and deep learning algorithms, to automate the detection, identification, and measurement of organisms [7].

Computer Vision Workflow for Ecological Data

Data Fusion Strategies

Multi-sensor approaches require data fusion techniques to integrate information from diverse sources. The Dasarathy model groups these techniques by level of abstraction: data (low-level), features (mid-level), or decisions (high-level) [8]. The choice of fusion strategy depends on the research question and data characteristics.

Table 2: Data Fusion Techniques in Ecological Monitoring

| Fusion Level | Description | Advantages | Implementation Example |
|---|---|---|---|
| Low-Level (Data Fusion) | Raw data from multiple sensors is combined before feature extraction | Retains complete information from all sensors | Fusing thermal and RGB images for improved animal detection [8] |
| Mid-Level (Feature Fusion) | Features are extracted from each sensor separately then combined | Reduces dimensionality while preserving relevant information | Combining spectral features with morphological measurements for species ID [8] |
| High-Level (Decision Fusion) | Each sensor stream is processed independently with final decisions combined | Allows for heterogeneous processing pipelines | Fusing species classifications from audio and visual sensors [8] |
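
The difference between mid-level and high-level fusion can be shown in a few lines of Python. The sketch below concatenates per-sensor feature vectors (feature fusion) and averages per-sensor class probabilities (decision fusion). Weighted averaging is only one of several possible decision rules, and the sensor names, species, and probabilities are made up.

```python
def fuse_decisions(prob_by_sensor, weights=None):
    """High-level fusion: weighted average of per-sensor class probabilities."""
    sensors = list(prob_by_sensor)
    if weights is None:
        weights = {s: 1.0 for s in sensors}   # equal trust by default
    total_w = sum(weights[s] for s in sensors)
    classes = prob_by_sensor[sensors[0]].keys()
    fused = {c: sum(weights[s] * prob_by_sensor[s][c] for s in sensors) / total_w
             for c in classes}
    best = max(fused, key=fused.get)
    return best, fused

def fuse_features(features_by_sensor):
    """Mid-level fusion: concatenate per-sensor feature vectors in a fixed order."""
    fused = []
    for sensor in sorted(features_by_sensor):
        fused.extend(features_by_sensor[sensor])
    return fused

# Hypothetical classifier outputs for one detection event.
audio = {"red deer": 0.55, "roe deer": 0.45}
camera = {"red deer": 0.20, "roe deer": 0.80}
label, fused = fuse_decisions({"audio": audio, "camera": camera})

# Hypothetical feature vectors destined for a single downstream model.
feature_vector = fuse_features({"spectral": [0.31, 0.72], "morphology": [1.4]})
```

Decision fusion lets each sensor keep its own processing pipeline, whereas feature fusion requires a single model that accepts the combined vector.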

Experimental Protocols

Protocol: Camera Trap Monitoring for Ungulate Populations

This protocol outlines the procedure for monitoring wild ungulate populations using camera traps and deep learning-based analysis, adapted from integrated monitoring approaches [9].

Materials and Equipment

- Camera traps with infrared capability for 24-hour monitoring

- Weatherproof enclosures for sensor protection

- Battery packs with solar charging capability

- Data storage devices with adequate capacity for image collection

- GPS units for precise location mapping

- Computer workstations with GPU acceleration for deep learning processing

Procedure

Study Design Phase

- Determine camera placement using stratified random sampling based on habitat types

- Program cameras to capture 3-image bursts with 1-second intervals upon motion trigger

- Set camera sensitivity appropriate for target species size and movement patterns

- Record GPS coordinates and habitat characteristics for each deployment location

Data Collection Phase

- Install cameras at approximately 40-50 cm height, facing north to avoid sun glare

- Conduct monthly maintenance visits to replace batteries and download data

- Document any camera malfunctions or obstructions in field logs

- Collect reference images of target species for training classification algorithms

Data Processing Phase

- Organize images into standardized directory structure with metadata

- Pre-process images using contrast enhancement and noise reduction algorithms

- Implement deep learning algorithm (e.g., Convolutional Neural Network) training using reference images

- Execute automated detection and classification of target species

- Manually verify a subset of automated classifications for accuracy assessment

Data Analysis Phase

- Calculate detection rates and occupancy patterns for each species

- Model abundance using N-mixture models or distance sampling approaches

- Correlate detection patterns with environmental covariates

- Generate spatial distribution maps of species abundance
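
As a starting point for the data analysis phase, the sketch below collapses image bursts into independent detection events and computes two simple summaries: events per 100 trap-nights and naive occupancy. The 30-minute independence threshold is an assumed convention that should be adapted to the study, the station names and counts are invented, and naive occupancy does not correct for imperfect detection (which occupancy or N-mixture models address).

```python
from datetime import datetime, timedelta

def independent_events(timestamps, gap_minutes=30):
    """Collapse bursts: count detections separated by more than gap_minutes."""
    events = 0
    last = None
    for ts in sorted(timestamps):
        if last is None or ts - last > timedelta(minutes=gap_minutes):
            events += 1
        last = ts   # gap is measured from the previous image
    return events

def detection_rate(events, trap_nights):
    """Independent events per 100 trap-nights."""
    return 100.0 * events / trap_nights

def naive_occupancy(events_by_station):
    """Share of stations with at least one event (ignores imperfect detection)."""
    occupied = sum(1 for n in events_by_station.values() if n > 0)
    return occupied / len(events_by_station)

# Two 3-image and 2-image bursts, two hours apart, at one station.
t0 = datetime(2024, 5, 1, 6, 0, 0)
stamps = [t0, t0 + timedelta(seconds=1), t0 + timedelta(seconds=2),
          t0 + timedelta(hours=2), t0 + timedelta(hours=2, seconds=1)]
n_events = independent_events(stamps)

# Hypothetical event counts per camera station over 120 trap-nights in total.
events_by_station = {"CT01": 2, "CT02": 0, "CT03": 5, "CT04": 1}
rate = detection_rate(sum(events_by_station.values()), 120)
occupancy = naive_occupancy(events_by_station)
```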

Protocol: Multi-Sensor Data Fusion Pipeline Development

This protocol provides a framework for developing and testing data fusion pipelines for agricultural and animal monitoring applications [8].

Materials and Equipment

- Heterogeneous sensors (e.g., spectral sensors, temperature loggers, soil moisture probes)

- Data synchronization equipment with precise timing capability

- Data Fusion Explorer (DFE) Python tool or equivalent framework

- Statistical analysis software (R, Python with pandas/scikit-learn)

- Data visualization platforms for multidimensional data exploration

Procedure

Data Format Identification

- Classify data streams as singlets (low-dimensional), arrays, or images

- Document temporal and spatial characteristics of each data stream

- Identify required pre-processing steps for each data type

Temporal and Spatial Alignment

- Implement synchronization protocols across all sensor platforms

- Resample data to common temporal resolution using appropriate interpolation

- Georeference all spatial data to common coordinate system

- Handle missing data using appropriate imputation methods

Feature Extraction

- Apply dimensionality reduction techniques (PCA, ICA) to array-style data

- Extract relevant features from images using computer vision algorithms

- Normalize features across different sensor types to comparable scales

- Select optimal feature subsets using criterion-based methods

Fusion Strategy Testing

- Implement low-level fusion by combining raw data streams

- Implement mid-level fusion by combining extracted features

- Implement high-level fusion by combining model outputs

- Compare fusion strategies using predefined performance metrics
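The three fusion levels differ mainly in where the streams are combined. The toy sketch below makes that concrete; the sensor readings, the `summarize` features, and the two threshold rules standing in for fitted models are all hypothetical placeholders.

```python
from statistics import mean

# Hypothetical readings for one plot from two sensors.
spectral = [0.41, 0.44, 0.47, 0.52]   # reflectance-derived index
thermal = [18.2, 18.9, 19.5, 21.0]    # canopy temperature (deg C)

def summarize(stream):
    """Extract simple features (mean, range) from a raw stream."""
    return [mean(stream), max(stream) - min(stream)]

# Low-level fusion: concatenate raw streams into one input vector.
low_level = spectral + thermal

# Mid-level fusion: extract features per sensor, then concatenate them.
mid_level = summarize(spectral) + summarize(thermal)

# High-level fusion: each sensor gets its own model; combine the outputs.
def stress_score_spectral(stream):
    return 1.0 if mean(stream) < 0.45 else 0.0   # placeholder rule, not a fitted model

def stress_score_thermal(stream):
    return 1.0 if mean(stream) > 19.0 else 0.0   # placeholder rule, not a fitted model

high_level = mean([stress_score_spectral(spectral), stress_score_thermal(thermal)])
```

In a real comparison, each of the three inputs would feed the same downstream evaluation so that differences in performance can be attributed to the fusion level alone.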

Pipeline Optimization

- Evaluate computational efficiency of different pipeline configurations

- Test robustness to sensor failure or data gaps

- Validate ecological relevance of fused data products

- Document optimal pipeline configuration for specific applications

Quantitative Data Analysis and Distribution Modeling

Ecological data from sensor networks typically requires summarization into distributions to facilitate pattern recognition and modeling. The distribution of a variable describes what values are present in the data and how often those values appear [10].

Data Distribution Visualization

The appropriate graphical representation of quantitative data depends on the type of variable and the monitoring context.

Table 3: Quantitative Data Visualization Methods in Ecological Monitoring

| Graph Type | Description | Best Use Cases | Ecological Example |

|---|---|---|---|

| Histogram | Series of boxes where width represents value intervals and height represents frequency | Moderate to large amounts of continuous data [10] | Distribution of animal group sizes detected across camera traps |

| Frequency Polygon | Points placed at interval midpoints with connecting lines emphasizing distribution | Comparing distributions between groups or conditions [11] | Reaction times of animals to stimuli under different treatments |

| Stemplot (Stem-and-leaf) | Part of each number as stem (left of line), remainder as leaf (right of line) | Small datasets where individual values are meaningful [10] | Exact counts of rare species across sampling locations |

| Comparative Bar Chart | Bars for different groups placed next to each other | Direct comparison of categorical groupings [11] | Species detection frequencies across different habitat types |

Statistical Modeling of Sensor Data

The transition from sensor data to ecological models involves several statistical considerations:

- Data Transformation: Sensor data often require transformation to meet statistical model assumptions (e.g., log-transformation for count data).

- Temporal Autocorrelation: Time series from continuous monitoring require models that account for temporal dependencies (e.g., ARIMA models, generalized estimating equations).

- Spatial Correlation: Georeferenced sensor data necessitate spatial statistics (e.g., kriging, spatial autoregressive models).

- Hierarchical Structure: Data from multiple sensors across locations often exhibit a nested structure that requires mixed-effects models.
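A quick diagnostic for temporal dependence is the lag-1 autocorrelation of a sensor series. The sketch below is a minimal version of that check; both example series are hypothetical.

```python
from statistics import mean

def lag1_autocorrelation(series):
    """Sample lag-1 autocorrelation: covariance of consecutive values over total variance."""
    mu = mean(series)
    num = sum((series[t] - mu) * (series[t + 1] - mu) for t in range(len(series) - 1))
    den = sum((x - mu) ** 2 for x in series)
    return num / den

# Hypothetical hourly readings.
smooth = [10, 11, 12, 13, 14, 15, 14, 13, 12, 11]          # slowly varying signal
alternating = [10, 15, 10, 15, 10, 15, 10, 15, 10, 15]     # oscillating signal

print(round(lag1_autocorrelation(smooth), 3))
print(round(lag1_autocorrelation(alternating), 3))
```

A value far from zero suggests that consecutive readings are not independent, which is the situation where time-series models or generalized estimating equations become relevant.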

The Scientist's Toolkit: Research Reagent Solutions

Table 4: Essential Research Tools for Sensor-to-Model Pipeline Implementation

| Tool Category | Specific Solutions | Function | Implementation Considerations |

|---|---|---|---|

| Sensor Platforms | Camera traps, Acoustic recorders, eDNA samplers | Automated data collection across spatial and temporal scales | Power requirements, weatherproofing, data storage capacity [7] |

| Data Processing Frameworks | Data Fusion Explorer (DFE), TensorFlow, PyTorch | Implementing custom data fusion pipelines and AI algorithms | Computational resources, programming expertise, modular design [8] |

| Statistical Analysis Environments | R, Python (pandas, scikit-learn), specialized ecological packages | Modeling species distributions, abundance, and ecological patterns | Model assumptions, spatial-temporal dependencies, validation methods [10] |

| Data Visualization Tools | ggplot2, Matplotlib, GIS platforms | Creating informative visualizations of ecological patterns and model outputs | Color contrast requirements, accessibility standards, multidimensional representation [11] [12] |

The sensor-to-model pipeline represents a transformative approach to ecological monitoring, enabling researchers to move from data scarcity to information abundance. By integrating automated sensor technologies with sophisticated AI processing and statistical modeling, ecologists can now monitor complex ecological systems at unprecedented resolutions [7]. This integrated framework supports more accurate forecasting of ecosystem dynamics and more effective conservation strategies in an era of rapid environmental change.

The continued development of data fusion techniques [8] and the refinement of statistical models that account for the unique characteristics of sensor-derived data will further enhance our ability to extract meaningful ecological knowledge from these automated systems. As these technologies become more accessible and standardized, they promise to revolutionize how we monitor, understand, and protect ecological systems across scales from individual organisms to entire landscapes.

Modern ecological research is increasingly driven by data from advanced biologging sensors and geospatial technologies. A core thesis in contemporary ecology is that the reliability of research findings is fundamentally dependent on appropriately matching the peculiarities of sensor-derived data to the assumptions of statistical models [13] [14]. This document outlines the key challenges of scale, specificity, and spatial bias that arise at this intersection, providing application notes and protocols to enhance the rigor and interpretability of ecological studies. Ignoring these challenges can lead to deceptively high predictive performance in models that fail to accurately represent real-world ecological processes [14].

Quantifying the Core Challenges

The following table summarizes the primary data challenges and their impact on ecological modeling.

Table 1: Core Challenges in Ecological Data and Their Implications

| Challenge | Description | Impact on Modeling & Inference |

|---|---|---|

| Scale | Mismatch between the scale of data collection (e.g., from biologgers), the scale of ecological processes, and the scale of model application [15]. | Leads to inappropriate inference; models answer questions at a different scale than intended (e.g., landscape-level conclusions from fine-scale movement data) [15]. |

| Specificity | The unique, dynamic, and often non-uniform nature of environmental data, including functional trait distributions and ecosystem functioning [16] [14]. | Constrains model generalizability and leads to poor extrapolation performance (out-of-distribution problem) if not accounted for during model development [14]. |

| Spatial Bias | Non-random data collection, such as preferential sampling where areas are sampled only when species are expected to be found [16]. | Introduces bias in parameter estimation and creates spatially skewed predictions that do not reflect true species distributions or habitat associations [16] [14]. |

| Data Imbalance | A significant overabundance of samples from one class (e.g., absence) or region compared to others (e.g., presence) [14]. | Models become biased toward predicting the majority class, and classification rules for rare events or species are often ignored, reducing predictive accuracy for ecologically critical minority classes [14]. |

| Spatial Autocorrelation | The tendency for nearby locations to have more similar values than those farther apart [14]. | Violates the independence assumption of many statistical models, leading to overconfident models and inflated measures of predictive performance if not properly addressed during validation [14]. |
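One simple mitigation for data imbalance is to weight each class inversely to its frequency during model fitting. The sketch below assumes the common "balanced" heuristic, n_samples / (n_classes × class_count); the label counts are hypothetical.

```python
from collections import Counter

def balanced_class_weights(labels):
    """Weight each class by n_samples / (n_classes * class_count)."""
    counts = Counter(labels)
    n, k = len(labels), len(counts)
    return {cls: n / (k * c) for cls, c in counts.items()}

# Hypothetical survey: 90 absences, 10 presences.
labels = ["absence"] * 90 + ["presence"] * 10
weights = balanced_class_weights(labels)
print(weights)
```

With these weights, each presence record counts nine times as much as an absence record, so the rare class is not ignored by the loss function. Reweighting does not address spatial bias in where the records were collected.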

Experimental Protocols for Robust Ecological Analysis

Protocol: Comparing Statistical Models for Animal Movement Data

This protocol guides the comparison of common models used to relate animal movement data to environmental covariates, helping researchers select the appropriate tool for their specific question [15].

- Objective: To apply and compare Resource Selection Functions (RSF), Step-Selection Functions (SSF), and Hidden Markov Models (HMM) on a single movement track to derive and contrast ecological insights.

- Materials: Animal movement track (e.g., GPS data), associated environmental covariates (e.g., prey diversity, vegetation), and R statistical software with the `amt` and `momentuHMM` packages.

- Procedure:

- Data Preparation: Load the movement track into R and annotate each observed location with the relevant environmental covariate values.

- RSF Implementation (Broad-Scale Habitat Selection):

- Using the `amt` package, generate a set of available points within the animal's home range (e.g., Minimum Convex Polygon).

- Fit a logistic regression model to the used and available locations to estimate selection coefficients (β) for each environmental covariate [15].

- Interpret the RSF, \( w(\mathbf{x}) = \exp(\beta_1 x_1 + \beta_2 x_2 + \cdots + \beta_k x_k) \), as the relative probability of selection.

- SSF Implementation (Fine-Scale Habitat Selection during Movement):

- For each observed step (the movement between two consecutive points), generate a set of random steps that originated from the same starting point.

- Fit a conditional logistic regression to the used and random steps to estimate selection coefficients.

- Compare the coefficients and their significance with those from the RSF.

- HMM Implementation (Behavior-Specific Habitat Association):

- Using the `momentuHMM` package, fit an HMM to the movement data to identify latent behavioral states (e.g., "Encamped," "Exploratory").

- Incorporate environmental covariates into the model to test for relationships between habitat and the probability of being in a specific behavioral state.

- Examine how associations with covariates (e.g., prey diversity) vary across the different behavioral states identified.

- Expected Output: A case study, as demonstrated with a ringed seal, will show that the three models can yield different ecological insights and identify different "important" areas, underscoring the critical importance of model selection [15].
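To make the interpretation of RSF coefficients concrete, the sketch below evaluates the exponential RSF at two locations and takes their ratio. It is written in Python for illustration only (the protocol itself uses R), and the coefficients and covariate values are hypothetical rather than fitted.

```python
import math

def rsf(x, beta):
    """Exponential RSF: w(x) = exp(sum_k beta_k * x_k), a relative (not absolute) probability."""
    return math.exp(sum(b * xi for b, xi in zip(beta, x)))

# Hypothetical coefficients for (prey diversity, vegetation cover), standardized covariates.
beta = [0.8, -0.3]
loc_a = [1.0, 0.0]   # one standard deviation above average prey diversity
loc_b = [0.0, 0.0]   # average conditions

relative_selection = rsf(loc_a, beta) / rsf(loc_b, beta)
print(f"location A is selected {relative_selection:.2f}x as strongly as location B")
```

Only ratios of this kind are interpretable, because the RSF is defined up to a proportionality constant.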

Protocol: Diagnosing and Correcting for Spatial Bias in Species Data

This protocol addresses the challenge of preferential sampling in presence-only or presence/absence data [16].

- Objective: To identify and correct for bias in species distribution data collection to produce more reliable spatial models.

- Materials: Species occurrence data, a set of environmental raster layers (e.g., climate, topography), and spatial modeling software (e.g., R with spatial packages).

- Procedure:

- Bias Diagnosis: Model the sampling process itself by relating the presence of survey locations to accessibility covariates (e.g., distance to roads, elevation). A significant relationship indicates preferential sampling.

- Model Specification: Implement a spatial multi-level model within a Bayesian framework. This involves specifying:

- A process model that describes the latent, true species distribution.

- An observation model that links the observed data to the latent process, explicitly incorporating the bias identified in Step 1 [16].

- Parameter Estimation: Use Markov Chain Monte Carlo (MCMC) methods or Integrated Nested Laplace Approximation (INLA) to fit the model and estimate the parameters of the true species distribution while correcting for the sampling bias.

- Validation: Compare the predictive performance of the bias-corrected model against a naive model that does not account for sampling bias, using spatially structured cross-validation.
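The spatially structured cross-validation in the validation step can be approximated by assigning whole grid blocks, rather than individual points, to folds. The sketch below is one simple way to do this; the function name, block size, and coordinates are assumptions for illustration.

```python
def spatial_block_folds(coords, block_size, n_folds):
    """Assign each (x, y) point to a fold so that all points in a grid block share a fold."""
    blocks = [(int(x // block_size), int(y // block_size)) for x, y in coords]
    block_to_fold = {b: i % n_folds for i, b in enumerate(sorted(set(blocks)))}
    return [block_to_fold[b] for b in blocks]

# Hypothetical occurrence coordinates (same units as block_size).
coords = [(1, 1), (2, 3), (12, 1), (14, 4), (3, 12), (13, 13)]
folds = spatial_block_folds(coords, block_size=10, n_folds=2)

for k in range(2):
    test_idx = [i for i, f in enumerate(folds) if f == k]
    train_idx = [i for i, f in enumerate(folds) if f != k]
    print(f"fold {k}: train={train_idx} test={test_idx}")
```

Choosing a block size at least as large as the range of spatial autocorrelation helps keep test points from being near-duplicates of training points.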

The Scientist's Toolkit: Essential Reagents & Computational Solutions

Table 2: Key Research Reagent Solutions for Spatial Ecological Modeling

| Item | Function in Analysis |

|---|---|

| Biologging Sensors (GPS/Accelerometer) | Capture high-frequency movement and behavioral data, providing the foundational information for analyzing species-habitat associations [13]. |

| Resource Selection Function (RSF) | A statistical function used to estimate the relative probability of habitat use by an animal based on environmental characteristics, typically at a broad spatial scale [15]. |

| Step-Selection Function (SSF) | A statistical function that models fine-scale habitat selection by comparing the environmental conditions at a chosen movement step to those at alternative, randomly generated steps [15]. |

| Hidden Markov Model (HMM) | A state-space model that identifies latent (unobserved) behavioral states from movement data and can link the probability of these states to environmental covariates [15]. |

| Spatial Cross-Validation | A model validation technique that partitions data based on location to avoid overfitting and provide a realistic measure of a model's ability to predict in new, unsampled areas [14]. |

| Integrated Biologging Framework (IBF) | A structured approach for matching the most appropriate biologging sensors and sensor combinations to specific biological questions, and for analyzing the resulting complex, high-frequency data [13]. |

Visualizing Workflows and Logical Relationships

[Diagram: Geospatial Data-Driven Modeling Pipeline]

[Diagram: Model Selection for Movement Data]

Methodologies for Integration: Building Hybrid Sensor-Statistical Models

The integration of sensor data in ecological research has revolutionized our ability to monitor ecosystems, yet it simultaneously demands advanced statistical frameworks to interpret spatially correlated information correctly. Spatial statistics provide the essential toolkit for analyzing data where geographical location influences the measured variables, moving beyond the limiting assumption of independence in traditional statistics [17]. The core challenge in ecological studies involves distinguishing the relative effects of endogenous processes (e.g., species dispersal) from exogenous factors (e.g., environmental gradients) on observed spatial patterns [18]. Failure to account for spatial autocorrelation (SAC)—the phenomenon where observations closer in space are more similar or dissimilar than expected by chance—can lead to inflated Type I errors, biased parameter estimates, and ultimately, flawed ecological inferences [18] [19]. This document provides application notes and protocols for selecting and implementing geostatistical, point process, and spatial regression models, specifically framed within the context of matching these models to data generated by modern ecological sensors.

Model Selection Framework: Matching Sensor Data to Statistical Approach

Selecting an appropriate spatial model depends fundamentally on the nature of the sensor data (point-referenced, areal, or point pattern) and the specific research question. The following table provides a structured guide for this selection process.

Table 1: Spatial Model Selection Guide for Ecological Sensor Data

| Data Type & Research Goal | Recommended Model Class | Key Strengths | Common Sensor Data Sources |

|---|---|---|---|

| Predicting a continuous variable at unobserved locations (e.g., soil moisture, pollutant concentration, temperature) | Geostatistics (Kriging variants) | Provides optimal, unbiased predictions with estimation error; incorporates spatial covariance structure [20] [21] [22]. | In-situ sensor networks, hyperspectral imagers (e.g., EMIT, ASTER) [23], thermal infrared spectrometers (e.g., SDGSAT-1 TIS) [24]. |

| Modeling relationship between a response variable and environmental drivers while accounting for spatial dependence | Spatial Regression (GLS, SAR, GAM) | Isolates the relationship between variables from spurious spatial correlations; reduces "red-shift" in feature selection [17] [18] [19]. | Multi-sensor fusion data (e.g., combining vegetation indices from multispectral sensors with topographic data). |

| Analyzing the distribution and intensity of discrete events or objects (e.g., animal nests, tree locations, disease outbreaks) | Point Process Models | Models the underlying intensity function of events; can distinguish between clustering and regularity; incorporates environmental covariates. | GPS animal tags, drone-based imagery for individual plant counts, acoustic sensors. |

| Characterizing complex, non-linear and high-dimensional spatial patterns (e.g., from high-resolution imaging spectrometers) | Hybrid/Machine Learning Models (e.g., GCNN-RNN) | Captures complex, non-linear dependencies that may be missed by classical geostatistics; handles large datasets [25]. | High-resolution satellite imagery (e.g., SDGSAT-1 MII, VIIRS) [23] [24], airborne geophysical surveys. |

Workflow for Spatial Model Selection and Application

The following diagram outlines a systematic workflow for selecting and applying a spatial statistical model to ecological sensor data.

Geostatistical Interpolation and Kriging Protocols

Geostatistics is foundational for creating continuous surfaces from point-referenced sensor measurements.

Core Concepts and Equations

Geostatistics models spatial variation using the variogram, which quantifies the average dissimilarity between data points as a function of their separation distance. The experimental variogram is calculated as:

\[ \gamma(h) = \frac{1}{2N(h)} \sum_{i=1}^{N(h)} \left[ z(x_i) - z(x_i + h) \right]^2 \]

where \( \gamma(h) \) is the semi-variance for lag distance \( h \), \( N(h) \) is the number of point pairs separated by \( h \), and \( z(x_i) \) is the measured value at location \( x_i \) [21]. A model (e.g., spherical, exponential, Gaussian) is then fitted to the experimental variogram, characterized by three parameters:

- Nugget (\( n \)): Represents micro-scale variation and/or measurement error.

- Sill (\( s \)): The total spatial variance where the variogram levels off.

- Range (\( a \)): The distance at which data points become spatially independent [25] [22].

Ordinary Kriging (OK), the most common kriging variant, then uses this model to predict values at unsampled locations. It is a Best Linear Unbiased Estimator (BLUE), providing a weighted average of neighboring samples where the weights are derived from the variogram model to minimize prediction variance [20] [22].
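The experimental variogram and a spherical model can be sketched in a few lines of plain Python. The binning scheme (lag bins of equal width), the function names, and the four-point transect are assumptions made for illustration; dedicated geostatistics packages offer more robust estimators.

```python
import math
from itertools import combinations

def experimental_variogram(points, values, lag_width, n_lags):
    """Semivariance per lag bin: sum of squared differences / (2 N(h)); None for empty bins."""
    sums = [0.0] * n_lags
    counts = [0] * n_lags
    for i, j in combinations(range(len(points)), 2):
        h = math.dist(points[i], points[j])
        k = int(h // lag_width)          # bin k covers [k * lag_width, (k + 1) * lag_width)
        if k < n_lags:
            sums[k] += (values[i] - values[j]) ** 2
            counts[k] += 1
    return [s / (2 * c) if c else None for s, c in zip(sums, counts)]

def spherical(h, nugget, sill, rng):
    """Spherical variogram model; `sill` is the total sill (nugget included)."""
    if h == 0:
        return 0.0
    if h >= rng:
        return sill
    r = h / rng
    return nugget + (sill - nugget) * (1.5 * r - 0.5 * r ** 3)

# Hypothetical transect: four sensors one unit apart.
points = [(0, 0), (1, 0), (2, 0), (3, 0)]
values = [1.0, 2.0, 4.0, 7.0]
gamma = experimental_variogram(points, values, lag_width=1.0, n_lags=3)
print(gamma)
```

Fitting then amounts to choosing nugget, sill, and range so that `spherical` tracks the non-empty entries of `gamma`.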

Experimental Protocol: Variogram Modeling and Kriging

Objective: To create a continuous map of soil metal concentration from discrete sensor measurements using Ordinary Kriging.

Materials:

- Geochemical Sensor: Field-portable XRF analyzer.

- Positioning System: Differential GPS (sub-meter accuracy).

- Software: R with the `gstat` package, or Python with `scikit-gstat` and `pykrige`.

Procedure:

- Systematic Sampling: Establish a sampling grid over the area of interest (e.g., a historical mine tailing site [25]). Collect a minimum of 50-100 geo-referenced soil samples at designated nodes.

- Data Preprocessing: Log-transform the data if necessary to stabilize variance. Check for and handle outliers.

- Compute Experimental Variogram: Calculate the semi-variance for binned lag distances from the preprocessed samples.

- Model Fitting: Fit a theoretical model (e.g., spherical) to the experimental variogram.

- Cross-Validation: Perform leave-one-out cross-validation to select optimal variogram parameters (`nugget`, `sill`, `range`) that minimize prediction error [25].

- Spatial Prediction (Kriging): Interpolate values onto a regular grid.

- Validation: Validate the final map using a hold-out dataset not used in model fitting. Report the Root Mean Square Error (RMSE) and Coefficient of Determination (R²) [22].
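The prediction step can be illustrated with a small NumPy implementation of Ordinary Kriging for a single target location. This is a sketch under stated assumptions, not the API of `gstat`, `scikit-gstat`, or `pykrige`: the exponential variogram, its parameter values, and the four sample points are hypothetical, and the function names are invented for this example.

```python
import numpy as np

def exp_variogram(h, nugget, sill, rng):
    """Exponential variogram (total sill, practical range); zero at h = 0."""
    return np.where(h > 0, nugget + (sill - nugget) * (1 - np.exp(-3 * h / rng)), 0.0)

def ordinary_kriging(xy, z, target, nugget, sill, rng):
    """Predict z at `target`; returns (prediction, kriging variance)."""
    n = len(z)
    d = np.linalg.norm(xy[:, None, :] - xy[None, :, :], axis=-1)  # pairwise distances
    A = np.ones((n + 1, n + 1))          # last row/column enforce weights summing to 1
    A[:n, :n] = exp_variogram(d, nugget, sill, rng)
    A[n, n] = 0.0
    b = np.ones(n + 1)
    b[:n] = exp_variogram(np.linalg.norm(xy - target, axis=1), nugget, sill, rng)
    sol = np.linalg.solve(A, b)
    weights, lagrange = sol[:n], sol[n]
    return float(weights @ z), float(weights @ b[:n] + lagrange)

# Hypothetical samples at the corners of a unit square.
xy = np.array([[0.0, 0.0], [1.0, 0.0], [0.0, 1.0], [1.0, 1.0]])
z = np.array([1.0, 2.0, 3.0, 4.0])
pred, var = ordinary_kriging(xy, z, np.array([0.5, 0.5]), nugget=0.0, sill=1.0, rng=2.0)
print(pred, var)
```

Looping this function over grid nodes yields the interpolated surface, and the returned variance gives a per-node uncertainty map. With a zero nugget the predictor reproduces the measured value at sampled locations.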

Advanced Kriging Techniques

Table 2: Advanced and Hybrid Geostatistical Methods

| Method | Description | Ecological Application Example |

|---|---|---|

| Universal Kriging (UK) | Incorporates a deterministic trend model (e.g., a linear function of coordinates) in addition to the spatial residual component [22]. | Modeling large-scale environmental gradients, such as temperature or precipitation trends across a region. |

| Empirical Bayesian Kriging (EBK) | A computationally intensive but automated method that accounts for error in the variogram estimation process by simulating subsets of the data [22]. | Ideal for non-stationary processes and for users seeking to automate the kriging process without manual variogram fitting. |

| Regression Kriging (RK) | Combines a regression of the target variable on auxiliary predictors (e.g., from remote sensing) with kriging of the regression residuals [22]. | Example: Predicting soil organic carbon by first modeling it with NDVI and elevation, then kriging the residuals to capture unexplained spatial variation. |

| Geostatistical CNN–RNN | A hybrid model that uses a Convolutional Neural Network and Bidirectional LSTM informed by kriging-derived spatial covariance structures [25]. | Modeling extremely complex, non-linear spatial patterns in heterogeneous environments, such as geochemistry in mine tailings. |

Spatial Regression Modeling Protocols

Spatial regression models are used when the primary goal is to understand the relationship between a response variable and environmental predictors, while explicitly accounting for spatial autocorrelation to ensure valid inference.

Model Selection and Comparison

The choice of spatial regression model depends on the assumed structure of the spatial dependence.

Table 3: Comparison of Spatial Regression Techniques

| Model | How it Handles Spatial Dependence | Advantages | Limitations |

|---|---|---|---|

| Generalized Least Squares (GLS) | Models spatial structure directly in the error term's covariance matrix, typically using a function of distance (e.g., exponential decay) [19]. | Provides statistically efficient coefficient estimates; well-established theory [19]. | Requires pre-specification of the spatial correlation function; can be computationally intensive for large datasets. |

| Spatial Autoregressive (SAR) Models | Includes a weighted average of neighboring response values (lag model) or error terms (error model) as an additional predictor in the regression [17] [18]. | Intuitive interpretation as a "spatial spillover" effect. | Requires defining a spatial weights matrix (neighborhood structure); interpretation of coefficients is more complex. |

| Generalized Additive Models (GAM) | Incorporates space as a smooth term (e.g., a spline function of coordinates) in the mean model [19]. | Highly flexible in capturing complex, non-linear spatial trends. | The spatial term is a "black box"; may overfit the spatial trend, reducing transferability to new areas. |

| Spatial Filtering (e.g., PCNM) | Uses eigenvectors derived from a spatial connectivity matrix as extra predictors to "filter out" the spatial structure [18]. | Can capture complex multi-scale spatial patterns. | Can lead to overfitting if too many eigenvectors are selected. |

Experimental Protocol: Implementing Spatial Regression with GLS

Objective: To model the effect of urbanization (e.g., impervious surface cover) on a vegetation index, while controlling for spatial autocorrelation.

Materials:

- Response Data: NDVI derived from multispectral sensor (e.g., Landsat-8/9, Sentinel-2, or SDGSAT-1 MII) [24].

- Predictor Data: Impervious surface index from classified imagery or land cover maps.

- Software: R with the `nlme` package.

Procedure:

- Data Extraction: Extract NDVI and predictor values for sample locations across the study area. Ensure all data are aligned to the same coordinate system.

- Preliminary OLS Model: Fit a standard linear model.

- Check for SAC: Test the OLS residuals for spatial autocorrelation using Moran's I.

- Fit GLS Model: If SAC is significant, fit a GLS model with a spatial correlation structure.

- Model Refinement: Compare different correlation structures (`corExp`, `corGaus`, `corSpher`) using AIC or BIC to select the best-fitting model.

- Interpretation: Interpret the coefficient for `impervious` from the final GLS model. This estimate now accounts for spatial dependence, providing a more robust understanding of the urbanization impact.

- Critical Validation Step: Ensure that the final model is not simply overfitting the spatial trend. Validate the model's predictive performance on a spatially independent test set (e.g., data from a different geographic region) [19].
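The residual check with Moran's I can be sketched as below. The example is in Python for illustration (the protocol itself uses R) and assumes binary distance-threshold weights; the coordinates and residual values are hypothetical, and a significance test (for example, by permutation) would still be needed in practice.

```python
import math

def morans_i(coords, values, threshold):
    """Moran's I with binary weights: w_ij = 1 if i != j and distance <= threshold."""
    n = len(values)
    mu = sum(values) / n
    dev = [v - mu for v in values]
    num, w_sum = 0.0, 0.0
    for i in range(n):
        for j in range(n):
            if i != j and math.dist(coords[i], coords[j]) <= threshold:
                num += dev[i] * dev[j]
                w_sum += 1.0
    return (n / w_sum) * (num / sum(d * d for d in dev))

# Hypothetical OLS residuals on a transect of five locations.
coords = [(0, 0), (1, 0), (2, 0), (3, 0), (4, 0)]
clustered = [-2.0, -1.0, 0.0, 1.0, 2.0]     # neighbours are similar: positive SAC
alternating = [1.0, -1.0, 1.0, -1.0, 1.0]   # neighbours differ: negative SAC

print(morans_i(coords, clustered, threshold=1.0))
print(morans_i(coords, alternating, threshold=1.0))
```

Values well above the expectation under independence, which is close to zero for large samples, indicate that nearby residuals are similar and that a spatial correlation structure is worth fitting.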

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 4: Key Research Reagents and Tools for Spatial Analysis with Sensor Data

| Tool / "Reagent" | Function / Purpose | Example Sources / Packages |

|---|---|---|

| Spatial Covariance Function | Quantifies the structure and range of spatial dependence; the core "reagent" for kriging and GLS [25]. | Exponential, Spherical, Gaussian models (in gstat, nlme). |

| Spatial Weights Matrix | Defines the neighborhood relationships between spatial units for SAR and similar models [17] [18]. | Created based on distance, k-nearest neighbors, or contiguity (spdep in R). |

| Principal Coordinates of Neighbor Matrices (PCNM) | Generates orthogonal spatial eigenvectors that represent multi-scale spatial patterns for use as predictors in spatial filtering [18]. | vegan or adespatial packages in R. |

| NASA Earthdata Catalog | Provides access to a vast array of satellite-derived sensor data, essential for model inputs and validation [23]. | https://www.earthdata.nasa.gov/ |

| Normalized Difference Vegetation Index (NDVI) | A standardized metric of live green vegetation derived from multispectral sensor data, used as a response or predictor variable [24] [22]. | Calculated from Landsat, Sentinel-2, or SDGSAT-1 MII red and near-infrared bands. |

| Cross-Validation Workflow | A protocol for tuning model parameters and assessing model performance without overfitting, crucial for robust spatial prediction [25] [22]. | Leave-one-out or spatial block cross-validation scripts. |

The integration of physical models with data-driven machine learning (ML) represents a transformative approach for analyzing complex ecological systems. Hybrid modeling leverages the complementary strengths of two distinct paradigms: the interpretability and grounding in first-principles knowledge (e.g., conservation laws, fluid dynamics) offered by physics-based simulations, and the adaptability and pattern recognition capabilities of ML when trained on observational data [26]. In ecological research, this is particularly valuable for translating raw, often noisy, sensor data into robust statistical models of environmental phenomena. This fusion creates a class of models that are not only predictive but also physically consistent, enabling more reliable forecasting of critical events such as peak pollutant concentrations, extreme weather impacts on ecosystems, or the spread of environmental contaminants [26] [27].

The core challenge in ecology is that purely physics-based models, such as Computational Fluid Dynamics (CFD), can be computationally prohibitive for real-time applications, while purely data-driven models often require massive datasets and can produce physically implausible results [26] [28]. The hybrid paradigm directly addresses this by using machine learning as a fast surrogate (or emulator) for complex simulations, or by embedding physical constraints directly into the ML algorithm's architecture [26]. This is especially pertinent given the proliferation of low-cost environmental sensor networks, which provide vast amounts of data but are prone to drift and cross-sensitivities that require sophisticated calibration [29]. By merging physical understanding with statistical learning, hybrid models offer a pragmatic path to actionable insights for environmental management and policy.

Performance and Comparative Analysis

Hybrid models have demonstrated significant advantages in both accuracy and computational efficiency across various environmental applications. The table below synthesizes key quantitative results from recent studies, providing a clear comparison of the performance gains achievable through the hybrid approach.

Table 1: Quantitative Performance of Hybrid Models in Environmental Applications

| Application Domain | Reported Performance | Comparative Baseline | Key Benefit Highlighted |

|---|---|---|---|

| Urban Air Quality & Wind Energy [26] | Prediction accuracy for peak concentrations and wind speeds within ~90–95% of high-fidelity simulations. | Standalone CFD or purely data-driven models. | Computational cost reduction of over 80% while maintaining high fidelity. |

| Satellite Power Subsystems [27] | Predictive accuracy of R² = 0.921, MAE = 0.063 A using a Mixture of Experts (MoE) framework. | Baseline models including Linear Regression, Random Forest, XGBoost, and LSTM. | Superior predictive accuracy and interpretable validation of statistical findings for anomaly detection. |

| Lettuce Growth in Aeroponics [28] | Good predictive performance for fresh weight and total leaf area. | Traditional farming methods and single-approach models. | A dynamic framework for optimizing agricultural inputs and predicting multiple outputs (growth and resource use). |

These results underscore a consistent theme: hybrid models achieve a favorable balance between scientific validity and operational deployability. The substantial reduction in computational cost is particularly critical for enabling near real-time forecasting and decision-making in dynamic ecological contexts, such as issuing air quality alerts or managing agricultural systems [26] [28].

Detailed Experimental Protocols

Implementing a hybrid model requires a structured workflow that systematically integrates data, physics, and learning. The following protocols detail the key phases of this process.

Protocol 1: Sensor Data Pre-processing and Calibration

Objective: To transform raw, uncalibrated sensor data into a reliable dataset for hybrid model development.

Background: Low-cost sensor data is often affected by drift and environmental interference (e.g., temperature, humidity), making calibration and quality control essential first steps [29].

Data Collection:

- Co-location: Deploy low-cost sensor nodes (e.g., NDIR CO₂ sensors, particulate matter sensors) at a site equipped with high-fidelity reference instrumentation [29].

- Time-Synchronization: Collect concurrent time-series data from both low-cost and reference sensors over a period sufficient to capture a wide range of environmental conditions (e.g., weeks to months).

- Metadata Logging: Record influencing parameters such as ambient temperature, pressure, and relative humidity, as these are common sources of error for NDIR sensors and others [29].

Data Pre-processing:

Machine Learning-Based Calibration:

- Model Selection: Choose a calibration algorithm. Common choices include Random Forest Regression, Support Vector Regression (SVR), or neural networks like 1D Convolutional Neural Networks (1D-CNN) and Long Short-Term Memory (LSTM) networks, which can capture temporal dependencies [29].

- Feature Engineering: Use raw sensor readings and logged metadata (temperature, pressure) as input features. The target variable is the measurement from the high-fidelity reference sensor.

- Training & Validation: Split the co-located dataset into training and validation sets. Train the selected ML model to map the low-cost sensor signals to the reference values. Validate performance using metrics like Mean Absolute Error (MAE), R², and Pearson Correlation Coefficient [29].

- Drift Monitoring: Continuously monitor the model's performance over time and retrain periodically as sensor performance inevitably degrades [29].
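The calibration workflow can be illustrated with a deliberately simple stand-in: a linear least-squares model in place of the Random Forest, SVR, or neural network options listed above. All data here are synthetic and generated inside the script, and the bias and temperature terms used to simulate the low-cost sensor are assumptions made purely for demonstration.

```python
import numpy as np

rng = np.random.default_rng(0)
n = 200
temperature = rng.uniform(5, 35, n)                 # logged metadata (deg C)
reference = rng.uniform(400, 800, n)                # synthetic reference CO2 (ppm)
# Synthetic low-cost signal: gain error, temperature cross-sensitivity, offset, noise.
raw = 0.9 * reference + 2.0 * temperature + 15 + rng.normal(0, 3, n)

# Features: raw reading, temperature, intercept. Target: reference measurement.
X = np.column_stack([raw, temperature, np.ones(n)])
train, valid = slice(0, 150), slice(150, n)
coef, *_ = np.linalg.lstsq(X[train], reference[train], rcond=None)

pred = X[valid] @ coef
mae = np.mean(np.abs(pred - reference[valid]))
ss_res = np.sum((reference[valid] - pred) ** 2)
ss_tot = np.sum((reference[valid] - reference[valid].mean()) ** 2)
r2 = 1 - ss_res / ss_tot
raw_mae = np.mean(np.abs(raw[valid] - reference[valid]))

print(f"MAE uncalibrated: {raw_mae:.1f} ppm, calibrated: {mae:.1f} ppm, R2: {r2:.3f}")
```

Recomputing the validation metrics on fresh co-located data at regular intervals is one straightforward way to implement the drift monitoring step.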

Protocol 2: Development of a Physics-Informed Hybrid Model

Objective: To construct a model that predicts an ecological variable (e.g., pollutant concentration) by fusing calibrated sensor data with physics-based simulation outputs.

Background: This protocol uses a surrogate modeling approach, where ML learns a fast approximation of a slower physics-based model, conditioned on real-time sensor data [26].

Physics-Based Simulation:

- Scenario Generation: Define a set of boundary conditions and scenarios representative of the ecological domain of interest (e.g., various wind directions and speeds for urban air dispersion, different nutrient levels for plant growth) [26] [28].

- High-Fidelity Simulation: Execute physics-based models (e.g., CFD-RANS for fluid flow, radiation degradation models for satellite panels) for these scenarios to generate high-resolution spatiotemporal data [26] [27]. This serves as the foundational physical knowledge.

Hybrid Model Training:

- Input/Output Structuring: For each scenario, use the boundary conditions and real-time sensor network data (where available) as input features for the ML model. The target output is the corresponding high-fidelity simulation result (e.g., peak concentration, current output) [26].

- Model Architecture: Implement a machine learning model. A Mixture of Experts (MoE) framework has shown success in complex systems, as it uses a gating network to selectively combine the predictions of multiple "expert" sub-models, each potentially specializing in a different physical regime [27].

- Training Loop: Train the ML model on the dataset of {boundary conditions, sensor data} and {simulation output} pairs. The goal is for the ML model to learn a mapping that generalizes the physics.
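The gating idea behind a Mixture of Experts can be illustrated with a forward pass only. This is a sketch under stated assumptions: the two experts, the linear gating network, and its hand-set weights are hypothetical, and in practice the gate and the experts would be trained jointly on the simulation dataset.

```python
import math

def softmax(scores):
    """Numerically stable softmax over a list of scores."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def moe_predict(features, experts, gate_weights):
    """Blend expert predictions with softmax gates from a linear gating network.

    experts:      list of callables, each mapping features -> float
    gate_weights: one weight vector per expert, same length as features
    """
    scores = [sum(w * f for w, f in zip(ws, features)) for ws in gate_weights]
    gates = softmax(scores)
    preds = [expert(features) for expert in experts]
    return sum(g * p for g, p in zip(gates, preds)), gates

# Hypothetical experts for two physical regimes; features = [wind_speed, sensor_reading].
expert_calm = lambda f: 2.0 * f[1]    # weak dispersion: high peak relative to reading
expert_windy = lambda f: 0.5 * f[1]   # strong dispersion: low peak relative to reading
experts = [expert_calm, expert_windy]
gate_weights = [[-1.0, 0.0], [1.0, 0.0]]  # hand-set: the gate looks only at wind speed

pred_calm, gates_calm = moe_predict([0.0, 10.0], experts, gate_weights)
pred_windy, gates_windy = moe_predict([5.0, 10.0], experts, gate_weights)
print(pred_calm, gates_calm)
print(pred_windy, gates_windy)
```

At zero wind speed both gates are equal and the prediction is the average of the two experts; at higher wind speed the gate hands almost all weight to the windy-regime expert.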

Validation and Deployment:

- Benchmarking: Validate the trained hybrid model on a hold-out set of simulation scenarios and, if available, historical field data not used in training. Compare its accuracy and speed against the full physics-based simulation [26] [27].

- Operational Deployment: Deploy the validated hybrid model for real-time forecasting. Its computational efficiency allows it to be run on standard hardware or even at the edge, closer to the sensor network [26].

Workflow Visualization

The following diagram illustrates the end-to-end logical workflow for developing and deploying a hybrid model, as detailed in the experimental protocols.

Diagram 1: Hybrid model development and deployment workflow.

The Scientist's Toolkit: Research Reagent Solutions

The successful implementation of a hybrid modeling framework relies on a suite of computational and material resources. The table below catalogs the essential "research reagents" for this interdisciplinary field.

Table 2: Essential Tools and Resources for Hybrid Ecological Modeling

| Item Name | Type | Function & Application |

|---|---|---|

| ESP32-based Sensor Platform [29] | Hardware | A cost-effective, agile measurement platform with UPS and multiple sensor support (e.g., for CO₂, PM). Enables dense sensor network deployment for data collection. |

| CFD-RANS/LES Solvers [26] | Software | Provides high-fidelity, physics-based simulation data for fluid flow and dispersion in urban or natural environments, forming the physical basis for the hybrid model. |

| PyMC Library [30] | Software (Python) | A high-level library for probabilistic programming, enabling Bayesian calibration and uncertainty quantification for sensor data and model parameters. |

| Mixture of Experts (MoE) Framework [27] | Algorithm | An ensemble machine learning architecture that improves predictive accuracy and interpretability by combining specialized sub-models. |

| SPENVIS Orbit Generator [27] | Software | Models satellite orbits and illumination conditions, crucial for correlating space weather telemetry with power subsystem data. |

| Aeroponic Growth Chambers [28] | Experimental System | Provides a controlled environment agriculture (CEA) setup to generate high-quality data on plant growth, water, and nutrient consumption for model training. |

| Digital Twin Workflows [26] | Conceptual Framework | Interoperable digital replicas of physical systems that fuse live sensor data with simulation models for monitoring, diagnostics, and "what-if" analysis. |

Predicting extreme environmental values—such as peak pollutant concentrations or maximum wind speeds—is a critical challenge in ecological research and environmental management. Traditional approaches relying solely on high-fidelity computational fluid dynamics (CFD) simulations, while accurate, are often computationally prohibitive for real-time forecasting and large-scale ecological applications [26]. Similarly, purely data-driven models may lack physical realism, limiting their predictive power and generalizability.

This application note details the development and implementation of a sensor-CFD hybrid modeling framework that bridges this gap. By strategically integrating physics-based CFD simulations with real-time sensor network data and statistical learning, this paradigm enables rapid, robust prediction of environmental extremes. This approach is fundamentally aligned with the broader thesis of matching sensor data to statistical models in ecology, creating a powerful synergy where physical principles guide model structure and empirical data informs model parameters [26] [31]. The resulting hybrids achieve a balance between scientific validity and operational deployability, supporting critical decision-making in areas like urban air quality management and renewable energy optimization [26].

Key Performance Metrics and Quantitative Outcomes

The sensor-CFD hybrid approach has demonstrated significant advantages over traditional methods in both accuracy and computational efficiency. The table below summarizes key quantitative outcomes from recent validation studies.

Table 1: Performance Metrics of Sensor-CFD Hybrid Models for Extreme Value Prediction

| Application Domain | Prediction Accuracy | Computational Efficiency | Key Performance Highlights |

|---|---|---|---|

| Urban Air Quality [26] | ~90-95% of high-fidelity simulation accuracy for peak pollutant concentrations. | Computational cost reduction of >80% compared to standalone CFD. | Accurately identifies pollution hotspots; enables rapid air quality alerts. |

| Wind Energy Optimization [26] | ~90-95% of high-fidelity simulation accuracy for maximum wind speeds. | Computational cost reduction of >80% compared to standalone CFD. | Supports micro-siting of turbines for maximum energy yield. |

| Urban Heat Mitigation [31] | R² ≥ 0.90 for temperature and cooling load predictions. | Surrogate models up to 800x faster than full CFD simulations. | Random Forest algorithms achieved cooling load prediction accuracies of R² = 0.98. |

| General Urban Microclimate [31] | Particulate matter concentration errors below 10% compared to measured data. | Accelerated urban thermal analysis from over 400,000 hours to approximately one hour. | Enables rapid exploration of large urban green infrastructure design spaces. |

Core Experimental Protocol for Sensor-CFD Hybrid Modeling

This protocol provides a detailed methodology for developing and validating a sensor-CFD hybrid model for predicting extreme environmental values, such as peak pollutant concentrations in an urban environment.

Phase 1: High-Fidelity CFD Simulation and Data Generation

Objective: To generate a comprehensive dataset of environmental extremes under various scenarios for training the statistical model.

Step 1: Problem Definition and Geometry Acquisition

- Define the spatial domain (e.g., an urban neighborhood with complex geometry).

- Acquire a 3D geometric model of the domain, including buildings, terrain, and key vegetation features [32].

Step 2: Mesh Generation

- Discretize the computational domain into a mesh of small cells. The mesh must be refined in areas of interest, such as near pollution sources or building edges, to accurately capture gradients [32].

- Perform a grid convergence study to ensure the solution is independent of mesh size.
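A grid convergence study is commonly summarized with Richardson extrapolation and a Grid Convergence Index (GCI). The sketch below assumes three meshes with a constant refinement ratio `r` and monotonic convergence of the quantity of interest; the function name and the sample values are illustrative, and the safety factor of 1.25 is the value conventionally used for three-grid studies.

```python
import math

def grid_convergence(f_fine, f_medium, f_coarse, r, safety_factor=1.25):
    """Return observed order p, Richardson-extrapolated value, and fine-grid GCI (fraction).

    Assumes a constant refinement ratio r and monotonic convergence, so that
    (f_coarse - f_medium) and (f_medium - f_fine) have the same sign.
    """
    p = math.log((f_coarse - f_medium) / (f_medium - f_fine)) / math.log(r)
    f_extrap = f_fine + (f_fine - f_medium) / (r ** p - 1.0)
    rel_err = abs((f_medium - f_fine) / f_fine)
    gci_fine = safety_factor * rel_err / (r ** p - 1.0)
    return p, f_extrap, gci_fine

# Made-up peak concentrations from fine, medium, and coarse meshes (r = 2).
# They follow f = 10 + h**2 with h = 1, 2, 4, i.e. a second-order scheme.
p, f_extrap, gci = grid_convergence(11.0, 14.0, 26.0, r=2.0)
print(f"observed order = {p:.2f}, extrapolated value = {f_extrap:.2f}, GCI = {100 * gci:.1f}%")
```

A small GCI on the fine mesh suggests that further refinement would change the result little, which is the practical meaning of a mesh-independent solution.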

Step 3: CFD Simulation Setup

- Solver Configuration: Select an appropriate CFD solver (e.g., OpenFOAM, ANSYS Fluent) and a suitable turbulence model, such as Reynolds-Averaged Navier-Stokes (RANS) for computational efficiency [26] [32].

- Boundary Conditions: Define realistic boundary conditions, including:

- Inlet wind velocity and direction profiles.

- Turbulence parameters (e.g., intensity, length scale).

- Source terms for pollutants or heat [32].

- Physical Models: Activate relevant scalar transport equations for pollutants (e.g., NOₓ, PM2.5) and energy equations for heat transfer.
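As one possible way to specify the inlet boundary conditions, the sketch below evaluates a neutral atmospheric boundary layer log-law profile together with the matching turbulence quantities often paired with RANS k-epsilon models. The source does not prescribe this profile; the roughness length, reference speed, and constants (von Karman constant 0.41, C_mu 0.09) are assumptions chosen for illustration.

```python
import math

KAPPA = 0.41  # von Karman constant (assumed value)
C_MU = 0.09   # standard k-epsilon model constant (assumed value)

def friction_velocity(u_ref, z_ref, z0):
    """Friction velocity that reproduces speed u_ref at height z_ref over roughness z0."""
    return KAPPA * u_ref / math.log((z_ref + z0) / z0)

def inlet_profile(z, u_star, z0):
    """Log-law wind speed, turbulent kinetic energy, and dissipation rate at height z."""
    u = (u_star / KAPPA) * math.log((z + z0) / z0)
    k = u_star ** 2 / math.sqrt(C_MU)
    eps = u_star ** 3 / (KAPPA * (z + z0))
    return u, k, eps

# Hypothetical urban case: 5 m/s measured at 10 m, roughness length 0.5 m.
u_star = friction_velocity(u_ref=5.0, z_ref=10.0, z0=0.5)
for z in (2.0, 10.0, 50.0):
    u, k, eps = inlet_profile(z, u_star, z0=0.5)
    print(f"z={z:5.1f} m  u={u:5.2f} m/s  k={k:.3f} m2/s2  eps={eps:.4f} m2/s3")
```

Anchoring the profile to a measured reference speed is one simple way to tie the simulated inflow to what an on-site anemometer reports.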

Step 4: Ensemble Simulation Execution

- Execute an ensemble of CFD simulations spanning a range of meteorological conditions (e.g., wind speeds, directions, stability classes) and source strengths.

- For each simulation, extract full-field data and, critically, time-series data at virtual sensor locations that match the positions of the physical sensor network.
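The ensemble definition and the virtual-sensor lookup can be sketched as follows. The parameter values, dictionary keys, and helper names (`build_scenarios`, `nearest_cell`) are hypothetical; a real workflow would read cell centers from the solver's mesh output rather than from a hard-coded list.

```python
from itertools import product

def build_scenarios(wind_speeds, wind_directions, stability_classes, source_strengths):
    """Full factorial ensemble: one dict of boundary conditions per CFD run."""
    keys = ("wind_speed_ms", "wind_dir_deg", "stability", "source_gs")
    combos = product(wind_speeds, wind_directions, stability_classes, source_strengths)
    return [dict(zip(keys, combo)) for combo in combos]

def nearest_cell(cell_centers, sensor_xyz):
    """Index of the mesh cell whose center lies closest to a physical sensor position."""
    def sq_dist(i):
        return sum((c - s) ** 2 for c, s in zip(cell_centers[i], sensor_xyz))
    return min(range(len(cell_centers)), key=sq_dist)

scenarios = build_scenarios(
    wind_speeds=[2.0, 5.0, 8.0],
    wind_directions=[0, 90, 180, 270],
    stability_classes=["unstable", "neutral", "stable"],
    source_strengths=[1.0, 2.0],
)
print(len(scenarios), "runs; first:", scenarios[0])

# Toy mesh of three cell centers (x, y, z in metres) and one sensor position.
cells = [(0.0, 0.0, 2.0), (10.0, 0.0, 2.0), (10.0, 10.0, 2.0)]
virtual_sensor_cell = nearest_cell(cells, (9.0, 1.0, 2.5))
print("virtual sensor maps to cell", virtual_sensor_cell)
```

A full factorial design grows quickly with the number of parameters, so sampled designs may be preferable when each CFD run is expensive.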

Phase 2: Sensor Network Deployment and Data Acquisition

Objective: To collect real-world, ground-truthed data for model calibration and validation.

Step 1: Sensor Network Design and Optimization

- Deploy a network of environmental sensors (e.g., for air quality, wind speed, temperature) within the study domain.

- Optimize sensor placement using adaptive sensing strategies to ensure coverage of potential environmental extremes and hotspots [26]. The placement should be informed by preliminary CFD results to capture high-variance locations.

Step 2: Data Collection and Preprocessing

- Collect high-frequency time-series data from the sensor network.

- Perform standard data preprocessing: quality control, removal of outliers, synchronization of time stamps, and gap-filling.
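Two of the preprocessing steps, outlier removal and gap-filling, can be sketched with the standard library alone. The outlier rule used here (modified z-score based on the median absolute deviation, threshold 3.5) and linear interpolation for gaps are common choices but are assumptions of this example, not requirements of the protocol.

```python
from statistics import median

def flag_outliers(values, threshold=3.5):
    """Replace values whose MAD-based modified z-score exceeds the threshold with None."""
    valid = [v for v in values if v is not None]
    med = median(valid)
    mad = median([abs(v - med) for v in valid])
    if mad == 0:
        return list(values)  # no spread to judge against; leave the data unchanged
    return [
        None if v is not None and 0.6745 * abs(v - med) / mad > threshold else v
        for v in values
    ]

def fill_gaps(values):
    """Linearly interpolate interior None runs; leading and trailing gaps stay None."""
    out = list(values)
    known = [i for i, v in enumerate(out) if v is not None]
    for left, right in zip(known, known[1:]):
        span = right - left
        for i in range(left + 1, right):
            out[i] = out[left] + (out[right] - out[left]) * (i - left) / span
    return out

# Made-up series with one missing sample and one spike.
series = [10.0, 11.0, None, 13.0, 250.0, 15.0, 16.0]
cleaned = fill_gaps(flag_outliers(series))
print(cleaned)  # [10.0, 11.0, 12.0, 13.0, 14.0, 15.0, 16.0]
```

Because the goal here is to predict extremes, any automatic outlier rule deserves a manual check: a genuine pollution peak can look statistically identical to a sensor glitch.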

Phase 3: Hybrid Model Development and Training

Objective: To fuse CFD-generated data and real sensor data into a predictive empirical model for extremes.

Step 1: Feature Extraction

- From both CFD and sensor data, calculate statistical indicators for fixed time windows. Key features include:

- Mean (μ) and standard deviation (σ) of the target variable (e.g., concentration, wind speed).

- Temporal correlation metrics and integral time scales (τ) [26].

- Spatial features derived from urban morphology (e.g., building height-to-width ratio, proximity to source).
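The temporal features listed above can be computed per window as sketched below. The integral time scale is estimated here by integrating the sample autocorrelation up to its first zero crossing, which is one common convention; that choice, the non-overlapping windows, and the function names are assumptions of this example.

```python
import random
from statistics import fmean, pstdev

def autocorrelation(x, lag):
    """Biased sample autocorrelation at a given lag (0.0 for a constant series)."""
    mu = fmean(x)
    var = sum((v - mu) ** 2 for v in x)
    if var == 0.0:
        return 0.0
    cov = sum((x[i] - mu) * (x[i + lag] - mu) for i in range(len(x) - lag))
    return cov / var

def integral_time_scale(x, dt):
    """Trapezoidal integral of the autocorrelation up to its first non-positive value."""
    tau, prev = 0.0, 1.0
    for lag in range(1, len(x) // 2):
        rho = autocorrelation(x, lag)
        if rho <= 0.0:
            break
        tau += 0.5 * (prev + rho) * dt
        prev = rho
    return tau

def window_features(x, dt, window):
    """Mean, standard deviation, integral time scale, and maximum per non-overlapping window."""
    feats = []
    for start in range(0, len(x) - window + 1, window):
        seg = x[start:start + window]
        feats.append({
            "mean": fmean(seg),
            "std": pstdev(seg),
            "tau": integral_time_scale(seg, dt),
            "max": max(seg),
        })
    return feats

# Synthetic signals: white noise versus a strongly correlated AR(1) process.
rng = random.Random(1)
white = [rng.gauss(0.0, 1.0) for _ in range(2000)]
ar1 = [0.0]
for _ in range(1999):
    ar1.append(0.9 * ar1[-1] + rng.gauss(0.0, 1.0))

tau_white = integral_time_scale(white, dt=1.0)
tau_ar1 = integral_time_scale(ar1, dt=1.0)
print(f"tau (white noise) = {tau_white:.2f} s, tau (AR1, phi = 0.9) = {tau_ar1:.2f} s")
```

The same function can be applied to both CFD virtual-sensor series and physical sensor series, which keeps the features comparable between the two data sources.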

Step 2: Empirical Model Formulation

- Implement a core empirical formulation for predicting the maximum value (X_max) within a given time window [26]:

X_max = μ + σ × f(τ)

Here, f(τ) is a function of the system's temporal correlation structure, often related to a scaling exponent. The parameter b is a calibration factor specific to the application and local environment [26].

Step 3: Model Calibration and Training

- Use the dataset from Phase 1 (CFD) to pre-train the model, establishing a baseline relationship between the extracted features and the simulated extremes.

- Calibrate the model coefficients (e.g., parameter b in the empirical formulation) using the real-world sensor data from Phase 2. This step "matches the sensor data to the statistical model," aligning the physics-based predictions with empirical observations.
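The formulation and its calibration can be made concrete with a small sketch. The source does not give the functional form of f(τ), so this example assumes f(τ) = b × sqrt(2 ln(T/τ)), where T is the window length; this shape is borrowed from the asymptotic expected maximum of roughly T/τ independent Gaussian samples and is a hypothetical stand-in, as are all function names and numbers below.

```python
import math

def peak_shape(tau, window_s):
    """Assumed shape sqrt(2 ln(T / tau)); NOT taken from the source."""
    n_eff = max(window_s / tau, 2.0)  # guard: treat the window as at least two effective samples
    return math.sqrt(2.0 * math.log(n_eff))

def predict_max(mu, sigma, tau, b, window_s):
    """X_max = mu + sigma * f(tau), with f(tau) = b * peak_shape(tau)."""
    return mu + sigma * b * peak_shape(tau, window_s)

def calibrate_b(windows, window_s):
    """Least-squares estimate of b from (mu, sigma, tau, observed_max) tuples."""
    num = den = 0.0
    for mu, sigma, tau, x_max in windows:
        g = sigma * peak_shape(tau, window_s)
        num += g * (x_max - mu)
        den += g * g
    return num / den

WINDOW_S = 600.0  # hypothetical 10-minute windows

# Synthetic "sensor" windows generated with a known b, so the fit can be checked.
true_b = 0.8
stats = [(40.0, 5.0, 10.0), (55.0, 8.0, 20.0), (30.0, 3.0, 5.0)]
windows = [(mu, s, tau, predict_max(mu, s, tau, true_b, WINDOW_S)) for mu, s, tau in stats]

b_hat = calibrate_b(windows, WINDOW_S)
print(f"calibrated b = {b_hat:.3f}")
print(f"predicted peak = {predict_max(45.0, 6.0, 15.0, b_hat, WINDOW_S):.1f}")
```

Because the model is linear in b, the calibration has a closed-form least-squares solution; a CFD-derived value of b can serve as the starting point, with the sensor windows supplying the site-specific correction.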

Step 4: Implementation of Machine Learning Surrogate

- As an alternative or complement to the explicit empirical formula, train a Machine Learning model (e.g., Random Forest, Multi-layer Perceptron) as a CFD surrogate [31].

- Use the features from Step 1 as inputs and the target extreme values from the CFD/sensor data as outputs.

- This surrogate model can then rapidly predict extremes for new conditions without running a full CFD simulation.

Phase 4: Model Validation and Deployment

Step 1: Validation

- Validate the final hybrid model against a reserved subset of sensor data not used in training.

- Quantify performance using metrics like Root Mean Square Error (RMSE), Mean Absolute Error (MAE), and the coefficient of determination (R²) [31].

Step 2: Deployment for Operational Forecasting

- Deploy the validated model in an operational environment. Incoming real-time sensor data is fed into the model to provide continuous, nowcasted maps of environmental extremes.

- The model can be integrated into a digital twin for interactive "what-if" analysis and decision support [26].

Diagram 1: Sensor-CFD hybrid model development and deployment workflow. The process integrates physics-based simulation (yellow), empirical data acquisition (green), and model synthesis/operation (red/blue).

The Scientist's Toolkit: Essential Research Reagents and Solutions

This section catalogs the key hardware, software, and data components essential for building sensor-CFD hybrid systems.

Table 2: Essential Research Toolkit for Sensor-CFD Hybrid Modeling

| Tool Category | Specific Examples | Function & Application Note |

|---|---|---|

| CFD Simulation Software | OpenFOAM (Open-Source), ANSYS Fluent, STAR-CCM+, SimScale (Cloud) [32] | Solves the governing Navier-Stokes equations to simulate fluid flow and scalar transport. Provides high-fidelity data for model training and virtual sensor outputs. |

| Machine Learning Libraries | Scikit-learn (RF, SVM), TensorFlow/PyTorch (MLP, CNN, PINN) [31] | Used to build surrogate models that emulate CFD results (e.g., MLP, RF) or to incorporate physical laws into learners (Physics-Informed Neural Networks). |