Beyond the Single Study: Confronting the Reproducibility Crisis in Ecological and Biomedical Research

This article addresses the critical challenge of reproducibility in ecological and biomedical experimental results, a cornerstone for scientific credibility and effective drug development.


Abstract

This article addresses the critical challenge of reproducibility in ecological and biomedical experimental results, a cornerstone for scientific credibility and effective drug development. We first explore the foundational concepts and scope of the 'reproducibility crisis,' establishing clear definitions for repeatability, replicability, and reproducibility. The discussion then moves to methodological frameworks and open science practices that enhance research robustness, including data sharing policies and standardized documentation. We subsequently troubleshoot common pitfalls, from low statistical power to the 'standardization fallacy,' and present optimization strategies like multi-laboratory designs. Finally, we examine validation techniques and comparative evidence from recent multi-laboratory studies in ecology, extracting actionable lessons for preclinical research. The synthesis provides a roadmap for researchers and drug development professionals to strengthen the reliability of their findings.

Defining the Crisis: What Reproducibility Means for Ecology and Biomedical Science

Reproducibility, defined as the ability to duplicate the results of a prior study using the same materials and procedures, serves as a fundamental cornerstone of the scientific method [1]. Similarly, replicability refers to obtaining consistent results when a study is repeated with new data collection [1]. In recent years, growing concerns about a "reproducibility crisis" have emerged across numerous scientific fields, as researchers increasingly report difficulties in reproducing previously published findings [2] [3]. A landmark 2016 survey published in Nature highlighted the scope of this problem, revealing that more than 70% of researchers had tried and failed to reproduce another scientist's experiments, while more than half had been unable to reproduce their own findings [2] [3]. This crisis transcends individual disciplines, affecting fields as diverse as psychology, economics, clinical medicine, and laboratory biology [2].

The implications of poor reproducibility extend beyond theoretical concerns to create tangible scientific and societal consequences. Irreproducible findings generate scientific uncertainty, hinder methodological progress, and incur substantial costs to both research institutions and broader society [2]. In drug development, for instance, Bayer researchers reported that in nearly two-thirds of their projects, inconsistencies between published data and in-house findings considerably prolonged target validation processes or resulted in project termination [1]. This suggests that the reproducibility crisis has direct implications for resource allocation and research efficiency in critical fields like pharmaceutical development.

The Scope of the Problem: Quantitative Evidence Across Disciplines

Documented Reproducibility Rates by Field

Table 1: Reproducibility rates across scientific disciplines based on large-scale replication efforts

| Discipline | Reproducibility Rate | Study Details | Key Findings |
|---|---|---|---|
| Psychology | Variable (36%-77%) | Many Labs Replication Project [1] | Significant variation in reproducibility depending on effect size and methodological rigor |
| Economics | 61% | Systematic replications [3] | Replication success correlated with effect size of original study |
| Preclinical Cancer Research | ~65% failure rate | Bayer Healthcare internal reviews [1] | Inconsistencies between published and in-house data led to project termination |
| Insect Ecology | 66-83% | Multi-laboratory study with 3 species [2] | 83% reproduced overall statistical effect; 66% reproduced effect size |
| Ecology (General) | Wide variation | 246 analysts with same datasets [4] | Analytical choices drove substantially different conclusions |

Cross-Disciplinary Survey Evidence

Table 2: Researcher perceptions and experiences with reproducibility across disciplines and countries

| Survey Category | USA Researchers | Indian Researchers | Overall Findings |
|---|---|---|---|
| Engineering Faculty | 72 respondents | 146 respondents | Greater familiarity with reproducibility concepts in computational fields |
| Social Science Faculty | 189 respondents | 45 respondents | Higher awareness of reproducibility discussions in psychology and economics |
| Familiarity with "Reproducibility Crisis" | Varies by discipline | Varies by discipline | Disciplinary norms influence awareness more than national context |
| Institutional Support for Open Science | Reported as inconsistent | Resource constraints noted | Both regions face incentive misalignment despite different resources |

Recent evidence continues to demonstrate the pervasive nature of reproducibility challenges. A large-scale 2023 exercise in ecology had 246 biologists analyze the same ecological datasets; their conclusions diverged widely, driven primarily by analytical choices rather than by any difference in the underlying data [4]. This suggests that subjective decision-making in data analysis is a significant contributor to reproducibility problems across scientific fields. Similarly, a 2025 survey of 452 professors in the USA and India revealed significant gaps in attention to reproducibility and transparency in science, aggravated by incentive misalignment and resource constraints across both developed and developing research ecosystems [3].

Experimental Evidence from Ecological Research

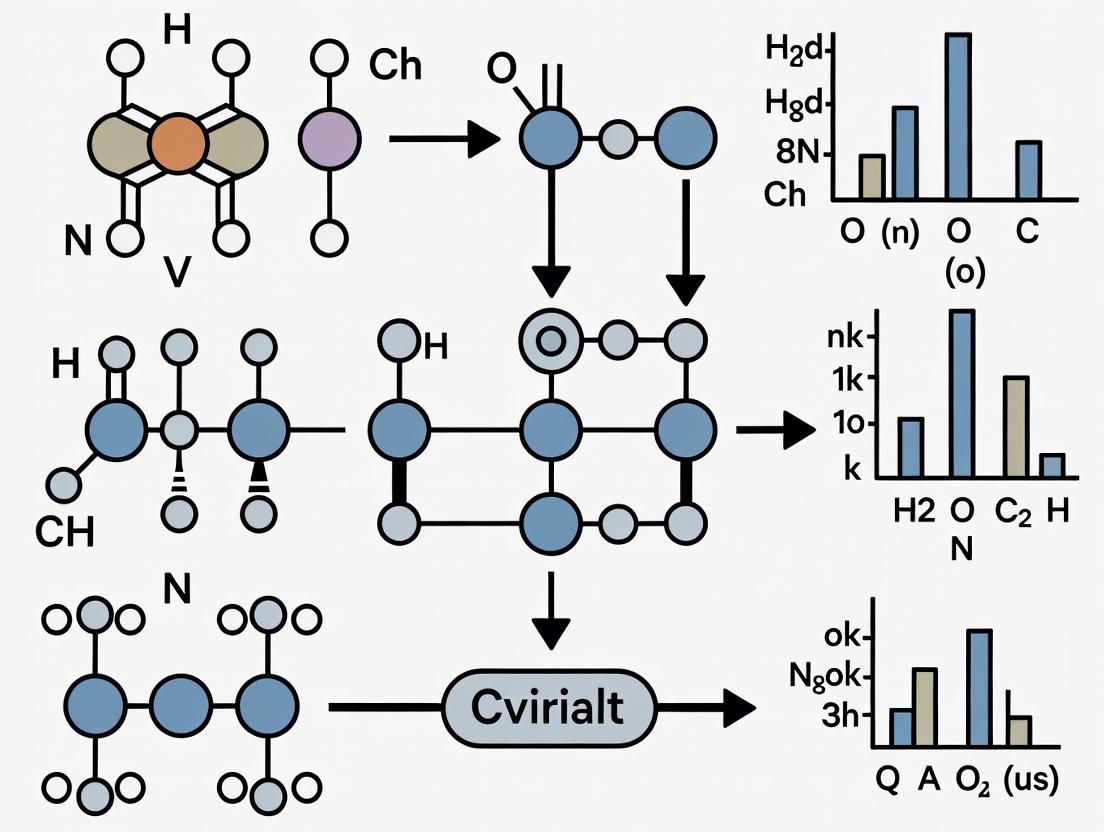

Multi-Laboratory Insect Behavior Studies

A systematic multi-laboratory investigation published in 2025 provides some of the first experimental evidence specifically addressing reproducibility in insect ecological research [2]. This study implemented a 3 × 3 experimental design, incorporating three study sites and three independent experiments on three insect species from different orders: the turnip sawfly (Athalia rosae, Hymenoptera), the meadow grasshopper (Pseudochorthippus parallelus, Orthoptera), and the red flour beetle (Tribolium castaneum, Coleoptera) [2].

Methodological Approach: Each experiment followed rigorously standardized protocols across participating laboratories. Behavioral assays included:

- Starvation effects on larval behavior in A. rosae, measuring post-contact immobility and activity

- Color polymorphism and substrate choice in P. parallelus

- Niche preference in T. castaneum when offered flour types conditioned by beetles with or without functional stink glands

Environmental conditions including temperature, humidity, and light cycles were controlled and kept as consistent as possible across laboratories, though dietary sources varied slightly as each laboratory procured food locally [2].

Key Findings: Using random-effects meta-analysis to compare the consistency and accuracy of treatment effects on insect behavioral traits across replicate experiments, the researchers reproduced the overall statistical treatment effect in 83% of replicate experiments. However, the overall effect size was replicated in only 66% of replicates [2]. This gap between reproducing statistical significance and reproducing effect magnitude highlights the nuanced nature of reproducibility challenges in ecological research.
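To make the pooling step concrete, the following is a minimal sketch of a random-effects meta-analysis using the DerSimonian-Laird estimator. The source does not say which estimator or software the study used, so the estimator choice, the function name `random_effects_meta`, and the three example effect sizes are all illustrative assumptions rather than the study's method or data.

```python
import math


def random_effects_meta(effects, variances):
    """Pool per-lab effect sizes with the DerSimonian-Laird estimator.

    effects   -- one effect size per replicate experiment (hypothetical here)
    variances -- the sampling variance of each effect size
    """
    k = len(effects)
    if k < 2 or k != len(variances):
        raise ValueError("need at least two effects with matching variances")

    # Fixed-effect (inverse-variance) weights and pooled mean.
    w = [1.0 / v for v in variances]
    sum_w = sum(w)
    fixed = sum(wi * yi for wi, yi in zip(w, effects)) / sum_w

    # Cochran's Q: weighted squared deviations from the fixed-effect mean.
    q = sum(wi * (yi - fixed) ** 2 for wi, yi in zip(w, effects))
    c = sum_w - sum(wi ** 2 for wi in w) / sum_w

    # Between-lab variance, truncated at zero.
    tau2 = max(0.0, (q - (k - 1)) / c)

    # Random-effects weights add tau2 to every lab's sampling variance.
    w_re = [1.0 / (v + tau2) for v in variances]
    pooled = sum(wi * yi for wi, yi in zip(w_re, effects)) / sum(w_re)
    se = math.sqrt(1.0 / sum(w_re))

    # I^2: share of total variation attributable to between-lab heterogeneity.
    i2 = max(0.0, (q - (k - 1)) / q) if q > 0 else 0.0

    return {
        "pooled": pooled,
        "se": se,
        "ci95": (pooled - 1.96 * se, pooled + 1.96 * se),
        "tau2": tau2,
        "I2": i2,
    }


# Made-up standardized effects from three laboratories (not study data).
result = random_effects_meta([0.62, 0.35, 0.80], [0.04, 0.05, 0.06])
print(result)
```

A large `tau2` or `I2` would indicate that laboratories disagree by more than sampling error alone explains, which is the kind of inconsistency a multi-laboratory design is meant to expose.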

Controlled Systematic Variability in Ecological Experiments

An alternative approach to addressing reproducibility challenges involves deliberately introducing controlled systematic variability (CSV) into experimental designs. The approach rests on a controversial hypothesis: that stringent environmental and biotic standardization may actually reduce reproducibility by amplifying the impacts of laboratory-specific environmental factors not accounted for in study designs [5].

Methodological Approach: In a study conducted by 14 European laboratories, researchers ran simple microcosm experiments using grass (Brachypodium distachyon) monocultures and grass + legume (Medicago truncatula) mixtures [5]. Each laboratory introduced either:

- Environmental CSV

- Genotypic CSV

- Both environmental and genotypic CSV

Experiments were conducted in both growth chambers (with stringent environmental controls) and glasshouses (with less environmental control) [5].

Key Findings: The introduction of genotypic CSV increased reproducibility in growth chambers but not in glasshouses. Environmental CSV had little effect on reproducibility in either growth chambers or glasshouses [5]. This suggests that deliberate introduction of known, quantified genetic variability may represent a viable strategy for increasing reproducibility of ecological studies conducted in highly controlled environmental conditions.

Factors Contributing to Reproducibility Challenges

Common Causes Across Disciplines

Several interconnected factors have been identified as contributing to reproducibility challenges across scientific fields:

Questionable Research Practices: These include p-hacking (analyzing data until statistically significant results are obtained), HARKing (hypothesizing after results are known), selective analysis, and selective reporting [3].

Misaligned Incentives: Academic reward structures often prioritize novel, positive findings over rigorous, reproducible research, prompting researchers to prioritize publishability over reliability [3].

Insufficient Statistical Power: Many studies employ sample sizes that are too small to detect true effects reliably, increasing the likelihood of both false positives and false negatives.

Analytical Flexibility: The 2023 ecology study demonstrating widely divergent conclusions from the same datasets highlights how researchers' analytical choices can drive results [4].

Biological Variation and the Standardization Fallacy: Highly standardized laboratory conditions may limit inference space by restricting the range of environmental conditions, making results idiosyncratic to specific laboratory contexts [2].
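The point about insufficient statistical power can be illustrated with a short calculation. This is a sketch under stated assumptions: a two-group comparison with equal group sizes, a two-sided test, and a normal approximation in place of the exact t distribution. The function names and the example effect size are hypothetical and do not come from any study cited here.

```python
import math
from statistics import NormalDist


def approx_power(d, n_per_group, alpha=0.05):
    """Approximate power of a two-sided, two-sample test.

    d           -- standardized mean difference (Cohen's d), assumed known
    n_per_group -- sample size in each of the two groups
    Uses a normal approximation, so it is slightly optimistic for small n.
    """
    z = NormalDist()
    z_crit = z.inv_cdf(1 - alpha / 2)
    ncp = d * math.sqrt(n_per_group / 2)  # expected test statistic
    # Probability of rejecting in either tail.
    return z.cdf(ncp - z_crit) + z.cdf(-ncp - z_crit)


def n_for_power(d, target=0.8, alpha=0.05):
    """Smallest per-group sample size whose approximate power reaches target."""
    if d == 0:
        raise ValueError("power never exceeds alpha when the true effect is zero")
    n = 2
    while approx_power(d, n, alpha) < target:
        n += 1
    return n


# Compare a small study with the size needed for 80% power at d = 0.5.
print(approx_power(0.5, 10))
print(n_for_power(0.5))
```

Running the two calls side by side shows how far a typical small-sample design can fall short of the conventional 80% target for a moderate effect.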

The Standardization Fallacy in Ecological Research

The "standardization fallacy" describes the paradoxical situation where efforts to increase reproducibility through rigorous standardization may actually compromise external validity [2] [5]. This occurs because highly standardized conditions represent only a very narrow range of possible environmental conditions, limiting the broader applicability of findings. As noted in the multi-laboratory insect study, "results can differ when experiments are replicated because the response of an animal to an experimental treatment depends not only on the properties of the treatment but is a product of the animal's genotype, parental effects, and its past and present environmental conditions" [2].

Research Reagent Solutions and Methodological Approaches

Table 3: Essential research materials and methodological solutions for improving reproducibility in ecological studies

| Category | Specific Solution | Function/Application | Field Examples |
|---|---|---|---|
| Study Organisms | Turnip sawfly (Athalia rosae) | Intermediate model between lab-adapted and wild-caught | Starvation effects on larval behavior [2] |
| Study Organisms | Meadow grasshopper (Pseudochorthippus parallelus) | Wild-caught representative | Color polymorphism and substrate choice [2] |
| Study Organisms | Red flour beetle (Tribolium castaneum) | Laboratory-adapted model system | Niche preference experiments [2] |
| Methodological Approaches | Multi-laboratory designs | Identifies laboratory-specific environmental factors | 3×3 experimental design across sites/species [2] |
| Methodological Approaches | Controlled Systematic Variability (CSV) | Introduces deliberate, quantified variation | Grass and legume microcosm experiments [5] |
| Methodological Approaches | Random-effects meta-analysis | Quantifies consistency across replicates | Insect behavior multi-lab study [2] |
| Open Science Practices | Data and code sharing | Enables computational reproducibility | Positive correlation with citation rates [3] |
| Open Science Practices | Study pre-registration | Reduces analytical flexibility and HARKing | Adopted in psychology, ecology [3] |
| Open Science Practices | Detailed methodology reporting | Facilitates exact replication | ARRIVE guidelines, EDA [2] |

The reproducibility crisis affects diverse scientific disciplines, though its specific manifestations vary across fields. Experimental evidence from ecological research demonstrates that even with rigorous standardization, reproducibility rates for effect sizes remain concerningly low (66% in multi-laboratory insect studies) [2]. The standardization fallacy highlights the paradoxical tension between internal validity and external generalizability [2] [5].

Moving forward, addressing reproducibility challenges will require multifaceted approaches:

- Adoption of open research practices including data sharing, code availability, and detailed methodology reporting [2] [3]

- Implementation of multi-laboratory designs that systematically account for laboratory-specific environmental factors [2]

- Strategic introduction of controlled systematic variability in appropriate research contexts, particularly genotypic CSV in highly controlled environments [5]

- Cultural shifts in scientific incentives to reward reproducible, rigorous research rather than solely novel or positive findings [3]

- Development of discipline-specific best practices that acknowledge the unique methodological challenges in different fields [3]

As research into reproducibility continues to evolve, the scientific community must balance standardization with appropriate heterogeneity, rigor with practical feasibility, and disciplinary specificity with cross-field learning. Only through such balanced approaches can researchers address the fundamental challenges of reproducibility while advancing reliable knowledge across scientific disciplines.

In the realm of scientific research, particularly in ecology and drug development, the terms repeatability, replicability, and reproducibility represent distinct but interconnected concepts that are fundamental to research validity. While often used interchangeably in casual scientific discourse, these terms describe different levels of verification in the scientific process. Understanding these distinctions is critical for assessing the reliability of ecological experimental results and translating these findings into applications such as drug development.

The significance of these concepts has been magnified by what many refer to as a "reproducibility crisis" across multiple scientific fields. A 2016 survey published in Nature revealed that more than 70% of researchers have attempted and failed to reproduce another scientist's experiments, and more than half have been unable to reproduce their own experiments [3]. This crisis affects diverse disciplines, including psychology, medicine, economics, and ecology [2] [3]. For researchers and drug development professionals, clarifying these terms is not merely academic—it establishes the foundation for rigorous, reliable science that can confidently inform future research and clinical applications.

Defining the Terminology

Core Definitions and Distinctions

Despite their importance, consistent definitions for repeatability, replicability, and reproducibility have been elusive. Different scientific disciplines and institutions have historically used these words in inconsistent or even contradictory ways [6]. To clarify this landscape, the following table outlines the most common definitions, with a focus on their application in ecological and biological research.

Table 1: Core Definitions of Key Verification Terms

| Term | Definition | Key Question | Typical Context |
|---|---|---|---|
| Repeatability | The ability to obtain consistent results when the same experiment is performed multiple times by the same researcher or team, using the same setup, methods, and data [7]. | "Can we get the same result again in our lab, right now?" | Intra-laboratory verification; initial validation of one's own results. |
| Reproducibility | The ability of an independent researcher to obtain the same results using the original data and methods [6] [8]. Often involves reanalyzing the provided data. | "Can an independent team arrive at the same conclusion from the original data?" | Computational verification; reanalysis of shared datasets. |
| Replicability | The ability to confirm a study's findings by conducting a new, independent experiment, collecting new data, but following the same experimental methods [8] [9]. | "Does the phenomenon hold up in a new experiment with new data?" | External validation; confirmation of a scientific finding. |

Resolving Inter-Disciplinary Confusion

The confusion surrounding these terms is well-documented. The National Academies of Sciences, Engineering, and Medicine noted that "Different scientific disciplines and institutions use the words reproducibility and replicability in inconsistent or even contradictory ways" [6]. A review by Barba (2018) outlined three categories of usage [6]:

- Category A: The terms are used with no distinction between them.

- Category B1: "Reproducibility" refers to using the original researcher's data and codes to regenerate results, while "replicability" refers to a researcher collecting new data to arrive at the same findings.

- Category B2: "Reproducibility" refers to independent researchers arriving at the same results using their own data and methods, while "replicability" refers to a different team arriving at the same results using the original author's artifacts.

Notably, the computational science community often employs definitions opposite to those used in many life sciences. For clarity, this guide adopts the B1 definitions, which align with the framework used by the American Statistical Association and are most prevalent in ecological and biological research [9].

The Relationship Between Concepts

The relationship between repeatability, reproducibility, and replicability can be visualized as a hierarchy of scientific verification, with each step providing a stronger, more generalizable validation of research findings.

Diagram 1: Hierarchy of Scientific Verification

This diagram illustrates how these concepts build upon one another. Repeatability forms the foundation: if a researcher cannot consistently obtain the same results in their own laboratory under identical conditions, the findings are unreliable. Reproducibility represents the next level, ensuring that the original analysis was conducted fairly and correctly and that the methods are transparent enough for an independent team to follow. Replicability is the highest standard, demonstrating that the finding is not an artifact of a specific experimental context but a robust phenomenon that holds true when tested anew [8].

Experimental Evidence from Ecological Studies

A Multi-Laboratory Investigation in Insect Ecology

A 2025 systematic multi-laboratory investigation directly tested the reproducibility of ecological studies on insect behavior, implementing a 3×3 experimental design (three study sites and three independent experiments on three insect species) [2]. The study species included the turnip sawfly (Athalia rosae, Hymenoptera), the meadow grasshopper (Pseudochorthippus parallelus, Orthoptera), and the red flour beetle (Tribolium castaneum, Coleoptera).

Table 2: Summary of Multi-Laboratory Insect Ecology Experiments [2]

| Experiment | Species | Treatment | Measured Traits | Original Hypothesis |
|---|---|---|---|---|
| 1. Starvation Stress | Turnip Sawfly (Athalia rosae) | Starvation vs. non-starvation | Post-contact immobility (PCI) and activity | Starved larvae would exhibit shorter PCI and increased activity. |
| 2. Color Polymorphism | Meadow Grasshopper (P. parallelus) | Color morph (green vs. brown) | Substrate choice for camouflage | Each morph would select a substrate matching its body color. |
| 3. Niche Preference | Red Flour Beetle (T. castaneum) | Flour conditioned with/without stink glands | Choice between different flour types | Larvae and adults would differ in niche preference. |

The findings provided nuanced evidence regarding reproducibility. Researchers successfully reproduced the overall statistical treatment effect in 83% of the replicate experiments. However, a more rigorous measure—replication of the overall effect size—was achieved in only 66% of the replicates [2]. This discrepancy highlights that achieving statistical significance is different from reproducing the same magnitude of effect, the latter being crucial for meta-analyses and understanding biological importance.
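The difference between the two success criteria can be made explicit in code. The sketch below uses one plausible way to define each criterion. The text above does not give the study's exact definitions, so both rules are assumptions: direction is "reproduced" when the replicate's 95% confidence interval excludes zero on the same side as the original effect, and magnitude is "reproduced" when the original estimate falls inside the replicate's interval. The numbers are invented.

```python
def ci95(estimate, se):
    """Normal-approximation 95% confidence interval."""
    return estimate - 1.96 * se, estimate + 1.96 * se


def reproduces_direction(original, replicate, replicate_se):
    """True if the replicate is significant and has the original's sign."""
    lo, hi = ci95(replicate, replicate_se)
    significant = lo > 0 or hi < 0
    same_sign = (original > 0) == (replicate > 0)
    return significant and same_sign


def reproduces_magnitude(original, replicate, replicate_se):
    """True if the original estimate lies inside the replicate's 95% CI."""
    lo, hi = ci95(replicate, replicate_se)
    return lo <= original <= hi


# Hypothetical case: the replicate finds a real but much weaker effect.
original_effect = 0.9
replicate_effect, replicate_se = 0.4, 0.15

print(reproduces_direction(original_effect, replicate_effect, replicate_se))
print(reproduces_magnitude(original_effect, replicate_effect, replicate_se))
```

In this made-up case the replicate passes the first check and fails the second, which is the pattern behind a gap such as 83% versus 66%.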

The Challenge of Analytical Flexibility in Ecology

Beyond experimental design, reproducibility can be undermined during data analysis. A consortium of ecologists, including Amanda Chunco, investigated this by giving 174 independent scientific teams the same ecological dataset and hypothesis to analyze [10]. The results were striking: despite identical data, the analyses varied widely not only in statistical strength but also in the final conclusions about whether the data supported the core hypotheses. This study demonstrated that subjective decisions made during data analysis are a significant, underappreciated source of non-reproducibility in ecology.
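A small simulation shows how defensible analysis choices can pull different answers out of identical data. Everything here is illustrative: the dataset is synthetic, and the four analysis variants are generic examples rather than the choices made by the teams in the study described above.

```python
import math
import random
import statistics

# Synthetic, skewed data for two groups (fixed seed so runs are repeatable).
rng = random.Random(42)
control = [rng.lognormvariate(1.0, 0.6) for _ in range(30)]
treated = [rng.lognormvariate(1.2, 0.6) for _ in range(30)]


def mean_diff(a, b):
    return statistics.mean(b) - statistics.mean(a)


def median_diff(a, b):
    return statistics.median(b) - statistics.median(a)


def trimmed(values, k=2.0):
    """Drop points more than k standard deviations from the mean."""
    m, s = statistics.mean(values), statistics.stdev(values)
    return [v for v in values if abs(v - m) <= k * s]


def trimmed_mean_diff(a, b):
    return mean_diff(trimmed(a), trimmed(b))


def log_mean_diff(a, b):
    # Note: this estimate is on the log scale, so its units differ.
    return mean_diff([math.log(v) for v in a], [math.log(v) for v in b])


analyses = {
    "raw means": mean_diff,
    "medians": median_diff,
    "outliers trimmed": trimmed_mean_diff,
    "log-transformed": log_mean_diff,
}

# Same data, four analysis paths, four effect estimates.
estimates = {name: fn(control, treated) for name, fn in analyses.items()}
for name, value in estimates.items():
    print(f"{name:18s} {value: .3f}")
```

Pre-registering which of these paths will be used, before seeing the data, is one way to limit this source of divergence.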

Protocols for Enhancing Reproducibility and Replicability

Standardized Data Collection with ReproSchema

Inconsistent data collection is a major barrier to reproducibility. The ReproSchema ecosystem addresses this by providing a schema-centric framework to standardize survey-based data collection, which is relevant for behavioral ecology and clinical drug development [11].

Key Components of the ReproSchema Workflow:

- Structured Schema Definition: Defines each data element (e.g., a survey question) with its metadata, linking the response directly to its collection method and conditions.

- Reusable Assessment Library: A library of over 90 standardized, versioned assessments (e.g., behavioral scales) formatted in JSON-LD.

- Validation and Conversion Tools: A Python package (reproschema-py) validates schemas and converts them for use on common platforms like REDCap.

- Version-Controlled Protocols: Protocols are stored on Git-compatible services (e.g., GitHub) with unique URIs, ensuring persistent access and tracking of changes over time [11].

This structured approach ensures that the same construct is measured consistently across different research teams and time points, which is critical for longitudinal ecological studies and multi-site clinical trials.
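The general idea of a versioned, schema-defined data element can be sketched in a few lines. This is not the ReproSchema JSON-LD format and does not use the reproschema-py API. The class `ItemDefinition`, its fields, and the example item are hypothetical, chosen only to show how tying each response to an item identifier and version keeps measurements comparable across teams.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ItemDefinition:
    """A hypothetical, simplified data element with explicit metadata."""

    item_id: str
    version: str
    prompt: str
    minimum: int
    maximum: int

    def validate(self, value):
        """Check a response and return a record linked to this definition."""
        if isinstance(value, bool) or not isinstance(value, int):
            raise TypeError(f"{self.item_id}: expected an integer response")
        if not self.minimum <= value <= self.maximum:
            raise ValueError(
                f"{self.item_id}: {value} is outside "
                f"[{self.minimum}, {self.maximum}]"
            )
        # Every stored value carries the item id and version it was
        # collected under, so later changes to the item stay traceable.
        return {
            "item_id": self.item_id,
            "item_version": self.version,
            "value": value,
        }


# Illustrative item; the wording and scale are made up.
activity_item = ItemDefinition(
    item_id="activity_score",
    version="1.0.0",
    prompt="Rate observed activity from 0 (none) to 10 (constant).",
    minimum=0,
    maximum=10,
)

print(activity_item.validate(7))
```

Because the definition is frozen and versioned, two laboratories that record `activity_score` at version `1.0.0` can be confident they asked the same question on the same scale.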

Controlled Systematic Variability (CSV) in Ecological Experiments

A counter-intuitive yet powerful method for improving replicability is the deliberate introduction of Controlled Systematic Variability (CSV). A landmark study tested the hypothesis that highly stringent standardization in experiments (using identical seed sources, soils, etc.) might actually reduce reproducibility by amplifying the impact of lab-specific environmental factors not accounted for in the design [5].

Experimental Protocol:

- Setup: 14 European laboratories ran a simple microcosm experiment using grass (Brachypodium distachyon) monocultures and grass-legume mixtures.

- Intervention: Each laboratory introduced either (a) environmental CSV, (b) genotypic CSV, or (c) both, into their experiments, which were conducted in either growth chambers (stringently controlled) or glasshouses (less controlled).

- Finding: The introduction of genotypic CSV (e.g., using multiple genetic strains) significantly increased reproducibility in the stringently controlled growth chambers. This suggests that CSV can make findings more robust by accounting for natural biological variation, thereby expanding the inference space of a study [5].

This methodology offers a practical protocol for ecologists: when designing a multi-site experiment, deliberately varying a key factor (e.g., specific genetic strains, minor temperature regimes, or light sources) across sites can make the final synthesized result more reliable and generalizable than forcing absolute standardization.
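One way to put genotypic CSV into practice is to generate a balanced, randomized assignment of genotypes to experimental units in each laboratory. The sketch below assumes that each laboratory runs the same number of units and uses the same set of genotypes. The function name, lab labels, and genotype labels are hypothetical and are not taken from the study.

```python
import random
from collections import Counter


def assign_genotypes(labs, genotypes, units_per_lab, seed=0):
    """Return a per-lab list of genotypes, one entry per experimental unit.

    Within each lab the genotypes are as balanced as possible (counts differ
    by at most one) and their order is shuffled with a seeded generator, so
    the plan itself can be regenerated exactly.
    """
    if not genotypes:
        raise ValueError("at least one genotype is required")
    rng = random.Random(seed)
    plan = {}
    for lab in labs:
        # Repeat the genotype list enough times to cover all units
        # (ceiling division), then cut to the exact number of units.
        repeats = -(-units_per_lab // len(genotypes))
        pool = (list(genotypes) * repeats)[:units_per_lab]
        rng.shuffle(pool)
        plan[lab] = pool
    return plan


# Illustrative labels only.
plan = assign_genotypes(
    labs=["lab_A", "lab_B", "lab_C"],
    genotypes=["genotype_1", "genotype_2", "genotype_3"],
    units_per_lab=6,
    seed=2024,
)
for lab, units in plan.items():
    print(lab, dict(Counter(units)))
```

Recording the seed alongside the protocol lets other teams regenerate the same allocation, so the introduced variation stays known and quantified rather than incidental.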

The Scientist's Toolkit: Essential Reagents and Solutions

Table 3: Key Research Reagent Solutions for Reproducible Ecological Experiments

| Reagent / Solution | Function in Experimental Design | Role in Enhancing Reproducibility |
|---|---|---|
| Standardized Organisms | Genetically defined or carefully sourced study species (e.g., Tribolium castaneum lab strains) [2]. | Reduces unexplained variation due to genetic heterogeneity; defined strains also allow genotypic variation to be introduced deliberately and quantified, as in CSV [5]. |
| ReproSchema Protocols | A structured, schema-driven framework for defining surveys and behavioral assessments [11]. | Ensures data is collected consistently across different researchers, labs, and time, improving interoperability. |
| Open Science Framework (OSF) | A free, open-source web platform for managing and sharing the entire research workflow. | Facilitates pre-registration, data sharing, and material sharing, which are pillars of reproducible science [3]. |
| Common Data Elements (CDEs) | Standardized, precisely defined questions and response options used in data collection (e.g., from the NIMH) [11]. | Promotes data harmonization and comparability across different studies, enabling powerful meta-analyses. |

The distinctions between repeatability, reproducibility, and replicability are more than semantic pedantry; they form a conceptual framework for building robust scientific knowledge. For researchers in ecology and drug development, actively designing studies to pass these successive hurdles—from consistent internal results to successful independent verification—is paramount. The experimental evidence and protocols outlined here, from multi-laboratory designs and controlled systematic variability to standardized data schemas, provide a practical roadmap for addressing the reproducibility crisis. By integrating these principles and tools, the scientific community can strengthen the validity of ecological findings and ensure they provide a reliable foundation for application in critical fields like drug development.

Reproducibility, defined as the ability of a result to be replicated by an independent experiment, is a cornerstone of the scientific method [12] [2]. However, numerous disciplines are confronting what has been termed a "reproducibility crisis," where findings fail to replicate in subsequent studies [13] [14]. This crisis transcends individual fields, affecting domains as diverse as psychology, economics, medicine, and ecology [12] [2]. Surveys reveal that more than 70% of researchers have tried and failed to reproduce another scientist's experiments, and more than half have failed to reproduce their own findings [2]. This widespread challenge undermines scientific progress, incurs substantial costs, and creates uncertainty that impedes evidence-based decision-making in critical areas like public health and environmental management.

The discussion of poor reproducibility was significantly advanced by a landmark multi-laboratory study on mouse phenotyping by Crabbe et al. in 1999 [13] [12] [2]. Despite rigorous standardization across three laboratories, this research detected strikingly different results across sites, with some behavioral tests yielding contradictory findings [13] [12]. The authors concluded that "experiments characterizing mutants may yield results that are idiosyncratic to a particular laboratory" [12] [2]. This seminal work sparked increased attention to reproducibility issues, particularly in preclinical rodent research. However, as recent evidence demonstrates, this challenge is not confined to mammals but extends to all living organisms, including insects used in ecological and behavioral research [12] [2] [14].

Quantifying the Problem: Reproducibility Rates in Scientific Research

Systematic Evidence from Multi-Laboratory Studies

Recent systematic investigations have provided quantitative assessments of reproducibility rates across different biological research domains. The following table summarizes key findings from multi-laboratory studies examining reproducibility:

Table 1: Reproducibility Rates in Biological Research

| Research Domain | Reproducibility Measure | Success Rate | Experimental Context | Citation |
|---|---|---|---|---|
| Insect Behavior | Statistical effect reproduction | 83% (17% irreproducible) | Three experiments across three laboratories with three insect species | [12] [2] |
| Insect Behavior | Effect size reproduction | 66% (34% irreproducible) | Same as above | [12] [2] |
| Ecological Publications | Reproducibility potential (no code-sharing policy) | 2.5% (shared both code and data) | Analysis of 314 articles from journals without code-sharing policies | [15] |
| Ecological Publications | Reproducibility potential (with code-sharing policy) | 8.1 times higher than without policy | Comparison between journal types | [15] |

A 2025 multi-laboratory study on insect behavior provides some of the first systematic evidence of reproducibility challenges in this field [12] [2] [14]. The research team implemented a 3×3 experimental design, incorporating three study sites and three independent experiments on three insect species from different orders: the turnip sawfly (Athalia rosae, Hymenoptera), the meadow grasshopper (Pseudochorthippus parallelus, Orthoptera), and the red flour beetle (Tribolium castaneum, Coleoptera) [12] [2]. Using random-effect meta-analysis to compare consistency and accuracy of treatment effects across replicate experiments, they found that while overall statistical treatment effects were reproduced in 83% of replicate experiments, overall effect size replication was achieved in only 66% of replicates [12] [2]. This discrepancy highlights the complexity of defining and measuring reproducibility, as different metrics can yield substantially different assessments.

The Impact of Reporting Practices on Reproducibility

Beyond laboratory practices, reporting and data sharing policies significantly influence reproducibility potential. A 2025 study examined code and data sharing practices in ecological journals, comparing those with and without code-sharing policies [15]. The researchers reviewed a random sample of 314 articles published between 2015 and 2019 in 12 ecological journals without code-sharing policies, finding that only 15 articles (4.8%) provided analytical code, though this percentage nearly tripled from 2015-2016 (2.5%) to 2018-2019 (7.0%) [15]. Data sharing was higher than code sharing (increasing from 31.0% to 43.3% across the same period), yet only eight articles (2.5%) shared both code and data [15].

When compared to a sample of 346 articles from 14 ecological journals with code-sharing policies, journals without such policies showed 5.6 times lower code sharing, 2.1 times lower data sharing, and 8.1 times lower reproducibility potential [15]. Despite these differences, key reproducibility-boosting features were similarly lacking across both journal types: while approximately 90% of all articles reported the analytical software used, the software version was often missing (49.8% and 36.1% of articles in journals with and without code-sharing policies, respectively), and exclusively proprietary software was used in 16.7% and 23.5% of articles, respectively [15].
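Missing software versions are among the easier gaps to close. As a minimal sketch, the snippet below collects the interpreter version, platform, and installed versions of named packages using only the Python standard library. The function name `environment_report` and the example package names are illustrative assumptions, not something the cited study prescribes.

```python
import json
import platform
from importlib import metadata


def environment_report(packages=()):
    """Collect version information worth reporting alongside an analysis.

    packages -- names of installed distributions to look up; names that are
                not installed are reported as None instead of raising.
    """
    versions = {}
    for name in packages:
        try:
            versions[name] = metadata.version(name)
        except metadata.PackageNotFoundError:
            versions[name] = None
    return {
        "python": platform.python_version(),
        "implementation": platform.python_implementation(),
        "platform": platform.platform(),
        "packages": versions,
    }


# Example package names; replace with whatever the analysis imports.
report = environment_report(["numpy", "pandas"])
print(json.dumps(report, indent=2))
```

Saving this report next to the shared code and data lets a reader see the exact software versions behind each published result.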

Impacts on Drug Discovery and Development

The Preclinical Research Pipeline

Preclinical research serves as the foundation of biomedical innovation, yet it faces a significant reproducibility crisis that compromises the entire translational pipeline [13]. When preclinical findings cannot be reliably reproduced, drug development processes are delayed or derailed, wasting substantial resources and potentially diverting research efforts toward dead ends [13]. The reproducibility crisis in preclinical science stems from a range of preventable issues, including over-standardization, flawed or underpowered study designs, and environmental inconsistencies that are often overlooked [13]. Human involvement in experiments introduces additional variability, particularly when studies are conducted during daytime hours, disrupting the natural rhythms of nocturnal animals like mice commonly used in preclinical research [13].

Table 2: Impact of Irreproducible Research on Drug Discovery

| Impact Area | Consequences | Proposed Solutions |
|---|---|---|
| Preclinical Validation | Delayed or derailed development of effective therapies; wasted resources | Digital home cage monitoring; improved experimental design |
| Translational Pipeline | Compromised translation from animal models to human treatments | Continuous data collection; reduced human interference |
| Model Characterization | Inadequate understanding of animal behavior and physiology | Long-duration monitoring; standardized protocols |
| Resource Allocation | Misguided investment in non-viable drug candidates | Enhanced reproducibility measures; systematic variation |

Case Study: Digital Home Cage Monitoring

Innovative approaches are emerging to address these challenges. Researchers are turning to digital home cage monitoring, an approach that enables continuous, non-invasive observation of animals in their home cage environment [13]. This method minimizes human interference, captures rich behavioral and physiological data, and enhances statistical power through automated, unbiased measurement [13]. One effort driving progress in this space is the Digital In Vivo Alliance (DIVA), a collaborative initiative led by The Jackson Laboratory that brings together pharmacologists, veterinarians, machine learning experts, and data scientists working to clinically validate digital measures [13].

The JAX Envision platform serves as the enabling technology for this initiative, providing digital in vivo monitoring designed to assess mouse behavior and physiology in the home cage environment [13]. The system uses computer vision and machine learning to provide real-time, non-invasive tracking, offering scalable monitoring of individual animals under group-housed conditions while supporting protocol harmonization, operator-independent assessments, and long-term data collection [13].

A recent initiative by DIVA's Animal Health, Husbandry, and Welfare focus group provides a compelling example of how digital monitoring can improve reproducibility [13]. This study, inspired by the seminal findings of Crabbe et al. (1999), assessed sources of variability in rodent activity across three research sites [13]. Researchers hypothesized that combining continuous data collection with unbiased digital measures would enhance inter-site replication and allow for more accurate understanding of variability [13].

The study involved both male and female mice from three genetic backgrounds (C57BL/6J, A/J, and J:ARC) housed and handled under standardized conditions across all sites [13]. The 9-week replicability study produced 24,758 hours (2.82 years) of mouse video documenting 73,504 hours (8.39 years) of individual mouse behavior [13]. When data were aggregated over 24-hour periods, genotype emerged as the dominant factor, explaining over 80% of the variance [13]. This finding is critical because researchers often compare wildtype to mutant strains where genotype is the primary difference between groups [13].
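The source does not describe how the variance was partitioned. As one simple illustration of what "explaining over 80% of the variance" means, the sketch below computes eta squared for a one-way layout: the between-group sum of squares divided by the total sum of squares. The function name, the group labels, and all data values are invented for illustration and are not taken from the study.

```python
from statistics import fmean


def eta_squared(groups):
    """Share of total variance explained by group membership (one-way layout).

    groups: dict mapping a group label to a list of numeric observations.
    """
    all_values = [x for values in groups.values() for x in values]
    grand_mean = fmean(all_values)
    ss_total = sum((x - grand_mean) ** 2 for x in all_values)
    ss_between = sum(
        len(values) * (fmean(values) - grand_mean) ** 2
        for values in groups.values()
    )
    return ss_between / ss_total if ss_total > 0 else 0.0


if __name__ == "__main__":
    # Synthetic daily activity values, invented purely for illustration.
    activity = {
        "strain_a": [10.0, 11.0, 9.0, 10.5],
        "strain_b": [20.0, 19.0, 21.0, 20.5],
        "strain_c": [15.0, 14.0, 16.0, 15.5],
    }
    print(f"eta squared = {eta_squared(activity):.2f}")
```

A multi-site analysis would normally use a model that also accounts for site, sex, and time of day; this one-way version only shows the basic ratio.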

Further analysis revealed that genetic effects were most detectable during early dark periods when animals are naturally active but researchers are typically absent, while technical noise was more pronounced during standard work hours when researchers typically collect data [13]. This study demonstrated that long-duration studies require significantly fewer animals to reach the same level of confidence, directly supporting the reduction goal of the 3Rs (replacement, reduction, refinement) [13].

Impacts on Environmental Policy and Ecological Research

The Standardization Fallacy in Ecological Studies

Similar reproducibility challenges affect ecological research, with direct implications for environmental policy. The "standardization fallacy" describes how efforts to increase reproducibility through rigorous standardization may actually compromise external validity by restricting the range of environmental conditions to a specific "local set" [12] [2]. The underlying "reaction norm perspective" recognizes that an animal's response to an experimental treatment depends not only on the properties of the treatment but also on the animal's genotype, parental effects, and its past and present environmental conditions [12] [2]. When laboratory experiments are conducted under highly standardized conditions, they represent only a very narrow range of environmental conditions, thereby limiting the inference space of the entire study [12] [2].

The 2025 insect behavior study tested several specific ecological hypotheses across multiple laboratories [12] [2]. In the first experiment, researchers examined the effects of starvation on larval behavior in the turnip sawfly (Athalia rosae), specifically measuring post-contact immobility (PCI) and activity following a simulated attack [12] [2]. Based on previous findings, they hypothesized that starved larvae would exhibit shorter PCI durations and increased activity levels compared to non-starved larvae [12] [2]. This experiment allowed comparison between behavioral tests requiring manual handling (PCI quantification) versus those requiring little human intervention (activity evaluation), testing the prediction that manual handling would introduce more between-laboratory variation [12] [2].

The second experiment investigated the relevance of color polymorphism for substrate choice in the meadow grasshopper (Pseudochorthippus parallelus), using two color morphs (green and brown) to test for morph-dependent microhabitat choice and crypsis [12] [2]. Researchers predicted that each morph would preferentially select a substrate matching its body color [12] [2]. The third experiment focused on the red flour beetle (Tribolium castaneum), assessing niche preference by offering beetles a choice between flour types conditioned by beetles with or without functional stink glands [12] [2]. Researchers predicted that larvae and adult beetles would differ in their niche choice, with larvae showing preference for conditioned flour containing antimicrobial secretions, while adults would avoid this conditioned flour [12] [2].

Environmental Policy Implications

Irreproducible ecological research directly impacts environmental policy and conservation efforts. When policy decisions are based on findings that cannot be replicated, the consequences can include:

- Ineffective Conservation Strategies: Conservation measures based on non-reproducible findings may fail to protect vulnerable species or ecosystems, wasting limited conservation resources [15].

- Misguided Resource Management: Environmental management decisions regarding water quality, forest management, or invasive species control may be ineffective if based on limited or context-specific findings.

- Delayed Policy Implementation: Uncertainty about scientific evidence can lead to policy paralysis or delayed action on pressing environmental challenges.

- Erosion of Public Trust: When scientific recommendations frequently change or contradict previous findings due to reproducibility issues, public confidence in scientific institutions diminishes.

Comparative Analysis: Drug Discovery vs. Environmental Policy

Stakeholder Perspectives and Consequences

The impacts of irreproducible research manifest differently across drug discovery and environmental policy domains, though common themes emerge. The following table compares these impacts across key dimensions:

Table 3: Comparative Impacts of Irreproducible Research Across Domains

| Dimension | Drug Discovery Impacts | Environmental Policy Impacts |
|---|---|---|
| Financial Costs | Wasted R&D investments (millions per failed drug); delayed time to market | Ineffective conservation spending; economic impacts on resource-dependent industries |
| Human Health | Delayed access to effective treatments; potential patient harm from misdirected therapies | Public health impacts from environmental degradation; exposure to pollutants |
| Timeline Effects | Extended development timelines (years); regulatory delays | Delayed environmental protections; continued ecosystem degradation |
| Stakeholders Affected | Patients, pharmaceutical companies, healthcare systems, investors | General public, ecosystems, future generations, regulatory agencies |
| Systemic Consequences | Erosion of trust in medical research; increased regulatory scrutiny | Undermined evidence-based policymaking; polarization of environmental debates |

Common Methodological Challenges

Despite different applications, both domains face similar methodological challenges that contribute to reproducibility problems:

- Biological Complexity: Both mammalian models used in drug discovery and insect species used in ecological research exhibit substantial biological variation that is often inadequately accounted for in experimental design [13] [12].

- Environmental Sensitivities: Subtle environmental differences, such as light-dark cycles for mice or dietary variations for insects, can significantly influence experimental outcomes [13] [12] [2].

- Standardization Paradox: Efforts to tightly control experimental conditions may limit generalizability and produce findings that are idiosyncratic to specific laboratory contexts [13] [12].

- Reporting Deficiencies: Incomplete reporting of methods, analytical code, and environmental conditions hinders replication attempts in both domains [15].

Solutions and Best Practices for Enhancing Reproducibility

Methodological Improvements

Substantial progress can be made by implementing improved methodological approaches:

Multi-laboratory Designs: Introducing systematic variation through multi-laboratory or heterogenized designs can improve reproducibility in studies involving any living organisms [12] [2]. These approaches intentionally incorporate biological and environmental variation into experimental designs, creating more robust and generalizable findings [12] [2].

Digital Monitoring Technologies: As demonstrated by the DIVA case study, digital home cage monitoring represents a fundamental shift in how researchers approach animal research [13]. These technologies enable continuous, unbiased data collection in the animals' home environment, capturing more accurate behavioral and physiological data while minimizing human interference and stress [13].

Open Research Practices: Adopting open research practices, including code and data sharing, significantly enhances reproducibility potential [15]. Journals with code-sharing policies show substantially higher reproducibility potential than those without such policies [15].

Reporting Guidelines and Frameworks

Several established frameworks support improved experimental design and reporting:

- PREPARE (Planning Research and Experimental Procedures on Animals: Recommendations for Excellence): Guidelines promoting more rigor in experimental planning, design, and reporting [13].

- ARRIVE (Animal Research: Reporting of In Vivo Experiments): Guidelines for complete reporting of animal studies to ensure findings can be reliably interpreted and replicated [13].

- FAIR Principles: Ensuring data and code are Findable, Accessible, Interoperable, and Reusable [15].

Digital home cage monitoring technologies like Envision align seamlessly with PREPARE and ARRIVE guidelines, providing real-time, automated monitoring that helps identify and mitigate issues early in studies [13]. These platforms generate structured, high-resolution datasets that document experimental conditions, creating comprehensive digital audit trails that enhance transparency and reproducibility [13].

Research Reagent Solutions

Table 4: Essential Research Reagents and Resources for Reproducible Research

| Resource Type | Specific Examples | Function in Enhancing Reproducibility |
|---|---|---|
| Digital Monitoring Platforms | JAX Envision platform | Enables continuous, non-invasive observation; reduces human interference; captures rich behavioral data [13] |
| Analytical Software | R, Python with version control | Ensures computational reproducibility; enables code sharing and reanalysis [15] |
| Data Repositories | Zenodo, Dryad | Provides persistent storage for datasets and code; facilitates independent verification [15] |
| Standardized Protocols | DIVA collaborative protocols | Harmonizes methods across laboratories; reduces inter-lab variability [13] |
| Reporting Guidelines | ARRIVE, PREPARE | Improves completeness of methodological reporting; enables proper assessment and replication [13] |

The stakes of irreproducible research are undeniably high across both drug discovery and environmental policy. The reproducibility crisis affects scientific disciplines studying diverse organisms—from mice in preclinical research to insects in ecological studies—indicating fundamental challenges in how biological research is designed, conducted, and reported [13] [12] [2]. Quantitative evidence reveals substantial room for improvement, with reproducibility rates varying considerably across studies and effect size replication proving particularly challenging [12] [2].

Promising solutions are emerging, including digital monitoring technologies that transform data collection practices, multi-laboratory designs that incorporate systematic variation, and open science practices that enhance transparency [13] [12] [15]. Journals with code-sharing policies show dramatically higher reproducibility potential than those without such policies, suggesting that institutional practices and policies can significantly impact research reproducibility [15].

As the scientific community continues to address these challenges, integration of cutting-edge digital monitoring with rigorous planning and reporting standards offers a powerful foundation for more reliable science [13]. These innovations not only enhance the credibility of scientific findings but also accelerate the translation of those findings into effective therapies and evidence-based policies that benefit human health and environmental sustainability.

The integrity of scientific research, particularly in fields like ecology with direct implications for drug development and environmental health, is foundational to genuine progress. However, this integrity is being undermined by deeply embedded systemic pressures. The "publish or perish" culture and pervasive funding biases create incentives that can compromise methodological rigor and, ultimately, the robustness of findings. Within ecology and evolution, conditions known to contribute to irreproducibility are widespread, including a large discrepancy between the proportion of "significant" results and average statistical power, incomplete reporting, and a research culture that encourages questionable practices [16]. This article examines how these pressures manifest, their quantifiable impact on research reproducibility, and the methodological strategies that can help restore reliability.

The Publish or Perish Paradigm and Its Consequences

The "publish or perish" culture describes an academic environment where career advancement, funding, and prestige are disproportionately tied to the quantity of publications and the prestige of the journals in which they appear, rather than the quality or reproducibility of the research. This system creates powerful, often perverse, incentives that can undermine scientific robustness.

The Funding and Prestige Cycle: A highly competitive environment for funding and career promotion incites researchers to submit predominantly positive results for publication, knowing they are more likely to be accepted by editors, favorably reviewed by peers, and cited once published [17]. Editors, in turn, face competition over journal impact factors and financial survival, making it more attractive to publish novel, positive findings [17]. This cycle has been shown to lead to an overestimation of true effect sizes, especially in contexts with greater competition for funding [17].

The File Drawer Problem and Publication Bias: Publication bias, or the tendency to publish only studies with statistically significant results while filing away null or negative findings, distorts the scientific record. It leads to a literature that is overwhelmingly "positive." In ecology, the proportion of "positive" results has been estimated at 74%, a figure well above the expected average statistical power of studies in the field, which is at best 40%-47% for medium effects [16]. Even if every tested hypothesis were true, studies with this power would be expected to yield significant results less than half the time, so the discrepancy points to selective publication and an inflated false-positive rate in the published literature.
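The arithmetic behind this comparison can be sketched in a few lines. If a fraction of tested hypotheses is true, the expected share of significant results in an unbiased literature is that fraction times power, plus the remaining fraction times the significance level. The function name and the prior values below are assumptions chosen for illustration; only the 74% and 47% figures come from the text.

```python
def expected_significant_share(prior_true, power, alpha=0.05):
    """Expected share of significant results if every study were published.

    prior_true: proportion of tested hypotheses that are actually true.
    power: probability of detecting a true effect.
    alpha: probability of a significant result when the null is true.
    """
    return prior_true * power + (1.0 - prior_true) * alpha


if __name__ == "__main__":
    observed = 0.74          # share of "positive" results cited in the text
    best_case_power = 0.47   # upper end of the power range cited in the text
    for prior in (0.25, 0.5, 1.0):  # illustrative priors, not estimates
        share = expected_significant_share(prior, best_case_power)
        print(f"prior={prior:.2f}: expected {share:.3f} vs observed {observed:.2f}")
```

Under these inputs the expected share never exceeds the assumed power, which is why an observed share well above it is hard to explain without selective reporting.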

Unconscious Bias and Corner-Cutting: The pressure to publish can lead to unconscious bias and the adoption of questionable research practices. As noted by sociologist Brian Martinson, when scientists are already working to their limits, "the only option left... to get an edge... is to cut corners" [18]. This can manifest as skipping crucial validating experiments, engaging in "p-hacking" (reanalyzing data until significant results are found), or other practices that increase the likelihood of publishing false findings [18].

Table 1: Surveys on Reproducibility Challenges in Science

| Survey Source | Respondents Who Could Not Reproduce a Published Result | Respondents Who Believed There Was a Significant Crisis | Key Findings |
|---|---|---|---|
| Nature Survey (2016) [18] | >70% of scientists | ~50% of those who failed to replicate | Widespread experience with irreproducibility. |
| American Society for Cell Biology (2014) [18] | 71% of respondents | - | Two-thirds suspected original findings were false positives or lacked rigor. |
| MD Anderson Cancer Center [18] | 66% of senior investigators | - | Only one-third of irreproducible findings were ever resolved. |

Funding and Sponsorship Biases

Beyond the general pressure to publish, the specific source of research funding can introduce another layer of bias, potentially distorting research outcomes to align with a sponsor's interests.

The "Funding Effect": Funding or sponsorship bias occurs when researchers distort results or modify conclusions due to pressure from commercial or non-profit funders [19]. This "funding effect" is well-documented, with industry-sponsored studies significantly more likely to publish positive results than those sponsored by independent organisations [19]. In some cases, funders may legally prevent the publication of unfavourable results or sue researchers for breach of contract [19].

Impacts on Medicine and Ecology: The consequences are particularly acute in pharmaceutical research, where biased reporting can directly affect medical practice and patient health [19]. While less studied in ecology, the same fundamental risk exists when research funding is tied to specific outcomes, such as the environmental impact of a commercial product.

Mitigation Strategies: To combat this, the International Committee of Medical Journal Editors (ICMJE) requires detailed disclosure forms outlining sources of support and the funder's role in study design, data analysis, and publication decisions [19]. Some investigators have proposed that industry-funded academic studies should only proceed if academic centres retain sole responsibility for the design, conduct, analysis, and reporting of trials [19].

Quantifying the Problem: Reproducibility in Ecological Research

The "reproducibility crisis" is not merely theoretical. Systematic efforts to replicate published studies across various scientific disciplines have yielded alarming results, and ecology is no exception.

A landmark multi-laboratory study on insect behavior tested the reproducibility of three different experiments across three laboratories [2] [12]. The study successfully reproduced the overall statistical significance of the treatment effect in 83% of the replicate experiments. However, a more stringent measure—the replication of the effect size—was achieved in only 66% of the cases [2] [12]. This indicates that even when a finding is directionally correct, the magnitude of the effect is often exaggerated or diminished in subsequent replications.

This problem is exacerbated by the "standardization fallacy" in ecological and biological research [12]. The traditional approach of rigorously standardizing experimental conditions (e.g., using identical animal genotypes, feed, and environmental settings) to reduce noise can actually reduce the reproducibility and external validity of findings. This is because the results become idiosyncratic to a very specific, non-representative set of laboratory conditions [12] [5]. A study testing this hypothesis found that introducing controlled systematic variability (CSV)—specifically, genotypic variability—increased reproducibility in stringently controlled growth chambers [5].

Table 2: Key Findings from Multi-Laboratory Reproducibility Studies

| Study Focus | Replication Rate (Statistical Significance) | Replication Rate (Effect Size) | Key Insight |
|---|---|---|---|
| Insect Behavior (2025) [2] [12] | 83% | 66% | Highlights the difference between significance and effect size replication. |
| Psychology (2015) [16] | 39% | - | Effect sizes in replications were about half the magnitude of the originals. |
| Biomedical Research [16] | 11% - 49% | - | Estimates vary, but even the most optimistic shows less than half are reproducible. |
| Grass Monoculture Experiment [5] | Increased with CSV | - | Introducing controlled genotypic variability improved reproducibility. |

Experimental Protocols for Assessing Reproducibility

To objectively assess and improve reproducibility, researchers are employing rigorous multi-laboratory designs. The following protocol exemplifies this approach.

Protocol: Multi-Laboratory Test of Insect Behavior

This methodology is derived from a 2025 study designed to systematically test the reproducibility of ecological studies on insect behavior [2] [12].

1. Experimental Design: A 3x3 factorial design is implemented, involving three study sites (independent laboratories) and three independent experiments, each using a different insect species (e.g., Turnip sawfly, Meadow grasshopper, Red flour beetle).

2. Standardization and Variability: All laboratories follow a standardized protocol for each experiment, controlling for environmental conditions like temperature, humidity, and light cycles as much as possible. However, some elements, such as dietary sources, are necessarily procured locally, introducing a degree of natural, real-world variability [2].

3. Behavioral Assays:

- Starvation Response (Turnip sawfly): Larvae are subjected to a starvation treatment or control. Behavioral traits measured include Post-Contact Immobility (PCI), which requires manual handling, and general activity, which is observed with minimal intervention [2] [12].

- Color Polymorphism (Meadow grasshopper): The substrate choice of different color morphs (green vs. brown) is tested to assess morph-dependent microhabitat selection for crypsis [2].

- Niche Preference (Red flour beetle): Larvae and adult beetles are offered a choice between flour conditioned by beetles with or without functional stink glands to assess niche preference based on chemical cues [2].

4. Data Analysis: A random-effects meta-analysis is conducted to compare the consistency (statistical significance) and accuracy (effect size) of the treatment effects across the three replicate laboratories for each experiment [2] [12].
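The pooling step in the data analysis can be illustrated with a short sketch. The protocol above does not say which estimator the study used; the code below assumes the common DerSimonian-Laird method, and the function name and example inputs are hypothetical.

```python
import math


def random_effects_meta(effects, variances):
    """DerSimonian-Laird random-effects pooling of per-laboratory estimates.

    effects: effect size estimate from each laboratory.
    variances: sampling variance of each estimate.
    Returns (pooled_effect, standard_error, tau_squared).
    """
    k = len(effects)
    w = [1.0 / v for v in variances]                       # fixed-effect weights
    sum_w = sum(w)
    fixed_mean = sum(wi * yi for wi, yi in zip(w, effects)) / sum_w
    # Cochran's Q measures heterogeneity between laboratories.
    q = sum(wi * (yi - fixed_mean) ** 2 for wi, yi in zip(w, effects))
    c = sum_w - sum(wi ** 2 for wi in w) / sum_w
    tau2 = max(0.0, (q - (k - 1)) / c) if c > 0 else 0.0   # between-lab variance
    w_star = [1.0 / (v + tau2) for v in variances]         # random-effects weights
    pooled = sum(wi * yi for wi, yi in zip(w_star, effects)) / sum(w_star)
    se = math.sqrt(1.0 / sum(w_star))
    return pooled, se, tau2


if __name__ == "__main__":
    # Invented effect sizes for three laboratories, for illustration only.
    pooled, se, tau2 = random_effects_meta([0.2, 0.5, 0.8], [0.04, 0.04, 0.04])
    print(f"pooled={pooled:.3f}, se={se:.3f}, tau^2={tau2:.3f}")
```

A nonzero between-laboratory variance widens the pooled standard error, which is how this kind of model reflects disagreement among sites. With only three laboratories the variance estimate is itself quite uncertain.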

Pathways to More Robust Research

Overcoming systemic pressures requires concerted effort and a shift in practices at the individual, institutional, and field-wide levels. The following strategies are critical for fostering more robust and reproducible research.

Adopt Open Research Practices: Practices such as pre-registering study designs and hypotheses, sharing raw data and analysis code, and publishing in open-access formats increase transparency and allow for independent verification of results [2] [16]. These practices help mitigate analytical flexibility and publication bias.

Implement Registered Reports: This publishing format involves peer review of the study's introduction, methods, and proposed analysis before results are known [16]. Journals commit to publishing the work regardless of the outcome, based on the soundness of the methodology, thus removing the bias for positive results [16].

Embrace Heterogenization and CSV: To combat the "standardization fallacy," researchers should deliberately introduce controlled systematic variability (CSV) into their experimental designs [12] [5]. This can include using multiple genetic strains, varying environmental conditions, or testing across several laboratories. This approach assesses the stability of an effect across a broader, more realistic range of conditions, thereby enhancing the generalizability and reproducibility of the findings [5].

Reform Evaluation Criteria: Universities, funders, and journals must move beyond using publication counts and journal impact factors as the primary metrics of research quality. Evaluation should instead value reproducible, high-quality work, which includes data sharing, replication studies, and the publication of null results.

The Scientist's Toolkit: Key Research Reagent Solutions

The following materials and approaches are essential for conducting rigorous, reproducible ecological experiments, especially those focused on behavior.

Table 3: Essential Reagents and Materials for Reproducible Ecological Experiments

| Item Name | Function/Application | Key Consideration for Reproducibility |
|---|---|---|
| Multiple Model Organisms (e.g., A. rosae, P. parallelus, T. castaneum) [2] | Using phylogenetically diverse species tests the generalizability of findings beyond a single model system. | Avoids over-reliance on one species, whose response may be unique. |
| Controlled Systematic Variability (CSV) Sources [5] | Introduces known genetic (e.g., different strains) or environmental variation into an experiment. | Counteracts the "standardization fallacy" and improves external validity and reproducibility. |
| Standardized Behavioral Arenas [2] | Provides a consistent and controlled environment for observing and quantifying animal behavior. | Minimizes noise from apparatus differences; must be documented and shared for replication. |
| Open Data & Code Repositories (e.g., Zenodo) [12] | Publicly archives raw datasets and analysis scripts used in the study. | Enables direct computational reproducibility and re-analysis by other research groups. |
| Pre-Registration Protocols [16] | A time-stamped public record of the study plan, including hypotheses and analysis strategy, created before data collection. | Distinguishes confirmatory from exploratory research, reducing hindsight bias and p-hacking. |

The systemic pressures of "publish or perish" and funding biases present significant and documented threats to the robustness of scientific research. The evidence from ecology and other fields reveals a troubling prevalence of publication bias, low statistical power, and irreproducible findings. However, a path forward is clear. By embracing open science practices, adopting innovative experimental designs like CSV, and fundamentally reforming how research is evaluated and funded, the scientific community can reinforce the foundation of evidence upon which drug development, environmental policy, and true innovation depend. The solutions require a collective commitment to valuing rigor over rhetoric and robustness over novelty.

Reproducibility is a cornerstone of the scientific method, serving as the ultimate verification of research findings. In ecological research, concerns about the reliability and reproducibility of published studies have reached critical levels, prompting what many have termed a 'reproducibility crisis' [20]. A large-scale assessment of reproducibility in psychology found that only 39% of 100 studied effects could be successfully replicated [21], while in preclinical cancer research, one notable project could confirm findings in only 6 of 53 landmark studies [22]. This pattern of reproducibility challenges extends directly to ecology and evolution, where surveys indicate that questionable research practices (QRPs) are as prevalent as in psychology [23] [24].

The interplay of three statistical factors—p-hacking, low statistical power, and analytical flexibility—creates a perfect storm that substantially threatens the integrity of ecological research findings. P-hacking, formally defined as "a set of statistical decisions and methodology choices during research that artificially produces statistically significant results" [25], represents a major pathway through which false positive findings enter the scientific literature. When combined with chronically underpowered study designs and undisclosed flexibility in data analysis, these practices systematically undermine the evidential value of research outputs and jeopardize the cumulative nature of scientific progress in ecology and related disciplines.

Defining the Core Concepts

What is P-Hacking?

P-hacking, also known as data dredging, data fishing, or selective reporting, occurs when researchers exploit flexibility in data collection and analysis to artificially obtain statistically significant results (typically p < 0.05) [26] [25]. The term emerged during heightened concern about scientific reproducibility, as researchers sought to explain why many statistically significant published findings failed to replicate [25]. This practice encompasses a family of questionable research methods that collectively inflate the false positive rate beyond the nominal 5% significance level, in some extreme cases elevating it to 60% or higher [27].

The statistical foundation of p-hacking rests on the manipulation of what Simmons et al. termed "researcher degrees of freedom"—the many arbitrary choices researchers make during data collection, processing, and analysis [23]. When these choices are made selectively to produce significant results, they violate the assumptions of null hypothesis significance testing and compromise the integrity of statistical inferences. It is crucial to note that p-hacking can occur both intentionally, as researchers consciously manipulate analyses to achieve desired outcomes, and unintentionally, as researchers make analytical decisions influenced by unconscious biases toward significant results [25].
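One researcher degree of freedom, collecting more data after inspecting results, is easy to simulate. The sketch below is an illustration under stated assumptions rather than a reconstruction of any cited analysis: data are drawn from a true null, the test is a z-test with a known standard deviation of 1, and the batch sizes, function names, and seed are arbitrary choices.

```python
import math
import random


def z_test_p(sample):
    """Two-sided p-value for mean == 0, assuming a known standard deviation of 1."""
    z = sum(sample) / math.sqrt(len(sample))
    return math.erfc(abs(z) / math.sqrt(2.0))


def false_positive_rate(peek, n_sims=4000, start_n=10, step=5, max_n=50, seed=1):
    """Share of null experiments declared significant at p < 0.05.

    peek=True tests after every batch and stops at the first p < 0.05;
    peek=False tests only once, at max_n.
    """
    rng = random.Random(seed)
    hits = 0
    for _ in range(n_sims):
        sample = [rng.gauss(0.0, 1.0) for _ in range(start_n)]
        while True:
            at_end = len(sample) >= max_n
            if (peek or at_end) and z_test_p(sample) < 0.05:
                hits += 1
                break
            if at_end:
                break
            sample.extend(rng.gauss(0.0, 1.0) for _ in range(step))
    return hits / n_sims


if __name__ == "__main__":
    print("single test at fixed n:", false_positive_rate(peek=False))
    print("test after every batch:", false_positive_rate(peek=True))
```

Because no true effect exists in these simulations, every significant result is a false positive. Repeated peeking should push the rate clearly above the nominal 5%, while the single fixed-sample test stays near it.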

The Problem of Low Statistical Power

Statistical power represents the probability that a study will correctly reject the null hypothesis when a true effect exists. Conventionally, a power of 80% is considered adequate, meaning there is an 80% chance of detecting a true effect of a specified size [28]. Yet many research fields, including ecology and evolution, are characterized by chronically low statistical power. In neuroscience, median statistical power has been estimated at just 21%, while in psychology, median power is approximately 36% [22] [28].
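To make the 80% convention concrete, the following sketch approximates the power of a two-sided, two-sample comparison using the normal distribution, then searches for the smallest per-group sample size that reaches a target. The function names are hypothetical, and the normal approximation slightly understates the sample size an exact t-test calculation would give.

```python
import math
from statistics import NormalDist


def two_sample_power(effect_size, n_per_group, alpha=0.05):
    """Approximate power of a two-sided, two-sample test (normal approximation).

    effect_size: standardized mean difference (Cohen's d).
    """
    std_normal = NormalDist()
    z_crit = std_normal.inv_cdf(1.0 - alpha / 2.0)
    shift = effect_size * math.sqrt(n_per_group / 2.0)
    return std_normal.cdf(shift - z_crit) + std_normal.cdf(-shift - z_crit)


def n_for_power(effect_size, target=0.80, alpha=0.05):
    """Smallest per-group sample size whose approximate power reaches the target."""
    if effect_size == 0:
        raise ValueError("effect_size must be nonzero")
    n = 2
    while two_sample_power(effect_size, n, alpha) < target:
        n += 1
    return n


if __name__ == "__main__":
    d = 0.5  # conventional "medium" standardized effect
    for n in (10, 20, 40, 80):
        print(f"n per group={n}: power={two_sample_power(d, n):.2f}")
    print("n per group for 80% power:", n_for_power(d))
```

Running the loop for a range of sample sizes shows how quickly power falls away for the small groups typical of many experiments.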

The consequences of low statistical power extend beyond simply missing true effects (false negatives). When studies are underpowered, only those that by chance overestimate effect sizes are likely to reach statistical significance, a phenomenon known as the "winner's curse" [28]. This effect size inflation means that even genuine effects detected in underpowered studies will appear larger than they truly are, distorting the scientific literature and potentially misleading future research directions and resource allocation.
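The winner's curse can be reproduced with a small simulation. The sketch below assumes two groups of unit-variance normal data and a z-test with known standard deviation, which keeps it free of external libraries. The true effect, sample sizes, seed, and function name are illustrative assumptions.

```python
import math
import random
from statistics import fmean


def mean_significant_estimate(true_d, n_per_group, n_sims=5000, seed=1):
    """Average estimated effect among simulated studies that reach p < 0.05.

    Each study compares two groups of unit-variance normal data using a
    z-test with known standard deviation.
    """
    rng = random.Random(seed)
    se = math.sqrt(2.0 / n_per_group)   # standard error of the mean difference
    significant = []
    for _ in range(n_sims):
        treated = fmean(rng.gauss(true_d, 1.0) for _ in range(n_per_group))
        control = fmean(rng.gauss(0.0, 1.0) for _ in range(n_per_group))
        estimate = treated - control
        if abs(estimate) / se > 1.96:   # two-sided test at the 5% level
            significant.append(estimate)
    return fmean(significant) if significant else float("nan")


if __name__ == "__main__":
    true_d = 0.3
    for n in (10, 50, 400):
        avg = mean_significant_estimate(true_d, n, n_sims=2000)
        print(f"n per group={n}: mean significant estimate={avg:.2f} (true {true_d})")
```

With small groups, only estimates far from the true value clear the significance threshold, so the significant subset overstates the effect. As sample size grows, nearly every study is significant and the average returns to the true value.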

Analytical Flexibility and Researcher Degrees of Freedom

Analytical flexibility refers to the numerous legitimate decisions researchers must make throughout the research process, including during data collection, cleaning, variable selection, model specification, and statistical testing [20]. In a high-dimensional dataset, there may be hundreds or thousands of reasonable alternative approaches to analyzing the same data. For example, a systematic review of functional magnetic resonance imaging (fMRI) studies found nearly as many unique analytical pipelines as there were studies [20].

The fundamental problem arises when this inherent flexibility is exploited, either consciously or unconsciously, to produce statistically significant results. As one researcher noted, modern statistical software enables researchers to "try out several statistical analyses and/or data eligibility specifications and then selectively report those that produce significant results" [26]. This undisclosed analytical flexibility represents a critical threat to inference, as it capitalizes on chance patterns in the data without appropriate statistical correction.

Prevalence Across Scientific Disciplines

The prevalence of questionable research practices (QRPs) varies across scientific domains but is widespread in every field surveyed. The following table summarizes self-reported QRPs across different disciplines:

Table 1: Self-Reported Use of Questionable Research Practices Across Disciplines

| Research Practice | Ecology & Evolution | Psychology (US) | Psychology (Italy) |
|---|---|---|---|
| Failing to report non-significant results (cherry picking) | 64% | 67% | 65% |
| Collecting more data after inspecting results (p-hacking) | 42% | 54% | 41% |
| Reporting unexpected findings as predicted (HARKing) | 51% | 52% | 63% |

Data source: Fraser et al. (2018) survey of 807 researchers in ecology and evolution compared with previous psychology surveys [23] [24]

The consistency of these self-reported behaviors across distinct scientific cultures and geographical regions suggests that the problems are systemic rather than discipline-specific. Notably, researchers in ecology and evolution estimated that their colleagues used these practices at even higher rates than they reported for themselves, indicating that the true prevalence might be higher than captured in self-reports [23].

Empirical evidence beyond self-reports further supports concerns about p-hacking. Examinations of p-value distributions in published literature frequently show an overabundance of p-values just below the 0.05 threshold, consistent with widespread p-hacking [26]. This pattern has been observed across multiple disciplines, though its intensity varies. Interestingly, one study of clinical trial registrations found less evidence of p-hacking than in academic publications, suggesting that registration and transparency might mitigate these practices [29].

Common P-Hacking Methods and Their Statistical Consequences

P-hacking encompasses a diverse family of methods that exploit researcher degrees of freedom. The following table details the most common techniques, their operationalization, and their statistical consequences:

Table 2: Common P-Hacking Methods and Their Impact on Statistical Inference

| Method | Description | Impact on False Positive Rate |
|---|---|---|
| Optional Stopping | Stopping data collection once significance is reached, rather than following a predetermined sample size [22] [25] | Can increase false positive rate to 20% or more with repeated testing [22] |
| Selective Outlier Removal | Removing data points based on whether exclusion produces significant results, rather than pre-established criteria [23] | Can turn non-significant results into significant ones without appropriate justification [22] |
| Variable Manipulation | Changing the primary outcome variable, combining groups, or transforming variables mid-analysis to achieve significance [25] | Particularly problematic when multiple outcome variables are measured; with 10 dependent measures, false positive rate can increase to 34% [22] |
| Multiple Hypothesis Testing | Conducting numerous statistical tests without correction for multiple comparisons [25] | Familywise error rate increases with each additional test performed |
| Analytical Model Shopping | Fitting multiple statistical models and selecting only those yielding significant results [25] | Capitalizes on chance associations in the data, dramatically increasing false discoveries |
| Selective Reporting | Reporting only significant analyses while omitting non-significant ones [23] [25] | Creates a systematically biased representation of the evidence |
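Two of the mechanisms in Table 2 are easy to reproduce numerically. The sketch below is illustrative only and its function names and settings are hypothetical. The first function gives the familywise error rate for m tests under the simplifying assumption that the tests are independent; correlated outcome measures inflate the rate less than this formula implies. The second simulates optional stopping under a true null, using a one-sample z-test with known unit variance and a look at the data after every 5 observations.

```python
import random
from statistics import NormalDist


def familywise_error_rate(m, alpha=0.05):
    """Chance of at least one false positive across m independent tests."""
    return 1 - (1 - alpha) ** m


def optional_stopping_fpr(n_start=10, n_max=50, step=5, n_sims=5000,
                          alpha=0.05, seed=7):
    """False positive rate when testing repeatedly and stopping at p < alpha.

    Data are drawn from a null distribution (mean 0, sd 1), so every
    'significant' result is a false positive.
    """
    rng = random.Random(seed)
    z_crit = NormalDist().inv_cdf(1 - alpha / 2)
    false_positives = 0
    for _ in range(n_sims):
        total, n, next_look = 0.0, 0, n_start
        while n < n_max:
            while n < next_look:               # collect data up to the next look
                total += rng.gauss(0.0, 1.0)
                n += 1
            z = total / n ** 0.5               # z statistic for mean = 0, sd = 1
            if abs(z) > z_crit:                # stop as soon as it is significant
                false_positives += 1
                break
            next_look += step
    return false_positives / n_sims


if __name__ == "__main__":
    print(f"FWER, 10 independent tests: {familywise_error_rate(10):.3f}")
    print(f"Optional stopping FPR: {optional_stopping_fpr():.3f}")
```

Even though each individual test uses a nominal 5% threshold, both procedures yield false positive rates well above 5%, which is the pattern the table describes.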

The consequences of these practices extend beyond the inflation of false positive rates. P-hacked results typically exhibit inflated effect sizes, as the same analytical flexibility that produces significance also tends to exaggerate the magnitude of effects [28]. This effect size inflation can be substantial, with one analysis suggesting that effect estimates from underpowered studies with selective reporting may be inflated by approximately 50% [28]. This distortion has profound implications for ecological research, where effect size magnitudes often inform conservation priorities, resource allocation, and policy decisions.

Experimental Evidence and Detection Methodologies

P-Curve Analysis

P-curve analysis detects p-hacking in a body of literature by examining the distribution of statistically significant p-values [26]. The technique rests on the statistical principle that when a true effect exists, the distribution of significant p-values is right-skewed, with more p-values close to zero than to 0.05, whereas under a true null the distribution is flat. In contrast, when p-hacking occurs, the p-value distribution often shows a left skew, with an abundance of p-values just below 0.05 [26].

The experimental protocol for conducting p-curve analysis involves:

- Collecting reported p-values: Extract all p-values testing the same fundamental hypothesis across multiple studies in a literature.

- Including only significant p-values: Focus exclusively on p-values below 0.05, as the method specifically examines the shape of the significant results distribution.

- Comparing observed distribution to expected patterns: Statistically compare the observed p-value distribution to what would be expected under the null hypothesis of no effect and under the alternative hypothesis of a true effect.

- Testing for evidential value and p-hacking: Use binomial tests to determine whether there are significantly more p-values in the lower bin (0-0.025) than the upper bin (0.025-0.05), which would indicate evidential value, or the reverse pattern, which would suggest p-hacking [26].
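The binomial step of the protocol above can be sketched as follows. This is a minimal illustration rather than the full p-curve procedure: it implements only the two-bin comparison, uses the fact that significant p-values are uniformly distributed when there is no effect (so each bin has probability 0.5), and the p-values in the usage example are made up for demonstration.

```python
from math import comb


def binomial_tail(k, n, p=0.5):
    """P(X >= k) for X ~ Binomial(n, p)."""
    return sum(comb(n, i) * p ** i * (1 - p) ** (n - i) for i in range(k, n + 1))


def p_curve_binomial(p_values, alpha=0.05):
    """Compare counts of significant p-values in the lower and upper bins."""
    significant = [p for p in p_values if p < alpha]
    low = sum(1 for p in significant if p < alpha / 2)   # bin (0, 0.025)
    high = len(significant) - low                        # bin [0.025, 0.05)
    n = len(significant)
    return {
        "n_significant": n,
        "low_bin": low,
        "high_bin": high,
        # Small value: more low p-values than chance, i.e. evidential value
        "p_right_skew": binomial_tail(low, n),
        # Small value: more p-values near 0.05 than chance, consistent with p-hacking
        "p_left_skew": binomial_tail(high, n),
    }


if __name__ == "__main__":
    # Hypothetical p-values, for illustration only
    example = [0.001, 0.003, 0.004, 0.011, 0.012, 0.020, 0.021, 0.031, 0.044, 0.20]
    print(p_curve_binomial(example))
```

Non-significant p-values (0.20 in the example) are discarded before binning, matching the second step of the protocol.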

P-curve analysis has been applied broadly across scientific literatures. One large-scale text-mining study found evidence of p-hacking throughout the scientific literature, though noted that its effect seemed "weak relative to the real effect sizes being measured" [26].

Z-Curve Analysis

Z-curve represents an advancement beyond p-curve that models the distribution of test statistics (z-scores) rather than p-values [27]. This method offers several advantages, including better handling of heterogeneity in effect sizes and sample sizes, and providing estimates of average power, selection bias, and the maximum false discovery rate (FDR) [27].

The methodological workflow for z-curve analysis includes:

- Converting reported statistics to z-scores: Transform all available test statistics from a literature into a standardized z-score metric.

- Modeling the density of z-scores: Use finite mixture modeling to estimate the underlying components of the observed z-score distribution.

- Estimating key parameters: Calculate the average power of studies, the extent of selection bias (the disproportionate publication of significant results), and the expected replication rate.

- Calculating the maximum false discovery rate: Estimate the upper bound of false positives in the literature [27].
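The first and last steps of this workflow reduce to short formulas, sketched below. The mixture-modeling step is not reproduced here, and the function names are hypothetical. The conversion assumes two-sided p-values. The bound on the false discovery rate is Sorić's formula, which takes a discovery rate as input; treating it as the bound used in z-curve is an assumption of this sketch, and the discovery rate itself would have to come from a fitted model.

```python
from statistics import NormalDist


def p_to_abs_z(p_two_sided):
    """Convert a two-sided p-value to the absolute z-score it corresponds to."""
    return NormalDist().inv_cdf(1 - p_two_sided / 2)


def soric_max_fdr(discovery_rate, alpha=0.05):
    """Soric's upper bound on the false discovery rate.

    discovery_rate is the share of all conducted tests that reached
    significance (an estimated quantity, supplied by the caller).
    """
    if not 0 < discovery_rate <= 1:
        raise ValueError("discovery_rate must be in (0, 1]")
    return (1 / discovery_rate - 1) * (alpha / (1 - alpha))


if __name__ == "__main__":
    print(round(p_to_abs_z(0.05), 3))        # z-score at the 0.05 threshold
    for rate in (0.10, 0.30, 0.60):          # illustrative discovery rates
        print(rate, round(soric_max_fdr(rate), 3))
```

The bound rises steeply as the discovery rate falls, which is one reason estimates of a field's false discovery rate are so sensitive to the estimated discovery rate.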

Applications of z-curve to psychological literature have provided varying estimates of the field's false discovery rate, with no consensus yet emerging about the precise figure [27]. This uncertainty highlights the challenge of quantifying the problem even with sophisticated methodological tools.

Direct Experimental Comparisons

The most compelling evidence regarding p-hacking comes from direct comparisons between different research approaches. A critical finding emerges from studies comparing Registered Reports, a publishing format in which studies are peer-reviewed and accepted before data collection, with traditionally published research. Scheel et al. (2021) found that Registered Reports in psychology had roughly half the proportion of significant findings compared to standard articles (44% vs. 96%), a gap consistent with a substantial reduction in publication bias and p-hacking [27].

Similarly, analyses of clinical trials registered through ClinicalTrials.gov have found less evidence of p-hacking than in academic publications, particularly for primary outcomes in phase III trials sponsored by large pharmaceutical companies [29]. This suggests that formal registration and transparency requirements may constrain questionable research practices.

The Scientist's Toolkit: Research Reagent Solutions

Implementing methodological rigor requires specific conceptual and practical tools. The following table details essential "research reagents" for combating p-hacking and low power in ecological research:

Table 3: Essential Methodological Reagents for Improving Research Reproducibility

| Research Reagent | Function | Implementation Example |
|---|---|---|
| Preregistration | Publicly specifying research plans before data collection to constrain undisclosed analytical flexibility [20] [25] | Register hypotheses, methods, and analysis plans on platforms like Open Science Framework (OSF) before beginning data collection |
| Power Analysis | Determining the sample size needed to detect effects with adequate power [28] | Use software (G*Power, simr) to conduct a priori power analysis based on smallest effect size of interest |
| Registered Reports | Peer review before data collection with in-principle acceptance regardless of results [27] | Submit Stage 1 manuscript to journals offering Registered Reports format before data collection |
| Blinding | Protecting against confirmation bias during data collection and analysis [20] | Mask experimental conditions during data processing and preliminary analysis |
| Data/Code Sharing | Enabling verification and reanalysis of published findings [20] | Post de-identified data and analysis code on public repositories with DOI assignment |
| P-Curve/Z-Curve | Diagnosing evidential value and p-hacking in literature [26] [27] | Apply to research literature to assess reliability before building on published findings |

Each of these methodological reagents addresses specific vulnerabilities in the research process. Preregistration and Registered Reports directly target analytical flexibility and selective reporting by committing researchers to their analytical plans before data collection [20] [27]. Power analysis addresses the fundamental problem of low statistical power, which not only increases false negative rates but also reduces the probability that statistically significant results reflect true effects [28]. Meanwhile, blinding procedures help counter cognitive biases that can unconsciously influence data collection, processing, and analysis decisions [20].

Statistical Frameworks and Experimental Protocols

Understanding the Positive Predictive Value Framework

The relationship between statistical power, pre-study odds, and research reliability can be formally expressed through the Positive Predictive Value (PPV) framework [28]. The PPV represents the probability that a statistically significant finding reflects a true effect and can be calculated as:

PPV = [(1 - β) × R] / [(1 - β) × R + α]

Where:

- (1 - β) is the statistical power

- R is the pre-study odds (ratio of true to null relationships in the field)

- α is the significance threshold (typically 0.05)

This formula reveals the interdependence between statistical power and the reliability of research findings. For example, in a research area with modest pre-study odds (R = 0.10/0.90 ≈ 0.11, meaning only 10% of tested hypotheses are true), a study with 80% power yields a PPV of 64%, meaning there is a 64% chance that a significant finding is true. However, at the low power typical of many fields (20%), the PPV drops to just 31%, meaning that roughly 69% of statistically significant findings are false positives [28].
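The worked figures above can be checked directly from the PPV formula. The sketch below is a plain transcription of that formula; the helper that converts a proportion of true hypotheses into pre-study odds is added for convenience, and both function names are hypothetical.

```python
def odds_from_proportion(prop_true):
    """Pre-study odds R from the proportion of tested hypotheses that are true."""
    return prop_true / (1 - prop_true)


def ppv(power, pre_study_odds, alpha=0.05):
    """Positive predictive value: PPV = (1 - beta) * R / ((1 - beta) * R + alpha)."""
    true_positives = power * pre_study_odds
    return true_positives / (true_positives + alpha)


if __name__ == "__main__":
    r = 0.11  # value used in the text; odds_from_proportion(0.10) gives about 0.111
    for power in (0.80, 0.20):
        value = ppv(power, r)
        print(f"power={power:.2f}  PPV={value:.2f}  false positive risk={1 - value:.2f}")
```

The false positive risk reported in the last column is simply 1 - PPV, the share of significant findings that do not reflect a true effect.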

Protocol for Conducting a Reproducibility Audit

Ecological researchers can assess the reliability of their specific subfield through systematic reproducibility audits. The experimental protocol for such an audit includes:

- Define the research domain: Clearly bound the literature to be assessed (e.g., "tropical forest fragmentation studies published 2000-2020").

- Extract test statistics: Collect all test statistics (t, F, r values) and p-values from a representative sample of studies in the domain.

- Apply p-curve or z-curve analysis: Use these tools to assess the evidentiary value and potential p-hacking in the literature.

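- Estimate average statistical power: Calculate the median power of studies in the domain from their sample sizes and a plausible effect size. Because reported effect sizes tend to be inflated in underpowered literatures (the winner's curse), power computed directly from them is likely to be an overestimate.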

- Calculate the false positive risk: Use the PPV formula with field-specific parameters to estimate the likelihood that significant findings reflect true effects.