Beyond the False N: A Comprehensive Guide to Identifying, Correcting, and Preventing Pseudoreplication in Ecological and Biomedical Research


Abstract

Pseudoreplication—the treatment of non-independent data points as independent replicates—is a pervasive and serious statistical error that inflates false positive rates, invalidates hypothesis tests, and threatens the reproducibility of scientific research. This article provides a complete framework for researchers, scientists, and drug development professionals to address this critical issue. We begin by defining pseudoreplication and exploring its alarming prevalence and consequences, drawing on recent literature. The core of the guide presents robust methodological solutions, including Linear Mixed Models (LMMs) and Bayesian predictive approaches, with practical application examples from ecology and biomedicine. We then troubleshoot common experimental design pitfalls and offer optimization strategies. Finally, we validate these methods through comparative analysis, demonstrating how correct statistical practices lead to more reliable and reproducible scientific conclusions, with direct implications for robust experimental design in clinical and preclinical research.

What is Pseudoreplication? Understanding the Pervasive Error Undermining Scientific Validity

FAQ: What is the fundamental difference between an experimental unit and a measurement unit?

The experimental unit is the physical entity to which a treatment is independently applied. It is the subject of randomisation and the unit about which you want to draw inferences. In contrast, the measurement unit is the entity on which the response is measured; it is the level at which observations are made [1] [2]. There can be multiple measurement units within a single experimental unit.

| Feature | Experimental Unit | Measurement Unit |

|---|---|---|

| Core Definition | Entity subjected to an intervention independently of all other units [3] [4]. | Entity on which response measurements are taken [1]. |

| Role in Design | The unit of randomisation; determines the sample size (N) [4]. | The unit of observation; source of subsamples or repeated measures. |

| Inferential Scope | Conclusions are generalised to the population of these units [3]. | Measurements describe the individual unit but are not independent for statistical analysis. |

| Example | A single cage of animals receiving medicated diet [3]. | An individual animal from that cage from which a blood sample is taken. |

FAQ: What is pseudoreplication and why is it a problem?

Pseudoreplication occurs when inferential statistics are used to test for treatment effects, but the treatments are not replicated, or the replicates are not statistically independent [5]. The most common form, "simple pseudoreplication," happens when researchers mistake measurement units (subsamples) for experimental units (true replicates) and artificially inflate their sample size 'N' in statistical analyses [4] [5].

This is a serious problem because it:

- Invalidates Statistics: It underestimates the true variability in a study, as measurements within an experimental unit are often more similar to each other than to measurements in other units [4].

- Causes False Positives: This underestimation of variance increases the risk of incorrectly declaring a treatment effect significant (Type I error) [4] [5].

- Renders Conclusions Unreliable: The results of the statistical analysis and the resulting scientific conclusions can be invalid [3].
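The inflation described in the list above can be seen in a small simulation. The following is a minimal sketch, not a definitive analysis: the design (4 units per treatment, 10 subsamples per unit), the variance values, and the function names are all assumptions chosen for illustration. There is no treatment effect in the simulated data, so every "significant" result is a false positive.

```python
import random
from math import sqrt
from statistics import fmean, variance

UNITS_PER_ARM = 4      # true replicates (e.g. cages) per treatment, assumed
SUBSAMPLES = 10        # measurements taken inside each unit, assumed
CRIT_NAIVE = 1.991     # two-sided 5% t critical value, df = 2*4*10 - 2 = 78
CRIT_UNITS = 2.447     # two-sided 5% t critical value, df = 2*4 - 2 = 6

def pooled_t(a, b):
    """Equal-variance two-sample t statistic."""
    na, nb = len(a), len(b)
    sp2 = ((na - 1) * variance(a) + (nb - 1) * variance(b)) / (na + nb - 2)
    return (fmean(a) - fmean(b)) / sqrt(sp2 * (1 / na + 1 / nb))

def false_positive_rates(n_sims=2000, sd_between=1.0, sd_within=1.0, seed=1):
    """Simulate a null treatment effect; return (naive, unit-level) rejection rates."""
    rng = random.Random(seed)
    naive_hits = unit_hits = 0
    for _ in range(n_sims):
        arms = []
        for _arm in range(2):
            units = []
            for _unit in range(UNITS_PER_ARM):
                shift = rng.gauss(0, sd_between)  # shared by every subsample in this unit
                units.append([shift + rng.gauss(0, sd_within) for _ in range(SUBSAMPLES)])
            arms.append(units)
        # Naive analysis: every subsample is counted as a replicate
        pooled = [[x for unit in arm for x in unit] for arm in arms]
        naive_hits += abs(pooled_t(pooled[0], pooled[1])) > CRIT_NAIVE
        # Unit-level analysis: one mean per experimental unit
        means = [[fmean(unit) for unit in arm] for arm in arms]
        unit_hits += abs(pooled_t(means[0], means[1])) > CRIT_UNITS
    return naive_hits / n_sims, unit_hits / n_sims

naive_fpr, unit_fpr = false_positive_rates()
print(f"naive false positive rate:      {naive_fpr:.3f}")
print(f"unit-level false positive rate: {unit_fpr:.3f}")
```

Under these assumptions the naive rate should sit far above the nominal 5%, while the unit-level rate should stay close to it. The hard-coded critical values only apply to the stated design; changing the constants requires new critical values.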

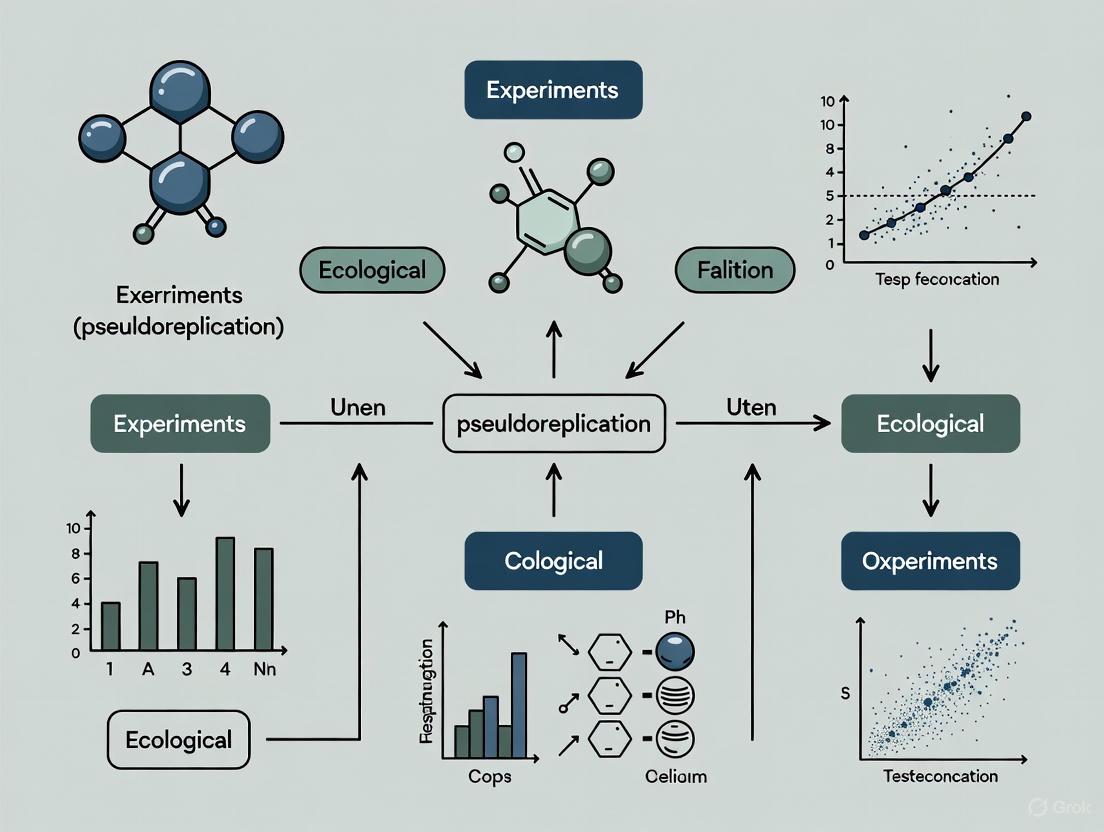

The diagram below illustrates the correct and incorrect paths for analysis to avoid pseudoreplication.

Troubleshooting Guide: How do I correctly identify the experimental unit in my study?

Misidentifying the experimental unit is a common design flaw. Follow this guide to diagnose and correct the issue before starting your experiment.

Step 1: Ask the Key Diagnostic Question

"To what entity is the treatment applied independently?"

The answer to this question is your potential experimental unit. A treatment is applied independently if it is possible to assign any two of these entities to different treatment groups [3] [4].

Step 2: Consult Common Scenarios

Use the table below to find the scenario that matches your experimental design. The experimental unit can vary depending on how your intervention is administered [3] [4].

| Your Experimental Design | What is the Experimental Unit? | Explanation |

|---|---|---|

| Individual animals receiving an injection independently. | The individual animal. | Each animal can be randomly assigned to a different treatment. |

| A pregnant dam is treated, and measurements are taken on her pups. | The litter (the dam and her litter). | All pups in a litter are exposed to the same treatment condition. |

| A cage of animals receives a treatment in their diet or water. | The entire cage of animals. | All animals in the cage share the same treatment; they are not independent. |

| Different body parts (e.g., skin patches) on a single animal receive different topical treatments. | The body part (e.g., the patch of skin). | Different treatments are applied independently to different parts of the animal. |

| An animal is used in a crossover design, receiving different treatments over time. | The animal for a period of time. | Each period represents an independent application of a treatment. |

| Classrooms are assigned to a new teaching method, and student test scores are measured. | The classroom. | The intervention is applied at the classroom level, not independently to each student [1]. |
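Once the experimental unit is identified, the sample size follows from counting those units rather than the measurements. The sketch below illustrates this for the cage scenario in the table. The records, the column order, and the helper name `summarise_by_unit` are hypothetical.

```python
from collections import defaultdict
from statistics import fmean

# Hypothetical records: (cage_id, treatment, body_weight_g). The diet is given per
# cage, so the cage is the experimental unit and each animal is a measurement unit.
records = [
    ("cage1", "medicated", 24.1), ("cage1", "medicated", 23.8), ("cage1", "medicated", 24.5),
    ("cage2", "medicated", 22.9), ("cage2", "medicated", 23.3), ("cage2", "medicated", 23.0),
    ("cage3", "control", 25.2), ("cage3", "control", 25.6), ("cage3", "control", 24.9),
    ("cage4", "control", 26.0), ("cage4", "control", 25.7), ("cage4", "control", 26.3),
]

def summarise_by_unit(rows):
    """Collapse measurement units to one mean per experimental unit."""
    values = defaultdict(list)
    treatment_of = {}
    for unit, treatment, value in rows:
        values[unit].append(value)
        treatment_of[unit] = treatment
    return {unit: (treatment_of[unit], fmean(vals)) for unit, vals in values.items()}

unit_means = summarise_by_unit(records)

# N per treatment is the number of cages, not the number of animals
n_per_treatment = defaultdict(int)
for treatment, _mean in unit_means.values():
    n_per_treatment[treatment] += 1

print("measurements:", len(records))
print("experimental units:", len(unit_means))
print("N per treatment:", dict(n_per_treatment))
```

Any downstream test would then use the per-cage means (or a model with a cage-level random effect), so that N reflects the unit of randomisation.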

Step 3: Address Complex Designs (Multiple Experimental Units)

In some complex experiments, known as split-plot designs, there can be more than one type of experimental unit [3].

- Example: A study in group-housed mice investigates two treatments: a diet administered to the entire cage, and a vitamin supplement given by injection to individual mice.

- Identification:

  - The experimental unit for the diet treatment is the cage, as all mice in a cage share the same diet.

  - The experimental unit for the vitamin supplement is the individual mouse, as mice within the same cage can receive different injections.

- Action: These designs require specialised statistical analysis (e.g., using mixed models with nested random effects). Seek expert statistical advice before conducting them [3] [5].
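To see why the two treatments in the example above are tested against different error terms, it can help to count degrees of freedom for each level. The sketch below uses the textbook breakdown for a balanced split-plot layout and assumes, for illustration only, that every cage holds exactly one mouse per vitamin level. The function name and the example numbers are hypothetical.

```python
def split_plot_df(diet_levels, cages_per_diet, vitamin_levels):
    """Degrees of freedom for a balanced split-plot layout.

    Assumes each cage holds exactly one mouse per vitamin level.
    """
    a, r, b = diet_levels, cages_per_diet, vitamin_levels
    return {
        "diet (tested against cage error)": a - 1,
        "cage error": a * (r - 1),
        "vitamin (tested against mouse error)": b - 1,
        "diet x vitamin": (a - 1) * (b - 1),
        "mouse error": a * (r - 1) * (b - 1),
    }

# Hypothetical study: 2 diets, 4 cages per diet, 2 vitamin levels per cage
table = split_plot_df(diet_levels=2, cages_per_diet=4, vitamin_levels=2)
total_mice = 2 * 4 * 2

for source, df in table.items():
    print(f"{source:40s} df = {df}")
print("total df:", sum(table.values()), "(mice - 1 =", total_mice - 1, ")")
```

The diet effect is judged against variation among cages, so adding more mice per cage does not add replication for the diet comparison.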

The Scientist's Toolkit: Key Concepts for Robust Design

| Concept/Tool | Function & Purpose |

|---|---|

| Experimental Unit | The fundamental replicate (N) for statistical analysis; correctly identifying it ensures the validity of your inference [3] [4]. |

| Blocking | A strategy to group similar experimental units together to reduce variability and increase the power of the experiment [2]. |

| Randomisation | The random assignment of treatments to experimental units to avoid confounding and bias [2]. |

| Nested Analysis | A statistical method (e.g., using mixed models) that correctly accounts for data hierarchies, such as cells within animals or animals within cages [4] [5]. |

| Effect Size | A quantitative measure of the magnitude of a treatment effect; focusing on effect sizes and confidence intervals, alongside p-values, provides a more complete picture of results [5]. |

FAQ: My study is a "natural experiment" or a large-scale manipulation that is hard to replicate. What can I do?

In ecology and field research, true replication is sometimes logistically or financially impossible (e.g., studying a wildfire, a dam removal, or a landscape-scale manipulation) [5]. In such cases, the following approaches are recommended:

- Be Explicit About Limitations: Clearly state in your manuscript that the study is unreplicated and articulate the potential confounding effects. Describe the limited scope of inference—your results apply to the specific system studied [5].

- Use Multiple Control Sites: If possible, compare your single treatment site with multiple control sites to better estimate natural background variability [5].

- Leverage Before-After Data: If you have data from before the event, a Before-After-Control-Impact (BACI) design lets you analyse the magnitude of change in the treatment area relative to changes in control areas [5].

- Focus on Descriptive Statistics and Effect Sizes: Present your data thoroughly with descriptive statistics. Emphasising the practical significance of observed effects (effect sizes) can be more meaningful than relying solely on p-values in an unreplicated setting [5].

- Consider Bayesian Statistics: Bayesian approaches can be particularly useful for analysing unreplicated studies by formally incorporating prior knowledge or data from similar systems [5].
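The before-after comparison against multiple control sites described above reduces to simple arithmetic. The sketch below is descriptive only and produces no p-value. The site names and abundance values are hypothetical.

```python
from statistics import fmean, stdev

# Hypothetical mean abundances per site, before and after a single unreplicated event
impact = {"before": 42.0, "after": 30.0}
controls = {
    "control_A": {"before": 40.0, "after": 38.0},
    "control_B": {"before": 45.0, "after": 44.0},
    "control_C": {"before": 39.0, "after": 36.0},
}

impact_change = impact["after"] - impact["before"]
control_changes = [site["after"] - site["before"] for site in controls.values()]

# BACI contrast: change at the impact site minus the average change at control sites
baci_effect = impact_change - fmean(control_changes)

# Spread of control-site changes gives a rough sense of natural background variability
background_sd = stdev(control_changes)

print("change at impact site:", impact_change)
print("mean change at controls:", fmean(control_changes))
print("BACI contrast:", baci_effect)
print("SD of control changes:", background_sd)
```

Reporting the contrast next to the spread of control-site changes keeps the emphasis on effect size and background variability, in line with the limited scope of inference for an unreplicated event.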

Troubleshooting Guides

Frequently Asked Questions (FAQs)

Q1: What is the most fundamental step to avoid pseudoreplication in my experimental design? The most critical step is to correctly identify and replicate the experimental unit. The experimental unit is the smallest entity to which a treatment is independently applied. True replicates are independent experimental units, not subsamples or measurements taken from the same unit [6] [7]. For example, if you apply a warming treatment to an incubator containing 20 Petri dishes, your true replication is one (the incubator), not 20 [6].

Q2: My single-cell study shows highly significant p-values, but I fear they might be invalid. What is the likely cause? The likely cause is sacrificial pseudoreplication. In single-cell studies, cells from the same individual are not statistically independent as they share a common genetic and environmental background. Treating individual cells as independent replicates, instead of the individual organism they come from, dramatically inflates Type I error rates—the probability of falsely rejecting a true null hypothesis. One study found this practice can lead to extremely high sensitivity but very low specificity, making results unreliable [7].

Q3: Can I statistically correct for pseudoreplication after data collection? Sometimes, but not always. If the experiment was designed with multiple independent experimental units per treatment (e.g., several incubators), but you mistakenly treated the subsamples within them as replicates, the analysis can often be "repaired" post hoc using statistical models that account for the nested data structure, such as generalized linear mixed models (GLMMs) with a random effect for the experimental unit [6] [7]. However, if the study was designed with only one true experimental unit per treatment (e.g., one greenhouse per CO₂ level), the study is fundamentally unreplicated: the treatment effect cannot be separated from differences between the units, so no model can recover a valid test of the treatment [6].

Q4: A p-value > 0.05 from my experiment suggests no treatment effect. Is this interpretation correct? Not necessarily. This is a common misinterpretation of p-values. A p-value > 0.05 only indicates that you failed to reject the null hypothesis; it does not prove that the null hypothesis is true or that there is no effect. Concluding "no difference" based solely on a non-significant p-value can be misleading. Other factors, such as low statistical power or high variability, could be the cause. It is recommended to report the actual p-value and consider analyses like equivalence tests if demonstrating the absence of an effect is the goal [8].

Q5: How does pseudoreplication lead to false precision? Pseudoreplication creates a false sense of precision by artificially inflating the sample size used in statistical calculations. When subsamples (e.g., cells from one individual, plants from one greenhouse) are treated as independent, the analysis underestimates the true variability in the data. This makes confidence intervals appear narrower and more precise than they truly are and can make a non-effect appear statistically significant [6] [7].
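A rough way to quantify this false precision is the standard design-effect formula for equal-sized clusters, n_eff = n / (1 + (m - 1) × ICC), where m is the number of subsamples per unit and ICC is the intraclass correlation. The sketch below applies it with an assumed ICC of 0.4. That value and the function name are illustrative assumptions, not figures from the cited studies.

```python
from math import sqrt

def effective_sample_size(n_units, subsamples_per_unit, icc):
    """Effective sample size under the design effect 1 + (m - 1) * ICC."""
    n_total = n_units * subsamples_per_unit
    design_effect = 1 + (subsamples_per_unit - 1) * icc
    return n_total / design_effect

n_units, m, icc = 5, 20, 0.4   # hypothetical: 5 individuals, 20 cells each
n_total = n_units * m
n_eff = effective_sample_size(n_units, m, icc)

# Standard errors scale with 1 / sqrt(n), so the naive SE is too small by this factor
se_understatement = sqrt(n_total / n_eff)

print("nominal n:", n_total)
print("effective n:", round(n_eff, 1))
print("naive SE is too small by a factor of about", round(se_understatement, 2))
```

With ICC = 0 the subsamples really are independent and the effective n equals the nominal n. With ICC = 1 the effective n collapses to the number of units.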

Diagnostic Table: Common Pseudoreplication Scenarios and Consequences

The table below summarizes common experimental scenarios, their associated statistical consequences, and recommended solutions.

Table 1: Troubleshooting Common Pseudoreplication Problems

| Experimental Scenario | Type of Error | Primary Statistical Consequence | Recommended Solution |

|---|---|---|---|

| Single incubator/chamber per treatment with multiple subsamples analyzed as replicates [6] | Simple Pseudoreplication | Invalid p-values, Inflated Type I Error | Apply treatment to multiple independent chambers; use unit-level replication. |

| Multiple cells from one individual treated as independent observations in a single-cell study [7] | Sacrificial Pseudoreplication | Highly Inflated Type I Error, Low Reproducibility | Use generalized linear mixed models (GLMMs) with a random effect for "individual". |

| All plants in one greenhouse for a given CO₂ level, with many pots measured [6] | Severe Design Flaw (No replication) | Completely Invalid Inference; study is "unreplicated" [6] | Redesign experiment with multiple independent greenhouses or treatment units per CO₂ level. |

| Treating technical replicates (e.g., triplicate measurements from one sample) as biological replicates | Confounding Replicate Types | False Precision, Misleadingly narrow confidence intervals | Average technical replicates; ensure biological replication is the basis for statistical inference. |

Experimental Protocols & Methodologies

Protocol: Implementing a Mixed Model to Correct for Pseudoreplication

This protocol is adapted from solutions proposed in single-cell research [7] but is broadly applicable to hierarchical data structures in ecology.

Application: For analyzing data where multiple measurements (subsamples) are nested within independent experimental units (e.g., individuals, plots, chambers).

Objective: To account for the lack of independence among subsamples and obtain valid p-values and confidence intervals.

Materials:

- Dataset with response variable, treatment/fixed effects, and a unique identifier for each experimental unit.

- Statistical software capable of fitting mixed models (e.g., R, Python with `statsmodels`).

Procedure:

- Data Structuring: Ensure your dataset includes a column that identifies the experimental unit (e.g., `Individual_ID`, `Incubator_ID`, `Plot_Number`).

- Model Specification: Fit a generalized linear mixed model (GLMM). The key is to include the experimental unit identifier as a random intercept.

  - Model Form: `Response ~ Fixed_Effect_Treatment + (1 | Experimental_Unit_ID)`

  - Example: For testing a drug effect in a single-cell study where cells are nested within patients: `Gene_Expression ~ Drug_Treatment + (1 | Patient_ID)` [7].

- Model Fitting and Inference: Use the output of the GLMM for statistical inference. The p-values for the fixed effect (e.g., `Drug_Treatment`) will now properly account for the correlation of cells within the same patient, controlling the Type I error rate at the nominal level (e.g., 5%) [7].
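As one possible way to carry out the procedure above in Python, the sketch below fits a random-intercept model with `statsmodels`. Note that `mixedlm` fits a linear (Gaussian) mixed model, which is a special case of a GLMM. The simulated data, the patient and cell counts, and the absence of a true drug effect are all assumptions made for illustration, and this is not the pipeline used in [7].

```python
import numpy as np
import pandas as pd
import statsmodels.formula.api as smf

rng = np.random.default_rng(42)

# Simulated data (hypothetical): 10 patients, 5 per arm, 40 cells each, no drug effect
rows = []
for patient in range(10):
    drug = patient % 2                      # treatment is assigned per patient
    patient_effect = rng.normal(0.0, 1.0)   # shared by every cell from this patient
    for _ in range(40):
        rows.append({
            "Patient_ID": f"P{patient:02d}",
            "Drug_Treatment": drug,
            "Gene_Expression": patient_effect + rng.normal(0.0, 1.0),
        })
cells = pd.DataFrame(rows)

# Naive model: every cell treated as an independent replicate (pseudoreplication)
naive = smf.ols("Gene_Expression ~ Drug_Treatment", data=cells).fit()

# Mixed model: random intercept for the experimental unit (the patient),
# i.e. Gene_Expression ~ Drug_Treatment + (1 | Patient_ID)
mixed = smf.mixedlm("Gene_Expression ~ Drug_Treatment", data=cells,
                    groups=cells["Patient_ID"]).fit()

print("naive SE of drug effect:", naive.bse["Drug_Treatment"])
print("mixed SE of drug effect:", mixed.bse["Drug_Treatment"])
print("mixed-model p-value:    ", mixed.pvalues["Drug_Treatment"])
```

The mixed model's standard error for `Drug_Treatment` should be noticeably larger than the naive one, because it reflects variation among patients rather than among cells.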

Workflow Diagram: From Problem to Solution in Hierarchical Data

The diagram below illustrates the logical pathway for diagnosing pseudoreplication and selecting an appropriate analytical method.

This table lists essential "research reagents" for designing robust ecological experiments and avoiding statistical pitfalls. These are primarily conceptual and methodological tools.

Table 2: Essential Reagents for Robust Ecological Experimental Design

| Tool / Reagent | Function / Purpose | Key Consideration |

|---|---|---|

| True Experimental Unit | The entity to which a treatment is independently applied; the basis for true replication. | Distinguish from "sampling unit" or "subsample." Replication must be at this level [6]. |

| Generalized Linear Mixed Model (GLMM) | A statistical model that accounts for non-independent, hierarchical data by including fixed effects and random effects. | The go-to solution for correcting sacrificial pseudoreplication. Use a random effect for the experimental unit [7]. |

| Power Analysis | A pre-experiment calculation to determine the number of replicates needed to detect an effect. | Prevents underpowered studies. Must be based on the number of true experimental units, not subsamples. |

| Randomized Controlled Trial (RCT) Design | The "gold standard" study design where treatments are assigned randomly to experimental units. | Minimizes confounding and selection bias. Strongly preferred over non-randomized designs for causal inference [9]. |

| Before-After-Control-Impact (BACI) Design | A robust design that compares changes in a treatment group to changes in a control group over time. | When randomized (rBACI), provides very strong evidence for causal effects of interventions [9]. |

The Impact of Study Design on Error Rates

Simulation studies comparing different study designs reveal stark differences in their reliability. The table below summarizes false positive rates (FPR) for different designs under confounding conditions, based on simulations of wildlife control interventions [9].

Table 3: False Positive Rates by Study Design (Simulation Data) [9]

| Study Design | Standard Classification | False Positive Rate (FPR) under Confounding | Relative Reliability |

|---|---|---|---|

| Simple Correlation | Bronze | Mostly unreliable, high FPR | Very Low |

| Non-randomized BACI | Silver | High and unreliable | Low |

| Randomized Controlled Trial (RCT) | Gold | Much lower error rates | High |

| Randomized BACI (rBACI) | Gold+ | Lowest error rates | Very High |

Type I Error Inflation from Pseudoreplication in Single-Cell Studies

Empirical simulations demonstrate the severe consequences of pseudoreplication. The following table compares the actual Type I error rates of various analytical methods when analyzing hierarchical data, against a nominal 5% significance level [7].

Table 4: Type I Error Rates of Analytical Methods for Hierarchical Data [7]

| Analytical Method | Accounts for Hierarchy? | Example Tool | Type I Error Rate (α=0.05) | Statistical Consequence |

|---|---|---|---|---|

| Models ignoring individual | No | Standard MAST, other standard tools | Highly Inflated (e.g., >>20%) | Invalid p-values, false discoveries |

| Batch-effect correction | No (Inadequate) | ComBat + standard model | Markedly Increased | Worsens the problem |

| Pseudo-bulk aggregation | Yes (Conservative) | Averaging per individual | Well-controlled, but low power | Valid but conservative p-values |

| Generalized Linear Mixed Model (GLMM) | Yes | MAST with Random Effect | Well-controlled (~5%) | Valid p-values, controlled Type I error |

Troubleshooting Guide: Identifying and Resolving Pseudoreplication

This guide helps researchers diagnose and fix common experimental design errors that lead to pseudoreplication, undermining statistical validity and research reproducibility.

FAQ: Addressing Pseudoreplication and Statistical Validity

What is pseudoreplication and why is it problematic? Pseudoreplication occurs when researchers use inferential statistics while incorrectly assuming data independence, either by analyzing multiple non-independent observations from a single experimental unit or failing to account for inherent grouping structures in data. This statistical error artificially inflates effective sample size, producing underestimated standard errors and spurious statistical significance (inflated Type I error rate) [10]. In some forms, it can also sacrifice statistical power (inflated Type II error rate) [10]. The consequences are severe: in fisheries research, pseudoreplication contributed to flawed stock assessments that failed to predict the collapse of the Grand Banks cod fishery, once the world's largest [10].

How prevalent are statistical issues like pseudoreplication across research fields? Quantitative studies reveal concerning rates of statistical issues across disciplines, though precise pseudoreplication rates are challenging to measure. Empirical estimates of false discovery risks provide insight into the consequences of these statistical problems:

Table: False Discovery Risk Estimates Across Research Fields

| Field | False Discovery Risk (FDR) | Significance Threshold | Key Findings |

|---|---|---|---|

| Psychology | 12-26% (upper 95% CI) | p < 0.05 | At most a quarter of published results may be false positives; lowering threshold to p < 0.01 reduces FDR to <5% [11]. |

| Clinical Trials (Medical) | 13% | p < 0.05 | Lowering threshold to p < 0.01 reduces false positive risk to <5%; clear evidence of publication bias inflating effect sizes [12]. |

| Visual Search (Cognitive Psychology) | >40% (with QRPs) | p < 0.05 | Three questionable practices (retaining pilot data, adding data after checking significance, not publishing nulls) dramatically increase FDR [13]. |

What are the most common forms of pseudoreplication? The primary forms identified in ecological and fisheries research include:

- Simple-spatial pseudoreplication: Failing to acknowledge clustered observations from a single treatment replicate [10]

- Simple-temporal pseudoreplication: Ignoring sequential measurements on the same treatment unit [10]

- Sacrificial pseudoreplication: Overlooking pairing or grouping structures, sacrificing statistical power [10]

Which research practices contribute to false discoveries beyond pseudoreplication? Questionable research practices that dramatically increase false discovery rates include:

- Retaining pilot data in final analyses after using it to assess design efficacy [13]

- Adding participants after checking for significance ("optional stopping") [13]

- Failing to publish null results (the "file-drawer problem"), which creates publication bias [13]

Combined, these practices can produce false discovery rates exceeding 40% and can even obscure or reverse genuine effects [13].
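The effect of one of these practices, adding participants after checking for significance, can be illustrated with a short simulation. The sketch below assumes a one-sample z-test with known standard deviation, a first look at n = 10, and further looks every 5 participants up to n = 50. These settings are hypothetical and are not taken from [13].

```python
import random
from math import sqrt
from statistics import NormalDist, fmean

def p_value(sample):
    """Two-sided one-sample z-test of mean 0, assuming a known SD of 1."""
    z = fmean(sample) * sqrt(len(sample))
    return 2 * (1 - NormalDist().cdf(abs(z)))

def false_positive_rates(n_sims=4000, start=10, step=5, max_n=50, alpha=0.05, seed=7):
    """Return (fixed-n, optional-stopping) false positive rates under a true null."""
    rng = random.Random(seed)
    fixed_hits = peeking_hits = 0
    for _ in range(n_sims):
        data = [rng.gauss(0, 1) for _ in range(max_n)]  # the null hypothesis is true
        # Fixed design: a single test at the planned sample size
        fixed_hits += p_value(data) < alpha
        # Optional stopping: test at each look and stop at the first p < alpha
        peeking_hits += any(p_value(data[:n]) < alpha
                            for n in range(start, max_n + 1, step))
    return fixed_hits / n_sims, peeking_hits / n_sims

fixed_fpr, peeking_fpr = false_positive_rates()
print(f"fixed sample size: {fixed_fpr:.3f}")
print(f"optional stopping: {peeking_fpr:.3f}")
```

Because the final look coincides with the fixed-design test, the optional-stopping rate can never be lower than the fixed-design rate in this setup. In practice it should be well above the nominal 5%.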

Experimental Protocols: Remedies for Pseudoreplication

Protocol 1: Implementing Mixed-Effects Models

Mixed-effects models address pseudoreplication by properly accounting for hierarchical data structures with both fixed and random effects.

Application Example: Community Assemblage Studies

- Objective: Analyze species variability across spatial scales

- Method: Incorporate random effects for different spatial sampling levels

- Implementation: Use Bayesian computational methods for complex, nonlinear models where traditional approaches fail [10]

- Tools: R, SAS System for Mixed Models, or Bayesian Monte Carlo methods [10]

Protocol 2: State-Space Models for Temporal Data

State-space models incorporate temporal random effects to address sequential correlation in time-series data.

Application Example: Sequential Population Analysis (SPA)

- Objective: Model fish population dynamics over time

- Method: Explicitly model temporal correlation rather than assuming independent survey errors

- Outcome: Prevents flawed conclusions about population collapse drivers [10]
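To make the idea of a temporal random effect concrete, the sketch below implements the simplest state-space model, a local-level model filtered with a Kalman filter: the true state follows a random walk and each survey observes it with error. This is a generic textbook illustration, not the sequential population analysis model discussed in [10]. The survey values and variance settings are hypothetical.

```python
def local_level_filter(observations, process_var, obs_var, init_mean, init_var):
    """Kalman filter for x_t = x_{t-1} + w_t and y_t = x_t + v_t.

    Returns a list of (filtered mean, filtered variance) pairs, one per observation.
    """
    mean, var = init_mean, init_var
    filtered = []
    for y in observations:
        # Predict: the state carries over and uncertainty grows by the process variance
        pred_mean, pred_var = mean, var + process_var
        # Update: weight the new survey by its noise relative to the prediction
        gain = pred_var / (pred_var + obs_var)
        mean = pred_mean + gain * (y - pred_mean)
        var = (1 - gain) * pred_var
        filtered.append((mean, var))
    return filtered

# Hypothetical annual survey index of abundance
survey_index = [100.0, 96.0, 104.0, 90.0, 85.0, 88.0, 70.0]
states = local_level_filter(survey_index, process_var=25.0, obs_var=100.0,
                            init_mean=100.0, init_var=100.0)

for year, (m, v) in enumerate(states, start=1):
    print(f"year {year}: estimated state {m:.1f} (variance {v:.1f})")
```

The key design choice is that consecutive years share a latent state, so survey errors are no longer treated as independent draws around unrelated annual values.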

Protocol 3: Design-Based Remedies for Spatial Sampling

- Objective: Ensure proper sampling independence in field studies

- Method: Treat transects as independent experimental units rather than individual quadrats along transects

- Implementation: Base inference on groupings that represent true random samples [10]

Visualizing Pseudoreplication Concepts and Solutions

[Diagram: Pseudoreplication: Forms and Solutions]

Research Reagent Solutions: Statistical Tools for Addressing Pseudoreplication

Table: Essential Methodological Tools for Proper Experimental Design

| Tool/Technique | Function | Application Context |

|---|---|---|

| Mixed-Effects Models | Models both fixed treatment effects and random variance components | Hierarchical data with nested structures (e.g., students within classrooms) |

| State-Space Models | Incorporates temporal random effects for time-series data | Sequential population analysis, longitudinal studies |

| Bayesian Methods (MCMC) | Enables complex model fitting without simplifying assumptions | Nonlinear fisheries models, hierarchical Bayesian models |

| Geostatistical Methods | Accounts for spatial autocorrelation in sampling designs | Ecological field studies, biomass surveys |

| Z-curve Analysis | Estimates false discovery risk and selection bias | Research integrity assessment, meta-research |

| Pre-registration | Prevents p-hacking and data-dependent analysis choices | Clinical trials, experimental psychology |

Implementation Workflow for Robust Experimental Design

[Diagram: Robust Experimental Design Workflow]

Field-Specific Considerations

Neuroscience Context

Modern neuroscience faces unique challenges with the proliferation of high-density neural recordings, automated behavioral tracking, and expanded neuroimaging methods [14]. The BRAIN Initiative's focus on circuits of interacting neurons creates particular vulnerability to pseudoreplication when analyzing data from connected neural populations [15]. The field is increasingly interdisciplinary, requiring integration of results across subfields and recording modalities [14].

Ecological and Fisheries Research

Pseudoreplication is a "notoriously rampant affliction" in ecological field experiments [10]. Fisheries research demonstrates how complex, nonlinear models require specialized remedies beyond simple design fixes, including state-space models and geostatistical approaches [10].

Drug Development and Corporate R&D

Reproducibility is increasingly recognized as a competitive advantage in life science R&D, with companies standardizing protocols, investing in FAIR data principles, and incentivizing transparent practices [16]. Reports from the pharmaceutical industry describe an inability to reproduce 80% or more of experiments published in prestigious journals, highlighting the real-world consequences of statistical flaws [17].

Troubleshooting Guide & FAQs

What is pseudoreplication and why is it a problem? Pseudoreplication occurs when data points in a statistical analysis are not statistically independent but are treated as if they are. This can happen when multiple observations are taken from the same experimental subject, or when samples are nested or correlated in time or space. Analyzing such data without accounting for these dependencies tests the wrong hypothesis and can lead to false precision, making results appear more certain than they truly are. This undermines the scientific validity of the experiment [18].

How can a t-test with 8 degrees of freedom become one with 28? This specific error occurs when multiple, non-independent measurements from a few independent experimental units are incorrectly treated as independent data points [18].

Consider this example from experimental science:

- The Experiment: Ten rats are randomly assigned to two groups (treatment vs. control). The rotarod test is performed on all rats for three consecutive days [18].

- The Incorrect Analysis: The analyst performs a standard independent samples t-test using all data points: 5 rats/group × 3 days = 15 observations per group. The degrees of freedom (df) are calculated as (n₁ + n₂ - 2) = (15 + 15 - 2) = 28 [18].

- Why It's Wrong: The three measurements from each rat are correlated, not independent. The rat itself is the experimental unit, not each individual measurement. The analysis falsely inflates the sample size [18].

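The table below compares the outcomes of the incorrect and correct analyses for this case, showing how pseudoreplication leads to erroneous conclusions. For illustration, the same test statistic (t = 2.1) is evaluated against both reference distributions, so the only difference between the rows is the degrees of freedom.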

| Analysis Type | Statistical Result | Degrees of Freedom (df) | Conclusion |

|---|---|---|---|

| Incorrect (Pseudoreplication) | t = 2.1, p = 0.045 [18] | 28 [18] | False positive: Statistically significant result |

| Correct (Accounted for Dependence) | t = 2.1, p = 0.069 [18] | 8 [18] | Correct: Not statistically significant |
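The two p-values in the table can be checked by evaluating t = 2.1 against Student's t distribution with 28 and with 8 degrees of freedom. The sketch below does this with the standard library only, integrating the t density numerically. In practice a library routine such as `scipy.stats.t.sf` would normally be used. The function names are illustrative.

```python
from math import gamma, pi, sqrt

def t_pdf(x, df):
    """Density of Student's t distribution with df degrees of freedom."""
    coef = gamma((df + 1) / 2) / (sqrt(df * pi) * gamma(df / 2))
    return coef * (1 + x * x / df) ** (-(df + 1) / 2)

def two_sided_p(t_stat, df, steps=2000):
    """Two-sided p-value via Simpson's rule on [0, |t|]; steps must be even."""
    upper = abs(t_stat)
    h = upper / steps
    total = t_pdf(0.0, df) + t_pdf(upper, df)
    for i in range(1, steps):
        total += (4 if i % 2 else 2) * t_pdf(i * h, df)
    central_area = 2 * total * h / 3   # P(-|t| < T < |t|)
    return 1 - central_area

t_stat = 2.1
p_pseudoreplicated = two_sided_p(t_stat, df=28)  # 30 observations treated as independent
p_correct = two_sided_p(t_stat, df=8)            # 10 rats are the experimental units

print(f"df = 28: p = {p_pseudoreplicated:.3f}")
print(f"df = 8:  p = {p_correct:.3f}")
```

The same statistic crosses the 0.05 threshold only when the degrees of freedom are inflated, which is exactly the error the table describes.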

How can I identify pseudoreplication in my own experimental design? Ask yourself: "What is the smallest unit to which a treatment could be independently applied?" The sample size (n) is the number of these independent units [18]. If you have multiple measurements per unit, they are not independent. The diagram below outlines key questions to identify proper experimental units and avoid pseudoreplication.

What are the correct statistical methods if I have repeated measurements? If you have repeated measures from the same experimental units, you must use statistical tests designed for dependent data. The appropriate test depends on your design, as shown in the table below.

| Experimental Design | Incorrect Analysis | Correct Analysis |

|---|---|---|

| Single group measured twice (e.g., pre-test/post-test) | Independent samples t-test | Paired-samples t-test [19] |

| Multiple measurements from each unit in multiple groups (e.g., our rat case study) | Simple t-test or one-way ANOVA | Repeated-Measures ANOVA or Linear Mixed Models (LMMs) [18] |
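For the pre-test/post-test row of the table, the paired analysis works on within-subject differences, so the degrees of freedom come from the number of subjects. The sketch below contrasts it with the independent-samples statistic on the same numbers. The five subjects and their scores are hypothetical.

```python
from math import sqrt
from statistics import fmean, stdev, variance

# Hypothetical scores for the same five subjects before and after an intervention
pre = [12.0, 15.0, 11.0, 14.0, 13.0]
post = [14.0, 18.0, 12.0, 17.0, 14.0]

# Paired analysis: one difference per subject, df = number of subjects - 1
diffs = [b - a for a, b in zip(pre, post)]
t_paired = fmean(diffs) / (stdev(diffs) / sqrt(len(diffs)))
df_paired = len(diffs) - 1

# Incorrect analysis: treats the ten scores as two independent groups, df = 8
n = len(pre)
sp2 = ((n - 1) * variance(pre) + (n - 1) * variance(post)) / (2 * n - 2)
t_independent = (fmean(post) - fmean(pre)) / sqrt(sp2 * (2 / n))
df_independent = 2 * n - 2

print(f"paired:      t = {t_paired:.3f}, df = {df_paired}")
print(f"independent: t = {t_independent:.3f}, df = {df_independent}")
```

In this made-up data set the independent-samples test is both wrong about the degrees of freedom and less sensitive, because it ignores the strong correlation between each subject's two scores.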

The Scientist's Toolkit: Essential Materials & Reagents

The following table details key resources for ensuring robust and statistically sound experiments.

| Item or Resource | Function in Research |

|---|---|

| A Priori Experimental Design | Planning the statistical analysis before data collection to correctly identify the experimental unit (e.g., the rat, not its measurements) and avoid pseudoreplication [18]. |

| Statistical Software (R, Python, SPSS) | Provides advanced procedures (like linear mixed models) to correctly analyze complex data structures with non-independent observations [20]. |

| Linear Mixed Models (LMMs) | A powerful statistical framework that explicitly models the dependency structure in data, such as measurements clustered within individual subjects [18]. |

| Detailed Laboratory Notebook | Accurate and comprehensive recording of experimental protocols, including sample sizes, repeated measures, and potential confounding factors, which is vital for identifying the correct unit of analysis [21]. |

Frequently Asked Questions

- What is the core definition of pseudoreplication? Pseudoreplication is the use of inferential statistics to test for treatment effects with data from experiments where either treatments are not replicated (though samples may be) or replicates are not statistically independent [22] [23].

- Why is pseudoreplication a critical issue for researchers? Analyses that ignore pseudoreplication violate the core statistical assumption of independence of errors [24]. This typically leads to an underestimation of variability, confidence intervals that are too small, and a dramatically inflated Type I error rate—meaning you are more likely to falsely reject a true null hypothesis and claim a significant effect where none exists [25] [26].

- My experimental unit is expensive or logistically challenging to replicate (e.g., a whole forest, a lake, a drug trial on a specific patient cohort). What can I do? This is a common challenge. The key is to be transparent about the limits of statistical inference [5]. Solutions include using descriptive statistics, focusing on effect sizes, or employing advanced models like mixed-effects models that can properly account for the nested structure of your data [5] [10]. Clearly articulate the potential confounding effects in your manuscript [5].

Troubleshooting Guide: Diagnosing and Solving Pseudoreplication

This guide helps you identify the most common forms of pseudoreplication and provides methodologies to correct them.

Scenario 1: Spatial Pseudoreplication

- The Problem: This occurs when samples are collected from locations that are too close together, making them non-independent due to spatial autocorrelation (the principle that "everything is related to everything else, but near things are more related than distant things") [25] [27]. Analyzing clustered samples as if they were independent replicates inflates the effective sample size.

- A Classic Example: Studying the effect of pollution on tree diversity by comparing one polluted city park with one pristine forest, and taking 20 soil samples from each area. The 20 samples describe each area but are pseudoreplicates for testing the "effect of pollution," as the treatment (pollution) is completely confounded with the single area [28].

Scenario 2: Temporal Pseudoreplication

- The Problem: This involves treating repeated measurements taken from the same experimental unit over time as independent replicates [24]. The measurements are temporally correlated because peculiarities of the unit are reflected in all measurements [24].

- A Classic Example: Measuring the root length of the same six plants every two weeks to test a fertilizer's effect. You have only six true replicates (the plants), not (6 plants × 5 time periods) = 30 replicates. The five measurements from each plant are repeated measures, not independent data points [29].

Scenario 3: Sacrificial Pseudoreplication

- The Problem: This occurs when treatments have been genuinely replicated, but the statistical analysis incorrectly uses subsamples or pooled data from within those replicates to test for the treatment effect, rather than using the variation between the true replicate units [22] [23]. The information on between-replicate variation exists in the data but is thrown away ("sacrificed") by the pooling, so the test runs on inflated degrees of freedom.

- A Classic Example: An insecticide is applied to ten villages (replicates), and a control is applied to another ten. The analysis then pools all individuals within the treated and control villages into two large groups and runs a chi-square test. This is incorrect because the treatment was applied to villages, not individuals. The correct analysis is to calculate the infection prevalence for each village and then compare the mean prevalence between the two groups of villages [28].
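The village example can be sketched in Python with the standard library alone. The infection counts are invented for illustration (ten villages per arm, 100 people sampled in each), and the function names are hypothetical. The sketch contrasts the pooled chi-square statistic, which ignores villages, with a t statistic computed on village-level prevalences.

```python
import math
import statistics

# Hypothetical data: infected counts per village, 100 people sampled per village.
PEOPLE_PER_VILLAGE = 100
control_infected = [30, 10, 25, 5, 40, 15, 20, 35, 8, 32]
treated_infected = [20, 5, 30, 3, 25, 10, 12, 28, 6, 21]


def pooled_chi_square(infected_a, infected_b, people_per_village):
    """Pearson chi-square on the 2x2 table that ignores villages (wrong unit)."""
    n_a = len(infected_a) * people_per_village
    n_b = len(infected_b) * people_per_village
    observed = [
        [sum(infected_a), n_a - sum(infected_a)],
        [sum(infected_b), n_b - sum(infected_b)],
    ]
    row_totals = [n_a, n_b]
    col_totals = [observed[0][c] + observed[1][c] for c in range(2)]
    total = n_a + n_b
    chi2 = 0.0
    for r in range(2):
        for c in range(2):
            expected = row_totals[r] * col_totals[c] / total
            chi2 += (observed[r][c] - expected) ** 2 / expected
    return chi2


def village_level_t(infected_a, infected_b, people_per_village):
    """Two-sample t statistic on village prevalences (village = experimental unit)."""
    prev_a = [x / people_per_village for x in infected_a]
    prev_b = [x / people_per_village for x in infected_b]
    se = math.sqrt(statistics.variance(prev_a) / len(prev_a)
                   + statistics.variance(prev_b) / len(prev_b))
    return (statistics.mean(prev_b) - statistics.mean(prev_a)) / se


chi2 = pooled_chi_square(control_infected, treated_infected, PEOPLE_PER_VILLAGE)
t_stat = village_level_t(control_infected, treated_infected, PEOPLE_PER_VILLAGE)

# Standard 5% critical values: chi-square with 1 df, two-sided t with 18 df.
print(f"pooled chi-square = {chi2:.2f} (critical value 3.841)")
print(f"village-level t   = {t_stat:.2f} (critical value +/-2.101)")
```

With these made-up counts the pooled test clears its critical value comfortably, while the village-level test does not, because prevalence varies far more between villages than the pooled table admits. With equal group sizes the t statistic above matches the pooled-variance Student version, so 18 degrees of freedom is the appropriate reference.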

The table below summarizes the key characteristics and remedies for these scenarios.

| Scenario | Core Issue | Consequence | Recommended Remediation |
|---|---|---|---|
| Spatial Pseudoreplication [25] [27] | Non-independence of samples due to proximity (spatial autocorrelation). | Inflated Type I error; false confidence in a significant effect. | Use blocking in design; employ spatial autoregressive models or geostatistics in analysis [25] [10]. |
| Temporal Pseudoreplication [24] [29] | Non-independence of repeated measures from the same unit over time. | Inflated Type I error; overestimation of degrees of freedom. | Use mixed-effects models with a random effect for the individual unit (e.g., `random = ~time\|unit_ID` in `nlme` syntax) [10] [29]. |
| Sacrificial Pseudoreplication [22] [28] | Analysis is performed on subsamples or pooled data instead of true replicate means. | Inflated degrees of freedom and Type I error; the information on between-replicate variation is discarded. | Use nested ANOVA or mixed-effects models, ensuring the F-ratio for the treatment effect is tested over the variation between replicates, not within them [23] [10]. |

Experimental Protocol: A Numerical Simulation of Spatial Pseudoreplication

To illustrate how severe the consequences of pseudoreplication can be, let's examine a simulated experiment from the literature [25].

1. Objective: To test the null hypothesis that tree species richness is not correlated with elevation.

2. Experimental Design: Three scenarios were simulated, each generating data where the null hypothesis is known to be true (richness was randomly generated).

   - Scenario 1 (Independent): 5 mountains, 1 sample per elevation zone on each mountain (Total n = 25).
   - Scenario 2 (Pseudoreplicated): 1 mountain, 5 samples per elevation zone (Total n = 25).
   - Scenario 3 (Severely Pseudoreplicated): 1 mountain, 100 samples per elevation zone (Total n = 500).

3. Methodology: For each scenario, the correlation between richness and elevation was calculated, and its significance (p < 0.05) was recorded. This simulation was repeated 100 times.

4. Results: The percentage of false positive significant results (Type I error) was recorded. The expected rate under a valid test is 5%.

The results, shown in the table below, demonstrate the dramatic inflation of Type I error caused by pseudoreplication.

| Scenario | Description | Total Sample Size (n) | % of False Positive Results (Type I Error) |

|---|---|---|---|

| 1 | 5 mountains, 1 sample/zone | 25 | ~5% (as expected) |

| 2 | 1 mountain, 5 samples/zone | 25 | ~50% |

| 3 | 1 mountain, 100 samples/zone | 500 | ~90% |

Source: Adapted from Zelený (2022) [25].

This simulation provides a powerful quantitative argument: collecting many non-independent samples from a single replicate (e.g., one mountain, one forest plot, one growth chamber) can make it very likely you will find a statistically significant—but entirely spurious—correlation.
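A simulation in the same spirit can be sketched in plain Python. This is not the code behind the cited results: the data-generating process is an assumption made here. Every mountain-by-zone site gets its own random effect (SD 1) that all samples from that site share, plus independent sample noise (SD 0.5), and richness has no true relationship with elevation. The critical t values are hard-coded for n - 2 degrees of freedom.

```python
import math
import random

ZONES = [1, 2, 3, 4, 5]            # five elevation zones per mountain
T_CRIT = {25: 2.069, 500: 1.965}   # two-sided 5% critical t for n - 2 df


def pearson_r(x, y):
    """Pearson correlation coefficient of two equal-length sequences."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / math.sqrt(sxx * syy)


def false_positive_rate(n_mountains, samples_per_zone, n_sims, rng):
    """Share of simulations in which a null correlation is declared significant."""
    hits = 0
    for _ in range(n_sims):
        elevation, richness = [], []
        for _mountain in range(n_mountains):
            for zone in ZONES:
                # Assumed site effect, shared by every sample from this site.
                site_effect = rng.gauss(0.0, 1.0)
                for _sample in range(samples_per_zone):
                    elevation.append(zone)
                    richness.append(site_effect + rng.gauss(0.0, 0.5))
        n = len(elevation)
        r = pearson_r(elevation, richness)
        t = r * math.sqrt((n - 2) / (1.0 - r * r))
        if abs(t) > T_CRIT[n]:
            hits += 1
    return hits / n_sims


rng = random.Random(42)
rates = {
    "independent": false_positive_rate(5, 1, 1000, rng),
    "pseudoreplicated": false_positive_rate(1, 5, 1000, rng),
    "severe": false_positive_rate(1, 100, 1000, rng),
}
for name, rate in rates.items():
    print(f"{name:>17}: {100 * rate:.1f}% false positives")
```

Under these assumptions the independent design should stay close to the nominal 5%, while the single-mountain designs should reject the true null far more often, and more so as the number of samples per zone grows. The exact percentages depend on the assumed variances and the random seed.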

The Scientist's Toolkit: Key Concepts & Analytical Solutions

Instead of physical reagents, your most critical tools for combating pseudoreplication are conceptual and analytical.

| Tool / Concept | Brief Explanation & Function |

|---|---|

| Experimental Unit | The smallest entity to which a treatment is independently applied (e.g., a growth chamber, a village, a herd). This is the true replicate [26]. |

| Observational Unit | The entity on which a measurement is taken (e.g., a plant, a person, a leaf). These are subsamples if multiple ones belong to one experimental unit [28]. |

| Mixed-Effects Model | A powerful statistical model that includes both fixed effects (the treatments you're interested in) and random effects (to account for grouping structure, like multiple plants within a growth chamber). This is a primary remedy for pseudoreplication [10]. |

| Blocking | A design technique to account for spatial heterogeneity. Similar experimental units are grouped into blocks, and treatments are randomized within each block, helping to control for confounding spatial effects [27]. |

| Spatial Autocorrelation | The statistical dependence between observations based on their geographic proximity. Testing for it (e.g., with Moran's I) is a key diagnostic for spatial pseudoreplication [27]. |
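As an illustration of the Moran's I diagnostic named in the table, here is a minimal pure-Python sketch. It assumes samples taken along a one-dimensional transect with binary weights linking adjacent samples only. Both the weighting scheme and the function names are choices made here for illustration, and real analyses would normally use a spatial statistics package with a permutation test.

```python
def transect_weights(n):
    """Binary spatial weights: 1 for adjacent positions on a transect, else 0."""
    return [[1.0 if abs(i - j) == 1 else 0.0 for j in range(n)] for i in range(n)]


def morans_i(values, weights):
    """Moran's I = (n / W) * sum_ij w_ij * d_i * d_j / sum_i d_i^2,
    where d_i is the deviation from the mean and W is the sum of all weights."""
    n = len(values)
    mean = sum(values) / n
    dev = [v - mean for v in values]
    w_sum = sum(sum(row) for row in weights)
    cross = sum(weights[i][j] * dev[i] * dev[j]
                for i in range(n) for j in range(n))
    return (n / w_sum) * (cross / sum(d * d for d in dev))


w = transect_weights(6)
i_gradient = morans_i([1, 2, 3, 4, 5, 6], w)      # smooth gradient along the transect
i_alternating = morans_i([1, 5, 1, 5, 1, 5], w)   # neighbours always differ

print(f"gradient:    I = {i_gradient:.2f}")     # positive autocorrelation
print(f"alternating: I = {i_alternating:.2f}")  # negative autocorrelation
```

Values well above the null expectation of -1/(n - 1) indicate that neighbouring samples resemble each other, which is the signature of spatial pseudoreplication when such samples are analysed as independent.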

Decision Workflow: Is My Design Pseudoreplicated?

This diagram outlines the logical process to diagnose and address potential pseudoreplication in your experimental design.

Statistical Remedies: Implementing Linear Mixed Models and Bayesian Methods to Correct Pseudoreplication

FAQs: Core Concepts and Troubleshooting

Q1: What is pseudoreplication and why is it a problem in ecological experiments?

Pseudoreplication occurs when researchers incorrectly identify the number of independent samples in a study, often by treating multiple measurements from the same experimental unit as independent data points [30]. For example, if you apply a fertilizer treatment to four plots and measure four plants within each plot, your true replication is four (the plots), not 16 (the plants) [30]. The plants in this case are "pseudo-replicates" [30].

This matters because pseudoreplication artificially inflates degrees of freedom, leading to p-values that are lower than they should be [30]. This increases the likelihood of falsely rejecting your null hypothesis (Type I error), making your statistical analyses invalid and your research conclusions unreliable [30] [6].

Q2: How do Linear Mixed Models (LMMs) solve the problem of pseudoreplication?

LMMs correctly handle data with multi-layered or hierarchical structures by incorporating both fixed and random effects [30] [31]. The fixed effects represent the overall trends or average treatment effects you're interested in, while the random effects account for the natural variability between your grouping factors, such as plots, blocks, or individuals [32].

By including the appropriate random effects, an LMM correctly attributes variance to its source in the experimental design. This ensures that the significance of your fixed effects is tested against the correct error terms, providing valid p-values and confidence intervals [30] [33].
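For a balanced design, testing a fixed effect "against the correct error term" can be shown with a nested ANOVA, which is the classical counterpart of a random-intercept LMM in that setting. The sketch below uses invented data (2 treatments, 4 plots per treatment, 4 plants per plot). It compares the F-ratio that uses plot-to-plot variation as the denominator with the naive F-ratio that lumps plots and plants together.

```python
# Hypothetical balanced design: 2 treatments x 4 plots x 4 plants per plot.
PLANT_DEVIATIONS = [-0.3, -0.1, 0.1, 0.3]   # plant-level scatter around each plot mean
plot_means = {
    "control": [10.0, 12.0, 11.0, 13.0],
    "fertilized": [12.0, 14.0, 13.0, 15.0],
}
data = {trt: [[pm + d for d in PLANT_DEVIATIONS] for pm in means]
        for trt, means in plot_means.items()}


def mean(xs):
    return sum(xs) / len(xs)


def nested_anova(data):
    """F-tests for treatment with plots nested in treatments (balanced data only)."""
    all_values = [y for plots in data.values() for plot in plots for y in plot]
    grand = mean(all_values)
    ss_trt = ss_plot = ss_within = 0.0
    n_plots = 0
    for plots in data.values():
        trt_values = [y for plot in plots for y in plot]
        trt_mean = mean(trt_values)
        ss_trt += len(trt_values) * (trt_mean - grand) ** 2
        for plot in plots:
            pm = mean(plot)
            ss_plot += len(plot) * (pm - trt_mean) ** 2
            ss_within += sum((y - pm) ** 2 for y in plot)
            n_plots += 1
    df_trt = len(data) - 1
    df_plot = n_plots - len(data)          # plots within treatments
    df_within = len(all_values) - n_plots  # plants within plots
    ms_trt = ss_trt / df_trt
    return {
        # Correct: treatment tested against variation among plots.
        "f_correct": ms_trt / (ss_plot / df_plot),
        "df_correct": (df_trt, df_plot),
        # Naive: plots ignored, every plant treated as a replicate.
        "f_naive": ms_trt / ((ss_plot + ss_within) / (df_plot + df_within)),
        "df_naive": (df_trt, df_plot + df_within),
    }


result = nested_anova(data)
print(f"correct F = {result['f_correct']:.2f} on df {result['df_correct']}")
print(f"naive   F = {result['f_naive']:.2f} on df {result['df_naive']}")
```

With these numbers the correct test gives F of about 4.8 on (1, 6) degrees of freedom, below the 5% critical value of roughly 5.99, whereas the naive test gives F of about 23.1 on (1, 30) degrees of freedom, well above its critical value of roughly 4.17. The same treatment difference looks "significant" only when plants are miscounted as replicates.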

Q3: What is the "maximal random effects structure" and when should I use it?

For confirmatory hypothesis testing—where you have specific, pre-defined hypotheses—the gold standard is to use the maximal random effects structure justified by your experimental design [33]. This means including random intercepts for your grouping factors (e.g., subject_id, block) and, crucially, also including random slopes for your fixed effects of interest when applicable [33].

For instance, if you are testing the effect of a drug (fixed_effect) across multiple hospitals, a maximal model might include a random intercept for hospital and a random slope for fixed_effect within hospital. This structure accounts for the possibility that the drug's effect might vary from one hospital to another. Using a model that is too simple (e.g., random intercepts only) can lead to worse generalization performance than traditional methods [33].

Q4: My model failed to converge or has a singular fit. What should I do?

A convergence warning or singular fit often indicates that the random effects structure is too complex for the data. The following troubleshooting steps are recommended:

- Check your model specification: Ensure you have not overfit the random effects. A common strategy is to start with a model that includes all factors associated with the experimental randomization as random effects [34].

- Simplify the model: If the maximal model fails to converge, you may need to simplify the random effects structure in a principled way. The `buildmer` package in R can help with this process through backward stepwise elimination.

- Rescale your variables: Standardizing your continuous predictors (centering and scaling) can often help convergence [31].

- Consider the available data: Complex random effects structures require sufficient data to be estimated reliably. If you have a small sample size, you might be limited to a simpler model [32].

Experimental Protocol: Implementing an LMM Analysis

The following workflow provides a robust, step-by-step methodology for implementing an LMM analysis for a designed experiment, from a baseline model to final inference [34].

- Define your model: Based on your experimental design, write down the mathematical model. For a study with blocks, plots, and plants, this might be $y_{ijk} = \mu + r_i + f_j + p_{ij} + \varepsilon_{ijk}$, where $\mu$ is the overall mean, $r_i$ is the replicate/block effect, $f_j$ is the family/treatment effect, $p_{ij}$ is the random plot effect, and $\varepsilon_{ijk}$ is the residual error [30].

- Specify fixed and random effects: Incorporate the terms associated with your experimental treatments as fixed or random effects based on your research goals. The randomization structure of your design (e.g., plots nested within blocks) should be encompassed in the random terms [34].

- Fit the model: Use statistical software (e.g., the `lme4` package in R, or the `MIXED` procedure in SPSS) to fit this baseline model [31] [35].

- Inspect the residuals: Plot the residuals against fitted values and against each predictor to check for:

- Linearity: The relationship should be linear.

- Homoscedasticity: The variance of the residuals should be constant.

- Normality: A Q-Q plot can check if the residuals are normally distributed [31].

- Address violations: If you find variance heterogeneity, you may need to transform your response variable or extend the random model to account for it (e.g., using different variance structures for different groups) [34].

- Use the full fixed model: While refining the random structure, keep your full set of fixed effects in the model.

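- Compare models: Use model comparison techniques like the Likelihood Ratio Test (LRT) or information criteria (AIC, BIC) to compare different random effects structures, fitting the candidates with REML and an identical fixed-effects part. A model with a lower AIC or BIC is generally preferred.

- Test fixed effects: Once a suitable random model is established, refine the fixed model. Use Wald F-tests or Likelihood Ratio Tests to test the significance of fixed effects terms. For LRTs on fixed effects, refit the models with ML, because REML likelihoods are not comparable between models with different fixed effects.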

- Generate predictions: Obtain predicted values (marginal or conditional means) for your treatments of interest.

- Report results: Clearly report the estimates, confidence intervals, and p-values for your fixed effects, along with the variance explained by your random effects.

The Scientist's Toolkit: Research Reagent Solutions

The table below details key components for designing and analyzing experiments where LMMs are essential.

| Research Reagent / Tool | Function in Experimental Context |

|---|---|

| Random Intercept Model | Accounts for baseline differences between clusters (e.g., different baseline blood pressure among patients or different average test scores among schools) by allowing the intercept to vary by group [32]. |

| Random Slope Model | Accounts for variability in the relationship between a predictor and an outcome across groups (e.g., the effect of a drug on blood pressure may vary in strength from one hospital to another) [32]. |

| Maximal Random Effects Structure | The gold-standard model for confirmatory research that includes by-group random intercepts and random slopes for all fixed effects of interest, ensuring the best generalizability of results [33]. |

| REML (Restricted Maximum Likelihood) | A common method for estimating variance components in LMMs. It provides less biased estimates of random effects variances compared to Maximum Likelihood (ML), especially with small sample sizes [35]. |

| Model Comparison (AIC/BIC) | Information criteria used to compare the relative quality of different statistical models. Lower values indicate a better balance between model fit and complexity [34]. |

Practical Example: Analysis of Variance Structure

The following table illustrates how an LMM correctly partitions variance in a hierarchical experiment, compared to an incorrect model that ignores pseudoreplication. The example is based on a study with 8 blocks, 17 families, and 6 plants per plot [30].

| Model Specification | Family Effect Test (Denominator DF) | Interpretation of Family Effect | Variance Component for Plots |
|---|---|---|---|
| Incorrect Model (Ignores Plots): `height ~ rep + family` | F-value tested with ~686 DF | Artificially inflated precision, high risk of Type I error [30] | Not estimated (assumed zero) |
| Correct LMM: `height ~ rep + family + (1\|plot)` | F-value tested with ~(r × f − r − f) DF, where r is the number of blocks and f the number of families | Correctly tested against plot-level variation, valid inference [30] | Estimated (e.g., $\sigma^2_p$) |

Frequently Asked Questions

1. What is the fundamental difference between a fixed and a random effect? A fixed effect treats the factor levels (e.g., specific drugs, sexes) as distinct categories of direct interest, each estimated separately with no information shared between them; inferences apply only to the levels present in the data. In contrast, a random effect assumes that the factor levels (e.g., different forests, individual animals) are a random sample from a larger population. This provides inference about both the specific levels in the study and the broader population, including levels not observed [36].

2. I only have data from one incubator per temperature treatment. Is my experiment pseudoreplicated? Yes, this is a classic case of simple pseudoreplication [6] [5]. The incubator itself is the experimental unit to which the temperature treatment is applied. Multiple petri dishes inside a single incubator are not independent replicates because if something goes wrong with that one incubator, it affects all dishes inside it. The correct approach is to use multiple independent incubators per treatment [6].

3. My study involves repeated measurements on the same individual animals over time. How should I account for this? Repeated measurements from the same individual are not independent. To avoid temporal pseudoreplication, you should include "Individual" as a random effect in a mixed model. This accounts for the correlation between measurements within the same animal and allows you to model the population-level (fixed) effects of time or treatment [36] [37].

4. A reviewer rejected my paper for pseudoreplication, but I have a landscape-scale manipulation that is impossible to replicate. What can I do? This is a common challenge in ecology. Be explicit about the limitations of your design and the specific inferences that can be drawn. You can also use statistical solutions such as:

- Multilevel modeling with careful nesting of observational units [5].

- Focusing on effect sizes and the magnitude of differences, alongside inferential statistics [5].

- Using Bayesian statistics to incorporate prior information [5].

5. Is there a minimum number of levels required to use a random effect? A common guideline is to have at least five levels for a random effect to reliably estimate the among-group variance [38]. With fewer levels (e.g., 2-4), the model may struggle to accurately estimate this variation, potentially leading to singular fits. However, if your primary interest is in obtaining accurate fixed effects estimates and the random effect is a "nuisance" parameter to account for non-independence, using fewer than five levels may still be acceptable, though caution is advised [38].

Troubleshooting Guide: Common Pitfalls and Solutions

| Problem | Symptom | Solution |
|---|---|---|
| Pseudoreplication | Applying statistics to non-independent data (e.g., treating subsamples from one plot as true replicates) [6] [5]. | Clearly identify the true experimental unit. Use nested random effects (e.g., `(1 \| Site/Plot)`) to account for the hierarchical design. |
| Singular Model Fit | Software warning of a singular fit, often with random effect variance estimates of zero. | Often caused by overly complex random effects structures or too few levels in a random effect. Simplify the model (e.g., remove random slopes) or increase sampling [38]. |
| Choosing Fixed vs. Random | Uncertainty about whether to model a factor (e.g., `Forest_Type`) as fixed or random. | Use a fixed effect if you are interested in the specific levels in your data. Use a random effect if you want to generalize to a broader population and your factor levels are a random sample [36]. |
| Confounded Treatment | Treatment and experimental unit are confounded (e.g., one greenhouse per CO₂ level) [6]. | Acknowledge the limitation clearly. If possible, use a space-for-time substitution or compare pre- and post-treatment trends to support conclusions [5]. |

Experimental Protocols & Data Presentation

Protocol 1: Designing an Experiment to Avoid Pseudoreplication

- Define Your Treatment: What is the manipulation (e.g., warming, fertilizer)?

- Identify the Experimental Unit: The smallest entity to which an independent treatment is applied (e.g., an entire incubator, a whole plot of land) [6].

- Replicate the Experimental Unit: Apply the treatment to multiple, independent experimental units (e.g., multiple incubators per temperature level).

- Plan Measurements: You may take multiple measurements (subsamples) within each experimental unit, but these are not independent replicates for testing the treatment effect.

Protocol 2: Specifying a Mixed Model in R

This protocol uses the `lme4` package to model pig growth (`Weight`) over `Time`, with `Pig` as a random effect to account for repeated measures on the same animal [37].

Table 1: Advantages of Fixed Effects (LM) vs. Random Effects (LMM) Models [38]

| Fixed Effects (LM) | Random Effects (LMM) |

|---|---|

| Faster to compute and conceptually simpler. | Can incorporate hierarchical grouping of data, which is often more conceptually correct. |

| Avoids assumptions about the distribution of random effects. | Allows generalization of results to unobserved levels of the grouping variable (e.g., predicting for a new forest). |

| Prevents inappropriate generalizations beyond the studied levels. | Uses partial pooling to share information across groups, improving estimates for groups with few observations. |

Table 2: Minimum Recommended Levels for Random Effects

| Scenario | Minimum Recommended Levels | Rationale |

|---|---|---|

| Estimating the variance of the random effect itself (e.g., variation among sites). | 5 - 10 [38] | Fewer levels provide insufficient information to accurately estimate the variance of the underlying distribution. |

| Accounting for non-independence when the random effect is a "nuisance" parameter and the focus is on fixed effects. | Can be less than 5 (with caution) [38] | The model may still correctly estimate fixed effects, though estimates of random effects variance may be unreliable. |
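The rationale in Table 2 can be explored with a small simulation. The sketch below is an illustration under assumed values (true among-group variance 0.5, within-group variance 1.0, five observations per level), not a result from the cited source. It uses the classical ANOVA estimator of the among-group variance, truncated at zero. A zero estimate corresponds to the boundary solution that mixed-model software reports as a singular fit.

```python
import math
import random


def between_group_variance(groups):
    """ANOVA (method-of-moments) estimate of among-group variance, truncated at 0.
    Assumes a balanced design: every group has the same number of observations."""
    k = len(groups)
    m = len(groups[0])
    group_means = [sum(g) / m for g in groups]
    grand_mean = sum(group_means) / k
    ms_between = m * sum((gm - grand_mean) ** 2 for gm in group_means) / (k - 1)
    ms_within = sum((y - gm) ** 2
                    for g, gm in zip(groups, group_means) for y in g) / (k * (m - 1))
    return max(0.0, (ms_between - ms_within) / m)


def simulate(n_levels, n_sims, rng, per_level=5, var_between=0.5, var_within=1.0):
    """Repeatedly simulate grouped data and estimate the among-group variance."""
    estimates = []
    for _ in range(n_sims):
        groups = []
        for _level in range(n_levels):
            level_effect = rng.gauss(0.0, math.sqrt(var_between))
            groups.append([level_effect + rng.gauss(0.0, math.sqrt(var_within))
                           for _ in range(per_level)])
        estimates.append(between_group_variance(groups))
    return estimates


rng = random.Random(7)
zero_fraction = {}
for n_levels in (3, 5, 10):
    estimates = simulate(n_levels, 2000, rng)
    zero_fraction[n_levels] = sum(e == 0.0 for e in estimates) / len(estimates)
    print(f"{n_levels:>2} levels: {100 * zero_fraction[n_levels]:.1f}% zero-variance estimates")
```

Under these assumptions the share of zero-variance estimates should fall steeply as the number of levels rises, even though the true among-group variance is the same in every run.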

The Scientist's Toolkit

Table 3: Key Research Reagent Solutions for Ecological Experiments

| Item | Function in Experiment |

|---|---|

| Temperature Controllers | To independently apply heating/cooling treatments to individual experimental units (e.g., pots, aquaria), thus avoiding pseudoreplication in climate experiments [6]. |

| Environmental Data Loggers | To monitor and record conditions (e.g., temperature, humidity) within each experimental unit, providing covariates and confirming treatment integrity. |

| Marking & Tagging Systems | To uniquely identify individual organisms or plots for longitudinal tracking, ensuring data integrity in repeated measures designs. |

| Statistical Software (R/Python) | To implement mixed models (e.g., using lme4, statsmodels) and correctly analyze hierarchical data [39] [37]. |

Workflow and Relationship Diagrams

Diagram 1: Experimental Design and Analysis Workflow

Diagram 2: Proper Nesting to Avoid Pseudoreplication

Frequently Asked Questions

1. What is pseudoreplication and why is it a problem? Pseudoreplication occurs when the number of measured data points exceeds the number of genuine, independent replicates (the experimental units), and the statistical analysis incorrectly treats all data points as independent [40] [41]. This artificially inflates the sample size, leading to underestimated standard errors, falsely narrow confidence intervals, and p-values that are lower than they should be [18]. This undermines the validity of statistical inferences and is a major contributor to the reproducibility crisis in scientific research [40].

2. What is the difference between a genuine replicate and a pseudoreplicate? The experimental unit (or genuine replicate) is the smallest entity that can be randomly and independently assigned to a different treatment condition [18]. For example, a pregnant female rodent in a study [40]. A pseudoreplicate is a multiple measurement taken on, or nested within, that same experimental unit, such as multiple offspring from one female rodent [40]. The sample size (n) is the number of genuine replicates [18].

3. My hypothesis is about the pseudoreplicates (e.g., offspring neurons). Don't standard methods like averaging prevent me from testing this? This is a key motivation for the Bayesian predictive approach. While traditional methods like averaging or multilevel models test hypotheses at the level of the genuine replicates (e.g., the mother animals), the Bayesian predictive approach allows you to make direct probabilistic inferences about the biological entities of interest, even if they are pseudoreplicates [40]. You can use the model to predict the outcome for a new, unobserved pseudoreplicate, which directly addresses your research question.

4. What are the practical advantages of the Bayesian predictive approach? This approach provides two major advantages:

- Targeted Inferences: It allows for valid statistical conclusions about the pseudoreplicates themselves [40].

- Observable Focus: Conclusions are probabilistic statements about observable events (e.g., the size of a neuron), not unobservable parameters, which can make findings more interpretable and help address reproducibility issues [40].

5. When should I consider using this approach? You should consider this approach when your experimental design has a nested or hierarchical structure and your primary research question concerns the lower-level units (the pseudoreplicates). Common scenarios include:

- Multiple cells measured per animal.

- Multiple offspring from a single mother.

- Repeated measurements on the same subject over time.

- Multiple technical measurements from the same biological sample.

Troubleshooting Guides

Problem: Incorrect Analysis of Nested Data

Scenario: You have measured the soma size of 20 neurons from each of 10 mice (5 in a control group, 5 in a treatment group). An independent-samples t-test treating all 200 neurons as independent is incorrect [18].

Solution: Apply a Bayesian multilevel (hierarchical) model with a posterior predictive distribution.

Protocol: Implementing the Bayesian Predictive Approach

Define Your Model: Structure a multilevel model that accounts for the data hierarchy. For the mouse neuron example, the model can be specified as [40]:

$$\text{soma\_size}_{ij} \sim \text{Normal}(\mu_{ij}, \sigma_{\text{within}})$$

$$\mu_{ij} = \alpha + \beta \cdot \text{treatment}_j + \text{animal\_effect}_j$$

$$\text{animal\_effect}_j \sim \text{Normal}(0, \sigma_{\text{animal}})$$

Where:

- $\text{soma\_size}_{ij}$ is the measurement from neuron $i$ in animal $j$.
- $\sigma_{\text{within}}$ represents the variation of soma sizes within a single animal.
- $\alpha$ is the intercept (mean of the control group).
- $\beta$ is the coefficient for the treatment effect; $\text{treatment}_j$ is indexed by animal because treatment is assigned at the animal level.
- $\text{animal\_effect}_j$ is the random effect for each animal, modeling how individual animals deviate from the group mean, with a standard deviation of $\sigma_{\text{animal}}$.

Specify Prior Distributions: Choose prior distributions for your parameters ($\alpha$, $\beta$, $\sigma_{\text{within}}$, $\sigma_{\text{animal}}$). These should be based on prior knowledge or be weakly informative. For example:

- $\alpha \sim \text{Normal}(0, 100)$
- $\beta \sim \text{Normal}(0, 10)$
- $\sigma_{\text{within}} \sim \text{HalfNormal}(5)$
- $\sigma_{\text{animal}} \sim \text{HalfNormal}(5)$

Compute the Posterior Distribution: Use Markov Chain Monte Carlo (MCMC) sampling methods to compute the joint posterior distribution of all unknown parameters, given your observed data [40] [42].

Generate the Posterior Predictive Distribution: Use the posterior distribution to simulate new, predicted data values ($y_{\text{rep}}$). This distribution represents your model's predictions for the soma size of a new, unobserved neuron, accounting for all sources of uncertainty (within-animal and between-animal variation) [40].

Make Inferences from the Predictions: You can now make direct probabilistic statements about the neurons (the pseudoreplicates). For instance, you can calculate the probability that a neuron from the treatment group will be larger than a neuron from the control group, or estimate the predicted difference in soma size between groups.

The following diagram illustrates this workflow.

Problem: Determining the Correct Sample Size

Scenario: You are unsure whether your sample size (n) is the number of litters or the number of offspring.

Solution: Always identify the experimental unit. The sample size is the number of entities that were independently assigned to a treatment. In the following table, the "Genuine Replicate" is the experimental unit and determines the sample size.

Table: Identifying Replicates in Common Experimental Designs

| Experimental Design | Genuine Replicate (Sample Size, n) | Pseudoreplicate |

|---|---|---|

| Treatment applied to pregnant females; outcome measured in offspring [40] | The pregnant female | The individual offspring |

| Treatment applied to cell culture wells; multiple images taken per well [40] | The well | The individual image/field of view |

| Rotarod test performed on 10 rats over 3 consecutive days [18] | The rat | The test result from a single day |

| Multiple neurons sampled from each mouse brain [40] | The mouse | The individual neuron |

Experimental Protocol: Analyzing a Dataset with Pseudoreplication

This protocol is based on the fatty acid dataset re-analyzed in the foundational paper by Harring, Sones, et al. (2020) [40].

1. Background and Objective:

- Research Question: Does infusion of fatty acids (FA) affect the soma size of neurons in vivo?

- Experimental Design: Nine mice (genuine replicates) were randomly assigned to a vehicle control or fatty acid condition. The soma size of multiple neurons (pseudoreplicates) was measured from each animal, resulting in 354 total measurements [40].

2. Materials and Reagent Solutions

| Category | Item/Reagent | Function/Description |

|---|---|---|

| Animal Model | Mice (n=9) | The genuine replicate or experimental unit. |

| Treatment | Fatty Acid (FA) Infusion | The experimental intervention delivered via osmotic minipump [40]. |

| Biological Sample | Neurons | The pseudoreplicate of interest. |

| Key Measurement | Soma Size | The primary outcome variable. |

| Statistical Software | R, Stan, PyMC3, or brms | For fitting Bayesian multilevel models. |

3. Methodological Comparison: The data were analyzed using four different methods to illustrate the impact of pseudoreplication and the proposed solution [40].

Table: Comparison of Statistical Methods for the Fatty Acid Dataset

| Analysis Method | Description | Effective Sample Size | Key Result |

|---|---|---|---|

| Pooled "Wrong N" | Ignores animal structure; treats all neurons as independent [40]. | 354 neurons (incorrect) | t = -7.75, p = 2.7e-7 [18] |

| Averaging | Averages neuron sizes within each animal, then compares group means [40]. | 9 mice (correct) | Reported as appropriate but loses information on measurement precision [40]. |

| Classic Multilevel Model | Accounts for hierarchy but focuses on parameters at the animal level [40]. | 9 mice (correct) | Tests for a group-level (between-mice) treatment effect. |

| Bayesian Predictive | Uses a multilevel model to make predictions about individual neurons [40]. | 9 mice (correct) | Provides a probability statement about the soma size of a new, unobserved neuron. |

4. Step-by-Step Bayesian Predictive Workflow:

- Step 1 — Model Specification: Define the multilevel model as shown in the troubleshooting guide above.

- Step 2 — Prior Selection: Use the priors suggested in the troubleshooting guide.

- Step 3 — Model Fitting: Run MCMC sampling (e.g., 4 chains, 4000 iterations each) to obtain the posterior distribution.

- Step 4 — Validation: Check MCMC convergence with R-hat statistics (target ~1.0) and effective sample size.

- Step 5 — Prediction: Generate the posterior predictive distribution for a new neuron from the control group and a new neuron from the FA group.

- Step 6 — Interpretation: Calculate the probability that a neuron from the FA group is larger than a neuron from the control group, based on the posterior predictive distributions.
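Steps 5 and 6 can be sketched in plain Python once posterior draws are available. The draws below are placeholders generated from arbitrary distributions purely so that the example runs. In a real analysis they would come from the fitted model in Stan, PyMC, or brms, and none of the numbers reflect the fatty acid dataset. The sketch also assumes that each predicted neuron comes from a new, unobserved animal, so both between-animal and within-animal variation enter the prediction.

```python
import random

rng = random.Random(1)
N_DRAWS = 4000

# PLACEHOLDER posterior draws of (alpha, beta, sigma_animal, sigma_within).
# These stand in for MCMC output and are NOT estimates from any real dataset.
posterior_draws = [
    (rng.gauss(100.0, 3.0),        # alpha: control-group mean
     rng.gauss(8.0, 4.0),          # beta: treatment effect
     abs(rng.gauss(6.0, 1.5)),     # sigma_animal: between-animal SD
     abs(rng.gauss(15.0, 1.0)))    # sigma_within: within-animal SD
    for _ in range(N_DRAWS)
]


def predict_new_neuron(alpha, beta, sigma_animal, sigma_within, treated, rng):
    """Simulate the soma size of one neuron from a new, unobserved animal."""
    animal_effect = rng.gauss(0.0, sigma_animal)
    mu = alpha + beta * treated + animal_effect
    return rng.gauss(mu, sigma_within)


# One predicted control neuron and one predicted treated neuron per posterior draw.
differences = []
for alpha, beta, s_animal, s_within in posterior_draws:
    y_control = predict_new_neuron(alpha, beta, s_animal, s_within, 0, rng)
    y_treated = predict_new_neuron(alpha, beta, s_animal, s_within, 1, rng)
    differences.append(y_treated - y_control)

# Probability that a new treated neuron is larger than a new control neuron.
prob_treated_larger = sum(d > 0 for d in differences) / len(differences)

# Central 90% interval of the predicted neuron-level difference.
differences.sort()
interval_90 = (differences[N_DRAWS * 5 // 100], differences[N_DRAWS * 95 // 100])

print(f"P(treated neuron > control neuron) = {prob_treated_larger:.2f}")
print(f"90% predictive interval for the difference: "
      f"({interval_90[0]:.1f}, {interval_90[1]:.1f})")
```

Because each prediction is drawn under a different posterior sample, parameter uncertainty and both variance components are carried through to the final probability, which is a statement about observable neurons and not about a model parameter.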

The logical structure of the analysis, from model inputs to final conclusions, is shown below.

In ecological experiments, pseudoreplication occurs when researchers use inferential statistics to test for treatment effects where the treatments are not genuinely replicated or the replicates are not statistically independent [5]. This is a widespread issue, with one survey of ecologists finding that 58% had faced a research question where pseudoreplication was an unavoidable problem [5].

A frequent and simple remedy is data averaging, where multiple non-independent measurements (pseudoreplicates) taken from a single experimental unit are averaged to create one representative value. While this approach can be statistically appropriate, it is crucial to understand both its utility and its significant limitations.

Frequently Asked Questions

Q1: What exactly is pseudoreplication, and why is it a problem in my research?

Pseudoreplication is the use of inferential statistics on data where observations are not statistically independent but are treated as if they are [18]. This often happens when there are multiple observations from the same subject (e.g., the same animal), or when samples are nested (e.g., leaves from the same tree). The core problem is a confusion between the number of data points and the number of genuine, independent replicates [18].

Analyzing pseudoreplicated data without addressing this lack of independence leads to two major issues:

- Testing the Wrong Hypothesis: The statistical test may be examining variation at the wrong biological level (e.g., variation among cells instead of variation among animals) [40] [18].

- False Precision: It artificially inflates the sample size (degrees of freedom), making confidence intervals seem narrower and p-values smaller and more "significant" than they truly are, increasing the risk of false discoveries [18].
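The false-precision problem can be made concrete with a small simulation. The sketch below assumes a hypothetical design (5 animals per group, 20 cells per animal, equal animal-level and cell-level standard deviations) with no true treatment effect, and compares how often a pooled-variance t-test rejects at the 5% level when cells versus animal means are used as n. The hard-coded critical values apply only to these default sample sizes.

```python
import math
import random


def mean_and_var(xs):
    """Sample mean and unbiased sample variance."""
    m = sum(xs) / len(xs)
    return m, sum((x - m) ** 2 for x in xs) / (len(xs) - 1)


def pooled_t(a, b):
    """Student's two-sample t statistic with pooled variance."""
    (ma, va), (mb, vb) = mean_and_var(a), mean_and_var(b)
    na, nb = len(a), len(b)
    pooled = ((na - 1) * va + (nb - 1) * vb) / (na + nb - 2)
    return (ma - mb) / math.sqrt(pooled * (1 / na + 1 / nb))


def null_group(rng, n_animals, n_cells, sd_animal, sd_cell):
    """Cells nested within animals, with no treatment effect."""
    group = []
    for _ in range(n_animals):
        animal_effect = rng.gauss(0.0, sd_animal)
        group.append([animal_effect + rng.gauss(0.0, sd_cell)
                      for _ in range(n_cells)])
    return group


def false_positive_rates(n_sims=1000, n_animals=5, n_cells=20, seed=1):
    rng = random.Random(seed)
    # Two-sided 5% critical values of Student's t for the default sizes:
    crit_cells = 1.972    # df = 2 * 5 * 20 - 2 = 198
    crit_animals = 2.306  # df = 2 * 5 - 2 = 8
    naive_hits = averaged_hits = 0
    for _ in range(n_sims):
        ctrl = null_group(rng, n_animals, n_cells, 1.0, 1.0)
        trt = null_group(rng, n_animals, n_cells, 1.0, 1.0)
        # Naive: every cell is treated as an independent replicate.
        flat_ctrl = [x for animal in ctrl for x in animal]
        flat_trt = [x for animal in trt for x in animal]
        naive_hits += abs(pooled_t(flat_ctrl, flat_trt)) > crit_cells
        # Averaged: one mean per animal, so n is the number of animals.
        mean_ctrl = [sum(animal) / len(animal) for animal in ctrl]
        mean_trt = [sum(animal) / len(animal) for animal in trt]
        averaged_hits += abs(pooled_t(mean_ctrl, mean_trt)) > crit_animals
    return naive_hits / n_sims, averaged_hits / n_sims


naive_rate, averaged_rate = false_positive_rates()
print(f"false-positive rate, cells as n:   {naive_rate:.2f}")
print(f"false-positive rate, animals as n: {averaged_rate:.2f}")
```

Under these assumptions the cell-level analysis should reject far more often than the nominal 5%, while the animal-level analysis should stay close to it.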

Q2: When is it acceptable to use data averaging to deal with pseudoreplication?

Data averaging is a valid and straightforward solution in a specific scenario:

- When your hypothesis is about the experimental unit. If the genuine replicates in your study are, for example, individual animals, and you have taken multiple measurements from each animal, you can average those measurements to create a single value per animal. Your statistical analysis then correctly uses the number of animals as the sample size (n) [40].

Q3: What are the key limitations and drawbacks of using data averaging?

Despite its simplicity, averaging has significant drawbacks that make it unsuitable for many modern studies:

- Loss of Information: Averaging discards all information about the variability within each experimental unit. This within-subject variation can often be biologically interesting [40].

- Inefficient Use of Data: All averages are weighted equally in the subsequent analysis, regardless of whether an average was based on ten observations or one hundred. This is a statistically inefficient use of the data you worked hard to collect [40].

- Shifts the Inference: The statistical inference (the conclusion you draw) is now about the averages of the experimental units, not the original individual measurements. In some cases, this may not align with your research question [40].

Q4: What should I do if averaging is not the right fit for my study?

If your research question is about the pseudoreplicates themselves (e.g., the effect on offspring, not the pregnant mothers) or you wish to retain and model within-subject variation, you should use more advanced statistical methods. The most recommended approaches are:

- Multilevel (Hierarchical) Models: These models explicitly account for the nested structure of the data (e.g., neurons nested within animals) by including random effects [5] [40].

- Bayesian Predictive Approaches: This modern framework uses multilevel models and focuses on making probabilistic predictions about biological entities of interest, even if they are pseudoreplicates, offering a powerful and flexible solution [40].

The table below compares the averaging method to these more sophisticated techniques.

Comparison of Statistical Methods for Handling Pseudoreplication

| Method | Key Principle | Advantages | Disadvantages |

|---|---|---|---|

| Data Averaging | Averages pseudoreplicates to create one value per experimental unit. | Simple to understand and implement; avoids outright incorrect analysis [40]. | Loses information on within-unit variance; can be statistically inefficient; shifts the level of inference [40]. |

| Multilevel Models | Uses random effects to model the nested structure of the data (e.g., neurons within animals). | Retains all data; models variance at multiple levels (within- and between-units); more statistically powerful [5] [40]. | Requires more complex statistical expertise and software; model specification is critical. |

| Bayesian Predictive Approach | Uses multilevel models within a Bayesian framework to make predictions about future observables. | Allows for direct inference about pseudoreplicates; conclusions are about observable quantities; naturally incorporates uncertainty [40]. | Requires understanding of Bayesian statistics; can be computationally intensive. |

The Scientist's Toolkit

Essential Concepts and Reagent Solutions

| Item / Concept | Function / Definition |

|---|---|

| Experimental Unit | The smallest entity that can be randomly assigned to a different treatment condition (e.g., a cage, a single animal, a pregnant female) [18]. |

| Pseudoreplicate | Multiple non-independent observations or measurements taken from a single experimental unit [18]. |

| Intraclass Correlation (ICC) | Measures the degree of similarity among pseudoreplicates from the same experimental unit. A high ICC indicates that pseudoreplication is a severe issue that must be addressed [18]. |

| Multilevel Model Software | Statistical software packages (e.g., R with lme4, Stan, or Python with PyMC3/Bambi) that are essential for implementing advanced, non-averaging solutions to pseudoreplication [40]. |
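As a rough illustration of the intraclass correlation entry above, the following sketch estimates it with the one-way ANOVA estimator for a balanced design (the same number of measurements in every experimental unit). The function name `icc_oneway` and the data are hypothetical; unbalanced data would usually call for a mixed model instead. The design effect 1 + (k - 1) × ICC then indicates how many independent observations the data are roughly worth.

```python
def icc_oneway(groups):
    """One-way ANOVA estimate of the intraclass correlation (balanced data)."""
    n = len(groups)
    k = len(groups[0])
    if n < 2 or k < 2 or any(len(g) != k for g in groups):
        raise ValueError("need >= 2 units, each with the same k >= 2 values")
    unit_means = [sum(g) / k for g in groups]
    grand_mean = sum(unit_means) / n
    # Mean squares between and within experimental units.
    ms_between = k * sum((m - grand_mean) ** 2 for m in unit_means) / (n - 1)
    ms_within = sum((x - m) ** 2
                    for g, m in zip(groups, unit_means)
                    for x in g) / (n * (k - 1))
    return (ms_between - ms_within) / (ms_between + (k - 1) * ms_within)


# Hypothetical data: three animals, three neuron measurements each.
neurons_by_animal = [
    [10.2, 10.5, 9.9],
    [12.1, 12.4, 11.8],
    [8.0, 8.3, 7.9],
]

icc = icc_oneway(neurons_by_animal)
k = len(neurons_by_animal[0])
n_obs = sum(len(g) for g in neurons_by_animal)
design_effect = 1 + (k - 1) * icc
effective_n = n_obs / design_effect

print(f"ICC = {icc:.3f}")
print(f"effective sample size = {effective_n:.1f} of {n_obs} measurements")
```

In this invented example the measurements within an animal are nearly identical, so the nine measurements carry little more information than three independent ones.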

Experimental Protocol: Implementing Data Averaging

When the study design and research question make data averaging an appropriate choice, follow this standardized protocol.

Objective: To correctly aggregate multiple pseudoreplicate measurements from a single experimental unit into a single value for a statistical analysis that is conducted at the level of the experimental unit.

Procedure:

- Identify Experimental Units: Clearly define the genuine replicates in your experiment (e.g., the nine mice, not the 354 neurons) [40].

- Group Measurements: Organize your data so that all pseudoreplicate measurements are grouped by their respective experimental unit.

- Choose an Average: Calculate a summary statistic for each experimental unit. The mean is common, but the median is more robust if the within-unit measurements contain outliers [43].

- Proceed with Analysis: Use the resulting averages (one per experimental unit) in your subsequent statistical tests (e.g., a t-test with n=9, not n=354) [40].
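The procedure above can be sketched as follows. This is a minimal example with invented data; the record layout and the helper names `unit_summaries` and `welch_t` are assumptions made for illustration. It uses Welch's variant of the t-test and reports only the statistic and degrees of freedom, since a p-value would require a statistics library.

```python
import math
import statistics
from collections import defaultdict

# Hypothetical long-format records: (group, animal_id, measurement).
records = [
    ("control", "c1", 10.1), ("control", "c1", 9.8), ("control", "c1", 10.4),
    ("control", "c2", 11.0), ("control", "c2", 11.3),
    ("control", "c3", 9.2), ("control", "c3", 9.5), ("control", "c3", 9.1),
    ("treated", "t1", 12.0), ("treated", "t1", 12.4),
    ("treated", "t2", 11.1), ("treated", "t2", 10.9), ("treated", "t2", 11.4),
    ("treated", "t3", 13.2), ("treated", "t3", 12.8),
]


def unit_summaries(rows, summary=statistics.mean):
    """Group measurements by experimental unit, then summarise each unit."""
    by_unit = defaultdict(list)
    for group, unit, value in rows:
        by_unit[(group, unit)].append(value)
    per_group = defaultdict(list)
    for (group, _unit), values in by_unit.items():
        per_group[group].append(summary(values))
    return dict(per_group)


def welch_t(a, b):
    """Welch's t statistic and its approximate degrees of freedom."""
    va = statistics.variance(a) / len(a)
    vb = statistics.variance(b) / len(b)
    t = (statistics.mean(a) - statistics.mean(b)) / math.sqrt(va + vb)
    df = (va + vb) ** 2 / (va ** 2 / (len(a) - 1) + vb ** 2 / (len(b) - 1))
    return t, df


# One value per animal; pass summary=statistics.median for a robust variant.
summaries = unit_summaries(records)
t_stat, df = welch_t(summaries["treated"], summaries["control"])
n_units = sum(len(v) for v in summaries.values())

print(f"units analysed: {n_units} (not {len(records)} raw measurements)")
print(f"Welch t = {t_stat:.2f}, df = {df:.1f}")
```

The degrees of freedom are bounded by the number of animals, not the number of raw measurements, which is the point of the protocol.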

Workflow Diagram: Data Averaging Protocol

Decision Guide: When to Use Data Averaging

Use the following flowchart to decide if data averaging is the right strategy for your experimental data.

Decision Guide: Is Data Averaging Appropriate for My Study?

Key Takeaway

Data averaging serves as a simple and valid statistical tool to correct for pseudoreplication when your hypothesis is directed at the level of the experimental unit. However, for more complex questions or to fully leverage the rich data collected in modern ecological research, multilevel and Bayesian predictive models are more powerful and informative alternatives [5] [40].

Frequently Asked Questions

Q1: What is pseudoreplication and why is it a problem in ecological studies?

Pseudoreplication occurs when researchers use inferential statistics to test for treatment effects where the treatments are not replicated or the replicates are not statistically independent [5]. In practice, this is a confusion between the number of data points and the number of independent samples [18]. This error can lead to artificially low p-values, making a result appear statistically significant when it is not, thereby undermining the validity of your conclusions [44] [18].

Q2: I have multiple measurements from the same subject. Is my analysis pseudoreplicated?

If you are treating multiple measurements from the same subject (e.g., several blood tests from one person, or behavioral observations from a single animal over time) as fully independent data points in a statistical test like a t-test or standard ANOVA, then your analysis is very likely pseudoreplicated [44] [18]. The measurements within a subject are more similar to each other than to measurements from other subjects, violating the assumption of independence.

Q3: My study uses a complex design with nested data (e.g., eggs within nests, or plots within fields). How can I analyze it correctly in R?

A nested design is a classic case where pseudoreplication can occur. The correct solution is to use a model that accounts for the hierarchical structure, such as a mixed-effects model. In R, you can use the lme4 package. For example, to analyze egg sizes from multiple nests, your model would treat "Nest" as a random effect: lmer(egg_size ~ treatment + (1 | Nest_id), data = my_data) [44] [5].

Q4: I'm getting a "number of items to replace is not a multiple of replacement length" error in R. What does this mean?

This common error typically indicates you are trying to assign an object of incorrect length into another object [45]. For instance, you might be trying to put a vector with four elements into a column of a data frame that has five rows. Double-check the dimensions of the objects on both sides of your assignment operator (<-).

Q5: A function from an R package is giving an internal error. How can I troubleshoot it?

First, check that you have provided the correct arguments to the function by reading its documentation with ?function_name. If the function exists in multiple loaded packages, R uses the one from the most recently loaded package, which can cause problems. To be specific, use the package::function() syntax (e.g., lme4::lmer()) to ensure you're calling the right one [45].

Q6: How can I effectively search the internet for help with an R error?

When searching for an error message, avoid copying the entire text. Remove parts specific to your data (like variable names and values), and search for the core error phrase along with "R". For example, search for "Error in data.frame arguments imply differing number of rows in R" [45]. Q&A sites such as Stack Overflow are invaluable resources for solved R problems [46].

Troubleshooting Common R Issues

| Issue Category | Specific Error/Symptom | Likely Cause | Solution |

|---|---|---|---|

| Object & Syntax | Error: object 'x' not found | Misspelled object name, or object not created due to earlier error [45]. | Check spelling and run your code in order. |

| Object & Syntax | Error: unexpected ')' in "..." | Unmatched parentheses, brackets, or quotes [45]. | Use RStudio's syntax highlighting to find the mismatch. |

| Data Structures | replacement has X rows, data has Y | Trying to assign a vector of length X into a data frame column of length Y [45]. | Ensure vectors for new columns match the data frame's number of rows. |

| Package Management | Function behaves unexpectedly or fails | Function name conflict between packages, or incorrect arguments for the intended function [45]. | Use package::function() syntax and check function documentation with ?. |

| Statistical Analysis | Statistically significant result disappears when using correct replicates | Pseudoreplication; analysis was performed on non-independent data points [44] [18]. | Re-analyze data at the correct hierarchical level (e.g., use nest means) or use a mixed model. |

Statistical Solutions for Pseudoreplication

The table below outlines common scenarios of pseudoreplication and the correct analytical approaches to address them.

| Scenario | Pseudoreplicated Analysis | Correct Approach |

|---|---|---|

| Repeated Measures: Measuring the same subject (e.g., 10 rats) multiple times (e.g., over 3 days) and treating all measurements as independent. | T-test with inflated df (e.g., t(28) = 2.1; p = 0.045). | Averaging: Calculate a single mean per subject before analysis. Mixed Model: Use `lmer(response ~ treatment + (1 \| subject_id), data)` [18]. |

| Nested Data: Collecting 50 eggs from 20 nests and treating each egg as an independent sample. | T-test or ANOVA with n=50. | Averaging: Analyze data using the nest means (n=20). Nested ANOVA: Statistically nest eggs within nests [44]. |

| Landscape-scale Manipulation: A management intervention applied to one watershed, with multiple samples taken within it. | Comparing samples from the single treated watershed to samples from a single control watershed using a t-test. | Clearly state the limits of inference. Use Before-After-Control-Impact (BACI) design if data exists, or use statistical models that account for spatial structure [5]. |
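For the landscape-scale row, the core quantity of a Before-After-Control-Impact comparison is a difference of differences. The sketch below computes only that point estimate from invented numbers; `baci_effect` is a hypothetical helper. Samples within a single watershed remain non-independent, so this calculation does not by itself provide a valid significance test.

```python
import statistics


def baci_effect(control_before, control_after, impact_before, impact_after):
    """Difference-in-differences point estimate for a BACI design."""
    control_change = (statistics.mean(control_after)
                      - statistics.mean(control_before))
    impact_change = (statistics.mean(impact_after)
                     - statistics.mean(impact_before))
    # Change at the impact site beyond the change seen at the control site.
    return impact_change - control_change


# Hypothetical samples from one control and one treated watershed.
effect = baci_effect(
    control_before=[5, 6, 7],
    control_after=[6, 7, 8],
    impact_before=[5, 6, 7],
    impact_after=[9, 10, 11],
)
print(f"BACI point estimate: {effect}")
```

Subtracting the control site's change removes shifts that affected both watersheds, such as a wet year, from the estimated intervention effect.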

Experimental Workflow for Robust Ecological Analysis

The following diagram visualizes a workflow for designing an ecological experiment and analyzing data to avoid the pitfall of pseudoreplication.

The Researcher's Toolkit

| Resource Category | Specific Resource / Tool | Description & Purpose |

|---|---|---|

| R Help & Documentation | ?function and help() | Accesses R's built-in documentation for functions and packages [46]. |

| R Help & Documentation | browseVignettes() | Opens a list of discursive, tutorial-like documents for installed R packages [46]. |

| Online Communities | Stack Overflow (https://stackoverflow.com/questions/tagged/r) | A vast Q&A forum for programming issues. Use the "r" tag for R-specific questions [46]. |

| Specialized Search | Rseek.org (https://rseek.org) | A search engine tailored for R-related content across the web [46]. |

| Statistical Packages | lme4 R package | Provides functions for fitting linear and generalized linear mixed-effects models, essential for handling non-independent data [5]. |

Designing Bulletproof Experiments: A Troubleshooting Guide to Avoid Pseudoreplication from the Start

Troubleshooting Guides and FAQs on Experimental Design

Frequently Asked Questions

What is the single most important thing I can do to strengthen my experimental design? Clearly identify your experimental unit—the entity to which a treatment is independently applied—before you begin. All replication and statistical inference must be based on this unit [6] [28].