Beyond the Data: How Tag Placement Influences Dynamic Body Acceleration Metrics in Biomedical Research

Abstract

This article provides a comprehensive analysis for researchers and drug development professionals on the critical, yet often overlooked, impact of biologger tag placement on the accuracy and interpretation of Dynamic Body Acceleration (DBA) metrics. DBA is a widely used proxy for energy expenditure and activity in preclinical and clinical studies, but its validity is highly dependent on consistent and optimal sensor placement. We explore the foundational principles of DBA, detail methodological best practices for tag deployment, address common troubleshooting scenarios, and present validation frameworks for comparing data across different placement strategies. By synthesizing current research, this review aims to standardize protocols and enhance the reliability of DBA data in translational research, ultimately supporting more robust conclusions in areas from metabolic phenotyping to treatment efficacy.

Understanding Dynamic Body Acceleration: Core Principles and the Critical Role of Sensor Placement

Defining DBA and Its Role as a Proxy for Metabolic Power and Energy Expenditure

Dynamic Body Acceleration (DBA) is a biometric derived from accelerometers that quantifies body movement. As an animal moves, an attached accelerometer measures changes in velocity; the dynamic component of this signal, isolated by removing the static gravitational acceleration, serves as a proxy for movement-based energy expenditure [1] [2]. The underlying principle is that a significant portion of an animal's metabolic energy is used to power muscles for movement, and thus body acceleration can reflect the rate of energy consumption [3] [1]. DBA serves as a critical tool in biologging studies, enabling researchers to estimate energy expenditure in free-ranging animals where direct calorimetry is impossible [2].

The two primary calculations for DBA are Overall Dynamic Body Acceleration (ODBA) and Vectorial Dynamic Body Acceleration (VeDBA).

- ODBA is calculated by summing the absolute values of the dynamic acceleration from three orthogonal axes (surge, sway, and heave) after static acceleration has been subtracted from each [1] [2].

- VeDBA is the vectorial sum of the dynamic accelerations, calculated using the Pythagorean theorem for the three axes, which provides a value closer to the true physical acceleration experienced by the animal [4] [1].

The choice between ODBA and VeDBA can depend on the study species and logger placement. Research on humans and other animals indicates both are strong proxies for oxygen consumption, though ODBA may have a marginally better predictive power in some controlled scenarios [1]. However, VeDBA is less sensitive to changes in device orientation, making it more robust in situations where the logger's alignment cannot be guaranteed [4] [1].
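The difference in orientation sensitivity can be illustrated with a minimal sketch. The sample value and the 45° yaw offset are hypothetical, chosen only to show the effect; the function names are illustrative and not taken from any cited study.

```python
import math

def odba(dx, dy, dz):
    """Sum of absolute dynamic accelerations for one sample."""
    return abs(dx) + abs(dy) + abs(dz)

def vedba(dx, dy, dz):
    """Euclidean norm of the dynamic acceleration vector for one sample."""
    return math.sqrt(dx * dx + dy * dy + dz * dz)

def rotate_about_z(dx, dy, dz, angle_deg):
    """Express the same vector in a frame rotated by angle_deg about the z axis."""
    a = math.radians(angle_deg)
    return (dx * math.cos(a) - dy * math.sin(a),
            dx * math.sin(a) + dy * math.cos(a),
            dz)

# Hypothetical dynamic acceleration sample (in g), purely along the surge axis.
aligned = (0.5, 0.0, 0.0)
# The same movement as seen by a logger mounted 45 degrees off-axis.
skewed = rotate_about_z(*aligned, 45.0)

odba_aligned, odba_skewed = odba(*aligned), odba(*skewed)
vedba_aligned, vedba_skewed = vedba(*aligned), vedba(*skewed)

print(f"ODBA:  aligned={odba_aligned:.3f} g, skewed={odba_skewed:.3f} g")
print(f"VeDBA: aligned={vedba_aligned:.3f} g, skewed={vedba_skewed:.3f} g")
```

In this sketch the identical movement yields a larger ODBA when the logger is skewed, while VeDBA is unchanged, because a vector norm does not depend on the frame in which the vector is expressed.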

DBA as a Proxy for Energy Expenditure: Mechanisms and Evidence

The Theoretical Link Between DBA and Energetics

The use of DBA as a proxy for energy expenditure is grounded in biomechanics. To instigate movement, an animal's muscles must perform work to overcome inertia and other forces, which consumes metabolic energy. The acceleration of the body's mass is a direct consequence of this work. Therefore, the measured dynamic acceleration should correlate with the mechanical work, and consequently, the metabolic power input [2]. This relationship has been validated by showing strong correlations between DBA and the rate of oxygen consumption (a direct measure of metabolic rate) across a wide range of vertebrate taxa [1].

Key Experimental Validations

Multiple experimental studies have tested the strength of DBA as a proxy for energy expenditure.

- Human Model Studies: Controlled experiments with humans on treadmills have demonstrated strong linear relationships between both ODBA and VeDBA and the rate of oxygen consumption. One such study found all r² values exceeded 0.88, establishing humans as a viable model for testing these proxies [1].

- Marine Mammal Studies: A recent study on California sea lions demonstrated that both mean DBA and mean Minimum Specific Acceleration (MSA, another acceleration metric) can predict mean propulsive power at fine temporal scales (5-second intervals) during dives. This relationship held even when avoiding the "time trap" by using mean instead of summed data [2].

- Broad Taxonomic Validation: A reanalysis of data from six animal species confirmed that both ODBA and VeDBA are good proxies for the rate of oxygen consumption, with all r² values exceeding 0.70, though ODBA accounted for slightly more variation [1].

Comparative Analysis of DBA Performance

Table 1: Comparison of ODBA and VeDBA as proxies for energy expenditure.

| Metric | Calculation Method | Key Advantages | Reported Performance (r² with VO₂) | Recommended Use Cases |

|---|---|---|---|---|

| ODBA | Sum of absolute dynamic acceleration from three axes: ODBA = \|x\| + \|y\| + \|z\| | Marginally better predictor of VO₂ in some controlled studies [1]. | Humans: >0.88 [1]; Other species: >0.70 [1] | Studies where device orientation is highly consistent. |

| VeDBA | Vectorial sum of dynamic acceleration: VeDBA = √(x² + y² + z²) | Insensitive to device orientation; more robust to logger placement [4] [1]. | Similar to ODBA, but slightly lower in some direct comparisons [1]. | Field studies where consistent logger orientation cannot be guaranteed. |

Table 2: DBA performance across different species and experimental conditions.

| Species/Context | Proxy Used | Correlate | Key Finding | Reference |

|---|---|---|---|---|

| Humans (on treadmill) | ODBA & VeDBA | Rate of oxygen consumption (VO₂) | Both strong proxies; ODBA marginally better. All r² > 0.88. | [1] |

| California Sea Lions (within dives) | DBA & MSA | Propulsive Power (W kg⁻¹) | Linear relationships at 5-second intervals; filtering improved models. | [2] |

| Multiple Animal Species | ODBA & VeDBA | Rate of oxygen consumption (VO₂) | Confirmed as good proxies; all r² > 0.70. | [1] |

| Marine Mammals (critique) | ODBA & Stroke Count | Total Oxygen Consumption | Relationship with totals driven by "time trap"; rates (e.g., mean DBA) showed no relationship. | [5] |

Addressing the "Time Trap" in DBA Analysis

A significant methodological consideration in DBA research is the "time trap" [2] [5]. This pitfall occurs when cumulative energy expenditure (e.g., total oxygen consumption) is regressed against cumulative DBA (e.g., total ODBA). Because both variables inherently contain time, a spurious strong correlation can emerge, driven primarily by the duration of measurement rather than a true physiological link [5].

Experimental evidence supports this critique. A study on fur seals and sea lions found that while total DBA and total number of strokes predicted total oxygen consumption, both proxies were highly correlated with submergence time. When the analysis used rates (mean DBA vs. rate of oxygen consumption), no significant relationship was found [5]. Therefore, best practice is to use mean DBA versus the rate of energy expenditure to avoid conflating time with the relationship [2] [5].
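The time trap can be reproduced with a small constructed example. The numbers below are hypothetical and deliberately arranged so that mean DBA and the rate of oxygen consumption are uncorrelated; they are not data from the cited studies.

```python
import math

def pearson_r(xs, ys):
    """Pearson correlation coefficient of two equal-length sequences."""
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    sxy = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    sxx = sum((x - mx) ** 2 for x in xs)
    syy = sum((y - my) ** 2 for y in ys)
    return sxy / math.sqrt(sxx * syy)

# Hypothetical trials: duration (s), mean DBA (g), and VO2 rate (arbitrary units).
duration_s = [10, 20, 30, 40, 50, 60, 70, 80]
mean_dba = [1.0, 2.0, 1.0, 2.0, 1.0, 2.0, 1.0, 2.0]
vo2_rate = [3.0, 3.0, 4.0, 4.0, 3.0, 3.0, 4.0, 4.0]

# Cumulative quantities are the rates multiplied by trial duration.
total_dba = [m * t for m, t in zip(mean_dba, duration_s)]
total_vo2 = [v * t for v, t in zip(vo2_rate, duration_s)]

r_rates = pearson_r(mean_dba, vo2_rate)
r_totals = pearson_r(total_dba, total_vo2)

print(f"r (mean DBA vs VO2 rate)   = {r_rates:.2f}")
print(f"r (total DBA vs total VO2) = {r_totals:.2f}")
```

Here the rates are uncorrelated by construction, yet the totals correlate at roughly r = 0.81, because trial duration is shared by both cumulative variables.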

Experimental Protocols and Methodologies

Core Protocol for Validating DBA Against Energy Expenditure

The gold standard for validating DBA involves simultaneous measurement of acceleration and oxygen consumption under controlled conditions.

- Instrumentation: Subjects are fitted with tri-axial accelerometers, secured firmly and close to the subject's center of gravity to best capture whole-body dynamics. Logger placement (e.g., on the back) and attachment method (e.g., a harness) must be documented, as both can influence the recorded signal [1] [5].

- Experimental Procedure: Subjects perform activities that generate a range of metabolic rates, such as walking, jogging, and running on a treadmill [1] or, for aquatic animals, swimming in a flume or submerged pool [5]. Throughout the trials, oxygen consumption (VO₂) is measured breath-by-breath using a portable metabolic cart or via respirometry systems for marine mammals [1] [5].

- Data Processing:

  - Separate Static and Dynamic Acceleration: Raw acceleration signals are processed with a running mean (e.g., over 0.4 to 4 seconds) to estimate static (gravitational) acceleration. The dynamic acceleration is the raw signal minus this static component [5].

  - Calculate DBA Metrics: Compute ODBA and VeDBA from the dynamic acceleration signals.

- Statistical Analysis: The relationship between DBA metrics (as mean values) and the rate of oxygen consumption is tested using linear mixed-effects models, often including individual as a random effect to account for variation between subjects [2] [5].
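The data-processing steps above can be sketched as follows. This is a minimal illustration, assuming a centred running mean and a synthetic 20 Hz record; the helper names, the 2-second window, and the signal values are assumptions made for the example, not parameters prescribed by the cited protocols.

```python
import math

def running_mean(signal, window):
    """Centred running mean; the window shrinks near the ends of the record."""
    half = window // 2
    smoothed = []
    for i in range(len(signal)):
        lo, hi = max(0, i - half), min(len(signal), i + half + 1)
        smoothed.append(sum(signal[lo:hi]) / (hi - lo))
    return smoothed

def dynamic_component(raw, fs_hz, window_s):
    """Raw signal minus its running mean (the static estimate)."""
    window = max(1, round(window_s * fs_hz))
    static = running_mean(raw, window)
    return [r - s for r, s in zip(raw, static)]

# Hypothetical 10 s record at 20 Hz (values in g): gravity on the heave axis
# plus a 2 Hz oscillation on surge and heave.
FS_HZ = 20
t = [i / FS_HZ for i in range(10 * FS_HZ)]
surge = [0.3 * math.sin(2 * math.pi * 2.0 * ti) for ti in t]
sway = [0.0 for _ in t]
heave = [1.0 + 0.2 * math.sin(2 * math.pi * 2.0 * ti) for ti in t]

dx, dy, dz = (dynamic_component(axis, FS_HZ, window_s=2.0)
              for axis in (surge, sway, heave))

# Per-sample metrics, then means (means rather than sums, to avoid the time trap).
odba = [abs(x) + abs(y) + abs(z) for x, y, z in zip(dx, dy, dz)]
vedba = [math.sqrt(x * x + y * y + z * z) for x, y, z in zip(dx, dy, dz)]
mean_odba = sum(odba) / len(odba)
mean_vedba = sum(vedba) / len(vedba)

print(f"mean ODBA = {mean_odba:.3f} g, mean VeDBA = {mean_vedba:.3f} g")
```

The resulting mean values would then be paired with the measured rate of oxygen consumption for each trial before model fitting.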

The Scientist's Toolkit: Essential Research Reagents and Equipment

Table 3: Key materials and equipment for DBA research.

| Item | Function/Description | Example Use in Protocol |

|---|---|---|

| Tri-axial Accelerometer | A data logger that measures acceleration in three perpendicular axes (surge, sway, heave). | Attached to the subject to record high-frequency (e.g., >10 Hz) raw acceleration data during activity [1] [2]. |

| Respirometry System | Measures the rate of oxygen consumption (VO₂) and carbon dioxide production (VCO₂). | Used as the gold-standard reference for metabolic rate during controlled experiments [1] [5]. |

| Doubly Labeled Water (DLW) | A technique to measure total energy expenditure over days to weeks in free-living animals. | Provides field-based validation for total energy expenditure, though at a coarser temporal resolution [6] [7]. |

| Data Analysis Software (e.g., R, Python) | For processing raw acceleration data, calculating DBA metrics, and performing statistical analyses. | Used to implement running means, calculate ODBA/VeDBA, and run regression models [2]. |

Critical Considerations and Best Practices

Impact of Tag Placement and Data Processing

The accuracy of DBA can be significantly affected by tag placement and the specific parameters used in data processing.

- Logger Positioning and Attachment: The orientation of the accelerometer relative to the animal's body axes is crucial. VeDBA is generally more robust to skewness in logger orientation than ODBA [1]. The attachment method must also minimize independent movement of the tag, which would introduce noise into the signal [5].

- Data Processing Parameters: The choice of the running mean window used to separate static and dynamic acceleration can greatly influence the correlation between DBA and energy expenditure. Studies must test different window lengths to optimize the relationship for their specific species and behavior [5]. Similarly, applying an acceleration threshold (ignoring values below a certain level) can sometimes improve correlations with total energy expenditure [5].
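A rough sketch of why the window length matters is given below. It uses a hypothetical single-axis signal combining slow posture change with faster stroking; the frequencies, amplitudes, and candidate windows are illustrative assumptions only.

```python
import math

def dynamic_component(raw, fs_hz, window_s):
    """Subtract a centred running mean of about window_s seconds from the raw signal."""
    half = max(1, round(window_s * fs_hz)) // 2
    dynamic = []
    for i, value in enumerate(raw):
        lo, hi = max(0, i - half), min(len(raw), i + half + 1)
        dynamic.append(value - sum(raw[lo:hi]) / (hi - lo))
    return dynamic

FS_HZ = 20
t = [i / FS_HZ for i in range(60 * FS_HZ)]
# Hypothetical record (g): posture drift at 0.1 Hz plus stroking at 2 Hz.
raw = [0.3 * math.sin(2 * math.pi * 0.1 * ti) + 0.2 * math.sin(2 * math.pi * 2.0 * ti)
       for ti in t]

# Mean absolute dynamic acceleration for each candidate window length.
mean_abs_dynamic = {}
for window_s in (0.4, 1.0, 2.0, 4.0):
    dyn = dynamic_component(raw, FS_HZ, window_s)
    mean_abs_dynamic[window_s] = sum(abs(d) for d in dyn) / len(dyn)

for window_s, value in mean_abs_dynamic.items():
    print(f"window {window_s:>3} s -> mean |dynamic| = {value:.3f} g")
```

In this example a short window absorbs part of the stroking signal into the static estimate, while a long window lets some of the posture change leak into the dynamic component, so the derived metric depends on the window chosen.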

Limitations and Future Directions

While a powerful proxy, DBA has limitations. It primarily reflects movement-based energy expenditure and may not capture costs from non-propulsive processes like thermoregulation, digestion, or isometric work [2] [5]. The relationship between DBA and metabolism can also vary with gait, substrate, and incline [4]. Future directions include the development of video-based DBA methods that use marker-less tracking to estimate 3D acceleration, eliminating the need for physical loggers and their associated drag and handling effects, particularly in small species [3].

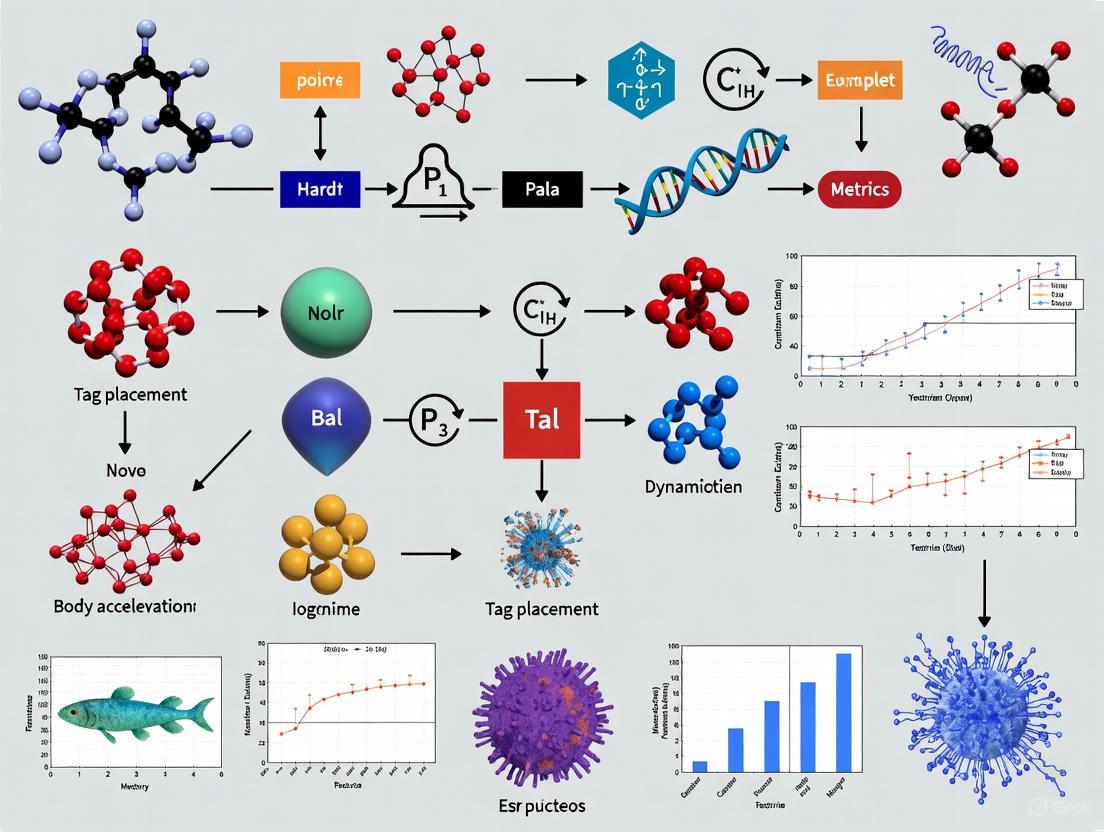

Visualizing the DBA Workflow

The following diagram illustrates the key steps for defining DBA and validating its use as a proxy for energy expenditure.

DBA Validation Workflow

Dynamic Body Acceleration is a well-established and validated proxy for estimating movement-based energy expenditure in humans and a wide range of animal species. The choice between ODBA and VeDBA involves a trade-off between potential predictive power and robustness to device orientation. Critical to its successful application is a rigorous methodological approach that includes proper tag placement, optimized data processing parameters, and, most importantly, the avoidance of the "time trap" by correlating mean DBA with the rate of energy expenditure. When applied with these considerations, DBA remains an invaluable tool for ecologists and physiologists studying energy budgets in free-living organisms.

The study of animal movement has been revolutionized by the use of animal-borne sensors, particularly accelerometers, which provide a fine-scale, continuous record of an animal's behaviour and motion. At its core, this approach is grounded in biomechanics: the physical forces and motions produced by an animal are translated into electrical signals that can be recorded and interpreted. Accelerometers measure proper acceleration, the sum of static acceleration due to gravity and dynamic acceleration resulting from body movement [8]. This combination provides a rich source of information for distinguishing postures and movements. The static component indicates the animal's orientation with respect to gravity, while the dynamic component reveals the intensity and periodicity of its motions. Understanding this translation from muscle contractions and body movements to sensor signals is fundamental for selecting appropriate sensor placements and interpreting the resulting data accurately, which directly impacts the calculation of derived metrics like Dynamic Body Acceleration (DBA).

Key Biomechanical Descriptors from Acceleration Data

Researchers leverage several biomechanical descriptors to characterize behaviour from raw acceleration data. These descriptors are calculated from the sensor's signals and form the basis for behaviour classification.

- Posture: The static component of the acceleration signal, derived from the constant influence of gravity, is used to estimate an animal's body orientation or posture. For instance, in meerkats, the surge axis of an accelerometer was used to differentiate between vigilant posture (standing upright) and curled-up resting [8].

- Movement Intensity: The overall magnitude of dynamic body acceleration reflects the intensity of an animal's movement. This is often quantified as Overall Dynamic Body Acceleration (ODBA) or Vectorial Dynamic Body Acceleration (VeDBA), which combine the dynamic components from all three sensor axes into a single value [4].

- Movement Periodicity: The regularity and frequency of movement, such as stride patterns during locomotion, can be extracted from the acceleration signal. This periodicity is a key feature for identifying specific gaits and differentiating between cyclic and non-cyclic behaviours [8].

The following table summarizes the core biomechanical descriptors derived from acceleration data.

Table 1: Core Biomechanical Descriptors from Acceleration Signals

| Biomechanical Descriptor | Source in Signal | Description and Biomechanical Significance | Common Metric(s) |

|---|---|---|---|

| Posture | Static Acceleration (Gravity) | Indicates body orientation in space (e.g., upright, prone). A static measure. | Axis-specific g-values |

| Movement Intensity | Dynamic Acceleration (Body Movement) | Represents the magnitude of physical effort or vigour of movement. | ODBA, VeDBA [4] |

| Movement Periodicity | Dynamic Acceleration (Body Movement) | Captures the rhythmic nature of movements like walking or running. | Stride Frequency, Peak Frequency [4] |
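Two of the descriptors in the table can be sketched in a few lines. The example assumes that the surge axis is aligned with the animal's long body axis and that static values are in g; the function names are illustrative, and the naive discrete Fourier transform is used only to keep the sketch dependency-free.

```python
import cmath
import math

def pitch_from_static_surge(static_surge_g):
    """Pitch angle in degrees, assuming surge is aligned with the long body axis."""
    clipped = max(-1.0, min(1.0, static_surge_g))
    return math.degrees(math.asin(clipped))

def dominant_frequency(signal, fs_hz):
    """Frequency (Hz) of the strongest non-zero bin of a naive DFT."""
    n = len(signal)
    mean = sum(signal) / n
    centred = [s - mean for s in signal]
    best_k, best_power = 0, 0.0
    for k in range(1, n // 2 + 1):
        coeff = sum(x * cmath.exp(-2j * math.pi * k * i / n)
                    for i, x in enumerate(centred))
        power = abs(coeff) ** 2
        if power > best_power:
            best_k, best_power = k, power
    return best_k * fs_hz / n

# Hypothetical 5 s dynamic signal at 20 Hz with a 2 Hz stride rhythm.
FS_HZ = 20
stride_signal = [0.4 * math.sin(2 * math.pi * 2.0 * i / FS_HZ) for i in range(5 * FS_HZ)]

upright_pitch = pitch_from_static_surge(1.0)   # surge axis pointing straight up
stride_hz = dominant_frequency(stride_signal, FS_HZ)

print(f"pitch = {upright_pitch:.0f} deg, stride frequency = {stride_hz:.1f} Hz")
```

For long records a fast Fourier transform from a numerical library would be the practical choice; the explicit sum here only makes the calculation visible.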

Experimental Protocols for Behaviour Recognition

A standardized methodology is crucial for generating robust, comparable data on animal behaviour using accelerometers. The following workflow outlines the key stages in a typical experiment, from data collection to model validation.

Diagram 1: Experimental workflow for behaviour recognition.

Data Collection and Ground Truthing

The foundation of any behaviour recognition study is the simultaneous collection of high-frequency accelerometer data and video recordings of the animal's behaviour. In a study on domestic cats (Felis catus), researchers equipped nine indoor cats with collar-mounted tri-axial accelerometers [9]. Their behaviours were recorded alongside video footage, which served as ground-truthed calibration data. This paired dataset is essential for linking specific acceleration patterns to unambiguous behaviours.

Data Processing and Model Training

The raw data is processed to enhance predictive accuracy. Key steps include:

- Calculating Additional Descriptive Variables: Beyond basic static and dynamic acceleration, variables like the dominant power spectrum frequency, amplitude, and ratios of VeDBA to dynamic acceleration can improve model specificity [9].

- Altering Data Frequencies: Data recorded at high frequencies (e.g., 40 Hz) can be used directly or summarized as a mean over 1-2 seconds. Higher frequencies better capture fast-paced behaviours, while lower frequencies can more accurately identify slower, aperiodic behaviours like grooming [9].

- Standardising Durations of Behaviours: To prevent models from being biased towards over-represented behaviours (e.g., resting), the training dataset can be balanced to include a similar duration of each behaviour of interest [9].

The processed data is then used to train a machine learning model, such as a Random Forest (RF). An RF model generates hundreds of decision trees from random subsets of the data and variables, with the final predicted behaviour being the most frequent classification across all trees [9].
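The duration-standardising step described in the list above can be sketched as a simple downsampling of labelled windows. This is one possible approach, assuming equal-length windows (so that window count stands in for duration); the function name and the example counts are hypothetical.

```python
import random
from collections import Counter, defaultdict

def balance_by_behaviour(windows, seed=0):
    """Downsample every behaviour to the number of windows of the rarest one.

    windows: list of (label, features) pairs of equal duration.
    """
    by_label = defaultdict(list)
    for label, features in windows:
        by_label[label].append((label, features))
    target = min(len(items) for items in by_label.values())
    rng = random.Random(seed)  # fixed seed so the subsample is reproducible
    balanced = []
    for label in sorted(by_label):
        balanced.extend(rng.sample(by_label[label], target))
    return balanced

# Hypothetical labelled windows: resting is heavily over-represented.
labelled_windows = (
    [("resting", i) for i in range(50)]
    + [("walking", i) for i in range(10)]
    + [("grooming", i) for i in range(5)]
)

balanced = balance_by_behaviour(labelled_windows)
counts = Counter(label for label, _ in balanced)
print(dict(counts))
```

The balanced set would then be passed to the classifier of choice; downsampling discards data, so weighting classes instead may be preferable when rare behaviours have very few examples.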

Model Validation

The model's accuracy is tested against a portion of the calibrated data not used in training. For ultimate robustness, the model should be validated against the behaviours of free-ranging individuals, where behaviours may be more varied and environmental factors come into play [9].

Comparative Analysis: Sensor Types and Placements

Accelerometers vs. Magnetometers

While accelerometers are the most common sensor for this purpose, magnetometers provide a complementary data stream. Both sensors can measure static (posture) and dynamic (movement) components, but they have different strengths and weaknesses.

Table 2: Comparison of Accelerometer and Magnetometer for Behaviour Recognition

| Feature | Accelerometer | Magnetometer |

|---|---|---|

| Static Component | Measures tilt relative to gravity. | Measures inclination relative to Earth's magnetic field. |

| Dynamic Component | Directly measures dynamic acceleration. | Derived from change in sensor tilt over time [8]. |

| Key Strength | Excellent for estimating posture [8]. | Highly robust to inter-individual variability in dynamic behaviour; better for slow, rotational movements [8]. |

| Key Limitation | Dynamic acceleration can interfere with tilt measurement; signal magnitude depends on sensor location [8]. | Accuracy can be compromised by magnetic field disturbances [8]. |

| Reported Recognition Accuracy | > 94% in meerkats (Suricata suricatta) [8]. | > 94% in meerkats, with higher robustness for some dynamic behaviours [8]. |

The Impact of Tag Placement and Data Processing

The location of the sensor on the animal's body significantly influences the signal. For example, the same activity can produce different signal magnitudes depending on the attachment location, which can be problematic for fine-scale parameter estimation [8]. Furthermore, the method of calculating dynamic metrics matters. A study on humans compared Overall Dynamic Body Acceleration (ODBA)—the sum of absolute values of dynamic acceleration—and Vectorial Dynamic Body Acceleration (VeDBA), which uses vector-based calculation. VeDBA was found to be less sensitive to device orientation and had a higher overall coefficient of determination with speed across different terrains [4].

The following table quantifies how different data processing choices can impact the predictive accuracy of behaviour recognition models, as demonstrated in the domestic cat study [9].

Table 3: Impact of Data Processing on Model Accuracy (Domestic Cat Study)

| Processing Method | Impact on Predictive Accuracy of Random Forest Models |

|---|---|

| Adding Descriptive Variables | Improved model accuracy by providing more explanatory power to describe behaviours [9]. |

| Using Higher Recording Frequency (40 Hz vs 1 Hz) | Improved accuracy for identifying fast-paced behaviours (e.g., locomotion) [9]. |

| Using Lower Recording Frequency (1 Hz vs 40 Hz) | More accurately identified slower, aperiodic behaviours (e.g., grooming, feeding) in free-ranging cats [9]. |

| Standardising Behaviour Durations in Training Data | Improved model accuracy by reducing bias toward over-represented behaviours [9]. |

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 4: Essential Materials for Accelerometer-Based Behavioural Research

| Item | Function and Application |

|---|---|

| Tri-axial Accelerometer Loggers | Miniaturized data loggers that measure acceleration in three orthogonal planes (surge, sway, heave), enabling the calculation of posture and movement [9] [4]. |

| Tri-axial Magnetometers | Sensors that measure the intensity of the local magnetic field in three planes. Used for dead-reckoning and as a complementary sensor to accelerometers for behaviour recognition [8]. |

| Video Recording System | A critical tool for obtaining ground-truthed behavioural observations that are synchronized with sensor data for model training and validation [9]. |

| Machine Learning Software (e.g., R, Python with scikit-learn) | Programming environments used to build and train Random Forest models and other classifiers for automated behaviour identification from sensor data [9]. |

| Custom Harnesses or Attachments | Silicone or other biocompatible materials used to securely mount data loggers to the study animal in a standardized position, minimizing movement artifacts [4]. |

In the field of biologging, accelerometry has gained popularity as a simple and affordable proxy for estimating activity levels and energy expenditure in free-ranging animals [2]. The analysis of dynamic body acceleration (DBA) provides researchers with a method to quantify animal movement and infer energetic costs where direct measurement is logistically challenging. Among the various DBA metrics, Overall Dynamic Body Acceleration (ODBA), Vectorial Dynamic Body Acceleration (VeDBA), and Minimum Specific Acceleration (MSA) have emerged as prominent tools. These metrics are particularly valuable in breath-hold divers such as marine mammals, birds, and turtles, where fine-scale energy expenditure is difficult to measure [2]. The application of these metrics must be carefully considered within research design, especially regarding tag placement and orientation, as these factors can significantly influence the recorded signals and their biological interpretation.

Metric Definitions and Formulas

Core Concepts and Calculations

DBA metrics are derived from tri-axial acceleration data, which consists of static (gravitational) and dynamic (animal movement) components. The core principle involves isolating the dynamic acceleration, which is presumed to represent propulsive muscular effort [2].

Table 1: Core Definitions of Acceleration Metrics

| Term | Definition |

|---|---|

| Static Acceleration | Low-frequency component of the acceleration signal, related to the animal's posture and orientation relative to gravity. |

| Dynamic Acceleration | High-frequency component of the acceleration signal, caused by the animal's own movement. |

| ODBA | The sum of the absolute values of the dynamic acceleration from three orthogonal axes. |

| VeDBA | The vectorial sum (Euclidean norm) of the dynamic acceleration from three orthogonal axes. |

| MSA | The absolute difference between the assumed gravitational vector (1 g) and the norm of the three raw acceleration axes. |

The formulas for calculating these metrics are as follows:

ODBA (Overall Dynamic Body Acceleration): Calculated as the sum of the absolute values of the dynamic acceleration from each axis [2].

ODBA = |Dx| + |Dy| + |Dz|

Where Dx, Dy, and Dz represent the dynamic acceleration for the x, y, and z axes, respectively.

VeDBA (Vectorial Dynamic Body Acceleration): Calculated as the vectorial sum of the dynamic acceleration [2] [10].

VeDBA = √(Dx² + Dy² + Dz²)

MSA (Minimum Specific Acceleration): Calculated differently from DBA variants. It is the absolute value of the difference between the assumed gravitational vector of 1 g (9.8 m s⁻²) and the norm of the three raw acceleration axes [2].

MSA = |1 - √(Ax² + Ay² + Az²)|

Where Ax, Ay, and Az represent the total (raw) acceleration for the x, y, and z axes.

The primary distinction between the metrics lies in how they separate dynamic from static acceleration. ODBA and VeDBA require a processing step (typically a high-pass filter or smoothing window) to estimate and subtract static acceleration. In contrast, MSA uses a mathematical shortcut based on the magnitude of the total acceleration vector, providing a lower bound of possible specific dynamic acceleration [2].
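A minimal sketch of the MSA calculation follows, assuming raw acceleration is expressed in g. The sample values are hypothetical and chosen to show the lower-bound property and the free-fall weakness.

```python
import math

def msa(ax, ay, az):
    """Minimum Specific Acceleration for one raw sample, with inputs in g."""
    return abs(1.0 - math.sqrt(ax * ax + ay * ay + az * az))

# Stationary tag: only gravity is sensed, so MSA is zero.
msa_still = msa(0.0, 0.0, 1.0)

# Gravity on z plus a true dynamic acceleration of 0.5 g along x:
# MSA is positive but smaller than the true 0.5 g.
msa_moving = msa(0.5, 0.0, 1.0)

# Free fall: the sensor reads about zero on all axes, so MSA reports 1 g
# even though no muscular effort is involved.
msa_free_fall = msa(0.0, 0.0, 0.0)

print(f"still={msa_still:.3f} g, moving={msa_moving:.3f} g, free fall={msa_free_fall:.3f} g")
```

The moving case shows why MSA is described as a lower bound: only the change in vector magnitude is captured, not acceleration perpendicular to gravity in full.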

Comparative Analysis of DBA Variants

Theoretical and Practical Differences

Table 2: Comparison of DBA and MSA Metrics

| Feature | ODBA | VeDBA | MSA |

|---|---|---|---|

| Calculation Method | Sum of absolute dynamic accelerations | Vectorial sum of dynamic accelerations | Difference from 1 g of raw acceleration norm |

| Separation of Dynamic/Static | Requires filtering/smoothing | Requires filtering/smoothing | Direct calculation from raw signal |

| Theoretical Basis | Represents total magnitude of acceleration | Represents magnitude of acceleration vector | Represents minimum possible dynamic acceleration |

| Key Assumption | Static and dynamic acceleration are separable via filtering | Static and dynamic acceleration are separable via filtering | Gravitational vector is consistently 1 g |

| Potential Weakness | May be inaccurate if signals are not distinct across axes [2] | May be inaccurate if signals are not distinct across axes [2] | Inaccurate during free-fall or passive descent [2] |

| Correlation with Propulsive Power | Linear relationship demonstrated [2] | Linear relationship demonstrated [2] [10] | Linear relationship demonstrated [2] |

In practice, ODBA and VeDBA are often almost perfectly correlated, leading to the suggestion that the more general term "DBA" be used, with the specific method (ODBA or VeDBA) always specified [2]. A key practical consideration is that both DBA and MSA theoretically correlate with an animal's propulsive power, but only if the 3-axis acceleration data accurately represents the acceleration of the animal's center of mass caused predominantly by propulsive muscular effort [2].

Experimental Validation and Performance

Recent experimental work has quantitatively tested the performance of these metrics. A 2025 study on California sea lions (Zalophus californianus) validated the use of DBA and MSA at fine temporal scales and avoided the "time trap" by relating mean acceleration metrics to mean energy expenditure rather than to cumulative sums [2].

Key Experimental Findings:

- Both mean DBA and mean MSA successfully predicted mean propulsive power in 5-second intervals and complete dive phases (descent or ascent) [2].

- All relationships were linear and statistically significant [2].

- Linear mixed-effects models that included random effects for individual animals (both slope and intercept) provided the best fit for the data, indicating the importance of accounting for individual variation [2].

- Filtering and smoothing raw DBA and MSA data improved linear mixed models for 5-second interval data, though models using raw data were also strong [2].

- Using fixed-effects models on individual animals, both DBA and MSA successfully detected a known trend of increasing power use in deeper dives [2].

Another 2025 study on Atlantic bluefin tuna used VeDBA to quantify post-release activity patterns. The study tracked activity (VeDBA, in g), tailbeat amplitude, and stroke frequency, all of which stabilized at lower levels 5-9 hours after release [10].

Methodologies for Application and Validation

Standard Experimental Protocol for Marine Species

The following methodology, derived from recent studies, outlines a robust approach for applying and validating DBA metrics.

A. Animal Capture and Instrumentation:

- Subjects: Typically, wild-caught animals (e.g., California sea lions, Atlantic bluefin tuna) [2] [10].

- Tagging: Animals are instrumented with biologging tags under anesthesia (for mammals) or immediately after capture (for fish). Tags are securely attached, often to the fur on the dorsal side (marine mammals) or via an intramuscular dart (fish) [2] [10].

- Data Loggers: Tags should record pressure (depth), temperature, and tri-axial acceleration. A high sampling frequency for acceleration (e.g., 20 Hz to 30 Hz) is necessary to capture stroke cycles [2] [10].

B. Data Collection and Processing:

- Data Collection: Tags record during natural behavior (e.g., foraging dives) for periods ranging from hours to months [10].

- Axis Calibration: To account for differing tag attachment orientations, accelerometry data must be rotated using known angles to align the tag's frame of reference with the animal's body axes, making data comparable between individuals. The R package "tagtools" can be used for this [10].

- Signal Separation: For ODBA and VeDBA, the static (low-frequency, related to posture) and dynamic (high-frequency, related to movement) acceleration components must be separated. This is typically done by applying a running mean (e.g., 2-second window) to each acceleration channel to estimate static acceleration, which is then subtracted from the raw signal to derive dynamic acceleration [10].

- Metric Calculation: Calculate ODBA, VeDBA, and MSA according to the formulas given under "Metric Definitions and Formulas".

- Validation: In studies with independent measures of propulsive power (e.g., derived from hydrodynamic glide models [2]) or overall energy expenditure (e.g., from respirometry), statistical models (e.g., linear mixed-effects models) are used to test the relationship between acceleration metrics and power/energy.
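The axis calibration step above can be sketched as a rotation of each tag-frame sample into the animal's body frame. This sketch assumes the mounting offset is known as yaw, pitch, and roll angles applied in Z-Y-X order; that convention, the function names, and the example values are assumptions for illustration and do not reproduce the interface of the "tagtools" package.

```python
import math

def rotation_matrix(yaw_deg, pitch_deg, roll_deg):
    """R = Rz(yaw) * Ry(pitch) * Rx(roll), with angles given in degrees."""
    y, p, r = (math.radians(a) for a in (yaw_deg, pitch_deg, roll_deg))
    cy, sy = math.cos(y), math.sin(y)
    cp, sp = math.cos(p), math.sin(p)
    cr, sr = math.cos(r), math.sin(r)
    return [
        [cy * cp, cy * sp * sr - sy * cr, cy * sp * cr + sy * sr],
        [sy * cp, sy * sp * sr + cy * cr, sy * sp * cr - cy * sr],
        [-sp, cp * sr, cp * cr],
    ]

def tag_to_animal(sample, rotation):
    """Apply the rotation to one (x, y, z) sample recorded in the tag frame."""
    return tuple(sum(rotation[i][j] * sample[j] for j in range(3)) for i in range(3))

# Hypothetical tag mounted with a 90 degree yaw offset relative to the body axes.
R = rotation_matrix(yaw_deg=90.0, pitch_deg=0.0, roll_deg=0.0)
tag_sample = (0.0, -0.4, 1.0)            # raw reading in the tag frame (g)
animal_sample = tag_to_animal(tag_sample, R)

print(tuple(round(v, 3) for v in animal_sample))
```

Because a rotation preserves vector length, this correction changes axis-specific values and ODBA but leaves the norm of each sample unchanged.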

Essential Research Toolkit

Table 3: Essential Research Reagents and Materials

| Item / Solution | Function in Research |

|---|---|

| Tri-axial Accelerometer Datalogger | Core sensor for collecting raw acceleration, depth, and temperature data. Example: Cefas G7, Wildlife Computers MiniPAT [10]. |

| Galvanic Time Release | Mechanism to secure tag packages and release them after a predetermined period for recovery. Used in medium-term deployments [10]. |

| R Statistical Software | Primary environment for data analysis, including packages like "tagtools" for calibration and "dplyr" for data manipulation [10]. |

| Hydrodynamic Model Inputs | Animal mass, length, girth, and depth profiles used to calculate independent propulsive power for metric validation [2]. |

| Linear Mixed-Effects Models | Statistical framework to test the relationship between acceleration metrics and energy expenditure, accounting for individual animal variation [2]. |

Conceptual Workflow and Relationships

The following diagram illustrates the logical pathway from raw data collection to the final estimation of energy expenditure, highlighting the parallel paths for DBA and MSA calculation and the critical role of tag placement.

The accurate measurement of biological signals through animal-borne sensors, a practice known as biologging, has revolutionized our understanding of animal physiology, behavior, and ecology. Dynamic Body Acceleration (DBA) and other acceleration metrics have emerged as critical proxies for estimating energy expenditure and classifying behavior in free-living animals [2]. However, a fundamental challenge persists: the physical location of a tag on an animal's body introduces significant variance in the recorded signals. This variation can compromise data quality, hinder cross-study comparisons, and lead to erroneous biological interpretations if not properly accounted for.

The "placement problem" arises from fundamental biomechanical principles. Different body segments experience distinct magnitudes and patterns of acceleration during movement. A tag placed on the head will record different signals than one on the back or a limb, as each body part has unique trajectories and roles in locomotion. Understanding and quantifying this variance is therefore not merely a technical concern but a core prerequisite for valid scientific inference. This guide objectively compares the effects of tag placement across research studies, providing a structured analysis of experimental data and methodologies to inform researcher decision-making.

Comparative Analysis of Placement Effects: Experimental Data

The following tables synthesize quantitative findings from key studies, demonstrating how tag placement influences signal interpretation and model performance across different species and research applications.

Table 1: Impact of Sensor Placement on Diagnostic Model Performance in Human Gait Analysis

| Study Focus | Training Data Source (Placement) | Testing/Validation Data Source (Placement) | Key Performance Metric (Accuracy) | Implication of Placement Variance |
|---|---|---|---|---|
| Peripheral Artery Disease (PAD) Diagnosis [11] | Reflective Marker (Sacrum) | Wearable Accelerometer (Waist) | 28% | Massive drop in accuracy due to placement mismatch, despite measuring similar axial body regions. |
| PAD Diagnosis [11] | Reflective Marker (Sacrum) | Reflective Marker (Sacrum) | 92% | High accuracy when training and testing data are from the identical body location. |
| PAD Diagnosis (with Feature Engineering) [11] | Features from Marker (Sacrum) | Features from Accelerometer (Waist) | 60% | Using extracted gait features instead of raw data reduces the negative impact of placement variance. |

Table 2: Tag Placement Considerations in Animal Biologging Studies

| Research Context | Species | Tag Placement | Measured Variable | Effect of Placement on Data & Interpretation |
|---|---|---|---|---|
| Propulsive Power Validation [2] | California Sea Lions | Not Explicitly Stated | DBA, Minimum Specific Acceleration (MSA) | Emphasized that metrics are only valid if acceleration reflects the animal's center of mass. Placement is a key assumption. |
| Multi-Species Behavior Benchmark [12] | 9 Taxa | Various (Standardized per study) | Tri-axial Acceleration for Behavior Classification | Highlights the challenge of comparing models across datasets that use different, often undefined, tag placements. |
| "Bur-Tagging" Method [13] | Wild Canids, Domestic Animals | Back/Fur (via adhesive) | Multi-sensor data (Accelerometer, etc.) | Novel deployment method separates placement (targeting the back) from capture, but placement precision is lower than manual attachment. |

Detailed Experimental Protocols

To ensure reproducibility and critical evaluation, this section outlines the methodologies of key experiments cited in this guide.

Protocol 1: Validating Acceleration Metrics against Propulsive Power

- Objective: To test whether DBA and MSA can predict propulsive power at fine temporal scales (5-second intervals) in diving California sea lions [2].

- Subjects & Instrumentation: Lactating adult female California sea lions (Zalophus californianus) were captured and instrumented with dataloggers containing tri-axial accelerometers. Animal mass, standard length, and maximum circumference were recorded for subsequent biomechanical modeling [2].

- Independent Power Calculation: Propulsive power (W kg⁻¹) was calculated at 5-second intervals using hydrodynamic glide equations and modeling. This calculation was based on swim speed, depth-derived buoyancy, and animal-specific drag, providing a gold-standard reference independent of the acceleration metrics [2].

- Acceleration Metric Calculation: DBA and MSA were computed from the raw tri-axial accelerometer data for the same 5-second intervals. The researchers tested both raw and filtered/smoothed versions of the acceleration data [2].

- Statistical Analysis: Linear mixed-effects models were used to assess the relationship between the mean DBA/MSA and the mean propulsive power. The models included random effects for individual animals to account for inter-individual differences, and likelihood ratio tests were used to determine model fit [2].

Protocol 2: A Cross-Data-Type Framework for Disease Diagnosis

- Objective: To develop a model for diagnosing Peripheral Artery Disease (PAD) using lab-grade data for training and wearable sensor data for real-world implementation [11].

- Data Sources:

  - High-Precision Training Data: Acceleration signals were extracted from the 3D trajectory data of reflective markers placed on anatomical locations (e.g., sacrum, Anterior Superior Iliac Spine - ASIS) during controlled lab walking trials.

  - Real-World Validation Data: Data were collected from a wearable ActiGraph GT9X accelerometer attached to the subject's waist during overground walking.

- Model Development & Testing: The study compared several data pathways:

  - Path 1: Train and test a Long Short-Term Memory (LSTM) model using raw acceleration from reflective markers.

  - Path 2: Train an LSTM model on sacral marker data and test it on waist-worn accelerometer data.

  - Path 3: Train and test an LSTM model using only the waist-worn accelerometer data.

  - Path 4: Train a Support Vector Machine (SVM) model on features (e.g., stride time, stance time) extracted from the sacral marker data and test it on similar features from the waist-worn accelerometer data [11].

- Evaluation: Model performance was compared using accuracy and F1 scores to determine the impact of sensor placement and data type mismatch [11].
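The two evaluation scores named in this protocol can be illustrated with a short, self-contained Python sketch. It is not the code used in [11]; the function name, the binary label coding (1 = PAD, 0 = control), and the zero fallback for undefined precision or recall are assumptions made for illustration.

```python
def accuracy_and_f1(y_true, y_pred, positive=1):
    """Return (accuracy, F1) for binary labels; `positive` marks the PAD class."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == positive and p == positive)
    fp = sum(1 for t, p in pairs if t != positive and p == positive)
    fn = sum(1 for t, p in pairs if t == positive and p != positive)

    # Accuracy: share of all predictions that match the true label.
    accuracy = sum(1 for t, p in pairs if t == p) / len(pairs)

    # F1: harmonic mean of precision and recall (0.0 when undefined).
    precision = tp / (tp + fp) if (tp + fp) else 0.0
    recall = tp / (tp + fn) if (tp + fn) else 0.0
    if precision + recall == 0:
        return accuracy, 0.0
    return accuracy, 2 * precision * recall / (precision + recall)
```

Reporting both scores matters when classes are imbalanced: a model that labels every subject as a control can still reach a high accuracy while its F1 for the PAD class is zero.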

Research Workflow and Signal Variance

The following diagram illustrates the core workflow of a biologging study and key points where tag placement introduces signal variance, affecting downstream analysis and conclusions.

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 3: Key Materials and Tools for Tag Placement Research

| Item | Primary Function in Research | Example Use Case |
|---|---|---|
| Tri-axial Accelerometer | Measures acceleration in three perpendicular axes (surge, heave, sway), forming the primary data for DBA and MSA calculations [2] [12]. | Core sensor in biologgers for quantifying animal movement and behavior. |
| Inertial Measurement Unit (IMU) | Often combines an accelerometer with a gyroscope (measuring angular velocity) and sometimes a magnetometer. Provides more detailed kinematic data [14]. | Used in human clinical gait analysis and detailed animal movement studies [14]. |
| Animal-borne Bio-loggers | Housing containing sensors (e.g., accelerometer, GPS, depth sensor) designed for deployment on animals. Vary in size, weight, and attachment method [2] [13]. | The complete package deployed on study animals to collect field data. |
| Reflective Motion Capture Markers | Used in laboratory settings for high-precision, gold-standard tracking of body segment movement in 3D space [11]. | Provides reference data for validating wearable sensors or for extracting acceleration signals as done in [11]. |
| Radio Frequency Identification (RFID) Ear Tags | Primarily for animal identification, but increasingly integrated with sensors for monitoring health and location in livestock [15] [16]. | Enables large-scale monitoring in agricultural settings, with placement standardized to the ear. |
| "Bur-Tagging" System | A contact- or projection-based system for deploying tags on furred animals without capture, aiming to reduce stress [13]. | A novel method for tag deployment, though with potentially less control over final tag placement compared to manual attachment. |

The experimental data and protocols presented confirm that tag placement is a significant, non-trivial source of signal variance in biologging research. The drastic performance drop in diagnostic models when training and testing data come from different body locations underscores that measurements are not freely transferable [11]. Furthermore, the validation of acceleration metrics like DBA relies on the assumption that the sensor accurately captures acceleration near the animal's center of mass, a condition directly governed by placement [2].

Strategic Recommendations for Researchers

- Standardize and Report: Within a study, standardize tag placement across individuals. In publications, explicitly document the precise anatomical placement, attachment method, and tag orientation with diagrams or photographs whenever possible.

- Validate for Your System: The relationship between DBA and energy expenditure should not be considered universal. Researchers should conduct calibration or validation studies specific to their study species and, critically, their chosen tag placement.

- Consider Data Type: When integrating data from multiple studies or using different placements, leveraging engineered features (e.g., gait characteristics) may be more robust than using raw acceleration data, as shown in human studies [11].

- Acknowledge the "Placement Problem": Experimental design and data interpretation must explicitly consider the limitations introduced by tag location. Findings from a tag on the head may not be directly comparable to those from a tag on the back, even for the same behavioral class.

In conclusion, the placement of a biologging tag is a fundamental parameter of experimental design, not a mere implementation detail. By systematically understanding and controlling for the variance it introduces, researchers can enhance the validity, reproducibility, and interpretive power of their science.

The Impact of Tag Attachment on Animal Behavior and Data Integrity

The deployment of animal-borne tags (bio-loggers) has become a cornerstone of behavioral ecology, movement ecology, and conservation science. These devices, including accelerometers, GPS receivers, and radio frequency identification (RFID) tags, provide critical data on animal behavior, physiology, and energy expenditure [17] [12]. However, both the integrity of the data and the behavioral impact of these devices are profoundly influenced by how and where they are attached to the animal. Variations in attachment method and tag positioning can alter the acceleration signals used to infer behavior and energetics, potentially introducing systematic error that confounds ecological interpretation [18]. This review synthesizes experimental evidence on how tag attachment characteristics affect behavioral data quality, with particular focus on Dynamic Body Acceleration (DBA) metrics and their derivatives, which are widely used as proxies for energy expenditure [2] [18].

Fundamental Concepts: DBA Metrics and Their Ecological Applications

Dynamic Body Acceleration (DBA) is a scalar metric derived from tri-axial accelerometers that quantifies the dynamic component of acceleration resulting from animal movement [2]. The two primary variants are:

- Overall Dynamic Body Acceleration (ODBA): The sum of the absolute values of dynamic acceleration from three orthogonal axes [4].

- Vectorial Dynamic Body Acceleration (VeDBA): The vectorial sum of dynamic acceleration from three orthogonal axes, calculated using Pythagoras' theorem [4].

These metrics have become fundamental tools in biologging because they correlate with movement-based energy expenditure across diverse taxa [2] [18]. DBA serves as a critical proxy for propulsive power and energy expenditure in species ranging from marine mammals to terrestrial birds, enabling researchers to study energetics in free-living animals where direct calorimetry is impossible [2]. The reliability of these inferences, however, depends critically on consistent and appropriate tag attachment.
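As a minimal sketch of the two definitions above, the following Python computes ODBA and VeDBA from three axis series. It assumes that static (gravitational) acceleration is estimated with a centered running mean on each axis, which is a common choice but is not prescribed by the sources cited here; the function names and the `window` parameter (in samples) are illustrative.

```python
import math


def running_mean(values, window):
    """Centered running mean; the window shrinks at the edges of the series."""
    half = window // 2
    smoothed = []
    for i in range(len(values)):
        lo = max(0, i - half)
        hi = min(len(values), i + half + 1)
        smoothed.append(sum(values[lo:hi]) / (hi - lo))
    return smoothed


def dynamic_body_acceleration(ax, ay, az, window):
    """Return (odba, vedba) lists in g for three equal-length axis series."""
    dynamic = []
    for axis in (ax, ay, az):
        static = running_mean(axis, window)  # assumed estimate of gravity
        dynamic.append([raw - s for raw, s in zip(axis, static)])

    # ODBA: sum of absolute dynamic accelerations across the three axes.
    odba = [abs(x) + abs(y) + abs(z) for x, y, z in zip(*dynamic)]
    # VeDBA: length of the dynamic acceleration vector (Pythagoras).
    vedba = [math.sqrt(x * x + y * y + z * z) for x, y, z in zip(*dynamic)]
    return odba, vedba
```

For any sample, VeDBA cannot exceed ODBA, and the two are equal only when all dynamic acceleration lies on a single axis. The smoothing window should be chosen for the species and sampling rate at hand, and reported alongside the results.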

Experimental Evidence: Quantifying Attachment Effects on Data Integrity

Sensor Accuracy and Calibration Requirements

Fundamental inaccuracies in accelerometer sensors themselves can introduce error into DBA measurements before considering attachment factors. Laboratory tests reveal that individual acceleration axes require a two-level correction to eliminate measurement error, resulting in DBA differences of up to 5% between calibrated and uncalibrated tags in humans walking at various speeds [18].

Table 1: Impact of Accelerometer Calibration on DBA Measurement Error

| Condition | Calibration Status | DBA Error Magnitude | Experimental Context |
|---|---|---|---|
| Human walking at various speeds | Uncalibrated tags | Up to 5% difference in DBA relative to calibrated tags | Laboratory trials with defined courses |
| Human walking at various speeds | Calibrated using 6-orientation method | Accurate DBA values | Same laboratory conditions |

The 6-Orientation (6-O) calibration method involves placing motionless tags in six defined orientations (each for approximately 10 seconds) so that, in each orientation, one acceleration axis is aligned with Earth's gravity (i.e., perpendicular to the Earth's surface) and should therefore read +1 g or -1 g. This allows researchers to derive correction factors for each axis and apply a gain so that the static readings equal exactly 1.0 g [18]. This simple calibration procedure can be executed under field conditions and should be performed prior to deployments, with calibration data archived alongside the resulting datasets.
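One way to turn six motionless readings into per-axis corrections is sketched below in Python. This is a simplified offset-and-gain scheme assumed for illustration, not necessarily the exact two-level procedure used in [18]; the function names are hypothetical, and the inputs are the mean readings of each axis in the two orientations where that axis should read close to +1 g and -1 g.

```python
def six_orientation_correction(plus_g, minus_g):
    """Derive an (offset, gain) pair per axis from motionless readings.

    plus_g[i]  : mean reading of axis i in the orientation expected to give +1 g
    minus_g[i] : mean reading of axis i in the orientation expected to give -1 g
    """
    corrections = []
    for up, down in zip(plus_g, minus_g):
        offset = (up + down) / 2.0  # step 1: center the axis on zero
        gain = 2.0 / (up - down)    # step 2: scale the +/- span to exactly 2 g
        corrections.append((offset, gain))
    return corrections


def apply_correction(sample, corrections):
    """Apply the per-axis offset and gain to one (x, y, z) sample in g."""
    return [(value - offset) * gain
            for value, (offset, gain) in zip(sample, corrections)]
```

After correction, a motionless tag should report a vector norm close to 1.0 g in any orientation, which offers a quick check on archived calibration data.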

Tag Placement and Position Effects

Tag placement on the animal's body introduces substantial variation in acceleration signals, often exceeding errors from sensor inaccuracy alone. Controlled studies demonstrate that device position produces greater variation in DBA than calibration error:

Table 2: Impact of Tag Placement Position on DBA Variation

| Species | Tag Positions Compared | DBA Variation | Experimental Context |
|---|---|---|---|
| Pigeon (Columba livia) | Upper vs. lower back | 9% variation | Wind tunnel flight with simultaneous tag deployment |
| Black-legged kittiwake (Rissa tridactyla) | Back vs. tail mount | 13% variation | Field deployment on wild birds |
| Human | Back vs. waist mount | ~0.25 g variation at intermediate speeds | Treadmill running |

This position-dependent variation arises from differential movement of body segments during locomotion. In birds, for instance, the essentially immovable box-like thorax experiences pitch changes over the wingbeat cycle that affect acceleration recordings differently depending on tag position [18]. This effect is likely to be more pronounced in mammals with flexible spines, where tag position relative to the center of mass can substantially influence recorded acceleration values.

Attachment Method and Material Considerations

The physical attachment system and materials used for tags directly impact both data quality and animal welfare. Studies comparing attachment methods highlight the balance between secure mounting and minimizing behavioral impact:

Ear tags for livestock, particularly those incorporating RFID technology, have evolved significantly in material composition to address durability and animal comfort concerns. Traditional metal tags have been largely replaced by new composite materials (polymer matrices with carbon or glass fiber) that offer lightweight, high-strength alternatives with excellent corrosion resistance [15]. These advanced materials minimize the impact on animal activity patterns while maintaining data collection functionality.

Recent research on 3D-printed ear tags for virtual fencing applications in cattle demonstrates the critical importance of material selection. Finite Element Analysis (FEA) simulations and field testing revealed that Nylon 6/66 offered a 50% improvement in durability compared to high-speed resin prototypes when subjected to mechanical forces like chewing and environmental exposure [19]. This enhanced durability directly supports data integrity by maintaining consistent tag position and function over time.

Methodological Protocols for Assessing Attachment Effects

Comparative Tag Placement Experiments

To quantitatively evaluate position effects, researchers have deployed multiple tags simultaneously on individual animals. In a representative experiment with pigeons (Columba livia) flying in a wind tunnel, tags were mounted simultaneously in two positions on the back [18]. This controlled setup allowed direct comparison of acceleration signals from different body locations during identical behavioral sequences, isolating the effect of position from other variables.

Experimental Protocol:

- Equip subjects with two or more tags in different body positions

- Record synchronized acceleration data during standardized behaviors (e.g., level flight in wind tunnel)

- Calculate DBA metrics (ODBA and VeDBA) for each tag simultaneously

- Compare values statistically to quantify position-dependent variation

- Establish correction factors if necessary for cross-study comparisons
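The comparison and correction steps in this protocol can be sketched in a few lines of Python. The sketch assumes that DBA has already been computed for both tags over the same synchronized behavior bout, and it uses a simple ratio of means as the correction factor; both function names and that choice of correction are illustrative assumptions, not a method taken from [18].

```python
from statistics import mean


def percent_difference(dba_reference, dba_other):
    """Difference in mean DBA between positions, as % of the reference position."""
    ref = mean(dba_reference)
    return 100.0 * (mean(dba_other) - ref) / ref


def correction_factor(dba_reference, dba_other):
    """Multiplier that rescales the other position's mean DBA to the reference."""
    return mean(dba_reference) / mean(dba_other)
```

A single multiplicative factor only makes sense if the offset between positions is roughly proportional across activity levels, so it is worth checking the relationship over slow and fast bouts before applying it across studies.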

Field-Based Retrospective Analyses

Retrospective analyses of existing datasets with different attachment protocols provide complementary evidence from natural settings. One such analysis of red-tailed tropicbirds (Phaethon rubricauda) revealed that DBA varied by 25% between seasons when different tag generations were deployed using marginally different attachment procedures [18]. This approach highlights the challenges of attributing signal changes to a single factor when confounding influences tend to covary in field conditions.

Research Reagent Solutions: Essential Materials for Tag Attachment Studies

Table 3: Essential Research Materials for Tag Attachment Studies

| Item | Function/Application | Technical Considerations |
|---|---|---|
| Tri-axial accelerometers | Measures acceleration in 3 orthogonal axes (surge, sway, heave) | Select appropriate range (±3g to ±8g) and sampling rate (20-40 Hz) [4] [18] |
| RFID ear tags | Animal identification and tracking using radio frequency | Must comply with regulatory standards (e.g., USDA 840 standard); consider reading distance and environmental resilience [15] [20] |
| Bio-logger Ethogram Benchmark (BEBE) | Standardized dataset for comparing behavior classification methods | Includes 1654 hours of data from 149 individuals across 9 taxa; enables method validation [12] |
| Nylon 6/66 polymer | Material for 3D-printed ear tags | Offers superior durability (50% improvement over resin); balance of weight and strength [19] |
| Calibration jig | For 6-orientation accelerometer calibration | Provides precise orientation control during calibration procedure [18] |
| Finite Element Analysis software | Simulates mechanical stresses on tag designs | Predicts failure points; optimizes design before fabrication [19] |

Visualization of Experimental Workflow

The following diagram illustrates the key methodological pathways for evaluating tag attachment effects on data integrity:

The evidence synthesized in this review demonstrates that tag attachment characteristics significantly impact both animal behavior and the integrity of collected data, particularly DBA metrics used to infer energy expenditure. The interaction between tag placement, attachment method, and sensor accuracy introduces measurable variation that can confound ecological interpretation and cross-study comparisons.

To enhance data quality and methodological consistency, researchers should implement several key practices:

- Standardized pre-deployment calibration using the 6-orientation method for all accelerometers

- Transparent reporting of exact tag placement and attachment methods in publications

- Pilot studies to quantify position-specific effects for new study systems

- Material selection that balances durability, weight, and animal welfare considerations

- Utilization of shared benchmarks like BEBE for method validation and comparison

Future research should prioritize developing attachment methods that minimize both behavioral impacts and data artifacts, particularly for long-term deployments and cross-species comparative studies. Only through rigorous attention to these methodological details can we ensure the ecological validity of inferences drawn from bio-logger data.

Best Practices for Tag Deployment: From Experimental Design to Data Acquisition

In the field of biologging research, Dynamic Body Acceleration (DBA) and Minimum Specific Acceleration (MSA) have emerged as central proxies for estimating energy expenditure and propulsive power in free-living animals [2]. These metrics are derived from tri-axial acceleration data and hold the potential to provide relatively simple and affordable estimates of movement-based energy expenditure. The core premise is that 3-axis acceleration data recorded by a biologger accurately represents the acceleration of the animal’s center of mass caused predominantly by propulsive muscular effort [2].

The strategic placement of data tags on an animal's body is a critical, yet often underexplored, factor that directly influences the recorded data signatures. Tag placement affects the degree to which the accelerometer data captures whole-body movement versus localized motions, thereby impacting the validity and interpretation of DBA and MSA. This guide provides a structured comparison of tag placements on the head, back, and limbs, synthesizing experimental data and methodologies to inform researcher choices within a broader thesis on evaluating tag placement effects.

Comparative Analysis of Tag Placement Sites

The choice of tag placement involves trade-offs between minimizing animal disturbance, ensuring tag retention, and the quality and interpretability of the data collected. The following sections objectively compare three primary tag placement sites.

Head Placement

- Data Signature Characteristics: Head-mounted tags typically capture rapid, high-frequency movements associated with foraging, feeding, and sensory investigation. This placement can provide excellent data on feeding strikes and head orientation but may be less representative of overall body movement and propulsive power for locomotion.

- Considerations: The head is a sensitive area for many species. Attachment must be extremely secure yet minimally invasive to avoid affecting natural behavior. Data may contain more high-frequency "noise" from abrupt head movements unrelated to overall locomotion.

Back Placement

- Data Signature Characteristics: Placement on the dorsum, near the center of mass, is considered the gold standard for many swimming, flying, and terrestrial species [2]. It theoretically provides the most accurate representation of the animal's whole-body dynamic acceleration, as it is closest to the center of mass and is less affected by the pendulum-like motions of the head and limbs. Research on California sea lions and narwhals has validated the use of back-mounted tags for predicting propulsive power and monitoring post-release behavior [2] [21].

- Considerations: This placement is often the most stable and durable. However, in species with flexible spines or specific locomotion styles, it might not perfectly capture all power strokes. The attachment often requires more invasive procedures, such as bolt-on configurations, which have been shown to potentially affect animal behavior post-release [21].

Limb Placement

- Data Signature Characteristics: Tags on flippers, wings, or legs capture fine-scale kinematics of the specific limb, such as stroke rate, amplitude, and gait. This is invaluable for studies focused on the biomechanics of locomotion itself.

- Considerations: Limb movement is often not perfectly synchronized with the body's core acceleration. Therefore, metrics derived from limb-mounted tags may be a less reliable proxy for whole-body energy expenditure compared to back-mounted tags. Limb tags are also more susceptible to damage and may be shed during molting.

Quantitative Data Comparison

The following tables summarize key experimental findings and performance characteristics related to tag placement and the resulting data.

Table 1: Comparison of Data Characteristics by Tag Placement Site

| Placement Site | Representative Data Signature | Strengths | Limitations | Best Use Cases |
|---|---|---|---|---|
| Head | High-frequency peaks from feeding/pecking; precise orientation data. | Direct data on feeding events, gaze, and sensory behavior. | Data may correlate poorly with whole-body energy expenditure; potentially high signal noise. | Foraging ecology, feeding kinematics, sensory ecology. |
| Back | Strong correlation with propulsive power and whole-body movement [2]. | Considered best proxy for overall dynamic body acceleration and energy expenditure [2]. | May miss fine-scale limb movements; attachment can be more invasive. | Energetics studies, migration ecology, broad behavioral classification. |
| Limb | Cyclic, periodic signals corresponding to stroke or stride cycles. | Excellent for quantifying gait, stroke rate, and limb-specific kinematics. | May overestimate energy cost if limb motion is not the primary driver of overall movement. | Locomotion biomechanics, gait analysis, fine-scale behavioral studies. |

Table 2: Experimental Data on Back-Mounted Tag Performance in Marine Mammals

| Species | Metric Used | Correlation with Propulsive Power | Temporal Scale | Key Finding | Source |
|---|---|---|---|---|---|
| California Sea Lion | DBA / MSA | Linear, significant relationship | 5-second intervals & dive phases | Mean DBA and MSA predicted mean propulsive power even at fine temporal scales [2]. | [2] |
| Narwhal | VeDBA, Norm of Jerk | Used to assess post-handling recovery | Hours to days | Most individuals returned to baseline behavior (recovery) within 24 hours after release, based on accelerometry-derived behaviour [21]. | [21] |

Detailed Experimental Protocols

To ensure the validity and reproducibility of studies using accelerometry, standardizing experimental protocols is essential. The following methodologies are adapted from recent, high-quality research.

Protocol for Validating Acceleration Metrics Against Propulsive Power

This protocol is based on the work of Cole et al. as cited in the California sea lion study [2].

- Animal Instrumentation: Capture and instrument animals (e.g., lactating adult female California sea lions) under appropriate anesthesia. Attach tri-axial accelerometers securely to the dorsum to ensure the tag is positioned near the animal's center of mass.

- Data Collection: Record high-resolution tri-axial acceleration data throughout the animal's natural diving and movement cycles, so that the metrics can later be summarized over fine-scale windows (e.g., 5-second intervals).

- Independent Power Calculation: Calculate propulsive power independently using hydrodynamic glide equations and modeling. This involves using swim speed, depth, and animal morphometrics (mass, length, girth) to estimate drag and buoyancy across depth. A conversion factor is then applied to derive metabolic power input (propulsive power) from mechanical power output [2].

- Acceleration Metric Calculation: From the raw acceleration data, calculate DBA (either Overall DBA or Vectorial DBA) and MSA for the same 5-second intervals. For DBA, the dynamic acceleration is separated from the static (gravitational) acceleration using an appropriate smoothing window. MSA is calculated as the absolute value of the difference between the norm of the three acceleration axes and the magnitude of gravity (1 g) [2].

- Statistical Validation: Use linear mixed-effects models to test the relationship between the mean DBA/MSA and the mean calculated propulsive power at the chosen temporal scales (e.g., within-dive 5-second intervals and full dive phases). The models should include random effects for individual animals to account for inter-individual variation [2].
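The MSA definition in this protocol, and the averaging of a metric into fixed intervals, can be sketched in Python as follows. The function names are illustrative, acceleration is assumed to be expressed in g, and the interval length is passed in samples (for example, 5 seconds multiplied by the sampling rate); incomplete trailing intervals are dropped, which is an assumption of this sketch rather than a detail reported in [2].

```python
import math


def minimum_specific_acceleration(ax, ay, az):
    """MSA per sample, in g: |norm of the acceleration vector - 1 g|."""
    return [abs(math.sqrt(x * x + y * y + z * z) - 1.0)
            for x, y, z in zip(ax, ay, az)]


def interval_means(values, samples_per_interval):
    """Mean of each complete, non-overlapping interval of the series."""
    n_intervals = len(values) // samples_per_interval
    means = []
    for i in range(n_intervals):
        chunk = values[i * samples_per_interval:(i + 1) * samples_per_interval]
        means.append(sum(chunk) / samples_per_interval)
    return means
```

Because MSA needs no smoothing window, it avoids one analyst-dependent parameter, but as its name suggests it is a lower bound on the specific acceleration the animal produces.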

Protocol for Assessing Post-Tagging Behavioral Effects

This protocol is derived from narwhal post-release monitoring studies [21].

- Capture and Tagging: Capture study animals (e.g., narwhals) using best-practice methods. Record handling time precisely, from capture to release. Attach biologging devices (accelerometers, satellite tags) using recommended configurations (e.g., 'limpet'-style or 'bolt-on').

- Accelerometry Data Collection: Program recoverable accelerometers to record high-resolution data (e.g., three-dimensional acceleration) immediately upon release and for a sustained period (e.g., 72 hours or more).

- Derivation of Behavioral Metrics: Calculate continuous metrics from the accelerometry data to quantify behavior:

  - Activity Level: Calculate the "norm of jerk" (the square-root of the sum of squares for the differential of acceleration in all axes).

  - Energy Expenditure Proxy: Calculate Vectorial Dynamic Body Acceleration (VeDBA).

  - Swimming Activity: Extract tail-beat or stroke rate from the dynamic acceleration signals [21].

- Establish Baseline and Recovery: Define a post-release "recovery" period. Recovery time is the point at which an individual's behavioral metrics return to, and stabilize at, a long-term mean (baseline); this baseline is often estimated from data recorded well after release (e.g., beyond 36 hours, or 7-14 days post-release).

- Modeling the Effect: Use generalized additive models (GAMs) to describe changes in behavioral metrics over time post-release. Use handling time, sex, body size, and tag configuration as covariates to determine their influence on the magnitude and duration of behavioral effects [21].
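The "norm of jerk" activity metric described in this protocol can be sketched in Python as below. The sketch approximates the differential of acceleration by the difference between consecutive samples and multiplies by the sampling rate to express the result in g per second; that scaling and the function name are assumptions made here and may differ from the implementation in [21].

```python
import math


def norm_of_jerk(ax, ay, az, sampling_rate_hz):
    """Norm of jerk (g/s) between consecutive samples of three axis series."""
    jerk = []
    for i in range(1, len(ax)):
        dx = ax[i] - ax[i - 1]
        dy = ay[i] - ay[i - 1]
        dz = az[i] - az[i - 1]
        # Square root of the summed squared differences, scaled to per-second.
        jerk.append(sampling_rate_hz * math.sqrt(dx * dx + dy * dy + dz * dz))
    return jerk
```

The output has one fewer element than the input series. Because differencing removes the slowly varying gravitational component, the metric is less sensitive to tag orientation than raw acceleration.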

Research Workflow and Data Analysis

The following diagram illustrates the core workflow for a study investigating tag placement effects, from experimental design to data interpretation.

The Scientist's Toolkit: Essential Research Reagents and Materials

Successful execution of tag placement studies requires a suite of specialized tools and reagents. The following table details key items and their functions.

Table 3: Essential Materials for Tag Effect and Placement Studies

| Item | Function & Application | Example / Specification |
|---|---|---|
| Tri-axial Accelerometer Loggers | Core sensor for measuring dynamic body acceleration in three dimensions (surge, sway, heave). | Tags capable of high-frequency recording (e.g., 10-100 Hz), often integrated with other sensors. |
| Satellite Telemetry Tags | For remote tracking of animal movement and location over long durations and in inaccessible environments. | 'Bolt-on' or 'limpet'-style configurations for long-term deployment on cetaceans [21]. |
| Hydrodynamic Glide Model | Software and algorithms for the independent calculation of propulsive power from dive profiles, swim speed, and animal morphometrics [2]. | Custom scripts implementing equations for drag, buoyancy, and power conversion. |
| Linear Mixed-Effects Models | A statistical modeling framework to analyze accelerometry data, accounting for fixed effects (e.g., metric type) and random effects (e.g., individual animal) [2]. | Implemented in R using packages such as lme4 or nlme. |
| Generalized Additive Models (GAMs) | A statistical tool to model non-linear trends in behavioral recovery over time post-tagging [21]. | Implemented in R using the mgcv package. |
| Animal Handling & Anesthesia Equipment | For the safe capture, restraint, and attachment of tags, following species-specific best practices to ensure animal welfare. | Custom hoop nets, inhalation anesthesia systems (e.g., isoflurane) for marine mammals [2] [21]. |

Step-by-Step Protocol for Secure and Repeatable Tag Attachment

The accurate assessment of animal behavior through dynamic body acceleration (DBA) metrics is fundamentally dependent on the method of sensor attachment. Secure and repeatable tag placement ensures that collected data faithfully represents the animal's natural movements rather than artifacts caused by tag displacement or motion [22]. This guide objectively compares the performance of major tag attachment methodologies used in biologging science, with a specific focus on their effects on data quality, retention duration, and animal welfare. As biologging research expands to include more elusive and morphologically challenging species, such as batoids and free-flying birds, the development of standardized, minimally invasive attachment protocols becomes increasingly critical for generating comparable and valid scientific data [22] [23].

Comparative Analysis of Tag Attachment Methods

The following analysis contrasts the performance of four primary attachment methods based on data from field and captive trials. Each method presents a unique trade-off between retention security, potential for animal impact, and applicability across different species.

Table 1: Quantitative Comparison of Tag Attachment Method Performance

| Attachment Method | Mean Retention Time (Hours) | Retention Range (Hours) | Key Advantages | Key Limitations & Animal Impact |
|---|---|---|---|---|
| Spiracle Strap Suction Cup [22] | 12.1 ± 11.9 SD | 0.1 – 59.2 | Significantly increased retention; Allows placement on smooth-skinned animals. | Requires unique morphological feature (spiracle); Handling stress during attachment. |
| Standard Silicone Suction Cups [22] | < 24 (Typical) | Usually a few hours | Minimally invasive; No penetration of tissue. | Historically limited retention times; High risk of premature detachment. |
| Harness Systems [22] | Months (Potential) | N/A | Potential for long-term deployment. | Can restrict natural movements or growth; Potential for entanglement or injury. |
| Direct Anchors (Fin/Tail) [22] | Variable | N/A | Secure, rigid attachment. | Invasive, causing tissue penetration; Not ideal for fine-scale movement data. |

Table 2: Sensor Data Quality and Behavioral Impact Findings

| Assessment Metric | Spiracle Strap Suction Cup | Standard Suction Cups | Harness Systems | Direct Anchors |
|---|---|---|---|---|
| DBA Data Quality | High (fixed, rigid attachment) [22] | Moderate (can shift or detach) [22] | Variable (can impede movement) | High, but may not reflect body pitch [22] |
| Impact on Brood Provisioning | Not Reported | Not Reported | Minimal impact observed in crows [23] | Not Reported |
| Impact on Reproductive Success | Not Reported | Not Reported | Minimal impact observed in crows [23] | Not Reported |
| Post-Tagging Recovery | Required (handling stress) [22] | Required (handling stress) | Potential long-term effects | Required (handling stress) |

Experimental Protocols for Method Evaluation

Protocol: Spiracle Strap Suction Cup Attachment

This protocol was developed for whitespotted eagle rays (Aetobatus narinari) and details a method to improve retention on smooth-skinned elasmobranchs [22].

- Tag Design and Assembly: The multi-sensor tag package integrated a CATS inertial motion unit (IMU), camera, broadband hydrophone (0–22050 Hz), acoustic transmitter, and satellite transmitter. The complete package measured 24.1 x 7.6 x 5.1 cm, weighed 430 g in air, and was positively buoyant. Syntactic foam was used for flotation, and three holes were drilled to mount two passive silicone suction cups with aluminum "L" locking pins [22].

- Animal Preparation and Handling: Rays were captured from the wild. The attachment site on the anterior dorsal region was cleared of major debris. Researchers noted that elasmobranchs often require multiple hours to recover from wild capture, which must be factored into experimental timing [22].

- Attachment Procedure: The tag was positioned on the anterior dorsal region, and two silicone suction cups were secured to the skin. A critical step was securing a galvanic timed release (set for 24 h or 48 h) to rigid plastic hooks placed on the cartilage of each spiracle. This spiracle strap was identified as the key feature that significantly increased retention time [22].

- Validation and Data Collection: The tag's IMU recorded tri-axial accelerometry, gyroscope, and magnetometry at 50 Hz. Video and audio recorded foraging behavior and shell fracture acoustics, allowing for direct validation of feeding events inferred from acceleration data [22].

Protocol: MiniDTAG Harness for Avian Species

This protocol describes the deployment of a lightweight, multi-sensor device on free-living carrion crows (Corvus corone), with a focus on assessing device impact [23].

- Device Specifications: The MiniDTAG was a 12.5 g package integrating a microphone, tri-axial accelerometer, tri-axial magnetometer, and pressure sensors. It contained a 1.2 Ah lithium primary battery and a 32 GB flash memory card [23].

- Deployment and Impact Assessment: Over three breeding seasons, 52 devices were deployed. The attachment method was an auto-releasing harness. To quantitatively evaluate impact, researchers analyzed 825 hours of video from 22 crow groups, specifically measuring brood feeding rates and reproductive success for tagged versus untagged birds. The study found minimal effects on these key parameters, supporting the method's use for medium-sized birds [23].

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 3: Key Materials and Reagents for Tag Attachment Research

| Item Name | Function/Application | Specific Example / Properties |
|---|---|---|
| Multi-Sensor Biologging Tag | Core data acquisition unit for movement, video, and sound. | Customized Animal Tracking Solutions (CATS) Cam with IMU (50 Hz), camera (1080p/30fps), and hydrophone [22]. |
| Inertial Measurement Unit (IMU) | Quantifies fine-scale movements and postural kinematics. | Contains a gyroscope, magnetometer, and accelerometer (e.g., sampling at 50-200 Hz) [22]. |
| Silicone Suction Cups | Provides non-penetrating attachment to smooth surfaces. | Passive silicone cups mounted with locking pins; used for rays and marine mammals [22]. |
| Galvanic Timed Release | Ensures timed, automatic detachment of the tag. | Typically set for 24-hour or 48-hour release; critical for tag recovery and limiting animal carry time [22]. |
| Syntactic Foam | Provides customizable buoyancy for aquatic tags. | Machined to create a float package, making the tag positively buoyant in water [22]. |
| Animal-Borne Microphone | Records vocalizations and environmental/foraging sounds. | HTI-96 Min hydrophone; records at 44.1 kHz to capture sounds like shell fracture during predation [22]. |

Workflow and Decision Pathway for Method Selection

The following diagram illustrates the logical decision process for selecting an appropriate tag attachment method based on research objectives and subject morphology.

Figure: Tag Attachment Method Decision Workflow
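The decision logic can also be written down as a small rule set. The sketch below is not a reconstruction of the workflow figure; it is a hypothetical simplification built only from the trade-offs listed in Table 1. The function name, its parameters, and the 24-hour and 48-hour thresholds (taken loosely from the typical suction cup retention and the galvanic release settings) are assumptions for illustration.

```python
def suggest_attachment_method(smooth_skin: bool,
                              has_spiracles: bool,
                              target_hours: float,
                              allow_tissue_penetration: bool) -> str:
    """Return a candidate attachment method (hypothetical rule set)."""
    if target_hours <= 0:
        raise ValueError("target_hours must be positive")
    # Assumed threshold: galvanic releases in the ray protocol were set to 24 or 48 h.
    if smooth_skin and has_spiracles and target_hours <= 48:
        return "spiracle strap suction cup"
    # Assumed threshold: standard suction cups typically hold for less than 24 h.
    if smooth_skin and target_hours <= 24:
        return "standard silicone suction cups"
    # Rigid but invasive; only considered when tissue penetration is acceptable.
    if allow_tissue_penetration:
        return "direct anchor"
    # Remaining option with long-term potential; movement restriction must be checked.
    return "harness system"


# Example calls with hypothetical study requirements.
ray_choice = suggest_attachment_method(True, True, 24, False)
bird_choice = suggest_attachment_method(False, False, 24 * 30, False)
print(ray_choice)
print(bird_choice)
```

Any real selection would also weigh species-specific welfare constraints and pilot retention data, which a rule set this small cannot capture.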

The selection of a tag attachment method is a critical determinant in the quality and reliability of DBA metrics and other biologging data. Quantitative evidence demonstrates that innovations like the spiracle strap can significantly enhance the performance of traditional suction cup attachments for specific morphologies, achieving mean retention times of over 12 hours on whitespotted eagle rays [22]. Meanwhile, harness systems on avian species show that miniaturized, auto-releasing tags can collect vast datasets with minimal impact on key behavioral and reproductive metrics [23]. The continued refinement of these protocols, guided by standardized experimental evaluation and a focus on the three pillars of data security, animal welfare, and methodological repeatability, is essential for advancing the field of animal-attached sensors. Future research should focus on developing even less invasive, longer-lasting attachment techniques and further quantifying the impact of tags across a wider range of species and behaviors.

The accurate measurement of energy expenditure is fundamental to advancing our understanding of animal ecology, behavior, and physiology. Dynamic Body Acceleration (DBA) has emerged as a powerful proxy for estimating energy expenditure in free-ranging animals, leveraging data collected from animal-borne accelerometers [24]. The core premise of DBA is that acceleration due to animal movement correlates with movement-based energy costs, primarily because a significant portion of an animal's energy budget is allocated to locomotion [3] [24]. The technique's utility, however, is entirely dependent on robust calibration procedures that relate raw acceleration signals to known activity levels and validated energy costs, typically measured via oxygen consumption rates [3] [24].

Calibration is not merely a preliminary step but a critical process that defines the validity and applicability of all subsequent energetic inferences. It transforms device-specific acceleration units (m/s²) into physiologically meaningful rates of energy expenditure (Watts or O₂ consumption) [25]. This guide objectively compares the key calibration methodologies, their experimental protocols, and the factors influencing their accuracy, providing a foundational resource for researchers designing studies within the broader context of tag effects on DBA metrics.
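As a rough illustration of the unit conversion described above, the following sketch applies a linear calibration to a DBA value and then converts the resulting oxygen consumption rate to watts. The function names and the calibration coefficients are hypothetical, and the oxycaloric equivalent of about 20.1 J per mL O₂ is an assumed general-purpose value that varies with metabolic substrate; none of these numbers come from the cited studies.

```python
# Assumed oxycaloric equivalent: roughly 20.1 J released per mL of O2 consumed.
# The true value depends on the metabolic substrate; treat it as a placeholder.
JOULES_PER_ML_O2 = 20.1


def dba_to_vo2(dba_ms2: float, intercept: float, slope: float) -> float:
    """Apply a linear calibration: VO2 (mL O2/min) = intercept + slope * DBA."""
    return intercept + slope * dba_ms2


def vo2_to_watts(vo2_ml_per_min: float) -> float:
    """Convert an O2 consumption rate (mL O2/min) to metabolic power (W = J/s)."""
    return vo2_ml_per_min * JOULES_PER_ML_O2 / 60.0


# Hypothetical calibration coefficients, for illustration only.
vo2 = dba_to_vo2(dba_ms2=2.0, intercept=10.0, slope=25.0)
power = vo2_to_watts(vo2)
print(f"VO2 = {vo2:.1f} mL O2/min, power = {power:.2f} W")
```

In practice the intercept and slope come from the calibration trials themselves, and they are specific to the species, tag placement, and DBA metric used to fit them.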

Core Calibration Methodologies: A Comparative Analysis

Different calibration approaches have been developed to suit various research questions, species, and logistical constraints. The primary methodologies include laboratory respirometry, allometric calibration, and the emerging video-based DBA technique.

Table 1: Comparison of Primary DBA Calibration Methods

| Method | Core Principle | Key Experimental Protocol | Data Output | Key Advantages | Key Limitations |
|---|---|---|---|---|---|
| Laboratory Respirometry [24] | Simultaneously measures DBA and oxygen consumption rate ($\dot{V}O_2$) under controlled conditions to establish a predictive relationship. | Animals instrumented with accelerometers are placed in a respirometer and subjected to controlled activities (e.g., treadmill running, flume swimming). $\dot{V}O_2$ is measured via indirect calorimetry. | Linear or non-linear calibration equation converting DBA (m/s²) to metabolic power (W) or $\dot{V}O_2$. | Considered the gold standard; provides direct, individualized calibration. | Logistically challenging; requires animal captivity; may not reflect full natural behavioral repertoire. |
| Allometric Calibration [25] | Uses established allometric equations for resting and locomotion costs as a substitute for empirical respirometry to create a calibration. | Acceleration and behavioral data are collected from free-ranging animals. Locomotion speed is estimated from sensor data. Allometric equations (e.g., Kleiber's law) provide energy expenditure estimates for calibration. | Calibration equation derived from allometric estimates, applied to DBA data to estimate Daily Energy Expenditure (DEE) in joules. | Bypasses need for difficult lab respirometry; allows retrospective analysis. | Relies on generalized equations that may not capture individual variation; may underestimate total DEE [25]. |
| Video-Based DBA [3] [26] | Uses marker-less video tracking and 3D reconstruction to compute DBA, which is then calibrated against oxygen consumption. | Fish are recorded in a respirometer with multiple cameras. 3D posture is reconstructed (e.g., using DeepLabCut), and acceleration is derived from positional data before calibration with $\dot{V}O_2$ [3]. | Calibration equation for converting video-derived DBA to $\dot{V}O_2$. | Non-invasive; applicable to very small animals where loggers are impractical; captures naturalistic movements. | Currently limited to controlled laboratory environments with clear camera views. |
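To make the allometric approach more concrete, the sketch below evaluates Kleiber's law in its classic form for mammals, a basal metabolic rate of about 70 × M^0.75 kcal per day for body mass M in kilograms, and converts the result to watts. This is only the resting term, and the function names are hypothetical. The equations actually used in [25], including those for locomotion costs, may differ, and other taxa generally need other coefficients.

```python
KCAL_TO_J = 4184.0          # joules per kilocalorie
SECONDS_PER_DAY = 86400.0


def kleiber_bmr_watts(mass_kg: float) -> float:
    """Basal metabolic rate from Kleiber's law (70 * M^0.75 kcal/day), in watts."""
    if mass_kg <= 0:
        raise ValueError("mass_kg must be positive")
    kcal_per_day = 70.0 * mass_kg ** 0.75
    return kcal_per_day * KCAL_TO_J / SECONDS_PER_DAY


def resting_energy_joules(mass_kg: float, hours: float) -> float:
    """Energy spent at the basal rate over a given number of hours, in joules."""
    return kleiber_bmr_watts(mass_kg) * hours * 3600.0


# Example: a hypothetical 16 kg mammal resting for one day.
bmr_w = kleiber_bmr_watts(16.0)
daily_j = resting_energy_joules(16.0, 24.0)
print(f"BMR = {bmr_w:.2f} W, resting energy over 24 h = {daily_j / 1000:.0f} kJ")
```

Because the exponent is 0.75, a 16-fold increase in mass raises the predicted basal rate only 8-fold, which is why mass-specific rates fall with body size.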

Detailed Experimental Protocols for Key Methods

Protocol for Laboratory Respirometry Calibration

The laboratory respirometry protocol is designed to elicit a range of activity levels while simultaneously measuring acceleration and energy expenditure [3] [24].

- Instrumentation: The subject animal is fitted with a tri-axial accelerometer logger, ensuring secure attachment to minimize movement artifacts. The attachment method (e.g., harness, collar, adhesive) should be species-appropriate and not impede natural movement [18].

- Acclimation: The animal is acclimated to the respirometry chamber (e.g., a flume for aquatic species or a treadmill enclosure for terrestrial animals) to reduce stress.

- Experimental Trials: The subject is exposed to a series of controlled conditions, typically including:

  - Resting Measurements: To establish baseline metabolic rate.

  - Controlled Activity: Incrementally increasing exercise intensity (e.g., flow speed in a flume, or belt speed or incline on a treadmill).

- Data Collection:

  - Acceleration: Raw acceleration data is recorded at a high frequency (e.g., 10–50 Hz) throughout the trials.

  - Oxygen Consumption: The rate of oxygen consumption ($\dot{V}O_2$) is measured via indirect calorimetry, often using intermittent flow respirometry. The oxygen concentration in the respirometer is monitored, and $\dot{V}O_2$ is calculated from the decline in O₂ during measurement periods, corrected for background respiration [3].

- Data Processing and Model Fitting:

  - DBA Calculation: From the raw acceleration, static acceleration (gravity) is separated from dynamic acceleration (movement) using a smoothing filter. Vectorial Dynamic Body Acceleration (VeDBA) or Overall DBA (ODBA) is then calculated [2].

  - Calibration: A statistical model (often linear mixed-effects) is fitted with $\dot{V}O_2$ as the response variable and DBA as the predictor. Individual animal identity is often included as a random effect to account for inter-individual variation [2].
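The data processing steps above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not the pipeline used in the cited studies. The static component is estimated with a centered running mean whose window length is a study-specific choice. ODBA is the sum of the absolute dynamic components and VeDBA is their vector norm. For brevity, an ordinary least-squares line stands in for the mixed-effects model, so it ignores the random effect for individual identity. All function names and the example numbers are hypothetical.

```python
import math


def running_mean(values, window):
    """Centered running mean; the window shrinks at the edges of the series."""
    half = window // 2
    n = len(values)
    out = []
    for i in range(n):
        lo, hi = max(0, i - half), min(n, i + half + 1)
        out.append(sum(values[lo:hi]) / (hi - lo))
    return out


def dynamic_body_acceleration(ax, ay, az, window):
    """Return per-sample (ODBA, VeDBA) lists from raw tri-axial acceleration."""
    dyn = []
    for axis in (ax, ay, az):
        static = running_mean(axis, window)  # smoothed signal approximates gravity
        dyn.append([raw - s for raw, s in zip(axis, static)])
    odba = [abs(x) + abs(y) + abs(z) for x, y, z in zip(*dyn)]
    vedba = [math.sqrt(x * x + y * y + z * z) for x, y, z in zip(*dyn)]
    return odba, vedba


def fit_line(x, y):
    """Ordinary least squares for y = intercept + slope * x (simplified stand-in)."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxx = sum((xi - mx) ** 2 for xi in x)
    sxy = sum((xi - mx) * (yi - my) for xi, yi in zip(x, y))
    slope = sxy / sxx
    return my - slope * mx, slope


# Synthetic check: a motionless tag that records only gravity on the z axis
# should yield dynamic acceleration of zero, up to floating-point rounding.
n = 50
odba, vedba = dynamic_body_acceleration([0.0] * n, [0.0] * n, [9.81] * n, window=11)

# Hypothetical per-trial means (not real data): mean VeDBA vs. measured VO2.
mean_vedba = [0.5, 1.0, 1.5, 2.0]
vo2 = [20.0, 30.0, 40.0, 50.0]
intercept, slope = fit_line(mean_vedba, vo2)
print(f"VO2 = {intercept:.1f} + {slope:.1f} * VeDBA")
```

With data from several animals, the same relationship would normally be fitted with a mixed-effects routine so that each individual gets its own random intercept, as the protocol describes.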