Beyond Habitat Maps: Integrating Movement Ecology and Advanced Modeling for Effective Wildlife Corridor Design

This article provides a comprehensive guide for researchers and conservation professionals on integrating habitat suitability modeling with corridor design to address habitat fragmentation.

Abstract

This article provides a comprehensive guide for researchers and conservation professionals on integrating habitat suitability modeling with corridor design to address habitat fragmentation. It explores the foundational principles of connectivity and habitat fragmentation, compares advanced modeling methodologies from species distribution models to circuit theory, and addresses critical challenges such as model overfitting and the gap between habitat suitability and actual animal movement. The content emphasizes robust validation techniques and the synthesis of model ensembles to enhance predictive accuracy. Concluding with future directions, the article serves as a strategic framework for developing effective, evidence-based conservation corridors that support species persistence under changing environmental conditions.

The Bedrock of Connectivity: Understanding Habitat Suitability and Fragmentation

Defining Habitat Suitability and Its Critical Role in Conservation Planning

Habitat Suitability (HS) is defined as the capacity of a habitat to support a viable population of a specific species over an ecological time-scale [1]. It represents a measure of how well a particular environment provides the necessary biotic and abiotic factors to meet a species' needs for survival, reproduction, and overall population persistence [2] [3]. In the specific context of corridor design research, understanding habitat suitability is foundational, as corridors function as conduits facilitating animal movement and gene flow between fragmented habitat patches [4].

The concept exists on a spectrum, where habitats can be classified from highly suitable (offering optimal conditions) to marginally suitable (allowing for survival but not thriving populations), to entirely unsuitable (lacking critical resources) [2]. A robust, scientifically-grounded definition moves beyond simple measures of species presence to assess the functional availability of resources and the ecological context within a dynamic landscape [2].

Core Parameters and Quantitative Assessment

The assessment of habitat suitability relies on quantifying key environmental variables that influence a species' distribution and persistence. These factors are typically categorized as biotic (living) and abiotic (non-living), and their relative importance varies by species and ecosystem.

Table 1: Fundamental Factors Influencing Habitat Suitability

| Factor Category | Specific Variables | Role in Suitability Assessment |

|---|---|---|

| Abiotic Factors | Topography (elevation, slope), Climate (temperature, precipitation), Distance to Water, Soil Composition | Determines the physical and chemical conditions a species can tolerate [2] [3]. |

| Biotic Factors | Vegetation Type and Structure, Prey Availability, Presence of Predators/Competitors | Provides essential resources for food, shelter, and breeding [2] [4]. |

| Anthropogenic Factors | Land Use/Land Cover (LULC), Proximity to Roads, Human Population Density | Measures the degree of human impact, which often reduces suitability through habitat loss and disturbance [3] [5]. |

The synthesis of these factors results in a Habitat Suitability Index (HSI), which is a numerical value, typically ranging from 0 to 1, where 0 represents completely unsuitable habitat and 1 represents optimal conditions [3] [5]. This index can be mapped and classified for conservation planning.

Table 2: Example Habitat Suitability Classification from a Wildlife Sanctuary Study

| Suitability Class | Percentage of Study Area | Implication for Conservation |

|---|---|---|

| Highly Suitable | 18.9% | Priority areas for protection and core corridor nodes. |

| Suitable | 19.5% | Important for landscape connectivity and buffer zones. |

| Moderately Suitable | 19.9% | Potential targets for habitat restoration efforts. |

| Less Suitable | 19.5% | Limited value; may require significant intervention. |

| Unsuitable | 22.2% | Areas to be avoided in corridor planning or targeted for long-term restoration [3] [6]. |

Methodological Protocols for Habitat Suitability Modeling in Corridor Design

GIS-Based Multi-Criteria Decision Making (MCDM)

Application Note: This protocol is ideal for creating foundational habitat suitability maps in data-limited scenarios, providing a critical first step for identifying potential corridor locations [3].

Workflow:

- Data Collection: Acquire spatial datasets for key factors (Table 1). Essential data includes:

- Digital Elevation Model (DEM) for topography and slope.

- Land Use/Land Cover (LULC) data from satellite imagery (e.g., Landsat).

- Layers for human disturbance (road networks, population density).

- Distance-to-water sources layers [3].

- Factor Standardization: Reclassify all factor layers to a common scale (e.g., 1-5 or 0-1) based on their perceived or known positive/negative influence on habitat suitability.

- Weight Assignment using AHP: Use the Analytical Hierarchy Process (AHP) to assign weights to each factor based on its relative importance. This involves creating a pairwise comparison matrix and calculating a consistency ratio to ensure logical judgment [3].

- Suitability Index Calculation: Perform a Weighted Linear Combination (WLC) in a GIS environment. The HSI is calculated using the formula:

$$\mathrm{HSI} = \sum_{i} \mathrm{Weight}_i \times \mathrm{ScaledValue}_i$$

where the sum is taken across all factors.

- Map Classification: Classify the continuous HSI raster into distinct suitability classes (e.g., Unsuitable to Highly Suitable) using a method like quantile classification for final mapping and analysis [3] [6].
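The weighting, combination, and classification steps above can be sketched in a few lines of Python. This is a minimal illustration rather than the cited study's implementation: the three factors, the pairwise judgments, and the random 4 x 4 rasters are hypothetical stand-ins, and the function names are invented for this example. The random consistency index values are Saaty's standard published figures, listed here only for matrices of up to seven factors.

```python
import numpy as np

# Saaty's random consistency index, keyed by the number of factors.
RANDOM_INDEX = {1: 0.0, 2: 0.0, 3: 0.58, 4: 0.90, 5: 1.12, 6: 1.24, 7: 1.32}


def ahp_weights(pairwise):
    """Return (weights, consistency_ratio) for a reciprocal pairwise matrix."""
    a = np.asarray(pairwise, dtype=float)
    n = a.shape[0]
    eigvals, eigvecs = np.linalg.eig(a)
    k = np.argmax(eigvals.real)                 # index of the principal eigenvalue
    w = np.abs(eigvecs[:, k].real)
    w = w / w.sum()                             # normalise weights to sum to 1
    ci = (eigvals[k].real - n) / (n - 1) if n > 2 else 0.0
    cr = ci / RANDOM_INDEX[n] if RANDOM_INDEX[n] > 0 else 0.0
    return w, cr


def weighted_linear_combination(layers, weights):
    """HSI = sum_i weight_i * scaled_value_i, evaluated per raster cell."""
    stack = np.stack(layers, axis=0)            # shape: (n_factors, rows, cols)
    return np.tensordot(weights, stack, axes=1)


def quantile_classes(hsi, n_classes=5):
    """Assign each cell a class from 1 (lowest) to n_classes (highest)."""
    breaks = np.quantile(hsi, np.linspace(0, 1, n_classes + 1)[1:-1])
    return np.digitize(hsi, breaks) + 1


# Hypothetical judgments: vegetation vs. distance to water vs. distance to roads.
pairwise = [[1, 3, 5],
            [1 / 3, 1, 3],
            [1 / 5, 1 / 3, 1]]
weights, cr = ahp_weights(pairwise)

rng = np.random.default_rng(42)
layers = [rng.random((4, 4)) for _ in range(3)]  # stand-ins for rasters scaled to 0-1
hsi = weighted_linear_combination(layers, weights)
classes = quantile_classes(hsi)
print(weights.round(3), round(cr, 3))
```

A consistency ratio below 0.1 is the conventional threshold for accepting the pairwise judgments; if the ratio is higher, the comparison matrix is usually revised before the weights are used.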

Movement-Based Corridor Identification

Application Note: This protocol addresses a key limitation of traditional habitat suitability models, which predict where animals can live but may not identify the routes they actually use to travel. It is recommended for validating and refining corridor designs predicted by MCDM or other habitat-focused approaches [4].

Workflow:

- GPS Data Collection: Fit target species with high-resolution GPS collars programmed to record locations at frequent intervals (e.g., every 15 minutes) over the study period [4].

- Behavioral Classification: Analyze movement tracks to identify "corridor behavior." This is defined by two primary metrics:

- Upper Percentile of Speeds: Identifying segments where animals move quickly.

- Lower Percentile of Turning Angles: Identifying segments with near-parallel, directed movement [4].

- Spatial Definition of Corridors: Calculate the occurrence distribution (e.g., using dynamic Brownian bridge movement models) exclusively from the locations classified as corridor behavior. Define contiguous areas of the 95% occurrence distribution as formal corridor polygons [4].

- Validation against Habitat Models: Compare the spatially explicit, animal-defined corridors with the habitat suitability map generated from the MCDM protocol. Research indicates there may be no significant difference in habitat suitability values between the used corridors and their immediate surroundings, highlighting that corridors are not merely spatial bottlenecks of high suitability but are driven by movement efficiency [4].
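The behavioral classification step can be illustrated with a short Python sketch. The source describes the analysis with the R package move; the version below is an independent simplification in NumPy, not that package's API. The 75th and 25th percentile cut-offs are assumed defaults (the text specifies only "upper" and "lower" percentiles), and the synthetic track is invented for illustration.

```python
import numpy as np


def classify_corridor_behavior(x, y, t, speed_pct=75, turn_pct=25):
    """Flag steps that are both fast and nearly straight.

    x, y: projected coordinates in metres; t: timestamps in seconds.
    Returns one boolean per turning angle (i.e., per interior fix).
    """
    x, y, t = (np.asarray(v, dtype=float) for v in (x, y, t))
    dx, dy, dt = np.diff(x), np.diff(y), np.diff(t)
    speed = np.hypot(dx, dy) / dt                        # metres per second per step
    heading = np.arctan2(dy, dx)
    turn = np.diff(heading)
    turn = np.abs((turn + np.pi) % (2 * np.pi) - np.pi)  # absolute turn in [0, pi]
    step_speed = speed[1:]                               # speed of the step after each turn
    fast = step_speed >= np.percentile(step_speed, speed_pct)
    straight = turn <= np.percentile(turn, turn_pct)
    return fast & straight


# Synthetic track: 30 fixes of slow wandering, then 10 fixes of fast directed travel.
rng = np.random.default_rng(0)
wander = np.cumsum(rng.normal(0.0, 5.0, size=(30, 2)), axis=0)
x = np.concatenate([wander[:, 0], wander[-1, 0] + 200.0 * np.arange(1, 11)])
y = np.concatenate([wander[:, 1], np.full(10, wander[-1, 1])])
t = np.arange(40) * 900.0                                # one fix every 15 minutes
flags = classify_corridor_behavior(x, y, t)
print(int(flags.sum()), "steps classified as corridor behavior")
```

Only the fixes flagged here would be passed on to the occurrence-distribution step; estimating that distribution (for example with a dynamic Brownian bridge movement model) is outside the scope of this sketch.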

Table 3: Key Research Reagent Solutions for Habitat Suitability Modeling

| Tool/Resource | Function/Application | Specifications & Considerations |

|---|---|---|

| GPS Telemetry Collars | High-resolution tracking of animal movement for empirical corridor identification and model validation. | Select based on fix interval, battery life, and drop-off mechanism. Accuracy is critical for fine-scale movement analysis [4]. |

| GIS Software (e.g., ArcGIS, QGIS) | Platform for spatial data management, analysis, and map production. Essential for running MCDM and visualizing HSI outputs. | Requires capabilities for raster calculation, reclassification, and spatial analyst tools [3]. |

| Landsat/Sentinel Satellite Imagery | Primary data source for deriving Land Use/Land Cover (LULC) maps and monitoring landscape change over time. | 30m resolution (Landsat) provides a good balance between spatial and temporal coverage for landscape-scale studies [3]. |

| Digital Elevation Model (DEM) | Provides topographic variables (elevation, slope, aspect) which are key abiotic factors in HSM. | Resolution (e.g., 12.5 m ALOS PALSAR, 30 m SRTM or ASTER GDEM) should match the scale of the research question [3]. |

| R/Python with Specialized Libraries | Statistical computing and scripting for advanced analyses, including running AHP, creating species distribution models, and movement analysis. | Key libraries: move for movement data [4], GDAL for spatial data, NumPy and Pandas for data manipulation [5]. |

Integrated Workflow for Corridor Design Research

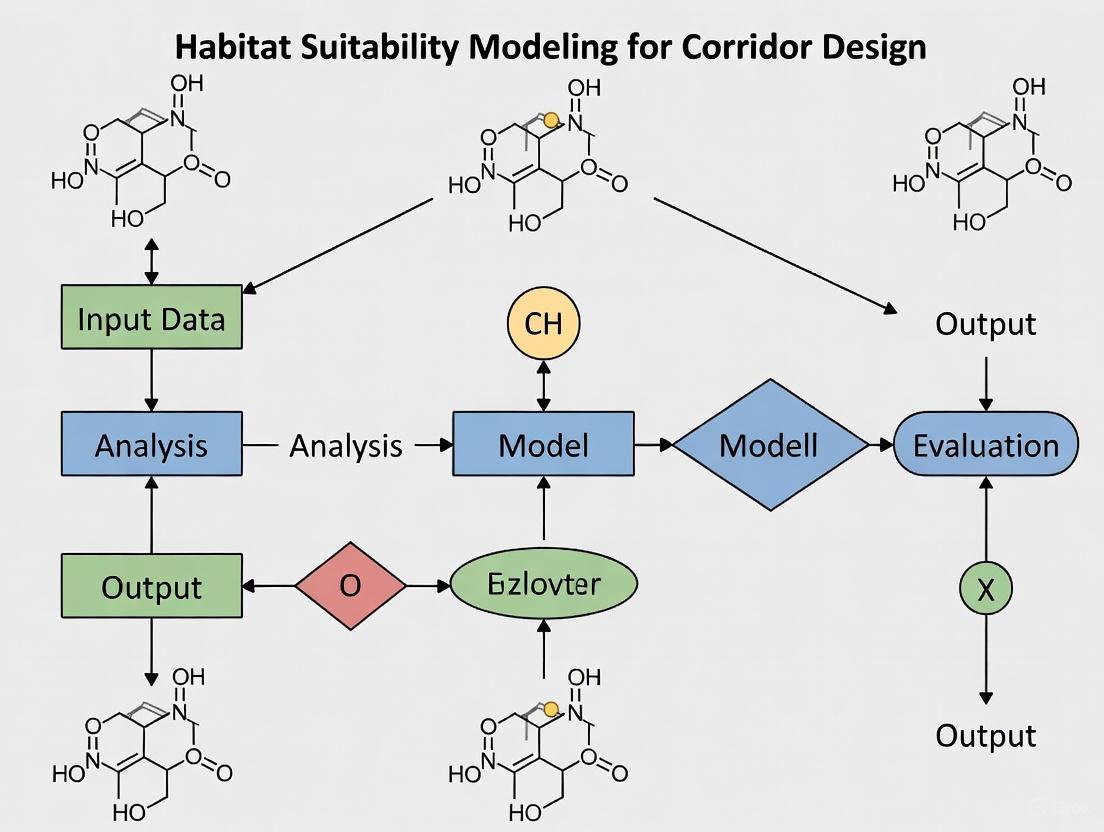

The following diagram illustrates the synergistic integration of habitat suitability modeling and movement data analysis to inform robust corridor design.

The Impacts of Habitat Fragmentation and Barrier Effects on Population Viability

Habitat fragmentation, the process by which extensive habitats are subdivided into smaller, isolated patches, is a primary driver of global biodiversity loss [7] [8]. This phenomenon introduces barrier effects that disrupt species movement, gene flow, and ecological processes, ultimately compromising population viability [9] [10]. Within research focused on modeling habitat suitability for corridor design, understanding these impacts is fundamental. Corridors aim to mitigate fragmentation by reconnecting landscapes, but their effective design requires a precise understanding of how fragmentation influences population persistence. This document provides detailed application notes and experimental protocols to standardize the assessment of fragmentation impacts on population viability, providing researchers with robust methods to generate data essential for effective conservation corridor planning.

Quantitative Foundations of Fragmentation Impacts

A synthesis of long-term fragmentation experiments across multiple biomes and continents provides compelling quantitative evidence of its effects. The following table summarizes key consolidated findings on how fragmentation reduces biodiversity and impairs ecosystem functions [8].

Table 1: Measured Effects of Habitat Fragmentation from Experimental Studies

| Ecological Metric | Impact of Fragmentation | Notes and Context |

|---|---|---|

| Overall Biodiversity | Reductions of 13% to 75% | The most severe effects are observed in the smallest and most isolated fragments. |

| Ecosystem Function | Decreased biomass; altered nutrient cycles. | Effects intensify as time since fragmentation increases. |

| Animal Movement & Dispersal | Reduced movement among fragments; decreased recolonization after local extinction. | A result of increased isolation and the barrier effect of the intervening matrix. |

| Species Abundance | Generally reduced for birds, mammals, insects, and plants. | Complex patterns; some species may increase due to release from competition or predation. |

| Ecological Processes | Reduced seed predation and other species interactions. | Driven by disrupted plant-pollinator mutualisms and predator-prey interactions [9]. |

The sensitivity to fragmentation varies significantly among species. The table below contrasts the responses of specialist and generalist species, a critical consideration for predicting population viability and prioritizing conservation efforts [9].

Table 2: Specialist vs. Generalist Species Sensitivity to Fragmentation

| Trait | Specialist Species | Generalist Species |

|---|---|---|

| Pollination Syndrome | Often specialist (e.g., sexually deceptive orchids). | Typically generalist, utilizing multiple pollen vectors. |

| Response to Isolation | Highly sensitive; significant decline in reproductive success (e.g., capsule set). | More resilient; reproduction less affected by patch isolation. |

| Key Limiting Factors | Pollination limitation; complex habitat and landscape-scale interactions. | Primarily habitat-scale variables (e.g., bare ground cover). |

| Extinction Risk | Higher, especially for obligate seeders in fragmented habitats. | Lower, buffered against potential pollinator losses. |

Integrated Habitat-Population Viability Modeling Protocol

Effective corridor design requires integrating projections of habitat change with models of population viability. The following workflow outlines a standardized protocol for linking these components, drawing on advanced methodologies from recent conservation research [11].

Figure 1: Integrated workflow for linking habitat dynamics and population viability analysis.

Protocol: Integrated Habitat and PVA Modeling

Application: This protocol is designed to project the long-term viability of species in fragmented landscapes under various habitat management and corridor design scenarios. It is particularly useful for species dependent on successional habitats, such as the Florida scrub-jay [11].

I. Habitat Dynamics Modeling

- Objective: To project future changes in habitat extent, quality, and configuration.

- Steps:

- Habitat Classification: Map current habitat based on ecological requirements of the focal species (e.g., for Florida scrub-jay: optimal oak scrub, suboptimal closed forest, unsuitable urban). Use field surveys and remote sensing [11] [12].

- Transition Modeling: Model future habitat states using Adaptive Resource Management (ARM) frameworks or state-transition models. Key drivers include vegetation succession, natural disturbances (e.g., fire), and managed disturbances (e.g., mechanical clearing) [11].

- Spatially Explicit Outputs: Generate maps of projected habitat quality over a 50-100 year time horizon.

II. Population Viability Analysis (PVA) Setup

- Objective: To model population dynamics and estimate extinction risk.

- Steps:

- Demographic Parameterization: Collect species-specific vital rates (age-/stage-specific survival and fecundity). Critically, link these rates to habitat quality (e.g., lower fecundity in suboptimal habitat) [11].

- Metapopulation Structure: Define the model's spatial structure based on the fragmented landscape. Identify discrete subpopulations occupying individual habitat patches.

- Dispersal Rules: Parameterize dispersal rates and distances between subpopulations. This is the core parameter influenced by corridor design. Use empirical data where available.

- Genetic Considerations: For long-term viability, configure the model to track genetic metrics, such as the retention of >95% of source population genetic diversity to avoid inbreeding depression [11].

III. Model Integration and Scenario Evaluation

- Objective: To assess viability under different future scenarios.

- Steps:

- Link Models: Input the projected habitat maps from Step I into the PVA model from Step II. This ensures demographic rates and carrying capacities change over time as the habitat changes.

- Run Simulations: Execute multiple stochastic simulations (e.g., 1,000 iterations) for each management scenario.

- Key Output Metrics:

- Probability of extinction or quasi-extinction over 100 years.

- Final metapopulation size and trend.

- Percent of genetic diversity retained.

- Compare Scenarios: Evaluate the efficacy of different corridor designs, habitat restoration efforts, and population translocations in improving viability metrics [11].
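To make the simulation and scenario-comparison steps concrete, the following Python sketch runs a deliberately simplified stochastic metapopulation model. It is not RAMAS GIS or VORTEX and does not reproduce the cited study: it assumes a ceiling form of density dependence, lognormal environmental noise, Poisson demographic noise, and equal sharing of dispersers among patches. All parameter values, and the function name itself, are hypothetical.

```python
import numpy as np


def simulate_metapopulation(n0, capacity, growth_rate, dispersal, years=100,
                            iterations=1000, env_sd=0.3, quasi_ext=10, seed=0):
    """Return (quasi-extinction probability, mean final metapopulation size)."""
    rng = np.random.default_rng(seed)
    n0 = np.asarray(n0, dtype=float)
    capacity = np.asarray(capacity, dtype=float)
    n_patches = n0.size
    extinct = 0
    final_sizes = np.empty(iterations)
    for it in range(iterations):
        n = n0.copy()
        hit_threshold = False
        for _ in range(years):
            # Environmental stochasticity: one lognormal shock per patch per year.
            r = growth_rate * np.exp(rng.normal(0.0, env_sd, n_patches))
            expected = np.minimum(n * r, capacity)     # ceiling density dependence
            n = rng.poisson(expected).astype(float)    # demographic stochasticity
            if n_patches > 1:
                # A fixed fraction leaves each patch and is split among the others.
                emigrants = dispersal * n
                n = n - emigrants + (emigrants.sum() - emigrants) / (n_patches - 1)
            if n.sum() < quasi_ext:
                hit_threshold = True
                break
        extinct += hit_threshold
        final_sizes[it] = n.sum()
    return extinct / iterations, final_sizes.mean()


# Two hypothetical scenarios that differ only in dispersal (the corridor effect).
isolated = simulate_metapopulation([40, 40, 40], [60, 60, 60], 1.05,
                                   dispersal=0.0, iterations=200)
connected = simulate_metapopulation([40, 40, 40], [60, 60, 60], 1.05,
                                    dispersal=0.1, iterations=200)
print("isolated :", isolated)
print("connected:", connected)
```

In a full analysis, `capacity` and `growth_rate` would vary by year and patch according to the projected habitat maps, and dispersal would depend on distance and corridor quality rather than being a single constant.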

The Scientist's Toolkit: Key Reagents & Materials

Table 3: Essential Research Tools for Fragmentation and Viability Analysis

| Tool / Solution | Function in Research | Example Application / Note |

|---|---|---|

| GIS Software & Spatial Data | Core platform for mapping habitats, quantifying fragmentation metrics, and designing corridors. | Used to calculate patch size, isolation, edge-to-area ratio, and landscape connectivity indices [9] [12]. |

| RAMAS GIS, VORTEX | Specialist software for building Population Viability Analysis (PVA) models. | VORTEX is an individual-based model that tracks demography and genetics; custom-built models may provide higher quality results [13]. |

| Lidar & DEM Data | Provides high-resolution digital elevation models to derive landscape metrics. | Metrics like slope, distance to shore, and elevation are proxies for abiotic stressors and can predict habitat distributions [12]. |

| Satellite Imagery & AI Platforms | Enables large-scale, high-resolution land-use and habitat classification. | Platforms like Earth Index use AI to map fine-scale microhabitats, overcoming limitations of coarse public land cover data [14]. |

| Field Data: Mark-Recapture, Telemetry | Provides empirical data on survival, reproduction, and dispersal for PVA parameterization. | Critical for grounding models in reality; dispersal data is essential for validating corridor use [11]. |

| Hand Pollination Trial Kits | Experimental method to test for pollination limitation in fragmented plant populations. | Used to demonstrate that fragmentation can reduce reproductive success independent of resource limitation [9]. |

Experimental Protocol: Measuring Pollination Limitation

Application: This field experiment protocol quantifies one of the key indirect effects of fragmentation—reduced pollinator service—which directly impacts plant population viability [9]. The results can inform corridor design for plant-pollinator networks.

I. Experimental Design and Site Selection

- Objective: To test the hypothesis that habitat fragmentation causes pollination limitation.

- Treatment Groups: For each target plant species, establish two treatments at multiple study sites:

- Control Group: Flowers are left for open pollination.

- Hand-Pollination Group: Flowers receive supplemental pollen.

- Site Selection: Select study sites that vary in key fragmentation metrics, such as patch size, isolation (distance to nearest large patch), and habitat quality (e.g., bare ground cover, weed invasion) [9].

II. Field Methods and Data Collection

- Procedure:

- Tagging: Tag a sufficient number of individual plants and flower buds at each site prior to anthesis.

- Hand-Pollination: When stigmas are receptive, apply ample pollen from donors located at least 5-10 meters away. Use a fine brush to simulate pollen deposition by natural vectors.

- Monitoring: Protect treated flowers from damage and monitor until fruit set.

- Data Recording: For both treatment and control flowers, record the final outcome: successful capsule set (fruit) or abscission.

- Key Covariates: Measure and record site-specific variables for later modeling: population size of the target plant, patch area, PA ratio (perimeter-to-area ratio), and percent bare ground [9].

III. Data Analysis and Interpretation

- Analysis:

- Compare the capsule set ratio (proportion of flowers that set fruit) between hand-pollinated and control flowers using a statistical test like a Chi-squared test.

- Use generalized linear mixed models (GLMM) to analyze how capsule set in control flowers is influenced by fragmentation variables (e.g., isolation, population size, bare ground) [9].

- Interpretation: A significantly higher capsule set in the hand-pollination group indicates pollination limitation. If this limitation is correlated with increasing isolation or decreasing patch size, it provides strong evidence that fragmentation is the cause. This data is critical for modeling the viability of plant populations in fragmented settings.
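The treatment comparison described above reduces to a test on a 2 x 2 table of capsule-set counts. The sketch below computes a Pearson chi-squared statistic without continuity correction using only the Python standard library; the counts are invented for illustration, and the function name is hypothetical. For one degree of freedom the p-value follows directly from the complementary error function.

```python
import math


def chi2_2x2(set_hand, total_hand, set_open, total_open):
    """Pearson chi-squared test (no continuity correction) on a 2 x 2 table.

    Assumes every row and column total is greater than zero.
    """
    observed = [[set_hand, total_hand - set_hand],
                [set_open, total_open - set_open]]
    row = [sum(r) for r in observed]
    col = [observed[0][j] + observed[1][j] for j in range(2)]
    n = sum(row)
    chi2 = 0.0
    for i in range(2):
        for j in range(2):
            expected = row[i] * col[j] / n
            chi2 += (observed[i][j] - expected) ** 2 / expected
    p_value = math.erfc(math.sqrt(chi2 / 2.0))   # chi-squared survival function, 1 d.f.
    return chi2, p_value


# Hypothetical counts: 30 of 50 hand-pollinated flowers and 15 of 50 open-pollinated
# flowers set capsules.
chi2, p = chi2_2x2(30, 50, 15, 50)
print(f"chi2 = {chi2:.2f}, p = {p:.4f}")
```

With small samples or expected counts below about five, an exact test is generally preferred, and flowers nested within plants and sites call for the mixed-model approach noted in the analysis step.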

Core Conceptual Definitions

Landscape Connectivity is the degree to which a landscape facilitates or impedes movement among resource patches. It is a fundamental property influencing ecological processes such as dispersal, gene flow, and species responses to climate change. Connectivity is not solely a function of the landscape's physical structure but emerges from the interaction between this structure and the behavioral response of organisms moving through it [15]. Maintaining connected landscapes is critical for allowing wildlife to find food and shelter, migrate seasonally, establish new territories, and maintain healthy populations through genetic exchange [15].

A Habitat Corridor is a specific, spatially delineated pathway that connects two or more habitat patches and is distinct from the surrounding matrix in its composition and structure. Corridors are linear landscape elements designed to facilitate movement. The Washington Habitat Connectivity Action Plan (WAHCAP), for instance, identifies "Connected Landscapes of Statewide Significance" (CLOSS) as broad pathways that connect major ecological regions [15].

Landscape Permeability refers to the quality of the landscape matrix (the areas between core habitat patches) to allow for animal movement. It is a measure of how easily an organism can move across a landscape, influenced by factors such as vegetation cover, topography, and human land use. Permeability is often described in a diffuse sense, where "working lands provide diffuse landscape permeability for wildlife," as opposed to a defined corridor [15].

Quantitative Parameters for Modeling and Analysis

The analysis of connectivity, corridors, and permeability relies on quantifiable spatial metrics. The table below summarizes key parameters used in habitat suitability modeling for corridor design.

Table 1: Key Quantitative Parameters for Connectivity Modeling

| Parameter Category | Specific Metric | Description and Application |

|---|---|---|

| Landscape Structure Metrics | Patch Density & Size [15] | Measures habitat fragmentation; smaller, more numerous patches indicate higher fragmentation. |

| Landscape Structure Metrics | Edge Contrast [15] | Quantifies the difference between a habitat patch and its surrounding matrix, influencing edge effects. |

| Landscape Structure Metrics | Spatial Autocorrelation [15] | Assesses the degree to which a spatial phenomenon is correlated with itself across space, identifying clusters of habitat. |

| Connectivity Value Metrics | Network Importance [15] | A value quantifying a specific area's role in maintaining the integrity of the entire habitat network. |

| Connectivity Value Metrics | Landscape Permeability Score [15] | A modeled value representing the ease with which animals can move through a pixel or area of the landscape. |

| Connectivity Value Metrics | Climate Connectivity [15] | The capacity of landscapes to facilitate species movement in response to shifting climate conditions. |

| Synthesis Metrics | Landscape Connectivity Values [15] | A composite layer synthesizing multiple input metrics (e.g., 10 used in WAHCAP) to map and quantify connectivity significance across a region. |

| Synthesis Metrics | Landscape Connectivity Hot Spots [15] | Areas identified from the composite values layer with a high density of multiple connectivity functions and values. |

Experimental Protocol: Habitat Suitability and Connectivity Analysis

This protocol follows a methodology originally developed to predict disease spread in wild boar populations; the same framework can be adapted for general corridor design [16].

Study Area Definition and Species Occurrence Data Collection

- Define the Geographic Scope: Clearly delineate the boundaries of the study area (e.g., Northern Italy) [16].

- Compile Species Occurrence Data: Gather geo-referenced data on species presence. This can include:

- Direct field observations.

- GPS tracking data.

- Records of carcass locations (particularly relevant for disease studies) [16].

Environmental Predictor Variable Processing

- Select Relevant Variables: Acquire spatial layers for environmental variables known to influence the target species' habitat selection. These often include:

- Land cover and land use types.

- Vegetation indices (e.g., NDVI).

- Topographic variables (elevation, slope).

- Climate data.

- Distance to human features (roads, settlements) [16].

- Standardize Spatial Resolution: Process all raster layers to a consistent spatial resolution and extent to ensure compatibility for modeling.

Habitat Suitability Modeling using Species Distribution Models (SDMs)

- Model Implementation: Use statistical or machine learning algorithms to correlate species occurrence data with environmental predictor variables. Common algorithms include MaxEnt, Random Forest, or Generalized Linear Models.

- Model Validation: Validate the model's predictive performance using withheld data (e.g., k-fold cross-validation) and calculate performance metrics such as Area Under the Curve (AUC) [16].

- Suitability Map Generation: The model output is a raster map where each pixel value represents the predicted habitat suitability. This map is the basis for the resistance surface used in the connectivity analysis [16].
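The validation step can be illustrated without committing to a particular SDM algorithm. The Python sketch below shows only the two generic pieces: splitting records into k folds and scoring predictions with AUC, computed here through its rank-based (Mann-Whitney) definition. The function names, labels, and scores are hypothetical; in practice the scores would come from a model fitted on each training fold.

```python
import random


def k_fold_indices(n_records, k=5, seed=0):
    """Yield (train, test) index lists for k-fold cross-validation."""
    idx = list(range(n_records))
    random.Random(seed).shuffle(idx)
    folds = [idx[i::k] for i in range(k)]
    for i in range(k):
        train = [j for f in folds[:i] + folds[i + 1:] for j in f]
        yield train, folds[i]


def auc_score(labels, scores):
    """AUC as the probability that a presence outscores an absence (ties count half)."""
    pos = [s for l, s in zip(labels, scores) if l == 1]
    neg = [s for l, s in zip(labels, scores) if l == 0]
    if not pos or not neg:
        raise ValueError("AUC needs at least one presence and one absence record")
    wins = sum((p > q) + 0.5 * (p == q) for p in pos for q in neg)
    return wins / (len(pos) * len(neg))


# Hypothetical withheld records: 1 = presence, 0 = absence or background point.
labels = [1, 1, 0, 0]
scores = [0.9, 0.4, 0.6, 0.1]
auc = auc_score(labels, scores)
print("AUC:", auc)
for train, test in k_fold_indices(10, k=5):
    print(sorted(test))
```

An AUC of 0.5 corresponds to random ranking and 1.0 to perfect separation; spatially blocked folds are often preferred over random folds when occurrence records are clustered.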

Landscape Connectivity Analysis

- Resistance Surface Creation: The habitat suitability map is inverted or transformed into a "resistance" surface, where higher suitability values correspond to lower resistance to movement [16].

- Circuit Theory or Least-Cost Path Analysis: Use connectivity algorithms to model movement pathways.

- Circuit Theory: Tools like Circuitscape model landscape connectivity as an electrical circuit, where current flow represents the probability of movement. This is effective for predicting multiple potential dispersal corridors [16].

- Least-Cost Path Analysis: Identifies the single path between two locations that minimizes the cumulative travel cost.

- Delineate Corridors: The model outputs a map of predicted dispersal corridors and connectivity pathways [16].
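The resistance transformation and least-cost path steps can be sketched in plain Python. This is an illustrative simplification, not Circuitscape or Linkage Mapper: it assumes a linear inversion of suitability with an arbitrary maximum resistance of 100, eight-neighbor movement, and Dijkstra's algorithm on a tiny hypothetical grid. Circuit-theory current flow is not covered here.

```python
import heapq
import math


def suitability_to_resistance(suitability, max_resistance=100.0):
    """Linear inversion: suitability 1 maps to resistance 1, suitability 0 to the maximum."""
    return [[1.0 + (1.0 - s) * (max_resistance - 1.0) for s in row]
            for row in suitability]


def least_cost_path(resistance, start, goal):
    """Dijkstra over an 8-connected grid; returns (cumulative cost, list of cells)."""
    rows, cols = len(resistance), len(resistance[0])
    best = {start: 0.0}
    parent = {}
    heap = [(0.0, start)]
    while heap:
        cost, cell = heapq.heappop(heap)
        if cell == goal:
            break
        if cost > best[cell]:
            continue                                   # stale queue entry
        r, c = cell
        for dr in (-1, 0, 1):
            for dc in (-1, 0, 1):
                nr, nc = r + dr, c + dc
                if (dr or dc) and 0 <= nr < rows and 0 <= nc < cols:
                    # Step cost: distance times the mean resistance of the two cells.
                    step = math.hypot(dr, dc) * (resistance[r][c] + resistance[nr][nc]) / 2.0
                    new_cost = cost + step
                    if new_cost < best.get((nr, nc), math.inf):
                        best[(nr, nc)] = new_cost
                        parent[(nr, nc)] = cell
                        heapq.heappush(heap, (new_cost, (nr, nc)))
    path = [goal]
    while path[-1] != start:
        path.append(parent[path[-1]])
    return best[goal], path[::-1]


# Hypothetical suitability grid: a low-suitability block between start and goal.
suitability = [[0.9, 0.9, 0.9, 0.9, 0.9],
               [0.9, 0.1, 0.1, 0.1, 0.9],
               [0.9, 0.1, 0.1, 0.1, 0.9]]
resistance = suitability_to_resistance(suitability)
cost, path = least_cost_path(resistance, start=(2, 0), goal=(2, 4))
print(round(cost, 2), path)
```

The choice of transformation matters: a linear inversion is only one option, and negative exponential forms are also used when animals are thought to tolerate moderately unsuitable habitat while moving.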

Validation and Prioritization

- Ground-Truthing: Validate model predictions using independent data, such as:

- GPS tracking data from collared animals.

- Camera trap records.

- Direct observation of animal signs.

- For disease models, the location of confirmed positive cases can serve as validation [16].

- Priority Identification: Synthesize model outputs to identify a hierarchy of conservation actions. For example, the WAHCAP framework identifies "Connected Landscapes of Statewide Significance" (CLOSS) and "Priority Zones" for road barrier mitigation based on synthesized connectivity values and safety data [15].

Advanced Computational Protocol

For high-resolution analysis of complex landscapes, advanced computational methods can be employed.

High-Resolution Semantic Segmentation

- Framework: Implement a novel deep learning framework for high-resolution semantic segmentation of complex visual environments (cities, rural areas, natural landscapes). This integrates:

- Conic Geometric Embeddings: A mathematical approach for capturing hierarchical spatial relationships and context without heavy reliance on post-hoc positional encoding [17].

- Belief-Aware Learning: Introduces probabilistic belief distributions over latent structures, allowing predictions to reflect multiple plausible configurations and improving interpretability [17].

- Model Architecture: Build the model on a hybrid Vision Transformer (ViT) backbone trained end-to-end using adaptive optimization [17].

- Multi-Scale Refinement: Implement a mathematically guided coarse-to-fine fusion within the conic embedding space to ensure semantic consistency across scales and improve boundary accuracy [17].

Training and Evaluation

- Training Datasets: Train the model on benchmark datasets such as EDEN, OpenEarthMap, and Cityscapes [17].

- Performance Metrics: Evaluate model performance using metrics including Accuracy, R², Root Mean Square Error (RMSE), and mean Intersection over Union (mIoU). The proposed model has achieved 88.94% Accuracy on EDEN and 73.21% mIoU on OpenEarthMap, outperforming previous baselines [17].
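For readers unfamiliar with the segmentation metrics named above, the following Python sketch computes overall accuracy and mean Intersection over Union from a confusion matrix. It is a generic illustration on a tiny hypothetical label map and is unrelated to the cited model's code; the function name is invented for this example.

```python
import numpy as np


def accuracy_and_miou(y_true, y_pred, n_classes):
    """Return (overall accuracy, mean IoU) for integer class maps."""
    y_true = np.asarray(y_true).ravel()
    y_pred = np.asarray(y_pred).ravel()
    # Confusion matrix: rows are true classes, columns are predicted classes.
    cm = np.bincount(n_classes * y_true + y_pred,
                     minlength=n_classes ** 2).reshape(n_classes, n_classes)
    intersection = np.diag(cm)
    union = cm.sum(axis=0) + cm.sum(axis=1) - intersection
    valid = union > 0                       # skip classes absent from both maps
    iou = intersection[valid] / union[valid]
    return intersection.sum() / cm.sum(), iou.mean()


# Hypothetical 2 x 3 land-cover maps with three classes (0, 1, 2).
truth = [[0, 0, 1],
         [1, 2, 2]]
prediction = [[0, 1, 1],
              [1, 2, 0]]
accuracy, miou = accuracy_and_miou(truth, prediction, n_classes=3)
print(round(float(accuracy), 3), round(float(miou), 3))
```

Because mIoU averages over classes, it penalizes errors on rare land-cover types more heavily than overall accuracy does, which is relevant when narrow habitat features are the target.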

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Materials and Analytical Tools for Connectivity Research

| Tool / Solution | Function / Application |

|---|---|

| Species Distribution Modeling (SDM) Software (e.g., MaxEnt, R packages dismo, sdm) | Statistical platforms for developing habitat suitability models by correlating species occurrence data with environmental predictors. |

| Connectivity Analysis Tools (e.g., Circuitscape, Linkage Mapper) | Specialized software for modeling landscape connectivity using circuit theory or least-cost path algorithms to delineate corridors. |

| Geographic Information System (GIS) (e.g., ArcGIS, QGIS) | The primary platform for managing, processing, and visualizing spatial data, including environmental layers and model outputs. |

| Remote Sensing Imagery (Satellite, Aerial, UAV/drone) | Provides high-resolution data on land cover, vegetation, and topography, forming the base layers for habitat and permeability analysis [17]. |

| Global Positioning System (GPS) Collars | Used to collect telemetry data on animal movements, which is crucial for validating model-predicted corridors and understanding species-specific movement behavior. |

| Landscape Connectivity Values Layer | A synthesized spatial data product that integrates multiple metrics (e.g., ecosystem connectivity, permeability) to quantify connectivity significance across a region [15]. |

| High-Performance Computing (HPC) Cluster | Essential for processing large geospatial datasets and running computationally intensive models like deep learning semantic segmentation [17]. |

| Camera Traps | Provide non-invasive ground-truthing data for species presence and movement through potential corridor areas. |

Integrating Species Requirements with Landscape Structure for Effective Corridor Design

Habitat fragmentation is a primary driver of global biodiversity loss, impeding species movement, genetic exchange, and adaptive responses to climate change [18] [19]. Effective ecological corridor design addresses this threat by strategically reconnecting fragmented landscapes. This process requires the integration of robust habitat suitability models (HSMs) with structural connectivity analysis to create functional linkages that serve multiple species and ecological processes. The adoption of the Post-2020 Kunming-Montreal Global Biodiversity Framework has further emphasized the urgent need to protect and monitor habitat connectivity, setting clear targets for conservation action [18] [20]. This protocol provides a standardized framework for integrating species-specific habitat requirements with landscape structure analysis to design effective ecological corridors, supporting both biodiversity conservation and climate resilience planning.

Theoretical Foundation

Defining Connectivity for Conservation

Ecological connectivity exists in two complementary forms: structural connectivity, which describes the physical configuration and spatial arrangement of habitat patches in a landscape; and functional connectivity, which reflects how effectively a landscape facilitates or impedes movement for specific organisms [18] [19]. Effective corridor design must address both dimensions, ensuring that physically connected habitats also function as viable movement pathways for target species.

The distinction is critical: a landscape may exhibit high structural connectivity while providing poor functional connectivity for species with specific habitat requirements or limited dispersal capabilities [19]. Conversely, functional connectivity may be maintained through a permeable matrix or stepping stones even when habitats are not physically contiguous [19].

The Role of Habitat Suitability Modeling

Habitat suitability models (HSMs), also referred to as species distribution models (SDMs), provide the ecological foundation for corridor design by quantifying species-environment relationships [21] [22]. These models identify areas likely to support persistent populations based on environmental covariates including bioclimatic conditions, topography, vegetation structure, and soil properties [21]. Advanced modeling approaches now incorporate fine-scale behavioral data to differentiate between habitats suitable for different activities (e.g., foraging versus resting), significantly enhancing the ecological relevance of corridor placement [22].

Quantitative Connectivity Metrics for Conservation Planning

Selecting appropriate metrics is essential for quantifying connectivity status, prioritizing conservation actions, and monitoring progress toward targets. The following table summarizes key connectivity indicators aligned with the Essential Biodiversity Variables framework and suitable for multispecies assessments.

Table 1: Key Connectivity Metrics for Corridor Design and Monitoring

| Metric Category | Specific Indicators | Application Context | Interpretation |

|---|---|---|---|

| Patch-Level Connectedness | Proximity index, Euclidean nearest neighbor [18] [19] | Rapid assessment of habitat isolation | Higher values indicate lower isolation and better connectivity |

| Habitat-Network Connectivity | Probability of Connectivity (PC), Graph theory metrics [18] [19] | Evaluating functional connectivity networks | Measures landscape permeability and inter-patch movement potential |

| Metapopulation Persistence | Metapopulation capacity [18] | Assessing long-term species viability | Estimates potential for population persistence in fragmented landscapes |

| Protected Area Networks | ProNet metric [20] | Tracking performance of area-based conservation | Simple, communicable measure of protected network connectivity |

These metrics enable a comprehensive evaluation of connectivity that informs different aspects of conservation planning, from identifying critical fragmentation points to assessing the long-term viability of species populations [18].
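To make the habitat-network row of the table concrete, the Probability of Connectivity is commonly written as PC = Σ_i Σ_j a_i a_j p*_ij / A_L², where a_i is a patch attribute such as area, p*_ij is the maximum product probability over all paths between patches i and j, and A_L is the total landscape area. The sketch below is a minimal pure-Python illustration of that formula. The three patches, their distances, and the negative-exponential dispersal kernel p_ij = exp(-k d_ij) are assumptions chosen for illustration, not values from the cited studies or the Conefor implementation.

```python
import math

def probability_of_connectivity(areas, dist, landscape_area, k):
    """PC index, assuming a negative-exponential dispersal kernel exp(-k * d)."""
    n = len(areas)
    # Direct dispersal probabilities; a patch is fully connected to itself.
    p = [[1.0 if i == j else math.exp(-k * dist[i][j]) for j in range(n)]
         for i in range(n)]
    # Maximum-product path probabilities (Floyd-Warshall style update),
    # so stepping-stone routes can outperform direct dispersal.
    for m in range(n):
        for i in range(n):
            for j in range(n):
                via = p[i][m] * p[m][j]
                if via > p[i][j]:
                    p[i][j] = via
    total = sum(areas[i] * areas[j] * p[i][j]
                for i in range(n) for j in range(n))
    return total / landscape_area ** 2

# Hypothetical landscape: patch areas in ha, inter-patch distances in km.
areas = [100.0, 50.0, 80.0]
dist = [[0, 2, 10],
        [2, 0, 3],
        [10, 3, 0]]
k = math.log(2) / 2.0  # assumes a 50% dispersal probability at 2 km
pc = probability_of_connectivity(areas, dist, landscape_area=1000.0, k=k)
print(f"PC = {pc:.4f}")
```

In this toy landscape the middle patch acts as a stepping stone: the indirect route between the two outer patches has a higher probability than direct dispersal over 10 km, which is the behavior the metric is meant to capture.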

Integrated Methodological Framework: A Step-by-Step Protocol

The following workflow outlines a comprehensive protocol for integrating species requirements with landscape structure to design effective ecological corridors.

Step 1: Define Focal Species and Ecological Requirements

- Ecoprofile Development: Select focal species representing diverse habitat needs and movement capabilities within the target landscape. Employ either a multiple focal species approach (modeling connectivity separately for species with diverse traits) or construct ecoprofiles where a single species represents the needs of a functional group [18]. For example, a study in the St-Lawrence Lowlands effectively used seven ecoprofile species to represent regional forest habitat needs [18].

- Dispersal Parameterization: Compile species-specific data on dispersal distances, movement barriers, and matrix permeability through literature review, expert consultation, or empirical studies [19].

Step 2: Data Collection and Preprocessing

- Species Occurrence Data: Gather validated occurrence records from national biodiversity portals (e.g., InfoSpecies), global databases (GBIF), and systematic field surveys [21] [23]. Apply spatial filtering to mitigate sampling bias [21].

- Environmental Covariates: Compile raster databases encompassing bioclimatic, topographic, edaphic, land use/cover, and hydrological variables at appropriate resolutions (e.g., 25m for fine-scale planning) [21]. The SDMapCH database utilized 877 candidate covariates, demonstrating the comprehensive data required for robust modeling [21].

Step 3: Habitat Suitability Modeling

- Model Selection and Fitting: Implement ensemble modeling approaches using multiple algorithms (e.g., Random Forest and MaxEnt, run through platforms such as biomod2) to predict habitat suitability across the study area [24] [23]. For flatback turtles, Random Forest HSMs successfully incorporated behavior-specific data, revealing distinct habitat selection patterns for foraging versus resting [22].

- Model Validation: Employ state-of-the-art cross-validation procedures and systematic data integrity checks to ensure model reliability [21].

Step 4: Landscape Resistance Surface Creation

- Resistance Parameterization: Transform habitat suitability predictions into resistance surfaces where low-suitability areas receive high resistance values [19]. Incorporate species-specific knowledge of barrier effects and matrix permeability.

- Expert Validation: Refine resistance values through expert opinion or empirical movement data where available [19].
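The suitability-to-resistance transformation in Step 4 can be sketched in a few lines. Two options are shown: a linear inversion and a negative-exponential inversion that assigns high resistance only to the least suitable cells. The resistance range (1 to 100), the shape parameter `c`, and the small suitability grid are assumptions for illustration; appropriate values are species-specific and should come from expert input or movement data, as noted above.

```python
import math

def linear_resistance(h, r_max=100.0):
    """Map suitability h in [0, 1] to resistance in [1, r_max] linearly."""
    return r_max - (r_max - 1.0) * h

def exponential_resistance(h, c=4.0, r_max=100.0):
    """Negative-exponential inversion: resistance drops steeply as h rises from 0.

    The shape parameter c is a hypothetical tuning value; larger c means
    only very poor habitat receives high resistance.
    """
    scaled = (1.0 - math.exp(-c * h)) / (1.0 - math.exp(-c))
    return r_max - (r_max - 1.0) * scaled

# Hypothetical 3 x 3 suitability grid (0 = unsuitable, 1 = optimal).
suitability = [[0.9, 0.5, 0.1],
               [0.7, 0.2, 0.0],
               [1.0, 0.6, 0.3]]
resistance = [[round(exponential_resistance(h), 2) for h in row]
              for row in suitability]
for row in resistance:
    print(row)
```

Both functions return 1 for optimal habitat and `r_max` for fully unsuitable habitat; they differ only in how intermediate suitability is treated, which is exactly the choice that expert validation is meant to inform.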

Step 5: Connectivity Analysis and Corridor Delineation

- Connectivity Modeling: Apply circuit theory (e.g., Circuitscape) or least-cost path analysis to identify potential movement corridors and pinch points [19]. Graph-based methods (e.g., Conefor) can quantify connectivity metrics for habitat networks [19].

- Multispecies Integration: Overlay connectivity results for multiple focal species to identify priority corridors serving diverse ecological functions [18].
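One simple way to overlay connectivity results for several focal species is to rescale each species' map to a common 0-1 range and take a weighted mean per cell. The sketch below assumes that approach; the species names, grid values, and equal default weights are hypothetical, and other overlay rules (for example, counting the species for which a cell ranks highly) are equally defensible.

```python
def rescale(grid):
    """Min-max rescale a grid to the 0-1 range."""
    flat = [v for row in grid for v in row]
    lo, hi = min(flat), max(flat)
    span = (hi - lo) or 1.0  # avoid division by zero on constant grids
    return [[(v - lo) / span for v in row] for row in grid]

def multispecies_priority(maps, weights=None):
    """Weighted mean of rescaled per-species connectivity maps."""
    names = list(maps)
    weights = weights or {name: 1.0 for name in names}
    total_weight = sum(weights[name] for name in names)
    scaled = {name: rescale(maps[name]) for name in names}
    rows, cols = len(maps[names[0]]), len(maps[names[0]][0])
    return [[sum(weights[n] * scaled[n][r][c] for n in names) / total_weight
             for c in range(cols)]
            for r in range(rows)]

# Hypothetical current-density maps for two focal species on the same grid.
maps = {
    "marten": [[0, 2], [4, 8]],
    "newt": [[10, 10], [30, 20]],
}
priority = multispecies_priority(maps)
print(priority)
```

Rescaling first keeps a species with large raw values from dominating the overlay; the weights argument allows planners to emphasize species of particular conservation concern.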

Step 6: Climate Change Integration (Optional)

- Climate Projections: Incorporate future climate scenarios to model shifts in habitat suitability and identify climate-resilient corridors [21] [23]. For Bergenia stracheyi in the Himalayas, ensemble models predicted significant habitat expansion under severe climate change scenarios (RCP8.5), highlighting the need for dynamic conservation planning [23].

- Climate-Gradient Corridors: Design corridors that facilitate species range shifts along elevation or latitudinal gradients [19].

Step 7: Conservation Priority Zoning and Implementation

- Priority Classification: Integrate suitability and connectivity outputs to classify areas into conservation priority zones. A framework for medicinal plant Bletilla striata effectively delineated five zones: core, enhancement, consolidation, buffering, and general zones [24].

- Implementation Planning: Develop specific management recommendations for each zone, including protection, restoration, and monitoring strategies [18].

Table 2: Essential Computational Tools and Data Resources for Corridor Design

| Tool/Resource Category | Specific Examples | Primary Function | Application Context |

|---|---|---|---|

| Species Data Platforms | GBIF, InfoSpecies [21] | Provides species occurrence records | Foundation for habitat suitability modeling |

| Environmental Data Repositories | SWECO25, CHclim25 [21] | High-resolution environmental covariates | Predictor variables for habitat models |

| Modeling Software | N-SDM, biomod2, MaxEnt [21] [24] | Habitat suitability modeling | Predicting species distributions |

| Connectivity Analysis Tools | Conefor, Circuitscape, Reconnect R-tool [18] [19] | Graph theory and circuit theory analysis | Modeling landscape connectivity and corridor identification |

| Connectivity Metrics | ProNet, Metapopulation Capacity [18] [20] | Quantifying connectivity for monitoring | Assessing conservation effectiveness and tracking targets |

Advanced Considerations and Future Directions

Incorporating Fine-Scale Behavioral Data

Emerging approaches leverage multi-sensor biologging devices (accelerometers, magnetometers, animal-borne video) to derive behavior-specific habitat suitability models [22]. For instance, incorporating fine-scale resting and foraging behaviors of flatback turtles revealed distinct habitat selection patterns that would be obscured in conventional HSMs [22]. This provides crucial context for designing corridors that support essential life history processes.

Dynamic Connectivity and Climate Adaptation

Static corridor designs may become ineffective under climate change as species ranges shift. Climate-wise connectivity expands traditional concepts by incorporating directional and dynamic perspectives, connecting current habitats with future climate refugia [19]. Techniques include modeling connectivity under future climate scenarios, identifying corridors along climate gradients, and protecting areas of climatic stability [19] [23].

Monitoring and Adaptive Management

Implement monitoring programs to track connectivity changes using selected indicators over time [18]. The Reconnect R-tool provides a framework for rapid assessment of connectivity change, enabling adaptive management in response to landscape transformations [18]. Monitoring is essential for evaluating conservation effectiveness and reporting progress toward global biodiversity targets [18] [20].

This protocol provides a comprehensive framework for integrating species ecological requirements with landscape structure to design effective ecological corridors. By combining advanced habitat suitability modeling with multispecies connectivity analysis, conservation planners can identify priority areas that maintain and restore functional connectivity in human-transformed landscapes. The standardized methodologies, quantitative metrics, and specialized tools outlined here support the implementation of evidence-based corridor design that addresses both current conservation needs and future climate challenges, contributing directly to the achievement of global biodiversity targets.

Climate change is an irreversible force profoundly affecting wildlife habitat suitability and connectivity, posing a significant threat to global biodiversity [25]. Ecological corridors, defined as "clearly defined geographical spaces that are governed and managed over the long term to maintain or restore effective ecological connectivity," serve as vital lifelines between fragmented core habitats [26]. Traditional corridor design often relies on static environmental snapshots, but contemporary climate projections indicate that species distributions are shifting, often toward higher latitudes and elevations [25] [27]. Future-proofing these corridors requires integrating climate change scenarios into the planning process to ensure their functionality over decades. This application note provides researchers and conservation professionals with structured protocols and analytical frameworks for building climate resilience into ecological connectivity projects, directly supporting strategic goals like the EU Biodiversity Strategy 2030 [26].

Core Concepts and Rationale

Habitat fragmentation, caused by both climate change and human activities, is a primary driver of biodiversity loss, creating isolated populations that are more vulnerable to local extinction [26]. Ecological networks, composed of core areas and connecting corridors, counteract this fragmentation by facilitating essential movement, genetic exchange, and range shifts in response to environmental change [26].

The imperative for future-proofing stems from the accelerating pace of climate change. For instance, a study on the Amur tiger found that while suitable habitat may expand under most future climate scenarios, the centroid of highly suitable areas is projected to shift, necessitating the adaptation of corridor networks [25]. Similarly, amphibians, due to their limited mobility and physiological sensitivity, are particularly vulnerable to climate-driven habitat contraction, highlighting the critical need for proactive corridor planning that accounts for future range shifts [27]. Failure to incorporate these dynamics risks investing in conservation infrastructure that may become obsolete within decades.

Quantitative Data Synthesis

The following tables consolidate key quantitative findings from recent habitat suitability and corridor research, providing a basis for projecting climate change impacts.

Table 1: Projected Changes in Suitable Habitat Area Under Climate Change

| Species | Region | Current Suitable Habitat (km²) | Future Projection (Time Period/Scenario) | Projected Change | Primary Climate Drivers |

|---|---|---|---|---|---|

| Amur Tiger (Panthera tigris altaica) [25] | Northeastern Asia | ~4,942 | Future (SSP scenarios) | Expansion under most scenarios; centroid shift | Not Specified |

| Micromeria serbaliana (Plant) [28] | Saint Catherine Protectorate, Egypt | - | 2041-2060 | Slight Expansion | Mean Temp. of Wettest Quarter (Bio8), Aridity |

| Bufonia multiceps (Plant) [28] | Saint Catherine Protectorate, Egypt | - | 2041-2080 | Moderate Expansion | Isothermality (Bio3), Elevation |

| Amphibians [27] | Mount Emei, China | - | 2055-2085 (High Emission) | Decline, especially in lowlands | Precipitation, Solar Radiation, NDVI |

Table 2: Key Environmental Variables for Habitat Suitability Modeling

| Variable Category | Specific Variables | Application Example |

|---|---|---|

| Climate [25] [27] [29] | Bio1 (Annual Mean Temperature), Bio12 (Annual Precipitation), Bio8 (Mean Temp. of Wettest Quarter), Solar Radiation | Primary drivers for projecting species range shifts under future climates. |

| Topography [25] [28] [27] | Elevation, Slope, Aspect | Influences species distribution and provides refugia; critical for mountainous areas. |

| Vegetation/Habitat [25] [27] | NDVI, EVI, Net Primary Production (NPP), Land Use/Land Cover | Proxies for food availability and habitat structure. |

| Anthropogenic [25] [27] | Human Footprint (HFP), Population Density (POP), GDP | Measures human pressure and habitat fragmentation. |

Experimental Protocol: Modeling Climate-Resilient Corridors

This protocol outlines a workflow for identifying ecological corridors that account for future climate change, integrating Species Distribution Models (SDMs) and connectivity analysis.

The following diagram illustrates the key stages of the corridor future-proofing methodology.

Detailed Methodological Steps

Step 1: Data Collection and Preprocessing

- Species Occurrence Data: Compile presence records from GBIF, scientific literature, museum collections, and field surveys [25] [27]. Clean data by removing duplicates and reducing spatial bias using tools like ENMTools or R packages (dplyr, CoordinateCleaner) [25] [27].

- Environmental Variables: Collect current and future layers for:

- Bioclimatic Variables (Bio1-Bio19 from WorldClim or CHELSA) [25] [27] [29].

- Topography: Digital Elevation Model (DEM) to derive elevation, slope, aspect [25] [27].

- Habitat Quality: NDVI, EVI, land use/land cover from MODIS or other sources [25] [27].

- Human Influence: Human Footprint Index, population density, night-time lights [25] [27].

- Climate Projections: Download future climate data for specific time frames (e.g., 2055, 2085) and Shared Socioeconomic Pathways (SSPs) from CMIP6 models [25] [27] [29].

- Preprocessing: Spatially align all rasters to the same resolution and extent. Perform multicollinearity analysis (e.g., Spearman correlation |R| ≥ 0.8) to reduce variable set, retaining those with high ecological relevance and low correlation [29].

Step 2: Habitat Suitability Modeling (SDM)

- Model Selection and Tuning: Use an ensemble modeling approach, combining multiple algorithms (e.g., Random Forest, MaxEnt, Generalized Linear Models) to improve prediction robustness [28] [27]. For MaxEnt, optimize feature classes (L, Q, H, P, T) and regularization multipliers using the ENMeval package in R to avoid overfitting [29].

- Model Execution:

- Train models using current species occurrence and current environmental data.

- Project the trained model onto future climate scenarios to generate future habitat suitability maps.

- Model Evaluation: Assess performance using metrics like the True Skill Statistic (TSS) and Area Under the ROC Curve (AUC), and compare candidate model settings with the corrected Akaike Information Criterion (AICc) [28] [29].

- Output: Generate continuous maps of habitat suitability (0-1) for both current and future scenarios. Reclassify these into binary (suitable/non-suitable) maps using a threshold that maximizes the sum of model sensitivity and specificity.
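The thresholding rule in the Output step can be illustrated directly: since TSS = sensitivity + specificity - 1, the threshold that maximizes the sum of sensitivity and specificity is also the one that maximizes TSS. The sketch below searches candidate thresholds on a small evaluation set. The scores and presence/absence labels are hypothetical, and real workflows would evaluate on held-out data rather than the training records.

```python
def tss(scores, labels, threshold):
    """True Skill Statistic at a given suitability threshold."""
    pairs = list(zip(scores, labels))
    tp = sum(1 for s, y in pairs if y == 1 and s >= threshold)
    fn = sum(1 for s, y in pairs if y == 1 and s < threshold)
    tn = sum(1 for s, y in pairs if y == 0 and s < threshold)
    fp = sum(1 for s, y in pairs if y == 0 and s >= threshold)
    sensitivity = tp / (tp + fn)
    specificity = tn / (tn + fp)
    return sensitivity + specificity - 1.0

def best_threshold(scores, labels):
    """Candidate thresholds are the observed scores; keep the TSS maximizer."""
    return max(sorted(set(scores)), key=lambda t: tss(scores, labels, t))

# Hypothetical evaluation data: predicted suitability and observed presence.
scores = [0.92, 0.81, 0.77, 0.64, 0.58, 0.41, 0.35, 0.22, 0.15, 0.08]
labels = [1, 1, 0, 1, 1, 0, 0, 0, 0, 0]

threshold = best_threshold(scores, labels)
binary_map = [1 if s >= threshold else 0 for s in scores]
print(threshold, round(tss(scores, labels, threshold), 3), binary_map)
```

The same binary reclassification would then be applied cell by cell to the current and future suitability rasters, using one threshold for both so that projected changes are comparable.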

Step 3: Connectivity Analysis

- Create Resistance Surfaces: Invert the binary future habitat suitability maps so that highly suitable areas have low resistance (cost) to movement, and unsuitable areas have high resistance [26]. Alternatively, derive resistance directly from continuous suitability scores.

- Identify Core Areas and Corridors:

- Core Areas: Define based on protected areas (e.g., Natura 2000 sites) or large, contiguous patches of high-suitability habitat from the binary maps [26].

- Corridor Delineation: Apply Least Cost Path (LCP) or circuit theory (using software like Linkage Mapper, Circuitscape) to identify optimal corridors between core areas using the resistance surface [26] [30].

- Prioritization: Corridors can be prioritized based on connectivity importance, projected stability under climate change, and feasibility of implementation [30].

Step 4: Conservation Planning and Implementation

- Spatial Prioritization: Integrate corridor maps with planning tools like the Marxan model to identify priority areas for conservation that meet specific representation targets cost-effectively [29].

- Habitat Quality Assessment: Use models like InVEST to assess habitat quality and degradation within proposed corridors, refining priority areas [29].

- Implementation and Monitoring: Corridor plans should be integrated into spatial development policies [26]. Establish monitoring programs to track species use and corridor effectiveness over time, adapting management as needed.

The Scientist's Toolkit

Table 3: Essential Research Reagents and Tools for Corridor Modeling

| Tool/Reagent | Category | Function/Description | Example Sources |

|---|---|---|---|

| Species Occurrence Data | Data | Primary species location records for model training. | GBIF, Field Surveys, Museum Collections [25] [27] |

| Bioclimatic Variables (WorldClim/CHELSA) | Data | Standardized global climate layers for current and future scenarios. | WorldClim Database [25] [29] |

| Remote Sensing Indices (NDVI, EVI) | Data | Proxies for vegetation cover and habitat quality. | MODIS Database [25] |

| R with dplyr, ENMeval, SDM packages | Software | Statistical computing environment for data cleaning, model tuning, and analysis. | R Project [27] [29] |

| MaxEnt | Software | Algorithm for modeling species distributions with presence-only data. | Phillips et al. (2006) [29] |

| Linkage Mapper / Circuitscape | Software | GIS toolkits for modeling landscape connectivity and delineating corridors. | The Nature Conservancy [26] |

| Marxan | Software | Spatial prioritization software for systematic conservation planning. | Smith et al. (2010) [29] |

| ArcGIS / QGIS | Software | Geographic Information Systems for spatial data management, analysis, and visualization. | Esri; QGIS.org [25] [27] |

Integrating climate change projections into the design of ecological corridors is no longer optional but a fundamental prerequisite for effective, long-term conservation. The methodologies outlined here, leveraging ensemble SDMs, connectivity analysis, and spatial prioritization, provide a robust scientific framework for "future-proofing" these vital landscape elements. By proactively identifying and securing corridors that facilitate climate-induced range shifts, conservation professionals can enhance ecosystem resilience, mitigate biodiversity loss, and ensure that ecological networks remain functional in the face of a changing planet.

From Theory to Terrain: A Toolkit of Habitat and Corridor Modeling Methods

Application Notes

Species Distribution Models (SDMs) are crucial computational tools in ecology and conservation biology, enabling researchers to predict habitat suitability by establishing statistical relationships between species occurrence records and environmental variables [31]. These models are particularly vital for addressing pressing global challenges, including biodiversity conservation, habitat corridor design, and forecasting species responses to climate change [32] [31]. In the context of corridor design research, SDMs help identify key pathways that connect suitable habitats, facilitating gene flow and population resilience.

Three advanced modeling approaches are widely employed:

- MaxEnt (Maximum Entropy Modeling) is a presence-only, machine-learning model known for its strong predictive performance even with small sample sizes. It applies the principle of maximum entropy to estimate a probability distribution of species occurrence across the landscape [33] [31].

- BRT (Boosted Regression Trees) is another machine-learning method that combines regression trees with a boosting technique. This combination allows BRTs to detect complex, nonlinear relationships and interactions between predictor variables, often resulting in high predictive accuracy [34].

- Ensemble Approaches combine predictions from multiple models (e.g., MaxEnt, BRT, and others) to produce a single, consensus forecast. This method is recommended to reduce the uncertainty inherent in any single algorithm and to generate more robust and reliable projections [35].

The selection of an appropriate SDM is a critical step. The following table provides a high-level comparison to guide this decision within a research workflow.

Table 1: Comparative overview of SDM approaches for habitat suitability modeling

| Feature | MaxEnt | Boosted Regression Trees (BRT) | Ensemble Modeling |

|---|---|---|---|

| Core Principle | Maximum entropy probability distribution [31] | Boosting of classification and regression trees [34] | Consensus forecast from multiple models [35] |

| Data Requirements | Presence-only data [31] | Requires both presence and absence/background data [34] | Outputs from multiple constituent models |

| Sample Size Flexibility | Reliable with small sample sizes (e.g., as few as 25 records) [33] | Requires sufficient data for training and boosting | Varies with base models used |

| Key Strengths | Minimizes overfitting via regularization; user-friendly [33] [31] | Handles complex variable interactions; high predictive accuracy [34] | Reduces model-specific bias; enhances projection robustness [35] |

| Ideal Application Context | Preliminary habitat assessment; rare species with limited data [33] | Complex ecological systems with strong predictor interactions [34] | Climate change impact studies; conservation priority planning [35] |

Experimental Protocols

A Generalized Workflow for Habitat Suitability Modeling

The following diagram illustrates a standardized workflow for applying SDMs in habitat suitability and corridor design research, integrating the three modeling approaches.

Protocol 1: MaxEnt Modeling for Baseline Habitat Suitability

Objective: To create a baseline map of potential species distribution using the MaxEnt algorithm.

Materials: See "The Scientist's Toolkit" below.

Methodology:

- Data Preparation:

- Species Occurrence: Compile occurrence records from field surveys and databases like the Global Biodiversity Information Facility (GBIF) and the Chinese Virtual Herbarium (CVH) [33] [36].

- Spatial Filtering: To mitigate spatial autocorrelation, apply a spatial filter (e.g., retaining one record per 10-20 km diameter) using GIS software [33].

- Environmental Variables: Obtain current climate data (e.g., 19 bioclimatic variables from WorldClim), topographic data (e.g., elevation from USGS or WorldClim), and soil data (e.g., from SoilGrids or FAO Soils Portal) [36] [35].
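The spatial filtering step can be sketched as a simple grid-based thinning that keeps one record per cell. This is only one of several thinning strategies, and it is an assumption of this example rather than the method used in the cited studies. Coordinates are assumed to be already projected to kilometres, and the records shown are hypothetical.

```python
def thin_by_grid(records, cell_km=10.0):
    """Keep the first occurrence record in each cell_km x cell_km grid cell.

    records: iterable of (x_km, y_km) tuples in a projected coordinate system.
    """
    kept = {}
    for x_km, y_km in records:
        cell = (int(x_km // cell_km), int(y_km // cell_km))
        kept.setdefault(cell, (x_km, y_km))  # later records in a cell are dropped
    return list(kept.values())

# Hypothetical occurrence records (projected coordinates, km).
records = [(1.0, 1.0), (2.0, 3.0), (12.0, 1.0), (25.0, 25.0), (26.0, 27.0)]
thinned = thin_by_grid(records, cell_km=10.0)
print(thinned)
```

Grid thinning is fast but does not guarantee a minimum distance between retained points that sit on opposite sides of a cell boundary; distance-based thinning is an alternative when that matters.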

Variable Selection:

- Perform a Pearson correlation analysis to identify and remove highly correlated variables (e.g., |r| > 0.7) [36] [35].

- Use the Variance Inflation Factor (VIF) to check for multicollinearity, typically removing variables with VIF > 10 [36].

- The jackknife test within MaxEnt can help identify variables with the most useful information [36].
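The correlation-based part of variable selection can be sketched as a greedy filter: walk through the candidate variables in order of ecological priority and keep each one only if its absolute Pearson correlation with every variable already kept stays at or below the cutoff. The variable values and the priority order below are hypothetical, and the VIF check described above is not included in this sketch.

```python
import math

def pearson(x, y):
    """Pearson correlation coefficient of two equal-length sequences."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    sxy = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    sxx = sum((a - mean_x) ** 2 for a in x)
    syy = sum((b - mean_y) ** 2 for b in y)
    return sxy / math.sqrt(sxx * syy)

def filter_correlated(variables, priority, cutoff=0.7):
    """Keep variables in priority order, skipping any with |r| > cutoff."""
    kept = []
    for name in priority:
        if all(abs(pearson(variables[name], variables[k])) <= cutoff
               for k in kept):
            kept.append(name)
    return kept

# Hypothetical covariate values sampled at six occurrence points.
variables = {
    "bio1": [10.2, 11.5, 9.8, 14.1, 12.7, 8.9],
    "bio5": [24.0, 25.9, 23.1, 29.5, 27.2, 22.4],  # closely tracks bio1
    "bio12": [900, 640, 1210, 700, 1150, 820],
    "slope": [5, 12, 9, 20, 8, 15],
}
selected = filter_correlated(variables, ["bio1", "bio5", "bio12", "slope"])
print(selected)
```

Because the filter is order-dependent, the priority list is where ecological judgment enters: the variable considered most relevant to the species should come first so that it is the one retained from any correlated pair.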

Model Calibration & Execution:

- Use software like MaxEnt (v3.4.4) or the ENMeval package in R for model optimization [31].

- Set aside a random subset (typically 25-30%) of occurrence data for model testing [36] [31].

- Adjust parameters such as the regularization multiplier and feature class to prevent overfitting [31]. The output format should be set to "Logistic" to generate a probability surface of suitability [36].

Model Validation:

Protocol 2: BRT Modeling for Complex Relationship Detection

Objective: To model species distribution using BRT, capturing complex nonlinear relationships and interactions among predictors.

Materials: See "The Scientist's Toolkit" below. Programming environments like R are typically required.

Methodology:

- Data Preparation:

- Follow the same steps for occurrence and environmental data preparation as in the MaxEnt protocol. BRT requires both presence and absence (or background) data for model training [34].

Model Training:

- Implement the model using R packages such as dismo and gbm.

- Key hyperparameters to optimize include:

- Tree complexity: Controls the depth of interaction effects between variables.

- Learning rate: Determines the contribution of each tree to the growing model.

- Bag fraction: Specifies the proportion of data used for training each tree [34].

- The model combines a large number of simple trees to improve predictive performance iteratively [34].
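To show how the three hyperparameters interact, the following is a deliberately simplified boosting loop written from scratch. It is not the gbm or dismo implementation: it uses a single predictor, one-split trees (tree complexity of 1), and a least-squares loss instead of the Bernoulli deviance usually chosen for presence/absence data. The temperature and presence values are hypothetical.

```python
import random

def fit_stump(x, residuals):
    """Fit a one-split regression tree (tree complexity = 1) to residuals."""
    best = None
    for split in sorted(set(x))[1:]:  # every split leaves both sides non-empty
        left = [r for xi, r in zip(x, residuals) if xi < split]
        right = [r for xi, r in zip(x, residuals) if xi >= split]
        left_mean, right_mean = sum(left) / len(left), sum(right) / len(right)
        sse = (sum((r - left_mean) ** 2 for r in left)
               + sum((r - right_mean) ** 2 for r in right))
        if best is None or sse < best[0]:
            best = (sse, split, left_mean, right_mean)
    return best[1:]

def boost(x, y, n_trees=300, learning_rate=0.05, bag_fraction=0.75, seed=1):
    rng = random.Random(seed)
    baseline = sum(y) / len(y)
    fitted = [baseline] * len(y)
    stumps = []
    n_bag = max(2, int(bag_fraction * len(y)))
    for _ in range(n_trees):
        bag = rng.sample(range(len(y)), n_bag)  # bag fraction: random subsample
        xs = [x[i] for i in bag]
        if len(set(xs)) < 2:
            continue
        residuals = [y[i] - fitted[i] for i in bag]
        split, left_mean, right_mean = fit_stump(xs, residuals)
        stumps.append((split, left_mean, right_mean))
        # Learning rate: each tree contributes only a small correction.
        fitted = [f + learning_rate * (left_mean if xi < split else right_mean)
                  for f, xi in zip(fitted, x)]
    return baseline, learning_rate, stumps

def predict(model, xi):
    baseline, learning_rate, stumps = model
    return baseline + sum(learning_rate * (lm if xi < split else rm)
                          for split, lm, rm in stumps)

# Hypothetical data: the species is present only at intermediate temperatures.
temperature = [2, 4, 6, 8, 10, 12, 14, 16, 18, 20, 22, 24, 26, 28]
presence = [0, 0, 0, 0, 1, 1, 1, 1, 1, 1, 0, 0, 0, 0]
model = boost(temperature, presence)
print([round(predict(model, t), 2) for t in temperature])
```

Even with single-split trees, the additive combination of many small corrections recovers the hump-shaped response. Evaluating `predict` over a range of temperatures traces the fitted response curve, which in this one-predictor case plays the role of a partial dependence plot.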

Model Interpretation:

- Analyze the relative influence of each predictor variable, expressed as a percentage, to identify the most critical environmental factors [34].

- Use partial dependence plots to visualize the marginal effect of a single predictor on the predicted response, revealing the shape and nature of the relationship (e.g., optimal ranges for a species) [34].

Protocol 3: Ensemble Modeling for Robust Projections

Objective: To generate a consensus projection of species distribution by integrating multiple SDM algorithms, thereby reducing model-based uncertainty.

Materials: See "The Scientist's Toolkit" below. Software platforms that support multiple models, such as the biomod2 package in R, are essential.

Methodology:

- Model Assembly:

- Run multiple individual models, such as MaxEnt, BRT, Random Forest (RF), and others, using the same species occurrence and environmental data [35].

Ensemble Forecasting:

- Combine the predictions from all individual models into a single ensemble forecast. This can be done by calculating the mean, median, or a weighted average of the predictions, where weights are based on individual model performance (e.g., AUC scores) [35].
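The ensemble step can be sketched as a cell-wise combination of the individual model outputs. The example below shows a performance-weighted mean, with AUC as the weight, alongside a median ensemble. The model names, suitability values, and AUC scores are hypothetical, and using raw AUC as the weight is one convention among several.

```python
from statistics import median

def weighted_mean_ensemble(predictions, scores):
    """Cell-wise mean of model predictions, weighted by a performance score."""
    total = sum(scores[name] for name in predictions)
    n_cells = len(next(iter(predictions.values())))
    return [sum(scores[name] * predictions[name][i] for name in predictions) / total
            for i in range(n_cells)]

def median_ensemble(predictions):
    """Cell-wise median across models; less sensitive to one outlying model."""
    n_cells = len(next(iter(predictions.values())))
    return [median(p[i] for p in predictions.values()) for i in range(n_cells)]

# Hypothetical suitability predictions for three raster cells.
predictions = {
    "maxent": [0.80, 0.40, 0.10],
    "brt": [0.70, 0.55, 0.20],
    "rf": [0.90, 0.30, 0.05],
}
auc = {"maxent": 0.85, "brt": 0.90, "rf": 0.80}

weighted = weighted_mean_ensemble(predictions, auc)
middle = median_ensemble(predictions)
print([round(v, 3) for v in weighted], middle)
```

Comparing the weighted mean with the median is a quick check on how much the consensus depends on any single algorithm; large differences in a cell flag areas of high between-model uncertainty.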

Application:

- Ensemble models are particularly valuable for projecting species distributions under future climate change scenarios (e.g., SSP1-2.6, SSP5-8.5) as they provide more robust and reliable estimates of range shifts, which are critical for long-term corridor design [35].

The relationship between different modeling approaches and their output for corridor design can be summarized as follows:

The Scientist's Toolkit

Table 2: Essential research reagents and resources for SDM implementation

| Category | Item / Resource | Function / Application | Example Sources |

|---|---|---|---|

| Species Data | Occurrence Records | Provides georeferenced species presence data for model training and validation. | Field surveys (GPS) [35], GBIF [36], CVH [33] [36] |

| Environmental Data | Bioclimatic Variables | Describes annual trends and extremes in temperature and precipitation. | WorldClim Database [33] [36] [35] |

| | Topographic Data | Represents elevation and derived features (slope, aspect) influencing species distribution. | USGS EarthExplorer [35], WorldClim [33] |

| | Soil Data | Provides edaphic factors such as salinity, pH, and organic carbon content. | SoilGrids [35], FAO Soils Portal [33] |

| Software & Platforms | MaxEnt | Standalone software for implementing the Maximum Entropy model. | --- |

| | R Programming Environment | Platform for implementing BRT, ensemble models, and spatial analysis. | Packages: dismo, gbm, ENMeval, biomod2 |

| | GIS Software | Used for spatial data management, analysis, and map production (e.g., habitat suitability visualization). | ArcGIS, QGIS |

| Validation Tools | AUC (Area Under the Curve) | Evaluates model discrimination ability based on sensitivity and specificity. | --- |

| | TSS (True Skill Statistic) | A threshold-dependent metric that accounts for both sensitivity and specificity. | --- |

Ecological connectivity, the extent to which a landscape facilitates the movement of organisms, has emerged as a central focus in conservation science for preserving biodiversity and ecosystem function [37]. Habitat fragmentation resulting from anthropogenic pressures such as urban expansion, agricultural transformation, and transportation networks significantly hinders the natural movements of wildlife, leading to reduced genetic diversity and threatening long-term population viability [38]. Connectivity modeling provides a powerful methodological framework for designing ecological corridors that reconnect fragmented habitats, thereby facilitating species movement, gene flow, and access to resources [39] [40].

Two dominant computational approaches have revolutionized connectivity conservation: least-cost path (LCP) analysis and circuit theory. Least-cost path analysis, rooted in graph theory, identifies the single most cost-effective route between source and destination points across a landscape resistance surface [41] [42]. Circuit theory, derived from electrical circuit theory, offers a complementary approach that models movement across all possible pathways, recognizing that organisms may not follow a single optimal route [37]. These methodologies now form the cornerstone of modern corridor design, enabling researchers to translate complex ecological requirements into actionable conservation plans.

Theoretical Foundations and Comparative Analysis

Least-Cost Path Analysis

The least-cost path method determines the most cost-effective route from a destination point to a source based on a cost distance surface [43]. The algorithm requires two primary raster inputs: a cost distance raster and a back-link raster, which are typically generated from Cost Distance or Path Distance tools in GIS environments [43]. The back-link raster contains directional information that enables the retracing of the least costly route from the destination back to the source [43].

The fundamental principle of LCP analysis is that movement through each cell in a landscape incurs a specific cost, and the path of least resistance is the one with the lowest accumulated cost [41]. This approach has proven valuable in various applications, from identifying the cheapest route for constructing roads while avoiding steep slopes to modeling wildlife movement corridors between habitat patches [43] [39]. The technique offers different path type options, including calculation for each cell (individual paths for every pixel), each zone (one path per zone), or best single path (only the cheapest path from any zone) [43].
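The accumulated-cost and back-link logic described above can be sketched with Dijkstra's algorithm on a small grid. This is an illustrative reimplementation, not the GIS tool itself. The assumptions are: eight-neighbor movement, a step cost equal to the mean of the two cell costs multiplied by the distance between cell centers, `None` standing in for NoData barriers, and a hypothetical 4 x 4 cost grid.

```python
import heapq
import math

def cost_distance(cost, source):
    """Return (accumulated cost grid, back-link grid) from a source cell."""
    rows, cols = len(cost), len(cost[0])
    acc = [[math.inf] * cols for _ in range(rows)]
    back = [[None] * cols for _ in range(rows)]
    acc[source[0]][source[1]] = 0.0
    heap = [(0.0, source)]
    while heap:
        d, (r, c) = heapq.heappop(heap)
        if d > acc[r][c]:
            continue  # stale queue entry
        for dr in (-1, 0, 1):
            for dc in (-1, 0, 1):
                nr, nc = r + dr, c + dc
                if (dr == 0 and dc == 0) or not (0 <= nr < rows and 0 <= nc < cols):
                    continue
                if cost[nr][nc] is None:  # NoData cells act as barriers
                    continue
                step = math.hypot(dr, dc) * (cost[r][c] + cost[nr][nc]) / 2.0
                if d + step < acc[nr][nc]:
                    acc[nr][nc] = d + step
                    back[nr][nc] = (r, c)  # back-link points toward the source
                    heapq.heappush(heap, (d + step, (nr, nc)))
    return acc, back

def trace_path(back, destination):
    """Follow back-links from the destination to the source, then reverse."""
    path, cell = [], destination
    while cell is not None:
        path.append(cell)
        cell = back[cell[0]][cell[1]]
    return path[::-1]

# Hypothetical cost raster: 1 = easy movement, 9 = costly, None = barrier.
cost = [[1, 1, 1, 9],
        [9, None, 1, 9],
        [9, 9, 1, 9],
        [9, 9, 1, 1]]
acc, back = cost_distance(cost, source=(0, 0))
path = trace_path(back, destination=(3, 3))
print(path, round(acc[3][3], 3))
```

The path hugs the band of low-cost cells and routes around the barrier, and the accumulated cost at the destination is the quantity that LCP minimizes. Because only one route is returned, the output says nothing about near-optimal alternatives.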

Circuit Theory

Circuit theory applies concepts from electrical circuit theory to model ecological connectivity, treating the landscape as a conductive surface where habitats represent electrical nodes and the resistance to movement functions as electrical resistors [37]. In this framework, organisms are analogous to electrons flowing through multiple possible pathways rather than following a single optimal route [37].

The theoretical foundation of circuit theory in ecology originates from McRae's concept of "isolation by resistance" (IBR), which posits that genetic distance among subpopulations can be estimated by representing the landscape as a circuit board where each pixel is a resistor [37]. Key metrics derived from circuit theory include current density, which estimates net movement probabilities through a given grid cell, and effective resistance, which provides a pairwise distance-based measure of isolation between populations or sites [37]. Circuit theory also facilitates the identification of critical 'pinch points' that constrain potential flow between focal areas and recognizes that increasing the number of pathways decreases total resistance between subpopulations [37].
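The statement that additional pathways lower total resistance follows from the standard series and parallel rules for resistors. The sketch below applies them to two hypothetical, independent corridors between the same pair of habitat patches. Real tools such as Circuitscape solve the full grid network rather than this two-path simplification, and the resistance values here are invented for illustration.

```python
def series(resistances):
    """Resistances along a single corridor add up."""
    return sum(resistances)

def parallel(path_resistances):
    """Effective resistance of independent corridors joining the same patches."""
    return 1.0 / sum(1.0 / r for r in path_resistances)

# Two hypothetical corridors, each a chain of cell resistances.
corridor_a = series([2.0, 3.0, 5.0])
corridor_b = series([4.0, 6.0, 10.0])

one_path = parallel([corridor_a])
two_paths = parallel([corridor_a, corridor_b])

# With a unit voltage between patches, current splits in inverse proportion
# to corridor resistance; this is the analogue of current density.
currents = [1.0 / r for r in (corridor_a, corridor_b)]
share_a = currents[0] / sum(currents)
print(one_path, round(two_paths, 3), round(share_a, 3))
```

A least-cost analysis of the same landscape would report only the lower-resistance corridor, whereas the circuit view credits the second corridor with lowering isolation and with carrying a share of the expected movement.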

Comparative Evaluation of Model Performance

A comprehensive simulation study evaluating connectivity models found that resistant kernels and Circuitscape were consistently the most accurate across nearly all test cases, although their predictive abilities varied substantially between contexts [44]. The research indicated that for the majority of conservation applications, resistant kernels represent the most appropriate model, except when animal movement is strongly directed toward a known location [44].

Table 1: Comparative Analysis of Connectivity Modeling Approaches

| Feature | Least-Cost Path Analysis | Circuit Theory |
|---|---|---|
| Theoretical basis | Graph theory, cost-distance analysis | Electrical circuit theory |
| Movement assumption | Single optimal path between points | Multiple possible pathways |
| Key metrics | Accumulated cost distance, back-link direction | Current density, effective resistance, pinch points |
| Spatial output | One-cell-wide linear corridors | Continuous current density maps |
| Data requirements | Cost surface, source and destination points | Resistance surface, focal nodes |
| Primary strengths | Computational efficiency, clear corridor boundaries | Identifies movement bottlenecks, accounts for route redundancy |
| Major limitations | Assumes perfect landscape knowledge, single-path focus | Computationally intensive for large landscapes |

Application Protocols and Methodologies

Protocol for Least-Cost Path Analysis

Step 1: Resistance Surface Development. The foundation of effective LCP analysis lies in creating a robust cost raster that accurately represents movement resistance through different landscape features. The cost raster defines the impedance to move planimetrically through each cell, with each cell value representing the cost-per-unit distance for movement [42]. Values in the cost raster must be integer or floating point but cannot be negative or zero [42]. If values of 0 represent areas of low cost, they should be converted to a small positive value such as 0.01; if they represent barriers, they should be assigned as NoData [42].
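The zero-handling rule can be sketched with NumPy. This is a minimal illustration, not the ArcGIS workflow itself: the function name `prepare_cost_raster` and its parameters are hypothetical, and `NaN` is used here as a stand-in for NoData.

```python
import numpy as np

def prepare_cost_raster(cost, zero_means="low_cost", small_value=0.01):
    """Return a copy of the cost raster with zeros made valid.

    zero_means="low_cost": zeros become a small positive cost.
    zero_means="barrier":  zeros become NaN (stand-in for NoData).
    """
    cleaned = np.asarray(cost, dtype=float).copy()
    if np.any(cleaned < 0):
        raise ValueError("cost raster must not contain negative values")
    zeros = cleaned == 0
    if zero_means == "low_cost":
        cleaned[zeros] = small_value
    elif zero_means == "barrier":
        cleaned[zeros] = np.nan
    else:
        raise ValueError("zero_means must be 'low_cost' or 'barrier'")
    return cleaned

raw = [[0.0, 1.5],
       [3.0, 0.0]]
as_low_cost = prepare_cost_raster(raw, zero_means="low_cost")
as_barrier = prepare_cost_raster(raw, zero_means="barrier")
```

Which interpretation applies is an ecological decision about what the zeros were meant to encode, so it is passed in explicitly rather than guessed from the data.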

Step 2: Source and Destination Identification. Define source habitats and destination points based on ecological knowledge of the target species. Source patches should be selected according to patch area, landscape suitability, and accessibility [39]. In a study connecting forest patches for large mammals, researchers selected 56 forest patches with a minimum ecological threshold of 100 hectares, with areas ranging from 106.19 to 12,137.48 hectares [39]. The source raster must be converted from vector features if necessary, and NoData values are not included as valid values [42].

Step 3: Cost Distance and Back Link Calculation. Generate cost distance and back link rasters using spatial analyst tools. The cost distance raster calculates the least accumulative cost distance for each cell to the nearest source, while the back link raster contains directional information identifying the next neighboring cell along the least-cost path back to the source [43] [42]. These rasters form the computational foundation for determining the optimal route.

Step 4: Path Determination and Validation. Execute the Cost Path tool using the destination raster, cost distance raster, and back link raster as inputs [43]. Select the appropriate path type based on conservation objectives: "Each Cell" for paths from every destination pixel, "Each Zone" for paths from each zone, or "Best Single" for the single least-cost path from any destination pixel [42]. Validate the modeled corridors against empirical movement data where possible, or through ground-truthing exercises.
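The logic of Steps 3 and 4 can be sketched in plain Python with Dijkstra's algorithm. This is a simplified stand-in for the GIS tools, under stated assumptions: only the four orthogonal neighbours are considered, the cost of a move is the mean of the two cell costs, barriers are marked with `None`, and the back link stores the (row, col) of the next cell toward the source rather than a direction code. The names `cost_distance` and `trace_path` are hypothetical.

```python
import heapq
import math

def cost_distance(cost, sources):
    """Return (distance, backlink) grids for a 4-connected cost grid.

    cost: list of rows; None marks a barrier (NoData).
    sources: list of (row, col) source cells (must not be barriers).
    """
    n_rows, n_cols = len(cost), len(cost[0])
    dist = [[math.inf] * n_cols for _ in range(n_rows)]
    back = [[None] * n_cols for _ in range(n_rows)]
    heap = []
    for r, c in sources:
        dist[r][c] = 0.0
        heapq.heappush(heap, (0.0, r, c))
    while heap:
        d, r, c = heapq.heappop(heap)
        if d > dist[r][c]:
            continue  # stale queue entry, a cheaper route was already found
        for dr, dc in ((1, 0), (-1, 0), (0, 1), (0, -1)):
            nr, nc = r + dr, c + dc
            if not (0 <= nr < n_rows and 0 <= nc < n_cols):
                continue
            if cost[nr][nc] is None:
                continue  # barriers cannot be entered
            new_d = d + (cost[r][c] + cost[nr][nc]) / 2.0
            if new_d < dist[nr][nc]:
                dist[nr][nc] = new_d
                back[nr][nc] = (r, c)  # next cell on the way back to a source
                heapq.heappush(heap, (new_d, nr, nc))
    return dist, back

def trace_path(back, destination):
    """Follow back links from the destination to the nearest source."""
    path = [destination]
    while back[path[-1][0]][path[-1][1]] is not None:
        path.append(back[path[-1][0]][path[-1][1]])
    return path

cost = [
    [1, 1, 1],
    [1, 9, 1],
    [1, None, 1],
]
dist, back = cost_distance(cost, sources=[(0, 0)])
path = trace_path(back, destination=(2, 2))
print(dist[2][2], path)
```

In this toy grid the path skirts the high-cost centre cell and the barrier, and the unreachable barrier cell keeps an infinite accumulated cost.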

Protocol for Circuit Theory Analysis

Step 1: Resistance Surface Development. Create a resistance surface that translates landscape features into movement resistance values. Resistance surfaces can be developed using various approaches, including species distribution models (SDMs) [38] [40], expert opinion, or empirical data from tracking studies. For example, in a study of large mammals in Turkey, resistance surfaces incorporated variables such as road density, vegetation, and elevation [38]. Each pixel of the resistance surface functions as a resistor in the electrical circuit analogy [37].
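As one hedged illustration of deriving resistance from an SDM output, the sketch below applies a negative linear rescaling of suitability (0 to 1) onto a resistance range of 1 to `max_resistance`. The transformation, the default ceiling of 100, and the function name `suitability_to_resistance` are assumptions made for this example; the cited studies may have used a different function.

```python
import numpy as np

def suitability_to_resistance(suitability, max_resistance=100.0):
    """Map suitability in [0, 1] to resistance in [1, max_resistance].

    Assumed negative linear form: suitability 1 -> resistance 1,
    suitability 0 -> resistance max_resistance.
    """
    s = np.asarray(suitability, dtype=float)
    if np.nanmin(s) < 0 or np.nanmax(s) > 1:
        raise ValueError("suitability values must lie in [0, 1]")
    return max_resistance - (max_resistance - 1.0) * s

suitability = np.array([[1.0, 0.5],
                        [0.0, 0.8]])
resistance = suitability_to_resistance(suitability)
```

Whatever form is chosen, the output stays strictly positive, so it can be used as a resistance or cost raster without further zero-handling.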

Step 2: Focal Node Identification. Define focal nodes representing core habitat areas or populations between which connectivity will be assessed. In a roe deer conservation study in northern Iran, researchers used species distribution models to identify important habitat patches under current and future climate scenarios, which then served as focal nodes for connectivity analysis [40].

Step 3: Circuitscape Analysis. Execute Circuitscape analysis using specialized software. Circuitscape can be implemented in various computational environments, including Julia for high-performance connectivity modeling [40]. The software treats focal nodes as electrical nodes and calculates current flow across the resistance surface, with higher current values indicating higher connectivity [37] [44].

Step 4: Connectivity Interpretation. Interpret output maps to identify corridors, pinch points, and barriers. Current density maps visualize areas of high movement probability, while effective resistance values quantify isolation between populations [37]. In the Western Black Sea region of Turkey, circuit theory analysis revealed important ecological corridors for brown bears, wild boars, and gray wolves between the Ballıdağ and Kurtgirmez regions, informing conservation planning to mitigate habitat fragmentation [38].
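To make the idea of a current density map and a pinch point concrete, the following toy sketch solves a small resistor grid directly. It is not Circuitscape: the dense linear solve only suits tiny grids, adjacent cells are connected with the mean of their two resistances (an assumption made here), and the function name `current_density` is hypothetical. One unit of current is injected at the source cell and withdrawn at the ground cell.

```python
import numpy as np

def current_density(resistance, source, ground):
    """Per-cell current and source-to-ground effective resistance."""
    res = np.asarray(resistance, dtype=float)
    n_rows, n_cols = res.shape
    n = n_rows * n_cols

    def idx(r, c):
        return r * n_cols + c

    edges = []
    lap = np.zeros((n, n))
    for r in range(n_rows):
        for c in range(n_cols):
            for dr, dc in ((0, 1), (1, 0)):  # right and down neighbours
                nr, nc = r + dr, c + dc
                if nr < n_rows and nc < n_cols:
                    g = 2.0 / (res[r, c] + res[nr, nc])  # edge conductance
                    i, j = idx(r, c), idx(nr, nc)
                    edges.append((i, j, g))
                    lap[i, i] += g
                    lap[j, j] += g
                    lap[i, j] -= g
                    lap[j, i] -= g

    s, t = idx(*source), idx(*ground)
    inject = np.zeros(n)
    inject[s] = 1.0
    keep = [k for k in range(n) if k != t]  # ground voltage fixed at 0
    volt = np.zeros(n)
    volt[keep] = np.linalg.solve(lap[np.ix_(keep, keep)], inject[keep])

    # Current through a cell: half of (sum of |edge currents| + |injected|).
    through = np.zeros(n)
    for i, j, g in edges:
        flow = abs(g * (volt[i] - volt[j]))
        through[i] += flow
        through[j] += flow
    through[s] += 1.0
    through[t] += 1.0
    return (through / 2.0).reshape(n_rows, n_cols), volt[s]

# Middle column is a high-resistance wall with a single gap at (1, 1).
grid = [[1, 100, 1],
        [1,   1, 1],
        [1, 100, 1]]
current, r_eff = current_density(grid, source=(1, 0), ground=(1, 2))
print(np.round(current, 3))
```

In the printed map the gap cell carries far more current than the wall cells above and below it, which is how a pinch point appears in a current density surface.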

Integrated Modeling Approaches

Advanced corridor design increasingly combines multiple methodologies to leverage their complementary strengths. A study on roe deer in northern Iran integrated species distribution models (SDMs), least-cost path, and circuit theory to predict habitat suitability and design corridors under current and future climate scenarios [40]. Similarly, researchers in Thailand developed a Bayesian Belief Network that combined ecological data, landscape characteristics, and human dimensions to identify optimal corridors for Asiatic black bears, demonstrating how anthropogenic factors can be incorporated into corridor planning [45].

Table 2: Essential Research Reagents and Tools for Connectivity Modeling

| Tool Category | Specific Solutions | Function in Analysis |
|---|---|---|
| GIS Software | ArcGIS Pro with Spatial Analyst Extension | Provides platform for least-cost path analysis, cost surface development, and visualization [43] [42] |
| Specialized Connectivity Tools | Circuitscape | Implements circuit theory algorithms for modeling landscape connectivity [37] [38] |
| Species Distribution Modeling | MaxEnt, Random Forest, GAM | Generates habitat suitability models that inform resistance surfaces [38] [40] |
| Remote Sensing Data | MODIS NDVI, VIIRS Nighttime Light Data | Provides vegetation and anthropogenic variables for resistance surfaces [39] |
| Field Validation Tools | GPS tracking, camera traps | Collects empirical movement data for model validation [38] [40] |

Advanced Applications and Future Directions

Incorporating Nocturnal Considerations

Artificial nighttime light is an emerging factor in connectivity modeling that particularly affects nocturnal species. An innovative study in Wuhan, China, integrated Visible Infrared Imaging Radiometer Suite (VIIRS) nighttime light data with the Normalized Difference Vegetation Index (NDVI) to create a "Nightscape Adjusted Vegetation Index" (NAVI) for estimating matrix resistance [39]. Compared with traditional daytime models, the resulting "dark" ecological corridors shifted location and increased in length by up to 37.94%, highlighting the importance of considering temporal variations in landscape permeability [39].

Climate Change Integration

Connectivity models must increasingly account for future climate scenarios to ensure corridor longevity. A comprehensive study on roe deer in northern Iran combined species distribution models with connectivity analysis to project habitat suitability and corridor functionality under different climate scenarios for 2060-2080 [40]. This integrated approach enabled researchers to identify corridors that would remain functional despite anticipated climate-driven habitat shifts, demonstrating the value of temporal modeling in conservation planning.

Human Dimensions in Corridor Design

Effective corridor implementation requires consideration of socioeconomic factors alongside ecological data. A Bayesian Belief Network developed for Asiatic black bears in Thailand successfully integrated ecological data, landscape characteristics, and human dimensions—including threat levels toward bears and human attitudes toward corridors—to identify optimal corridor locations and management strategies [45]. The model revealed that improving human attitudes toward wildlife corridor construction represented the most effective management strategy, followed by decreasing human-wildlife conflicts [45].

Multi-Species Corridor Design

Conservation efforts increasingly focus on designing corridors that benefit multiple species simultaneously. Research in Turkey's Western Black Sea region identified ecological corridors for three large mammal species—brown bear, wild boar, and gray wolf—using circuit theory analysis [38]. The study determined that road density, vegetation, and elevation were the most important variables shaping corridors for these species, enabling planners to identify areas where conservation actions would benefit multiple target species [38].

Table 3: Advanced Applications in Connectivity Modeling

| Application Context | Methodological Innovation | Conservation Benefit |
|---|---|---|
| Nocturnal Species Conservation | Integration of VIIRS nighttime light data with NDVI to create NAVI resistance surfaces [39] | Identifies "dark corridors" that mitigate impacts of artificial light on light-sensitive nocturnal species |
| Climate Change Adaptation | Coupling species distribution models (SDMs) with connectivity analysis under future climate scenarios [40] | Designs corridors that remain functional despite climate-driven habitat shifts |
| Human-Wildlife Coexistence | Bayesian Belief Networks incorporating human attitudes and conflict potential [45] | Identifies corridors with higher implementation success through community support |
| Multi-Species Planning | Circuit theory analysis across multiple species with different ecological requirements [38] | Maximizes conservation investment by identifying corridors benefiting multiple target species |

The design of effective habitat corridors is a critical component of conservation strategies aimed at mitigating the impacts of habitat fragmentation. Success in this endeavor hinges on robust habitat suitability models, which in turn depend on the integration of high-quality, multi-faceted spatial data. This protocol outlines detailed methodologies for the acquisition, processing, and integration of three primary data classes—GPS telemetry, remote sensing, and environmental variables—specifically for modeling habitat suitability to inform ecological corridor design. The frameworks presented here are designed to provide researchers and conservation professionals with a standardized approach to generate reliable, data-driven conservation plans.

Data Acquisition Protocols

GPS Telemetry Data

GPS telemetry provides empirical, individual-based data on animal movement, which is fundamental for understanding habitat use and defining corridor pathways.

Protocol 1: GPS Tracking and Data Collection

- Objective: To collect high-resolution, individual-specific location data for quantifying habitat selection and movement patterns.

- Equipment: High-duration GPS tags with UHF/VHF or satellite download capabilities; GPS collars appropriate for the target species' weight and morphology.

- Key Considerations:

  - Sampling Regime: The appropriate time interval for GPS fixes must be determined based on the species' mobility and the research question. Shorter intervals (e.g., every 2 hours) can capture fine-scale movement without significantly over- or underestimating space use compared to longer intervals (e.g., every 12 hours) [46].

  - Sample Size: Track enough individuals, and collect enough location records, to ensure model robustness and account for individual variation. As a point of reference for the number of records, a tamarisk habitat study used n=499 presence points [47].

  - Duration: Long-term tracking (across multiple seasons and years) is ideal for capturing seasonal variations and long-term movement trends.

  - Ethical Approval: All animal captures and handling must be approved by the appropriate national or regional animal ethics authorities [48].
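One simple way to explore how the sampling regime affects a movement metric is to thin a fine-interval track to a coarser interval and compare the results. The sketch below is illustrative only: the track is synthetic, coordinates are assumed to be in a projected system with metric units, and the function names `thin_track` and `path_length` are hypothetical.

```python
import math

def thin_track(fixes, min_interval_h):
    """Keep the first fix, then each fix at least min_interval_h after the last kept one.

    fixes: list of (time_in_hours, x, y) tuples sorted by time.
    """
    kept = [fixes[0]]
    for fix in fixes[1:]:
        if fix[0] - kept[-1][0] >= min_interval_h:
            kept.append(fix)
    return kept

def path_length(fixes):
    """Sum of straight-line step lengths between consecutive fixes."""
    return sum(math.dist(a[1:], b[1:]) for a, b in zip(fixes, fixes[1:]))

# Synthetic zigzag track with one fix every 2 hours over 12 hours.
track = [(2 * k, float(k), float(k % 2)) for k in range(7)]

fine_length = path_length(track)                    # 2-hour fixes
coarse_length = path_length(thin_track(track, 12))  # 12-hour fixes
print(fine_length, coarse_length)
```

On this synthetic track the 12-hour regime hides the zigzag entirely, so the measured path is shorter than with 2-hour fixes; running the same comparison on pilot data is one way to choose an interval before committing tag battery life.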

Remote Sensing Data

Remote sensing offers synoptic, repeatable coverage of landscape characteristics, serving as a primary source for habitat variables.

Protocol 2: Sourcing Remotely Sensed Imagery

- Objective: To acquire satellite imagery for deriving land cover/land use maps and vegetation indices.

- Data Sources and Selection: The choice of remote sensing product involves a trade-off between spatial coverage, resolution, and cost. The table below summarizes key data sources.

Table 1: Comparison of Remote Sensing Data Sources for Habitat Modeling

| Data Source | Spatial Coverage | Spatial Resolution | Key Variables/Themes | Example Use Case |

|---|---|---|---|---|

| Sentinel-1 & 2 [49] | Global | 10 m - 60 m | Land cover map, vegetation indices (NDVI, SAVI) | Baseline habitat modeling where regional data is lacking. |