Beyond Degree Centrality: Advanced Network Indices for Robust Keystone Species Identification in Ecological and Biomedical Research

Abstract

Identifying keystone species is critical for predicting ecosystem stability and managing biodiversity, yet traditional methods often fall short. This article provides a comprehensive analysis of modern network-based approaches for keystone species identification, moving from foundational concepts to cutting-edge computational and machine learning techniques. We explore a suite of centrality indices—from local (degree) to meso-scale (motif) and global (betweenness) measures—and evaluate their performance in predicting species impact through dynamic simulations and validation frameworks. Tailored for researchers and scientists in ecology and drug development, this review synthesizes methodological advances, troubleshooting strategies, and comparative analyses to guide the selection and optimization of robust identification protocols for complex biological networks.

The Conceptual Foundation: From Keystone Species Theory to Network-Based Identification

The concept of keystone species represents a cornerstone of modern ecology, describing organisms with disproportionately large effects on their ecosystems relative to abundance. First introduced by zoologist Robert T. Paine in 1969, the keystone species concept has evolved from a qualitative ecological observation to a quantitative framework supported by sophisticated analytical tools [1] [2]. Paine's pioneering research demonstrated that removing the ochre sea star (Pisaster ochraceus) from intertidal zones triggered a dramatic collapse in biodiversity, allowing mussels to monopolize the habitat and crowd out other species [1] [3]. This foundational work established the paradigm that certain species play critical roles in maintaining ecological structure, analogous to the architectural keystone that supports an entire stone arch [1]. Contemporary ecology has since expanded this concept beyond apex predators to include ecosystem engineers, mutualists, and even microorganisms, while developing increasingly precise operational definitions and identification methods [4] [2] [5]. This progression reflects ecology's broader transformation into a more predictive, quantitative science capable of addressing complex conservation challenges in an era of unprecedented biodiversity loss.

Historical Foundation: Paine's Original Concept and Experimental Approach

The Pioneering Experiments

Robert Paine's seminal research in the 1960s established the empirical foundation for the keystone species concept through experimental manipulation of marine invertebrate communities. His field experiments in the tide pools of Makah Bay, Washington, systematically documented the ecological consequences of removing Pisaster ochraceus [1]. In experimental plots where Paine manually removed the starfish, community composition shifted dramatically: from an initial 15 species to just 8 species after three years, and eventually to a near-monoculture of mussels (Mytilus californianus) within a decade [1]. This collapse occurred because Pisaster, a generalist predator, preferentially consumed mussels, which are dominant competitors for space on rocky substrates. Without predation pressure, mussels outcompeted other invertebrates, algae, and anemones, substantially reducing biodiversity [1] [3].

Paine operationalized the keystone concept by defining keystone species as those with "a disproportionately large effect on its environment relative to its abundance" [1]. This definition highlighted the paradoxical nature of keystone species—their ecological importance vastly exceeds what would be predicted from their biomass or productivity alone. The concept gained rapid traction in conservation biology due to its utility in explaining strong species interactions and communicating ecological principles to policymakers, despite early criticisms that it oversimplified complex ecosystems [1].

Classic Case Studies and Early Expansion

Following Paine's work, numerous studies identified keystone roles across diverse taxa and ecosystems, expanding the concept beyond predatory regulation. Three iconic examples demonstrate this early diversification of the keystone concept:

Sea Otters and Kelp Forests: The near-extirpation of sea otters from the North American west coast due to fur hunting revealed their keystone function in controlling sea urchin populations. Without predation, urchins overgrazed kelp forests, destroying habitat for numerous marine species. Reintroduction of otters enabled kelp ecosystem recovery, demonstrating the restorative potential of keystone species conservation [1].

Gray Wolves in Yellowstone: The elimination and subsequent reintroduction of wolves in Yellowstone National Park provided a compelling terrestrial example of keystone predation. Without wolves, elk overbrowsed woody vegetation, degrading riparian areas and impacting beaver populations. Wolf reintroduction restored trophic cascades that benefited beavers, songbirds, and even hydrological processes [1] [3].

Beavers as Ecosystem Engineers: Beavers transform ecosystems by building dams that convert streams to wetlands, creating habitats for diverse species including amphibians, salmon, and songbirds. Their activities increase soil aeration, reverse compaction from grazing, and channel rainwater into aquifers, demonstrating that keystone species need not be predators [1] [3].

Quantitative Revolution: Operational Definitions and Network Indices

Contemporary ecology has developed rigorous quantitative frameworks to identify keystone species, moving beyond observational evidence to computational approaches that analyze species within interaction networks.

From Qualitative to Quantitative Definitions

Operational definitions of keystoneness have evolved to incorporate precise metrics that quantify a species' structural importance. Davic (2003) defined keystone species as "strongly interacting species whose top-down effect on species diversity and competition is large relative to its biomass dominance within a functional group" [1]. This emphasis on disproportionate effect relative to abundance remains central to modern operational definitions.

Advanced operational definitions now incorporate network-level analyses. In microbial ecology, for instance, keystoneness is quantified through a thought experiment of species removal: \( K_s(i,s) \equiv d(\tilde{\mathbf{p}}, \mathbf{p}^{-})\,(1 - p_i) \). Here \( d(\tilde{\mathbf{p}}, \mathbf{p}^{-}) \) is the dissimilarity between the composition of community \( s \) before (\( \tilde{\mathbf{p}} \)) and after (\( \mathbf{p}^{-} \)) the removal of species \( i \), and \( p_i \) is the relative abundance of species \( i \), so the factor \( (1 - p_i) \) weights the impact by that species' relative scarcity [4]. This formulation captures both the impact component of species removal and the biomass component that reflects the disproportion between abundance and ecological influence.
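Once a post-removal composition is available, the removal-based keystoneness score can be evaluated directly. The sketch below makes several assumptions. It uses Bray-Curtis dissimilarity for the impact term. It builds the "before" reference by zeroing species i and renormalizing the remaining abundances (a null composition). It takes the post-removal profile as an input, for example a model prediction. The function names and the toy profile are hypothetical.

```python
def bray_curtis(p, q):
    """Bray-Curtis dissimilarity between two abundance vectors of equal length."""
    num = sum(abs(a - b) for a, b in zip(p, q))
    den = sum(a + b for a, b in zip(p, q))
    return num / den if den > 0 else 0.0


def null_composition(p, i):
    """Assumed pre-removal reference: profile p with species i zeroed and the rest renormalized."""
    rest = [0.0 if j == i else x for j, x in enumerate(p)]
    total = sum(rest)
    return [x / total for x in rest]


def structural_keystoneness(p, i, p_after):
    """Impact of removing species i, weighted by its relative scarcity (1 - p_i)."""
    impact = bray_curtis(null_composition(p, i), p_after)
    return impact * (1.0 - p[i])


# Hypothetical resident profile and post-removal profile for species 0.
p = [0.1, 0.5, 0.4]
p_after = [0.0, 0.9, 0.1]  # in DKI this profile would come from the trained model
ks = structural_keystoneness(p, 0, p_after)
print(round(ks, 4))  # prints 0.31
```

A species whose removal leaves the renormalized profile unchanged scores zero, however rare it is. This is how the formula separates true keystones from species that are merely scarce.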

Network Analysis and Centrality Indices

Network analysis has emerged as a powerful approach for identifying keystone species by modeling ecological communities as complex webs of interactions. Different centrality indices measure various aspects of a species' positional importance within these networks [2]:

Table 1: Network Centrality Indices for Keystone Species Identification

| Index Name | Spatial Scale | Measurement Focus | Key Applications | Limitations |
|---|---|---|---|---|
| Degree Centrality | Local | Number of direct connections to other species | Identifying "hub" species with many direct interactions | Ignores indirect effects and interaction strengths |
| Betweenness Centrality | Global | Frequency of occurring on shortest paths between other species | Identifying bottlenecks and connectors in networks | May overlook locally important but globally peripheral species |
| Closeness Centrality | Global | Inverse of the average shortest-path distance to all other species in the network | Identifying species that can rapidly influence entire networks | Sensitive to network disconnectedness |
| Throughflow Centrality | Global | Contribution to material or energy flow through the system | Quantifying functional importance in ecosystem processes | Requires detailed quantitative data on energy/matter flows |
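The three topological indices in Table 1 can be compared on a toy network. The sketch below is a pure-Python illustration that assumes an unweighted, undirected, connected graph. It computes degree; closeness, defined as (N - 1) divided by the sum of shortest-path distances; and betweenness, using Brandes' algorithm with each unordered pair of species counted once. Throughflow centrality needs flow data and is not covered. The species names and links are hypothetical.

```python
from collections import deque
from itertools import combinations


def build_adjacency(edges):
    adj = {}
    for u, v in edges:
        adj.setdefault(u, set()).add(v)
        adj.setdefault(v, set()).add(u)
    return adj


def bfs_distances(adj, source):
    """Shortest-path (hop) distances from source to every reachable node."""
    dist = {source: 0}
    queue = deque([source])
    while queue:
        v = queue.popleft()
        for w in adj[v]:
            if w not in dist:
                dist[w] = dist[v] + 1
                queue.append(w)
    return dist


def closeness(adj):
    """(N - 1) / sum of distances; assumes the network is connected."""
    n = len(adj)
    return {v: (n - 1) / sum(bfs_distances(adj, v).values()) for v in adj}


def betweenness(adj):
    """Brandes' algorithm for unweighted, undirected graphs (unnormalized pair counts)."""
    bc = dict.fromkeys(adj, 0.0)
    for s in adj:
        stack, preds = [], {v: [] for v in adj}
        sigma = dict.fromkeys(adj, 0)   # number of shortest paths from s
        dist = dict.fromkeys(adj, -1)
        sigma[s], dist[s] = 1, 0
        queue = deque([s])
        while queue:
            v = queue.popleft()
            stack.append(v)
            for w in adj[v]:
                if dist[w] < 0:
                    dist[w] = dist[v] + 1
                    queue.append(w)
                if dist[w] == dist[v] + 1:
                    sigma[w] += sigma[v]
                    preds[w].append(v)
        delta = dict.fromkeys(adj, 0.0)
        while stack:  # accumulate dependencies in order of decreasing distance
            w = stack.pop()
            for v in preds[w]:
                delta[v] += sigma[v] / sigma[w] * (1.0 + delta[w])
            if w != s:
                bc[w] += delta[w]
    return {v: b / 2.0 for v, b in bc.items()}  # each unordered pair was counted twice


# Hypothetical network: two tightly knit modules joined only through species "X".
module_a = ["a1", "a2", "a3", "a4"]
module_b = ["b1", "b2", "b3", "b4"]
edges = list(combinations(module_a, 2)) + list(combinations(module_b, 2))
edges += [("a1", "X"), ("X", "b1")]
adj = build_adjacency(edges)

degree = {v: len(nbrs) for v, nbrs in adj.items()}
close = closeness(adj)
between = betweenness(adj)
for v in sorted(adj, key=between.get, reverse=True)[:3]:
    print(v, degree[v], round(close[v], 3), between[v])
```

The bridging species X has the lowest degree in the network (2). It nevertheless has the highest closeness and betweenness: 16 of the 28 pairs among the other species route through it. This is the bottleneck role that degree alone misses.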

The integration of stable isotope analysis with network metrics represents a significant methodological advancement. Stable isotopes of carbon (δ13C) and nitrogen (δ15N) provide precise measurements of trophic relationships and energy pathways, enabling researchers to quantify interaction strengths based on dietary assimilation rather than simple observation [6]. This approach has revealed that link weights—the strength of species interactions—significantly alter conclusions about keystone importance compared to binary network models [6].
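Isotope-derived link weights can change a ranking even when the binary network does not. The sketch below uses a single-isotope, two-source linear mixing model to turn consumer δ13C values into diet fractions. It then compares each prey's binary degree with its weighted in-strength. The source signatures, the 0.4‰ trophic discrimination factor, and the species names are assumptions chosen for illustration. Real studies typically use dedicated mixing-model tools that handle several isotopes, several sources, and uncertainty.

```python
def source_a_fraction(d_consumer, d_source_a, d_source_b, tdf=0.4):
    """Two-source linear mixing model: share of source A in the consumer's assimilated diet.

    tdf is the trophic discrimination factor (per mil) subtracted from the consumer value;
    0.4 is an assumed value for delta13C.
    """
    corrected = d_consumer - tdf
    fraction = (corrected - d_source_b) / (d_source_a - d_source_b)
    return min(max(fraction, 0.0), 1.0)  # clamp values that fall outside the mixing line


# Hypothetical delta13C values (per mil) for two prey and three consumers that eat both.
prey = {"benthic_algae": -20.0, "phytoplankton": -28.0}
consumers = {"snail": -21.2, "mayfly": -22.0, "shrimp": -20.4}

binary_degree = {name: 0 for name in prey}
weighted_strength = {name: 0.0 for name in prey}
for consumer, d13c in consumers.items():
    f_benthic = source_a_fraction(d13c, prey["benthic_algae"], prey["phytoplankton"])
    for name, weight in (("benthic_algae", f_benthic), ("phytoplankton", 1.0 - f_benthic)):
        binary_degree[name] += 1           # every feeding link counts as 1
        weighted_strength[name] += weight  # links weighted by assimilated diet share

print(binary_degree)
print({k: round(v, 2) for k, v in weighted_strength.items()})
```

Both prey tie on binary degree (3 links each). The weighted view, however, attributes about 80% of the consumers' assimilated carbon to benthic algae (2.4 of 3.0).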

Contemporary Identification Methods: Experimental Protocols and Workflows

Stable Isotope Analysis in Food Web Ecology

Stable isotope analysis has revolutionized the quantification of species interactions in food webs, providing an empirical basis for weighted network analyses. The following workflow illustrates the experimental protocol for keystone species identification using stable isotopes:

Diagram 1: Stable Isotope Workflow for Keystone Species Identification

This methodology was applied in Zhangze Lake, China, where researchers used stable isotope analysis to construct quantitative food webs across different seasons. They found that keystone identities varied temporally, demonstrating the context-dependent nature of keystoneness and highlighting the importance of repeated sampling across environmental conditions [6].

Data-Driven Keystone Identification (DKI) Framework

Machine learning approaches represent the cutting edge of keystone species identification, particularly for complex microbial communities where traditional experimentation is challenging. The Data-driven Keystone species Identification (DKI) framework uses deep learning to implicitly learn assembly rules from microbiome samples:

Table 2: DKI Framework Implementation for Microbial Communities

| Phase | Key Procedures | Data Requirements | Output Metrics | Validation Methods |
|---|---|---|---|---|
| Training Phase | Learn mapping from species assemblage to composition using cNODE2 | Large set of microbiome samples from target habitat | Trained deep learning model | Cross-validation on held-out samples |
| Keystoneness Quantification | Thought experiment of species removal | Resident community composition profile | Structural keystoneness (Ks) and functional keystoneness (Kf) | Comparison with null composition model |
| Community Specificity Analysis | Compute keystoneness across different communities | Multiple community samples from same habitat | Distribution of keystoneness values across communities | Identification of context-dependent keystones |

The DKI framework addresses fundamental limitations of correlation-based network analyses, which often mistake statistically associated species for true keystones. By learning assembly rules directly from compositional data, DKI can predict how communities reorganize following species loss without requiring explicit interaction parameters [4]. This approach has been validated using synthetic datasets generated from Generalized Lotka-Volterra models with known interaction structures [4].
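The validation idea behind GLV benchmarks can be illustrated without any deep learning. In a simulated community the true post-removal composition is known, so keystoneness can be computed from ground truth. The sketch below integrates a hypothetical three-species GLV system (a predator feeding on a dominant competitor) with forward Euler. It removes each species in turn and scores it with the removal-based keystoneness formula, assuming Bray-Curtis dissimilarity and a renormalized null composition. This is the kind of reference a trained model's predictions would be checked against; it is not the DKI method itself, and all parameter values are assumptions.

```python
def simulate_glv(r, A, x0, present, dt=0.01, steps=20000):
    """Forward-Euler integration of dx_i/dt = x_i * (r_i + sum_j A_ij x_j)."""
    n = len(r)
    x = [x0[i] if i in present else 0.0 for i in range(n)]  # removed species start at zero
    for _ in range(steps):
        growth = [r[i] + sum(A[i][j] * x[j] for j in range(n)) for i in range(n)]
        x = [max(x[i] + dt * x[i] * growth[i], 0.0) for i in range(n)]
    return x


def relative(x):
    total = sum(x)
    return [v / total for v in x]


def bray_curtis(p, q):
    num = sum(abs(a - b) for a, b in zip(p, q))
    den = sum(a + b for a, b in zip(p, q))
    return num / den if den > 0 else 0.0


def null_composition(p, i):
    """Assumed reference: zero out species i and renormalize the rest."""
    return relative([0.0 if j == i else v for j, v in enumerate(p)])


# Hypothetical community: species 0 preys on species 1, which outcompetes species 2.
r = [-0.2, 1.0, 1.0]
A = [
    [-0.2, 1.0, 0.0],    # predator: self-limited, gains from eating species 1
    [-1.0, -1.0, -0.5],  # dominant competitor: eaten by 0, weakly limited by 2
    [0.0, -1.5, -1.0],   # subordinate competitor: strongly suppressed by 1
]
x0 = [0.1, 0.1, 0.1]
everyone = {0, 1, 2}

p = relative(simulate_glv(r, A, x0, everyone))
ks = []
for i in range(3):
    p_after = relative(simulate_glv(r, A, x0, everyone - {i}))
    ks.append(bray_curtis(null_composition(p, i), p_after) * (1.0 - p[i]))
print([round(k, 3) for k in ks])
```

Removing the predator lets the dominant competitor exclude species 2. As a result, the predator scores highest (about 0.44) even though it is not the rarest species in the community.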

Essential Research Tools and Reagent Solutions

Modern keystone species research requires specialized methodologies and analytical tools. The following table details key research solutions used in contemporary studies:

Table 3: Research Reagent Solutions for Keystone Species Identification

| Research Solution | Primary Function | Application Examples | Technical Considerations |
|---|---|---|---|
| Stable Isotope Analysis | Quantify trophic relationships and energy flow | Food web construction using δ13C and δ15N values [6] | Requires mass spectrometry facilities and standardized protocols |
| cNODE2 (compositional Neural ODE) | Learn microbial community assembly rules | Predict compositional changes after species removal [4] | Requires large sample sizes and relative abundance data |
| Network Centrality Algorithms | Identify key nodes in interaction networks | Calculate betweenness and closeness centrality [2] | Sensitive to network completeness and resolution |
| Mixing Models for Source Contribution | Estimate proportional dietary contributions | Determine prey importance in predator diets [6] | Assumes isotopic distinctness of sources |
| Environmental DNA (eDNA) Sampling | Detect species presence without direct observation | Biodiversity monitoring in complex ecosystems | Requires careful contamination control and reference databases |

Comparative Analysis: Traditional vs. Modern Approaches

The methodological evolution in keystone species identification reveals distinct advantages and limitations across approaches:

Table 4: Methodological Comparison for Keystone Species Identification

| Methodological Approach | Key Advantages | Principal Limitations | Data Requirements | Context Dependence Handling |
|---|---|---|---|---|
| Experimental Manipulation (Paine) | Direct evidence of causal relationships | Logistically challenging, ethically constrained | Field experiment infrastructure | High (directly tests context) |
| Stable Isotope Analysis | Quantifies actual energy assimilation | Requires specialized equipment and expertise | Tissue samples from community members | Moderate (single time point) |
| Network Centrality Metrics | System-level perspective of connections | May miss weak but important interactions | Comprehensive species interaction data | Low (static network snapshot) |
| Machine Learning (DKI Framework) | Model complex, non-linear interactions | "Black box" interpretation challenges | Large microbiome datasets | High (explicitly models context) |

This comparative analysis reveals that modern methods excel at quantifying interaction strengths and predicting system responses to perturbations, but traditional experimental approaches remain invaluable for establishing causal relationships. The most robust conclusions emerge from integrating multiple methodologies [6] [4] [2].

The conceptual and methodological evolution of keystone species research reflects ecology's ongoing transformation into a more predictive science. From Paine's initial observation that starfish disproportionately influence intertidal diversity, the field has progressed to sophisticated computational frameworks that quantify keystoneness across temporal, spatial, and organizational scales [1] [4]. Network analysis has been particularly transformative, providing both theoretical insights and practical tools for identifying structurally important species [2].

Future research directions will likely focus on integrating multiple identification approaches, expanding keystone concepts to include molecular mechanisms (keystone molecules), and addressing the critical challenge of context dependence—where a species' keystone status varies across environmental conditions and community compositions [6] [7] [4]. As anthropogenic pressures accelerate biodiversity loss, refined operational definitions of keystone species will play increasingly vital roles in guiding effective conservation interventions and ecosystem management strategies. The progression from qualitative observation to quantitative prediction represents both a scientific achievement and an essential adaptation for addressing complex ecological challenges in the Anthropocene.

For decades, ecologists have sought reliable methods to identify keystone species—those organisms with disproportionate importance in maintaining their ecosystem's structure. Traditional approaches often relied on simple metrics like species abundance or biomass. However, contemporary research reveals that a species' ecological importance cannot be ascertained in isolation; rather, it is fundamentally encoded within the architecture of the food web in which it is embedded. The critical shift in ecological understanding recognizes that food web structure provides a more powerful predictor of species importance than any species-specific characteristic alone. This paradigm shift is particularly relevant for conservation biology and ecosystem management, where accurately identifying key species is essential for maintaining ecosystem functioning in the face of accelerating biodiversity loss [8].

The challenge of keystone identification is compounded by the fact that food webs are complex networks of feeding relationships that include both strong and weak interactions among species [9]. Early calculations of keystone indices were based on network models susceptible to construction errors, such as including unnecessary trophic links or omitting functional ones [10]. This raised serious questions about whether researchers could reliably depend on importance rankings derived from these models. This article examines how modern approaches to analyzing food web structure are overcoming these limitations and providing more robust tools for predicting species importance in ecological communities.

Theoretical Foundation: From Food Chains to Network Complexity

Basic Food Web Architecture

A food web consists of all interconnected food chains within an ecosystem, illustrating how energy and nutrients move through feeding relationships [11]. Organisms within these webs are grouped into trophic levels: producers (autotrophs), consumers (herbivores, carnivores, omnivores), and decomposers [11]. Energy can take two primary pathways: the grazing food chain, which begins with living autotrophs, and the detrital food chain, which begins with dead organic matter broken down by decomposers [9]. The detrital pathway is particularly important because, in many ecosystems, the energy flowing through it can equal or exceed that of the grazing pathway [12].

The Emergence of the Keystone Concept

The concept of applying food chains to ecology was first proposed by Charles Elton in 1927, who recognized that these chains were mostly limited to 4 or 5 links and were interconnected into what he called "food cycles" [9]. The modern understanding of keystone species emerged from pioneering experimental work by Robert Paine, who demonstrated that removing the predator starfish Pisaster from intertidal zones reduced prey diversity from 15 to 8 species within two years [9]. This study provided crucial evidence that certain species, despite relative scarcity, could exert outsized influence on community structure through their feeding relationships—a phenomenon now known as keystone predation [9].

Analytical Framework: Quantifying Structure and Importance

Typology of Food Web Analyses

Food webs can be described and analyzed through different conceptual frameworks, each offering unique insights into species importance:

Table 1: Three Types of Food Web Analyses

| Analysis Type | Focus | Measurement Approach | Reveals About Species Importance |
|---|---|---|---|
| Connectedness Web | Feeding relationships among species | Portrays links between species as binary connections [9] | Basic topological position and connectivity |
| Energy Flow Web | Quantitative energy transfer | Measures energy flow between species; arrow thickness reflects relationship strength [9] | Quantitative impact on energy circulation through the ecosystem |
| Functional Web | Influence on population growth rates | Represents each species' importance in maintaining community integrity [9] | Effect on growth rates of other species' populations |

Key Structural Metrics for Predicting Importance

Several quantitative metrics have emerged as particularly powerful for predicting species importance from food web structure:

- Betweenness Centrality: Measures how often a species lies on the shortest path between other species in the network. High betweenness indicates potential control over energy flows and information transfer [10].

- Topological Importance: Quantifies a species' position within the overall network architecture, identifying those whose removal would disproportionately disrupt connectivity [10].

- Compartmentalization: The degree to which a food web is divided into subgroups of strongly interacting species. Higher compartmentalization can affect stability and resilience [8].

- Interaction Strength: The direct effect of one species on another's population growth rate, represented as entries in a Jacobian community matrix [12].

- Keystoneness: An integrated metric that combines a species' topological properties with its quantitative effects on energy flow [10].
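The list above does not give a formula for keystoneness. One widely used formulation, from Libralato et al. (2006) and commonly applied to Ecopath models, combines two quantities. The first is a group's overall effect, \( \varepsilon_i = \sqrt{\sum_{j \neq i} m_{ij}^2} \), taken from the mixed trophic impact (MTI) matrix. The second is its biomass share \( p_i \). Together they give \( KS_i = \log[\varepsilon_i (1 - p_i)] \). The sketch below assumes that formulation, a base-10 logarithm (the ranking does not depend on the base), and a hypothetical three-group MTI matrix. It is an illustrative stand-in and may differ from the index used in [10].

```python
import math


def libralato_keystoneness(mti, biomass):
    """KS_i = log10[eps_i * (1 - p_i)], eps_i = sqrt(sum_{j != i} m_ij^2), p_i = biomass share."""
    total = sum(biomass)
    scores = []
    for i, row in enumerate(mti):
        eps = math.sqrt(sum(m * m for j, m in enumerate(row) if j != i))  # skip self-impact
        scores.append(math.log10(eps * (1.0 - biomass[i] / total)))
    return scores


groups = ["predator", "herbivore", "plant"]
mti = [
    [0.0, -0.6, 0.4],   # hypothetical impacts of the predator on each group
    [0.3, 0.0, -0.5],   # impacts of the herbivore
    [0.2, 0.5, 0.0],    # impacts of the plant
]
biomass = [1.0, 10.0, 89.0]  # hypothetical biomasses (same units)
ks = libralato_keystoneness(mti, biomass)
for name, score in sorted(zip(groups, ks), key=lambda t: t[1], reverse=True):
    print(f"{name:10s} {score:+.2f}")
```

The low-biomass predator ranks first because its large overall effect is not discounted by abundance. The abundant plant ranks last despite a comparable effect size.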

Advanced Methodologies: Experimental Protocols for Structural Analysis

Protocol 1: Compound-Specific Stable Isotope Analysis of Amino Acids (CSIA-AA)

Purpose: To trace nutrient pathways and identify energy channels in food webs with high precision [13].

Workflow:

- Sample Collection: Obtain tissue samples from target species and potential primary producers in the ecosystem.

- Chemical Extraction: Isolate amino acids from the collected tissue samples using hydrolysis and derivatization techniques.

- Isotope Analysis: Analyze stable isotope ratios (δ13C and δ15N) in individual amino acids using gas chromatography/isotope ratio mass spectrometry.

- Trophic Position Calculation: Determine trophic positions using the differential fractionation between trophic and source amino acids.

- Baseline Correction: Correct for baseline isotopic variation to accurately identify primary producer contributions.

- Pathway Modeling: Use multivariate statistics to reconstruct distinct energy pathways from primary producers to consumers.

Applications: This protocol revealed that coral reef food webs are more "siloed" than previously understood, with different snapper species relying on distinct energy pathways (phytoplankton, macroalgae, or coral-based) despite co-occurring in the same habitat [13].
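The trophic position step is usually computed from the δ15N offset between glutamic acid (a "trophic" amino acid) and phenylalanine (a "source" amino acid). A minimal sketch follows. It assumes the commonly cited aquatic calibration of Chikaraishi et al. (2009), \( TP = (\delta^{15}N_{Glu} - \delta^{15}N_{Phe} - \beta)/\mathrm{TDF} + 1 \) with \( \beta = 3.4 \)‰ and TDF = 7.6‰. Both constants should be checked for each system, and the sample values are hypothetical.

```python
def trophic_position(d15n_glu, d15n_phe, beta=3.4, tdf=7.6):
    """TP = (d15N_Glu - d15N_Phe - beta) / tdf + 1.

    Glutamic acid is enriched at each trophic step, while phenylalanine changes little.
    beta is the Glu-Phe offset in primary producers and tdf the per-step enrichment,
    both in per mil; the defaults are assumed aquatic calibration values.
    """
    return (d15n_glu - d15n_phe - beta) / tdf + 1.0


# Hypothetical amino acid delta15N values (per mil): (glutamic acid, phenylalanine).
samples = {"macroalgae": (7.4, 4.0), "snapper": (25.2, 3.0)}
for name, (glu, phe) in samples.items():
    print(name, round(trophic_position(glu, phe), 2))
```

A producer whose Glu-Phe offset equals β sits at TP = 1. In this example the hypothetical snapper comes out at about 3.47.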

Protocol 2: Loop Weight and Diagonal Strength Analysis

Purpose: To quantify food web stability and identify critical interactions that maintain ecosystem structure [12].

Workflow:

- Jacobian Matrix Construction: Create a community matrix where elements (αij) represent the per capita effect of species j on species i's growth rate.

- Interaction Strength Estimation: Calculate interaction strengths using Ecopath model outputs or empirical data on biomass, diet composition, and production rates.

- Loop Identification: Identify feedback loops of interacting species within the food web.

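- Loop Weight Calculation: Compute the geometric mean of interaction strengths for each loop, \( \left( \lvert \alpha_{ij}\,\alpha_{jk} \cdots \alpha_{mi} \rvert \right)^{1/n} \), where \( n \) is the number of links in the loop [12].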

- Diagonal Strength Calculation: Determine the proportion of specific mortality caused by intraspecific competition.

- Stability Assessment: Evaluate stability based on the heaviest loop weight and diagonal strength value, where lighter loops and lower diagonal strength indicate higher stability.

Applications: This protocol revealed that in Baiyangdian Lake, stability was limited by a three-link omnivorous loop (Detritus → zooplankton → filter-feeding fish), and it detected a shift from top-down to bottom-up control between 2009 and 2019 [12].
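The loop-weight step can be sketched on a small hypothetical Jacobian. The code enumerates every simple feedback loop, listing each directed cycle once starting from its lowest-index species. For each loop it takes the geometric mean of the absolute interaction strengths along the loop and ranks the results. The matrix values and species labels are illustrative assumptions, not Ecopath outputs, and diagonal strength is not computed here.

```python
def feedback_loops(alpha):
    """All simple directed cycles of length >= 2, each listed once from its lowest index."""
    n = len(alpha)
    loops = []

    def extend(path):
        for nxt in range(n):
            if nxt == path[-1] or alpha[path[-1]][nxt] == 0:
                continue  # skip self-regulation terms and absent links
            if nxt == path[0]:
                loops.append(tuple(path))  # the path closes back on its start
            elif nxt > path[0] and nxt not in path:
                extend(path + [nxt])

    for start in range(n):
        extend([start])
    return loops


def loop_weight(alpha, loop):
    """Geometric mean of |alpha| along the loop: |a_ij * a_jk * ... * a_mi| ** (1/n)."""
    product = 1.0
    for k, i in enumerate(loop):
        j = loop[(k + 1) % len(loop)]
        product *= alpha[i][j]
    return abs(product) ** (1.0 / len(loop))


names = ["detritus", "zooplankton", "filter-feeding fish"]
alpha = [  # hypothetical community matrix entries alpha[i][j]
    [-0.10, 0.30, 0.05],
    [-0.40, -0.10, 0.20],
    [0.10, -0.15, -0.10],
]
ranked = sorted(feedback_loops(alpha), key=lambda lp: loop_weight(alpha, lp), reverse=True)
for lp in ranked:
    print(" -> ".join(names[i] for i in lp), round(loop_weight(alpha, lp), 3))
```

Filtering `ranked` by loop length isolates the heaviest three-link loop. This is the kind of omnivorous loop reported as limiting stability in Baiyangdian Lake.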

The following diagram illustrates the experimental workflow for food web stability analysis:

Protocol 3: Robustness Testing for Keystone Indices

Purpose: To evaluate the reliability of keystone indices despite potential errors in food web construction [10].

Workflow:

- Multiple Index Calculation: Compute a suite of different structural indices (e.g., betweenness, topological importance, keystoneness) for all species in the food web.

- Error Simulation: Introduce simulated errors into the network model by randomly adding or removing trophic links.

- Index Recalculation: Recompute keystone indices for the perturbed networks.

- Rank Comparison: Compare species importance rankings between original and perturbed networks.

- Robustness Quantification: Calculate the robustness measure R, which is proportional to the likelihood that importance rankings remain unchanged despite network errors.

- Index Selection: Identify which keystone indices yield the most consistent rankings under this uncertainty.

Applications: This approach demonstrated that betweenness, topological importance, keystoneness, and mixed trophic impact have higher robustness values compared to fragmentation indices, making them more reliable for conservation prioritization [10].
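The error-simulation loop can be sketched in a few lines. The sketch uses a hypothetical robustness proxy: the fraction of perturbed networks in which the top-ranked species stays on top. The exact definition of R is not given above, so this proxy, the equal add/remove probability, and the toy food web are all assumptions. Degree is used as the index only to keep the sketch short; any index function with the same signature, such as betweenness, can be passed in instead.

```python
import random
from itertools import combinations


def degree_index(nodes, edges):
    """Number of links per species in an undirected edge set."""
    score = dict.fromkeys(nodes, 0)
    for u, v in edges:
        score[u] += 1
        score[v] += 1
    return score


def perturb(nodes, edges, n_errors, rng):
    """Randomly drop existing links or add missing ones to mimic construction errors."""
    edges = set(edges)
    all_pairs = list(combinations(sorted(nodes), 2))
    for _ in range(n_errors):
        if edges and rng.random() < 0.5:
            edges.remove(rng.choice(sorted(edges)))  # a real link omitted
        else:
            missing = [pair for pair in all_pairs if pair not in edges]
            if missing:
                edges.add(rng.choice(missing))  # a spurious link included
    return edges


def top_rank_robustness(nodes, edges, index, n_errors=2, trials=500, seed=1):
    """Hypothetical R proxy: share of perturbed networks whose top-ranked species is unchanged."""
    rng = random.Random(seed)

    def top(edge_set):
        score = index(nodes, edge_set)
        return min(nodes, key=lambda v: (-score[v], v))  # highest score, ties broken by name

    reference = top(edges)
    hits = sum(top(perturb(nodes, edges, n_errors, rng)) == reference for _ in range(trials))
    return hits / trials


nodes = ["algae", "detritus", "snail", "mayfly", "trout", "heron"]
edges = {tuple(sorted(pair)) for pair in [
    ("algae", "snail"), ("algae", "mayfly"), ("detritus", "mayfly"),
    ("mayfly", "trout"), ("snail", "trout"), ("trout", "heron"), ("mayfly", "heron"),
]}
for k in (0, 1, 3):
    print(k, top_rank_robustness(nodes, edges, degree_index, n_errors=k))
```

With no simulated errors the proxy is 1.0 by construction. Comparing values across indices at the same error level mirrors the index-selection step.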

Comparative Analysis: Structural vs. Traditional Approaches

Predictive Power Across Ecosystems

Recent research across diverse ecosystems demonstrates the superior predictive power of food web structure analysis compared to traditional approaches:

Table 2: Comparative Performance of Structural vs. Traditional Metrics

| Ecosystem | Traditional Approach | Structural Approach | Key Finding | Reference |
|---|---|---|---|---|
| Coral Reefs (Red Sea) | Species treated as generalist predators | CSIA-AA revealed specialized energy pathways | Food webs highly compartmentalized with vertical "silos" making them more fragile than previously assumed | [13] |
| Paraná River Floodplain | Focus on species richness alone | Analysis of linkages, compartmentalization, and nestedness | Food web complexity mediates relationship between diversity and ecosystem functioning | [8] |
| Baiyangdian Lake | Qualitative food chain descriptions | Loop weight and diagonal strength analysis | Identified critical three-link loop determining stability and detected regime shift | [12] |
| Rocky Intertidal Zones | Population abundance metrics | Functional web analysis measuring influence on growth rates | Revealed starfish as keystone predator despite low abundance | [9] |

Robustness Comparison of Keystone Indices

Different structural indices vary significantly in their reliability for identifying keystone species:

Table 3: Robustness of Keystone Indices to Network Construction Errors

| Index Category | Specific Indices | Robustness (R) Value | Reliability for Conservation | Key Insight |
|---|---|---|---|---|
| High Robustness | Betweenness, Topological Importance, Keystoneness, Mixed Trophic Impact | High | More reliable | These indices maintain consistent species rankings despite uncertainties in network model |
| Low Robustness | Fragmentation indices, Number of dominated nodes | Low | Less reliable | Species rankings sensitive to addition/removal of trophic links |
| Variable Robustness | All indices | Context-dependent | Case-by-case evaluation needed | R values depend heavily on specific food web topology |

The Scientist's Toolkit: Essential Research Reagents and Solutions

Table 4: Essential Research Materials for Food Web Structural Analysis

| Tool/Technique | Function | Application Context |
|---|---|---|
| Compound-Specific Stable Isotope Analysis (CSIA) | Tracks nutrient pathways by analyzing δ13C and δ15N in amino acids | Precisely identifies energy sources and trophic positions in complex food webs [13] |
| Ecopath with Ecosim Modeling Software | Creates mass-balanced ecosystem models quantifying energy flows | Estimates interaction strengths and analyzes food web stability [12] |
| Network Analysis Algorithms | Computes centrality measures and identifies structural modules | Quantifies keystone indices and maps food web topology [10] |
| Stomach Content Analysis | Provides short-term feeding data through morphological identification | Validates trophic interactions and complements stable isotope data [8] |
| DNA Metabarcoding | Identifies prey species through genetic analysis of gut contents | Provides high-resolution trophic interaction data with species-level precision [13] |
| Loop Weight Analysis | Calculates geometric mean of interaction strengths in feedback loops | Identifies critical pathways that determine overall food web stability [12] |

Implications and Future Directions

Conservation and Ecosystem Management

Understanding food web structure has profound implications for conservation biology and ecosystem management. The research demonstrates that conservation efforts should not focus solely on preserving species richness, but must emphasize maintaining food web complexity [8]. The compartmentalized structure revealed in coral reef ecosystems suggests these systems may be more vulnerable to disturbances than previously thought, as the loss of a single primary producer could fracture an entire food chain [13]. This structural perspective enables more targeted conservation strategies that protect critical nodes and connections rather than simply maximizing species counts.

Emerging Research Frontiers

Future research directions include expanding structural analyses to different ecosystem types, integrating multiple methodological approaches, and developing more accessible tools for ecosystem managers. McMahon's lab plans to apply similar questions to kelp forests and deep-sea ecosystems while integrating DNA metabarcoding to more precisely identify prey species connecting siloed energy channels [13]. Additionally, researchers are working to simplify complex stability metrics into more practical indicators, such as the geometric mean ratio of predator-to-prey biomass, which correlates with diagonal strength and offers a more accessible tool for early-warning assessments of food web stability [12].

The following diagram illustrates the compartmentalization found in coral reef food webs:

The critical shift from species-centric to structure-centric analysis represents a fundamental advancement in ecology and conservation biology. Food web structure provides a more powerful predictor of species importance because it captures the complex network of direct and indirect interactions that determine a species' functional role within an ecosystem. Methodologies like CSIA-AA, loop weight analysis, and robustness testing of keystone indices are providing unprecedented insights into how energy flows through ecosystems and which species truly maintain ecological stability. As we face escalating biodiversity loss, these structural approaches offer our most reliable toolkit for identifying conservation priorities and managing ecosystems for resilience in an increasingly uncertain world.

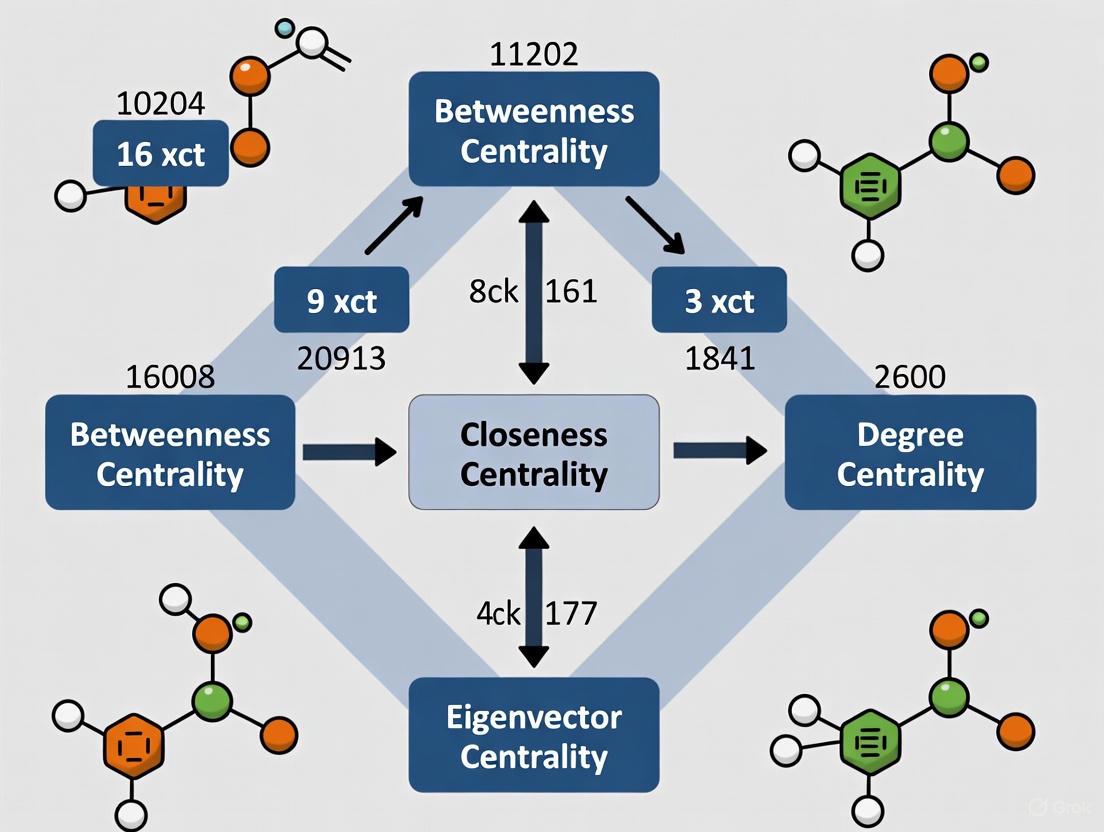

In network science, centrality measures are fundamental tools for quantifying the importance or influence of nodes within a complex system. For researchers studying keystone species identification, these measures provide a mathematical framework to pinpoint which species exert disproportionate influence on their ecological community's structure and stability. The concept of power in networks is inherently relational—an individual's importance stems from their position within the pattern of connections, creating varying levels of dependence and opportunity across the network [14]. Centrality indices are broadly categorized into three distinct classes based on the scale of network information they incorporate: local, meso-scale, and global measures. Each category offers unique insights into node properties, with significant implications for predicting ecological impact in microbial systems and beyond.

Classification of Centrality Measures by Network Scale

Centrality measures can be systematically classified based on the scope of network information they utilize, ranging from immediate neighbors to the entire network structure. The table below organizes key centrality measures by their operational scale and primary computational basis.

Table 1: Classification of Centrality Measures by Network Scale

| Scale Category | Centrality Measures | Computational Basis |
|---|---|---|
| Local | Degree, Laplacian, Leverage | Immediate neighborhood connections (1-hop distance) |
| Meso-Scale | k-shell, Collective Influence, Subgraph | Network topology within a limited radius (2+ hops) |
| Global | Closeness, Betweenness, Eigenvector, PageRank, Stress | Entire network path structure or spectral properties |

This classification is particularly valuable for keystone species research, as the most impactful species may not always be those with the most connections, but rather those occupying critical positions at different structural scales within the ecological network [15] [16].

Detailed Comparison of Centrality Measures

The performance and applicability of centrality measures vary significantly based on network structure and research objectives. The following tables provide a detailed comparison of key measures across different operational scales.

Table 2: Local and Meso-Scale Centrality Measures

| Measure Name | Scale | Mathematical Definition | Key Strengths | Limitations |
|---|---|---|---|---|
| Degree [14] | Local | \( C_D(v) = \sum_{u=1}^{N} a_{vu} \) | Computational simplicity; intuitive interpretation | Ignores broader network structure |
| Laplacian [15] | Local | \( C_L(v) = E_L(G) - E_L(G \setminus \{v\}) \), where \( E_L \) is the Laplacian energy of the graph | Measures the drop in network connectivity after node removal | Computationally intensive for large networks |
| k-shell [15] | Meso-scale | Core number in k-core decomposition | Identifies hierarchically central nodes in core-periphery structures | May assign the same value to many nodes |
| Collective Influence [15] | Meso-scale | \( CI_\ell(v) = (k_v - 1) \sum_{u \in \partial B(v,\ell)} (k_u - 1) \), where \( \partial B(v,\ell) \) is the set of nodes exactly \( \ell \) hops from v | Balances local degree with neighborhood structure | Performance depends on the radius parameter \( \ell \) |
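The following pure-Python code is a minimal sketch of the local and meso-scale definitions in Table 2. It computes degree, k-shell (the core number, found by iteratively peeling low-degree nodes) and Collective Influence at radius ℓ. Several assumptions apply. The network `WEB` and its species are hypothetical. The graph is assumed to be simple, undirected and unweighted, stored as a dictionary of neighbour sets. The helper names are illustrative and do not reproduce the API of igraph or NetCenLib.

```python
from collections import deque

# Hypothetical undirected species-interaction network (illustration only).
WEB = {
    "kelp": {"urchin"},
    "urchin": {"kelp", "otter", "seastar"},
    "otter": {"urchin", "crab"},
    "seastar": {"urchin", "mussel", "crab"},
    "mussel": {"seastar", "crab"},
    "crab": {"otter", "seastar", "mussel"},
}


def degree_centrality(adj):
    """C_D(v) = sum_u a_vu, i.e. the number of neighbours of v."""
    return {v: len(nbrs) for v, nbrs in adj.items()}


def k_shell(adj):
    """Core number of every node by repeatedly peeling nodes of degree <= k."""
    deg = degree_centrality(adj)
    remaining, core, k = set(adj), {}, 0
    while remaining:
        # raise k to the smallest degree left in the remaining subgraph
        k = max(k, min(deg[v] for v in remaining))
        peel = [v for v in remaining if deg[v] <= k]
        while peel:
            v = peel.pop()
            if v not in remaining:
                continue  # already peeled through another neighbour
            remaining.discard(v)
            core[v] = k
            for u in adj[v]:
                if u in remaining:
                    deg[u] -= 1
                    if deg[u] <= k:
                        peel.append(u)
    return core


def nodes_at_distance(adj, v, ell):
    """Frontier of the ball B(v, ell): nodes exactly ell hops from v (BFS)."""
    dist = {v: 0}
    queue = deque([v])
    while queue:
        x = queue.popleft()
        if dist[x] == ell:
            continue  # do not expand beyond the frontier
        for y in adj[x]:
            if y not in dist:
                dist[y] = dist[x] + 1
                queue.append(y)
    return [u for u, d in dist.items() if d == ell]


def collective_influence(adj, ell=2):
    """CI_ell(v) = (k_v - 1) * sum over the frontier of (k_u - 1)."""
    k = degree_centrality(adj)
    return {v: (k[v] - 1) * sum(k[u] - 1 for u in nodes_at_distance(adj, v, ell))
            for v in adj}


print(degree_centrality(WEB))
print(k_shell(WEB))
print(collective_influence(WEB, ell=2))
```

On this toy graph, urchin, seastar and crab tie on degree, and every non-leaf node shares the same core number, which illustrates the k-shell limitation listed in Table 2. Collective Influence at ℓ = 2 separates urchin from the others.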

Table 3: Global Centrality Measures

| Measure Name | Mathematical Definition | Key Strengths | Limitations |
|---|---|---|---|
| Closeness [17] [14] | \( C_C(v) = \frac{N-1}{\sum_{u \neq v} d_{vu}} \) | Identifies nodes that can reach the entire network quickly | Requires global distance calculation; sensitive to disconnected components |
| Betweenness [15] [14] | \( C_B(v) = \sum_{s \neq v \neq t} \frac{\sigma_{st}(v)}{\sigma_{st}} \), where \( \sigma_{st} \) is the number of shortest paths from s to t and \( \sigma_{st}(v) \) the number passing through v | Captures brokerage potential and control over flows | Computationally expensive (\( O(VE) \) for unweighted graphs) |
| Eigenvector [15] | \( \mathbf{A}\mathbf{x} = \lambda_1 \mathbf{x} \) | Incorporates importance of neighbors; recursive definition | May over-concentrate in dense network regions |
| PageRank [15] | \( PR(v) = \frac{1-d}{N} + d \sum_{u \in M_v} \frac{PR(u)}{L(u)} \), where \( M_v \) is the set of nodes linking to v and \( L(u) \) the out-degree of u | Robust to spam/local manipulation; models random walks | Requires parameter tuning (damping factor d) |
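The global measures in Table 3 can be sketched in the same style. The code below computes closeness from breadth-first-search distances and betweenness with Brandes' \( O(VE) \) accumulation, and it obtains PageRank by power iteration. The sketch makes several assumptions:

- The hypothetical toy graph `WEB` is reused.
- The graph is connected, which closeness requires.
- Betweenness counts unordered pairs, so the raw Brandes sum is halved.
- PageRank treats each undirected edge as two directed links, with no isolated nodes.

```python
from collections import deque

# Hypothetical undirected species-interaction network (illustration only).
WEB = {
    "kelp": {"urchin"},
    "urchin": {"kelp", "otter", "seastar"},
    "otter": {"urchin", "crab"},
    "seastar": {"urchin", "mussel", "crab"},
    "mussel": {"seastar", "crab"},
    "crab": {"otter", "seastar", "mussel"},
}


def bfs_distances(adj, source):
    """Unweighted shortest-path distances from source."""
    dist = {source: 0}
    queue = deque([source])
    while queue:
        v = queue.popleft()
        for w in adj[v]:
            if w not in dist:
                dist[w] = dist[v] + 1
                queue.append(w)
    return dist


def closeness(adj):
    """C_C(v) = (N - 1) / sum_u d(v, u); assumes a connected graph."""
    n = len(adj)
    return {v: (n - 1) / sum(bfs_distances(adj, v).values()) for v in adj}


def betweenness(adj):
    """Brandes' algorithm for unweighted, undirected graphs."""
    bc = dict.fromkeys(adj, 0.0)
    for s in adj:
        stack, preds = [], {v: [] for v in adj}
        sigma, dist = dict.fromkeys(adj, 0), dict.fromkeys(adj, -1)
        sigma[s], dist[s] = 1, 0
        queue = deque([s])
        while queue:
            v = queue.popleft()
            stack.append(v)
            for w in adj[v]:
                if dist[w] < 0:
                    dist[w] = dist[v] + 1
                    queue.append(w)
                if dist[w] == dist[v] + 1:  # v precedes w on a shortest path
                    sigma[w] += sigma[v]
                    preds[w].append(v)
        delta = dict.fromkeys(adj, 0.0)
        while stack:  # accumulate dependencies, farthest nodes first
            w = stack.pop()
            for v in preds[w]:
                delta[v] += sigma[v] / sigma[w] * (1.0 + delta[w])
            if w != s:
                bc[w] += delta[w]
    # each unordered pair {s, t} was counted once from each endpoint
    return {v: b / 2.0 for v, b in bc.items()}


def pagerank(adj, d=0.85, tol=1e-12, max_iter=1000):
    """Power iteration; here M_v = neighbours of v and L(u) = deg(u)."""
    n = len(adj)
    pr = dict.fromkeys(adj, 1.0 / n)
    for _ in range(max_iter):
        new = {v: (1 - d) / n + d * sum(pr[u] / len(adj[u]) for u in adj[v])
               for v in adj}
        if sum(abs(new[v] - pr[v]) for v in adj) < tol:
            return new
        pr = new
    return pr


for name, scores in (("closeness", closeness(WEB)),
                     ("betweenness", betweenness(WEB)),
                     ("PageRank", pagerank(WEB))):
    print(name, {v: round(s, 3) for v, s in scores.items()})
```

On this graph, closeness ties urchin and seastar (both 5/7). Betweenness separates them (4.5 vs 3.0), because urchin alone connects the kelp leaf to the rest of the network. This is one concrete case where measures from different scales disagree.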

Correlation Patterns and Performance Comparisons

Understanding how different centrality measures relate to one another and perform in specific tasks is crucial for selecting appropriate measures in keystone species research.

Table 4: Correlation and Performance Analysis of Centrality Measures

| Analysis Type | Key Finding | Implication for Keystone Species Research |
|---|---|---|
| Correlation Patterns [18] | Measures form distinct communities with strong within-group correlations | Suggests redundancy between some measures; using measures from different communities provides complementary insights |
| Source Identification Performance [19] | LocalRank, Subgraph, Katz outperform for single source detection; Leverage, Collective Influence excel for influential sets | Different measures optimal for identifying individual keystone species vs. critical groups |
| Robustness to Data Limitations [15] | Degree, k-shell more robust to missing data; Betweenness, Closeness highly vulnerable | Critical consideration for ecological networks often built from incomplete sampling data |
| Theoretical Relationship [17] | Inverse closeness linearly related to logarithm of degree | Explains why these measures often correlate; suggests derived measures that remove degree dependence may reveal unique information |

Experimental Protocols for Centrality Validation

Generalized Lotka-Volterra Dynamics for Microbial Communities

Mathematical modeling provides a validated approach for testing centrality measures in systems with known interaction patterns. The generalized Lotka-Volterra (gLV) model simulates population dynamics in multi-species microbial communities [16].

Methodology:

- Model Definition: Population dynamics follow \( \frac{dx_i}{dt} = r_i x_i \left(1 - \frac{(A\mathbf{x})_i}{k_i}\right) \), where \( x_i \) is the abundance of species i, \( r_i \) its growth rate, \( k_i \) its carrying capacity, and A the interaction matrix.

- Parameter Assignment: Growth rates drawn from a uniform distribution on (0,1]; carrying capacities from β or lognormal distributions; interaction matrices generated from random graph models (Erdős-Rényi, Watts-Strogatz, Barabási-Albert, Klemm-Eguiluz).

- Numerical Integration: The system is integrated numerically (e.g., with the lsoda solver in R) until a steady state is reached.

- Co-occurrence Network Construction: Association metrics applied to steady-state abundances; statistical significance determined via permutation tests (1000 shuffles) with multiple comparison correction.

- Validation: Compare co-occurrence network to known interaction network using sensitivity/specificity analysis.

This protocol allows researchers to test which centrality measures best identify species known to be critical in the simulated interaction network [16].
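The simulation step can be prototyped as follows. This is a sketch under simplifying assumptions that are not part of the cited method:

- Integration is forward Euler over a fixed horizon, assumed long enough to approach steady state, rather than an adaptive solver such as lsoda.
- Self-limitation is set to \( a_{ii} = 1 \).
- Off-diagonal coefficients are non-negative (competitive), which keeps abundances bounded.
- Carrying capacities are drawn uniformly rather than from β or lognormal distributions.

As one possible ground truth for ranking species (also an assumption), each species is omitted in turn. The shift in the remaining species' final abundances is then scored with Bray-Curtis dissimilarity. All function names are hypothetical.

```python
import random


def random_interaction_matrix(n, p, strength, rng):
    """Erdos-Renyi interaction graph with self-limitation a_ii = 1 and
    competitive off-diagonal coefficients in [0, strength] (assumptions)."""
    A = [[0.0] * n for _ in range(n)]
    for i in range(n):
        A[i][i] = 1.0
        for j in range(n):
            if i != j and rng.random() < p:
                A[i][j] = rng.uniform(0.0, strength)
    return A


def simulate_glv(r, k, A, x0, dt=0.02, steps=10000):
    """Forward-Euler integration of dx_i/dt = r_i x_i (1 - (A x)_i / k_i)."""
    n = len(x0)
    x = list(x0)
    for _ in range(steps):
        ax = [sum(A[i][j] * x[j] for j in range(n)) for i in range(n)]
        x = [x[i] + dt * r[i] * x[i] * (1.0 - ax[i] / k[i]) for i in range(n)]
    return x


def bray_curtis(a, b):
    num = sum(abs(u - v) for u, v in zip(a, b))
    den = sum(u + v for u, v in zip(a, b))
    return num / den if den > 0 else 0.0


def knockout_impact(r, k, A, x0):
    """Omit each species at t = 0 and score how far the others' final state moves."""
    full = simulate_glv(r, k, A, x0)
    impact = {}
    for i in range(len(x0)):
        start = list(x0)
        start[i] = 0.0  # a species absent at t = 0 stays absent under gLV
        ko = simulate_glv(r, k, A, start)
        keep = [j for j in range(len(x0)) if j != i]
        impact[i] = bray_curtis([full[j] for j in keep], [ko[j] for j in keep])
    return full, impact


rng = random.Random(42)
n = 6
r = [1.0 - rng.random() for _ in range(n)]     # growth rates in (0, 1]
k = [rng.uniform(0.5, 1.5) for _ in range(n)]  # simplified carrying capacities
A = random_interaction_matrix(n, p=0.3, strength=0.3, rng=rng)
full, impact = knockout_impact(r, k, A, [0.1] * n)

# Degree in the known interaction graph, to compare against simulated impact
degree = [sum(1 for j in range(n) if j != i and (A[i][j] or A[j][i])) for i in range(n)]
for i in sorted(impact, key=impact.get, reverse=True):
    print(f"species {i}: impact={impact[i]:.3f}, degree={degree[i]}")
```

A centrality measure performs well in this setting if its ranking tracks the impact ranking across many random matrices and topologies. A real study would use a proper ODE solver and the distributions specified in the protocol.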

Network Propagation Source Identification

Another validation approach uses propagation models to simulate influence spread, relevant for studying information flow or disease transmission in ecological networks.

Methodology [19]:

- Network Preparation: Use real-world and synthetic networks with varying topologies.

- Propagation Simulation: Implement SIR epidemiological model (infection probability 0.1, recovery probability 0.05) and Independent Cascade model.

- Source Detection: Apply centrality measures to identify propagation sources, framed as the maximum-likelihood estimate \( \hat{v} = \arg\max_{v \in G_I} P(G_I \mid v^* = v) \), where \( G_I \) is the induced subgraph of infected nodes and \( v^* \) is the true source.

- Performance Evaluation: Calculate precision, recall, F1 score, and average distance metrics across 192 propagation graphs.

Figure 1: Workflow for validating centrality measures using propagation source identification
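A single run of this workflow can be sketched as below. The sketch makes the following assumptions:

- A discrete-time SIR process with the stated probabilities (infection 0.1, recovery 0.05) runs for a fixed number of steps.
- It starts from a known source on a hypothetical lattice graph.
- The exact maximum-likelihood estimator is replaced by a centrality heuristic: the node with the highest closeness inside the infected subgraph \( G_I \).

This substitution is an assumption of the sketch, not the procedure of [19]. Function names are hypothetical.

```python
import random
from collections import deque


def grid_graph(side):
    """Hypothetical test topology: a side x side lattice."""
    adj = {(i, j): set() for i in range(side) for j in range(side)}
    for i, j in adj:
        for nb in ((i + 1, j), (i, j + 1)):
            if nb in adj:
                adj[(i, j)].add(nb)
                adj[nb].add((i, j))
    return adj


def simulate_sir(adj, source, beta, gamma, steps, rng):
    """Discrete-time SIR from `source`; returns every node ever infected."""
    infected, recovered = {source}, set()
    for _ in range(steps):
        if not infected:
            break
        # each infected-susceptible edge transmits independently with prob. beta
        newly_infected = {w for v in sorted(infected) for w in sorted(adj[v])
                          if w not in infected and w not in recovered
                          and rng.random() < beta}
        newly_recovered = {v for v in sorted(infected) if rng.random() < gamma}
        infected = (infected - newly_recovered) | newly_infected
        recovered |= newly_recovered
    return infected | recovered


def closeness_within(adj, nodes):
    """Closeness computed inside the induced subgraph G_I on `nodes`."""
    scores = {}
    for v in nodes:
        dist = {v: 0}
        queue = deque([v])
        while queue:
            x = queue.popleft()
            for y in adj[x] & nodes:
                if y not in dist:
                    dist[y] = dist[x] + 1
                    queue.append(y)
        total = sum(dist.values())
        scores[v] = (len(nodes) - 1) / total if total else 0.0
    return scores


def estimate_source(adj, observed):
    scores = closeness_within(adj, observed)
    return max(sorted(scores), key=scores.get)


rng = random.Random(7)
adj = grid_graph(9)
true_source = (4, 4)
observed = simulate_sir(adj, true_source, beta=0.1, gamma=0.05, steps=30, rng=rng)
guess = estimate_source(adj, observed)
error = abs(guess[0] - true_source[0]) + abs(guess[1] - true_source[1])  # lattice hops
print(len(observed), guess, error)
```

Repeating this over many graphs and seeds gives the raw material for the average-distance and precision/recall summaries described above.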

Research Reagent Solutions: Computational Tools for Centrality Analysis

Researchers studying keystone species through network centrality require specialized computational tools and libraries. The following table details essential "research reagents" for this field.

Table 5: Essential Computational Tools for Centrality Analysis in Keystone Species Research

| Tool Name | Primary Function | Application in Keystone Research | Access Method |
|---|---|---|---|
| igraph [16] | Network analysis and visualization | Calculating centrality measures; network topology analysis | R/Python package |
| NetCenLib [19] | Centrality measure computation | Standardized calculation of 25+ centrality measures | Python library |
| NDLib [19] | Diffusion process simulation | Testing centrality measures on propagation models | Python library |
| sparCC [16] | Correlation inference for compositional data | Constructing microbial co-occurrence networks from abundance data | Python implementation |
| deSolve [16] | Differential equation solver | Implementing gLV models for simulated microbial communities | R package |

The selection of appropriate centrality measures for keystone species identification depends critically on research goals, network characteristics, and data quality. Local measures (e.g., Degree, Laplacian) offer computational efficiency and robustness to incomplete data but miss broader structural importance. Meso-scale measures (e.g., k-shell, Collective Influence) balance local information with wider network context, often identifying structurally central nodes. Global measures (e.g., Betweenness, Closeness) capture system-wide influence but are computationally demanding and sensitive to data limitations [15].

For microbial ecology applications, research indicates that employing complementary measures from different correlation communities provides the most comprehensive assessment of keystone species [18] [16]. Validation through simulated systems with known interaction networks remains essential, as the performance of centrality measures varies significantly across network topologies and research objectives [19]. By understanding the theoretical foundations, computational requirements, and performance characteristics of these different centrality classes, researchers can make informed decisions when identifying critical species in complex ecological networks.

In ecological research, networks provide powerful frameworks for modeling complex species interactions, such as those in food webs or mutualistic relationships. The choice between a binary and a quantitative network fundamentally influences the analysis and conclusions, especially in critical applications like keystone species identification. A binary network simplifies interactions to presence-absence terms, representing connections as either existing or not [20] [21]. This abstraction is useful for initial structural analysis but ignores interaction intensities. In contrast, a quantitative network (or weighted network) incorporates continuous measures of interaction strength, such as the amount of energy flow between predator and prey, the frequency of interactions between mutualistic species, or the number of cooperative outcomes [22] [23]. This richness of information more accurately reflects biological reality, as the strength of connections is often a key ingredient of the model, and ignoring this information can lead to misleading results [23].

The distinction is paramount in keystone species research. Traditional methods often identified ecological "hubs" based on simple binary measures like degree centrality [6]. However, there is an increasing recognition that qualitative network considerations lack sufficient information, which can significantly alter conclusions about the importance of species [6]. This article provides a comparative guide to these two network paradigms, underscoring why the critical consideration of link weights is indispensable for modern ecological research and conservation management.

Defining the Networks: A Structural and Functional Comparison

The core difference between these networks lies in their data representation, which subsequently dictates the analytical methods and ecological insights they can generate.

- Binary Networks: These are fundamentally simplified models. The adjacency matrix \( A \) that represents the network is composed of elements \( a_{ij} \) that are either 0 (no interaction) or 1 (interaction present) [21]. Analysis focuses solely on the topological structure (the pattern of connections) while ignoring the intensity of those connections.

- Quantitative Networks: These networks enrich the model by assigning a numerical weight \( w_{ij} \) to each link, representing the intensity, capacity, or strength of the interaction [22] [23]. This allows researchers to investigate not just if species interact, but how strongly they do so, providing a more nuanced view of the ecological community.

Table 1: Fundamental Characteristics of Binary and Quantitative Networks

| Feature | Binary Network | Quantitative Network |
|---|---|---|
| Link Representation | Presence/Absence (1 or 0) [21] | Continuous or discrete weight values [22] |
| Primary Focus | Topological connectivity | Intensity and structure of interactions |
| Data Requirement | Species co-occurrence or interaction data | Quantitative data on interaction strength (e.g., energy flow, frequency) [6] |
| Computational Load | Generally lower | Generally higher due to numerical computations |
| Ecological Interpretation | Identifies potential pathways of influence | Quantifies the magnitude of influence and energy fluxes |

Comparative Analysis in Keystone Species Identification

The choice of network model critically impacts the identification and ranking of keystone species, as different metrics and methodologies can yield divergent results.

Methodological Approaches and Experimental Evidence

Experimental protocols in this field typically begin with constructing an interaction network from empirical data. For a binary network, this involves setting a threshold to decide whether an interaction exists. For a quantitative network, link weights must be measured. A seminal study on mutualistic networks investigated the interplay between binary and quantitative structure on stability [20]. The experimental protocol can be summarized as follows:

- Network Compilation: Gather empirical data on species interactions.

- Structure Analysis: Measure the nestedness of both the binary structure (pattern of links) and the quantitative structure (arrangement of interaction strengths).

- Stability Simulation: Use community matrix approaches to compute local stability under different scenarios of network complexity and within-guild competition.

- Result: The study found that the impact of interaction overlap on community persistence depends on competitive context. With competition, the most stable networks were those with a nested binary structure and a nested quantitative structure [20]. This result helps explain the prevalence of binary-nested structures in nature but underscores that their stability is contingent on the unseen quantitative structure.
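For the binary half of the "Structure Analysis" step, nestedness is commonly quantified with NODF (nestedness based on overlap and decreasing fill). The sketch below illustrates that metric, but it may not be the measure used in [20], and it does not cover quantitative (weighted) nestedness. It assumes a 0/1 incidence matrix with one guild as rows and the other as columns. Pairs with equal fill score zero; otherwise the smaller set is compared against the larger. This is equivalent to sorting rows and columns by decreasing marginal totals first.

```python
from itertools import combinations


def paired_nestedness(a, b):
    """N_paired for two rows (or columns) given as sets of partner indices:
    0 if their fills are equal (no decreasing fill); otherwise the % of the
    smaller set's links that also appear in the larger set (paired overlap)."""
    if len(a) == len(b) or not a or not b:
        return 0.0
    small, large = (a, b) if len(a) < len(b) else (b, a)
    return 100.0 * len(small & large) / len(small)


def nodf(matrix):
    """NODF (0-100) of a binary incidence matrix (list of 0/1 rows)."""
    rows = [{j for j, x in enumerate(row) if x} for row in matrix]
    cols = [{i for i, row in enumerate(matrix) if row[j]} for j in range(len(matrix[0]))]
    pairs = list(combinations(rows, 2)) + list(combinations(cols, 2))
    if not pairs:
        return 0.0
    return sum(paired_nestedness(a, b) for a, b in pairs) / len(pairs)


perfectly_nested = [[1, 1, 1],
                    [1, 1, 0],
                    [1, 0, 0]]
no_overlap = [[1, 0, 0],
              [0, 1, 0],
              [0, 0, 1]]
print(nodf(perfectly_nested), nodf(no_overlap))
```

Stability itself would then be assessed from the community matrix, which this sketch does not attempt.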

Another advanced method, the Data-driven Keystone species Identification (DKI) framework, uses deep learning to predict a species' keystoneness—defined as the disproportionate impact of its removal relative to its abundance [4]. This framework implicitly learns the assembly rules of microbial communities from sample data and performs thought experiments on species removal to quantify community-specific keystoneness, a process that inherently requires quantitative data to model the true impact on community structure and function [4].

Comparative Data and Limitations

Research comparing centrality metrics across network types reveals significant discrepancies. A study on the Zhangze Lake ecosystem used stable isotopes to construct a quantitative food web, moving beyond simple material flow measurements [6]. The authors found that "in contrast to binary networks, the importance of species in the weighted network was not always proportional to their link number and link weight." [6]. This is a critical finding, as it demonstrates that a high number of connections (or high-strength connections) does not automatically equate to high keystone potential in a weighted context; the pattern of weight distribution is equally important.

Table 2: Key Network Metrics and Their Interpretation in Two Network Types

| Metric | Interpretation in Binary Networks | Interpretation in Quantitative Networks |
|---|---|---|
| Degree Centrality | Number of direct connections (potential partners) [24]. | Strength: Sum of weights of direct connections (intensity of relationships) [24]. |
| Betweenness Centrality | Potential for controlling flow in the network based on topology. | Control over substantial flows of energy or influence, weighted by interaction strength [6]. |
| Clustering Coefficient | Likelihood that two neighbors of a node are connected [24]. | Intensity of interactions within a node's neighborhood, weighted by link strength [24] [23]. |
| Identification of "Hubs" | Nodes with an unusually high number of links. | Nodes that may not have the most connections, but have a disproportionate influence through strong, strategic links. |

The limitations of binary networks are stark. Relying on correlation networks from statistical co-occurrence to identify keystones is problematic because these edges do not necessarily represent direct ecological interactions, and the approach completely ignores the community specificity of a species' role [4]. Furthermore, a hub in a binary network may turn out to be a peripheral species in a quantitative analysis, and vice versa [6].

Essential Research Toolkit

To conduct robust research in this field, a suite of conceptual and software-based tools is required.

Table 3: Research Reagent Solutions for Network Analysis

| Tool/Resource | Function | Relevance |
|---|---|---|
| Stable Isotope Analysis | Measures interaction strength between species based on trophic relationships for constructing weighted networks [6]. | Provides empirical data for quantitative network links, moving beyond simple material flow. |
| Brain Connectivity Toolbox (BCT) | A comprehensive library for complex network analysis, containing measures for both binary and weighted networks [24]. | Calculates metrics like strength, weighted clustering, and betweenness centrality. |
| cNODE2 (Compositional NODE) | A deep-learning model to learn microbial community assembly rules and predict composition after species removal [4]. | Core of the DKI framework for quantifying community-specific keystoneness in quantitative networks. |
| Egonet-based Dissimilarity Measures | Alignment-free metrics to compare the local structure of weighted networks based on strength, clustering, and egonet persistence [23]. | Enables comparison of weighted networks to evaluate filtering schemes or temporal changes. |
| Null Models (e.g., Maslov-Sneppen) | Randomization techniques for statistical significance testing of network measures [22] [24]. | Essential for validating whether an observed network property is non-trivial. |
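The null-model entry can be made concrete with a minimal sketch of Maslov-Sneppen randomization for an undirected, unweighted simple graph. Repeated double edge swaps preserve every node's degree while scrambling who connects to whom. Swaps that would create self-loops or duplicate edges are rejected. The edge list, the use of triangle count as the test statistic, and the function names are all hypothetical choices made for illustration.

```python
import random
from collections import Counter

# Hypothetical undirected interaction edges (illustration only).
EDGES = [("kelp", "urchin"), ("urchin", "otter"), ("urchin", "seastar"),
         ("otter", "crab"), ("seastar", "mussel"), ("seastar", "crab"),
         ("mussel", "crab")]


def maslov_sneppen(edges, n_swaps, rng):
    """Degree-preserving randomization by double edge swaps a-b, c-d -> a-d, c-b."""
    edges = [tuple(e) for e in edges]
    present = {frozenset(e) for e in edges}
    done = attempts = 0
    while done < n_swaps and attempts < 100 * n_swaps:
        attempts += 1
        i, j = rng.sample(range(len(edges)), 2)
        (a, b), (c, d) = edges[i], edges[j]
        if rng.random() < 0.5:
            c, d = d, c  # try both ways of pairing the endpoints
        if len({a, b, c, d}) < 4:
            continue  # would create a self-loop or the edges share a node
        if frozenset((a, d)) in present or frozenset((c, b)) in present:
            continue  # would create a duplicate edge
        present -= {frozenset((a, b)), frozenset((c, d))}
        present |= {frozenset((a, d)), frozenset((c, b))}
        edges[i], edges[j] = (a, d), (c, b)
        done += 1
    return edges


def degree_sequence(edges):
    deg = Counter()
    for a, b in edges:
        deg[a] += 1
        deg[b] += 1
    return deg


def triangles(edges):
    """Each triangle is seen once from each of its three edges."""
    adj = {}
    for a, b in edges:
        adj.setdefault(a, set()).add(b)
        adj.setdefault(b, set()).add(a)
    return sum(len(adj[a] & adj[b]) for a, b in edges) // 3


rng = random.Random(1)
observed = triangles(EDGES)
null_counts = [triangles(maslov_sneppen(EDGES, 10 * len(EDGES), rng)) for _ in range(200)]
p_value = sum(t >= observed for t in null_counts) / len(null_counts)
print(observed, p_value)
```

The empirical p-value is the fraction of degree-matched random graphs with at least as many triangles as observed. Weighted null models require additional care, for example reshuffling weights over the rewired links.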

Workflow and Conceptual Pathways

The following diagrams illustrate the core experimental and analytical pathways for both network types, highlighting the critical points of divergence.

Binary Network Analysis Workflow

Quantitative Network Analysis Workflow

The evidence unequivocally demonstrates that quantitative networks, which incorporate link weights, provide a more biologically realistic and analytically powerful framework for identifying keystone species compared to binary networks. While binary analysis offers a useful starting point for topological assessment, its failure to account for interaction strength can lead to incomplete or misleading conclusions, such as misidentifying ecological hubs [6]. The stability and functioning of ecosystems, as shown in mutualistic networks, are deeply intertwined with the quantitative arrangement of interaction strengths, not just their binary pattern [20]. Modern computational frameworks, like DKI, further leverage quantitative data to predict the community-specific keystoneness of species, moving beyond static topological indices [4]. For researchers and drug development professionals aiming to accurately model ecological communities and pinpoint species critical for stability, adopting quantitative network analysis is not just an improvement—it is a critical necessity.

The identification of keystone species, defined as those with an ecological impact disproportionately large relative to their abundance, is a cornerstone of conservation prioritization and ecosystem management [25]. However, the keystoneness of a species is not an intrinsic property but is emergent from the specific network of interactions within its community. This guide compares the performance of predominant network-based methods for keystone species identification—including centrality indices, topological importance, and functional indices—by synthesizing experimental data from aquatic, marine, and microbial ecosystems. The evidence consistently demonstrates that the ranking and identity of keystone species are highly sensitive to environmental context, methodological choices, and the specific structure of the ecological network, challenging the notion of universally applicable keystone species lists.

The concept of the keystone species has evolved from early experimental manipulations to sophisticated network analyses [6] [25]. While traditional methods focused on a limited number of species, modern approaches use quantitative network indices to quantify a species' importance within a food web [6]. These indices measure a species' position (centrality), its functional role in energy flow, and the overall impact of its loss on community stability.

A critical synthesis of recent research reveals that a species identified as a keystone in one environment may not retain that status in another. Contextual factors such as resource availability, habitat modification, and the specific configuration of interspecies interactions can fundamentally alter keystoneness [26] [27]. This guide provides a comparative framework for researchers, evaluating the protocols, outputs, and contextual sensitivity of major identification methods.

Comparative Analysis of Keystone Identification Methods

The following table summarizes the core network indices used for quantifying keystoneness, their methodologies, and their primary outputs.

Table 1: Key Network Indices for Keystone Species Identification

| Index Name | Type of Measure | Experimental/Calculation Protocol | Key Output(s) |
|---|---|---|---|
| Weighted Betweenness Centrality (wBC) [6] | Topological (Centrality) | Based on stable isotope analysis (e.g., δ13C, δ15N) to quantify trophic relationships and estimate link strength. The shortest paths between all species pairs are calculated, and wBC counts how many pass through the focal species. | Identifies species that act as bridges, controlling energy flows between different parts of the network. |
| Hub Index [28] | Topological (Composite) | A composite metric: \( \text{Hub}_{\text{Index}} = \min(R_{\text{degree}}, R_{\text{degree-out}}, R_{\text{PageRank}}) \). It combines a species' rank based on its number of connections (degree), number of predators (degree-out), and importance in network flow (PageRank). | Identifies highly connected species critical to the network's structural integrity. Species in the top 5% are deemed "hub species." |
| Keystone Index (Functional) [25] | Functional (Impact) | Derived from Ecopath with Ecosim (EwE) models. It quantifies the relative impact of a species on others in the network, considering both direct and indirect trophic interactions. | Measures the potential change in ecosystem structure and function from a change in the species' biomass. |
| Network Robustness (R50/CR) [27] | Systemic Stability | Simulates species loss by sequentially removing nodes from the network in order of decreasing connectivity, biomass, or density, and tracks the resulting secondary extinctions. Metrics include R50 (the proportion of species that must be removed to cause a total loss of 50% of species) and Connectivity Robustness (CR). | Measures the resistance of the community to cascading extinctions following the loss of a specific species or type of species. |
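The R50 protocol can be sketched on a directed food web stored as "species → set of prey". The sketch rests on three assumptions:

- A consumer goes secondarily extinct once all of its prey are lost, while basal species never do.
- Species are removed in order of decreasing initial total degree (prey plus predators), without recomputing degree after each loss.
- Connectivity Robustness is not computed.

The seven-species web is hypothetical.

```python
# Hypothetical food web: each species mapped to the set of its prey.
FOOD_WEB = {
    "phytoplankton": set(),
    "periphyton": set(),
    "zooplankton": {"phytoplankton"},
    "snail": {"periphyton"},
    "smallfish": {"zooplankton", "snail"},
    "bigfish": {"smallfish"},
    "heron": {"smallfish", "bigfish"},
}


def cascade(prey_of, removed):
    """Species lost (primary + secondary) after removing `removed`."""
    lost = set(removed)
    changed = True
    while changed:  # iterate to a fixed point so multi-step cascades propagate
        changed = False
        for sp, prey in prey_of.items():
            if sp not in lost and prey and prey <= lost:
                lost.add(sp)  # consumer has lost all of its prey
                changed = True
    return lost


def r50(prey_of):
    """Fraction of primary removals needed for >= 50% total species loss."""
    n = len(prey_of)
    degree = {sp: len(prey) for sp, prey in prey_of.items()}
    for prey in prey_of.values():
        for p in prey:
            degree[p] += 1  # count predators as well as prey
    order = sorted(prey_of, key=lambda s: (-degree[s], s))
    removed = []
    for sp in order:
        if sp in cascade(prey_of, removed):
            continue  # already lost through a secondary extinction
        removed.append(sp)
        if len(cascade(prey_of, removed)) >= n / 2:
            return len(removed) / n
    return 1.0


print(cascade(FOOD_WEB, ["smallfish"]))
print(r50(FOOD_WEB))
```

Here removing the most connected species (smallfish) also eliminates both predators above it. Two primary removals out of seven species are enough to reach 50% loss. Comparing this value across removal orders (connectivity, biomass, density) is how the index contrasts networks such as the dammed and undammed rivers in Table 2.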

Performance Comparison Across Ecosystems

Applying these indices in different environments yields divergent results, underscoring the context-dependency of keystoneness. The table below synthesizes findings from key studies.

Table 2: Comparative Keystone Species Identification in Different Ecosystems

| Ecosystem (Study) | Identification Method | Identified Keystone Species/Groups | Contrasting Findings & Contextual Dependencies |
|---|---|---|---|
| Zhangze Lake (Eutrophic Lake) [6] | wBC, wCC, Degree Centrality | Varied temporally; different species were ranked highest in April vs. July. | Methodology mattered: Weighted indices (using stable isotopes) identified different keystone species than simple binary (unweighted) indices. Temporal context mattered: Keystone species changed with seasonal shifts in the community. |
| Marine Bacterial Microcosms [26] | Ecological Knock-Out (EKO) experiments, gLV modeling | No keystone species were found across eight different carbon source environments. | Network structure dictated outcomes: A hierarchical structure of interspecies interactions, naturally emerging from variations in carrying capacities, prevented the emergence of classic keystone species and provided community resilience. |
| Chishui River (Undammed) vs. Heishui River (Dammed) [27] | Network Robustness (R50, CR) to loss of high-connectivity species | Undammed River: Predators. Dammed River: Collector-gatherers (Prey). | Anthropogenic alteration changed keystones: Dam construction transformed the functional feeding groups of keystone species, and dammed networks were less robust to species loss. |
| Benthic Ecosystems (Tongoy Bay, etc.) [25] | Functional, Structural, and Qualitative (Loop Analysis) Indices | A core set of species with keystoneness properties varied by location and method; included primary producers, herbivores, and top predators. | No single method was conclusive: Different indices highlighted different species as keystones. A "keystone species complex" was suggested as a more holistic management target. |

Figure 1: A workflow for identifying keystone species, showing how methodological pathways are filtered by contextual factors to produce a community-specific outcome.

Detailed Experimental Protocols

The first protocol uses stable isotopes to build a quantitative, weighted food web.

- Field Sampling: Collect primary producers (e.g., phytoplankton, periphyton) and consumer species (e.g., zooplankton, fish) from the study site. Sampling should be repeated across different seasons to capture temporal variation.

- Stable Isotope Analysis: Process samples in the laboratory to analyze ratios of Carbon-13/Carbon-12 (δ13C) and Nitrogen-15/Nitrogen-14 (δ15N). δ13C helps identify primary carbon sources, while δ15N indicates trophic position.

- Mixing Models: Use computational mixing models (e.g., SIAR, MixSIAR) to estimate the proportional contributions of each food source to the diet of consumers. These proportions define the weight of the links between species.

- Network Calculation: Construct the food web network. Calculate weighted centrality indices (wBC, wCC) using network analysis software. Species with consistently high values across indices are potential keystones.
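The mixing-model and trophic-position steps can be illustrated with the simplest deterministic case: one isotope (δ13C) and two sources, solved in closed form. This is a simplification and not the Bayesian SIAR/MixSIAR approach named above. The trophic discrimination factor (0.4‰ for δ13C), the δ15N enrichment per trophic level (3.4‰) and the baseline level (2, i.e. a primary-consumer baseline) are commonly used literature values, taken here as assumptions. All isotope values and function names are hypothetical. The resulting diet fractions become link weights in the food web.

```python
def two_source_mixing(d13c_consumer, d13c_source_a, d13c_source_b, tdf=0.4):
    """Fraction of source A in a consumer's diet (one isotope, two sources).
    tdf: assumed trophic discrimination factor for delta13C (per mil)."""
    if d13c_source_a == d13c_source_b:
        raise ValueError("sources must differ isotopically")
    f_a = (d13c_consumer - tdf - d13c_source_b) / (d13c_source_a - d13c_source_b)
    return min(1.0, max(0.0, f_a))  # clip to the feasible range [0, 1]


def trophic_position(d15n_consumer, d15n_base, base_level=2.0, enrichment=3.4):
    """TP = base_level + (d15N_consumer - d15N_base) / enrichment."""
    return base_level + (d15n_consumer - d15n_base) / enrichment


# Hypothetical values (per mil): phytoplankton -30, periphyton -20, consumer -27.6
f_phyto = two_source_mixing(-27.6, d13c_source_a=-30.0, d13c_source_b=-20.0)

# Diet proportions used as weights on prey -> consumer links
weights = {("phytoplankton", "zooplankton"): f_phyto,
           ("periphyton", "zooplankton"): 1.0 - f_phyto}
print(weights, trophic_position(9.8, d15n_base=3.0))
```

With more than two sources, or with isotopic uncertainty, the linear system becomes underdetermined or noisy. This is why Bayesian mixing models are preferred in practice.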

The second protocol, an Ecological Knock-Out (EKO) experiment, tests the systemic impact of a species' removal.

- Community Assembly: Assemble a synthetic microbial community from a defined pool of species (e.g., 16 marine bacterial isolates).

- Define Environments: Grow replicated communities in multiple distinct environments (e.g., with simple vs. complex carbon sources like glucose vs. glycogen).

- Systematic Removal: For each environment, create a "full" community and a series of "knock-out" communities where each species is systematically omitted from the initial inoculum.

- Monitor Community Structure: Grow all communities through several dilution cycles to reach a stable state. Use high-throughput sequencing (e.g., 16S rRNA amplicon sequencing) to quantify the final community composition of each knock-out compared to the full community.

- Data Analysis: A keystone species is identified if its removal leads to significant secondary extinctions or invasions, drastically altering community structure. The absence of such cascades, as found in [26], suggests resilience and a lack of classic keystone species.

Figure 2: The experimental workflow for Ecological Knock-Out (EKO) experiments, demonstrating how structured interactions can preclude keystone species.
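The data-analysis step can be sketched as a comparison between the full community and each knock-out, both given as relative abundances, for example from 16S rRNA amplicon counts. The sketch makes these assumptions:

- A species counts as present above a detection threshold.
- Secondary extinctions are species present in the full community but absent after the knock-out.
- Invasions are the reverse.
- The compositional shift is the Bray-Curtis dissimilarity between the knock-out and the full community, after the removed species is dropped and the remainder renormalized.

The detection threshold, the 0.3 cutoff and the four-species data are hypothetical.

```python
def bray_curtis(p, q):
    species = set(p) | set(q)
    num = sum(abs(p.get(s, 0.0) - q.get(s, 0.0)) for s in species)
    den = sum(p.get(s, 0.0) + q.get(s, 0.0) for s in species)
    return num / den if den else 0.0


def drop_and_renormalise(comp, removed):
    """Expected composition if the removed species simply vanished."""
    kept = {s: a for s, a in comp.items() if s != removed}
    total = sum(kept.values())
    return {s: a / total for s, a in kept.items()} if total else kept


def eko_summary(full, knockouts, detect=1e-3, bc_cutoff=0.3):
    """Flag keystone-like species (hypothetical thresholds)."""
    summary = {}
    for removed, ko in knockouts.items():
        present_full = {s for s, a in full.items() if a >= detect and s != removed}
        present_ko = {s for s, a in ko.items() if a >= detect and s != removed}
        extinctions = present_full - present_ko
        invasions = present_ko - present_full
        shift = bray_curtis(drop_and_renormalise(full, removed), ko)
        summary[removed] = {
            "extinctions": extinctions,
            "invasions": invasions,
            "bray_curtis": shift,
            "keystone": bool(extinctions or invasions) or shift > bc_cutoff,
        }
    return summary


full = {"A": 0.4, "B": 0.3, "C": 0.2, "D": 0.1}
knockouts = {
    "A": {"B": 0.5, "C": 0.3, "D": 0.2},  # others barely change
    "B": {"A": 0.9, "C": 0.1, "D": 0.0},  # D disappears without B
}
for sp, res in eko_summary(full, knockouts).items():
    print(sp, res)
```

In a real experiment each comparison would be made across replicates with a statistical test, rather than on single profiles against a fixed cutoff.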

The Scientist's Toolkit: Essential Research Reagents & Platforms

This section details key reagents, software, and analytical platforms essential for conducting research on keystone species identification.

Table 3: Essential Reagents and Solutions for Keystone Species Research

| Tool/Solution | Category | Primary Function in Research |
|---|---|---|
| Stable Isotopes (¹³C, ¹⁵N) [6] | Chemical Reagent | Serves as a natural tracer to delineate trophic pathways and quantify the strength of species interactions in food web models. |
| Generalized Lotka-Volterra (gLV) Models [26] | Computational Model | A mathematical framework to simulate population dynamics and interspecies interactions, used to test hypotheses about community stability and keystoneness. |
| Ecopath with Ecosim (EwE) [25] | Software Platform | A widely used ecosystem modeling software suite that allows for the construction of mass-balanced trophic models and the calculation of functional keystone indices. |
| Hub Index Calculator [28] | Computational Algorithm | A composite metric (min of degree, degree-out, and PageRank ranks) implemented in network analysis code to identify topologically critical "hub" species. |
| 16S rRNA Sequencing [26] | Molecular Biology Kit | For microbial ecology, it enables the high-resolution identification and relative quantification of species in a community after perturbation experiments (e.g., EKOs). |
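A Hub Index calculation can be sketched from its definition as the minimum of three ranks. The sketch rests on these assumptions:

- Links point from prey to predator, so out-degree counts predators as the definition requires.
- Rank 1 is the highest score, and tied species share the better rank.
- PageRank spreads the score of dangling nodes (top predators) uniformly.
- "Top 5%" means a Hub Index no larger than \( \lceil 0.05 N \rceil \), with at least one species.

None of this is taken from the code of [28], and the food web is hypothetical.

```python
import math

# Hypothetical food web as prey -> set of predators (direction of energy flow).
OUT_LINKS = {
    "phytoplankton": {"zooplankton"},
    "periphyton": {"snail"},
    "zooplankton": {"smallfish"},
    "snail": {"smallfish"},
    "smallfish": {"bigfish", "heron"},
    "bigfish": {"heron"},
    "heron": set(),
}


def pagerank_directed(out_links, d=0.85, iters=200):
    """Power-iteration PageRank; dangling nodes spread their score uniformly."""
    nodes = list(out_links)
    n = len(nodes)
    pr = dict.fromkeys(nodes, 1.0 / n)
    for _ in range(iters):
        dangling = sum(pr[v] for v in nodes if not out_links[v])
        new = dict.fromkeys(nodes, (1 - d) / n + d * dangling / n)
        for u in nodes:
            for v in out_links[u]:
                new[v] += d * pr[u] / len(out_links[u])
        pr = new
    return pr


def competition_rank(scores):
    """Rank 1 = highest score; tied species share the better rank."""
    return {v: 1 + sum(1 for s in scores.values() if s > scores[v]) for v in scores}


def hub_index(out_links, top_fraction=0.05):
    degree_out = {v: len(out_links[v]) for v in out_links}  # number of predators
    degree = dict(degree_out)
    for u in out_links:
        for v in out_links[u]:
            degree[v] += 1  # add in-links (number of prey)
    ranks = [competition_rank(degree), competition_rank(degree_out),
             competition_rank(pagerank_directed(out_links))]
    index = {v: min(rank[v] for rank in ranks) for v in out_links}
    cutoff = max(1, math.ceil(top_fraction * len(out_links)))
    return index, {v for v, h in index.items() if h <= cutoff}


index, hubs = hub_index(OUT_LINKS)
print(index)
print(hubs)
```

Because the index takes the best of three ranks, a species can qualify through any one route. Here smallfish qualifies by degree and heron by PageRank, since flow accumulates at the top predator in a prey-to-predator orientation.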

The comparative data unequivocally shows that keystoneness is a community-specific property. The identity of a keystone species is not absolute but is co-determined by the method of identification, the environmental context, and the inherent structure of the ecological network.

For researchers and drug development professionals, this has critical implications. In microbial systems relevant to human health, a bacterium's keystone role may be tied to specific host conditions or microbiome compositions [26]. Relying on a single network index or data from a single context is insufficient. A robust research program should:

- Employ Multiple Indices: Combine topological, functional, and experimental removal analyses to triangulate true keystone species.

- Account for Context: Explicitly test for keystoneness across the relevant environmental or host-condition gradients.

- Target Complexes: Consider managing for a "keystone species complex" [25] or a "Hub Index" [28] rather than a single species, as this may be a more resilient and accurate strategy for maintaining ecosystem structure and function.

A Practical Toolkit: Key Network Indices and Their Ecological Interpretation

Degree centrality is a fundamental metric in network science that quantifies the importance of a node based on the number of direct connections it possesses. As one of the most intuitive centrality measures, it conceptualizes node importance through direct involvement or popularity within a network structure [29]. In its simplest form, for an unweighted and undirected network, the degree centrality of a node is calculated as the total number of edges incident upon it, serving as a crude measure of popularity that does not account for the quality of those connections [29].

The mathematical foundation of degree centrality is straightforward. For an unweighted graph, the degree centrality of node i is given by the sum of the adjacency matrix entries: C_D(i) = ΣA_ij, where A_ij indicates the presence (1) or absence (0) of an edge between nodes i and j [29]. In directed networks, this concept splits into in-degree (number of incoming connections) and out-degree (number of outgoing connections), which respectively represent popularity and influence [14]. To enable comparisons across networks of different sizes, degree centrality is often normalized by dividing by the maximum possible degree (N-1), where N is the total number of nodes [29].
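These definitions translate directly into code. The sketch below assumes a directed edge list oriented prey → predator, so that in-degree counts a species' prey and out-degree its predators; this orientation is an assumption, not part of [29]. For the normalized measure, the edges are treated as undirected and the result is divided by N − 1. The five-species web is hypothetical.

```python
def in_out_degree(nodes, edges):
    """In- and out-degree of each node in a directed edge list (u -> v)."""
    indeg = dict.fromkeys(nodes, 0)
    outdeg = dict.fromkeys(nodes, 0)
    for u, v in edges:
        outdeg[u] += 1
        indeg[v] += 1
    return indeg, outdeg


def normalised_degree(nodes, edges):
    """Undirected degree C_D(i) divided by the maximum possible degree N - 1."""
    nbrs = {v: set() for v in nodes}
    for u, v in edges:
        nbrs[u].add(v)
        nbrs[v].add(u)
    n = len(nodes)
    return {v: len(nbrs[v]) / (n - 1) for v in nodes}


NODES = ["algae", "snail", "tadpole", "crayfish", "heron"]
EDGES = [("algae", "snail"), ("algae", "tadpole"), ("snail", "crayfish"),
         ("tadpole", "crayfish"), ("crayfish", "heron")]

indeg, outdeg = in_out_degree(NODES, EDGES)
print(indeg, outdeg)
print(normalised_degree(NODES, EDGES))
```

Crayfish has the highest normalized degree (3/4) in this example. The measure alone cannot show whether those links carry much energy, which motivates the weighted extensions below.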

While degree centrality provides a valuable local perspective on node connectivity, it has notable limitations. As a local measure, it only considers immediate neighbors and ignores the broader network structure, potentially overlooking nodes that serve as critical bridges between network components [29]. This limitation becomes particularly important in applications such as keystone species identification, where a species with few but strategically important connections may exert disproportionate influence on ecosystem stability [30].

Theoretical Foundations and Evolution to Weighted Measures

From Binary to Weighted Networks

Traditional degree centrality operates effectively in binary networks where relationships are simply present or absent. However, real-world networks in ecology, sociology, and pharmacology often feature relationships with varying intensities or capacities, necessitating the extension of centrality measures to weighted networks [31].

The most direct extension of degree centrality to weighted networks is node strength (often called weighted degree), which calculates the sum of weights attached to edges connected to a node rather than simply counting connections [31]. For a node i, this is computed as C_D^+(i) = Σ_{i'} W(i, i'), where W(i, i') represents the weight of the edge between nodes i and i' [29]. While this measure incorporates relationship intensity, it overlooks the original conceptual foundation of degree centrality: the number of distinct connections [31].
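The sketch below contrasts counting ties with summing their weights. It assumes a symmetric weight matrix in which 0 means no interaction. The network and its weights are invented for illustration.

```python
# Minimal sketch: degree versus node strength in a weighted, undirected network.
# W[i][j] holds the interaction weight between i and j (0 = no tie).
# The weights are hypothetical and chosen only to contrast the two measures.

def degree(W, i):
    """Number of distinct ties of node i (non-zero entries in row i)."""
    return sum(1 for w in W[i] if w != 0)

def strength(W, i):
    """Node strength (weighted degree): sum of the weights of i's ties."""
    return sum(W[i])

W = [
    [0, 1, 1, 1, 0],  # node 0: three weak ties
    [1, 0, 0, 0, 0],
    [1, 0, 0, 0, 0],
    [1, 0, 0, 0, 9],  # node 3: one weak and one strong tie
    [0, 0, 0, 9, 0],  # node 4: a single strong tie
]

print([degree(W, i) for i in range(5)])    # [3, 1, 1, 2, 1]
print([strength(W, i) for i in range(5)])  # [3, 1, 1, 10, 9]
```

Node 0 ranks first by degree, but node 3 ranks first by strength. Node 4 has only one tie, yet it outranks node 0 on strength.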

Integrated Approaches and the Tuning Parameter

To address the limitations of both simple degree count and pure weight summation, Opsahl et al. (2010) proposed a hybrid approach that incorporates both the number of ties and their weights using a tuning parameter α [31]. This parameter controls the relative importance given to the number of ties versus tie weights, with two key benchmark values:

- When α = 0, the measure reduces to traditional degree centrality, ignoring edge weights completely

- When α = 1, the measure equals node strength, considering only the sum of weights and disregarding the number of connections

Intermediate values of α (between 0 and 1) balance these two aspects. Values above 1 favor nodes whose total weight is concentrated in a few strong ties, so researchers can tune α to their specific research context [31]. The utility of this approach is evident in ecological networks, where both the number of connections (e.g., predator-prey relationships) and their strengths (e.g., energy flow) collectively determine a species' structural role [30].

Table 1: Comparison of Degree Centrality Measures Across Conceptual Approaches

| Measure | Formula | Advantages | Limitations |
|---|---|---|---|
| Freeman's Degree | C_D(i) = Σ_j A_ij | Simple, intuitive, local computation | Ignores connection strength and global structure |
| Node Strength | C_D^+(i) = Σ_{i'} W(i, i') | Incorporates relationship intensity | Overlooks number of distinct connections |
| Opsahl's Hybrid | C_W(i) = k_i × (s_i/k_i)^α, where k_i is degree and s_i is strength | Balances quantity and quality of connections | Requires parameter selection (α) |

Quantitative Comparison of Degree Centrality Variants

The choice among these measures can substantially change node rankings. The table below reproduces the example from Opsahl et al.'s analysis of the EIES network and shows how the same three nodes are scored under each measure [31].

Table 2: Comparative Centrality Scores for Different Degree Measures in EIES Network

| Node | Degree: Freeman (1978) | Strength: Barrat et al. (2004) | Opsahl et al. (α = 0.5) | Opsahl et al. (α = 1.5) |
|---|---|---|---|---|
| Phipps Arabie (A) | 28 | 155 | 66 | 365 |
| John Boyd (B) | 11 | 188 | 45 | 777 |
| Maureen Hallinan (C) | 6 | 227 | 37 | 1396 |

This comparison reveals a reversal. Maureen Hallinan (C) ranks lowest on Freeman's degree but highest on Barrat et al.'s node strength. The hybrid scores depend on α:

- With α = 0.5, the ranking follows degree (A > B > C).
- With α = 1.5, the measure penalizes having many ties for a given strength. The ranking then follows strength and widens the gap (C > B > A) [31].

Such disparities highlight the importance of selecting measures based on the research question rather than applying them indiscriminately.
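The Opsahl et al. scores in Table 2 can be checked directly from the degree and strength columns. The sketch below assumes the closed form k_i^(1−α) × s_i^α, which is algebraically identical to k_i × (s_i/k_i)^α. It rounds to the nearest integer, as the table appears to do.

```python
# Opsahl et al. (2010) generalized degree, written as k^(1 - alpha) * s^alpha,
# which equals k * (s / k)^alpha. Degree (k) and strength (s) are taken
# from the Freeman and Barrat et al. columns of Table 2.

def opsahl_degree(k, s, alpha):
    """Combine tie count k (assumed > 0) and total tie weight s via alpha."""
    return k ** (1 - alpha) * s ** alpha

eies = {"A": (28, 155), "B": (11, 188), "C": (6, 227)}

for alpha in (0.0, 0.5, 1.0, 1.5):
    scores = {node: round(opsahl_degree(k, s, alpha)) for node, (k, s) in eies.items()}
    print(alpha, scores)
# 0.0 {'A': 28, 'B': 11, 'C': 6}        (reduces to degree)
# 0.5 {'A': 66, 'B': 45, 'C': 37}
# 1.0 {'A': 155, 'B': 188, 'C': 227}    (reduces to strength)
# 1.5 {'A': 365, 'B': 777, 'C': 1396}
```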

In ecological applications, these differences can significantly alter keystone species identification. A species with few but strong interactions (high node strength) might be overlooked by traditional degree centrality but identified as crucial by weighted measures [30]. Conversely, species with numerous weak connections might appear important with traditional degree but marginal when interaction strength is considered.

Experimental Protocols for Centrality Analysis in Ecological Networks

Data Acquisition and Network Construction

The initial phase of keystone species identification involves constructing appropriate ecological networks, typically food webs or microbial interaction networks. For food web analysis, this begins with documenting predator-prey relationships through field observations, stable isotope analysis, or gut content examination [30]. In microbial ecology, network construction often relies on correlation-based approaches from abundance data, though these methods have limitations as they represent statistical associations rather than direct ecological interactions [4].

For weighted networks, the next critical step involves quantifying interaction strengths. In food webs, this may involve energy flow measurements, foraging rates, or dietary proportions [30]. In microbial networks, correlation strengths or conditional probabilities are often used as proxies for interaction intensities [4]. The resulting data is structured as an adjacency matrix where entries represent presence/absence or strength of interactions between species.
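The sketch below shows the matrix-building step. It assumes interactions have been recorded as (resource, consumer, weight) triples. The species, the weights, and the resource-to-consumer direction are hypothetical conventions chosen for illustration, and studies differ in which direction they use.

```python
# Minimal sketch: turning a list of observed trophic links into an adjacency
# matrix. Species names and weights are hypothetical; M[i][j] is the weight
# of the link from resource i to consumer j (an assumed energy-flow convention).

def build_adjacency(species, links, weighted=True):
    index = {name: k for k, name in enumerate(species)}
    n = len(species)
    M = [[0.0] * n for _ in range(n)]
    for resource, consumer, weight in links:
        i, j = index[resource], index[consumer]
        if weighted:
            M[i][j] += weight  # repeated observations of a link are summed
        else:
            M[i][j] = 1.0      # binary network: presence/absence only
    return M

species = ["phytoplankton", "zooplankton", "small_fish", "seal"]
links = [
    ("phytoplankton", "zooplankton", 0.8),
    ("zooplankton", "small_fish", 0.5),
    ("phytoplankton", "small_fish", 0.1),
    ("small_fish", "seal", 0.9),
]

M_weighted = build_adjacency(species, links)
M_binary = build_adjacency(species, links, weighted=False)
print(M_binary[0])  # [0.0, 1.0, 1.0, 0.0]
```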

Figure 1: Experimental workflow for keystone species identification using network centrality

Computational Implementation and Analysis

Once networks are constructed, researchers implement computational pipelines to calculate and compare centrality measures. In R, the tnet package implements the weighted degree variants discussed above, including Opsahl et al.'s hybrid measure [31].
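One simple way to compare measures is to rank species under each one and compute a Spearman rank correlation. The sketch below uses only the Python standard library and is not the tnet workflow. It assumes degree and strength scores for five hypothetical species. A low correlation signals that the measures would nominate different keystone candidates.

```python
def average_ranks(values):
    """Rank values from 1 (smallest) upward; tied values share their mean rank."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    pos = 0
    while pos < len(order):
        end = pos
        while end + 1 < len(order) and values[order[end + 1]] == values[order[pos]]:
            end += 1
        mean_rank = (pos + end) / 2 + 1  # mean of 1-based positions pos..end
        for k in range(pos, end + 1):
            ranks[order[k]] = mean_rank
        pos = end + 1
    return ranks

def spearman(x, y):
    """Spearman's rho: Pearson correlation computed on average ranks."""
    rx, ry = average_ranks(x), average_ranks(y)
    n = len(x)
    mx, my = sum(rx) / n, sum(ry) / n
    cov = sum((a - mx) * (b - my) for a, b in zip(rx, ry))
    vx = sum((a - mx) ** 2 for a in rx)
    vy = sum((b - my) ** 2 for b in ry)
    if vx == 0 or vy == 0:
        return float("nan")  # undefined when one measure is constant
    return cov / (vx * vy) ** 0.5

# Hypothetical scores for five species under two measures.
species = ["s0", "s1", "s2", "s3", "s4"]
degree = [3, 1, 1, 2, 1]
strength = [3, 1, 1, 10, 9]

rho = spearman(degree, strength)
top_by_degree = species[degree.index(max(degree))]
top_by_strength = species[strength.index(max(strength))]
print(round(rho, 3), top_by_degree, top_by_strength)  # 0.459 s0 s3
```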

For keystone species identification, the computational protocol typically continues with sensitivity analysis through simulated species removals. This involves sequentially removing high-centrality species and measuring secondary extinction rates or ecosystem functional changes [30]. The species whose removal causes the greatest disruption are identified as keystones, in line with the classic definition of keystones as species whose effects are disproportionately large relative to their abundance [4].
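The removal protocol can be sketched topologically. The sketch assumes a binary food web stored as a mapping from each consumer to its prey, and it assumes a consumer goes extinct only when it has lost all of its prey. This is a simplification, since dynamic models [30] can produce extinctions that a purely topological rule misses. The toy web and the helper names (`secondary_extinctions`, `degree_order`, `random_baseline`) are hypothetical.

```python
import random

def secondary_extinctions(diet, removals):
    """Remove species in order; after each primary removal, any consumer
    that has lost all of its prey goes extinct. Returns the number of
    secondary extinctions. diet maps each species to the set it eats."""
    alive = set(diet)
    secondary = 0
    for sp in removals:
        if sp not in alive:
            continue  # already lost in an earlier cascade
        alive.discard(sp)
        cascading = True
        while cascading:
            cascading = False
            for consumer in sorted(alive):
                prey = diet[consumer]
                # Basal species (empty prey set) never starve.
                if prey and not (prey & alive):
                    alive.discard(consumer)
                    secondary += 1
                    cascading = True
    return secondary

def degree_order(diet):
    """Species sorted by total degree (prey plus consumers), highest first."""
    deg = {sp: len(prey) for sp, prey in diet.items()}
    for prey in diet.values():
        for p in prey:
            deg[p] += 1
    return sorted(diet, key=lambda sp: (-deg[sp], sp))  # ties broken by name

def random_baseline(diet, k, trials=1000, seed=0):
    """Mean secondary extinctions over random sequences of k primary removals."""
    rng = random.Random(seed)
    species = sorted(diet)
    return sum(secondary_extinctions(diet, rng.sample(species, k))
               for _ in range(trials)) / trials

diet = {
    "algae": set(), "detritus": set(),
    "grazer": {"algae"}, "snail": {"algae"}, "shrimp": {"detritus"},
    "crab": {"snail", "shrimp"}, "fish": {"grazer"}, "bird": {"fish", "crab"},
}
k = 2
print(secondary_extinctions(diet, degree_order(diet)[:k]))  # 4
print(random_baseline(diet, k))
```

Removal order matters here. Removing algae before grazer triggers a different cascade than the reverse order, which is why the baseline samples ordered sequences.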

Application in Keystone Species Identification: Case Evidence

Performance in Ecological Networks

Different centrality measures can nominate substantially different keystone candidates. In food web studies, motif-based centrality, which considers a species' participation in recurrent subgraphs, has performed well at identifying species whose removal triggers significant secondary extinctions [30].

Experimental validation through sequential deletion experiments has shown that removal sequences based on motif centrality cause significantly more secondary extinctions than random removals in both topological and dynamic modeling approaches [30]. This suggests that motif centrality captures aspects of species importance that traditional degree measures miss, particularly the structural role of species within characteristic interaction patterns of the ecosystem.
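The sketch below is a simplified illustration of motif participation. For each species, it counts how many instances of two three-species motifs it belongs to:

- a food chain, where the top species eats the middle one, the middle eats the bottom one, and the top does not eat the bottom;
- an omnivory motif, where the top species also eats the bottom one.

It assumes an acyclic web given as a consumer-to-prey mapping. It is not necessarily the motif-centrality definition used in [30], which may select or weight motifs differently.

```python
def motif_participation(diet):
    """Count each species' participation in two three-species motifs.

    diet maps every species to the set of species it eats (all species,
    including basal ones, must be keys). Assumes an acyclic web.
    """
    counts = {sp: {"chain": 0, "omnivory": 0} for sp in diet}
    for top, top_prey in diet.items():
        for mid in top_prey:
            if mid == top:
                continue  # ignore cannibalism
            for bottom in diet[mid]:
                if bottom in (top, mid):
                    continue  # ignore loops and cannibalism
                # Omnivory if the top consumer also eats the bottom species.
                kind = "omnivory" if bottom in top_prey else "chain"
                for sp in (top, mid, bottom):
                    counts[sp][kind] += 1
    return counts

# Hypothetical web: fish is omnivorous (eats grazer and algae), bird eats fish.
diet = {
    "algae": set(),
    "grazer": {"algae"},
    "fish": {"grazer", "algae"},
    "bird": {"fish"},
}
for sp, c in motif_participation(diet).items():
    print(sp, c)
```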

In microbial ecology, recent approaches have highlighted the limitations of correlation-network-based centrality measures. The Data-driven Keystone species Identification (DKI) framework uses deep learning to predict community changes after species removal, revealing that keystoneness is highly community-specific and cannot be captured by static topological indices alone [4]. This represents a significant advancement beyond simple degree-based approaches.

Limitations and Community Specificity

A critical finding in recent keystone species research is the community specificity of centrality measures—a species identified as a keystone in one community may not be keystone in another, even within the same habitat [4]. This challenges the universal application of degree-based indices and emphasizes the need for context-dependent analysis.

Additionally, the DKI framework revealed that taxa with high median keystoneness across different communities display strong community specificity, further complicating the identification of universally important species [4]. These findings suggest that while degree centrality and its weighted counterparts provide valuable starting points for keystone identification, they must be supplemented with community-aware approaches for accurate predictions.

Research Reagent Solutions for Network Analysis

Implementing robust network analysis for keystone species identification requires specialized computational tools and data resources. The table below summarizes essential research reagents for centrality analysis in ecological networks.

Table 3: Essential Research Reagents for Network-Based Keystone Species Identification

| Tool/Resource | Type | Function | Application Context |
|---|---|---|---|
| tnet R Package [31] | Software | Calculates weighted network measures including degree variants | General network analysis, ecological networks |
| igraph Library [32] | Software | Comprehensive network analysis and visualization | Social and ecological network analysis |
| cNODE2 [4] | Algorithm | Deep learning approach for predicting community changes after species removal | Microbial community analysis |
| Food Web Motif Database [30] | Data Resource | Catalog of over-represented subgraphs in ecological networks | Motif-based centrality analysis |
| Multi-omics Integration Tools [33] | Computational Framework | Integrates genomic, transcriptomic, and metabolomic data | Drug discovery, microbial ecology |

Degree centrality and its weighted counterparts provide valuable but incomplete tools for keystone species identification. While traditional degree centrality offers simplicity and interpretability, weighted measures capture crucial information about interaction intensities that often better predict species importance. The hybrid approach proposed by Opsahl et al. represents a promising middle ground, allowing researchers to balance the number and strength of connections according to their specific research context [31].

However, recent advances in motif-based centrality [30] and data-driven keystone identification [4] demonstrate that even sophisticated weighted degree measures cannot fully capture the complex nature of species importance in ecological networks. The community specificity of keystone species further complicates the picture, suggesting that effective keystone identification requires approaches that consider both network structure and community context.

For researchers investigating keystone species, the optimal approach involves using multiple centrality measures in tandem, with experimental validation through simulated removal experiments. As network analysis continues to evolve, integration with multi-omics data and machine learning approaches promises to enhance our ability to identify truly critical species in complex ecosystems, with significant implications for conservation biology and ecosystem management [33].

In ecological network analysis, path-based centrality measures are fundamental tools for quantifying the importance of species based on their position within the food web topology. These measures help researchers identify keystone species—those that exert a disproportionately large influence on ecosystem structure and functioning relative to their abundance. Betweenness centrality and closeness centrality represent two distinct approaches to conceptualizing and quantifying this importance through the lens of shortest paths between species. While betweenness centrality identifies species that act as critical bridges or bottlenecks in the flow of energy and nutrients, closeness centrality highlights species that can rapidly interact with others throughout the network. Understanding the methodological applications, comparative performance, and limitations of these indices is essential for advancing keystone species identification research and developing effective conservation strategies.

The significance of these measures extends beyond theoretical ecology into practical conservation biology. As ecological systems face increasing anthropogenic pressures, the ability to accurately identify species critical for maintaining ecosystem stability becomes paramount. Research by Jordán et al. emphasizes that future conservation biology "needs to be more functional and should be outlined within a multispecies context," with a focus on "conserving the most important species that play key role in maintaining ecosystem functions" [34]. Path-based centrality measures provide a quantitative, less subjective approach to achieving this goal within the framework of network ecology.

Theoretical Foundations of Path-Based Centrality

Betweenness Centrality

Betweenness centrality quantifies the extent to which a node lies on the shortest paths between other nodes in a network. In food webs, it identifies species that act as bridges or bottlenecks in the flow of energy, nutrients, or ecological effects. For a node \( v \), betweenness centrality is defined as:

\[ g(v) = \sum_{s \neq v \neq t} \frac{\sigma_{st}(v)}{\sigma_{st}} \]

where \( \sigma_{st} \) is the total number of shortest paths from node \( s \) to node \( t \), and \( \sigma_{st}(v) \) is the number of those paths that pass through node \( v \) [35]. Species with high betweenness centrality potentially control the flow of resources or information between different parts of the food web and may connect otherwise separate modules. Their removal could fragment the network and disrupt critical energy pathways.
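The quantity g(v) can be computed efficiently with Brandes' algorithm. The algorithm runs one breadth-first search per source node and then accumulates path dependencies in reverse order of distance. The sketch below assumes an unweighted network stored as an adjacency dictionary and sums over ordered pairs (s, t), as in a directed network. In an undirected network stored symmetrically, each pair is counted twice, so the result is halved. The two-module example network is hypothetical.

```python
from collections import deque

def betweenness(adj):
    """Brandes (2001) betweenness for an unweighted network.

    adj maps each node to a list of nodes it links to (edge u -> w).
    Returns g(v) summed over ordered pairs (s, t) with s != v != t.
    """
    g = {v: 0.0 for v in adj}
    for s in adj:
        # Breadth-first search from s, counting shortest paths (sigma).
        stack = []
        preds = {v: [] for v in adj}
        sigma = dict.fromkeys(adj, 0)
        dist = dict.fromkeys(adj, -1)
        sigma[s], dist[s] = 1, 0
        queue = deque([s])
        while queue:
            v = queue.popleft()
            stack.append(v)
            for w in adj[v]:
                if dist[w] < 0:  # first time w is reached
                    dist[w] = dist[v] + 1
                    queue.append(w)
                if dist[w] == dist[v] + 1:  # v precedes w on a shortest path
                    sigma[w] += sigma[v]
                    preds[w].append(v)
        # Accumulate dependencies, farthest nodes from s first.
        delta = dict.fromkeys(adj, 0.0)
        while stack:
            w = stack.pop()
            for v in preds[w]:
                delta[v] += sigma[v] / sigma[w] * (1.0 + delta[w])
            if w != s:
                g[w] += delta[w]
    return g

# Hypothetical undirected web: modules {a, b, c} and {d, e, f} joined through x.
adj = {
    "a": ["b", "c"], "b": ["a", "c"], "c": ["a", "b", "x"],
    "x": ["c", "d"],
    "d": ["x", "e", "f"], "e": ["d", "f"], "f": ["d", "e"],
}
# Symmetric storage counts each unordered pair twice, so halve the result.
g_undirected = {v: score / 2 for v, score in betweenness(adj).items()}
print(g_undirected)  # x scores 9.0, c and d score 8.0, all others 0.0
```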

Closeness Centrality

Closeness centrality measures how quickly a node can interact with all other nodes in the network via shortest paths. It is defined as the inverse of the sum of the shortest path distances from a node to all other nodes in the network. For a node \( v \), closeness centrality is calculated as:

\[ C(v) = \frac{1}{\sum_{u \neq v} d(v,u)} \]

where \( d(v,u) \) is the shortest path distance between nodes \( v \) and \( u \) [36] [37]. In ecological terms, species with high closeness centrality can potentially rapidly affect or be affected by other species in the food web due to their central position. These species may serve as efficient distributors of resources, energy, or ecological effects throughout the network.
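Below is a breadth-first-search sketch of C(v) for unweighted networks. It assumes an adjacency dictionary and returns `None` when some node is unreachable, because d(v,u) is then infinite and the sum in the definition is undefined. This case matters for food webs treated as directed graphs, which are rarely strongly connected. The example is a hypothetical two-module network in which species x bridges two groups.

```python
from collections import deque

def distances_from(adj, source):
    """Breadth-first search: shortest-path length from source to each reachable node."""
    dist = {source: 0}
    queue = deque([source])
    while queue:
        v = queue.popleft()
        for w in adj[v]:
            if w not in dist:
                dist[w] = dist[v] + 1
                queue.append(w)
    return dist

def closeness(adj, v):
    """C(v) = 1 / sum_{u != v} d(v, u); None if any node is unreachable from v."""
    dist = distances_from(adj, v)
    if len(dist) < len(adj) or len(adj) < 2:
        return None
    return 1.0 / sum(dist.values())  # d(v, v) = 0 adds nothing to the sum

adj = {
    "a": ["b", "c"], "b": ["a", "c"], "c": ["a", "b", "x"],
    "x": ["c", "d"],
    "d": ["x", "e", "f"], "e": ["d", "f"], "f": ["d", "e"],
}
for sp in adj:
    print(sp, closeness(adj, sp))
# x has the highest closeness (1/10); c and d follow (1/11).
```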

Comparative Analysis of Centrality Measures