Balancing Act: Optimizing Model Complexity for Accurate Food Web Projections in Ecological Research

Abstract

This article synthesizes current research to provide a comprehensive framework for optimizing model complexity in food web projections. It explores the foundational trade-offs between simplicity and realism, examines advanced methodologies including spatial and machine learning approaches, and addresses key challenges like transient dynamics and computational hardness. By comparing validation techniques and performance across ecological contexts, we offer actionable strategies for researchers to develop robust, predictive models that balance computational feasibility with ecological accuracy, ultimately enhancing the reliability of projections for ecosystem management and conservation.

The Complexity-Stability Paradox: Foundational Principles for Food Web Modeling

FAQ: Understanding the Core Paradox

What is May's Paradox? In 1972, Robert May used random matrix theory to show that mathematically, more complex ecosystems (those with more species and more interactions between them) are less likely to be stable. This finding created a "paradox" because it seems to contradict the observation that highly complex, stable ecosystems are common in nature (e.g., tropical forests, coral reefs) [1] [2].

What is the mathematical basis for May's finding?

May's stability criterion states that a randomly assembled ecosystem is stable only if the following condition is met:

σ√(SC) < d

Where:

- S = Number of species (Richness)

- C = Connectance (Probability any two species interact)

- σ = Standard deviation of interaction strength

- d = Average intraspecific competition (self-regulation) [1] [3]

As the product SC (a measure of complexity) increases, this inequality is harder to satisfy, making stability less likely.
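The criterion can be checked numerically. The sketch below is a minimal illustration, not May's original procedure: the function names, the normal distribution for interaction strengths, and the parameter values are assumptions chosen for the example.

```python
import numpy as np

def may_criterion_holds(S, C, sigma, d):
    """Return True if sigma * sqrt(S * C) < d."""
    return bool(sigma * np.sqrt(S * C) < d)

def random_community_matrix(S, C, sigma, d, rng):
    """Random matrix in the spirit of May (1972).

    Each off-diagonal entry is nonzero with probability C and is drawn
    from a normal distribution with mean 0 and standard deviation sigma
    (the normal distribution is an assumption of this sketch).
    Every diagonal entry is set to -d (self-regulation).
    """
    strengths = rng.normal(0.0, sigma, size=(S, S))
    present = rng.random((S, S)) < C
    J = strengths * present
    np.fill_diagonal(J, -d)
    return J

rng = np.random.default_rng(0)
J = random_community_matrix(S=100, C=0.1, sigma=0.1, d=1.0, rng=rng)
rightmost = np.linalg.eigvals(J).real.max()
print("criterion satisfied:", may_criterion_holds(100, 0.1, 0.1, 1.0))
print("rightmost eigenvalue has negative real part:", rightmost < 0)
```

Raising `S`, `C`, or `sigma` while holding `d` fixed pushes the left-hand side of the inequality up, which is the sense in which complexity makes stability less likely in random models.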

If May's Paradox is mathematically sound, why do complex natural ecosystems exist? Empirical studies have found that natural ecosystems possess non-random, stabilizing properties not accounted for in May's purely random models. These features prevent the predicted negative relationship between complexity and stability from manifesting in the real world [1]. The key is that real ecosystems are not assembled randomly.

FAQ: Troubleshooting Model Instability

My food web model is persistently unstable. What are the first things I should check? If your model is unstable, investigate these core structural properties:

- Correlation between interaction pairs (`ρ`): Ensure your model allows for a negative correlation between the effects of species on each other (e.g., a strong effect of a predator on prey is correlated with a weak effect of that prey on the predator). This is a major stabilizing factor [1] [4].

- Distribution of interaction strengths: Check that your model generates a high frequency of weak interactions and few very strong interactions (a "leptokurtic" distribution), which is known to dampen destabilizing forces [1] [4].

- Feasibility constraint: Verify that your model produces a feasible equilibrium (all species have positive biomass). Many randomly generated matrices fail this basic biological requirement [4].

How can I build a complex food web model that is stable? Modern "inverse" approaches offer a more robust methodology. Instead of randomly generating interaction strengths and hoping for stability, this method starts with assumed equilibrium species abundances (which are often easier to estimate empirically) and solves for the interaction strengths that would produce them [4].
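To illustrate the idea of fixing the equilibrium first, here is a simplified sketch. It is not the method of [4], which solves for interaction strengths. As an assumption for this example, it takes generalized Lotka-Volterra dynamics, dx_i/dt = x_i (r_i + Σ_j A_ij x_j), holds a candidate interaction matrix `A` fixed, and backs out the growth rates `r` that make the chosen abundances an equilibrium. All names and values are hypothetical.

```python
import numpy as np

def growth_rates_for_equilibrium(A, x_star):
    """Growth rates r such that x_star is an equilibrium of
    dx_i/dt = x_i * (r_i + sum_j A[i, j] * x_j)."""
    A = np.asarray(A, dtype=float)
    x_star = np.asarray(x_star, dtype=float)
    if np.any(x_star <= 0):
        raise ValueError("feasibility requires strictly positive abundances")
    return -A @ x_star

def jacobian_at_equilibrium(A, x_star):
    """Community matrix of the Lotka-Volterra system at x_star."""
    return np.diag(np.asarray(x_star, dtype=float)) @ np.asarray(A, dtype=float)

# Hypothetical two-species example: species 1 is the consumer.
A = np.array([[-1.0, -0.5],
              [ 0.3, -1.0]])
x_star = np.array([1.0, 2.0])

r = growth_rates_for_equilibrium(A, x_star)
J = jacobian_at_equilibrium(A, x_star)
print("growth rates:", r)
print("locally stable:", np.linalg.eigvals(J).real.max() < 0)
```

Because the abundances are chosen up front, the equilibrium is feasible by construction, and only stability remains to be checked.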

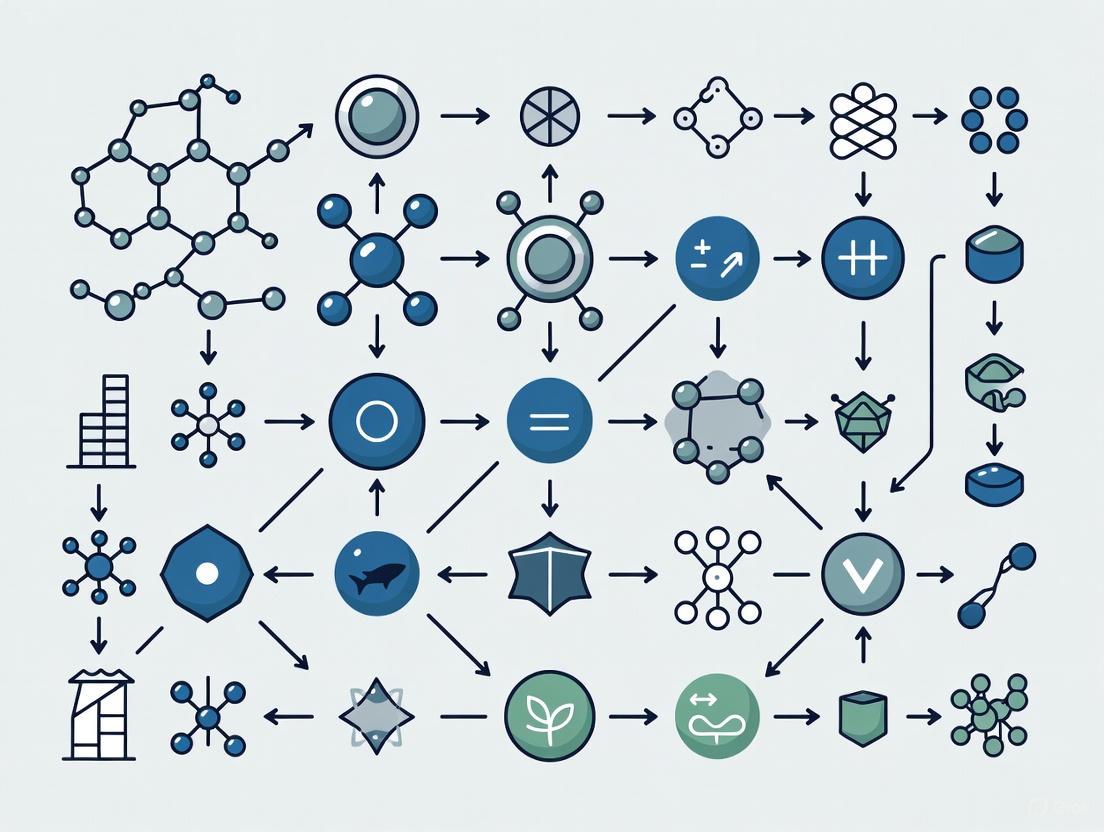

The workflow below contrasts the traditional modeling approach with the inverse approach.

My model is stable but behaves unrealistically. What biological constraints am I missing? Incorporating energetic constraints is often the key. In real predator-prey interactions, the positive effect on the predator's growth rate is weaker than the negative effect on the prey's death rate (an asymmetry). Adding this bioenergetic realism to feasible models dramatically increases the proportion of stable, complex webs, even with weak self-regulation, by promoting a structure dominated by weak interactions [4].

Experimental Protocols & Quantitative Data

Protocol 1: Local Stability Analysis of a Community Matrix

This is the standard method derived from May's work to assess if an ecosystem can recover from small perturbations [1].

- Define the Community Matrix (`C`): For a system with `S` species, construct an `S x S` matrix where each element `C_ij` quantifies the effect of a small change in the abundance of species `j` on the growth rate of species `i` around equilibrium.

- Calculate Eigenvalues: Compute all eigenvalues `λ` of the community matrix `C`.

- Determine Stability: The system is considered locally stable if the real part of the dominant (rightmost) eigenvalue, Re(λ_max), is negative. A positive value means the system will diverge from equilibrium after a perturbation.
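The three steps of Protocol 1 can be sketched with NumPy as follows. The function names and the two example matrices are hypothetical and serve only to show the eigenvalue test.

```python
import numpy as np

def dominant_real_part(community_matrix):
    """Real part of the rightmost eigenvalue, Re(lambda_max)."""
    eigenvalues = np.linalg.eigvals(np.asarray(community_matrix, dtype=float))
    return float(eigenvalues.real.max())

def is_locally_stable(community_matrix):
    """Locally stable if every eigenvalue has a negative real part."""
    return dominant_real_part(community_matrix) < 0

# Hypothetical predator-prey pair with self-regulation on both species.
stable_example = np.array([[-1.0,  0.5],
                           [-0.5, -1.0]])

# Hypothetical case where the first species has positive self-feedback.
unstable_example = np.array([[0.5,  0.0],
                             [0.0, -1.0]])

print(is_locally_stable(stable_example), is_locally_stable(unstable_example))
```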

Protocol 2: Randomization Test for Non-Random Properties

To identify which non-random features of your model contribute to stability, follow this empirical protocol [1].

- Baseline Measurement: Calculate the stability `Re(λ_max)` of your empirically structured model.

- Generate Randomized Counterparts: Create a set of randomized models where specific biological structures are deliberately removed (e.g., shuffle interaction strengths, set correlation `ρ` to zero, normalize all interaction strengths).

- Compare Stabilities: Re-calculate stability for each randomized ensemble. If the randomized models are significantly less stable than your empirical-based model, the removed property is a key stabilizing factor.
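One of the randomizations above, shuffling interaction strengths, can be sketched as below. This is an illustrative assumption about how the shuffle might be done (permuting all off-diagonal entries while keeping the diagonal), not the exact procedure of [1]. The function names and the example matrix are hypothetical.

```python
import numpy as np

def dominant_real_part(J):
    """Real part of the rightmost eigenvalue."""
    return float(np.linalg.eigvals(J).real.max())

def shuffle_off_diagonal(J, rng):
    """Permute all off-diagonal entries; keep the diagonal in place.
    This destroys pairwise correlation and topology but preserves the
    overall distribution of interaction strengths."""
    J = np.array(J, dtype=float)
    off = ~np.eye(J.shape[0], dtype=bool)
    shuffled = J.copy()
    shuffled[off] = rng.permutation(J[off])
    return shuffled

def randomization_test(J, n_random=200, seed=0):
    """Return the observed Re(lambda_max), the null values, and the
    fraction of randomized matrices that are less stable than observed."""
    rng = np.random.default_rng(seed)
    observed = dominant_real_part(np.asarray(J, dtype=float))
    null = np.array([dominant_real_part(shuffle_off_diagonal(J, rng))
                     for _ in range(n_random)])
    return observed, null, float(np.mean(null > observed))

# Hypothetical three-species community matrix.
J_example = np.array([[-1.0, -0.8,  0.0],
                      [ 0.2, -1.0, -0.6],
                      [ 0.0,  0.1, -1.0]])
observed, null, frac_less_stable = randomization_test(J_example, n_random=50)
print(observed, frac_less_stable)
```

A fraction close to 1 would suggest that the structure removed by shuffling contributes to stability in the original matrix.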

Quantitative Data from Empirical Food Web Studies

The following table synthesizes key findings from the stability analysis of 116 empirical food webs, which showed no correlation between classic complexity descriptors and stability [1].

Table 1: Relationships Between Complexity Metrics and Stability in 116 Food Webs

| Complexity Metric | Relationship with Stability (Empirical Finding) | Theoretical Prediction (from May) |

|---|---|---|

| Species Richness (S) | No significant relationship found | More species decreases stability |

| Connectance (C) | No significant relationship found | Higher connectance decreases stability |

| Interaction Strength (σ) | No significant relationship found | Stronger interactions decrease stability |

| S x C Product | Negatively correlated with σ (Fig. 3a) [1] | Independent of σ in random models |

The table below summarizes the specific non-random properties identified in these empirical webs and their demonstrated impact on model stability.

Table 2: Impact of Non-Random Properties on Food Web Stability

| Non-Random Property | Description | Effect on Stability |

|---|---|---|

| Correlation (ρ) | Negative correlation between effects of predators on prey and vice versa [1]. | Increases stability [1] [3] |

| Weak Interactions | High frequency of weak interactions and a leptokurtic distribution [1] [4]. | Increases stability [1] [4] |

| Energetic Constraints | Asymmetric interaction strengths where consumer gain < resource loss [4]. | Increases stability and feasibility [4] |

| Generalist-Specialist Trade-off | Generalist predators naturally exhibit weaker per-prey interactions [4]. | Increases stability [4] |

The Scientist's Toolkit: Research Reagents & Conceptual Solutions

Table 3: Key Conceptual "Reagents" for Food Web Modeling

| Conceptual Tool | Function | Reference / Source |

|---|---|---|

| Community Matrix | A square matrix describing the linearized interactions between all species pairs in a community near equilibrium. The foundation for local stability analysis. | [1] [4] |

| Inverse Methodology | A computational approach that starts from a known/assumed equilibrium state and solves for possible interaction parameters, ensuring feasibility. | [4] |

| Random Matrix Theory | The mathematical framework used to predict the eigenvalue distribution of random matrices, providing the null expectation for stability. | [1] [3] |

| Energetic Constraint (Asymmetry) | A biological rule stating that the energy gained by a predator from consuming prey is always less than the energy lost by the prey, breaking symmetry in interaction strengths. | [4] |

| Cascade / Niche Model | Structural food web models that generate realistic "who eats whom" networks by assuming a consumer hierarchy, providing a more realistic topology than random graphs. | [3] |

Pathway to a Stable Complex Ecosystem

The diagram below synthesizes the key stabilizing mechanisms that allow complex ecosystems to persist, resolving the apparent paradox.

Trophic Coherence as a Key Structural Predictor of Ecosystem Stability

Technical Support Center

This support center provides assistance for common computational and methodological challenges encountered in research on trophic coherence and food web stability.

Troubleshooting Guides

Issue 1: Low Trophic Coherence Values in Generated Food Webs

- Problem: Models generate food webs with unexpectedly low trophic coherence (high trophic incoherence parameter 'T').

- Solution: Check the consumer resource distribution in your model. Trophic coherence is higher in food webs where species tend to consume resources with similar trophic levels [5]. Re-evaluate the prey selection algorithm to introduce a bias towards prey of similar trophic levels.

- Further Steps: Validate your model's output against the empirical range of trophic coherence values reported in literature (e.g., Johnson et al., 2014 [5]).

Issue 2: Instability in Large, Complex Model Ecosystems

- Problem: Simulated ecosystems become unstable (species go extinct) as network size and complexity increase, contradicting the theory that coherence can stabilize large networks [5].

- Solution: Ensure your model correctly captures trophic coherence. A maximally coherent network with constant interaction strengths is proven to be linearly stable. Recalibrate model parameters to increase coherence, which can allow stability to grow with size and complexity [5].

- Further Steps: Systematically vary the trophic incoherence parameter 'T' in your model and observe the impact on stability across different network sizes.

Issue 3: Inaccurate Trophic Level Calculation

- Problem: Calculated trophic levels for species do not converge or yield non-sensical values (e.g., negative values).

- Solution: Verify that the food web has at least one "basal species" (species with no resources, typically assigned trophic level 1). Ensure the adjacency matrix of the food web is correctly formatted, with resources as rows and consumers as columns. Use a robust linear algebra library to solve the system of equations for trophic levels.

Frequently Asked Questions (FAQs)

Q1: What is the relationship between trophic coherence and May's paradox? A1: May's paradox highlights the contradiction between classical theory (predicting large, complex ecosystems are unstable) and empirical observation (that they are stable). Trophic coherence provides a solution to this paradox. Research shows that a network's trophic coherence is a better predictor of stability than its size or complexity, and models incorporating it can demonstrate increasing stability with size and complexity [5].

Q2: How is the trophic incoherence parameter ('T') calculated in practice? A2: The trophic incoherence parameter is derived from the distribution of trophic distances in a food web. A lower 'T' value indicates a more coherent (and thus more stable) network. The calculation involves determining the trophic levels of all species and then analyzing the variance in the trophic differences between connected consumers and resources [5].

Q3: Can highly coherent food webs be too stable? A3: While trophic coherence generally promotes stability, which is beneficial for ecosystem persistence, it might reduce the flexibility of an ecosystem to adapt to change. The relationship between stability and resilience is complex, and an optimal level of coherence may exist, balancing persistence against the ability to adapt to perturbations.

Experimental Protocols & Data

Summary of Key Quantitative Findings from Johnson et al. (2014) [5]

The following table summarizes core findings on the relationship between trophic coherence and ecosystem stability:

| Metric | Description | Impact on Stability |

|---|---|---|

| Trophic Incoherence (T) | Standard deviation of trophic distances (consumer level minus resource level) across all links. Lower 'T' = higher coherence. | Negative Correlation. Lower 'T' values predict higher linear stability [5]. |

| Network Size & Complexity | Number of species and connectance. | Variable. Classically negative, but stability can increase with size/complexity in models with high trophic coherence [5]. |

| Model Accuracy | Ability of a model to reproduce empirical food-web structure. | Positive. A simple model that captures trophic coherence accurately reproduces stability and other structural features [5]. |

Detailed Methodology for Trophic Coherence Analysis

This protocol outlines the key steps for analyzing trophic coherence in a food web.

- Data Preparation: Represent the food web as a directed adjacency matrix where element aᵢⱼ = 1 if species j consumes species i, and 0 otherwise.

- Identify Basal Species: Locate all species with no resources (i.e., a column of zeros in the adjacency matrix). Assign these basal species a trophic level sᵢ = 1.

- Calculate Trophic Levels: For each non-basal species i, calculate its trophic level as one plus the mean trophic level of its prey: sᵢ = 1 + (1/kᵢ) * Σⱼ aⱼᵢ * sⱼ, where kᵢ = Σⱼ aⱼᵢ is the number of prey species of i (under the convention above, aⱼᵢ = 1 when i consumes j). This forms a system of linear equations that can be solved computationally.

- Compute Trophic Distances: For each link where j consumes i, calculate the trophic distance xᵢⱼ = sⱼ - sᵢ.

- Determine Trophic Incoherence (T): The parameter 'T' is the standard deviation of the distribution of xᵢⱼ values across all links. A low 'T' indicates high coherence.
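The steps above can be sketched in a few lines of NumPy. The sketch assumes the convention stated in the data preparation step (`adj[i, j] = 1` if species `j` consumes species `i`), so the number of prey of a species is its column sum. The function names and the two small example webs are hypothetical.

```python
import numpy as np

def trophic_levels(adj):
    """Solve s_i = 1 + (1/k_i) * sum_j adj[j, i] * s_j for all species.

    adj[i, j] = 1 if species j consumes species i. Basal species
    (no prey) get trophic level 1.
    """
    adj = np.asarray(adj, dtype=float)
    k = adj.sum(axis=0)                 # number of prey per species
    if not np.any(k == 0):
        raise ValueError("food web needs at least one basal species")
    v = np.maximum(k, 1.0)              # 1 for basal species, k_i otherwise
    lhs = np.diag(v) - adj.T            # row i: k_i * s_i - sum_j adj[j, i] * s_j
    return np.linalg.solve(lhs, v)

def trophic_incoherence(adj):
    """Standard deviation of trophic distances over all links."""
    adj = np.asarray(adj, dtype=float)
    s = trophic_levels(adj)
    resources, consumers = np.nonzero(adj)
    distances = s[consumers] - s[resources]
    return float(distances.std())

# Hypothetical chain: species 1 eats 0, species 2 eats 1.
chain = np.array([[0, 1, 0],
                  [0, 0, 1],
                  [0, 0, 0]])

# Same chain plus an omnivorous link: species 2 also eats 0.
omnivory = chain.copy()
omnivory[0, 2] = 1

print(trophic_levels(chain), trophic_incoherence(chain))
print(trophic_levels(omnivory), trophic_incoherence(omnivory))
```

The chain is perfectly coherent because every link spans exactly one trophic level; adding the omnivorous link spreads the distances and raises 'T'.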

Research Toolkit Visualization

Conceptual Framework of Trophic Coherence Analysis

The diagram below illustrates the logical workflow and key concepts involved in analyzing a food web for trophic coherence.

Key Structural Relationships in a Coherent Food Web

This diagram contrasts a highly coherent food web structure with an incoherent one, highlighting the structural basis for stability.

The Scientist's Toolkit: Essential Research Reagents & Materials

The following table details key resources for conducting research on trophic coherence and food web stability.

| Item / Solution | Function / Application |

|---|---|

| Food Web Database | Provides empirical data for model validation and parameterization. |

| Network Analysis Software | Used for calculating structural properties and visualizing food webs. |

| Trophic Coherence Model | A computational model that incorporates the trophic coherence parameter to predict ecosystem stability [5]. |

| Linear Algebra Library | Essential for solving systems of equations to calculate trophic levels for all species in a network. |

| Stability Analysis Scripts | Custom scripts to run simulations and measure stability metrics. |

Troubleshooting Guides and FAQs

Common Experimental Issues and Solutions

Q: My model becomes unstable as I add more species, contradicting the theory that meta-community complexity should be stabilizing. What might be wrong?

A: This often occurs when migration coupling between local food webs is too strong. The stabilizing effect of meta-community complexity is strongest at intermediate migration strength (M). If coupling is too tight, the entire meta-food web behaves as a single, unstable unit [6].

- Solution: Systematically test a range of migration values (`M`). Stability should show a unimodal response, peaking at intermediate `M` [6].

- Check: Ensure spatial heterogeneity exists; without differences in population densities between local webs, migration cannot act as a stabilizing feedback [6].

Q: How do I differentiate the effects of food-web complexity from meta-community complexity in my results? A: These are two distinct types of complexity [6]:

- Food-web complexity (`N`, `P`): Number of species (`N`) and the probability of a trophic link (`P`) within a local community.

- Meta-community complexity (`HN`, `HP`): Number of local food webs (`HN`) and the proportion of connected pairs (`HP`).

- Diagnosis: Hold food-web complexity (`N`, `P`) constant while varying meta-community parameters (`HN`, `HP`, `M`). A positive complexity-stability relationship emerging from this manipulation indicates a successful meta-community effect [6].

Q: I need to identify the most important species to manage for overall ecosystem persistence. Are standard "keystone" indices reliable? A: Common network theory indices can be a poor guide for conservation management. Prioritizing species based on the network-wide impact of their protection is more effective than prioritizing based on the consequence of their loss [7].

- Recommended Approach: A modified Google PageRank algorithm has been shown to reliably minimize extinction risk and severity, outperforming many other metrics [7].

- Solution: Use Bayesian Networks with Constrained Combinatorial Optimization to find the optimal management strategy, which can then be used to validate the performance of simpler indices [7].

Q: My graph visualizations are difficult to read. How can I improve the clarity of nodes and edges? A: Adhere to technical specifications for visual accessibility.

- Text Contrast: Explicitly set the `fontcolor` attribute to ensure high contrast against the node's `fillcolor`. The `contrast-color()` CSS function can automate this by returning `white` or `black` based on the background color [8].

- Non-Text Contrast: For graphical objects (e.g., arrows, symbols) and UI components, ensure a minimum contrast ratio of 3:1 against adjacent colors [9].

- Color Palette: Use colors from a defined, accessible palette (e.g., `#4285F4`, `#EA4335`, `#FBBC05`, `#34A853`, `#FFFFFF`, `#F1F3F4`, `#202124`, `#5F6368`).

Quantitative Parameters for Model Stability

The following parameters are crucial for designing and tuning a stable meta-community food-web model [6].

Table 1: Key Parameters for Meta-Community Food-Web Models

| Parameter | Symbol | Description | Role in Stability |

|---|---|---|---|

| Number of Local Food Webs | HN | Number of distinct local patches in the meta-community. | Increasing HN under intermediate migration (M) enhances stability [6]. |

| Habitat Connectivity | HP | Proportion of possible links between local webs that are realized. | Higher HP allows for more stabilizing feedback loops [6]. |

| Migration Strength | M | Rate of organism movement between connected local food webs. | Stabilization is strongest at intermediate M; too low or too high can be destabilizing [6]. |

| Number of Species | N | Number of species within a single local food web. | In isolation, more species (N) destabilizes; in a meta-community, this effect can be reversed [6]. |

| Link Probability | P | Probability that any two species in a local web have a trophic interaction. | Contributes to local food-web complexity; its negative stability effect can be offset by meta-community complexity [6]. |

Table 2: Performance of Management Prioritization Indices [7]

| Management Index / Approach | Key Principle | Relative Performance (Surviving Species) |

|---|---|---|

| Optimal Management (Greedy Heuristic) | Uses Constrained Combinatorial Optimization to find the best species subset to manage. | Highest |

| Modified PageRank | Adapts Google's algorithm to prioritize species based on protection impact. | Near-Optimal (Most Robust) |

| Keystone Index | Identifies species critical to network structure based on topological properties. | Moderate |

| Node Degree | Prioritizes species with the most trophic connections. | Variable (Good only in low-connectance webs) |

| Return-On-Investment (ROI) | Manages species based on lowest cost, ignoring network effects. | Worst (Worse than Random) |

Detailed Experimental Protocol: Meta-Community Stability Analysis

This protocol provides a methodology for testing the effect of spatial complexity on food-web stability, based on the meta-community model described in [6].

Objective: To determine how the number of local habitats (HN) and their connectivity (HP) influence the stability of a complex food web.

1. Model Setup and Initialization

- Base Food-Web Structure: Generate random food webs for local habitats. Each pair of species `i` and `j` has a probability `P` of being connected by a trophic link. The maximum number of links is `Lmax = N(N-1)/2` [6].

- Spatial Dynamics: Implement a meta-community as a network of `HN` local food webs. Connect these webs with a probability `HP` to create the spatial network.

- Population Dynamics: Use the following ordinary differential equation to model the abundance of species `i` in habitat `l` (`X_il`):

$$\frac{dX_{il}}{dt} = X_{il}\left(r_{il} + s_{il} X_{il} + \sum_{j} a_{ijl} X_{jl}\right) + \sum_{k} M_{kl} X_{ik}$$

Where:

- `r_il` is the intrinsic rate of change.

- `s_il` is density-dependent self-regulation.

- `a_ijl` is the interaction coefficient between species `i` and `j` in habitat `l`.

- `M_kl` represents the migration rate from habitat `k` to `l` [6].
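The right-hand side of the population dynamics equation can be sketched as a vectorized NumPy function. It implements the equation exactly as written in this protocol. The array layout (species along the first axis, habitats along the second), the function name, and the example values are assumptions made for illustration, and the sketch assumes `a[i, i, l] = 0` so that self-regulation enters only through `s`.

```python
import numpy as np

def metacommunity_rhs(X, r, s, a, M):
    """dX_il/dt for all species i and habitats l.

    X, r, s : arrays of shape (N, HN)   abundance, intrinsic rate, self-regulation
    a       : array of shape (N, N, HN) a[i, j, l] = effect of species j on i in habitat l
    M       : array of shape (HN, HN)   M[k, l] = migration rate from habitat k to l
    """
    interactions = np.einsum("ijl,jl->il", a, X)   # sum_j a_ijl * X_jl
    local = X * (r + s * X + interactions)
    immigration = X @ M                            # sum_k M_kl * X_ik
    return local + immigration

# Hypothetical setup: 2 species, 2 habitats, no trophic interactions.
N, HN = 2, 2
X = np.ones((N, HN))
r = np.zeros((N, HN))
s = -np.ones((N, HN))          # negative values give self-limitation
a = np.zeros((N, N, HN))
M = np.array([[0.0, 0.1],
              [0.1, 0.0]])

print(metacommunity_rhs(X, r, s, a, M))
```

A function of this shape can be passed to any ODE integrator after flattening `X`, which keeps the spatial and trophic parts of the model easy to vary independently.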

2. Experimental Procedure

- Spatial Complexity Gradient: Vary the meta-community complexity by increasing the number of local food webs (`HN`) from 1 to 10, and the connectivity (`HP`) from 0.1 to 1.0.

- Migration Gradient: For each spatial configuration, test a range of migration strengths (`M`), for example, from 0.001 to 0.1.

- Replication and Heterogeneity: Run multiple replicates for each parameter set. Ensure that parameters (`r_il`, `s_il`, `a_ijl`) differ randomly between local food webs to create the necessary spatial heterogeneity. Optionally, run treatments with correlated parameters to test the effect of habitat homogeneity [6].

- Stability Measurement: After the model reaches equilibrium, apply a small perturbation to all species populations. Measure local stability as the system's rate of return to this original equilibrium [6].

3. Data Analysis

- Plot community stability against migration strength (`M`) for different levels of `HN` and `HP`.

- Analyze the relationship between food-web complexity (`N * P`) and stability for different levels of meta-community complexity (`HN * HP`). A positive relationship indicates a successful reversal of the classic complexity-stability paradox [6].

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Computational and Analytical Tools

| Item | Function in Research | Example Applications / Notes |

|---|---|---|

| NetworkX | Python package for the creation, manipulation, and study of the structure, dynamics, and functions of complex networks. | - Constructing random food-web topologies. - Calculating network metrics (e.g., Node Degree, Betweenness Centrality) [10]. |

| Graphviz (DOT) | Graph visualization software; uses a domain-specific language (DOT) for defining graph structures and attributes. | - Generating publication-quality diagrams of food webs and meta-community networks. - Automating layout to clearly show spatial connectivity [10]. |

| Cytoscape | Dedicated, fully-featured platform for complex network analysis and visualization. | - Importing networks via GraphML format from NetworkX for advanced visualization and analysis [10]. |

| Bayesian Belief Networks (BBNs) | A probabilistic graphical model that represents a set of variables and their conditional dependencies. | - Predicting secondary extinctions in a computationally efficient way, capturing most forecasts of more complex dynamic models [7]. |

| Constrained Combinatorial Optimization | A mathematical method to find the optimal solution from a finite set of possibilities, given constraints. | - Identifying the optimal set of species to manage for ecosystem persistence under a fixed budget [7]. |

| W3C Color Brightness Formula | A formula from earlier W3C accessibility guidance for estimating the perceived brightness of a color. | - Choosing light or dark text for diagram elements. The formula is: ((R*299) + (G*587) + (B*114)) / 1000 [11]. Note that WCAG contrast ratios are computed from relative luminance rather than from this brightness value. |

Experimental Workflow and Signaling Pathway Visualizations

Meta-Community Stability Analysis Workflow

Spatial Feedback Loop for Stability

Functional Redundancy and Its Dual Impact on Ecosystem Resilience and Transients

Frequently Asked Questions (FAQs)

FAQ 1: What is the fundamental ecological trade-off associated with functional redundancy?

Functional redundancy presents a dual effect: it enhances ecosystem resilience by ensuring that multiple species can perform similar functions, allowing the system to maintain functioning despite species loss. However, it can also generate long-lived ecological transients. These extended periods of non-equilibrium dynamics occur because functionally similar species compete very slowly, arbitrarily delaying the ecosystem's approach to a stable state [12] [13].

FAQ 2: How can I diagnose long transients caused by functional redundancy in my model ecosystem?

Prolonged transient dynamics can be identified by monitoring species abundances over time. A key indicator is transient chaos, where the system's path to equilibrium depends sensitively on initial conditions or assembly history. Mathematically, this manifests as a very slow timescale (on the order of ε⁻¹, where ε represents the minute functional differences between species) in the approach to a final equilibrium [13]. In computational models, this is analogous to solving an ill-conditioned optimization problem [13].

FAQ 3: Are there specific experimental protocols to measure functional redundancy and its effects?

Yes, a robust method involves using closed bioreactor ecosystems. The following table summarizes a key experimental design for investigating functional redundancy in response to perturbations [14]:

Table: Experimental Protocol for Assessing Functional Redundancy in Bioreactors

| Protocol Component | Description |

|---|---|

| System Type | Continuous anaerobic bioreactors as closed model ecosystems. |

| Key Perturbation | Gradual pH shift (e.g., from 5.5 to 6.5). |

| Data Collection | 16S rRNA gene amplicon sequencing and process data (e.g., carboxylate yields). |

| Analysis Methods | Aitchison PCA clustering, linear mixed-effects models, random forest classification, and network analysis. |

| Resilience Indicator | Recovery of product yields and ranges to pre-perturbation states after transient fluctuations. |

FAQ 4: What is "functional similarity" and why is it a preferred term?

Functional similarity is proposed as an alternative term to "functional redundancy." It better reflects that species exist on a gradient of niche overlap and highlights the unique contributions of all coexisting species. The term "redundancy" can be misleading, as it carries a negative connotation of being expendable, which is ecologically inaccurate and problematic for scientific communication [12].

Troubleshooting Guides

Issue: Unrealistically Long Transients in Food Web Models

Problem: Your computational model takes an exceedingly long time to reach equilibrium, making simulations impractical and results difficult to interpret.

Solution:

- Check for Functional Overlap: Analyze your interaction matrix (A) for species with nearly identical interaction coefficients. This is a primary source of ill-conditioning in the system [13].

- Apply Dimensionality Reduction: Use techniques like Principal Components Analysis (PCA) to precondition the dynamics. This separates fast relaxation of distinct functional groups from the slow "solving" dynamics among redundant species [13].

- Introduce Minute Functional Differences: Ensure that no two species are truly identical. Introduce small variations (a singular perturbation, ε) in their interaction strengths or growth rates to break perfect symmetry and allow competitive exclusion to proceed [13].
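The first check above can be sketched as follows. Comparing rows of the interaction matrix is only a rough proxy for functional overlap, and the relative tolerance, function name, and example matrix are assumptions made for illustration. The condition number gives a complementary, matrix-wide signal of ill-conditioning.

```python
import numpy as np

def near_redundant_pairs(A, rel_tol=1e-3):
    """Return index pairs (i, j) whose rows of A differ by less than
    rel_tol relative to the larger of the two row norms."""
    A = np.asarray(A, dtype=float)
    n = A.shape[0]
    pairs = []
    for i in range(n):
        for j in range(i + 1, n):
            scale = max(np.linalg.norm(A[i]), np.linalg.norm(A[j]), 1e-300)
            if np.linalg.norm(A[i] - A[j]) <= rel_tol * scale:
                pairs.append((i, j))
    return pairs

# Hypothetical competition matrix: species 1 and 2 are almost identical.
A = np.array([[-1.0, -0.5,    -0.5],
              [-0.5, -1.0,    -0.2],
              [-0.5, -1.0001, -0.2]])

pairs = near_redundant_pairs(A)
condition_number = np.linalg.cond(A)
print("near-redundant pairs:", pairs)
print("condition number:", condition_number)
```

A very large condition number together with flagged pairs suggests that slow dynamics among those species, rather than a coding error, are the likely cause of the long transient.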

Issue: Differentiating Redundancy from Complementarity in Experiments

Problem: It is challenging to determine whether stable ecosystem function is due to functional redundancy (species are interchangeable) or functional complementarity (species have unique roles).

Solution:

- Conduct Perturbation Experiments: Selectively remove species or groups of species and monitor ecosystem function. In a redundant system, function will remain stable until a threshold of species loss is crossed. In a complementary system, function will decline more linearly with species loss [12].

- Long-Term Monitoring: Conduct experiments over extended periods. Short-term experiments often show saturating biodiversity-ecosystem functioning (BEF) relationships (suggesting redundancy), while long-term studies frequently reveal more linear relationships as complementarity mechanisms strengthen over time [12].

- Analyze Response and Effect Traits: Measure traits related to how species respond to environmental change (response traits) and how they affect ecosystem function (effect traits). A decoupling between these two groups indicates functional redundancy for that specific function [12].

The Scientist's Toolkit

Table: Essential Reagent Solutions for Microbial Ecosystem Experiments

| Research Reagent / Material | Function in Experiment |

|---|---|

| Continuous Anaerobic Bioreactors | Serves as a closed, controllable model ecosystem for studying community assembly and response to perturbations like pH shifts [14]. |

| 16S rRNA Gene Sequencing Reagents | Allows for the taxonomic identification and relative quantification of community members, including key players and rare species [14]. |

| Primers for Key Functional Genes | Targets specific genes involved in critical processes (e.g., chain elongation) to link community composition directly to ecosystem function [14]. |

| Linear Mixed-Effects Models | A statistical tool to analyze time-series data, accounting for both fixed effects (like pH) and random effects (like reactor identity) [14]. |

| Network Analysis Software | Used to infer microbial interactions (e.g., co-occurrence patterns) and understand the plasticity of the community food web in response to change [14]. |

Visualizing Core Concepts

Redundancy Induces Long Transients

Experimental Workflow for Resilience Analysis

Frequently Asked Questions

What does "optimal complexity" mean for food web models? Optimal complexity is the point where a food web model has sufficient detail to make accurate predictions without becoming so over-parameterized that it is unstable or impossible to fit with available data. An overly simple model may miss crucial ecosystem dynamics, while an overly complex one can produce unrealistic results and high uncertainty, making it unreliable for projection [15].

My model predictions show extreme and unexpected outcomes. What could be wrong? This is a classic sign of an ill-conditioned or poorly constrained model. When model parameters cannot be adequately informed by the available data, the system can generate predictions with very high uncertainty. Research on groundwater models has shown that models with simpler parameterization can sometimes produce more extreme predictions than their more complex, but better-constrained, counterparts [15].

How does functional redundancy among species affect my model? Functional redundancy, where multiple species serve similar ecological roles, directly increases model complexity and can lead to long transients. Mathematically, this redundancy creates an ill-conditioned system that is difficult to solve, manifesting as "transient chaos" where the path to equilibrium is highly sensitive to initial conditions [16]. This makes the model's behavior harder to predict over time.

Can I use a complex model if I have limited interaction data? Yes, but it requires strategic simplification. The Allometric Diet Breadth Model (ADBM) is an example of a model that uses body size and foraging theory to predict trophic links, reducing the number of parameters that need direct measurement. Modern approaches use methods like Approximate Bayesian Computation (ABC) to fit the model and estimate its connectance (the proportion of possible links that are realized) simultaneously, even with incomplete data [17].

Troubleshooting Guides

Problem: Model predictions have unacceptably high uncertainty. This often stems from the parameterization strategy and insufficient data to constrain the model.

- Diagnosis: Check if the number of parameters is large relative to the quantity and quality of your observational data. Perform a sensitivity analysis to identify which parameters contribute most to the variance in your outputs.

- Solution:

- Re-evaluate Parameterization: Compare a simple parameterization scheme (e.g., relating parameters to a master variable like depth or body size) against a more complex one (e.g., using pilot points for spatial variation). Evidence suggests the choice significantly impacts predictive uncertainty [15].

- Incorporate Prior Knowledge: Use a Bayesian framework to inform parameter distributions with data from similar systems or expert judgment. This helps constrain the feasible parameter space.

- Reduce Effective Complexity: If data is limited, simplify the model by grouping functionally redundant species into "metaspecies" or trophic levels before parameterizing interactions between these groups [16].

Problem: The model takes an extremely long time to reach a stable state, or seems to behave chaotically. This is likely due to long transients caused by ill-conditioning in the ecosystem dynamics.

- Diagnosis: Simulate the model from different initial species abundances. If the paths to equilibrium are vastly different and highly sensitive to starting conditions, transient chaos is probable.

- Solution:

- Identify Redundancy: Analyze your interaction matrix for species with near-identical interaction profiles. These functional redundancies are the primary cause [16].

- Apply Dimensionality Reduction: Use techniques like Principal Components Analysis (PCA) to precondition the dynamics. This separates the fast, stable relaxation from the slow, ill-conditioned "solving" dynamics, making the system more manageable [16].

- Focus on Group Dynamics: Reformulate the model to first solve for the equilibrium of coarse-grained functional groups, then resolve the slow dynamics within each redundant group.
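The "Identify Redundancy" step above can be sketched in a few lines. This is a minimal illustration, not the condition-number or PCA analysis of [16]: it uses cosine similarity between rows of the interaction matrix as a simple stand-in for spotting near-identical interaction profiles. The matrix `A`, the 0.99 threshold, and the function names are all hypothetical.

```python
import math

def cosine_similarity(u, v):
    """Cosine similarity between two interaction profiles (lists of floats)."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    if norm_u == 0.0 or norm_v == 0.0:
        return 0.0
    return dot / (norm_u * norm_v)

def redundant_groups(matrix, threshold=0.99):
    """Greedily group species whose matrix rows are nearly parallel."""
    groups = []
    for i, row in enumerate(matrix):
        for group in groups:
            # Compare against the first member, used as the group's representative.
            if cosine_similarity(row, matrix[group[0]]) >= threshold:
                group.append(i)
                break
        else:
            groups.append([i])
    return groups

# Hypothetical 4-species interaction matrix: species 0 and 1 have
# near-identical interaction profiles (functional redundancy).
A = [
    [-1.00, -0.50,  0.30,  0.00],
    [-1.01, -0.49,  0.31,  0.00],
    [ 0.20,  0.20, -1.00, -0.40],
    [ 0.00,  0.00,  0.50, -1.00],
]

groups = redundant_groups(A)
print(groups)  # [[0, 1], [2], [3]]
```

Each multi-member group is a candidate "metaspecies" for the coarse-grained model. Only rows (effects received by a species) are compared here; comparing columns (effects exerted on others) as well would be a natural extension.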

Problem: I suspect my empirical food web data is missing many trophic links. Incomplete data is a common issue that can lead to underestimating connectance and misrepresenting structure.

- Diagnosis: Compare the connectance of your observed web to webs of similar size and type from published literature. If yours is significantly lower, links are likely missing.

- Solution:

- Use a Model to Predict Links: Employ a food web model like the ADBM not just as a predictive tool, but as a data-gap identification tool. The model can suggest likely missing interactions based on body size and foraging theory [17].

- Simultaneously Estimate Structure and Connectance: Parameterize the ADBM using Approximate Bayesian Computation (ABC). This method estimates posterior distributions for model parameters and, as a result, predicts the most probable connectance and web structure given the incomplete data. This approach often estimates a higher connectance than the raw data shows, indicating potential missing links [17].
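The diagnosis step above can be sketched as follows, using directed connectance C = L / S² (the proportion of possible links that are realized). The link list, the reference values, and the 0.75 cut-off are hypothetical placeholders, not published figures.

```python
def connectance(links, n_species):
    """Directed connectance C = L / S^2 (cannibalistic links allowed)."""
    return len(set(links)) / (n_species ** 2)

# Hypothetical observed web: (predator, prey) pairs among 5 species.
observed_links = [(1, 0), (2, 0), (3, 1), (3, 2), (4, 3)]
c_obs = connectance(observed_links, n_species=5)

# Hypothetical connectance values for comparable published webs.
reference = [0.28, 0.32, 0.35, 0.30]
c_ref = sum(reference) / len(reference)

print(f"observed C = {c_obs:.2f}, reference mean = {c_ref:.2f}")
if c_obs < 0.75 * c_ref:  # the 0.75 cut-off is an arbitrary illustration
    print("Observed connectance is well below the reference; links may be missing.")
```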

Experimental Protocols

Protocol 1: Quantifying Impact of Parameterization on Predictive Uncertainty

This protocol is adapted from studies on environmental impact assessment to provide a systematic way to evaluate modeling choices [15].

Model Formulation: Develop two versions of your food web or ecosystem model for the same system.

- Simple Parameterization: Define key parameters (e.g., interaction strength; hydraulic conductivity in the original groundwater setting) based on a single master variable (e.g., species body size, habitat depth).

- Complex Parameterization: Allow the same parameters to vary more freely across the spatial domain or among species, using a method like pilot points or species-specific priors.

Model Calibration: Constrain both models using the same set of observational data (e.g., species abundance time series, stable isotope data).

Uncertainty Quantification: Run probabilistic simulations (e.g., Monte Carlo simulations) for both calibrated models to generate a distribution of predictions.

Comparison and Analysis: Compare the ranges (uncertainty) of the key predictions from both models. The study suggests the model with simpler parameterization may produce a wider range of, and potentially more extreme, predictions [15].
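The uncertainty quantification and comparison steps of Protocol 1 can be sketched as below. This is a toy under stated assumptions: calibration is skipped, both "models" are hypothetical one-line functions, and the body sizes, biomasses, and parameter spread are invented. It only shows the mechanics of generating two prediction ensembles and comparing their ranges; the numbers carry no ecological meaning.

```python
import random

def percentile_range(values, lower=0.025, upper=0.975):
    """Width of the central interval of a sample (simple index-based percentiles)."""
    ordered = sorted(values)
    lo = ordered[int(lower * (len(ordered) - 1))]
    hi = ordered[int(upper * (len(ordered) - 1))]
    return hi - lo

def simple_model(rng, body_sizes, biomasses):
    """One uncertain scaling constant k sets every interaction strength."""
    k = rng.gauss(1.0, 0.2)
    return sum(k * m ** 0.75 * b for m, b in zip(body_sizes, biomasses))

def complex_model(rng, body_sizes, biomasses):
    """Each species gets its own uncertain scaling constant."""
    return sum(rng.gauss(1.0, 0.2) * m ** 0.75 * b
               for m, b in zip(body_sizes, biomasses))

rng = random.Random(42)
body_sizes = [1.0, 2.0, 4.0, 8.0]   # hypothetical values
biomasses = [10.0, 6.0, 3.0, 1.0]   # hypothetical values

simple_preds = [simple_model(rng, body_sizes, biomasses) for _ in range(5000)]
complex_preds = [complex_model(rng, body_sizes, biomasses) for _ in range(5000)]

simple_range = percentile_range(simple_preds)
complex_range = percentile_range(complex_preds)
print("simple  95% range:", round(simple_range, 2))
print("complex 95% range:", round(complex_range, 2))
```

In this toy the shared constant makes errors perfectly correlated across species, so the simple scheme yields the wider range. Whether the same holds for a real system depends on the data and calibration, which is what the protocol is designed to test.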

Protocol 2: Simultaneously Estimating Food Web Connectance and Structure with ABC

This protocol uses the Allometric Diet Breadth Model (ADBM) to infer missing links and quantify uncertainty [17].

Input Data: Gather an empirically observed food web (predator-prey links) and body size data for all species.

Model Definition: The ADBM uses foraging theory and allometric scaling to predict whether a predator consumes a prey. Its core parameters include handling time and attack rate, scaled to body sizes.

Approximate Bayesian Computation (ABC) Setup:

- Prior Distributions: Define prior probability distributions for the ADBM parameters.

- Summary Statistic: Select the True Skill Statistic (TSS), which balances the accuracy of predicting both presence and absence of links, as a measure of fit between a simulated web and the observed web.

- Distance Metric and Threshold: Define how close a simulation must be to the data (using TSS) to be accepted.

ABC Routine:

- Sample parameter values from the priors.

- Simulate a food web from the ADBM using these parameters.

- Calculate the TSS by comparing the simulated web to the observed web.

- If the TSS is above the acceptance threshold, retain the parameter values and the connectance of the simulated web.

Output: The result is a posterior distribution of both model parameters and food web connectance. The median of this distribution provides a best estimate for the "true" connectance, often higher than the connectance of the original, likely incomplete, data [17].
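The ABC routine in Protocol 2 can be sketched with a rejection sampler. Two assumptions need stating: `simulate_web` is a toy size-ratio rule standing in for the ADBM (it is not the ADBM), and the body sizes, priors, removed links, and 0.85 acceptance threshold are hypothetical. The TSS is computed as sensitivity + specificity - 1.

```python
import random

def simulate_web(sizes, ratio_min, ratio_max):
    """Toy size rule (NOT the ADBM): i eats j if ratio_min <= M_j / M_i <= ratio_max."""
    n = len(sizes)
    return {(i, j) for i in range(n) for j in range(n)
            if ratio_min <= sizes[j] / sizes[i] <= ratio_max}

def true_skill_statistic(predicted, observed, n):
    """TSS = sensitivity + specificity - 1 over all n * n possible links."""
    tp = len(predicted & observed)
    fp = len(predicted - observed)
    fn = len(observed - predicted)
    tn = n * n - tp - fp - fn
    sensitivity = tp / (tp + fn) if (tp + fn) else 0.0
    specificity = tn / (tn + fp) if (tn + fp) else 0.0
    return sensitivity + specificity - 1.0

def abc_rejection(sizes, observed, n_draws, threshold, rng):
    """Keep (parameters, connectance) for draws whose TSS clears the threshold."""
    n = len(sizes)
    accepted = []
    for _ in range(n_draws):
        ratio_min = rng.uniform(0.0, 0.5)   # hypothetical prior, lower size ratio
        ratio_max = rng.uniform(0.5, 0.9)   # hypothetical prior, upper size ratio
        web = simulate_web(sizes, ratio_min, ratio_max)
        if true_skill_statistic(web, observed, n) >= threshold:
            accepted.append((ratio_min, ratio_max, len(web) / n ** 2))
    return accepted

rng = random.Random(1)
sizes = [1.0, 2.0, 4.0, 8.0, 16.0, 32.0]        # hypothetical body sizes
true_web = simulate_web(sizes, 0.2, 0.6)         # hypothetical complete web
observed = true_web - {(2, 0), (4, 3), (5, 3)}   # mimic unobserved links

posterior = abc_rejection(sizes, observed, n_draws=2000, threshold=0.85, rng=rng)
connectances = sorted(c for _, _, c in posterior)
median_c = connectances[len(connectances) // 2]
observed_c = len(observed) / len(sizes) ** 2
print(f"{len(posterior)} accepted; observed C = {observed_c:.3f}; "
      f"posterior median C = {median_c:.3f}")
```

In this constructed example the accepted draws reproduce the complete web, so the posterior median connectance sits above the connectance of the thinned "observed" web. With real data the posterior would be a spread of values rather than a single one.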

Research Reagent Solutions

The table below lists key computational tools and conceptual frameworks used in the advanced study of food web complexity.

| Tool / Framework | Function in Food Web Research |

|---|---|

| Generalized Lotka-Volterra (gLV) Model | A foundational differential equation framework for modeling population dynamics, where species abundances change based on intrinsic growth and pairwise interactions [16]. |

| Allometric Diet Breadth Model (ADBM) | A food web model that uses foraging theory and body size relationships to predict the structure of trophic interactions, reducing reliance on fully-empirical data [17]. |

| Approximate Bayesian Computation (ABC) | A parameter inference method used when a model's likelihood function is intractable. It allows estimation of parameter distributions and model outputs, like connectance, by comparing simulations to data [17]. |

| Condition Number Analysis | A numerical analysis concept used to diagnose "ill-conditioning" in ecosystem models, where high values indicate functional redundancy and potential for long transients and unstable fitting procedures [16]. |

| True Skill Statistic (TSS) | A metric used to evaluate the performance of food web models by measuring the accuracy of both predicted presences and absences of trophic links, which is superior to simple accuracy when links are rare [17]. |
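To make the gLV entry in the table above concrete, the sketch below integrates dN_i/dt = N_i (r_i + Σ_j a_ij N_j) with an explicit Euler scheme. The two-species parameter values, step size, and function names are hypothetical; a production model would normally use an adaptive ODE solver.

```python
def glv_step(n, r, a, dt):
    """One explicit Euler step of dN_i/dt = N_i * (r_i + sum_j a_ij * N_j)."""
    size = len(n)
    growth = [r[i] + sum(a[i][j] * n[j] for j in range(size)) for i in range(size)]
    # Abundances are clipped at zero so numerical error cannot make them negative.
    return [max(n[i] + dt * n[i] * growth[i], 0.0) for i in range(size)]

def simulate(n0, r, a, dt=0.01, steps=5000):
    """Integrate the gLV system from n0 and return the final abundances."""
    n = list(n0)
    for _ in range(steps):
        n = glv_step(n, r, a, dt)
    return n

# Hypothetical predator-prey pair with prey self-regulation.
r = [2.0, -0.5]        # prey grows alone, predator declines alone
a = [[-1.0, -1.0],     # prey: self-limitation and loss to the predator
     [0.5, 0.0]]       # predator: gain from the prey
final = simulate([0.5, 0.5], r, a)
print([round(x, 3) for x in final])  # [1.0, 1.0]
```

Setting both growth terms to zero gives the interior equilibrium N = (1, 1) for these values, which the simulation approaches after a short damped oscillation.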

Conceptual Workflow and Signaling Pathways

The following diagram illustrates the core conceptual workflow for developing and refining a food web model, from problem identification to a stable, useful solution.

Food Web Model Optimisation Workflow

The diagram below represents the mathematical structure of an ecosystem with functional redundancies, which is a primary source of optimization hardness and long transients.

Ecosystem Structure with Functional Redundancy

Advanced Modeling Approaches: From Spatial Dynamics to Machine Learning Integration

Spatially Explicit Metacommunity Models for Landscape-Scale Projections

Frequently Asked Questions (FAQs)

1. How do the number and spatial placement of initially populated patches affect species recovery in a fragmented landscape? The spatial configuration of introduced communities significantly influences the colonization of empty habitat patches but does not notably impact population recovery in patches that already have an established community [18]. The placement (central or peripheral) and number of initially populated patches are key factors that govern dispersal and colonization processes: for example, 1 central or 1 peripheral patch in a five-patch star landscape, or 4 central or 4 peripheral patches in a larger simulated landscape [18].

2. What is the effect of increasing food-web complexity on the recovery of species at lower trophic levels? Increasing food-web complexity, defined by a greater number of species and trophic levels, generally reduces the recovery potential of lower trophic levels [18]. This is likely due to increased top-down control from a greater diversity of consumers and predators. However, this negative effect may be partially mitigated at the highest levels of complexity, suggesting non-linear dynamics [18].

3. What is a metaweb and how can it help address the challenge of limited species interaction data? A metaweb is a regional pool of potential species interactions, capturing the gamma diversity of both species and their possible links [19]. It helps address the Eltonian Shortfall—the limited data on species interactions—by serving as a template. Local food webs can be generated by sub-sampling the metaweb based on species occurrence data, enabling insights into ecosystem structure and function with minimal initial data requirements [19].

4. How can the concept of "ES fields" improve the design of landscapes for enhanced ecosystem service performance? The ESMAX model uses "ES fields," which visualize how the intensity of regulating ecosystem services (ESs) decays with distance from their source component (e.g., a clump of trees) [20]. This approach reveals that the size of landscape components has a primary effect on total ES performance, while their spatial arrangement has a secondary effect. This allows for the proactive design of landscape configurations that maximize specific regulating ESs, which in turn support provisioning and cultural ESs [20].
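The metaweb sub-sampling described in question 3 can be sketched as a simple filter: a potential link is kept locally only if both species occur at the site. The species names and links below are hypothetical, and real workflows may add further rules (for example, habitat overlap) before accepting a link.

```python
def local_web(metaweb, present_species):
    """Keep metaweb links whose predator and prey both occur locally."""
    present = set(present_species)
    return {(pred, prey) for pred, prey in metaweb
            if pred in present and prey in present}

# Hypothetical regional metaweb of potential (predator, prey) links.
metaweb = {
    ("fox", "rabbit"), ("fox", "vole"), ("owl", "vole"),
    ("rabbit", "grass"), ("vole", "grass"), ("vole", "seeds"),
}

site_a = local_web(metaweb, ["fox", "vole", "grass"])
print(sorted(site_a))  # [('fox', 'vole'), ('vole', 'grass')]
```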

Troubleshooting Common Experimental Issues

Issue 1: Unexpectedly Low Recovery of a Focal Species at a Low Trophic Level

- Potential Cause: The negative effects of food-web complexity are disproportionately impacting your focal species. A weak competitor may be particularly vulnerable to both direct competition and apparent competition mediated through shared parasitoids [18].

- Solution:

- Re-assess Trophic Structure: Experimentally simplify the food web by temporarily removing or excluding a key predator or parasitoid to isolate its effect.

- Check Spatial Refugia: Ensure your landscape configuration includes patches with low connectivity that can act as refuges from predators and strong competitors, facilitating the focal species' persistence [18].

Issue 2: Inadequate Dispersal and Colonization of Empty Habitat Patches

- Potential Cause: The spatial configuration of your initially populated patches does not facilitate sufficient connectivity for species to disperse effectively across the landscape [18].

- Solution:

- Reconfigure Landscape: Shift from a single, peripherally located source patch to multiple, centrally located source patches to enhance dispersal pathways. In a star-configuration landscape, the central patch is crucial for connectivity [18].

- Verify Dispersal Corridors: In a physical experiment, ensure that dispersal corridors (e.g., threads in tubes for insects) are functional and not obstructed [18].

Issue 3: Model Predictions Do Not Align with Experimental Outcomes

- Potential Cause: The model may not adequately capture the idiosyncratic, nonlinear responses that occur when ecological "fields" from different landscape components overlap [20].

- Solution:

- Incorporate Second-Order Effects: Move beyond landcover-proxy models. Update your metacommunity model to include algorithms for nonlinear interactions when the distance-decay fields of ecosystem services or species influences overlap, as in the ESMAX framework [20].

- Calibrate with Field Data: Use empirical data from your experimental system to parameterize the specific distance-decay kernels (intensity, range, form) for your focal species or processes [20].

Experimental Protocol: Metacommunity Assembly and Recovery

1. Objective To investigate the joint effects of spatial configuration and food-web complexity on species recovery trajectories at local (patch) and regional (landscape) scales [18].

2. Materials and Reagent Solutions

Table: Key Research Reagents and Materials


| Item Name | Function/Description in the Experiment |

|---|---|

| Radish (Raphanus sativus) | Host plant species; forms the basal level of the food web [18]. |

| Cabbage Aphid (Brevicoryne brassicae) | Focal aphid species; a weak competitor with high parasitization rate [18]. |

| Turnip Aphid (Lipaphis erysimi) | Secondary aphid species; contributes to food-web complexity [18]. |

| Parasitoid Wasp (Diaeretiella rapae) | Primary parasitoid; preferentially attacks cabbage aphids, adding a trophic level [18]. |

| Polyethylene Containers | Serve as individual habitat patches (e.g., 10cm diameter, 20cm height) [18]. |

| Silicone Tubes & Threads | Function as dispersal corridors, allowing insect movement between connected habitat patches [18]. |

3. Methodology

- Landscape Construction: Create a fragmented landscape comprising five habitat patches arranged in a star configuration, with one central patch connected to four peripheral patches [18].

- Community Treatments: Assemble communities of varying complexity [18]:

- Community 1A: One aphid species (B. brassicae).

- Community 2A: Two aphid species (B. brassicae and L. erysimi).

- Community 2A-1P: Two aphid species and one parasitoid species (D. rapae).

- Spatial Configuration Treatments: Introduce the assembled communities to the landscape in different initial spatial configurations [18]:

- 1C: One central patch populated.

- 1P: One peripheral patch populated.

- 4C: Four central patches populated (in a larger simulated landscape).

- 4P: Four peripheral patches populated (in a larger simulated landscape).

- Data Collection: Monitor species abundances (e.g., of the focal aphid, B. brassicae) in all patches over time to track recovery trajectories after an initial disturbance or introduction.

- Data Analysis: Compare recovery success (e.g., final population size, time to recovery) across the different combinations of community complexity and spatial configuration.
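The five-patch star landscape from the methodology can be represented as a small graph, which makes the difference between the 1C and 1P treatments easy to see. This is a purely topological sketch with hypothetical function names: it counts corridor steps from the source patch and does not model population dynamics or dispersal rates.

```python
from collections import deque

def star_landscape(n_peripheral=4):
    """Adjacency of a star: patch 0 is central, patches 1..n are peripheral."""
    adjacency = {0: set(range(1, n_peripheral + 1))}
    for patch in range(1, n_peripheral + 1):
        adjacency[patch] = {0}
    return adjacency

def steps_to_colonize(adjacency, sources):
    """Breadth-first search: corridor steps from the nearest source to each patch."""
    distance = {s: 0 for s in sources}
    queue = deque(sources)
    while queue:
        patch = queue.popleft()
        for neighbour in adjacency[patch]:
            if neighbour not in distance:
                distance[neighbour] = distance[patch] + 1
                queue.append(neighbour)
    return distance

landscape = star_landscape()
central = steps_to_colonize(landscape, [0])      # treatment 1C
peripheral = steps_to_colonize(landscape, [1])   # treatment 1P
print(max(central.values()), max(peripheral.values()))  # 1 2
```

From the central patch every empty patch is one corridor away, whereas from a peripheral patch the other three peripheral patches can only be reached through the centre.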

Table: Summary of Model and Experimental Findings on Key Factors

| Factor | Effect on Colonization of Empty Patches | Effect on Recovery in Populated Patches | Effect on Lower Trophic Levels |

|---|---|---|---|

| Spatial Configuration (Number & placement of source patches) | Significant effect [18] | Minimal effect [18] | Not Directly Studied |

| Food-Web Complexity (Number of species & trophic levels) | Not the Primary Focus | Not the Primary Focus | Reduces recovery; effect may lessen at highest complexity [18] |

Experimental Workflow and Model Relationships

Platform Comparison and Selection Guide

The following table summarizes the core characteristics, system requirements, and support structures for the Ecopath with Ecosim (EwE) and Atlantis modeling platforms to aid researchers in selecting the appropriate tool.

Table 1: Platform Overview and System Requirements

| Feature | Ecopath with Ecosim (EwE) | Atlantis Ecosystem Model |

|---|---|---|

| Core Description | A free ecological modeling software suite with three main components: Ecopath (static mass-balance), Ecosim (time-dynamic simulation), and Ecospace (spatial-temporal dynamics) [21]. | Software for modelling marine ecosystems, including spatial and temporal dynamics [22]. |

| Primary Application | Addressing ecological questions, evaluating ecosystem effects of fishing, exploring management policy, and analyzing marine protected areas [21]. | Complex, process-driven simulations of marine ecosystem dynamics, often used for strategic management scenarios [22]. |

| Cost & Licensing | 100% free software; professional user support is available for a fee [23] [24]. | Free of charge, but requires a free license agreement after registering with the developers [22]. |

| Operating System | Desktop software runs only on Windows Vista or newer. Can be run on Apple machines via Parallels or Bootcamp [23]. | Available for multiple operating systems [22]. |

| Software Dependencies | Typically requires Microsoft Office (specifically Microsoft Access) for its main file storage, though it can use an alternative format (.eiixml) for execution [23]. | Requires compilation by the user; relies on version control tools like SVN for code access [22]. |

| Source Code Access | Freely available via a Subversion (SVN) repository on a per-user basis [23]. | Access to the code repository is granted by the developers after registration and licensing [22]. |

| User Support | Technical and scientific support packages are available for students and post-docs for a fee (e.g., 100 EUR per hour, minimum 10 hours) [24]. | Support is provided directly by the developer community after registration; users are encouraged to have basic coding skills [22]. |

Experimental Protocol: Model Initialization and Calibration

A generalized workflow for initializing and calibrating an ecosystem model is provided below. This protocol is critical for generating reliable food web projections.

Figure 1: Generalized workflow for initializing and calibrating an ecosystem model.

Detailed Methodology:

Data Collation: Gather all necessary input data. For a standard Atlantis model, this includes creating several key parameter files [22]:

- Functional_groups.csv: Contains information on all functional groups in the model.

- Biology.prm: Details all ecological parameters, submodel selections, and network connections.

- Initial_condition.nc: A NetCDF file specifying initial biomass and size values for each functional group.

- Run_settings.prm: Defines the run setup, including timestep and run duration.

- Physics.prm & Forcings.prm: Contain physics parameters and pathways to forcing files (e.g., hydrodynamics, climate).

Platform Selection: Choose a modeling platform based on the research question and resources, referring to Table 1.

Build Static Model: Construct a mass-balanced snapshot of the ecosystem. In EwE, this is the core Ecopath step. The model must achieve mass-balance before proceeding to dynamic simulations.

Configure Forcings: Set up environmental and anthropogenic drivers. This involves preparing time-series data for factors like water flows, temperature, and fishing catches, which are specified in the Forcings.prm file in Atlantis [22].

Time-Dynamic Simulation & Calibration: Run the model (Ecosim in EwE, the main executable in Atlantis) and compare output to independent time-series data. The model is calibrated by adjusting key parameters to improve the fit between model output and real-world observations. This is an iterative process (loop back to Step 3, Build Static Model, if mass-balance is lost or fit is poor). Tools like ReactiveAtlantis can assist in visualizing parameters and outputs during this phase [22].

Frequently Asked Questions (FAQs)

Installation and Setup

Q: Can I run Ecopath with Ecosim on a Mac or Linux computer? A: The EwE desktop software is natively built for Windows. While there is no native Mac or Linux version, you can run it on Apple machines through Parallels (virtualization) or Boot Camp (dual boot), both of which require a Windows installation [23].

Q: Why does EwE require Microsoft Office?

A: For legacy reasons, EwE uses Microsoft Access as its primary file storage format. Your system needs to support Access drivers. However, EwE can also read and execute models from an alternative .eiixml format, which is useful for running on Linux clusters [23].

Q: How do I get the Atlantis model code? A: Unlike EwE, Atlantis code access is managed directly by its developers. You must email the development team with your name, affiliation, and reason for interest to register. After signing a free license agreement, you will be granted access to the code repository [22].

Model Execution and Troubleshooting

Q: My model fails to achieve mass-balance in the initial Ecopath step. What should I do? A: A failure to mass-balance is a common issue indicating that the initial parameterization does not satisfy the mass-balance equations. Systematically check and adjust the following inputs for your functional groups:

- Biomass: Ensure initial biomass estimates are realistic.

- Production/Biomass (P/B) ratio: This is a critical and often sensitive parameter.

- Consumption/Biomass (Q/B) ratio: Verify that consumption rates are plausible.

- Ecotrophic efficiency: This value should typically be less than 1. Values exceeding 1 suggest that the mortality rates for a group are too high given its production.
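The ecotrophic efficiency check in the list above can be sketched from the Ecopath mass-balance relation, EE_i = (predation on i + catch of i) / (B_i · (P/B)_i), with predation on i summed over predators as B_j · (Q/B)_j · DC_ji. The sketch assumes zero net migration and zero biomass accumulation, and every group name and value is hypothetical. It is not EwE code, only the arithmetic behind the diagnostic.

```python
def ecotrophic_efficiency(groups, diet):
    """EE_i = (predation on i + catch of i) / production of i.

    groups: name -> dict with biomass B, P/B, Q/B and catch Y.
    diet:   predator -> {prey: fraction of the predator's diet}.
    Net migration and biomass accumulation are assumed to be zero.
    """
    ee = {}
    for name, g in groups.items():
        predation = sum(groups[pred]["B"] * groups[pred]["QB"] * shares.get(name, 0.0)
                        for pred, shares in diet.items())
        production = g["B"] * g["PB"]
        ee[name] = (predation + g["Y"]) / production
    return ee

# Hypothetical four-group chain (units: t/km^2 for B and Y, 1/year for rates).
groups = {
    "phytoplankton": {"B": 20.0, "PB": 100.0, "QB": 0.0, "Y": 0.0},
    "zooplankton":   {"B": 10.0, "PB": 30.0, "QB": 100.0, "Y": 0.0},
    "small_fish":    {"B": 2.0, "PB": 1.0, "QB": 8.0, "Y": 0.5},
    "large_fish":    {"B": 1.0, "PB": 0.5, "QB": 3.0, "Y": 0.2},
}
diet = {
    "zooplankton": {"phytoplankton": 1.0},
    "small_fish": {"zooplankton": 1.0},
    "large_fish": {"small_fish": 1.0},
}

ee = ecotrophic_efficiency(groups, diet)
unbalanced = [name for name, value in ee.items() if value > 1.0]
print(unbalanced)  # ['small_fish']
```

Here small_fish loses more to predation and catch (3.5) than it produces (2.0), so its biomass or P/B would need to rise, or the demands on it fall, before the model balances.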

Q: What are the key output files from an Atlantis simulation, and how can I analyze them? A: Atlantis generates several NetCDF and plain text output files [22]. Key outputs include:

- biol.nc: Snapshots of tracers (e.g., biomass) in each box and layer at given time frequencies.

- BiomIndx.txt: Total biomass in tonnes for each species across the entire model domain.

- Catch.txt: Total landings per species in tonnes across the domain.

- DietCheck.txt: Provides information on diet pressure for debugging and analysis.

To process and visualize these outputs, you can use R-based tools like atlantistools or ShinyRAtlantis, which are designed specifically for this purpose [22].

Q: My dynamic simulation (Ecosim/Atlantis) produces unrealistic biomass explosions or crashes. How can I fix this? A: Unstable dynamics often stem from:

- Unrealistic Vulnerabilities: In Ecosim, the vulnerability parameters govern flow control between prey and predators, that is, whether an interaction behaves as bottom-up or top-down controlled. Default values are often a good starting point, but extreme values can cause instability. Use the stepwise fitting routine in EwE to calibrate these parameters against time series data.

- Forcing Data Errors: Check your environmental and fishery forcing time series for errors or unrealistic values. Ensure the units and timing are correct.

- Model Structure: Review the food web structure for missing key interactions or groups that might be stabilizing the system in reality.

The Scientist's Toolkit: Essential Research Reagents & Software

This table lists key software tools and resources that act as the "research reagents" for conducting ecosystem modeling with EwE and Atlantis.

Table 2: Essential Software Tools and Resources for Ecosystem Modeling

| Tool Name | Type | Primary Function | Platform |

|---|---|---|---|

| EwE Desktop [21] | Core Modeling Software | Provides the main interface for building Ecopath, Ecosim, and Ecospace models. | Windows |

| Atlantis Source Code [22] | Core Modeling Engine | The computational core for compiling and running Atlantis ecosystem simulations. | Multi-OS |

| VisualSVN | Version Control | Used to check out the EwE and Atlantis source code, ensuring correct file formatting and version control [23] [22]. | Windows |

| atlantistools [22] | Data Analysis Package | An R package for processing, summarizing, and visualizing input and output files from Atlantis models. | R |

| ShinyRAtlantis [22] | Visualization Tool | An R-based Shiny application to visually assess parameter values and initial conditions of an Atlantis model. | R |

| ReactiveAtlantis [22] | Calibration & Analysis Tool | A tool with several utilities to assist in the tuning, parameterization, and analysis of Atlantis models during calibration. | R |

| Microsoft Access Database Engine [23] | Software Dependency | Required by EwE for reading and writing its primary model file format (.ewemdb). | Windows |

Advanced Analysis: Integrating Food-Web Theory

For research focused on optimizing model complexity, integrating food-web theory into model analysis is crucial. The diagram below conceptualizes a network-based approach for identifying key species for management, which can inform which model components require the most complex representation.

Figure 2: A network analysis workflow for prioritizing species in management strategies.

Experimental Protocol for Network Analysis:

- Construct the Food Web Matrix: Export the predator-prey matrix from your calibrated Ecopath or Atlantis model. This represents the topological network of species interactions [22] [7].

- Calculate Network Metrics: Apply a modified Google PageRank algorithm to the food web. Research shows that this metric reliably minimizes the chance and severity of negative outcomes in conservation management by prioritizing species based on the network-wide impact of their protection, rather than just the consequence of their loss [7].

- Inform Model Complexity: The species identified as high-impact through this analysis are candidates for more complex representation in the model (e.g., multi-stanza age structure in EwE, detailed bioenergetics in Atlantis). Lower-impact species may be aggregated into broader functional groups, thereby optimizing the overall model complexity.
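The ranking step can be illustrated with standard PageRank computed by power iteration. This is not the modified algorithm of [7], whose details are not given here. The web, the damping factor, and the link direction are assumptions: each link points from a consumer to the resource it depends on, so that species supporting many others rank highly.

```python
def pagerank(links, nodes, damping=0.85, iterations=100):
    """Standard PageRank by power iteration on directed (source, target) links."""
    out = {v: [t for s, t in links if s == v] for v in nodes}
    rank = {v: 1.0 / len(nodes) for v in nodes}
    for _ in range(iterations):
        # Rank held by nodes without outgoing links is spread evenly.
        dangling = sum(rank[v] for v in nodes if not out[v])
        new = {v: (1.0 - damping) / len(nodes) + damping * dangling / len(nodes)
               for v in nodes}
        for v in nodes:
            for target in out[v]:
                new[target] += damping * rank[v] / len(out[v])
        rank = new
    return rank

# Hypothetical web; each link points from a consumer to the resource it depends on.
nodes = ["grass", "rabbit", "vole", "fox"]
links = [("rabbit", "grass"), ("vole", "grass"), ("fox", "rabbit"), ("fox", "vole")]

rank = pagerank(links, nodes)
priority = sorted(nodes, key=rank.get, reverse=True)
print(priority[0], priority[-1])  # grass fox
```

In a complexity-optimization workflow, the groups at the top of `priority` would be the candidates for detailed representation, and those at the bottom the candidates for aggregation.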

Machine Learning-Driven Optimization for Parameter Estimation and Prediction

Core Concepts: Optimization in Research

Frequently Asked Questions (FAQs)

Q1: What is the difference between optimizing a machine learning model and using machine learning for optimization in my research?

A: These are two distinct but related concepts:

- Model Optimization (Optimization I): This refers to improving the performance of a machine learning model itself. It involves tuning its hyperparameters, selecting features, and refining the architecture to enhance accuracy and generalizability on a specific task, such as prediction [25]. Common algorithms include Gradient Descent, Adam, and Bayesian Optimization [25] [26].

- Engineering Optimization (Optimization II): This involves using a trained machine learning model as a tool to optimize products or processes in your field [25]. In your research, this could mean using a model as a surrogate to rapidly approximate complex, computationally expensive food web simulations, thereby accelerating parameter estimation and scenario prediction [27].

Q2: Why are traditional parameter estimation methods like MCMC challenging for complex ecosystem models?

A: Traditional methods like Markov-Chain Monte Carlo (MCMC) and maximum likelihood estimation (MLE) often struggle with high-dimensional models due to [28]:

- Computational Intractability: Evaluating a complex 3D ecosystem model thousands of times for an MCMC run can be prohibitively slow [27].

- Ill-Posed Problems: Models with many parameters can have multiple parameter sets that fit the data equally well, making it difficult to find a unique solution [28].

- Risk of Overfitting: Complex models with many parameters are at a high risk of being over-calibrated to specific data, losing their forecasting skill and portability to different conditions [27].

Q3: How can ML-driven optimization help balance model complexity and performance?

A: ML-driven optimization provides a systematic framework to compare models of different complexities. By using a surrogate-based approach, you can calibrate various model versions to a comparable level of performance against observational data. This allows you to identify the simplest model structure that adequately captures the system's behavior, adhering to the principle of parsimony [27]. This helps avoid unnecessary complexity that does not improve predictive power.

Troubleshooting Common Experimental Issues

Troubleshooting Guide

| Symptom | Potential Cause | Diagnostic Steps | Solution |

|---|---|---|---|

| Poor convergence during parameter estimation; loss function oscillates or fails to decrease. | Learning rate (η) is too high or too low [25] [26]. | 1. Plot the loss function over iterations. 2. A slowly decreasing line suggests a low η; wild oscillations suggest a high η. | Use adaptive learning rate methods like Adam or RMSprop [25] [26]. Start with a moderate rate (e.g., 0.01) and decay it over time [25]. |

| Model overfitting; excellent fit to training data but poor performance on validation/test data. | 1. Model is too complex for the available data [27]. 2. Insufficient observational constraints for the number of parameters being optimized [27]. | 1. Compare training vs. validation loss. 2. Perform a sensitivity analysis to identify influential parameters. | 1. Simplify the model structure [27]. 2. Reduce the number of parameters optimized, focusing on the most sensitive ones [27]. 3. Incorporate regularization techniques. |

| Optimization gets stuck in a local minimum, yielding suboptimal parameters. | The loss landscape is non-convex with multiple low points [25]. | Run the optimization from several different initial parameter sets. | Introduce randomness using algorithms like Stochastic Gradient Descent (SGD) [26] or use metaheuristic algorithms like Genetic Algorithms [25]. |

| Surrogate model predictions are inaccurate compared to the full, complex model. | The surrogate (e.g., 1D model) does not capture all physical dynamics of the target (e.g., 3D model) [27]. | Validate the surrogate's ability to replicate key results of the target model at selected locations/conditions [27]. | Refine the surrogate model construction. Use a statistical emulator or ensure the simplified mechanistic model shares the same core ecosystem components [27]. |

| High computational cost for each evaluation of the objective function. | The forward simulation (e.g., an Agent-Based Model or PDE solver) is inherently expensive [28] [25]. | Profile code to identify bottlenecks. | Replace the expensive simulation with a fast ML-based surrogate model for the optimization loop [25] [27]. |
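The learning-rate diagnosis in the first row of the table can be reproduced on a toy problem. The quadratic misfit and the three rates below are hypothetical, chosen only to show the three regimes (too low, reasonable, too high) that a loss plot would reveal.

```python
def loss(x):
    """Toy misfit with its minimum at x = 2."""
    return (x - 2.0) ** 2

def grad(x):
    """Derivative of the toy misfit."""
    return 2.0 * (x - 2.0)

def gradient_descent(x0, learning_rate, steps):
    """Plain gradient descent; returns the full trajectory of x."""
    xs = [x0]
    for _ in range(steps):
        xs.append(xs[-1] - learning_rate * grad(xs[-1]))
    return xs

final_losses = {}
for eta in (0.001, 0.1, 1.05):
    path = gradient_descent(x0=0.0, learning_rate=eta, steps=50)
    losses = [loss(x) for x in path]
    final_losses[eta] = losses[-1]
    trend = "diverging" if losses[-1] > losses[0] else "decreasing"
    print(f"eta={eta}: final loss {losses[-1]:.3g} ({trend})")
```

For this function each step multiplies the error by (1 - 2·eta), so eta = 0.001 barely moves, eta = 0.1 converges quickly, and eta = 1.05 overshoots by a growing amount on every step.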

Key Optimization Algorithms and Their Use Cases

The table below summarizes common optimization algorithms. For parameter estimation in complex models, Adam is often a good starting point for training surrogate models, while Bayesian Optimization is well suited to hyperparameter tuning when each evaluation is expensive.

| Algorithm | Typical Use Case | Key Characteristics | Relevance to Research |

|---|---|---|---|

| Gradient Descent [25] [26] | Optimizing model parameters (Optimization I). | First-order, iterative. Requires differentiable loss function. Can be slow for large datasets. | Foundational concept; often used in its advanced forms (e.g., SGD, Adam). |

| Stochastic GD (SGD) [26] | Optimizing model parameters with large datasets. | Uses single data points or mini-batches. Computationally efficient, introduces noise to escape local minima. | Useful for training surrogate models on large ecological datasets. |

| Adam [25] [26] | Optimizing model parameters, especially in deep learning. | Combines momentum and RMSprop. Adaptive learning rates for each parameter. Efficient and robust. | Recommended for training neural network-based surrogates for food web models. |

| Bayesian Optimization [25] | Hyperparameter tuning (Optimization I). | Optimizes expensive black-box functions. Builds a probabilistic surrogate to guide search. | Excellent for tuning the hyperparameters of your surrogate model when each training run is costly. |

| Genetic Algorithms [25] | Engineering design and parameter estimation (Optimization II). | Population-based, inspired by evolution. Good for non-convex, non-differentiable problems. | Suitable for direct parameter estimation in complex, non-differentiable ecosystem models. |
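
To make the "momentum plus RMSprop" description of Adam concrete, the following is a minimal sketch of the standard Adam update in plain Python. The function name `adam_minimize`, the toy quadratic loss, and the hyperparameter values are illustrative assumptions, not taken from the cited studies; a real surrogate would normally be trained with an established deep learning library.

```python
import math

def adam_minimize(grad, theta0, lr=0.05, beta1=0.9, beta2=0.999,
                  eps=1e-8, steps=2000):
    """Minimize a function from its gradient using the Adam update rule."""
    theta = list(theta0)
    m = [0.0] * len(theta)  # first-moment estimate (the momentum part)
    v = [0.0] * len(theta)  # second-moment estimate (the RMSprop part)
    for t in range(1, steps + 1):
        g = grad(theta)
        for i in range(len(theta)):
            m[i] = beta1 * m[i] + (1.0 - beta1) * g[i]
            v[i] = beta2 * v[i] + (1.0 - beta2) * g[i] ** 2
            m_hat = m[i] / (1.0 - beta1 ** t)  # bias-corrected moments
            v_hat = v[i] / (1.0 - beta2 ** t)
            # Per-parameter adaptive step: large past gradients shrink the step.
            theta[i] -= lr * m_hat / (math.sqrt(v_hat) + eps)
    return theta

# Hypothetical toy loss (a - 3)^2 + 10 * (b + 1)^2 with its minimum at (3, -1).
def toy_grad(theta):
    a, b = theta
    return [2.0 * (a - 3.0), 20.0 * (b + 1.0)]

fitted = adam_minimize(toy_grad, [0.0, 0.0])
```

The two parameters have very different curvatures, yet both take similarly sized early steps because each is scaled by its own gradient history. This is the property that makes Adam forgiving when surrogate parameters differ in scale.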

Experimental Protocols & Workflows

Detailed Methodology: Surrogate-Based Model Calibration

This protocol is adapted from studies that calibrate complex ecosystem models using surrogate-based optimization [27].

Objective: To efficiently calibrate the parameters of a computationally expensive 3D food web model by optimizing a faster, simplified surrogate model.

Materials/Input Data:

- Target Model: The high-fidelity, computationally expensive model (e.g., a 3D coupled physical-biological ocean model).

- Observational Data: Time-series data for key variables (e.g., chlorophyll-a, nutrient concentrations) for the study region.

- Computational Resources: High-performance computing (HPC) cluster for running ensembles of model simulations.

Procedure:

- 1. Surrogate Model Construction:

- Develop a simplified model that mimics the target model's core behavior. This could be a 1D version of the water column model at specific observational stations [27] or a statistical emulator trained on a limited set of 3D model runs.

- Validate that the surrogate can replicate key patterns and sensitivities of the target model.

- 2. Define the Cost Function:

- Formulate a function (e.g., a weighted sum of squared errors) that quantifies the misfit between surrogate model outputs and observational data [27].

- 3. Parameter Sensitivity Analysis (Optional but Recommended):

- Perform a global sensitivity analysis (e.g., using the Morris method or Sobol indices) on the surrogate model to identify which parameters have the greatest influence on the output. This allows you to focus the optimization on the most important parameters.

- 4. Execute the Optimization:

- Apply an optimization algorithm (e.g., an evolutionary algorithm [27]) to the surrogate model.

- The algorithm will propose different parameter sets. For each set, run the surrogate model, compute the cost function, and iteratively update the parameters to minimize the cost.

- 5. Validation with Target Model:

- Take the best parameter set(s) found by optimizing the surrogate and run them through the full, high-fidelity target model.

- Assess the performance of the calibrated target model against the same observational data. This step is critical to ensure the surrogate-based optimization is effective.
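
The procedure above can be sketched end to end on a toy problem. Everything in this example is an assumption made for illustration: the "target" is a logistic growth model on a fine time step standing in for an expensive 3D model, the surrogate is the same model on a coarse time step, the observations are synthetic, and `evolve` is a simple elitist evolutionary search rather than the specific algorithm used in [27].

```python
import random

def target_model(params, n_steps=40, dt=0.25):
    """Stand-in for the expensive target model: logistic growth, fine time step."""
    r, k = params
    x, series = 0.1, []
    for step in range(1, n_steps + 1):
        x += dt * r * x * (1.0 - x / k)
        if step % 4 == 0:  # record once per unit of time (4 steps of dt = 0.25)
            series.append(x)
    return series

def surrogate_model(params, n_steps=10):
    """Cheap surrogate: the same dynamics on a coarse time step (dt = 1)."""
    r, k = params
    x, series = 0.1, []
    for _ in range(n_steps):
        x += r * x * (1.0 - x / k)
        series.append(x)
    return series

def wsse(model_out, observations, weights):
    """Weighted sum of squared errors between model output and observations."""
    return sum(w * (m - o) ** 2
               for w, m, o in zip(weights, model_out, observations))

def evolve(cost_fn, bounds, start, pop_size=20, n_keep=5,
           generations=60, seed=1):
    """Elitist evolutionary search with Gaussian mutation clipped to bounds."""
    rng = random.Random(seed)
    pop = [list(start)] + [[rng.uniform(lo, hi) for lo, hi in bounds]
                           for _ in range(pop_size - 1)]
    for _ in range(generations):
        pop.sort(key=cost_fn)
        parents = pop[:n_keep]  # the best parameter sets survive unchanged
        children = []
        while len(parents) + len(children) < pop_size:
            base = rng.choice(parents)
            child = [min(max(x + rng.gauss(0.0, 0.05 * (hi - lo)), lo), hi)
                     for x, (lo, hi) in zip(base, bounds)]
            children.append(child)
        pop = parents + children
    return min(pop, key=cost_fn)

# Synthetic "observations" generated from the target with r = 0.8, K = 2.0.
observations = target_model((0.8, 2.0))
weights = [1.0] * len(observations)
bounds = [(0.1, 1.5), (0.5, 4.0)]  # hypothetical search ranges for r and K
start = [0.3, 1.0]                 # hypothetical initial guess

def surrogate_cost_fn(params):
    return wsse(surrogate_model(params), observations, weights)

best_params = evolve(surrogate_cost_fn, bounds, start)
start_cost = surrogate_cost_fn(start)
surrogate_cost = surrogate_cost_fn(best_params)

# Final step: re-run the best set through the target model and score it there.
validation_cost = wsse(target_model(best_params), observations, weights)
```

Because the surrogate uses a coarser time step, the parameters that fit it best are not expected to match the values used to generate the data exactly. Comparing `surrogate_cost` with `validation_cost` gives a quick indication of how much of the apparent fit survives the transfer to the target model.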

The Scientist's Toolkit: Research Reagent Solutions

This table lists essential computational "reagents" for implementing ML-driven optimization in ecological modeling.

| Item / Solution | Function in the Experiment | Example / Notes |

|---|---|---|

| Surrogate Model | A fast, approximate model that replaces a slow, high-fidelity simulation during the optimization process, drastically reducing computational cost [27]. | A 1D water column model [27], a Gaussian Process emulator, or a Neural Network trained on model output. |

| Optimization Algorithm | The core engine that searches the parameter space to find values that minimize the difference between model output and data (the cost function) [25]. | Evolutionary Algorithms [27], Adam [26], or Bayesian Optimization [25]. |

| Cost Function | A quantitative metric that defines the "goodness-of-fit" between the model's predictions and the observational data. The optimizer's goal is to minimize this function [27]. | Often a weighted sum of squared errors (WSSE) or a negative log-likelihood. |

| Sensitivity Analysis Tool | A method to identify which model parameters have the greatest influence on the model output. This helps prioritize parameters for optimization [27]. | Methods include the Morris Elementary Effects method or Variance-based methods (Sobol indices). |

| High-Performance Computing (HPC) | The computational infrastructure required to run large ensembles of model simulations for sensitivity analysis and optimization algorithms. | Cloud computing platforms or local computing clusters. |

Dimension Reduction Techniques for Simplifying High-Dimensional Food Webs

Troubleshooting Guides and FAQs

Frequently Asked Questions

Q1: Why is my dimension-reduced model failing to predict the recoverability of a collapsed food web? The accuracy of a reduced model in predicting recoverability depends heavily on the topological features of the original food web. Key structural factors like connectance (the proportion of possible links that are realized) and the number of predator links significantly influence recovery dynamics [29]. If your model fails, first verify that the web's connectance is within the typical empirical range of 0.02 to 0.4 [30]. Low connectance may hinder recovery. Furthermore, ensure your dimension reduction method accounts for the prevalence of negative interactions (predation, competition) in trophic networks, as these can impede the positive feedback loops necessary for successful recovery that are seen in other network types, like mutualistic networks [29].

Q2: What is the biological basis for connectance in food webs, and how should this inform my models? Connectance is not arbitrary; it is an emergent property of the optimal foraging behavior of individual consumers. The Diet Breadth Model (DBM), rooted in optimal foraging theory, predicts that connectance is effectively the mean proportional diet breadth of all species in the web [30]. When building your model, consider that a consumer's diet breadth is determined by the net energy gained from a prey item, the encounter rate with that prey, and the handling time. Realistic parameterization of these foraging constraints will lead to more accurate predictions of connectance and, consequently, more robust simplified models.

Q3: How does the dimensionality of the trophic niche space affect food web structure? The number of independent traits (dimensionality) that determine consumer-resource links is a central question. A key structural property, intervality, was historically thought to indicate a one-dimensional niche space (e.g., body size). However, evolutionary models show that high degrees of intervality can also emerge in higher-dimensional trophic niche spaces when processes of evolutionary diversification and adaptation are considered [31]. Therefore, when applying dimension reduction, do not assume a one-dimensional structure based on intervality alone. The observed topology is a product of both niche space dimensionality and evolutionary history.

Q4: What are the fundamental assembly rules for a stable model food web? For species in a generalized Lotka-Volterra model to coexist sustainably, the interaction matrix must have a nonzero determinant. This is mathematically equivalent to requiring that every species be part of a non-overlapping pairing [32]: each species belongs either to an exclusive consumer-resource pair or to a closed loop of such interactions. A model food web that lacks such a configuration cannot support stable coexistence of all its species. The food web assembly rules derived from this principle predict that species richness will be highest at intermediate trophic levels, which can help guide the construction of feasible model webs [32].
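
As a quick illustration of the determinant condition, the sketch below checks two small interaction matrices. The numerical entries are hypothetical, and the example assumes that only the basal resource is self-limited (a nonzero diagonal entry); `determinant` is a plain Gaussian elimination helper written for this example.

```python
def determinant(matrix, tol=1e-12):
    """Determinant via Gaussian elimination with partial pivoting."""
    a = [[float(x) for x in row] for row in matrix]
    n = len(a)
    det = 1.0
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(a[r][col]))
        if abs(a[pivot][col]) < tol:
            return 0.0  # no usable pivot: the matrix is singular
        if pivot != col:
            a[col], a[pivot] = a[pivot], a[col]
            det = -det  # a row swap flips the sign
        det *= a[col][col]
        for r in range(col + 1, n):
            factor = a[r][col] / a[col][col]
            for c in range(col, n):
                a[r][c] -= factor * a[col][c]
    return det

# Entry [i][j] is the per-capita effect of species j on species i.
# One resource (row 0) paired with one consumer (row 1).
paired = [[-1.0, -0.5],
          [0.25, 0.0]]

# One resource (row 0) shared by two consumers (rows 1 and 2).
shared = [[-1.0, -0.5, -0.4],
          [0.25, 0.0, 0.0],
          [0.2, 0.0, 0.0]]

det_paired = determinant(paired)
det_shared = determinant(shared)
```

In the second matrix the two consumer rows are proportional, so the determinant vanishes: the two consumers cannot both be matched to the single resource, which is the non-overlapping pairing condition failing in its simplest form.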

Experimental Protocols for Key Cited Studies

Protocol 1: Predicting Recoverability via Dimension Reduction and Perturbation [29]

- 1. Objective: To determine if a complex, collapsed tri-trophic food web can be recovered through species-specific interventions, using a dimension-reduced model.

- 2. Food Web Construction:

- Generate theoretical food webs with 12 to 24 species distributed across three trophic levels in a ratio of 5:3:2 (basal:primary consumers:top predators).

- Use a pyramidal method or a probabilistic niche-based model (PNM) for network generation.

- Ensure all consumer and top predator species have at least one feeding link.

- Set connectance values to vary between 0.08 and 0.4.

- Implement intra-specific competition, with the strongest competition among basal resources and the weakest among top predators.

- 3. Collapse and Recovery Simulation:

- Collapse the web by driving species populations to zero.

- Apply a positive perturbation (e.g., increased growth rate or population seeding) to a single node or a group of nodes.

- Use dynamical simulations to monitor the propagation of this perturbation.

- 4. Dimension Reduction and Validation:

- Develop a simplified, low-dimensional model that approximates the dynamics of the full, high-dimensional system.

- Compare the recovery trajectory (e.g., rate of recovery, final stable state) predicted by the reduced model against the output of the full dynamic simulation.

- Correlate the accuracy of the reduced model with topological features like connectance and the number of trophic links.
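
The food web construction step can be sketched with a simple random generator. This is not the pyramidal method or the probabilistic niche model used in [29]; it is a hypothetical stand-in that assumes feeding links occur only between adjacent trophic levels, each with probability `p_link`, and that connectance is measured as L / S². Under those assumptions it is not expected to reproduce the study's full connectance range.

```python
import random

def make_tritrophic_web(n_species, p_link, seed=0):
    """Return (levels, links); links are (prey, consumer) pairs."""
    rng = random.Random(seed)
    # Split species 5:3:2 into basal, primary consumer, and top predator levels.
    n_basal = n_species * 5 // 10
    n_mid = n_species * 3 // 10
    ids = list(range(n_species))
    levels = [ids[:n_basal],
              ids[n_basal:n_basal + n_mid],
              ids[n_basal + n_mid:]]
    links = set()
    for lower, upper in zip(levels, levels[1:]):
        for consumer in upper:
            diet = [prey for prey in lower if rng.random() < p_link]
            if not diet:  # guarantee at least one feeding link
                diet = [rng.choice(lower)]
            links.update((prey, consumer) for prey in diet)
    return levels, links

def connectance(n_species, links):
    """Directed connectance: realized links over S squared."""
    return len(links) / n_species ** 2

levels, links = make_tritrophic_web(20, p_link=0.4, seed=42)
web_connectance = connectance(20, links)
```

A web generated this way can then be passed to a dynamical simulation for the collapse and recovery steps, with `p_link` varied to explore how connectance affects the accuracy of the reduced model.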

Protocol 2: Parameterizing the Diet Breadth Model (DBM) to Predict Connectance [30]

- 1. Objective: To mechanistically derive food web connectance from the optimal foraging behavior of individual species.

- 2. Foraging Trait Parameterization: For each consumer species $j$ and potential prey species $i$, define:

- $E_i$: Net energy gained from consuming an individual of prey $i$.

- $\lambda_{ij}$: Encounter rate between consumer $j$ and prey $i$.

- $H_{ij}$: Handling time spent by consumer $j$ on prey $i$.

- 3. Diet Breadth Calculation:

- For each consumer, rank all potential prey by profitability ($P_{ij} = E_i / H_{ij}$), from highest to lowest.

- The consumer's diet breadth is the number of prey types, $k$, that maximizes its rate of energy intake, $R$, calculated as:

$$R = \frac{\sum_{i=1}^{k} \lambda_{ij} E_i}{1 + \sum_{i=1}^{k} \lambda_{ij} H_{ij}}$$

- The most profitable prey is always included.

- 4. Connectance Calculation:

- The total number of links $L$ in the web is the sum of the diet breadths $d_j$ of all $S$ species: $L = \sum_{j=1}^{S} d_j$.

- Connectance $C$ is then: $C = L / S^2$.
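
The diet breadth and connectance calculations above can be sketched for a single consumer as follows. The function names and the trait values are hypothetical and chosen so the arithmetic is easy to follow by hand; the lists hold the energy, encounter rate, and handling time for each potential prey of one consumer.

```python
def diet_breadth(energy, encounter, handling):
    """Return (k, R, diet): optimal breadth, intake rate, and chosen prey."""
    # Rank prey by profitability E_i / H_ij, most profitable first.
    ranked = sorted(range(len(energy)),
                    key=lambda i: energy[i] / handling[i], reverse=True)
    best_k, best_rate = 0, 0.0
    gain = time_cost = 0.0
    for k, i in enumerate(ranked, start=1):
        gain += encounter[i] * energy[i]         # running sum of lambda_ij * E_i
        time_cost += encounter[i] * handling[i]  # running sum of lambda_ij * H_ij
        rate = gain / (1.0 + time_cost)
        if rate > best_rate:
            best_k, best_rate = k, rate
    return best_k, best_rate, ranked[:best_k]

def connectance_from_diets(diet_breadths):
    """C = L / S^2, where L is the sum of diet breadths over all S species."""
    n_species = len(diet_breadths)
    return sum(diet_breadths) / n_species ** 2

# Hypothetical consumer with three potential prey types.
k, rate, diet = diet_breadth(energy=[10.0, 6.0, 1.0],
                             encounter=[0.5, 0.5, 2.0],
                             handling=[1.0, 1.0, 2.0])

# Hypothetical four-species web: two basal species and two consumers.
c = connectance_from_diets([0, 0, 2, 1])
```

Here the intake rate is 5 / 1.5 ≈ 3.33 with one prey type, 8 / 2 = 4 with two, and 10 / 6 ≈ 1.67 with three, so the consumer takes the two most profitable prey and ignores the third even though it is encountered most often.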

Table 1: Key Quantitative Ranges from Food Web Theory and Models

| Parameter | Typical Empirical Range | Basis / Model | Implication for Dimension Reduction |

|---|---|---|---|

| Connectance (C) | 0.02 - 0.4 [30] | Observation & Diet Breadth Model | A key constraint; low C can hinder recoverability and may require careful mapping in reduced models [29]. |

| Species Richness (S) | Variable (e.g., 12-24 in model webs) [29] | Theoretical studies | Determines the initial high dimensionality (n) that reduction techniques aim to simplify (to s << n) [29]. |

| Links per Species | ~10 (for model comparison) [31] | Trait-based evolutionary models | A target for ensuring generated model webs are realistic before applying reduction techniques. |

| Trophic Levels | 3 (in simplified studies) [29] | Theoretical tri-trophic food webs | Reduction techniques must capture the essential energy flow and negative interactions across these levels. |

Table 2: Food Web Assembly Rules for Stable Coexistence [32]

| Concept | Mathematical Principle | Ecological Interpretation |

|---|---|---|

| Non-Zero Determinant | det(R) ≠ 0 | The matrix of species interactions must be invertible for a feasible steady state to exist. |

| Non-Overlapping Pairing | Every species is part of a perfect matching or a closed loop of directed interactions. | Each species must have a unique role or a set of exclusive interactions that regulate its population. |

| Assembly Rules | Constraints on species richness at adjacent trophic levels. | The number of species at one level cannot exceed the sum of the numbers on adjacent levels, incorporating apparent competition. |

Workflow and Relationship Visualizations

[Figure: Model Reduction Workflow]

[Figure: Recoverability Prediction Logic]

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Reagents for Food Web Modeling and Analysis

| Research 'Reagent' | Function / Description | Application in Food Web Studies |

|---|---|---|

| Generalized Lotka-Volterra Equations | A system of differential equations modeling population dynamics of interacting species. | The foundational dynamic framework for simulating population changes and testing stability [32]. |

| Theoretical Food Web Generators | Algorithms (e.g., Pyramidal, Probabilistic Niche Model) that create food webs with specified properties. | Generating null models and test networks with controlled connectance and species richness [29]. |

| Optimal Foraging Parameters (E, λ, H) | Quantifiable traits for net energy (E), encounter rate (λ), and handling time (H). | Parameterizing the Diet Breadth Model to mechanistically predict diet breadth and connectance [30]. |

| Trophic Niche Space Vectors | Abstract multi-dimensional representations of species' resource (vulnerability) and foraging traits. | Modeling the emergence of food web structure from underlying traits and evolutionary processes [31]. |

| Interaction Matrix (R) | A matrix where elements represent the per-capita effect of one species on another's growth rate. | Formally assessing conditions for stable coexistence (e.g., det(R) ≠ 0) and applying assembly rules [32]. |

Integrating Socioeconomic Components into Ecological Network Models

Frequently Asked Questions (FAQs)

Conceptual Foundations