Assessing Accuracy in Machine Learning Behavior Classification: Methods, Challenges, and Applications in Biomedical Research

Abstract

This article provides a comprehensive framework for assessing the accuracy of machine learning (ML) models in behavioral classification, with a specific focus on applications in drug development and clinical research. It explores the foundational principles of ML classification, examines cutting-edge methodological approaches, and addresses critical challenges like data sparsity and model generalizability. Drawing on recent case studies and systematic reviews, it offers practical strategies for model optimization and rigorous validation. The content is tailored to help researchers, scientists, and drug development professionals critically evaluate and implement robust ML classification models to advance biomedical discovery and patient care.

Core Principles and the Critical Need for Accurate Behavioral Phenotyping

The Critical Role of Classification in Biomedical and Behavioral Research

Classification serves as a fundamental pillar in biomedical and behavioral research, enabling scientists to categorize complex phenomena into distinct, meaningful groups. In behavioral neuroscience, classification helps identify distinct behavioral phenotypes, such as sign-tracking versus goal-tracking rodents in Pavlovian conditioning studies [1]. In biomedical domains, machine learning classifiers analyze high-dimensional data from sources like microarrays and medical imaging to distinguish between pathological and healthy states [2] [3] [4]. The accuracy of these classification systems directly impacts diagnostic precision, treatment efficacy, and the validity of research conclusions.

The evolution from subjective categorical assignments to data-driven, machine learning-based classification represents a paradigm shift in research methodology. Traditional approaches often relied on predetermined or subjective cutoff values, which introduced inconsistencies and reduced objectivity [1]. Modern classification frameworks leverage sophisticated algorithms including Support Vector Machines (SVM), Random Forests (RF), Linear Discriminant Analysis (LDA), and neural networks to create more robust, reproducible categorization systems [5] [2]. These advanced methods are particularly crucial when dealing with the inherent variability present in biological and behavioral data, where subtle patterns may elude human observation but have significant implications for understanding disease mechanisms and treatment responses [6].

Comparative Performance of Classification Algorithms

Algorithm Performance Across Conditions

The selection of an appropriate classification algorithm depends heavily on specific data characteristics and research objectives. No single method universally outperforms others across all scenarios, as each possesses distinct strengths and limitations [2]. Experimental comparisons reveal that algorithm performance varies significantly with factors including feature set size, training sample size, biological variation, effect size, and correlation between features [2].

Table 1: Comparative Performance of Classification Algorithms Under Different Conditions

| Condition | Best Performing Algorithm | Key Performance Findings |
|---|---|---|
| Smaller number of correlated features (not exceeding ~½ sample size) | Linear Discriminant Analysis (LDA) | Superior generalization error and stability of error estimates [2] |
| Larger feature sets (sample size ≥20) | Support Vector Machine (SVM) with RBF kernel | Clear performance margin over LDA, RF, and k-Nearest Neighbour [2] |
| High-dimensional biomedical data | Random Forests (RF) | Outperforms k-Nearest Neighbour with highly variable data and small effect sizes [2] |
| Behavior-based student classification | Genetic Algorithm-optimized Neural Network | Superior classification accuracy with minimal processing time for large datasets [5] |
| Mouse phenotyping (female subjects) | Logistic Regression | Highest accuracy for classifying sustained and phasic freezing phenotypes [6] |
| Mouse phenotyping (male subjects) | Random Forest / Support Vector Machine | Best performance for MR1 and MR2 datasets, respectively [6] |

Domain-Specific Performance Considerations

Classification performance is highly context-dependent, with different algorithms excelling in specific research domains. In behavioral research, a hybrid approach combining singular value decomposition for dimensionality reduction with genetic algorithm-optimized neural networks demonstrated superior accuracy for classifying students into behavior-based categories [5]. For behavioral phenotyping in mice, logistic regression achieved the highest accuracy for female subjects, while random forests and SVMs performed best for male subjects across different memory retrieval sessions [6].

In high-dimensional biomedical data analysis, particularly with two-class datasets where features far exceed samples, univariate filter methods often demonstrate competitive performance compared to more complex wrapper and embedded methods [3]. These univariate techniques also tend to provide greater stability in feature selection, though multivariate methods specifically designed to minimize redundancy in selected feature subsets may offer superior performance in certain scenarios [3].
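As an illustration of how filter stability might be measured, the sketch below repeatedly subsamples a synthetic dataset in which features far exceed samples, applies a univariate ANOVA F-test filter (`SelectKBest` with `f_classif` from scikit-learn), and reports the mean pairwise Jaccard similarity of the selected feature subsets. The dataset, the subset size `k`, the 80% subsample fraction and the Jaccard-based stability measure are assumptions chosen for illustration, not the procedure used in [3].

```python
import itertools

import numpy as np
from sklearn.datasets import make_classification
from sklearn.feature_selection import SelectKBest, f_classif


def selection_stability(X, y, k=10, n_resamples=20, frac=0.8, seed=0):
    """Mean pairwise Jaccard similarity of the feature subsets chosen
    by a univariate F-test filter on random subsamples (no replacement)."""
    rng = np.random.default_rng(seed)
    n = X.shape[0]
    subsets = []
    for _ in range(n_resamples):
        idx = rng.choice(n, size=int(frac * n), replace=False)
        selector = SelectKBest(score_func=f_classif, k=k).fit(X[idx], y[idx])
        subsets.append(frozenset(np.flatnonzero(selector.get_support())))
    # Jaccard = |A intersect B| / |A union B| for every pair of selected subsets
    jaccards = [len(a & b) / len(a | b) for a, b in itertools.combinations(subsets, 2)]
    return float(np.mean(jaccards)), subsets


# Hypothetical two-class dataset: 60 samples, 500 features, 10 informative.
X, y = make_classification(n_samples=60, n_features=500, n_informative=10,
                           n_redundant=0, random_state=0)
stability, subsets = selection_stability(X, y, k=10)
print(f"mean pairwise Jaccard = {stability:.2f}")
```

The same harness could wrap a multivariate selector in place of `SelectKBest` to compare stability across method families.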

Experimental Protocols for Classification Assessment

Protocol for Comparative Algorithm Evaluation

Robust evaluation of classification algorithms requires standardized experimental protocols to ensure meaningful comparisons. A comprehensive simulation-based approach should incorporate multiple factors simultaneously to improve external validity, including: number of variables (p), training sample size (n), biological variation (σb), within-subject variation (σe), effect size (fold-change, θ), replication (r), and correlation (ρ) between variables [2].

The protocol should implement Monte Carlo cross-validation with numerous iterations (e.g., 1000) of randomly partitioning datasets into training and test splits to obtain robust performance estimates, particularly when dealing with limited sample sizes [6]. This approach helps account for variance between different training iterations. For tuning parameter optimization, employ grid searches over supplied parameter spaces that include software default values to ensure performance estimates at optimized parameters are at least as good as default choices [2].
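The protocol can be sketched in Python with scikit-learn under stated assumptions: the hypothetical `simulate` function generates two classes from a subset of the design factors above (p, n, σb, σe, θ, ρ; replication r is omitted), and `monte_carlo_cv` repeats stratified random 75:25 splits, tuning an RBF-kernel SVM with an inner grid search whose grid deliberately includes scikit-learn's defaults. The generator, grid values and split ratio are illustrative choices, not those of [2].

```python
import numpy as np
from sklearn.model_selection import GridSearchCV, StratifiedShuffleSplit
from sklearn.svm import SVC


def simulate(n=40, p=20, theta=1.0, rho=0.3, sigma_b=1.0, sigma_e=0.5, seed=0):
    """Hypothetical generator: between-subject variation sigma_b with
    equicorrelation rho, additive within-subject noise sigma_e, and a
    mean shift theta on the first p // 4 features of class 1."""
    rng = np.random.default_rng(seed)
    cov = sigma_b**2 * (rho * np.ones((p, p)) + (1.0 - rho) * np.eye(p))
    y = (np.arange(n) >= n // 2).astype(int)           # balanced labels
    X = rng.multivariate_normal(np.zeros(p), cov, size=n)
    X += rng.normal(0.0, sigma_e, size=(n, p))         # within-subject noise
    X[y == 1, : p // 4] += theta                        # effect on informative features
    return X, y


def monte_carlo_cv(X, y, n_iter=100, test_size=0.25, seed=0):
    """Repeated random train/test splits with an inner grid search."""
    splitter = StratifiedShuffleSplit(n_splits=n_iter, test_size=test_size,
                                      random_state=seed)
    # The grid contains SVC's defaults (C=1.0, gamma="scale"), so the tuned
    # setting scores at least as well as the defaults on the inner CV.
    grid = {"C": [0.1, 1.0, 10.0], "gamma": ["scale", 0.01, 0.1]}
    errors = []
    for train, test in splitter.split(X, y):
        search = GridSearchCV(SVC(kernel="rbf"), grid, cv=3).fit(X[train], y[train])
        errors.append(1.0 - search.score(X[test], y[test]))  # test misclassification rate
    return float(np.mean(errors)), float(np.std(errors))


X, y = simulate(n=40, p=20, theta=1.0, rho=0.3)
mean_err, sd_err = monte_carlo_cv(X, y, n_iter=50)   # e.g. 1000 in a full study
print(f"generalization error ~ {mean_err:.3f} (SD {sd_err:.3f})")
```

Looping `simulate` over a grid of (p, n, θ, ρ) values and repeating the call for several classifiers would give the factorial comparison the paragraph describes.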

Table 2: Key Research Reagents and Computational Tools

| Research Reagent/Tool | Function in Classification Research |
|---|---|
| PubMed Medical Images Dataset (PMCMID) | Large-scale annotated medical image dataset for training diagnostic foundation models [4] |
| GoldHamster Corpus | Manually annotated PubMed article corpus for training classifiers to identify experimental models [7] |
| Pavlovian Conditioning Approach (PavCA) Index | Quantitative scoring system for classifying sign-tracking, goal-tracking, and intermediate behavioral phenotypes [1] |
| Gaussian Mixture Models (GMM) | Unsupervised clustering method for identifying subpopulations without predefined labels [6] |
| k-Means Clustering | Partitioning method for grouping similar observations into predefined clusters [1] |
| PubMedBERT | Pre-trained natural language processing model fine-tuned for biomedical text classification [7] |
| Singular Value Decomposition (SVD) | Dimensionality reduction technique for handling high-dimensional data [5] |
| Genetic Algorithms (GA) | Optimization method for feature selection and avoiding overfitting in neural network training [5] |

Protocol for Behavioral Phenotyping Classification

For behavioral classification tasks such as identifying sign-tracking (ST) and goal-tracking (GT) phenotypes in rodents:

- Data Collection: Calculate Pavlovian Conditioning Approach (PavCA) Index scores based on response bias, probability difference, and latency score during conditioning sessions [1].

- Distribution Analysis: Analyze the skewness and kurtosis of score distributions, as these vary across laboratories due to biological and environmental factors [1].

- Classification Implementation: Apply data-driven methods such as k-Means classification or the derivative method rather than arbitrary cutoff values [1].

- Validation: For k-Means classification, specify the number of clusters (k) beforehand; for the derivative method, use mean scores from final conditioning days and identify local minima in the density distribution function to determine optimal cutoff values [1].
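A minimal sketch of these steps follows, assuming the commonly used PavCA formulation (the mean of response bias, probability difference and a latency score scaled by a 10-s CS). `kmeans_phenotypes` applies k-Means with k = 3 to the one-dimensional scores and names clusters by the order of their centers; `derivative_cutoffs` estimates the score density with a Gaussian kernel density estimate and returns the points where its first derivative changes from negative to positive, i.e. local minima of the density. The function names, the KDE bandwidth (SciPy's default) and the synthetic scores are assumptions, not the implementation from [1].

```python
import numpy as np
from scipy.stats import gaussian_kde
from sklearn.cluster import KMeans


def pavca_index(lever, cup, p_lever, p_cup, lat_lever, lat_cup, cs_s=10.0):
    """PavCA Index as the mean of three scores (assumed standard formulation).

    lever/cup: contact counts; p_*: proportion of trials with a contact;
    lat_*: mean latency (s) to first contact; cs_s: CS duration in seconds.
    """
    response_bias = (lever - cup) / (lever + cup)
    prob_diff = p_lever - p_cup
    latency_score = (lat_cup - lat_lever) / cs_s
    return (response_bias + prob_diff + latency_score) / 3.0


def kmeans_phenotypes(scores, seed=0):
    """k = 3 clusters on 1-D scores; ordered centers map to GT < IN < ST."""
    x = np.asarray(scores, float).reshape(-1, 1)
    km = KMeans(n_clusters=3, n_init=10, random_state=seed).fit(x)
    order = np.argsort(km.cluster_centers_.ravel())     # lowest -> highest center
    names = np.empty(3, dtype=object)
    names[order] = ["GT", "IN", "ST"]
    return names[km.labels_]


def derivative_cutoffs(scores, grid_size=401):
    """Cutoffs at local minima of a Gaussian KDE of the scores on [-1, 1]."""
    grid = np.linspace(-1.0, 1.0, grid_size)
    density = gaussian_kde(scores)(grid)
    slope = np.gradient(density, grid)                  # first derivative
    # a local minimum is where the slope turns from negative to non-negative
    idx = np.flatnonzero((slope[:-1] < 0) & (slope[1:] >= 0)) + 1
    return grid[idx]


# Synthetic trimodal scores standing in for final-day PavCA means.
rng = np.random.default_rng(1)
scores = np.clip(np.concatenate([rng.normal(m, 0.1, 50) for m in (-0.7, 0.0, 0.7)]), -1, 1)
labels = kmeans_phenotypes(scores)
cutoffs = derivative_cutoffs(scores)
print(pavca_index(20, 0, 1.0, 0.0, 2.0, 10.0))   # hypothetical pure sign-tracker
print(cutoffs)
```

Because both methods take the sample's own distribution as input, the resulting cutoffs shift with skewness or kurtosis rather than staying at a fixed ±0.5.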

For anxiety trait classification in mice using freezing behavior:

- Behavioral Testing: Conduct auditory aversive conditioning with prolonged memory retrieval sessions (e.g., 6-minute conditioned stimulus presentation instead of typical 30-second exposures) [6].

- Feature Extraction: Analyze freezing responses in time bins and model freezing curves using log-linear regression to extract intercept, slope (decay rate), and sustained freezing measures [6].

- Cluster Validation: Employ bootstrap sampling procedures (e.g., 200 random samples with replacement) to calculate Jaccard index values and assess clustering stability [6].

- Model Training: Train supervised machine learning models (e.g., SVM, logistic regression, LDA, random forests) using labeled data from pooled experimental cohorts for sex-specific classification [6].
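The feature-extraction and cluster-validation steps could be sketched as below. `freezing_features` fits a straight line to log-transformed freezing across time bins (30-s bins over a 6-min CS are assumed) and returns the intercept, the slope as a decay rate, and a hypothetical "sustained" measure (mean freezing in the last bins). `bootstrap_jaccard` re-clusters 200 bootstrap samples with k-Means and, for each original cluster, averages its best Jaccard match among the bootstrap clusters. The offset `eps`, the use of k-Means and the synthetic curves are illustrative assumptions rather than the method of [6].

```python
import numpy as np
from sklearn.cluster import KMeans
from sklearn.preprocessing import StandardScaler


def freezing_features(freezing_pct, bin_s=30.0, eps=1.0, n_tail=2):
    """Intercept and slope of log(freezing + eps) vs time, plus a sustained measure.

    eps avoids log(0); 'sustained' = mean freezing in the last n_tail bins
    (a hypothetical definition chosen for illustration).
    """
    f = np.asarray(freezing_pct, float)
    t = np.arange(len(f)) * bin_s
    slope, intercept = np.polyfit(t, np.log(f + eps), 1)
    return intercept, slope, float(f[-n_tail:].mean())


def bootstrap_jaccard(X, k=2, n_boot=200, seed=0):
    """Mean best-match Jaccard of each original cluster over bootstrap re-clusterings."""
    rng = np.random.default_rng(seed)
    base = KMeans(n_clusters=k, n_init=10, random_state=seed).fit_predict(X)
    jac = np.zeros((n_boot, k))
    for b in range(n_boot):
        idx = rng.integers(0, len(X), size=len(X))        # sample with replacement
        boot = KMeans(n_clusters=k, n_init=10, random_state=b).fit_predict(X[idx])
        drawn = set(idx.tolist())
        for c in range(k):
            # original cluster restricted to the points present in this bootstrap
            orig = set(np.flatnonzero(base == c).tolist()) & drawn
            for c2 in range(k):
                other = set(idx[boot == c2].tolist())
                union = orig | other
                if union:
                    jac[b, c] = max(jac[b, c], len(orig & other) / len(union))
    return jac.mean(axis=0)


rng = np.random.default_rng(0)
t = np.arange(12) * 30.0                                  # 12 x 30-s bins = 6-min CS
decay = np.r_[rng.uniform(0.0005, 0.002, 20), rng.uniform(0.008, 0.012, 20)]
curves = [np.clip(70 * np.exp(-r * t) + rng.normal(0, 2, t.size), 0, 100) for r in decay]
X = StandardScaler().fit_transform(np.array([freezing_features(c) for c in curves]))
jaccard = bootstrap_jaccard(X, k=2, n_boot=200)
print(jaccard)
```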

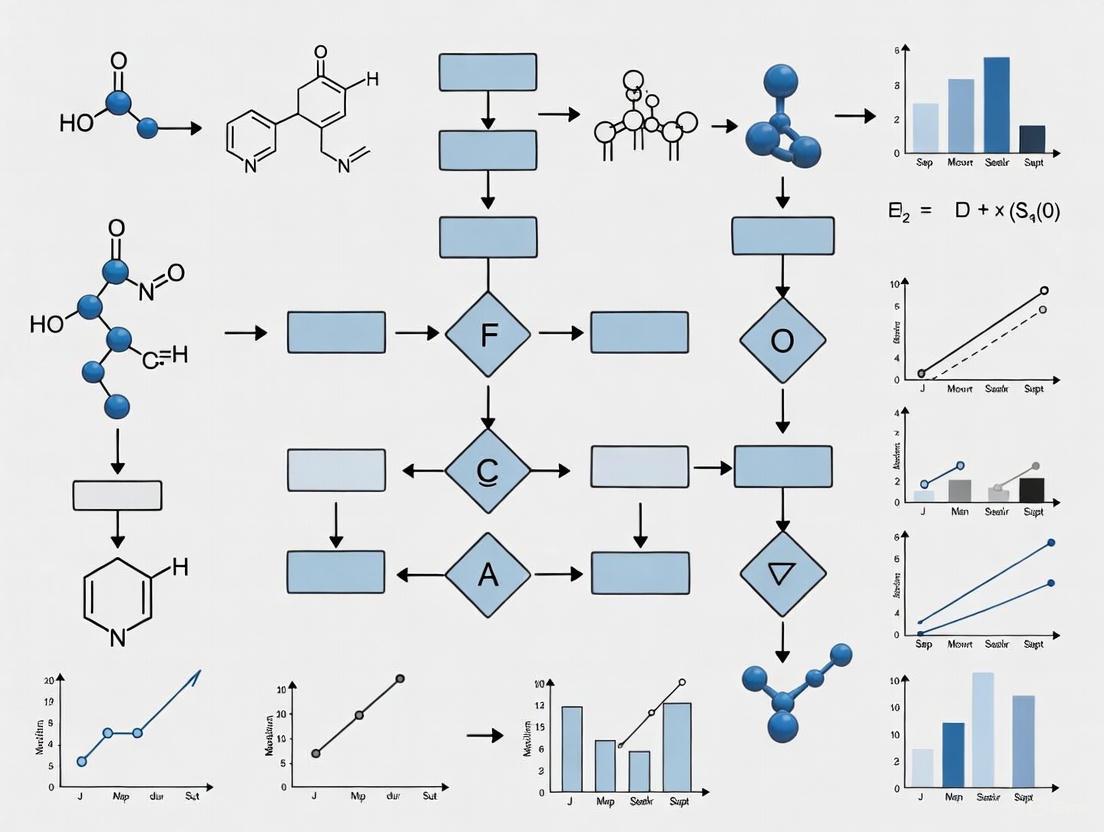

Diagram Title: Classification Research Workflow

Methodological Considerations for Robust Classification

Addressing Data Challenges

Biomedical and behavioral data present unique challenges for classification, including high dimensionality, sample size limitations, and significant heterogeneity. Effective classification requires specialized approaches to address these challenges. Dimensionality reduction techniques like singular value decomposition (SVD) help manage high-dimensional data by performing outlier detection and dimensionality reduction [5]. Feature selection methods are crucial for identifying the most informative variables, with univariate methods generally providing greater stability than multivariate approaches, though the latter may better minimize redundancy in selected feature subsets [3].
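To make the SVD step concrete, the sketch below centers a data matrix, keeps the top singular vectors as a reduced representation, and treats each sample's reconstruction error as an outlier score. The median-plus-scaled-MAD flagging rule, the number of components and the synthetic low-rank data are assumptions for illustration; [5] is not claimed to use this exact rule.

```python
import numpy as np


def svd_reduce(X, n_components=3):
    """Project centered data onto the top singular vectors; return scores and residuals."""
    Xc = X - X.mean(axis=0)
    U, s, Vt = np.linalg.svd(Xc, full_matrices=False)
    scores = U[:, :n_components] * s[:n_components]     # reduced coordinates
    recon = scores @ Vt[:n_components]                   # rank-k reconstruction
    residual = np.linalg.norm(Xc - recon, axis=1)        # per-sample reconstruction error
    return scores, residual


def flag_outliers(residual, z=3.0):
    """Robust rule (an assumption): flag residuals above median + z * scaled MAD."""
    med = np.median(residual)
    mad = 1.4826 * np.median(np.abs(residual - med))
    return np.flatnonzero(residual > med + z * mad)


rng = np.random.default_rng(0)
latent = rng.normal(size=(100, 3)) @ rng.normal(size=(3, 20))   # rank-3 structure
X = latent + 0.05 * rng.normal(size=(100, 20))
X = np.vstack([X, rng.normal(0.0, 2.0, size=(1, 20))])         # row 100: injected outlier
scores, residual = svd_reduce(X, n_components=3)
outliers = flag_outliers(residual)
print(outliers)
```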

Sample size considerations are particularly important in behavioral research, where unsupervised clustering often requires larger sample sizes (e.g., n=30-40) for robust results, creating ethical dilemmas in animal research [6]. Supervised machine learning approaches trained on pooled datasets can subsequently classify individual animals effectively, aligning with the Reduction principle of the 3Rs (Replacement, Reduction, Refinement) in animal research [6]. For text classification of biomedical literature, multi-label document classification approaches that can assign multiple experimental model labels to a single publication enhance the utility of literature mining tools for identifying alternative methods to animal experiments [7].
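Multi-label classification can be sketched with scikit-learn by binarizing each document's label set and training one binary classifier per label. The example swaps in a lightweight TF-IDF plus logistic-regression baseline in place of the fine-tuned PubMedBERT model cited in [7]; the toy corpus and the label names `in_vivo`, `in_vitro` and `in_silico` are hypothetical.

```python
from sklearn.feature_extraction.text import TfidfVectorizer
from sklearn.linear_model import LogisticRegression
from sklearn.multiclass import OneVsRestClassifier
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import MultiLabelBinarizer

# Toy corpus and hypothetical label names, for illustration only.
docs = [
    "mice were treated in vivo and behavior was recorded",
    "primary neurons were cultured in vitro",
    "in vivo rat study combined with computational modeling in silico",
    "organoid cultures in vitro exposed to the compound",
    "docking simulations and in silico screening of ligands",
    "in vitro assay followed by in vivo validation in mice",
]
labels = [{"in_vivo"}, {"in_vitro"}, {"in_vivo", "in_silico"},
          {"in_vitro"}, {"in_silico"}, {"in_vitro", "in_vivo"}]

mlb = MultiLabelBinarizer()
Y = mlb.fit_transform(labels)                      # one binary column per label
model = make_pipeline(
    TfidfVectorizer(),
    OneVsRestClassifier(LogisticRegression(max_iter=1000)),  # one classifier per label
)
model.fit(docs, Y)
pred = model.predict(["behavioral testing of mice in vivo"])
print(mlb.inverse_transform(pred))                 # tuple of predicted labels per document
```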

Experimental Design Implications

Classification accuracy can be significantly influenced by experimental design choices, even when based on identical theoretical models. In search experiments examining sequential information search behavior, designs categorized as passive, quasi-active, or active yielded significantly different participant behaviors at both aggregate and individual levels, despite being derived from the same theoretical framework [8]. These design differences affected average search duration, alignment with theoretical predictions, and the relationship between risk preferences and search outcomes [8].

Similarly, in behavioral phenotyping, methodological variations such as the duration of training days and specific days selected for analysis impact classification outcomes [1]. The lack of standardization in these procedural elements contributes to variability in score distributions across laboratories, necessitating data-driven classification approaches that adapt to specific sample characteristics rather than relying on fixed cutoff values [1].

Diagram Title: Data Challenges and Solutions

Classification methodologies in biomedical and behavioral research continue to evolve, with machine learning approaches increasingly offering superior alternatives to traditional categorical assignments. The optimal selection of classification algorithms depends on specific data characteristics, with no single method universally outperforming others across all scenarios. As research in this field advances, several promising directions emerge, including the development of diagnostic medical foundation models capable of physician-level performance across multiple imaging domains [4], enhanced feature selection methods that balance stability with predictive performance [3], and standardized classification frameworks that can adapt to distributional variations across laboratories and experimental conditions [1].

The integration of supervised machine learning with large, pooled datasets addresses critical ethical considerations in research, particularly the Reduction principle in animal studies: once trained on pooled cohorts, a model can classify individual animals in new, smaller experiments [6]. Furthermore, automated classification of biomedical literature facilitates the identification of alternative methods to animal experiments, supporting researchers in complying with animal welfare regulations [7]. As these methodologies mature, they promise to enhance not only classification accuracy but also the reproducibility, efficiency, and ethical foundation of biomedical and behavioral research.

The accurate assessment of machine learning (ML) classification models is paramount in research, particularly in high-stakes fields like drug development and biomedical sciences. Model evaluation transcends the simple question of "is the model correct?" to address more nuanced questions: "when is it correct, on which classes, and at what cost?" [9] [10]. A comprehensive understanding of model performance requires a multi-faceted approach, as no single metric can provide a complete picture [11] [12]. This guide provides a structured comparison of five fundamental metrics—Sensitivity, Specificity, Positive Predictive Value (PPV), Negative Predictive Value (NPV), and the Area Under the Receiver Operating Characteristic Curve (AUC-ROC)—framed within the context of accuracy assessment for behavior classification models in scientific research.

The core of these metrics lies in the confusion matrix, a tabular representation that breaks down predictions into four fundamental categories [13] [10] [12]:

- True Positive (TP): The model predicts the positive class, and the instance is actually positive.

- False Positive (FP): The model predicts the positive class, but the instance is actually negative (Type I error).

- True Negative (TN): The model predicts the negative class, and the instance is actually negative.

- False Negative (FN): The model predicts the negative class, but the instance is actually positive (Type II error).

These building blocks form the basis for calculating all the metrics discussed in this guide, enabling researchers to move beyond simplistic accuracy measures and conduct a thorough diagnostic evaluation of their classifiers [9].

Confusion Matrix Decision Path: This diagram illustrates the logical flow that categorizes a single prediction into one of the four outcomes of a confusion matrix, which is the foundation for calculating all other classification metrics.
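Counting the four outcomes takes only a few lines. The sketch below compares a hand-written counter with scikit-learn's `confusion_matrix`, which, for labels 0 and 1, returns the counts in the order TN, FP, FN, TP once flattened. The labels are made-up examples.

```python
import numpy as np
from sklearn.metrics import confusion_matrix


def confusion_counts(y_true, y_pred):
    """Return (TP, FP, TN, FN) for binary labels where 1 = positive class."""
    y_true = np.asarray(y_true, dtype=bool)
    y_pred = np.asarray(y_pred, dtype=bool)
    tp = int(np.sum(y_true & y_pred))     # predicted positive, actually positive
    fp = int(np.sum(~y_true & y_pred))    # predicted positive, actually negative
    tn = int(np.sum(~y_true & ~y_pred))   # predicted negative, actually negative
    fn = int(np.sum(y_true & ~y_pred))    # predicted negative, actually positive
    return tp, fp, tn, fn


y_true = [1, 1, 1, 0, 0, 0, 0, 1]
y_pred = [1, 0, 1, 0, 1, 0, 0, 1]
tp, fp, tn, fn = confusion_counts(y_true, y_pred)
sk_tn, sk_fp, sk_fn, sk_tp = confusion_matrix(y_true, y_pred).ravel()  # sklearn order
print(tp, fp, tn, fn)   # → 3 1 3 1
```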

Comparative Analysis of Core Diagnostic Metrics

The following table summarizes the formulas, interpretations, and optimal use cases for each of the five core diagnostic metrics, enabling researchers to compare them quickly and select the most appropriate measures for their specific evaluation needs.

| Metric | Formula | Clinical / Research Interpretation | Optimal Use Case Scenario |
|---|---|---|---|
| Sensitivity (Recall/TPR) | $\frac{TP}{TP + FN}$ [14] [9] [10] | Probability that a test result will be positive when the disease/behavior is present [15]. | When the cost of missing a positive case (False Negative) is high, e.g., initial disease screening, security threat detection [9] [16]. |
| Specificity (TNR) | $\frac{TN}{TN + FP}$ [14] [15] | Probability that a test result will be negative when the disease/behavior is not present [15]. | When the cost of a false alarm (False Positive) is high, e.g., confirming a diagnosis before initiating a costly or invasive treatment [9]. |
| Positive Predictive Value (PPV/Precision) | $\frac{TP}{TP + FP}$ [14] [10] [17] | Probability that the disease/behavior is present when the test is positive [15]. | When the confidence in a positive prediction is critical, e.g., spam filtering, recommender systems, or confirming a research finding [10] [16]. |
| Negative Predictive Value (NPV) | $\frac{TN}{TN + FN}$ [14] [15] | Probability that the disease/behavior is not present when the test is negative [15]. | When the confidence in ruling out a condition is paramount, e.g., quickly eliminating negative candidates in high-throughput screening [15]. |
| AUC-ROC | Area under the ROC curve [16] | Measure of the model's ability to separate positive and negative cases across all possible thresholds [15] [16]. | For overall model discrimination ability, especially with balanced classes or when the operational threshold is not yet fixed [15] [16]. |
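The four threshold-dependent formulas in the table translate directly into code. The helper below is a sketch that returns NaN when a denominator is zero (an assumption about how to handle empty cells); the counts are hypothetical. AUC-ROC is omitted because it needs scores across all thresholds rather than a single confusion matrix.

```python
def diagnostic_metrics(tp, fp, tn, fn):
    """Sensitivity, specificity, PPV and NPV from confusion-matrix counts."""
    def ratio(num, den):
        return num / den if den else float("nan")

    return {
        "sensitivity": ratio(tp, tp + fn),   # TPR / recall
        "specificity": ratio(tn, tn + fp),   # TNR
        "ppv": ratio(tp, tp + fp),           # precision
        "npv": ratio(tn, tn + fn),
    }


m = diagnostic_metrics(tp=90, fp=30, tn=870, fn=10)   # hypothetical counts
print({k: round(v, 3) for k, v in m.items()})
# → {'sensitivity': 0.9, 'specificity': 0.967, 'ppv': 0.75, 'npv': 0.989}
```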

The Interplay and Trade-offs Between Metrics

A critical concept in classification model evaluation is the trade-off between metrics, driven primarily by the classification threshold [9] [17]. This threshold is the probability value above which an instance is classified as positive. As the threshold increases, the model requires more evidence to make a positive prediction, which typically raises precision (positive predictions become more reliable) but lowers recall (the model misses more actual positives) [9]. Conversely, lowering the threshold makes the model more willing to predict positively, increasing recall but typically decreasing precision. Because of this inverse relationship, sensitivity and PPV generally cannot both be maximized at once [9] [17]. Choosing a threshold is therefore not purely a technical optimization problem but a domain-specific decision based on the relative costs of false positives and false negatives [9] [15].
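A small numerical example with made-up scores and labels shows the trade-off. The sketch assumes that a score at or above the threshold is labelled positive.

```python
import numpy as np


def precision_recall_at(scores, y_true, threshold):
    """Precision and recall when scores >= threshold are labelled positive."""
    s = np.asarray(scores, float)
    y = np.asarray(y_true, bool)
    pred = s >= threshold
    tp = int(np.sum(pred & y))
    fp = int(np.sum(pred & ~y))
    fn = int(np.sum(~pred & y))
    precision = tp / (tp + fp) if tp + fp else float("nan")
    recall = tp / (tp + fn) if tp + fn else float("nan")
    return precision, recall


scores = [0.1, 0.3, 0.35, 0.4, 0.6, 0.65, 0.8, 0.9]
y_true = [0, 0, 1, 0, 1, 0, 1, 1]
for t in (0.3, 0.5, 0.7):
    p, r = precision_recall_at(scores, y_true, t)
    print(f"threshold={t:.1f}  precision={p:.2f}  recall={r:.2f}")
# → threshold=0.3  precision=0.57  recall=1.00
# → threshold=0.5  precision=0.75  recall=0.75
# → threshold=0.7  precision=1.00  recall=0.50
```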

Furthermore, PPV and NPV are highly dependent on prevalence [15]. Even with high sensitivity and specificity, if a condition is very rare (low prevalence), the number of false positives can drastically reduce the PPV. This makes it essential for researchers to consider the expected prevalence in the target population when interpreting these predictive values [15].
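The dependence on prevalence follows from Bayes' theorem: PPV = Se·p / (Se·p + (1 - Sp)(1 - p)) and NPV = Sp(1 - p) / (Sp(1 - p) + (1 - Se)·p), where Se, Sp and p denote sensitivity, specificity and prevalence. The sketch below evaluates these expressions for a hypothetical test with 95% sensitivity and 95% specificity.

```python
def predictive_values(sensitivity, specificity, prevalence):
    """PPV and NPV from Bayes' theorem for a given prevalence."""
    tp = sensitivity * prevalence                 # expected fraction of true positives
    fp = (1 - specificity) * (1 - prevalence)     # expected fraction of false positives
    tn = specificity * (1 - prevalence)
    fn = (1 - sensitivity) * prevalence
    return tp / (tp + fp), tn / (tn + fn)


for prev in (0.5, 0.1, 0.01):
    ppv, npv = predictive_values(0.95, 0.95, prev)
    print(f"prevalence={prev:<5} PPV={ppv:.3f} NPV={npv:.4f}")
```

With these inputs the PPV falls from 0.95 at 50% prevalence to about 0.16 at 1% prevalence, while the NPV rises toward 1.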

Experimental Protocols for Metric Validation

To ensure the rigorous evaluation of ML classification models, a standardized experimental protocol is essential. The following workflow outlines a robust methodology for calculating and validating the discussed accuracy metrics, suitable for benchmarking models in behavioral classification research.

Standardized Workflow for Metric Calculation

Experimental Workflow for Metric Validation: This diagram outlines a standardized protocol for evaluating classification models, from data preparation through metric calculation and statistical comparison.

- Dataset Splitting and Cross-Validation: The dataset must be split into training and testing sets, typically using an 80:20 ratio [18]. To ensure robustness and reduce the variance of the estimates, employ K-Fold Cross-Validation (e.g., with k=5 or k=10) [18]. This involves dividing the dataset into k folds, using k-1 folds for training, and the remaining fold for testing, repeating this process k times. The final metrics are averaged across all folds [18].

- Model Training and Prediction: Train the classification model (e.g., Logistic Regression, Decision Tree, Random Forest) on the training set [18]. For each instance in the test set, obtain the predicted probability of belonging to the positive class, not just the final class label [13].

- Calculation of Point Metrics (Sensitivity, Specificity, PPV, NPV):

  - Apply a standard default threshold of 0.5 to the predicted probabilities to convert them into binary class labels (0 or 1) [13].

  - Construct the confusion matrix by comparing these predicted labels to the ground truth labels [18] [12].

  - Calculate Sensitivity, Specificity, PPV, and NPV directly from the counts in the confusion matrix using the formulas in the metric comparison table above [14] [10].

- Construction of the ROC Curve and AUC Calculation:

  - Vary the classification threshold systematically from 0 to 1 [15] [16].

  - For each candidate threshold, calculate the corresponding True Positive Rate (Sensitivity) and False Positive Rate (1 - Specificity) [15] [16].

  - Plot the ROC curve with FPR on the x-axis and TPR on the y-axis [15] [10].

  - Calculate the Area Under the ROC Curve (AUC-ROC). An AUC of 0.5 indicates discrimination no better than random guessing, while 1.0 indicates perfect separation [16]. The AUC provides a single scalar value summarizing the model's performance across all thresholds [16].
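The workflow above might be sketched as follows, assuming scikit-learn, a logistic regression model and a synthetic imbalanced dataset. `StratifiedKFold` is used instead of plain `KFold` so that each fold keeps the class ratio (a design choice, not part of the cited protocol); with k = 5, each fold corresponds to an 80:20 split. Per-fold metrics at the 0.5 threshold and the fold AUC (trapezoidal area under the `roc_curve` points) are averaged across folds.

```python
import numpy as np
from sklearn.datasets import make_classification
from sklearn.linear_model import LogisticRegression
from sklearn.metrics import auc, confusion_matrix, roc_curve
from sklearn.model_selection import StratifiedKFold


def cv_metrics(X, y, k=5, threshold=0.5, seed=0):
    """Average sensitivity, specificity, PPV, NPV and AUC over k stratified folds."""
    skf = StratifiedKFold(n_splits=k, shuffle=True, random_state=seed)
    rows = []
    for train, test in skf.split(X, y):
        model = LogisticRegression(max_iter=1000).fit(X[train], y[train])
        proba = model.predict_proba(X[test])[:, 1]          # P(positive class)
        pred = (proba >= threshold).astype(int)             # point-metric labels
        tn, fp, fn, tp = confusion_matrix(y[test], pred, labels=[0, 1]).ravel()
        fpr, tpr, _ = roc_curve(y[test], proba)             # sweep over all thresholds
        rows.append({
            "sensitivity": tp / (tp + fn),
            "specificity": tn / (tn + fp),
            "ppv": tp / (tp + fp) if tp + fp else np.nan,
            "npv": tn / (tn + fn) if tn + fn else np.nan,
            "auc": auc(fpr, tpr),
        })
    return {key: float(np.nanmean([r[key] for r in rows])) for key in rows[0]}


# Hypothetical imbalanced dataset (about 30% positives).
X, y = make_classification(n_samples=300, n_features=10, weights=[0.7, 0.3],
                           random_state=0)
summary = cv_metrics(X, y, k=5)
print({key: round(v, 3) for key, v in summary.items()})
```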

Protocol for Comparative Model Evaluation

When comparing the diagnostic performance of two or more laboratory tests or classification algorithms, ROC analysis is the preferred method [15]. The protocol involves:

- Plotting ROC curves for each model on the same graph for visual comparison. The curve that is more bowed towards the top-left corner represents a better-performing model [15].

- Statistically comparing the AUC values. Methods such as DeLong's test can be used to determine whether the difference in AUC between two models evaluated on the same dataset is statistically significant [15].
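DeLong's test (DeLong et al., 1988) is available in packages such as R's pROC (`roc.test`). As a self-contained illustration, the sketch below implements the straightforward O(m·n) version with NumPy: placement values for each case, their 2x2 covariance across the two models, and a two-sided z-test on the AUC difference. The data are synthetic and the function names are hypothetical; for large samples a faster algorithm would be preferable.

```python
import math

import numpy as np
from sklearn.metrics import roc_auc_score


def _placements(pos, neg):
    """Per-case structural components (placement values) for one model."""
    diff = pos[:, None] - neg[None, :]
    psi = (diff > 0) + 0.5 * (diff == 0)        # 1 if positive ranked higher, 0.5 for ties
    return psi.mean(axis=1), psi.mean(axis=0)   # V10 over positives, V01 over negatives


def delong_test(y_true, scores_a, scores_b):
    """Two-sided DeLong test for two correlated AUCs computed on the same cases."""
    y = np.asarray(y_true, bool)
    v10, v01, aucs = [], [], []
    for s in (np.asarray(scores_a, float), np.asarray(scores_b, float)):
        a, b = _placements(s[y], s[~y])
        v10.append(a)
        v01.append(b)
        aucs.append(float(a.mean()))            # AUC = mean placement value
    m, n = int(y.sum()), int((~y).sum())
    # 2x2 covariance matrix of the two AUC estimates
    cov = np.cov(np.vstack(v10)) / m + np.cov(np.vstack(v01)) / n
    var = cov[0, 0] + cov[1, 1] - 2 * cov[0, 1]
    if var <= 0:                                # e.g. identical score vectors
        return aucs[0], aucs[1], 0.0, 1.0
    z = (aucs[0] - aucs[1]) / math.sqrt(var)
    return aucs[0], aucs[1], z, math.erfc(abs(z) / math.sqrt(2))  # two-sided p


rng = np.random.default_rng(0)
y = rng.integers(0, 2, size=200)
score_a = y + rng.normal(0, 1.0, size=200)      # hypothetical stronger model
score_b = y + rng.normal(0, 2.0, size=200)      # hypothetical weaker model
auc_a, auc_b, z, p = delong_test(y, score_a, score_b)
print(f"AUC A={auc_a:.3f} (sklearn {roc_auc_score(y, score_a):.3f}), "
      f"AUC B={auc_b:.3f}, z={z:.2f}, p={p:.4f}")
```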

The Scientist's Toolkit: Essential Research Reagents & Software

The following table details key software solutions and methodological concepts that function as essential "research reagents" for conducting rigorous accuracy assessments of machine learning models.

| Tool / Concept | Function in Evaluation | Example Application |
|---|---|---|
| Scikit-learn (Python) | A comprehensive library providing functions for calculating all metrics, generating confusion matrices, and plotting ROC curves [18]. | from sklearn.metrics import accuracy_score, confusion_matrix, roc_auc_score, precision_score, recall_score [18]. |
| Statistical Analysis Software (SAS, Stata, R) | Offer built-in procedures for advanced ROC analysis, including AUC calculation and statistical comparison of curves from paired experiments [15]. | SAS PROC LOGISTIC for ROC analysis; Stata's roccomp for comparing multiple ROC curves [15]. |
| K-Fold Cross-Validation | A resampling procedure used to assess model performance on limited data, ensuring that metrics are not dependent on a single train-test split [18]. | Using sklearn.model_selection.KFold to obtain a robust, average AUC estimate from 5 iterations of training and testing [18]. |
| Confusion Matrix | The foundational table from which TP, FP, TN, and FN are derived, serving as the input for calculating most other metrics [13] [10]. | Visualizing a model's error distribution using sklearn.metrics.ConfusionMatrixDisplay to identify specific misclassification patterns [18]. |
| Youden's Index | A single statistic that captures the effectiveness of a diagnostic test. It is defined as Sensitivity + Specificity - 1. The threshold that maximizes this index is often chosen as the optimal cut-point [14]. | Used in clinical diagnostics to select an operating threshold that balances the trade-off between sensitivity and specificity [14]. |
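Youden's index can be maximized directly over the points returned by scikit-learn's `roc_curve`, since J = TPR - FPR = Sensitivity + Specificity - 1. The helper name and the toy data below are illustrative; ties in J are resolved by `np.argmax`, which picks the first (highest-threshold) point.

```python
import numpy as np
from sklearn.metrics import roc_curve


def youden_threshold(y_true, scores):
    """Threshold maximizing Youden's J = TPR - FPR over the ROC points."""
    fpr, tpr, thresholds = roc_curve(y_true, scores)
    j = tpr - fpr                       # equals sensitivity + specificity - 1
    best = int(np.argmax(j))
    return float(thresholds[best]), float(j[best])


y_true = [0, 0, 0, 0, 1, 1, 1, 1]
scores = [0.1, 0.2, 0.5, 0.6, 0.4, 0.7, 0.8, 0.9]
thr, j = youden_threshold(y_true, scores)
print(thr, j)   # → 0.7 0.75
```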

The selection of accuracy metrics is a fundamental decision that shapes the interpretation and validation of machine learning classification models in research. As detailed in this guide, Sensitivity, Specificity, PPV, and NPV offer crucial, yet distinct, lenses on model performance, each with specific strengths and vulnerabilities, particularly regarding class imbalance and error cost [9] [11] [17]. The AUC-ROC provides a valuable, threshold-agnostic overview of a model's discriminatory power [15] [16]. A robust evaluation strategy does not rely on a single metric but employs a suite of these measures in concert, guided by a standardized experimental protocol and a clear understanding of the research context and the consequential costs of different types of errors. This multi-faceted approach is essential for developing trustworthy models that can reliably inform decision-making in scientific and drug development endeavors.

In both scientific research and industrial applications, the practice of classifying subjects, objects, or behaviors using predetermined, arbitrary cutoff values introduces significant inconsistencies and reduces objectivity [1]. Traditional rule-based systems, which rely on logical rules and thresholds defined by human experts, offer high interpretability and are straightforward to implement in well-understood contexts [19]. However, they face substantial limitations in scalability, adaptability, and performance when dealing with complex, evolving, or multivariate scenarios where patterns are difficult to capture with simple if-then logic [19].

The emergence of data-driven approaches represents a paradigm shift toward more adaptive, accurate, and empirically grounded classification systems. This guide provides a comprehensive comparison of these methodologies, detailing their experimental protocols, performance metrics, and practical applications across diverse fields from behavioral neuroscience to educational analytics and drug discovery.

Comparative Analysis of Classification Approaches

The table below summarizes the core characteristics, advantages, and limitations of rule-based and data-driven classification systems.

Table 1: Fundamental Comparison Between Rule-Based and Data-Driven Classification Approaches

| Feature | Rule-Based Systems | Data-Driven Systems |
|---|---|---|
| Basis of Decision | Predefined expert knowledge encoded as logical rules/thresholds [19] | Patterns learned automatically from historical and current data [19] |
| Interpretability | High; every decision can be traced to a specific, understandable rule [19] | Variable; often considered "black boxes," though techniques like SHAP improve explainability [20] |
| Adaptability | Low; requires manual updates by experts to accommodate new scenarios [19] | High; can automatically adapt to new conditions and detect novel patterns [19] |
| Data Dependency | Low; functions effectively without large historical datasets [19] | High; requires substantial, high-quality data for training [19] |
| Performance in Complex Scenarios | Suboptimal; struggles with non-linear relationships and multivariate patterns [19] | Excellent; excels at detecting hidden anomalies and complex correlations [19] |
| Ideal Use Cases | Regulated industries, safety-critical applications, contexts where transparency is crucial [19] | Dynamic environments, predictive maintenance, complex pattern recognition [19] |

Data-Driven Methodologies in Practice: Experimental Protocols and Performance

Behavioral Phenotype Classification in Neuroscience

Experimental Protocol: Research on Pavlovian conditioning approaches (PavCA) demonstrates a move beyond arbitrary cutoffs for classifying rodents as sign-trackers (ST), goal-trackers (GT), or intermediate (IN) [1]. The traditional method uses a fixed PavCA Index score cutoff (e.g., ±0.5), which fails to account for distribution variations across samples [1].

- k-Means Clustering: An unsupervised machine learning algorithm partitions subjects into a predefined number of clusters (k=3 for ST, GT, IN) by minimizing the sum of squared distances from individual scores to cluster centers [1].

- Derivative Method: This approach estimates the density distribution of PavCA Index scores and uses its first derivative to locate local minima of the density (points where the slope changes from negative to positive), which correspond to optimal cutoff points that naturally separate phenotypes based on the sample's specific distribution [1].

Performance Data: These data-driven methods, particularly the derivative approach using mean scores from final conditioning days, effectively identify sign-trackers and goal-trackers in relatively small samples without relying on arbitrary thresholds, providing a standardized framework that adapts to unique distributions [1].

Educational Analytics: Behavior-Based Student Classification

Experimental Protocol: The Behavior-Based Student Classification System (SCS-B) employs a hybrid machine learning pipeline to categorize students into four performance groups (A, B, C, D) [5].

- Data Preprocessing: Singular Value Decomposition (SVD) performs outlier detection and dimensionality reduction on student data collected through comprehensive questionnaires covering psychological, behavioral, and academic factors [5].

- Model Training: A genetic algorithm optimizes feature selection and prevents overfitting during the training of a backpropagation neural network (BP-NN), avoiding local minima and enhancing generalization [5].

- Validation: Model robustness is confirmed using fivefold cross-validation, with performance benchmarked against traditional classifiers like Support Vector Machines (SVM) and Multi-Layer Perceptrons (MLP) [5].
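The validation step, fivefold cross-validation benchmarked against SVM and MLP classifiers, can be sketched with scikit-learn on synthetic four-class data. This sketch does not reproduce the genetic-algorithm-optimized BP-NN of [5]; it only shows the benchmarking harness, with `TruncatedSVD` after standardization standing in for the SVD preprocessing. All data, component counts and hyperparameters are assumptions.

```python
from sklearn.datasets import make_classification
from sklearn.decomposition import TruncatedSVD
from sklearn.model_selection import StratifiedKFold, cross_val_score
from sklearn.neural_network import MLPClassifier
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler
from sklearn.svm import SVC

# Hypothetical stand-in for questionnaire data: 4 groups (A-D), 40 items.
X, y = make_classification(n_samples=400, n_features=40, n_informative=8,
                           n_classes=4, n_clusters_per_class=1, random_state=0)
cv = StratifiedKFold(n_splits=5, shuffle=True, random_state=0)   # fivefold CV


def svd_pipeline(clf):
    # Scaling centers the data, so TruncatedSVD here behaves like PCA.
    return make_pipeline(StandardScaler(), TruncatedSVD(n_components=10, random_state=0), clf)


models = {
    "SVM": svd_pipeline(SVC()),
    "MLP": svd_pipeline(MLPClassifier(hidden_layer_sizes=(32,), max_iter=2000, random_state=0)),
}
results = {name: cross_val_score(model, X, y, cv=cv) for name, model in models.items()}
for name, acc in results.items():
    print(f"{name}: mean accuracy {acc.mean():.3f} (SD {acc.std():.3f})")
```

A candidate model such as a GA-tuned network would be added to `models` and scored on the same folds, so that all classifiers are compared on identical splits.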

Performance Data: The SCS-B framework achieves superior classification accuracy with minimal processing time for handling extensive student data, providing educational institutions with actionable insights for targeted interventions [5].

Drug Discovery: Reducing Misclassification with SHAP

Experimental Protocol: In early drug discovery, tree-based machine learning algorithms (Extra Trees, Random Forest, Gradient Boosting Machine, XGBoost) are benchmarked using compounds with known antiproliferative activity against prostate cancer cell lines (PC3, LNCaP, DU-145) [20].

- Feature Engineering: Molecular structures are encoded using RDKit descriptors, MACCS keys, ECFP4 fingerprints, and custom fragment-based representations [20].

- Misclassification Framework: SHapley Additive exPlanations (SHAP) values identify potentially misclassified compounds whose feature values fall within ranges typical of the opposite class. Four filtering rules ("RAW", "SHAP", "RAW OR SHAP", "RAW AND SHAP") flag uncertain predictions [20].

Performance Data: The best-performing models (GBM and XGB with RDKit and ECFP4 descriptors) achieved Matthews Correlation Coefficient (MCC) values above 0.58 and F1-scores above 0.8 across all datasets [20]. The "RAW OR SHAP" filtering rule identified up to 21%, 23%, and 63% of misclassified compounds in PC3, DU-145, and LNCaP test sets, respectively, significantly improving classifier reliability for virtual screening [20].
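For reference, MCC and F1 can be computed from confusion-matrix counts as below; the counts are hypothetical and not taken from [20]. MCC uses all four cells of the matrix, whereas F1 ignores true negatives, which is one reason the two are often reported together for imbalanced screening data.

```python
import math


def mcc_f1(tp, fp, tn, fn):
    """Matthews correlation coefficient and F1-score from confusion-matrix counts."""
    denom = math.sqrt((tp + fp) * (tp + fn) * (tn + fp) * (tn + fn))
    mcc = (tp * tn - fp * fn) / denom if denom else 0.0   # 0 by convention if undefined
    f1 = 2 * tp / (2 * tp + fp + fn) if tp + fp + fn else 0.0
    return mcc, f1


mcc, f1 = mcc_f1(tp=40, fp=10, tn=35, fn=15)   # hypothetical counts
print(f"MCC={mcc:.3f}  F1={f1:.3f}")            # → MCC=0.503  F1=0.762
```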

Comparative Performance Across Domains

Table 2: Quantitative Performance Comparison of Data-Driven Classification Systems

| Application Domain | Classification Methods | Key Performance Metrics | Comparative Advantage |
|---|---|---|---|
| Behavioral Neuroscience [1] | k-Means Clustering, Derivative Method | Effective phenotype identification in small samples; adapts to sample distribution | Overcomes inconsistency of arbitrary cutoffs (±0.3 to ±0.5) used across laboratories |
| Educational Analytics [5] | GA-Optimized Neural Network with SVD | Superior accuracy, minimal processing time for large data | Outperforms traditional SVM and MLP classifiers |
| Drug Discovery [20] | XGBoost/GBM with SHAP filtering | MCC >0.58, F1-score >0.8; identifies 21-63% misclassifications | Reduces false positives/negatives in virtual screening |
| Industrial Monitoring [19] | Machine Learning (PCA, SVMs, DNNs) | Enhanced anomaly detection, predictive maintenance capabilities | Superior to rule-based systems in complex, multivariate environments |
| QR Code Classification [21] | CNN, XceptionNet | 87.48% accuracy, 85.7% kappa value | Effectively classifies images with various noise types |

Visualization: Data-Driven Classification Workflows

(Diagram: Generalized workflow for data-driven classification)

(Diagram: SHAP-enhanced misclassification filtering)

Table 3: Research Reagent Solutions for Data-Driven Classification

| Tool/Category | Specific Examples | Function/Purpose |
|---|---|---|
| Data Preprocessing | Singular Value Decomposition (SVD) [5] | Dimensionality reduction, outlier detection, and data compression |
| Feature Engineering | RDKit Descriptors, ECFP4 Fingerprints, MACCS Keys [20] | Encode molecular structures and properties for ML models |
| Clustering Algorithms | k-Means Clustering [1] | Unsupervised grouping of data points based on similarity |
| Tree-Based Classifiers | XGBoost, Gradient Boosting Machines, Random Forest [20] | High-performance classification with built-in feature importance |
| Neural Networks | Backpropagation Neural Networks (BP-NN), LSTM [5] | Complex pattern recognition in sequential and structured data |
| Optimization Techniques | Genetic Algorithms [5] | Prevent overfitting, optimize parameters, avoid local minima |
| Interpretability Frameworks | SHAP (SHapley Additive exPlanations) [20] | Explain model predictions, identify misclassifications |
| Validation Methods | Fivefold Cross-Validation [5] | Robust performance assessment and generalization testing |

The movement beyond arbitrary cutoffs to data-driven classification represents a fundamental advancement in quantitative research methodology across scientific disciplines. Rule-based systems remain valuable in well-defined, stable environments where interpretability is paramount [19]. In complex, evolving scenarios, however, data-driven approaches offer greater adaptability, accuracy, and discovery potential [19].

The experimental protocols and performance data presented demonstrate that machine learning methods—including k-means clustering, derivative approaches, genetic algorithm-optimized neural networks, and SHAP-enhanced classifiers—provide empirically grounded alternatives to arbitrary thresholds [1] [5] [20]. These approaches successfully address the critical challenges of reproducibility and objectivity while enabling more nuanced and accurate classification across behavioral neuroscience, educational analytics, and drug discovery applications.

As these methodologies continue to evolve, particularly with advancements in explainable AI and hybrid systems, they promise to further bridge the gap between empirical classification and interpretable results, ultimately enhancing decision-making processes in research and industry.

In digital health research, behavioral phenotypes have been defined as sets of data collected in a digital system that capture multidimensional aspects of human or animal behavior, influencing and reflecting underlying psychological and physiological states [22]. The study of these phenotypes has become increasingly important in both basic neuroscience research and clinical applications, particularly with the advent of sophisticated machine learning methods for behavioral classification. Research in this field ranges from fundamental investigations into conditioned behaviors like sign-tracking to applied digital health interventions that target clinical endpoints such as weight loss or mental health improvement.

The accurate classification and analysis of behavioral phenotypes enables researchers to identify individual differences in vulnerability to disorders, predict treatment outcomes, and develop personalized intervention strategies [22] [23]. This guide provides a comprehensive comparison of the experimental methodologies, analytical approaches, and performance metrics used in behavioral phenotype research across different domains.

Comparative Performance of Behavioral Classification Methods

Performance Metrics Across Domains

Table 1: Comparison of performance metrics for different behavioral phenotype classification and prediction approaches

| Application Domain | Task | Key Metrics | Reported Performance | Reference |
|---|---|---|---|---|
| Digital CBT for Obesity | Engagement prediction | R² | Mean R² = 0.416 (SD 0.006) | [22] |
| | Short-term weight change prediction | R² | Mean R² = 0.382 (SD 0.015) | [22] |
| | Long-term weight change prediction | R² | Mean R² = 0.590 (SD 0.011) | [22] |
| Loneliness Detection | Binary loneliness classification | Accuracy | 80.2% | [24] |
| | Change in loneliness level | Accuracy | 88.4% | [24] |
| Rodent Behavior Analysis | Ethological behavior recognition | Agreement with human annotation | Similar to or greater than commercial systems | [25] |
| | Inter-rater variability | - | Eliminated variation within/between human annotators | [25] |

Methodological Comparison

Table 2: Experimental protocols and methodological approaches in behavioral phenotyping

| Research Area | Experimental Protocol | Subjects/Participants | Data Collection Methods | Analysis Approach |
|---|---|---|---|---|
| Sign-Tracking Research | Pavlovian conditioned approach (PCA) | Rats (basic research) and youth (clinical) [26] [23] | Lever presentation followed by reward delivery; measurement of approach behaviors | Pavlovian Conditioned Approach (PavCA) index; neural activity recording |
| Digital Health Interventions | 8-week digital cognitive behavioral therapy [22] | 45 participants with obesity | Mobile app data, psychological questionnaires | Machine learning regression analysis |
| Loneliness Detection | Passive sensing over 16-week semester [24] | 160 college students | Smartphone sensors (GPS, usage, communication), Fitbit activity tracker | Ensemble of gradient boosting and logistic regression |
| Preclinical Behavior Analysis | Open field, elevated plus maze, forced swim tests [25] | C57BL/6J mice | DeepLabCut for markerless pose estimation | Supervised machine learning classifiers |

Detailed Experimental Protocols

Sign-Tracking and Goal-Tracking Paradigms

The Pavlovian conditioned approach (PCA) protocol represents a fundamental experimental method for studying individual differences in incentive salience attribution [26] [23]. In this paradigm, a cue (such as a lever extension) predicts reward delivery in a different location (typically a food magazine). The procedure involves:

- Subjects: Typically rats in basic research or human participants (including youth) in translational studies

- Apparatus: Operant chamber with house light, speaker for auditory cues, pellet dispenser connected to a food magazine, and retractable levers

- Training Protocol: Initial magazine training followed by multiple daily acquisition sessions consisting of 25 trials separated by variable intertrial intervals

- Trial Structure: Each trial begins with presentation of the cue (lever extension accompanied by auditory stimulus and flashing cue light)

- Measurement: The number and timing of physical contacts to both the cue (sign-tracking) and reward delivery location (goal-tracking) are recorded

- Classification: Individuals are classified as sign-trackers (STs) or goal-trackers (GTs) based on a Pavlovian Conditioned Approach (PavCA) index, with scores ≥0.5 indicating sign-tracking and ≤-0.5 indicating goal-tracking [23]
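The index and the fixed-cutoff rule can be sketched as follows. The three components match those named elsewhere in this article (response bias, probability difference, latency score). Their exact definitions here follow the formulation commonly used in the sign-tracking literature and should be treated as an assumption. This includes the 8 s cue duration and the "IN" label for intermediate scores. The function names are hypothetical.

```python
def pavca_index(lever_contacts, magazine_entries, p_lever, p_magazine,
                lever_latency, magazine_latency, cue_duration=8.0):
    """PavCA index for one session, bounded in [-1, 1] (assumed formulation).

    Averages three components; positive values lean toward sign-tracking,
    negative values toward goal-tracking. Latencies are in seconds and are
    assumed to be capped at the cue duration.
    """
    total = lever_contacts + magazine_entries
    response_bias = (lever_contacts - magazine_entries) / total if total else 0.0
    probability_difference = p_lever - p_magazine
    # Approaching the lever faster than the magazine gives a positive score.
    latency_score = (magazine_latency - lever_latency) / cue_duration
    return (response_bias + probability_difference + latency_score) / 3


def classify_pavca(score, cutoff=0.5):
    """Apply the fixed cutoffs from the text: >= 0.5 ST, <= -0.5 GT."""
    if score >= cutoff:
        return "ST"
    if score <= -cutoff:
        return "GT"
    return "IN"  # intermediate responders (assumed label)
```

An animal that contacts the lever 40 times, enters the magazine 10 times, and approaches the lever 4 s sooner scores about 0.57 and is labeled "ST". The hard-coded `cutoff` is exactly the kind of fixed threshold that the data-driven methods discussed elsewhere in this article aim to replace.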

This paradigm has revealed that sign-tracking behavior is associated with externalizing behaviors, attentional and inhibitory control deficits, and distinct patterns of neural activation, particularly in subcortical reward systems [23].

Digital Phenotyping for Health Interventions

Digital phenotyping approaches leverage mobile technology to capture behavioral patterns in naturalistic settings [22] [24]. The methodology typically includes:

- Participant Recruitment: Targeted populations based on research questions (e.g., individuals with obesity for weight loss interventions, college students for loneliness studies)

- Sensor Data Collection: Passive data collection from smartphones (GPS, communication patterns, device usage) and wearable sensors (physical activity, sleep)

- Active Assessments: Validated psychological questionnaires administered at baseline and follow-up timepoints

- Feature Extraction: Derivation of behavioral features encompassing emotional, cognitive, behavioral, and motivational dimensions

- Analysis Framework: Machine learning approaches to identify patterns predictive of outcomes or engagement
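The sketch below illustrates the Feature Extraction step with one hypothetical mobility feature computed from a day of GPS samples: the log of total location variance. This feature is an illustrative choice and is not necessarily among those used in [22] or [24]. The function name is hypothetical.

```python
import math
from statistics import pvariance


def log_location_variance(points):
    """Log of the summed variance of latitude and longitude for one day.

    points: list of (latitude, longitude) pairs. Higher values indicate
    movement across a wider area. Raises ValueError when all points are
    identical, because the logarithm of zero is undefined.
    """
    lats = [p[0] for p in points]
    lons = [p[1] for p in points]
    total = pvariance(lats) + pvariance(lons)
    if total == 0:
        raise ValueError("location variance is zero; log is undefined")
    return math.log(total)
```

Daily values like this would typically be aggregated per participant (for example, weekly means) before they are passed to the machine learning models.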

In one representative study, researchers collected data from 45 participants undergoing digital cognitive behavioral therapy for 8 weeks. They combined conventional phenotypes from psychological questionnaires with multidimensional digital phenotypes derived from mobile app time-series data [22]. The machine learning analysis identified the characteristics that best predicted both engagement and health outcomes.

Deep Learning-Based Behavioral Analysis

For preclinical research, deep learning approaches have revolutionized behavioral phenotyping [25]. The experimental workflow involves:

- Video Recording: High-quality video recording of behavioral tests (open field, elevated plus maze, forced swim test)

- Pose Estimation: Using DeepLabCut software to extract skeletal representations of animals without physical markers

- Human Annotation: Multiple human raters carefully annotate behaviors to create ground truth datasets

- Feature Extraction: Calculation of position and orientation invariant skeletal representations based on distances, angles, and areas

- Classifier Training: Supervised machine learning classifiers that integrate skeletal representation with manual annotations

- Validation: Comparison against commercial solutions (EthoVision XT14, TSE Multi-Conditioning System) and human raters
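A minimal sketch of the Feature Extraction step follows. It assumes that 2-D keypoints from a pose estimator such as DeepLabCut are already available as an array for each frame. The specific feature set (pairwise distances, the angle at each keypoint between its neighbours, and the enclosed polygon area) is an illustrative choice, not the exact representation used in [25].

```python
import numpy as np


def skeletal_features(keypoints):
    """Translation- and rotation-invariant features for one frame.

    keypoints: (k, 2) array of x, y body-part coordinates, assumed to be
    ordered around the body outline so that neighbours form a polygon.
    Returns pairwise distances, one angle per keypoint, and the area.
    """
    pts = np.asarray(keypoints, dtype=float)
    k = len(pts)
    # Pairwise Euclidean distances (upper triangle only, no duplicates).
    diffs = pts[:, None, :] - pts[None, :, :]
    dists = np.linalg.norm(diffs, axis=-1)[np.triu_indices(k, 1)]
    # Angle at each keypoint between vectors to its previous and next neighbour.
    prev_vec = np.roll(pts, 1, axis=0) - pts
    next_vec = np.roll(pts, -1, axis=0) - pts
    cos = np.sum(prev_vec * next_vec, axis=1) / (
        np.linalg.norm(prev_vec, axis=1) * np.linalg.norm(next_vec, axis=1))
    angles = np.arccos(np.clip(cos, -1.0, 1.0))
    # Shoelace formula for the area enclosed by the keypoint polygon.
    x, y = pts[:, 0], pts[:, 1]
    area = 0.5 * abs(np.dot(x, np.roll(y, -1)) - np.dot(y, np.roll(x, -1)))
    return np.concatenate([dists, angles, [area]])
```

Distances, angles, and areas do not change when the animal moves or turns within the arena. A classifier trained on these features therefore learns posture rather than location or heading.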

This approach has been shown to score ethologically relevant behaviors with accuracy similar to that of human raters, while matching or exceeding commercial solutions [25].

Signaling Pathways and Neural Mechanisms

(Diagram 1: Neural mechanisms underlying sign-tracking and goal-tracking phenotypes)

Research has identified distinct neural pathways associated with different behavioral phenotypes [26] [23]. Sign-tracking behavior is linked to dopamine-dominated subcortical systems, including the nucleus accumbens core, which facilitate reactive and affectively motivated actions. In contrast, goal-tracking behavior engages cholinergic-dependent cortical structures that underlie executive functioning and goal-directed behaviors.

The relative imbalance between these systems has significant implications for behavioral outcomes. Sign-trackers demonstrate stronger cue-evoked excitatory responses in the nucleus accumbens that encode behavioral vigor, and this neural activity pattern is relatively resistant to extinction compared to goal-trackers [26]. In youth, the propensity to sign-track is associated with externalizing behaviors and greater amygdala activation during reward anticipation, suggesting an over-reliance on subcortical cue-reactive brain systems [23].

Experimental Workflows in Behavioral Phenotyping

(Diagram 2: Comprehensive workflow for behavioral phenotype research)

The experimental workflow for behavioral phenotype research typically follows a structured process beginning with study design and progressing through to clinical endpoint evaluation [22] [24] [25]. The process incorporates both traditional behavioral assessment and modern digital phenotyping approaches, with machine learning analysis serving as a bridge between raw behavioral data and clinically meaningful endpoints.

Digital phenotyping components leverage passive sensing data from smartphones and wearables to capture real-world behavioral patterns, while traditional experimental paradigms provide controlled assessments of specific behavioral tendencies. The integration of these approaches through machine learning models enables the prediction of clinical outcomes such as weight loss in digital interventions [22] or loneliness levels in mental health monitoring [24].

Research Reagent Solutions and Essential Materials

Table 3: Essential research materials and platforms for behavioral phenotyping studies

| Category | Specific Tools/Platforms | Primary Function | Application Context |
|---|---|---|---|
| Behavior Tracking Software | DeepLabCut [25] | Markerless pose estimation for detailed behavioral analysis | Preclinical research, rodent behavior |
| | EthoVision XT14 (Noldus) [25] | Automated animal tracking and behavior analysis | Preclinical research, standardized behavioral tests |
| | TSE Multi-Conditioning System [25] | Integrated hardware and software for behavioral testing | Preclinical research, controlled environments |
| Mobile Data Collection | AWARE Framework [24] | Open-source smartphone data collection platform | Digital phenotyping, passive sensing |
| | Fitbit Activity Trackers [24] | Wearable sensors for activity and sleep monitoring | Digital phenotyping, real-world behavior |
| Analysis Platforms | Custom R/Python Scripts [22] [25] | Machine learning analysis and statistical testing | Data analysis, model development |
| Experimental Apparatus | Operant Conditioning Chambers [26] | Controlled environments for behavioral testing | Sign-tracking/goal-tracking research |
| | Open Field Arenas [25] | Standardized testing environments | Preclinical anxiety and exploration research |
| | Elevated Plus Maze [25] | Behavioral test for anxiety-like behavior | Preclinical anxiety research |
| | Forced Swim Test Apparatus [25] | Behavioral test for depression-like behavior | Preclinical depression research |

The comparative analysis of behavioral phenotyping methods reveals a rapidly evolving field that integrates traditional experimental paradigms with cutting-edge computational approaches. Sign-tracking research provides a foundational framework for understanding individual differences in incentive salience attribution, with clear relevance to externalizing behaviors and impulse control disorders [26] [23]. Meanwhile, digital phenotyping approaches demonstrate the practical application of behavioral classification in clinical and real-world settings, with machine learning models successfully predicting engagement and health outcomes [22] [24].

The performance metrics across studies indicate that machine learning approaches can achieve clinically meaningful accuracy in classifying behavioral phenotypes and predicting outcomes. Deep learning methods have reached human-level accuracy in scoring complex ethological behaviors [25]. Digital phenotyping approaches have predicted weight change (mean R² up to 0.590) and loneliness (accuracy up to 88.4%) [22] [24]. As the field advances, integrating multimodal data sources and developing more sophisticated analytical frameworks will likely improve our ability to classify behavioral phenotypes precisely and to link them to clinical endpoints across diverse populations and disorders.

The Impact of Data Distribution (Skewness, Kurtosis) on Classification Accuracy

The performance of machine learning classification models is fundamentally tied to the quality and characteristics of the underlying data. While numerous factors influence model accuracy, the shape of the data distribution—quantified by the statistical measures of skewness and kurtosis—plays a critically underappreciated role. In the context of accuracy assessment for behavior classification models, particularly in scientific fields like drug development, ignoring these distributional properties can lead to biased predictions, unreliable conclusions, and ultimately, failed interventions.

This guide examines the direct impact of skewness and kurtosis on classification accuracy. It provides researchers and data scientists with a structured comparison of how different distribution shapes affect model performance, details robust experimental protocols for assessment, and recommends mitigation strategies to enhance the validity and generalizability of classification models.

Understanding Skewness and Kurtosis

To assess data quality for classification, one must first understand the two key metrics that describe a distribution's shape.

Skewness measures the asymmetry of a probability distribution around its mean [27] [28]. A skewness value of zero indicates a symmetric distribution, such as the normal distribution. Positive skewness (right-skewed) signifies a longer tail on the right side: most data points are concentrated at the lower end, and a few high values pull the mean upward. Conversely, negative skewness (left-skewed) indicates a longer tail on the left, with data clustered at the higher end and a few low values pulling the mean downward [27] [29] [28].

Kurtosis describes the "tailedness" of a distribution relative to a normal distribution and is often also discussed in terms of peakedness [27] [28]. It is commonly reported as excess kurtosis: the kurtosis of the distribution minus 3, which is the kurtosis of a normal distribution. A mesokurtic distribution has excess kurtosis near zero and resembles the normal distribution. A leptokurtic distribution has positive excess kurtosis, with heavier tails and a sharper peak, which indicates a higher probability of extreme outliers. A platykurtic distribution has negative excess kurtosis, with lighter tails and a flatter peak, suggesting fewer extreme values [27] [29] [28].

The following diagrams illustrate these core concepts and their relationship to model performance.

Diagram 1: Data Distribution Assessment Workflow for Classification Modeling

Diagram 2: Types of Skewness and Their Characteristics

Diagram 3: Types of Kurtosis and Their Characteristics

Empirical Evidence: Prevalence and Impact on Model Performance

Prevalence of Non-Normal Distributions in Real-World Data

The assumption of normally distributed data is frequently violated in practice. A comprehensive analysis of 504 scale-score and raw-score distributions from state-level educational testing programs found that nonnormal distributions are common and often associated with particular testing programs [30]. This mirrors earlier findings by Micceri (1989), who analyzed 440 distributions and found that 29% were moderately asymmetric and 31% were extremely asymmetric [30]. In health and social sciences, variables commonly exhibit distributions that clearly deviate from normality [31].

Table 1: Observed Skewness and Kurtosis in Real-World Data (Sample of 504 Test Score Distributions)

| Distribution Type | Number of Distributions | Skewness Range | Kurtosis Range | Common Characteristics |
|---|---|---|---|---|
| Raw Score Distributions | 174 | Generally negative, but varies | Generally platykurtic | Naturally bounded, often discrete |
| Scale Score Distributions | 330 | Often negative | Varies, can be leptokurtic | Transformed via IRT, can show ceiling effects |

Documented Impact on Machine Learning Models

The empirical impact of skewness and kurtosis on model training is significant and multifaceted.

- Skewness introduces bias in predictions by pulling the mean toward the long tail. In regression models, a positively skewed feature can cause the model to consistently overpredict, as the model's estimates are drawn toward the outlier-inflated mean [29].

- Skewness disrupts feature scaling. Algorithms that rely on distance metrics, like k-Nearest Neighbors (k-NN) and Support Vector Machines (SVM), assume features are on a comparable scale. A single highly skewed feature can dominate the distance calculation, rendering the model unable to properly weigh the importance of other, more balanced features [29].

- High kurtosis (leptokurtic distributions) increases a model's exposure to outliers. In regression tasks, these extreme values can disproportionately influence the model's parameters, such as the slope of a regression line, leading to inaccurate and unstable predictions [29] [28]. For classification, outliers can distort the decision boundary, increasing misclassification rates [29].

- Both skewness and high kurtosis can lead to overfitting. A model may learn to fit the noise and rare extreme values present in a skewed or heavy-tailed distribution rather than the underlying generalizable pattern. This results in high performance on training data but poor generalization to new, unseen data [29].

Experimental Protocols for Assessment

To systematically evaluate the impact of data distribution on a classification model, the following experimental protocol is recommended. This methodology is adapted from established practices in the literature [29] [30] [32].

Data Preprocessing and Feature Analysis

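- Step 1: Data Cleaning. Handle missing values and remove obviously erroneous data points. For a clinical dataset such as the diabetes data analyzed in [32], this may involve removing biologically impossible values for features like glucose or BMI [32].

- Step 2: Calculate Distribution Metrics. For each feature and, if possible, the target variable, calculate the skewness and kurtosis. The common moment-based formulas for a sample $x_1, \dots, x_n$ with mean $\bar{x}$ are:

$$g_1 = \frac{\frac{1}{n}\sum_{i=1}^{n}(x_i - \bar{x})^3}{\left[\frac{1}{n}\sum_{i=1}^{n}(x_i - \bar{x})^2\right]^{3/2}}, \qquad g_2 = \frac{\frac{1}{n}\sum_{i=1}^{n}(x_i - \bar{x})^4}{\left[\frac{1}{n}\sum_{i=1}^{n}(x_i - \bar{x})^2\right]^{2}} - 3$$

where $g_1$ is the sample skewness and $g_2$ is the sample excess kurtosis.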

- Step 3: Visualize Distributions. Create histograms with overlaid density plots and Q-Q (Quantile-Quantile) plots for each feature to visually confirm the asymmetry and tail weight indicated by the numerical metrics.
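The two moment-based estimators from Step 2 translate directly into code. The sketch below is plain Python with hypothetical function names. To our understanding, these biased estimators match the defaults of `scipy.stats.skew` and `scipy.stats.kurtosis`. They are undefined for a constant feature, because the variance is zero.

```python
def sample_skewness(xs):
    """Moment-based sample skewness g1 = m3 / m2**1.5."""
    n = len(xs)
    mean = sum(xs) / n
    m2 = sum((x - mean) ** 2 for x in xs) / n
    m3 = sum((x - mean) ** 3 for x in xs) / n
    return m3 / m2 ** 1.5


def sample_excess_kurtosis(xs):
    """Moment-based sample excess kurtosis g2 = m4 / m2**2 - 3."""
    n = len(xs)
    mean = sum(xs) / n
    m2 = sum((x - mean) ** 2 for x in xs) / n
    m4 = sum((x - mean) ** 4 for x in xs) / n
    return m4 / m2 ** 2 - 3
```

For the evenly spaced values `[1, 2, 3, 4, 5]`, skewness is 0 and excess kurtosis is −1.3 (platykurtic). A feature such as `[1, 1, 1, 10]`, with one large value, has positive skewness.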

Model Training and Evaluation under Distribution Stress

- Step 4: Establish a Baseline. Train a diverse set of classification models (e.g., Logistic Regression, SVM, Random Forest, Neural Networks) on the original data. Evaluate performance using accuracy, precision, recall, F1-score, and AUC. Use resampling techniques like bootstrapping to get stable estimates of performance [32].

- Step 5: Introduce Controlled Skewness/Kurtosis. Artificially transform a key predictive feature to create datasets with varying degrees of skewness and kurtosis. This can be done using power transformations (e.g., Box-Cox for skewness) or by synthesizing data with specific distributional properties [29] [33].

- Step 6: Re-train and Compare. Train the same set of models on each of the transformed datasets. Compare their performance metrics against the baseline to isolate the degradation caused by the distributional shift.
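One hypothetical way to implement Step 5 is to generate graded versions of a feature from a single family of transforms, so that the only thing that changes between datasets is the amount of right skew. The sketch below standardizes the feature to `z` and applies `(exp(s*z) - 1) / s`. The result is the original `z` at `s = 0` and becomes more right-skewed as `s` grows. This exponential family is an assumption for illustration and is not prescribed by the cited studies.

```python
import numpy as np


def skewness(x):
    """Moment-based sample skewness."""
    d = x - x.mean()
    return np.mean(d ** 3) / np.mean(d ** 2) ** 1.5


def skewed_versions(feature, strengths):
    """Return copies of a feature with increasing right skew.

    strengths: non-negative values s; s = 0 returns the standardized feature,
    and larger s gives a longer right tail.
    """
    feature = np.asarray(feature, dtype=float)
    z = (feature - feature.mean()) / feature.std()
    versions = {}
    for s in strengths:
        # expm1(s*z)/s tends to z as s -> 0, so the family is continuous in s.
        versions[s] = z.copy() if s == 0 else np.expm1(s * z) / s
    return versions
```

For Step 6, each entry of the returned dictionary would replace the original feature in a copy of the dataset. The same models would then be retrained on each copy and compared with the baseline metrics from Step 4.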

Table 2: Experimental Results from a Diabetes Classification Study (Pima Indian Dataset)

| Machine Learning Model | Best Reported Result | Other Reported Metrics | Notable Feature Importance |
|---|---|---|---|
| Generalized Boosted Regression | Accuracy: 90.91% | Kappa: 78.77%, Specificity: 85.19% | Glucose, BMI, Diabetes Pedigree Function, Age |
| Sparse Distance Weighted Discrimination | Sensitivity: 100% | - | - |
| Generalized Additive Model using LOESS | AUROC: 95.26% | Log Loss: 30.98% | - |

The Scientist's Toolkit: Key Reagents and Solutions

Table 3: Essential Tools for Analyzing Distributional Impact in Classification

| Tool / Solution | Function | Application Context |
|---|---|---|
| Shapiro-Wilk Test | A formal statistical test for normality. | Used to test the null hypothesis that the data are normally distributed [33]. |
| Box-Cox / Yeo-Johnson Transform | Power transformation techniques to reduce skewness. | Applied to strictly positive (Box-Cox) or any real-valued (Yeo-Johnson) continuous data to make the distribution more symmetric [29]. |
| Robust Scaler | A scaling method that uses the median and interquartile range (IQR). | Preprocessing for features with high kurtosis or outliers; less sensitive to extremes than Standard Scaler [29]. |
| Tree-Based Models (e.g., Random Forest) | Algorithms that make fewer assumptions about data distribution. | A robust modeling choice when data exhibit significant skewness/kurtosis and transformations are insufficient [29]. |
| Hogg's Estimators (Q, Q2) | Robust estimators of kurtosis and skewness that are less sensitive to outliers. | Provide a more accurate description of distribution shape for non-normal data, especially with small samples [31]. |

Mitigation Strategies for Improved Accuracy

When skewness or kurtosis is identified as a threat to classification accuracy, several mitigation strategies are available.

Data Transformation: Applying mathematical functions to the data can bring its distribution closer to normal.

- Logarithmic Transformation: Effective for reducing positive (right) skewness; requires strictly positive values [29] [28].

- Box-Cox Transformation: A more general power transformation for strictly positive data that finds the optimal parameter (λ) to stabilize variance and make the data more normal-like [29].

- Square Root or Cube Root Transformations: Can be useful for moderate positive skewness.
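The "optimal λ" in the Box-Cox transformation is usually chosen by maximum likelihood. The NumPy sketch below does this with a grid search over λ in [−2, 2], assuming strictly positive data. The grid range and function names are assumptions. In practice, a library routine such as `scipy.stats.boxcox` would normally be used instead.

```python
import numpy as np


def boxcox(x, lam):
    """Box-Cox transform of strictly positive data for a given lambda."""
    log_x = np.log(x)
    if abs(lam) < 1e-8:
        return log_x
    # expm1 keeps (x**lam - 1) / lam accurate when lam is close to zero.
    return np.expm1(lam * log_x) / lam


def boxcox_lambda(x, lambdas=None):
    """Choose lambda by maximizing the Box-Cox profile log-likelihood on a grid."""
    x = np.asarray(x, dtype=float)
    if np.any(x <= 0):
        raise ValueError("Box-Cox requires strictly positive data")
    if lambdas is None:
        lambdas = np.linspace(-2.0, 2.0, 401)
    n = len(x)
    sum_log_x = np.log(x).sum()
    best_lam, best_llf = None, -np.inf
    for lam in lambdas:
        y = boxcox(x, lam)
        # Log-likelihood up to a constant: Jacobian term minus n/2 * log(variance).
        llf = (lam - 1.0) * sum_log_x - 0.5 * n * np.log(y.var())
        if llf > best_llf:
            best_lam, best_llf = float(lam), llf
    return best_lam
```

For lognormally distributed data, the selected λ should land near 0, which corresponds to the logarithmic transformation listed above.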

Outlier Management: For leptokurtic distributions with heavy tails, managing outliers is crucial.

- Detection and Capping: Use methods like the Interquartile Range (IQR) to identify outliers. These can then be capped (Winsorizing) or removed, though the latter should be done with caution to avoid losing information [29].

- Robust Scaling: As mentioned in the toolkit, using RobustScaler (which centers data on the median and scales by the IQR) prevents a few extreme values from dictating the scale for an entire feature [29].
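Both outlier-management steps can be sketched in a few lines of NumPy. The capping function clips values to the usual IQR fences (Q1 − 1.5·IQR, Q3 + 1.5·IQR). This is a fence-based variant of winsorizing, and the multiplier `k` is a conventional but adjustable assumption. The scaling function mirrors the default behavior described for RobustScaler: subtract the median and divide by the IQR. The function names are hypothetical.

```python
import numpy as np


def iqr_cap(x, k=1.5):
    """Clip values outside [Q1 - k*IQR, Q3 + k*IQR] (fence-based winsorizing)."""
    x = np.asarray(x, dtype=float)
    q1, q3 = np.percentile(x, [25, 75])
    iqr = q3 - q1
    return np.clip(x, q1 - k * iqr, q3 + k * iqr)


def robust_scale(x):
    """Center on the median and scale by the interquartile range."""
    x = np.asarray(x, dtype=float)
    q1, median, q3 = np.percentile(x, [25, 50, 75])
    return (x - median) / (q3 - q1)
```

Take `x = [1, 2, 3, 4, 100]` as an example. Capping replaces 100 with the upper fence, 7. Robust scaling instead leaves the outlier in place but keeps it from inflating the scale, so the other four values map to −1, −0.5, 0, and 0.5.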

Model Selection: Choosing algorithms that are inherently less sensitive to distributional assumptions is a key strategic decision.

- Tree-Based Models: Algorithms like Decision Trees, Random Forests, and Gradient Boosting models (e.g., XGBoost) do not rely on assumptions of normality and are more robust to outliers and skewed data [29]. The diabetes classification study found Generalized Boosted Regression to be a top performer [32].

- Avoidance of Sensitive Models: Linear models (like Logistic Regression) and scale-sensitive models (like k-NN and SVMs) are more affected by skewed features and outliers and should be used with care after appropriate preprocessing [29].

The influence of skewness and kurtosis on classification accuracy is a critical consideration in the development of reliable machine learning models, especially in high-stakes fields like drug development. Empirical evidence consistently shows that non-normal data distributions are the rule, not the exception, and that they can significantly bias predictions, inflate error rates, and reduce model robustness.

A rigorous approach to accuracy assessment must include a distributional analysis of both features and target variables. By implementing the experimental protocols outlined in this guide—calculating metrics, visualizing distributions, and stress-testing models—researchers can quantify this impact. Subsequently, employing mitigation strategies such as data transformation, outlier management, and robust model selection allows for the development of classifiers that maintain high accuracy and generalizability, even in the face of real-world, imperfect data.

Advanced ML Techniques and Their Real-World Applications in Biomedicine

In behavioral neuroscience and materials informatics, classifying complex phenomena into meaningful categories is a fundamental scientific challenge. Traditional methods often rely on predetermined or subjective cutoff values, which can introduce inconsistencies and hinder reproducibility [1]. This guide provides an objective comparison of three algorithmic approaches—k-Means clustering, the derivative method, and Transformer networks—for automating and enhancing classification accuracy. These methods are increasingly critical for analyzing diverse data, from animal behavior to crystal properties, offering data-driven alternatives to manual classification. We evaluate their performance, detail experimental protocols, and identify their optimal applications within research environments.

Algorithmic Fundamentals and Mechanisms

k-Means Clustering: The Partitioning Workhorse

k-Means is a partitional clustering algorithm designed to group unlabeled data so that data points within the same cluster are more similar to each other than to those in other clusters [34]. Its objective is to partition a dataset of n observations into a user-specified number k of clusters, minimizing the within-cluster variance.

The algorithm operates through four key steps [34]:

- Initialization: Select k initial cluster centroids arbitrarily from the data points.

- Assignment: Assign each data point to the nearest centroid based on Euclidean distance.

- Update: Recalculate cluster centroids as the mean of all points assigned to that cluster.

- Iteration: Repeat steps 2 and 3 until cluster assignments stabilize or a maximum number of iterations is reached.

A significant limitation is its requirement for a predefined k value, which is often unknown in research settings. The algorithm is also sensitive to initial centroid selection and may converge to local minima [34]. Despite these limitations, its simplicity, efficiency, and ease of interpretation have made it widely applicable across domains [34].
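The four steps above correspond to Lloyd's algorithm. The following NumPy sketch is a minimal illustration that assumes random initialization from the data points. Library implementations such as scikit-learn's `KMeans` typically use the smarter k-means++ initialization instead. The function name is hypothetical.

```python
import numpy as np


def kmeans(X, k, n_iter=100, seed=0):
    """Lloyd's k-means on an (n, d) array; returns (labels, centroids)."""
    X = np.asarray(X, dtype=float)
    rng = np.random.default_rng(seed)
    # Initialization: pick k distinct data points as starting centroids.
    centroids = X[rng.choice(len(X), size=k, replace=False)]
    labels = np.full(len(X), -1)
    for _ in range(n_iter):
        # Assignment: nearest centroid by Euclidean distance.
        dists = np.linalg.norm(X[:, None, :] - centroids[None, :, :], axis=-1)
        new_labels = dists.argmin(axis=1)
        if np.array_equal(new_labels, labels):
            break  # Iteration stops once assignments no longer change.
        labels = new_labels
        # Update: each centroid becomes the mean of its assigned points;
        # an empty cluster keeps its previous centroid.
        for j in range(k):
            if np.any(labels == j):
                centroids[j] = X[labels == j].mean(axis=0)
    return labels, centroids
```

Applied to one-dimensional PavCA scores reshaped into a column (`scores.reshape(-1, 1)`) with `k=2`, this splits animals into two groups without a preset cutoff. This mirrors the idea in [1] but does not reproduce that study's exact procedure. Because results can depend on `seed`, rerunning with several seeds and keeping the lowest within-cluster variance is a common safeguard against poor local minima.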

The Derivative Method: A Mathematical Approach for Behavioral Phenotyping

The derivative method is a mathematical approach developed to address classification challenges in behavioral research, particularly for identifying sign-tracking (ST) and goal-tracking (GT) phenotypes in Pavlovian conditioning studies [1]. This method determines cutoff values based on the distribution of index scores within a sample, eliminating reliance on arbitrary thresholds.

The methodology involves [1]:

- Distribution Analysis: Modeling the density distribution of the Pavlovian Conditioned Approach (PavCA) Index scores, which tend toward a bimodal distribution in large samples.

- Derivative Calculation: Computing the first derivative of the density function to analyze variations in the slope parameter.

- Cutoff Identification: Identifying local minimum points in the derivative function, which correspond to natural boundaries between phenotypic categories in the score distribution.

This approach provides a standardized classification framework that adapts to a dataset's unique distributional characteristics, offering enhanced objectivity compared to fixed cutoffs [1].
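The three steps of the derivative method can be sketched in NumPy as follows. Everything beyond those steps is an assumption: a Gaussian kernel density estimate with a fixed bandwidth of 0.1, an evaluation grid over the PavCA range [−1, 1], and a hypothetical rule that keeps only the deepest local minima to suppress noise. This section does not describe how [1] converts the located points into ST and GT cutoffs, so the function returns candidate points only. Both function names are hypothetical.

```python
import numpy as np


def kde(grid, samples, bandwidth):
    """Gaussian kernel density estimate evaluated on a grid."""
    u = (grid[:, None] - samples[None, :]) / bandwidth
    norm = len(samples) * bandwidth * np.sqrt(2 * np.pi)
    return np.exp(-0.5 * u ** 2).sum(axis=1) / norm


def derivative_cutoff_candidates(scores, bandwidth=0.1, n_grid=401, n_keep=2):
    """Candidate cutoffs at local minima of the density's first derivative."""
    scores = np.asarray(scores, dtype=float)
    grid = np.linspace(-1.0, 1.0, n_grid)
    # Step 1: model the density of the index scores.
    density = kde(grid, scores, bandwidth)
    # Step 2: numerical first derivative (slope) of the density.
    slope = np.gradient(density, grid)
    # Step 3: interior grid points where the slope is lower than both neighbours.
    idx = np.where((slope[1:-1] < slope[:-2]) & (slope[1:-1] < slope[2:]))[0] + 1
    # Hypothetical noise filter: keep the n_keep deepest minima.
    deepest = idx[np.argsort(slope[idx])][:n_keep]
    return np.sort(grid[deepest])
```

Because the candidates come from the sample's own density, they shift when the score distribution shifts. This is the adaptive property that distinguishes the method from a fixed ±0.5 rule.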

Transformer Networks: The Attention-Based Revolution

Transformer networks are deep learning architectures based on self-attention mechanisms that have revolutionized natural language processing and are increasingly applied to scientific domains [35]. Unlike recurrent models, which process a sequence one element at a time, Transformers process all elements of an input simultaneously, enabling them to capture global dependencies and complex relationships.

The core innovation is the self-attention mechanism, which computes weighted sums of input representations, dynamically determining the importance of each element relative to others [35]. In scientific applications, such as materials informatics, Transformer-generated atomic embeddings (e.g., CrystalTransformer) capture complex atomic features by learning unique "fingerprints" for each atom based on their roles and interactions within materials [35].
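The weighted-sum description above is scaled dot-product attention. The NumPy sketch below shows a single attention head with randomly initialized projection matrices. It illustrates the generic mechanism only and is not the CrystalTransformer architecture from [35]. The function name and the shapes are illustrative assumptions.

```python
import numpy as np


def self_attention(X, Wq, Wk, Wv):
    """Single-head scaled dot-product self-attention.

    X: (n_tokens, d_model) input representations (e.g. one row per atom).
    Wq, Wk, Wv: (d_model, d_k) projection matrices.
    Returns the attended outputs (n_tokens, d_k) and the attention weights.
    """
    Q, K, V = X @ Wq, X @ Wk, X @ Wv
    # Similarity of every element with every other, scaled by sqrt(d_k).
    scores = Q @ K.T / np.sqrt(K.shape[-1])
    scores -= scores.max(axis=1, keepdims=True)  # numerical stability
    weights = np.exp(scores)
    weights /= weights.sum(axis=1, keepdims=True)  # softmax over each row
    # Each output row is a weighted sum of value vectors.
    return weights @ V, weights
```

Row `i` of `weights` states how strongly element `i` attends to every other element, which is the "importance relative to others" described in the text. All rows are computed at once, with no sequential recurrence.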

Experimental Performance Comparison

Behavioral Classification Benchmarks

In behavioral neuroscience, studies have systematically compared classification approaches for categorizing animal behaviors. The table below summarizes key performance findings:

Table 1: Performance Comparison in Behavioral Classification

| Algorithm | Application Context | Accuracy/Performance | Key Strengths | Limitations |
|---|---|---|---|---|
| k-Means [1] | Sign-tracking vs. goal-tracking classification | Effective for identifying ST/GT groups, especially in small samples | Simplicity, intuitiveness, no need for pre-labeled data | Assumes spherical clusters, sensitive to outliers |
| Derivative Method [1] | Sign-tracking vs. goal-tracking classification | Particularly effective when using mean scores from final conditioning days | Adapts to sample distribution, provides standardized cutoff values | Limited validation outside behavioral phenotyping |
| Random Forest [36] | Zebrafish seizure behavior classification | High accuracy for stereotyped seizure phenotypes | Handles nonlinear data, robust to outliers | Requires extensive hyperparameter tuning |
| XGBoost [36] | Zebrafish seizure behavior classification | Comparable to Random Forest for seizure classification | Handling of complex feature relationships | Computational intensity for large datasets |
| Multi-Layer Perceptron [36] | Zebrafish seizure behavior classification | Exceeded human annotator consistency for certain behaviors | Captures complex nonlinear relationships | Requires large training datasets |

Materials Science Applications

In materials informatics, Transformer-derived atomic embeddings have reduced prediction error relative to several graph neural network baselines:

Table 2: Transformer Performance in Materials Property Prediction

| Model Architecture | Target Property | Mean Absolute Error (MAE) | MAE Reduction vs. Baseline |
|---|---|---|---|
| CGCNN (Baseline) [35] | Formation Energy (Ef) | 0.083 eV/atom | - |
| CT-CGCNN (with Transformer embeddings) [35] | Formation Energy (Ef) | 0.071 eV/atom | 14% |
| ALIGNN (Baseline) [35] | Formation Energy (Ef) | 0.022 eV/atom | - |
| CT-ALIGNN (with Transformer embeddings) [35] | Formation Energy (Ef) | 0.018 eV/atom | 18% |
| MEGNET (Baseline) [35] | Formation Energy (Ef) | 0.051 eV/atom | - |
| CT-MEGNET (with Transformer embeddings) [35] | Formation Energy (Ef) | 0.049 eV/atom | 4% |
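The improvement figures can be reproduced as the relative reduction in MAE, (MAE_baseline - MAE_CT) / MAE_baseline. Reading the column this way is an assumption, but the arithmetic below matches the reported values after rounding.

```python
# MAE values (eV/atom) from the table above: (baseline, with Transformer embeddings).
mae = {
    "CGCNN": (0.083, 0.071),
    "ALIGNN": (0.022, 0.018),
    "MEGNET": (0.051, 0.049),
}

def relative_reduction(baseline: float, improved: float) -> float:
    """Fractional reduction in error relative to the baseline model."""
    return (baseline - improved) / baseline

for model, (base, ct) in mae.items():
    print(f"{model}: {relative_reduction(base, ct):.1%}")
```

This prints 14.5%, 18.2%, and 3.9%, which round to the 14%, 18%, and 4% in the table. Relative figures depend on the baseline. ALIGNN's 18% corresponds to an absolute change of 0.004 eV/atom, whereas CGCNN's 14% is a change of 0.012 eV/atom.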

Experimental Protocols and Workflows

Behavioral Classification Protocol (k-Means and Derivative Method)

The experimental workflow for behavioral phenotyping using k-Means and derivative methods follows a structured pipeline:

Diagram 1: Behavioral Classification Workflow

Experimental Setup Details [1]:

- Data Collection: Pavlovian Conditioning Approach (PavCA) Index scores are collected from rodents during conditioning sessions. The index combines response bias, probability difference, and latency scores into a single value ranging from -1 (goal-tracking) to +1 (sign-tracking).

- Preprocessing: Calculate mean scores from the final days of conditioning (typically 2-3 sessions) to ensure stable behavioral measures.

- k-Means Implementation: Apply k-Means with k=3 clusters corresponding to sign-trackers (ST), goal-trackers (GT), and intermediate (IN) groups. Use multiple random initializations to reduce the risk of converging to a poor local minimum.

- Derivative Method Implementation: Model the density distribution of PavCA scores, compute its first derivative, and take the local minima of the density (where the derivative changes from negative to positive) as natural boundaries between phenotypes.

- Validation: Compare classification results with behavioral observations and biological markers to validate clustering outcomes.
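The preprocessing and k-Means steps can be sketched in plain Python. Several details are assumptions and do not come from the MATLAB implementation in [1]:

- The PavCA index is computed as the equal-weight average of its three component scores, which is the usual definition.
- The final-days mean uses the last two sessions.
- Clusters are named GT, IN, and ST by sorting centroids from lowest to highest.

`kmeans_1d` runs Lloyd's algorithm from several random starts and keeps the solution with the lowest within-cluster sum of squares.

```python
import random
from statistics import mean

def pavca_index(response_bias, probability_difference, latency_score):
    # Assumption: equal-weight average of the three component scores, each in [-1, 1].
    return (response_bias + probability_difference + latency_score) / 3

def final_days_mean(daily_scores, n_days=2):
    """Average one animal's PavCA scores over its last `n_days` sessions."""
    return mean(daily_scores[-n_days:])

def _assign(values, centroids):
    # Assignment step: each value joins its nearest centroid.
    return [min(range(len(centroids)), key=lambda c: abs(v - centroids[c])) for v in values]

def kmeans_1d(values, k=3, n_init=10, max_iter=100, seed=0):
    """Lloyd's algorithm on 1-D data; keep the restart with the lowest inertia."""
    rng = random.Random(seed)
    best = None
    for _ in range(n_init):
        centroids = rng.sample(values, k)  # random starting centroids
        for _ in range(max_iter):
            labels = _assign(values, centroids)
            # Update step: move each centroid to the mean of its members.
            updated = []
            for c in range(k):
                members = [v for v, lab in zip(values, labels) if lab == c]
                updated.append(mean(members) if members else centroids[c])
            if updated == centroids:
                break
            centroids = updated
        labels = _assign(values, centroids)
        inertia = sum((v - centroids[lab]) ** 2 for v, lab in zip(values, labels))
        if best is None or inertia < best[0]:
            best = (inertia, centroids, labels)
    return best[1], best[2]

def name_phenotypes(centroids, labels):
    """Map cluster ids to GT / IN / ST by centroid position (assumes k = 3)."""
    order = sorted(range(len(centroids)), key=lambda c: centroids[c])
    names = dict(zip(order, ["GT", "IN", "ST"]))
    return [names[lab] for lab in labels]

# Hypothetical per-animal final-days means.
scores = [-0.8, -0.75, -0.7, -0.05, 0.0, 0.05, 0.7, 0.75, 0.8]
centroids, labels = kmeans_1d(scores, k=3)
print(name_phenotypes(centroids, labels))
```

Each run on one-dimensional data is cheap. Raising `n_init` is therefore an inexpensive way to lower the chance of keeping a poor local minimum.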

Transformer Network Protocol for Materials Science

The workflow for implementing Transformer networks in materials informatics involves:

Diagram 2: Transformer Materials Analysis

Implementation Details [35]:

- Data Preparation: Collect crystal structures and properties from databases like Materials Project (MP). For formation energy prediction, use datasets with ~69,000 materials.

- Transformer Architecture: Implement CrystalTransformer model with self-attention mechanisms to generate Universal Atomic Embeddings (ct-UAEs). The model learns atomic fingerprints directly from chemical information in crystal databases.

- Model Training: Pre-train the Transformer frontend on expanded datasets (e.g., MP* with 134,243 materials). Focus on predicting bandgap energy (Eg) and formation energy (Ef).

- Integration with Graph Neural Networks: Transfer the generated atomic embeddings to backend models like CGCNN, MEGNET, or ALIGNN for specific property prediction tasks.

- Clustering and Analysis: Use UMAP to project the multi-task ct-UAEs into a low-dimensional space. Then group the projected embeddings to categorize elements of the periodic table and explore connections between atomic features and crystal properties.

The Scientist's Toolkit: Essential Research Reagents

Table 3: Key Research Reagents and Computational Tools

| Tool/Reagent | Function/Purpose | Example Applications |
|---|---|---|
| Pavlovian Conditioning Chamber [1] | Controlled environment for behavioral conditioning and data collection | Sign-tracking/goal-tracking experiments in rodents |
| PavCA Index Scoring System [1] | Quantifies behavioral tendencies using response bias, probability difference, and latency scores | Objective measurement of ST/GT phenotypes |
| MATLAB Classification Code [1] | Implements k-Means and derivative method algorithms for behavioral classification | Automated phenotype categorization |
| CrystalTransformer [35] | Transformer model for generating universal atomic embeddings (ct-UAEs) | Materials property prediction, atomic feature capture |
| Materials Project Database [35] | Repository of crystal structures and properties for training machine learning models | Formation energy and bandgap prediction |
| UMAP [35] | Dimensionality reduction of high-dimensional embeddings for visualization and grouping | Categorizing elements based on atomic features |
| Enhanced FA-K-means [37] | Evolutionary K-means integrating the Firefly algorithm for automatic clustering | Determining optimal cluster numbers without manual specification |

Comparative Analysis and Research Recommendations

Algorithm Selection Guidelines

Choose k-Means when:

- Working with relatively small sample sizes where complex models might overfit [1]

- Seeking an intuitive, easily interpretable clustering solution [34]

- Computational resources are limited, and implementation simplicity is valued

- Data conforms roughly to spherical cluster shapes with minimal outliers [34]

Opt for the Derivative Method when:

- Classifying behavioral phenotypes based on continuous index scores [1]

- The score distribution shows natural bimodality with unclear boundary regions

- Seeking an objective, data-driven alternative to arbitrary cutoff values

- Working with PavCA Index scores or similarly distributed behavioral metrics

Select Transformer Networks when:

- Dealing with complex, high-dimensional data with intricate relationships [35]

- Sufficient computational resources and large training datasets are available

- Global context and long-range dependencies are important for prediction accuracy

- Transfer learning capabilities can enhance performance on related tasks [35]

Performance and Accuracy Considerations

Each algorithm demonstrates distinct performance characteristics:

k-Means offers reasonable performance for behavioral classification, particularly with smaller sample sizes [1]. However, its accuracy depends heavily on the choice of k and on centroid initialization. Enhanced variants that determine the number of clusters automatically (e.g., Enhanced FA-K-means) can mitigate this limitation [37].

The Derivative Method provides superior objectivity compared to fixed cutoff approaches, effectively adapting to the specific distribution characteristics of a dataset [1]. Its performance is particularly strong when using mean scores from the final days of conditioning protocols.

Transformer Networks deliver notable accuracy gains in materials property prediction, reducing formation energy MAE by up to 18% relative to baseline models [35]. Self-attention lets them capture complex atomic interactions, which enables more accurate modeling of intricate scientific relationships.

Future Research Directions

Emerging trends point toward hybrid approaches that combine the strengths of multiple algorithms [35] [37]. Integrating Transformer-derived embeddings with clustering methods for pattern discovery represents a promising avenue. Additionally, the development of more interpretable Transformer architectures could enhance their utility in scientific domains where model transparency is crucial. Evolutionary approaches that automatically optimize clustering parameters address key limitations of traditional k-Means, making unsupervised learning more accessible for exploratory data analysis [37].

In machine learning, particularly within biological and behavioral sciences, raw data is not directly processable by algorithms. Feature representation, or encoding, is the critical first step that transforms this raw data into a structured numerical format. The choice of encoding method directly influences every subsequent stage of the model pipeline, ultimately dictating the accuracy, interpretability, and generalizability of the final predictive system [38]. Within the specific context of accuracy assessment for behavior classification models, the encoding scheme can determine whether a model captures meaningful, biologically relevant signals or is misled by statistical artifacts in the data.

This guide provides a comparative analysis of prominent encoding methodologies, evaluating their performance, underlying experimental protocols, and suitability for different data types prevalent in biomedical research. The objective is to equip researchers and drug development professionals with the evidence needed to select optimal feature representation strategies, thereby enhancing the reliability of their machine learning-driven discoveries.

Comparative Analysis of Encoding Methods

The table below summarizes the core characteristics, performance, and ideal use cases for a range of common encoding techniques.

Table 1: Comprehensive Comparison of Encoding Methods for Biological and Behavioral Data

| Encoding Method | Core Principle | Reported Performance/Data | Key Advantages | Key Limitations | Ideal Use Cases |
|---|---|---|---|---|---|
| One-Hot Encoding [39] | Represents each category as a unique binary vector. | N/A | Prevents false ordinal relationships; simple to implement. | High dimensionality with many categories; ignores similarity between categories. | Nominal categorical variables with few categories. |
| Label Encoding [39] | Assigns a unique integer to each category. | N/A | Efficient for storage and computation. | Can introduce false ordinal relationships misinterpreted by algorithms. | Categorical features with only two distinct categories. |
| Ordinal Encoding [39] | Assigns integers based on a user-defined ordinal relationship. | N/A | Captures known ordinal relationships between categories. | Not applicable for non-ordinal (nominal) variables. | Ordinal categorical variables (e.g., 'Low', 'Medium', 'High'). |
| Target/Mean Encoding [39] | Replaces categories with the mean of the target variable for that category. | N/A | Incorporates target information; can improve model performance. | High risk of target leakage and overfitting without careful validation. | Categorical features with a high number of categories. |
| End-to-End Learned Embeddings [40] | Model learns optimal encoding from data during training. | Comparable to 20D classical encodings using only 4 dimensions; outperformed classical encodings on the PPI prediction task [40]. | Task-specific; compact representation; reduces manual feature engineering. | Requires sufficient data; "black box" nature can reduce interpretability. | Tasks with large datasets where relevant feature relationships are complex or unknown. |
| k-Means & Derivative Classification [1] | Uses unsupervised clustering (k-Means) or distribution analysis to define categories. | Effective for identifying sign-trackers and goal-trackers in behavioral phenotyping, especially in small samples [1]. | Data-driven; reduces subjective/arbitrary cutoff values. | Sensitive to outliers and initial parameters. | Creating behavioral categories from continuous scores (e.g., PavCA Index). |
| Cross-Modality Encoding (CLEF) [41] | Uses contrastive learning to integrate multiple data types (e.g., sequence, structure). | Outperformed state-of-the-art models in predicting secreted effectors (T3SEs/T4SEs/T6SEs); recognized 41 of 43 experimentally verified T3SEs [41]. | Integrates diverse biological evidence; creates powerful, unified representations. | Computationally intensive; requires multiple data modalities. | Integrating multi-omics data or combining sequence with structural/annotation data. |
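The first four rows can be illustrated in a few lines of plain Python. The helper names are hypothetical, and this is a sketch rather than the method of [39]. The target encoder demonstrates one common leakage safeguard: out-of-fold encoding with smoothing toward the global mean. For determinism, folds are assigned by row index. A real pipeline would shuffle the data before splitting and, for classification, stratify it.

```python
from collections import defaultdict

def one_hot(values):
    """Return the sorted category list and one binary vector per value."""
    categories = sorted(set(values))
    index = {c: i for i, c in enumerate(categories)}
    vectors = [[1 if index[v] == i else 0 for i in range(len(categories))] for v in values]
    return categories, vectors

def label_encode(values):
    """Map each category to an arbitrary integer (here, its sorted position)."""
    index = {c: i for i, c in enumerate(sorted(set(values)))}
    return [index[v] for v in values]

def ordinal_encode(values, order):
    """Map each category to its rank in a user-supplied order."""
    rank = {c: i for i, c in enumerate(order)}
    return [rank[v] for v in values]

def target_encode_oof(values, targets, n_folds=5, smoothing=10.0):
    """Out-of-fold mean encoding: a row is never encoded using its own target.

    Each category mean is shrunk toward the training-fold prior by `smoothing`
    pseudo-counts (must be > 0); categories unseen in a fold get the prior.
    """
    n = len(values)
    if not 2 <= n_folds <= n:
        raise ValueError("need 2 <= n_folds <= number of rows")
    encoded = [0.0] * n
    for fold in range(n_folds):
        train = [i for i in range(n) if i % n_folds != fold]
        prior = sum(targets[i] for i in train) / len(train)
        sums, counts = defaultdict(float), defaultdict(int)
        for i in train:
            sums[values[i]] += targets[i]
            counts[values[i]] += 1
        for i in range(fold, n, n_folds):  # rows held out in this fold
            cat = values[i]
            encoded[i] = (sums[cat] + smoothing * prior) / (counts[cat] + smoothing)
    return encoded

strains = ["A", "B", "A", "C", "B", "A"]
print(one_hot(strains))
print(label_encode(strains))
print(ordinal_encode(["Low", "High", "Medium"], ["Low", "Medium", "High"]))
```

Larger `smoothing` values pull rare categories more strongly toward the prior. This guards against the overfitting risk noted in the table for high-cardinality features.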

Experimental Protocols for Key Encoding Methodologies

Protocol: Benchmarking Learned vs. Classical Amino Acid Encodings

This protocol is derived from a systematic comparison of encoding strategies for deep learning applications in bioinformatics [40].

- Objective: To evaluate the performance of end-to-end learned embeddings against classical, manually-curated encoding schemes (e.g., one-hot, BLOSUM62) across different model architectures and data sizes.

- Datasets:
  - HLA-II Peptide Binding: Predicting affinity of peptides to Human Leukocyte Antigen class II proteins.
  - Protein-Protein Interactions (PPI): Predicting whether two proteins interact, using a dataset from [40].

- Encoding Methods:
  - Classical: One-hot (20D), VHSE8 (8D), BLOSUM62 (20D). Weights were fixed during training.
  - End-to-End Learned: Embedding layers with dimensions from 1 to 32, updated via backpropagation.
  - Control: Random frozen embeddings of the same dimension.

- Model Architectures:
  - Recurrent Neural Networks (RNN) with Long Short-Term Memory (LSTM) units.
  - Convolutional Neural Networks (CNN).
  - A hybrid CNN-RNN model.

- Training Regime: Models were trained on different fractions of the training data (25%, 50%, 75%, 100%) to assess data size dependence.

- Evaluation Metric: Area Under the Curve (AUC) for the HLA-II task; other relevant metrics for the PPI task.
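Two parts of this protocol are straightforward to make concrete: drawing a fixed fraction of the training set and computing AUC. The sketch below is illustrative and is not code from [40]. It computes AUC through its rank interpretation: the probability that a randomly chosen positive receives a higher score than a randomly chosen negative, with ties counted as one half. `training_subset` is a hypothetical helper.

```python
import random

def roc_auc(labels, scores):
    """AUC as P(score of random positive > score of random negative); ties count 0.5."""
    pos = [s for s, y in zip(scores, labels) if y == 1]
    neg = [s for s, y in zip(scores, labels) if y == 0]
    if not pos or not neg:
        raise ValueError("AUC needs at least one positive and one negative example")
    wins = 0.0
    for p in pos:
        for q in neg:
            wins += 1.0 if p > q else 0.5 if p == q else 0.0
    return wins / (len(pos) * len(neg))

def training_subset(examples, fraction, seed=0):
    """Draw a reproducible random subset containing `fraction` of the examples."""
    rng = random.Random(seed)
    k = int(round(fraction * len(examples)))
    return rng.sample(examples, k)

data = list(range(100))  # placeholder training examples
for frac in (0.25, 0.5, 0.75, 1.0):
    print(frac, len(training_subset(data, frac)))
print(roc_auc([1, 1, 0, 0], [0.9, 0.4, 0.5, 0.1]))
```

The pairwise loop is quadratic in the number of examples, which is acceptable for illustration. Library implementations sort the scores instead.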

Table 2: Key Research Reagent Solutions for Encoding Experiments

| Reagent / Resource | Function / Description | Example Application |
|---|---|---|