AI on the Front Lines: How Deep Learning and Satellite Data are Monitoring Deforestation and Glacier Melt

Abstract

This article explores the transformative role of Artificial Intelligence (AI) in monitoring critical environmental changes, specifically deforestation and glacier retreat. Aimed at researchers and scientists, it provides a comprehensive overview of the foundational concepts, cutting-edge methodologies, and practical applications of AI-powered tools. The scope includes an examination of deep learning models like vision transformers and YOLOv8 for predictive forecasting and real-time anomaly detection, a discussion of the challenges and optimization strategies in deploying these technologies, and a comparative analysis of their performance and validation. By synthesizing insights from recent case studies and benchmark datasets, this article serves as a technical guide to the current state and future trajectory of AI in environmental monitoring.

The Urgent Signal: Understanding the Scale of Deforestation and Glacier Loss

The accelerating loss of forests and glaciers represents a dual environmental crisis, driven by anthropogenic activities and climate change. Accurate quantification of this loss is critical for formulating effective mitigation and adaptation strategies. This document provides detailed application notes and protocols, framed within the context of a broader thesis on AI-powered tools, to equip researchers and scientists with methodologies for monitoring deforestation and glacier melting. We present structured quantitative data, experimental protocols for AI-driven monitoring, and essential toolkits to standardize research efforts in these critical domains.

Quantitative Data on Environmental Loss

The following tables consolidate the most current and authoritative data on global forest and glacier loss, providing a baseline for assessment and modeling.

Table 1: Global Forest Loss and Status (2024-2025 Data)

| Metric | Value | Source/Period | Context & Trends |
|---|---|---|---|
| Total Forest Area | 4.14 billion hectares | FAO FRA 2025 [1] | Covers ~32% of global land area [1] |
| Annual Deforestation | 10.9 million hectares/yr | FAO (2015-2025) [1] | Slowed from 17.6 million ha/yr (1990-2000) [1] |
| Net Forest Loss | 4.12 million hectares/yr | FAO (2015-2025) [1] | Fallen from 10.7 million ha/yr (1990s) [1] |
| 2024 Forest Loss | 8.1 million hectares | Forest Declaration 2025 [2] | 63% above trajectory needed for 2030 goal [2] |
| Humid Primary Tropical Forest Loss | Data for 2024 | Forest Declaration 2025 [2] | Spike in 2024, largely from climate-induced fires [2] |
| Forest Degradation | 8.8 million hectares (2024) | Forest Declaration 2025 [2] | Erodes ecosystem integrity and climate resilience [2] |

Table 2: Global Glacier Mass Loss (2000-2023 Data)

| Metric | Value | Source/Period | Context & Trends |
|---|---|---|---|
| Average Annual Mass Loss | -273 ± 16 Gigatonnes/yr | GlaMBIE (2000-2023) [3] | Equivalent to 0.75 ± 0.04 mm/yr of sea-level rise [3] |
| Total Mass Change | -6,542 ± 387 Gigatonnes | GlaMBIE (2000-2023) [3] | Contributed 18 ± 1 mm to global sea-level rise [3] |
| Peak Annual Loss (2023) | -548 ± 120 Gigatonnes | GlaMBIE [3] | Record annual mass loss [3] |
| Acceleration of Loss | 36 ± 10% Increase | GlaMBIE (2000-2011 vs 2012-2023) [3] | From -231 to -314 Gt/yr [3] |
| Cumulative Ice Loss (Reference Glaciers) | Equivalent to 27.3 meters of water | WGMS (1970-2023/24) [4] | 37th consecutive year of ice loss [4] |
| Recent Contribution to Sea-Level Rise | 1.5 ± 0.2 mm (2023) | Dussaillant et al., 2025 [4] | 6% of total loss since 1975/76 occurred in 2023 alone [4] |
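
The sea-level equivalents and the acceleration figure in Table 2 can be cross-checked with simple arithmetic. The sketch below assumes a global ocean area of roughly 3.618 × 10^14 m² and a water density of 1000 kg/m³; neither constant is stated in the source, and the function names are illustrative.

```python
# Assumed constants (not given in the source tables).
OCEAN_AREA_M2 = 3.618e14       # approximate global ocean surface area
WATER_DENSITY_KG_M3 = 1000.0   # density used for the equivalent water layer
KG_PER_GT = 1e12               # kilograms in one gigatonne


def gt_to_mm_sea_level(mass_gt: float) -> float:
    """Convert a lost ice mass in gigatonnes to millimetres of sea-level rise."""
    volume_m3 = mass_gt * KG_PER_GT / WATER_DENSITY_KG_M3
    return volume_m3 / OCEAN_AREA_M2 * 1000.0  # metres -> millimetres


def relative_increase(old: float, new: float) -> float:
    """Fractional increase from an earlier rate to a later rate."""
    return (new - old) / old


if __name__ == "__main__":
    print(f"273 Gt/yr  -> {gt_to_mm_sea_level(273):.2f} mm/yr")
    print(f"6542 Gt    -> {gt_to_mm_sea_level(6542):.1f} mm")
    print(f"231 -> 314 Gt/yr: {relative_increase(231, 314):.0%} increase")
```

Under these assumptions about 362 Gt of ice corresponds to 1 mm of sea-level rise, which reproduces the table values to within their stated uncertainties.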

AI-Powered Monitoring: Protocols and Workflows

Artificial Intelligence is transforming environmental monitoring by enabling the processing of vast geospatial datasets for near real-time detection and predictive forecasting.

Protocol for AI-Based Deforestation Risk Forecasting

This protocol outlines the methodology for proactive deforestation forecasting, as pioneered by Google's ForestCast [5].

- Objective: To predict the location and timing of future deforestation risk using a deep learning model based solely on satellite data, ensuring scalability and consistency across regions.

- Experimental Workflow:

  - Input Data Acquisition:

    - Source historical satellite imagery from Landsat and Sentinel-2 missions.

    - Generate a "change history" input, a satellite-derived layer that identifies every pixel that has already been deforested and assigns a year to that event [5].

  - Model Training:

    - Employ a vision transformer-based model architecture, which processes entire tiles of satellite pixels to capture crucial spatial context and recent deforestation patterns [5].

    - Train the model using satellite-derived labels of past deforestation. The change history input is found to be the most critical predictive factor [5].

  - Risk Forecasting & Validation:

    - The model outputs a tile of deforestation risk predictions.

    - Validate model accuracy by comparing predictions against held-out historical data and benchmark against traditional models that rely on patchy geospatial data (e.g., roads, population density) [5].

- Application: The resulting risk forecasts enable governments, corporations, and communities to target conservation resources, enforcement, and incentives to high-risk areas before deforestation occurs [5].
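
The "change history" input described in this protocol can be illustrated with a small array operation. The following is a minimal sketch, not the ForestCast implementation: it assumes one boolean loss mask per year is already available, and the function name `build_change_history` and the convention of using 0 for never-deforested pixels are hypothetical.

```python
from typing import Sequence

import numpy as np


def build_change_history(loss_masks: np.ndarray, years: Sequence[int]) -> np.ndarray:
    """Return the year of first detected loss per pixel (0 = never deforested).

    loss_masks: boolean array of shape (n_years, height, width), where True
    marks pixels flagged as deforested in the corresponding year.
    """
    loss_masks = np.asarray(loss_masks, dtype=bool)
    if loss_masks.shape[0] != len(years):
        raise ValueError("one mask per year is required")
    year_values = np.asarray(years)
    ever_lost = loss_masks.any(axis=0)
    # argmax on a boolean array returns the index of the first True along time.
    first_index = loss_masks.argmax(axis=0)
    return np.where(ever_lost, year_values[first_index], 0)


if __name__ == "__main__":
    masks = np.zeros((3, 2, 2), dtype=bool)
    masks[0, 0, 0] = True   # lost in the first year
    masks[2, 0, 1] = True   # lost in the last year
    masks[1:, 1, 1] = True  # flagged in two years; the first one counts
    print(build_change_history(masks, [2020, 2021, 2022]))
```

A single-band layer of this kind is compact, which fits the source's description of the change history as a small but information-dense input.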

Protocol for Glacier Calving Front Analysis

This protocol details the use of AI to analyze glacier retreat, specifically for marine-terminating glaciers, which are major contributors to ice loss [6].

- Objective: To automatically detect glacier calving fronts from a large volume of satellite imagery to track long-term and seasonal changes in glacier extent.

- Experimental Workflow:

  - Data Collection:

    - Compile over one million optical and radar satellite images from open-access sources like Google Earth Engine for the target glaciers (e.g., 149 in Svalbard) [6].

  - AI Model Training:

    - Train a deep learning model to interpret both optical and radar imagery, enabling it to identify the calving front under diverse environmental conditions and for various glacier types [6].

  - Analysis and Time-Series Generation:

    - Apply the trained model to the full satellite image archive to automatically map the position of the calving front in each image.

    - Generate a time series of glacier front positions from 1985 to the present, allowing for analysis of seasonal cycles and long-term retreat trends [6].

- Key Findings: Application of this protocol in Svalbard revealed that 91% of glaciers have significantly shrunk since 1985, with 62% exhibiting seasonal advance-retreat cycles linked to ocean temperatures [6].
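
Once a front-position time series exists for each glacier, a long-term trend can be estimated with an ordinary least-squares fit. The sketch below is one simple way to do this, not the method used in [6]: it assumes positions are measured in metres along a flowline (positive values mean advance), and the zero threshold for calling a glacier "retreating" is a placeholder for a proper significance test.

```python
import numpy as np


def retreat_rate_m_per_year(decimal_years, front_position_m) -> float:
    """Slope of a linear fit to front position; negative values mean retreat."""
    slope, _intercept = np.polyfit(decimal_years, front_position_m, deg=1)
    return float(slope)


def share_retreating(series_by_glacier: dict, threshold_m_per_year: float = 0.0) -> float:
    """Fraction of glaciers whose fitted rate is below the threshold."""
    rates = [retreat_rate_m_per_year(t, x) for t, x in series_by_glacier.values()]
    retreating = sum(rate < threshold_m_per_year for rate in rates)
    return retreating / len(rates)


if __name__ == "__main__":
    years = np.arange(1985, 2024, dtype=float)
    synthetic = {
        "glacier_a": (years, 1000.0 - 20.0 * (years - 1985)),  # steady retreat
        "glacier_b": (years, 500.0 + 5.0 * (years - 1985)),    # slow advance
    }
    print(share_retreating(synthetic))
```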

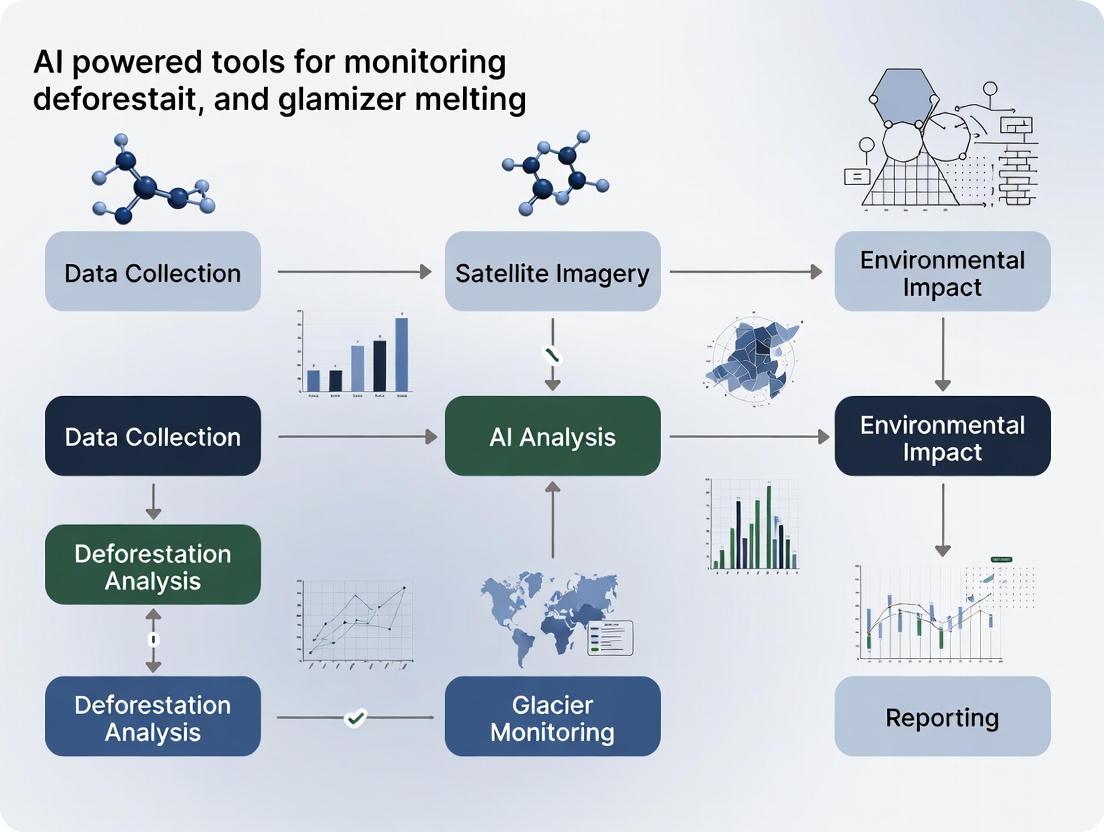

The workflow for these AI-powered monitoring approaches is summarized below.

The Scientist's Toolkit: Research Reagent Solutions

This section details essential materials, datasets, and platforms that function as "research reagents" for conducting experiments in AI-powered environmental monitoring.

Table 3: Essential Tools and Platforms for Environmental Monitoring Research

| Tool / Platform Name | Type | Primary Function in Research |
|---|---|---|
| Global Forest Watch (GFW) [7] | Interactive Web Platform | Provides access to near real-time deforestation alerts and over 65 global forest data sets for visualization and analysis. |
| Google Earth Engine [5] | Cloud Computing Platform | Offers a massive catalog of satellite imagery and geospatial data for scientific analysis and processing at scale. |
| Landsat & Sentinel-2 | Satellite Imagery | Source of multi-spectral optical imagery for tracking land cover change, including forest loss and glacier surface changes. |
| Synthetic Aperture Radar (SAR) (e.g., Capella SAR, TerraSAR-X) [8] | Satellite Imagery | Provides all-weather, day-and-night radar imagery capable of penetrating clouds, essential for monitoring in perpetually cloudy regions and measuring glacier surface deformation. |
| GLAD Alert System [7] | Deforestation Alert System | Delivers high-resolution, weekly alerts on tropical forest loss, enabling rapid detection and response. |
| Randolph Glacier Inventory (RGI) [4] | Glacier Database | A global inventory of glacier outlines, serving as a fundamental baseline for glacier mass balance studies. |
| Global Navigation Satellite System (GNSS) (e.g., Trimble GNSS) [8] | Ground-based Sensor | Provides highly precise, in-situ measurements of ice movement and position to validate satellite-based observations. |
| LiDAR (e.g., Terra LiDAR) [8] | Airborne/Drone-based Sensor | Generates high-resolution 3D models of glacier surface topography and forest structure for detailed volumetric change detection. |

Visualization: From Satellite Data to Actionable Insight

The logical pathway from raw data to actionable knowledge involves multiple steps of AI processing and analysis, which can be visualized as a flow of information through a structured pipeline.

Why Traditional Monitoring Methods Are Falling Short

Traditional methods for monitoring environmental change, such as manual field surveys and basic satellite image analysis, increasingly fail to meet contemporary research demands. They are limited in spatial coverage, temporal resolution, and processing efficiency, which leaves critical gaps in our understanding of rapidly evolving climate impacts. In deforestation research, manual interpretation of satellite imagery remains labor-intensive and often fails to provide real-time alerts, resulting in delayed intervention [9]. Similarly, in glaciology, fieldwork in remote, harsh environments like the Arctic is challenging, expensive, and logistically constrained, severely limiting the scale and frequency of data collection [6]. The volume of data now available from modern satellite constellations, on the order of millions of images, has outpaced the capacity of manual analysis [6] [10]. This bottleneck hinders the timely detection of abrupt changes, such as illegal logging or glacier calving events, and ultimately compromises the responsiveness of scientific and policy interventions. The transition to AI-powered monitoring frameworks is therefore not merely an enhancement but a necessity for producing actionable, timely, and accurate environmental data.

Quantitative Analysis: Traditional vs. AI-Enhanced Monitoring

The limitations of traditional monitoring methods and the advantages of AI-driven approaches are quantitatively evident across several performance metrics. The following table synthesizes key findings from recent studies to facilitate a direct comparison.

Table 1: Performance Comparison of Traditional and AI-Enhanced Monitoring Methods

| Monitoring Focus | Traditional Method & Key Limitation | AI-Enhanced Approach & Documented Improvement |
|---|---|---|
| Global Glacier Mass Change | Relies on sparse, inhomogeneous data from ~500 in-situ glaciers, leading to assessment challenges [3]. | A community effort (GlaMBIE) homogenized data from 35 teams, finding a 36±10% increase in mass loss rate from 2000-2011 to 2012-2023 [3]. |
| Deforestation Anomaly Detection | Manual satellite image processing is slow; by the time loss is identified, "irreversible environmental damage has already occurred" [9]. | A YOLOv8-LangChain framework achieved a 24% increase in recall and significantly reduced false positives, enabling real-time alerts [9]. |
| Calving Front Monitoring | Manual delineation of glacier fronts from satellite images is impractical across millions of images and hundreds of glaciers [6]. | A deep learning model automatically mapped 149 marine-terminating glaciers from over 1 million satellite images, revealing 91% have retreated since 1985 [6] [10]. |
| Forest Carbon Sequestration | Data gaps and a lack of capacity, especially in the Global South, hinder accurate carbon accounting [11]. | AI models (e.g., MATRIX) harness data from 1.8 million global forest plots to provide precise, transparent estimates of aboveground biomass growth [11]. |

Experimental Protocols for AI-Powered Environmental Monitoring

Protocol 1: Real-Time Deforestation Anomaly Detection

This protocol details the methodology for implementing a real-time deforestation detection system using the integrated YOLOv8 and LangChain agent framework, as described in a study published in Scientific Reports [9].

- Objective: To automatically detect indicators of deforestation (e.g., tree stumps, logging machinery) in satellite or drone imagery and generate geolocated alerts.

- Materials and Reagents:

  - Satellite/Drone Imagery: High-resolution optical or SAR imagery. Sources include Sentinel-2, Landsat-8, or commercial providers.

  - Computing Infrastructure: GPU-accelerated workstation or cloud instance for model training and inference.

  - Software Framework: Python with PyTorch for YOLOv8 (the reference Ultralytics implementation is PyTorch-based), and LangChain for building agentic AI.

  - Geographic Information System (GIS): For visualizing and reporting alerts (e.g., ArcGIS, QGIS).

- Step-by-Step Procedure:

  - Data Curation and Annotation: Collect a large dataset of satellite or drone images. Annotate images, drawing bounding boxes around deforestation indicators (e.g., "treestump," "bulldozer," "logpile").

  - Model Training and Optimization:

    - Partition the annotated dataset into training, validation, and test sets (e.g., 70/15/15 split).

    - Train the YOLOv8 object detection model on the training set. Monitor metrics like `box_loss` and `cls_loss`.

    - Validate the model on the validation set, using mean Average Precision (mAP50) as the primary accuracy metric.

  - LangChain Agent Integration:

    - Develop a LangChain agent equipped with contextual rules (e.g., "ignore single vehicles in established roads").

    - The agent receives initial predictions from YOLOv8 and performs dynamic threshold adjustment to reduce false positives.

    - Implement a reinforcement learning-based feedback loop where the agent's adjustments are rewarded or penalized based on accuracy.

  - Deployment and Alerting:

    - Deploy the integrated model in a live inference pipeline that processes incoming satellite imagery.

    - The LangChain agent generates finalized predictions and triggers a GIS-based reporting module.

    - The system outputs actionable alerts with geographic coordinates, confidence scores, and timestamps.
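
The contextual filtering and alerting steps can be sketched in plain Python to show the intended logic. This is an illustration only: it uses neither the YOLOv8 nor the LangChain APIs, omits the reinforcement learning loop, and every threshold, label set, and function name below is a hypothetical stand-in for values that would come from validation in [9].

```python
from dataclasses import dataclass
from datetime import datetime, timezone
from typing import List, Optional


@dataclass
class Detection:
    label: str
    confidence: float
    lon: float
    lat: float
    on_established_road: bool = False


# Hypothetical per-class base thresholds; real values would come from validation.
BASE_THRESHOLDS = {"treestump": 0.50, "bulldozer": 0.60, "logpile": 0.55}
VEHICLE_LABELS = {"bulldozer"}


def adjusted_threshold(label: str, n_other_indicators: int) -> float:
    """Lower the threshold when other indicators in the same tile corroborate."""
    threshold = BASE_THRESHOLDS.get(label, 0.70)
    if n_other_indicators >= 2:
        threshold -= 0.10
    return threshold


def build_alerts(detections: List[Detection],
                 timestamp: Optional[datetime] = None) -> List[dict]:
    """Apply contextual rules to one tile's detections and emit alert records."""
    timestamp = timestamp or datetime.now(timezone.utc)
    alerts = []
    for det in detections:
        n_others = len(detections) - 1
        # Contextual rule from the protocol: ignore single vehicles on established roads.
        if det.label in VEHICLE_LABELS and det.on_established_road and n_others == 0:
            continue
        if det.confidence >= adjusted_threshold(det.label, n_others):
            alerts.append({
                "label": det.label,
                "confidence": det.confidence,
                "lon": det.lon,
                "lat": det.lat,
                "timestamp": timestamp.isoformat(),
            })
    return alerts
```

Each alert record carries the three fields the protocol calls for: geographic coordinates, a confidence score, and a timestamp.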

Protocol 2: AI-Based Glacier Calving Front Dynamics

This protocol outlines the procedure for using a deep learning model to track the retreat of marine-terminating glaciers, as applied to Svalbard [6] [10].

- Objective: To automatically delineate glacier calving fronts from long-term satellite imagery archives to analyze retreat patterns.

- Materials and Reagents:

  - Satellite Imagery Archive: Multi-decadal optical (Landsat, Sentinel-2) and radar (Sentinel-1) imagery. The study used open-access data from Google Earth Engine [6].

  - Model Architecture: A deep learning model, such as a U-Net variant, suited for semantic segmentation.

  - Ground-Truth Data: A subset of images with manually digitized calving fronts for model training and validation.

- Step-by-Step Procedure:

  - Data Acquisition and Preprocessing:

    - Use Google Earth Engine to compile a time series of over one million satellite images for the target glaciers from 1985 to the present.

    - Preprocess images: apply cloud masking for optical data, and calibrate and filter radar data.

  - Model Training:

    - Train the deep learning model to perform semantic segmentation, teaching it to identify the boundary between ice and ocean in both optical and radar images.

    - The model is trained to be robust to various conditions, including different seasons and lighting.

  - Inference and Time-Series Analysis:

    - Apply the trained model to the entire historical image archive for each of the 149 glaciers.

    - The model automatically outputs the pixel location of the calving front for each available image date.

  - Trend Calculation and Visualization:

    - Calculate the annual and seasonal advance/retreat distance for each glacier front from the time-series of positions.

    - Aggregate data to identify regional patterns, correlate retreat rates with ocean temperature data, and pinpoint peak retreat years (e.g., the widespread retreat in 2016 [6]).
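
Turning a segmentation result into calving-front pixel locations can be done with a neighbourhood test. The sketch below assumes the model yields separate boolean masks for ice and ocean (so that ice bordering rock is not counted as front) and treats an ice pixel as part of the front if any 4-connected neighbour is ocean; both choices, and the function name, are illustrative rather than taken from [6].

```python
import numpy as np


def calving_front_pixels(ice_mask: np.ndarray, ocean_mask: np.ndarray) -> np.ndarray:
    """Return (row, col) indices of ice pixels with a 4-connected ocean neighbour."""
    ice = np.asarray(ice_mask, dtype=bool)
    ocean = np.asarray(ocean_mask, dtype=bool)
    # Pad with False so pixels on the image edge have no ocean neighbour outside it.
    padded = np.pad(ocean, 1, mode="constant", constant_values=False)
    neighbour_is_ocean = (
        padded[:-2, 1:-1]    # neighbour above
        | padded[2:, 1:-1]   # neighbour below
        | padded[1:-1, :-2]  # neighbour to the left
        | padded[1:-1, 2:]   # neighbour to the right
    )
    return np.argwhere(ice & neighbour_is_ocean)


if __name__ == "__main__":
    ice = np.zeros((3, 4), dtype=bool)
    ice[:, :2] = True          # ice occupies the two left-hand columns
    ocean = ~ice               # the remaining columns are ocean
    print(calving_front_pixels(ice, ocean))
```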

Visualization of AI Workflows

The integration of AI into environmental monitoring follows a structured pipeline from data acquisition to actionable insight. The following diagram illustrates the core workflow.

Figure 1: Generalized AI Environmental Monitoring Workflow.

Table 2: Essential Research Reagent Solutions for AI-Powered Monitoring

| Research 'Reagent' | Type/Function | Application in Protocol |
|---|---|---|
| YOLOv8 Model | Object Detection Algorithm | Rapidly identifies deforestation indicators in imagery [9]. |
| LangChain Agent | Agentic AI Framework | Provides contextual reasoning and dynamic threshold adjustment to refine object detection [9]. |
| U-Net Architecture | Semantic Segmentation Model | Precisely delineates glacier calving fronts pixel-by-pixel in satellite images [6] [12]. |
| Google Earth Engine | Cloud-Based Geospatial Platform | Provides access to and processing of massive satellite imagery archives [6]. |
| Sentinel-2 Imagery | Multi-Spectral Satellite Data | Primary data source for optical monitoring of land cover and glaciers [12]. |
| Sentinel-1 Imagery | Synthetic Aperture Radar (SAR) Data | Enables monitoring through cloud cover, critical for tropical and polar regions [9] [12]. |
| MATRIX Model | AI Model for Forest Biomass | Estimates forest growth and carbon sequestration potential from global plot data [11]. |

The evidence is clear: traditional monitoring methods are inadequate for the scale and urgency of contemporary environmental challenges. The quantitative data and experimental protocols outlined here show that AI-powered tools are not merely incremental improvements but represent a paradigm shift. By overcoming the critical shortfalls in speed, scale, and accuracy, AI enables a transition from retrospective documentation to proactive, predictive monitoring. This capacity is vital for safeguarding critical resources, such as the freshwater supplied by glaciers to over two billion people [13] [14] and the carbon sequestration services of the world's forests [11]. The integration of AI into the environmental scientist's toolkit is therefore an essential step toward building a resilient and sustainable future.

Application Note 1: Proactive Deforestation Risk Forecasting

Background and Rationale

Forests play a critical role in storing carbon, regulating rainfall, and harboring terrestrial biodiversity. However, the world continues to lose forests at an alarming rate, with one recent year recording a loss of 6.7 million hectares of tropical forest—a record high and double the amount lost the previous year [5]. Traditionally, satellite data has provided essential measurement of this loss reactively, documenting damage after it has occurred. The paradigm shift to a proactive approach involves forecasting where deforestation is likely to happen, enabling interventions before forests are lost [5].

Table: Key Quantitative Data for Deforestation Forecasting

| Metric | Reactive Monitoring (Historical) | Proactive Forecasting (AI-Powered) |
|---|---|---|
| Primary Function | Measuring past and present forest loss [5] | Predicting future areas of deforestation risk [5] |
| Temporal Resolution | Near real-time (after loss occurs) [15] | Forward-looking (risk assessment for future timeframes) [5] |
| Key AI Input Data | Satellite imagery (Landsat, Sentinel-2) [15] | Satellite imagery plus "change history" of pixel-level deforestation over time [5] |
| Spatial Application | Consistent across regions [15] | Consistent and scalable across regions (e.g., tropical forests in Latin America and Africa) [5] |

Experimental Protocol: Deforestation Risk Modeling with a "Pure Satellite" Approach

Application: Training a deep learning model to predict pixel-level deforestation risk.

Materials and Workflow:

- Data Acquisition: Source satellite imagery and derived data products.

- Data Preparation: Structure the data into tiles of satellite pixels for model input. The model is designed to receive an entire tile as input to capture crucial spatial context and the pattern of recent deforestation fronts [5].

- Model Training:

  - Architecture: Employ a custom model based on vision transformers, optimized for processing spatial data [5].

  - Process: Train the model using historical satellite-derived labels of deforestation. The model learns to associate the input tile data (including change history) with subsequent deforestation events [5].

- Validation & Benchmarking: Evaluate model performance by its ability to accurately predict tile-to-tile variation in deforestation amounts and, within a tile, identify the pixels most likely to be deforested next. Compare accuracy against previous methods that rely on patchy geospatial data like roads [5].
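
One way to quantify how well a model identifies "the pixels most likely to be deforested next" within a tile is precision at k: the fraction of the k highest-risk pixels that were in fact deforested afterwards. The source does not state which metric was used in [5], so the sketch below is an assumed, commonly used choice with illustrative names.

```python
import numpy as np


def precision_at_k(risk: np.ndarray, later_loss: np.ndarray, k: int) -> float:
    """Fraction of the k highest-risk pixels in a tile that were later deforested."""
    risk = np.asarray(risk, dtype=float).ravel()
    later_loss = np.asarray(later_loss, dtype=bool).ravel()
    if risk.shape != later_loss.shape:
        raise ValueError("risk and later_loss must cover the same pixels")
    if not 0 < k <= risk.size:
        raise ValueError("k must be between 1 and the number of pixels")
    top_k = np.argsort(risk)[::-1][:k]  # indices of the k largest risk scores
    return float(later_loss[top_k].mean())


if __name__ == "__main__":
    risk_tile = np.array([[0.9, 0.1], [0.8, 0.2]])
    observed = np.array([[True, False], [False, True]])
    print(precision_at_k(risk_tile, observed, k=2))
```

Comparing this score between the satellite-only model and a baseline built on road or population layers gives a like-for-like benchmark on the same held-out tiles.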

Application Note 2: AI-Powered Glacier Dynamics and Calving Monitoring

Background and Rationale

Glaciers, particularly in vulnerable regions like the Arctic, are highly sensitive to climate change. The Svalbard archipelago, for instance, is warming up to seven times faster than the global average [16]. The complete melting of Svalbard's glaciers could raise global sea levels by 1.7 cm, making accurate monitoring of their dynamics vital [16]. A key process driving ice loss in marine-terminating glaciers is calving, where large chunks of ice break off into the ocean. Understanding this process is essential for predicting future glacier mass loss and subsequent sea-level rise [16].

Table: AI-Driven Insights into Glacier Retreat

| Parameter | Svalbard-Wide Analysis (1985-2023) | Seasonal Dynamics |
|---|---|---|
| Scope of Retreat | 91% of marine-terminating glaciers significantly retreated [16] | 62% of glaciers exhibit seasonal retreat-advance cycles [16] |
| Total Area Lost | >800 km² (Larger than New York City) [16] | Seasonal changes often exceed annual changes [16] |
| Annual Rate of Loss | ~24 km²/year (Nearly twice the size of Heathrow Airport) [16] | Retreat is triggered almost immediately by ocean warming in spring [16] |
| Extreme Event (2016) | Calving rates doubled in response to extreme warming and record rainfall [16] | N/A |

Experimental Protocol: Large-Scale Glacier Calving Front Mapping with AI

Application: Using an AI model to automatically map glacier calving fronts from decades of satellite imagery to analyze retreat rates and patterns.

Materials and Workflow:

- Data Collection: Compile a massive dataset of millions of satellite images of the target glaciers over the study period (e.g., 1985-2023 for a Svalbard study) [16].

- Model Training:

  - Task: Train a deep learning model, such as a U-Net or DeepLab derivative, for semantic segmentation [12]. The model is tasked with identifying the precise boundary (calving front) between ice and ocean in each image.

  - Advantage: This AI method is highly scalable and reproducible, overcoming the labour-intensity and potential inconsistency of manual digitization by human researchers [16].

- Trend Analysis: Use the AI-generated time series of calving front positions to calculate rates of retreat, identify seasonal patterns, and correlate these changes with external climate data such as ocean and air temperature records [16].

- Physical Insight Generation: Combine machine learning with high-resolution satellite and airplane data, constrained by known physics, to derive new constitutive models that describe the ice's viscosity and resistance to flow. This can reveal fundamental physics, such as the anisotropic nature of ice in extension zones, which is not captured in standard lab-derived models [17].

Visualization: Proactive Environmental Monitoring Workflow

The following diagram illustrates the integrated workflow for transitioning from reactive monitoring to proactive forecasting in both deforestation and glacier research.

The Scientist's Toolkit: Essential Research Reagents & Materials

Table: Key Resources for AI-Powered Environmental Monitoring

| Research 'Reagent' | Function / Application | Specifications & Notes |
|---|---|---|
| Optical Satellite Imagery (Landsat-8, Sentinel-2) | Primary data source for land cover classification, change detection (deforestation), and glacier mapping [12] [5]. | Affected by cloud cover. Provides multispectral data crucial for analyzing vegetation health and ice surfaces [12]. |
| Synthetic Aperture Radar (SAR) Data (Sentinel-1) | Complementary data source for all-weather, day-and-night monitoring, penetrating cloud cover [12]. | Vital for continuous monitoring in perennially cloudy regions like the tropics or polar winters [12]. |
| 'Change History' Data Layer | A satellite-derived input mapping the history of pixel-level changes (e.g., past deforestation). Serves as the most critical predictive feature for deforestation risk models [5]. | A small but information-dense input that captures trends and moving fronts of environmental change [5]. |
| Deep Learning Model Architectures (Vision Transformers, U-Net) | Core analytical engines. Vision transformers are used for scalable deforestation prediction [5], while U-Net is widely used for semantic segmentation tasks like mapping glacier calving fronts [12]. | Model choice depends on the task (prediction vs. segmentation). Computational demands can be high [12] [5]. |
| High-Resolution Airplane & Satellite Radar | Provides detailed topography and ice thickness data for building high-fidelity geophysical models of ice sheets [17]. | Used to validate and inform AI models, connecting large-scale patterns with physical processes [17]. |
| Physics-Informed Deep Learning Framework | A methodology that integrates physical laws (e.g., laws of ice flow) as constraints within the machine learning model during training [17]. | Ensures model outputs are not just data-driven but also physically plausible, leading to more robust and interpretable discoveries [17]. |

Application Notes: AI for Deforestation and Glacier Monitoring

The integration of deep learning and computer vision with remote sensing is transforming environmental monitoring, enabling precise, large-scale, and automated analysis of deforestation and glacier retreat [18]. These technologies provide researchers with the tools to understand and quantify environmental change with unprecedented accuracy and speed.

Deforestation Monitoring

Global Deforestation Drivers (2001-2022)

Deep learning models, particularly convolutional neural networks (CNNs), have become essential for automatically detecting deforestation and classifying its drivers from satellite imagery [19]. A global dataset developed by the World Resources Institute and Google DeepMind uses a ResNet-based deep learning model to determine the reasons for forest loss at a one-kilometer spatial resolution, distinguishing between seven primary drivers [20].

Table 1: Global Drivers of Tree Cover Loss (2001-2022)

| Driver Category | Reported Share of Tree Cover Loss (scope as noted) |
|---|---|
| Permanent Agriculture | 34.8 ± 2.6% |
| Wildfires | 49.5% (of 2024 tropical primary forest loss) |
| Logging | 26.3% (in Asia) |
| Shifting Cultivation | Data from source |
| Hard Commodities | Data from source |
| Settlements and Infrastructure | Data from source |
| Other Natural Disturbances | Data from source |

Table 2: Regional Deforestation Drivers in Asia

| Driver | Percentage |
|---|---|
| Wildfires | 65.4% |
| Logging | 26.3% |
| Permanent Agriculture | 2.5% |

For specific regions like the Amazon, U-Net models applied to Sentinel-1 radar data have achieved high accuracy, with Forest (Fo) and Deforestation (De) classes reaching F1-Scores of 0.97 and 0.92, respectively [21]. However, in geographically complex and fragmented landscapes like India, a 1 km spatial resolution may be insufficient, necessitating a multi-pronged approach that combines satellite data with additional field observations and biophysical data for a comprehensive understanding [20].
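
For reference, the F1-score reported above is the harmonic mean of precision and recall for a class. The sketch below computes it from pixel counts; the counts in the usage example are invented for illustration and are not taken from [21].

```python
from typing import Tuple


def precision_recall_f1(tp: int, fp: int, fn: int) -> Tuple[float, float, float]:
    """Compute precision, recall, and F1 for one class from confusion counts."""
    precision = tp / (tp + fp) if (tp + fp) else 0.0
    recall = tp / (tp + fn) if (tp + fn) else 0.0
    if precision + recall == 0:
        return precision, recall, 0.0
    f1 = 2 * precision * recall / (precision + recall)
    return precision, recall, f1


if __name__ == "__main__":
    # Hypothetical counts for a "Deforestation" class.
    print(precision_recall_f1(tp=92, fp=8, fn=8))
```

Because F1 ignores true negatives, it is better suited than overall accuracy to classes such as deforestation that cover a small share of the image.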

Glacier Monitoring

AI is critical for mapping glaciers and understanding climate change impacts. A deep learning model called GlaViTU (Glacier-VisionTransformer-U-Net) has demonstrated performance that matches expert-level delineation accuracy for global glacier mapping [22].

Table 3: GlaViTU Model Performance by Glacier Type

| Glacier Type | Region Example | Model Performance (Intersection over Union) |
|---|---|---|
| Debris-Rich Areas | High-Mountain Asia | >0.75 |
| General/Previously Unobserved | Various | >0.85 |
| Clean-Ice-Dominated | Various | >0.90 |
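
Intersection over Union (IoU), the metric in Table 3, compares a predicted glacier mask with a reference mask. The following minimal sketch assumes both are boolean rasters on the same grid; returning 1.0 when both masks are empty is a convention chosen here, not something specified in [22].

```python
import numpy as np


def intersection_over_union(predicted: np.ndarray, reference: np.ndarray) -> float:
    """IoU between two boolean masks of identical shape."""
    predicted = np.asarray(predicted, dtype=bool)
    reference = np.asarray(reference, dtype=bool)
    if predicted.shape != reference.shape:
        raise ValueError("masks must share the same grid")
    union = np.logical_or(predicted, reference).sum()
    if union == 0:
        return 1.0  # both masks empty: treated as perfect agreement
    intersection = np.logical_and(predicted, reference).sum()
    return float(intersection / union)


if __name__ == "__main__":
    pred = np.array([[1, 1], [0, 0]], dtype=bool)
    ref = np.array([[1, 0], [1, 0]], dtype=bool)
    print(intersection_over_union(pred, ref))
```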

The application of AI to study marine-terminating glaciers in Svalbard, Norway, has revealed that 91% of glaciers have significantly shrunk since 1985, with the peak retreat rate occurring in 2016 during an unusual warm period [6]. These models are trained on both optical and radar satellite imagery, enabling them to identify calving fronts under diverse environmental conditions with high accuracy [6].

Experimental Protocols

Protocol 1: Deforestation Driver Classification using ResNet

Objective: To train a deep learning model for identifying and classifying drivers of deforestation from satellite imagery at a 1 km spatial resolution.

Materials:

- Satellite imagery (e.g., from Landsat or Sentinel-2)

- 1 km grid cells with at least 0.5% tree cover loss, visually interpreted by experts (for training data)

- Population and biophysical data inputs

- Computational resources (GPU recommended)

Methodology:

- Data Preparation: Assemble a global dataset of satellite images. Define training samples as 1 km grid cells with at least 0.5% tree cover loss. These samples must be visually interpreted and labeled by domain experts according to the seven driver classes [20].

- Model Training: Train a ResNet-based deep learning model. Inputs include satellite imagery along with relevant population and biophysical data. The model learns to associate spatial patterns with a specific driver class (e.g., permanent agriculture, wildfires, logging) [20].

- Model Refinement: Address the challenge of regional variations in driver patterns (e.g., different types of logging) by collecting additional, diverse training data to improve the model's recognition capabilities across different geographies [20].

- Analysis: Apply the trained model to analyze satellite imagery from 2001-2022 to determine the dominant driver of tree cover loss for each 1 km grid cell globally [20].
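
The final analysis step, assigning a dominant driver to each grid cell and summarizing shares, can be sketched as follows. The code assumes the classifier outputs one probability per driver class for each 1 km cell and that a tree cover loss area is known per cell; the class order, array layout, and function names are illustrative and not taken from [20].

```python
import numpy as np

# The seven driver classes named in this article; the order is arbitrary.
DRIVERS = [
    "Permanent Agriculture",
    "Wildfires",
    "Logging",
    "Shifting Cultivation",
    "Hard Commodities",
    "Settlements and Infrastructure",
    "Other Natural Disturbances",
]


def dominant_driver(probabilities: np.ndarray) -> np.ndarray:
    """Index of the most probable driver per cell; input shape (n_cells, 7)."""
    return np.argmax(probabilities, axis=1)


def driver_shares(dominant: np.ndarray, loss_area_ha: np.ndarray) -> dict:
    """Share of total loss area attributed to each driver class."""
    loss_area_ha = np.asarray(loss_area_ha, dtype=float)
    total = loss_area_ha.sum()
    return {
        name: float(loss_area_ha[dominant == index].sum() / total)
        for index, name in enumerate(DRIVERS)
    }


if __name__ == "__main__":
    probs = np.eye(7)[[0, 2, 1]]           # three cells with one-hot predictions
    areas = np.array([10.0, 30.0, 60.0])   # hypothetical loss area per cell
    print(driver_shares(dominant_driver(probs), areas))
```

Weighting by loss area rather than counting cells keeps a cell with 0.5% loss from carrying the same weight as a fully cleared one.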

Deforestation Analysis Workflow

Protocol 2: Multi-Temporal Glacier Calving Front Detection

Objective: To automatically detect and track the calving fronts of marine-terminating glaciers over multiple decades using a deep learning model.

Materials:

- Over 1 million satellite images (optical and radar) from sources like Google Earth Engine, spanning from 1985 to present [6]

- Reference glacier inventory data (e.g., GLIMS, Randolph Glacier Inventory) [22]

- Computational resources for deep learning

Methodology:

- Model Training: Train a deep learning model to automatically detect calving fronts from a massive dataset of satellite images. The model must be trained on both optical and radar imagery to function under various environmental conditions and for different glacier types [6].

- Inference and Tracking: Apply the trained model to analyze the entire time series of satellite imagery for each of the 149 studied glaciers in Svalbard. The model outputs the position of the calving front for each available image date [6].

- Time-Series Analysis: Calculate retreat and advance rates by tracking the change in the calving front position over time. Analyze the data for seasonal patterns (summer retreat, winter advance) and long-term trends [6].

- Correlation with Climate Variables: Correlate the timing and magnitude of glacier retreat with ocean temperature data and atmospheric events (e.g., atmospheric blocking) to understand the climatic drivers of ice loss [6].
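
The time-series and correlation steps can be sketched with a least-squares trend and a Pearson coefficient. The example below uses only the standard library. The front positions and ocean temperatures are hypothetical values chosen for illustration, and the sign convention (position measured along a flowline, decreasing as the front retreats) is an assumption rather than something specified in [6].

```python
from math import sqrt

def linear_trend(times, values):
    """Least-squares slope of values against times."""
    n = len(times)
    mean_t = sum(times) / n
    mean_v = sum(values) / n
    cov = sum((t - mean_t) * (v - mean_v) for t, v in zip(times, values))
    var = sum((t - mean_t) ** 2 for t in times)
    return cov / var

def pearson_r(x, y):
    """Pearson correlation coefficient between two equally long series."""
    n = len(x)
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    std_x = sqrt(sum((a - mean_x) ** 2 for a in x))
    std_y = sqrt(sum((b - mean_y) ** 2 for b in y))
    return cov / (std_x * std_y)

# Hypothetical observations for one glacier.
years = [2000, 2005, 2010, 2015, 2020]
front_position_m = [1000.0, 900.0, 800.0, 700.0, 600.0]  # along-flowline position
ocean_temp_c = [1.0, 1.5, 2.0, 2.5, 3.0]

rate_m_per_year = linear_trend(years, front_position_m)  # negative means retreat
r = pearson_r(ocean_temp_c, front_position_m)
print(rate_m_per_year, r)
```

A strongly negative correlation, as in this toy series, indicates only co-variation; attributing retreat to ocean warming still requires the physical analysis described in the protocol.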

Glacier Monitoring Workflow

Protocol 3: Global Glacier Mapping with GlaViTU

Objective: To produce accurate, globally scalable glacier outlines using a hybrid convolutional-transformer deep learning model.

Materials:

- Open satellite imagery (optical and SAR)

- Auxiliary data: Digital Elevation Models (DEMs), thermal data, and interferometric SAR (InSAR) data [22]

- A benchmark tile-based dataset covering 9% of glaciers worldwide, structured into 10 × 10 km² tiles [22]

Methodology:

- Data Compilation: Construct a comprehensive dataset from public satellite images and glacier inventories. The dataset should cover diverse glacierized environments and be organized into non-overlapping tiles for model development and testing [22].

- Model Architecture: Implement GlaViTU, a hybrid convolutional-transformer model designed to capture both local image features and long-range dependencies [22].

- Multi-Strategy Training: Explore different training strategies, including a global strategy (one model for all regions) and a regional strategy (one model per region), to achieve high generalization across space, time, and different satellite sensors [22].

- Validation and Accuracy Assessment: Validate the model's performance on an independent test dataset. Assess spatial, temporal, and cross-sensor generalization. Compare the automated outlines against human expert uncertainties in terms of area and distance deviations [22]. Report calibrated confidence metrics for the glacier extents to make predictions more reliable and interpretable [22].

The Scientist's Toolkit: Essential Research Reagents & Materials

Table 4: Key Resources for AI-Based Environmental Monitoring

| Category / Item | Function in Research |

|---|---|

| Satellite Imagery | |

| Landsat & Sentinel-2 | Provides high-resolution optical imagery for visual analysis of forest cover and glacier surfaces [12] [19]. |

| Sentinel-1 | Provides Synthetic Aperture Radar (SAR) data, which penetrates cloud cover, for monitoring in all weather conditions [12] [21]. |

| AI Models & Architectures | |

| U-Net | A dominant deep learning architecture for semantic segmentation, used for precisely delineating deforestation patches or glacier boundaries [12] [19] [21]. |

| ResNet | Used for classifying deforestation drivers by extracting complex features from satellite imagery [20]. |

| Vision Transformer (ViT) | Captures long-range dependencies in images, improving model performance in complex landscapes [22]. |

| Data Platforms | |

| Google Earth Engine | Provides open access to a massive catalog of satellite imagery and geospatial data for large-scale analysis [6]. |

| Global Forest Watch | Platform providing data and alerts on forest change, incorporating AI-based driver classification [20]. |

| Reference Data | |

| GLIMS & Randolph Glacier Inventory | Provide baseline glacier outlines for model training and validation [22]. |

| Expert Visual Interpretations | Critical for creating accurate labeled datasets to train and validate deep learning models [20]. |

| Computational Tools | |

| Python with DL Frameworks | (e.g., TensorFlow, PyTorch) for developing, training, and deploying deep learning models [19]. |

| Google Colaboratory | Online Jupyter notebook environment with AI integrations to assist in writing Python code for data science [23]. |

Inside the Toolbox: AI Models and Real-World Applications in Environmental Monitoring

Application Note: Deep Learning for Proactive Forest Conservation

Deforestation represents a critical threat to global biodiversity, climate stability, and ecosystem services. Traditional satellite-based monitoring systems provide essential but retrospective insights, documenting loss only after it has occurred [5]. This reactive paradigm limits opportunities for prevention and early intervention. The ForestCast framework introduces a transformative approach by applying deep learning to forecast deforestation risk, enabling proactive conservation and resource allocation before losses happen [5] [24]. This shift from documenting past events to anticipating future vulnerabilities marks a significant advancement in environmental monitoring, aligning with broader applications of AI in tracking ecological changes such as glacier melt and other climate-critical phenomena [25].

Technical Innovation: The ForestCast Benchmark

ForestCast establishes the first publicly available benchmark dataset and deep learning benchmark for deforestation risk forecasting [5] [26]. Its innovation lies in addressing the core challenges of previous forecasting methods, which relied on patchily available input maps (e.g., roads, population density) that were often inconsistent, difficult to scale, and quickly outdated [5]. In contrast, ForestCast adopts a "pure satellite" approach: all inputs are derived from satellite data, which ensures consistency and global applicability and keeps the model current as satellite data streams are continuously updated [5] [24].

The following table summarizes the core quantitative findings from the ForestCast development and benchmarking.

Table 1: Key Performance Metrics of the ForestCast Deep Learning Approach

| Metric Category | Specific Metric | Reported Performance / Value |

|---|---|---|

| Model Architecture | Primary Model Type | Vision Transformers (ViT) [5] [24] |

| Spatial Resolution | Input/Output Resolution | 1 km² (30 m minimum mapping unit cited in related research) [5] [21] |

| Input Data Efficacy | Most Predictive Input | Change History (performance indistinguishable from full satellite data) [5] [26] |

| Comparative Accuracy | Benchmarking | Matched or exceeded accuracy of methods using specialized inputs (e.g., roads) [5] |

| Related Model Performance | U-Net (SAR-based) | Highest Overall Accuracy: 0.95; IoU: 0.66 [21] |

| Related Model Performance | U-Net (SAR-based) - Forest Class F1-Score | 0.97 [21] |

| Related Model Performance | U-Net (SAR-based) - Deforestation Class F1-Score | 0.92 [21] |

Experimental Protocol: Deforestation Risk Forecasting

Data Acquisition & Preprocessing

This protocol details the methodology for training and deploying a ForestCast-style deep learning model.

Objective: To acquire and preprocess all necessary satellite data for training a deforestation risk forecasting model.

Materials & Reagents:

- Hardware: High-performance computing cluster with GPUs (e.g., NVIDIA A100/T4), sufficient storage for petabyte-scale satellite data.

- Software: Python 3.8+, Google Earth Engine API, TensorFlow/PyTorch, Rasterio, GDAL.

- Data Sources:

Procedure:

- Define Region of Interest (ROI): Select a focal area (e.g., Southeast Asia, Amazon basin) and a time frame for model training (e.g., 2000-2020).

- Acquire Satellite Imagery: For the ROI and time frame, download multispectral satellite imagery (e.g., Landsat Surface Reflectance Tier 1) at a consistent spatial resolution. Cloud masking is essential.

- Generate "Change History" Input: Process annual forest cover data to create a "change history" raster. This single image summarizes past deforestation by assigning each pixel the year it was deforested; pixels that remain forested are assigned a background value [5]. This is the most critical input.

- Assemble Additional Inputs (Optional):

- Generate Deforestation Labels: Using the forest cover change data, create binary rasters (0: no deforestation, 1: deforestation) for a future target year (e.g., predict 2021 deforestation using data up to 2020). This serves as the ground-truth label for supervised learning.

- Tiling & Normalization: Split all input rasters and label rasters into smaller, manageable tiles (e.g., 256x256 pixels). Normalize pixel values for each input band to a common scale (e.g., 0-1).
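
The change-history, label, tiling, and normalization steps above can be sketched with NumPy on a toy forest-cover stack. The array layout (one boolean layer per year), the background value of 0, the 2-pixel tile size, and the min-max scaling of years are assumptions made for this illustration, not details taken from [5].

```python
import numpy as np

def change_history(forest, years, background=0):
    """Year of first forest loss per pixel; background where no loss occurred.

    forest: boolean array (n_years, H, W), True where the pixel is forested.
    """
    lost = forest[:-1] & ~forest[1:]          # loss between consecutive years
    first_loss = np.argmax(lost, axis=0)      # index of the first loss event
    loss_year = np.asarray(years[1:])[first_loss]
    return np.where(lost.any(axis=0), loss_year, background)

def tile(raster, size):
    """Split a 2-D raster into non-overlapping size x size tiles."""
    h, w = raster.shape
    return [raster[r:r + size, c:c + size]
            for r in range(0, h - size + 1, size)
            for c in range(0, w - size + 1, size)]

# Toy 4 x 4 scene observed in four consecutive years.
years = [2018, 2019, 2020, 2021]
forest = np.ones((4, 4, 4), dtype=bool)
forest[1:, 0, 0] = False  # lost in 2019
forest[2:, 1, 1] = False  # lost in 2020
forest[3:, 2, 2] = False  # lost in 2021 (the forecast target year)

# Inputs use data up to 2020; the label marks loss occurring in 2021.
input_years = years[:3]
history = change_history(forest[:3], input_years)
label = (forest[2] & ~forest[3]).astype(np.uint8)

# Scale loss years to 0-1, leaving background pixels at 0.
span = input_years[-1] - input_years[0]
scaled = np.where(history > 0, (history - input_years[0]) / span, 0.0)

tiles = tile(scaled, size=2)
print(history, label, len(tiles), sep="\n")
```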

Model Training & Optimization

Objective: To train a vision transformer model to predict pixel-wise deforestation risk.

Procedure:

- Model Selection: Implement a Vision Transformer (ViT) architecture. The model should be designed to ingest a full tile of input data and output a corresponding tile of probability scores [5].

- Data Partitioning: Split the data tiles into training (70%), validation (15%), and test (15%) sets. Randomize at the level of geographic blocks rather than individual tiles, so that all tiles from the same area fall within a single split and spatially adjacent tiles cannot leak information between splits.

- Loss Function & Compilation: Use a loss function suitable for binary segmentation, such as (class-weighted) Binary Cross-Entropy or a combined Dice-Focal loss. The combined loss is better suited to the strong class imbalance typical of deforestation labels. Select the AdamW optimizer.

- Model Training: Train the model on the training set, using the validation set to monitor performance after each epoch. Employ early stopping and reduce-learning-rate-on-plateau callbacks to prevent overfitting and stabilize training.

- Hyperparameter Tuning: Systematically tune key hyperparameters using a framework like Optuna [21]. The table below details the parameters and their search spaces.

Table 2: Hyperparameter Tuning for Deforestation Forecasting Models

| Hyperparameter | Search Space / Value | Function / Impact on Model |

|---|---|---|

| Learning Rate | 1e-5 to 1e-3 (log scale) | Controls step size during weight updates; critical for convergence. |

| Batch Size | 32, 64, 128 | Impacts training stability and GPU memory usage. |

| Patch Size | 16, 32 | Size of image patches for Vision Transformer input. |

| Transformer Layers | 6, 12, 24 | Number of transformer encoder blocks; impacts model capacity. |

| Attention Heads | 8, 16 | Number of self-attention heads per layer. |

| Hidden Dimension | 384, 768, 1024 | Dimensionality of the feature embeddings. |

| Dropout Rate | 0.1 to 0.3 | Prevents overfitting by randomly dropping units during training. |
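
To make the search space in Table 2 concrete, the sketch below draws candidate configurations with plain random search from the standard library. It is a stand-in for a dedicated framework such as Optuna, not a use of Optuna's API. The parameter names and the divisibility check (hidden dimension divisible by the number of heads, as multi-head attention requires) are assumptions added for illustration.

```python
import random

# Discrete choices copied from Table 2; key names are illustrative.
SEARCH_SPACE = {
    "batch_size": [32, 64, 128],
    "patch_size": [16, 32],
    "num_layers": [6, 12, 24],
    "num_heads": [8, 16],
    "hidden_dim": [384, 768, 1024],
}

def sample_config(rng):
    """Draw one hyperparameter configuration from the Table 2 search space."""
    config = {name: rng.choice(options) for name, options in SEARCH_SPACE.items()}
    # Learning rate is sampled log-uniformly between 1e-5 and 1e-3.
    config["learning_rate"] = 10 ** rng.uniform(-5, -3)
    config["dropout"] = rng.uniform(0.1, 0.3)
    return config

def is_valid(config):
    """Multi-head attention splits the hidden dimension evenly across heads."""
    return config["hidden_dim"] % config["num_heads"] == 0

rng = random.Random(0)  # fixed seed so the trial list is reproducible
candidates = [c for c in (sample_config(rng) for _ in range(20)) if is_valid(c)]
print(len(candidates), candidates[0])
```

Each candidate would then be trained briefly and ranked by its validation metric; a framework like Optuna replaces the random draws with an adaptive sampler and adds pruning of unpromising trials.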

Model Evaluation & Inference

Objective: To rigorously evaluate model performance and generate deforestation risk forecasts.

Procedure:

- Quantitative Evaluation: Run the trained model on the held-out test set. Calculate performance metrics, including:

- Area Under the Curve (AUC) of the Receiver Operating Characteristic (ROC) curve.

- Pixel-wise Accuracy, Precision, and Recall.

- Intersection over Union (IoU) for the deforestation class.

- Benchmarking: Compare the model's performance against baseline methods, such as Random Forest models or models that only use static driver maps, to establish its relative advantage [26].

- Risk Map Generation (Inference): To create a new forecast, deploy the trained model on preprocessed, most-recent input data for the target area. The model output is a georeferenced raster where each pixel's value represents the predicted probability of deforestation within the forecast period (e.g., the next year).

- Validation: Where possible, collaborate with in-country partners for ground-truthing to validate the model's predictions against real-world observations [27].
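
The quantitative metrics listed above can be computed directly from flattened per-pixel probabilities and labels. The following standard-library sketch uses a toy set of six pixels and a decision threshold of 0.5; both are assumptions for illustration. The AUC is computed with the rank-based definition (the probability that a random positive pixel scores higher than a random negative one).

```python
def confusion_counts(probs, labels, threshold=0.5):
    """Count true/false positives and negatives at a given threshold."""
    tp = fp = fn = tn = 0
    for p, y in zip(probs, labels):
        predicted = p >= threshold
        if predicted and y == 1:
            tp += 1
        elif predicted and y == 0:
            fp += 1
        elif not predicted and y == 1:
            fn += 1
        else:
            tn += 1
    return tp, fp, fn, tn

def roc_auc(probs, labels):
    """Rank-based AUC; ties between a positive and a negative count as half."""
    pos = [p for p, y in zip(probs, labels) if y == 1]
    neg = [p for p, y in zip(probs, labels) if y == 0]
    wins = sum(1.0 if p > n else 0.5 if p == n else 0.0 for p in pos for n in neg)
    return wins / (len(pos) * len(neg))

# Toy example: predicted deforestation probability and ground truth per pixel.
probs = [0.9, 0.8, 0.4, 0.3, 0.2, 0.1]
labels = [1, 0, 1, 0, 0, 0]

tp, fp, fn, tn = confusion_counts(probs, labels)
precision = tp / (tp + fp)
recall = tp / (tp + fn)
iou = tp / (tp + fp + fn)            # IoU for the deforestation class
accuracy = (tp + tn) / len(labels)
auc = roc_auc(probs, labels)
print(precision, recall, iou, accuracy, auc)
```

Because deforested pixels are rare, accuracy alone tends to look high even for a model that predicts no deforestation at all, which is why the protocol also reports IoU and AUC.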

The following workflow diagram illustrates the complete experimental protocol.

The Scientist's Toolkit: Essential Research Reagents & Materials

The successful implementation of a deforestation forecasting system requires a suite of data, computational tools, and software. This table details the essential "research reagents" for this field.

Table 3: Key Research Reagents and Materials for Deforestation Forecasting

| Category | Item / Solution | Function / Application in Research |

|---|---|---|

| Satellite Data Sources | Landsat Archive (USGS) | Provides multi-decadal, medium-resolution optical imagery for historical analysis and change detection. [5] |

| | Sentinel-2 Archive (ESA) | Delivers high-resolution optical imagery with a 5-day revisit cycle, beneficial for detailed monitoring. [5] [24] |

| | Sentinel-1 SAR (ESA) | Supplies Synthetic Aperture Radar data, which penetrates cloud cover, enabling monitoring in perpetually cloudy regions. [21] |

| Benchmark Datasets | Global Forest Change (Hansen et al.) | The foundational, global dataset for training and validating forest extent and change models. [5] |

| | ForestCast Southeast Asia Benchmark | The first public benchmark dataset specifically for training and evaluating deep learning deforestation risk models. [26] |

| Software & Libraries | TensorFlow / PyTorch | Core open-source libraries for building and training deep learning models. |

| | Google Earth Engine API | A cloud-computing platform for planetary-scale geospatial analysis, ideal for data access and preprocessing. [5] |

| | GDAL / Rasterio | Essential libraries for processing and manipulating geospatial raster data formats. |

| Model Architectures | Vision Transformers (ViT) | State-of-the-art architecture used in ForestCast, effective at capturing long-range dependencies in image data. [5] |

| | U-Net (with ResNet backbone) | A convolutional network commonly used for semantic segmentation tasks, effective in related LULC studies. [21] |

| Validation Tools | QGIS with Deforisk Plugin | An open-source GIS application and plugin used for mapping deforestation risks and validating model outputs on a national scale. [27] |

| | Ground Control Points (GCPs) | In-situ field measurements used to validate and calibrate remote sensing-based model predictions. [21] |

Integrated Analysis & Future Perspectives

The application of deep learning, particularly vision transformers, marks a paradigm shift in how we approach forest conservation. The core insight from ForestCast—that a simple, satellite-derived "change history" is a powerfully predictive input—simplifies the modeling challenge and enhances scalability [5] [26]. This approach effectively captures the spatial dynamics of deforestation fronts and trends over time.

The methodologies detailed here for forests are directly transferable to research on the parallel crisis of glacier melt. The same "pure satellite" philosophy can be applied, using historical glacier extent and velocity maps as key inputs to forecast future ice loss. AI models can similarly process raw satellite imagery (optical and SAR) to predict calving events, thinning rates, and the expansion of glacial lakes, thereby providing critical early warnings for communities downstream [25].

For researchers, the path forward involves scaling these models globally, improving temporal resolution for near-real-time risk assessment, and integrating multimodal data. The public release of benchmarks like ForestCast is crucial for fostering collaboration, ensuring reproducibility, and accelerating innovation in the vital field of AI-powered environmental forecasting [5] [26]. The ultimate goal is to transform these risk forecasts into actionable intelligence, empowering governments, corporations, and local communities to protect vulnerable ecosystems before they are lost.

The integration of artificial intelligence (AI) into environmental monitoring represents a paradigm shift in how we protect fragile ecosystems. This document details the application of a novel AI framework that synergizes the real-time object detection capabilities of YOLOv8 (You Only Look Once) with the advanced reasoning and dynamic adjustment capacities of LangChain-based Agentic AI for the detection of illegal logging activities. This approach is designed to overcome the limitations of traditional monitoring methods, which often suffer from delayed detection, sparse coverage of vast and inaccessible forest areas, and high resource demands [9]. The core innovation lies in the creation of a closed-loop system where YOLOv8 provides rapid, visual identification of logging indicators—such as tree stumps, logging machinery, and unauthorized human presence—from satellite and drone imagery, while the LangChain agent introduces a layer of contextual reasoning, dynamic threshold adjustment, and reinforcement-learning-based feedback [9]. This enables the system to not only detect potential threats but also to learn from its environment and improve its performance over time, reducing false positives and increasing recall. Framed within a broader thesis on AI for environmental protection, this methodology establishes a scalable, interpretable, and real-time approach that can be adapted for monitoring other critical phenomena, such as glacier melting.

Quantitative Performance Analysis

The performance of object detection models is quantitatively assessed using a standard set of metrics that evaluate both accuracy and efficiency. The following table summarizes the key metrics used to evaluate the YOLO model within the proposed framework, based on established guidelines for YOLO performance evaluation [28].

Table 1: Key Object Detection Performance Metrics for Model Evaluation

| Metric | Definition | Interpretation in Illegal Logging Context |

|---|---|---|

| Precision (P) | Proportion of true positive detections among all positive predictions [28]. | Measures the model's accuracy in avoiding false alarms; high precision means most alerts are actual logging activity. |

| Recall (R) | Proportion of true positives detected among all actual positives [28]. | Measures the model's ability to find all instances of illegal logging; high recall means few logging events are missed. |

| mAP50 | Mean Average Precision at an IoU threshold of 0.50 [28]. | Evaluates detection accuracy under "easy" criteria, where a prediction counts as correct if its IoU with a ground-truth box is at least 0.50. |

| mAP50-95 | Average mAP over IoU thresholds from 0.50 to 0.95 in steps of 0.05 [28]. | A comprehensive metric for detection performance across varying levels of difficulty, from "easy" to "strict". |

| F1 Score | Harmonic mean of precision and recall [28]. | Provides a single score that balances the trade-off between false positives (precision) and false negatives (recall). |

| IoU | Intersection over Union; measures the overlap between predicted and ground truth bounding boxes [28]. | Quantifies the accuracy of object localization (e.g., how precisely the bounding box encapsulates a logging truck). |
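
The IoU and F1 definitions in the table can be checked with a few lines of standard-library Python. The box coordinates and detection counts below are made-up values for illustration. Boxes are assumed to be given as (x_min, y_min, x_max, y_max).

```python
def box_iou(a, b):
    """Intersection over Union of two boxes given as (x_min, y_min, x_max, y_max)."""
    inter_w = max(0.0, min(a[2], b[2]) - max(a[0], b[0]))
    inter_h = max(0.0, min(a[3], b[3]) - max(a[1], b[1]))
    intersection = inter_w * inter_h
    area_a = (a[2] - a[0]) * (a[3] - a[1])
    area_b = (b[2] - b[0]) * (b[3] - b[1])
    union = area_a + area_b - intersection
    return intersection / union if union > 0 else 0.0

def precision_recall_f1(tp, fp, fn):
    """Precision, recall, and their harmonic mean from detection counts."""
    precision = tp / (tp + fp)
    recall = tp / (tp + fn)
    f1 = 2 * precision * recall / (precision + recall)
    return precision, recall, f1

# A predicted box shifted by half its width relative to the ground truth.
predicted = (0.0, 0.0, 10.0, 10.0)
ground_truth = (5.0, 0.0, 15.0, 10.0)
iou = box_iou(predicted, ground_truth)

# Hypothetical counts: 8 correct alerts, 2 false alarms, 4 missed events.
precision, recall, f1 = precision_recall_f1(tp=8, fp=2, fn=4)
print(iou, precision, recall, f1)
```

In this example half of each box overlaps the other, yet the IoU is only about 0.33, so the detection would not count as a true positive at the mAP50 threshold.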

In a specific application for deforestation anomaly detection, the integration of a LangChain agent with a YOLOv8 model demonstrated significant operational improvements, even with a modest baseline mAP50. The following table summarizes the reported experimental outcomes [9].

Table 2: Experimental Outcomes of YOLOv8 and LangChain Agent Integration for Deforestation Detection

| Performance Aspect | Reported Outcome | Significance |

|---|---|---|

| Training Performance | Steady improvements, with box_loss, cls_loss, and distribution focal loss each reduced by >50% [9]. | Indicates effective model convergence and learning from the training dataset. |

| Baseline mAP50 | Approximately 0.07 [9]. | Suggests a challenging detection environment or dataset, highlighting the need for post-processing enhancement. |

| Recall Enhancement | Increase of up to 24% compared to baseline YOLO models [9]. | The LangChain agent's dynamic adjustment successfully helped the system identify more true instances of logging activity. |

| False Positives | Notable reduction through reinforcement-learning-based feedback [9]. | Improved the operational efficiency of the system by minimizing unnecessary alerts, a critical feature for field deployment. |

Experimental Protocols

Data Acquisition and Preprocessing Protocol

Objective: To collect and prepare a multimodal dataset suitable for training and validating the YOLOv8 model for illegal logging indicator detection.

Materials: Access to satellite imagery providers (e.g., Sentinel, Landsat) or UAV/drone platforms; computing infrastructure with adequate GPU resources; data annotation software (e.g., LabelImg, CVAT).

Procedure:

- Data Collection: Acquire multispectral and RGB imagery from target forested regions. Data should encompass diverse conditions (seasons, weather, lighting) and contain examples of both positive instances (logging equipment, stumps, roads) and negative instances (undisturbed forest, natural clearings) [9].

- Data Annotation: Annotate all collected images by drawing bounding boxes around target objects. Each bounding box must be labeled with the correct class (e.g., "tree_stump", "logging_truck", "excavator"). This creates the ground truth data [28] [29].

- Data Augmentation: Apply a suite of augmentation techniques to the training dataset to improve model robustness. This should include, but not be limited to:

- Geometric transformations: Rotation (±15°), scaling (0.8x - 1.2x), shearing, and horizontal flipping.

- Color space adjustments: Variations in brightness, contrast, and saturation.

- Noise injection: To simulate sensor noise and varying image quality.

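- Dataset Splitting: Divide the annotated dataset into three subsets: a training set (~70%), a validation set (~15%), and a held-out test set (~15%). Augmented copies must stay in the same subset as their source image; in practice, split first and augment only the training set, so that no variant of a validation or test image is seen during training. The test set must remain completely unseen during the training process to provide an unbiased evaluation.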
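
One way to keep related images together when splitting is to assign whole acquisition scenes, rather than individual images, to a subset. The sketch below does this deterministically by hashing a scene identifier. The `scene_id` field, the file names, and the hashing scheme are hypothetical choices for illustration, not part of the cited framework.

```python
import hashlib

def assign_split(scene_id, train=0.70, val=0.15):
    """Map a scene ID to 'train', 'val', or 'test' via a stable hash."""
    digest = hashlib.sha256(scene_id.encode("utf-8")).hexdigest()
    bucket = int(digest[:8], 16) / 0x100000000  # uniform value in [0, 1)
    if bucket < train:
        return "train"
    if bucket < train + val:
        return "val"
    return "test"

# Hypothetical annotated images, each tagged with the scene it was cut from.
images = [
    ("scene_A", "a_001.jpg"), ("scene_A", "a_002.jpg"),
    ("scene_B", "b_001.jpg"), ("scene_B", "b_002.jpg"),
    ("scene_C", "c_001.jpg"), ("scene_D", "d_001.jpg"),
]

splits = {"train": [], "val": [], "test": []}
for scene_id, filename in images:
    splits[assign_split(scene_id)].append(filename)

print({name: len(files) for name, files in splits.items()})
```

With only a handful of scenes the realized proportions will deviate from 70/15/15; the ratios are approached only as the number of scenes grows. The benefit is that the assignment is reproducible and never places two crops of the same scene in different subsets.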

Model Training and Validation Protocol

Objective: To train the YOLOv8 object detection model and perform iterative validation.

Materials: Preprocessed and split dataset; computing environment with CUDA-enabled GPU; Ultralytics YOLOv8 Python library.

Procedure:

- Model Selection & Initialization: Choose an appropriate YOLOv8 model variant (e.g., YOLOv8s for a balance of speed and accuracy) [29]. Initialize the model with pre-trained weights from a large-scale dataset like COCO to leverage transfer learning.

- Hyperparameter Configuration: Define the training hyperparameters in a configuration file. Key parameters include:

- Initial learning rate (e.g., 0.01)

- Batch size (dependent on GPU memory)

- Number of epochs (e.g., 100-300)

- Optimizer (e.g., AdamW)

- Image size (e.g., 640x640 pixels)

- Training Loop: Execute the training process. The model will iteratively process batches from the training set, compute the loss (e.g., box loss, classification loss), and update its weights via backpropagation.

- Validation and Metric Tracking: After each epoch, validate the model on the validation set. Use the model.val() function to compute key performance metrics, including Precision, Recall, mAP50, and mAP50-95 [28]. Monitor these metrics for convergence and potential overfitting.

- Visual Analysis: Regularly inspect the visual outputs generated during validation, such as the validation batch predictions (val_batchX_pred.jpg) and precision-recall curves (PR_curve.png). This provides an intuitive understanding of model performance and failure modes [28].
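
The training and validation steps map onto a short script with the Ultralytics Python library. The sketch below collects the example hyperparameters from the list above in a dictionary and wraps training in a function. The batch size, early-stopping patience, and the dataset YAML path passed by the caller are assumptions, and the library is imported inside the function so the snippet can be loaded on machines where it is not installed.

```python
# Example settings from the protocol; batch and patience are assumptions.
TRAIN_ARGS = {
    "epochs": 100,
    "imgsz": 640,
    "batch": 16,          # adjust to the available GPU memory
    "lr0": 0.01,          # initial learning rate
    "optimizer": "AdamW",
    "patience": 20,       # stop early after 20 epochs without improvement
}

def train_and_validate(data_yaml, weights="yolov8s.pt"):
    """Fine-tune COCO-pretrained YOLOv8 weights and return validation metrics.

    data_yaml is the path to a dataset configuration file listing the image
    folders and class names (a hypothetical file supplied by the user).
    """
    from ultralytics import YOLO  # lazy import: requires `pip install ultralytics`

    model = YOLO(weights)
    model.train(data=data_yaml, **TRAIN_ARGS)
    metrics = model.val()
    return {
        "precision": metrics.box.mp,
        "recall": metrics.box.mr,
        "mAP50": metrics.box.map50,
        "mAP50-95": metrics.box.map,
    }
```

Calling `train_and_validate("logging.yaml")` (with a user-provided dataset file) would run the full loop on a GPU machine; during training the library also validates after each epoch and writes the diagnostic plots mentioned above to its run directory.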

LangChain Agent Integration and Deployment Protocol

Objective: To integrate the trained YOLOv8 model with a LangChain agent for dynamic alert refinement and to deploy the full system for real-time monitoring.

Materials: Trained YOLOv8 model (.pt file); LangChain framework; access to a Large Language Model (LLM) API (e.g., OpenAI, Anthropic); GIS software or APIs (e.g., ArcGIS, Google Maps API).

Procedure:

- Agent Design: Construct a LangChain agent equipped with tools that allow it to:

- Call the YOLOv8 Model: Process new images and receive initial detection results.

- Access Contextual Data: Query GIS databases for land ownership status, protected area boundaries, and historical imagery [9].

- Adjust Confidence Thresholds: Dynamically raise or lower the confidence threshold for detections based on the location's risk profile (e.g., lower thresholds in protected areas) [9].

- Feedback Loop Implementation: Implement a reinforcement learning (RL) feedback mechanism where the agent's decisions (e.g., to issue an alert) are rewarded or penalized based on ground-truth verification from field reports or expert analysis. This allows the agent to learn and improve its decision-making policy over time [9].

- Alert Generation: Program the agent to synthesize the YOLO detections, contextual data, and its learned policy to generate actionable, geolocated alerts. These alerts should include the image, detected objects, confidence scores, and map coordinates for the event.

- System Deployment: Deploy the integrated system on a cloud server or edge device. Connect it to a continuous stream of imagery from satellite APIs or drone feeds. The system should be configured to run inference at a specified interval (e.g., daily) and automatically route confirmed alerts to relevant authorities or research teams.
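
The threshold-adjustment and feedback ideas can be illustrated without any agent framework. The sketch below is plain Python: it lowers the alert threshold inside protected areas and nudges the base threshold after each verified outcome. The update rule is a deliberately simple stand-in for the reinforcement-learning policy described in [9], and all numeric values and class names are assumptions.

```python
from dataclasses import dataclass

@dataclass
class Detection:
    label: str
    confidence: float
    in_protected_area: bool

class ThresholdPolicy:
    """Location-aware alert threshold with a simple feedback update."""

    def __init__(self, base=0.50, protected_offset=-0.15, step=0.02,
                 lower=0.10, upper=0.90):
        self.base = base
        self.protected_offset = protected_offset
        self.step = step
        self.lower = lower
        self.upper = upper

    def threshold_for(self, detection):
        offset = self.protected_offset if detection.in_protected_area else 0.0
        return min(self.upper, max(self.lower, self.base + offset))

    def should_alert(self, detection):
        return detection.confidence >= self.threshold_for(detection)

    def feedback(self, alert_was_correct):
        # Confirmed alerts make the system slightly more sensitive;
        # false alarms make it slightly more conservative.
        delta = -self.step if alert_was_correct else self.step
        self.base = min(self.upper, max(self.lower, self.base + delta))

policy = ThresholdPolicy()
inside = Detection("excavator", 0.40, in_protected_area=True)
outside = Detection("excavator", 0.40, in_protected_area=False)
alerts_before = (policy.should_alert(inside), policy.should_alert(outside))

# Two field reports come back as false alarms.
policy.feedback(alert_was_correct=False)
policy.feedback(alert_was_correct=False)
print(alerts_before, round(policy.base, 2))
```

In a full system, an agent would call logic like this as one of its tools, alongside the detector and the GIS lookups, and a learned policy would replace the fixed step size.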

Workflow Visualization

The following diagram illustrates the integrated workflow of the YOLOv8 and LangChain agent system for generating real-time illegal logging alerts.

The Scientist's Toolkit: Research Reagent Solutions

For researchers aiming to replicate or build upon this framework, the following table details the essential "research reagents" – the key software, data, and hardware components required.

Table 3: Essential Research Reagents for AI-Powered Deforestation Monitoring

| Tool / Component | Type | Function in the Experimental Protocol |

|---|---|---|

| YOLOv8/X/Nano | Software Model | Provides the core, high-speed object detection capability for identifying logging-related objects in imagery [29]. |

| LangChain Framework | Software Library | Enables the creation of an intelligent agent that can orchestrate tools, manage context, and make reasoned decisions [9]. |

| Global Forest Watch | Data Platform | An open-access source of satellite-based forest change data, useful for initial analysis, validation, and sourcing training imagery [30]. |

| Sentinel-2 / Landsat 8 | Satellite Imagery | Provides frequent, medium-to-high-resolution multispectral optical imagery for monitoring large forested areas [31]. |

| ICEYE SAR Satellite | Satellite Imagery | Supplies Synthetic Aperture Radar (SAR) data, capable of penetrating cloud cover, enabling all-weather, day-and-night monitoring [30]. |

| CUDA-enabled GPU | Hardware | (e.g., NVIDIA RTX Series) Accelerates the model training and inference processes, making real-time or near-real-time analysis feasible. |

| LabelImg / CVAT | Software Tool | Open-source graphical image annotation tools used for manually drawing bounding boxes to create the ground truth dataset for model training. |

| GIS Software (e.g., QGIS) | Software Platform | Used to manage and analyze spatial data, such as protected area boundaries and land tenure, which provides critical context for the LangChain agent [9]. |

The accelerating retreat of glaciers is a primary driver of global sea-level rise and a key indicator of climate change. Accurately monitoring glacier dynamics, specifically the position of calving fronts and overall mass balance, is therefore critical for climate modeling and mitigation efforts. Traditional methods of manual delineation from satellite imagery are no longer feasible at a global scale given the vast volumes of data now available. This Application Note details how artificial intelligence (AI), specifically deep learning, is being deployed to automate and enhance the precision of mapping glacier calving fronts and extents, thereby providing researchers with scalable, consistent, and high-temporal-resolution data essential for contemporary glaciology.

Technical Specifications & Performance Benchmarks

Deep learning models for glacier mapping are typically evaluated on their accuracy in delineating glacier boundaries and calving fronts against manual expert interpretations. The following table summarizes the performance and key attributes of several state-of-the-art approaches.

Table 1: Performance Benchmarks of AI Models for Glacier Mapping

| Model Name | Primary Task | Reported Performance Metric | Key Innovation | Region of Validation |

|---|---|---|---|---|

| GlaViTU [22] | Glacier extent mapping | IoU >0.85 (clean ice); >0.75 (debris-rich areas) | Hybrid Convolutional-Transformer architecture for global scalability | Global (11 diverse regions) |

| CISNet [32] | Calving front extraction | - | Dual-branch network using change information between image pairs to guide segmentation | Antarctica, Greenland, Alaska |

| U-Net-based System [33] | Calving front delineation | Mean error of 59.3 ± 5.9 m vs. manual extraction | Fully automated processing system applied to multi-spectral Landsat imagery | Antarctic Peninsula |

| Deep Learning Model [6] | Calving front detection | - | Model trained on both optical and radar images for diverse conditions | Svalbard (149 glaciers) |

Key: IoU = Intersection over Union, a metric where 1 represents a perfect match between the predicted and reference area.

Experimental Protocols & Workflows

This section outlines standardized protocols for implementing AI-based glacier monitoring, from data preparation to model application.

Protocol 1: Automated Calving Front Delineation using a U-Net-Based System

This protocol, adapted from Loebel et al. (2025), describes an end-to-end workflow for generating a high-temporal-resolution calving front product [33].

Data Acquisition & Pre-processing:

- Input Data: Acquire Level-1 multi-spectral imagery from satellites such as Landsat-8 or Landsat-9.

- Tiling: Crop the satellite scenes into smaller, manageable tiles (e.g., 512 x 512 pixels) centered on the glacier's last known calving front position.

- Resolution Standardization: Ensure all input bands are resampled to a unified ground sampling distance (e.g., 30 meters).

Model Architecture & Training:

- Model: Employ a U-Net-based convolutional neural network. The encoder (contracting path) captures contextual features, while the decoder (expanding path) enables precise localization.

- Training Data: Train the model on a dataset of thousands of manually delineated calving fronts from reference glaciers (e.g., initially trained on 869 Greenland glaciers, then fine-tuned with 252 Antarctic Peninsula fronts).

- Loss Function: Use a loss function suitable for segmentation, such as a combination of cross-entropy and Dice loss, to handle class imbalance.
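
A combined cross-entropy and Dice loss can be written in a few lines. The NumPy sketch below computes it for a binary mask so that the arithmetic is easy to follow; a training pipeline would use the equivalent differentiable operations of its deep learning framework. The equal weighting of the two terms and the smoothing constant are assumptions, not values reported in [33].

```python
import numpy as np

def bce_dice_loss(probs, targets, dice_weight=0.5, eps=1e-7):
    """Weighted sum of binary cross-entropy and (1 - Dice coefficient)."""
    probs = np.clip(probs, eps, 1.0 - eps)  # avoid log(0)
    bce = -np.mean(targets * np.log(probs) + (1 - targets) * np.log(1 - probs))
    intersection = np.sum(probs * targets)
    dice = (2.0 * intersection + eps) / (np.sum(probs) + np.sum(targets) + eps)
    return (1.0 - dice_weight) * bce + dice_weight * (1.0 - dice)

# Toy mask: a thin front line occupies 1 of 8 pixels.
targets = np.array([[0, 0, 0, 1], [0, 0, 0, 0]], dtype=float)

perfect = bce_dice_loss(targets.copy(), targets)
all_background = bce_dice_loss(np.full_like(targets, 0.05), targets)
print(perfect, all_background)
```

The Dice term is what penalizes the all-background prediction heavily even though seven of eight pixels are classified correctly, which is the class-imbalance behavior the protocol relies on.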

Inference & Post-processing:

- Prediction: Feed new, pre-processed satellite tiles into the trained model. The output is a probability map indicating the likelihood of each pixel being part of the calving front.

- Vectorization: Convert the probability map into a single-pixel-wide line vector representing the calving front position.

- Quality Control: Implement an automated confidence filter based on the prediction probability to remove low-confidence results, followed by optional manual verification for a subset of data.

Validation:

- Compare the AI-derived calving fronts against a held-out set of manually delineated fronts. Calculate the mean distance deviation (e.g., 59.3 meters) as the primary accuracy metric [33].
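
A mean distance deviation between two front lines can be computed by averaging, over the vertices of one line, the shortest distance to the other line. The standard-library sketch below averages this in both directions. The exact distance definition used in [33] is not given here, so this symmetric form is an assumption, and the coordinates are hypothetical values in a projected coordinate system with metre units.

```python
from math import hypot

def point_to_segment(p, a, b):
    """Shortest distance from point p to the segment a-b."""
    dx, dy = b[0] - a[0], b[1] - a[1]
    seg_len_sq = dx * dx + dy * dy
    if seg_len_sq == 0:
        return hypot(p[0] - a[0], p[1] - a[1])
    t = ((p[0] - a[0]) * dx + (p[1] - a[1]) * dy) / seg_len_sq
    t = max(0.0, min(1.0, t))  # clamp the projection onto the segment
    return hypot(p[0] - (a[0] + t * dx), p[1] - (a[1] + t * dy))

def mean_distance(line, reference):
    """Mean distance from each vertex of line to the reference polyline."""
    segments = list(zip(reference, reference[1:]))
    return sum(min(point_to_segment(p, a, b) for a, b in segments)
               for p in line) / len(line)

def symmetric_mean_distance(line_a, line_b):
    return 0.5 * (mean_distance(line_a, line_b) + mean_distance(line_b, line_a))

# Hypothetical fronts: the prediction is offset 60 m from the manual line.
manual_front = [(0.0, 0.0), (100.0, 0.0), (200.0, 0.0)]
predicted_front = [(0.0, 60.0), (100.0, 60.0), (200.0, 60.0)]

deviation_m = symmetric_mean_distance(predicted_front, manual_front)
print(deviation_m)
```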

Protocol 2: Global Glacier Extent Mapping with GlaViTU

This protocol, based on the work presented in Nature Communications, is designed for mapping entire glacier outlines across diverse global environments [22].

Data Compilation:

- Input Features: Compile a multi-modal dataset including:

- Optical Satellite Imagery (e.g., Landsat, Sentinel-2)

- Synthetic Aperture Radar (SAR) Data (e.g., backscatter, interferometric coherence)

- Digital Elevation Models (DEMs)

- Reference Labels: Use existing glacier inventories (e.g., GLIMS, Randolph Glacier Inventory) for supervised training.

Model Training Strategy:

- Architecture: Utilize the GlaViTU (Glacier-VisionTransformer-U-Net) model, which combines the local feature extraction power of CNNs with the global contextual understanding of Vision Transformers [22].

- Training Strategy: Adopt a "global strategy" where a single model is trained on data from multiple, globally distributed regions to ensure strong generalization.

Prediction and Uncertainty Quantification:

- Inference: Apply the trained model to new satellite acquisitions to generate glacier extent maps.

- Confidence Calibration: The model reports a calibrated confidence score for each pixel's classification. This allows users to identify areas of high uncertainty (e.g., debris-covered ice, mountain shadows) and filter results accordingly [22].
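Filtering on the per-pixel confidence score might look like the sketch below. It assumes a binary glacier/non-glacier output and defines confidence as the larger of the two class probabilities; the 0.8 cut-off and the label names are illustrative and are not part of GlaViTU itself.

```python
def split_by_confidence(glacier_prob, min_confidence=0.8):
    """Label each pixel 'glacier', 'non-glacier', or 'uncertain'.

    glacier_prob   -- 2D list of calibrated per-pixel glacier probabilities
    min_confidence -- illustrative cut-off; confidence is max(p, 1 - p)
    """
    labels = []
    for row in glacier_prob:
        out_row = []
        for p in row:
            confidence = max(p, 1.0 - p)
            if confidence < min_confidence:
                out_row.append("uncertain")  # e.g. debris cover or mountain shadow
            elif p >= 0.5:
                out_row.append("glacier")
            else:
                out_row.append("non-glacier")
        labels.append(out_row)
    return labels
```

Pixels labeled `uncertain` can be excluded from area statistics or routed to manual review, depending on the study's tolerance for error.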

Workflow Visualization: AI-Assisted Glacier Monitoring

The following diagram illustrates the logical workflow and data flow for a generalized AI-based glacier monitoring system, integrating the protocols described above.

The Scientist's Toolkit: Essential Research Reagents & Materials

Successful implementation of the aforementioned protocols relies on specific computational tools and datasets, which function as the essential "research reagents" in this digital domain.

Table 2: Essential Research Reagents for AI-Based Glacier Mapping

| Reagent / Resource | Type | Function & Application | Example / Source |
|---|---|---|---|
| Multi-spectral Satellite Imagery | Data | Provides optical data for visualizing glacier surfaces and boundaries across different wavelengths. | Landsat [33], Sentinel-2 [22] |
| Synthetic Aperture Radar (SAR) Data | Data | Enables glacier monitoring regardless of cloud cover or polar darkness; backscatter and coherence are key features. | Sentinel-1 [22], COSMO-SkyMed [34] |
| Benchmark Datasets | Data | Publicly available, labeled datasets for training and fairly comparing different AI models. | CaFFe (Calving Fronts) [32], Custom Benchmark Datasets [22] |
| Geospatial Computing Platform | Software/Platform | Cloud-based platform for storing, processing, and analyzing large volumes of satellite imagery. | Google Earth Engine [6] |
| Deep Learning Framework | Software | Open-source libraries used to build, train, and deploy deep learning models. | PyTorch, TensorFlow |
| Pre-trained Glacier Models | Model | Models like GlaViTU [22] or published U-Net variants [33] provide a starting point for transfer learning, reducing computational cost and time. | Model weights shared on repositories like GitHub or Zenodo. |

Concluding Remarks

The integration of AI into glaciology marks a methodological shift, transforming our capacity to observe the cryosphere. The protocols and tools detailed herein enable the production of consistent, high-frequency, and accurate datasets on glacier calving fronts and extents at a global scale. This data is indispensable for refining mass balance calculations, improving ice dynamic models, and constraining projections of future sea-level rise. As these AI tools continue to evolve and become more accessible, they will form the backbone of robust monitoring systems, empowering scientists and policymakers to make informed decisions based on the most current understanding of a rapidly changing planet.

The accelerating crises of deforestation and glacier melting demand monitoring solutions that are both expansive in scale and precise in detail. Integrated geospatial platforms represent a paradigm shift in environmental science, merging the macro-scale perspective of satellites with the micro-scale resolution of drones through the power of Artificial Intelligence (AI). These systems are transitioning environmental monitoring from reactive observation to proactive forecasting and precise intervention. This convergence is particularly crucial for tracking two of the most pressing symptoms of climate change: the rapid loss of forests, which accounts for nearly 10% of global anthropogenic greenhouse-gas emissions [5], and the alarming retreat of glaciers, which have contributed approximately 18 mm to global sea-level rise since 2000 [3].

Platforms such as MORFO's AI Suite, FlyPix AI, and Google's Geospatial AI ecosystem are at the forefront of this transformation. They enable a multi-scalar approach to observation, allowing researchers to detect continental-scale trends while also inspecting individual seedlings or glacial crevasses. AI and machine learning (ML) are the core engine of this shift, automating the analysis of massive geospatial datasets (optical imagery, synthetic aperture radar (SAR), LiDAR, and topographic data) to generate actionable insights with unprecedented speed and accuracy [35] [36]. This document provides detailed application notes and experimental protocols for applying these integrated platforms to deforestation and glacier melting research, giving researchers a methodological foundation for using the tools in their own conservation and climate studies.

This section details the core architecture, data sources, and primary functions of the leading integrated AI platforms for environmental monitoring. A thorough understanding of each platform's capabilities and specialties is essential for selecting the appropriate tool for specific research objectives in deforestation and glaciology.

MORFO AI Suite

The MORFO AI Suite is a specialized platform designed to revolutionize large-scale forest restoration and monitoring. Its primary goal is to make reforestation more efficient, accurate, and cost-effective by overcoming the limitations of traditional satellite imagery and manual fieldwork [37]. The suite is composed of several integrated tools that function as a cohesive system for forest management:

- MORFO Dash: A central dashboard that consolidates all project monitoring data, providing interactive reports and visualizations for over 20 key performance indicators (KPIs), including hectares restored, carbon sequestration, and biodiversity metrics [37] [38].

- Cover Drone ID: Utilizes ultra-high-resolution drone imagery (0.3 cm/pixel) to create detailed land cover maps, analyze landscapes, track forest cover, identify watercourses, and detect vegetation changes [37].

- Tree Drone ID: Focuses on monitoring mature trees (≥5 years old), using drone-captured images to identify tree species and assess their health, height, and canopy size for long-term biodiversity tracking [37] [38].

- Seedling Drone ID: A breakthrough tool that identifies and tracks tree species with high accuracy from just six months after planting, a critical window for restoration success that traditional satellites cannot monitor [37] [38].

- Seedling Picture ID: A ground-based complement to Seedling Drone ID that allows field teams to gather detailed data on seedling health, enriching the overall dataset [37].

- Soil Insights: Analyzes soil conditions to generate a Quality Index, determining the suitability of the soil for supporting healthy forest growth [37] [38].

FlyPix AI

FlyPix AI is a geospatial analytics platform that leverages AI to simplify complex image analysis for environmental monitoring, including glacier tracking. Its key value proposition is providing fast, actionable insights through a user-friendly, no-code interface, making advanced geospatial analysis accessible to researchers without extensive technical expertise [8]. The platform is characterized by its flexibility and compatibility with multiple data sources:

- AI-Powered Analytics: Employs advanced algorithms for precise ice mass classification, glacier retreat detection, and structural assessments of glacial features like crevasses [8].

- Multi-Source Data Compatibility: Supports and integrates data from UAVs (drones), various satellite constellations, and LiDAR sensors, offering flexibility for different monitoring applications and scales [8].

- Automated Change Detection: Streamlines the tracking of glacier dynamics, including ice loss, surface movement, and anomaly identification, which is crucial for long-term climate research [8].

- Visualization Tools: Generates heatmaps and 3D models to enhance the visual analysis of glacial topography and changes over time [8].
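FlyPix AI is a no-code platform and its internal algorithms are not described in the source. The sketch below only illustrates the general idea behind automated change detection: comparing two co-registered binary glacier masks from different dates and converting the pixel difference into an area change. The function name and the default 10 m pixel size are assumptions.

```python
def glacier_area_change(mask_before, mask_after, pixel_size_m=10.0):
    """Return (area_before_km2, area_after_km2, change_km2) from two binary masks.

    Both masks are 2D lists of 0/1 values on the same grid (1 = glacier ice).
    """
    if len(mask_before) != len(mask_after) or any(
        len(a) != len(b) for a, b in zip(mask_before, mask_after)
    ):
        raise ValueError("masks must share the same grid")

    # Area of one pixel in square kilometers.
    pixel_area_km2 = (pixel_size_m ** 2) / 1_000_000.0
    area_before = sum(map(sum, mask_before)) * pixel_area_km2
    area_after = sum(map(sum, mask_after)) * pixel_area_km2
    # A negative change means net ice loss between the two dates.
    return area_before, area_after, area_after - area_before
```

The comparison is only meaningful if both masks are co-registered and derived from imagery at a comparable point in the melt season.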

Google Geospatial AI

Google's Geospatial AI ecosystem represents a planetary-scale approach to Earth observation. As of 2025, it integrates several powerful models and platforms into a unified stack for real-time geospatial reasoning, predictive modeling, and natural language interfaces [35]. Its components are foundational for global-scale environmental analysis:

- Gemini 2.5 Multimodal Reasoning Engine: Processes user inquiries in natural language, along with vector data and satellite imagery, to perform tasks like land cover classification, change detection, and rapid map creation [35].

- AlphaEarth: A climate-aware AI model developed by DeepMind that uses physics-informed machine learning to forecast key climate variables, including glacial melt and sea-level rise, by learning from decades of global climate data [35].

- Google Earth Engine 2.0: The computational backbone, optimized for serverless GPU acceleration and hosting a massive catalog of satellite imagery (e.g., Landsat, Sentinel, MODIS) for analysis [35].

- Specialized AI Models: Google has also developed targeted models for forest monitoring, such as the "Natural Forests of the World 2020" map, which uses a multi-modal temporal-spatial vision transformer (MTSViT) to distinguish natural forests from tree plantations with 92.2% accuracy [36]. The "ForestCast" model further represents a shift from monitoring to forecasting, using a deep learning model based on vision transformers to predict deforestation risk purely from satellite data history [5].

Table 1: Comparative Analysis of Integrated AI Monitoring Platforms

| Feature | MORFO AI Suite | FlyPix AI | Google Geospatial AI |
|---|---|---|---|
| Primary Focus | Forest restoration & biodiversity monitoring [37] | General-purpose geospatial analysis (e.g., glaciers, infrastructure) [8] | Planetary-scale Earth observation & forecasting [35] |
| Core Data Sources | Ultra-high-resolution (0.3 cm/pixel) drone imagery, ground pictures, soil data [37] [38] | UAV/drone imagery, satellite data, LiDAR [8] | Landsat, Sentinel, MODIS, PlanetScope, real-time climate sensor data [35] |
| Key AI Capabilities | Species recognition, seedling tracking, soil quality indexing, biodiversity KPIs [37] [38] | Ice mass classification, change detection, 3D modeling, automated anomaly tracking [8] | Natural language querying (Gemini), climate forecasting (AlphaEarth), deforestation risk prediction (ForestCast) [35] [5] |
| Typical Outputs | Species-level maps, soil quality index, carbon sequestration reports, canopy health [37] | Glacier retreat maps, surface change detection, ice fracture reports, elevation profiles [8] | Global forest type maps, deforestation risk forecasts, climate impact simulations, real-time disaster maps [35] [36] [5] |
| Implementation Scale | Project-level (e.g., 23 projects in Latin America) [37] | Local to regional-scale studies [8] | Global to regional-scale analysis [35] [36] |

Application Notes for Deforestation Monitoring

The following section outlines specific methodologies and experimental protocols for using integrated platforms to combat deforestation, from establishing baselines to predicting future risk.

Application Note: Establishing a "Natural Forest" Baseline with Google's AI

1. Research Objective: To create a high-resolution, globally consistent baseline map of natural forests as of 2020 to support compliance with deforestation-free regulations (e.g., EUDR) and accurate conservation monitoring [36].

2. Experimental Protocol:

- Platform & Model: Google's Geospatial AI stack, specifically the MTSViT (multi-modal temporal-spatial vision transformer) model used for the "Natural Forests of the World 2020" map [36].

- Input Data Preparation:

  - Satellite Imagery: Collect a time series of Sentinel-2 satellite imagery over the target area for the year 2020. The model analyzes seasonal spectral signatures [36].

  - Topographical Data: Source elevation and slope data for the same region to provide contextual information [36].

  - Geographical Coordinates: Define the area of interest with precise coordinates.

- Methodology:

  - The AI model is not fed a single snapshot but analyzes a 1280 x 1280 meter patch of land over the course of a year.

  - It processes the multi-temporal satellite data, topographical data, and geographical context simultaneously.

  - For each 10 x 10 meter pixel within the patch, the model estimates the probability that it represents a natural forest based on learned spectral, temporal, and texture signatures. Natural forests exhibit complex, heterogeneous patterns compared to the uniform signatures of plantations [36].

- Outputs & Validation:

  - Output: A seamless, 10-meter resolution global map in which each pixel carries the estimated probability of being natural forest [36].

  - Validation: The map achieved a best-in-class accuracy of 92.2% when validated against an updated, independent global dataset originally designed for forest management studies [36].
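The patch geometry follows from the figures in the protocol: a 1280 m patch at 10 m resolution is 128 pixels on a side, or 16,384 pixels per patch. The sketch below works through that arithmetic and shows one way a user might turn the probability output into a binary natural-forest mask. The function names and the 0.5 threshold are assumptions; the source does not prescribe a threshold.

```python
def pixels_per_patch(patch_size_m=1280, pixel_size_m=10):
    """Number of pixels along one side of a square patch, and in the whole patch."""
    side = patch_size_m // pixel_size_m
    return side, side * side

def natural_forest_mask(prob_patch, threshold=0.5):
    """Binarize a patch of natural-forest probabilities (threshold is illustrative)."""
    return [[1 if p >= threshold else 0 for p in row] for row in prob_patch]

side, total = pixels_per_patch()
print(side, total)  # 128 16384
```

A stricter threshold reduces the chance of labeling plantations as natural forest, at the cost of missing some genuine natural forest, so the choice depends on the application.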

Application Note: Early-Stage Reforestation Tracking with MORFO