Advanced Threshold Selection for Asymmetry Graph Analysis: Methodologies, Optimization, and Applications in Biomedical Research


Abstract

This article provides a comprehensive guide to threshold selection in asymmetry graph analysis, a critical technique for modeling complex, non-symmetric relationships in biomedical data. We first establish the foundational principles of graph asymmetry and the pivotal role of thresholds in defining graph topology and subsequent analysis. The core of the article explores cutting-edge methodological frameworks, including fuzzy options in Graph Model for Conflict Resolution (GMCR) and novel graph-theoretic indices like the Weighted Asymmetry Index. We then address common challenges and present optimization strategies, such as asymmetric threshold schemes, which can enhance classification accuracy. The guide concludes with a comparative analysis of validation techniques, using real-world case studies from neuroimaging and drug discovery to demonstrate how proper threshold selection improves the robustness and interpretability of results for researchers and drug development professionals.

The Fundamentals of Asymmetry in Graph Analysis: From Theory to Thresholds

FAQs: Understanding Asymmetry Detection in Meta-Analyses

Q1: What is the key difference between traditional funnel plots and the newer Doi plot for assessing asymmetry?

Traditional funnel plots visually assess the symmetry of study effect sizes against a measure of precision (like standard error). A key limitation is their reliance on subjective visual interpretation, which can be misleading. Furthermore, their utility changes significantly depending on the choice of effect measure and precision definition [1].

The Doi plot is an innovative alternative that modifies the normal quantile plot. It plots absolute Z-scores in reverse order on the Y-axis and effect sizes on the X-axis. The smallest absolute Z-score serves as the tip, with a perpendicular line dividing the plot into two regions. This provides a clearer and more intuitive visual structure for interpreting asymmetry, addressing the inherent shortcomings of the funnel plot [1].
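The geometry of a Doi plot can be illustrated with a minimal sketch. It assumes an unweighted construction: effect sizes are ranked, each rank is converted to a percentile with (rank − 0.5)/k, and each percentile is mapped to a Z-score through the standard normal quantile function. The published method may weight studies (for example by precision), so treat this as an illustration of the plot's layout rather than a reference implementation. The function names are hypothetical.

```python
from statistics import NormalDist


def doi_plot_coordinates(effects):
    """Return (effect, |Z|) pairs for an unweighted Doi-style plot.

    Assumption: percentiles use (rank - 0.5) / k; the published Doi plot
    may use precision-weighted percentiles instead.
    """
    k = len(effects)
    if k < 2:
        raise ValueError("need at least two studies")
    std_normal = NormalDist()  # mean 0, standard deviation 1
    coords = []
    for rank, effect in enumerate(sorted(effects), start=1):
        percentile = (rank - 0.5) / k
        z = std_normal.inv_cdf(percentile)  # normal quantile of the percentile
        coords.append((effect, abs(z)))     # the Y-axis shows the absolute Z-score
    return coords


def doi_tip(coords):
    """The tip of the Doi plot is the point with the smallest |Z|."""
    return min(coords, key=lambda point: point[1])
```

To match the plot described above, invert the Y-axis when drawing so that the point with the smallest |Z| sits at the top.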

Q2: How does the LFK index improve upon p-value-based tests like the Egger test for quantifying asymmetry?

The Egger test is a p-value-based method that tests the statistical significance of funnel plot asymmetry. A major limitation is its dependence on the number of studies (k) in the meta-analysis. Its sensitivity declines sharply in smaller meta-analyses (e.g., when k < 20), meaning it often fails to detect true asymmetry when few studies are available [1].

In contrast, the LFK index is an effect size measure, not a statistical test. It quantifies the difference in the area under the curve between the two regions of the Doi plot. In a perfectly symmetrical plot, the LFK index is zero. Crucially, its performance is independent of the number of studies, providing a more robust measure of asymmetry, especially for meta-analyses with a small k [1].
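The Egger test mentioned above can be sketched with the standard library alone. This sketch uses the classic regression: the standardized effect (effect divided by its standard error) is regressed on precision (1/standard error), and the intercept measures asymmetry. The function name is hypothetical. Converting the returned t statistic to a p-value requires a t-distribution CDF with k − 2 degrees of freedom (for example from SciPy), and that step is left out here. In R, metafor's regtest() provides a maintained regression test for funnel-plot asymmetry.

```python
import math


def egger_regression(effects, std_errors):
    """Classic Egger regression for funnel-plot asymmetry.

    Fits (y_i / se_i) = b0 + b1 * (1 / se_i) by ordinary least squares and
    returns (intercept, slope, t_intercept, df). An intercept far from zero
    signals asymmetry; the p-value comes from a t distribution with
    df = k - 2, which this sketch does not compute.
    """
    k = len(effects)
    if k != len(std_errors) or k < 3:
        raise ValueError("need at least 3 studies with matching standard errors")
    x = [1.0 / se for se in std_errors]                 # precision
    y = [e / se for e, se in zip(effects, std_errors)]  # standardized effect
    x_bar, y_bar = sum(x) / k, sum(y) / k
    sxx = sum((xi - x_bar) ** 2 for xi in x)
    if sxx == 0:
        raise ValueError("standard errors must not all be equal")
    sxy = sum((xi - x_bar) * (yi - y_bar) for xi, yi in zip(x, y))
    slope = sxy / sxx
    intercept = y_bar - slope * x_bar
    df = k - 2
    rss = sum((yi - intercept - slope * xi) ** 2 for xi, yi in zip(x, y))
    se_intercept = math.sqrt(rss / df * (1.0 / k + x_bar ** 2 / sxx))
    if se_intercept == 0:
        raise ValueError("perfect fit: t statistic is undefined")
    return intercept, slope, intercept / se_intercept, df
```

Because the degrees of freedom are k − 2, a small meta-analysis leaves the intercept test with very few degrees of freedom. This is one way to see why its sensitivity drops when k is small.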

Q3: Based on simulation studies, which method is more sensitive for detecting publication bias?

Simulation studies that varied the number of studies (k) and the level of simulated bias have demonstrated that the LFK index exhibits consistently higher sensitivity across these different scenarios. The Egger test, due to its dependence on k, shows high sensitivity only when a large number of studies are included [1].

The table below summarizes a comparative simulation based on a replication of Schwarzer et al.:

Table 1: Diagnostic Performance of LFK Index vs. Egger Test in Simulated Meta-Analyses

| Method | Type of Measure | Performance with small k (e.g., 5-10 studies) | Performance with large k (e.g., 50 studies) | Key Characteristic |
|---|---|---|---|---|
| LFK Index | Effect Size (Magnitude of asymmetry) | Consistently High Sensitivity | Consistently High Sensitivity | k-independent |
| Egger Test | Statistical Test (p-value) | Low Sensitivity | High Sensitivity | k-dependent |

Q4: What are the threshold values for interpreting the LFK index?

Unlike p-value-based tests, the LFK index is interpreted using specific thresholds that describe the degree of asymmetry in the Doi plot [1]:

- LFK index within ±1: Suggests symmetry.

- LFK index between ±1 and ±2: Suggests minor asymmetry.

- LFK index beyond ±2: Suggests major asymmetry.
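The interpretation bands above can be applied mechanically. In this minimal sketch, the boundary handling (|LFK| = 1 counted as symmetric, |LFK| = 2 counted as minor) is an assumption, because the bands as stated do not say which side owns each edge. The function name is hypothetical.

```python
def classify_lfk(lfk: float) -> str:
    """Map an LFK index value to the interpretation bands quoted in the text.

    Assumption: |LFK| == 1 is treated as symmetric and |LFK| == 2 as minor.
    """
    magnitude = abs(lfk)
    if magnitude <= 1.0:
        return "no asymmetry"
    if magnitude <= 2.0:
        return "minor asymmetry"
    return "major asymmetry"
```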

Troubleshooting Guide: Common Issues in Asymmetry Analysis

Problem: Inconclusive or conflicting results between visual inspection of a funnel plot and the Egger test.

- Potential Cause: The subjective nature of funnel plot interpretation coupled with the low statistical power of the Egger test in meta-analyses with a small number of studies.

- Solution: Transition to the Doi plot and LFK index framework. Generate a Doi plot for a more structured visual assessment and calculate the LFK index for a k-independent quantitative measure. This integrated approach provides a more reliable and consistent diagnosis of asymmetry [1].

Problem: A highly significant Egger test in a meta-analysis containing a large number of studies.

- Potential Cause: The Egger test's p-value is highly dependent on the number of studies. A large k can lead to a statistically significant p-value (e.g., p < 0.05) even when the actual asymmetry in the data is trivial from a clinical or practical standpoint.

- Solution: Do not rely solely on the p-value. Calculate the LFK index, which is an effect size and better represents the magnitude of asymmetry. Report both the Doi plot visualization and the LFK index value for a more nuanced interpretation that distinguishes statistical significance from practical importance [1].

Problem: How to handle complex, real-world conflicts where decision-makers' preferences are not clear-cut (binary) but exist on a spectrum.

- Potential Cause: Traditional Graph Model for Conflict Resolution (GMCR) frameworks often simplify option selection to binary choices (Yes/No), which does not capture the inherent fuzziness or partial commitment to a choice in real-world scenarios.

- Solution: Employ a fuzzy options approach within the GMCR framework. This allows decision-makers to assign a degree of selection to each option (e.g., a value between 0 and 1), providing a more nuanced and realistic modeling of preferences and conflicts, including those with power asymmetries [2].

Experimental Protocol: Simulating and Comparing Asymmetry Detection Methods

Objective: To evaluate and compare the diagnostic performance of the LFK index and the Egger test in detecting publication bias under controlled conditions with varying numbers of studies and levels of simulated bias.

Methodology Summary: This protocol is based on a replicated simulation study [1].

1. Simulation Parameters:

Table 2: Core Simulation Parameters for Generating Meta-Analysis Data

| Parameter | Settings | Description |
|---|---|---|
| Number of Studies (k) | 5, 10, 20, 50 | To test dependence on study numbers. |
| Data Generating Model | Copas Selection Model | Introduces a correlation (ρ) between study outcome and probability of publication. |
| Bias Level (ρ) | 0, -0.3, -0.5, -0.9 | ρ=0 implies no bias; more negative values imply stronger bias. |
| Sample Size Distribution | Log-normal (Small, Large) | Simulates different levels of precision across studies. |
| Iterations per Scenario | 1000 | Number of simulated meta-analyses for each parameter combination to ensure result stability. |
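A Copas-type selection mechanism can be sketched as follows. A study's outcome noise ε and its selection noise δ are drawn with correlation ρ, and the study is "published" only when a selection score is positive. This is a minimal illustration, not the replication code of [1]. The values of γ0, γ1 and θ, the uniformly drawn standard errors (used in place of the log-normal sample sizes in the table), and the omission of between-study heterogeneity are all assumptions. The function name is hypothetical.

```python
import math
import random


def simulate_copas_meta_analysis(k, rho, theta=0.0, gamma0=-1.0, gamma1=0.2,
                                 se_range=(0.1, 0.8), rng=None):
    """Return k published studies as (effect, standard_error) pairs.

    Copas-type selection: y_i = theta + s_i * eps_i is published only when
    gamma0 + gamma1 / s_i + delta_i > 0, with corr(eps_i, delta_i) = rho.
    Assumptions (not from [1]): gamma0, gamma1, theta, uniform standard
    errors, and no between-study heterogeneity.
    """
    if not -1.0 <= rho <= 1.0:
        raise ValueError("rho must lie in [-1, 1]")
    if rng is None:
        rng = random.Random()
    published = []
    while len(published) < k:
        s = rng.uniform(*se_range)
        eps = rng.gauss(0.0, 1.0)
        # delta is standard normal with correlation rho to eps
        delta = rho * eps + math.sqrt(1.0 - rho * rho) * rng.gauss(0.0, 1.0)
        if gamma0 + gamma1 / s + delta > 0:  # the study gets "published"
            published.append((theta + s * eps, s))
    return published
```

With negative ρ, studies with negative ε are more likely to pass selection. The published effects therefore drift below θ, which is the bias the detectors are asked to find. Running this 1000 times per (k, ρ) combination and applying both detectors produces the raw material for the Data Analysis step.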

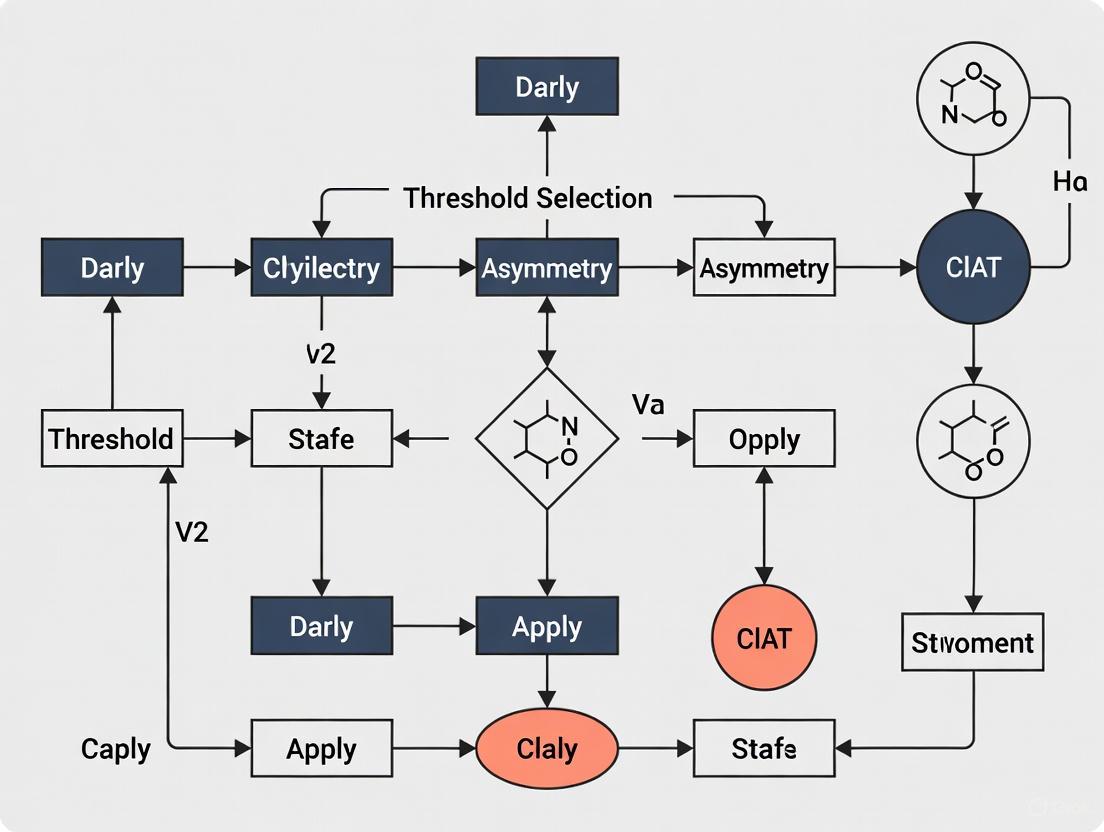

2. Workflow Diagram:

3. Data Analysis:

- For each simulated meta-analysis, calculate the LFK index and the Egger test p-value.

- Dichotomize the outcomes: LFK index beyond ±1 indicates asymmetry; Egger test p-value < 0.1 indicates asymmetry.

- Compare these results to the known, simulated truth (the value of ρ) to calculate diagnostic performance metrics like Sensitivity (ability to detect true bias) and Specificity (ability to correctly identify the absence of bias) for each method across the different scenarios [1].
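The comparison against the simulated truth reduces to counting a 2×2 table per method and scenario. The sketch below assumes two parallel boolean lists: whether each replicate was simulated with bias (ρ ≠ 0), and whether the method flagged asymmetry (for example |LFK| > 1 or Egger p < 0.1). The function name is hypothetical.

```python
def diagnostic_performance(truth_biased, flagged):
    """Return (sensitivity, specificity) of a binary asymmetry flag.

    truth_biased[i] is True when replicate i was simulated with bias;
    flagged[i] is True when the method called asymmetry for replicate i.
    """
    if len(truth_biased) != len(flagged):
        raise ValueError("inputs must have the same length")
    pairs = list(zip(truth_biased, flagged))
    tp = sum(1 for t, f in pairs if t and f)
    fn = sum(1 for t, f in pairs if t and not f)
    tn = sum(1 for t, f in pairs if not t and not f)
    fp = sum(1 for t, f in pairs if not t and f)
    sensitivity = tp / (tp + fn) if tp + fn else float("nan")
    specificity = tn / (tn + fp) if tn + fp else float("nan")
    return sensitivity, specificity
```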

Research Reagent Solutions

Table 3: Essential Computational Tools for Asymmetry Analysis in Meta-Analysis

| Item/Tool | Function | Application Note |
|---|---|---|
| R Statistical Software | Primary environment for statistical computing and graphics. | Essential for running simulations and implementing advanced meta-analysis techniques. The simulation protocol can be coded in R [1]. |
| metafor Package (R) | Provides comprehensive functions for fitting meta-analytic models. | Can be used to calculate effect sizes (escalc), create traditional funnel plots (funnel), and run Egger-type regression tests for asymmetry (regtest). |
| GMCR Framework (Matlab/Python) | Framework for modeling and analyzing strategic conflicts. | Can be extended with fuzzy logic to model conflicts with power asymmetry and non-binary preferences [2]. |
| APCA Contrast Algorithm | A modern method for calculating perceptual contrast between colors. | Useful for checking that visualizations and diagrams have sufficient color contrast, aiding clarity for all readers. APCA was developed as a candidate method for WCAG 3; conformance with the current WCAG 2.x criteria is assessed with the contrast-ratio formula [3]. |

Frequently Asked Questions (FAQs)

Q1: What is the primary consequence of setting a connectivity threshold too high in an asymmetry graph?

Setting a connectivity threshold too high can lead to an over-fragmented graph. This occurs because only the very strongest connections are preserved, potentially breaking apart a single, meaningful cluster into multiple, disconnected components. Consequently, you might fail to identify the true underlying community structure or miss crucial relationships between nodes [4].

Q2: How does an inappropriately low threshold affect my graph analysis?

An inappropriately low threshold results in an overly dense and noisy graph. By allowing weak, often spurious connections to remain, the graph becomes a "hairball." This makes it difficult to distinguish significant pathways from noise, obscures key topological features, and can lead to incorrect conclusions about the network's properties [4].

Q3: Are there quantitative methods to guide threshold selection for asymmetry analysis?

Yes, quantitative methods are essential. The LFK index, for example, is an effect size measure developed to quantify asymmetry in meta-analysis Doi plots. Unlike p-value-based tests whose sensitivity depends on the number of studies (k), the LFK index provides a k-independent measure of asymmetry, allowing for more robust comparisons and threshold setting across different analyses [1].

Q4: Why is visual accessibility important in graph visualization, and how can I achieve it?

Visual accessibility ensures your research findings are interpretable by all colleagues, including those with color vision deficiencies. Relying solely on color to encode information can exclude portions of your audience. Best practices include [4] [5]:

- Using high-contrast colors between elements and the background.

- Combining color with other visual cues like node shape, patterns, and size.

- Providing alternative color schemes, such as colorblind-friendly modes.

Q5: My graph visualization tool isn't fully accessible to screen readers. What is a recommended temporary solution?

If a complex chart cannot be made immediately accessible via keyboard navigation and text alternatives, use the aria-hidden attribute to hide it from screen readers. Because assistive technologies ignore everything inside an aria-hidden element, including any aria-label placed on it, put the chart description on an element that is not hidden. Crucially, also offer an alternative way to access the same information, such as a data table or a text summary [4].

Troubleshooting Guides

Problem 1: Over-Fragmented Graph

- Symptoms: The graph has many isolated nodes and small, disconnected clusters instead of a few meaningful components.

- Diagnosis: The connectivity threshold is likely set too high.

- Solution:

- Gradually lower the threshold and observe the point at which the main components begin to connect.

- Validate the newly formed connections against your domain knowledge to ensure they are meaningful.

- Monitor Graph Metrics: Use the following table to track key metrics as you adjust the threshold:

| Threshold Value | Number of Components | Average Node Degree | Diagnosis |
|---|---|---|---|
| 0.9 | 45 | 1.2 | Too High: Excessive fragmentation |
| 0.7 | 15 | 2.5 | Potentially Optimal: Balanced structure |
| 0.5 | 5 | 8.1 | Potentially Viable: Consolidated structure |
| 0.3 | 2 | 25.7 | Too Low: Risk of excessive density and noise |
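The metrics in this table can be computed with a threshold sweep. This minimal sketch assumes the asymmetry graph is stored as a dict of directed edge weights, so weights[(a, b)] may differ from weights[(b, a)], and keeps an edge when its weight is at least the threshold. Components are counted as weakly connected components (edge direction ignored) using union-find. Average degree is reported as 2E/N, with each directed edge counted once. The function names are hypothetical.

```python
def _find(parent, node):
    """Union-find root lookup with path halving."""
    while parent[node] != node:
        parent[node] = parent[parent[node]]
        node = parent[node]
    return node


def threshold_sweep(nodes, weights, thresholds):
    """Return (threshold, n_components, average_degree) for each threshold.

    weights maps directed edges (source, target) to a weight. An edge is
    kept when weight >= threshold. Components ignore edge direction;
    average degree is 2E/N with each directed edge counted once.
    """
    nodes = list(nodes)
    results = []
    for thr in thresholds:
        parent = {n: n for n in nodes}
        kept = [edge for edge, w in weights.items() if w >= thr]
        for a, b in kept:
            root_a, root_b = _find(parent, a), _find(parent, b)
            if root_a != root_b:
                parent[root_a] = root_b  # merge the two components
        n_components = len({_find(parent, n) for n in nodes})
        results.append((thr, n_components, 2 * len(kept) / len(nodes)))
    return results
```

For example, with nodes A to D and edges A→B (0.9), B→A (0.4), B→C (0.6) and C→D (0.2), thresholds of 0.8, 0.5 and 0.1 give 3, 2 and 1 components, respectively.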

Problem 2: Overly Dense "Hairball" Graph

- Symptoms: The graph is a dense web of connections where no clear structure or pathway is visible.

- Diagnosis: The connectivity threshold is almost certainly set too low.

- Solution:

- Systematically increase the threshold until the main pathways and clusters become visually distinct.

- Justify the new threshold using a quantitative measure. For instance, in asymmetry analysis, you might use the LFK index to set a threshold that effectively separates symmetric from asymmetric graphs.

- Experimental Protocol for Threshold Optimization:

- Aim: To identify the optimal connectivity threshold for distinguishing true signal from noise in asymmetry graph analysis.

- Method: Apply a series of thresholds to your graph data. For each threshold, calculate both global (e.g., number of components) and local (e.g., node degree) topological metrics. Simultaneously, calculate an asymmetry metric like the LFK index.

- Analysis: Identify the "elbow" in a plot of graph density versus threshold value. Correlate threshold levels with asymmetry indices to select a value that maximizes meaningful structure while minimizing noise [1].
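One common heuristic for locating the "elbow" is to pick the sampled point farthest from the straight line joining the first and last points of the density-versus-threshold curve. This heuristic is chosen here for illustration and is not necessarily the procedure used in [1]. The function name is hypothetical, and the inputs are assumed to be the thresholds you tested with their measured graph densities.

```python
import math


def elbow_index(xs, ys):
    """Index of the point farthest from the chord joining the first and last
    points of the curve (a common 'elbow' heuristic)."""
    if len(xs) != len(ys) or len(xs) < 3:
        raise ValueError("need at least three (x, y) points")
    x0, y0, x1, y1 = xs[0], ys[0], xs[-1], ys[-1]
    dx, dy = x1 - x0, y1 - y0
    norm = math.hypot(dx, dy)
    if norm == 0:
        raise ValueError("first and last points coincide")
    # Perpendicular distance of each point from the chord
    distances = [abs(dy * (x - x0) - dx * (y - y0)) / norm for x, y in zip(xs, ys)]
    return max(range(len(xs)), key=distances.__getitem__)
```

For example, thresholds [0.9, 0.7, 0.5, 0.3, 0.1] with densities [0.01, 0.02, 0.05, 0.30, 0.60] put the elbow at index 2, i.e. a threshold of 0.5.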

Problem 3: Inaccessible Graph Visualizations

- Symptoms: Colleagues report difficulty distinguishing elements in your graph, or screen reader users cannot access the information.

- Diagnosis: The visualization relies on a single visual channel (e.g., color) and lacks accessibility features.

- Solution:

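- Implement Multi-Channel Encoding: Combine color with other visual cues such as node shape, patterns, and size, so that no information is conveyed by color alone.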

- Ensure Color Contrast: Use a color contrast checker to verify that all colors have a sufficient contrast ratio against the background (at least 3:1 for large non-text elements and 4.5:1 for standard text) [6].

- Provide Keyboard Navigation: Ensure users can navigate through graph elements using the Tab key and interact with them using Enter or Space [4].
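The contrast ratios quoted above follow the WCAG 2.x definition. Each sRGB channel is linearized, relative luminance is L = 0.2126 R + 0.7152 G + 0.0722 B, and the ratio is (L_lighter + 0.05) / (L_darker + 0.05). The sketch below implements that formula for #RRGGBB colors (not APCA). The function names are hypothetical.

```python
def _linearize(channel_8bit: int) -> float:
    """Convert an 8-bit sRGB channel to linear light (WCAG 2.x definition)."""
    c = channel_8bit / 255.0
    return c / 12.92 if c <= 0.03928 else ((c + 0.055) / 1.055) ** 2.4


def relative_luminance(hex_color: str) -> float:
    h = hex_color.lstrip("#")
    if len(h) != 6:
        raise ValueError("expected a #RRGGBB color")
    r, g, b = (_linearize(int(h[i:i + 2], 16)) for i in (0, 2, 4))
    return 0.2126 * r + 0.7152 * g + 0.0722 * b


def contrast_ratio(foreground: str, background: str) -> float:
    lighter, darker = sorted(
        (relative_luminance(foreground), relative_luminance(background)), reverse=True
    )
    return (lighter + 0.05) / (darker + 0.05)
```

Black on white gives the maximum ratio of 21:1. Mid-grey #777777 on white falls just short of 4.5:1, while #767676 just passes, which shows how close to the threshold common greys sit.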

Research Reagent Solutions

| Item | Function/Benefit |
|---|---|
| Graph Visualization SDK (e.g., KeyLines, ReGraph) | Provides the core toolkit for building interactive graph visualization applications, with built-in support for customization and accessibility features like keyboard navigation [4]. |
| Color Contrast Analyzer (e.g., WebAIM) | A critical tool for verifying that the color palettes used in your graphs meet WCAG guidelines, ensuring legibility for users with low vision or color blindness [4] [5]. |
| Asymmetry Metric (LFK Index) | A quantitative reagent for analysis; it acts as an effect size measure for asymmetry in plots like the Doi plot, enabling k-independent assessment and more reliable threshold setting for detecting bias or asymmetry [1]. |
| Accessible Pattern Library | A pre-designed set of seamlessly looping patterns (lines, dots, shapes) used as fills in bar charts or other areas to make complex data visualizations distinguishable without relying on color alone [5]. |

Diagram: Graph Threshold Impact on Connectivity

Diagram: Accessible Multi-Channel Data Encoding

The Impact of Threshold Selection on Downstream Analysis and Interpretation

Frequently Asked Questions

1. What is the fundamental impact of threshold selection in asymmetry analysis?

Threshold selection represents a critical bias-variance trade-off. Choosing a threshold that is too low introduces bias by including data that does not represent true tail behavior or asymmetry. Conversely, a threshold that is too high leads to high variability and unstable estimates due to a small sample size. This choice fundamentally affects the reliability of all subsequent analyses, including the estimation of return levels in environmental science or the assessment of publication bias in meta-analyses [7] [8].

2. How does the LFK index improve upon traditional methods like Egger's test for publication bias?

The LFK index is an effect size measure of asymmetry, unlike Egger's test, which is a p-value-based statistical test. This key difference makes the LFK index independent of the number of studies (k) in a meta-analysis. Simulation studies show that the LFK index maintains consistently high sensitivity across meta-analyses of varying sizes, whereas the sensitivity of Egger's test declines sharply when the number of studies is small (k < 20) [1].

3. What are the common types of thresholds encountered in research data analysis?

Researchers often work with three main categories of thresholds, each with distinct implications:

- Peaks Over Threshold (POT): Used in extreme value analysis to model data exceeding a high threshold, assuming a Generalized Pareto Distribution (GPD) for the exceedances [7] [8].

- Statistical Asymmetry Thresholds: Used to quantify bias in data synthesis (e.g., LFK index in Doi plots) or to test distributional symmetry (e.g., Rp test) [1] [9].

- Physical/Dimensional Thresholds: Used in geosciences and image analysis to define physical boundaries, such as the midline of a drainage basin to calculate an Asymmetry Factor (AF) [10].

4. My quantile-quantile (Q-Q) plot suggests a heavy-tailed distribution. How should this inform my threshold choice?

A heavy-tailed Q-Q plot indicates that extreme values are more likely than a normal distribution would predict. In this context, automated threshold selection methods like the TAil-Informed threshoLd Selection (TAILS) method are particularly advantageous. These methods are designed to robustly capture genuine tail behavior, even from distributions with diverse drivers, which helps prevent underestimating the frequency or magnitude of extreme events [7].

Troubleshooting Guides

Problem: Inconsistent Asymmetry Detection in a Small Meta-Analysis

Issue: When your meta-analysis contains a limited number of studies (e.g., k < 10), you get conflicting signals about publication bias. A funnel plot is difficult to interpret visually, and Egger's test is non-significant, but you suspect small-study effects are present.

Solution: Employ the Doi plot and LFK index, which are more robust for small k.

- Step 1: Generate a Doi Plot. Plot the effect sizes on the x-axis against the absolute Z-scores in reverse order on the y-axis. The study with the smallest absolute Z-score forms the tip of the plot [1].

- Step 2: Calculate the LFK Index. The LFK index quantifies the difference in the area under the curve on the two sides of the tip of the Doi plot. In a perfectly symmetrical plot, the index is zero [1].

- Step 3: Interpret the LFK Index. Use the following classification to diagnose asymmetry [1]:

- LFK index within ±1: Symmetrical plot (no asymmetry).

- LFK index ±1 to ±2: Minor asymmetry.

- LFK index beyond ±2: Major asymmetry.

This methodology directly addresses the limitation of p-value-based tests in small meta-analyses.

Problem: Selecting an Optimal Threshold for Peak Over Threshold (POT) Analysis

Issue: You need to model extreme events (e.g., precipitation, sea levels) using a POT framework, but your results are highly sensitive to the arbitrary threshold you selected.

Solution: Implement an automated, data-driven threshold selection method to find an optimal value.

- Step 1: Choose a Candidate Method. Several automated methods exist. The following table compares two common approaches:

| Method | Principle | Best Used For |
|---|---|---|
| Anderson-Darling (AD) [8] | Finds the threshold where the distribution of exceedances best fits a GPD using a modified Anderson-Darling statistic. | General POT applications where a single, optimal threshold is desired. |
| TAil-Informed Selection (TAILS) [7] | Prioritizes capturing the behavior of the most extreme observations, accepting some additional uncertainty to better model the tail's end. | Data where the most extreme events are critical, and tail behavior is complex. |

- Step 2: Validate Threshold Choice. Regardless of the method, perform a sensitivity analysis.

- Plot key GPD parameters (shape and scale) against a range of thresholds.

- Look for a "stability region" where these parameter estimates are relatively constant over a range of thresholds. Your chosen threshold should lie within this region [8].

- Step 3: Assess Model Fit. Use a goodness-of-fit test (like the right-sided Anderson-Darling test) or diagnostic plots (e.g., probability plot, quantile plot) to confirm that the data above your chosen threshold are well-modeled by a GPD [7].
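The stability check in Step 2 can be sketched with a method-of-moments GPD fit. This fit is used here only because it needs no optimizer. It is valid only for shape ξ < 1/2, and a maximum-likelihood fit (for example scipy.stats.genpareto.fit) is the more usual choice. For a GPD with scale σ and shape ξ, the mean is σ/(1 − ξ) and the variance is σ²/((1 − ξ)²(1 − 2ξ)); inverting these gives the estimates below. Above a valid threshold u, both ξ and the modified scale σ* = σ − ξu should stay roughly constant. The function names and the minimum-exceedance cutoff are assumptions.

```python
import statistics


def gpd_mom_fit(exceedances):
    """Method-of-moments GPD fit; returns (xi, sigma). Valid for xi < 1/2."""
    m = statistics.fmean(exceedances)
    v = statistics.variance(exceedances)
    ratio = m * m / v              # equals 1 - 2*xi for a GPD
    xi = 0.5 * (1.0 - ratio)
    sigma = 0.5 * m * (1.0 + ratio)
    return xi, sigma


def threshold_stability_table(data, thresholds, min_exceedances=30):
    """Rows of (u, n_exceedances, xi, sigma, modified_scale) per threshold."""
    rows = []
    for u in thresholds:
        exc = [x - u for x in data if x > u]
        if len(exc) < min_exceedances:
            continue  # too few exceedances for a usable estimate
        xi, sigma = gpd_mom_fit(exc)
        # sigma* = sigma - xi * u is threshold-invariant above a valid threshold
        rows.append((u, len(exc), xi, sigma, sigma - xi * u))
    return rows
```

Plotting the xi and modified-scale columns against u gives the parameter-stability plot described in Step 2. The chosen threshold should sit where both curves flatten.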

Problem: Validating Data Symmetry After Normalization in Genomic Analysis

Issue: After normalizing RNA-sequencing data, you need to verify the assumption of symmetric distribution before proceeding with differential expression analysis, as overlooked asymmetry can cause inaccurate results [9].

Solution: Apply a formal statistical test for symmetry, such as the Rp test.

- Step 1: Prepare the Data. Begin with your normalized gene expression values for a specific gene across all samples [9].

- Step 2: Execute the Rp Test. The workflow involves the following steps, which can be implemented algorithmically [9]:

- Step 3: Interpret the Result. A significant p-value (after adjusting for multiple testing, e.g., Bonferroni correction) leads to rejecting the null hypothesis of symmetry, indicating your normalized data remains asymmetrical. This signals that the normalization may not have been fully effective for this gene, and conclusions from downstream analyses should be drawn with caution [9].
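The Rp test itself is not reproduced here. This sketch shows only the multiple-testing step in Step 3. It assumes the per-gene symmetry-test p-values are already available as a dict, and uses gene names that are hypothetical.

```python
def bonferroni_asymmetric_genes(p_values, alpha=0.05):
    """Return (flagged_genes, adjusted_p_values) under Bonferroni correction.

    A gene is flagged (symmetry rejected) when min(1, p * m) < alpha,
    where m is the number of genes tested.
    """
    m = len(p_values)
    adjusted = {gene: min(1.0, p * m) for gene, p in p_values.items()}
    flagged = sorted(gene for gene, p_adj in adjusted.items() if p_adj < alpha)
    return flagged, adjusted
```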

The Scientist's Toolkit: Key Reagents & Methods

The table below summarizes essential methodological "reagents" for conducting robust asymmetry and threshold analysis.

| Item | Function / Principle | Application Context |
|---|---|---|
| Doi Plot & LFK Index [1] | Visual and quantitative assessment of publication bias. The LFK index is a k-independent measure of asymmetry. | Meta-analysis of clinical trials or experimental studies. |
| Peaks Over Threshold (POT) & GPD [7] [8] | Models the tail of a distribution by fitting a Generalized Pareto Distribution to all data exceeding a defined threshold. | Extreme Value Analysis (EVA) of environmental hazards, finance. |
| Rp Test [9] | A statistical test to evaluate symmetry of a distribution about its median. | Genomic data analysis (e.g., RNA-seq) after normalization. |
| Automated Threshold Selection (e.g., TAILS, AD) [7] [8] | Data-driven algorithms to select a threshold for POT analysis, reducing subjectivity. | Any POT application where an objective, reproducible threshold is needed. |
| Drainage Basin Asymmetry Factor (AF) [10] | Calculated as AF = (Ar/At), where Ar is the basin area to the right of the trunk stream, and At is the total area. A value of 0.5 indicates symmetry. | Geomorphology, tectonic studies. |
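Because AF is a ratio of areas, it can be computed from basin outlines stored as polygons using the shoelace formula. This sketch assumes the basin has already been split along the trunk stream (for example in GIS software). The coordinates and function names are hypothetical.

```python
def polygon_area(vertices):
    """Shoelace formula; vertices are (x, y) tuples listed in boundary order."""
    n = len(vertices)
    if n < 3:
        raise ValueError("a polygon needs at least three vertices")
    twice_area = 0.0
    for i in range(n):
        x1, y1 = vertices[i]
        x2, y2 = vertices[(i + 1) % n]  # wrap around to close the polygon
        twice_area += x1 * y2 - x2 * y1
    return abs(twice_area) / 2.0


def asymmetry_factor(right_polygon, total_polygon):
    """AF = Ar / At; 0.5 indicates a symmetric basin."""
    return polygon_area(right_polygon) / polygon_area(total_polygon)
```

For a 4 × 4 square basin whose right-hand part spans x = 2 to 4, polygon_area returns 16 for the whole basin and asymmetry_factor returns 0.5.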

Frequently Asked Questions

Q1: What is regulatory asymmetry in a biological network context?

Regulatory asymmetry describes a situation where, within the same cellular network, a transcription factor (TF) gene and its target genes experience quantitatively different levels of repression or activation, even when controlled by identical promoter sequences [11]. This phenomenon is inherited from the network's architecture and is not due to sequence differences.

Q2: My deterministic model fails to predict the observed asymmetry. Why?

This is a common issue. Simple deterministic models based on mass action kinetics often fail to capture the inherent regulatory asymmetry. This is because they average out the different microenvironments experienced by genes in distinct regulatory states. To accurately predict asymmetry, you should employ stochastic simulations of kinetic models that account for the discrete, random binding and unbinding events of transcription factors [11].

Q3: How can I experimentally tune the degree of asymmetry in my synthetic network?

You can control the magnitude of regulatory asymmetry by manipulating key network parameters [11]:

- Network Size: Introduce non-functional 'decoy' binding sites on plasmids to compete for TF binding and mimic the demand of a larger network.

- TF-Binding Affinity: Use operator sites with different sequence identities (e.g., O2, O1, Oid) to control the TF unbinding rate.

- Cellular Growth Rate: Note that asymmetry is most significant in typical growth conditions and can disappear if the growth rate is too fast or too slow.

Q4: My network visualization is cluttered and asymmetry is hard to see. How can I improve it?

- Choose the Right Layout: Force-directed layouts can lead viewers to read meaning into spatial proximity that the data do not support. Consider alternative layouts like adjacency matrices, which excel at showing clusters and are better for dense networks [12].

- Use Color Effectively: Apply a diverging color scheme (e.g., red to blue) to emphasize extreme values, and ensure color is not the only channel used to convey information. Add data labels for clarity [12] [13].

- Ensure Proper Labeling: Use legible font sizes and position labels to avoid clutter. If labels cannot be sufficiently enlarged in the main figure, provide a high-resolution, zoomable version online [12].

Q5: At the critical threshold (R₀ = 1), how do I determine the stability of the system's equilibrium?

When the basic reproduction number R₀ equals 1, the linear approximation of the system is insufficient to determine stability. You must perform a second-order approximation of the system's reaction function. Stability is then determined by the sign of this second derivative: a negative value indicates stability, while a positive value indicates instability [14].

Experimental Protocol: Quantifying Regulatory Asymmetry in a Synthetic SIM

This protocol outlines a synthetic biology approach to investigate regulatory asymmetry in a Negative Single-Input Module (SIM) in E. coli, based on the methodology from [11].

1. Objective: To quantitatively measure the inherent regulatory asymmetry between an autoregulated transcription factor (TF) gene and its target gene under identical promoter control.

2. Key Reagents and Materials

- Strain: Engineered E. coli strain with an integrated genetic circuit.

- TF Gene: LacI-mCherry fusion gene (for both regulation and fluorescent quantification).

- Target Gene: YFP (Yellow Fluorescent Protein) gene.

- Promoters: Identical promoters for the TF and target genes, each with a single LacI-binding site (e.g., centered at +11 relative to the transcription start site).

- Plasmids for Decoy Sites: Plasmids containing an array of high-affinity operator sites (Oid) to act as competing, non-regulatory binding sites. The copy number can be varied (e.g., 0 to 5 sites per plasmid).

3. Procedure

Step 1: System Construction

- Integrate the LacI-mCherry (TF) and YFP (target) genes into the host chromosome, ensuring both are controlled by identical, LacI-repressible promoters.

- Transform the strain with plasmids carrying a variable number of decoy binding sites to modulate the network's competitive load.

Step 2: Culturing and Induction

- Grow the engineered bacterial strains under the desired, standard growth conditions.

- If using an inducible system, apply the inducer (e.g., IPTG for LacI) at varying concentrations to probe different regulatory states.

Step 3: Flow Cytometry Measurement

- Harvest cells during mid-exponential growth phase.

- Use flow cytometry to measure the fluorescence intensity of mCherry (reporting TF levels) and YFP (reporting target gene expression) for thousands of individual cells.

Step 4: Data Analysis and Fold-Change Calculation

- Calculate the mean fluorescence for each channel and strain.

- For the target gene (YFP): Determine the unregulated expression level by measuring fluorescence in a control strain lacking LacI.

- For the TF gene (LacI-mCherry): Determine its unregulated expression by measuring fluorescence in a control strain where its promoter's operator site is mutated to a non-functional sequence (e.g., NoO1v1).

- Compute the Fold-Change (FC) for each gene:

FC = (Expression in regulated condition) / (Unregulated expression)

- Quantify Asymmetry: The regulatory asymmetry is observed as a lower Fold-Change (greater repression) for the TF gene compared to the target gene.
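The fold-change comparison in Step 4 amounts to two ratios and one comparison. This sketch assumes the inputs are mean fluorescence values per strain, already processed as your protocol requires. The function names and example numbers are hypothetical.

```python
def fold_change(regulated, unregulated):
    """FC = expression in the regulated condition / unregulated expression."""
    if unregulated <= 0:
        raise ValueError("unregulated expression must be positive")
    return regulated / unregulated


def regulatory_asymmetry(tf_regulated, tf_unregulated,
                         target_regulated, target_unregulated):
    """Return (FC_TF, FC_target, asymmetric).

    asymmetric is True when the TF gene is more strongly repressed (lower FC)
    than the target gene, the signature described in the protocol.
    """
    fc_tf = fold_change(tf_regulated, tf_unregulated)            # LacI-mCherry
    fc_target = fold_change(target_regulated, target_unregulated)  # YFP
    return fc_tf, fc_target, fc_tf < fc_target
```

For example, a TF reading of 200 against an unregulated 1000 (FC = 0.2) and a target reading of 600 against 2000 (FC = 0.3) would indicate asymmetry.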

Research Reagent Solutions

The table below details key reagents used in the featured experiment for studying network asymmetry.

| Item Name | Function in the Experiment |
|---|---|
| LacI-mCherry TF Fusion | Serves as the model autoregulatory transcription factor; mCherry enables quantitative tracking of TF levels. |
| YFP Reporter Gene | Acts as the target gene; its expression level is the key output measured to quantify regulation. |
| Operator Site Variants (O2, O1, Oid) | Used to precisely tune TF-binding affinity, allowing investigation of its role in asymmetry. |
| Decoy Binding Site Plasmids | Introduce specific, non-functional binding sites to compete for TF binding and mimic network size. |
| Promoter with Mutated Operator (NoO1v1) | Critical control element to measure the unregulated, baseline expression of the autoregulated TF gene. |

The following table summarizes key quantitative relationships and parameters from the study of asymmetry in the negative autoregulatory SIM motif [11].

| Parameter or Relationship | Description | Impact on Regulatory Asymmetry |
|---|---|---|
| Number of Competing Binding Sites | Models network size/load via decoy sites. | Increases the magnitude of asymmetry; more sites increase demand for free TF. |
| TF-Binding Affinity | Controlled by operator sequence (O2 < O1 < Oid). | Higher affinity increases the magnitude of observed asymmetry. |
| Cellular Growth Rate | The rate at which the host cells are growing. | Asymmetry is most significant at typical growth rates and disappears at very fast or slow rates. |
| Fold-Change (FC) | FC = Regulated Expression / Unregulated Expression. | The core measurable: asymmetry is present when FC(TF gene) < FC(target gene). |

Visualization of Concepts and Workflows

Diagram 1: Negative Single-Input Module (SIM) Motif

Diagram 2: Experimental Workflow for Quantifying Asymmetry

Diagram 3: Regulatory Asymmetry Outcome

Methodological Frameworks for Asymmetric Graph Construction and Analysis

Leveraging Fuzzy Theory for Asymmetric Conflict Resolution (GMCR)

Frequently Asked Questions (FAQs)

FAQ 1: What is the primary advantage of using fuzzy options over binary options in a Graph Model for Conflict Resolution (GMCR)?

Traditional GMCR frameworks simplify option selection into binary choices (Yes or No), which can fail to capture the nuanced positions of decision-makers in real-world conflicts. Fuzzy options allow you to represent the degree to which an option is selected, using membership degrees between 0 and 1. This is crucial for modeling the inherent uncertainty and gradual preference shifts in power asymmetry conflicts, providing a more realistic and flexible analysis [2].
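The difference between fuzzy and binary options can be illustrated with a hypothetical representation, which is not the formulation used in [2]. A fuzzy state maps each option to a selection degree in [0, 1], and a per-option selection threshold collapses it to the Yes/No view of classic GMCR. Distinct fuzzy states can collapse to the same crisp state, which is exactly the information a binary model discards. The option names and thresholds are assumptions.

```python
def crisp_view(fuzzy_state, thresholds):
    """Collapse a fuzzy state (option -> degree in [0, 1]) to binary Yes/No.

    An option counts as selected when its degree reaches that option's
    selection threshold (a hypothetical per-decision-maker parameter).
    """
    crisp = {}
    for option, degree in fuzzy_state.items():
        if not 0.0 <= degree <= 1.0:
            raise ValueError(f"degree for {option!r} must lie in [0, 1]")
        crisp[option] = degree >= thresholds[option]
    return crisp
```

For example, with thresholds of 0.5 for both options, the states {"build": 0.7, "negotiate": 0.4} and {"build": 0.9, "negotiate": 0.1} both collapse to build = Yes, negotiate = No.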

FAQ 2: How does power asymmetry fundamentally alter the conflict dynamics in a fuzzy GMCR?

In a power asymmetry conflict, a "leader" with superior power influences the preferences of a "follower." Within the fuzzy GMCR framework, the follower is modeled to unilaterally adjust their degree of option selection to reach a consensus with the leader. This adjustment is a key dynamic that drives the conflict towards resolution, moving beyond the static preferences found in symmetric models [2] [15].

FAQ 3: My Graphviz diagram is generating a warning about HTML-like labels not being available. What should I do?

This warning indicates that your Graphviz installation lacks the necessary libexpat support [16]. To resolve this:

- Install an Updated Graphviz Package: Download and install the latest version from the official Graphviz website [16].

- Use a Supported Online Editor: Use an online tool such as the Graphviz Visual Editor. It is built on the maintained @hpcc-js/wasm library and fully supports HTML-like labels [16].

FAQ 4: How can I format a node's label to have text in different colors or bold font?

Record-based nodes (shape=record) do not support rich text formatting. Use HTML-like labels instead, which means enclosing the label content in angle brackets <> rather than double quotes [17]. HTML-like labels can be attached to any node shape. When the label is itself a table, shape=none or shape=plain is the usual choice. Inside the label, tags such as <B>, <I>, and <FONT> control the formatting [16].
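The Python helper below is a minimal sketch that writes DOT source containing HTML-like labels. The node names, titles, and colours are hypothetical. The output still has to be rendered with a Graphviz build that supports HTML-like labels.

```python
from html import escape


def html_label_node(node_id: str, title: str, subtitle: str, color: str = "red") -> str:
    """Return one DOT node statement whose label is an HTML-like label."""
    # Escape &, < and > so user text cannot break the label markup.
    title_html = escape(title, quote=False)
    subtitle_html = escape(subtitle, quote=False)
    # The outer < > replace the usual double quotes; <B>, <BR/> and <FONT> are HTML-like tags.
    label = f'<<B>{title_html}</B><BR/><FONT COLOR="{color}">{subtitle_html}</FONT>>'
    # shape=plain sizes the node to its label with no extra margin.
    return f"  {node_id} [shape=plain, label={label}];"


def build_state_graph() -> str:
    """Assemble a tiny, hypothetical two-state conflict graph."""
    lines = [
        "digraph conflict {",
        html_label_node("s1", "s1", "Status Quo"),
        html_label_node("s3", "s3", "Joint Initiative", color="darkgreen"),
        "  s1 -> s3;",
        "}",
    ]
    return "\n".join(lines)


dot_source = build_state_graph()
```

To render it, save `dot_source` to a `.dot` file and run it through the `dot` command (for example with `-Tsvg`).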

Troubleshooting Common Experimental Issues

Issue: Unrealistic or Unstable Conflict Equilibria

- Problem: The model produces no equilibria, or the predicted stable states do not align with realistic outcomes.

- Solution: This often stems from improperly defined fuzzy preference thresholds.

- Recalibrate Thresholds: Re-interview decision-makers (DMs) to refine their selection thresholds for each option. Ensure thresholds reflect their actual psychological decision points.

- Sensitivity Analysis: Systematically vary the fuzzy truth values and preference rankings to identify threshold ranges where the model's equilibria are robust. This helps in understanding the model's stability boundaries [2].

Issue: Model Fails to Converge to a Consensus State

- Problem: The simulation shows no possible movement towards a resolution, even when a power asymmetry is defined.

- Solution: The follower's adjustment logic might be too rigid.

- Review Adjustment Rules: Check the algorithm governing how the follower adjusts their option selection degrees. The rules should allow for gradual concessions. Implement a step-wise adjustment process where the follower moves their position incrementally toward the leader's preferred state [2] [15].
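The step-wise concession rule can be sketched as follows. The fixed step size of 0.1 and the clipping rule are illustrative assumptions, not the adjustment algorithm of [2]. The start and target degrees reuse the Upstream Manufacturer's values for states s2 and s3 in Table 1.

```python
def adjust_toward_leader(follower: dict[str, float], target: dict[str, float],
                         step: float = 0.1) -> dict[str, float]:
    """Move each membership degree at most `step` toward the leader-preferred value."""
    adjusted = {}
    for option, mu in follower.items():
        delta = target.get(option, mu) - mu
        # Clip the change so each concession is gradual rather than a jump.
        move = max(-step, min(step, delta))
        adjusted[option] = round(mu + move, 10)  # rounding keeps float drift out of comparisons
    return adjusted


def concession_path(follower, target, step=0.1, tol=1e-9, max_iter=100):
    """Follower states visited until they match the leader's preferred degrees (or max_iter)."""
    path = [dict(follower)]
    for _ in range(max_iter):
        current = path[-1]
        if all(abs(current[o] - target.get(o, current[o])) <= tol for o in current):
            break
        path.append(adjust_toward_leader(current, target, step))
    return path


# Upstream Manufacturer degrees in s2 (start) and s3 (leader-preferred), from Table 1.
path = concession_path({"o2": 0.4, "o3": 0.5}, {"o2": 0.8, "o3": 0.7})
```

With these values, o3 reaches its target after two steps. o2 needs four, so the path contains five states.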

Issue: Graphviz Visualization is Cluttered or Illegible

- Problem: The generated graph is too dense, with overlapping nodes and edges, making it impossible to read.

- Solution: Use Graphviz attributes to control layout and spacing.

- Increase Layout Space: Use the ratio, size, and overlap attributes at the graph level to provide more space. The overlap attribute applies to layout engines such as neato, fdp, and sfdp rather than dot.

- Simplify Node Content: Use the shape=plain attribute with HTML-like labels so that node size is determined solely by the label content, preventing unnecessarily large margins [18].

- Utilize Clusters: Group related nodes in subgraph clusters (subgraphs whose names begin with "cluster") to visually organize the graph and make its hierarchy easier to follow [17].
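The sketch below applies these layout controls by generating DOT text from Python. The cluster names, node names, and attribute values are hypothetical starting points to tune, not recommended settings. The graph is undirected so it can be laid out with fdp, which honours both overlap and clusters.

```python
def build_layout_graph(clusters: dict[str, list[str]], edges: list[tuple[str, str]]) -> str:
    """Return DOT source with graph-level spacing attributes and one cluster per group."""
    lines = [
        "graph states {",
        # Fix the canvas size, fill it, and ask the engine to remove node overlaps.
        '  graph [ratio=fill, size="8,6!", overlap=false];',
        "  node [shape=plain];",
    ]
    for name, members in clusters.items():
        # Subgraph names must start with "cluster" to be drawn as a boxed group.
        lines.append(f"  subgraph cluster_{name} {{")
        lines.append(f'    label="{name}";')
        lines.extend(f"    {member};" for member in members)
        lines.append("  }")
    lines.extend(f"  {a} -- {b};" for a, b in edges)
    lines.append("}")
    return "\n".join(lines)


dot_source = build_layout_graph(
    {"leader_moves": ["s1", "s2"], "follower_moves": ["s3", "s4"]},
    [("s1", "s2"), ("s2", "s3"), ("s3", "s4")],
)
```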

Experimental Protocols & Methodologies

Protocol 1: Defining Fuzzy Options and Membership Degrees

Purpose: To translate qualitative DM stances into quantitative fuzzy values for model input.

Steps:

- Identify Options: List all relevant options \( O = \{o_1, o_2, \ldots, o_k\} \) available to all DMs in the conflict.

- Elicit Membership Degrees: For each DM, conduct interviews or surveys to determine their degree of selection for each option. For example, a DM's position might be represented as \( \mu_{o_1} = 0.8,\ \mu_{o_2} = 0.3 \), where \( \mu \) is the membership function and the values indicate the degree of choosing an option [2].

- Construct Fuzzy States: A conflict state \( s \) is defined by the vector of membership degrees for all options, forming a fuzzy state space [2].
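A fuzzy state can be stored as a vector of membership degrees in a fixed option order. The minimal sketch below assumes the three options o1 to o3 of the carbon-emission example. It validates that every option has a degree in [0, 1]. The function name is hypothetical.

```python
OPTIONS = ("o1", "o2", "o3")  # option order used for every state vector


def make_fuzzy_state(degrees: dict[str, float],
                     options: tuple[str, ...] = OPTIONS) -> tuple[float, ...]:
    """Return the membership-degree vector of one conflict state."""
    missing = [o for o in options if o not in degrees]
    if missing:
        raise ValueError(f"no membership degree given for {missing}")
    for o in options:
        # Fuzzy membership degrees are confined to the unit interval.
        if not 0.0 <= degrees[o] <= 1.0:
            raise ValueError(f"membership degree for {o} must lie in [0, 1], got {degrees[o]}")
    return tuple(float(degrees[o]) for o in options)


# State s2 ("Policy Push") from the carbon-emission example.
s2 = make_fuzzy_state({"o1": 0.9, "o2": 0.4, "o3": 0.5})
```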

Protocol 2: Calculating Fuzzy Truth Value Prioritization

Purpose: To rank conflict states based on DMs' fuzzy preferences.

Steps:

- State Generation: Enumerate all feasible fuzzy states from the combinations of option membership degrees.

- Preference Elicitation: Have DMs rank these fuzzy states or provide a set of option statements with associated truth values.

- Priority Ranking: Apply the fuzzy truth value prioritization approach. This method calculates a ranking of conflict states that reflects the fuzzy characteristics of the options and the DMs' preferences [2].
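The sketch below shows the general idea of ranking states from prioritized option statements. It is a stand-in, not the fuzzy truth value prioritization method of [2]. It makes three assumptions:

- The truth value of statement "o" is μ_o.
- The truth value of "-o" (option not taken) is 1 - μ_o.
- Each step down the priority list halves a statement's weight.

The discrete membership levels used for enumeration are also hypothetical.

```python
from itertools import product


def truth_value(statement: str, state: dict[str, float]) -> float:
    """'o' is true to degree mu_o; '-o' (option not taken) is true to degree 1 - mu_o."""
    if statement.startswith("-"):
        return 1.0 - state[statement[1:]]
    return state[statement]


def score(state: dict[str, float], statements: list[str]) -> float:
    """Priority-weighted sum: the first statement weighs 2**(n-1), the last weighs 1."""
    n = len(statements)
    return sum(2 ** (n - i - 1) * truth_value(s, state) for i, s in enumerate(statements))


def rank_states(options, levels, statements):
    """Enumerate every combination of membership levels, most preferred first."""
    states = [dict(zip(options, combo)) for combo in product(levels, repeat=len(options))]
    return sorted(states, key=lambda s: score(s, statements), reverse=True)


# Hypothetical DM: most wants o2 adopted, and secondarily wants o1 avoided.
ranking = rank_states(("o1", "o2"), (0.0, 0.5, 1.0), ["o2", "-o1"])
```

For this hypothetical DM, the top-ranked state fully adopts o2 and fully avoids o1. The bottom-ranked state does the opposite.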

Protocol 3: Stability Analysis under Fuzzy Power Asymmetry

Purpose: To identify equilibrium states where no DM has an incentive to unilaterally move away, considering power dynamics.

Steps:

- Designate Leader/Follower: Identify which DM is the power subject (leader) and which is the follower.

- Model Follower Adjustment: The follower adjusts their degree of option selection based on the leader's preferences. This is modeled as a unilateral move in the state transition graph.

- Define Stability Criteria: Establish logical and matrix definitions for stability concepts (e.g., Nash stability) that incorporate the follower's fuzzy unilateral improvements under the leader's influence.

- Identify Equilibria: Analyze the graph model to find states that are stable for all DMs under the defined stability criteria. These equilibria represent the most likely resolutions of the conflict [2] [15].
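The Nash-stability check from the last two steps can be sketched on a small state transition graph. The moves and preference scores below are invented for illustration. The power-asymmetry modifications of [2] are not reproduced; those modifications change which follower moves count as improvements. In this sketch they would enter by editing the follower's move lists or preference scores.

```python
def unilateral_improvements(dm, state, moves, pref):
    """States `dm` can reach from `state` in one move that it strictly prefers."""
    return [t for t in moves[dm].get(state, []) if pref[dm][t] > pref[dm][state]]


def nash_equilibria(states, moves, pref):
    """States from which no decision maker has a unilateral improvement."""
    return [s for s in states
            if all(not unilateral_improvements(dm, s, moves, pref) for dm in moves)]


states = ["s1", "s2", "s3", "s4"]
# Hypothetical one-step moves controlled by each DM.
moves = {
    "leader": {"s1": ["s2"], "s2": ["s1"]},
    "follower": {"s2": ["s3"], "s3": ["s2", "s4"], "s4": ["s3"]},
}
# Hypothetical preference scores (higher is better).
pref = {
    "leader": {"s1": 1, "s2": 2, "s3": 4, "s4": 3},
    "follower": {"s1": 4, "s2": 1, "s3": 3, "s4": 2},
}
equilibria = nash_equilibria(states, moves, pref)
```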

Quantitative Data Tables

Table 1: Fuzzy Option Selection Degrees for Carbon Emission Conflict

This table illustrates how fuzzy options capture nuanced positions in a supply chain carbon emission conflict, a typical application area [2].

- Decision Makers: Local Government (Leader), Upstream Manufacturer (Follower).

- Options: o1: Introduce strict carbon policy, o2: Invest in R&D for carbon reduction, o3: Produce low-carbon products.

| Conflict State (s) | Description | Local Government (o1) | Upstream Manufacturer (o2) | Upstream Manufacturer (o3) |
|---|---|---|---|---|
| s1 | Status Quo | 0.1 | 0.2 | 0.3 |
| s2 | Policy Push | 0.9 | 0.4 | 0.5 |
| s3 | Joint Initiative | 0.8 | 0.8 | 0.7 |
| s4 | Full Adoption | 1.0 | 0.9 | 0.9 |

Table 2: Fuzzy Preference Thresholds for Stability Analysis

This table defines the thresholds used to determine a DM's willingness to move between states, a core parameter in the analysis [2].

| Decision Maker | Option | Low Engagement Threshold | Moderate Engagement Threshold | High Engagement Threshold |
|---|---|---|---|---|
| Local Government | o1 (Strict Policy) | \( \mu < 0.3 \) | \( 0.3 \leq \mu \leq 0.7 \) | \( \mu > 0.7 \) |
| Upstream Manufacturer | o2 (R&D Investment) | \( \mu < 0.2 \) | \( 0.2 \leq \mu \leq 0.6 \) | \( \mu > 0.6 \) |
| Upstream Manufacturer | o3 (Low-carbon Production) | \( \mu < 0.4 \) | \( 0.4 \leq \mu \leq 0.8 \) | \( \mu > 0.8 \) |
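Table 2 can be applied mechanically to the degrees in Table 1. The sketch below encodes the thresholds exactly as listed, with the moderate band closed at both ends. The dictionary layout and function name are illustrative.

```python
# (decision maker, option) -> (lower, upper) bound of the moderate band, from Table 2
THRESHOLDS = {
    ("Local Government", "o1"): (0.3, 0.7),
    ("Upstream Manufacturer", "o2"): (0.2, 0.6),
    ("Upstream Manufacturer", "o3"): (0.4, 0.8),
}


def engagement(dm: str, option: str, mu: float) -> str:
    """Map a membership degree to the low / moderate / high engagement band."""
    low, high = THRESHOLDS[(dm, option)]
    if mu < low:
        return "low"
    if mu <= high:  # moderate band is low <= mu <= high
        return "moderate"
    return "high"


# Degrees per state from Table 1: (o1 for Local Government, o2 and o3 for the manufacturer).
TABLE_1 = {"s1": (0.1, 0.2, 0.3), "s2": (0.9, 0.4, 0.5),
           "s3": (0.8, 0.8, 0.7), "s4": (1.0, 0.9, 0.9)}

profiles = {
    state: (
        engagement("Local Government", "o1", o1),
        engagement("Upstream Manufacturer", "o2", o2),
        engagement("Upstream Manufacturer", "o3", o3),
    )
    for state, (o1, o2, o3) in TABLE_1.items()
}
```

Under these thresholds, s1 is low engagement for the Local Government. In s3, both the government's o1 and the manufacturer's o2 fall in the high band.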

Research Reagent Solutions

Essential materials and conceptual tools for conducting research in fuzzy asymmetric GMCR.

| Item Name | Function in Research |
|---|---|
| Graph Model for Conflict Resolution (GMCR) | The core analytical framework for modeling strategic interactions among multiple decision-makers [2] [15]. |
| Fuzzy Set Theory | The mathematical foundation for representing and computing with fuzzy, non-Boolean options, allowing for degrees of membership rather than crisp true/false values [2]. |
| Fuzzy Truth Value Prioritization Method | An algorithm used to calculate the ranking of conflict states based on the fuzzy characteristics of options and DMs' preferences [2]. |
| Power Asymmetry Stability Definitions | Logical and matrix-based definitions of stability (e.g., Nash, GMR) that are modified to account for the leader-follower dynamic and fuzzy preferences [2] [15]. |
| Graphviz Software | An open-source graph visualization tool used to diagram the state transitions and equilibria in the GMCR, making the model's outcomes interpretable [16] [18]. |

Graphviz Diagrams

Fuzzy GMCR Power Dynamics

Fuzzy Option State Transition

Implementing the Weighted Asymmetry Index for Network Quantification

Frequently Asked Questions (FAQs)

Q1: What is the core mathematical principle behind the Weighted Asymmetry Index (WAI), and how does it differ from traditional symmetry measures?

The Weighted Asymmetry Index (WAI) is a graph-theoretic metric that quantifies asymmetry in a network by considering the distances of vertices connected by an edge. Traditional distance-based indices summarize a graph's overall distance structure. Examples are the Wiener index, which sums distances over all vertex pairs, and the Szeged index. The WAI instead measures how uneven the distances from each edge's endpoints to the rest of the graph are, summing the contribution of every edge. It captures intrinsic asymmetries that older indices might overlook. This makes it useful for complex networks where local structural imbalances are critical, such as in molecular stability or network vulnerability studies [19].

Q2: Within a thesis on threshold selection, why is the WAI particularly sensitive to the choice of parameters, and what are the consequences of poor threshold selection?

The WAI's calculation often depends on underlying parameters, such as distance metrics or weighting functions. In the broader context of threshold selection for asymmetry analysis, choosing inappropriate thresholds can lead to two main issues:

- Loss of Sensitivity: Overly broad thresholds may fail to capture fine-grained asymmetries, causing the index to miss crucial local structural imbalances [19].

- Artificial Inflation/Noise: Poorly calibrated thresholds can artificially amplify minor, insignificant asymmetries or introduce noise, making the results unreliable. This is a common problem in symmetry quantification, where methods can be sensitive to the scale and range of input data [20]. Proper threshold selection is therefore essential to ensure the WAI accurately reflects the true asymmetry of the network.

Q3: How can I validate that my calculated WAI value is meaningful and not an artifact of my graph preprocessing or sampling method?

Validation should involve benchmarking against known network structures and checking for consistency.

- Benchmark with Extreme Graphs: First, compute the WAI for graphs with known, extreme asymmetry (e.g., a star graph) and perfect symmetry (e.g., a complete graph). The WAI should reflect these properties, helping to calibrate your expectations [19].

- Robustness Analysis: Perform a sensitivity analysis by slightly varying your preprocessing steps (like how edge weights or distances are calculated) and resampling your network if applicable. If the WAI value fluctuates drastically with minor changes, it may indicate instability in your measurement setup. Comparing its behavior with other indices can also provide a sanity check [19] [20].

Q4: My network is derived from real-world biological data (e.g., a protein-protein interaction network). Are there specific considerations for applying the WAI in such domains?

Yes, biological networks often have specific characteristics:

- Weighted and Directed Connections: Biological networks are often not simple, unweighted graphs. When applying the WAI, ensure your graph model accurately reflects whether connections are directed (e.g., regulatory influences) and weighted (e.g., interaction strengths). The WAI may need to be adapted to account for these features to be biologically meaningful [21].

- Multi-scale Asymmetry: Asymmetry might exist at different scales—local (around a node) and global (the entire network). Your analysis should specify which level of organization the WAI is intended to capture, as this can influence the interpretation of results in a biological context [22].

Q5: What are the best practices for visualizing networks where the WAI has identified significant asymmetries?

Effective visualization is key to interpreting WAI results.

- Highlight Asymmetry Hotspots: Use node color and size to represent the contribution of individual vertices or edges to the overall asymmetry index. This quickly directs attention to network regions responsible for the observed asymmetry.

- Incorporate Layout Algorithms: Use force-directed or other layout algorithms that can spatially represent the imbalance quantified by the WAI. For instance, nodes with highly asymmetric distance profiles might be pulled further from the network's center of mass.

Troubleshooting Guides

Issue 1: Unexpectedly Low or High WAI Values

Problem: The calculated WAI value is much lower or higher than anticipated based on the network's structure.

Diagnosis and Resolution:

| Possible Cause | Diagnostic Steps | Recommended Solution |
|---|---|---|
| Incorrect Distance Metric | Verify the definition of "distance" used in your calculation. Is it topological (shortest path) or geometric? | Ensure consistency between your graph's interpretation and the distance metric. Recalculate using the appropriate metric. |
| Improper Graph Connectivity | Check if the graph is fully connected. WAI calculations may be skewed in disconnected graphs. | Consider using the largest connected component or adapting the index for disconnected graphs. |
| Edge Weight Sensitivity | If edges are weighted, test how sensitive the WAI is to the weight scale. | Normalize edge weights to a common scale (e.g., 0-1) to prevent a single high-weight edge from dominating the asymmetry measure. |
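The connectivity and weight-scaling fixes in the table can be sketched in plain Python. The sketch assumes an undirected graph stored as an adjacency dictionary. It scales positive weights by the largest weight, which maps them into (0, 1] and keeps every weight positive. Min-max scaling is another common choice, but it sends the smallest weight to 0, which is awkward if weights are later treated as distances.

```python
from collections import deque


def largest_connected_component(adj: dict) -> set:
    """Return the node set of the largest connected component (undirected adjacency dict)."""
    seen, best = set(), set()
    for start in adj:
        if start in seen:
            continue
        component, queue = {start}, deque([start])
        seen.add(start)
        while queue:  # breadth-first search from `start`
            u = queue.popleft()
            for v in adj[u]:
                if v not in seen:
                    seen.add(v)
                    component.add(v)
                    queue.append(v)
        if len(component) > len(best):
            best = component
    return best


def scale_by_max(weights: dict) -> dict:
    """Divide every positive edge weight by the largest one, mapping weights into (0, 1]."""
    top = max(weights.values())
    return {edge: w / top for edge, w in weights.items()}


adj = {"a": ["b"], "b": ["a", "c"], "c": ["b"], "d": ["e"], "e": ["d"]}
core = largest_connected_component(adj)
scaled = scale_by_max({("a", "b"): 2.0, ("b", "c"): 8.0})
```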

Issue 2: WAI is Insensitive to Structural Changes

Problem: Modifications to the network that are perceived as increasing asymmetry do not significantly change the WAI.

Diagnosis and Resolution:

- Check Parameter Thresholds: The WAI might be relying on parameters or thresholds that are too coarse. Refine these parameters to be more sensitive to the specific structural changes you are introducing [23].

- Local vs. Global Measure: The WAI might be a global index, and your changes are highly local. Investigate if a local variant of the asymmetry index exists or can be defined to focus on the relevant part of the network [19].

- Validate with Ground Truth: Create a simple, synthetic network where you can precisely control the asymmetry. Apply the WAI to this network to verify it responds as expected to known changes.

Issue 3: High Computational Complexity for Large Networks

Problem: The calculation of the WAI is prohibitively slow for large-scale networks.

Diagnosis and Resolution:

- Profile the Calculation: Identify the bottleneck. Is it the all-pairs shortest path calculation, or the asymmetry computation itself?

- Employ Sampling Techniques: For an approximate WAI, use node or edge sampling strategies instead of processing the entire network. Ensure the sampling method is unbiased.

- Optimize Data Structures: Use efficient graph libraries (e.g., NetworkX, igraph) and data structures optimized for large graphs. For very large networks, consider distributed computing frameworks.
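Profiling often shows that the all-pairs shortest paths dominate the cost. One way to avoid them is to sample edges uniformly without replacement. Each sampled edge then needs only two breadth-first searches, and scaling the sample mean by the edge count gives an unbiased estimate of the edge sum.

The sketch below applies this to a Mostar-style proxy, the sum over edges of |n_u - n_v|. This proxy is an assumption used as a stand-in and is not the WAI formula. The sample size and seed are hypothetical choices.

```python
import random
from collections import deque


def bfs_distances(adj, source):
    """Hop distances from `source` in a connected, unweighted graph."""
    dist, queue = {source: 0}, deque([source])
    while queue:
        u = queue.popleft()
        for v in adj[u]:
            if v not in dist:
                dist[v] = dist[u] + 1
                queue.append(v)
    return dist


def edge_term(adj, u, v):
    """|n_u - n_v| for one edge, using two BFS runs instead of all-pairs distances."""
    du, dv = bfs_distances(adj, u), bfs_distances(adj, v)
    n_u = sum(1 for w in adj if du[w] < dv[w])
    n_v = sum(1 for w in adj if dv[w] < du[w])
    return abs(n_u - n_v)


def sampled_proxy(adj, m, seed=0):
    """Estimate the edge sum from m uniformly sampled edges (exact when m >= edge count)."""
    edges = [(u, v) for u in adj for v in adj[u] if u < v]
    sample = random.Random(seed).sample(edges, min(m, len(edges)))
    mean = sum(edge_term(adj, u, v) for u, v in sample) / len(sample)
    return mean * len(edges)
```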

Issue 4: Integrating WAI into a Graph Machine Learning Pipeline

Problem: Difficulty using the WAI as a feature or loss component in a Graph Neural Network (GNN) model.

Diagnosis and Resolution:

- Differentiability: The standard WAI formulation may not be differentiable, and backpropagation requires differentiability. A smooth, differentiable approximation of the index would have to be developed first.

- Feature Engineering: Instead of using the global WAI, compute node-level or edge-level asymmetry scores that can be used as input features for the GNN. This leverages the asymmetry information in a way compatible with standard GNN architectures [24].

- Customized Subgraph Encoding: If using subgraph-based methods, ensure the subgraph selection process is sensitive enough to capture the asymmetries the WAI is designed to measure. Customizing subgraph selection and encoding can be critical for tasks like drug-drug interaction prediction, where asymmetric patterns are common [24].

Key Experimental Protocols

Protocol 1: Establishing a WAI Baseline for Standard Graph Topologies

Objective: To compute and document reference WAI values for common graph classes, providing a baseline for experimental results.

Methodology:

- Graph Generation: Generate instances of standard graph types:
  - Path Graph (P_n)
  - Star Graph (S_n)
  - Complete Graph (K_n)
  - Complete Bipartite Graph (K_{m,n})
  - Wheel Graph (W_n)

- Parameter Definition: Define a consistent distance metric (e.g., shortest path length) and weighting scheme (initially unweighted).

- WAI Calculation: Implement the WAI formula and calculate the index for each graph type.

- Analysis: Compare the values to understand how different topological features influence the asymmetry index.

Expected Outcome: A table of reference values, confirming that path and star graphs have higher asymmetry than complete graphs [19].
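Because the WAI formula is not reproduced here, this sketch substitutes a different, well-defined edge-based proxy: the Mostar index. For each edge uv, the proxy counts the vertices strictly closer to u (n_u) and strictly closer to v (n_v), then sums |n_u - n_v| over all edges. It is only a stand-in for exercising the baseline pipeline on standard topologies. Replace `edge_asymmetry_proxy` with the WAI formula from [19] before drawing conclusions. The sketch assumes connected, unweighted graphs with integer node labels.

```python
from collections import deque


def bfs_distances(adj: dict, source: int) -> dict:
    """Shortest-path (hop) distances from `source` in an unweighted graph."""
    dist, queue = {source: 0}, deque([source])
    while queue:
        u = queue.popleft()
        for v in adj[u]:
            if v not in dist:
                dist[v] = dist[u] + 1
                queue.append(v)
    return dist


def edge_asymmetry_proxy(adj: dict) -> int:
    """Mostar-style proxy: sum over edges uv of |n_u - n_v| (not the published WAI)."""
    dist = {v: bfs_distances(adj, v) for v in adj}  # all-pairs distances; needs a connected graph
    total = 0
    for u in adj:
        for v in adj[u]:
            if u < v:  # visit each undirected edge once
                n_u = sum(1 for w in adj if dist[u][w] < dist[v][w])
                n_v = sum(1 for w in adj if dist[v][w] < dist[u][w])
                total += abs(n_u - n_v)
    return total


def path_graph(n):
    return {i: [j for j in (i - 1, i + 1) if 0 <= j < n] for i in range(n)}


def star_graph(leaves):
    """Star with one centre (node 0) and `leaves` leaves."""
    adj = {0: list(range(1, leaves + 1))}
    adj.update({i: [0] for i in range(1, leaves + 1)})
    return adj


def complete_graph(n):
    return {i: [j for j in range(n) if j != i] for i in range(n)}


baseline = {
    "P4": edge_asymmetry_proxy(path_graph(4)),
    "S3": edge_asymmetry_proxy(star_graph(3)),  # three leaves, four vertices
    "K4": edge_asymmetry_proxy(complete_graph(4)),
}
```

On these small graphs the proxy orders the topologies as the protocol expects. The complete graph scores 0, while the path and star score 4 and 6.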

Protocol 2: Optimizing Threshold Parameters for WAI in Specific Applications

Objective: To systematically determine the optimal threshold parameters for the WAI to maximize its performance in a specific task, such as classifying different network types.

Methodology:

- Dataset Curation: Assemble a dataset of labeled networks where asymmetry is a distinguishing feature.

- Parameter Space Definition: Identify the key parameters in your WAI formulation (e.g., distance thresholds, decay factors).

- Grid Search Execution: Perform a grid search over the parameter space. For each parameter set, compute the WAI for all networks and use a simple classifier (e.g., k-NN) to assess classification accuracy.

- Validation: Validate the best-performing parameter set on a held-out test set.

Expected Outcome: A set of optimized, data-specific parameters for the WAI that enhance its discriminative power, following a paradigm similar to entropy parameter optimization [23].
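Below is a minimal sketch of the grid search, using leave-one-out 1-nearest-neighbour accuracy as the simple classifier. Everything in it is hypothetical:

- Each network is reduced to a list of per-edge asymmetry scores.
- The tuned parameter is a cut-off t.
- The feature is the fraction of edges scoring above t.

The four toy networks are invented so that a middle cut-off separates the classes. The held-out validation step is not shown.

```python
def feature(edge_scores, t):
    """Fraction of edges whose asymmetry score exceeds the cut-off t."""
    return sum(1 for s in edge_scores if s > t) / len(edge_scores)


def loo_1nn_accuracy(features, labels):
    """Leave-one-out accuracy of a 1-nearest-neighbour classifier on a single feature."""
    correct = 0
    for i, f in enumerate(features):
        others = [k for k in range(len(features)) if k != i]
        # Nearest other network; ties are broken by list order.
        j = min(others, key=lambda k: (abs(features[k] - f), k))
        correct += int(labels[j] == labels[i])
    return correct / len(features)


def grid_search(networks, labels, grid):
    results = {t: loo_1nn_accuracy([feature(n, t) for n in networks], labels) for t in grid}
    best_t = max(grid, key=lambda t: results[t])  # first cut-off reaching the top accuracy
    return best_t, results


# Toy per-edge scores: "sym" networks carry many small values, "asym" ones a few large values.
networks = [[1, 1, 1, 1], [1, 1, 1, 0], [3, 3, 0, 0], [3, 3, 3, 0]]
labels = ["sym", "sym", "asym", "asym"]
best_t, results = grid_search(networks, labels, grid=[0, 2, 4])
```

Here a cut-off of 0 lets the small, noise-like scores dominate. A cut-off of 4 discards every edge, while t = 2 separates the two classes. On real data, the same loop would run over the actual WAI parameters.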

The Scientist's Toolkit: Research Reagent Solutions

Table: Essential Components for WAI-Based Network Analysis

| Item Name | Function / Description | Example / Notes |
|---|---|---|
| Graph Theory Library | Provides foundational algorithms for graph manipulation, shortest path calculation, and metric computation. | NetworkX (Python), igraph (R/Python/C++). Essential for implementing the WAI. |
| Linear Regression Model | Used in model-based frameworks to assign directed, signed weights to edges in a network, which can then be analyzed for asymmetry. | Ordinary Least Squares regression can predict node state based on neighbors, creating an asymmetric weight matrix [21]. |
| Neural Architecture Search (NAS) | Automates the design of data-specific subgraph selection and encoding functions, which can be tailored to capture asymmetric patterns. | Can be used to customize subgraph-based pipelines for tasks like drug-drug interaction prediction, where asymmetry is common [24]. |
| Symmetry Axiom Framework | A set of benchmarking standards (axioms) used to evaluate and validate any proposed symmetry or asymmetry index. | Axioms include finite range, identification of perfect symmetry/asymmetry, direction identification, signal order independence, and scaling invariance [20]. |
| Entropy Maximization Problem | A methodological approach used to quantify node-specific information content by measuring uncertainty reduction in a network. | The InfoRank index uses this to rank nodes by information content, addressing information asymmetry [25]. |

Spectral Feature Modeling with Graph Signal Processing for Brain Connectivity

Troubleshooting Guides

Guide: Resolving Low Classification Accuracy in GSP Models

Problem: The overall classification accuracy of your Graph Signal Processing (GSP) model for distinguishing brain states (e.g., ASD vs. neurotypical) is significantly lower than expected or reported in literature.

Explanation: Low accuracy often stems from suboptimal graph construction parameters or inadequate feature selection, which fails to capture discriminative connectivity patterns.

Solution:

- Step 1: Verify Graph Sparsity Threshold. Reconstruct your brain connectivity graphs using different sparsity thresholds. The study in [26] found that a 25% sparsity threshold optimized the trade-off between robust feature extraction and computational efficiency. Retaining too many connections admits noisy, non-informative edges, while retaining too few removes critical ones [26].

- Step 2: Perform Feature Ablation. Systematically remove groups of features from your model to identify their individual contributions. Research indicates that spectral entropy is a critically important feature; its removal can cause a performance drop of nearly 30%. Ensure this feature is correctly calculated and included [26].

- Step 3: Check Modality Fusion. If using multimodal data (e.g., fMRI and EEG), confirm that your fusion pipeline effectively leverages the complementary information. fMRI provides high spatial resolution, while EEG offers high temporal resolution. Use a spectral graph-based fusion method to preserve modality-specific characteristics and avoid redundancy [26].

Guide: Addressing Instability in Spectral Feature Extraction

Problem: Extracted spectral features, such as Graph Fourier Transform (GFT) coefficients, are unstable across repeated analyses of the same subject or dataset.

Explanation: Instability can be caused by inconsistent pre-processing of neuroimaging data or a failure to account for the dynamic nature of functional connectivity.

Solution:

- Step 1: Standardize Pre-processing. Ensure all functional MRI (fMRI) data is processed through a standardized pipeline (e.g., the Configurable Pipeline for the Analysis of Connectomes - CPAC). Key steps must include slice-time correction, motion correction, skull-stripping, global mean intensity normalization, nuisance regression, and band-pass filtering (e.g., 0.01–0.1 Hz) [27].

- Step 2: Implement Dynamic FC Analysis. Transition from static to dynamic Functional Connectivity (dFC) analysis. Use a sliding window approach (e.g., a 30-second window with a 1-second step) to capture time-varying connectivity patterns. This helps abstract temporal dependencies into more stable, high-level representations [27].

- Step 3: Apply Data Harmonization. When using multi-site data, employ harmonization tools like the ComBat method to remove site-specific biases introduced by different MRI scanners and protocols. Use site information as the batch variable and include age, gender, and diagnostic status as covariates [27].

Frequently Asked Questions (FAQs)

Q1: What is the role of the sparsity threshold in constructing the brain connectivity graph, and what is the recommended value? A1: The sparsity threshold controls the density of connections in your graph by retaining only the strongest connections. This simplifies the network and reduces the influence of weak, potentially noisy connections. Based on experimental optimization, a 25% sparsity threshold is recommended for maximizing both the robustness of the extracted features and computational efficiency in GSP-based brain connectivity analysis [26].

Q2: Which GSP-derived feature is most critical for achieving high classification performance in brain disorder detection? A2: Spectral entropy has been identified as the most discriminative feature. Feature ablation analysis demonstrates that removing spectral entropy can lead to a performance decrease of nearly 30%. This feature likely captures the complexity and disorder of brain signals in the spectral graph domain, which are highly indicative of conditions like Autism Spectrum Disorder [26].

Q3: How can I model temporal dependencies in dynamic functional connectivity data for improved classification? A3: A deep learning framework combining Long Short-Term Memory (LSTM) networks with an attention mechanism is effective. The LSTM captures intricate temporal dependencies in the sequence of dynamic connectivity states, while the attention mechanism learns to weight the importance of different time points or connectivity patterns, leading to more accurate classification [27].

Q4: My model is overfitting to the training data. What strategies can I use to improve generalizability? A4: Consider these approaches:

- Data Harmonization: Use the ComBat method to correct for inter-site variability, especially when using public datasets like ABIDE [27].

- Feature Reduction: Apply Principal Component Analysis (PCA) to your high-dimensional GSP features (e.g., GFT coefficients, spectral entropy, clustering coefficients) to reduce redundancy and compress the feature space while preserving critical information [26].

- Advanced Spectral Learning: Implement methods like dynamic Connectivity analysis with Spectral Learning (dCSL), which uses non-stationary spectral kernel mapping in a deep architecture to better capture generalizable temporal patterns without overfitting [28].

The table below consolidates key quantitative findings from recent studies on GSP and related analysis methods for brain connectivity.

Table 1: Key Experimental Findings and Parameters

| Study Focus | Key Metric | Reported Value / Range | Context and Notes |
|---|---|---|---|
| GSP Framework Performance [26] | Classification Accuracy | 98.8% | Achieved using SVM with RBF kernel on multimodal (fMRI+EEG) GSP features. |
| Feature Importance [26] | Performance Drop from Ablation | ~30% | Observed decrease in accuracy when spectral entropy feature was removed. |
| Graph Construction [26] | Optimal Sparsity Threshold | 25% | Maximized robustness and computational efficiency of graph models. |
| LSTM-Attention Model [27] | Classification Accuracy | 74.9% | Achieved on heterogeneous ABIDE dataset using dynamic functional connectivity. |
| Sliding Window Setup [27] | Window Width / Step Size | 30 sec / 1 sec | Parameters for segmenting rs-fMRI data to capture dynamic FC. |

Detailed Experimental Protocols

Protocol: GSP-Based Feature Extraction and Classification

This protocol details the methodology for achieving high classification accuracy using a GSP framework, as referenced in the troubleshooting guides [26].

Objective: To extract discriminative spectral features from brain connectivity graphs and classify subjects (e.g., ASD vs. control) with high accuracy.

Workflow:

Data Acquisition & Preprocessing:

Graph Construction:

- Define nodes as brain ROIs.

- Define edges as functional interactions, calculated using correlation or coherence between regional time-series.

- Apply a sparsity threshold (recommended: 25%) to the connectivity matrix to create a subject-specific, unweighted graph [26].
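Below is a minimal NumPy sketch of proportional thresholding. It reads the "25% sparsity threshold" as keeping the strongest 25% of off-diagonal connections, and it ranks connections by absolute correlation. Both choices are assumptions to check against [26]; some pipelines keep only positive correlations instead.

```python
import numpy as np


def proportional_threshold(conn: np.ndarray, density: float = 0.25) -> np.ndarray:
    """Keep the strongest `density` fraction of off-diagonal edges as a binary adjacency matrix."""
    n = conn.shape[0]
    iu = np.triu_indices(n, k=1)              # each undirected edge once
    weights = np.abs(conn[iu])                # rank by absolute correlation (assumption)
    k = int(round(density * weights.size))
    adj = np.zeros((n, n), dtype=int)
    if k == 0:
        return adj
    keep = np.argsort(weights)[::-1][:k]      # indices of the k strongest edges
    rows, cols = iu[0][keep], iu[1][keep]
    adj[rows, cols] = 1
    adj[cols, rows] = 1                       # keep the matrix symmetric
    return adj
```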

GSP Feature Extraction:

- Calculate the Graph Laplacian matrix of the thresholded graph.

- Compute the Graph Fourier Transform (GFT) to decompose brain signals into spectral components.

- Extract key spectral features:
  - Spectral Entropy: Measures the uncertainty or complexity of the signal in the graph spectral domain.
  - GFT Coefficients: The spectral representation of the original graph signal.
  - Clustering Coefficients: Measures the degree to which nodes cluster together in the graph.
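The Laplacian, GFT, and spectral-entropy steps can be sketched with NumPy. The sketch makes several assumptions:

- It uses the combinatorial Laplacian L = D - A.
- It treats the graph signal as one value per ROI.
- It defines spectral entropy as the Shannon entropy of the normalized GFT energy, one common definition. [26] may normalize differently.

Clustering coefficients are omitted.

```python
import numpy as np


def graph_fourier_transform(adj: np.ndarray, signal: np.ndarray):
    """Return graph frequencies (Laplacian eigenvalues) and GFT coefficients of a node signal."""
    laplacian = np.diag(adj.sum(axis=1)) - adj        # combinatorial Laplacian L = D - A
    eigvals, eigvecs = np.linalg.eigh(laplacian)      # symmetric L: real, ascending eigenvalues
    coeffs = eigvecs.T @ signal                       # project the signal onto the eigenvectors
    return eigvals, coeffs


def spectral_entropy(coeffs: np.ndarray, normalize: bool = True) -> float:
    """Shannon entropy of the normalized spectral energy distribution |x_hat_k|^2."""
    energy = np.abs(coeffs) ** 2
    total = energy.sum()
    if total == 0:
        return 0.0
    p = energy / total
    p = p[p > 0]                                      # 0 * log 0 is taken as 0
    h = float(-np.sum(p * np.log(p)))
    if normalize and coeffs.size > 1:
        h /= np.log(coeffs.size)                      # scale to [0, 1]
    return h
```

A smooth (constant) signal puts all its energy in the zero-frequency component and has entropy near 0. A signal concentrated on one node spreads its energy across frequencies and scores higher.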

Feature Fusion & Classification:

- Combine the extracted GSP features and reduce dimensionality using Principal Component Analysis (PCA).

- Train a Support Vector Machine (SVM) classifier with a radial basis function (RBF) kernel on the transformed features.

- Validate model performance using cross-validation.

Protocol: Dynamic Functional Connectivity Analysis with Spectral Learning

This protocol outlines the dCSL method for analyzing dynamic FCs to capture higher-order temporal patterns [28].

Objective: To estimate and analyze dynamic Functional Connectivity (dFC) for improved brain disorder detection by learning its spectral properties.

Workflow:

dFCs Estimation:

- Use a sliding window (e.g., width=30s, step=1s) on preprocessed BOLD signal time-series.

- Within each window, calculate the Pearson's correlation (PC) between all ROI pairs to create a time-series of dFC matrices.
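Below is a minimal NumPy sketch of sliding-window dFC estimation. It assumes the preprocessed BOLD data form a (time points x ROIs) array sampled at repetition time TR, in seconds. The window width and step are converted to whole volumes by rounding. With TR = 2 s, for example, a 1 s step becomes one volume (2 s).

```python
import numpy as np


def sliding_window_dfc(ts: np.ndarray, tr: float, width_s: float = 30.0,
                       step_s: float = 1.0) -> np.ndarray:
    """Pearson-correlation dFC matrices, one per window.

    ts has shape (time points, ROIs); tr is the repetition time in seconds.
    Returns an array of shape (windows, ROIs, ROIs).
    """
    width = int(round(width_s / tr))           # window length in volumes
    step = max(1, int(round(step_s / tr)))     # step in volumes (at least one volume)
    n_t = ts.shape[0]
    if width < 2 or width > n_t:
        raise ValueError("window must span at least 2 volumes and fit inside the series")
    starts = range(0, n_t - width + 1, step)
    # np.corrcoef treats rows as variables, so transpose each window to (ROIs, time).
    return np.stack([np.corrcoef(ts[s:s + width].T) for s in starts])
```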

Spectral Kernel Mapping:

- Integrate Fourier transform with kernel methods to construct a non-stationary spectral kernel.

- This mapping transforms the dFC time-series into a representation that captures long-range correlations and temporal dependencies.

Deep Architecture for Spectral Learning:

- Stack the spectral kernel mapping into a deep neural network.

- This architecture explores the time-varying spectrum and hierarchical representations of dFCs, enabling the capture of complex, higher-order temporal patterns.

Classification:

- Use the high-level temporal features extracted by the deep dCSL model for final classification tasks.

Signaling Pathway & Workflow Visualizations

GSP Analysis Workflow

Threshold Optimization Logic

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Resources for GSP-based Brain Connectivity Research

| Resource Category | Specific Tool / Dataset | Function and Application |
|---|---|---|
| Public Neuroimaging Datasets | ABIDE (Autism Brain Imaging Data Exchange) I & II | A large-scale, multi-site repository of fMRI data from individuals with ASD and controls, essential for training and validating models [27]. |
| | ADNI (Alzheimer's Disease Neuroimaging Initiative) | Provides fMRI and other data focused on Alzheimer's disease and mild cognitive impairment, useful for transdiagnostic studies [28]. |
| Standardized Pre-processing Pipelines | CPAC (Configurable Pipeline for the Analysis of Connectomes) | A standardized, open-source software pipeline for the automated pre-processing of fMRI data, ensuring reproducibility [27]. |
| Data Harmonization Tools | ComBat | A statistical method used to remove unwanted batch effects (e.g., from different scanner sites) in multi-site neuroimaging studies [27]. |
| Brain Parcellation Atlases | Craddock 200 (CC200) | A functional atlas that parcellates the brain into 200 regions of interest (ROIs), providing a standardized set of network nodes [27]. |
| Core GSP & ML Libraries | Scikit-learn (Python) | Provides implementations of standard classifiers (e.g., SVM) and tools for feature reduction (e.g., PCA) [26]. |
| | SciPy (Python) | A fundamental library for scientific computing, used for optimization and linear algebra operations in GSP [29]. |
| Dynamic FC Analysis Methods | Sliding Window Technique | The primary method for estimating dynamic FCs by calculating correlations within successive time windows [28] [27]. |
| Advanced Temporal Modeling | LSTM with Attention Mechanism | A deep learning architecture used to model long-term temporal dependencies in dynamic connectivity data and identify critical time points [27]. |

This technical support center is designed for researchers applying threshold-based asymmetry analysis in ASD biomarker discovery. The following guides address common experimental challenges, leveraging multivariate statistical approaches to identify diagnostic subgroups within this heterogeneous disorder [30] [31].

Troubleshooting Guides & FAQs

FAQ 1: How do I determine optimal statistical thresholds for subgroup stratification in heterogeneous ASD populations?

Answer: Optimal threshold determination requires multi-algorithm validation. Begin with these steps:

- Initial Analysis: Apply multiple algorithms (Random Forest, t-test, correlation-based feature selection) to your proteomic or biomarker dataset to identify candidate markers [32].

- Cross-Validation: Identify a "core" set of biomarkers that appear significant across all applied algorithms. In a serum proteomic study, combining three algorithms yielded a core panel of 9 proteins that effectively identified ASD [32].

- Threshold Calibration: Use the identified core panel to establish a baseline predictive model. Systematically test different statistical thresholds for this panel to optimize for your specific goal (e.g., maximizing diagnostic sensitivity vs. specificity) [32].

- Performance Validation: Evaluate the chosen threshold against an independent test set. The referenced 9-protein panel achieved an Area Under the Curve (AUC) of 0.86, with specificity of 0.82 and sensitivity of 0.84 [32].

FAQ 2: What are the primary sources of variability that can impact threshold stability in ASD biomarker analysis?

Answer: Key variability sources include:

- Clinical Heterogeneity: The diverse behavioral presentation and high prevalence of co-occurring conditions (e.g., ADHD, anxiety, epilepsy) in ASD introduce significant biological variability that can affect biomarker levels and threshold accuracy [31].

- Technical Measurement Error: Incorporate quality control steps, such as using blinded duplicate samples, to assess and account for assay variability during proteomic analysis [32].

- Sociodemographic Factors: Factors such as racial minority status or lower maternal education can influence developmental trajectories and age of diagnosis, potentially confounding biomarker expression [30].
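For the technical-measurement-error item, blinded duplicates are commonly summarized as a per-analyte coefficient of variation (CV). The sketch below is one possible summary, assuming duplicate pairs are stored as two aligned arrays on the linear (untransformed) scale and using the root-mean-square of within-pair CVs; the 20% cut-off in `flag_noisy_analytes` is a hypothetical placeholder, not a value from the source.

```python
import numpy as np


def duplicate_cv(rep1, rep2):
    """Per-analyte coefficient of variation (%) from blinded duplicate pairs.

    rep1, rep2: arrays of shape (n_pairs, n_analytes) with the two
    measurements of each duplicated sample on the linear (untransformed) scale.
    Returns the root-mean-square of the within-pair CVs for each analyte.
    """
    a = np.asarray(rep1, dtype=float)
    b = np.asarray(rep2, dtype=float)
    pair_mean = (a + b) / 2.0
    pair_sd = np.abs(a - b) / np.sqrt(2.0)  # sample SD (ddof=1) of two values
    pair_cv = pair_sd / pair_mean
    return 100.0 * np.sqrt(np.mean(pair_cv ** 2, axis=0))


def flag_noisy_analytes(rep1, rep2, names, max_cv=20.0):
    """Names of analytes whose duplicate CV exceeds max_cv (hypothetical cut-off)."""
    cv = duplicate_cv(rep1, rep2)
    return [name for name, value in zip(names, cv) if value > max_cv]
```

Analytes flagged this way are candidates for exclusion or down-weighting before thresholds are set, since assay noise widens the overlap between groups.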

FAQ 3: My biomarker panel shows good diagnostic accuracy but poor correlation with clinical severity scores. How can I improve this?

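Answer: This usually means that the markers separating ASD from TD controls are not the ones that track symptom severity, so diagnostic and prognostic thresholds need to be derived separately.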

- Refined Correlation Analysis: Correlate the levels of each biomarker in your panel with standardized clinical severity scores, such as the ADOS (Autism Diagnostic Observation Schedule) total score. Select biomarkers that are significantly correlated with these scores for your prognostic model [32].

- Feature Importance Ranking: Apply machine learning techniques such as Random Forest to measure feature importance, using metrics such as Mean Decrease in Gini impurity (MeanDecreaseGini), to identify which biomarkers are most predictive of both diagnosis and symptom severity [32].
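The refined correlation analysis can be sketched as a per-marker rank correlation with the severity score. This sketch assumes Spearman correlation (the source does not specify Pearson or Spearman), ASD participants only, and an unadjusted significance level; with hundreds of proteins, a multiple-testing correction such as Benjamini-Hochberg would normally be applied. `severity_correlated_markers` is a hypothetical helper name.

```python
import numpy as np
from scipy.stats import spearmanr


def severity_correlated_markers(X, severity, names, alpha=0.05):
    """Rank biomarkers by Spearman correlation with a clinical severity score.

    X: (n_subjects, n_markers) normalized abundances for ASD participants.
    severity: (n_subjects,) e.g. ADOS total scores.
    Returns (name, rho, p) tuples with p < alpha, sorted by |rho| descending.
    No multiple-testing correction is applied here.
    """
    X = np.asarray(X, dtype=float)
    severity = np.asarray(severity, dtype=float)
    hits = []
    for j, name in enumerate(names):
        rho, p = spearmanr(X[:, j], severity)
        if p < alpha:
            hits.append((name, float(rho), float(p)))
    return sorted(hits, key=lambda h: abs(h[1]), reverse=True)
```

Markers returned here can be intersected with the top Random Forest importances to build a panel intended to serve both diagnosis and severity estimation.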

Experimental Protocol for Threshold-Based ASD Biomarker Discovery

The following table summarizes a detailed proteomic workflow for discovering and validating a blood-based biomarker panel for ASD, suitable for threshold-based analysis.

Table 1: Experimental Protocol for ASD Biomarker Discovery and Validation

| Protocol Step | Detailed Methodology | Technical Parameters & Purpose |
|---|---|---|
| 1. Participant Recruitment | Recruit ASD participants and Typically Developing (TD) controls, matched for age and sex. Confirm ASD diagnosis with gold-standard instruments (ADOS, ADI-R) and DSM-5 criteria. Screen TD participants to rule out developmental concerns [32]. | Purpose: To establish a well-characterized cohort. Reduces confounding variability. ADOS total score provides a continuous measure of ASD severity for correlation analysis [32]. |
| 2. Sample Collection & Prep | Perform a fasting blood draw. Collect blood in serum separation tubes. Allow clotting (10-15 min), then centrifuge. Aliquot serum and store at -80°C [32]. | Purpose: To preserve biomarker integrity. Standardizing clotting and centrifugation time minimizes pre-analytical variability. |
| 3. Proteomic Analysis | Analyze serum samples using a high-throughput platform (e.g., SomaLogic's SOMAScan). Analyze a large number of proteins (e.g., 1,125 retained after quality control) [32]. | Purpose: To obtain a multivariate protein abundance profile for each subject. Provides the high-dimensional data needed for biomarker discovery. |
| 4. Data Normalization | Normalize protein abundance data by log10 transformation, followed by z-transformation. Clip outliers (e.g., z-scores beyond -3 / +3) [32]. | Purpose: To make protein measurements comparable across samples and reduce the influence of extreme outliers. |
| 5. Biomarker Selection | Apply multiple algorithms to the normalized data: • Random Forest: identify top proteins by feature importance (MeanDecreaseGini); • t-test: select proteins with the most significant differences between groups; • Correlation: identify proteins most highly correlated with ADOS severity scores [32]. | Purpose: To find a robust, multi-faceted biomarker panel. Combining methods identifies a "core" set of proteins predictive of diagnosis and severity [32]. |
| 6. Model Training & Thresholding | Train a predictive model (e.g., using machine learning) with the core protein panel. Establish diagnostic thresholds based on model outputs (e.g., probability scores). Validate thresholds using a separate test set or cross-validation [32]. | Purpose: To create a clinical test. The threshold is optimized to balance sensitivity and specificity, achieving the best diagnostic performance. |
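Step 4 of the table can be sketched in a few lines. This sketch assumes the z-transformation is applied per protein across subjects with the sample standard deviation (ddof=1), which the table does not specify; a protein with zero variance would need to be removed beforehand to avoid division by zero. `normalize_abundances` is a hypothetical helper name.

```python
import numpy as np


def normalize_abundances(raw, clip=3.0):
    """Log10-transform, z-score each protein across subjects, then clip.

    raw: (n_subjects, n_proteins) positive abundance values.
    Returns an array of the same shape with values limited to [-clip, clip].
    """
    logged = np.log10(np.asarray(raw, dtype=float))
    mean = logged.mean(axis=0)          # per-protein mean across subjects
    sd = logged.std(axis=0, ddof=1)     # per-protein sample SD
    z = (logged - mean) / sd
    return np.clip(z, -clip, clip)      # cap extreme outliers at +/- clip
```

Clipping rather than dropping outliers keeps every subject in the analysis while limiting how far a single extreme value can pull a t-test or correlation in step 5.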

Workflow Visualization

The following diagram illustrates the logical workflow for the biomarker discovery and threshold analysis process, from cohort establishment to clinical application.

Biomarker Discovery and Analysis Workflow

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Materials and Reagents for ASD Proteomic Biomarker Studies

| Item Name | Function / Application in Research |
|---|---|
| Serum Separation Tubes | Used for standardized collection of blood samples. Tubes contain a gel separator and clot activator to yield clean serum for proteomic analysis after centrifugation [32]. |
| SOMAScan Assay Platform | A high-throughput proteomic platform used to measure the levels of a large number of proteins (e.g., 1,317) simultaneously from a small volume of serum, facilitating biomarker discovery [32]. |
| Autism Diagnostic Observation Schedule (ADOS) | A gold-standard, standardized assessment tool used to characterize and measure the severity of ASD-specific behaviors (Social Affect and Restricted/Repetitive Behaviors), providing a critical clinical correlate for biomarkers [30] [32]. |
| Random Forest Algorithm | A machine learning method used to analyze complex proteomic data, measure the importance of individual protein biomarkers, and build robust, multi-protein predictive models for ASD classification [32]. |
| Adaptive Behavior Assessment System (ABAS-II) | A diagnostic tool used to screen and confirm typical development in control subjects, ensuring the TD group is free from developmental concerns that could confound biomarker analysis [32]. |

Troubleshooting Guides

FAQ 1: Why does my power calculation show sufficient power, but my trial still fails to detect a significant effect?

Problem: A clinical trial was designed with a conventional power of 80% but failed to reject the null hypothesis, leading to a costly Phase II failure.

Investigation & Solution: The issue likely stems from an overestimation of the true effect size or an underestimation of population variability during the planning stage. Conventional power calculations often use a single assumed value for the treatment effect, which does not account for the uncertainty around this estimate.

- Diagnostic Check: Review the assumptions used in your initial power calculation. Compare the observed effect size and variability from your trial to the values you assumed.

- Recommended Approach: Transition from a single-point power estimate to inference on power. This involves using the p-value function to create a confidence distribution for the power itself, which better quantifies the risk of failure. A point estimate of power (whether from conventional calculation or Bayesian assurance) does not adequately capture this risk [33].

- Protocol: Implement a model-based drug development (MBDD) approach using exposure-response analysis.

- From prior Phase I data, define the relationship between drug exposure (e.g., AUC) and a binary efficacy response using a logistic model [34]:

$$P(\mathrm{AUC}) = \frac{1}{1 + e^{-(\beta_0 + \beta_1 \cdot \mathrm{AUC})}}$$

- Using a population PK model, simulate the distribution of AUC for your planned Phase II doses [34].

- Simulate thousands of trials at your planned sample size. For each trial, generate AUC values for each subject, calculate their probability of response, simulate a binary outcome, and then fit an exposure-response model to the simulated data [34].

- The power is the proportion of simulated trials where the exposure-response slope (β1) is statistically significant [34]. This method often reveals a wider range of possible power values than a conventional calculation, highlighting the risk of proceeding.
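The simulation protocol above can be sketched as follows. This is a minimal sketch under stated assumptions, not the method of [34] verbatim: CL/F is log-normal around the typical value with the stated CV, AUC = Dose / (CL/F), response follows the logistic model, and significance of β1 is judged with a two-sided Wald test. In practice the model would be fitted with R's `glm` or a similar routine; a small Newton-Raphson fit is used here only to keep the example self-contained. Function names and the parameter values in the usage note are hypothetical.

```python
import numpy as np
from scipy.stats import norm


def fit_logistic(x, y, n_iter=25):
    """Logistic regression y ~ b0 + b1 * x by Newton-Raphson (maximum likelihood).

    Returns (beta, se) where se are Wald standard errors from the Fisher information.
    """
    X = np.column_stack([np.ones_like(x), x])
    beta = np.zeros(2)
    for _ in range(n_iter):
        p = 1.0 / (1.0 + np.exp(-(X @ beta)))
        info = X.T @ (X * (p * (1.0 - p))[:, None])  # Fisher information
        beta = beta + np.linalg.solve(info, X.T @ (y - p))
    p = 1.0 / (1.0 + np.exp(-(X @ beta)))
    info = X.T @ (X * (p * (1.0 - p))[:, None])
    return beta, np.sqrt(np.diag(np.linalg.inv(info)))


def exposure_response_power(doses, n_per_dose, b0, b1, cl_f, cv,
                            n_trials=1000, alpha=0.05, seed=1):
    """Fraction of simulated trials in which the E-R slope (b1) is significant."""
    rng = np.random.default_rng(seed)
    omega = np.sqrt(np.log(1.0 + cv ** 2))  # log-scale SD giving the stated CV
    dose = np.repeat(np.asarray(doses, dtype=float), n_per_dose)
    significant = 0
    for _ in range(n_trials):
        cl = cl_f * np.exp(rng.normal(0.0, omega, size=dose.size))  # log-normal CL/F
        auc = dose / cl
        p_resp = 1.0 / (1.0 + np.exp(-(b0 + b1 * auc)))
        y = (rng.random(dose.size) < p_resp).astype(float)  # binary response
        try:
            with np.errstate(over="ignore", invalid="ignore"):
                beta, se = fit_logistic(auc, y)
        except np.linalg.LinAlgError:
            continue  # e.g. complete separation: count as not significant
        z = beta[1] / se[1]
        if np.isfinite(z) and 2.0 * norm.sf(abs(z)) < alpha:
            significant += 1
    return significant / n_trials
```

For example, `exposure_response_power([50, 100, 200], 30, b0=-2.0, b1=0.15, cl_f=10.0, cv=0.3)` estimates power for three hypothetical dose arms of 30 subjects. Re-running it over a grid of plausible β1 values, rather than a single assumed slope, shows how sensitive the power estimate is to the effect-size assumption.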

FAQ 2: How do I select the threshold for an asymmetry graph when the exposure-response relationship is non-linear?

Problem: An exposure-response analysis for a new oncology drug suggests a plateau in efficacy at higher doses. Defining an asymmetric "efficacy window" for decision-making is challenging.

Investigation & Solution: The threshold should not be a single point but a region informed by the model's uncertainty and the clinical context. The problem is one of inadequate dose-ranging study design and underutilization of the exposure-response model for decision-making.

- Diagnostic Check: Plot the simulated exposure-response curve with confidence intervals. A flattening curve indicates a potential plateau.

- Recommended Approach: Use the exposure-response powering methodology to define an asymmetric decision threshold based on a target efficacious response.

- Protocol:

- Define Decision Thresholds: Establish a lower threshold (e.g., a response rate significantly better than placebo but below the maximum) and an upper threshold (e.g., the onset of unacceptable toxicity). The space between them is the asymmetric target region [34].

- Power the Asymmetry Test: Use the simulation protocol from FAQ 1. However, the analysis goal is to determine the sample size required not just for a significant slope, but to demonstrate with high confidence that the response at your target dose lies within your pre-specified asymmetric thresholds.

- Quantify Risk: The simulation output will show the probability that your trial will correctly identify the dose within the target window. This probability directly informs the Go/No-Go decision by quantifying the risk of misclassification [33].
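The risk-quantification step can be illustrated with a deliberately simplified stand-in for the model-based analysis: instead of fitting the exposure-response model, it looks only at the target-dose arm, computes a 95% Wilson interval for the observed response rate in each simulated trial, and counts how often that interval lies entirely inside the asymmetric window. It assumes both thresholds are expressed on the response-probability scale and that the true response rate at the target dose is known for simulation purposes; all function names are hypothetical.

```python
import numpy as np

Z95 = 1.959963984540054  # two-sided 95% standard normal quantile


def wilson_interval(k, n, z=Z95):
    """Wilson score interval for k responders out of n (vectorized over k)."""
    p = k / n
    centre = (p + z ** 2 / (2 * n)) / (1 + z ** 2 / n)
    half = z * np.sqrt(p * (1 - p) / n + z ** 2 / (4 * n ** 2)) / (1 + z ** 2 / n)
    return centre - half, centre + half


def prob_in_window(true_rate, n, lower, upper, n_trials=5000, seed=7):
    """Probability that a trial's 95% CI falls entirely inside [lower, upper]."""
    rng = np.random.default_rng(seed)
    k = rng.binomial(n, true_rate, size=n_trials)  # responders per simulated trial
    lo, hi = wilson_interval(k, n)
    return float(np.mean((lo >= lower) & (hi <= upper)))


def smallest_n(true_rate, lower, upper, target=0.8, n_grid=range(10, 501, 10)):
    """First sample size on the grid reaching the target probability, else None."""
    for n in n_grid:
        if prob_in_window(true_rate, n, lower, upper) >= target:
            return n
    return None
```

Because the window is asymmetric (for example, `smallest_n(0.6, 0.4, 0.85)`), the required sample size depends on how close the true rate sits to the nearer threshold, which is exactly the misclassification risk the Go/No-Go decision has to weigh.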

FAQ 3: My bioinformatics pipeline for target identification is producing inconsistent results, leading to poor lead compound selection. How can I stabilize it?

Problem: High attrition rates in preclinical stages due to unreliable target identification from genomic data.

Investigation & Solution: Inconsistencies often arise from data quality issues, tool incompatibility, or a lack of robust validation steps within the pipeline.

- Diagnostic Check: Run quality control tools such as FastQC and MultiQC on your raw genomic data. Execute the pipeline under a workflow management system such as Nextflow, whose execution logs record each step and its errors, making version conflicts between tools (e.g., BWA for alignment and GATK for variant calling) easier to trace [35].

- Recommended Approach: Implement a standardized, documented, and version-controlled bioinformatics pipeline.

- Protocol:

- Data Preprocessing: Use Trimmomatic to remove low-quality sequences and adapters from raw data [35].

- Alignment: Align sequences to a reference genome using a standardized tool like BWA or STAR [35].

- Variant Calling/Expression: Identify genetic variants with GATK or analyze gene expression levels [35].

- Validation: Cross-check your results using an alternative tool or a known dataset. Integrate biological context (e.g., pathway analysis) to triage targets, prioritizing those with strong biological plausibility [36].

- Documentation: Use Git for version control and document all parameters and software versions to ensure reproducibility [35].
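The validation step (cross-checking results from an alternative tool) can be sketched as a concordance count between two variant call sets. This sketch assumes each call is a simple (chromosome, position, ref, alt) tuple and only harmonizes "chr" prefixes and letter case; real comparisons also need variant normalization (for example with `bcftools norm`) so that equivalent indels are represented identically. `variant_key` and `concordance` are hypothetical helpers.

```python
def variant_key(chrom, pos, ref, alt):
    """Canonical key for a variant: strip a leading 'chr', upper-case alleles."""
    chrom = chrom[3:] if chrom.startswith("chr") else chrom
    return (chrom, int(pos), ref.upper(), alt.upper())


def concordance(calls_a, calls_b):
    """Shared and tool-specific variant counts plus Jaccard similarity."""
    a = {variant_key(*v) for v in calls_a}
    b = {variant_key(*v) for v in calls_b}
    shared, union = a & b, a | b
    return {
        "shared": len(shared),
        "only_a": len(a - b),
        "only_b": len(b - a),
        "jaccard": len(shared) / len(union) if union else 1.0,
    }
```

Targets supported only by variants that a single caller reports are natural candidates for down-weighting during biological triage.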

Experimental Protocols

Protocol 1: Inference on Power for Go/No-Go Decisions

Objective: To move beyond point estimates of power and perform statistical inference on power for better risk management in Phase II/III Go/No-Go decisions [33].

Materials:

- Prior study data (Phase I or preclinical) on effect size and variability.

- Statistical software (e.g., R).

Methodology:

- Define the P-value Function: For your planned analysis (e.g., a two-sample t-test), construct the p-value function based on your prior data. This function shows how the p-value varies across different possible values of the true treatment effect.

- Calculate Confidence Distribution for Power: Use the p-value function to create a confidence distribution for the true effect size. For each possible effect size in this distribution, calculate the corresponding power for your planned trial's sample size.

- Visualize and Interpret: Plot the resulting distribution of power. This visualization shows the range of plausible power values, explicitly quantifying the risk that the true power is unacceptably low. This distribution is the basis for inference on power [33].
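Protocol 1 can be sketched under a normal approximation. The sketch assumes the prior study yields an effect estimate `d_hat` with standard error `se_hat`, and that the planned analysis is a two-sided two-sample comparison with known outcome SD `sigma` (a z-test approximation to the t-test named above). Under these assumptions the p-value function peaks at `d_hat`, and the matching confidence distribution for the true effect is normal with mean `d_hat` and SD `se_hat`; pushing draws from it through the power formula gives the distribution of power. Function names are hypothetical.

```python
import numpy as np
from scipy.stats import norm


def power_two_sample(delta, sigma, n_per_arm, alpha=0.05):
    """Normal-approximation power of a two-sided, two-sample test of means."""
    se = sigma * np.sqrt(2.0 / n_per_arm)
    z_crit = norm.ppf(1.0 - alpha / 2.0)
    return norm.cdf(delta / se - z_crit) + norm.cdf(-delta / se - z_crit)


def p_value_function(delta0, d_hat, se_hat):
    """Two-sided p-value for H0: true effect = delta0, given the prior estimate."""
    return 2.0 * norm.sf(np.abs(d_hat - delta0) / se_hat)


def power_distribution(d_hat, se_hat, sigma, n_per_arm, alpha=0.05,
                       n_draws=100_000, seed=0):
    """Draws from the confidence distribution of power for the planned trial.

    The confidence distribution for the true effect is N(d_hat, se_hat**2);
    each draw is mapped to the power of the planned trial.
    """
    rng = np.random.default_rng(seed)
    delta = rng.normal(d_hat, se_hat, size=n_draws)
    return power_two_sample(delta, sigma, n_per_arm, alpha)
```

Summaries such as `np.median(pw)`, `np.quantile(pw, 0.1)`, and `np.mean(pw < 0.8)` on the returned draws give a central power estimate, a pessimistic lower bound, and the probability that true power falls below a conventional 80% target; a histogram of the draws is the plot described in the Visualize and Interpret step.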

Protocol 2: Exposure-Response Power and Sample Size Determination

Objective: To determine the power and sample size for a dose-ranging study using exposure-response models, which can be more efficient than conventional methods [34].

Materials:

- Population PK model parameters (mean clearance, variability).

- Assumed exposure-response model parameters (e.g., β0, β1 for a logistic model).

- Statistical software (e.g., R) for simulation.

Methodology:

- Define Parameters: Set the assumed intercept (β0), slope (β1), number of doses (m), and PK parameters (typical CL/F and CV%) [34].

- Simulate Trial Population: For a given sample size n per dose group, simulate n × m AUC values based on the log-normal PK distribution: AUC = Dose / (CL/F) [34].

- Simulate Response: For each simulated AUC, calculate the probability of response