Advanced Remote Sensing for Habitat Fragmentation Assessment: Techniques, Applications, and Future Directions


Abstract

This article provides a comprehensive overview of the pivotal role remote sensing technologies play in assessing and monitoring habitat fragmentation, a primary driver of global biodiversity loss. It explores the foundational ecological principles of fragmentation, details cutting-edge methodological approaches including AI-driven analysis and LiDAR, and addresses key challenges in data integration and model interpretation. By presenting rigorous validation frameworks and comparative case studies across diverse ecosystems—from temperate forests to marine environments—this resource equips researchers, scientists, and conservation professionals with the knowledge to leverage Earth observation data for evidence-based conservation planning and ecological management.

Understanding Habitat Fragmentation: Ecological Principles and the Remote Sensing Imperative

Defining Habitat Fragmentation and Its Impacts on Biodiversity and Ecosystem Services

Habitat fragmentation describes the process by which large, continuous expanses of habitat are transformed into smaller, isolated patches, separated by a matrix of human-transformed landscapes [1] [2]. This process is a critical environmental issue and a principal driver of global biodiversity loss, with research indicating it can reduce biodiversity by 13% to 75% and significantly impair key ecosystem functions [2]. It is crucial to distinguish habitat fragmentation from the related concept of habitat loss. While habitat loss refers to the outright disappearance of habitat, fragmentation per se refers to the breaking apart of habitat independent of the total amount lost, fundamentally altering the spatial configuration of the remaining habitat [1] [2]. These changes include a decrease in the average size of habitat patches, an increase in their isolation, and a higher ratio of edge to interior habitat, initiating complex ecological cascades [1].

Within the context of remote sensing for environmental assessment, monitoring habitat fragmentation is paramount. As one study emphasizes, "Earth observation techniques and remotely sensed imagery are crucial tools for the large-scale monitoring of forest habitat loss and fragmentation," a task amplified by new satellite missions providing high-resolution, open-access data [3]. This guide provides a comparative analysis of the ecological impacts of habitat fragmentation and the experimental protocols used to quantify them, serving as a foundation for researchers applying geospatial technologies to conservation science.

The Multifaceted Impacts of Habitat Fragmentation: A Comparative Analysis

The impacts of habitat fragmentation are profound and interwoven, affecting all levels of ecological organization. The table below synthesizes the primary direct and indirect effects, providing a structured comparison of their mechanisms and consequences.

Table 1: Comparative Analysis of Habitat Fragmentation Impacts on Ecological Systems

| Impact Category | Key Mechanism | Documented Consequences | Experimental Support |
|---|---|---|---|
| Biodiversity Loss | Reduction in patch size and resource availability [1]. | 13-75% reduction in species richness; greater effect in smaller, older fragments [2]. | Synthesis of long-term fragmentation experiments [2]. |
| Edge Effects | Altered microclimate (light, temperature, wind), increased invasive species, and human disturbance at boundaries [1] [4]. | Changes in species composition; reduced population density for interior species; increased mortality [1] [4] [5]. | Global forest analysis shows >70% of forests within 1 km of an edge [2]. |
| Genetic Decline | Isolation limits gene flow, leading to inbreeding in small populations [4] [5]. | Reduced genetic diversity; inbreeding depression (e.g., Florida panther, Macquarie perch) [4] [5]. | Population genetic studies and predictive models [4]. |
| Disrupted Ecological Processes | Barriers to movement interrupt seed dispersal, pollination, and nutrient cycling [5]. | Impaired plant regeneration; altered trophic cascades; changes in biomass and nutrient cycles [2] [5]. | Ecosystem function measurements in experimental fragments [2]. |
| Ecosystem Service Degradation | Landscape disintegration reduces the capacity of ecosystems to perform regulating functions [6] [7]. | Decline in water purification, carbon storage, soil retention, and flood mitigation [6] [5] [7]. | Quantitative analysis of ES supply vs. fragmentation indices [6]. |

Interrupted Ecological Processes and Trophic Dynamics

Fragmentation creates physical barriers that disrupt vital ecological processes. Species that act as seed dispersers or pollinators may struggle to move between patches, leading to reduced plant recruitment and genetic connectivity for flora [5]. Furthermore, changes to predator populations in fragmented landscapes can trigger trophic cascades: altered wolf distributions have been linked to increased predation on species like the mountain caribou, while the loss of top predators from small fragments can leave herbivore populations like deer unchecked, so that they over-consume vegetation [4] [5]. These disruptions ultimately lead to a breakdown in fundamental ecosystem functions, with experiments showing clear reductions in biomass and alterations to nutrient cycles [2].

Impacts on Ecosystem Services

Habitat fragmentation directly undermines the ecosystem services that support human well-being. Research in the Yangtze River Delta region has demonstrated that processes like the decline in habitat area and increased habitat isolation have complicated, often nonlinear, effects on services such as water yield, soil retention, carbon storage, and habitat quality [6]. For example, larger, contiguous forests are significantly more efficient at sequestering carbon than smaller, fragmented patches [5]. Similarly, the fragmentation of wetlands diminishes their capacity to purify water, recharge groundwater, and buffer floods, leading to tangible losses in natural capital and increased risks for human communities [5] [7].

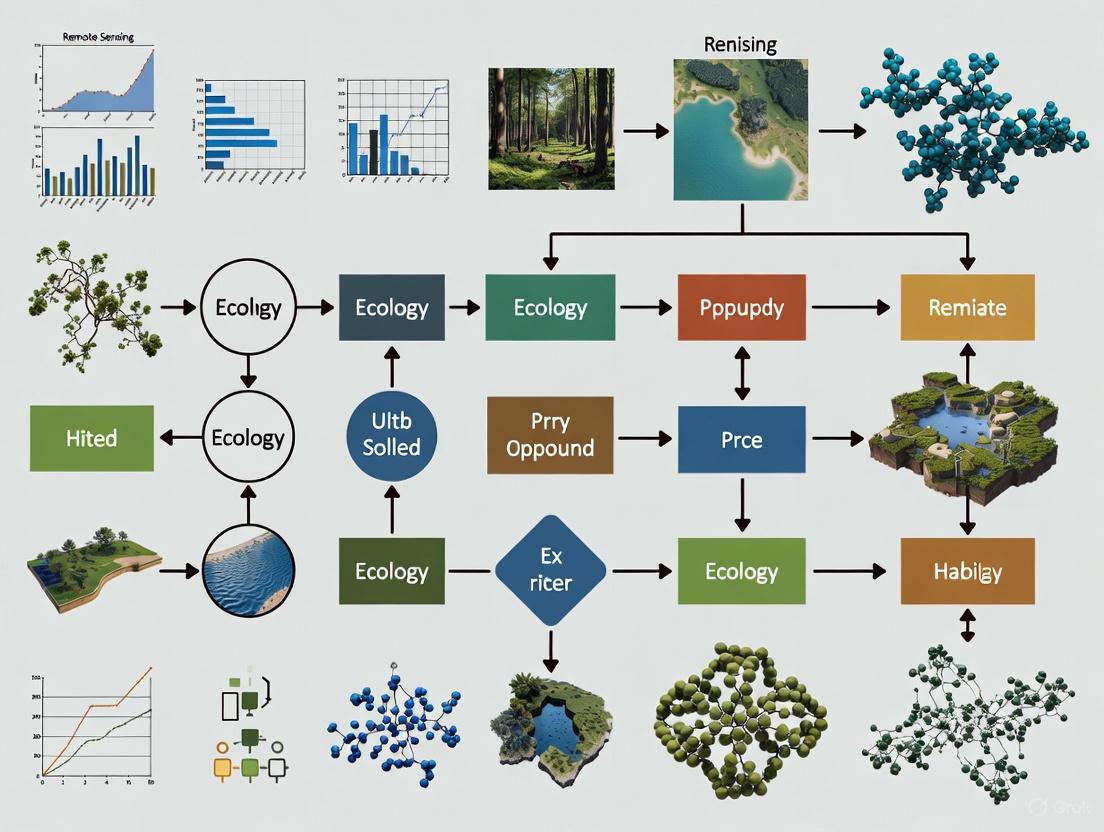

The following diagram illustrates the logical chain of causes and effects that connects the initial drivers of fragmentation to its ultimate impacts on ecosystems and human societies.

Experimental Protocols for Quantifying Fragmentation and Its Effects

A multi-faceted approach is required to rigorously measure habitat fragmentation and its ecological consequences. The methodologies below represent key protocols used in field ecology and remote sensing.

Protocol 1: Long-Term Fragmentation Experiments

Objective: To isolate and test the causal effects of specific fragmentation components (e.g., area, isolation, edge) on biodiversity and ecosystem function over time [2].

Workflow:

- Site Selection & Baseline Data Collection: Select a large area of continuous habitat. Conduct comprehensive pre-treatment surveys to document species abundance, richness, community composition, and ecosystem processes [2].

- Experimental Manipulation: Using a replicated, blocked design, systematically create habitat fragments of varying sizes (e.g., 1 ha, 10 ha) and degrees of isolation (e.g., with or without corridors). Control for the total amount of habitat loss across the landscape [2].

- Post-Treatment Monitoring: Regularly census all fragments and control sites for decades. Track species populations, community turnover, genetic diversity, and metrics of ecosystem function like biomass accumulation and nutrient cycling [2].

- Data Analysis: Compare ecological responses in the experimental fragments to the control areas. Use statistical models to disentangle the effects of area, isolation, and edge from each other [2].
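The comparison in the Data Analysis step can be sketched in a few lines of Python. This is a minimal illustration, not the analysis used in the cited experiments: the richness counts are invented for the example, and `percent_change` is a hypothetical helper name. A real study would fit statistical models that account for the blocked design.

```python
from statistics import mean


def percent_change(treatment, control):
    """Percent change of the treatment mean relative to the control mean."""
    control_mean = mean(control)
    return 100.0 * (mean(treatment) - control_mean) / control_mean


# Hypothetical species-richness counts per plot (illustrative only, not real data).
richness = {
    "control": [40, 42, 38, 40],
    "10 ha": [30, 34, 32, 32],
    "1 ha": [20, 22, 18, 20],
}

# Effect of each fragment size class, expressed relative to the unfragmented control.
effects = {
    name: percent_change(values, richness["control"])
    for name, values in richness.items()
    if name != "control"
}

for name, effect in effects.items():
    print(f"{name}: {effect:+.1f}% richness relative to control")
```

Expressing each response relative to the control keeps fragment size classes comparable, but it does not by itself separate area, isolation, and edge effects.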

Protocol 2: Remote Sensing-Based Landscape Monitoring

Objective: To map habitat loss and fragmentation patterns over large spatial extents and long time periods using satellite imagery [3].

Workflow:

- Data Acquisition: Compile a time series of satellite imagery (e.g., Landsat, Sentinel-2) for the region and time period of interest. Pre-process the imagery for atmospheric and radiometric corrections to ensure consistency [3].

- Land Cover Classification: Use machine learning classifiers (e.g., Random Forest) on the satellite data to generate annual or seasonal land cover maps, identifying the habitat class of interest (e.g., forest, grassland) [8] [3].

- Landscape Metric Calculation: Input the habitat maps into landscape ecology software (e.g., FRAGSTATS) to calculate quantitative indices for each patch and the overall landscape. Key metrics include patch area, patch density, and connectivity.

- Change Detection & Correlation: Analyze temporal trends in landscape metrics to quantify fragmentation dynamics. Statistically correlate these metrics with field-sampled data on biodiversity or modeled ecosystem services to assess impact [6] [7] [3].
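To make the metric and change-detection steps concrete, the sketch below labels habitat patches in a small binary map and compares two dates. It is a pure-Python stand-in for dedicated software such as FRAGSTATS, not a reproduction of it. The two 5 x 5 maps are invented, patches are assumed to connect through shared cell sides (a 4-neighbour rule), and the 0.09 ha cell area assumes 30 m pixels.

```python
from collections import deque


def patch_sizes(grid):
    """Return the size in cells of every habitat patch (4-neighbour rule)."""
    rows, cols = len(grid), len(grid[0])
    seen = [[False] * cols for _ in range(rows)]
    sizes = []
    for r in range(rows):
        for c in range(cols):
            if grid[r][c] != 1 or seen[r][c]:
                continue
            # Breadth-first flood fill from an unvisited habitat cell.
            queue = deque([(r, c)])
            seen[r][c] = True
            size = 0
            while queue:
                cr, cc = queue.popleft()
                size += 1
                for dr, dc in ((1, 0), (-1, 0), (0, 1), (0, -1)):
                    nr, nc = cr + dr, cc + dc
                    inside = 0 <= nr < rows and 0 <= nc < cols
                    if inside and grid[nr][nc] == 1 and not seen[nr][nc]:
                        seen[nr][nc] = True
                        queue.append((nr, nc))
            sizes.append(size)
    return sizes


def patch_summary(grid, cell_area_ha):
    """Summarise patch count, total habitat area, and mean patch area."""
    sizes = patch_sizes(grid)
    total_ha = sum(sizes) * cell_area_ha
    mean_ha = total_ha / len(sizes) if sizes else 0.0
    return {"n_patches": len(sizes), "habitat_ha": total_ha, "mean_patch_ha": mean_ha}


# Hypothetical habitat maps (1 = habitat, 0 = matrix) for two dates.
maps = {
    2000: [
        [1, 1, 1, 1, 0],
        [1, 1, 1, 1, 0],
        [1, 1, 1, 1, 0],
        [1, 1, 1, 1, 0],
        [0, 0, 0, 0, 0],
    ],
    2020: [
        [1, 1, 0, 1, 0],
        [1, 1, 0, 1, 0],
        [0, 0, 0, 0, 0],
        [1, 0, 0, 1, 1],
        [0, 0, 0, 0, 0],
    ],
}

CELL_AREA_HA = 0.09  # 30 m x 30 m pixel, an assumption for this example

summaries = {year: patch_summary(grid, CELL_AREA_HA) for year, grid in maps.items()}
for year, summary in summaries.items():
    print(year, summary)
```

In this toy example the habitat shrinks and splits at the same time, which is why patch count and mean patch area are best reported alongside total habitat area rather than instead of it.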

The workflow for the remote sensing protocol is visualized below, highlighting the sequence from data acquisition to analytical output.

The Scientist's Toolkit: Key Reagent Solutions for Fragmentation Research

This section details essential tools and data sources, the "research reagents," that are fundamental for conducting modern habitat fragmentation studies.

Table 2: Essential Research Tools for Habitat Fragmentation Assessment

| Tool / Solution | Category | Primary Function in Research | Example Sources/Platforms |
|---|---|---|---|
| Landsat & Sentinel-2 | Satellite Imagery | Provides medium-resolution, multi-spectral data with long-term historical archives and frequent revisit times for change detection. | USGS EarthExplorer, ESA Copernicus Open Access Hub [3] |
| Google Earth Engine (GEE) | Cloud Computing Platform | Enables planetary-scale analysis of geospatial data by hosting massive datasets and providing high-performance computing capabilities. | Google [3] |
| FRAGSTATS | Analytical Software | Calculates a wide suite of landscape pattern metrics (e.g., patch area, density, connectivity) from categorical maps. | University of Massachusetts Amherst [6] [8] |
| Change Detection Algorithms | Analytical Model | Identifies and characterizes disturbances and land cover changes from satellite image time series. | LandTrendr [3], CCDC [3], Global Forest Watch [3] |
| Global Forest Change Data | Processed Dataset | Offers a pre-processed, global map of annual forest loss and gain, serving as a key baseline for forest fragmentation studies. | Hansen et al., 2013 [3] |
| InVEST Model | Analytical Model | Maps and quantifies the supply and value of ecosystem services (e.g., carbon storage, habitat quality) under different land use scenarios. | Natural Capital Project [7] |

The experimental evidence is unequivocal: habitat fragmentation is a powerful agent of ecological change, consistently degrading biodiversity and compromising the functionality of ecosystems. The impacts are not merely additive but are often synergistic, with edge effects, genetic isolation, and disrupted species interactions compounding over time to accelerate ecosystem decay [1] [2]. The legacy of past fragmentation creates an "extinction debt," where the full consequences of population subdivision may not be realized for decades [1].

For researchers, the path forward requires integrating the tools detailed in this guide. Ground-truthed, long-term experimental data remains the gold standard for establishing causal mechanisms, while remote sensing provides the scalable capacity to measure fragmentation patterns across entire continents. Combining these approaches with powerful cloud computing and sophisticated landscape models offers the best hope for accurately diagnosing the health of fragmented landscapes and prescribing effective conservation interventions, such as the strategic implementation of wildlife corridors and habitat restoration [1] [5] [3]. As global changes continue to exert pressure on natural systems, the scientific community's ability to monitor, understand, and mitigate habitat fragmentation will be critical to safeguarding biodiversity and the essential ecosystem services upon which humanity depends.

Distinguishing Between Habitat Loss, Fragmentation Per Se, and Edge Effects

Habitat degradation represents a primary driver of global biodiversity loss, yet its constituent processes are often conflated in ecological research and conservation practice [9]. This guide provides a structured comparison of three interconnected yet distinct phenomena: habitat loss, the outright destruction of living space; fragmentation per se, the breaking apart of habitat independent of total area reduction; and edge effects, the ecological changes at habitat boundaries [9]. Understanding these distinctions is crucial for developing effective conservation strategies and accurately assessing anthropogenic impacts on ecosystems.

Within conservation biology, these processes frequently occur simultaneously with synergistic effects on ecosystems, though they differ significantly in their mechanisms and ecological consequences [9]. Remote sensing technologies have emerged as pivotal tools for disentangling these complex spatial processes, enabling researchers to quantify patterns, monitor changes, and predict ecological outcomes across landscape scales [3] [10]. The integration of satellite imagery, machine learning algorithms, and spatial analysis now provides unprecedented capability to distinguish and monitor these separate components of habitat degradation.

Conceptual Framework and Definitions

Core Concepts and Their Interrelationships

Table 1: Defining Core Components of Habitat Degradation

| Component | Definition | Primary Drivers | Spatial Manifestation |
|---|---|---|---|
| Habitat Loss | Complete destruction or removal of living space for species [9] | Deforestation for agriculture, urban expansion, resource extraction [11] [9] | Reduction in total habitat area |
| Fragmentation Per Se | Breaking apart of continuous habitat into smaller, isolated patches independent of habitat loss [12] [3] | Road construction, infrastructure development, natural barriers [11] | Increased habitat subdivision without reduction in total area |
| Edge Effects | Ecological changes at boundaries between habitat types [9] | Habitat fragmentation creating transition zones [9] | Altered environmental conditions and species composition at edges |

These three components interact within a hierarchical relationship where habitat loss typically initiates the degradation process, fragmentation subdivides the remaining habitat, and edge effects subsequently modify the ecological conditions within the resulting habitat patches [9]. The distinction between fragmentation per se and habitat loss is particularly critical, as the former specifically refers to the spatial configuration of habitat independent of the total amount lost—a conceptual separation that has profound implications for biodiversity outcomes [12].
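The separation between habitat amount and habitat configuration can be illustrated with two toy landscapes that hold the same number of habitat cells. The grids and function names below are invented for illustration, and the landscape boundary is treated as non-habitat, which is an assumption of this sketch rather than a convention from the cited work.

```python
def habitat_area(grid):
    """Count habitat cells (value 1) in a binary grid."""
    return sum(sum(row) for row in grid)


def edge_length(grid):
    """Count cell sides where habitat borders non-habitat or the grid boundary."""
    rows, cols = len(grid), len(grid[0])
    edges = 0
    for r in range(rows):
        for c in range(cols):
            if grid[r][c] != 1:
                continue
            for dr, dc in ((1, 0), (-1, 0), (0, 1), (0, -1)):
                nr, nc = r + dr, c + dc
                outside = not (0 <= nr < rows and 0 <= nc < cols)
                if outside or grid[nr][nc] == 0:
                    edges += 1
    return edges


# One contiguous 4 x 4 block of habitat in a 6 x 6 landscape.
contiguous = [
    [0, 0, 0, 0, 0, 0],
    [0, 1, 1, 1, 1, 0],
    [0, 1, 1, 1, 1, 0],
    [0, 1, 1, 1, 1, 0],
    [0, 1, 1, 1, 1, 0],
    [0, 0, 0, 0, 0, 0],
]

# The same 16 habitat cells split into four 2 x 2 patches.
fragmented = [
    [1, 1, 0, 0, 1, 1],
    [1, 1, 0, 0, 1, 1],
    [0, 0, 0, 0, 0, 0],
    [0, 0, 0, 0, 0, 0],
    [1, 1, 0, 0, 1, 1],
    [1, 1, 0, 0, 1, 1],
]

for name, grid in (("contiguous", contiguous), ("fragmented", fragmented)):
    print(name, "area:", habitat_area(grid), "edge:", edge_length(grid))
```

Both landscapes contain 16 habitat cells, yet the subdivided one has twice the edge length (32 cell sides versus 16). That difference in configuration at constant area is what fragmentation per se refers to.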

Theoretical Foundations and Ecological Mechanisms

The ecological consequences of these processes stem from distinct mechanistic pathways. Habitat loss directly reduces carrying capacity by eliminating resources and living space, leading to immediate population declines [9]. Fragmentation per se primarily affects species through isolation, which impedes dispersal, colonization, and gene flow among subpopulations [12] [3]. Edge effects operate through abiotic and biotic mechanisms, including altered microclimate conditions (light, temperature, humidity), increased predation pressure, and invasion by disturbance-adapted species [9].

The Habitat Amount Hypothesis proposed by Fahrig [12] posits that species richness depends primarily on the total amount of habitat in a local landscape rather than its spatial configuration. However, this perspective remains contentious, with empirical studies reporting contrasting patterns and theoretical models demonstrating that fragmentation per se can have either positive or negative effects on species diversity depending on contextual factors like the total habitat amount and competitive interactions within communities [12].

Remote Sensing Methodologies for Detection and Monitoring

Sensor Platforms and Technical Specifications

Table 2: Remote Sensing Platforms for Habitat Degradation Assessment

| Platform/Sensor | Spatial Resolution | Temporal Resolution | Key Applications | Advantages | Limitations |
|---|---|---|---|---|---|
| Sentinel-2 | 10-60 m [13] | 5 days [13] | Large-scale habitat loss detection, land cover change [13] [3] | Free access, broad spectral range, frequent revisit [13] | Limited detail for small habitat patches |
| PlanetScope | ~3 m [13] | Near-daily [13] | Fine-scale fragmentation mapping, patch delineation [13] | High spatial resolution, frequent monitoring | Commercial license, narrower spectral range |
| Landsat | 30 m | 16 days | Long-term change detection, historical analysis [3] | Extensive historical archive, free access | Coarser resolution limits small patch detection |
| Google Earth Engine | Varies by dataset | Varies by dataset | Landscape metrics calculation, multi-temporal analysis [3] | Cloud computing, massive data catalog, processing power | Requires technical expertise |

Experimental Protocols and Analytical Workflows

Remote sensing-based assessment of habitat degradation typically follows a structured workflow encompassing data acquisition, preprocessing, classification, and spatial analysis. For detecting goldenrod invasion as a specific example of habitat degradation, researchers have developed optimized protocols using multitemporal imagery [13]. The experimental methodology typically involves:

Data Collection: Acquisition of multitemporal satellite imagery (e.g., Sentinel-2, PlanetScope) covering the entire growing season, with particular emphasis on phenologically distinct periods such as autumn, when invasive goldenrods exhibit distinctive spectral signatures [13].

Image Preprocessing: Atmospheric correction, radiometric calibration, and geometric registration to ensure data consistency across time series and between different sensor platforms.

Feature Extraction: Calculation of spectral bands, vegetation indices (e.g., NDVI), and temporal statistics that enhance the separability of target species or habitat types from surrounding vegetation [13].

Classification: Application of machine learning algorithms such as Random Forest or One-Class Support Vector Machines (OCSVM) to identify and map habitat features. Random Forest has demonstrated consistently superior performance for goldenrod detection, achieving F1-scores of 0.98 using multitemporal Sentinel-2 data [13].

Landscape Analysis: Calculation of spatial metrics (patch size, connectivity, edge-to-area ratios) from classification outputs to quantify fragmentation patterns and edge effects [3].
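The feature-extraction step can be sketched as follows. NDVI is the standard normalized difference of near-infrared and red reflectance; everything else here is assumed for illustration: the reflectance values and dates are invented, and `temporal_features` is a hypothetical helper, not a function from the cited study.

```python
from statistics import mean


def ndvi(nir, red):
    """Normalized Difference Vegetation Index for one pixel; None if undefined."""
    total = nir + red
    if total == 0:
        return None
    return (nir - red) / total


def temporal_features(values):
    """Simple per-pixel temporal statistics over a season of index values."""
    valid = [v for v in values if v is not None]
    return {
        "min": min(valid),
        "max": max(valid),
        "mean": mean(valid),
        "amplitude": max(valid) - min(valid),
    }


# Hypothetical surface reflectance (red, NIR) for one pixel on four dates.
series = {
    "2023-05-15": (0.05, 0.45),
    "2023-07-15": (0.04, 0.36),
    "2023-09-15": (0.08, 0.32),
    "2023-10-30": (0.15, 0.25),
}

ndvi_series = {date: ndvi(nir, red) for date, (red, nir) in series.items()}
features = temporal_features(list(ndvi_series.values()))

for date, value in ndvi_series.items():
    print(date, round(value, 3))
print({name: round(value, 4) for name, value in features.items()})
```

Stacking such per-date indices and seasonal statistics alongside the raw bands is one way to give a classifier access to phenological differences such as the autumn signal described above.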

Comparative Ecological Impacts and Conservation Implications

Differential Effects on Biodiversity and Ecosystem Processes

The three components of habitat degradation exert distinct pressures on ecological communities, with varying implications for species persistence and ecosystem function. Habitat loss represents the most severe impact, directly causing immediate local extinctions by eliminating the fundamental resources required for population maintenance [9]. Fragmentation per se drives more gradual species loss over time through mechanisms including reduced genetic exchange, increased demographic stochasticity in small populations, and disruption of metapopulation dynamics [12] [3]. Edge effects primarily cause shifts in community composition, favoring generalist and edge-adapted species while negatively impacting habitat specialists through altered microclimatic conditions and increased predation pressure [9].

The relationship between these processes is complex and context-dependent. Theoretical models suggest that fragmentation per se can either increase or decrease species diversity depending on the total amount of habitat remaining [12]. When habitat is abundant, fragmentation may enhance diversity by creating environmental heterogeneity and reducing competitive exclusion. Conversely, when habitat is scarce, further fragmentation typically accelerates biodiversity loss by exacerbating isolation effects and reducing patch sizes below viable thresholds [12].

Quantitative Comparisons and Empirical Evidence

Table 3: Comparative Ecological Impacts of Habitat Degradation Components

| Impact Category | Habitat Loss | Fragmentation Per Se | Edge Effects |
|---|---|---|---|
| Species Richness | Immediate decline proportional to area lost [9] | Context-dependent: positive effect with large habitat amount, negative with small amount [12] | Increased generalists, decreased interior specialists [9] |
| Genetic Diversity | Reduced population size increases drift | Isolation limits gene flow, increases inbreeding [11] | Typically minimal direct impact |
| Community Composition | Non-random loss of habitat specialists | Alters competitive balance, favors dispersers | Significant species replacement at edges [9] |
| Ecosystem Function | Direct loss of functional processes | Disruption of spatial processes, nutrient flows | Altered nutrient cycling, microclimate [9] |
| Recovery Potential | Most challenging to reverse [9] | Reconnection possible through corridors | Reversible through natural succession |

Empirical evidence from remote sensing studies demonstrates the practical application of these distinctions. Research on invasive goldenrod detection achieved highest accuracy (F1-score: 0.98) using multitemporal Sentinel-2 imagery and Random Forest classification, highlighting the value of phenological timing in detecting habitat degradation [13]. This approach successfully distinguished invasion patterns—a form of habitat degradation—from natural vegetation, enabling precise mapping of degradation extent and configuration.

The Scientist's Toolkit: Research Solutions for Habitat Assessment

Table 4: Research Toolkit for Habitat Fragmentation Assessment

| Tool Category | Specific Solutions | Primary Function | Application Context |
|---|---|---|---|
| Satellite Platforms | Sentinel-2, PlanetScope, Landsat [13] [3] | Multispectral image acquisition | Habitat extent mapping, change detection |
| Cloud Computing | Google Earth Engine, SEPAL, OpenEO [3] | Big data processing, algorithm implementation | Landscape metric calculation, time series analysis |
| Classification Algorithms | Random Forest, One-Class SVM [13] | Automated feature identification | Habitat type classification, invasive species detection |
| Landscape Metrics | Patch size, connectivity, edge density [3] | Quantification of spatial patterns | Fragmentation assessment, configuration analysis |
| Vegetation Indices | NDVI, species-specific indices [13] [3] | Vegetation status assessment | Habitat condition monitoring, degradation detection |
| Change Detection Algorithms | LandTrendr, CCDC, Global Forest Change [3] | Temporal change identification | Habitat loss quantification, disturbance monitoring |

Integrated Framework for Conservation Decision-Making

The effective distinction between habitat loss, fragmentation per se, and edge effects enables more targeted conservation interventions. Habitat loss necessitates restoration or protection of remaining areas, while fragmentation per se can be addressed through connectivity enhancement such as wildlife corridors and stepping stone habitats [9]. Edge effects may be mitigated through buffer zone establishment and management strategies that reduce contrast between habitat patches and the surrounding matrix [9].

Remote sensing technologies are increasingly integral to these conservation solutions, providing the spatial data necessary to prioritize actions, monitor outcomes, and adapt strategies over time [3] [10]. The integration of multi-scale sensor data with machine learning classification and spatial analysis represents a transformative advancement in our capacity to understand, monitor, and mitigate the complex processes of habitat degradation across landscape scales.

The Critical Role of Earth Observation in Large-Scale Environmental Monitoring

Earth Observation (EO) has fundamentally transformed our capacity to monitor environmental changes across the globe. Satellite-based remote sensing provides an unparalleled vantage point for tracking phenomena from habitat fragmentation to climate impacts, offering objective, repeatable, and global-scale data that ground-based methods cannot achieve alone [14]. The launch of TIROS-1 in 1960 marked the beginning of meteorological satellite applications, but the true revolution began with the Landsat program in the 1970s, which established a long-term, operational EO program for managing natural resources [15]. Today, with constellations like Sentinel and PlanetScope providing high-resolution, frequent revisits, and cloud computing platforms like Google Earth Engine enabling planetary-scale analysis, EO has become an indispensable tool for researchers and conservationists tackling pressing environmental challenges [3].

This technological evolution is particularly critical for monitoring habitat fragmentation, a key driver of biodiversity loss. As human activities and climate change increasingly subdivide natural landscapes, EO provides the spatial and temporal continuity necessary to map these changes systematically, identify fragmentation hotspots, and guide conservation interventions [3]. This guide examines the current capabilities of EO systems, compares sensor and platform performance for specific environmental monitoring applications, and details the experimental protocols that enable researchers to convert satellite data into actionable ecological insights.

Sensor and Platform Capabilities Comparison

The effectiveness of EO for environmental monitoring depends on selecting appropriate sensors and platforms, each with distinct strengths in spatial, temporal, and spectral resolution. The following tables compare the core specifications of major satellite systems and their suitability for different monitoring tasks.

Table 1: Comparison of Current Earth Observation Satellite Sensors

| Satellite/Sensor | Spatial Resolution | Revisit Time | Key Spectral Bands | Primary Applications |
|---|---|---|---|---|
| Landsat 8 & 9 (OLI/TIRS) | 15 m (panchromatic), 30 m, 100 m (thermal) [15] | 16 days (8 days combined) [15] | 11 bands: Coastal aerosol, Visible, NIR, SWIR, Cirrus, Thermal [15] | Land cover change, vegetation health, surface temperature, long-term time series analysis [15] |
| Sentinel-2 (MSI) | 10 m, 20 m, 60 m [15] | 5 days (2-satellite constellation) [15] | 13 bands: Visible, Red Edge, NIR, SWIR [15] | Vegetation monitoring, habitat mapping, agricultural assessment, change detection [15] |
| PlanetScope | ~3 m [13] | Near-daily [13] | RGB, NIR [13] | High-detail local mapping, invasive species detection, site-specific monitoring [13] |
| Commercial Very High Resolution (e.g., Airbus, Maxar) | < 1 m - 0.3 m [16] | Varies by satellite/tasking | Panchromatic, Multispectral, SAR [16] | Infrastructure mapping, detailed habitat delineation, defense and intelligence [16] |

Table 2: Suitability of EO Platforms for Habitat Fragmentation and Biodiversity Monitoring

| Platform/Software | Primary Function | Key Strengths | Limitations | Ideal Use Case |
|---|---|---|---|---|
| Google Earth Engine | Cloud computing for geospatial analysis [3] | Massive data catalog, high-performance processing, pre-loaded algorithms (e.g., LandTrendr) [3] | Requires coding knowledge, can be complex for custom models | Large-scale, long-time series change detection and habitat loss analysis [3] |
| ENVI | Image analysis and geospatial insights [16] | Powerful analysis tools, support for diverse sensor types, AI/deep learning capabilities [16] | Cost, steep learning curve for beginners [16] | Detailed spectral analysis for habitat condition and degradation [16] |
| ArcGIS Pro | Full-suite GIS and remote sensing platform [16] | Integrates imagery with other spatial data, 2D/3D analysis, strong cartographic output | Can be overwhelming due to extensive features [16] | Mapping landscape patterns, calculating fragmentation metrics, and integrating field data [16] |
| QGIS | Open-source GIS [17] | Free, large community support, handles various geospatial formats [17] | Steep learning curve, limited automation, performance issues with large datasets [17] | Cost-effective landscape analysis and mapping for research teams with limited budgets [17] |

Experimental Protocols for Key Monitoring Applications

Converting raw satellite data into reliable ecological information requires rigorous methodologies. Below are detailed protocols for two critical applications: mapping invasive species and monitoring forest habitat fragmentation.

Detection and Monitoring of Invasive Plant Species

A 2025 study demonstrated a high-accuracy approach for detecting invasive goldenrods (Solidago spp.) in Poland's Kampinos National Park using multitemporal satellite imagery and machine learning [13].

Objective: To evaluate the performance of Random Forest (RF) and One-Class Support Vector Machine (OCSVM) classifiers for detecting Solidago spp. using Sentinel-2 and PlanetScope imagery [13].

Data Acquisition:

- Satellite Imagery: Multitemporal Sentinel-2 (10-60m resolution) and PlanetScope (~3m resolution) data, capturing the entire growing season from spring to late autumn [13].

- Ground Truthing: In-situ data identifying goldenrod patches for model training and validation [13]. Goldenrods form dense, tall monocultures, making them spectrally distinguishable [13].

Methodology:

- Image Processing: Atmospherically correct imagery and calculate a suite of vegetation indices (e.g., NDVI) [13].

- Feature Selection: Create 17 different classification scenarios incorporating spectral bands, vegetation indices, and temporal statistics to identify the most predictive variables [13].

- Model Training: Train both RF and OCSVM models on the different feature sets. RF is robust against overfitting, while OCSVM is useful when reliable ground truth for other land cover classes is limited [13].

- Accuracy Assessment: Validate model outputs against held-back ground truth data using metrics like F1-score [13].
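For readers unfamiliar with the F1-score used in the accuracy assessment, the sketch below computes it from a binary confusion count. The labels are invented for illustration (1 = goldenrod, 0 = other) and do not come from the study; the function names are hypothetical.

```python
def confusion_counts(predicted, reference, positive=1):
    """Return (true positives, false positives, false negatives) for one class."""
    tp = fp = fn = 0
    for pred, ref in zip(predicted, reference):
        if pred == positive and ref == positive:
            tp += 1
        elif pred == positive:
            fp += 1
        elif ref == positive:
            fn += 1
    return tp, fp, fn


def f1_score(tp, fp, fn):
    """Harmonic mean of precision and recall; 0.0 when undefined."""
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    if precision + recall == 0:
        return 0.0
    return 2 * precision * recall / (precision + recall)


# Hypothetical held-back validation labels and classifier output.
reference = [1, 1, 1, 1, 0, 0, 0, 0, 1, 0]
predicted = [1, 1, 1, 0, 0, 0, 1, 0, 1, 0]

tp, fp, fn = confusion_counts(predicted, reference)
score = f1_score(tp, fp, fn)
print(f"TP={tp} FP={fp} FN={fn} F1={score:.2f}")
```

Because F1 ignores true negatives, it suits targets such as invasive species patches that occupy a small share of the mapped area.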

Key Findings:

- Random Forest Superiority: RF consistently outperformed OCSVM, achieving F1-scores up to 0.98 with Sentinel-2 data and 2-29% higher accuracy than OCSVM with PlanetScope imagery [13].

- Optimal Timing: Autumn imagery (October–November) yielded the most reliable detection due to the distinct phenological characteristics of goldenrods (e.g., persistent dry biomass) [13].

- Sensor Comparison: Sentinel-2, with its broader spectral range (including Red Edge bands), provided better accuracy for large-scale detection. PlanetScope's higher spatial resolution enhanced local detail but sometimes with lower classification accuracy [13].

- Feature Simplicity: The addition of complex vegetation indices did not necessarily improve classification accuracy beyond using the standard spectral bands [13].

Diagram: Workflow for invasive species detection using multitemporal satellite imagery and machine learning, based on the protocol from [13].

Monitoring Forest Habitat Fragmentation

Forest fragmentation involves the breaking apart of habitat into smaller, isolated patches, and is a primary driver of biodiversity decline [3]. EO enables the quantification of this process through landscape metrics.

Objective: To map forest habitat loss and quantify fragmentation patterns over time to inform conservation planning [3].

Data Acquisition:

- Primary Data Source: Time series of Landsat (30m) and/or Sentinel-2 (10m) imagery, available via open-access platforms like Google Earth Engine (GEE) [3].

- Temporal Scope: Multi-year archives (e.g., 1985-present) are used to establish baselines and track changes [3].

Methodology:

- Land Cover Classification: Use algorithms (e.g., Random Forest) on satellite imagery to create forest/non-forest maps for different time periods [3].

- Change Detection: Employ temporal segmentation algorithms like LandTrendr on GEE to identify disturbances (e.g., clear-cuts, fires) and recovery patterns from spectral trajectories [3].

- Metric Calculation: Input the forest cover maps into a landscape ecology toolkit (e.g., landscapemetrics in R) to calculate key fragmentation indices [3]:

  - Patch Size and Density: Measures the subdivision of the forest.

  - Edge Density: Quantifies the amount of boundary between forest and non-forest.

  - Core Area: Identifies interior forest habitat, away from edges.

  - Connectivity Indices: Assesses how easily species can move between patches.
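To make the patch and edge indices concrete, the following is a minimal Python sketch using NumPy and SciPy. It is a stand-in for illustration, not the landscapemetrics R package named above; the function name `fragmentation_metrics`, the 4-neighbour patch rule, and the toy 30 m raster are assumptions.

```python
import numpy as np
from scipy import ndimage

def fragmentation_metrics(forest, cell_size_m):
    """Patch and edge metrics for a binary forest (True) / non-forest raster."""
    forest = np.asarray(forest, dtype=bool)
    cell_area_ha = cell_size_m ** 2 / 10_000.0

    # Label contiguous forest patches (4-neighbour rule, the scipy default).
    labels, n_patches = ndimage.label(forest)
    patch_areas_ha = np.bincount(labels.ravel())[1:] * cell_area_ha

    # Count forest/non-forest transitions between adjacent cells.
    # Edges along the raster boundary are not counted in this sketch.
    horizontal = np.count_nonzero(forest[:, 1:] != forest[:, :-1])
    vertical = np.count_nonzero(forest[1:, :] != forest[:-1, :])
    edge_length_m = (horizontal + vertical) * cell_size_m

    landscape_area_ha = forest.size * cell_area_ha
    return {
        "n_patches": int(n_patches),
        "mean_patch_area_ha": float(patch_areas_ha.mean()) if n_patches else 0.0,
        "patch_density_per_100ha": n_patches / landscape_area_ha * 100.0,
        "edge_density_m_per_ha": edge_length_m / landscape_area_ha,
    }

# Toy 4 x 4 forest mask at 30 m resolution (hypothetical values).
forest_map = np.array([
    [1, 1, 0, 0],
    [1, 1, 0, 0],
    [0, 0, 0, 1],
    [0, 0, 0, 1],
])
metrics = fragmentation_metrics(forest_map, cell_size_m=30)
print(metrics)
```

Running the same function on forest masks from two dates gives a simple before/after comparison: rising patch density and edge density with falling mean patch area is the typical signature of fragmentation.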

Key Findings:

- EO is crucial for monitoring the amount and configuration of forest habitats, which are altered by both natural disturbances and management practices like clear-cutting or selective logging [3].

- Cloud computing platforms like GEE have democratized large-scale fragmentation analysis by providing access to vast data archives and high-performance computing [3].

- A key limitation is that satellite sensors cannot fully capture micro-spatial variations (e.g., understory conditions, deadwood volume) that are critical for many species. Therefore, integration with ground surveys is essential for comprehensive assessment [3].

Diagram: Standard workflow for monitoring forest habitat fragmentation using satellite imagery time series, as described in [3].

Table 3: Key Research Reagents and Tools for EO-based Environmental Monitoring

| Tool/Solution | Function | Relevance to Habitat Fragmentation Research |

|---|---|---|

| Google Earth Engine (GEE) | Cloud-based planetary-scale analysis platform [3] | Provides access to massive satellite archives (Landsat, Sentinel) and built-in algorithms for time-series analysis of forest cover change and disturbance [3]. |

| LandTrendr Algorithm | Temporal segmentation algorithm for change detection [3] | Identifies the timing and magnitude of forest disturbance and recovery events from spectral trajectories, crucial for tracking habitat loss [3]. |

| Global Forest Change Dataset | Global, annual maps of forest loss and gain [3] | Offers a readily available baseline data layer for quantifying forest cover change and initiating fragmentation studies at a global scale [3]. |

| Kili Technology | Enterprise geospatial annotation platform [17] | Enables precise labeling of satellite imagery (e.g., habitat types, features) to create high-quality training data for machine learning models [17]. |

| Landscape Metrics Software (e.g., FRAGSTATS) | Computes quantitative indices of landscape pattern [3] | Calculates key fragmentation metrics such as patch density, edge density, and connectivity from land cover maps derived from satellite data [3]. |

| Sentinel-2 MSI & Landsat OLI | Multispectral satellite sensors [15] | The workhorse sensors for land monitoring, providing free, analysis-ready data with optimal spectral and spatial resolution for habitat mapping [15]. |

Earth Observation has matured into a critical technology for large-scale environmental monitoring, providing the objective, repeatable, and global data needed to track habitat fragmentation and biodiversity loss [14] [3]. The synergistic use of satellite systems like Landsat and Sentinel-2, combined with powerful cloud analytics and machine learning, allows researchers to move from simply observing change to understanding and predicting ecological outcomes [13] [3].

The future of EO in ecology lies in multi-scale integration. This means seamlessly combining the broad-scale, continuous view from satellites with the fine-resolution detail from drones and the deep ecological context provided by ground surveys [3]. As satellite constellations grow and analysis methods become more sophisticated, EO will play an increasingly vital role in generating the evidence base needed for effective conservation policy and action, helping to mitigate the ongoing biodiversity crisis [18] [14].

Remote sensing technology provides critical data for assessing habitat fragmentation, a key issue in conservation biology. The choice of platform and sensor directly influences the accuracy, scale, and type of information that researchers can derive about landscape patterns and ecological changes. This guide objectively compares the performance of major remote sensing platforms—from satellite systems like Landsat and Sentinel to unmanned aerial vehicles (UAVs) equipped with LiDAR and photogrammetric sensors—within the context of habitat fragmentation research. Supporting experimental data and detailed methodologies are provided to inform researchers and scientists in selecting the appropriate tools for their specific applications.

Remote sensing platforms can be broadly categorized into satellites and UAVs, each with distinct operational parameters and data characteristics. The following table summarizes the key specifications of the platforms and sensors discussed in this guide.

Table 1: Comparison of Key Remote Sensing Platforms and Sensors

| Platform / Sensor | Spatial Resolution | Temporal Resolution | Key Data Products | Primary Applications in Habitat Assessment |

|---|---|---|---|---|

| Landsat 8 & 9 | 15-30 m (multispectral) [19] | 16 days [20] | Multispectral imagery, vegetation indices (e.g., NDVI) [21] | Broad-scale land cover change, deforestation tracking, long-term carbon storage monitoring [21] |

| Sentinel-2 | 10-60 m (multispectral) [19] | 5 days (combined constellation) | Multispectral imagery, high-resolution vegetation indices | Vegetation health assessment, detailed land cover classification, habitat mapping |

| UAV-based LiDAR | Variable (e.g., 140 pts/m² achievable) [22] | On-demand | 3D Point Clouds, Digital Terrain Models (DTMs), Canopy Height Models [23] [24] | Under-canopy terrain modeling, forest structure analysis, vertical habitat complexity [25] |

| UAV-based Photogrammetry | Centimeter-level (from imagery) | On-demand | 3D Point Clouds, Orthomosaics, Digital Surface Models (DSMs) [25] | High-resolution 2D/3D mapping, tree crown delineation, species classification in open canopies [22] |

| Radar | Meter to kilometer-scale | Days to weeks | Backscatter intensity, interferometric coherence | Forest biomass estimation, deforestation monitoring under cloud cover [23] |

| RF Sensors | N/A (signal-based) | Continuous | RF signal fingerprints, communication spectra | Detection of unauthorized UAV activity in sensitive habitats [23] |

| Acoustic Sensors | N/A (sound-based) | Continuous | Acoustic signatures | Biodiversity monitoring (e.g., bird, amphibian populations), UAV detection [23] |

Table 2: Quantitative Performance Comparison from Experimental Studies

| Experiment Focus | Platform/Sensor Combination | Key Performance Metric | Result | Source |

|---|---|---|---|---|

| Co-registration Accuracy | Landsat-8 (L8) vs. Sentinel-2 (S2) | Circular Error at 90% probability (CE90) | <6 meters with GRI*; >12 meters without GRI [19] | [19] |

| Temporal Co-registration | Landsat-8 vs. Landsat-9 (L9) | CE90 | <3 meters [19] | [19] |

| Tree Species Classification | UAV LiDAR (fused with hyperspectral) | Overall Accuracy | 95.98% [22] | [22] |

| Tree Species Classification | UAV Photogrammetry (fused with hyperspectral) | Overall Accuracy | ~95% (inferred from narrowed gap) [22] | [22] |

| Individual Tree Segmentation | UAV LiDAR | F-score | 0.83 [22] | [22] |

| Individual Tree Segmentation | UAV Photogrammetry | F-score | 0.79 [22] | [22] |

| Carbon Storage Estimation | Sentinel-2A (High-resolution reference) | Model Performance | Superior accuracy for dominant species [21] | [21] |

| Carbon Storage Estimation | Landsat 8 (Whole-forest, lower-resolution) | Model Performance | Effective for long-term, broad-scale trend analysis [21] | [21] |

*GRI: Global Reference Image

Detailed Experimental Protocols and Data

Satellite Image Co-registration Accuracy Assessment

Objective: To evaluate the geometric alignment accuracy between Landsat-8 and Sentinel-2 satellite products, which is crucial for multi-temporal analysis of habitat change [19].

Methodology:

- Data Collection: Utilize globally distributed tile sets from both Landsat-8 Collection-2 terrain-corrected (L1TP) products and Sentinel-2 L1C products processed with and without the Global Reference Image (GRI) [19].

- Image-to-Image (I2I) Analysis: Perform automated matching of corresponding ground control points between the satellite image pairs.

- Accuracy Calculation: Compute the Circular Error at 90% probability (CE90), which represents the radius within which 90% of the points between the two images align [19].
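As a rough illustration of the accuracy calculation, CE90 can be estimated empirically as the 90th percentile of the radial offsets between matched points. The sketch below assumes the offsets are already available in meters; the function name `ce90` and the sample offsets are hypothetical and do not reproduce the processing chain in [19].

```python
import numpy as np

def ce90(dx_m, dy_m):
    """Empirical CE90: 90th percentile of radial misregistration (meters)."""
    radial = np.hypot(np.asarray(dx_m, dtype=float),
                      np.asarray(dy_m, dtype=float))
    return float(np.percentile(radial, 90))

# Hypothetical easting/northing offsets between matched tie points.
dx = np.arange(1.0, 11.0)   # 1, 2, ..., 10 m
dy = np.zeros_like(dx)
error_90 = ce90(dx, dy)
print(f"CE90 = {error_90:.1f} m")
```

Because CE90 is a percentile of the radial error rather than a mean, a small number of badly matched points has limited influence on it.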

Key Findings: The use of the GRI in the Sentinel-2 processing chain significantly enhances co-registration accuracy with Landsat-8, reducing errors from over 12 meters to less than 6 meters CE90. This high level of alignment is essential for precisely tracking habitat boundary shifts over time [19].

Diagram: Satellite co-registration workflow.

UAV-based LiDAR vs. Photogrammetry for Tree Species Classification

Objective: To compare the performance of UAV-based LiDAR and UAV-based Digital Aerial Photogrammetry (DAP) in classifying individual tree species in an urban forest setting, a task relevant to understanding biodiversity in fragmented habitats [22].

Methodology:

- Data Acquisition:

  - LiDAR: Collect point cloud data using a system like the SZT-R250 on a drone (e.g., DJI 600 Pro). Achieve an average point density of ~140 points/m² [22].

  - Photogrammetry: Capture high-resolution, overlapping RGB images from multiple angles to generate a 3D point cloud using Structure from Motion (SfM) algorithms [25] [22].

- Data Pre-processing:

- Individual Tree Segmentation: Apply a segmentation algorithm (e.g., marked watershed algorithm) on the CHMs derived from both LiDAR and DAP to delineate individual tree crowns [22].

- Feature Extraction: For each segmented tree, extract features.

- Classification and Fusion: Use a machine learning classifier (e.g., Random Forest) to classify tree species using the extracted features. A subsequent step can fuse the UAV data with hyperspectral imagery to assess accuracy improvement [22].

- Accuracy Assessment: Compare the F-score for individual tree segmentation and the overall accuracy for species classification between the two methods [22].
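The segmentation step starts from a canopy height model (CHM), commonly computed as the digital surface model minus the digital terrain model, and marker-based watershed methods need seed points at likely treetops. The sketch below covers only those two preparatory pieces, not the watershed itself. The 3 x 3 local-maximum rule, the 2 m height threshold, and the function names are assumptions chosen for illustration, not parameters reported in [22].

```python
import numpy as np

def canopy_height_model(dsm, dtm):
    """CHM = surface elevation minus terrain elevation, floored at zero."""
    diff = np.asarray(dsm, dtype=float) - np.asarray(dtm, dtype=float)
    return np.clip(diff, 0.0, None)

def treetop_markers(chm, min_height_m=2.0):
    """Flag cells that are the maximum of their 3 x 3 neighbourhood."""
    rows, cols = chm.shape
    padded = np.pad(chm, 1, mode="constant", constant_values=-np.inf)
    # Neighbourhood maximum built from the nine shifted views of the raster.
    neighbourhood_max = np.max(
        [padded[r:r + rows, c:c + cols] for r in range(3) for c in range(3)],
        axis=0,
    )
    return (chm == neighbourhood_max) & (chm >= min_height_m)

# Hypothetical 5 x 5 scene: flat terrain at 100 m with two tree crowns.
dtm = np.full((5, 5), 100.0)
heights = np.zeros((5, 5))
heights[1, 1], heights[1, 2], heights[3, 3] = 10.0, 5.0, 8.0
chm = canopy_height_model(dtm + heights, dtm)
markers = treetop_markers(chm)
print(np.argwhere(markers))
```

In a full pipeline these markers would seed a watershed on the inverted CHM; flat crowns can produce several equal maxima, so real workflows usually smooth the CHM first.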

Key Findings: LiDAR slightly outperformed DAP in segmenting individual trees (F-score 0.83 vs. 0.79). However, for pixel-based species classification, DAP achieved higher initial accuracy (73.83% vs. 57.32%) due to its rich spectral-textural information. When both data types were fused with hyperspectral data, LiDAR achieved a very high individual tree classification accuracy of 95.98%, though the gap with DAP narrowed significantly, demonstrating the value of multi-sensor fusion [22].

Diagram: UAV tree classification workflow.

Forest Carbon Storage Estimation Using Multi-Resolution Imagery

Objective: To develop and compare methods for estimating forest carbon storage using high-resolution Sentinel-2A imagery and lower-resolution Landsat 8 imagery, linking to habitat quality assessment [21].

Methodology:

- Field Survey: Establish sample plots (e.g., 20m x 20m for trees) in the study area. Measure tree parameters (species, Diameter at Breast Height - DBH, height) and shrub metrics. Calculate plot carbon storage using allometric equations and carbon coefficients [21].

- Approach 1 (Traditional for Landsat 8):

  - Directly model the relationship between the field-measured carbon storage and vegetation indices (e.g., NDVI) derived from Landsat 8 imagery [21].

- Approach 2 (Reference-Based for Landsat 8):

  - High-Resolution Model: First, develop an optimal carbon estimation model for dominant species/types (e.g., Populus, Salix, Shrubs) using high-resolution Sentinel-2A imagery and machine learning models (Random Forest, Decision Tree, Multiple Linear Regression). This model serves as a reference [21].

  - Low-Resolution Application: Use the species-specific relationships established from the Sentinel-2A model to inform and improve the whole-forest carbon storage estimates from the historical Landsat 8 imagery archive [21].

- Model Comparison: Evaluate the accuracy of both approaches for the Landsat 8 data, comparing them against the reference model from Sentinel-2A [21].

Key Findings: Approach 2, which used Sentinel-2A estimates as a reference, yielded superior accuracy for whole-forest assessment with Landsat 8 imagery compared to the traditional direct modeling of Approach 1. This method enabled the calculation of historical carbon storage, demonstrating a carbon increase of 27 Mt (89%) in the Ordos Forest from 2013 to 2023, showcasing the feasibility of long-term carbon monitoring by aligning low- and high-resolution data [21].

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 3: Key Equipment and Software for Remote Sensing Experiments

| Item Name | Category | Function / Application | Example Models / Types |

|---|---|---|---|

| DJI Zenmuse L2 | UAV LiDAR Sensor | Integrated LiDAR, IMU, and camera for high-accuracy 3D mapping and point cloud generation on a UAV platform [24]. | Mechanical LiDAR [24] |

| Solid-State LiDAR | UAV LiDAR Sensor | Compact, durable, and cost-effective LiDAR for basic topographic mapping and obstacle detection; uses electronic beam steering [24]. | Various emerging models |

| Multispectral Sensor | UAV/Satellite Sensor | Captures image data at specific wavelengths beyond visible light for calculating vegetation indices (e.g., NDVI) and assessing plant health [21]. | Sensors on Sentinel-2, Landsat 8/9 |

| Hyperspectral Sensor | UAV/Satellite Sensor | Captures imagery across hundreds of narrow spectral bands, enabling detailed material and species identification through unique spectral signatures [22]. | Sensors used in fusion studies [22] |

| GNSS Receiver | Positioning System | Provides precise geographic coordinates for ground control points (GCPs) and direct georeferencing of UAV-collected data [22]. | |

| Inertial Measurement Unit (IMU) | Positioning System | Measures the platform's orientation (roll, pitch, yaw) in real-time, critical for correcting LiDAR and photogrammetric data [22] [24]. | |

| LiDAR360 / Similar Software | Data Processing Software | Processes raw LiDAR point clouds; used for denoising, classification (ground/non-ground), and generating DEMs/DTMs [22] [24]. | LiDAR360 [22] [24] |

| Structure from Motion (SfM) Software | Data Processing Software | Processes overlapping 2D images from drones to generate 3D point clouds, orthomosaics, and surface models [25] [22]. | |

| Random Forest Classifier | Analysis Algorithm | A machine learning algorithm used for classifying land cover, tree species, or other features based on remote sensing data [21] [22]. | |

Remote Sensing Techniques and Workflows for Quantifying Landscape Fragmentation

Land Cover Classification and Change Detection Algorithms for Fragmentation Mapping

Habitat fragmentation, the process by which large, continuous habitats are subdivided into smaller, isolated patches, is a primary driver of global biodiversity loss [3]. Accurate assessment of this fragmentation requires precise land cover classification and change detection to monitor landscape alterations over time. Remote sensing provides the fundamental data source for these analyses, with machine learning algorithms serving as critical tools for transforming satellite imagery into actionable information about landscape patterns [26] [3]. The choice of classification algorithm directly impacts the accuracy of fragmentation metrics, influencing conservation decisions and habitat management strategies. This guide provides a comparative analysis of current classification methodologies, their performance characteristics, and implementation protocols to support researchers in selecting appropriate techniques for fragmentation mapping.

Comparative Performance of Classification Algorithms

Quantitative Accuracy Assessment

Classification algorithms demonstrate varying performance characteristics across different landscapes and sensor configurations. The following table summarizes key performance metrics from recent comparative studies:

Table 1: Performance comparison of land cover classification algorithms

| Algorithm | Overall Accuracy Range | Kappa Coefficient Range | Relative Performance | Optimal Use Cases |

|---|---|---|---|---|

| Random Forest (RF) | 87%-94% [27] [28] | 0.83-0.84 [29] [27] | Excellent | Complex agricultural landscapes, heterogeneous regions [29] [27] |

| Support Vector Machine (SVM) | 87%-92% [28] | 0.80-0.67 (2015-2020) [29] | Very Good | High-dimensional data, limited training samples [27] [30] |

| Maximum Likelihood (ML) | 66%-82% [29] [28] | 0.57-0.77 [29] | Good | Homogeneous landscapes with normal data distribution [29] |

| Deep Learning (U-Net) | 41% (complex habitats) [31] | Not reported | Variable | High-resolution imagery with ample training data [31] |

| Geospatial Foundation Models (Clay v1.0) | 51% (complex habitats) [31] | Not reported | Promising | Multi-temporal analysis, transfer learning scenarios [31] |

Fragmentation Mapping Considerations

For habitat fragmentation assessment, classification accuracy directly influences the reliability of landscape pattern metrics. Random Forest consistently demonstrates robustness in handling the spectral heterogeneity typical of fragmented landscapes, generating more accurate representations of habitat patches and corridors [29] [28]. Studies indicate that RF maintains higher accuracy across different spatial resolutions (Landsat 30m, Sentinel-2 10m, Planet 3-5m), which is crucial for consistent fragmentation monitoring [28]. The algorithm's resistance to overfitting and ability to manage high-dimensional feature spaces make it particularly suitable for analyses incorporating multiple vegetation indices and topographic predictors [29] [30].

Experimental Protocols and Methodologies

Standardized Classification Workflow

Table 2: Essential methodological steps for comparative classifier evaluation

| Protocol Phase | Key Activities | Purpose & Rationale |

|---|---|---|

| Data Acquisition | Acquire multi-spectral imagery (Landsat, Sentinel-2); collect ground reference data; define area of interest | Ensure data consistency across comparisons; establish validation baseline [29] [28] |

| Pre-processing | Atmospheric correction; geometric registration; cloud masking; compute vegetation indices | Minimize non-land cover related spectral variation; enhance feature discrimination [27] |

| Training Data Preparation | Define LULC classes; select training samples with balanced distribution; split into training/validation sets | Control for training bias; enable statistically robust accuracy assessment [29] [30] |

| Classifier Implementation | Configure algorithm-specific parameters; execute classification; generate LULC maps | Standardize implementation conditions across tested algorithms [29] [28] |

| Accuracy Assessment | Calculate overall accuracy, Kappa coefficient; create error matrices; perform statistical testing | Quantify performance differences; determine significance of results [29] [30] |
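The accuracy assessment phase can be illustrated with a short Python sketch that derives overall accuracy and Cohen's Kappa from an error matrix. The function name and the two-class matrix are hypothetical; rows are taken to be reference classes and columns mapped classes.

```python
import numpy as np

def accuracy_from_error_matrix(matrix):
    """Return (overall accuracy, Cohen's Kappa) for a square error matrix."""
    m = np.asarray(matrix, dtype=float)
    total = m.sum()
    observed = np.trace(m) / total                       # agreement on diagonal
    # Chance agreement from the row and column marginals.
    expected = np.sum(m.sum(axis=0) * m.sum(axis=1)) / total ** 2
    kappa = (observed - expected) / (1.0 - expected)
    return float(observed), float(kappa)

# Hypothetical forest / non-forest error matrix from 100 validation points.
error_matrix = [[45, 5],
                [10, 40]]
overall_accuracy, kappa = accuracy_from_error_matrix(error_matrix)
print(f"OA = {overall_accuracy:.2f}, Kappa = {kappa:.2f}")
```

Kappa discounts the agreement expected by chance, which is why a classifier can show a respectable overall accuracy and a noticeably lower Kappa when one class dominates the landscape.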

Algorithm-Specific Configuration

Random Forest implementation requires parameterization of the number of trees (ntree) and variables per split (mtry). Studies demonstrating high accuracy typically utilize 100-500 trees, with mtry set to approximately the square root of the total number of input features [29] [30]. Support Vector Machine performance depends heavily on kernel selection and parameter tuning; the Radial Basis Function (RBF) kernel often outperforms linear alternatives for complex landscapes, though it requires careful optimization of the cost (C) and gamma (γ) parameters [30] [28]. Maximum Likelihood classification assumes normal distribution of training data and requires sufficient samples for each class to accurately estimate covariance matrices [29].
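A minimal scikit-learn sketch of these settings follows. In scikit-learn the R-style `ntree` and `mtry` correspond to `n_estimators` and `max_features`. The synthetic two-class feature table, the choice of 300 trees, and the values `C=10.0` and `gamma="scale"` are illustrative assumptions, not the configurations used in [29] or [30]; in practice C and gamma would be tuned by cross-validation.

```python
import numpy as np
from sklearn.ensemble import RandomForestClassifier
from sklearn.model_selection import train_test_split
from sklearn.svm import SVC

# Synthetic stand-in for per-pixel features (bands, indices): two classes.
rng = np.random.default_rng(0)
n_per_class, n_features = 200, 9
forest = rng.normal(loc=0.0, scale=1.0, size=(n_per_class, n_features))
non_forest = rng.normal(loc=3.0, scale=1.0, size=(n_per_class, n_features))
X = np.vstack([forest, non_forest])
y = np.repeat([1, 0], n_per_class)

X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.3, random_state=0, stratify=y
)

# Random Forest: 300 trees, sqrt(number of features) candidates per split.
rf = RandomForestClassifier(n_estimators=300, max_features="sqrt", random_state=0)
rf.fit(X_train, y_train)

# SVM with an RBF kernel; C and gamma are the parameters to optimize.
svm = SVC(kernel="rbf", C=10.0, gamma="scale")
svm.fit(X_train, y_train)

rf_accuracy = rf.score(X_test, y_test)
svm_accuracy = svm.score(X_test, y_test)
print(f"RF: {rf_accuracy:.3f}  SVM: {svm_accuracy:.3f}")
```

Both classifiers separate this easy synthetic data almost perfectly, so the example shows only how the parameters are set, not how the algorithms rank on real imagery.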

Figure 1: Standardized workflow for land cover classification and fragmentation assessment

Advanced Architectures for Change Detection

Paradigm Comparison: Post-Classification vs. Direct Change Detection

Two primary approaches dominate change detection for fragmentation monitoring: post-classification comparison (independent classification of multi-temporal images followed by comparison) and direct change detection (end-to-end models trained to identify changes directly from multi-temporal data) [31].

Table 3: Change detection paradigm comparison for habitat monitoring

| Approach | Methodology | Advantages | Limitations | Reported Performance |

|---|---|---|---|---|

| Post-Classification | Independent classification of time-series images; comparison of outputs | Flexible; enables detailed change trajectory analysis; can utilize any classifier | Error propagation from both classifications; sensitive to misregistration | Clay v1.0: 51% accuracy (complex habitats) [31] |

| Direct Change Detection | Specialized models (e.g., ChangeViT) process bi-temporal imagery to identify changes directly | Reduced error accumulation; inherently handles temporal dependencies | Limited change trajectory information; requires specialized training data | Binary change detection: 0.53 IoU [31] |
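The post-classification paradigm and the IoU metric reported for binary change detection can both be sketched briefly. The example below is a toy illustration with hypothetical 2 x 2 maps and function names: it differences two independently classified maps, then scores the resulting change mask against a reference mask using intersection over union.

```python
import numpy as np

def post_classification_change(map_t1, map_t2):
    """Flag cells whose class label differs between the two dates."""
    return np.asarray(map_t1) != np.asarray(map_t2)

def iou(predicted_change, reference_change):
    """Intersection over union of two boolean change masks."""
    p = np.asarray(predicted_change, dtype=bool)
    r = np.asarray(reference_change, dtype=bool)
    union = np.count_nonzero(p | r)
    # Two empty masks agree perfectly, so return 1.0 in that case.
    return np.count_nonzero(p & r) / union if union else 1.0

# Hypothetical forest (1) / non-forest (0) maps for two dates.
map_2015 = np.array([[1, 1], [0, 0]])
map_2020 = np.array([[1, 0], [0, 0]])
detected = post_classification_change(map_2015, map_2020)

# Hypothetical reference change mask from field or photo interpretation.
reference = np.array([[False, True], [True, False]])
change_iou = iou(detected, reference)
print(change_iou)
```

Because the change mask inherits the errors of both input maps, any misclassification at either date appears as false change, which is the error propagation noted in the table.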

Geospatial Foundation Models

Recent advances in geospatial foundation models (GFMs) like Prithvi-EO-2.0 and Clay v1.0 demonstrate promising transfer learning capabilities when pre-trained on massive satellite datasets and fine-tuned for specific habitat monitoring tasks [31]. These models show particular robustness in cross-temporal evaluation, with Clay maintaining 33% accuracy on 2020 data versus U-Net's 23% when trained on earlier temporal periods [31]. While overall accuracy values for complex habitat classification remain moderate (51%), this represents significant progress for fine-scale habitat differentiation in topographically complex environments like alpine ecosystems [31].

Figure 2: Architectural approaches to change detection for fragmentation monitoring

Table 4: Essential research reagents and computational platforms for fragmentation mapping

| Resource Category | Specific Tools | Function & Application |

|---|---|---|

| Cloud Computing Platforms | Google Earth Engine, SEPAL, OpenEO | Planetary-scale analysis; access to imagery archives; parallel processing capabilities [3] [28] |

| Desktop GIS Platforms | ArcGIS Pro, QGIS | Advanced spatial analysis; visualization; integration with field data [28] |

| Satellite Imagery Sources | Landsat (30m), Sentinel-2 (10m), Planet (3-5m) | Multi-resolution land cover mapping; change detection; vegetation monitoring [29] [28] |

| Auxiliary Data Products | LiDAR, terrain attributes, vegetation indices | Enhanced classification accuracy; 3D structure analysis; habitat quality assessment [31] |

| Specialized Algorithms | LandTrendr, Continuous Change Detection and Classification (CCDC) | Temporal segmentation; disturbance mapping; trend analysis [3] |

Data Fusion for Enhanced Accuracy

Integrating multi-sensor data significantly improves classification accuracy in complex habitats. Studies demonstrate that combining optical imagery (RGB, NIR) with LiDAR-derived height data and terrain attributes increases semantic segmentation accuracy from 30% to 50% in topographically complex environments [31]. This multimodal approach enables better discrimination of vegetation structure and habitat types, which is critical for accurate fragmentation assessment. For regional-scale water resource management, data fusion of Landsat 8 and Sentinel-2 imagery has successfully supported land cover classification with overall accuracy reaching 87-91% [27] [28].

Based on comprehensive performance evaluation, Random Forest emerges as the most robust classifier for habitat fragmentation applications, demonstrating consistently high accuracy across diverse landscapes and sensor configurations [29] [27] [28]. For change detection specifically, the optimal paradigm depends on monitoring objectives: post-classification approaches using RF or GFMs provide more detailed change trajectory information, while direct change detection excels at binary change identification with reduced error propagation [31]. The integration of multi-modal data (optical, LiDAR, terrain) significantly enhances classification accuracy in ecologically complex regions. Researchers should prioritize algorithm validation with representative training data specific to their study region and conservation targets, as classifier performance varies with landscape complexity and habitat characteristics.

For researchers and scientists monitoring habitat fragmentation, calculating landscape metrics is a fundamental process for quantifying spatial pattern changes. Metrics such as patch size, connectivity, and core area provide critical, reproducible data on the extent and ecological consequences of habitat subdivision [32] [3]. The breaking apart of habitats into smaller, isolated patches directly impacts biodiversity by reducing habitat area, increasing deleterious edge effects, and isolating populations [3]. Within the framework of remote sensing research, these metrics transform raw classified imagery—derived from sources like Landsat and Sentinel-2—into actionable, quantitative insights about landscape degradation [33] [3]. This guide objectively compares the leading software and platforms for calculating these essential metrics, providing a foundational resource for environmental scientists and conservation planners.

Comparative Analysis of Landscape Metric Tools

The selection of an appropriate software platform is a critical first step in landscape metric analysis. The tools available range from long-established, specialized programs to modern, flexible coding packages. The following section provides a data-driven comparison of the primary tools used in the field.

Table 1: Key Software for Calculating Landscape Metrics

| Software/ Package | Primary Type | Key Strengths | Notable Metrics & Functions | Integration & Data Sources |

|---|---|---|---|---|

| FRAGSTATS [32] [33] | Standalone GUI Software | Industry standard; vast array of metrics; well-documented. | Area-Edge: AREA, PLAND, ED. Core Area: CORE. Aggregation: LPI, LSI, COHESION. Diversity: SIDI. | Imports classified raster grids (e.g., GeoTIFF); direct use of remote sensing classification outputs. |

| Makurhini (R Package) [34] | R Programming Package | Focus on connectivity & fragmentation; scenario evaluation. | Fragmentation: Effective Mesh Size (MESH). Connectivity: PC, IIC, dPC, ProtConn, ECA. | Uses vector (node-based) or raster data; integrates with sf, raster, terra; considers landscape heterogeneity for connectivity. |

| QGIS with Plugins [33] | Desktop GIS with Extensions | Open-source; pre-processing of imagery; visualization of results. | Core GIS functions for area/perimeter; plugins for basic metrics; essential pre-processing (e.g., sieving). | Central hub for remote sensing data; used for visualization and filtering (e.g., Sieve function) before analysis in other tools. |

Supporting Experimental Data and Performance Considerations

A critical consideration in tool selection and result interpretation is the impact of error. Map misclassification in input data can cause large and variable errors in the resulting landscape pattern indices (LPIs) [35]. One study found that even maps with low overall misclassification rates could yield errors in LPIs of much larger magnitude and with substantial variability. Furthermore, common post-processing techniques like smoothing to reduce "salt-and-pepper" noise can sometimes increase LPI error or even reverse the direction of the error, potentially leading to an underestimation of habitat fragmentation [35]. This underscores the need for rigorous accuracy assessment of input land cover classifications.

Essential Landscape Metrics and Their Ecological Interpretation

Landscape metrics quantify specific aspects of spatial pattern. For habitat fragmentation research, they can be grouped by the structural characteristic they measure.

Table 2: Core Metrics for Habitat Fragmentation Assessment

| Metric Category | Specific Metrics | Ecological Interpretation & Application |

|---|---|---|

| Area and Edge Metrics [32] | Patch Area (AREA), Percentage of Landscape (PLAND), Edge Density (ED) | PLAND measures habitat amount, a primary driver of species occurrence. ED quantifies total edge length per unit area, crucial for studying edge effects, which can alter microclimate and benefit or harm species depending on their affinity for edge habitats. |

| Core Area Metrics [32] | Core Area (CORE) | Delineates the interior area of a patch after excluding a buffer from the edge. Vital for assessing habitat quality for "forest-interior" species that are adversely affected by edge conditions (e.g., increased predation or parasitism). |

| Connectivity & Aggregation Metrics [32] [34] | Largest Patch Index (LPI), Patch Cohesion Index (COHESION), Probability of Connectivity (PC) | LPI quantifies the dominance of the largest patch. COHESION measures the physical connectedness of a patch type. PC is an advanced connectivity index that considers the amount of habitat and its connection via dispersal paths of specific lengths. |
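For readers who want to see how two of these indices are built, the sketch below computes effective mesh size and the Probability of Connectivity in Python. It is not the Makurhini or FRAGSTATS API. It uses the standard definitions MESH = sum(a_i^2) / A_L and PC = sum_i sum_j (a_i * a_j * p*_ij) / A_L^2, where p*_ij is the maximum product probability over all paths between patches i and j. The negative exponential dispersal kernel (probability 0.5 at the median dispersal distance), the function names, and the three-patch example are assumptions.

```python
import numpy as np

def effective_mesh_size(patch_areas, landscape_area):
    """MESH: sum of squared patch areas divided by total landscape area."""
    a = np.asarray(patch_areas, dtype=float)
    return float(np.sum(a ** 2) / landscape_area)

def probability_of_connectivity(patch_areas, distances, median_dispersal,
                                landscape_area):
    """PC index with an exponential kernel and maximum product paths."""
    a = np.asarray(patch_areas, dtype=float)
    d = np.asarray(distances, dtype=float)
    # Direct dispersal probability: 0.5 at the median dispersal distance.
    p = np.exp(-np.log(2.0) / median_dispersal * d)
    np.fill_diagonal(p, 1.0)
    # Floyd-Warshall style update: allow stepping stones via each patch.
    for via in range(len(a)):
        p = np.maximum(p, p[:, [via]] * p[[via], :])
    return float(np.sum(np.outer(a, a) * p) / landscape_area ** 2)

# Hypothetical patches (ha) and effective inter-patch distances (m).
areas = [10.0, 5.0, 10.0]
dist = [[0.0, 100.0, 300.0],
        [100.0, 0.0, 100.0],
        [300.0, 100.0, 0.0]]
mesh = effective_mesh_size(areas, landscape_area=100.0)
pc = probability_of_connectivity(areas, dist, median_dispersal=100.0,
                                 landscape_area=100.0)
print(mesh, pc)
```

In this example the middle patch acts as a stepping stone: the direct probability between the two outer patches is 0.125, while the route through the middle patch gives 0.25, which is the value PC uses.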

Experimental Protocols for Metric Calculation

A standardized workflow ensures the reproducibility and reliability of landscape metric analysis. The following protocol outlines the key stages from data acquisition to final interpretation.

Detailed Methodological Workflow

The process of calculating landscape metrics follows a logical sequence from raw data to ecological insight, integrating multiple tools and validation steps.

Diagram Title: Workflow for Landscape Metric Analysis from Remote Sensing Data

Phase 1: Data Acquisition and Preparation. The process begins with acquiring cloud-free, analysis-ready satellite imagery from platforms like Landsat or Sentinel-2 [3]. This imagery is then classified into thematic land cover maps (e.g., forest/non-forest) using supervised or unsupervised methods in software like QGIS or on cloud platforms like Google Earth Engine. A critical and often overlooked step is rigorous accuracy assessment, where the classified map is validated against ground truth data to generate an error matrix [35]. This step is vital because, as previously noted, even low misclassification rates can propagate into large, unpredictable errors in the final landscape metrics.

Phase 2: Data Pre-processing. The raw classified raster often contains small, spurious patches resulting from misclassification. Applying a sieving filter, such as the Sieve function in QGIS, removes isolated pixel groups below a defined connectivity threshold (e.g., merging patches smaller than 20 connected pixels with the surrounding class) [33]. This reduces noise, but caution is required as the threshold must be set to avoid removing genuine, small habitat patches that may be ecologically relevant.
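The effect of a sieve threshold can be previewed with a simplified Python sketch. It is not the QGIS or GDAL implementation: it handles a binary habitat mask only and reassigns undersized habitat patches to the background, whereas the QGIS tool merges small patches of any class into a neighbouring class. The function name, the threshold, and the toy raster are assumptions.

```python
import numpy as np
from scipy import ndimage

def sieve_small_patches(habitat, min_pixels, eight_connected=False):
    """Remove habitat patches smaller than min_pixels from a boolean mask."""
    habitat = np.asarray(habitat, dtype=bool)
    # Default scipy structure is 4-connectivity; a 3 x 3 block of ones gives 8.
    structure = np.ones((3, 3), dtype=int) if eight_connected else None
    labels, _ = ndimage.label(habitat, structure=structure)
    sizes = np.bincount(labels.ravel())
    keep = sizes >= min_pixels
    keep[0] = False  # label 0 is the non-habitat background
    return keep[labels]

# Toy mask: one 2 x 2 patch plus one isolated, likely spurious, pixel.
raw = np.zeros((5, 5), dtype=bool)
raw[0:2, 0:2] = True
raw[4, 4] = True
sieved = sieve_small_patches(raw, min_pixels=2)
print(int(raw.sum()), "->", int(sieved.sum()))
```

Comparing patch counts before and after sieving at several thresholds is a quick way to check whether the chosen value is removing noise or genuine small patches.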

Phase 3: Metric Calculation and Analysis. The pre-processed habitat map is imported into specialized software like FRAGSTATS or R (using the Makurhini package). The researcher must then select metrics aligned with their ecological questions, as defined in Table 2. The analysis is typically run at the patch, class (e.g., the forest class), and landscape levels to provide a multi-scale perspective [33].

Phase 4: Interpretation and Application. The final phase involves statistically analyzing the results and interpreting them in an ecological context. For example, a high Edge Density (ED) and low mean core area may indicate significant fragmentation and a lack of interior habitat for sensitive species [32]. These findings can directly inform conservation actions, such as prioritizing specific patches for protection or planning habitat corridors to improve connectivity [34].
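The interpretation above can be checked on a toy example. The sketch below compares two hypothetical landscapes with identical forest area, one compact and one scattered, and computes edge density and core area for each. The assumptions are labeled in the comments: 100 m cells, an edge depth of one cell, and the landscape boundary counted as edge.

```python
def edge_density_and_core(grid, target, cell_size_m, edge_depth_cells=1):
    """Return (edge density in m/ha, core area in ha) for class `target`.

    Assumptions: cells outside the grid count as non-target, and a cell is
    "core" only if every cell within `edge_depth_cells` (square window) is target.
    """
    rows, cols = len(grid), len(grid[0])

    def is_target(y, x):
        return 0 <= y < rows and 0 <= x < cols and grid[y][x] == target

    edge_segments = 0
    core_cells = 0
    d = edge_depth_cells
    for r in range(rows):
        for c in range(cols):
            if grid[r][c] != target:
                continue
            # Each cell side that borders a non-target cell is one edge segment.
            for dy, dx in ((1, 0), (-1, 0), (0, 1), (0, -1)):
                if not is_target(r + dy, c + dx):
                    edge_segments += 1
            if all(is_target(r + dy, c + dx)
                   for dy in range(-d, d + 1) for dx in range(-d, d + 1)):
                core_cells += 1

    landscape_ha = rows * cols * cell_size_m ** 2 / 10_000
    edge_density = edge_segments * cell_size_m / landscape_ha
    core_ha = core_cells * cell_size_m ** 2 / 10_000
    return edge_density, core_ha


# Two hypothetical 6 x 6 landscapes, each with 16 forest cells of 100 m.
compact = [[1 if 1 <= r <= 4 and 1 <= c <= 4 else 0 for c in range(6)]
           for r in range(6)]
scattered = [[1 if r in (0, 1, 4, 5) and c in (0, 1, 4, 5) else 0 for c in range(6)]
             for r in range(6)]

ed_compact, core_compact = edge_density_and_core(compact, 1, cell_size_m=100)
ed_scattered, core_scattered = edge_density_and_core(scattered, 1, cell_size_m=100)
print(f"compact:   ED = {ed_compact:.1f} m/ha, core = {core_compact:.1f} ha")
print(f"scattered: ED = {ed_scattered:.1f} m/ha, core = {core_scattered:.1f} ha")
```

With the same amount of forest, the scattered layout has double the edge density and no core area at all, which is the pattern the metrics are meant to flag.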

This section catalogs the essential "research reagents"—the core datasets, software, and platforms required to conduct a landscape metrics analysis for habitat fragmentation studies.

Table 3: Essential Research Reagents for Landscape Metric Analysis

| Category | Item/Resource | Description & Function in Research |
|---|---|---|
| Primary Data Sources | Landsat & Sentinel-2 Imagery | Provides multi-spectral, analysis-ready satellite data at medium resolution (10 m to 30 m). The foundational data layer for land cover classification and change detection [3]. |
| Analysis Software | FRAGSTATS 4.2 | The benchmark software for computing a wide suite of landscape metrics from classified raster data. It is the most comprehensive and widely cited tool in the field [32] [33]. |
| Analysis Package | Makurhini R Package | A specialized R package for calculating advanced connectivity (PC, IIC) and fragmentation indices. Enables scenario evaluation and integrates landscape heterogeneity into connectivity models [34]. |
| Pre-processing & Visualization | QGIS Desktop GIS | Open-source Geographic Information System used for visualizing original and classified imagery, pre-processing data (e.g., sieving), and creating publication-quality maps [33]. |
| Computing Platform | Google Earth Engine (GEE) | A cloud-computing platform for planetary-scale geospatial analysis. Allows access to massive satellite data catalogs and enables large-scale land cover classification and change detection without local computing limits [3]. |
| Reference Material | FRAGSTATS Documentation | The comprehensive user manual and metric guide by McGarigal (2015). It is indispensable for correctly interpreting the range, meaning, and calculation of each metric [33]. |

The objective comparison of tools for calculating landscape metrics reveals a complementary ecosystem of software. FRAGSTATS remains the undisputed standard for comprehensive, metric-rich analysis of raster-based patterns. In contrast, the Makurhini R package offers specialized, advanced capabilities for functional connectivity assessment, which is critical for understanding the implications of fragmentation for species movement. The choice between them is not mutually exclusive; a robust research workflow often integrates QGIS for pre-processing, FRAGSTATS for core pattern analysis, and Makurhini for in-depth connectivity modeling. Ultimately, the most critical factor underlying all analyses is the quality of the input land cover classification, as errors at this stage propagate non-linearly into the final metrics, potentially compromising the ecological conclusions [35]. Researchers must therefore pair sophisticated metric analysis with rigorous remote sensing and validation protocols to ensure their findings accurately reflect on-the-ground habitat conditions.

Habitat loss and fragmentation, recognized as key drivers of the global biodiversity crisis, transform contiguous forests into smaller, less connected fragments, compromising ecosystem services and species interactions [3]. Remote sensing has emerged as a crucial tool for large-scale monitoring of these changes, particularly with new satellite missions providing high-resolution open-access data and cloud computing platforms enabling planetary-scale analysis [3]. This case study examines forest fragmentation in Bavaria, Germany's largest federal state, utilizing modern earth observation data to quantify fragmentation patterns and their ecological implications. The analysis demonstrates how remote sensing techniques can provide critical baseline data for conservation planning and fragmentation assessment in temperate forest ecosystems.

Methodological Frameworks for Fragmentation Analysis

Conceptual Foundations: Fragmentation Versus Forest Loss

Forest fragmentation is a distinct process from forest loss, and the two have different ecological consequences. Fragmentation occurs when forests are divided into more numerous and disconnected patches, potentially without reducing total forest area (a scenario termed 'fragmentation per se'), while forest loss involves an actual reduction in forested area [36]. The distinction matters because a landscape that retains its total habitat area despite fragmentation can still support wildlife and ecosystem functioning [36]. Several spatial processes drive landscape fragmentation, including:

- Perforation: Creating holes or gaps in the original land cover

- Dissection: Subdividing land cover with linear features of roughly equal width, such as roads

- Subdivision: Splitting large forest areas into smaller ones

- Shrinkage: Gradual reduction in patch size

- Attrition: Final disappearance of patches [37]

Analytical Approach for the Bavarian Case Study

The Bavarian fragmentation analysis employed a comprehensive methodology based on earth observation data [36] [38]:

- Data Source: A forest mask derived from September 2024 satellite imagery

- Spatial Units: 83,253 forest polygons ≥0.1 hectares analyzed

- Fragmentation Metrics: 22 distinct metrics calculated to quantify fragmentation patterns

- Spatial Aggregation: Results aggregated within administrative units and across topographic gradients (elevation and aspect)

- Classification System: Forest patches categorized into five size classes (XS, S, M, L, XL)

- Edge Effects: Edge zones defined as transitional regions up to 100 meters interior to forest perimeters

- Statistical Analysis: K-means clustering applied to identify distinct fragmentation patterns across districts
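The clustering step listed above can be sketched as follows. This is a minimal K-means implementation under stated assumptions, not the study's actual code: the six districts and their three metrics are invented, features are z-standardized first, and a deterministic farthest-first initialization stands in for the random or k-means++ seeding that library implementations typically use.

```python
def standardize(rows):
    """Z-score each column so metrics on different scales weigh equally."""
    columns = list(zip(*rows))
    means = [sum(col) / len(col) for col in columns]
    sds = [(sum((v - m) ** 2 for v in col) / len(col)) ** 0.5 or 1.0
           for col, m in zip(columns, means)]
    return [[(v - m) / s for v, m, s in zip(row, means, sds)] for row in rows]


def sq_dist(a, b):
    return sum((x - y) ** 2 for x, y in zip(a, b))


def kmeans(points, k, max_iter=100):
    """Lloyd's algorithm with farthest-first initialization (an assumption)."""
    centroids = [list(points[0])]
    while len(centroids) < k:
        # Next seed: the point farthest from all seeds chosen so far.
        centroids.append(list(max(
            points, key=lambda p: min(sq_dist(p, c) for c in centroids))))
    labels = None
    for _ in range(max_iter):
        new_labels = [min(range(k), key=lambda j: sq_dist(p, centroids[j]))
                      for p in points]
        if new_labels == labels:
            break  # assignments stable: converged
        labels = new_labels
        for j in range(k):
            members = [p for p, lab in zip(points, labels) if lab == j]
            if members:
                centroids[j] = [sum(col) / len(members) for col in zip(*members)]
    return labels, centroids


# Hypothetical districts: (patches per 100 ha, edge share in %, mean patch area in ha).
district_names = ["D1", "D2", "D3", "D4", "D5", "D6"]
district_metrics = [
    (12.0, 85.0, 8.0),
    (11.0, 82.0, 9.5),
    (13.5, 88.0, 7.0),
    (3.0, 55.0, 60.0),
    (2.5, 50.0, 75.0),
    (4.0, 58.0, 52.0),
]
labels, centroids = kmeans(standardize(district_metrics), k=2)
for name, label in zip(district_names, labels):
    print(name, "-> cluster", label)
```

Standardizing before clustering is the main design choice here: without it, a metric measured in hectares would dominate one measured as a ratio.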

Table 1: Key Methodological Components for Fragmentation Assessment

| Component | Specification | Application in Bavarian Study |
|---|---|---|
| Base Data | Forest mask from satellite imagery | September 2024 data coverage |
| Spatial Units | Individual forest polygons | 83,253 polygons ≥0.1 hectares analyzed |
| Analytical Metrics | Landscape pattern indices | 22 fragmentation metrics calculated |
| Topographic Analysis | Elevation and aspect parameters | Distribution across elevational zones and slope orientations |
| Statistical Approach | Cluster analysis | K-means clustering of administrative districts |

Experimental Protocols and Workflow

The assessment of forest fragmentation follows a structured workflow from data acquisition to the interpretation of ecological patterns. The following diagram visualizes this methodological sequence, from initial data collection through the key analytical steps to the final clustering of results.

Data Acquisition and Pre-processing

The Bavarian study utilized a forest mask derived from September 2024 earth observation data, identifying 2.384 million hectares of forest across the state [36] [38]. This foundational dataset was processed to distinguish forest from non-forest areas, creating a binary classification that enabled subsequent spatial analysis. The processing likely involved cloud computing platforms such as Google Earth Engine, which combines a catalog of satellite imagery with planetary-scale analysis capabilities and is particularly suitable for large-scale habitat fragmentation monitoring [3].

Fragmentation Metric Calculation

The analysis computed 22 distinct metrics to quantify various aspects of fragmentation patterns [36]. These metrics typically include measurements of:

- Patch density and size distribution: Number of patches per unit area and their size characteristics

- Edge effects: Perimeter length and edge area calculations

- Core area: Interior forest area beyond specified edge distances

- Spatial configuration: Isolation, connectivity, and aggregation indices

- Shape complexity: Measurement of patch shape irregularity

These metrics were aggregated within administrative boundaries and topographic units to enable systematic comparison across the region.
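As an illustration of the shape complexity and aggregation steps, the sketch below computes a shape index for vector polygons and averages it per administrative unit. The formula used, perimeter divided by the circumference of a circle with the same area, is one common vector formulation and is an assumption here; raster tools typically standardize against a square instead, and the source does not state which variant the study applied. District names and polygon coordinates are hypothetical.

```python
import math


def polygon_area_perimeter(vertices):
    """Area (shoelace formula) and perimeter of a simple polygon in meters."""
    twice_area = 0.0
    perimeter = 0.0
    n = len(vertices)
    for i in range(n):
        x1, y1 = vertices[i]
        x2, y2 = vertices[(i + 1) % n]  # wrap around to close the ring
        twice_area += x1 * y2 - x2 * y1
        perimeter += math.hypot(x2 - x1, y2 - y1)
    return abs(twice_area) / 2.0, perimeter


def shape_index(vertices):
    """1.0 for a circle; larger values indicate more irregular or elongated patches."""
    area, perimeter = polygon_area_perimeter(vertices)
    return perimeter / (2.0 * math.sqrt(math.pi * area))


# Two hypothetical 4 ha patches: a square and a narrow strip.
square = [(0, 0), (200, 0), (200, 200), (0, 200)]
strip = [(0, 0), (1000, 0), (1000, 40), (0, 40)]

patches = [
    ("district_A", square),
    ("district_A", strip),
    ("district_B", square),
]

# Aggregate the patch-level index to a mean per administrative unit.
values_by_district = {}
for district, polygon in patches:
    values_by_district.setdefault(district, []).append(shape_index(polygon))
mean_shape = {d: sum(v) / len(v) for d, v in values_by_district.items()}

for district, value in sorted(mean_shape.items()):
    print(district, round(value, 3))
```

Both example patches cover the same area, so the difference in the index comes from configuration alone.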

Topographic and Spatial Analysis

The distribution of forest patches was analyzed with respect to elevation and aspect orientation to identify topographic patterns in fragmentation [36] [38]. This involved:

- Elevational zoning: Categorizing forest distribution across 200-meter elevation intervals

- Aspect analysis: Examining forest cover across north, south, east, and west-facing slopes

- Spatial clustering: Applying K-means clustering to identify districts with similar fragmentation characteristics
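The zoning steps in this list can be sketched in a few lines. The code assigns each sample cell to a 200-meter elevation band and to one of four aspect classes, then computes forest share per group. The sample cells are invented, and the 90-degree aspect sectors centered on the cardinal directions are an assumption, since the source does not specify the exact sector boundaries.

```python
def elevation_zone(elev_m, width_m=200):
    """Label the elevation band containing elev_m, e.g. 450 -> '400-600 m'."""
    lower = int(elev_m // width_m) * width_m
    return f"{lower}-{lower + width_m} m"


def aspect_class(aspect_deg):
    """Map compass aspect (0 = north, clockwise) to north/east/south/west."""
    a = aspect_deg % 360
    if a >= 315 or a < 45:
        return "north"
    if a < 135:
        return "east"
    if a < 225:
        return "south"
    return "west"


def forest_share_by_group(cells, key):
    """Fraction of forested cells within each group returned by key(cell)."""
    totals, forested = {}, {}
    for cell in cells:
        group = key(cell)
        totals[group] = totals.get(group, 0) + 1
        forested[group] = forested.get(group, 0) + (1 if cell["forest"] else 0)
    return {group: forested[group] / totals[group] for group in totals}


# Hypothetical sample cells from a terrain model overlaid on a forest mask.
cells = [
    {"elev_m": 450, "aspect_deg": 10, "forest": False},
    {"elev_m": 520, "aspect_deg": 265, "forest": True},
    {"elev_m": 580, "aspect_deg": 100, "forest": False},
    {"elev_m": 1050, "aspect_deg": 280, "forest": True},
    {"elev_m": 1120, "aspect_deg": 350, "forest": True},
    {"elev_m": 1180, "aspect_deg": 95, "forest": False},
]

share_by_zone = forest_share_by_group(cells, lambda c: elevation_zone(c["elev_m"]))
share_by_aspect = forest_share_by_group(cells, lambda c: aspect_class(c["aspect_deg"]))
print(share_by_zone)
print(share_by_aspect)
```

Computing forest share within each group, instead of raw forest counts, accounts for the fact that some elevation bands and slope directions are simply more common in the terrain than others.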

Key Findings: Quantitative Assessment of Bavarian Forest Fragmentation

Patch Size Distribution and Edge Effects

The analysis revealed a forest landscape dominated by small fragments with extensive edge influence [36] [38]:

Table 2: Forest Fragmentation Metrics in Bavaria

| Fragmentation Parameter | Value | Ecological Significance |
|---|---|---|
| Total Forest Area | 2.384 million hectares | 34.1% of Bavaria's land surface |
| Number of Forest Patches | 83,253 polygons | High level of subdivision |
| XS Patches (<25 ha) Ratio | 13:1 (compared to all other size classes) | Extreme dominance of small fragments |
| Edge Zone Area | >1.68 million hectares | 70.5% of total forest area |
| Core Forest Area | <703,000 hectares | Only 29.5% of total forest area |
| Average Edge Depth | 100 meters | Standardized microclimatic buffer zone |

The remarkably high proportion of edge habitat (70.5% of total forest area) has significant ecological implications, as edge zones exhibit different microclimatic conditions, increased invasion by generalist species, and altered ecosystem processes compared to forest interiors [36]. The disproportionate number of small patches suggests most forest fragments contain little or no core area, potentially limiting habitat availability for forest-interior species.
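A short worked calculation shows why small patches contribute so little core area. The sketch first recomputes the edge and core shares from the reported totals, then estimates the core fraction of an idealized circular patch with a 100-meter edge depth. The circular shape is an assumption: it is the most compact form, so the result is roughly an upper bound, and real, irregular patches of the same size would retain less core.

```python
import math

# Reported totals for Bavaria (edge is a lower bound, core an upper bound).
total_forest_ha = 2_384_000
edge_ha = 1_680_000
core_ha = 703_000

edge_share = edge_ha / total_forest_ha
core_share = core_ha / total_forest_ha
print(f"edge share: {edge_share:.1%}, core share: {core_share:.1%}")


def circular_core_fraction(area_ha, edge_depth_m=100.0):
    """Core fraction of an idealized circular patch (assumed shape)."""
    radius_m = math.sqrt(area_ha * 10_000 / math.pi)
    core_radius_m = max(radius_m - edge_depth_m, 0.0)
    # Areas scale with the square of the radius, so the fraction is a ratio of squares.
    return (core_radius_m / radius_m) ** 2


# Smallest circular patch that has any core at all: radius equal to the edge depth.
min_area_with_core_ha = math.pi * 100.0 ** 2 / 10_000
print(f"no core below about {min_area_with_core_ha:.2f} ha")

for area_ha in (1, 3, 10, 25, 100):
    print(f"{area_ha:>4} ha patch -> core fraction {circular_core_fraction(area_ha):.1%}")
```

Under these assumptions, even a patch at the 25-hectare upper limit of the XS class keeps well under half of its area as core, and patches of about 3 hectares or less keep none.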

Topographic Patterns in Forest Distribution

The distribution of forest fragments across Bavaria showed distinct patterns related to topography [36] [38]:

Table 3: Forest Distribution by Topographic Parameters

| Topographic Factor | Forest Distribution Pattern | Notable Observations |
|---|---|---|
| Elevation | 0-200 m: Lowest forest cover; 400-600 m: ~30% forest cover; 1000-1200 m: >60% forest cover (maximum); 1400 m+: Declining cover | XL patches dominate higher elevations (600-1400 m) |
| Aspect Orientation | North-facing: Dominant slope direction; West-facing: Highest forest cover (~36%); East-facing: Lowest forest cover | Forest cover inversely related to slope abundance |
| Terrain Preference | Largest patches at higher elevations; small patches distributed across all elevations | XL patches correspond to protected areas |

The concentration of large forest patches at higher elevations likely reflects both historical conservation priorities and the lower suitability of these areas for agriculture and urban development. The preferential forest cover on west-facing slopes may result from microclimatic advantages, as these slopes receive afternoon sun at the warmest part of the day, potentially creating more favorable growing conditions [38].

Research Reagent Solutions and Analytical Tools

Table 4: Essential Materials and Platforms for Fragmentation Analysis

| Tool/Category | Specific Examples | Function in Fragmentation Research |
|---|---|---|
| Earth Observation Data | Sentinel-2, Landsat, PlanetScope, Pléiades Neo | Land cover classification, change detection, forest mask generation |
| Cloud Computing Platforms | Google Earth Engine, SEPAL, OpenEO | Planetary-scale analysis, time-series processing, data fusion |
| Fragmentation Algorithms | LandTrendr, CCDC, VCT, Verdet | Temporal segmentation, change detection, trajectory analysis |
| Spatial Analysis Tools | FRAGSTATS, Guidos Toolbox | Landscape metric calculation, pattern quantification |
| Validation Data | Airborne LiDAR, Field plots, High-resolution imagery | Accuracy assessment, structural parameter estimation |
| Topographic Data | Digital Elevation Models, Aspect maps | Terrain analysis, microclimatic modeling |

Cloud computing platforms, particularly Google Earth Engine, have revolutionized fragmentation monitoring by providing access to massive data archives and high-performance computing capabilities without requiring local infrastructure [3]. These platforms enable researchers to implement complex change detection algorithms like LandTrendr and Continuous Change Detection and Classification (CCDC) across large spatial extents [3].

Comparative Assessment: Remote Sensing Approaches for Fragmentation Monitoring

Methodological Comparisons Across Forest Ecosystems

The Bavarian case study exemplifies how modern earth observation data can quantify fragmentation patterns in temperate forests. Comparative studies from other regions highlight both consistent and divergent approaches:

In the Democratic Republic of Congo, researchers used fragmentation analysis to assess forest degradation, finding that canopy height and aboveground biomass were significantly reduced in forest edges compared to core areas [39]. This demonstrates the global applicability of fragmentation metrics as proxies for ecosystem condition.