Addressing the Unseen: A 2025 Roadmap for Inclusive Biodiversity Research and Its Impact on Biomedical Science

Abstract

This article explores the critical gaps in biodiversity research, focusing on the underrepresentation of specific geographical regions, taxonomic groups, and methodological approaches. Synthesizing the latest 2025 research and policy developments, it provides a comprehensive framework for researchers and drug development professionals to identify these biases, implement advanced and inclusive monitoring technologies, and validate findings through robust, comparative frameworks. The discussion highlights the direct implications of a more complete understanding of biodiversity for drug discovery, clinical trial design, and the development of treatments that are effective across diverse human populations.

Mapping the Blind Spots: Identifying Geographical, Taxonomic, and Conceptual Gaps in Biodiversity Science

Technical Support & Troubleshooting

This section provides practical solutions for researchers encountering issues related to geographical biases in their biodiversity data sets.

Troubleshooting Guide: Addressing Data Gap Biases in Biodiversity Analysis

Problem: Statistical models of biodiversity trends show significant bias, likely due to non-random gaps in spatial or temporal data.

- Impact: Model outputs, such as species population trends, may be unreliable and not representative of the true ecological pattern, potentially leading to misplaced conservation actions [1].

- Context: This problem frequently occurs when using large-scale aggregated data sets (e.g., from GBIF or BioTIME) or citizen science data, where sampling effort is often skewed toward easily accessible areas like regions near roads or urban centers, leaving remote areas under-sampled [2] [1].

| Problem Symptom | Likely Cause | What to Check |
|---|---|---|
| Model performance and parameter estimates change significantly when adding/removing data from well-sampled regions. | Spatial Sampling Bias: Factors affecting sampling effort (e.g., proximity to roads, population density) overlap with factors affecting species distribution [2] [1]. | Map your sampling locations against variables like road networks, human population density, and country GDP to identify spatial correlation [2] [1]. |
| Estimated species trends are inconsistent with other regional studies or expert knowledge. | Temporal (Annual) Gaps: Unplanned gaps in time series data, for instance due to a failure to retain surveyors or external events, can distort perceived trends [1]. | Check the completeness of time series across all sampling sites. Plot sampling effort (number of records) per year for the entire region of interest [1]. |
| Poor model predictive performance when projecting to new, under-sampled geographic regions. | Geographic & Economic Bias: Data availability is strongly linked to a country's wealth, with high-income countries having far more observations per hectare [2]. | Verify the geographic distribution of your source data. Check the proportion of data originating from high-income countries versus lower-income, high-biodiversity regions [2]. |
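To check for the temporal gaps described in the second row, a short script can tabulate sampling effort per year and list the years in which each site has no records. The sketch below is a minimal standard-library illustration; it assumes records are available as (site_id, year) pairs, and the function names `effort_by_year` and `missing_years_by_site` are hypothetical.

```python
from collections import Counter, defaultdict

# Hypothetical helper names; a sketch, not a published package.


def effort_by_year(records):
    """Number of records per year across the whole region (the effort curve to plot)."""
    return dict(sorted(Counter(year for _site, year in records).items()))


def missing_years_by_site(records, first_year, last_year):
    """Map each site with gaps to the sorted list of years in which it has no records."""
    years_by_site = defaultdict(set)
    for site, year in records:
        years_by_site[site].add(year)
    expected = set(range(first_year, last_year + 1))
    gaps = {}
    for site in sorted(years_by_site):
        missing = expected - years_by_site[site]
        if missing:
            gaps[site] = sorted(missing)
    return gaps


# Hypothetical example: site "A" was not surveyed in 2020.
records = [("A", 2018), ("A", 2019), ("A", 2021),
           ("B", 2018), ("B", 2019), ("B", 2020), ("B", 2021)]
print(effort_by_year(records))                     # {2018: 2, 2019: 2, 2020: 1, 2021: 2}
print(missing_years_by_site(records, 2018, 2021))  # {'A': [2020]}
```

Sites flagged by `missing_years_by_site` are the ones whose gaps could distort a trend estimate; sites that never appear in the records at all need to be listed separately.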

Resolution Steps

Quick Fix: Data Subsampling (Time: ~30 minutes)

- Objective: Reduce the influence of over-represented areas to create a more balanced dataset.

- Method: Rarefy (spatially thin) your occurrence data to a uniform grid. For example, overlay a map grid and randomly select a fixed maximum number of records per grid cell.

- Limitation: This approach discards usable data and may not fully address bias if the drivers of sampling and species occurrence are correlated [1].
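A minimal sketch of grid-based subsampling, assuming records are (longitude, latitude, species) tuples and, for simplicity, a grid defined in decimal degrees (with projected coordinates, for example a 10 km grid in metres, the same logic applies). The helper names are hypothetical, and the fixed random seed is there only to make the thinning reproducible.

```python
import math
import random
from collections import defaultdict

# Hypothetical helper names; a sketch, not a published package.


def grid_cell(lon, lat, cell_size_deg):
    """Integer (column, row) index of the grid cell that contains a point."""
    return (math.floor(lon / cell_size_deg), math.floor(lat / cell_size_deg))


def subsample_per_cell(records, cell_size_deg, max_per_cell, seed=0):
    """Keep at most `max_per_cell` randomly chosen records in every grid cell.

    `records` are (lon, lat, species) tuples; cells with fewer records are kept whole.
    """
    rng = random.Random(seed)  # fixed seed so the thinning is reproducible
    by_cell = defaultdict(list)
    for rec in records:
        by_cell[grid_cell(rec[0], rec[1], cell_size_deg)].append(rec)
    kept = []
    for cell in sorted(by_cell):
        cell_records = by_cell[cell]
        if len(cell_records) > max_per_cell:
            cell_records = rng.sample(cell_records, max_per_cell)
        kept.extend(cell_records)
    return kept


# Hypothetical example: 50 records near a town in one 1-degree cell, 2 in a remote cell.
records = [(0.1 + i * 0.01, 0.2, "sp1") for i in range(50)] + [(5.5, 5.5, "sp1"), (5.6, 5.4, "sp2")]
thinned = subsample_per_cell(records, cell_size_deg=1.0, max_per_cell=3)
print(len(thinned))  # 5: three from the busy cell, both remote records
```

The cap per cell is the only tuning parameter; lowering it evens out coverage further at the cost of discarding more data, which is the limitation noted above.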

Standard Resolution: Statistical Weighting (Time: 1-2 hours)

- Objective: Account for uneven sampling effort without discarding data.

- Method: Assign weights to your records based on a model of sampling probability.

- Model Sampling Probability: Create a model (e.g., a logistic regression) where the response is sampled/not-sampled across your study region. Use covariates like distance to roads, land cover, and human footprint index as predictors [1].

- Calculate Weights: The inverse of the predicted probability of sampling from this model can be used as a weight in subsequent analyses (e.g., occupancy or trend models) [1].

- Outcome: This can reduce both bias and variance in parameter estimates like species trends [1].
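The two weighting steps above can be sketched as follows. This is an illustration only. It fits the sampling model by plain gradient descent in standard-library Python rather than with a statistics package (in practice a GLM routine such as R's `glm()` with `family = binomial` would be used). It also assumes a single covariate (distance to road), and the probability floor of 0.01 is an arbitrary choice to keep extreme weights in check. All names are hypothetical.

```python
import math

# Hypothetical helper names; a sketch, not a published package.


def sigmoid(z):
    """Numerically stable logistic function."""
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    e = math.exp(z)
    return e / (1.0 + e)


def predict_prob(w, x):
    """P(sampled = 1 | x) for weights w = [intercept, slope_1, ..., slope_k]."""
    return sigmoid(w[0] + sum(wj * xj for wj, xj in zip(w[1:], x)))


def fit_logistic(X, y, lr=0.1, n_iter=5000):
    """Logistic regression of sampled (1) / not sampled (0) on covariates X,
    fitted by batch gradient descent on the mean log-loss."""
    n, k = len(X), len(X[0])
    w = [0.0] * (k + 1)
    for _ in range(n_iter):
        grad = [0.0] * (k + 1)
        for xi, yi in zip(X, y):
            err = predict_prob(w, xi) - yi
            grad[0] += err
            for j, xj in enumerate(xi):
                grad[j + 1] += err * xj
        w = [wj - lr * gj / n for wj, gj in zip(w, grad)]
    return w


def inverse_probability_weights(w, X_records, floor=0.01):
    """Weight = 1 / P(sampled); the floor caps weights for very unlikely locations."""
    return [1.0 / max(predict_prob(w, x), floor) for x in X_records]


# Hypothetical grid cells: covariate = distance to nearest road (km); y = sampled or not.
X = [[d] for d in range(10)]
y = [1, 1, 1, 1, 0, 1, 0, 0, 0, 0]
w = fit_logistic(X, y)
weights = inverse_probability_weights(w, [[0.5], [8.0]])  # a roadside and a remote record
print(w, weights)
```

Records from places that were unlikely to be sampled (here, far from roads) receive larger weights, so they count for more in the downstream occupancy or trend model.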

Comprehensive Solution: Integrated Imputation & Modeling (Time: Half-day+)

- Objective: Model the ecological process while simultaneously accounting for the observational (sampling) process.

- Method: Use advanced statistical frameworks that integrate imputation or use joint likelihoods.

- Occupancy-Detection Models: For species occurrence data, use models that explicitly separate the ecological process (true presence/absence) from the observational process (probability of detection) [1].

- Joint Modeling: Implement models that jointly estimate species trends and the sampling process. This can be done within a Bayesian framework, allowing you to specify informed priors about data gaps in under-sampled regions [1].

- Verification: Compare the results with those from simpler methods and assess model fit and predictive performance on held-out data.
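To make the separation of ecological and observation processes concrete, the sketch below writes out the likelihood of the simplest single-season occupancy model, with a constant occupancy probability psi and a constant per-visit detection probability p, and maximizes it by grid search. A site with d detections in K visits contributes psi * p^d * (1 - p)^(K - d) when d > 0. A site with no detections contributes psi * (1 - p)^K + (1 - psi), because the species may have been present but missed. This is a teaching sketch under those simplifying assumptions, with hypothetical function names, and is not a substitute for dedicated packages (for example `unmarked` in R) that add covariates and standard errors.

```python
import math

# Hypothetical helper names; a sketch, not a published package.


def site_likelihood(history, psi, p):
    """Likelihood of one site's 0/1 detection history under a single-season
    occupancy model with constant occupancy psi and per-visit detection p."""
    k, d = len(history), sum(history)
    present = psi * p ** d * (1 - p) ** (k - d)  # occupied, with this detection pattern
    if d > 0:
        return present             # a detection proves occupancy
    return present + (1 - psi)     # occupied but always missed, or truly unoccupied


def log_likelihood(histories, psi, p):
    return sum(math.log(site_likelihood(h, psi, p)) for h in histories)


def fit_occupancy(histories, steps=99):
    """Maximum-likelihood (psi, p) by grid search over the open interval (0, 1)."""
    grid = [(i + 1) / (steps + 1) for i in range(steps)]
    return max(((psi, p) for psi in grid for p in grid),
               key=lambda pair: log_likelihood(histories, *pair))


# Hypothetical data: 10 sites, 3 visits each; 5 sites had at least one detection.
histories = [[1, 0, 1], [0, 1, 0], [1, 1, 0], [0, 0, 1], [1, 0, 0],
             [0, 0, 0], [0, 0, 0], [0, 0, 0], [0, 0, 0], [0, 0, 0]]
psi_hat, p_hat = fit_occupancy(histories)
naive = sum(any(h) for h in histories) / len(histories)  # 0.5
print(naive, psi_hat, p_hat)
```

Because detection is imperfect, the estimated occupancy exceeds the naive proportion of sites with detections; ignoring the observation process would understate occupancy.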

Experimental Protocol: Implementing Targeted Sampling to Fill Data Gaps

Purpose: To design and execute a sampling strategy that intentionally targets geographically under-represented regions within a broader study area, thereby mitigating spatial bias in biodiversity data [2] [1].

Workflow Diagram

Methodology

- Define Study Region and Stratify: Clearly delineate the geographical boundaries of your study. Overlay a uniform grid (e.g., 10km x 10km cells) across the region [1].

- Map Existing Data Coverage: Download all available biodiversity records for the study region from repositories like the Global Biodiversity Information Facility (GBIF). Map these records onto your grid to visually identify cells with no or very few records [2] [1].

- Prioritize Sampling Cells: From the under-sampled cells, prioritize those that are:

- Located in key biodiversity areas (e.g., tropical biomes, biodiversity hotspots) [2].

- Ecologically distinct from well-sampled areas.

- Logistically feasible to access, considering safety and cost.

- Design Standardized Protocol: Develop a rigorous, repeatable protocol for data collection (e.g., transect walks, camera traps, audio recorders) to ensure new data is comparable across sites and with existing data [1].

- Implementation and Data Management: Execute the field sampling. All collected data must be thoroughly validated, curated with appropriate metadata, and archived in public repositories like GBIF to ensure it fills the gap for future research [2].
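The first three steps (grid the region, map existing coverage, prioritize under-sampled cells) can be sketched in a few lines. The example assumes record coordinates are already projected to kilometres, treats a cell as under-sampled when it holds at most `max_records` records, and ranks candidates with a hypothetical lexicographic rule: hotspot cells first, then more ecologically distinct cells, then cheaper access. The attribute values are placeholders that a real project would derive from hotspot maps, environmental layers, and logistics costs; all names are hypothetical.

```python
from collections import Counter

# Hypothetical helper names and scoring rule; a sketch, not a published method.


def records_per_cell(points_km, cell_km):
    """Count records in each cell of a uniform grid laid over projected (x, y) km coordinates."""
    return Counter((int(x // cell_km), int(y // cell_km)) for x, y in points_km)


def undersampled_cells(counts, all_cells, max_records):
    """Cells of the study region holding at most `max_records` existing records."""
    return [cell for cell in all_cells if counts.get(cell, 0) <= max_records]


def prioritize(cells, attributes):
    """Rank candidate cells: hotspot cells first, then more ecologically distinct,
    then cheaper to access. attributes[cell] = (in_hotspot, distinctiveness, access_cost)."""
    return sorted(cells, key=lambda c: (not attributes[c][0], -attributes[c][1], attributes[c][2]))


# Hypothetical 2 x 2 grid of 10 km cells; most existing records sit in cell (0, 0).
points = [(1, 1), (2, 2), (3, 3), (4, 4), (15, 2)]
all_cells = [(0, 0), (0, 1), (1, 0), (1, 1)]
gaps = undersampled_cells(records_per_cell(points, 10), all_cells, max_records=1)
attributes = {(0, 1): (False, 0.9, 2.0), (1, 0): (True, 0.2, 5.0), (1, 1): (True, 0.8, 8.0)}
print(prioritize(gaps, attributes))  # [(1, 1), (1, 0), (0, 1)]
```

A weighted score could replace the lexicographic sort if the criteria should trade off against each other rather than being applied strictly in order.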

Frequently Asked Questions (FAQs)

Q1: Why is the geographical bias in biodiversity data a critical problem for global research and policy?

The bias leads to a misrepresentation of global biodiversity. Since environmental finance and policy decisions—such as directing funds for protection or creating carbon credits—are often based on available data, regions with poor data coverage risk being marginalized [2]. This means critical biodiversity areas in tropical or lower-income countries may be overlooked for conservation, while well-studied areas receive disproportionate attention and resources [2].

Q2: What are the primary drivers behind the uneven distribution of environmental data?

The imbalance is driven by multiple, often overlapping factors [2]:

- Economic Resources: A country's GDP is a strong predictor of data density. High-income countries have seven times more biodiversity observations per hectare than lower-income countries [2].

- Accessibility: Over 80% of global biodiversity records are collected within 2.5 km of a road, biasing data against remote wilderness areas [2].

- Citizen Science Distribution: Citizen science data, while valuable, is often concentrated in North America and Europe and tends to over-represent certain species groups like birds [2].

Q3: How can I quantify the level of geographical bias in my own dataset or a public dataset I'm using?

Begin by summarizing the provenance of the records. Create a table showing the number and percentage of records originating from different countries or regions. Furthermore, map the records against key socio-economic and infrastructural layers to identify correlations. The following table provides a real-world reference of data disparity from a major aggregator:

| Country / Region | Contribution to Global Biodiversity Data | Key Driver(s) of Bias |
|---|---|---|
| United States | 37% of GBIF data [2] | High GDP, extensive citizen science participation [2] |
| Top 10 Countries (including the US) | 79% of GBIF data [2] | Concentration of research funding and infrastructure [2] |
| High-income countries (general) | 7x more data per hectare [2] | Financial capacity for research and monitoring [2] |
| Areas within 2.5 km of a road | >80% of records [2] | Ease of physical access for researchers and volunteers [2] |
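The provenance summary recommended above can be produced with a short script. The sketch assumes one country code per record (for example the `countryCode` column of a GBIF occurrence download). It reports each country's share plus the combined share of the top N contributors, the statistic behind the "Top 10 Countries" row. Function names are hypothetical.

```python
from collections import Counter

# Hypothetical helper names; a sketch, not a published package.


def provenance_summary(country_codes):
    """(country, record count, percent of all records), largest contributors first."""
    counts = Counter(country_codes)
    total = sum(counts.values())
    return [(code, n, 100.0 * n / total) for code, n in counts.most_common()]


def top_n_share(country_codes, n=10):
    """Percent of all records contributed by the n best-represented countries."""
    counts = Counter(country_codes)
    total = sum(counts.values())
    return 100.0 * sum(k for _code, k in counts.most_common(n)) / total


# Hypothetical ten-record extract.
codes = ["US"] * 6 + ["BR"] * 2 + ["KE", "PG"]
print(provenance_summary(codes)[0])  # ('US', 6, 60.0)
print(top_n_share(codes, n=2))       # 80.0
```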

Q4: My research requires data from a region with very low coverage. What can I do besides expensive field campaigns?

You can employ several methodological approaches:

- Ecological Niche Modeling: Use existing, even sparse, records from the target region alongside environmental variables (climate, topography) to model the potential distribution of species [2].

- Remote Sensing: Utilize satellite imagery to infer habitat quality and extent, which can serve as a proxy for biodiversity in data-poor areas [2].

- Bayesian Optimization: This iterative, adaptive experimental design can help you learn from each new data point to suggest the most promising locations for future sampling, maximizing information gain from a limited number of field campaigns [3].

The Scientist's Toolkit: Research Reagent Solutions

This table details key resources for identifying, analyzing, and mitigating geographical biases in biodiversity research.

| Tool / Resource Name | Type & Function | Relevance to Geographical Bias |
|---|---|---|
| Global Biodiversity Information Facility (GBIF) | Data Aggregator: A repository compiling billions of species occurrence records from around the world. | The primary source for assessing global data coverage and disparity. 79% of its data comes from just ten countries [2]. |
| Remote Sensing & Satellite Imagery (e.g., Landsat) | Technology: Provides consistent, global data on land cover, vegetation health, and habitat change. | Allows researchers to infer ecological conditions in remote or under-sampled regions where field data is lacking [2]. |
| R/Python with DoE & Imputation Packages | Statistical Software: Tools for advanced experimental design (DoE.base, pyDOE3) and handling missing data (e.g., MICE in R). | Enables the use of statistical methods like weighting and imputation to correct for biased data gaps [3] [1]. |
| Bayesian Optimization Software (e.g., BayBE) | Algorithmic Tool: An iterative method that learns from previous results to suggest the next most informative experiment [3]. | Optimizes limited sampling resources by adaptively guiding where to collect data next in an under-sampled region [3]. |
| Socio-Economic Covariate Data (e.g., GDP, Road Maps) | Ancillary Data: Publicly available datasets on human activity and infrastructure. | Crucial for modeling the "sampling process", that is, understanding why data is missing in certain areas in order to correct for it [2] [1]. |

Technical Support Center

Welcome to the Taxonomic Representation Technical Support Center. This resource is designed to help researchers identify and correct for taxonomic bias in their biodiversity and drug discovery pipelines. The following guides address common experimental hurdles when working with historically under-represented groups.

Troubleshooting Guides & FAQs

Category: Sample Collection & Fieldwork

Q: Our annelid (e.g., earthworm, polychaete) field samples consistently yield low-quality, degraded DNA. What is the primary cause and how can we mitigate it?

- A: Annelids have high levels of RNases and DNases in their coelomic fluid and tissues, which are activated upon injury or stress. Standard collection methods used for insects are insufficient.

- Protocol: Rapid Annelid Tissue Preservation

- In-field Euthanasia: Immediately upon collection, subdue the specimen in a 5-10% ethanol solution.

- Rapid Dissection: Using sterile tools, quickly dissect the target tissue (e.g., a segment of body wall, gonad).

- Instant Preservation: Place the tissue fragment directly into a DNA/RNA Shield or RNAlater solution. Do not rely on ethanol alone for initial preservation.

- Flash Freezing: If possible, submerge the entire vial in liquid nitrogen for transport to -80°C storage.

Q: We are attempting to cultivate slow-growing medicinal plants for metabolomic studies, but face contamination from fast-growing fungi and bacteria. What is a targeted solution?

- A: Standard antibiotic cocktails are often optimized for common lab microbes and fail against environmental contaminants from soil.

- Protocol: Enhanced Plant Tissue Culture Sterilization

- Pre-treatment: Rinse plant material thoroughly in running tap water to remove soil debris.

- Surface Sterilization: Immerse tissue in a 20-40% commercial bleach solution (1-2% sodium hypochlorite final concentration) with 1-2 drops of Tween 20 for 15 minutes with gentle agitation.

- Antifungal Rinse: Instead of just sterile water, rinse the tissue 3-4 times with a sterile 0.1% solution of PPM (Plant Preservative Mixture), a broad-spectrum biocide.

- Culture Media Supplementation: Add PPM at a 0.05-0.1% concentration to the growth medium to suppress latent contamination.
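The bleach concentrations in the surface-sterilization step follow from the dilution relation C1V1 = C2V2. Assuming a commercial bleach of about 5% sodium hypochlorite (check the label, as products differ), a 20% dilution gives 1% and a 40% dilution gives 2%, matching the range above. A minimal helper, with a hypothetical name:

```python
# Hypothetical helper name; assumes concentrations in % (w/v) and volumes in mL.


def stock_volume_ml(stock_pct, target_pct, final_volume_ml):
    """Volume of stock solution needed so that C1 * V1 = C2 * V2."""
    if not 0 < target_pct <= stock_pct:
        raise ValueError("target must be positive and no stronger than the stock")
    return target_pct * final_volume_ml / stock_pct


# Assumed stock: commercial bleach at ~5% sodium hypochlorite (check the label).
for target in (1.0, 2.0):
    bleach = stock_volume_ml(5.0, target, 100.0)
    print(f"{target}% NaOCl: {bleach} mL bleach + {100.0 - bleach} mL water")
```

If the stock is stronger than 5%, the same call returns a smaller bleach volume for the same final hypochlorite concentration.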

Category: Genomic & Metagenomic Analysis

Q: Our vertebrate (e.g., amphibian, fish) whole-genome sequencing is plagued by high levels of host mitochondrial DNA, skewing coverage and assembly. How can we enrich for nuclear DNA?

- A: Standard DNA extraction protocols do not differentiate between nuclear and organellar DNA. Mitochondrial DNA can constitute over 50% of a sequencing library from certain tissues.

- Protocol: Nuclear DNA Enrichment via Sucrose Gradient Centrifugation

- Tissue Homogenization: Gently homogenize fresh tissue in a chilled, isotonic buffer (e.g., 0.25 M Sucrose, 10 mM Tris-HCl pH 7.5, 5 mM MgCl2) to release nuclei from the cells while keeping them intact.

- Filtration: Filter the homogenate through a 40-70μm nylon mesh to remove debris.

- Density Gradient: Layer the filtrate over a discontinuous sucrose gradient (e.g., 1.6 M and 2.3 M sucrose) and centrifuge at high speed (e.g., 80,000 x g for 1 hour).

- Nuclear Pellet: The intact nuclei will form a pellet at the bottom. Carefully remove the supernatant containing mitochondrial and cytoplasmic contaminants.

- DNA Extraction: Proceed with a standard phenol-chloroform or column-based DNA extraction on the purified nuclear pellet.
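Centrifuges are often set in rpm rather than x g. The standard conversion is RCF = 1.118e-5 × r × rpm², with r the rotor radius in centimetres. The sketch below solves it for rpm. The 10 cm radius in the usage example is an assumption, so use the maximum radius listed for your own rotor; the function names are hypothetical.

```python
import math

# Hypothetical helper names; RCF = 1.118e-5 * r_cm * rpm**2.
RCF_CONSTANT = 1.118e-5


def rpm_for_rcf(rcf_g, radius_cm):
    """Rotor speed (rpm) that produces `rcf_g` (x g) at radius `radius_cm`."""
    return math.sqrt(rcf_g / (RCF_CONSTANT * radius_cm))


def rcf_for_rpm(rpm, radius_cm):
    """Inverse conversion, useful to double-check a rotor setting."""
    return RCF_CONSTANT * radius_cm * rpm ** 2


# Assumed rotor radius of 10 cm; substitute the maximum radius of your rotor.
speed = rpm_for_rcf(80_000, 10.0)
print(round(speed))  # 26750
```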

Q: In soil metagenomics, microbial DNA from bacteria and fungi overwhelmingly dominates, masking the presence of annelid or nematode DNA. How can we specifically target metazoan sequences?

- A: This is a classic issue of taxonomic bias in metagenomic sequencing. The solution is targeted enrichment prior to sequencing.

- Protocol: Metazoan-Targeted Hybrid Capture for Metagenomes

- Library Prep: Prepare a standard shotgun metagenomic sequencing library from total environmental DNA.

- Bait Design: Synthesize biotinylated RNA "baits" (e.g., 80-120 nt) complementary to conserved metazoan genomic regions (e.g., ultraconserved elements, UCEs) or to mitochondrial cytochrome c oxidase subunit I (COI) genes.

- Hybridization: Incubate the denatured library with the metazoan baits to allow specific hybridization.

- Capture: Add streptavidin-coated magnetic beads, which bind the biotinylated baits and their hybridized metazoan DNA fragments.

- Wash & Elute: Use a magnet to separate the bead-bound metazoan DNA from the bulk microbial DNA. Wash away non-specifically bound material and elute the enriched metazoan library for sequencing.

Data Presentation: Quantifying the Shortfall

Table 1: Representation Disparity in Public Genomic Databases (NCBI, as of 2023-2024)

| Taxonomic Group | Estimated Global Species Richness | Sequenced Genomes (Representative) | Percentage of Richness Sequenced |
|---|---|---|---|
| Arthropoda | ~1,000,000 | ~4,500 | ~0.45% |
| Microbes | ~1,000,000 (est.) | ~500,000 (RefSeq) | ~50% (heavily biased) |
| Annelida | ~20,000 | ~25 | ~0.125% |
| Vertebrata | ~65,000 | ~1,800 | ~2.77% |
| Plantae | ~380,000 | ~1,200 | ~0.32% |

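Note: Figures are approximate and based on summaries of NCBI BioProject and RefSeq statistics. The microbial row is not directly comparable with the others: its genome count includes a vast number of cultured isolates and single-amplified genomes, often many per species, and is set against a conservative richness estimate, so the ~50% figure overstates species-level coverage. Measured against total estimated microbial diversity, sequenced microbes still represent a tiny fraction.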
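The last column of Table 1 is simply sequenced genomes divided by estimated species richness, treating each genome as one species. The sketch below reproduces it from the approximate figures in the table, leaving out the microbial row because its genome count is not a species count. The dictionary and function names are hypothetical.

```python
# Hypothetical names. Approximate figures from Table 1:
# (estimated species richness, sequenced genomes), assuming one genome per species.
TABLE_1 = {
    "Arthropoda": (1_000_000, 4_500),
    "Annelida": (20_000, 25),
    "Vertebrata": (65_000, 1_800),
    "Plantae": (380_000, 1_200),
}


def percent_sequenced(richness, genomes):
    """Sequenced genomes as a percentage of estimated species richness."""
    return 100.0 * genomes / richness


for group, (richness, genomes) in TABLE_1.items():
    print(f"{group}: {percent_sequenced(richness, genomes):.3f}%")
```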

Table 2: Key Research Reagent Solutions for Under-Represented Taxa

| Reagent / Material | Function | Application Note |
|---|---|---|
| DNA/RNA Shield | Inactivates nucleases immediately upon contact, preserving nucleic acid integrity. | Critical for annelids, fish, and other taxa with high nuclease activity. Superior to ethanol for RNA work. |
| PPM (Plant Preservative Mixture) | Broad-spectrum biocide effective against fungi, bacteria, and other microbes. | Essential for sterilizing and maintaining axenic cultures of slow-growing plants and their associated tissues. |
| Sucrose Gradient Media | Separates cellular components based on density via ultracentrifugation. | Used for isolating intact nuclei from vertebrate tissues to reduce mitochondrial DNA contamination in WGS. |
| Metazoan-Specific RNA Baits | Biotinylated oligonucleotides for hybrid capture of target DNA fragments. | Enables enrichment of rare metazoan sequences from complex environmental DNA samples dominated by microbial DNA. |
| Collagenase Type I/II | Enzyme that digests collagen, a major component of connective tissue. | Vital for dissociating tough vertebrate and annelid tissues for primary cell culture establishment. |

Experimental Protocols & Visualization

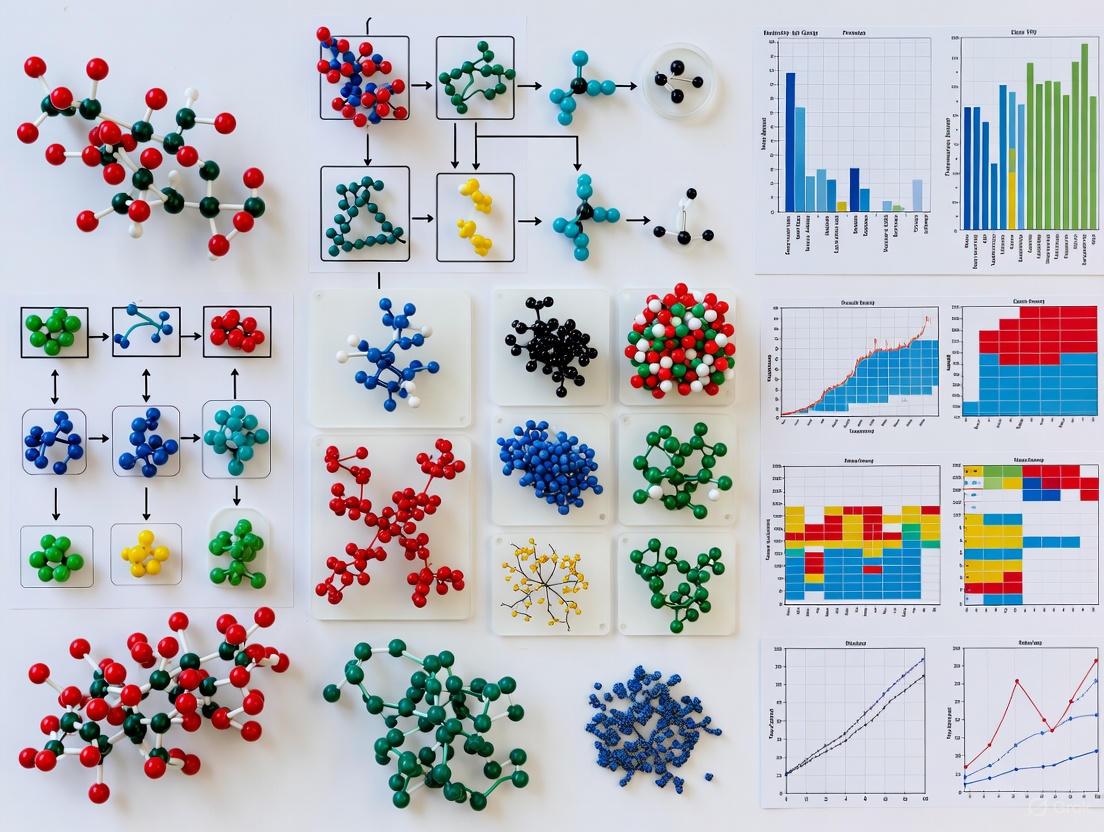

Diagram 1: Annelid DNA Degradation Pathway

Diagram 2: Nuclear DNA Enrichment Workflow

Diagram 3: Metazoan DNA Hybrid Capture

FAQs on Metric Gaps and Experimental Troubleshooting

Q1: What are the most critical gaps in current biodiversity metrics beyond species abundance?

Current biodiversity research relies heavily on simple metrics such as species abundance and richness. Studies incorporating functional diversity (the range of ecological functions performed by organisms) and phylogenetic diversity (the evolutionary history represented by species) are comparatively scarce. Evidence syntheses show that research predominantly uses averaged abundance data, leaving a substantial knowledge gap about how agricultural and other management practices affect the functional and phylogenetic aspects of biodiversity [4].

Q2: My research on management impacts shows inconclusive results. Could the choice of biodiversity metric be a factor?

Yes. Relying solely on abundance data can mask the true effects of an intervention. A practice might increase the number of individuals but reduce functional or phylogenetic diversity, making the ecosystem more vulnerable. For example, a fertilizer might benefit only a few, closely related species that perform similar functions. To draw reliable conclusions, your experimental design should incorporate a suite of complementary metrics, including functional traits and phylogenetic relatedness, to detect these nuanced changes [4].

Q3: How can I design an experiment that effectively captures functional diversity?

- Identify Key Functional Traits: Select traits relevant to your ecosystem and research question (e.g., for plants: root depth, leaf area, nitrogen fixation; for soil organisms: body size, feeding guild).

- Standardize Trait Measurements: Follow established protocols for measuring traits to ensure comparability with other studies.

- Calculate Complementary Indices: Do not rely on a single index. Use a combination, such as:

- Functional Richness (FRic): The volume of functional space occupied by the community.

- Functional Evenness (FEve): The regularity of species distribution in that functional space.

- Functional Divergence (FDiv): The degree to which species traits are at the extremes of the functional space [5].
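FRic is the volume of the convex hull that encloses the community in trait space; with two traits that volume is an area, so it can be sketched with a convex hull and the shoelace formula. This is a two-trait illustration under stated assumptions: traits are assumed to be already standardized across the whole species pool (so that communities are comparable), and the function names are hypothetical. For real analyses, `dbFD()` in the R package FD computes FRic, FEve, and FDiv for any number of traits.

```python
# Hypothetical helper names; a two-trait sketch of FRic, not the FD package.


def cross(o, a, b):
    """Z-component of (a - o) x (b - o); positive for a counter-clockwise turn."""
    return (a[0] - o[0]) * (b[1] - o[1]) - (a[1] - o[1]) * (b[0] - o[0])


def convex_hull(points):
    """Andrew's monotone chain: hull vertices in counter-clockwise order."""
    pts = sorted(set(points))
    if len(pts) <= 2:
        return pts
    lower, upper = [], []
    for p in pts:
        while len(lower) >= 2 and cross(lower[-2], lower[-1], p) <= 0:
            lower.pop()
        lower.append(p)
    for p in reversed(pts):
        while len(upper) >= 2 and cross(upper[-2], upper[-1], p) <= 0:
            upper.pop()
        upper.append(p)
    return lower[:-1] + upper[:-1]


def polygon_area(vertices):
    """Shoelace formula; zero for fewer than three vertices."""
    n = len(vertices)
    if n < 3:
        return 0.0
    twice_area = sum(vertices[i][0] * vertices[(i + 1) % n][1]
                     - vertices[(i + 1) % n][0] * vertices[i][1] for i in range(n))
    return abs(twice_area) / 2.0


def functional_richness_2d(trait_points):
    """FRic for two standardized traits: area of the community's convex hull."""
    return polygon_area(convex_hull(trait_points))


# Hypothetical community: four species at the corners of trait space, one in the middle.
community = [(0, 0), (2, 0), (2, 2), (0, 2), (1, 1)]
print(functional_richness_2d(community))  # 4.0
```

Removing the central species leaves FRic unchanged, while losing a species at the edge of trait space shrinks it; this is why FRic is usually reported alongside FEve and FDiv, which respond to how species are spread inside that space.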

Q4: What are the common methodological challenges in phylogenetic diversity analysis and how can I address them?

- Challenge 1: Incomplete or Low-Resolution Phylogenies. Many evolutionary relationships, especially for understudied taxa, are not fully resolved.

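- Solution: Use the most comprehensive phylogenetic trees available (for plants, for example, trees generated from a published megatree with V.PhyloMaker), reconcile species names with current taxonomy from resources such as NatureServe [5], and conduct sensitivity analyses to see if your results hold across different tree hypotheses.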

- Challenge 2: Computational Complexity. Calculating phylogenetic diversity for large datasets can be computationally intensive.

- Solution: Utilize specialized software and R packages (e.g., picante, PhyloMeasures) designed for efficient computation of phylogenetic metrics.

Q5: My project site is small. Are functional and phylogenetic diversity metrics still relevant?

Absolutely. While landscape-scale connectivity is crucial for many species, individual sites contribute to the larger ecological matrix. Assessing these advanced metrics at a site level helps ensure that the project supports a wide range of ecological functions and evolutionary history, enhancing its resilience and long-term value. Tools like the Americas Biodiversity Metric are designed to help assign biodiversity values to individual sites based on habitat quality and strategic significance [5].

Data Summaries: Evidence Gaps in Biodiversity Research

The following tables synthesize findings from a large-scale systematic map of secondary research, highlighting biases and gaps in the evidence base for agricultural impacts on biodiversity [4].

Table 1: Geographic and Taxonomic Biases in Biodiversity Evidence Synthesis

| Category | Prevalence in Research | Key Gaps / Underrepresented Elements |
|---|---|---|
| Geographic Focus | Concentrated in a small number of countries, notably the USA, China, and Brazil [4]. | Other low- and middle-income countries, particularly in tropical regions with high biodiversity, are severely underrepresented. |
| Taxonomic Focus | Arthropods and microorganisms are most frequently studied [4]. | Annelids (e.g., earthworms), vertebrates, and plants are less represented relative to their ecological importance [4]. |
| Management Scale | Focus on individual practices (e.g., fertilizer use, phytosanitary interventions) [4]. | Research at the farm and landscape levels, and on the combined effects of multiple practices, is scarce [4]. |

Table 2: Prevalence of Different Biodiversity Metrics in Research

| Metric Type | Description | Prevalence in Evidence Synthesis |
|---|---|---|
| Species Abundance | The number of individuals per species. | Predominant metric; evidence relies heavily on averaged abundance data [4]. |
| Species Richness | The number of different species present. | Commonly used, but often without complementary diversity metrics. |
| Functional Diversity | The range and value of ecological functions and traits in a community. | Substantial gap; significantly underrepresented in studies [4]. |
| Phylogenetic Diversity | The sum of evolutionary history represented by species in a community. | Major gap; rarely incorporated into meta-analytical evidence [4]. |

Experimental Protocols & Methodologies

Protocol 1: Integrating Functional Diversity into Agricultural Management Studies

This protocol outlines a methodology to move beyond abundance metrics when assessing the impact of agricultural practices.

1. Experimental Design:

- Site Selection: Choose paired sites (managed vs. control) or establish a BACI (Before-After-Control-Impact) design.

- Treatment and Control: Clearly define the agricultural intervention (e.g., organic fertilization, crop diversification) and the control (conventional practice).

2. Field Sampling:

- Taxon-Specific Methods: Use standardized methods to sample the target taxa (e.g., pitfall traps for ground beetles, sweep nets for pollinators, soil cores for earthworms).

- Trait Data Collection: For all species identified, measure or compile from databases a set of functional traits. For example, for birds: beak shape, body mass, foraging stratum; for plants: specific leaf area, seed mass, plant height.

3. Data Analysis:

- Calculate Functional Diversity Indices: Using software like R with the FD package, calculate:

- Functional Richness (FRic): The volume of functional space occupied.

- Functional Evenness (FEve): The uniformity of species distribution in functional space.

- Functional Divergence (FDiv): The degree of niche differentiation.

- Statistical Comparison: Compare the functional diversity indices between treatment and control groups using multivariate statistics (e.g., PERMANOVA) to test for significant differences.
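A one-way PERMANOVA compares groups using a pseudo-F statistic built from pairwise distances and obtains a p-value by permuting the group labels. The sketch below implements that procedure in standard-library Python on Euclidean distances between plot-level vectors of diversity indices. It is illustrative only (in R, `adonis2()` in the vegan package is the usual tool), the function names and data are hypothetical, and it assumes the indices are on comparable scales, which in practice usually means standardizing them first.

```python
import math
import random

# Hypothetical helper names; a sketch of one-way PERMANOVA, not the vegan package.


def euclidean(a, b):
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))


def pseudo_f(dist, labels):
    """PERMANOVA pseudo-F from a full distance matrix and one group label per sample."""
    n = len(labels)
    groups = sorted(set(labels))
    ss_total = sum(dist[i][j] ** 2 for i in range(n) for j in range(i + 1, n)) / n
    ss_within = 0.0
    for g in groups:
        idx = [i for i, lab in enumerate(labels) if lab == g]
        ss_within += sum(dist[i][j] ** 2
                         for pos, i in enumerate(idx) for j in idx[pos + 1:]) / len(idx)
    a = len(groups)
    return ((ss_total - ss_within) / (a - 1)) / (ss_within / (n - a))


def permanova(samples, labels, n_perm=999, seed=1):
    """Return (pseudo-F, permutation p-value) for a one-way design."""
    dist = [[euclidean(s, t) for t in samples] for s in samples]
    f_obs = pseudo_f(dist, labels)
    rng = random.Random(seed)
    shuffled = list(labels)
    hits = 0
    for _ in range(n_perm):
        rng.shuffle(shuffled)  # break any link between plots and treatments
        if pseudo_f(dist, shuffled) >= f_obs:
            hits += 1
    return f_obs, (hits + 1) / (n_perm + 1)


# Hypothetical plot-level (FRic, FEve, FDiv) values; standardize real indices first.
control = [(1.0, 0.50, 0.60), (1.1, 0.52, 0.58), (0.9, 0.48, 0.62),
           (1.05, 0.51, 0.61), (0.95, 0.49, 0.59), (1.02, 0.50, 0.60)]
treated = [(2.0, 0.70, 0.80), (2.1, 0.72, 0.78), (1.9, 0.68, 0.82),
           (2.05, 0.71, 0.81), (1.95, 0.69, 0.79), (2.02, 0.70, 0.80)]
f_stat, p_value = permanova(control + treated, ["control"] * 6 + ["treated"] * 6)
print(f_stat, p_value)
```

With Euclidean distances on a single variable, the pseudo-F reduces to the ordinary one-way ANOVA F statistic; the permutation test is what makes the multivariate version distribution-free.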

Protocol 2: Assessing Phylogenetic Diversity in Restoration Projects

This protocol provides a framework for evaluating the evolutionary component of biodiversity in restored ecosystems.

1. Species List Compilation:

- Create a comprehensive list of all species recorded at the restoration site and a reference (intact) ecosystem.

2. Phylogenetic Tree Construction:

- Sequence Data: Obtain DNA barcode sequences (e.g., rbcL, matK for plants; COI for animals) from public databases like GenBank or BOLD for as many species as possible.

- Tree Building: Use phylogenetic software (e.g., MrBayes, BEAST) or web platforms (e.g., PhyloT) to generate a phylogenetic tree that represents the evolutionary relationships among the species.

3. Phylogenetic Metric Calculation:

- Using packages like picante in R, calculate:

- Faith's Phylogenetic Diversity (PD): The sum of the branch lengths of the phylogenetic tree connecting all species in the community.

- Mean Pairwise Distance (MPD): The mean phylogenetic distance between all pairs of species in the community.

- Mean Nearest Taxon Distance (MNTD): The mean phylogenetic distance between each species and its closest relative in the community.

- Comparison: Compare the PD, MNTD, and MPD values of the restored site to the reference ecosystem to assess the recovery of phylogenetic structure.
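The three metrics can be illustrated on a toy tree. The sketch stores a rooted tree as a mapping from each node to its (parent, branch length), computes Faith's PD as the total length of the edges linking the community to the root, and derives MPD and MNTD from patristic (tip-to-tip) distances using presence/absence only. PD is computed here in its root-inclusive form; picante's `pd()` exposes this choice through its `include.root` argument. The tree and function names are hypothetical; real analyses would use `pd()`, `mpd()`, and `mntd()` from picante with `cophenetic()` distances.

```python
from itertools import combinations

# Hypothetical rooted tree ((A:1,B:1)X:2,(C:1.5,D:1.5)Y:1.5)root,
# stored as child -> (parent, branch length). Helper names are hypothetical too.
TREE = {
    "A": ("X", 1.0), "B": ("X", 1.0), "C": ("Y", 1.5), "D": ("Y", 1.5),
    "X": ("root", 2.0), "Y": ("root", 1.5),
}


def faith_pd(species, parent):
    """Total length of the edges connecting `species` to the root (root-inclusive PD)."""
    edges = set()
    for sp in species:
        node = sp
        while node in parent:  # each non-root node owns the edge to its parent
            edges.add(node)
            node = parent[node][0]
    return sum(parent[n][1] for n in edges)


def ancestors_with_distance(node, parent):
    """Map `node` and each of its ancestors to the path length from `node`."""
    dist, total = {node: 0.0}, 0.0
    while node in parent:
        up, length = parent[node]
        total += length
        node = up
        dist[node] = total
    return dist


def patristic(a, b, parent):
    """Branch length from a to b through their most recent common ancestor."""
    da = ancestors_with_distance(a, parent)
    node, total = b, 0.0
    while node not in da:
        up, length = parent[node]
        total += length
        node = up
    return total + da[node]


def mpd(species, parent):
    """Mean pairwise distance over all species pairs."""
    pairs = list(combinations(species, 2))
    return sum(patristic(a, b, parent) for a, b in pairs) / len(pairs)


def mntd(species, parent):
    """Mean distance from each species to its closest relative in the community."""
    return sum(min(patristic(a, b, parent) for b in species if b != a)
               for a in species) / len(species)


community = ["A", "B", "C", "D"]
print(faith_pd(["A", "B"], TREE), faith_pd(community, TREE))  # 4.0 8.5
print(mpd(community, TREE), mntd(community, TREE))            # MPD = 29/6, MNTD = 2.5
```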

Research Reagent Solutions & Essential Materials

Table 3: Key Resources for Advanced Biodiversity Metrics

| Item / Resource | Function / Application |
|---|---|
| Functional Trait Databases (e.g., TRY Plant Trait Database) | Provides standardized, global data on plant functional traits, essential for calculating functional diversity indices without field measurement of every trait [5]. |
| Phylomatic / V.PhyloMaker | Software tools and R packages used to generate phylogenetic trees for a given list of plant species, streamlining the process of phylogenetic diversity analysis. |
| R Statistical Environment with packages FD, picante, BAT | The primary computational environment for calculating a wide array of biodiversity metrics, including functional (FD) and phylogenetic (picante) diversity, and for conducting beta-diversity analyses (BAT). |
| Americas Biodiversity Metric 1.0 | A spreadsheet-based tool (adapted from the UK Biodiversity Metric) that allows users to estimate biodiversity value and net gain/loss by accounting for habitat size, quality, and strategic significance, promoting a multi-faceted view of site value [5]. |
| Citizen Science Platforms (e.g., iNaturalist, eBird) | Provide extensive species occurrence data that can be used for richness and distribution analyses, and with careful curation, for some trait-based studies [5]. |
| NatureServe Explorer | An authoritative source for conservation status, taxonomy, and ecology of species in North America, providing critical information for prioritizing species and understanding their functional roles [5]. |

Experimental Workflow and Signaling Pathway Diagrams

Workflow for Integrated Biodiversity Assessment

Pathway from Practice to Diversity Outcome

Frequently Asked Questions (FAQs)

What if my institution cannot afford the conference registration fee?

- Issue: High costs are a primary barrier to participation [6].

- Solution: Proactively seek out conferences that offer waivers or significantly reduced fees for researchers from LMICs. Many conference organizers and scientific societies have such programs, though they may not be widely advertised. Prepare a compelling case for your institution's financial need when applying.

How can I navigate restrictive visa processes for international travel?

- Issue: Researchers from LMICs often face visa and travel restrictions when attending conferences in high-income countries [6].

- Solution: Initiate the visa application process as early as possible. Request a formal letter of invitation from the conference organizers and, if you are presenting, a letter of acceptance for your abstract. These documents are crucial for your application. Conference organizers can support attendees by providing clear, official documentation well in advance.

Why is my research, published in a local journal, not being cited by the global community?

- Issue: There is a significant linguistic and visibility bias in science. Research published in languages other than English or in local/regional journals is often undervalued and lacks visibility in major international databases [7] [8].

- Solution: To increase the global reach of your work, consider submitting to international, open-access journals. Simultaneously, you can increase the impact of your local publications by depositing preprints in international archives and using social media and professional networks like ResearchGate to share your findings with a global audience.

What can I do if I feel isolated or lack a professional network at a large international conference?

- Issue: A lack of existing networks can make conference attendance intimidating and less productive, and ethnically minoritized researchers are more likely to feel isolated [9].

- Solution: Before the conference, use conference apps or social media to identify and connect with other researchers (including those from your region) who will be attending. During the event, seek out smaller satellite meetings, workshops, or social events that are more conducive to networking. Some conferences are now implementing dedicated "meet-a-mentor" or networking sessions to facilitate these connections.

How can I address the challenge of "parachute science" in my collaborations?

- Issue: "Parachute science" occurs when researchers from high-income countries conduct studies in LMICs without meaningful partnership with local scientists, leading to extractive practices and undervalued local contributions [7] [10].

- Solution: Frame collaborations around equitable partnership from the outset. This includes co-developing research questions, ensuring shared authorship on resulting publications, and co-leading grant applications. Local researchers should be recognized for their crucial contributions of local knowledge, data, and fieldwork, moving beyond a role of mere facilitation.

Troubleshooting Guide: A Framework for Overcoming Participation Barriers

This guide provides a systematic approach to diagnosing and addressing the common barriers that prevent LMIC researchers from fully participating in global scientific conferences.

Troubleshooting Flowchart

The following diagram outlines a strategic workflow for identifying your primary challenges and locating targeted solutions.

Detailed Experimental Protocols

Protocol 1: Secure Funding and Conference Access

- Objective: To overcome the economic and logistical hurdles that prevent physical or virtual attendance at international conferences.

- Background: Financial constraints, including costs for registration, travel, and accommodation, are a dominant barrier. Visa restrictions further compound this issue [6].

- Methodology:

- Identify Funding Sources: Diligently search for conferences that explicitly offer grants, waivers, or sliding-scale fees for LMIC researchers. Target funding bodies and charities that specifically support scientific mobility.

- Leverage Digital Platforms: Propose virtual presentation options to conference organizers if travel is impossible. Record presentations in advance to mitigate time-zone differences [6].

- Document Preparation: For visa applications, assemble a comprehensive packet including the conference agenda, proof of abstract acceptance, invitation letter, and evidence of funding or institutional support.

- Expected Outcome: Successful procurement of financial support and necessary travel documents, enabling conference participation.

Protocol 2: Amplify Research Output for Global Reach

- Objective: To increase the visibility and impact of research conducted in LMICs within the global scientific community.

- Background: Linguistic bias and the undervaluing of non-English or locally published research create a significant visibility gap [7] [8].

- Methodology:

- Strategic Publishing: Where possible, target reputable international, open-access journals. For work published locally, create English-language summaries or extended abstracts to share on international preprint servers and academic social networks.

- Utilize Knowledge Platforms: Actively use open-access repositories and data-sharing platforms to make datasets and findings accessible to a global audience.

- Engage in Open Science: Advocate for and participate in open-science initiatives within your field to break down access barriers.

- Expected Outcome: Increased citation rates, international collaboration requests, and greater integration into the global research conversation.

Protocol 3: Build Equitable and Sustainable Collaborative Networks

- Objective: To establish international research partnerships that fairly value and compensate the contributions of LMIC researchers and institutions.

- Background: "Parachute science" and a lack of existing networks marginalize local experts and can lead to research that is misaligned with local priorities [7] [10].

- Methodology:

- Initiate Contact: Use professional networking sites (e.g., LinkedIn, ResearchGate) to identify potential collaborators with shared interests. Engage in virtual seminars and webinars to build connections.

- Negotiate Terms Early: In new collaborations, explicitly discuss roles, responsibilities, data ownership, and authorship expectations at the project's inception. Draft a memorandum of understanding (MoU) to formalize these agreements.

- Champion Local Leadership: Actively seek and propose projects where the principal investigator (PI) or co-PI is based at an LMIC institution, so that leadership and decision-making power are shared.

- Expected Outcome: Establishment of long-term, mutually beneficial research partnerships that build local capacity and produce more relevant and impactful science.

The following table details key "reagents" or tools required to conduct effective "experiments" in overcoming participation barriers.

| Research Reagent | Function & Explanation |
|---|---|
| Digital Collaboration Platforms | Tools like Zoom, Slack, and Overleaf are essential for maintaining low-cost, continuous communication with international colleagues, facilitating virtual meetings, and co-writing manuscripts and grants [6]. |
| Open-Access (OA) Journals & Preprint Servers | Publishing in OA journals or sharing preprints on servers like arXiv or bioRxiv ensures that research findings are not hidden behind paywalls, dramatically increasing their accessibility and potential impact [7]. |
| Institutional Liaisons | Dedicated roles within universities or research institutes that act as bridges to international partners. They can help navigate bureaucratic processes, identify funding, and foster sustainable institutional partnerships [7]. |
| Virtual Conference Hubs | Designated spaces (physical or virtual) within LMIC institutions for groups to participate in international conferences together. This reduces individual costs, mitigates isolation, and fosters local community discussion around global topics [6]. |
| Formal Collaboration Agreements (MoUs) | A Memorandum of Understanding is a critical document that outlines the principles, goals, and specific responsibilities of each partner in a collaboration. It helps prevent exploitative practices by ensuring all contributions are recognized from the start [10]. |

Frequently Asked Questions (FAQs)

What does 'underrepresented' mean in a research context?

The term 'underrepresented' describes elements (whether species, genetic ancestries, human populations, or perspectives) that are systematically absent or inadequately accounted for in scientific data, models, policies, or research collections, despite their ecological, genetic, or social significance. This underrepresentation can lead to biased datasets, inaccurate conclusions, and interventions that are ineffective or even harmful for the omitted groups [11] [12] [13].

Why is it a problem if certain species are underrepresented in biodiversity models?

When wildlife species are underrepresented, it leads to an incomplete understanding of ecosystem functioning, as different species play unique and critical roles. For example, a WWF-led study highlights that wildlife provides at least 12 of the 18 categories of essential benefits to people, known as Nature's Contributions to People (NCP). These range from food and livelihoods to disease regulation and cultural identity. Ignoring the decline of specific species, like sea otters or vultures, has led to collapsed kelp forests, damaged fisheries, and public health crises, demonstrating the profound real-world consequences of this oversight [11].

What are the consequences of using genomic databases that lack diversity?

Genomic databases that predominantly contain data from individuals of European ancestry have direct clinical consequences. They can lead to:

- Inaccurate Genetic Tests: Tests designed to predict disease risk or tailor treatments are less accurate for individuals from underrepresented ancestries, potentially providing incorrect or harmful results [12].

- Variant Misclassification: Benign genetic variants that are common in an underrepresented population may be misclassified as disease-causing because they are rare or absent in the overrepresented population. One study on hearing loss reclassified 24 variants from pathogenic to benign after incorporating data from a Korean population, preventing potential misdiagnosis [14].

If underrepresented populations are willing to participate in research, why are they still underrepresented?

Evidence consistently shows that racial and ethnic minority groups in the U.S. are as willing, if not more willing, to participate in clinical research if asked [15]. The barriers are not primarily willingness, but systemic issues within the research ecosystem, including:

- Failure to Ask: A fundamental barrier is that researchers simply do not ask individuals from these groups to participate [15].

- Historical and Structural Factors: Legacy of historical abuses, ongoing systemic discrimination, and mistrust of the healthcare system create barriers [12] [15].

- Research Infrastructure: The geographic distribution of research institutions and a disconnect between researchers and the communities they study, sometimes described as "parachute science," limit engagement [16] [17].

Troubleshooting Guides

Problem: My genomic variant interpretation pipeline may be producing biased results due to unrepresentative data.

| Step | Action | Rationale & Key Metric |
|---|---|---|
| 1. Audit Input Data | Identify the ancestral backgrounds represented in your primary reference database (e.g., gnomAD). | Rationale: To quantify representation bias. Metric: Percentage of samples by ancestry. In gnomAD v4, only 2.78% are of East Asian descent, making variants common in this group seem rare [14]. |
| 2. Integrate Diverse Data | Supplement your analysis with population-specific databases if your cohort includes underrepresented groups (e.g., KOVA2 for Korean, ToMMo for Japanese populations). | Rationale: To obtain accurate Minor Allele Frequency (MAF) estimates for the population in question. Metric: Pathogenic/likely pathogenic variants whose MAF in a specific population exceeds the applicable benign threshold should be flagged for re-evaluation. ACMG/AMP frequency thresholds are disease-specific: the generic BA1 cutoff is 5%, whereas hearing-loss specifications use, e.g., BA1: MAF ≥ 0.001 for dominant genes [14]. |
| 3. Re-evaluate Variants | Systematically reclassify variants flagged in Step 2 using a conservative, evidence-based pipeline. | Rationale: To prevent misdiagnosis. Metric: Number/percentage of variants successfully reclassified from "Pathogenic" to "Benign" or "Uncertain Significance." One study reclassified 3.38% of hearing loss variants initially deemed pathogenic [14]. |
| 4. Validate with Case Data | Compare the frequency of reclassified variants in a patient cohort versus control databases. | Rationale: To confirm the benign nature or identify potential founder effects. Metric: Odds Ratio (OR) with 95% confidence interval. A non-significant OR supports benign reclassification [14]. |
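Step 4 can be sketched in Python as below. This is an illustration, not the method used in [14]: it assumes allele counts for cases and controls, uses Woolf's log-scale confidence interval, and applies a Haldane correction (+0.5 to every cell) when any cell is zero. The counts in the usage lines are hypothetical.

```python
import math


def odds_ratio_ci(case_alt, case_total, ctrl_alt, ctrl_total, z=1.96):
    """Odds ratio (cases vs controls) with a Woolf log-scale confidence interval.

    case_alt / ctrl_alt: alleles carrying the variant in each group.
    case_total / ctrl_total: total alleles examined in each group.
    A Haldane correction (+0.5 to every cell) is applied if any cell is zero.
    """
    a, b = case_alt, case_total - case_alt
    c, d = ctrl_alt, ctrl_total - ctrl_alt
    if min(a, b, c, d) == 0:
        a, b, c, d = (x + 0.5 for x in (a, b, c, d))
    odds_ratio = (a * d) / (b * c)
    se_log = math.sqrt(1 / a + 1 / b + 1 / c + 1 / d)
    lower = math.exp(math.log(odds_ratio) - z * se_log)
    upper = math.exp(math.log(odds_ratio) + z * se_log)
    return odds_ratio, lower, upper


def is_significant(lower, upper):
    """True if the confidence interval excludes an odds ratio of 1."""
    return not (lower <= 1.0 <= upper)


# Hypothetical counts: 12 of 1,000 case alleles vs 110 of 10,000 control alleles.
or_value, lo, hi = odds_ratio_ci(12, 1000, 110, 10000)
print(f"OR = {or_value:.2f} (95% CI {lo:.2f}-{hi:.2f}), significant: {is_significant(lo, hi)}")
```

A confidence interval that spans 1 corresponds to the non-significant result that the table treats as support for benign reclassification.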

Problem: My biodiversity research and collections do not equitably represent species or knowledge from the Global South.

| Step | Action | Rationale & Key Metric |
|---|---|---|
| 1. Map Collection Biases | Analyze the geographic origins of name-bearing type specimens in your collection versus their current housing. | Rationale: To visualize the "knowledge split." Metric: Calculate the Endemic Deficit (proportion of a country's endemic species whose name-bearers are housed abroad). For freshwater fish, most name-bearers are in Global North museums, dislocated from their origin countries [13]. |
| 2. Foster Equitable Partnerships | Move beyond "parachute science" by co-designing research questions and methodologies with local experts and institutions from the study region. | Rationale: To ensure research is relevant, ethical, and builds local capacity. Metric: Proportion of research publications with lead authors or co-authors from the study region [17]. |
| 3. Promote Knowledge Repatriation | Actively support the repatriation of specimen data and physical specimens, and improve access protocols for researchers in countries of origin. | Rationale: To close biodiversity knowledge gaps and empower local stewardship. Metric: Number of digitized records shared with institutions in the source country or number of specimen repatriation agreements initiated [13]. |
| 4. Diversify Dissemination | Publish findings in open-access formats and, where possible, in languages relevant to the study region. | Rationale: To ensure that generated knowledge is accessible to those who need it most for conservation. Metric: Number of open-access publications and availability of summaries in non-English languages [17]. |
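The Endemic Deficit from Step 1 reduces to a simple proportion per country. The sketch below assumes one record per endemic species, giving its country of origin and the country where its name-bearing type is housed; the function name and the example records are hypothetical, and [13] may apply additional rules when computing the metric.

```python
from collections import defaultdict


def endemic_deficit(records):
    """Share of each country's endemic species whose name-bearing type is housed abroad.

    records: iterable of (species, country_of_origin, country_housing_type),
    assumed to contain exactly one record per endemic species.
    """
    totals = defaultdict(int)
    abroad = defaultdict(int)
    for _species, origin, housed_in in records:
        totals[origin] += 1
        if housed_in != origin:
            abroad[origin] += 1
    return {country: abroad[country] / totals[country] for country in totals}


# Hypothetical records for illustration only.
records = [
    ("Species A", "BR", "BR"),
    ("Species B", "BR", "GB"),
    ("Species C", "BR", "FR"),
    ("Species D", "PE", "PE"),
]
print(endemic_deficit(records))
```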

Experimental Protocols

Protocol 1: Reinterpreting Genetic Variants Using Underrepresented Population Data

Objective: To correctly reclassify the pathogenicity of genetic variants by incorporating allele frequency data from underrepresented populations, thereby reducing health disparities in genomic medicine.

Materials:

- Primary Dataset: List of candidate pathogenic/likely pathogenic variants (e.g., from ClinVar, DVD).

- Control Databases: Genomic data from general populations (e.g., gnomAD) AND population-specific databases (e.g., KOVA2 for Korean, ToMMo for Japanese).

- Case Cohort: Genomic data from affected patients (optional, for validation).

- Software: A bioinformatic pipeline for variant annotation and frequency comparison (e.g., scripts written in Python or R).

Methodology:

- Variant Extraction: Compile a list of all variants classified as pathogenic (P) or likely pathogenic (LP) for your disease of interest from your primary dataset.

- Frequency Filtering: Cross-reference this list with the population-specific control database(s). Identify all P/LP variants that are present in the specific population.

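- Apply ACMG Criteria: For each identified variant, check its allele frequency against the ACMG/AMP benign frequency criteria, using disease-specific thresholds. The generic BA1 cutoff is 5%; the stricter values below are those used for hearing-loss genes.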

- Apply the BA1 (Benign, Stand-alone) criterion if the variant's MAF is ≥ 0.001 for dominant genes or ≥ 0.005 for recessive genes in the population-specific database.

- Apply the BS1 (Benign, Strong) criterion if the MAF is greater than expected for the disorder (e.g., ≥ 0.0002 for dominant genes).

- Literature & Founder Analysis: For variants flagged in the ACMG criteria step, conduct a thorough literature review to determine if they are known pathogenic founder alleles in that population. Evidence can include segregation studies, functional assays, or high frequency in case cohorts.

- Segregation & Penetrance Analysis (If data available): For variants not established as founders, perform segregation analysis in available family trios. Estimate penetrance using allele frequencies in large control and case cohorts.

- Final Reclassification: Reclassify variants as Benign (B) or Likely Benign (LB) if they meet BA1/BS1 criteria and lack evidence for pathogenicity. Retain as P/LP if they are validated founder alleles with high penetrance [14].
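The frequency-filtering and ACMG steps above can be sketched as follows. The thresholds are the ones quoted in this protocol; the recessive BS1 value is not given here, so it is left as `None` for you to fill in from the disease-specific specification you follow. `Variant`, `frequency_criterion`, and `flag_for_review` are hypothetical names, and the output is only a list of candidates for the literature, founder, and segregation review, not a final classification.

```python
from dataclasses import dataclass
from typing import Optional

# Thresholds as quoted in the protocol text. Recessive BS1 is not given here:
# set it from the disease-specific specification you follow.
BA1 = {"dominant": 0.001, "recessive": 0.005}
BS1 = {"dominant": 0.0002, "recessive": None}


@dataclass
class Variant:
    variant_id: str
    inheritance: str       # "dominant" or "recessive"
    population_maf: float  # MAF in the population-specific database


def frequency_criterion(v: Variant) -> Optional[str]:
    """Return "BA1", "BS1", or None from the population-specific MAF."""
    if v.population_maf >= BA1[v.inheritance]:
        return "BA1"
    bs1 = BS1[v.inheritance]
    if bs1 is not None and v.population_maf >= bs1:
        return "BS1"
    return None


def flag_for_review(variants, known_founders=frozenset()):
    """Split P/LP variants into (flagged, retained).

    Validated founder alleles are retained even if they meet a frequency criterion.
    """
    flagged, retained = [], []
    for v in variants:
        criterion = frequency_criterion(v)
        if criterion and v.variant_id not in known_founders:
            flagged.append((v.variant_id, criterion))
        else:
            retained.append(v.variant_id)
    return flagged, retained


# Hypothetical candidates; "var4" stands in for a validated founder allele.
candidates = [
    Variant("var1", "dominant", 0.002),
    Variant("var2", "dominant", 0.0005),
    Variant("var3", "recessive", 0.001),
    Variant("var4", "recessive", 0.01),
]
flagged, retained = flag_for_review(candidates, known_founders={"var4"})
print(flagged, retained)
```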

Diagram 1: Variant Reinterpretation Workflow

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function & Application |
|---|---|
| Diverse Genomic Databases (e.g., KOVA2, ToMMo) | Population-specific allele frequency databases are crucial reagents for accurate variant interpretation in non-European populations, preventing the misclassification of common benign variants as pathogenic [14]. |
| Equitable Partnership Agreements | Formal frameworks for co-development of research projects, including agreements on data ownership, authorship, and benefit-sharing, are essential reagents for conducting inclusive and non-extractive research in global contexts [17]. |
| Culturally Adapted Consent Materials | Translated and culturally appropriate informed consent forms and study information sheets are key reagents to building trust and ensuring meaningful participation of diverse communities, addressing historical mistrust [15]. |
| Name-Bearing Type Specimens | These are the ultimate biological reagents for taxonomy, serving as the standard reference for species identity. Ensuring fair global access to these specimens is fundamental for accurate biodiversity documentation [13]. |

Building a More Inclusive Lens: Advanced Tools and Strategies for Comprehensive Biodiversity Monitoring

Quantitative Comparison of Biodiversity Monitoring Technologies

The table below summarizes a quantitative comparison of key biodiversity monitoring methods, based on a 2025 case study, to help researchers select appropriate techniques. [18]

Table 1: Performance and Cost Comparison of Biodiversity Monitoring Methods

| Method | Key Taxa Detected | Average Species Richness per Site (Case Study) | Relative Cost per Species (5+ campaigns) | Key Strengths | Key Limitations |
|---|---|---|---|---|---|
| Passive Acoustic Monitoring (PAM) | Vocalizing species (e.g., birds, amphibians) | Highest (over 10 more species/site than other methods) | Lowest | High temporal coverage; automated species ID; cost-effective for long-term monitoring | Limited to vocalizing taxa; requires developed AI models; low recall for some calls |
| Environmental DNA (eDNA) | Vertebrates, Invertebrates, Microbes | Varies (assessed community composition) | Increases rapidly with multiple campaigns | Detects rare/elusive species; broad taxonomic range; non-invasive | Cannot reliably estimate abundance; reference database gaps; cost compounds with repeats |
| In-Person Surveys | Birds, Mammals, Amphibians, Reptiles | Intermediate | Intermediate | Provides behavioral & health data; minimal equipment | Time-consuming; observer bias; limited temporal coverage |
| Camera Trapping | Medium-to-large mammals, ground birds | Intermediate | Intermediate (but high equipment outlay) | Provides visual evidence & behavior data | Limited to triggered movements; data processing can be laborious |

Frequently Asked Questions (FAQs) and Troubleshooting

Environmental DNA (eDNA)

Q: Our eDNA results show low species detections. What could be the cause?

- A: Low detection rates can stem from several factors. First, ensure samples are not cross-contaminated during collection or in the lab by using gloves, sterilized equipment, and single-use containers. [19] Second, the genetic reference databases for your target region or taxonomic group may be incomplete; verify primer specificity and check database coverage for your study area. [20] [19] Finally, eDNA degrades quickly; transport samples on ice and freeze them immediately upon arrival at the lab. [21]

Q: Can eDNA be used to track the functional recovery of an ecosystem during restoration?

- A: Yes. eDNA metabarcoding is highly effective for tracking community-wide shifts and functional recovery. It allows for the rapid and standardized monitoring of multiple taxa simultaneously across a restoration chronosequence, providing a high-resolution view of ecological succession that is difficult to achieve with traditional methods. [19]

Q: Our eDNA study failed to detect a known invasive species in the area. Why?

- A: False negatives can occur if the invasive species is at a very low density and sheds little DNA, or if its DNA is patchily distributed in the environment. Increase sampling effort (both spatial and temporal) to improve detection probability for rare species. [19] Also, confirm that your molecular assays (primers/probes) are validated for the specific invasive species you are targeting. [21]
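The effect of extra sampling can be estimated with a simple model. This sketch assumes replicate samples are independent and share a constant per-sample detection probability `p_single`, which you would estimate from pilot data; patchy DNA distribution can violate both assumptions, so treat the result as an optimistic minimum rather than a guarantee. The function names and the 20% example value are hypothetical.

```python
import math


def cumulative_detection(p_single, n_samples):
    """P(at least one detection) across n independent replicate samples."""
    return 1 - (1 - p_single) ** n_samples


def samples_needed(p_single, target=0.95):
    """Smallest number of replicates whose cumulative detection probability reaches target."""
    if not 0 < p_single < 1:
        raise ValueError("p_single must be between 0 and 1 (exclusive)")
    return math.ceil(math.log(1 - target) / math.log(1 - p_single))


# A hypothetical rare species detected in 20% of single samples:
print(samples_needed(0.2))  # → 14 replicates for 95% detection probability
print(cumulative_detection(0.2, 5))
```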

Bioacoustics / Passive Acoustic Monitoring (PAM)

Q: The AI model for our acoustic data has a high false-positive rate for certain bird species. How can we improve accuracy?

- A: This is a common challenge. Always implement a confidence threshold and manually validate a subset of detections, especially for species known to have similar calls. [18] In the 2025 case study, researchers used manual validation to discard any automated detections with less than a 95% chance of being true positives. [18] If possible, retrain or fine-tune the AI model (e.g., BirdNET) with localized audio data to improve its performance for your specific region. [18]

Q: How should we place recording devices in a woodland environment?

- A: To capture representative biodiversity, use multiple recording devices distributed across the survey site; in small woodlands, a single device may capture only ~73% of species richness. As a general rule, space devices 500m apart to maximize coverage while avoiding double-counting, and never place them closer than 250m. [22]
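A regular grid is one simple way to apply the spacing guidance. The sketch below assumes a rectangular site and places recorders at cell centres, choosing the number per axis so the actual spacing never falls below the 500m target; real layouts will need adjusting for habitat boundaries and access, and the function names are hypothetical.

```python
import math


def grid_positions(width_m, height_m, spacing_m=500.0):
    """Recorder coordinates (metres) on a regular grid over a rectangular site.

    The count per axis is floor(side / spacing), so the realised spacing is
    never smaller than spacing_m; each recorder sits at the centre of its cell.
    """
    nx = max(1, int(width_m // spacing_m))
    ny = max(1, int(height_m // spacing_m))
    xs = [(i + 0.5) * width_m / nx for i in range(nx)]
    ys = [(j + 0.5) * height_m / ny for j in range(ny)]
    return [(x, y) for x in xs for y in ys]


def min_separation(points):
    """Smallest pairwise distance between recorders (needs at least two points)."""
    return min(math.dist(p, q) for i, p in enumerate(points) for q in points[i + 1:])


positions = grid_positions(1500, 1000)
assert min_separation(positions) >= 250, "recorders closer than the 250m minimum"
print(len(positions), min_separation(positions))
```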

Q: What is the main limitation of PAM?

- A: The primary limitation is its restriction to vocalizing taxa with developed AI detection models, which are currently most advanced for birds and amphibians. [18] Performance can vary significantly across taxa, with many aquatic and invertebrate species being underrepresented in models. Background noise can also affect reliability. [20]

Remote Sensing

Q: Can remote sensing directly monitor animal biodiversity?

- A: Generally, no. Satellite-based remote sensing is excellent for assessing habitat conditions, structure, and threats (e.g., deforestation), but it is limited in directly monitoring wildlife beyond very large megafauna. It is best used in conjunction with eDNA and bioacoustics, which are better suited for directly detecting species. [18] [23]

Q: What remote sensing technologies are most promising for detailed ecosystem mapping?

- A: Hyperspectral imaging is particularly powerful because it can reveal detailed plant traits such as chlorophyll content, leaf structure, and water stress, enabling detailed ecosystem mapping at fine scales. Sensors like the AVIS 4, which captures over 200 spectral bands with sub-metre resolution, are at the forefront of this capability. [20]
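As a simple illustration of deriving a plant-related index from hyperspectral bands, the sketch below computes NDVI by picking the bands nearest to 670 nm (red) and 800 nm (near-infrared). NDVI is a coarse greenness index rather than a direct chlorophyll measurement, the band choices are assumptions, and no particular sensor's file format (including AVIS 4's) is assumed: the input is a plain NumPy reflectance array of shape (rows, cols, bands).

```python
import numpy as np


def band_index(wavelengths_nm, target_nm):
    """Index of the band whose centre wavelength is closest to target_nm."""
    return int(np.argmin(np.abs(np.asarray(wavelengths_nm, dtype=float) - target_nm)))


def ndvi(cube, wavelengths_nm, red_nm=670.0, nir_nm=800.0):
    """NDVI = (NIR - Red) / (NIR + Red) for a (rows, cols, bands) reflectance cube."""
    red = cube[:, :, band_index(wavelengths_nm, red_nm)].astype(float)
    nir = cube[:, :, band_index(wavelengths_nm, nir_nm)].astype(float)
    total = nir + red
    out = np.full(red.shape, np.nan)
    # Leave pixels with zero total reflectance as NaN instead of dividing by zero.
    np.divide(nir - red, total, out=out, where=total != 0)
    return out


# Synthetic 2x2-pixel cube with hypothetical 3 nm band centres.
wavelengths = np.linspace(400, 1000, 201)
cube = np.full((2, 2, wavelengths.size), 0.05)
cube[:, :, band_index(wavelengths, 800)] = 0.45
print(ndvi(cube, wavelengths))
```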

Data Management and Integration

Q: What are the best practices for managing and storing the large datasets generated by these technologies?

- A: Establish a clear workflow that separates raw data from processed data, with specific standards and metadata requirements for each. [20] Prioritize the storage of raw data to allow for future re-analysis as methods improve. Where possible, use common national or European infrastructures (e.g., through projects like BioAgora and Priodiversity) that mandate standards and promote collaboration. [20]

Q: How can we integrate data from historical surveys with new molecular and acoustic data?

Experimental Protocols for Key Technologies

Detailed Protocol: eDNA Metabarcoding for Aquatic Biodiversity

This protocol is adapted for mangrove and freshwater ecosystems, as described in recent studies. [18] [19]

Workflow Overview:

Step-by-Step Methodology:

Field Sampling:

- Materials: Sterile gloves, single-use water collection bottles or Niskin bottles, cooler with ice.

- Procedure: Collect bulk water samples from multiple random points within the target water body (e.g., farm dam, mangrove creek). Pool the water samples into a single, sterile container to create a composite sample for the site. Wear gloves at all times and change them between sites to prevent cross-contamination. [18] [19]

Filtration:

- Materials: Sterile filtration units, peristaltic pump (if needed), 0.22µm sterile mixed cellulose ester filters.

- Procedure: In a clean area, filter a known volume of water (typically 1-2 liters) through the sterile filters, which trap the eDNA. Sterilize the filtration equipment between sites, or use single-use units. [19]

eDNA Extraction:

- Materials: Commercial eDNA extraction kit (e.g., DNeasy PowerWater Kit), centrifuge, microcentrifuge tubes.

- Procedure: Following the manufacturer's protocol for your chosen kit, extract the DNA from the filters. This typically involves lysis, binding, washing, and elution steps. Perform extractions in a dedicated clean lab to avoid contamination with PCR products or other DNA sources. [19]

PCR Amplification (Metabarcoding):

- Materials: PCR reagents, taxon-specific primers (e.g., general vertebrate primers: 12S-V5; general invertebrate primers: COI), thermocycler.

- Procedure: Amplify the target barcode gene regions using polymerase chain reaction (PCR) with primers designed to bind to a wide range of species within your taxon of interest. Include negative controls (blank water) to check for contamination. [18] [19]

Sequencing:

- Procedure: Purify the PCR products and prepare libraries for high-throughput sequencing on a platform such as Illumina MiSeq or NovaSeq. This step is typically performed by a specialized sequencing facility. [19]

Bioinformatic Analysis:

- Tools: Use pipelines like QIIME 2, DADA2, or OBITools.

- Procedure: Process raw sequences to remove low-quality reads, identify and correct errors, and cluster sequences into Molecular Operational Taxonomic Units (MOTUs); DADA2 instead resolves exact amplicon sequence variants (ASVs) without clustering. Compare these MOTUs or ASVs against reference databases (e.g., GenBank, BOLD) for taxonomic assignment. [19]
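The quality-filtering step is often done with an expected-error criterion, the approach behind the `maxEE` option of DADA2's `filterAndTrim`. The sketch below is a standalone illustration of that idea, not DADA2's implementation; it assumes Phred+33 quality strings, and the thresholds (`max_ee=2.0`, `min_len=100`) are illustrative.

```python
def expected_errors(quality, offset=33):
    """Expected number of base-call errors in a read: sum of 10^(-Q/10) over bases.

    quality: FASTQ quality string, assumed Phred+33 encoded (offset=33).
    """
    return sum(10 ** (-(ord(ch) - offset) / 10) for ch in quality)


def filter_reads(reads, max_ee=2.0, min_len=100):
    """Keep (sequence, quality) pairs that are long enough and have few expected errors."""
    return [
        (seq, qual)
        for seq, qual in reads
        if len(seq) >= min_len and expected_errors(qual) <= max_ee
    ]


reads = [
    ("A" * 150, "I" * 150),  # Q40 throughout
    ("A" * 150, "#" * 150),  # Q2 throughout
    ("A" * 80, "I" * 80),    # high quality but too short
]
print(len(filter_reads(reads)))
```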

Ecological Interpretation:

- Procedure: Analyze the taxonomic lists to calculate biodiversity metrics (e.g., species richness, community composition) and compare these across sites or over time to draw ecological conclusions about ecosystem health or restoration progress. [19]
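Because eDNA read counts do not reliably track abundance (see Table 1), a cautious first analysis works on presence/absence. The sketch below treats a taxon as detected when its reads reach a minimum count, then computes per-site richness and the Jaccard similarity between two sites; the 10-read threshold, function names, and example counts are hypothetical.

```python
def detected_taxa(read_counts, min_reads=10):
    """Taxa at a site whose read count meets a minimum-read detection threshold."""
    return {taxon for taxon, n in read_counts.items() if n >= min_reads}


def jaccard(taxa_a, taxa_b):
    """Jaccard similarity between two sets of detected taxa (1.0 if both are empty)."""
    union = taxa_a | taxa_b
    return len(taxa_a & taxa_b) / len(union) if union else 1.0


# Hypothetical read counts per taxon at two restoration sites.
site_1 = {"Taxon A": 150, "Taxon B": 12, "Taxon C": 3}
site_2 = {"Taxon A": 80, "Taxon D": 40}
t1, t2 = detected_taxa(site_1), detected_taxa(site_2)
print(len(t1), len(t2), jaccard(t1, t2))
```

Richness is simply the size of each detected set; comparing the same site across sampling dates instead of across sites gives a basic measure of community turnover during restoration.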

Detailed Protocol: Passive Acoustic Monitoring (PAM) with Automated Detection

This protocol is based on the 2025 case study and practical guidance for woodland surveys. [18] [22]

Workflow Overview:

Step-by-Step Methodology:

Deployment Planning:

- Materials: Map of the study area, GPS unit.

- Procedure: Determine the number and location of recording units. As a guideline, space AudioMoth or similar recorders at 500m intervals to maximize coverage. If surveying multiple habitats (e.g., wetland, woodland edge), place additional units. Mark all locations on a map. [22] [18]

Field Deployment:

- Materials: Autonomous recording units (e.g., AudioMoth), weatherproof cases, SD cards, locks/cables, GPS unit.

- Procedure: Program recorders with a schedule (e.g., record for one hour at dawn and one hour at dusk daily). Secure them to trees or posts at ~1.5m height. Record the GPS coordinates and device ID for each deployment. [22] [18]

Data Retrieval:

- Procedure: After the survey period (e.g., 1-3 months), retrieve the devices and download the audio files. Ensure files are backed up securely. [18]

Automated Species Identification:

- Tools: BirdNET-Analyzer, custom convolutional neural network (CNN) models for amphibians.

- Procedure: Process the audio files through the AI models. For birds, BirdNET is a widely used solution that analyzes spectrograms to identify species. [18] For other taxa like amphibians, you may need to develop or fine-tune a model using training libraries of local species' calls. [18]

Manual Validation:

- Procedure: This is a critical step. Manually listen to and verify a subset of the automated detections, especially for species with low confidence scores or known confusing calls. The 2025 case study manually validated over 17,000 detections and applied a 95% confidence threshold before accepting a detection as a true positive. [18]

Data Synthesis:

- Procedure: Compile the validated detections to calculate metrics such as species richness, relative activity (number of detections), and temporal activity patterns for each site. [18]
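The synthesis step can be sketched as a simple aggregation over validated detections. The input format (site, species, ISO-8601 timestamp), the function name, and the example detections are assumptions; relative activity here is just the raw count of detections, as in the text.

```python
from collections import Counter, defaultdict
from datetime import datetime


def summarise_detections(detections):
    """Per-site species richness, detections per species, and detections per hour.

    detections: iterable of (site, species, ISO-8601 timestamp) for validated calls.
    """
    per_species = defaultdict(Counter)
    per_hour = defaultdict(Counter)
    for site, species, timestamp in detections:
        per_species[site][species] += 1
        per_hour[site][datetime.fromisoformat(timestamp).hour] += 1
    return {
        site: {
            "richness": len(counts),
            "activity": dict(counts),
            "hourly": dict(sorted(per_hour[site].items())),
        }
        for site, counts in per_species.items()
    }


# Hypothetical validated detections from two recorders.
detections = [
    ("W1", "Species A", "2025-05-01T05:10:00"),
    ("W1", "Species A", "2025-05-01T05:40:00"),
    ("W1", "Species B", "2025-05-01T19:05:00"),
    ("W2", "Species A", "2025-05-02T05:20:00"),
]
print(summarise_detections(detections))
```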

Research Reagent Solutions and Essential Materials

Table 2: Essential Materials for Novel Biodiversity Monitoring

| Category | Item | Specific Example / Specification | Primary Function |
|---|---|---|---|
| eDNA Sampling | Sterile Water Collection Bottles | Single-use, non-DNA binding material | Collect water samples without contamination |
| | Filtration Equipment | 0.22µm sterile filters; peristaltic pump | Concentrate eDNA from large water volumes |
| | DNA Extraction Kit | DNeasy PowerWater Kit (Qiagen) | Isolate pure eDNA from environmental samples |
| | PCR Primers | 12S-V5 (vertebrates), COI (invertebrates) | Amplify taxon-specific gene regions for sequencing |
| Bioacoustics | Autonomous Recorder | AudioMoth | Record vocalizations on a programmable schedule |
| | AI Detection Software | BirdNET | Automatically identify bird & amphibian calls from audio |
| | Training Database | Xeno-Canto, Macaulay Library | Provide source data for building/validating AI models |
| Remote Sensing | Hyperspectral Sensor | AVIS 4 (200+ bands, sub-metre resolution) | Capture detailed spectral data for plant trait analysis |

Technical Support Center: FAQs & Troubleshooting

Q1: Our eDNA metabarcoding results for soil arthropods show inconsistent taxonomic assignments and low read counts for key insect groups. What are the primary sources of this bias and how can we mitigate them?

A: Inconsistent results in eDNA metabarcoding for soil arthropods often stem from primer bias, PCR inhibition, and DNA extraction efficiency. The Biodiversa+ framework emphasizes standardized protocols to address these issues for under-represented taxa.

- Primer Bias: Universal primers often have mismatches for specific insect orders.

- Solution: Perform in silico testing of primer pairs (e.g., EcoPCR) against reference databases. Consider using a primer cocktail targeting different marker regions (e.g., COI, 16S, 18S) to broaden taxonomic coverage.

- PCR Inhibition: Humic acids and other contaminants in soil co-extract with DNA and inhibit polymerase.

- Solution: Incorporate a pre-extraction soil wash step with a buffer like PBS or a commercial inhibitor removal kit. Use a qPCR assay with an internal positive control (IPC) to quantify inhibition levels.

- DNA Extraction Efficiency: The chitinous exoskeletons of insects require rigorous lysis.

- Solution: Implement a mechanical lysis step (e.g., bead beating) in addition to chemical lysis. Use a standardized soil mass and validate the protocol with a mock community of known composition.
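In silico primer testing is normally done with dedicated tools such as ecoPCR; the sketch below only illustrates the core idea and is not ecoPCR's algorithm. It scans one template strand without gaps, counts positions where the template base is not allowed by the primer's IUPAC code, and separately counts mismatches in the last three bases, because mismatches near a primer's 3' end are the most disruptive for amplification. The function names and test sequences are hypothetical, and a reverse primer would need to be matched against the reverse complement.

```python
# IUPAC nucleotide codes and the bases each one allows.
IUPAC = {
    "A": "A", "C": "C", "G": "G", "T": "T",
    "R": "AG", "Y": "CT", "S": "CG", "W": "AT", "K": "GT", "M": "AC",
    "B": "CGT", "D": "AGT", "H": "ACT", "V": "ACG", "N": "ACGT",
}


def mismatches(primer, site):
    """Positions where the template base is not allowed by the primer's IUPAC code."""
    return sum(1 for p, s in zip(primer.upper(), site.upper()) if s not in IUPAC[p])


def best_binding_site(primer, template, three_prime_window=3):
    """Gapless scan of one template strand for the lowest-mismatch primer site.

    Returns (start, total_mismatches, mismatches_in_3prime_window), or None
    if the template is shorter than the primer.
    """
    n = len(primer)
    best = None
    for start in range(len(template) - n + 1):
        window = template[start:start + n]
        total = mismatches(primer, window)
        if best is None or total < best[1]:
            tail = mismatches(primer[-three_prime_window:], window[-three_prime_window:])
            best = (start, total, tail)
    return best


print(best_binding_site("ACGRT", "TTACGATGG"))
```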

Q2: When applying the recommended genetic monitoring framework to assess intraspecific genetic diversity, we encounter challenges with low-quality DNA from non-invasive samples (e.g., insect leg fragments, degraded soil samples). What is the optimal library preparation method?

A: For low-quality/depleted DNA from non-invasive samples, the Biodiversa+ framework recommends Reduced-Representation Sequencing (RRS) methods like RADseq (Restriction-site Associated DNA sequencing) over whole-genome sequencing.

Detailed Protocol: Double-Digest RADseq (ddRADseq) for Degraded Samples

- DNA Quantification: Use a fluorescence-based method (e.g., Qubit) as spectrophotometry is unreliable for degraded DNA.

- Restriction Digestion: Digest 100ng of DNA (if available) with two restriction enzymes (a rare and a frequent cutter, e.g., SbfI and MseI) to create a reproducible subset of the genome.

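- Ligation of Adapters: Ligate uniquely barcoded adapters to each sample so that samples can be multiplexed. Barcodes alone cannot identify PCR duplicates in ddRADseq, because both fragment ends are fixed by restriction sites; for that, the adapters must also carry a degenerate or unique molecular identifier (UMI) sequence.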

- Size Selection: Perform strict size selection (e.g., 300-400bp) via gel electrophoresis or automated systems to exclude very short fragments and ensure library uniformity.

- PCR Amplification: Use a high-fidelity, low-bias polymerase for a minimal number of PCR cycles (e.g., 12-15) to prevent over-amplification of less degraded fragments.

- Library QC: Validate the final library using a Bioanalyzer or TapeStation.
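Before committing samples, the enzyme pair and size window can be checked against a reference sequence with an in silico double digest. This sketch assumes complete digestion, uses top-strand cut positions (SbfI CCTGCA^GG, MseI T^TAA), keeps only fragments with one SbfI end and one MseI end, and ignores overhang and adapter lengths, so if your size-selection window refers to the full library, shift it by the adapter length. Function names and the test sequence are hypothetical.

```python
import re

# Recognition motifs and top-strand cut offsets (SbfI: CCTGCA^GG, MseI: T^TAA).
ENZYMES = {"SbfI": ("CCTGCAGG", 6), "MseI": ("TTAA", 1)}


def cut_sites(seq):
    """Sorted (cut_position, enzyme) pairs; a lookahead finds overlapping motifs."""
    sites = []
    for name, (motif, offset) in ENZYMES.items():
        for m in re.finditer(f"(?={motif})", seq):
            sites.append((m.start() + offset, name))
    return sorted(sites)


def ddrad_fragments(seq, min_len=300, max_len=400):
    """(start, end) of fragments with one SbfI end and one MseI end inside the size window."""
    sites = cut_sites(seq.upper())
    fragments = []
    for (start, e1), (end, e2) in zip(sites, sites[1:]):
        if {e1, e2} == {"SbfI", "MseI"} and min_len <= end - start <= max_len:
            fragments.append((start, end))
    return fragments


# Synthetic sequence: one SbfI site, 340 bp spacer, one MseI site.
seq = "A" * 50 + "CCTGCAGG" + "C" * 340 + "TTAA" + "C" * 50
print(ddrad_fragments(seq))
```

Run on a reference genome, the number of returned fragments gives a rough expectation of how many loci the chosen enzyme pair and size window will yield.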

Comparison of Genetic Methods for Non-Invasive Samples

| Method | DNA Input Requirement | Best For Degraded DNA? | Cost per Sample | Key Advantage for Biodiversa+ |
|---|---|---|---|---|
| Whole Genome Sequencing | High (>>100ng) | No | High | Comprehensive genomic data |
| ddRADseq | Low-Moderate (~10-100ng) | Yes | Moderate | Cost-effective for population genetics |
| RNAseq | High (>>100ng) | No | High | Functional diversity assessment |
| Metabarcoding | Very Low (~1ng) | Yes | Low | Rapid biodiversity screening |

Q3: Our analysis of soil microbial functional diversity via metatranscriptomics is yielding high levels of "unknown" functional annotations. How can we improve the functional assignment for under-represented soil taxa?

A: High "unknown" annotations are common due to the vast uncultured microbial diversity in soil. The Biodiversa+ strategy involves a multi-layered database and analysis approach.

- Use Consolidated Databases: Do not rely on a single database (e.g., KEGG alone). Use integrated databases like MGnify, IMG/M, or the Non-redundant Protein Database (NR) from NCBI, which aggregate more sequences from environmental samples.

- Incorporate Custom Databases: Build a custom database using genomes from relevant soil-specific isolate and single-cell amplified genome (SAG) projects.

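- Apply Sensitive Search Tools: Use DIAMOND in blastx mode, which runs orders of magnitude faster than BLASTX while approaching its sensitivity in DIAMOND's sensitive modes, and HMMER profile searches (e.g., against Pfam), which are more sensitive than pairwise BLAST for detecting distant homologs.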

- Functional Inference from Taxonomy: For sequences that cannot be assigned a function, infer potential ecological roles based on their taxonomic assignment and the known functions of closely related, cultured relatives.
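A quick way to check whether adding databases reduces "unknown" assignments is to compare the fraction of queries with a significant hit across runs. The sketch below reads 12-column tabular output (the default column set of DIAMOND's and BLAST's format 6), keeps the highest-bitscore hit per query, and reports the annotated fraction; the e-value cutoff and the example rows are assumptions, and mapping hits to functions is not shown.

```python
import csv


def best_hits(tabular_lines, max_evalue=1e-5):
    """Highest-bitscore hit per query from 12-column BLAST-style tabular output.

    Columns assumed: qseqid sseqid pident length mismatch gapopen
    qstart qend sstart send evalue bitscore.
    """
    best = {}
    for row in csv.reader(tabular_lines, delimiter="\t"):
        query, subject = row[0], row[1]
        evalue, bitscore = float(row[10]), float(row[11])
        if evalue > max_evalue:
            continue
        if query not in best or bitscore > best[query][1]:
            best[query] = (subject, bitscore)
    return best


def annotated_fraction(query_ids, best):
    """Share of queries that received at least one hit passing the filter."""
    return sum(1 for q in query_ids if q in best) / len(query_ids)


# Hypothetical hits for three query reads.
lines = [
    "q1\tsA\t80\t100\t20\t0\t1\t100\t1\t100\t1e-30\t150",
    "q1\tsB\t70\t100\t30\t0\t1\t100\t1\t100\t1e-20\t120",
    "q2\tsC\t40\t50\t30\t0\t1\t50\t1\t50\t0.01\t30",
]
hits = best_hits(lines)
print(hits, annotated_fraction(["q1", "q2", "q3"], hits))
```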

Experimental Protocols

Protocol 1: Standardized Soil Biodiversity Sampling and eDNA Extraction for Metabarcoding

Objective: To obtain reproducible and inhibitor-free eDNA from soil cores for the metabarcoding of microfauna, mesofauna, and microbial communities.

Materials:

- Soil corer (5cm diameter)

- Sterile gloves and sampling bags

- Liquid Nitrogen or RNAlater for field preservation

- DNeasy PowerSoil Pro Kit (Qiagen) or equivalent

- Bead-beater or vortex adapter

- Microcentrifuge, qPCR machine

Methodology:

- Sampling: Take five soil cores from a 1m x 1m quadrat to a depth of 10cm. Composite and homogenize the cores in a sterile bag.

- Preservation: Immediately sub-sample 10g of soil into a tube and flash-freeze in liquid nitrogen or preserve in 15ml of RNAlater. Store at -80°C.

- Sub-sampling: Weigh out 0.25g of soil (wet weight) for DNA extraction.

- Inhibitor Removal: If samples from the site have previously shown strong PCR inhibition, perform an initial wash with a phosphate buffer to remove humic acids.

- DNA Extraction: Follow the manufacturer's protocol for the DNeasy PowerSoil Pro Kit, ensuring complete mechanical lysis.

- Quality Control: Quantify DNA using Qubit. Test for inhibition via qPCR with a universal 16S rRNA gene assay and an internal positive control (IPC).
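The IPC test works by comparing the IPC's Ct value in each extract with its Ct value in no-template controls. Inhibitors in the extract delay amplification and shift the Ct upward. The following is a minimal sketch. The function names are hypothetical, and the 1-cycle tolerance is a placeholder assumption that each lab should set from its own assay validation.

```python
from statistics import mean


def ipc_delta_ct(sample_ipc_ct: float, ntc_ipc_cts: list) -> float:
    """Delay of the IPC in a sample relative to the mean IPC Ct in no-template controls."""
    return sample_ipc_ct - mean(ntc_ipc_cts)


def flag_inhibited(samples: dict, ntc_ipc_cts: list, max_shift: float = 1.0) -> dict:
    """Classify extracts from their IPC Ct values.

    samples: {sample_id: IPC Ct, or None if the IPC did not amplify}
    max_shift: tolerated Ct delay in cycles (placeholder; validate per assay).
    Returns {sample_id: "ok" | "inhibited"}.
    """
    calls = {}
    for sample_id, ct in samples.items():
        if ct is None:  # no IPC signal at all: treat as complete inhibition
            calls[sample_id] = "inhibited"
        elif ipc_delta_ct(ct, ntc_ipc_cts) > max_shift:
            calls[sample_id] = "inhibited"
        else:
            calls[sample_id] = "ok"
    return calls
```

Extracts flagged as inhibited are commonly re-tested after dilution (e.g., 1:10). If the Ct shift disappears on dilution, inhibition rather than low template is the likely cause.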

Protocol 2: Assessing Insect Population Genetic Structure using ddRADseq

Objective: To generate genome-wide SNP data for population genetic analysis of insect species, focusing on under-sampled or cryptic species.

Materials:

- Tissue samples (leg, thorax muscle)

- DNeasy Blood & Tissue Kit (Qiagen)

- Restriction Enzymes (SbfI-HF, MseI)

- T4 DNA Ligase

- Size-selective magnetic beads (e.g., SPRIselect)

- High-fidelity PCR Master Mix

- Pippin Prep system or similar for precise size selection

- Illumina sequencing platform

Methodology:

- DNA Extraction: Extract high-molecular-weight DNA. Assess quantity with Qubit and integrity with a TapeStation or Bioanalyzer (a TapeStation DNA Integrity Number (DIN) >7 is ideal).

- Double Digestion: Set up a restriction digest with SbfI and MseI on 100-500ng of DNA. Incubate at 37°C for 2 hours.

- Adapter Ligation: Ligate uniquely barcoded P1 and P2 adapters to the digested fragments. Purify with SPRIselect beads.

- Size Selection: Perform a tight size selection (e.g., 350-450bp) to isolate the fragment library. This is critical for read length compatibility and reducing locus dropout.

- PCR Enrichment: Amplify the size-selected library with 12-15 cycles using primers complementary to the adapters.

- Sequencing: Pool equimolar amounts of each library and sequence on an Illumina NovaSeq (150bp paired-end recommended).
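Before committing to the SbfI/MseI pair and the size window, the expected number of loci can be estimated by digesting a related reference sequence in silico. The following is a minimal sketch under simplifying assumptions:

- Only top-strand cut positions are used, and overhangs are ignored.
- Lengths are insert lengths without adapters. If the wet-lab window is measured on adapter-ligated fragments, it must be shifted by the adapter length before comparing.
- In the usual ddRAD design, only fragments with one SbfI end and one MseI end are sequenced.

The function names are hypothetical.

```python
import re

# Recognition sequences and top-strand cut offsets. Both sites are palindromic,
# so scanning one strand finds every site.
SBFI = ("CCTGCAGG", 6)  # CCTGCA^GG
MSEI = ("TTAA", 1)      # T^TAA


def cut_positions(seq: str, site: str, offset: int) -> list:
    """Top-strand cut coordinates; the lookahead also catches overlapping sites."""
    return [m.start() + offset for m in re.finditer(f"(?={site})", seq)]


def ddrad_fragments(seq: str, min_len: int, max_len: int) -> list:
    """Return (start, end, length) of SbfI-MseI fragments inside the size window."""
    seq = seq.upper()
    cuts = sorted(
        [(pos, "SbfI") for pos in cut_positions(seq, *SBFI)]
        + [(pos, "MseI") for pos in cut_positions(seq, *MSEI)]
    )
    kept = []
    for (start, left), (end, right) in zip(cuts, cuts[1:]):
        length = end - start
        # Keep only mixed-end fragments (one SbfI end, one MseI end) in the window.
        if {left, right} == {"SbfI", "MseI"} and min_len <= length <= max_len:
            kept.append((start, end, length))
    return kept
```

Running this function over each contig of a related genome and counting the kept fragments gives a rough locus count for the chosen window. Narrowing the window reduces the count and the sequencing depth each sample needs.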

Visualizations

Diagram 1: Soil eDNA Metabarcoding Workflow

Diagram 2: ddRADseq Wet-Lab Process

Diagram 3: Data Integration for Under-represented Taxa

The Scientist's Toolkit: Research Reagent Solutions

| Reagent / Kit | Function in Biodiversa+ Context | Key Consideration |
|---|---|---|
| DNeasy PowerSoil Pro Kit (Qiagen) | Standardized extraction of inhibitor-free DNA from diverse soil types. | Critical for reproducible metabarcoding across European sites. |
| ZymoBIOMICS Microbial Community Standard | Mock community for validating metabarcoding and metagenomics workflows. | Essential for quantifying technical bias against under-represented taxa. |
| NEBNext Ultra II DNA Library Prep Kit | High-efficiency library construction for low-input genomic DNA (e.g., from insects). | Enables genetic diversity studies from small, non-invasive samples. |
| SPRIselect Beads (Beckman Coulter) | Size selection and clean-up for ddRADseq and other NGS libraries. | Provides reproducibility and controls library fragment size. |
| TaqMan Environmental Master Mix 2.0 | qPCR master mix resistant to common environmental inhibitors. | Used for quantification and inhibition testing of eDNA extracts. |
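A mock community quantifies technical bias by comparing the observed read share of each member with its known composition. The following is a minimal sketch using a log2 observed/expected ratio, where negative values indicate under-detection. Expected fractions should come from the manufacturer's certificate for the standard. The taxon names and numbers in any example are hypothetical. Reads assigned to taxa outside the mock community, such as contaminants, are ignored in this calculation.

```python
import math


def relative_abundance(counts: dict) -> dict:
    """Convert read counts to fractions of the total."""
    total = sum(counts.values())
    if total == 0:
        raise ValueError("no reads assigned to mock-community taxa")
    return {taxon: n / total for taxon, n in counts.items()}


def log2_bias(observed_counts: dict, expected_fraction: dict,
              pseudocount: float = 0.5) -> dict:
    """log2(observed share / expected share) for each expected mock-community taxon.

    The pseudocount keeps taxa with zero reads finite; it must be > 0 whenever
    a member may be missing from the observed counts.
    """
    counts = {t: observed_counts.get(t, 0) + pseudocount for t in expected_fraction}
    observed = relative_abundance(counts)
    total_expected = sum(expected_fraction.values())
    return {
        t: math.log2(observed[t] / (expected_fraction[t] / total_expected))
        for t in expected_fraction
    }
```

Because each extraction or sequencing batch can bias the mock community differently, the per-taxon values are most useful when tracked across batches.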

Welcome to the Technical Support Center

This support center provides troubleshooting guides and FAQs to help researchers implement inclusive and standardized protocols for biodiversity data collection and species identification. The guidance is framed within the broader thesis of addressing under-represented elements in biodiversity research, offering practical solutions to common methodological and collaborative challenges [7].

Troubleshooting Guides

Challenge: Linguistic Bias and Communication Barriers

Linguistic bias, particularly the dominance of English in science, can exclude valuable non-English research and create barriers for non-native English speakers [7].

- Problem: My team's research, published in a local language journal, is not being cited or recognized in international syntheses.

Solution:

- Action 1: Proactively publish key findings in bilingual formats (e.g., local language and English) or provide extended English abstracts and summaries [7].

- Action 2: Utilize institutional or funder resources to partner with professional translation services for wider dissemination.

- Action 3: Submit your work to journals that offer linguistically inclusive policies, such as translation services or multilingual abstracts [7].

- Problem: As a non-native English speaker, I face challenges in writing manuscripts and responding to reviewer comments.

Solution:

- Action 1: Seek out and use free online language support tools and writing workshops specifically designed for scientists.

- Action 2: Advocate within your collaborative networks for the establishment of peer language-review partnerships.

- Action 3: When submitting papers, check if the journal provides language editing support or has a policy of not rejecting papers solely based on English proficiency [7].

The following workflow outlines a strategic approach to mitigate linguistic bias in research:

Challenge: Undervalued Local Research Contributions

Research from underrepresented regions is often systematically undervalued due to its publication in local journals or reports not indexed in major international databases [7].

- Problem: Our local biodiversity monitoring data, crucial for regional conservation, is not visible in global databases such as GBIF, which limits its impact.

Solution:

- Action 1: Ensure data is structured according to global standards (e.g., using the Biodiversity Monitoring Standards Framework (BMSF) and Darwin Core archives) to facilitate integration [24].

- Action 2: Contribute validated data to regional and global data aggregators like GBIF, while applying the CARE (Collective Benefit, Authority to Control, Responsibility, and Ethics) principles to ensure that Indigenous rights and data sovereignty are respected [24].

- Action 3: Publish data papers in peer-reviewed journals to increase the findability and credibility of your datasets.
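Structuring data to Darwin Core largely means renaming local fields to standard terms and validating values. The following is a minimal sketch that writes a Darwin Core occurrence table as tab-separated text. The input field names (`lat`, `species`, and so on) and the `occurrenceID` prefix are hypothetical, and the term list is a small subset of Darwin Core. A complete Darwin Core Archive also needs `meta.xml` and `eml.xml` descriptor files, which this sketch omits. Publishing tools such as the GBIF Integrated Publishing Toolkit (IPT) generate those files.

```python
import csv
import io

# A small subset of standard Darwin Core terms.
DWC_TERMS = ["occurrenceID", "basisOfRecord", "scientificName", "eventDate",
             "decimalLatitude", "decimalLongitude", "geodeticDatum",
             "countryCode", "individualCount", "recordedBy"]


def to_dwc(record: dict, dataset_prefix: str) -> dict:
    """Map one local monitoring record (hypothetical field names) to Darwin Core terms."""
    lat, lon = float(record["lat"]), float(record["lon"])
    if not (-90 <= lat <= 90 and -180 <= lon <= 180):
        raise ValueError(f"invalid coordinates in record {record['id']}")
    return {
        "occurrenceID": f"{dataset_prefix}:{record['id']}",  # should be globally unique
        "basisOfRecord": "HumanObservation",
        "scientificName": record["species"],
        "eventDate": record["date"],       # ISO 8601, e.g. 2024-05-17
        "decimalLatitude": lat,
        "decimalLongitude": lon,
        "geodeticDatum": "WGS84",
        "countryCode": record["country"],  # ISO 3166-1 alpha-2
        "individualCount": int(record["count"]),
        "recordedBy": record["observer"],
    }


def write_occurrence_tsv(records: list, dataset_prefix: str) -> str:
    """Return a tab-separated occurrence table with a Darwin Core header row."""
    buf = io.StringIO()
    writer = csv.DictWriter(buf, fieldnames=DWC_TERMS, delimiter="\t",
                            lineterminator="\n")
    writer.writeheader()
    for rec in records:
        writer.writerow(to_dwc(rec, dataset_prefix))
    return buf.getvalue()
```

Rejecting malformed records at this stage, rather than after upload, keeps the validation feedback with the people who collected the data.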

- Problem: Critical reports submitted to local stakeholders are overlooked by the international research community.

Solution:

- Action 1: Deposit copies of technical reports in open-access, globally recognized repositories (e.g., Zenodo, institutional repositories) with persistent digital object identifiers (DOIs).

- Action 2: Structure the reports to include standardized metadata and keywords to improve discoverability via web searches.

- Action 3: Co-author synthesis papers with international collaborators that explicitly cite and build upon these local reports.

Challenge: Parachute Science and Extractive Practices

Parachute science occurs when international researchers conduct fieldwork in biodiversity-rich regions without meaningful partnership with local experts, leading to inequitable collaboration and capacity drain [7].

- Problem: An international team proposes a research project in our country but has not included local scientists in the design or budgeted for local capacity building.

Solution:

- Action 1: Establish a pre-collaboration agreement that outlines principles of equitable partnership, including co-development of research questions, fair budget allocation for local institutions, and agreements on data ownership and publication authorship [7].

- Action 2: Propose the creation of a liaison role within the project to facilitate communication and ensure local context is integrated [7].

- Action 3: Refer to the framework of collective responsibility, which assigns clear duties to all research participants to dismantle systemic barriers [7].

- Problem: As a local researcher, I am often only asked to provide logistical support or data, but not included in data analysis, interpretation, or publication.

Solution:

- Action 1: Clearly communicate your expectations and expertise at the outset of the project. Negotiate your role to include active participation in all stages of the research process.

- Action 2: Use the project to request training or shared analytical responsibilities that build the skills needed for full participation.

- Action 3: Advocate for authorship policies that recognize all substantial intellectual contributions, using role definitions such as CRediT (Contributor Roles Taxonomy).

Challenge: Standardizing Species Identification with Genetic Tools

Accurate species identification is fundamental, yet definitions of "species" and "subspecies" vary, impacting conservation policy, especially under legislation like the U.S. Endangered Species Act (ESA) [25].

- Problem: Genetic data from my study population shows evidence of hybridization, creating uncertainty about its taxonomic status and conservation priority.

Solution:

- Action 1: Do not rely on a single species concept. Integrate data from multiple sources (genomic, ecological, morphological, behavioral) to assess the population's status against the Biological Species Concept (reproductive isolation), the Phylogenetic Species Concept (shared ancestry), and ecological role [25].

- Action 2: Use genetic markers to create a "genetic fingerprint" for the population, which can help define its discreteness and significance, key criteria for defining a Distinct Population Segment (DPS) under the ESA [25] [26].

- Action 3: Consult with conservation genetics labs (e.g., the USFWS Conservation Genetics Community of Practice) that specialize in these techniques [26].
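One common way to summarize diagnostic-marker data from a putatively hybrid population is to pair a hybrid index (the proportion of alleles inherited from one parental species) with interspecific heterozygosity. This is an illustrative technique chosen here, not a method prescribed by [25] or [26]. The following minimal sketch assumes diploid genotypes at loci that are fixed for different alleles in the two parental species. Each genotype is coded as the number of parental-species-A alleles (0, 1, or 2), with `None` for missing data. The function name is hypothetical.

```python
def hybrid_index(genotypes: list) -> tuple:
    """Return (hybrid index, interspecific heterozygosity) for one individual.

    genotypes: per diagnostic locus, the count of parental-species-A alleles
    (0, 1 or 2); None marks a missing genotype and is skipped.
    Hybrid index: fraction of all called alleles that derive from species A.
    Heterozygosity: fraction of called loci carrying one allele from each species.
    """
    called = [g for g in genotypes if g is not None]
    if not called:
        raise ValueError("no genotyped diagnostic loci")
    if any(g not in (0, 1, 2) for g in called):
        raise ValueError("genotypes must be coded 0, 1 or 2")
    index = sum(called) / (2 * len(called))
    heterozygosity = sum(1 for g in called if g == 1) / len(called)
    return index, heterozygosity
```

The two values together are informative. First-generation hybrids are expected near an index of 0.5 with heterozygosity close to 1. Later-generation hybrids or backcrosses show lower heterozygosity for a similar or shifted index. Individuals near 0 or 1 resemble the parental species at the markers tested.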

- Problem: I need to definitively identify a species from a tissue sample or determine the sex of an individual that cannot be visually distinguished.

Solution:

- Action 1: Employ conservation genetics techniques using diagnostic genetic markers. This involves DNA extraction, PCR amplification of specific markers (e.g., for species identification or sex-linked genes), and sequencing or fragment analysis [26].

- Action 2: Compare the resulting genetic sequences or profiles to reference databases (e.g., BOLD, GenBank) for species identification.

- Action 3: For sex identification, use assays designed to amplify genes located on sex chromosomes, with results interpreted based on the presence/absence or dosage of specific alleles.
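The matching and sex-calling logic in Actions 2 and 3 can be sketched as follows. Both functions are hypothetical. The 98% identity threshold and the 1-percentage-point ambiguity margin are placeholder assumptions, because appropriate barcode thresholds vary by marker and taxon. `call_sex_xy` assumes an XY system such as mammals, where amplification of a Y-linked marker (e.g., SRY) indicates a male. ZW systems such as birds would need different logic.

```python
def assign_species(hits: list, min_identity: float = 98.0, margin: float = 1.0) -> str:
    """Assign a species from reference hits given as (species_name, percent_identity).

    Returns the species name, or a flag when no confident call is possible.
    """
    if not hits:
        return "no match"
    ranked = sorted(hits, key=lambda hit: hit[1], reverse=True)
    top_species, top_identity = ranked[0]
    if top_identity < min_identity:
        return "below threshold"
    # Other species scoring within `margin` of the best hit make the call ambiguous.
    rivals = {sp for sp, pid in ranked
              if sp != top_species and top_identity - pid <= margin}
    if rivals:
        return "ambiguous: " + ", ".join(sorted({top_species} | rivals))
    return top_species


def call_sex_xy(control_amplified: bool, y_marker_amplified: bool) -> str:
    """Presence/absence sex call for an XY system using a Y-linked marker.

    The control amplicon (e.g., an X-linked or autosomal fragment) guards
    against reading a failed PCR as "female".
    """
    if not control_amplified:
        return "failed"
    return "male" if y_marker_amplified else "female"
```

A confident match is only as good as the reference library. If the true species has no reference record, the search can still return a high-identity hit to a close relative, so taxonomic coverage of BOLD or GenBank for the study group should be checked.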

The table below summarizes the key concepts for defining species and their relevance to conservation:

| Concept | Core Principle | Key Data Required | Relevance to Conservation |
|---|---|---|---|
| Biological [25] | Groups of interbreeding individuals reproductively isolated from others. | Observations of mating, hybrid viability/fertility, pre- and post-mating isolation mechanisms. | High; directly assesses gene flow but can be challenging to test in the wild. |
| Phylogenetic [25] | Groups of organisms descended from a common ancestor, sharing lineage-specific mutations. | Genomic DNA sequence data to construct phylogenetic trees and identify monophyletic groups. | High; useful for identifying evolutionarily significant units (ESUs) based on shared ancestry. |
| Chronospecies [25] | A species identified at one point in time that has changed enough from its ancestor to be considered distinct. | Morphological and/or genetic time-series data from fossil and modern specimens. | Limited; primarily paleontological, difficult to apply to modern conservation decisions. |
| Subspecies [25] | Phylogenetically distinguishable populations with partial restriction of gene flow. | Genetic, morphological, and ecological data to demonstrate distinctiveness and geographic separation. | High; the U.S. ESA allows subspecies to be listed and protected [25]. |
| Distinct Population Segment (DPS) [25] | A vertebrate population segment that is discrete and significant to the species. | Data on population discreteness (genetic, ecological) and significance (ecological, unique adaptations). | Critical; the legal mechanism under the U.S. ESA for protecting populations below subspecies level [25]. |

The following workflow provides a protocol for standardized species identification integrating multiple data types:

Frequently Asked Questions (FAQs)