Accelerating Ocean Modeling: A Guide to GPU-Accelerated SCHISM Using CUDA Fortran


Abstract

This article provides a comprehensive guide for researchers and scientists on implementing GPU acceleration for the SCHISM ocean model using CUDA Fortran. Covering foundational concepts, we explore the motivation for moving from CPU to GPU computing to achieve lightweight, high-performance simulations. The methodological section details the identification of computational hotspots and the practical steps for CUDA Fortran integration. We address common troubleshooting and optimization strategies to overcome performance bottlenecks, and finally, we present a validation framework and comparative performance analysis against CPU and alternative GPU implementations, demonstrating speedups of over 35x in large-scale experiments.

Why GPU Acceleration is Revolutionizing SCHISM Ocean Simulations

The Semi-implicit Cross-scale Hydroscience Integrated System Model (SCHISM) is an open-source, community-supported modeling system designed for seamless simulation of three-dimensional baroclinic circulation across creek-lake-river-estuary-shelf-ocean scales [1]. Built as a derivative of the original SELFE model (v3.1dc), SCHISM has evolved with significant differences and enhancements, now distributed under an Apache v2 license [1] [2]. This model employs an unstructured grid approach that allows it to efficiently handle complex geometries and multiple physical processes, making it particularly valuable for coastal oceanography, flood forecasting, and earth system applications.

SCHISM represents a comprehensive modeling framework that integrates various physical and biological processes within a single computational environment. Its capacity for cross-scale simulation—from global ocean dynamics down to small rivers and creeks—without requiring grid nesting sets it apart from many traditional ocean models [3]. This capability positions SCHISM as a powerful tool for addressing complex environmental challenges, particularly in the context of climate change and coastal natural disasters that demand high-resolution forecasting across interconnected systems.

Core Algorithmic Framework

Numerical Foundations

SCHISM's algorithmic foundation centers on solving the Reynolds-averaged Navier-Stokes equations in their hydrostatic form, coupled with transport equations for salt and heat [2]. The model employs a semi-implicit finite-element/finite-volume method combined with an Eulerian-Lagrangian algorithm to address a wide range of physical processes while maintaining numerical stability and efficiency [1]. This hybrid approach judiciously mixes higher-order with lower-order methods to obtain stable and accurate results while enforcing mass conservation through a finite-volume transport algorithm [1].

The core governing equations include:

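Momentum equation:

$$\frac{D\mathbf{u}}{Dt} = \frac{\partial}{\partial z}\left(\nu \frac{\partial \mathbf{u}}{\partial z}\right) - g\nabla\eta + \mathbf{f}$$

where $\mathbf{u}$ is the horizontal velocity, ν is the vertical eddy viscosity coefficient, g is gravitational acceleration, η is the free surface height, and f represents other forcing terms [4].

Continuity equations (three-dimensional and depth-integrated forms):

$$\nabla \cdot \mathbf{u} + \frac{\partial w}{\partial z} = 0$$

$$\frac{\partial \eta}{\partial t} + \nabla \cdot \int_{-h}^{\eta} \mathbf{u}\, dz = 0$$

Transport equations:

$$\frac{\partial C}{\partial t} + \nabla \cdot (\mathbf{u} C) + \frac{\partial (w C)}{\partial z} = \frac{\partial}{\partial z}\left(\kappa \frac{\partial C}{\partial z}\right) + F_h$$

Here, as in the standard hydrostatic formulation, $\nabla$ is the horizontal gradient operator, w is the vertical velocity, h is the bathymetric depth, C is the tracer concentration (salinity or temperature), κ is the vertical eddy diffusivity, and $F_h$ collects horizontal diffusion and source terms.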

These formulations enable SCHISM to handle both barotropic and baroclinic processes across diverse spatial and temporal scales [2].

Key Numerical Innovations

SCHISM incorporates several innovative algorithmic features that enhance its capability for cross-scale modeling:

Unstructured Mixed Grids: Utilizes hybrid triangular/quadrangular elements in the horizontal dimension and flexible LSC2 or hybrid SZ coordinates in the vertical dimension, enabling natural fitting to complex geometries and bathymetry [1] [2].

Semi-Implicit Time Stepping: Employs a semi-implicit scheme that bypasses the most stringent CFL stability constraints, allowing for significantly larger time steps compared to explicit methods without mode splitting errors [1] [3].

Advanced Transport Solvers: Implements mass-conservative, monotone, higher-order transport solvers including TVD2 and WENO schemes that maintain accuracy across a wide range of Courant numbers [1] [2].

Robust Wetting/Drying: Naturally incorporates wetting and drying of tidal flats through a mass-conservative approach suitable for inundation studies [1].

Polymorphism: A single grid can dynamically mimic 1D, 2DV, 2DH, or 3D configurations based on local hydrodynamic conditions [3].
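To make the time-step argument concrete, the sketch below estimates the Courant number of surface gravity waves, C = sqrt(g h) Δt / Δx, for a single cell. The depth, grid spacing, and time step are illustrative assumptions rather than values from any SCHISM configuration, and the function names are made up for this example.

```python
import math

G = 9.81  # gravitational acceleration (m/s^2)

def gravity_wave_courant(depth_m, dx_m, dt_s):
    """Courant number of shallow-water gravity waves: sqrt(g*h) * dt / dx."""
    wave_speed = math.sqrt(G * depth_m)
    return wave_speed * dt_s / dx_m

def max_explicit_dt(depth_m, dx_m, courant_limit=1.0):
    """Largest time step allowed by the given Courant limit."""
    return courant_limit * dx_m / math.sqrt(G * depth_m)

# Hypothetical coastal cell: 20 m deep, 200 m wide.
depth, dx = 20.0, 200.0
print(f"explicit limit: {max_explicit_dt(depth, dx):.1f} s")
print(f"Courant number at dt = 150 s: {gravity_wave_courant(depth, dx, 150.0):.1f}")
```

For this hypothetical cell an explicit gravity-wave scheme would be limited to a step of about 14 s, while a 150-second step corresponds to a Courant number of roughly 10. Steps of that size are what a semi-implicit treatment of the free surface is meant to allow.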

Table 1: Key Numerical Algorithms in SCHISM

| Algorithm Category | Specific Methods | Key Advantages |
|---|---|---|
| Horizontal Discretization | Finite-element/finite-volume hybrid; Unstructured triangular/quadrangular grids | Superior boundary fitting; local refinement/derefinement; adapts to complex geometry [1] [2] |
| Vertical Discretization | Hybrid SZ coordinates; LSC2 (Localized Sigma Coordinates with Shaved Cells) | Accurate representation of complex topographic variations; reduced pressure gradient errors [1] [2] |
| Time Stepping | Semi-implicit; Eulerian-Lagrangian | Bypasses the most stringent CFL stability constraints; no mode-splitting errors; numerical efficiency [1] [3] |
| Momentum Advection | Higher-order Eulerian-Lagrangian with ELAD filter | Reduced numerical diffusion; stability in eddying regimes [1] [2] |
| Transport Solvers | TVD2; WENO | Mass conservation; monotonicity; higher-order accuracy [1] [2] |

Computational Characteristics and Demands

Performance Characteristics

SCHISM carries a high computational burden because of its comprehensive physics and cross-scale resolution capabilities [5]. The model is parallelized with MPI domain decomposition and generally scales well across multiple computing cores [2]. Typical time steps range from 100 to 400 seconds, with output intervals configured according to the application (e.g., every 0.5 hours for storm surge simulation) [4] [5].

The computational demands vary significantly based on model domain and resolution. For example, a global implementation with ~4.6 million nodes and ~9 million triangular elements represents a large-scale simulation that requires high-performance computing resources [3]. In contrast, regional applications with ~70,775 grid nodes and 30 vertical layers can run on more modest computational resources, though still demanding substantial processing power [4].

GPU Acceleration with CUDA Fortran

Recent research has developed GPU-SCHISM, a GPU-accelerated parallel version using the CUDA Fortran framework to enhance computational efficiency [4]. This implementation specifically targets the Jacobi iterative solver module, identified as a computational hotspot, for GPU acceleration. Performance assessments demonstrate that:

- For small-scale classical experiments, a single GPU improves the efficiency of the Jacobi solver by 3.06 times and accelerates the overall model by 1.18 times [4].

- For large-scale experiments with 2,560,000 grid points, the GPU speedup ratio reaches 35.13 compared to CPU-only implementation [4].

- The performance advantage of GPUs becomes more pronounced with higher-resolution calculations that better leverage the parallel architecture of GPUs [4].

- Comparative analysis shows that CUDA-based GPU acceleration consistently outperforms OpenACC implementations across all experimental conditions [4].
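The gap between the 3.06x solver speedup and the 1.18x overall speedup in the small-scale case can be explored with Amdahl's law. The sketch below inverts the law to estimate what share of CPU runtime the solver would need to occupy for both figures to hold. This is a simplification that ignores transfer overhead, the resulting fraction is not a number reported in [4], and the function names are illustrative.

```python
def amdahl_speedup(fraction, kernel_speedup):
    """Overall speedup when `fraction` of the runtime is accelerated by `kernel_speedup`."""
    return 1.0 / ((1.0 - fraction) + fraction / kernel_speedup)

def implied_fraction(overall_speedup, kernel_speedup):
    """Invert Amdahl's law: runtime share the kernel must have to explain both speedups."""
    return (1.0 - 1.0 / overall_speedup) / (1.0 - 1.0 / kernel_speedup)

# Figures quoted above for the small-scale experiment [4].
solver_speedup = 3.06
overall_speedup = 1.18

p = implied_fraction(overall_speedup, solver_speedup)
print(f"implied solver share of CPU runtime: {p:.1%}")
print(f"ceiling if the solver cost nothing: {1.0 / (1.0 - p):.2f}x")
```

Under this assumption the solver accounts for a little under a quarter of the CPU runtime, which caps the overall gain at about 1.3x however fast the solver becomes. Larger overall gains would require accelerating further parts of the model.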

Table 2: Computational Performance of SCHISM on Different Hardware Platforms

| Hardware Configuration | Problem Scale | Performance Metrics | Key Findings |
|---|---|---|---|
| Multi-core CPU (MPI) | Global ocean (4.6M nodes) | Good scalability across cores | Suitable for large-scale production runs [3] |
| Single GPU (CUDA Fortran) | Small-scale (classical tests) | 1.18x overall speedup; 3.06x solver speedup | CPU has advantages in small-scale calculations [4] |
| Single GPU (CUDA Fortran) | Large-scale (2.56M nodes) | 35.13x speedup ratio | GPU particularly effective for high-resolution calculations [4] |
| Multi-GPU (CUDA Fortran) | Various scales | Reduced acceleration with added GPUs | Communication overhead limits multi-GPU scaling [4] |

The GPU acceleration approach represents a significant step toward lightweight operational deployment of storm surge numerical forecasting systems, potentially enabling high-resolution forecasting at coastal stations with limited hardware resources [4].

Experimental Protocols for GPU-Accelerated SCHISM

Model Setup and Configuration

Implementing GPU-accelerated SCHISM simulations requires careful attention to both software configuration and hardware considerations. The following protocol outlines the essential steps for configuring SCHISM with CUDA Fortran acceleration:

Source Code Acquisition: Download SCHISM v5.8.0 or later from the official repository (https://ccrm.vims.edu/schismweb/) [1] [4].

Compilation with CUDA Fortran: Compile the model using CUDA Fortran compilers, ensuring compatibility between the compiler version and the GPU hardware drivers.

Minimum Input Files Preparation:

- hgrid.gr3: Horizontal grid definition

- vgrid.in: Vertical grid configuration

- param.nml: Main parameter settings

- Friction file (drag.gr3, rough.gr3, or manning.gr3)

- bctides.in: Boundary conditions for tides [6]

GPU Configuration: For single-GPU execution, configure the model to offload computational hotspots (particularly the Jacobi solver) to the GPU while managing data transfer efficiently between CPU and GPU memory spaces [4].

Execution Command: Run the model using MPI with appropriate process allocation, typically:

mpirun -np NPROC ./pschism <# scribes>

where NPROC represents the number of MPI processes [6].

Performance Benchmarking Methodology

To quantitatively evaluate the acceleration performance of GPU-SCHISM, researchers should implement the following benchmarking protocol:

Baseline Establishment:

- Run the standard CPU-based SCHISM on a reference system with documented specifications

- Execute both small-scale (e.g., ~70,775 grid nodes) and large-scale (e.g., >2 million grid nodes) test cases

- Record execution times for complete simulations and for specific computational kernels, particularly the Jacobi solver

GPU-Accelerated Execution:

- Execute the same test cases using GPU-SCHISM on comparable hardware

- Maintain identical model parameters, time steps, and output configurations

- Ensure minimal background processes to reduce timing variability

Metrics Collection:

- Measure total execution time for each simulation

- Profile computational hotspots to identify solver-specific speedup

- Calculate speedup ratios as: T_CPU / T_GPU

- Verify numerical consistency between CPU and GPU results to ensure acceleration doesn't compromise accuracy [4]

Scalability Assessment:

- Evaluate multi-GPU performance using strong and weak scaling metrics

- Quantify communication overhead in multi-GPU configurations

- Assess power efficiency comparing CPU and GPU implementations [4]
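The metrics in this protocol reduce to a few small calculations. The sketch below shows one way to compute the speedup ratio and a CPU-versus-GPU consistency check. The timings, elevation values, tolerance, and function names are all hypothetical and would need to be replaced with measured values and a tolerance suited to the application.

```python
def speedup(t_cpu, t_gpu):
    """Speedup ratio T_CPU / T_GPU; values above 1 mean the GPU run is faster."""
    if t_cpu <= 0 or t_gpu <= 0:
        raise ValueError("timings must be positive")
    return t_cpu / t_gpu

def max_abs_difference(reference, candidate):
    """Largest absolute difference between two equally sized result arrays."""
    if len(reference) != len(candidate):
        raise ValueError("arrays must have the same length")
    return max(abs(r - c) for r, c in zip(reference, candidate))

def is_consistent(reference, candidate, tolerance=1e-6):
    """True when every value agrees within the chosen absolute tolerance."""
    return max_abs_difference(reference, candidate) <= tolerance

# Hypothetical timings (seconds): (CPU, GPU) for the full run and the solver.
timings = {"total": (1200.0, 1000.0), "jacobi_solver": (300.0, 100.0)}
for name, (t_cpu, t_gpu) in timings.items():
    print(f"{name}: {speedup(t_cpu, t_gpu):.2f}x")

# Hypothetical water elevations (metres) from the two runs.
eta_cpu = [0.512, 0.498, 0.731]
eta_gpu = [0.512, 0.498, 0.731000001]
print("consistent:", is_consistent(eta_cpu, eta_gpu))
```

Reporting the solver and whole-model ratios side by side, as here, keeps the distinction between kernel-level and end-to-end gains visible.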

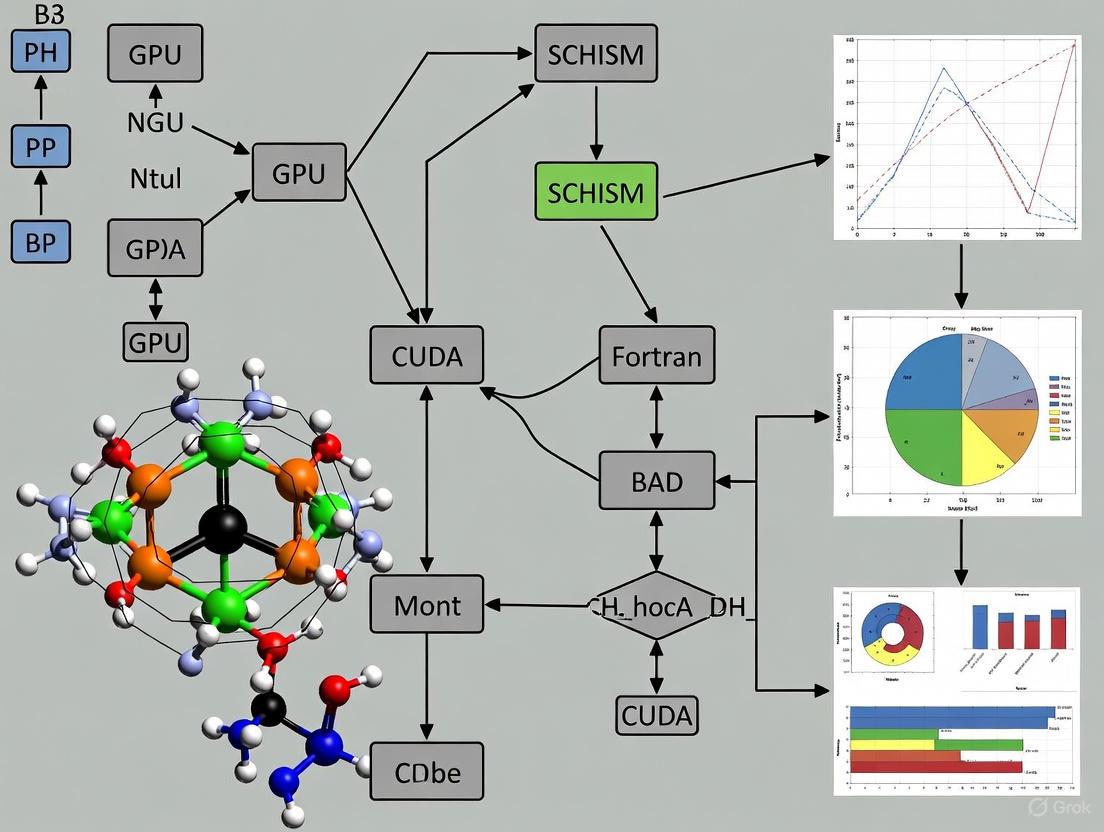

GPU-Accelerated SCHISM Workflow: Data flow between CPU and GPU components during simulation.

Application Case Studies

Global Tidal Simulation

A notable application demonstrating SCHISM's cross-scale capabilities is a global tidal simulation using SCHISM v5.10.0 [3]. This implementation featured:

- A global unstructured mesh with ~4.6 million nodes and ~9 million triangular elements

- Nominal open ocean resolution of 10-15 km, refining to ~3 km at most coastlines

- Higher resolution (1-2 km) in regions of interest like North America and the western Pacific

- Detailed representation of estuaries and rivers along the US West Coast without grid nesting

The model achieved impressive accuracy with complex root-mean-square errors of 4.2 cm for the M2 tidal constituent and 5.4 cm for all five major constituents in the deep ocean [3]. This performance demonstrates SCHISM's capacity for seamless simulation from global scales into estuaries, potentially serving as the backbone for global tide surge and compound flooding forecasting frameworks.

Regional Storm Surge Modeling

For regional-scale applications, SCHISM has been implemented along the coast of Fujian Province, China, for storm surge simulation [4]. This configuration featured:

- 70,775 grid nodes with refinement near the Fujian coast and around Taiwan Island

- 30 vertical layers using the LSC2 coordinate system

- Bathymetric data combining nautical charts (nearshore) and ETOPO1 (offshore)

- 300-second time step with 5-day forecast duration

This regional implementation provided the test case for evaluating GPU acceleration performance, demonstrating the practical utility of GPU-SCHISM for operational forecasting scenarios where computational efficiency is critical for timely predictions.
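The configuration above fixes how many times the model advances per forecast. The short sketch below works out the step count from the 300-second time step and 5-day duration. The helper name is illustrative.

```python
SECONDS_PER_DAY = 86_400

def step_count(forecast_days, dt_seconds):
    """Number of model time steps needed to cover the forecast window."""
    total = forecast_days * SECONDS_PER_DAY
    if total % dt_seconds != 0:
        raise ValueError("forecast length is not a whole number of time steps")
    return total // dt_seconds

steps = step_count(forecast_days=5, dt_seconds=300)
print(steps)  # 1440
```

If the solver is entered once per time step, which is an assumption about the call pattern rather than something stated above, any fixed per-call cost such as a host-device transfer is paid 1,440 times per forecast.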

The Scientist's Toolkit: Essential Research Reagents

Table 3: Essential Computational Tools for SCHISM Research

| Tool/Category | Specific Implementation | Function in Research |
|---|---|---|
| Programming Languages | Fortran (with CUDA Fortran extensions), C/C++ | Core model implementation; GPU acceleration [4] [5] |
| Parallelization Frameworks | MPI, Coarray Fortran, OpenMP | Distributed memory parallelism; shared memory optimization [1] [7] |
| GPU Acceleration | CUDA Fortran, OpenACC | Computational hotspot acceleration; performance optimization [4] |
| Grid Generation Tools | Custom SCHISM grid tools | Horizontal (hgrid.gr3) and vertical (vgrid.in) grid creation [6] |
| Data Formats | NetCDF (outputs), GR3 (inputs) | Standardized input/output handling; interoperability [6] |
| Performance Profilers | GPU profiling tools (nvprof), MPI performance tools | Identification of computational bottlenecks; optimization guidance [4] |
| Visualization | VisIt (specified for SCHISM outputs) | Model result analysis and visualization [6] |

SCHISM Research Ecosystem: Hardware and software components for high-performance SCHISM simulations.

SCHISM represents a sophisticated modeling framework that successfully addresses the challenge of cross-scale simulation from creek to global ocean scales. Its algorithmic foundation combining semi-implicit time stepping, unstructured grids, and advanced numerical schemes provides both numerical stability and physical accuracy across diverse hydrodynamic regimes. The computational demands of these capabilities are substantial, but recent advances in GPU acceleration using CUDA Fortran offer promising pathways toward more efficient execution, particularly for high-resolution applications.

The integration of GPU acceleration specifically targets computational hotspots like the Jacobi solver, delivering significant speedup for large-scale problems while maintaining numerical accuracy. This development supports the trend toward lightweight operational deployment of sophisticated forecasting systems, potentially expanding access to high-resolution coastal modeling at regional forecasting centers with limited computational resources. As SCHISM continues to evolve, further optimization of multi-GPU scalability and memory management will enhance its utility for both research and operational applications.

High-resolution forecasting is essential for accurate prediction of oceanic and atmospheric phenomena, yet it presents formidable computational challenges. Traditional forecasting models rely on Central Processing Units (CPUs), which often struggle with the massive computational loads required for detailed simulations. This application note explores the paradigm shift towards Graphics Processing Unit (GPU)-accelerated computing, specifically within the context of the SCHISM (Semi-implicit Cross-scale Hydroscience Integrated System Model) ocean model using CUDA Fortran. As forecasting resolution increases to capture finer-scale processes, the computational burden outstrips the capabilities of CPU-based systems, creating bottlenecks that delay critical forecasting timelines. The parallel architecture of GPUs offers a transformative solution, enabling researchers to achieve unprecedented simulation speeds while maintaining numerical accuracy. This document provides a comprehensive technical overview of GPU implementation strategies, performance benchmarks, and detailed protocols for accelerating high-resolution forecasting systems, presenting a compelling case for GPU adoption in computational geoscience research and operational forecasting environments.

GPU vs. CPU Performance: Quantitative Analysis

The transition from CPU to GPU computing represents a fundamental architectural shift from latency-optimized to throughput-optimized processing. While CPUs typically contain a few to dozens of cores optimized for fast serial execution, GPUs comprise thousands of smaller, efficient cores designed to handle many tasks simultaneously. This parallel architecture makes GPUs particularly well-suited for the matrix and vector operations that dominate numerical weather and ocean prediction models.

Performance analysis of the GPU-accelerated SCHISM model demonstrates significant advantages over traditional CPU-based implementations. For large-scale experiments with 2,560,000 grid points, the GPU achieves a speedup ratio of 35.13 times compared to CPU performance [4]. This substantial acceleration enables researchers to run higher-resolution simulations or more ensemble members within practical timeframes, directly addressing the computational bottlenecks that have historically constrained forecasting detail and accuracy.

However, GPU superiority is scale-dependent. For smaller-scale classical experiments, the CPU maintains advantages due to lower overhead, with the GPU providing a more modest 1.18 times overall acceleration [4]. This performance relationship highlights the importance of matching computational architecture to problem scale, with GPUs delivering maximum benefit for large, computationally intensive simulations where their parallel architecture can be fully utilized.
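One simple way to picture this scale dependence is a cost model in which the GPU pays a fixed overhead (kernel launches and host-device transfers) plus a small per-point cost, while the CPU pays only a larger per-point cost. The sketch below uses entirely hypothetical cost constants chosen to show the shape of the curve. They are not measurements from [4].

```python
def cpu_time(n, cost_per_point):
    """CPU run time for n grid points."""
    return n * cost_per_point

def gpu_time(n, cost_per_point, fixed_overhead):
    """GPU run time: fixed launch/transfer overhead plus per-point work."""
    return fixed_overhead + n * cost_per_point

def break_even_points(cpu_cost, gpu_cost, fixed_overhead):
    """Problem size at which the GPU run time equals the CPU run time."""
    if gpu_cost >= cpu_cost:
        raise ValueError("GPU never catches up if its per-point cost is not lower")
    return fixed_overhead / (cpu_cost - gpu_cost)

# Hypothetical costs, for illustration only.
CPU_COST = 1.0e-6   # seconds per grid point on the CPU
GPU_COST = 2.5e-8   # seconds per grid point on the GPU
OVERHEAD = 5.0e-2   # seconds of fixed overhead per GPU run

for n in (10_000, 100_000, 2_560_000):
    ratio = cpu_time(n, CPU_COST) / gpu_time(n, GPU_COST, OVERHEAD)
    print(f"n = {n:>9,}: speedup {ratio:.2f}x")
print(f"break-even near {break_even_points(CPU_COST, GPU_COST, OVERHEAD):,.0f} points")
```

In this toy model the speedup is below 1 for small grids, crosses 1 at the break-even size, and approaches the ratio of per-point costs as the grid grows. That matches the qualitative trend described above without reproducing the reported figures.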

Table 1: Performance Comparison of CPU vs. GPU Implementation in SCHISM Model

| Experimental Scenario | Grid Points | Speedup Ratio (T_CPU / T_GPU) | Key Performance Findings |
|---|---|---|---|
| Large-scale experiment | 2,560,000 | 35.13x | GPU demonstrates superior performance for computationally intensive workloads [4] |
| Small-scale classical test | Not specified | 1.18x | CPU maintains advantages for smaller computational workloads [4] |
| Jacobi solver hotspot | Not specified | 3.06x | Specific computationally intensive modules show significant improvement [4] |

The performance analysis extends beyond raw speed measurements to encompass energy efficiency considerations. Studies comparing different parallel implementations found that GPU-accelerated models can reduce energy consumption by a factor of 6.8 while maintaining comparable performance to extensive CPU clusters [4]. This energy efficiency makes GPU systems particularly valuable for operational forecasting environments where computational resources must balance performance with practical constraints.

SCHISM Model GPU Acceleration: Implementation Framework

Technical Architecture

The implementation of GPU acceleration for the SCHISM model utilizes the CUDA Fortran framework, representing the first successful GPU port of this widely used ocean numerical model [4]. This implementation, designated GPU-SCHISM, maintains the model's core numerical structure while offloading computationally intensive components to the GPU. The technical architecture preserves the model's semi-implicit finite-element/finite-volume method combined with the Eulerian-Lagrangian algorithm for solving the hydrostatic form of the Navier-Stokes equations [4]. This approach maintains the numerical stability and accuracy of the original model while leveraging GPU parallelism.

The SCHISM model employs an unstructured hybrid triangular/quadrilateral grid in the horizontal direction and hybrid SZ/LSC2 coordinate systems in the vertical direction [4]. This complex grid structure presents both challenges and opportunities for GPU implementation. While unstructured grids require careful memory management to ensure coalesced memory access on GPUs, they also provide natural parallelism across grid elements that can be effectively mapped to GPU thread hierarchies.

Computational Hotspot Identification

Performance analysis of the CPU-based SCHISM model identified the Jacobi iterative solver module as a primary computational hotspot, making it an optimal initial target for GPU acceleration [4]. This solver is representative of the linear algebra operations that form the computational core of many numerical forecasting models. By focusing acceleration efforts on this bottleneck, developers achieved a 3.06 times speedup for the solver alone [4], demonstrating how targeted GPU implementation can address specific performance constraints.
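To show why a Jacobi-type solver suits GPU execution, the sketch below implements the textbook Jacobi iteration on a small dense system. It is not taken from the SCHISM source, where the solver operates on a sparse system arising from the unstructured grid. The test matrix, tolerance, and function name are illustrative assumptions.

```python
def jacobi_solve(a, b, tol=1e-10, max_iter=500):
    """Solve a @ x = b by Jacobi iteration; `a` is a list of rows."""
    n = len(b)
    x = [0.0] * n
    for iteration in range(1, max_iter + 1):
        x_new = [0.0] * n
        # Each row update reads only the previous iterate, so the rows are
        # independent and could each be assigned to a separate GPU thread.
        for i in range(n):
            off_diagonal = sum(a[i][j] * x[j] for j in range(n) if j != i)
            x_new[i] = (b[i] - off_diagonal) / a[i][i]
        change = max(abs(x_new[i] - x[i]) for i in range(n))
        x = x_new
        if change < tol:
            return x, iteration
    raise RuntimeError("Jacobi iteration did not converge")

# Small diagonally dominant test system with exact solution [1, 2, 3].
A = [[4.0, -1.0, 0.0],
     [-1.0, 4.0, -1.0],
     [0.0, -1.0, 4.0]]
rhs = [2.0, 4.0, 10.0]
solution, iterations = jacobi_solve(A, rhs)
print([round(v, 6) for v in solution], iterations)
```

The inner loop over rows carries no dependency between iterations of `i`, which is the property a GPU kernel exploits. The convergence check is a reduction across all rows and is typically the part that needs more care on a GPU.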

The success of this targeted approach illustrates the importance of comprehensive profiling before implementation. Rather than attempting to port the entire model to GPU simultaneously, the developers adopted a strategic approach that prioritized components with the greatest potential performance impact. This methodology maximizes return on development investment while minimizing disruptions to the model's overall structure and functionality.

Table 2: SCHISM Model Components and GPU Acceleration Potential

| Model Component | Computational Characteristics | GPU Acceleration Potential | Implementation Considerations |
|---|---|---|---|
| Jacobi iterative solver | High parallelism, memory-intensive | High (3.06x demonstrated) | Requires careful memory management for optimal performance [4] |
| Grid management | Irregular memory access, conditional logic | Moderate | Challenging due to unstructured grid; may benefit from hybrid CPU-GPU approach |
| Data input/output | Sequential, limited parallelism | Low | Best kept on CPU with asynchronous data transfers |
| Physical parameterizations | Mix of parallel and sequential elements | Variable | Requires component-specific analysis and implementation |

Experimental Protocols for GPU Acceleration

Performance Measurement Methodology

Accurately measuring GPU performance requires specialized approaches that account for the asynchronous execution model of GPU kernels. The following protocol outlines the standard methodology for quantifying GPU acceleration effectiveness:

Instrumentation with CUDA Events: Utilize the CUDA event API for precise kernel timing measurements. Create start and stop events using cudaEventCreate(), record these events in the execution stream with cudaEventRecord(), synchronize on the stop event using cudaEventSynchronize(), and calculate elapsed time with cudaEventElapsedTime() [8]. This approach provides resolution of approximately half a microsecond without stalling the GPU pipeline.

Effective Bandwidth Calculation: Compute achieved memory bandwidth using the formula BW_Effective = (R_B + W_B) / (t × 10^9) GB/s, where R_B and W_B represent the number of bytes read and written per kernel, and t is the elapsed time in seconds [8]. This metric is particularly relevant for memory-bound computations common in forecasting models.

Theoretical Peak Performance Comparison: Compare achieved performance with theoretical hardware limits. For example, calculate theoretical memory bandwidth for DDR memory using BW_Theoretical = Memory Clock Rate × (Interface Width / 8) × 2 / 10^9 GB/s, with the memory clock rate in Hz and the interface width in bits [8]. Similar calculations can be performed for computational throughput based on GPU architecture specifications.

Validation of Numerical Results: Implement quantitative accuracy checks by comparing key output variables between CPU and GPU implementations. For example, the SAXPY reference example in [8] reports the maximum error of the computed result; the same kind of check applied to SCHISM output fields confirms that acceleration does not compromise numerical integrity.
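The two bandwidth formulas above can be evaluated directly. The sketch below applies them to a SAXPY-like kernel. The kernel time, memory clock, and interface width are hypothetical placeholders, not specifications of any particular GPU, and the function names are made up for this example.

```python
def effective_bandwidth_gbs(bytes_read, bytes_written, seconds):
    """BW_Effective = (R_B + W_B) / (t * 10^9), in GB/s."""
    return (bytes_read + bytes_written) / (seconds * 1e9)

def theoretical_bandwidth_gbs(memory_clock_hz, interface_width_bits, ddr_factor=2):
    """BW_Theoretical = clock * (width / 8) * 2 / 10^9 for double-data-rate memory."""
    return memory_clock_hz * (interface_width_bits / 8) * ddr_factor / 1e9

# Hypothetical SAXPY-like kernel: y = a*x + y over n single-precision values
# reads x and y (2 * 4 bytes per element) and writes y (4 bytes per element).
n = 2_560_000
elapsed = 4.0e-4  # assumed kernel time in seconds
achieved = effective_bandwidth_gbs(2 * 4 * n, 4 * n, elapsed)

# Hypothetical device: 1.5 GHz memory clock, 384-bit interface.
peak = theoretical_bandwidth_gbs(1.5e9, 384)
print(f"achieved {achieved:.1f} GB/s of {peak:.1f} GB/s peak ({achieved / peak:.0%})")
```

A ratio well below 100% for a memory-bound kernel usually points to uncoalesced access or an undersized launch, so comparing the two numbers is a quick first diagnostic.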

Code Transition Protocol

Transitioning existing Fortran code to CUDA Fortran requires a systematic approach to maintain correctness while achieving performance gains:

Hotspot Identification: Profile the application to identify computationally intensive sections using tools such as NVIDIA Nsight Systems or CPU-based profilers. Focus initial efforts on routines consuming the most computational time.

Incremental Implementation: Begin with computationally dense, parallelizable routines rather than attempting a full port. The Jacobi solver in SCHISM represents an ideal starting point [4].

Memory Management: Declare GPU-resident arrays with the device or managed attribute and allocate them with the standard Fortran allocate statement, or call cudaMalloc() directly. Implement explicit data transfers using assignment statements or cudaMemcpy() calls [8].

Kernel Configuration: Determine optimal execution configuration (grid and block dimensions) based on problem size and GPU capabilities. For structured operations, 512 threads per block often provides a good starting point [8].

Unified Memory Consideration: Evaluate the use of managed memory for simplified programming, particularly during initial implementation, while being aware of potential performance implications for data access patterns.
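The kernel configuration step comes down to a ceiling division. The sketch below computes a one-dimensional launch configuration for the two problem sizes mentioned in this article, using the 512-thread starting point. Mapping one grid node to one thread is an assumption made for illustration, and the helper names are hypothetical.

```python
def launch_configuration(n_points, threads_per_block=512):
    """Return (blocks, threads) for a 1D launch that covers n_points elements."""
    if n_points <= 0 or threads_per_block <= 0:
        raise ValueError("sizes must be positive")
    blocks = (n_points + threads_per_block - 1) // threads_per_block  # ceiling division
    return blocks, threads_per_block

def idle_threads(n_points, threads_per_block=512):
    """Threads in the last block that fall past the end of the array."""
    blocks, threads = launch_configuration(n_points, threads_per_block)
    return blocks * threads - n_points

for n in (70_775, 2_560_000):
    blocks, threads = launch_configuration(n)
    print(f"{n:,} points -> {blocks:,} blocks of {threads} threads, "
          f"{idle_threads(n)} idle")
```

Because the last block can overshoot the array, a kernel launched this way needs a bounds check on its global index before touching memory.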

Visualization of GPU Acceleration Workflow

The following diagram illustrates the complete workflow for implementing and validating GPU acceleration in forecasting models, from initial profiling through performance measurement:

The workflow emphasizes an iterative approach to GPU acceleration, where performance measurement often leads to additional optimization cycles. This process ensures both numerical correctness and computational efficiency throughout the implementation.

Performance Measurement and Metrics Protocol

Accurate performance measurement is essential for validating GPU acceleration effectiveness. The following diagram details the specific methodology for timing kernel execution and calculating key performance metrics:

This measurement protocol avoids the performance pitfalls of CPU-based timing methods that can stall the GPU pipeline. The CUDA event API provides lightweight, accurate timing specifically designed for asynchronous GPU operations [8].

The Scientist's Toolkit: Essential Research Reagents

Successful implementation of GPU-accelerated forecasting requires both hardware and software components optimized for high-performance computing. The following table details the essential "research reagents" for developing and deploying GPU-accelerated forecasting systems:

Table 3: Essential Research Reagents for GPU-Accelerated Forecasting

| Tool Category | Specific Solution | Function in GPU Acceleration |
|---|---|---|
| Programming Framework | CUDA Fortran | Enables direct GPU programming within Fortran environments, providing access to GPU hardware capabilities [4] |
| Performance Analysis | CUDA Event API | Measures kernel execution time with minimal overhead, enabling accurate performance assessment [8] |
| Computational Hardware | NVIDIA GPU (H100/B200) | Provides parallel processing capability with specialized tensor cores for accelerated floating-point operations [9] |
| Modeling Infrastructure | SCHISM v5.8.0 | Serves as the base ocean modeling framework for GPU acceleration implementation [4] |
| Precision Management | Automatic Mixed Precision (AMP) | Maintains numerical accuracy while improving computational efficiency through selective use of reduced precision [9] |
| Memory Management | Pinned (Page-Locked) Memory | Enables faster host-device data transfers by eliminating paging overhead [7] |
| Parallel Paradigms | Coarray Fortran | Facilitates distributed memory parallelism with syntax familiar to Fortran programmers [7] |

Discussion and Future Perspectives

The implementation of GPU acceleration for high-resolution forecasting represents a paradigm shift in computational geoscience. The demonstrated performance improvements, particularly for large-scale simulations, directly address the critical bottleneck of computational expense that has limited forecasting resolution and ensemble sizes. The SCHISM model case study provides a validated framework for similar acceleration efforts across the forecasting domain.

Future developments in GPU technology promise additional advances. Emerging innovations such as tensor cores optimized for AI tasks and the integration of quantum computing concepts may further transform forecasting capabilities [10]. The ongoing development of programming frameworks like CUDA Fortran and OpenACC will continue to lower implementation barriers, making GPU acceleration accessible to a broader range of research institutions and operational forecasting centers.

The successful implementation of GPU-SCHISM using CUDA Fortran lays a foundation for lightweight, efficient operational forecasting systems that can be deployed in resource-constrained environments [4]. This technological advancement supports more timely and accurate predictions for critical applications including storm surge forecasting, climate change modeling, and agricultural planning, ultimately enhancing societal resilience to environmental hazards.

For researchers and scientists working on high-performance computational models like SCHISM, the choice between CUDA Fortran and OpenACC is critical for achieving optimal GPU acceleration. CUDA Fortran provides explicit, low-level control over GPU hardware, enabling highly tuned performance and access to advanced features like Tensor Cores and cooperative groups [11]. In contrast, OpenACC offers a directive-based, high-level approach that simplifies code adaptation and maintains greater portability [12] [13]. Recent research on GPU-accelerated SCHISM models demonstrates that CUDA Fortran can achieve significant speedup ratios of up to 35.13× for large-scale simulations with 2.56 million grid points [4]. This application note provides a structured comparison and detailed experimental protocols to guide researchers in selecting and implementing the most appropriate framework for their specific ocean modeling requirements.

GPU acceleration has become indispensable in computational geosciences, particularly for high-resolution ocean models like SCHISM that demand substantial computational resources. The explicit programming model of CUDA Fortran extends standard Fortran with GPU-specific attributes and constructs, giving expert programmers direct control over all aspects of GPU programming, including memory management, kernel launches, and stream synchronization [11] [14]. This model interfaces directly with powerful CUDA libraries (cuBLAS, cuSOLVER, cuFFT) and enables use of the latest hardware features like managed memory and tensor cores [11].

The directive-based model of OpenACC allows developers to incrementally accelerate existing Fortran, C, and C++ code by adding compiler hints that specify regions for parallel execution on accelerators [15] [13]. This approach maintains a single codebase that can be compiled for either CPU or GPU execution, offering greater portability across different hardware platforms [12] [16]. OpenACC's !$acc directives manage data transfers and kernel launches automatically, significantly reducing the programming effort required for initial GPU implementation [13].

Conceptual Comparison and Technical Specifications

Table 1: Framework Comparison for GPU Acceleration

| Feature | CUDA Fortran | OpenACC |
|---|---|---|
| Programming Approach | Language extensions, explicit control | Compiler directives, implicit parallelism |
| Learning Curve | Steeper, requires GPU architecture knowledge | Gentler, minimal GPU knowledge needed |
| Data Management | Explicit device and managed attributes [11] | Automatic via copy, copyin, copyout clauses [13] |
| Kernel Definition | attributes(global) subroutine [14] | !$acc kernels or !$acc parallel loop [13] |
| Performance Potential | Higher, with expert tuning [4] [17] | Good, but may trail optimized CUDA [12] |
| Portability | NVIDIA GPUs only | Multiple accelerators, CPUs via -acc=multicore [15] [16] |
| Hardware Feature Access | Full access (Tensor Cores, constant memory) [11] [17] | Limited to directive-supported features [17] |
| Interoperability | With OpenACC, OpenMP, CUDA C [11] | With CUDA libraries, CUDA Fortran [11] [14] |
| Code Modification | Extensive | Minimal |

The interoperability between these frameworks enables a hybrid approach, where most of the application is accelerated with OpenACC directives while performance-critical sections are hand-tuned using CUDA Fortran [11] [17]. This strategy balances development efficiency with computational performance, particularly beneficial for complex models like SCHISM where certain algorithms (e.g., Jacobi solvers) constitute computational hotspots [4].

Performance Analysis in SCHISM and Hydrological Modeling

Table 2: Experimental Performance Results from Scientific Studies

| Application Context | Problem Scale | CUDA Fortran Speedup | OpenACC Speedup | Notes | Source |

|---|---|---|---|---|---|

| SCHISM Ocean Model | 2.56M grid points | 35.13× | Not tested | Jacobi solver accelerated 3.06× [4] | [4] |

| SCHISM Ocean Model | Small-scale classical | 1.18× overall | Not tested | CPU advantageous at small scale [4] | [4] |

| Drainage Network Extraction | 25,000 × 25,000 DEM | 16.8× | 12.5× | Optimized CUDA vs. OpenACC [12] | [12] |

| Drainage Network Extraction | 5,000 × 5,000 DEM | 11.3× | 8.7× | Naive CUDA implementation [12] | [12] |

| Implicit Ocean Model (GPU-IOCASM) | Not specified | 312× | Not applicable | Minimized CPU-GPU data transfer [18] | [18] |

Performance analysis from a recent SCHISM implementation shows that CUDA Fortran achieves substantially higher speedups for large-scale problems, with gains becoming more pronounced as the computational workload increases [4]. This scalability is particularly valuable for high-resolution storm surge forecasting, where spatial variability demands refined computational grids. However, the same research indicates that CPU-based computation retains advantages for smaller-scale simulations, so workload characteristics should inform accelerator selection [4].

Comparative studies in hydrological applications demonstrate that while both frameworks provide significant acceleration over CPU implementations, CUDA consistently outperforms OpenACC across different problem sizes and algorithms [12]. The performance differential stems from CUDA's ability to leverage hardware-specific features and enable finer-grained optimization. However, OpenACC delivers substantial speedups with considerably less development effort, making it particularly valuable for research teams with limited GPU programming expertise or those prioritizing code maintainability [12].

Experimental Protocols for SCHISM Model Acceleration

Protocol 1: Hotspot Identification and Profiling

Objective: Identify computational bottlenecks in the SCHISM model suitable for GPU acceleration.

- Instrumentation: Compile the SCHISM model with profiling flags (-pg for gprof) or use NVIDIA Nsight Systems.

- Benchmark Execution: Run the model with representative input data (e.g., Fujian coast simulation domain with 70,775 grid nodes) [4].

- Analysis: Analyze profiling output to identify functions with the highest execution time. Research indicates the Jacobi iterative solver is typically the primary hotspot [4].

- Validation: Verify that the identified hotspots account for a significant share (>20%) of total runtime, so that acceleration efforts yield meaningful benefits.

Protocol 2: CUDA Fortran Implementation for Jacobi Solver

Objective: Accelerate the Jacobi solver hotspot using CUDA Fortran.

- Code Refactoring:

- Memory Management:

- Explicitly transfer input data from host to device using cudaMemcpy, or assign to managed variables for automatic transfer [11].

- Allocate device memory for intermediate results.

- Optimization:

- Utilize shared memory for data reused between threads.

- Adjust thread block dimensions (e.g., 128 threads/block) based on GPU compute capability [13].

- Verification:

- Compare results from CPU and GPU implementations to ensure numerical equivalence.

- Validate against classical experimental data to maintain simulation accuracy [4].

Protocol 3: OpenACC Directive Implementation

Objective: Accelerate computational regions using OpenACC directives.

- Region Identification: Mark target loops and computational regions with !$acc directives [13].

- Data Management: Enclose accelerated regions within !$acc data constructs with appropriate clauses (copyin, copyout, create) to manage data transfer [14].

- Parallel Execution:

- Precede parallel loops with !$acc parallel loop directives.

- Add reduction clauses for operations like summing values [11].

- Compilation and Tuning:

Protocol 4: Hybrid Implementation Strategy

Objective: Combine OpenACC productivity with CUDA Fortran performance.

- Baseline Implementation: Begin with OpenACC directives for the entire application.

- Performance Analysis: Profile the OpenACC-accelerated code to identify remaining bottlenecks.

- Incremental Optimization: Replace OpenACC directives for performance-critical sections with custom CUDA Fortran kernels [17].

- Interoperability: Use !$acc host_data use_device() to pass device pointers from OpenACC regions to CUDA Fortran kernels [11] [14].

The Scientist's Toolkit: Essential Research Reagents

Table 3: Essential Tools and Environments for GPU-Accelerated SCHISM Research

| Tool Category | Specific Solution | Function/Purpose | Usage Example |

|---|---|---|---|

| Compiler Suite | NVIDIA HPC SDK | Fortran compiler with CUDA Fortran & OpenACC support [15] [16] | nvfortran -acc=gpu -gpu=cc80 schism.f90 |

| Profiling Tools | NVIDIA Nsight Systems | Performance analysis of GPU and CPU execution | Identify kernel bottlenecks and load imbalance |

| GPU Libraries | cuBLAS, cuSOLVER, cuSPARSE | Accelerated linear algebra operations [11] [14] | Replace manual matrix solves with library calls |

| Debugging Tools | CUDA-MEMCHECK (superseded by NVIDIA Compute Sanitizer in recent CUDA toolkits) | Memory access violation detection | Debug kernel memory errors |

| System Utilities | nvaccelinfo | GPU capability and driver verification [15] | Verify CUDA version and compute capability |

| Cluster Management | SLURM with GPU support | Multi-GPU job scheduling and resource allocation | Scale to multiple nodes for large domains |

For researchers accelerating the SCHISM model, the choice between CUDA Fortran and OpenACC involves critical trade-offs between performance, development complexity, and maintainability. Based on experimental evidence and technical capabilities, we recommend:

For maximum performance in production environments with large-scale simulations (>1 million grid points), implement computationally intensive kernels like the Jacobi solver in CUDA Fortran, potentially within a hybrid framework [4] [17].

For rapid prototyping and initial GPU acceleration, begin with OpenACC directives to achieve substantial speedups with minimal code modification, particularly for smaller-scale problems [12] [13].

For long-term maintainability while preserving performance optimization opportunities, adopt a hybrid approach using OpenACC for the majority of the codebase with CUDA Fortran for identified bottlenecks [11] [17].

The remarkable 312× speedup demonstrated in specialized ocean models [18] highlights the transformative potential of GPU acceleration for operational storm surge forecasting systems. By strategically selecting and implementing the appropriate GPU programming framework, researchers can achieve the computational efficiency necessary for high-resolution, timely coastal hazard predictions.

Structured grids form the computational backbone of many geophysical simulation models, and structured layering also appears in oceanographic models like SCHISM (Semi-implicit Cross-scale Hydroscience Integrated System Model), which pairs an unstructured horizontal mesh with structured vertical columns. These grids systematically discretize physical space into organized elements, enabling the numerical solution of the partial differential equations governing oceanic and atmospheric phenomena. Within the SCHISM framework, the grid provides the spatial context for simulating complex multi-scale processes including storm surges, tidal dynamics, and coastal inundation [4].

The transition from traditional CPU-based computation to GPU-accelerated approaches represents a paradigm shift in geophysical modeling. This shift enables researchers to achieve unprecedented simulation speeds while maintaining numerical accuracy, particularly crucial for operational forecasting systems deployed in resource-constrained coastal monitoring stations. By leveraging GPU architecture's massive parallelism, models like SCHISM can resolve finer spatial scales and extend forecast horizons without prohibitive computational costs [4].

CUDA Fortran has emerged as a pivotal technology bridge, allowing computational scientists to harness GPU capabilities while maintaining investment in existing Fortran codebases. This is particularly valuable in ocean modeling, where many established codes are written in Fortran. The integration of CUDA capabilities directly into Fortran enables targeted acceleration of computationally intensive kernel operations while preserving the overall model structure and scientific integrity [19].

Theoretical Foundations: From Grid Structures to Parallel Execution

Structured Grid Architectures

Structured grids in computational geophysics typically employ regular tessellation patterns, most commonly quadrilateral or hexahedral elements in 2D and 3D configurations respectively. SCHISM implements an unstructured grid approach in the horizontal direction with hybrid triangular/quadrilateral elements, while maintaining structured layering in the vertical dimension. This hybrid approach provides geometrical flexibility for complex coastal boundaries while preserving computational efficiency through structured vertical columns [4].

The mathematical representation of physical processes on these grids involves discretizing the governing Navier-Stokes equations. SCHISM specifically solves the hydrostatic form of these equations using a semi-implicit finite element/finite volume method combined with an Eulerian-Lagrangian algorithm. The momentum and continuity equations central to SCHISM are:

- Momentum Equation: Du/Dt = ∂/∂z(ν ∂u/∂z) - g∇η + f

- Continuity Equation: ∂η/∂t + ∇·∫_{-h}^{η} u dz = 0

where u represents velocity, ν is the vertical eddy viscosity coefficient, g is gravitational acceleration, η is free surface height, h is the bathymetric depth (the lower limit of the vertical integral), and f encompasses additional forcing terms [4].

GPU Parallel Execution Model

The CUDA programming model abstracts the GPU architecture as a collection of Streaming Multiprocessors (SMs), each capable of executing multiple thread blocks concurrently. Threads are grouped into warps (typically 32 threads) that execute instructions in lockstep. This Single Instruction, Multiple Thread (SIMT) paradigm enables the massive parallelism essential for accelerating structured grid computations [20].

Key to efficient GPU execution is understanding the hierarchy of thread blocks, warps, and individual threads, and how this hierarchy maps to the structured grid domain decomposition. For finite element models like SCHISM, this typically involves assigning one thread per grid element or nodal point, allowing simultaneous evaluation of governing equations across the entire computational domain [4] [19].
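The one-thread-per-node mapping comes down to simple index arithmetic, sketched here in plain Python rather than CUDA Fortran. The function names are hypothetical, the 128-thread block size is only the example value used in this guide, and the 1-based indexing mirrors the CUDA Fortran convention for threadIdx and blockIdx.

```python
import math


def launch_config(n_points, threads_per_block=128):
    """Return (blocks, threads_per_block) so that every point gets one thread."""
    blocks = math.ceil(n_points / threads_per_block)
    return blocks, threads_per_block


def global_index(block_idx, thread_idx, block_dim):
    """1-based global index, mirroring (blockIdx%x - 1) * blockDim%x + threadIdx%x."""
    return (block_idx - 1) * block_dim + thread_idx


# Grid sizes taken from the two SCHISM experiments cited in this guide.
for n in (70_775, 2_560_000):
    blocks, tpb = launch_config(n)
    idle = blocks * tpb - n  # surplus threads that a kernel must mask with an index check
    print(f"{n} points -> {blocks} blocks of {tpb} threads, {idle} idle threads")
```

Because the block count is rounded up, a kernel built on this mapping would typically guard its body with a bounds check so the surplus threads in the last block do no work.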

Performance Metrics and Analysis Framework

Computational Performance Metrics

Quantifying GPU acceleration effectiveness requires robust performance metrics. Two fundamental measurements are essential: effective bandwidth and computational throughput. Effective bandwidth measures data movement efficiency between GPU memory and processing units, while computational throughput quantifies floating-point operation execution rates [8].

The effective bandwidth calculation formula is:

BW_Effective = (R_B + W_B) / (t × 10^9) GB/s

Where R_B represents bytes read per kernel, W_B represents bytes written per kernel, and t is elapsed time in seconds. For computational throughput, the metric is:

GFLOP/s = 2N / (t × 10^9)

Where N is the number of elements and the factor of 2 accounts for typical multiply-add operations counted as two floating-point operations [8].
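The two formulas above can be applied directly to a measured kernel time. The sketch below uses plain Python for the arithmetic only; the kernel (a multiply-add over double-precision arrays), the element count, and the elapsed time are illustrative assumptions rather than measurements from SCHISM.

```python
def effective_bandwidth_gbs(bytes_read, bytes_written, seconds):
    """Effective bandwidth in GB/s: (R_B + W_B) / (t * 10^9)."""
    return (bytes_read + bytes_written) / (seconds * 1e9)


def throughput_gflops(n_elements, seconds, flops_per_element=2):
    """Throughput in GFLOP/s; 2 flops per element models one multiply-add."""
    return flops_per_element * n_elements / (seconds * 1e9)


# Hypothetical kernel y = a*x + y over N double-precision values:
# it reads x and y (two arrays) and writes y (one array), 8 bytes per value.
n = 2_560_000
elapsed_s = 1.0e-4  # assumed kernel time in seconds (CUDA events report milliseconds)

bw = effective_bandwidth_gbs(2 * n * 8, n * 8, elapsed_s)
gf = throughput_gflops(n, elapsed_s)
print(f"effective bandwidth: {bw:.1f} GB/s, throughput: {gf:.1f} GFLOP/s")
```

Comparing the resulting bandwidth against the device's theoretical peak is one common way to judge whether a memory-bound kernel still has headroom.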

SCHISM GPU Acceleration Performance

Table 1: SCHISM GPU Acceleration Performance Metrics

| Experiment Scale | Grid Points | GPU Speedup Ratio | Key Performance Findings |

|---|---|---|---|

| Small-scale | 70,775 | 1.18x | Single GPU improves Jacobi solver efficiency by 3.06x |

| Large-scale | 2,560,000 | 35.13x | GPU demonstrates superior performance for high-resolution calculations |

| Comparative Framework | - | CUDA superior to OpenACC | CUDA outperforms OpenACC across all experimental conditions |

Performance analysis of SCHISM model GPU acceleration reveals scale-dependent effectiveness. For smaller-scale simulations with approximately 70,775 grid nodes, a single GPU provides modest overall acceleration of 1.18 times, though specific computational hotspots like the Jacobi solver show more significant 3.06-fold improvements. This demonstrates that kernel-specific optimization can yield substantial benefits even when overall application speedup is limited by other factors [4].

For large-scale experiments with 2.56 million grid points, the GPU acceleration ratio increases dramatically to 35.13 times, highlighting that GPU architectures achieve maximum efficiency when processing substantial computational workloads that fully utilize available parallel resources [4].
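The gap between the 3.06x solver speedup and the 1.18x overall speedup in the small-scale case can be explored with Amdahl's law. The Python sketch below assumes that only the Jacobi solver was accelerated and that every other part of the run, including data transfer, took the same time as before; the source does not state this, so the implied runtime fraction is an illustration, not a reported result.

```python
def overall_speedup(fraction, kernel_speedup):
    """Amdahl's law: speedup of the whole run when `fraction` of it gets faster."""
    return 1.0 / ((1.0 - fraction) + fraction / kernel_speedup)


def implied_fraction(overall, kernel_speedup):
    """Invert Amdahl's law to recover the accelerated share of the original runtime."""
    return (1.0 - 1.0 / overall) / (1.0 - 1.0 / kernel_speedup)


p = implied_fraction(overall=1.18, kernel_speedup=3.06)
ceiling = 1.0 / (1.0 - p)  # limit if the accelerated part took zero time

print(f"implied solver share of CPU runtime: {p:.1%}")
print(f"best possible overall speedup under this assumption: {ceiling:.2f}x")
```

Under these assumptions the solver would account for roughly 23% of the original runtime, which is why accelerating it alone leaves the overall gain modest.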

Experimental Protocols for GPU Acceleration

Performance Measurement Methodology

Accurate measurement of kernel execution time is fundamental to GPU performance optimization. The CUDA event API provides precise timing mechanisms that avoid stalling the GPU pipeline, unlike CPU-based synchronization approaches. The standard protocol involves [8]:

- Event Creation: Initialize cudaEvent objects for start and stop timestamps

- Event Recording: Place events into appropriate CUDA streams before and after kernel launches

- Synchronization: Block CPU execution until the stop event is recorded

- Elapsed Time Calculation: Use cudaEventElapsedTime() to compute milliseconds between events

This method provides resolution of approximately 0.5 microseconds, enabling fine-grained performance analysis of individual kernels within the SCHISM computational pipeline [8].

Concurrent Kernel Execution Protocol

Achieving true parallel kernel execution requires careful stream management and resource allocation. The experimental protocol must address [21]:

- Stream Creation: Explicitly create multiple CUDA streams using cudaStreamCreate()

- Kernel Launch Configuration: Assign kernels to separate streams in the execution configuration

- Resource Monitoring: Ensure no individual kernel consumes so many resources that it blocks concurrent execution

- Dependency Management: Identify and handle data dependencies between kernels

Experimental evidence confirms that kernels must be issued to separate CUDA streams and must have limited resource utilization to achieve genuine concurrency. Kernel launches in the default stream (stream 0) or those that fully utilize GPU resources will execute serially regardless of stream assignment [21].

Optimization Strategies for CUDA Fortran

Occupancy and Register Management

GPU occupancy, defined as the ratio of active warps to maximum supported warps per multiprocessor, significantly impacts performance. Optimization strategies must balance thread-level parallelism with resource constraints. For scientific codes typical in geophysical modeling, approximately 50% occupancy is often sufficient for optimal performance [22].

Register usage represents a primary constraint on occupancy. On many NVIDIA architectures each SM contains 65,536 32-bit registers, which allows at most 32 registers per thread at 100% occupancy (2048 resident threads). When threads require more registers, occupancy decreases accordingly. Optimization approaches include [22]:

- Kernel Specialization: Decomposing generic routines handling multiple cases into specialized kernels with reduced register pressure

- Register Limit Control: Using compiler flags like -gpu=maxregcount:<n> to explicitly control register allocation

- Algorithmic Restructuring: Reorganizing computations to reduce temporary variable requirements

The -gpu=maxregcount compiler option caps the number of registers allocated per thread; values that no longer fit are spilled to local memory, which can improve occupancy but may incur performance penalties due to increased memory traffic. This approach proves most beneficial when register usage sits just above an occupancy threshold (e.g., 33 or 129 registers) [22].
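The relationship between registers per thread and occupancy can be estimated with a few lines of arithmetic. This Python sketch is a deliberately simplified model using the figures quoted above (65,536 registers and 2048 threads per SM, 32-thread warps); the function name is hypothetical, and the model ignores shared memory, block size, and register allocation granularity.

```python
def occupancy_limit_from_registers(regs_per_thread, regs_per_sm=65_536,
                                   max_threads_per_sm=2048, warp_size=32):
    """Upper bound on occupancy imposed by register usage alone (simplified)."""
    resident_threads = min(max_threads_per_sm, regs_per_sm // regs_per_thread)
    resident_warps = resident_threads // warp_size  # only whole warps can be resident
    max_warps = max_threads_per_sm // warp_size
    return resident_warps / max_warps


for regs in (32, 33, 64, 128, 129):
    limit = occupancy_limit_from_registers(regs)
    print(f"{regs:3d} registers/thread -> at most {limit:.1%} occupancy")
```

Real hardware allocates registers in coarser units and schedules whole thread blocks, so the drop just above a boundary is typically larger than this estimate suggests; a profiler such as Nsight Compute reports the achieved figure.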

Memory Access Optimization

Efficient memory access patterns are critical for achieving high performance in structured grid computations. The SCHISM model implementation demonstrates several key optimization principles [4]:

- Minimizing CPU-GPU Data Transfer: Performing maximum computation on the GPU before transferring results back to the CPU

- Asynchronous Execution: Overlapping computation with I/O operations by proceeding with subsequent calculations while output data transfers occur

- Coalesced Memory Access: Ensuring threads within warps access contiguous memory locations to maximize memory bandwidth utilization

These optimizations are particularly important for implicit ocean models like SCHISM, where iterative solvers dominate computational expense. The Jacobi solver identified as a performance hotspot in SCHISM benefits significantly from memory access pattern optimization in addition to parallel execution [4].

The Scientist's Toolkit: Essential Research Reagents

Table 2: Essential Computational Tools for SCHISM GPU Acceleration Research

| Tool/Component | Function | Implementation Notes |

|---|---|---|

| SCHISM v5.8.0 | Base ocean model framework | Provides core hydrodynamic simulation capabilities with unstructured grid support |

| CUDA Fortran Compiler | GPU code compilation | PGI compiler (now distributed as nvfortran in the NVIDIA HPC SDK) with CUDA Fortran extensions enables GPU kernel development |

| Nsight Compute | Performance profiling | Critical for identifying bottlenecks and optimization opportunities |

| CUDA Event API | Performance measurement | Enables precise kernel timing with minimal GPU pipeline disruption |

| Jacobi Solver Module | Computational hotspot | Primary target for GPU acceleration in SCHISM |

| MPI Libraries | Multi-GPU communication | Enables scaling across multiple GPUs for large-domain simulations |

The experimental workflow for SCHISM GPU acceleration relies on specialized software tools and hardware components. The CUDA Fortran compiler environment provides the essential bridge between traditional Fortran scientific programming and GPU hardware capabilities. This allows researchers to maintain investment in existing model codebases while selectively accelerating computational hotspots [19].

Performance analysis tools like Nsight Compute provide critical insights into kernel behavior, memory access patterns, and occupancy limitations. These profiling tools enable data-driven optimization decisions, moving beyond intuition to empirical performance improvement [22].

The integration of structured grid methodologies with kernel-based parallel execution represents a fundamental advancement in geophysical computational science. The SCHISM model implementation demonstrates that targeted GPU acceleration using CUDA Fortran can deliver substantial performance improvements while maintaining numerical accuracy and scientific validity.

The scale-dependent nature of acceleration effectiveness highlights the importance of workload characteristics in GPU performance. While small-scale simulations show modest improvements, large-scale computations with millions of grid points achieve order-of-magnitude speedups, enabling higher-resolution forecasting and more comprehensive physical parameterization.

Future developments in GPU architecture and programming models will likely further enhance these benefits, particularly as multi-GPU scaling challenges are addressed through improved communication patterns and load balancing techniques. The continued evolution of CUDA Fortran will ensure that scientific computing communities can leverage these advances while preserving decades of investment in Fortran-based modeling infrastructure.

Implementing CUDA Fortran in SCHISM: A Step-by-Step Methodology

In the field of ocean numerical modeling, the SCHISM (Semi-implicit Cross-scale Hydroscience Integrated System Model) has established itself as a powerful tool for simulating storm surges, coastal inundation, and other hydrodynamic phenomena [4]. However, as resolution demands increase for more accurate forecasting, the computational burden of these simulations grows substantially, creating a critical performance bottleneck for operational deployment [4]. Within the context of GPU acceleration research using CUDA Fortran, identifying and optimizing computational hotspots becomes paramount. This application note details structured methodologies for performance profiling of the SCHISM model, focusing specifically on identifying resource-intensive components such as the Jacobi iterative solver, which has been empirically identified as a primary performance-limiting factor [4]. The protocols outlined herein provide researchers with a systematic approach to diagnose performance constraints and establish baselines for accelerated computing implementations.

Performance Profiling and Hotspot Identification

Quantitative Performance Profiling of SCHISM Components

Comprehensive profiling of the SCHISM model under CPU-only execution reveals distinct computational patterns, with certain modules consuming disproportionate runtime resources. The following table summarizes typical performance characteristics observed in operational contexts, highlighting the Jacobi solver's significant role in the overall computational load.

Table 1: Computational Hotspot Analysis in SCHISM Model

| Model Component | Function Description | Approximate CPU Runtime Percentage | Identified as Hotspot |

|---|---|---|---|

| Jacobi Solver | Iterative solution for linear systems | ~25-40% | Yes [4] |

| Baroclinic Pressure Gradient | Calculates density-driven flow forces | ~10-15% | No |

| Horizontal Viscosity | Models turbulent mixing effects | ~5-10% | No |

| Vertical Mixing | Handles vertical transport processes | ~5-10% | No |

| Transport Equations | Advection-diffusion computation | ~15-20% | No |

| Input/Output Operations | Data reading and writing | ~5-15% | Context-dependent |

Experimental Protocol for Hotspot Identification

Objective: To systematically identify computational hotspots within the SCHISM model codebase through instrumented profiling.

Materials and Environment:

- SCHISM source code (v5.8.0 or later)

- High-performance computing node with multicore CPUs

- GNU gprof or similar profiling toolset

- Intel VTune Amplifier (optional for advanced analysis)

- Compilers: Intel Fortran or GNU Fortran compiler

Methodology:

- Code Instrumentation: Compile SCHISM with profiling flags enabled (-pg for gprof, -debug for Intel compilers).

- Representative Workload Execution: Run the model with a realistic simulation scenario for sufficient duration to capture stable performance patterns.

- Profile Data Collection: Execute the profiler to gather runtime statistics.

- Call Graph Analysis: Generate and analyze the call graph to understand function relationships and cumulative timings.

- Hotspot Identification: Flag functions exceeding 5% of total runtime as potential optimization targets.

Validation Criteria:

- Profiling results consistent across multiple independent runs

- Identified hotspots align with theoretical computational complexity expectations

- Jacobi solver typically emerges as dominant hotspot in semi-implicit schemes [4]
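The hotspot identification step can be automated once per-routine timings have been extracted from the profiler output. The following Python sketch applies the 5% threshold from the methodology above; the routine names and timings are made-up placeholders chosen for illustration, not measurements from SCHISM.

```python
def find_hotspots(timings, threshold=0.05):
    """Return (routine, share) pairs whose share of total runtime exceeds threshold."""
    total = sum(timings.values())
    shares = {name: seconds / total for name, seconds in timings.items()}
    flagged = [(name, share) for name, share in shares.items() if share > threshold]
    return sorted(flagged, key=lambda item: item[1], reverse=True)


# Hypothetical self-times in seconds, e.g. transcribed from a gprof flat profile.
profile = {
    "jacobi_solver": 35.0,
    "transport": 18.0,
    "baroclinic": 12.0,
    "io": 10.0,
    "vertical_mixing": 8.0,
    "horizontal_viscosity": 7.0,
    "init": 4.0,
    "diagnostics": 3.0,
    "misc": 3.0,
}

for name, share in find_hotspots(profile):
    print(f"{name:22s} {share:6.1%}")
```

Running the same function on profiles from several independent runs gives a quick check of the first validation criterion, since the flagged set should stay stable.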

Jacobi Solver Analysis and Optimization Potential

Computational Characteristics of the Jacobi Method

The Jacobi iterative method solves systems of linear equations of the form Ax = b by decomposing matrix A into diagonal (D) and remainder (R) components, then iteratively updating the solution vector until convergence [23]. The algorithm exhibits several characteristics that make it both computationally demanding and suitable for acceleration:

- Inherent Parallelism: Each element of the solution vector can be updated independently using values from the previous iteration

- Memory-Bound Nature: Performance often limited by memory bandwidth rather than floating-point capability [24]

- Regular Data Access Patterns: Structured grid operations enable efficient GPU memory utilization
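A minimal reference implementation makes the parallel structure of the method visible. The sketch below is plain Python on a small, hand-picked diagonally dominant system; it is not the SCHISM solver, and the tolerance and iteration cap are arbitrary illustrative choices. Each entry of the new vector depends only on the previous iterate, which is what allows one GPU thread per unknown.

```python
def jacobi(A, b, tol=1e-10, max_iter=10_000):
    """Solve A x = b with Jacobi iteration; returns (solution, iterations used)."""
    n = len(b)
    x = [0.0] * n
    for iteration in range(1, max_iter + 1):
        # Every x_new[i] reads only the old x, so all i can be computed independently.
        x_new = [
            (b[i] - sum(A[i][j] * x[j] for j in range(n) if j != i)) / A[i][i]
            for i in range(n)
        ]
        change = max(abs(x_new[i] - x[i]) for i in range(n))
        x = x_new
        if change < tol:
            return x, iteration
    raise RuntimeError("Jacobi iteration did not converge")


# Small diagonally dominant test system whose exact solution is (1, 2, 3).
A = [[4.0, -1.0, 0.0],
     [-1.0, 4.0, -1.0],
     [0.0, -1.0, 4.0]]
b = [2.0, 4.0, 10.0]

solution, iterations = jacobi(A, b)
print(f"converged in {iterations} iterations: {solution}")
```

A CPU version like this can also serve as the correctness reference when a GPU kernel for the same update is later validated.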

Experimental Protocol for Jacobi Solver Performance Benchmarking

Objective: To quantify the performance characteristics of the Jacobi solver independent of the full SCHISM model.

Materials and Environment:

- Custom Jacobi benchmark implementation [25]

- CPU and GPU test systems with performance monitoring capabilities

- Precision timing utilities (e.g., CUDA events for GPU timing)

Methodology:

- Problem Initialization: Generate a diagonally dominant matrix representative of the linear systems solved in SCHISM's semi-implicit step.

- Baseline Measurement: Execute serial CPU implementation to establish performance baseline.

- Parallel Implementation: Develop CUDA Fortran kernel for Jacobi iteration.

- Performance Metrics: Measure execution time for 10,000 iterations across varying problem sizes.

- Scalability Analysis: Assess performance scaling with increasing grid dimensions.

Table 2: Performance Benchmarks for Jacobi Solver Implementations

| Implementation Approach | Problem Size: 512×512 | Problem Size: 2048×2048 | Relative Speedup |

|---|---|---|---|

| Serial CPU Code | 17.25 seconds | 279.46 seconds | 1.0× (baseline) [25] |

| Unoptimized CUDA Kernel | 14.46 seconds | N/A (exceeded GPU resources) | 1.2× [25] |

| Optimized CUDA Kernel | 1.65 seconds | 16.17 seconds | 10.5× [25] |
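The speedup column can be recomputed from the timings in the table, which also shows which problem size it refers to. This short Python check uses only the values listed above; the helper name is hypothetical.

```python
def speedup(baseline_seconds, accelerated_seconds):
    """Ratio of baseline time to accelerated time."""
    return baseline_seconds / accelerated_seconds


# Timings in seconds, copied from the table above.
cpu_512, cpu_2048 = 17.25, 279.46
naive_512 = 14.46
opt_512, opt_2048 = 1.65, 16.17

print(f"unoptimized kernel, 512x512:  {speedup(cpu_512, naive_512):.2f}x")
print(f"optimized kernel, 512x512:    {speedup(cpu_512, opt_512):.2f}x")
print(f"optimized kernel, 2048x2048:  {speedup(cpu_2048, opt_2048):.2f}x")
print(f"optimized vs unoptimized:     {speedup(naive_512, opt_512):.2f}x")
```

The listed 1.2× and 10.5× figures match the 512×512 column; for the 2048×2048 case the same timings give a speedup of about 17×, consistent with the trend of larger problems benefiting more.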

Validation Criteria:

- Numerical results identical between CPU and GPU implementations within floating-point tolerance

- Optimized implementation demonstrates ≥3× speedup over unoptimized kernel [25]

- Solution convergence rates equivalent across implementations

GPU Acceleration Workflow for SCHISM

Integrated Acceleration Methodology

Successful GPU acceleration of SCHISM requires a systematic approach that addresses both individual hotspots and overall data movement. The following workflow illustrates the comprehensive process from initial profiling to deployed optimization:

Experimental Protocol for Full Model GPU Acceleration

Objective: To implement and validate a GPU-accelerated version of SCHISM using CUDA Fortran while maintaining numerical accuracy.

Materials and Environment:

- SCHISM v5.8.0 source code

- NVIDIA GPU with CUDA Fortran support

- PGI or NVIDIA HPC SDK compilers

- Performance comparison: CPU nodes vs. GPU-enabled nodes

Methodology:

- Incremental Porting: Begin with Jacobi solver acceleration, then progress to other identified hotspots.

- Data Management Strategy: Minimize host-device transfers by maintaining data resident on GPU where possible.

- Asynchronous Execution: Overlap computation and I/O operations to hide latency.

- Multi-GPU Implementation (optional): Extend to multiple GPUs using domain decomposition.

Validation Metrics:

- Accuracy: Maximum relative error < 0.001% compared to CPU reference

- Performance: Overall model speedup of 1.18× for small problems, 35.13× for large-scale problems (2.56M grid points) [4]

- Precision: Maintain double-precision arithmetic for critical operations
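The accuracy criterion can be checked with a small comparison routine. The Python sketch below is one possible formulation: the function name, the sample values, and the small denominator floor used to avoid division by zero are assumptions for illustration, and 0.001% is expressed as the fraction 1e-5.

```python
def max_relative_error(reference, candidate, floor=1e-12):
    """Largest |candidate - reference| / |reference| over all entries."""
    if len(reference) != len(candidate):
        raise ValueError("fields must have the same length")
    return max(
        abs(c - r) / max(abs(r), floor)  # floor guards against zero reference values
        for r, c in zip(reference, candidate)
    )


TOLERANCE = 1e-5  # 0.001 % written as a fraction

# Hypothetical surface elevations (metres) from a CPU run and a GPU run.
cpu_eta = [1.0, 2.0, -3.0]
gpu_eta = [1.000001, 2.0, -3.000003]

error = max_relative_error(cpu_eta, gpu_eta)
print(f"max relative error: {error:.2e} ->", "PASS" if error < TOLERANCE else "FAIL")
```

For fields that pass through zero, such as surface elevation, an absolute tolerance may be a better fit than the relative measure sketched here.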

The Researcher's Toolkit: Essential Materials and Reagents

Table 3: Essential Research Reagents and Computational Tools

| Item Name | Specification/Purpose | Usage in Research Context |

|---|---|---|

| SCHISM Source Code | Version 5.8.0 or later | Base numerical model for profiling and acceleration [4] |

| NVIDIA HPC SDK | Includes CUDA Fortran compiler | Essential for GPU kernel development and compilation [22] |

| Performance Profilers | gprof, NVIDIA Nsight Compute | Identify hotspots and analyze GPU kernel performance [22] |

| Jacobi Benchmark Code | Custom CUDA implementation | Reference for solver optimization techniques [25] |

| Test Dataset | Fujian coast simulation domain | Representative workload for validation [4] |

| High-Performance GPU | NVIDIA architecture with CUDA support | Acceleration hardware platform [4] |

Structured performance profiling provides the critical foundation for successful GPU acceleration of the SCHISM ocean model. Through methodical identification of computational hotspots, particularly the Jacobi solver, researchers can prioritize optimization efforts where they yield maximum benefit. The experimental protocols outlined in this document enable reproducible characterization of model performance and establishment of validated acceleration approaches. By leveraging these methodologies within a CUDA Fortran framework, significant performance improvements of up to 35× for large-scale problems can be achieved while maintaining the numerical accuracy required for operational forecasting [4]. This approach facilitates the lightweight deployment of high-resolution storm surge forecasting systems even in resource-constrained coastal monitoring stations.

The acceleration of computational models using Graphics Processing Units (GPUs) has become a critical methodology in high-performance computing, particularly for resource-intensive applications like oceanographic modeling. Within this context, the Semi-implicit Cross-scale Hydroscience Integrated System Model (SCHISM) represents a widely adopted framework for ocean numerical simulations, whose computational efficiency, however, is constrained by the substantial resources it requires [4]. This application note details the methodology and protocols for successfully porting key computational routines of the SCHISM model to GPU kernels using CUDA Fortran, enabling significant performance gains while maintaining numerical accuracy. The integration of CUDA Fortran provides a lower-level explicit programming model that grants expert programmers direct control over all aspects of GPGPU programming, making it particularly suitable for optimizing complex scientific codes [26]. By implementing a GPU-accelerated parallel version of SCHISM (GPU–SCHISM), researchers have demonstrated the feasibility of lightweight operational deployment of storm surge numerical forecasting systems on hardware configurations typically available at coastal marine forecasting stations [4].

Performance Analysis and Acceleration Potential

Comprehensive performance profiling of the original CPU-based SCHISM model identified the Jacobi iterative solver module as a primary computational hotspot, consuming a disproportionate amount of runtime and thus presenting the most significant opportunity for acceleration [4]. This finding directed the initial porting efforts toward this critical routine.

Quantitative evaluation of the GPU-accelerated SCHISM model demonstrates variable speedup factors dependent on computational workload scale and hardware configuration. The following table summarizes key performance metrics obtained from experimental implementations:

Table 1: Performance Metrics of GPU-Accelerated SCHISM Model

| Experiment Scale | Grid Points | GPU Configuration | Speedup Factor | Notes |

|---|---|---|---|---|

| Small-scale | ~70,775 | Single GPU | 1.18x (overall model) | Jacobi solver accelerated by 3.06x [4] |

| Small-scale | ~70,775 | Single GPU | 3.06x (Jacobi solver only) | Hotspot routine performance [4] |

| Large-scale | 2,560,000 | Single GPU | 35.13x (overall model) | GPU more effective at higher resolutions [4] |

| Multi-GPU | Various | Multiple GPUs | Diminishing returns | Reduced workload per GPU hinders acceleration [4] |

The performance data reveals a crucial insight: GPU acceleration provides substantially greater benefits for larger-scale simulations with millions of grid points, where the computational workload sufficiently saturates GPU parallel capabilities. For smaller problem sizes, CPU computation often retains advantages due to lower overhead [4]. This relationship between problem scale and acceleration factor must guide decisions regarding when GPU acceleration is warranted.
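The small-scale rows of Table 1 can be cross-checked with Amdahl's law. The estimate below is a rough sketch, not a result from the cited study: it assumes that only the Jacobi solver was accelerated and that all other runtime, including any transfer overhead, is unchanged. Here p is the fraction of the original CPU runtime spent in the solver.

```latex
S_{\text{overall}} = \frac{1}{(1 - p) + \dfrac{p}{S_{\text{solver}}}}
\quad\Longrightarrow\quad
p = \frac{1 - 1/S_{\text{overall}}}{1 - 1/S_{\text{solver}}}
  = \frac{1 - 1/1.18}{1 - 1/3.06}
  \approx \frac{0.1525}{0.6732}
  \approx 0.23
```

Under that assumption the solver accounts for roughly a quarter of the small-scale runtime, so even an arbitrarily fast solver would cap the overall gain near 1/(1 - 0.23) ≈ 1.3x at this problem size.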

Table 2: Comparison of GPU Programming Models for SCHISM

| Programming Model | Implementation Complexity | Performance | Control Level | Suitability |

|---|---|---|---|---|

| CUDA Fortran | Higher (explicit) | Superior | Direct hardware control | Performance-critical applications [4] |

| OpenACC | Lower (directive-based) | Good | Compiler-driven | Rapid prototyping [4] |

| CUDA C | Moderate (explicit) | Superior | Direct hardware control | New development [26] |

Comparison between CUDA and OpenACC-based GPU acceleration approaches confirms that CUDA consistently outperforms OpenACC across all experimental conditions for the SCHISM model, though it requires more extensive code modifications [4].

Experimental Protocols and Methodologies

Workflow for Porting SCHISM Routines to GPUs

The following diagram illustrates the systematic workflow for identifying and porting computational hotspots to GPU kernels:

Code Transformation Protocol

Host Code Modification

The host code requires specific modifications to handle device selection, memory allocation, and kernel launch operations. The essential modifications are:

- Module Inclusion: The cudafor module must be included to access CUDA Fortran definitions and runtime APIs [27].

- Device Memory Declaration: Arrays requiring GPU processing must be declared with the device attribute [27].

- Execution Configuration: Kernel launch parameters define the thread organization.
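The three host-side steps above can be sketched as one small program. This is a minimal illustration rather than SCHISM code: the module fill_kernels, the kernel fill, and the array names are hypothetical, and the block size of 256 is an arbitrary choice.

```fortran
! Hypothetical names throughout; not taken from the SCHISM source.
module fill_kernels
  use cudafor
  implicit none
contains
  ! Trivial kernel so the host-side steps have something to launch.
  attributes(global) subroutine fill(a, val, n)
    integer, value :: n
    real, value :: val
    real :: a(n)
    integer :: i
    i = (blockIdx%x - 1) * blockDim%x + threadIdx%x
    if (i <= n) a(i) = val
  end subroutine fill
end module fill_kernels

program host_setup
  use cudafor            ! CUDA Fortran definitions and runtime API
  use fill_kernels
  implicit none
  integer, parameter :: n = 100000
  real :: a(n)                   ! host array
  real, device :: a_d(n)         ! device array, resident in GPU global memory
  type(dim3) :: grid, tBlock     ! execution configuration
  integer :: istat, ndev

  ! Device selection: use the first visible GPU.
  istat = cudaGetDeviceCount(ndev)
  if (istat /= cudaSuccess .or. ndev < 1) stop 'no CUDA device found'
  istat = cudaSetDevice(0)

  ! 256 threads per block, and enough blocks to cover all n elements.
  tBlock = dim3(256, 1, 1)
  grid   = dim3((n + tBlock%x - 1) / tBlock%x, 1, 1)

  call fill<<<grid, tBlock>>>(a_d, 1.0, n)
  istat = cudaDeviceSynchronize()
  if (istat /= cudaSuccess) print *, cudaGetErrorString(istat)

  a = a_d                        ! device-to-host copy by assignment
  print *, 'max deviation from 1.0: ', maxval(abs(a - 1.0))
end program host_setup
```

Array assignment between host and device arrays is the simplest transfer form in CUDA Fortran; explicit cudaMemcpy calls are an alternative when finer control is needed.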

Device Kernel Development

Kernel Implementation Protocol:

- Kernel Attribute Specification: Subroutines executing on the GPU must include the attributes(global) qualifier [27].

- Thread Indexing Calculation: Each thread computes its global position using built-in variables. Note: CUDA Fortran uses unit offset for threadIdx and blockIdx, unlike CUDA C's zero offset [27].

- Bounds Checking: Essential when the total number of threads exceeds the array size.
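As an illustration of these three kernel rules, the sketch below performs Jacobi sweeps on a toy 1D Laplace problem. It is a stand-in chosen for brevity and is not the SCHISM solver; the names jacobi_kernels, jacobi_sweep, and the problem size are assumptions made for the example.

```fortran
module jacobi_kernels
  use cudafor
  implicit none
contains
  ! One Jacobi sweep for a toy 1D Laplace problem: every interior point
  ! becomes the mean of its two neighbours. Not the SCHISM solver.
  attributes(global) subroutine jacobi_sweep(u_new, u_old, n)
    integer, value :: n
    real :: u_new(n)
    real, intent(in) :: u_old(n)
    integer :: i
    ! Unit-offset global index. The CUDA C equivalent would be
    ! blockIdx.x * blockDim.x + threadIdx.x (zero offset).
    i = (blockIdx%x - 1) * blockDim%x + threadIdx%x
    ! Bounds check: the last block has threads past n, and the two
    ! boundary points (i = 1 and i = n) keep their fixed values.
    if (i > 1 .and. i < n) then
      u_new(i) = 0.5 * (u_old(i-1) + u_old(i+1))
    end if
  end subroutine jacobi_sweep
end module jacobi_kernels

program jacobi_demo
  use cudafor
  use jacobi_kernels
  implicit none
  integer, parameter :: n = 1000, nsweeps = 200
  real :: u(n)
  real, device :: ua_d(n), ub_d(n)
  type(dim3) :: grid, tBlock
  integer :: it, istat

  u = 0.0
  u(n) = 1.0                 ! boundary values 0 and 1, zero initial guess
  ua_d = u
  ub_d = u                   ! both buffers carry the boundary values

  tBlock = dim3(128, 1, 1)
  grid = dim3((n + tBlock%x - 1) / tBlock%x, 1, 1)   ! 8 blocks = 1024 threads > n

  ! Two sweeps per loop pass, swapping the roles of the two buffers.
  do it = 1, nsweeps, 2
    call jacobi_sweep<<<grid, tBlock>>>(ub_d, ua_d, n)
    call jacobi_sweep<<<grid, tBlock>>>(ua_d, ub_d, n)
  end do
  istat = cudaDeviceSynchronize()

  u = ua_d
  print *, 'u(n-1) after', nsweeps, 'sweeps:', u(n-1)
end program jacobi_demo
```

Keeping two device buffers and swapping them between launches avoids a device-to-host copy per sweep, which matters because each kernel here does very little arithmetic.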

Validation and Verification Protocol

To ensure numerical equivalence between CPU and GPU implementations:

- Statistical Comparison: Implement norm calculations (L1, L2, Linf) between CPU and GPU outputs

- Benchmarking Suite: Maintain identical test cases for both implementations

- Precision Analysis: Verify that single-precision GPU results maintain required accuracy thresholds for scientific validity [4]
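The statistical comparison can be sketched in standard Fortran as follows. The module and routine names are hypothetical, the input values are illustrative only, and dividing the L1 and L2 norms by the number of points is one possible convention, chosen here so the numbers are comparable across grid sizes.

```fortran
module field_norms
  implicit none
  integer, parameter :: dp = kind(1.0d0)
contains
  ! L1, L2 and Linf norms of (gpu - cpu). L1 and L2 are divided by the
  ! number of points (a convention assumed for this sketch).
  subroutine diff_norms(cpu, gpu, l1, l2, linf)
    real, intent(in) :: cpu(:), gpu(:)
    real(dp), intent(out) :: l1, l2, linf
    real(dp), allocatable :: d(:)
    ! Promote to double precision so the accumulation itself does not
    ! add single-precision rounding error to the comparison.
    d = abs(real(gpu, dp) - real(cpu, dp))
    l1 = sum(d) / size(d)
    l2 = sqrt(sum(d**2) / size(d))
    linf = maxval(d)
  end subroutine diff_norms
end module field_norms

program compare_runs
  use field_norms
  implicit none
  real :: eta_cpu(5), eta_gpu(5)
  real(dp) :: l1, l2, linf

  ! Illustrative values only. In practice these would be the same field
  ! (for example surface elevation) written by the CPU and GPU runs.
  eta_cpu = [1.0, 2.0, 3.0, 4.0, 5.0]
  eta_gpu = [1.0, 2.0, 3.0, 4.0, 5.5]

  call diff_norms(eta_cpu, eta_gpu, l1, l2, linf)
  print '(a, 3es12.4)', 'L1, L2, Linf: ', l1, l2, linf
end program compare_runs
```

For the illustrative arrays the only difference is 0.5 in the last element, so the three norms are 0.1, about 0.224, and 0.5. Reporting Linf alongside the averaged norms helps catch a single bad grid point that an average would hide.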

The Scientist's Toolkit: Essential Research Reagents

Table 3: Essential Tools and Environments for CUDA Fortran Development

| Tool/Category | Specific Examples | Function/Purpose |

|---|---|---|

| Compilation Environment | NVIDIA HPC SDK (nvfortran) | Compiles .cuf files with CUDA Fortran extensions [27] |

| Compiler Flags | -Mcuda, -Mcuda=nordc (spelled -cuda and -gpu=nordc in current NVIDIA HPC SDK releases) | Enables CUDA support; disables relocatable device code when needed [28] |

| Development Tools | NVIDIA Nsight Systems, NVIDIA Nsight Compute | Debugging and performance profiling [29] |

| Libraries | cudafor module, CUBLAS | Provides CUDA runtime interfaces and optimized routines [26] |

| Parallelization Templates | Thread Block (tBlock), Grid | Organizes parallel execution hierarchy [27] |

| Memory Management | Device arrays, Pinned memory | Enables data residence on GPU and fast host-device transfers [26] |

Kernel Execution Model

The following diagram illustrates how CUDA Fortran kernels execute on the GPU hardware, showing the relationship between grid, thread blocks, and individual threads:

This application note has established comprehensive protocols for successfully porting key computational routines of the SCHISM ocean model to GPU kernels using CUDA Fortran. The methodology demonstrates that identifying performance-critical hotspots like the Jacobi solver and implementing them with CUDA Fortran kernels can achieve significant acceleration factors ranging from 1.18x for small-scale simulations to over 35x for large-scale implementations [4]. The techniques detailed herein—from host code modification and kernel development to validation procedures—provide researchers with a structured approach to GPU acceleration that maintains numerical accuracy while substantially improving computational efficiency. This work lays a foundation for lightweight operational deployment of high-resolution oceanographic models on accessible hardware configurations, potentially enabling more widespread and timely coastal hazard forecasting.

Within the context of accelerating the SCHISM (Semi-implicit Cross-scale Hydroscience Integrated System Model) ocean model using CUDA Fortran, efficient memory management is not merely a performance enhancement but a critical determinant of feasibility. The primary challenge in heterogeneous computing lies in the substantial performance disparity between the computational speed of GPUs and the bandwidth of the data pathway connecting the CPU and GPU memories [30] [31]. For operational storm surge forecasting systems deployed on workstations with limited hardware, sophisticated memory strategies enable the high-resolution simulations necessary for accurate predictions [4]. This document outlines structured application notes and experimental protocols for managing CPU-GPU data transfer and memory allocation, providing a practical guide for researchers and scientists working on GPU-accelerated geophysical models.

Quantitative Analysis of Memory Performance

Optimizing memory management requires a clear understanding of the performance characteristics of different strategies. The data presented below provides a quantitative foundation for decision-making.

Table 1: Comparative Performance of Host-to-Device Data Transfer Methods

| Transfer Method | Reported Bandwidth Improvement | Key Characteristics | Best Use Cases |

|---|---|---|---|

| Pageable Host Memory | Baseline | Requires temporary pinned staging array; higher overhead [31] | Legacy code; non-performance-critical transfers |

| Pinned (Page-Locked) Host Memory | Up to 50% reduction in transfer time [32] | Enables direct memory access (DMA) by GPU [31] | Frequent, large data transfers between CPU and GPU |

| Batched Small Transfers | Significant performance improvement over individual calls [31] | Reduces per-transfer overhead | Operations involving many small, independent data arrays |

| Asynchronous Transfers | Can double sustained throughput [32] | Overlaps data transfer with kernel execution [31] | Pipelined workflows where computation and transfer can be parallelized |

Table 2: Performance Impact of GPU Memory Access Patterns

| Memory Type | Latency (Clock Cycles) | Key Optimization Strategy | Reported Performance Gain |

|---|---|---|---|

| Global Memory | 400-600 [32] | Coalesced memory access | Up to 8x speedup [32] |

| Shared Memory | 1-2 [32] | Avoidance of bank conflicts | 2-3x speedup common; up to 10x in tiled matrix multiplication [32] |

| Constant Memory | Near-register speed (cached) [32] | Used for read-only data uniform across threads | Over 20% kernel execution time reduction [32] |

| Registers | ~0 [32] | Keeping usage per thread below hardware limit (e.g., <32) | Prevents spilling to local memory, avoiding ~5x slowdown [32] |

The performance figures in Table 1 underscore the importance of minimizing and optimizing data transfers. For instance, using pinned host memory can nearly halve the time spent moving data across the PCIe bus, a common bottleneck [31] [32]. Similarly, Table 2 highlights the large latency differences across the GPU memory hierarchy. Making good use of the faster memory spaces (shared, constant, registers) is central to kernel performance, with documented cases of optimized memory access patterns yielding up to order-of-magnitude speedups [32]. In the case of SCHISM, identifying performance hotspots like the Jacobi solver and applying these memory optimizations was key to achieving a net model acceleration [4].
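The asynchronous-transfer row of Table 1 can be illustrated with a chunked pipeline in which each CUDA stream copies its slice to the device, processes it, and copies it back. This is a sketch under stated assumptions: the kernel scale_chunk, the four-stream split, and the array sizes are hypothetical, and whether transfers actually overlap with kernels depends on the GPU's copy engines.

```fortran
module scale_kernels
  use cudafor
  implicit none
contains
  ! Doubles elements offset+1 .. offset+count of a (hypothetical kernel).
  attributes(global) subroutine scale_chunk(a, offset, count)
    real :: a(*)
    integer, value :: offset, count
    integer :: i
    i = (blockIdx%x - 1) * blockDim%x + threadIdx%x
    if (i <= count) a(offset + i) = 2.0 * a(offset + i)
  end subroutine scale_chunk
end module scale_kernels

program overlap_demo
  use cudafor
  use scale_kernels
  implicit none
  integer, parameter :: nstreams = 4, chunk = 1024 * 1024
  integer, parameter :: n = nstreams * chunk, tpb = 256
  real, allocatable, pinned :: a(:)      ! async copies need pinned host memory
  real, device, allocatable :: a_d(:)
  integer(kind=cuda_stream_kind) :: stream(nstreams)
  integer :: i, offset, istat

  allocate(a(n), a_d(n))
  a = 1.0
  do i = 1, nstreams
    istat = cudaStreamCreate(stream(i))
  end do

  ! Work issued to different streams may overlap; work within one
  ! stream runs in issue order (copy in, kernel, copy out).
  do i = 1, nstreams
    offset = (i - 1) * chunk
    istat = cudaMemcpyAsync(a_d(offset+1), a(offset+1), chunk, stream(i))
    call scale_chunk<<<chunk / tpb, tpb, 0, stream(i)>>>(a_d, offset, chunk)
    istat = cudaMemcpyAsync(a(offset+1), a_d(offset+1), chunk, stream(i))
  end do
  istat = cudaDeviceSynchronize()        ! wait for all streams

  print *, 'all elements doubled: ', all(a == 2.0)
  do i = 1, nstreams
    istat = cudaStreamDestroy(stream(i))
  end do
end program overlap_demo
```

The transfer counts passed to cudaMemcpyAsync are in array elements, not bytes, which is the CUDA Fortran convention for typed arrays.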

Experimental Protocols for Memory Optimization

This section provides a detailed, step-by-step methodology for profiling, diagnosing, and optimizing memory operations in a CUDA Fortran application such as GPU–SCHISM.

Protocol 1: Profiling and Baseline Establishment

Objective: To measure initial application performance and identify memory-related bottlenecks.

Materials: CUDA Fortran application (e.g., SCHISM v5.8.0), NVIDIA Nsight Systems profiler, nvprof (for legacy CUDA versions).

- Profile Execution: Run the application under a profiler to collect initial data. Use the command line with nvprof or the graphical interface of Nsight Systems.

- Identify Hotspots: Analyze the profiling report to determine:

  - The proportion of total runtime consumed by data transfers (cudaMemcpy variants) versus kernel execution [31].

  - The specific kernels with the longest execution times.

  - The amount of time spent in cudaMalloc and cudaFree.

- Establish Metrics: Record key baseline metrics for later comparison. These should include:

  - Total application runtime.

  - Aggregate time spent in host-to-device and device-to-host transfers.

  - Execution time of the top five most time-consuming kernels.

- Generate Memory Snapshot: For detailed allocator analysis, use a framework like PyTorch's memory snapshot capability to record the state of allocated memory, which can be visualized to understand fragmentation and allocation patterns [33]. While this is specific to PyTorch, the principle of using available tools to inspect memory state is universally applicable.

Protocol 2: Implementing and Validating Pinned Memory

Objective: To reduce data transfer latency by implementing pinned host memory.

Materials: CUDA Fortran application, CUDA Toolkit.

- Code Modification: For host arrays that are frequently transferred, add the CUDA Fortran pinned attribute to the array declaration. The allocate statements themselves stay unchanged.

  - Original: real, allocatable :: host_data(:)

  - Modified: real, allocatable, pinned :: host_data(:)

  - Alternatively, use cudaHostAlloc for more control [31].

- Bandwidth Verification: Implement a benchmark routine, similar to the one described by NVIDIA [31], to measure the achieved bandwidth for both pageable and pinned memory transfers. Compare the results to ensure the expected improvement is realized.

- Functional Testing: Run the application's standard validation suite (e.g., against known oceanographic data [4]) to ensure that the change to pinned memory does not alter numerical results.

- Performance Measurement: Re-profile the application using the tools from Protocol 1 and compare the data transfer times against the established baseline.
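A bandwidth check along the lines of the verification step could look like the sketch below, which times one host-to-device copy from pageable memory and one from pinned memory using CUDA events. The array size and variable names are arbitrary choices for illustration, and a single timed copy per case is only a rough measurement.

```fortran
program transfer_bandwidth
  use cudafor
  implicit none
  integer, parameter :: n = 4 * 1024 * 1024      ! 16 MiB of 4-byte reals
  real, allocatable         :: a_pageable(:)
  real, allocatable, pinned :: a_pinned(:)
  real, device, allocatable :: a_d(:)
  type(cudaEvent) :: t0, t1
  logical :: is_pinned
  integer :: istat
  real :: ms

  allocate(a_pageable(n), a_d(n))
  ! PINNED= reports whether page-locking succeeded. If it did not,
  ! the array is ordinary pageable memory and the comparison is moot.
  allocate(a_pinned(n), stat=istat, pinned=is_pinned)
  if (istat /= 0) stop 'host allocation failed'
  if (.not. is_pinned) print *, 'warning: pinned allocation fell back to pageable'

  a_pageable = 1.0
  a_pinned   = 1.0
  istat = cudaEventCreate(t0)
  istat = cudaEventCreate(t1)

  a_d = a_pageable                 ! untimed warm-up copy

  ! Pageable host memory -> device
  istat = cudaEventRecord(t0, 0)
  a_d = a_pageable
  istat = cudaEventRecord(t1, 0)
  istat = cudaEventSynchronize(t1)
  istat = cudaEventElapsedTime(ms, t0, t1)
  print *, 'pageable H2D (GB/s): ', n * 4.0 / ms / 1.0e6

  ! Pinned host memory -> device
  istat = cudaEventRecord(t0, 0)
  a_d = a_pinned
  istat = cudaEventRecord(t1, 0)
  istat = cudaEventSynchronize(t1)
  istat = cudaEventElapsedTime(ms, t0, t1)
  print *, 'pinned   H2D (GB/s): ', n * 4.0 / ms / 1.0e6

  istat = cudaEventDestroy(t0)
  istat = cudaEventDestroy(t1)
  deallocate(a_pageable, a_pinned, a_d)
end program transfer_bandwidth
```

cudaEventElapsedTime reports milliseconds, so bytes divided by milliseconds and then by 1.0e6 gives GB/s. Repeating each copy several times and averaging would give steadier numbers than this single-shot version.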

Protocol 3: Kernel Optimization via Shared Memory

Objective: To reduce a kernel's reliance on high-latency global memory by leveraging low-latency shared memory.

Materials: CUDA Fortran application, NVIDIA Nsight Compute profiler.

- Target Selection: Select a kernel identified as a hotspot in Protocol 1 that exhibits non-coalesced global memory access or redundant data reads.

- Shared Memory Design: Refactor the kernel to use shared memory. This typically involves:

  - Declaring a shared memory array, for example real, shared :: tile(n), where n stands for the tile extent (Fortran array extents use parentheses, and a statically sized shared array needs a compile-time constant).

  - Having each thread in a block load a portion of data from global memory into the tile.

  - Synchronizing threads with call syncthreads() to ensure the entire tile is loaded.

  - Performing computations using data from the shared memory tile.

- Bank Conflict Analysis: Use Nsight Compute to profile the refactored kernel and check for shared memory bank conflicts. If conflicts are detected, reorganize data access patterns (e.g., via padding or thread ID mapping adjustments) to resolve them [32].

- Validation and Benchmarking: Verify the correctness of the new kernel's output and measure its execution time. The GPU–SCHISM study, for example, focused its optimization on the Jacobi solver, a known performance hotspot [4].
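The shared-memory steps in this protocol can be sketched with a three-point averaging kernel, in which each block stages its slice of the input plus one halo cell on each side. The kernel smooth3, the constant TILE_W, and the test data are hypothetical and chosen for brevity; this is not a routine from GPU–SCHISM.

```fortran
module stencil_kernels
  use cudafor
  implicit none
  integer, parameter :: TILE_W = 128    ! threads per block = tile width
contains
  ! Three-point average of src into dst. Must be launched with
  ! blockDim%x == TILE_W so the shared tile matches the block.
  attributes(global) subroutine smooth3(dst, src, n)
    integer, value :: n
    real :: dst(n)
    real, intent(in) :: src(n)
    real, shared :: tile(0:TILE_W+1)    ! tile plus one halo cell per side
    integer :: i, t

    t = threadIdx%x
    i = (blockIdx%x - 1) * blockDim%x + t

    ! Each thread stages its own element from global memory.
    if (i <= n) tile(t) = src(i)
    ! The first and last threads of the block also stage the halo cells.
    if (t == 1 .and. i > 1) tile(0) = src(i-1)
    if (t == blockDim%x .and. i < n) tile(t+1) = src(i+1)
    ! Every thread reaches this barrier, so the tile is complete after it.
    call syncthreads()

    if (i > 1 .and. i < n) then
      dst(i) = (tile(t-1) + tile(t) + tile(t+1)) / 3.0
    else if (i == 1 .or. i == n) then
      dst(i) = tile(t)                  ! end points are copied unchanged
    end if
  end subroutine smooth3
end module stencil_kernels

program smooth_demo
  use cudafor
  use stencil_kernels
  implicit none
  integer, parameter :: n = 1000
  real :: a(n), b(n)
  real, device :: a_d(n), b_d(n)
  integer :: i, nblocks

  a = [(real(i), i = 1, n)]   ! linear ramp: the 3-point mean leaves it unchanged
  a_d = a
  nblocks = (n + TILE_W - 1) / TILE_W
  call smooth3<<<nblocks, TILE_W>>>(b_d, a_d, n)
  b = b_d                     ! blocking copy, also waits for the kernel
  print *, 'max change on a linear ramp: ', maxval(abs(b - a))
end program smooth_demo
```

A linear ramp is a convenient correctness check because its three-point mean equals the input, so the printed difference should be zero up to rounding. With a stencil this narrow the saving from shared memory is modest; the pattern pays off more as each staged value is reused by more threads.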

The following workflow diagram summarizes the iterative process of optimizing a CUDA application's memory performance.

The Scientist's Toolkit: Essential Research Reagents and Materials

For researchers embarking on GPU acceleration of scientific models, the following tools and concepts are indispensable.

Table 3: Essential Tools and Libraries for CUDA Fortran Memory Management

| Tool/Technique | Function | Relevance to SCHISM/GPU Models |

|---|---|---|

| Pinned Memory | Host memory allocated to be directly accessible by the GPU, enabling high-bandwidth transfers [31]. | Critical for efficient transfer of large bathymetry, forcing, and state variable arrays between CPU and GPU. |

| CUDA Unified Memory | A single memory address space accessible from both CPU and GPU, simplifying programming (via cudaMallocManaged) [34]. |