Accelerating Environmental Innovation: A Guide to GPU-Accelerated Finite Element Analysis

Abstract

This article provides a comprehensive overview of the implementation and benefits of using Graphics Processing Units (GPUs) for Finite Element Analysis (FEA) in environmental science and engineering. It explores the foundational principles of GPU computing, detailing its superiority over traditional Central Processing Units (CPUs) for handling the massive parallelism inherent in FEA. The piece covers core methodological approaches, including matrix-free solvers and multi-GPU strategies, and presents specific application case studies relevant to environmental modeling. Furthermore, it offers practical guidance on troubleshooting and optimization to overcome common computational bottlenecks, and concludes with a rigorous validation and comparative analysis of performance metrics. Designed for researchers and professionals, this guide serves as a roadmap for leveraging GPU-accelerated FEA to solve large-scale, complex environmental challenges with unprecedented speed and efficiency.

Why GPUs for FEA? Unlocking Unprecedented Computational Power for Environmental Modeling

In the realm of computational science, a computational bottleneck is defined as a limitation in processing capabilities that arises when the efficiency of algorithms becomes compromised due to exponentially growing space and time requirements [1]. For researchers conducting Finite Element Analysis (FEA) on traditional Central Processing Unit (CPU)-based architectures, these bottlenecks represent significant barriers to advancing environmental applications, from modeling contaminant transport in watersheds to predicting the impacts of climate change on polar sea ice [2] [3].

The fundamental issue resides in the architectural mismatch between the inherently parallel nature of FEA computations and the sequentially-oriented design of CPUs. While CPUs excel at executing complex, sequential tasks quickly, they struggle with the massively parallel mathematical operations required to solve the large systems of equations governing FEA simulations. As environmental models grow in sophistication to incorporate higher-resolution data from sources like lidar digital elevation models, this architectural mismatch becomes increasingly problematic, leading to extended simulation times that can hinder scientific progress [2].

Characterizing CPU Bottlenecks in FEA Workflows

Architectural and Memory Limitations

The computational bottlenecks in traditional CPU-based FEA manifest primarily through memory bandwidth constraints and the sequential execution model of CPU architectures. In system architecture, bottlenecks may be caused by non-distributable computations or resources, such as a single-server instance, or by components that consume excessive CPU, memory, or network resources under normal load [1]. The roofline model provides a visual representation of these performance limitations, showing that computation may be restricted by either memory bottlenecks caused by data movement or by the system's peak performance capacity [1].

For FEA applications, which typically generate large, sparse matrix systems, these memory bottlenecks are particularly pronounced. CPU bottlenecks can result from shortages in memory or input/output (I/O) bandwidth, leading the system to use extra CPU time to compensate [1]. In multi-CPU systems, each CPU is associated with a nonuniform memory access (NUMA) node, and memory access across NUMA nodes is slower than within a node, making NUMA configuration a critical bottleneck concern [1]. Memory capacity, by contrast, remains a practical advantage of CPU systems for the largest problems. As one researcher noted regarding CFD applications, "For instance, I often run simulations requiring over 1TB of RAM. That means I would need over a dozen 80GB A100s (at a cost of $18k+ apiece, over $220k total) to run my simulations on a GPU cluster. Meanwhile, you can build a single 2P EPYC Genoa node with 128 cores and 1.5TB of DDR5 RAM for under $30k" [4].

Quantitative Performance Limitations

The tables below summarize key performance limitations observed in CPU-based FEA systems across different environmental application domains:

Table 1: CPU Performance Limitations in Hydrological Modeling [2]

| Simulation Domain Size | CPU Hardware Configuration | Performance Limitation |
|---|---|---|
| 78 × 78 × 10 | Single-threaded 16-core Intel Xeon 2.67 GHz | Baseline reference performance |
| 128 × 128 × 16 | Single-threaded 16-core Intel Xeon 2.67 GHz | Increased computation time exceeding linear scaling |
| 256 × 256 × 16 | Single-threaded 16-core Intel Xeon 2.67 GHz | Significant memory bandwidth saturation |

Table 2: Comparative Performance in CFD Applications [4]

| Solver Precision | Hardware Configuration | Run Time | Memory Usage |
|---|---|---|---|
| Double precision | AMD Ryzen 5900x 12 cores | 53.43 sec | 10.24 GB |
| Double precision | 2 servers of dual AMD EPYC 7532 (128 cores) | 6.67 sec | 16.6 GB |
| Single precision | AMD Ryzen 5900x 12 cores | 77.88 sec | 7.53 GB |

Experimental Protocols for Assessing Computational Bottlenecks

Protocol 1: Benchmark Testing for Integrated Surface-Sub-Surface Flow Models

Objective: To quantitatively evaluate CPU-based computational bottlenecks in conjunctive hydrological modeling using high-resolution topographic data [2].

Materials and Methods:

- Software Requirements: GCS-flow model or equivalent integrated surface-subsurface flow simulator

- Hardware Configuration: Single-threaded 16-core Intel Xeon CPUs (2.67 GHz)

- Input Data: Lidar digital elevation model (lDEM) data from Goose Creek watershed (6.6 km × 7.4 km domain with 2.0 m soil depth)

- Discretization Method: Finite difference alternating direction implicit (ADI) approach

- Simulation Parameters: Multiple domain sizes (Nx × My × Pz): (i) 78 × 78 × 10; (ii) 128 × 128 × 16; (iii) 256 × 256 × 16

Procedure:

- Preprocess lidar topographic data to generate computational grids at specified resolutions

- Initialize coupled surface-subsurface flow model with appropriate boundary conditions

- Execute simulation runs for each domain size while monitoring:

  - Memory utilization patterns

  - Computation time per iteration

  - Cache performance metrics

- Compare results against GPU-accelerated implementations using NVIDIA Tesla C2070 and Tesla K40

- Analyze performance scaling using layer-wise decomposition and code profiling tools

Output Metrics:

- Wall clock time per simulation

- Memory footprint across different domain sizes

- Parallel efficiency and scaling limitations

- Identification of specific computational bottlenecks (memory-bound vs. compute-bound)
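The parallel-efficiency metric listed above can be made concrete with a short calculation. The following sketch applies the usual definitions (speedup as baseline time over parallel time, efficiency as speedup over the ratio of core counts) to the two double-precision rows of Table 2. The function names are illustrative. Because those two rows use different CPU models, the result is only a rough indication and not a controlled strong-scaling study.

```python
def speedup(t_baseline, t_parallel):
    """Ratio of baseline wall-clock time to parallel wall-clock time."""
    return t_baseline / t_parallel


def parallel_efficiency(t_baseline, cores_baseline, t_parallel, cores_parallel):
    """Speedup divided by the factor by which the core count grew."""
    core_ratio = cores_parallel / cores_baseline
    return speedup(t_baseline, t_parallel) / core_ratio


# Double-precision rows of Table 2: 12 cores in 53.43 s, 128 cores in 6.67 s.
s = speedup(53.43, 6.67)
e = parallel_efficiency(53.43, 12, 6.67, 128)
print(f"speedup: {s:.2f}x, efficiency: {e:.0%}")
```

An efficiency well below 100% is the usual signature of a shared bottleneck, such as memory bandwidth or inter-node communication, rather than a shortage of cores.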

Protocol 2: CPU-GPU Heterogeneous Computing Performance Assessment

Objective: To implement and evaluate a CPU-GPU heterogeneous computing framework for finite volume CFD applications [5].

Materials and Methods:

- Software Framework: SENSEI (Structured Euler Navier-Stokes Explicit Implicit) solver with OpenACC directives

- Hardware Configuration: CPU-GPU heterogeneous system with specified workload balancing

- Test Case: 2D 30-degree supersonic inlet with simplified geometry

- Grid Generation: Solve 2D elliptic grid generation equations with Dirichlet boundary conditions

Procedure:

- Implement performance model for CPU-GPU heterogeneous computing to estimate performance utilizing both CPU and GPU as workers

- Abstract computational procedures into high-level computation and communication patterns organized chronologically into a workflow chart

- Divide single iteration of computation into interior domain residual calculation, boundary condition application, and solution update stages

- Assign workloads to CPU and GPU workers based on their respective speeds to prevent CPU idling

- Apply performance optimizations using OpenACC directives including:

  - Sufficient parallelism exploration to increase parallel speedup

  - Data locality optimization through data structure padding and data region reuse

  - Reduction of implicit synchronization points and serial code sections

- Execute benchmark simulations while collecting performance metrics
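The speed-based workload assignment in the procedure above can be sketched as a proportional split. This is a minimal illustration, not the SENSEI implementation: the function name and the throughput figures are hypothetical, and it assumes each worker's throughput (cells per second) has been measured beforehand and stays constant.

```python
def split_workload(total_cells, cpu_rate, gpu_rate):
    """Split cells in proportion to measured throughput (cells per second).

    With a proportional split both workers finish at about the same time,
    so neither the CPU nor the GPU sits idle waiting for the other.
    """
    gpu_cells = round(total_cells * gpu_rate / (cpu_rate + gpu_rate))
    cpu_cells = total_cells - gpu_cells
    return cpu_cells, gpu_cells


# Hypothetical throughputs: the GPU is assumed to be 9x faster than the CPU.
CPU_RATE = 2.0e6
GPU_RATE = 1.8e7
cpu_cells, gpu_cells = split_workload(1_000_000, CPU_RATE, GPU_RATE)
t_cpu = cpu_cells / CPU_RATE
t_gpu = gpu_cells / GPU_RATE
print(cpu_cells, gpu_cells, t_cpu, t_gpu)
```

In practice the rates would be re-measured after each optimization pass, since improving the GPU kernels shifts the balance point.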

Performance Evaluation:

- Calculate scaled size steps per np time (ssspnt) using: ssspnt = (s × size × steps) / (np × t)

- Compare wall clock time per iteration against pure-GPU implementation

- Assess memory bandwidth utilization and data transfer efficiency
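The ssspnt formula above is straightforward to evaluate once its symbols are fixed. The source does not define them, so the sketch below assumes the following reading: `s` is a constant scaling factor that brings the value to a convenient range, `size` is the number of grid cells, `steps` is the number of iterations, `np` is the number of processors (CPUs or GPUs), and `t` is the wall-clock time in seconds. The default scale of 1e-6 and the sample inputs are assumptions for illustration only.

```python
def ssspnt(size, steps, num_procs, wall_time, scale=1e-6):
    """Scaled size steps per np time: (s * size * steps) / (np * t).

    Symbol meanings and the default scale are assumptions; see the lead-in.
    """
    if num_procs <= 0 or wall_time <= 0:
        raise ValueError("num_procs and wall_time must be positive")
    return scale * size * steps / (num_procs * wall_time)


# Hypothetical run: 2 million cells, 1000 iterations, 4 workers, 50 s wall time.
value = ssspnt(size=2_000_000, steps=1000, num_procs=4, wall_time=50.0)
print(value)
```

Because the metric normalizes by both worker count and time, it allows runs with different grid sizes and hardware counts to be compared on one axis.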

Computational Workflow and Bottleneck Analysis

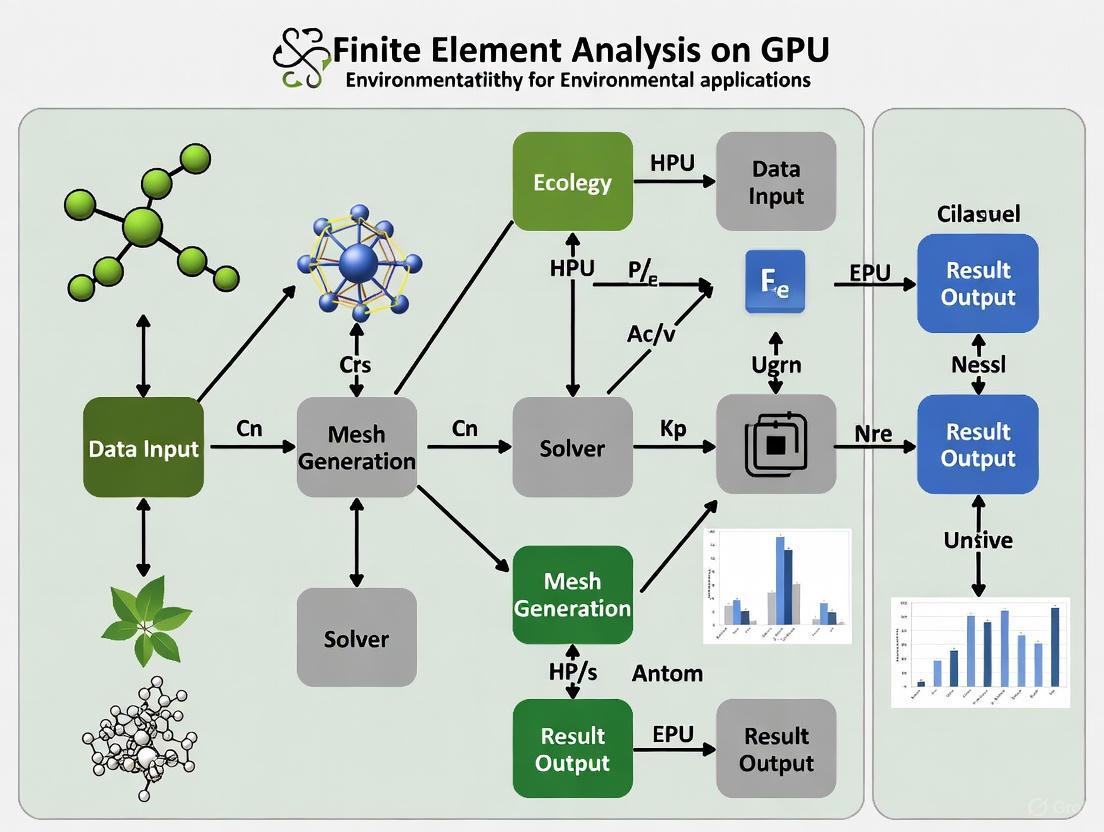

The following diagram illustrates the typical computational workflow in traditional CPU-based FEA and identifies where primary bottlenecks occur:

Figure 1: CPU Bottlenecks in FEA Workflow

The Scientist's Toolkit: Essential Research Reagent Solutions

Table 3: Computational Research Reagents for FEA Bottleneck Analysis

| Research Reagent | Function | Application Context |
|---|---|---|
| GCS-flow Model [2] | Integrated surface-subsurface flow simulator with ADI discretization | Hydrological modeling with lidar-resolution topographic data |
| JAX-WSPM Framework [6] | GPU-accelerated finite element solver for unsaturated porous media | Coupled water flow and solute transport simulations |
| SENSEI CFD Solver [5] | Structured Euler Navier-Stokes Explicit Implicit solver | Finite volume CFD applications with CPU-GPU heterogeneous computing |
| Intel VTune Profiler [1] | Performance analysis tool for identifying code hotspots | CPU utilization and cache behavior analysis in FEA applications |
| Kokkos Framework [3] | Parallel programming model for performance portability | Sea-ice dynamics simulation with higher-order finite elements |
| OpenACC Directives [5] | High-level programming standard for parallel computing | CPU-GPU heterogeneous implementation with minimal code intrusion |

GPU Acceleration as a Mitigation Strategy

The limitations of CPU-based FEA have prompted investigation into Graphics Processing Unit (GPU) acceleration as a mitigation strategy. GPUs offer an order of magnitude higher floating-point performance and efficiency compared to CPUs, but their full utilization often requires significant engineering effort [3]. Data movement matters as much as raw arithmetic: more than 62% of system energy in major mobile consumer workloads is attributed to data movement, and a memory access consumes 100 to 1000 times more energy than a complex addition [1].

For environmental applications, researchers have demonstrated that GPU-based implementations can achieve substantial performance improvements. In hydrological modeling, implementations on NVIDIA Tesla GPUs have shown significant speedups compared to single-threaded CPU performance [2]. Similarly, in sea-ice modeling, a GPU port of the dynamical core achieved a sixfold speedup while maintaining performance on CPUs [3].

The following diagram contrasts the traditional CPU-based workflow with an optimized GPU-accelerated approach:

Figure 2: GPU Acceleration Mitigating CPU Bottlenecks

Computational bottlenecks in traditional CPU-based FEA present significant challenges for environmental researchers seeking to model complex systems at high resolutions. These limitations stem from fundamental architectural constraints in CPU design, particularly regarding memory bandwidth and parallel processing capabilities. The experimental protocols and analytical frameworks presented herein provide methodologies for quantifying these bottlenecks and evaluating potential solutions.

As the field progresses, heterogeneous computing approaches that strategically leverage both CPU and GPU resources show considerable promise for overcoming these limitations [5]. Frameworks such as Kokkos [3] and JAX-WSPM [6] offer pathways toward performance portability across different hardware architectures. For environmental researchers, addressing these computational bottlenecks is not merely a matter of convenience but a critical requirement for advancing our understanding of complex environmental systems through high-fidelity simulation.

The evolution of Graphics Processing Units (GPUs) from specialized graphics renderers to general-purpose parallel processors represents a pivotal shift in high-performance computing (HPC). Modern GPU architectures deliver exceptional computational density and energy efficiency for scientific simulations, particularly for finite element analysis (FEA) in environmental applications. Unlike traditional Central Processing Units (CPUs) optimized for sequential execution, GPUs employ a massively parallel architecture containing thousands of computational cores designed to execute tens of thousands of concurrent threads. This architectural paradigm enables order-of-magnitude acceleration for complex environmental simulations, including climate modeling, fluid dynamics, and sea-ice mechanics, where solving large-scale systems of partial differential equations is computationally demanding [7] [8].

The relevance of GPU computing is particularly pronounced in the context of environmental research, where the spatial and temporal resolution of models directly impacts predictive accuracy. Frameworks like JAX-FEM demonstrate how GPU-accelerated finite element solvers can automate inverse design and facilitate mechanistic data science, providing powerful tools for environmental engineers and computational scientists [7]. Furthermore, the porting of codes like neXtSIM-DG for sea-ice dynamics to GPU platforms highlights the tangible benefits of this technology, yielding a sixfold speedup compared to CPU implementations and enabling higher-resolution climate projections [3]. Understanding GPU architecture fundamentals—from its parallel structure and memory hierarchy to its execution model—is therefore essential for researchers aiming to leverage accelerated computing for environmental problem-solving.

Core Architectural Concepts

Fundamental Structure of a Modern GPU

At a high level, a GPU is a highly parallel processor architecture composed of processing elements and a sophisticated memory hierarchy. NVIDIA GPUs, for instance, consist of a collection of Streaming Multiprocessors (SMs), an on-chip L2 cache, and high-bandwidth DRAM [9]. Each SM contains its own instruction schedulers and multiple types of instruction execution pipelines for arithmetic, load/store, and other operations. For example, an NVIDIA A100 GPU contains 108 SMs, a 40 MB L2 cache, and HBM2 memory delivering up to 2039 GB/s of bandwidth [9]. This structure contrasts sharply with a CPU, which typically has a few powerful cores optimized for low-latency sequential code execution, whereas a GPU employs thousands of smaller, energy-efficient cores optimized for high-throughput parallel tasks [8].

The GPU Execution Model: Threads, Warps, and Blocks

To utilize their parallel resources, GPUs execute functions using a hierarchical thread model. A kernel function is executed by a grid of thread blocks, where each block contains a collection of threads that can communicate via shared memory and synchronize their execution. At runtime, a thread block is scheduled on an SM, and each SM can execute multiple thread blocks concurrently [9]. This two-level hierarchy allows the GPU to efficiently manage its vast parallel resources. A key to high performance is occupancy—having enough active thread blocks and warps (groups of 32 threads that execute in lockstep) to hide the latency of dependent instructions and memory operations by immediately switching to other threads that are ready to execute [9]. For a GPU with many SMs, it is crucial to launch a kernel with several times more thread blocks than the number of SMs to fully utilize the hardware and minimize the "tail effect," where the GPU becomes underutilized as only a few thread blocks remain running at the end of a kernel's execution [9].
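The tail effect described above can be estimated with simple arithmetic. The sketch below is a simplified model, not a profiler: it assumes every thread block takes the same time and that each SM holds a fixed number of resident blocks (`blocks_per_sm`, a hypothetical parameter that in reality depends on register and shared-memory usage). Blocks then execute in "waves", and the last wave may leave most SMs idle.

```python
import math


def wave_stats(num_blocks, num_sms, blocks_per_sm=1):
    """Return (waves, tail_utilization, overall_utilization) for a kernel launch."""
    slots = num_sms * blocks_per_sm                  # blocks resident at once
    waves = math.ceil(num_blocks / slots)            # full waves plus a partial one
    tail_blocks = num_blocks - (waves - 1) * slots   # blocks in the final wave
    tail_utilization = tail_blocks / slots
    overall_utilization = num_blocks / (waves * slots)
    return waves, tail_utilization, overall_utilization


# 108 SMs as on the A100 mentioned earlier; block counts are illustrative.
for blocks in (109, 1090):
    waves, tail, overall = wave_stats(blocks, num_sms=108)
    print(f"{blocks} blocks: {waves} waves, tail {tail:.1%}, overall {overall:.1%}")
```

Under this model, one block more than a full wave roughly halves utilization when there are only two waves, while the same kind of remainder costs far less when there are eleven, which is why launching many more blocks than SMs is recommended.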

Memory Hierarchy and Data Movement

Efficient data movement is often the most critical factor in achieving high performance in GPU applications. The GPU memory hierarchy is designed to provide low-latency access to frequently used data and high-bandwidth access to larger datasets. The hierarchy typically includes:

- Global Memory (DRAM): Large, high-bandwidth memory shared by all SMs, but with relatively high latency.

- L2 Cache: Shared by all SMs, it helps reduce the effective latency of accesses to global memory.

- Shared Memory: A small, low-latency, software-managed memory shared by all threads within a thread block. It is ideal for inter-thread communication and data reuse.

- Registers: The fastest memory, private to each thread.

At a high level, data flows from CPU host memory to GPU global memory, then through the L2 cache into the shared memory and registers of each SM.

Performance Characteristics and Metrics

Key Performance Indicators

GPU performance is quantified using several key metrics that help researchers select appropriate hardware and optimize their applications. The most common metrics are:

- TFLOPS (TeraFLOPS): Measures the GPU's floating-point performance, indicating how many trillions of floating-point operations (like multiplies or adds) it can perform per second. Higher TFLOPS values signify greater computational capacity, which is critical for AI and scientific simulations [8]. For example, an NVIDIA A100 GPU can achieve a peak throughput of 312 FP16 TFLOPS [9]. It is important to note that a single multiply-add operation comprises two floating-point operations.

- Memory Bandwidth: The rate at which data can be read from or stored into the GPU's global memory by the processors. Higher bandwidth enables faster data movement, which is crucial for feeding the computational cores and reducing bottlenecks in data-intensive applications [8]. Bandwidth is measured in GB/s (Gigabytes per second).

- Arithmetic Intensity: A crucial algorithmic metric defined as the number of floating-point operations performed per byte of data transferred from memory (FLOPs/byte). It determines whether a computation is memory-bound (limited by data transfer speed) or compute-bound (limited by raw calculation speed) on a given processor [9].
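To make arithmetic intensity concrete for FEA, the sketch below estimates it for a sparse matrix-vector product in CSR format, the core operation of iterative solvers. The byte-counting model is an assumption: 8-byte values, 4-byte indices, a square matrix, and ideal caching so that the input vector is read once and the output vector written once. The mesh figures in the example (one million rows with 27 nonzeros each) are hypothetical.

```python
def spmv_csr_intensity(nnz, n_rows, value_bytes=8, index_bytes=4):
    """Estimated FLOPs per byte for y = A x with A stored in CSR format."""
    flops = 2 * nnz                               # one multiply and one add per nonzero
    bytes_moved = (
        nnz * (value_bytes + index_bytes)         # matrix values and column indices
        + (n_rows + 1) * index_bytes              # row pointer array
        + 2 * n_rows * value_bytes                # read x once, write y once
    )
    return flops / bytes_moved


# Hypothetical system: 1,000,000 rows, 27 nonzeros per row.
ai = spmv_csr_intensity(nnz=27_000_000, n_rows=1_000_000)
print(f"{ai:.3f} FLOPs/byte")
```

An intensity well under 1 FLOP/byte means the operation is limited by memory bandwidth on essentially any current processor, so the achievable speed tracks bandwidth rather than peak TFLOPS.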

Performance Limitations and the Roofline Model

The performance of any GPU kernel is typically limited by one of three factors: memory bandwidth, math (computational) bandwidth, or latency. The relationship between arithmetic intensity and hardware capabilities can be summarized by a simple model. A kernel is considered math-limited if the time spent on math operations exceeds the time spent on memory accesses. This condition can be expressed as:

\[ \frac{\text{number of operations}}{\text{math bandwidth}} > \frac{\text{number of bytes accessed}}{\text{memory bandwidth}} \]

Rearranging this inequality shows that a kernel is math-limited if its Arithmetic Intensity > (Peak Math Bandwidth / Peak Memory Bandwidth). The ratio on the right is known as the machine's ops:byte or AI balance ratio [9]. Many common operations in scientific computing, such as vector addition or applying an activation function like ReLU, have low arithmetic intensity and are therefore memory-bound. In contrast, operations like large matrix multiplications or dense linear algebra have high arithmetic intensity and are compute-bound.

Table 1: Performance Characteristics of Common Operations on a V100 GPU (Ops:Byte Ratio ~40-139)

| Operation | Arithmetic Intensity (FLOPS/B) | Usually Limited By... |
|---|---|---|
| Linear Layer (Large Batch) | 315 | Arithmetic (Compute) |
| Layer Normalization | < 10 | Memory |
| Max Pooling (3x3 window) | 2.25 | Memory |
| Linear Layer (Batch Size 1) | 1 | Memory |
| ReLU Activation | 0.25 | Memory |
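The math-limited condition can be checked numerically. The sketch below uses the A100 figures quoted earlier (312 FP16 TFLOPS and 2039 GB/s) to compute the ops:byte ratio and then applies the roofline bound, attainable throughput = min(peak math rate, intensity × peak bandwidth), to three intensities from the table above. The table itself was measured on a V100, so applying its intensities to A100 peaks is an illustrative assumption, and real kernels rarely reach either peak.

```python
PEAK_FLOPS = 312e12   # A100 peak FP16 throughput quoted in the text, FLOP/s
PEAK_BW = 2039e9      # A100 peak memory bandwidth quoted in the text, bytes/s


def attainable_flops(intensity, peak_flops=PEAK_FLOPS, peak_bw=PEAK_BW):
    """Roofline upper bound on throughput for a given arithmetic intensity."""
    return min(peak_flops, intensity * peak_bw)


def limiter(intensity, peak_flops=PEAK_FLOPS, peak_bw=PEAK_BW):
    """'math' if intensity exceeds the ops:byte ratio, otherwise 'memory'."""
    return "math" if intensity > peak_flops / peak_bw else "memory"


ridge = PEAK_FLOPS / PEAK_BW   # ops:byte ratio, about 153 FLOPs/byte
print(f"ops:byte ratio: {ridge:.1f}")
for name, ai in [("Linear layer (large batch)", 315),
                 ("Max pooling (3x3)", 2.25),
                 ("ReLU", 0.25)]:
    bound = attainable_flops(ai) / 1e12
    print(f"{name}: {limiter(ai)}-limited, at most {bound:.2f} TFLOPS")
```

The low-intensity operations are capped at a small fraction of the peak math rate, which is why reducing data movement usually pays off more than adding arithmetic throughput for such kernels.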

GPU Acceleration in Finite Element Analysis

Application to Finite Element Methods

The Finite Element Method (FEM) is a powerful technique for numerically solving partial differential equations (PDEs) that appear in structural analysis, heat transfer, fluid flow, and other scientific domains [7]. The method involves discretizing a domain into a mesh of simple elements, formulating a weak form of the governing PDE, and solving the resulting large, sparse system of linear equations. The computational workflow of FEM, particularly the matrix assembly phase, is inherently parallel and maps exceptionally well to GPU architectures. During assembly, the contribution of each element to the global stiffness matrix can be computed independently, allowing for massive parallelism across thousands of elements [10].

GPU acceleration has shown remarkable success in real-world FEA applications. For instance, the development of a GPU-accelerated dynamical core for the sea-ice model neXtSIM-DG resulted in a sixfold speedup compared to the CPU-based implementation [3]. Similarly, a research project implementing a GPU-accelerated FEM solver in Python and CUDA demonstrated speedups of up to 27.2x over a CPU implementation for problems with millions of nodes [10]. Frameworks like JAX-FEM, built on the JAX library, leverage GPU acceleration and automatic differentiation to not only solve forward PDE problems efficiently but also to automate inverse design problems, which are central to optimization and material design in environmental research [7].

Detailed Experimental Protocol: GPU-Accelerated FEA

This protocol outlines the methodology for benchmarking a GPU-accelerated Finite Element solver against a CPU-based reference, suitable for environmental simulations like soil mechanics or fluid flow in porous media.

Research Reagent Solutions

Table 2: Essential Software and Hardware for GPU-Accelerated FEA

| Item | Function / Purpose |
|---|---|
| GPU Computing Hardware (e.g., NVIDIA A100, RTX 2080 Ti) | Provides the parallel processing cores for accelerating the matrix assembly and linear solver phases of the FEM algorithm. |
| Heterogeneous Computing Framework (e.g., Kokkos, SYCL, CUDA) | Enables the development of a single codebase that can run efficiently on both CPU and GPU architectures, simplifying porting and maintenance [3]. |
| Machine Learning Framework (e.g., JAX, PyTorch) | Provides a high-level, user-friendly interface for linear algebra operations, with a specialized backend that automatically leverages GPU acceleration and new hardware features [7] [3]. |
| Sparse Linear Solver Library (e.g., cuSOLVER, AmgX) | Implements highly optimized iterative solvers (like MINRES, Conjugate Gradient) for the large, sparse linear systems characteristic of FEA, often providing significant speedups on GPUs [10]. |

Workflow and Procedures

The experimental workflow for a typical GPU-accelerated FEA simulation involves several stages, from problem setup to performance analysis, as outlined below:

Problem Setup and Mesh Generation: Generate a finite element mesh for the environmental domain (e.g., a watershed, an airshed, a geological formation). The mesh should be large enough (containing millions of elements) to saturate the GPU's parallel capacity and amortize the cost of data transfer. Export the mesh connectivity and nodal coordinates.

Data Transfer to GPU: Allocate memory on the GPU device and transfer the mesh data (nodal coordinates, element connectivity) from the CPU host memory. This step incurs a latency penalty, so it is crucial to minimize the frequency and volume of host-device transfers.

GPU-Accelerated Stiffness Matrix Assembly: Execute the parallel assembly kernel on the GPU. A common strategy is to assign one thread block (or a warp) to compute the local stiffness matrix for a single element or a group of elements. The kernel writes the non-zero contributions directly into the global stiffness matrix in a format suitable for sparse solvers (e.g., CSR). The choice of kernel implementation (e.g., batched CuPy operations vs. custom CUDA kernels) can significantly impact performance [10].

GPU-Accelerated Linear Solution: Solve the system of equations \( KU = F \) on the GPU using an iterative solver optimized for sparse matrices. The Minimum Residual (MINRES) solver is often a good choice for symmetric systems, as it effectively leverages the sparsity and symmetry of the stiffness matrix [10]. The solver should reside entirely on the GPU to avoid costly data transfers during iterations.

Solution Transfer and Post-processing: Transfer the solution vector \( U \) back to the CPU host memory for analysis and visualization (e.g., analyzing stress fields in a structure or pollutant concentration in a fluid).

Performance Benchmarking: Compare the total runtime and the time taken for the assembly and solve phases against a baseline CPU implementation (e.g., an OpenMP-parallelized code running on an 8-core Xeon processor) [10]. Key metrics are speedup (\( \text{CPU Time} / \text{GPU Time} \)) and performance-per-watt.
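The assembly and solve stages of this workflow can be illustrated on a problem small enough to check by hand. The sketch below is a CPU-only, pure-Python illustration of the data layout, not a GPU implementation: it assembles the stiffness matrix for a 1D Poisson problem with linear elements into CSR arrays and solves the system with Conjugate Gradient. Conjugate Gradient is used here in place of MINRES because the matrix is symmetric positive definite and the algorithm is shorter to write out. All function names are illustrative.

```python
def assemble_poisson_csr(n_elements):
    """Assemble K for -u'' = f on [0, 1] with u(0) = u(1) = 0 (linear elements)."""
    h = 1.0 / n_elements
    n = n_elements - 1                      # number of interior (free) nodes
    ke = [[1.0 / h, -1.0 / h], [-1.0 / h, 1.0 / h]]
    rows = [dict() for _ in range(n)]       # per-row {column: value} accumulator
    for e in range(n_elements):
        free = (e - 1, e)                   # free-node indices of the element's nodes
        for a, i in enumerate(free):
            for b, j in enumerate(free):
                if 0 <= i < n and 0 <= j < n:   # skip the fixed boundary nodes
                    rows[i][j] = rows[i].get(j, 0.0) + ke[a][b]
    data, indices, indptr = [], [], [0]
    for row in rows:                        # flatten the rows into CSR arrays
        for j in sorted(row):
            indices.append(j)
            data.append(row[j])
        indptr.append(len(indices))
    return data, indices, indptr, h


def csr_matvec(data, indices, indptr, x):
    """y = K x in CSR storage; rows are independent, hence parallelizable."""
    return [
        sum(data[k] * x[indices[k]] for k in range(indptr[i], indptr[i + 1]))
        for i in range(len(indptr) - 1)
    ]


def dot(u, v):
    return sum(a * b for a, b in zip(u, v))


def conjugate_gradient(data, indices, indptr, b, tol=1e-12, max_iter=1000):
    x = [0.0] * len(b)
    r = list(b)                             # residual for the zero initial guess
    p = list(r)
    rs = dot(r, r)
    for _ in range(max_iter):
        if rs ** 0.5 < tol:
            break
        kp = csr_matvec(data, indices, indptr, p)
        alpha = rs / dot(p, kp)
        x = [xi + alpha * pi for xi, pi in zip(x, p)]
        r = [ri - alpha * ki for ri, ki in zip(r, kp)]
        rs_new = dot(r, r)
        p = [ri + (rs_new / rs) * pi for ri, pi in zip(r, p)]
        rs = rs_new
    return x


n_elements = 8
data, indices, indptr, h = assemble_poisson_csr(n_elements)
f = [h] * (n_elements - 1)                  # load vector for f(x) = 1
u = conjugate_gradient(data, indices, indptr, f)
print(u[3])                                 # midpoint; the exact solution x(1 - x)/2 is 0.125 there
```

Every step inside the solver loop is a matrix-vector product, a dot product, or a vector update, which is why keeping all arrays on the device for the whole iteration avoids host-device transfers.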

Environmental Impact and Sustainability

The remarkable performance of GPUs comes with a significant environmental footprint that researchers must consider. The operational energy consumption of AI and HPC systems, heavily reliant on GPUs, is projected to reach up to 8% of global electricity by 2030 [11]. A single high-performance GPU server can draw 300 to 500 watts during operation, with large training clusters drawing megawatts of continuous power [11]. Furthermore, the environmental cost extends beyond operation to the manufacturing phase. The production of a single high-performance GPU server can generate between 1,000 and 2,500 kilograms of CO2 equivalent, known as the "embedded" or "embodied" carbon emissions [11] [12].

Table 3: Environmental Impact Factors for GPU Computing

| Factor | Impact Description | Mitigation Strategy |
|---|---|---|
| Operational Energy | Direct electricity consumption during computation, contributing to carbon emissions based on the local grid's energy mix. | Use renewable energy sources; optimize code for faster execution and lower energy use; select energy-efficient GPU architectures. |
| Manufacturing (Embodied Carbon) | Emissions from the complex process of semiconductor fabrication, which involves energy-intensive lithography and rare earth minerals. | Extend hardware lifespan; purchase from vendors providing carbon footprint data; support circular economy principles for hardware. |
| Cooling Infrastructure | Traditional air cooling can consume up to 40% of a data center's total energy. | Adopt advanced cooling technologies like liquid immersion cooling; use AI for dynamic cooling optimization. |

Adopting sustainable computing practices is becoming imperative. Researchers can contribute by:

- Optimizing Computational Efficiency: Writing highly efficient code that completes tasks faster directly reduces energy consumption.

- Leveraging Advanced Hardware: Utilizing newer GPU architectures that offer better performance-per-watt (e.g., Tensor Cores for mixed-precision computation) [9].

- Choosing Cloud Providers with Renewable Energy: Preferring data centers that are powered by renewable sources can significantly reduce the operational carbon footprint of simulations [11].

- Considering Full Lifecycle Impact: Acknowledging that the environmental impact of computing includes manufacturing and end-of-life disposal, not just operational electricity [12].
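The link between runtime and operational footprint is simple arithmetic: energy is power multiplied by time, and emissions are energy multiplied by the carbon intensity of the local grid. The sketch below works through one case. The 400 W draw sits within the range cited above, but the run times and the grid intensity of 0.4 kg CO2e per kWh are hypothetical values chosen for illustration.

```python
def energy_kwh(power_watts, hours):
    """Energy consumed at a constant power draw, in kilowatt-hours."""
    return power_watts * hours / 1000.0


def operational_co2_kg(power_watts, hours, grid_kg_per_kwh):
    """Operational emissions for a run, given the grid's carbon intensity."""
    return energy_kwh(power_watts, hours) * grid_kg_per_kwh


GRID_INTENSITY = 0.4   # kg CO2e per kWh, hypothetical

baseline = operational_co2_kg(400, 10.0, GRID_INTENSITY)    # 10-hour run
optimized = operational_co2_kg(400, 2.5, GRID_INTENSITY)    # same job, 4x faster
print(energy_kwh(400, 10.0), baseline, optimized)
```

At a fixed power draw, a fourfold reduction in runtime cuts operational emissions by the same factor, which is the quantitative basis for treating code optimization as a sustainability measure.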

Understanding GPU architecture is fundamental for harnessing its power in scientific computing, particularly for finite element analysis in environmental research. The massive parallelism, hierarchical memory, and high-throughput execution model of GPUs can accelerate complex simulations by orders of magnitude, enabling higher-fidelity models of climate, hydrology, and ecosystems. However, achieving optimal performance requires careful consideration of algorithmic arithmetic intensity and memory access patterns to avoid bottlenecks. Furthermore, as the field progresses, the environmental impact of large-scale computing necessitates a commitment to sustainability, pushing researchers toward more efficient algorithms and hardware. By mastering these architectural principles, scientists and engineers can leverage GPU technology to tackle some of the most pressing environmental challenges with unprecedented speed and scale.

Finite Element Analysis (FEA) is a cornerstone of computational mechanics, enabling the simulation of complex physical phenomena across engineering and scientific disciplines. The integration of Graphics Processing Units (GPUs) into FEA workflows has initiated a paradigm shift, offering transformative potential for research in environmental applications. GPU-accelerated computing leverages the massively parallel architecture of modern GPUs to dramatically speed up computationally intensive tasks that are traditionally bound by Central Processing Unit (CPU) limitations [13]. For researchers modeling environmental systems—such as subsurface fluid flow, contaminant transport, or geophysical hazards—this acceleration can make previously intractable, high-fidelity simulations feasible.

The performance benefits of GPU acceleration are not uniformly distributed across all stages of an FEA simulation. This document details the key workflows—specifically matrix assembly, numerical solvers, and visualization—that are most amenable to GPU acceleration. It provides a technical foundation and practical protocols for researchers in environmental science and related fields to effectively leverage GPU resources, thereby enhancing the scope and scale of their computational investigations.

Matrix Assembly on GPUs

Matrix assembly is the process of constructing the global system of equations from the contributions of individual finite elements. This step involves substantial computation, as it requires the integration of shape functions and material properties over all elements in the mesh.

GPU Acceleration Methodology

The parallel nature of matrix assembly makes it an ideal candidate for GPU offloading. Each element's contribution to the global stiffness matrix can be computed independently, allowing for massive parallelization.

- Parallel Element Processing: GPU cores simultaneously compute the element-level matrices (e.g., stiffness, mass, damping) for numerous elements. This is a classic "embarrassingly parallel" problem, where thousands of threads can run concurrently with minimal synchronization [13].

- Global Matrix Assembly: After computing element matrices, the contributions are assembled into the global sparse matrix. Efficient management of this process is critical to avoid memory conflicts, often using atomic operations or sophisticated coloring algorithms to handle concurrent writes to the same global matrix row [6].

- Leveraging High-Level Libraries: Emerging frameworks like JAX facilitate GPU-accelerated assembly by providing a high-level, NumPy-like API. JAX's `jit` (just-in-time) compilation can transform straightforward Python code for element matrix computation into highly optimized GPU kernels, significantly reducing development time while maintaining performance [6].
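The coloring strategy mentioned above can be sketched in a few lines. The idea is to group elements so that no two elements of the same color share a node; all elements of one color can then add their contributions to the global matrix concurrently without atomic operations. The following pure-Python sketch uses a simple greedy coloring and a dense global matrix for readability. It runs sequentially on the CPU, the function names are illustrative, and production codes use sparse storage and more sophisticated coloring.

```python
def greedy_element_coloring(elements):
    """Give each element the smallest color unused by elements sharing a node."""
    node_colors = {}                         # node -> colors of elements touching it
    colors = []
    for conn in elements:
        used = set()
        for node in conn:
            used |= node_colors.get(node, set())
        color = 0
        while color in used:
            color += 1
        colors.append(color)
        for node in conn:
            node_colors.setdefault(node, set()).add(color)
    return colors


def assemble_by_color(elements, colors, local_matrix, n_nodes):
    """Scatter-add element matrices into a dense global matrix, color by color."""
    K = [[0.0] * n_nodes for _ in range(n_nodes)]
    for color in range(max(colors) + 1):
        # Elements of one color touch disjoint nodes, so on a GPU this inner
        # loop could run as a single conflict-free parallel pass.
        for conn, c in zip(elements, colors):
            if c != color:
                continue
            ke = local_matrix(conn)
            for a, i in enumerate(conn):
                for b, j in enumerate(conn):
                    K[i][j] += ke[a][b]
    return K


# A chain of four two-node elements with a unit local stiffness matrix.
elements = [(0, 1), (1, 2), (2, 3), (3, 4)]
colors = greedy_element_coloring(elements)
K = assemble_by_color(elements, colors, lambda conn: [[1.0, -1.0], [-1.0, 1.0]], 5)
print(colors)
print(K[1])
```

Coloring trades one large kernel launch for several smaller ones, so it tends to pay off when atomic contention is high; with few shared nodes, atomics are often the simpler choice.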

Performance Characteristics

The table below summarizes the typical performance gains and key considerations for GPU-accelerated matrix assembly.

Table 1: Performance Profile of GPU-Accelerated Matrix Assembly

| Aspect | CPU-Based Assembly | GPU-Accelerated Assembly | Key Enabling Factors |
|---|---|---|---|
| Parallelism Scale | Dozens of cores | Thousands of threads | Massive parallelism of GPU cores [13] |
| Computational Throughput | Lower | 5x to 20x potential speedup [13] | Parallel processing of all elements |
| Optimal Use Case | Small to medium models | Large-scale models with >1M elements | High element count ensures full GPU utilization |
| Implementation Complexity | Lower (traditional C++/Fortran) | Higher (CUDA) or Lower (JAX) [6] | High-level frameworks (JAX, PyTorch) simplify coding |

Experimental Protocol: GPU-Accelerated Assembly with JAX

This protocol outlines the steps for benchmarking matrix assembly performance for a 3D elastic problem using the JAX library.

- Objective: To quantify the speedup achieved by GPU-accelerated matrix assembly compared to a single-threaded CPU implementation.

- Software and Hardware:

- Software: Python 3.x, JAX library, `numpy` for CPU baseline, `time` module for profiling.

- Hardware: A compute-class GPU (e.g., NVIDIA A100, V100, or RTX 4090) with high memory bandwidth and a modern multi-core CPU for baseline comparison [13].

- Procedure:

- Mesh Generation: Generate a 3D hexahedral mesh of a unit cube, varying the number of elements (e.g., from 10³ to 100³).

- CPU Baseline Implementation:

- Write a function in pure NumPy that iterates over each element to compute its local stiffness matrix and assembles it into the global matrix.

- Profile the execution time of this function.

- GPU-Accelerated Implementation with JAX:

- Write an equivalent function using `jax.numpy`.

- Use `jax.jit` to compile the function for GPU execution.

- Ensure operations are vectorized to leverage GPU parallelism.

- Profile the execution time, excluding the initial JIT compilation overhead.

- Data Collection and Analysis:

- Record assembly times for both implementations across different mesh sizes.

- Calculate the speedup factor (CPU time / GPU time) for each mesh size.

- Plot speedup versus number of degrees of freedom to identify performance scaling.
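The measurement side of this protocol can be sketched with the standard library alone. The helper names below (`hex_mesh_dofs`, `best_time`, `speedup`) and the stand-in workload are hypothetical. The sketch assumes three displacement degrees of freedom per node and discards a warm-up call so that one-off costs such as JIT compilation are not timed.

```python
import time


def hex_mesh_dofs(n_per_side, dofs_per_node=3):
    """Degrees of freedom of an n x n x n hexahedral mesh of a cube."""
    return dofs_per_node * (n_per_side + 1) ** 3


def best_time(fn, *args, warmup=1, repeats=3):
    """Best wall-clock time of fn(*args), discarding warm-up calls.

    The warm-up call absorbs one-off costs such as JIT compilation. With
    JAX, the timed call should also wait for the device to finish (for
    example by calling .block_until_ready() on the result), because
    dispatch is asynchronous.
    """
    for _ in range(warmup):
        fn(*args)
    timings = []
    for _ in range(repeats):
        start = time.perf_counter()
        fn(*args)
        timings.append(time.perf_counter() - start)
    return min(timings)


def speedup(cpu_seconds, gpu_seconds):
    """Speedup factor as defined in the protocol: CPU time / GPU time."""
    return cpu_seconds / gpu_seconds


def dummy_assembly(n):
    """Stand-in workload (hypothetical); replace with the assembly functions."""
    return sum(i * i for i in range(n))


t = best_time(dummy_assembly, 10_000)
print(f"10^3-element mesh: {hex_mesh_dofs(10)} DOFs, timed {t:.2e} s")
```

Taking the minimum over a few repeats is one common choice for reducing timing noise; reporting the median is an equally reasonable alternative.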

Solver Acceleration on GPUs

The solution of the linear system of equations $Kx = f$ is often the most computationally intensive phase of an FEA simulation, especially for large-scale problems. GPU acceleration can yield order-of-magnitude speedups for certain classes of solvers.

Solver Types and GPU Suitability

- Iterative Solvers (e.g., Preconditioned Conjugate Gradient - PCG): These solvers perform matrix-vector multiplications and vector operations that are highly parallelizable. They are typically memory-bandwidth bound, a domain where GPUs excel due to their vastly superior memory bandwidth compared to CPUs. Virtually any modern GPU can provide significant acceleration for iterative solvers [14].

- Sparse Direct Solvers: These solvers rely on matrix factorization (e.g., LU decomposition) and are compute-bound, requiring high double-precision (FP64) floating-point performance. This limits acceleration to high-end, compute-class GPUs like the NVIDIA A100, H100, or AMD MI300 series, which have dedicated FP64 cores [13] [14].

- Mixed Solvers: A newer class of solvers, such as the one in Ansys Mechanical APDL, hybridizes direct and iterative methods. It uses single-precision (FP32) arithmetic on GPUs for performance while maintaining double-precision accuracy on CPUs, making it compatible with a wider range of GPUs, including workstation-class cards like the NVIDIA RTX A6000 [14] [15].
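To make the structure of an iterative solver concrete, the following is a minimal sketch of a conjugate gradient solver with a Jacobi (diagonal) preconditioner. The Jacobi choice and the dense list-of-lists storage are assumptions for illustration. The matrix-vector product inside the loop is the memory-bandwidth-bound kernel that benefits most from GPU offloading.

```python
def matvec(A, x):
    """Dense matrix-vector product."""
    return [sum(a * xj for a, xj in zip(row, x)) for row in A]


def dot(u, v):
    return sum(a * b for a, b in zip(u, v))


def pcg(A, b, tol=1e-10, max_iter=200):
    """Jacobi-preconditioned CG for a symmetric positive definite matrix A."""
    n = len(b)
    inv_diag = [1.0 / A[i][i] for i in range(n)]
    x = [0.0] * n
    r = list(b)  # residual b - A x for the initial guess x = 0
    z = [d * ri for d, ri in zip(inv_diag, r)]
    p = list(z)
    rz = dot(r, z)
    b_norm = dot(b, b) ** 0.5
    for iteration in range(1, max_iter + 1):
        Ap = matvec(A, p)  # the bandwidth-bound kernel
        alpha = rz / dot(p, Ap)
        x = [xi + alpha * pi for xi, pi in zip(x, p)]
        r = [ri - alpha * api for ri, api in zip(r, Ap)]
        if dot(r, r) ** 0.5 <= tol * b_norm:
            return x, iteration
        z = [d * ri for d, ri in zip(inv_diag, r)]
        rz_new = dot(r, z)
        p = [zi + (rz_new / rz) * pi for zi, pi in zip(z, p)]
        rz = rz_new
    return x, max_iter


# Small SPD test system: tridiagonal matrix with 2 on the diagonal, -1 beside it.
n = 5
A = [[2.0 if i == j else -1.0 if abs(i - j) == 1 else 0.0 for j in range(n)]
     for i in range(n)]
b = [1.0] * n
x, iterations = pcg(A, b)
print(x, iterations)
```

Every operation in the loop is a vector update, a dot product, or a matrix-vector product, which is why this class of solver maps well onto GPUs.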

Performance Characteristics

The table below compares the GPU acceleration potential for different solver types used in FEA.

Table 2: Performance Profile of GPU-Accelerated FEA Solvers

| Solver Type | Key GPU Dependency | Typical Speedup | Best-Suited GPU Types |

|---|---|---|---|

| Iterative (e.g., PCG) | Memory Bandwidth | 5x to 18x (total simulation time) [16] | All (Gaming, Workstation, Server) [14] |

| Sparse Direct | Double-Precision (FP64) Compute | High (e.g., H100 benchmark) [14] | High-End Server (NVIDIA A/H100, AMD MI200/300) [13] |

| Mixed | Single-Precision (FP32) Compute | Comparable performance to H100 on cost-effective GPUs [15] | Workstation & Server (e.g., NVIDIA RTX A6000) [15] |

| Nonlinear & Multiphysics | Parallelism across domains/particles | 11x (e.g., Ansys HFSS) [13] | Server-class GPUs with high memory capacity [13] |

Experimental Protocol: Benchmarking Solver Performance in Ansys Mechanical APDL

This protocol provides a methodology for evaluating the impact of GPU acceleration on different solver types within a commercial FEA package.

- Objective: To measure the solution time speedup for a standard benchmark model using PCG, Sparse, and Mixed solvers with GPU acceleration enabled.

- Software and Hardware:

- Software: Ansys Mechanical APDL 2025 R1 or newer.

- Hardware: Two GPU configurations:

- Configuration A (Workstation): NVIDIA RTX A6000 (or similar with high FP32 performance).

- Configuration B (Server): NVIDIA H100 or A100 (with high FP64 performance).

- A multi-core CPU system for baseline testing.

- Model: Use the official V25 benchmark model set from Ansys, specifically the "iter-1" model for PCG and the "direct" model for the Sparse solver [14].

- Procedure:

- CPU Baseline: Run each model and solver combination using CPU cores only. Record the solution time from the solver output file (`*.STAT` or `*.PCS`).

- GPU Acceleration:

- Activate GPU acceleration via the Ansys Product Launcher (High-Performance Computing tab) or command line (e.g., `ansys252 -acc nvidia -na 1`) [14].

- Repeat the simulations for each solver and GPU configuration.

- For the PCG solver, verify in the output file that the solution was fully offloaded to the GPU.

- Data Collection and Analysis:

- For each test case, calculate the speedup as CPU Solution Time / GPU Solution Time.

- Create a table comparing speedups across solver types and GPU hardware.

- Analyze the results, correlating performance gains with the GPU's hardware specifications (FP64 performance for Sparse solver, memory bandwidth for PCG).

The workflow for this benchmarking protocol is summarized in the following diagram:

Visualization and Post-Processing Acceleration

After solving, visualizing the results (e.g., stresses, displacements, fluid velocities) is a critical step for analysis. For large models, manipulating and rendering the mesh and result fields interactively can itself become a bottleneck.

Graphics Acceleration with Remote Visualization

GPU acceleration in visualization focuses on rendering performance.

- Local Graphics Rendering: A local workstation GPU (e.g., NVIDIA Quadro/RTX A-series) accelerates model rotation, zooming, animation, and contour plotting by offloading graphics rendering from the CPU [13].

- Remote Visualization for Cloud HPC: In cloud-based high-performance computing (HPC) workflows, technologies like NICE DCV or Elastic Cloud Workstations (ECWs) are used. These solutions stream the graphical desktop interface from a powerful GPU in the cloud to a lightweight local machine, providing a smooth and responsive visual experience for pre- and post-processing large models directly from the cloud [13].

The Scientist's Toolkit for GPU-Accelerated Environmental FEA

This section details the essential hardware and software components for establishing a research environment capable of performing GPU-accelerated FEA for environmental applications.

Table 3: Essential Research Reagents for GPU-Accelerated FEA

| Category | Item | Specification / Example | Function in Workflow |

|---|---|---|---|

| Hardware | Compute-Class GPU | NVIDIA A100/H100 (Server) or RTX A6000 (Workstation); >24 GB VRAM, >600 GB/s bandwidth [13] | Primary accelerator for solver and assembly computations. |

| Hardware | High-Bandwidth CPU & RAM | CPU with high memory bandwidth; ample system RAM. | Feeds data to the GPU; handles non-offloaded serial tasks. |

| Software | GPU-Accelerated Solvers | Ansys Mechanical APDL, LS-DYNA, Altair Radioss, JAX-WSPM [13] [6] | Specialized software that can leverage GPU APIs (CUDA, OpenACC). |

| Software | High-Level Frameworks (JAX) | JAX with `jax.numpy`, `jit`, `vmap` [6] | Enables rapid development of custom, differentiable FEA solvers with built-in GPU support. |

| Software | Remote Visualization | NICE DCV, HP Anywhere, X2Go | Enables remote visualization of large result sets from cloud HPC resources [13]. |

| Method | Differentiable Programming | Using JAX's automatic differentiation [6] [17] | Facilitates inverse modeling (e.g., parameter estimation from sparse field data). |

Integrated Workflow for Environmental Modeling

The synergy between the accelerated workflows is key for complex environmental simulations, such as modeling coupled water flow and solute transport in unsaturated porous media.

The following diagram illustrates an integrated, GPU-accelerated workflow for such an application, highlighting the roles of assembly, solving, and visualization.

The "memory wall" describes the growing performance gap between processor speed and memory bandwidth, a critical bottleneck in high-performance computing (HPC). In finite element analysis (FEA), this manifests as processors idly waiting for data from memory, severely limiting scalability and efficiency. This challenge is particularly acute in environmental applications, such as large-scale climate modeling or subsurface flow simulation, where problems involve complex, multi-physics interactions across vast spatial and temporal scales.

GPU computing directly confronts this bottleneck through architectural specialization. Unlike general-purpose CPUs with relatively few complex cores, GPUs contain thousands of simpler, energy-efficient cores organized for massive parallelism. More critically for the memory wall, they incorporate high-bandwidth memory subsystems specifically designed for data-intensive workloads. Modern data center GPUs like the NVIDIA H100 feature 3.35 TB/s of memory bandwidth using HBM3 technology, dramatically surpassing traditional CPU memory systems [18]. This architectural approach makes GPU computing particularly transformative for memory-bound FEA problems in environmental research, where data movement often dominates computation time.
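A back-of-the-envelope estimate shows why bandwidth dominates such workloads. The sketch below counts the bytes one sparse matrix-vector product must move in CSR format and divides by the memory bandwidth to obtain a lower bound on its runtime. The problem size, the 27 nonzeros per row, and the CPU bandwidth are illustrative assumptions; only the 3.35 TB/s H100 figure comes from the text.

```python
def spmv_bytes(n_rows, nnz, value_bytes=8, index_bytes=4):
    """Approximate memory traffic of one CSR sparse matrix-vector product.

    Counts matrix values, column indices, row pointers, one read of the
    input vector, and one write of the output vector. Perfect reuse of the
    input vector is an optimistic assumption.
    """
    matrix = nnz * (value_bytes + index_bytes) + (n_rows + 1) * index_bytes
    vectors = 2 * n_rows * value_bytes
    return matrix + vectors


def min_time_seconds(n_bytes, bandwidth_bytes_per_s):
    """Lower bound on runtime if the kernel is purely bandwidth-limited."""
    return n_bytes / bandwidth_bytes_per_s


# Hypothetical problem: 10 million rows with 27 nonzeros per row.
n_rows = 10_000_000
nnz = 27 * n_rows
traffic = spmv_bytes(n_rows, nnz)

H100_BW = 3.35e12  # bytes/s, the figure quoted in the text
CPU_BW = 0.3e12    # bytes/s, an assumed value for a server CPU

t_gpu = min_time_seconds(traffic, H100_BW)
t_cpu = min_time_seconds(traffic, CPU_BW)
print(f"traffic per SpMV: {traffic / 1e9:.2f} GB")
print(f"lower bound: GPU {t_gpu * 1e3:.2f} ms, CPU {t_cpu * 1e3:.2f} ms")
```

Under these assumptions the ratio of the two bounds equals the ratio of the bandwidths, regardless of how many arithmetic units either processor has.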

GPU Architectural Innovations for Memory Bandwidth

High-Bandwidth Memory Technologies

GPU architects have developed specialized memory technologies to alleviate bandwidth constraints. High-Bandwidth Memory (HBM) represents a fundamental departure from traditional GDDR memory architecture, employing 3D stacking of DRAM dies with through-silicon vias (TSVs). This configuration provides substantially wider memory interfaces and shorter physical paths for data movement. The progression from HBM2e to HBM3e demonstrates the rapid evolution of this technology: the NVIDIA H200 achieves 4.8 TB/s of memory bandwidth, a 43% improvement over the H100, together with a 76% increase in memory capacity [18]. This bandwidth and capacity enable environmental researchers to tackle larger, more complex FEA models with improved temporal resolution.
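As a quick check of the percentages quoted above, assuming the published capacities of 80 GB for the H100 and 141 GB for the H200 (these capacities are not stated in the text):

```latex
\[
\frac{4.8 - 3.35}{3.35} \approx 0.43,
\qquad
\frac{141 - 80}{80} \approx 0.76
\]
```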

Memory-Specific Core Architectures

GPUs further optimize memory usage through specialized cores that reduce data movement. Tensor Cores, available in modern NVIDIA GPUs, accelerate matrix operations common in FEA solver kernels. These cores can perform mixed-precision calculations, dramatically reducing memory footprint while maintaining accuracy. For environmental FEA applications where double precision is often necessary, GPUs like the H100 include dedicated FP64 cores that perform native double-precision calculations without performance penalties [19]. This specialization contrasts with consumer-grade GPUs, whose FP64 throughput is only a small fraction of their FP32 throughput, a critical consideration for scientific computing.

Table 1: GPU Memory Bandwidth and Core Specifications for Scientific Computing

| GPU Model | Memory Technology | Memory Bandwidth | FP64 Cores | Best Suited FEA Applications |

|---|---|---|---|---|

| NVIDIA H200 | HBM3e | 4.8 TB/s | Dedicated | Ultra-large environmental models (>100B parameters) |

| NVIDIA H100 | HBM3 | 3.35 TB/s | Dedicated | Production-scale multi-physics FEA |

| NVIDIA A100 | HBM2e | 2.0 TB/s | Dedicated | Budget-conscious environmental research projects |

| NVIDIA L40 | GDDR6 | ~1 TB/s | Emulated (FP32) | Single-precision CFD and structural mechanics |

Application to Finite Element Analysis in Environmental Research

Algorithmic Transformations for GPU Architectures

Translating FEA to GPUs requires rethinking traditional algorithms to maximize memory efficiency. The core FEA workflow—matrix assembly, linear system solving, and post-processing—must be reorganized to exploit fine-grained parallelism while minimizing data transfer. Research demonstrates that algebraic multigrid (AMG) methods, particularly aggregation-based approaches, achieve superior performance on GPU architectures because they require less device memory than classical AMG methods while effectively reducing error components across frequencies [20]. This makes them ideal preconditioners for Krylov subspace methods like the conjugate gradient algorithm in environmental FEA applications ranging from porous media flow to atmospheric dynamics.

The JAX-CPFEM platform exemplifies this algorithmic transformation, implementing an open-source, GPU-accelerated crystal plasticity finite element method that achieved a 39× speedup in a polycrystal case with approximately 52,000 degrees of freedom compared to traditional CPU-based implementations [21]. This performance gain stems from both increased computational throughput and optimized memory access patterns that keep the GPU's parallel cores saturated with data.

Multi-GPU Strategies for Large-Scale Environmental Modeling

For environmental FEA problems exceeding single GPU memory capacity, multi-GPU approaches provide a scalable solution. Using domain decomposition techniques with hybrid MPI (Message Passing Interface) for inter-node communication, researchers can distribute massive FEA problems across multiple GPUs, effectively aggregating their combined memory bandwidth. Studies show this approach successfully addresses structural mechanics problems with millions of degrees of freedom by using GPU-aware MPI, which minimizes costly data transfers between host and device memory [20].
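The core idea of domain decomposition with halo exchange can be illustrated without MPI or GPUs. The sketch below splits a 1D field into chunks, each padded with one halo cell per interior side, runs a simple averaging sweep on every chunk, and then copies boundary values between neighbours. All function names are hypothetical, and the in-process copy stands in for the send/receive that a multi-GPU code would perform.

```python
def smooth_step(u):
    """One Jacobi-style averaging sweep; the two end values stay fixed."""
    interior = [0.5 * (u[i - 1] + u[i + 1]) for i in range(1, len(u) - 1)]
    return [u[0]] + interior + [u[-1]]


def split_with_halos(u, n_parts):
    """Split u into contiguous chunks with one halo cell per interior side."""
    size = len(u) // n_parts
    parts = []
    for p in range(n_parts):
        lo = p * size
        hi = len(u) if p == n_parts - 1 else lo + size
        parts.append(u[max(lo - 1, 0):min(hi + 1, len(u))])
    return parts


def exchange_halos(parts):
    """Copy each neighbour's boundary value into the adjacent halo cell.

    In a multi-GPU code this is the communication step between devices.
    """
    for p in range(len(parts) - 1):
        left, right = parts[p], parts[p + 1]
        left[-1] = right[1]   # right's first owned cell -> left's halo
        right[0] = left[-2]   # left's last owned cell -> right's halo


def gather(parts):
    """Strip halos and concatenate the owned cells."""
    out = []
    for p, part in enumerate(parts):
        start = 0 if p == 0 else 1
        stop = len(part) if p == len(parts) - 1 else len(part) - 1
        out.extend(part[start:stop])
    return out


u0 = [float(i * i) for i in range(12)]
reference = list(u0)
parts = split_with_halos(u0, 3)
for _ in range(5):
    reference = smooth_step(reference)             # single-domain result
    parts = [smooth_step(part) for part in parts]  # independent per "GPU"
    exchange_halos(parts)                          # communication step
decomposed = gather(parts)
print(max(abs(a - b) for a, b in zip(decomposed, reference)))
```

The decomposed run reproduces the single-domain result because each chunk only ever needs one layer of neighbour data per sweep. That layer is the volume of data a GPU-aware MPI would move directly between devices.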

Table 2: Environmental FEA Applications and GPU Memory Considerations

| Environmental Application | Primary FEA Challenge | Recommended GPU Precision | Memory per Million Cells | Multi-GPU Strategy |

|---|---|---|---|---|

| Coastal flood modeling | Large domain, complex boundaries | Hybrid (FP32/FP64) | ~1.2 GB (steady state) | Domain decomposition by geographic region |

| Subsurface contaminant transport | Multi-phase flows, heterogeneous media | FP64 (native) | ~2.5 GB (transient) | Vertical stratification with overlapping boundaries |

| Atmospheric aerosol dispersion | Turbulence, particle tracking | FP32 (primary), FP64 (coupling) | ~1.8 GB (with DPM) | Horizontal domain splitting with halo regions |

| Geothermal reservoir simulation | Thermo-hydro-mechanical coupling | FP64 (native) | ~3.0 GB (multi-physics) | Physics-based distribution with coordinated solves |
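The per-cell figures in the table can be turned into a rough sizing estimate. The sketch below is illustrative only: the dictionary keys, the `gpus_needed` helper, and the 80% usable-memory margin are assumptions, while the GB-per-million-cells values are taken from the table.

```python
import math

# Memory per million cells in GB, taken from the table above.
GB_PER_MILLION_CELLS = {
    "coastal_flood": 1.2,
    "subsurface_transport": 2.5,
    "aerosol_dispersion": 1.8,
    "geothermal_reservoir": 3.0,
}


def gpus_needed(application, n_cells, gpu_memory_gb, usable_fraction=0.8):
    """Estimate the model footprint and the minimum GPU count to hold it.

    usable_fraction is an assumed safety margin for solver workspace and
    halo buffers; it is not a figure from the table.
    """
    footprint_gb = GB_PER_MILLION_CELLS[application] * n_cells / 1e6
    count = math.ceil(footprint_gb / (gpu_memory_gb * usable_fraction))
    return footprint_gb, max(count, 1)


footprint, count = gpus_needed("subsurface_transport", 200_000_000,
                               gpu_memory_gb=80)
print(f"{footprint:.0f} GB footprint, at least {count} GPUs with 80 GB each")
```

An estimate of this kind only bounds the GPU count from below; communication overhead and load balance determine whether that count is also efficient.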

Experimental Protocols for GPU-Accelerated Environmental FEA

Protocol: Weak Scalability Analysis for Distributed GPU FEA

Objective: Quantify parallel efficiency when increasing problem size proportionally with GPU resources.

Materials:

- Computing Resources: Multi-GPU cluster with at least 4 nodes, each containing 2+ data center GPUs (e.g., NVIDIA A100 or H100)

- Software Stack: NVIDIA CUDA 12.8+ or AMD ROCm 6.0+, MPI implementation (OpenMPI or MPICH), FEA framework with GPU support (e.g., MFEM, JAX-FEM, Ansys Fluent GPU Solver)

- Benchmark Case: 3D porous flow simulation with varying mesh resolution (500K to 20M elements)

Methodology:

- Domain Decomposition: Use ParMETIS to partition mesh with balanced element distribution across GPUs

- Memory Pre-allocation: Pre-allocate device memory for stiffness matrices, solution vectors, and temporary arrays

- Solver Configuration: Configure aggregation AMG-preconditioned conjugate gradient solver with:

- Smoothed aggregation for transfer operators

- Jacobi relaxation for smoothing

- V-cycle multigrid pattern

- Execution: Run simulation with strong (fixed total problem size) and weak (fixed problem size per GPU) scaling configurations

- Metrics Collection: Record solve time, memory usage, bandwidth utilization, and inter-GPU communication overhead

Validation: Compare results against reference CPU implementation using double-precision accuracy thresholds for conservation laws (mass, momentum)
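The two efficiency measures implied by this protocol can be computed from the recorded solve times as follows. The timings in the sketch are hypothetical placeholders, not measured data.

```python
def strong_scaling_efficiency(t_one_gpu, t_n_gpus, n_gpus):
    """Fixed total problem size: the ideal time on N GPUs is t_one_gpu / N."""
    return t_one_gpu / (n_gpus * t_n_gpus)


def weak_scaling_efficiency(t_one_gpu, t_n_gpus):
    """Fixed problem size per GPU: the ideal time stays equal to t_one_gpu."""
    return t_one_gpu / t_n_gpus


# Hypothetical solve times in seconds, keyed by GPU count (not measured data).
strong_times = {1: 120.0, 2: 64.0, 4: 36.0, 8: 22.0}
weak_times = {1: 15.0, 2: 15.6, 4: 16.5, 8: 18.0}

for n in sorted(strong_times):
    es = strong_scaling_efficiency(strong_times[1], strong_times[n], n)
    ew = weak_scaling_efficiency(weak_times[1], weak_times[n])
    print(f"{n} GPUs: strong {es:.2f}, weak {ew:.2f}")
```

An efficiency of 1.0 means ideal scaling; values drifting below that point to the inter-GPU communication overhead recorded in the metrics step.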

Protocol: Precision and Performance Trade-off Analysis

Objective: Determine optimal precision settings for specific environmental FEA applications.

Materials:

- Test Systems: One GPU with dedicated FP64 cores (e.g., NVIDIA H100) and one GPU with emulated FP64 capability (e.g., NVIDIA L40)

- Software: Ansys Fluent GPU Solver or equivalent with precision control

- Benchmark Cases:

- Case A: Incompressible flow with mild gradients (river hydraulics)

- Case B: Compressible flow with strong shocks (atmospheric dynamics)

- Case C: Multi-phase transport with sharp interfaces (contaminant plume)

Methodology:

- Baseline Establishment: Run each case in native FP64, recording solution time and memory usage

- Precision Variants: Execute with:

- FP32 throughout

- Hybrid precision (FP32 main solver, FP64 critical kernels)

- FP32 with iterative refinement

- Convergence Monitoring: Track residual reduction rates and iteration counts for each precision configuration

- Accuracy Assessment: Compare key outputs (drag coefficients, concentration fields, shock positions) against FP64 reference

- Performance Analysis: Calculate speedup factors and memory savings while quantifying accuracy trade-offs
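The "FP32 with iterative refinement" variant can be sketched in a few lines. The example below emulates FP32 arithmetic by rounding through the standard `struct` module, solves a small system with an FP32 direct solve, and corrects the result using residuals computed in FP64. The direct solver, the 3x3 test matrix, and the function names are assumptions for illustration; a real solver would apply the same idea to its own low-precision kernel.

```python
import struct


def f32(x):
    """Round a Python float (FP64) to the nearest FP32 value."""
    return struct.unpack("f", struct.pack("f", x))[0]


def solve_fp32(A, b):
    """Gaussian elimination with partial pivoting, rounded to FP32 throughout."""
    n = len(b)
    M = [[f32(v) for v in row] + [f32(bi)] for row, bi in zip(A, b)]
    for k in range(n):
        pivot = max(range(k, n), key=lambda i: abs(M[i][k]))
        M[k], M[pivot] = M[pivot], M[k]
        for i in range(k + 1, n):
            factor = f32(M[i][k] / M[k][k])
            for j in range(k, n + 1):
                M[i][j] = f32(M[i][j] - f32(factor * M[k][j]))
    x = [0.0] * n
    for i in reversed(range(n)):
        s = M[i][n]
        for j in range(i + 1, n):
            s = f32(s - f32(M[i][j] * x[j]))
        x[i] = f32(s / M[i][i])
    return x


def residual(A, x, b):
    """Residual b - A x evaluated in full FP64."""
    return [bi - sum(a * xj for a, xj in zip(row, x)) for row, bi in zip(A, b)]


def refine(A, b, steps=5):
    """FP32 solves corrected by FP64 residuals (classical iterative refinement)."""
    x = solve_fp32(A, b)
    history = [max(abs(r) for r in residual(A, x, b))]
    for _ in range(steps):
        r = residual(A, x, b)
        dx = solve_fp32(A, r)  # cheap low-precision correction
        x = [xi + di for xi, di in zip(x, dx)]
        history.append(max(abs(ri) for ri in residual(A, x, b)))
    return x, history


A = [[4.0, -1.0, 0.0], [-1.0, 4.0, -1.0], [0.0, -1.0, 4.0]]
b = [1.0, 2.0, 3.0]
x, history = refine(A, b)
print(history)
```

The residual history is the quantity to watch in the convergence-monitoring step: it shows how many refinement sweeps are needed before the low-precision solve matches the FP64 reference.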

Environmental Impact and Sustainability Considerations

Energy Efficiency of GPU-Accelerated FEA

The computational intensity of environmental FEA carries significant energy implications that must be considered within the context of climate research. While GPU manufacturing has substantial embodied carbon—approximately 164 kg CO₂e per H100 card according to NVIDIA's assessment—the operational efficiency gains can offset this impact over the system's lifetime [12]. Research demonstrates that well-optimized GPU FEA implementations can deliver 2-4× better performance per watt compared to CPU-only systems, directly reducing the electricity consumption of large-scale environmental simulations.

The Fujitsu AI Computing Broker presents an innovative approach to maximizing GPU utilization, demonstrating 270% improvement in proteins processed per hour on A100 GPUs for AlphaFold2 simulations through dynamic resource allocation [22]. Similar strategies applied to environmental FEA could significantly enhance sustainability by eliminating idle GPU cycles and consolidating workloads. For research institutions with fixed carbon budgets, these efficiency gains translate directly to increased simulation capacity without proportional increases in environmental impact.

The Researcher's Toolkit for GPU FEA

Table 3: Essential Research Reagent Solutions for GPU-Accelerated Environmental FEA

| Tool Category | Specific Solutions | Function in GPU FEA | Environmental Application Example |

|---|---|---|---|

| GPU Hardware | NVIDIA H100/H200, AMD MI300X | Provide high-bandwidth memory and specialized cores for parallel FEA kernels | Large-scale climate model ensembles |

| Programming Models | CUDA, HIP, OpenCL, OpenACC | Enable low-level GPU programming and performance optimization | Custom physical parameterizations for atmospheric models |

| FEA Libraries | MFEM, JAX-FEM, AMGCL | Provide GPU-accelerated finite element discretization and solver components | Rapid prototyping of new groundwater contamination models |

| Linear Algebra | cuBLAS, cuSPARSE, hipBLAS | Accelerate fundamental mathematical operations on GPU architectures | Efficient stiffness matrix assembly for seismic wave propagation |

| Preconditioners | AmgX, hypre, PETSc | Deliver scalable multigrid preconditioning for GPU systems | Overcoming ill-conditioning in heterogeneous subsurface flows |

| Profiling Tools | NVIDIA Nsight, ROCprofiler | Identify memory bandwidth bottlenecks and optimization opportunities | Tuning multi-GPU parallel efficiency for ocean circulation models |

GPU computing represents a fundamental shift in addressing the memory wall for finite element analysis in environmental research. Through specialized high-bandwidth memory architectures, memory-aware core designs, and algorithmic transformations, modern GPUs can deliver order-of-magnitude improvements in simulation throughput while reducing energy consumption per computation. The experimental protocols and technical considerations outlined here provide a foundation for environmental researchers to effectively leverage these capabilities.

Looking forward, several emerging technologies promise to further alleviate memory bandwidth constraints. Unified memory architectures that eliminate explicit host-device transfers, compute express links that enable direct GPU-to-GPU communication, and specialized processing-in-memory techniques that perform computations directly within memory stacks all represent active research frontiers. For environmental scientists tackling increasingly complex challenges—from predicting climate tipping points to optimizing renewable energy systems—mastering these GPU computing paradigms will be essential for extracting timely insights from ever-larger FEA simulations.

The growing complexity of environmental models, which aim to simulate phenomena from urban flash floods to global climate change, demands an unprecedented level of computational power. Traditional Central Processing Unit (CPU)-based computing often falls short, making Graphics Processing Units (GPUs) an indispensable tool for researchers. GPUs, with their massively parallel architecture consisting of thousands of cores, are uniquely suited to accelerate the large-scale numerical simulations that underpin modern environmental science. This document defines the scope of environmental applications where GPU-level performance is not merely beneficial but essential, providing application notes and detailed protocols for the research community. The focus is placed on applications involving finite element analysis and other computationally intensive methodologies critical for advancing environmental research and policy.

High-Performance Application Domains

The following environmental modeling domains exhibit significant computational challenges that are effectively addressed by GPU acceleration. The table below summarizes key applications and their performance demands.

Table 1: Environmental Applications with High GPU Performance Demands

| Application Domain | Specific Modeling Task | Key Computational Challenge | Reported GPU Speedup |

|---|---|---|---|

| Hydrological & Flood Modeling | High-resolution rural/urban flash flood simulation [23] | Spatially distributed rainfall-runoff modeling; dual drainage; surface-sewer coupling | Not reported |

| Atmospheric Dispersion & Air Quality | Accidental radionuclide release simulation [24] | Stochastic Lagrangian particle model; simulating advection, diffusion for thousands of particles | >10x faster than sequential CPU version [24] |

| Climate & Daylight Modeling | Climate-based daylight glare probability (DGP) calculation [25] | Accelerating Two-phase, Three-phase, and Five-phase Method matrices (e.g., Daylight Coefficient, View matrices) | 83.0% to 94.8% reduction in computation time [25] |

| Ecological & Evolutionary Systems | Evolutionary Spatial Cyclic Games (ESCGs) simulation [26] | Agent-based modeling of ecological dynamics; scaling to large system sizes (e.g., 3200x3200 grid) | Up to 28x speedup (CUDA vs. single-threaded C++) [26] |

| Advanced Climate & Weather Forecasting | Earth-2 extreme weather modeling [27] | AI-driven weather predictions at ultra-high spatial resolution (3.5 km) for storms and floods | Not reported |

Detailed Experimental Protocols

Protocol 1: GPU-Accelerated Stochastic Lagrangian Particle Model for Atmospheric Dispersion

This protocol details the methodology for simulating the dispersion of pollutants, such as radionuclides from an accidental release, using a GPU-accelerated stochastic Lagrangian model [24].

1. Problem Setup and Initialization:

- Objective: To simulate the dispersion of pollutants from a point source on a local scale, predicting ground-level concentration fields faster than real-time for decision support.

- Domain Definition: Define a three-dimensional computational domain encompassing the area of interest. The release point (source term) is specified by its coordinates and release rate.

- Meteorological Data: Input 3D wind field (u, v, w components) and turbulence parameters. These fields can be derived from weather models or measurements.

2. CPU Pre-Processing:

- Mesh Generation: The host (CPU) generates a structured grid for the simulation domain, which will be used for interpolating meteorological data and aggregating final concentration values.

- Memory Allocation: The CPU allocates memory on the device (GPU) for all necessary arrays: particle positions, velocities, masses, and the concentration grid.

- Data Transfer: Initial meteorological data and the first set of particle data are transferred from host memory to GPU device memory.

3. GPU Kernel Execution (CUDA Implementation): The core computation is parallelized by assigning one CUDA thread to each particle. The following steps are executed in the kernel function on the GPU [24] [28]:

- Particle Release: In each time step, a set of new particles is introduced at the source location.

- Advection: Each thread calculates the displacement of its assigned particle based on the interpolated wind field.

x_i(t+Δt) = x_i(t) + u_i * Δt

- Turbulent Diffusion: A stochastic component is added to the displacement to model turbulent diffusion. A random walk process is used, often based on a Gaussian random number.

- Chemical Transformation/Deposition: If modeling chemically active species, mass decay or deposition to the ground is calculated for each particle.

- Concentration Mapping: After moving, each particle contributes its mass to the nodes of the grid cell it occupies. Atomic operations are used to avoid race conditions when multiple threads (particles) update the same grid cell value simultaneously.
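The per-particle work in these kernel steps can be sketched serially in plain Python. The sketch makes several simplifying assumptions that are not in the protocol: a constant wind function in place of interpolation, a random-walk displacement of the form sqrt(2 K Δt) times a standard normal with eddy diffusivity K, reflection at the ground, all particles released at once, and mass deposited into the containing cell rather than onto its nodes. Each loop iteration corresponds to the work of one CUDA thread.

```python
import math
import random


def step_particles(particles, wind, diffusivity, decay_rate, dt, rng):
    """Advance every particle by one time step (one GPU thread per particle)."""
    spread = math.sqrt(2.0 * diffusivity * dt)  # random-walk step size
    for p in particles:
        u, v, w = wind(p["x"], p["y"], p["z"])
        # Advection by the wind plus a Gaussian random-walk displacement.
        p["x"] += u * dt + spread * rng.gauss(0.0, 1.0)
        p["y"] += v * dt + spread * rng.gauss(0.0, 1.0)
        # abs() reflects particles that would cross the ground at z = 0.
        p["z"] = abs(p["z"] + w * dt + spread * rng.gauss(0.0, 1.0))
        p["mass"] *= math.exp(-decay_rate * dt)  # first-order decay


def map_to_grid(particles, cell_size, nx, ny):
    """Accumulate particle mass per horizontal grid cell.

    The "+=" is where a CUDA kernel would use atomicAdd, because many
    particles can fall into the same cell at the same time.
    """
    grid = [[0.0] * ny for _ in range(nx)]
    for p in particles:
        i, j = int(p["x"] // cell_size), int(p["y"] // cell_size)
        if 0 <= i < nx and 0 <= j < ny:
            grid[i][j] += p["mass"]
    return grid


def uniform_wind(x, y, z):
    """Hypothetical constant wind of 2 m/s along x."""
    return 2.0, 0.0, 0.0


rng = random.Random(0)
particles = [{"x": 5.0, "y": 50.0, "z": 10.0, "mass": 1.0} for _ in range(1000)]
for _ in range(10):
    step_particles(particles, uniform_wind, diffusivity=1.0,
                   decay_rate=0.0, dt=1.0, rng=rng)
grid = map_to_grid(particles, cell_size=10.0, nx=10, ny=10)
total = sum(sum(row) for row in grid)
mean_x = sum(p["x"] for p in particles) / len(particles)
print(f"mass on grid: {total:.1f}, mean x: {mean_x:.2f} m")
```

Because particles never interact with one another, the only shared state is the concentration grid, which is why the atomic update is the single synchronization point in the kernel.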

4. CPU Post-Processing:

- Data Retrieval: After a specified number of time steps, the concentration field is transferred from GPU device memory back to host CPU memory.

- Visualization & Analysis: The CPU handles the visualization of the concentration map, calculation of dosage, and other analysis required for decision support.

Protocol 2: GPU-Accelerated Finite Element Analysis for Environmental Fluid Dynamics

This protocol outlines the use of nonlinear Finite Element algorithms on GPUs for solving environmental fluid dynamics problems, such as high-resolution flood modeling [23] [28]. The methodology is based on the Total Lagrangian Explicit Dynamics formulation.

1. Problem Definition and Mesh Generation:

- Objective: To simulate fluid flow and surface dynamics, such as water propagation over complex urban topography during a flash flood.

- Geometry & Discretization: Import or generate a 3D geometric model of the domain (e.g., a city with buildings, streets, and sewer systems). Discretize the volume using a mixed mesh of hexahedral and tetrahedral elements to balance accuracy and meshing ease [28].

- Material Properties: Assign non-linear material models (e.g., for water flow, soil infiltration) to different parts of the mesh.

- Boundary Conditions: Define initial conditions (e.g., rainfall intensity) and boundary conditions (e.g., fixed terrain, open boundaries).

2. CPU Pre-Processing and Data Preparation:

- Matrix Assembly: Explicit methods do not form a global stiffness matrix, so the CPU does not assemble one. Instead, it initializes the element-level data that the GPU kernels need.

- Memory Allocation on GPU: The CPU allocates device memory for nodal coordinates, displacements, velocities, accelerations, element connectivity, and material properties.

- Data Transfer: Transfer the initialized data structures from the host to the GPU's global memory.

3. GPU Kernel Execution for Explicit Time Integration: The core computation is broken down into several data-parallel kernels that are executed on the GPU. The following sequence is performed for each time step [28]:

- Internal Force Calculation: A kernel is launched with one thread per element (or per integration point) to calculate the internal forces. This uses the Total Lagrangian formulation to compute stresses and element forces based on the current deformation.

- Contact Force Calculation: If applicable, a separate kernel handles contact conditions (e.g., fluid-terrain interaction).

- Nodal Force Assembly: The element internal forces are assembled into a global force vector. This step requires careful parallel reduction and potentially atomic operations to handle node collisions.

- Time Integration: A kernel with one thread per node updates the nodal accelerations, velocities, and displacements using an explicit central difference rule:

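$$
\begin{aligned}
a(t) &= M^{-1}\left(F_{\text{ext}} - F_{\text{int}}\right) \\
v(t + \Delta t/2) &= v(t - \Delta t/2) + a(t)\,\Delta t \\
u(t + \Delta t) &= u(t) + v(t + \Delta t/2)\,\Delta t
\end{aligned}
$$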

- Stability Check: The CPU or a GPU kernel calculates a stable time step for the next iteration based on the Courant–Friedrichs–Lewy (CFL) condition.
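The time-stepping sequence above can be illustrated on a linear toy problem. The sketch below is not the Total Lagrangian formulation itself. It assumes a 1D chain of equal lumped masses joined by linear springs, fixed at one end and loaded at the other, and all names are hypothetical. What it shares with the protocol is the structure of each step: element forces, nodal assembly, a central difference update with a diagonal mass matrix, and a time step chosen from a stability limit.

```python
def internal_forces(u, k):
    """Assemble nodal internal forces from a chain of linear springs."""
    f = [0.0] * len(u)
    for e in range(len(u) - 1):        # one GPU thread per element
        force = k * (u[e + 1] - u[e])  # axial force in element e
        f[e] -= force                  # scatter to both nodes; on a GPU this
        f[e + 1] += force              # needs atomics or a reduction
    return f


def stable_dt(k, m, safety=0.9):
    """Time step below the central difference limit dt < 2 / omega_max.

    For this uniform chain omega_max <= 2 * sqrt(k / m), so sqrt(m / k)
    is a safe bound. Nonlinear problems would recompute it every step.
    """
    return safety * (m / k) ** 0.5


def simulate(n_nodes=11, k=100.0, m=1.0, f_tip=1.0, n_steps=2000):
    """Explicit central difference integration of a chain fixed at node 0."""
    dt = stable_dt(k, m)
    u = [0.0] * n_nodes
    v_half = [0.0] * n_nodes           # velocity at t - dt/2
    f_ext = [0.0] * (n_nodes - 1) + [f_tip]
    tip_history = []
    for _ in range(n_steps):
        f_int = internal_forces(u, k)
        for i in range(1, n_nodes):    # one GPU thread per node; node 0 fixed
            a = (f_ext[i] - f_int[i]) / m  # lumped mass: M^-1 is a division
            v_half[i] += a * dt            # v(t + dt/2)
            u[i] += v_half[i] * dt         # u(t + dt)
        tip_history.append(u[-1])
    return tip_history


tip = simulate()
static_tip = 1.0 * 10 / 100.0  # f_tip * (number of springs) / k
print(f"static tip displacement {static_tip}, peak dynamic {max(tip):.3f}")
```

Without damping the tip keeps oscillating about the static displacement. This is why the protocol's steady-state detection relies on monitoring kinetic energy or displacement changes, which presupposes that the physical model dissipates energy.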

4. Output and Steady-State Detection:

- Solution Output: At specified intervals, nodal displacements and other result variables are transferred back to the CPU for storage and visualization.

- Steady-State Detection: The CPU monitors the kinetic energy of the system or the change in displacements between time steps to determine when a steady-state solution (e.g., the final floodwater extent) has been reached.

The Scientist's Toolkit: Essential Research Reagents & Computing Solutions

The "reagents" for computational research are the software tools, hardware, and libraries that enable GPU-accelerated environmental modeling.

Table 2: Key Research Reagents for GPU-Accelerated Environmental Modeling

| Category | Item | Function in Research |

|---|---|---|

| Programming Models & APIs | NVIDIA CUDA [24] [28] | A parallel computing platform and programming model that enables developers to use C/C++ to write programs that execute on NVIDIA GPUs. |

| Programming Models & APIs | Apple Metal [26] | A low-level graphics and compute API for iOS, macOS, and other Apple devices, used for GPU acceleration on Apple hardware. |

| Software Libraries & Frameworks | NVIDIA Omniverse [27] | A platform for building and connecting 3D tools and applications, used for creating digital twins of environmental systems like oceans. |

| Software Libraries & Frameworks | NVIDIA NIM [27] | Microservices for deploying AI models, used to containerize and run specialized models for weather prediction and flood risk. |

| Hardware & Infrastructure | GPU Clusters (HPC) [29] [11] | High-performance computing systems integrating multiple GPU servers; provide the raw computational power for large-scale simulations. |

| Hardware & Infrastructure | NVIDIA Jetson [27] | A platform for edge AI and computing, used for real-time environmental monitoring like wildfire detection from CubeSats. |

| Specialized Algorithms | Total Lagrangian Explicit Dynamics [28] | A finite element formulation ideal for GPU implementation, efficient for solving non-linear, dynamic problems like brain shift or fluid flow. |

| Specialized Algorithms | Spherical Fourier Neural Operators (SFNO) [27] | A type of AI model used for accelerating global weather and climate simulations, achieving high resolution and accuracy. |

The scope of environmental applications demanding GPU-level performance is vast and critical for advancing scientific understanding and developing effective mitigation strategies. As demonstrated, domains including flood modeling, atmospheric dispersion, climate prediction, and ecological simulation achieve order-of-magnitude speedups through GPU acceleration. This enables higher-resolution models, more accurate predictions, and ultimately, more reliable scientific insights. The provided protocols and toolkit offer a foundation for researchers to leverage these powerful computational techniques, pushing the boundaries of what is possible in environmental science.

Implementation Strategies and Environmental Use Cases for GPU-FEA

In large-scale environmental simulations, the finite element method (FEM) is frequently limited by memory bandwidth rather than by arithmetic throughput. Conventional matrix-based solvers, which explicitly form and store the global stiffness matrix, are typically the primary computational bottleneck, often consuming over 90% of the total runtime [30]. For environmental research involving complex, multi-physics problems such as geotechnical modeling, subsurface flow, and fluid-structure interaction, these limitations restrict model fidelity and real-world applicability.

Matrix-free solvers and Element-by-Element (EbE) strategies address this problem directly. They circumvent the memory bottleneck by computing the action of the stiffness matrix on a vector from element-level operations, without ever assembling the global matrix [31] [32]. Combined with the massively parallel architecture of Graphics Processing Units (GPUs), these algorithms allow researchers to solve larger, more complex environmental problems more efficiently.

Core Algorithmic Principles

The Matrix-Free Paradigm

The fundamental principle behind matrix-free solvers is a reformulation of the computational workflow in iterative linear solvers. In traditional FEM, the global stiffness matrix [K] is explicitly assembled and stored in sparse format, and the solver performs sparse matrix-vector products (SpMV) during each iteration. In contrast, matrix-free methods recognize that for iterative solvers like the Conjugate Gradient (CG) method, what is fundamentally required is not the matrix itself but its action on a vector—the result of [K]{u} [31].
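For reference, the SpMV that a matrix-based solver repeats in every iteration can be sketched as follows. This is a minimal pure-Python illustration that assumes the common compressed sparse row (CSR) layout; the function name `csr_spmv` and the small example matrix are illustrative and are not taken from [31].

```python
def csr_spmv(row_ptr, col_idx, values, x):
    """Return y = A @ x for a matrix A stored in CSR form."""
    y = [0.0] * (len(row_ptr) - 1)
    for i in range(len(y)):
        acc = 0.0
        # Each stored nonzero needs one value load and one index load.
        for k in range(row_ptr[i], row_ptr[i + 1]):
            acc += values[k] * x[col_idx[k]]
        y[i] = acc
    return y


# Assembled stiffness matrix of a two-element 1D bar with unit stiffness:
# [[ 1, -1,  0],
#  [-1,  2, -1],
#  [ 0, -1,  1]]
row_ptr = [0, 2, 5, 7]
col_idx = [0, 1, 0, 1, 2, 1, 2]
values = [1.0, -1.0, -1.0, 2.0, -1.0, -1.0, 1.0]

y = csr_spmv(row_ptr, col_idx, values, [0.0, 1.0, 3.0])
```

Every nonzero contributes only two floating-point operations, yet it requires reading a value and a column index from memory, which is why this kernel tends to be limited by memory bandwidth.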

A matrix-free implementation replaces the single, large SpMV operation with the accumulation of many small, dense matrix-vector products that use local elemental matrices. The product of the global matrix [K] with a global vector {u} is computed as:

$$[K]\{u\} = \sum_{e} [G_e]^{T}\,[k_e]\,\{u_e\}, \qquad \{u_e\} = [G_e]\{u\}$$

where [k_e] is the elemental matrix, [G_e] is the gather matrix that relates local degrees of freedom to global ones, and {u_e} is the local vector of elemental degrees of freedom [31]. This approach eliminates the need to store the large, sparse global matrix, dramatically reducing memory consumption and memory bandwidth requirements.
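The element-level product described above can be sketched in a few lines. The example below is a serial, pure-Python illustration on a 1D chain of two-node bar elements; it assumes that all elements share one elemental matrix, and the names `matrix_free_apply` and `assemble_dense` are hypothetical. The dense assembly is included only to check the result and is exactly what a matrix-free solver avoids.

```python
def matrix_free_apply(connectivity, k_e, u):
    """Return K @ u by accumulating element products; K is never formed."""
    y = [0.0] * len(u)
    for dofs in connectivity:
        n = len(dofs)
        u_e = [u[d] for d in dofs]                      # gather local DOFs
        f_e = [sum(k_e[a][b] * u_e[b] for b in range(n))
               for a in range(n)]                       # small dense product
        for a, d in enumerate(dofs):                    # scatter-add to global
            y[d] += f_e[a]
    return y


def assemble_dense(connectivity, k_e, n_dofs):
    """Reference only: explicitly assemble the global matrix."""
    K = [[0.0] * n_dofs for _ in range(n_dofs)]
    for dofs in connectivity:
        for a, i in enumerate(dofs):
            for b, j in enumerate(dofs):
                K[i][j] += k_e[a][b]
    return K


# Three unit-stiffness bar elements connecting four nodes (one DOF per node).
connectivity = [(0, 1), (1, 2), (2, 3)]
k_e = [[1.0, -1.0], [-1.0, 1.0]]
u = [0.0, 1.0, 3.0, 6.0]

y_free = matrix_free_apply(connectivity, k_e, u)
K = assemble_dense(connectivity, k_e, len(u))
y_ref = [sum(K[i][j] * u[j] for j in range(len(u))) for i in range(len(u))]
```

Both paths give the same vector, but the matrix-free path stores only the connectivity and one 2×2 elemental matrix.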

Element-by-Element (EbE) Strategy

The EbE technique is a specific implementation of the matrix-free paradigm that decouples element solutions by directly solving elemental equations instead of the global system [33]. In the context of smoothed finite element methods (S-FEM), the EbE approach can be extended to smoothing domains, leading to a Smoothing-Domain-by-Smoothing-Domain (SDbSD) strategy [33].

For acoustic simulations using edge-based smoothed FEM (ES-FEM), the EbE strategy transforms the system into a form in which operations are performed at the smoothing-domain level. In that form, [K̄(e)], [M(e)], and [C(e)] denote the smoothing-domain stiffness, mass, and damping matrices, respectively, and {P(e)} is the smoothing-domain pressure vector [33]. This formulation maintains the accuracy benefits of ES-FEM while enabling efficient parallel implementation.

GPU Implementation Frameworks

Parallelization Strategies

The effectiveness of matrix-free and EbE methods hinges on their implementation on parallel architectures, particularly GPUs. Two primary thread assignment strategies have emerged for organizing parallel computation:

- Node-based assignment: Threads are assigned to nodes, with each thread responsible for computations associated with a specific node [31]. This approach often requires atomic operations to avoid race conditions when multiple threads attempt to write to the same global memory location simultaneously.

- Degree-of-Freedom (DOF)-based assignment: Threads are assigned to individual degrees of freedom, providing finer-grained parallelism and potentially better load balancing [31]. This strategy can reduce thread divergence and minimize the need for atomic operations.
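The difference in granularity between the two assignments can be illustrated with a serial emulation in which each inner loop iteration stands in for one GPU thread. This is a sketch under stated assumptions: the mapping of one work item per (element, local node) or per (element, local DOF) is one plausible reading of the strategies above, and the function names are hypothetical. In this emulation both variants accumulate into shared DOFs, so both would need conflict handling (for example atomic adds) at the DOFs reported by `shared_dofs`; the conflict-handling details of [31] are not reproduced here.

```python
def local_row(k_e, u_e, a):
    """Row a of the dense element product k_e @ u_e."""
    return sum(k_e[a][b] * u_e[b] for b in range(len(u_e)))


def apply_node_based(elements, k_e, u, dofs_per_node):
    """One work item per (element, local node); it handles every DOF of that node."""
    y = [0.0] * len(u)
    for dofs in elements:
        u_e = [u[d] for d in dofs]
        for node in range(len(dofs) // dofs_per_node):   # one GPU thread each
            for c in range(dofs_per_node):
                a = node * dofs_per_node + c
                y[dofs[a]] += local_row(k_e, u_e, a)     # atomic add on a GPU
    return y


def apply_dof_based(elements, k_e, u):
    """One work item per (element, local DOF); each computes a single row."""
    y = [0.0] * len(u)
    for dofs in elements:
        u_e = [u[d] for d in dofs]
        for a in range(len(dofs)):                       # one GPU thread each
            y[dofs[a]] += local_row(k_e, u_e, a)         # atomic add on a GPU
    return y


def shared_dofs(elements):
    """Global DOFs written by more than one element (potential write conflicts)."""
    counts = {}
    for dofs in elements:
        for d in dofs:
            counts[d] = counts.get(d, 0) + 1
    return sorted(d for d, n in counts.items() if n > 1)


# Two 2-node elements with 2 DOFs per node; they share global DOFs 2 and 3.
elements = [(0, 1, 2, 3), (2, 3, 4, 5)]
k_e = [[2.0, 0.0, -2.0, 0.0],
       [0.0, 1.0, 0.0, -1.0],
       [-2.0, 0.0, 2.0, 0.0],
       [0.0, -1.0, 0.0, 1.0]]
u = [0.0, 0.0, 1.0, 2.0, 3.0, 5.0]

y_node = apply_node_based(elements, k_e, u, dofs_per_node=2)
y_dof = apply_dof_based(elements, k_e, u)
conflicts = shared_dofs(elements)
```

The two variants produce the same vector; what changes is how much work each thread performs and therefore how evenly the load spreads across threads.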

For elastoplastic problems where material states vary spatially, advanced implementation strategies are required. One effective approach uses a single elemental matrix for all elastic elements while maintaining individual matrices for plastic regions, with data restructuring and index lists to minimize thread divergence [31].
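A minimal sketch of the index-list idea follows, assuming a 1D bar chain in which plastic elements simply carry a reduced tangent stiffness. The names `build_index_lists` and `apply_by_state` and the dictionary of per-element plastic matrices are illustrative choices, not the data layout of [31]. Grouping elements by state means that, on a GPU, neighbouring threads would follow the same code path.

```python
def build_index_lists(is_plastic):
    """Split element indices into elastic and plastic lists."""
    elastic = [e for e, flag in enumerate(is_plastic) if not flag]
    plastic = [e for e, flag in enumerate(is_plastic) if flag]
    return elastic, plastic


def accumulate(y, dofs, k_e, u):
    """Add one element's contribution k_e @ u_e into the global vector y."""
    u_e = [u[d] for d in dofs]
    for a, d in enumerate(dofs):
        y[d] += sum(k_e[a][b] * u_e[b] for b in range(len(dofs)))


def apply_by_state(elements, is_plastic, k_elastic, k_plastic, u):
    """Matrix-free product with one shared elastic matrix and per-element plastic ones."""
    elastic, plastic = build_index_lists(is_plastic)
    y = [0.0] * len(u)
    for e in elastic:                  # uniform work: same matrix for every element
        accumulate(y, elements[e], k_elastic, u)
    for e in plastic:                  # separate pass: individual tangent matrices
        accumulate(y, elements[e], k_plastic[e], u)
    return y


elements = [(0, 1), (1, 2), (2, 3)]
is_plastic = [False, True, False]
k_elastic = [[1.0, -1.0], [-1.0, 1.0]]
k_plastic = {1: [[0.5, -0.5], [-0.5, 0.5]]}   # hypothetical softened tangent
u = [0.0, 1.0, 3.0, 6.0]

y = apply_by_state(elements, is_plastic, k_elastic, k_plastic, u)
```

Only the plastic elements need their own stored matrices, so memory use grows with the size of the plastic zone rather than with the size of the mesh.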

Memory Access Optimization

Efficient memory access patterns are critical for GPU performance. Matrix-free methods naturally reduce dependency on memory and avoid performance-detrimental sparse storage formats [31]. For structured meshes with congruent elements (e.g., voxel-based meshes), additional optimizations are possible by leveraging identical elemental tangent matrices across all elements [31].

Caching strategies play a crucial role in balancing computation and memory access. Research on finite-strain elasticity has explored various caching levels—from storing only scalar quantities to caching full fourth-order tensors—to optimize performance based on specific hardware capabilities and problem characteristics [32].

Performance Analysis and Benchmarking

Quantitative Performance Gains

Recent implementations of matrix-free solvers on GPU architectures have demonstrated remarkable speedups compared to traditional approaches. The table below summarizes performance gains reported in recent studies:

Table 1: Performance comparison of solver implementations

| Solver Type | Hardware Configuration | Problem Scale | Speedup Factor | Application Domain |
|---|---|---|---|---|
| GPU AMG Solver [30] | AMD Ryzen 9 5950X + RTX 3090 | 2M+ elements | 18× | Geotechnical Analysis |
| Matrix-Free CG [31] | NVIDIA GPU | Large-scale 3D | 26× | Elastoplasticity |
| CPU AMG Solver [30] | High-performance Server | Large-scale models | 12× | Geotechnical Analysis |
| GPU Direct Solver [30] | Consumer GPU | Small-medium models | 3× | General FEA |

The performance advantages are particularly pronounced for large-scale problems. In geotechnical applications, the RS3 software implementation demonstrated that GPU-accelerated algebraic multigrid (AMG) preconditioners can achieve up to 18× faster computation times compared to previous solver technologies, even on consumer-grade hardware [30].

Comparative Analysis of Solver Approaches

Table 2: Characteristics of different solver paradigms

| Characteristic | Direct Solvers | Traditional Iterative | Matrix-Free/EbE |
|---|---|---|---|
| Memory Consumption | High | Moderate | Low |
| Parallel Scalability | Limited | Good | Excellent |
| Implementation Complexity | Low | Moderate | High |
| Robustness | High | Variable | Model-Dependent |
| Hardware Utilization | CPU-intensive | Better CPU utilization | Optimal for GPU |
| Suitable Problem Size | Small-medium | Medium-large | Very large |

The matrix-free approach's performance advantage stems from its higher arithmetic intensity and reduced memory bandwidth requirements. Studies estimate that traditional iterative sparse linear solvers saturate memory bandwidth at less than 2% of a modern CPU's theoretical arithmetic computing power [32]. Matrix-free methods address this imbalance by increasing computational workload per data element moved, thereby better utilizing available computational resources.
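A back-of-the-envelope roofline calculation shows why a figure of this order is plausible. The sketch below counts only matrix traffic for a CSR SpMV (an 8-byte value and a 4-byte column index per nonzero, for two floating-point operations). The machine figures of 1 TFLOP/s and 100 GB/s are hypothetical round numbers chosen for illustration; they are not measurements from [32].

```python
def csr_spmv_intensity(value_bytes=8, index_bytes=4):
    """FLOPs per byte for CSR SpMV, counting only matrix traffic."""
    flops_per_nonzero = 2                      # one multiply and one add
    bytes_per_nonzero = value_bytes + index_bytes
    return flops_per_nonzero / bytes_per_nonzero


def bandwidth_bound_fraction(intensity, peak_flops, peak_bandwidth):
    """Fraction of peak FLOP rate attainable under a simple roofline model."""
    attainable = min(peak_flops, intensity * peak_bandwidth)
    return attainable / peak_flops


intensity = csr_spmv_intensity()               # 2 / 12, about 0.17 FLOP per byte

# Hypothetical machine: 1 TFLOP/s peak arithmetic, 100 GB/s memory bandwidth.
fraction = bandwidth_bound_fraction(intensity, 1.0e12, 1.0e11)
```

Under these assumptions the kernel reaches roughly 1.7% of peak arithmetic throughput. Raising the number of operations performed per byte loaded, as matrix-free evaluation does, is the only way to move that ceiling without faster memory.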

Application Protocols for Environmental Research

Protocol 1: Matrix-Free Implementation for Elastoplasticity

Application: Modeling soil stability, landslide simulation, and foundation settlement in geotechnical environmental engineering.

Objective: Implement an efficient matrix-free solver for elastoplastic problems commonly encountered in geotechnical environmental applications.

Materials and Software:

- CUDA-enabled NVIDIA GPU (e.g., RTX 3090, A30)

- Finite element library with matrix-free capabilities (e.g., deal.II)

- AceGen for automatic differentiation and code generation [32]

Methodology:

- Problem Formulation: Apply the Newton-Raphson method to solve the nonlinear governing equations of elastoplasticity at each incremental load step.

- Elemental Matrix Computation: Compute elemental tangent matrices considering the material state (elastic or plastic). For J2 plasticity, this involves evaluating the consistency condition and plastic flow direction [31].

- State-Based Processing: Implement a single kernel strategy that handles both elastic and plastic elements. Use index lists to separate elements by state (elastic/plastic) to avoid thread divergence [31].

- Matrix-Vector Product: For each element, compute the local matrix-vector product using either node-based or DOF-based thread assignment.

- Global Assembly: Perform scatter operations to assemble local contributions into the global residual vector without storing the global matrix.

- Solution Update: Apply the preconditioned conjugate gradient method to solve the linear system and update the displacement field.
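The linear solve inside one Newton-Raphson step can be sketched as a preconditioned conjugate gradient loop that only ever calls a matrix-free operator. The example below is a serial, pure-Python illustration on a 1D bar chain. It assumes a Jacobi (diagonal) preconditioner, chosen here because its diagonal can be accumulated element by element; the protocol above does not prescribe a particular preconditioner, and all function names are hypothetical.

```python
def dot(a, b):
    return sum(ai * bi for ai, bi in zip(a, b))


def apply_K(elements, k_e, u, fixed):
    """Matrix-free K @ u with identity rows for constrained DOFs."""
    masked = [0.0 if i in fixed else ui for i, ui in enumerate(u)]
    y = [0.0] * len(u)
    for dofs in elements:
        u_e = [masked[d] for d in dofs]                   # gather
        for a, d in enumerate(dofs):                      # local product + scatter
            y[d] += sum(k_e[a][b] * u_e[b] for b in range(len(dofs)))
    for i in fixed:
        y[i] = u[i]
    return y


def jacobi_diagonal(elements, k_e, n_dofs, fixed):
    """Diagonal of K accumulated element by element, without assembling K."""
    diag = [0.0] * n_dofs
    for dofs in elements:
        for a, d in enumerate(dofs):
            diag[d] += k_e[a][a]
    for i in fixed:
        diag[i] = 1.0
    return diag


def pcg(apply, diag, f, tol=1e-10, max_iter=200):
    """Jacobi-preconditioned conjugate gradient using only operator applications."""
    x = [0.0] * len(f)
    r = list(f)
    z = [ri / di for ri, di in zip(r, diag)]
    p = list(z)
    rz = dot(r, z)
    for _ in range(max_iter):
        if dot(r, r) ** 0.5 <= tol:
            break
        q = apply(p)
        alpha = rz / dot(p, q)
        x = [xi + alpha * pi for xi, pi in zip(x, p)]
        r = [ri - alpha * qi for ri, qi in zip(r, q)]
        z = [ri / di for ri, di in zip(r, diag)]
        rz_new = dot(r, z)
        p = [zi + (rz_new / rz) * pi for zi, pi in zip(z, p)]
        rz = rz_new
    return x


# Bar of three unit-stiffness elements, fixed at node 0, unit load at node 3.
elements = [(0, 1), (1, 2), (2, 3)]
k_e = [[1.0, -1.0], [-1.0, 1.0]]
fixed = {0}
f = [0.0, 0.0, 0.0, 1.0]

diag = jacobi_diagonal(elements, k_e, len(f), fixed)
u = pcg(lambda v: apply_K(elements, k_e, v, fixed), diag, f)
```

For this small problem the displacements grow linearly along the bar. In an elastoplastic analysis the same loop would be called once per Newton-Raphson iteration with updated tangent matrices.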

Validation: Compare results with conventional matrix-based solvers for benchmark problems. Verify accuracy by checking equilibrium convergence and plastic zone distribution.

Protocol 2: EbE with ES-FEM for Environmental Acoustics

Application: Noise propagation modeling in environmental impact assessments, underwater acoustics, and urban noise pollution studies.

Objective: Implement an EbE-based edge-based smoothed finite element method (ES-FEM) for efficient acoustic simulations on GPU platforms.

Materials and Software:

- CUDA programming environment

- ES-FEM formulation for acoustic waves

- Preconditioned iterative solver (e.g., FGMRES)

Methodology:

- Mesh Preparation and Smoothing Domain Construction: Generate the finite element mesh and create smoothing domains based on mesh edges using a semi-parallel construction strategy [33].

- SDbSD Parallel Strategy: Implement the Smoothing-Domain-by-Smoothing-Domain approach by extending the traditional EbE strategy to smoothing domains [33].

- Matrix-Free Evaluation: Compute the action of the smoothed stiffness and mass matrices on vectors directly from smoothing domain computations without global matrix assembly.

- GPU Memory Management: Store all data in a unified array to improve data reading/writing efficiency. Utilize shared memory to cache frequently accessed data [33].

- Iterative Solution: Apply a preconditioned iterative solver (e.g., FGMRES with AMG preconditioning) to solve the resulting linear system.

- Performance Optimization: Implement kernel fusion to merge multiple computation steps and reduce data transfer between CPU and GPU.
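The fusion step can be illustrated with a generic vector update followed by a norm, a pattern that appears in most Krylov solvers. This is a serial Python sketch in which each pass over the data stands in for one GPU kernel; the function names are hypothetical, and on a real GPU the accumulation of `norm_sq` would require a parallel reduction rather than a running sum.

```python
def axpy_then_norm_unfused(a, x, y):
    """Two passes: update y, then re-read the result to compute its squared norm."""
    y_new = [yi + a * xi for xi, yi in zip(x, y)]     # pass 1 (first kernel)
    norm_sq = sum(v * v for v in y_new)               # pass 2 (second kernel)
    return y_new, norm_sq


def axpy_then_norm_fused(a, x, y):
    """One pass: each entry is updated and squared while it is still in registers."""
    y_new = []
    norm_sq = 0.0
    for xi, yi in zip(x, y):
        v = yi + a * xi
        y_new.append(v)
        norm_sq += v * v
    return y_new, norm_sq


x = [1.0, 2.0, 3.0]
y = [0.5, -1.0, 2.0]

unfused = axpy_then_norm_unfused(2.0, x, y)
fused = axpy_then_norm_fused(2.0, x, y)
```

Both versions return the same values; the fused version reads the updated vector once instead of twice and needs one kernel launch instead of two.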

Validation: Assess numerical accuracy by comparing with analytical solutions for canonical problems. Evaluate computational efficiency by measuring speedup relative to CPU implementation.

The Scientist's Toolkit

Table 3: Essential research reagents and computational tools for matrix-free FEM

| Tool/Reagent | Function/Purpose | Implementation Example |
|---|---|---|
| AceGen | Automatic differentiation and code generation | Generates optimized quadrature-point routines for tangent evaluations [32] |
| deal.II Library | Finite element library with matrix-free support | Provides infrastructure for matrix-free operations on distributed meshes [32] |
| CUDA Platform | Parallel computing platform for GPU acceleration | Implements node-based or DOF-based thread assignments for matrix-free matrix-vector products [31] |
| AMG Preconditioner | Algebraic multigrid preconditioning for iterative methods | Accelerates convergence in FGMRES for ill-conditioned systems [30] |
| Smoothing Domain | Fundamental unit in ES-FEM for accuracy improvement | Basis for SDbSD parallel strategy in acoustic simulations [33] |
| Elimination Tree | Data structure for sparse direct solvers | Guides parallel factorization in hybrid CPU-GPU direct solvers [34] |

Workflow Visualization

[Figure: Matrix-Free FEM Workflow on GPU]

Matrix-free solvers and Element-by-Element strategies represent a fundamental shift in finite element computation, particularly for GPU-accelerated environmental simulations. By eliminating the memory bottleneck associated with global matrix storage and leveraging the fine-grained parallelism of GPU architectures, these approaches enable unprecedented scalability and performance. The integration of automatic differentiation tools like AceGen further enhances the practicality of these methods for complex environmental applications involving nonlinear material behavior.