Accelerating Biomedical Discovery: A Guide to Implementing Particle MCMC on GPU


Abstract

This article provides a comprehensive guide for researchers and drug development professionals on implementing Particle Markov Chain Monte Carlo (pMCMC) on Graphics Processing Units (GPUs). pMCMC is a powerful class of algorithms for Bayesian inference in complex models, such as state-space models common in pharmacology and neuroscience, but its high computational cost has historically limited its application. We explore the foundational principles of GPU parallelization for Monte Carlo methods, detail methodological implementations and real-world applications in drug discovery and clinical trial modeling, address key troubleshooting and optimization challenges, and present validation studies and comparative analyses of performance gains. By leveraging GPU acceleration, scientists can achieve speedups of 10 to over 1000 times, making previously intractable problems in uncertainty quantification and molecular analysis feasible and transforming the pace of biomedical research.

GPU and pMCMC Foundations: Core Concepts for Parallel Computation

Markov Chain Monte Carlo (MCMC) represents a class of algorithms designed to draw samples from probability distributions that are too complex for analytical study, especially in high-dimensional spaces [1]. In Bayesian statistics, MCMC methods enable the approximation of posterior distributions—the fundamental output of Bayesian inference that combines prior knowledge with observed data. However, traditional MCMC faces significant challenges with complex models, particularly those involving latent variables or requiring integration over unobserved states [2].

Particle Markov Chain Monte Carlo (pMCMC) emerges as a powerful extension that combines two sophisticated methodologies: MCMC and Sequential Monte Carlo (SMC) methods, also known as particle filters [2]. This hybrid approach addresses a critical limitation of standard MCMC when dealing with state-space models or other complex hierarchical structures where the likelihood function is computationally intractable. By using particle filters to provide unbiased estimates of the likelihood within a Metropolis-Hastings framework, pMCMC enables efficient sampling from the posterior distribution of parameters in models where direct likelihood calculation is infeasible [3].

The foundational pMCMC algorithm uses a single Markov chain, but recent work has produced variants such as the multiple-chain pMCMC algorithm (denoted ppMCMC), designed to improve sampling efficiency for multi-modal posteriors [2]. For drug development professionals, these methods offer particular promise in pharmacokinetic/pharmacodynamic modeling, therapeutic drug monitoring, and virtual bioequivalence assessments where complex models must be informed by limited clinical data [4] [5].

Core Concepts and Theoretical Framework

The Mathematical Foundation of pMCMC

The Particle MCMC framework operates at the intersection of two Monte Carlo methodologies. At its core, pMCMC employs sequential Monte Carlo to approximate the otherwise intractable likelihood of observed data given parameters in state-space models. This likelihood estimate is then utilized within a Metropolis-Hastings acceptance ratio to ensure the Markov chain converges to the true posterior distribution [2].

Formally, given a parameter vector $\theta$ and data $y_{1:n}$, the posterior distribution follows Bayes' theorem:

$$p(\theta \mid y_{1:n}) = \frac{p(y_{1:n} \mid \theta)\, p(\theta)}{p(y_{1:n})}$$

where $p(y_{1:n} \mid \theta)$ represents the likelihood, $p(\theta)$ the prior, and $p(y_{1:n})$ the marginal likelihood [4]. In state-space models, the likelihood $p(y_{1:n} \mid \theta)$ typically involves an intractable integral over latent states. Particle filters approximate this likelihood by propagating a set of particles through the state space using importance sampling and resampling techniques [3].
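As a sketch of how these pieces fit together, the equations below use notation that is assumed here rather than taken from the cited sources: $\mu$, $f$ and $g$ for the initial, transition and observation densities, $x_{0:n}$ for the latent states, $w_t^{(i)}$ for the unnormalized weight of particle $i$ at time $t$ in a bootstrap filter with $N$ particles that resamples at every step, and $q$ for the Metropolis-Hastings proposal density. Writing $y_{1:n}$ for the observations $y_1, \dots, y_n$, the intractable likelihood and its particle estimate are

$$p(y_{1:n} \mid \theta) = \int \mu(x_0 \mid \theta) \prod_{t=1}^{n} f(x_t \mid x_{t-1}, \theta)\, g(y_t \mid x_t, \theta)\, dx_{0:n}, \qquad \hat{p}(y_{1:n} \mid \theta) = \prod_{t=1}^{n} \frac{1}{N} \sum_{i=1}^{N} w_t^{(i)}.$$

The estimate then takes the place of the exact likelihood in the acceptance probability for a proposed value $\theta'$:

$$\alpha(\theta, \theta') = \min\left\{1,\ \frac{\hat{p}(y_{1:n} \mid \theta')\, p(\theta')\, q(\theta \mid \theta')}{\hat{p}(y_{1:n} \mid \theta)\, p(\theta)\, q(\theta' \mid \theta)}\right\}.$$

Here $\hat{p}(y_{1:n} \mid \theta)$ is the estimate stored when the current $\theta$ was accepted; it is not recomputed at each iteration. Because the estimate is unbiased, the resulting chain still targets the exact posterior.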

Addressing Multi-modality with Advanced pMCMC Variants

A significant challenge in complex model inference involves multi-modal posterior distributions where standard MCMC chains may become trapped in local modes. The ppMCMC algorithm addresses this limitation by employing multiple Markov chains instead of a single chain [2]. This multi-chain approach enables better exploration of the parameter space and more reliable characterization of multi-modal distributions, which commonly arise in mixture models or models with non-identifiable parameters.

Recent research has further extended these concepts through Metropolis-adjusted interacting particle samplers, which maintain an ensemble of particles that evolve according to coupled stochastic differential equations [6]. These interacting particle systems leverage information from the entire ensemble to infer properties of the target distribution, such as local curvature, enabling proposals that are better adapted to the target distribution.

Comparative Analysis of pMCMC Methods

Table 1: Comparison of Key pMCMC Methods and Their Characteristics

| Method | Key Mechanism | Target Applications | Strengths | Limitations |
|---|---|---|---|---|
| Standard pMCMC | Single Markov chain with particle filter likelihood estimation | State-space models with moderate complexity | Theoretical guarantees of convergence; Reliable for unimodal posteriors | Limited efficiency for multi-modal distributions; Computational cost per iteration |
| ppMCMC | Multiple Markov chains for enhanced exploration | Complex multi-modal posteriors; Multi-level hierarchical models | 1.96x higher sampling efficiency vs pMCMC; Better mode exploration | Increased memory requirements; More complex implementation |
| Metropolis-Adjusted Interacting Particle Samplers | Ensemble of particles with coupled dynamics; Metropolization of proposals | High-dimensional inference problems; Distributions with complex correlations | Innate parallelization; Adaptive proposals via ensemble information; Avoids local mode trapping | Potential bias from time discretization; Complex acceptance rules |

Table 2: Performance Benchmarks for pMCMC Hardware Implementations

| Hardware Platform | Algorithm | Speedup Factor (vs CPU; vs GPU) | Power Efficiency | Key Implementation Features |
|---|---|---|---|---|
| FPGA pMCMC | Standard pMCMC | 12.1x (CPU); 10.1x (GPU) | 53x more efficient | Particle and chain parallelism; Custom architectures |
| FPGA ppMCMC | Multiple-chain pMCMC | 34.9x (CPU); 41.8x (GPU) | 173x more efficient | Massive parallelization; Optimized for multi-modal posteriors |
| GPU SMC | Sequential Monte Carlo | Varies by application | Moderate | Batched, GPU-native framework; Data-driven covariance tuning |

Application Protocols in Drug Development

Protocol 1: Bayesian Parameter Estimation for Pharmacokinetic Models

Background and Purpose: Therapeutic drug monitoring (TDM) represents a critical application of Bayesian inference in clinical pharmacology, where patient-specific data combines with population prior knowledge to enable model-informed precision dosing [4]. This protocol details the application of pMCMC for estimating individual pharmacokinetic parameters, overcoming limitations of traditional maximum a posteriori (MAP) estimation that provides only point estimates without uncertainty quantification.

Materials and Reagents:

- Patient biomarker measurements (e.g., drug concentrations, neutrophil counts)

- Population pharmacokinetic model structure

- Prior distributions for pharmacokinetic parameters

- Computational environment with pMCMC implementation

Experimental Procedure:

- Model Specification: Define the structural pharmacokinetic model comprising system equations: dx/dt(t) = f(x(t);θ,u), x(t₀) = x₀(θ), where x represents state variables (e.g., drug concentrations), θ denotes individual parameters, and u defines inputs (e.g., dose regimen) [4].

- Observation Model: Establish the relationship between observed quantities and model states: h(t) = h(x(t),θ), specifying how biomarker measurements (e.g., plasma drug concentrations) relate to the underlying system states [4].

- Statistical Model: Define the probabilistic relationship accounting for measurement error and model misspecification: Yⱼ|Θ=θ ~ p(·|θ;hⱼ(θ),Σ), j=1,...,n, specifying the distribution of observations given model predictions [4].

- Prior Definition: Formulate prior distributions based on population studies: Θ ~ pΘ(·;θTV(cov),Ω), where θTV represents typical parameter values potentially dependent on covariates, and Ω captures interindividual variability [4].

- pMCMC Implementation: Configure the pMCMC algorithm with the following specifications:

  - Employ a particle filter with 100-500 particles to approximate the likelihood

  - Utilize an adaptive proposal mechanism for parameter updates

  - Run multiple chains for robustness assessment (ppMCMC variant)

  - Implement on GPU hardware for computational efficiency

- Convergence Assessment: Monitor chain convergence using standard diagnostics (Gelman-Rubin statistic, effective sample size) and validate predictive performance against held-out data.
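The Gelman-Rubin diagnostic mentioned in the convergence step can be sketched as follows. This is the classic (non-split) form for a single scalar parameter; the function name `gelman_rubin` and the synthetic chains are illustrative assumptions, not part of the cited protocol, and a production analysis would more likely rely on an established diagnostics library.

```python
import numpy as np

def gelman_rubin(chains):
    """Classic (non-split) R-hat for one scalar parameter.

    chains: array of shape (n_chains, n_draws), burn-in already removed.
    """
    chains = np.asarray(chains, dtype=float)
    n_chains, n_draws = chains.shape
    chain_means = chains.mean(axis=1)
    between = n_draws * chain_means.var(ddof=1)      # between-chain variance B
    within = chains.var(axis=1, ddof=1).mean()       # within-chain variance W
    pooled = (n_draws - 1) / n_draws * within + between / n_draws
    return float(np.sqrt(pooled / within))

rng = np.random.default_rng(0)
# Four synthetic chains that agree with each other.
mixed = rng.normal(0.0, 1.0, size=(4, 2000))
# The same chains, but two of them sit in a different mode (shifted by 3).
stuck = mixed + np.array([[0.0], [0.0], [3.0], [3.0]])

rhat_mixed = gelman_rubin(mixed)
rhat_stuck = gelman_rubin(stuck)
print(f"R-hat, well-mixed chains: {rhat_mixed:.3f}")
print(f"R-hat, chains in different modes: {rhat_stuck:.3f}")
```

Values close to 1 are consistent with convergence, whereas chains stuck in different modes push the statistic well above 1. That is the situation the multi-chain ppMCMC variant is meant to expose.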

Validation and Interpretation: The output provides a full posterior distribution for individual parameters, enabling computation of credible intervals for key therapeutic indices such as AUC (area under the concentration-time curve) and Cmax (peak concentration). This comprehensive uncertainty quantification supports more informed dosing decisions by characterizing risks of subtherapeutic exposure or toxicity [4].
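To make the credible-interval step concrete, the sketch below assumes a one-compartment model with an intravenous bolus dose, for which AUC = dose / CL and Cmax = dose / V. The model choice, the dose, the 80 mg·h/L exposure threshold, and the synthetic "posterior draws" of clearance and volume are all hypothetical stand-ins for real pMCMC output.

```python
import numpy as np

rng = np.random.default_rng(1)
dose_mg = 500.0

# Hypothetical posterior draws (stand-ins for pMCMC output).
clearance = rng.lognormal(mean=np.log(5.0), sigma=0.15, size=4000)   # L/h
volume = rng.lognormal(mean=np.log(40.0), sigma=0.10, size=4000)     # L

# One-compartment IV bolus: push every posterior draw through the model.
auc = dose_mg / clearance    # mg*h/L
cmax = dose_mg / volume      # mg/L

def credible_interval(draws, level=0.95):
    """Equal-tailed credible interval from posterior draws."""
    tail = (1.0 - level) / 2.0 * 100.0
    return np.percentile(draws, [tail, 100.0 - tail])

auc_ci = credible_interval(auc)
cmax_ci = credible_interval(cmax)
# Posterior probability of falling below a hypothetical exposure threshold.
p_subtherapeutic = float(np.mean(auc < 80.0))

print("AUC 95% CrI:", auc_ci)
print("Cmax 95% CrI:", cmax_ci)
print("P(AUC < 80):", p_subtherapeutic)
```

Because each draw is transformed individually, the intervals carry the full parameter uncertainty, which a MAP point estimate cannot provide.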

Protocol 2: Virtual Bioequivalence Assessment for Formulation Development

Background and Purpose: Virtual bioequivalence (VBE) assessment uses simulation models informed by in vitro data and abbreviated clinical trials to evaluate formulation performance without conducting full-scale comparative clinical trials [5]. This protocol outlines a Bayesian workflow incorporating pMCMC for model calibration and uncertainty propagation in VBE studies.

Materials and Reagents:

- In vitro dissolution and release data for test and reference formulations

- Abbreviated clinical trial data (if available)

- Population pharmacokinetic model for the reference product

- Prior information on critical quality attributes (CQAs)

Experimental Procedure:

- Model Structure Definition: Establish a mechanistic pharmacokinetic model structure capable of predicting key bioequivalence metrics (AUC, Cmax) for both test and reference formulations. For complex delivery systems (e.g., long-acting injectables), incorporate relevant release mechanisms [5].

- Bayesian Model Calibration: Implement pMCMC to calibrate model parameters using available data:

  - Use particle filters to handle latent variables related to drug release and absorption

  - Employ ppMCMC with multiple chains to ensure thorough exploration of parameter space

  - Incorporate prior distributions informed by in vitro characterization

- Posterior Predictive Assessment: Generate the joint posterior predictive distribution for AUC and Cmax ratios between test and reference formulations:

  - Execute Monte Carlo simulations from the posterior distribution

  - Compute probability of bioequivalence (AUC and Cmax ratios within 0.8-1.25 range)

  - Declare bioequivalence if probability exceeds 0.95 threshold [5]

- Safe-Space Analysis: Conduct sensitivity analysis to determine the range of formulation attributes (e.g., release rate) that maintain bioequivalence using the posterior distribution.
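The posterior predictive assessment in this procedure reduces to counting how often both ratios fall inside the acceptance range. A minimal sketch follows; the function name `prob_bioequivalence` and the synthetic ratio draws are assumptions for illustration, and in practice the draws would come from simulating the calibrated model.

```python
import numpy as np

def prob_bioequivalence(auc_ratio, cmax_ratio, lower=0.8, upper=1.25):
    """Fraction of joint draws with both test/reference ratios in [lower, upper]."""
    auc_ratio = np.asarray(auc_ratio, dtype=float)
    cmax_ratio = np.asarray(cmax_ratio, dtype=float)
    inside = (
        (auc_ratio >= lower) & (auc_ratio <= upper)
        & (cmax_ratio >= lower) & (cmax_ratio <= upper)
    )
    return float(inside.mean())

rng = np.random.default_rng(2)
# Hypothetical posterior predictive draws of the test/reference ratios.
auc_ratio = np.exp(rng.normal(0.02, 0.04, size=10_000))
cmax_ratio = np.exp(rng.normal(-0.03, 0.06, size=10_000))

p_be = prob_bioequivalence(auc_ratio, cmax_ratio)
declare_be = bool(p_be > 0.95)   # decision threshold from the protocol
print(f"P(bioequivalence) = {p_be:.4f}, declare BE: {declare_be}")
```

The draws are evaluated jointly, so correlation between the AUC and Cmax ratios is respected rather than assumed away.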

Implementation Considerations: The fully Bayesian workflow provides more precise decision criteria compared to frequentist approaches applied to virtual trials, better controlling both consumer and producer risk [5]. For nonlinear pharmacokinetic models, the posterior predictive distribution of Cmax and AUC differences must be estimated via simulation as closed-form solutions are generally unavailable.

Computational Implementation and Workflow

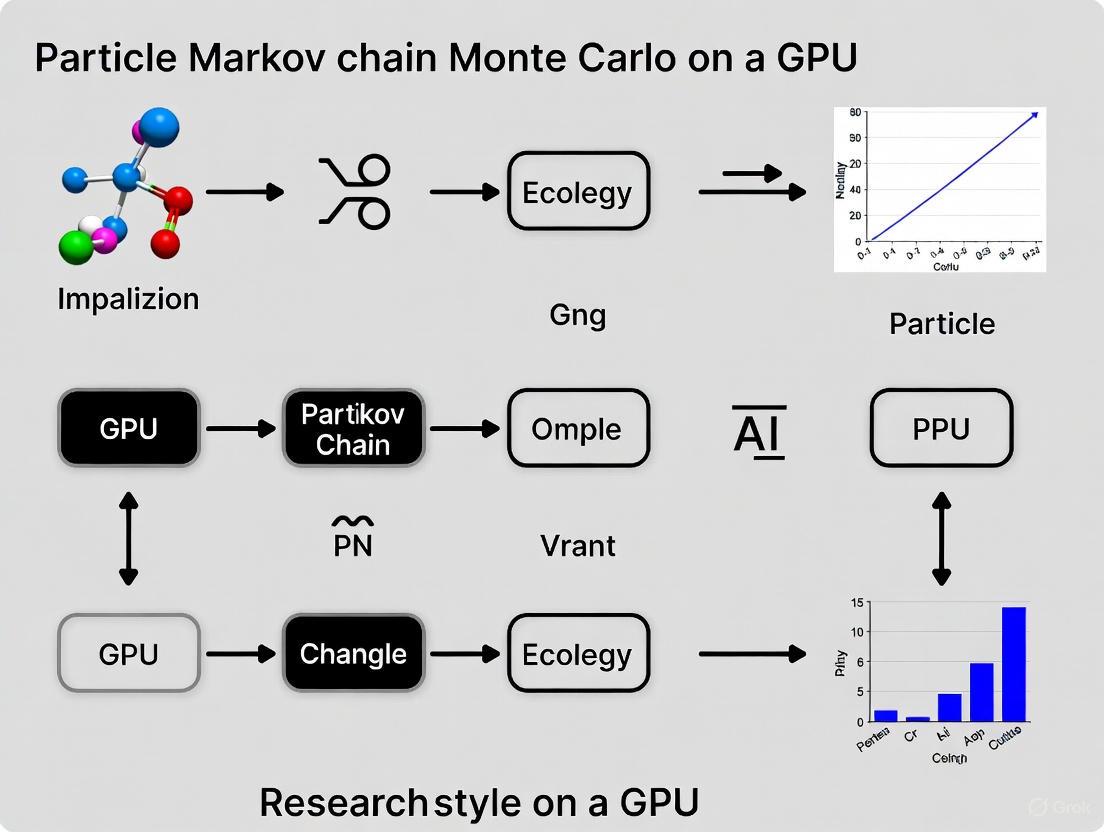

Figure 1: pMCMC Algorithm Workflow

Table 3: Essential Computational Tools for pMCMC Implementation

| Tool/Category | Specific Examples | Function in pMCMC Research | Implementation Considerations |
|---|---|---|---|
| Hardware Platforms | FPGA, GPU (NVIDIA CUDA) | Massive parallelization of particle operations | FPGA ppMCMC reports 34.9x speedup over CPU and 41.8x over GPU; GPU offers batched execution [2] [7] |
| Software Libraries | Blackjax, Custom pMCMC implementations | Pre-built components for particle filtering and MCMC | Look for GPU-native frameworks with data-driven covariance tuning [7] |
| Sampling Algorithms | Sequential Monte Carlo, Multiple-chain pMCMC | Core computational engines for posterior approximation | ppMCMC achieves 1.96x higher sampling efficiency for multi-modal posteriors [2] |
| Diagnostic Tools | Effective sample size, Gelman-Rubin statistic | Assessment of chain convergence and mixing | Particularly critical for multi-chain implementations and multi-modal problems |

Figure 2: Hardware Acceleration Architectures for pMCMC

Particle MCMC represents a significant advancement in Bayesian computation for complex models, particularly in pharmaceutical applications where mechanistic models must be informed by limited and noisy data. The integration of particle filters within MCMC frameworks enables inference in models where likelihood evaluation would otherwise be computationally prohibitive.

Future development directions include enhanced interaction between particle systems and Markov chains, improved adaptation mechanisms, and tighter integration with emerging hardware architectures. As GPU and FPGA technologies continue to evolve, along with algorithm refinements such as the multiple-chain ppMCMC and Metropolis-adjusted interacting particle samplers, pMCMC methodologies are poised to tackle increasingly complex inference problems in drug development, from virtual bioequivalence assessment to personalized therapeutic monitoring [2] [6] [5]. The massive parallelization capabilities of modern hardware accelerators, demonstrated by speedups of 34.9x over CPU and 41.8x over GPU implementations for multi-chain ppMCMC on FPGAs, will make previously intractable problems feasible [2].

Why GPUs? Understanding Parallel Architecture and Data-Throughput Advantages over CPUs

For researchers implementing advanced statistical methods like particle Markov chain Monte Carlo (pMCMC), the choice of computational hardware is not merely a practical detail but a foundational decision that dictates the feasibility and scale of their work. The central processing unit (CPU) has long been the universal workhorse for scientific computation. However, in the domain of large-scale parallel inference, the graphics processing unit (GPU) has emerged as a transformative technology. This document elucidates the architectural principles that give GPUs a profound advantage in data-throughput and parallel processing, with a specific focus on applications within Bayesian inference and pharmaceutical research. The core distinction lies in their design philosophy: CPUs are designed for low-latency execution of sequential tasks, while GPUs are engineered for high-throughput parallel processing [8]. This architectural divergence is the key to understanding orders-of-magnitude speedups in pMCMC and other Monte Carlo methods, enabling research previously considered computationally intractable.

Core Architectural Principles: CPUs vs. GPUs

Design Philosophy and Hardware Layout

The fundamental difference between a CPU and a GPU stems from their intended primary functions. A CPU is a sophisticated, general-purpose processor optimized for executing a sequence of operations (a single thread) as quickly as possible. It dedicates a significant portion of its transistor count to complex control logic and large cache memory to reduce the latency of individual operations. This makes it excellent for tasks that involve complex decision-making and frequent, diverse operations.

In contrast, a GPU is a specialized processor designed for data-parallel computation, where the same instruction is executed simultaneously on many data elements [9]. This Single Instruction, Multiple Data (SIMD) architecture allows the GPU to devote a much larger proportion of its transistors to Arithmetic Logic Units (ALUs)—the components that perform actual calculations—rather than to cache and flow control. Whereas a high-end CPU might have a few dozen cores, a modern GPU comprises thousands of smaller, efficient cores, creating a massively parallel processing engine [8] [9].

Table 1: Fundamental Architectural Comparison of CPU vs. GPU

| Feature | CPU (Central Processing Unit) | GPU (Graphics Processing Unit) |
|---|---|---|
| Primary Design Goal | Low-latency execution of sequential tasks | High-throughput parallel data processing |
| Core Philosophy | "Do one thing, very fast." | "Do many things, simultaneously." |
| Core Type & Count | A few complex, powerful cores (e.g., 8-64) | Thousands of smaller, efficient cores (e.g., 1,000-10,000+) |
| Memory Architecture | Large cache hierarchies to minimize latency for a few threads | High-bandwidth memory to feed data to thousands of threads |
| Ideal Workload | Task-parallel, complex control flow, diverse operations | Data-parallel, simple control flow, uniform operations |

The Throughput Advantage for Monte Carlo Methods

This architectural distinction directly translates to a performance advantage in simulation and sampling tasks. The primary metric for GPUs is throughput—the total amount of computation completed in a unit of time—rather than the latency of any single computation. This is perfectly suited for population-based Monte Carlo methods, including pMCMC and Sequential Monte Carlo (SMC) [9].

For instance, running multiple MCMC chains is a classic "embarrassingly parallel" problem at the chain level. With a CPU-based approach, chains are typically distributed across multiple CPU cores, with each core running a single chain. The GPU approach, facilitated by software frameworks like JAX and PyTorch, is fundamentally different. It uses a vectorized-map operation to run all chains in lockstep [8]. Every operation—such as calculating the log-density or its gradient for all chains—is performed simultaneously across all chains on the GPU's thousands of cores. This single instruction, multiple data (SIMD) execution is far more efficient than the asynchronous execution on a multi-core CPU [10]. This parallelization extends from running entire chains down to vectorizing calculations within a single model likelihood function, enabling efficient computation even for large datasets.
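The lockstep pattern described above can be illustrated without a GPU. The sketch below uses NumPy arrays with one entry per chain as a stand-in for device arrays; the standard normal target, the function names, and the tuning values are assumptions chosen for illustration. In JAX, a per-chain function would typically be batched with `vmap` instead of being written in batched form by hand.

```python
import numpy as np

def log_density(theta):
    """Log-density of a standard normal target, evaluated for all chains at once."""
    return -0.5 * theta ** 2

def batched_rw_metropolis(n_chains, n_steps, step_size, seed=0):
    """Random-walk Metropolis with every chain advanced in lockstep."""
    rng = np.random.default_rng(seed)
    theta = 3.0 * rng.normal(size=n_chains)          # overdispersed starting points
    logp = log_density(theta)
    draws = np.empty((n_steps, n_chains))
    for t in range(n_steps):
        # One batched proposal and one batched density evaluation per iteration.
        proposal = theta + step_size * rng.normal(size=n_chains)
        logp_prop = log_density(proposal)
        # Accept/reject is an elementwise select, with no per-chain branching.
        accept = np.log(rng.uniform(size=n_chains)) < logp_prop - logp
        theta = np.where(accept, proposal, theta)
        logp = np.where(accept, logp_prop, logp)
        draws[t] = theta
    return draws

draws = batched_rw_metropolis(n_chains=256, n_steps=2000, step_size=2.4)
pooled = draws[500:].ravel()                          # discard burn-in, pool chains
print(pooled.mean(), pooled.std())
```

Each iteration issues the same small set of array operations regardless of how many chains are running, which is what allows the chain dimension to be mapped onto parallel hardware.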

Diagram 1: Data-throughput paradigm of GPU vs. CPU.

Quantitative Performance Benchmarks

The theoretical architectural advantages of GPUs manifest as dramatic real-world speedups. The following table compiles documented performance gains across various scientific computing domains, particularly in Monte Carlo simulation and related inference tasks.

Table 2: Documented Performance Gains with GPU Acceleration

| Application Context | CPU Baseline | GPU Implementation | Speedup Factor | Key Enabling Factor |
|---|---|---|---|---|
| pMCMC for Genetics SSM [11] | Optimized CPU implementation | Custom FPGA/GPU Architecture | 42x | Massive parallelization of particle filter operations |
| COVID-19 SEIR Model Forecasting [12] | Parallelized CPU Implementation | Single GPU | 13x | Parallelization of likelihood calculations and chains |
| COVID-19 SEIR Model Forecasting [12] | Parallelized CPU Implementation | 8x GPU Cloud Server | 56.5x | Multi-GPU scaling and optimized data sharding |
| General Population-Based Monte Carlo [9] | Single-threaded CPU Code | GPU (NVIDIA GTX 280) | 35x to 500x | Data-parallel simulation of populations of samples |
| Tomography Simulation (CT, PET) [13] | Single-core CPU | Single GPU | 100x to 1000x | Parallel simulation of independent photon histories |

Beyond raw speed, GPU computations can also be significantly more energy-efficient. One study found that a custom FPGA/GPU architecture for pMCMC was up to 173x more power-efficient than a state-of-the-art CPU implementation, reducing the economic and environmental cost of large-scale computations [11].

Protocol for GPU-Accelerated Particle MCMC Implementation

This protocol outlines the key steps for implementing a pMCMC sampler designed to leverage GPU architecture, based on successful applications in state-space modeling [12] [11].

Experimental Workflow

The following diagram and steps describe the core workflow for a GPU-accelerated pMCMC experiment, from model definition to analysis.

Diagram 2: GPU-accelerated pMCMC workflow.

Step 1: Model Definition. Define the state-space model, including the transition density f(X_t | X_{t-1}, θ), observation density g(Y_t | X_t, θ), and prior distributions for parameters θ [11].

Step 2: Algorithm Selection. Select a pMCMC variant suitable for your posterior distribution. For multi-modal posteriors, a Population-based pMCMC (ppMCMC) that uses multiple interacting chains is recommended over single-chain pMCMC to improve mixing [11].

Step 3: Software & Hardware Setup.

- Software: Utilize a GPU-aware framework like JAX or PyTorch. These frameworks provide automatic differentiation (for gradient-based methods) and crucial vectorization primitives like vmap (vectorizing map) to parallelize computations across chains [8] [14].

- Hardware: Configure a server with one or more modern GPUs (e.g., NVIDIA V100, A100, or H100). For large problems, a multi-GPU setup is essential.

Step 4: Parallel Execution Loop. Implement the MCMC kernel to operate on multiple chains simultaneously:

- a. Vectorized Proposal: Draw proposed parameters for all N chains in a single, batched operation.

- b. Vectorized Particle Filtering: Execute N independent particle filters, one per chain, in parallel on the GPU. This is the most computationally intensive step and benefits most from parallelization. The key is to structure the particle filter code to process all chains and particles in a data-parallel manner [11].

- c. Parallel Likelihood Estimation: Calculate the unbiased likelihood estimates from the particle filters for all chains using SIMD operations.

- d. Vectorized Accept/Reject: Perform the Metropolis-Hastings accept/reject decision for all chains in a single, batched operation.
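Sub-steps a to d can be sketched end to end on a toy problem. Everything specific below is an assumption made for illustration: the AR(1)-plus-noise model, the known noise scales, the Uniform(-1, 1) prior on the coefficient `phi`, and all function names. NumPy arrays of shape (chains, particles) stand in for GPU arrays, so this shows the batching structure only; it is not a tuned GPU implementation.

```python
import numpy as np

SIGMA_X, SIGMA_Y = 1.0, 0.5   # assumed known noise scales of the toy model

def simulate(phi, n_obs, rng):
    """Simulate observations from the toy model, starting at X_0 = 0."""
    x, ys = 0.0, []
    for _ in range(n_obs):
        x = phi * x + SIGMA_X * rng.normal()
        ys.append(x + SIGMA_Y * rng.normal())
    return np.array(ys)

def batched_pf_loglik(phi, y, n_particles, rng):
    """Bootstrap particle filter log-likelihood estimates, one per chain."""
    n_chains = phi.shape[0]
    rows = np.arange(n_chains)[:, None]
    x = np.zeros((n_chains, n_particles))
    loglik = np.zeros(n_chains)
    for y_t in y:
        # Propagate every particle of every chain through the transition model.
        x = phi[:, None] * x + SIGMA_X * rng.normal(size=x.shape)
        # Gaussian observation log-density serves as the importance log-weight.
        logw = -0.5 * ((y_t - x) / SIGMA_Y) ** 2 - np.log(SIGMA_Y * np.sqrt(2 * np.pi))
        top = logw.max(axis=1, keepdims=True)
        w = np.exp(logw - top)
        loglik += top[:, 0] + np.log(w.mean(axis=1))
        # Multinomial resampling per chain by inverting the weight CDF.
        cdf = np.cumsum(w, axis=1)
        cdf = cdf / cdf[:, -1:]
        u = rng.uniform(size=x.shape)
        idx = (u[:, :, None] > cdf[:, None, :]).sum(axis=2)
        x = x[rows, np.minimum(idx, n_particles - 1)]
    return loglik

def batched_pmmh(y, n_chains, n_iters, n_particles, step_size, seed=0):
    rng = np.random.default_rng(seed)
    phi = rng.uniform(-0.9, 0.9, size=n_chains)
    loglik = batched_pf_loglik(phi, y, n_particles, rng)
    draws = np.empty((n_iters, n_chains))
    for i in range(n_iters):
        prop = phi + step_size * rng.normal(size=n_chains)           # (a)
        loglik_prop = batched_pf_loglik(prop, y, n_particles, rng)   # (b), (c)
        # Uniform(-1, 1) prior: proposals outside the support are rejected.
        log_ratio = np.where(np.abs(prop) < 1.0, loglik_prop - loglik, -np.inf)
        accept = np.log(rng.uniform(size=n_chains)) < log_ratio      # (d)
        phi = np.where(accept, prop, phi)
        loglik = np.where(accept, loglik_prop, loglik)  # keep the stored estimate
        draws[i] = phi
    return draws

y = simulate(phi=0.7, n_obs=40, rng=np.random.default_rng(42))
draws = batched_pmmh(y, n_chains=8, n_iters=300, n_particles=100, step_size=0.15)
print("posterior mean of phi:", draws[150:].mean())
```

The likelihood estimate of the current state is carried forward and never refreshed, which is what keeps the pseudo-marginal chain exact. The broadcast-based resampling uses memory proportional to chains × particles², so a real implementation would likely switch to a prefix-sum and search scheme for large particle counts.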

Step 5: Output & Analysis. After the sampling loop, transfer the final MCMC samples from GPU memory to CPU memory for convergence diagnostics (e.g., using R-hat statistics [10]) and subsequent analysis.

The Scientist's Toolkit: Key Research Reagent Solutions

Table 3: Essential Software and Hardware for GPU-Accelerated pMCMC Research

| Item Name | Type | Function/Benefit | Example/Note |
|---|---|---|---|
| JAX [8] [14] | Software Library | Provides NumPy-like API with automatic differentiation, just-in-time (JIT) compilation, and vectorization (vmap) for efficient execution on GPUs/TPUs. | Core library for writing GPU-agnostic code. |
| PyTorch [8] | Software Library | Deep learning framework with strong GPU support and an ecosystem for scientific computing; often used for gradient-based MCMC. | Alternative to JAX, widely adopted. |
| NVIDIA CUDA Toolkit | Software Platform | A development environment for creating high-performance, GPU-accelerated applications. | Provides low-level control for custom kernels. |
| BioNeMo Framework [15] | Domain-Specific Software | An open-source training framework providing domain-specific curated data loaders, training recipes, and example model architectures that are NVIDIA-optimized for GPU clusters. | For drug discovery applications (proteins, small molecules). |
| NVIDIA DGX Station | Hardware | Integrated, pre-configured server containing multiple high-end GPUs, designed for AI and HPC workloads. | Simplifies hardware procurement and setup. |
| Multi-Try Metropolis (MTM) [12] | Algorithm | A variant of Metropolis-Hastings that evaluates multiple proposals in parallel, trading more parallel likelihood calculations for a higher acceptance rate. | Increases GPU utilization per iteration. |

Application in Drug Discovery: A Use Case

The pharmaceutical industry, where the "invisible bottleneck" of compute inefficiency can delay promising drug pipelines, is a prime beneficiary of this technology [16]. GPU acceleration is central to modern drug discovery, enabling the screening of thousands of molecules in hours instead of years [16] [15].

Use Case: Accelerated Virtual Screening. A typical workflow involves using a pMCMC method to infer parameters of a complex pharmacokinetic model. The likelihood calculation for each proposed parameter set θ requires running a stochastic simulation of the drug's interaction with a biological target. With a CPU, this is prohibitively slow. On a GPU, thousands of these stochastic simulations for different parameter sets (across multiple chains) can be executed concurrently. This parallelism reduces the time for parameter inference from months to hours, dramatically accelerating the iterative design-make-test-analyze cycle in drug development [12]. Major pharmaceutical companies like Eli Lilly are investing heavily in proprietary supercomputers powered by thousands of NVIDIA GPUs specifically to power such AI-driven discovery efforts [17].

Critical Considerations and Potential Pitfalls

While powerful, a successful GPU implementation requires careful attention to several factors:

- Algorithmic Suitability: Not all MCMC algorithms are "GPU-friendly." Algorithms with heavy control flow or that cause chains to become desynchronized can perform poorly. For example, the No-U-Turn Sampler (NUTS) can be inefficient because each chain may require a different number of leapfrog steps per iteration, leading to thread divergence and idle processors as the GPU waits for the slowest chain to finish [10]. Choosing algorithms with minimal control flow and uniform computation across chains is critical.

- Memory Management: Data must be explicitly transferred between CPU (host) and GPU (device) memory. Poorly managed transfers can become a significant bottleneck. The goal is to minimize these transfers by keeping computation and data on the GPU as long as possible [9].

- Problem Scale: The overhead of using a GPU is only worthwhile for sufficiently large problems. The computation must be substantial enough to fully utilize the thousands of cores. For pMCMC, this often means running many chains or using a large number of particles in the particle filter [12] [11].
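The cost of desynchronized chains noted under algorithmic suitability can be made concrete with a small calculation. The per-chain step counts below are hypothetical numbers drawn at random, not measurements of any sampler. The only point is that a lockstep batch finishes when its slowest chain does.

```python
import numpy as np

rng = np.random.default_rng(6)
n_chains = 64
# Hypothetical number of inner steps each chain needs in one iteration.
steps_per_chain = 2 ** rng.integers(1, 9, size=n_chains)   # values from 2 to 256

lockstep_steps = int(steps_per_chain.max())    # the batch waits for the slowest chain
mean_steps = float(steps_per_chain.mean())     # useful work per chain, on average
utilization = mean_steps / lockstep_steps      # fraction of lockstep time doing work

print(f"slowest chain: {lockstep_steps} steps, average: {mean_steps:.1f} steps")
print(f"lockstep utilization: {utilization:.0%}")
```

When every chain performs the same amount of work per iteration, as in a fixed-length particle filter, this ratio is 1 and no lanes sit idle.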

Particle Markov Chain Monte Carlo (pMCMC) is a class of algorithms that combines the strengths of particle filtering (Sequential Monte Carlo) and Markov Chain Monte Carlo (MCMC) sampling for performing Bayesian inference on complex, high-dimensional statistical models. The core innovation of pMCMC is its use of a particle filter to produce an unbiased estimate of the likelihood within an MCMC procedure, enabling inference for state-space models where the likelihood is intractable. The computational intensity of both particle filtering and MCMC sampling has driven research into their parallelization, with Graphics Processing Unit (GPU) computing emerging as a transformative technology for acceleration. GPU-based parallel computing offers unmatched computational performance, enabling practical, large-scale pMCMC applications that are infeasible with serial implementations on central processing units (CPUs) [13]. The inherent parallelism in pMCMC algorithms arises from independent particle propagation in particle filters and the potential for parallel chain execution in MCMC, making them ideally suited for GPU architectures that excel at executing thousands of parallel threads [18] [13].

Core Components of pMCMC Algorithms

Particle Filtering (Sequential Monte Carlo)

Particle filters (PFs) are sequential Monte Carlo methods used for state estimation in nonlinear and non-Gaussian systems. They represent the posterior probability distribution of a system's state using a set of random samples (particles) with associated weights [19]. The standard bootstrap particle filter operates through a recursive sampling-importance-resampling (SIR) framework:

- Sampling: At each time step, particles are propagated according to the system's transition model (proposal distribution).

- Importance Weighting: Each particle is weighted based on its likelihood given the current observation.

- Resampling: Particles are resampled with replacement to eliminate those with negligible weights and focus computational resources on promising regions of the state space.
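The three steps above can be sketched for a model where the exact answer is available for comparison. The local-level model (a Gaussian random walk observed with Gaussian noise), its variances `Q` and `R`, and the function name `sir_step` are assumptions made for illustration. A scalar Kalman filter provides the exact filtering mean, giving a check that the particle filter behaves as intended.

```python
import numpy as np

Q, R = 0.1, 0.5   # assumed process and observation noise variances

def sir_step(particles, y_t, rng):
    """One sampling / importance weighting / resampling step of a bootstrap filter."""
    # 1. Sampling: propagate through the transition model X_t = X_{t-1} + noise.
    particles = particles + np.sqrt(Q) * rng.normal(size=particles.shape)
    # 2. Importance weighting: Gaussian likelihood of the observation.
    logw = -0.5 * (y_t - particles) ** 2 / R
    w = np.exp(logw - logw.max())
    w /= w.sum()
    filtered_mean = float(np.sum(w * particles))
    # 3. Resampling: draw ancestors with replacement, proportional to weight.
    idx = rng.choice(particles.size, size=particles.size, p=w)
    return particles[idx], filtered_mean

rng = np.random.default_rng(3)
true_x = np.cumsum(np.sqrt(Q) * rng.normal(size=50))    # latent path, X_0 = 0
ys = true_x + np.sqrt(R) * rng.normal(size=50)

particles = np.zeros(2000)
pf_means = []
for y_t in ys:
    particles, mean_t = sir_step(particles, y_t, rng)
    pf_means.append(mean_t)
pf_means = np.array(pf_means)

# Exact filtering means from a scalar Kalman filter, for reference.
m, p, kf_means = 0.0, 0.0, []
for y_t in ys:
    p = p + Q
    gain = p / (p + R)
    m = m + gain * (y_t - m)
    p = (1.0 - gain) * p
    kf_means.append(m)
kf_means = np.array(kf_means)

max_gap = float(np.max(np.abs(pf_means - kf_means)))
print("largest gap between particle and Kalman filtering means:", max_gap)
```

For nonlinear or non-Gaussian models no such closed-form reference exists, which is where the particle filter becomes the practical option.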

Despite their theoretical generality, classical particle filters struggle with particle degeneracy (where most particle weights become negligible) and the challenge of designing effective proposal distributions, especially in high-dimensional spaces [19]. Recent advances include differentiable particle filters (DPFs) that embed sample-based filtering into deep state-space models, enabling end-to-end learning of system dynamics and observation models from data [19].
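Particle degeneracy is commonly monitored through the effective sample size, ESS = 1 / Σ wᵢ² for normalized weights wᵢ. The sketch below is a toy illustration under assumed settings (standard normal particles, a unit-variance Gaussian likelihood centered at a vector of ones); it shows the ESS shrinking as the state dimension grows while the particle count stays fixed.

```python
import numpy as np

def effective_sample_size(log_weights):
    """ESS = 1 / sum(w_i^2) for normalized weights, computed stably from log-weights."""
    w = np.exp(log_weights - np.max(log_weights))
    w /= w.sum()
    return float(1.0 / np.sum(w ** 2))

rng = np.random.default_rng(4)
n_particles = 1000
ess_by_dim = {}
for dim in (1, 10, 50):
    particles = rng.normal(size=(n_particles, dim))   # draws from a N(0, I) proposal
    observation = np.ones(dim)
    # Log-weight: unit-variance Gaussian likelihood of the observation.
    logw = -0.5 * np.sum((observation - particles) ** 2, axis=1)
    ess_by_dim[dim] = effective_sample_size(logw)

for dim, ess in ess_by_dim.items():
    print(f"dimension {dim:>2}: ESS = {ess:.1f} of {n_particles}")
```

An ESS near 1 means a single particle carries almost all the weight, so the likelihood estimate used inside pMCMC becomes very noisy.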

MCMC Sampling

Markov Chain Monte Carlo (MCMC) methods constitute a family of algorithms for sampling from complex probability distributions. They construct a Markov chain that has the desired distribution as its equilibrium distribution, allowing for approximate sampling after a sufficient burn-in period [20]. In Bayesian statistics, MCMC is particularly valuable for obtaining posterior distributions when analytical solutions are intractable. Traditional MCMC methods like the Metropolis-Hastings algorithm and Gibbs sampling can face challenges with convergence and mixing in high-dimensional spaces, often requiring long iteration times to produce reliable samples [21]. The integration of MCMC with particle filters in pMCMC algorithms provides a powerful framework for joint parameter and state estimation in complex dynamical systems [19].

Synergy in pMCMC Frameworks

In pMCMC algorithms, the particle filter provides an unbiased estimate of the marginal likelihood for a given parameter value. This stochastic likelihood estimate is then used within an MCMC sampler (typically a Metropolis-Hastings algorithm) to accept or reject parameter proposals [19]. This synergy allows pMCMC to perform full Bayesian inference for state-space models where the likelihood function is not available in closed form, making it a powerful tool for systems with complex latent state dynamics.

Parallelism in pMCMC Components

Inherent Parallelism in Particle Filters

The structure of particle filters contains multiple sources of data parallelism that can be exploited for acceleration:

- Embarrassingly Parallel Particle Propagation: The propagation of particles through the state transition model during the sampling step can be executed completely independently for each particle, making this aspect "embarrassingly parallel" [18].

- Parallel Weight Calculation: The importance weight calculation for each particle, given the current observation, can be performed concurrently across all particles.

- Parallelized Resampling: While more complex, the resampling step can be implemented using parallel algorithms and data structures to distribute the computational load.

These characteristics make particle filters particularly amenable to implementation on massively parallel architectures like GPUs, where thousands of threads can simultaneously process different particles [18].
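One common way to make resampling parallel-friendly is to express it as a prefix sum followed by independent searches, both of which map onto standard parallel primitives. The sketch below uses systematic resampling as one such scheme; this is a choice made here for illustration, not the method of the cited work. NumPy's `cumsum` and `searchsorted` stand in for the parallel scan and per-thread binary search.

```python
import numpy as np

def systematic_resample(weights, rng):
    """Ancestor indices from systematic resampling (one uniform draw in total)."""
    n = weights.size
    cdf = np.cumsum(weights)            # prefix sum over the weights
    cdf = cdf / cdf[-1]                 # normalize so the last entry is 1
    positions = (rng.uniform() + np.arange(n)) / n   # evenly spaced, shared offset
    # Each output slot searches the CDF independently of the others.
    return np.minimum(np.searchsorted(cdf, positions), n - 1)

rng = np.random.default_rng(5)
weights = rng.dirichlet(np.ones(1000))             # synthetic normalized weights
ancestors = systematic_resample(weights, rng)
counts = np.bincount(ancestors, minlength=weights.size)

print("particles surviving:", int(np.count_nonzero(counts)), "of", weights.size)
```

With this scheme each particle is copied either the floor or the ceiling of N·wᵢ times, which tends to add less resampling noise than independent multinomial draws.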

Parallelism in MCMC Sampling

MCMC methods present more challenges for parallelization due to their inherently sequential nature, where each state depends on the previous one. However, several effective parallelization strategies have been developed:

- Multiple Independent Chains: Running multiple MCMC chains in parallel with different initializations represents the most straightforward approach to parallelism, though it does not accelerate individual chains [21].

- Within-Chain Parallelism: For hierarchical models or specific sampling algorithms, components within a single chain can be parallelized. This includes parallel evaluation of likelihoods for different data segments or parallel proposal generation.

- Adaptive and Wavelet-Based Methods: Recent research has combined wavelet theory with multi-chain parallel sampling methods to improve convergence and reduce iteration times in high-dimensional financial data analysis [21].

GPU-Accelerated Implementation

The implementation of pMCMC algorithms on GPU architectures leverages several key strategies to maximize performance:

- Massive Data Parallelism: GPUs exploit the fine-grained parallelism in particle filters by assigning individual threads or thread blocks to process different particles simultaneously [13].

- Memory Hierarchy Optimization: Efficient use of GPU memory hierarchies (shared memory, registers, and caches) is critical for reducing memory access latency and improving throughput.

- Specialized GPU Kernels: Developing custom CUDA or OpenCL kernels specifically designed for particle operations (transition, weighting) and sparse matrix operations in MCMC sampling can significantly enhance performance [22].

- Hardware-Specific Optimizations: Leveraging emerging GPU features like ray-tracing cores, tensor cores, and GPU-execution-friendly transport methods offers opportunities for further performance enhancements [13].

Table 1: Quantitative Performance Gains from GPU Acceleration in Monte Carlo Methods

| Application Domain | Reported Gain | Key Implementation Factors |
|---|---|---|
| Tomography MC Simulation [13] | 100–1000× over CPU | Parallel photon transport in voxelized geometry |
| Multi-Objective Path Planning [22] | 600–1000× over sequential methods | Sparse matrix operations; custom CUDA kernels |
| Financial MCMC [21] | Iteration time reduced to 364 seconds | Multi-chain parallel sampling; wavelet preprocessing |

Experimental Protocols and Application Notes

Protocol: GPU-Accelerated Particle Filter Implementation

This protocol provides a methodology for implementing a particle filter on GPU architectures for state estimation in dynamic systems [18] [19].

System Specification:

- Define the state transition model: \( x_t = f(x_{t-1}, u_t, w_t) \), where \( w_t \) is process noise.

- Define the observation model: \( y_t = h(x_t, v_t) \), where \( v_t \) is measurement noise.

- Specify the initial state distribution \( p(x_0) \).

GPU Memory Allocation and Initialization:

- Allocate GPU memory for particle states (current and previous), weights, and resampled indices.

- Initialize ( N ) particles by sampling from ( p(x_0) ) using parallel random number generation on the GPU.

Particle Propagation Kernel:

- Launch a GPU kernel with \( N \) threads, where each thread \( i \):

  - draws process noise \( w_t^i \sim p_w(\cdot) \) (process noise distribution)

  - computes \( x_t^i = f(x_{t-1}^i, u_t, w_t^i) \)

Weight Calculation Kernel:

- Launch a GPU kernel with \( N \) threads, where each thread \( i \) computes:

  - \( \omega_t^i = p(y_t \mid x_t^i) \) (observation likelihood)

- Perform a parallel sum reduction to compute the total weight: \( \Omega_t = \sum_{i=1}^N \omega_t^i \)

- Normalize the weights: \( \tilde{\omega}_t^i = \omega_t^i / \Omega_t \)
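In practice the weights are usually computed in log space, because raw likelihoods can underflow to zero. The following CPU sketch shows the normalization step with the maximum log-weight subtracted first; the function name `normalize_log_weights` and the returned effective sample size (ESS) are illustrative additions, not part of the protocol above. On a GPU, the `max` and `sum` would each be a parallel reduction.

```python
import math


def normalize_log_weights(log_w):
    """Return normalized weights, the log of the mean weight, and the ESS."""
    m = max(log_w)                                # reduction 1: maximum
    shifted = [math.exp(lw - m) for lw in log_w]  # safe: largest term is exp(0) = 1
    total = sum(shifted)                          # reduction 2: sum
    weights = [s / total for s in shifted]
    log_mean_w = m + math.log(total / len(log_w))
    ess = 1.0 / sum(w * w for w in weights)       # effective sample size
    return weights, log_mean_w, ess
```

The log of the mean weight is the per-step contribution to the log-likelihood estimate, and a low ESS is a common trigger for resampling.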

Resampling Implementation:

- Implement parallel resampling algorithms such as systematic resampling on the GPU.

- For differentiable particle filters, employ soft-resampling or entropy-regularized optimal transport to maintain differentiability [19].
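Systematic resampling, mentioned above, can be sketched sequentially as follows. This is a CPU reference under the assumption that the weights are already normalized; the function name is illustrative. A GPU version would typically replace the running sum with a parallel prefix sum and let each thread search for its own index.

```python
import random


def systematic_resample(weights, rng):
    """Return ancestor indices using one uniform draw and evenly spaced points."""
    n = len(weights)
    u0 = rng.random() / n          # single random offset in [0, 1/n)
    indices = []
    cumulative = weights[0]
    j = 0
    for i in range(n):
        u = u0 + i / n             # i-th evenly spaced point
        # Advance until the cumulative weight passes the point.
        while u >= cumulative and j < n - 1:
            j += 1
            cumulative += weights[j]
        indices.append(j)
    return indices
```

Because only one random number is drawn, the number of copies of each particle differs from its expected value by less than one, which tends to give lower variance than multinomial resampling.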

Performance Optimization:

- Utilize shared memory for frequently accessed data.

- Employ memory coalescing for global memory accesses.

- Balance thread block sizes for specific GPU architectures.

Protocol: Differentiable Particle Filter with Diffusion Models

This protocol outlines the implementation of DiffPF, a differentiable particle filter that leverages diffusion models for enhanced sampling, representing the state-of-the-art in particle filtering research [19].

Problem Formulation:

- Consider a dynamic system with hidden states \( \bm{x}_t \), observations \( \bm{o}_t \), and actions (control inputs) \( \bm{a}_t \).

- Aim to estimate the posterior distribution \( p(\bm{x}_t \mid \bm{o}_{1:t}, \bm{a}_{1:t}) \).

Model Architecture Design:

- Transition Model: Implement using neural networks (fully connected, LSTM, or GRU) to map previous states to current states.

- Observation Model: Implement as a neural network that outputs parameters of a distribution or unnormalized likelihood scores.

- Diffusion Sampler: Employ a conditional diffusion model that refines predicted particles using current observations to generate samples from the complex, multimodal filtering posterior.

Training Procedure:

- Prediction Step: Compute the prior \( p(\bm{x}_t \mid \bm{o}_{1:t-1}, \bm{a}_{1:t}) = \int p(\bm{x}_t \mid \bm{x}_{t-1}, \bm{a}_t)\, p(\bm{x}_{t-1} \mid \bm{o}_{1:t-1}, \bm{a}_{1:t-1})\, d\bm{x}_{t-1} \).

- Update Step: Use the diffusion model to sample from the approximate posterior \( p(\bm{x}_t \mid \bm{o}_{1:t}, \bm{a}_{1:t}) \), conditioned on both the predicted particles and the current observation.

- Loss Function: Implement end-to-end training using a combination of state estimation error and likelihood maximization.
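With equally weighted particles, the integral in the prediction step is typically approximated by a Monte Carlo average. The expression below is a sketch under the assumption that \( N \) particles \( \bm{x}_{t-1}^i \) approximate the previous filtering posterior; the particle index \( i \) and count \( N \) follow the notation of the earlier particle filter protocol and are not taken from the DiffPF source.

\[
p(\bm{x}_t \mid \bm{o}_{1:t-1}, \bm{a}_{1:t}) \approx \frac{1}{N} \sum_{i=1}^{N} p(\bm{x}_t \mid \bm{x}_{t-1}^i, \bm{a}_t), \qquad \bm{x}_{t-1}^i \sim p(\bm{x}_{t-1} \mid \bm{o}_{1:t-1}, \bm{a}_{1:t-1})
\]

In practice this amounts to pushing each particle through the transition model once, which is the embarrassingly parallel part of the filter.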

Implementation Advantages:

- Eliminates explicit particle weighting and manual proposal design.

- Naturally avoids particle degeneracy, removing the need for non-differentiable resampling.

- Supports high-dimensional, multimodal distributions in a fully differentiable framework.

Application Note: pMCMC for Medical Tomography

GPU-accelerated pMCMC methods have found significant application in medical tomography, including computed tomography (CT), cone-beam CT (CBCT), and positron emission tomography (PET) [13].

- Problem Context: Tomographic image reconstruction involves estimating internal structures from penetrating wave measurements, complicated by stochastic physical interactions that introduce artifacts.

- pMCMC Application: pMCMC algorithms are used for joint parameter and state estimation in complex tomographic systems, enabling precise modeling of the underlying physics.

- GPU Acceleration Benefits: Speedups of 100–1000× over CPU implementations make practical, large-scale MC applications feasible, providing essential support for new imaging system development.

- Implementation Considerations: Leverage GPU-accelerated MC platforms like GGEMS and gDRR for simulating CBCT projections and dose calculations. Utilize modular GPU-based MC codes to adapt to emerging simulation needs like virtual clinical trials and digital twins for healthcare [13].

Visualization of pMCMC Workflows

pMCMC Algorithm Architecture

Diagram 1: pMCMC Algorithm Architecture showing the interaction between particle filtering and MCMC sampling, with parallelizable components highlighted.

GPU Parallelization Strategy

Diagram 2: GPU Parallelization Strategy for pMCMC showing the division of labor between CPU control and GPU parallel execution.

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Computational Tools for pMCMC Research and Implementation

| Tool/Category | Function | Example Implementations |
|---|---|---|
| GPU Programming Platforms | Provides parallel computing framework for algorithm acceleration | CUDA [13], OpenCL [23], ROCm (AMD) [23] |
| MC Simulation Packages | Models complex physical interactions in scientific applications | Geant4, EGSnrc, GATE, TOPAS [13] |
| GPU-Accelerated MC Platforms | Specialized tools for tomography and medical physics | gDRR (CBCT) [13], GGEMS (dose simulation) [13] |
| Differentiable Programming Frameworks | Enables end-to-end learning in particle filters | PyTorch, TensorFlow (for DiffPF) [19] |
| MCMC Sampling Libraries | Provides foundational algorithms for Bayesian inference | PyMC, Stan (with GPU extensions) |
| Visualization and Analysis Tools | Enables interpretation of high-dimensional posterior distributions | Python (Matplotlib, Plotly), R (ggplot2) |

The integration of particle filtering and MCMC sampling in pMCMC algorithms provides a powerful framework for Bayesian inference in complex dynamical systems. The key to making these computationally intensive methods practical lies in exploiting their inherent parallelism through GPU acceleration. As demonstrated across multiple application domains, GPU implementations have been reported to provide speedup factors of 100–1000× compared to conventional CPU approaches, turning previously infeasible problems into tractable research questions [13] [22]. Future research directions include further development of differentiable particle filters with advanced sampling techniques such as diffusion models [19], improved modularization of GPU-based MC codes for emerging applications in digital twins and virtual clinical trials [13], and use of new GPU hardware capabilities (ray-tracing cores, tensor cores) for additional performance gains. The continued co-design of pMCMC algorithms with GPU architectures is likely to extend the reach of computational statistics and enable more sophisticated analyses across scientific disciplines.

For researchers in fields like drug development, the journey from CPU-based clusters to accessible many-core GPU computing represents a pivotal shift in computational capability. This evolution has unlocked the potential to tackle previously intractable problems, such as the complex inference required for particle Markov Chain Monte Carlo (pMCMC) methods in Bayesian statistics. Where scientists once relied on distributed networks of central processing units (CPUs) and battled with complex programming paradigms like MPI to achieve parallelism, the modern landscape offers massively parallel graphics processing units (GPUs) programmable through high-level frameworks [24]. This application note details this technological transition, providing a quantitative analysis and experimental protocols to guide scientists in leveraging these advanced computing resources for accelerated research.

A Historical Shift in Computing Paradigms

The Era of CPU Clusters

The demand for parallel computing has existed since the 1960s, long before the advent of modern multi-core processors. Scientific computation and simulation drove the need to execute more than one instruction at a time. In the absence of today's integrated parallel hardware, researchers turned to CPU clusters: networks of individual computers connected via high-speed interconnects. Achieving parallelism required mastering complex and often cumbersome programming interfaces such as the Message Passing Interface (MPI), which coordinated tasks across the separate nodes of the cluster [24]. As one account puts it, "high-performance programming is becoming more and more complicated" [24], and this complexity presented a significant barrier to entry for many scientific teams.

The Rise of Accessible Many-Core GPUs

The computing landscape underwent a fundamental transformation with the rise of many-core GPU computing. Initially designed for rendering graphics, GPUs are built around a design philosophy that prioritizes massive parallel throughput over the low-latency execution of a few tasks that CPUs excel at [25]. A modern GPU contains thousands of relatively simple cores organized into Streaming Multiprocessors (SMs), allowing it to perform the same computation on vast datasets simultaneously under a Single Instruction, Multiple Threads (SIMT) model [25]. This architectural shift, coupled with the development of accessible programming platforms like CUDA and OpenCL, has democratized access to teraflops of computational power [24]. The move from distributed clusters to integrated, programmable accelerators represents a monumental simplification and amplification of computational capacity for the scientific community.

Quantitative Analysis: Performance Gains in Computational Statistics

The theoretical advantages of GPU computing are borne out by dramatic performance improvements in practical algorithms, particularly in Bayesian inference and MCMC sampling. The table below summarizes key performance metrics from published studies that implemented pMCMC algorithms on different hardware platforms.

Table 1: Performance Comparison of pMCMC Hardware Implementations

| Hardware Platform | Speedup Factor (vs. CPU / vs. GPU) | Key Performance Metric | Energy Efficiency | Source |
|---|---|---|---|---|
| FPGA (pMCMC) | 12.1x vs. CPU / 10.1x vs. GPU | Sampling throughput | Up to 53x more efficient than CPU/GPU | [2] |
| FPGA (ppMCMC) | 34.9x vs. CPU / 41.8x vs. GPU | Sampling throughput | 173x more power efficient | [11] |
| GPU (pMCMC) | Used as baseline for FPGA | Sampling throughput | Baseline | [2] |
| Modern Software (JAX/PyTorch) | Dramatic speedups reported | ESS/second (time to acceptable error) | Not specified | [8] |

These quantitative results highlight two key trends. First, specialized hardware like FPGAs can deliver order-of-magnitude improvements in speed and energy efficiency for complex SSM inferences, bringing previously intractable analyses within reach [11]. Second, the underlying driver for GPU acceleration is the alignment of algorithm structure with hardware architecture. MCMC workloads offer opportunities for parallelization across data, model parameters, and multiple chains, making them a natural fit for the parallel processing capabilities of GPUs and the software frameworks that support them [8].

Experimental Protocols for Hardware-Accelerated pMCMC

Protocol 1: FPGA-Accelerated pMCMC for Large-Scale Inference

This protocol is adapted from studies achieving significant speedups for a large-scale genetics State-Space Model [2] [11].

1. Problem Setup & Algorithm Selection:

- Model: Define a State-Space Model (SSM) with unknown parameters. The goal is to sample from the Bayesian posterior distribution of these parameters, which is analytically intractable.

- Algorithm: Select the standard Particle MCMC (pMCMC) algorithm. pMCMC uses a Particle Filter (PF) to generate an unbiased estimate of the marginal likelihood (the model's intractable density), which is then used within an MCMC sampler [11].

2. Hardware Configuration:

- Accelerator: Utilize a Field Programmable Gate Array (FPGA).

- Architecture: Implement a custom, parallel hardware architecture on the FPGA that is tailored for pMCMC. The design must exploit the parallelism inside the Particle Filter to maximize throughput [2].

3. Implementation and Execution:

- Pipelining: Structure the computation to pipeline operations, increasing the utilization of the PF datapath inside the FPGA.

- Sampling: Execute the pMCMC algorithm on the FPGA, generating thousands of samples from the posterior distribution.

4. Analysis:

- Validation: Confirm that the generated samples converge to the correct posterior distribution.

- Benchmarking: Compare the sampling throughput (samples per second) and energy efficiency against state-of-the-art CPU and GPU implementations of the same pMCMC algorithm [11].

Protocol 2: Multi-Modal Posterior Sampling with FPGA-based ppMCMC

This protocol extends Protocol 1 to handle multi-modal posterior distributions, which are challenging for standard MCMC methods.

1. Problem Setup:

- Model: Use an SSM known to produce a multi-modal posterior distribution, such as the DNA methylation model from the referenced genetics study [11].

- Algorithm: Select the Population-based Particle MCMC (ppMCMC) algorithm. ppMCMC uses multiple interacting Markov chains instead of a single chain to improve sampling efficiency and exploration of multi-modal distributions [11].

2. Hardware Configuration:

- Accelerator: Utilize an FPGA.

- Architecture: Implement a novel hardware architecture that pipelines the computations of the multiple ppMCMC chains. This design increases the utilization of the PF datapath and allows for efficient chain interaction [2].

3. Implementation and Execution:

- Chain Management: Configure the number of chains and the number of particles per PF to balance exploration and computational cost.

- Sampling: Execute the ppMCMC algorithm on the FPGA hardware.

4. Analysis:

- Efficiency: Calculate the sampling efficiency gain over sequential CPU implementations of pMCMC and ppMCMC (e.g., a 1.96x higher sampling efficiency for ppMCMC [11]).

- Performance: Compare the sampling throughput against FPGA pMCMC, CPU ppMCMC, and GPU ppMCMC implementations to quantify the combined algorithmic and hardware speedup (e.g., 3.24x faster than FPGA pMCMC [11]).
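To make the idea of interacting chains concrete, the sketch below shows one common form of population-based MCMC: several chains target tempered versions of the same density and occasionally exchange states. This is an assumption for illustration; the text above does not specify the interaction scheme used by the cited ppMCMC, and the example swaps the particle-filter likelihood for an exactly computable bimodal density so that it stays short. The names `log_target` and `population_mcmc` are hypothetical.

```python
import math
import random


def log_target(x):
    """Unnormalized log-density of an equal mixture of N(-4, 1) and N(4, 1)."""
    a = -0.5 * (x + 4.0) ** 2
    b = -0.5 * (x - 4.0) ** 2
    m = max(a, b)
    return m + math.log(math.exp(a - m) + math.exp(b - m))


def population_mcmc(n_iters, temps, step, seed=0):
    """Tempered chains with exchange moves; returns the temperature-1 chain.

    temps[k] is the inverse temperature of chain k, and temps[0] must be 1.0.
    """
    rng = random.Random(seed)
    xs = [-4.0] * len(temps)
    cold = []
    for _ in range(n_iters):
        # Within-chain random-walk updates: independent, so parallelizable.
        for k, beta in enumerate(temps):
            prop = xs[k] + step * rng.gauss(0.0, 1.0)
            log_a = beta * (log_target(prop) - log_target(xs[k]))
            if rng.random() < math.exp(min(0.0, log_a)):
                xs[k] = prop
        # Exchange move between a random pair of adjacent chains.
        k = rng.randrange(len(temps) - 1)
        log_a = (temps[k] - temps[k + 1]) * (log_target(xs[k + 1]) - log_target(xs[k]))
        if rng.random() < math.exp(min(0.0, log_a)):
            xs[k], xs[k + 1] = xs[k + 1], xs[k]
        cold.append(xs[0])
    return cold
```

The flatter (low inverse temperature) chains cross between modes easily and pass those states down through exchange moves, which is the mechanism that helps with multi-modal posteriors.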

Protocol 3: Modern GPU-Accelerated MCMC with Software Frameworks

This protocol leverages accessible GPU hardware and modern software frameworks, as described in the literature [8].

1. Problem Setup:

- Model: Define a probabilistic model in code, specifying the model parameters, priors, and likelihood.

- Objective: Generate samples from the posterior distribution ( p(\theta \mid y) ) to estimate expectations of a test function ( f ), ( \mathbb{E}[f(\theta)] ) [8].

2. Hardware & Software Configuration:

- Hardware: Use a consumer-grade or data-center GPU (e.g., NVIDIA series with Tensor Cores).

- Software Framework: Use a high-level, array-oriented framework such as JAX or PyTorch. These frameworks provide automatic differentiation and vectorized-map operations, which are crucial for gradient-based MCMC and chain-level parallelism [8].

3. Implementation and Execution:

- Algorithm Selection: Employ a gradient-based MCMC method like Hamiltonian Monte Carlo (HMC) or the No-U-Turn Sampler (NUTS). These methods require gradients of the log-density, which are provided automatically by the software framework [8].

- Parallelization: Use the framework's vectorization capabilities to run multiple MCMC chains in parallel on the GPU. This exploits the massive data parallelism of the hardware.

- Efficiency Metric: Optimize hyperparameters to maximize the Effective Sample Size (ESS) per second, which minimizes the time to an acceptable error in estimation [8].

4. Analysis:

- Benchmarking: Compare the ESS/second and total runtime against a traditional, CPU-based workflow running 4-8 chains in parallel on a multi-core processor.

- Diagnostics: Perform standard MCMC diagnostics (e.g., trace plots, Gelman-Rubin statistics) to ensure chain convergence and sampling quality.
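The Gelman-Rubin statistic mentioned in the diagnostics step can be computed directly from the parallel chains. The following is a minimal sketch of the classic (non-split) version for a scalar parameter, assuming all chains have the same length; the function name `gelman_rubin` is illustrative.

```python
import math


def gelman_rubin(chains):
    """Classic potential scale reduction factor for equal-length scalar chains."""
    m = len(chains)          # number of chains (at least 2)
    n = len(chains[0])       # draws per chain (at least 2)
    means = [sum(c) / n for c in chains]
    grand = sum(means) / m
    # Between-chain variance B and mean within-chain variance W.
    b = n / (m - 1) * sum((mu - grand) ** 2 for mu in means)
    w = sum(sum((x - mu) ** 2 for x in c) / (n - 1)
            for c, mu in zip(chains, means)) / m
    var_plus = (n - 1) / n * w + b / n
    return math.sqrt(var_plus / w)
```

Values close to 1 suggest the chains agree with each other; values well above 1 indicate that at least one chain has not yet reached the same region as the others.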

Workflow Visualization

The following diagram illustrates the high-level architectural and workflow evolution from CPU clusters to modern GPU/accelerator computing, which underpins the experimental protocols.

The Scientist's Toolkit: Research Reagent Solutions

For researchers embarking on implementing hardware-accelerated pMCMC, the following tools and "reagents" are essential.

Table 2: Essential Research Reagents and Tools for GPU/Accelerator MCMC Research

| Tool / Reagent | Type | Function & Explanation |
|---|---|---|
| JAX / PyTorch | Software Framework | Provides a NumPy-like interface for writing numerical code that can be compiled to run efficiently on GPUs/TPUs. Includes automatic differentiation for gradient-based MCMC (HMC, NUTS) and vectorized-map for easy chain parallelism [8]. |
| NVIDIA CUDA | Software Platform | A parallel computing platform and programming model that allows developers to use CUDA-enabled GPUs for general-purpose processing (GPGPU). Essential for low-level GPU programming [26]. |
| FPGA Development Kits | Hardware & Software | Provides the hardware (e.g., FPGA chips on a board) and software toolchain (e.g., Vitis, Vivado) needed to design and implement custom hardware architectures for specialized algorithms like pMCMC [2] [11]. |
| Particle Filter (PF) | Algorithmic Component | A Monte Carlo technique used within pMCMC to provide an unbiased estimate of the analytically intractable model density. Its inherent parallelism is a key target for hardware acceleration [11]. |
| InfiniBand / RDMA | Network Technology | High-performance network architecture used in advanced clusters. Provides high bandwidth and low latency, allowing for efficient direct memory access (RDMA) between nodes in a multi-GPU or multi-node cluster [26]. |

For researchers in drug development and scientific computing, the promise of GPU acceleration is compelling, offering potential speedups of 10x to over 1000x compared to traditional CPU-based computation [13] [27]. However, this performance is not automatic; it hinges on the computational characteristics of the specific model or algorithm. Framed within a broader thesis on implementing particle Markov chain Monte Carlo (pMCMC) on GPU, this application note provides a structured framework to assess whether a given scientific computing problem is a suitable candidate for GPU acceleration. We summarize key criteria, present quantitative performance data, and provide detailed protocols for evaluating and implementing models on GPU architectures, with a focus on applications in drug discovery and materials science.

Key Characteristics of GPU-Friendly Problems

The massive parallel architecture of GPUs is not universally suited to all computational tasks. A model is a strong candidate for GPU acceleration if it exhibits the following characteristics:

High Parallelism: The problem can be decomposed into a large number of independent, or nearly independent, tasks that can be executed simultaneously. GPUs excel at Single Instruction, Multiple Data (SIMD) parallelism, where hundreds or thousands of processing cores execute the same operation on different data elements [28] [29]. Monte Carlo simulations that involve running multiple independent chains are inherently well-suited, as the chains can be executed in parallel [8].

Coarse-Grained Parallelism with Minimal Synchronization: Algorithms where parallel tasks require minimal communication and synchronization between them are ideal. Fine-grained parallelism with frequent data exchange can severely hamper GPU performance. In MCMC, samplers like NUTS are less GPU-friendly because each chain may require a different number of operations (e.g., leapfrog steps) per iteration, forcing the system to wait for the slowest chain and underutilizing the hardware [10].

High Arithmetic Intensity: This refers to the ratio of arithmetic operations to memory operations. GPUs have immense computational throughput but relatively high memory access latencies. Problems that are compute-bound (spend more time on calculations than on memory access) benefit most from GPU acceleration. Evaluating a complex machine learning potential for millions of atoms in a Monte Carlo simulation is a prime example of a high arithmetic intensity task [30].
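A quick way to reason about arithmetic intensity is a roofline-style estimate, a standard back-of-the-envelope model that is brought in here for illustration and is not part of the cited studies. The sketch below uses hypothetical device figures (10,000 GFLOP/s peak compute, 1,000 GB/s memory bandwidth) and hypothetical per-particle kernel costs.

```python
def arithmetic_intensity(flops, bytes_moved):
    """Floating-point operations performed per byte of memory traffic."""
    return flops / bytes_moved


def attainable_gflops(intensity, peak_gflops, bandwidth_gbs):
    """Roofline bound: limited by peak compute or by memory bandwidth."""
    return min(peak_gflops, intensity * bandwidth_gbs)


# Hypothetical per-particle kernels in FP32: 2 values read + 2 written = 16 bytes.
heavy = arithmetic_intensity(flops=200, bytes_moved=16)   # costly transition model
light = arithmetic_intensity(flops=20, bytes_moved=16)    # cheap transition model

# Hypothetical device: 10,000 GFLOP/s peak, 1,000 GB/s bandwidth.
heavy_bound = attainable_gflops(heavy, peak_gflops=10_000, bandwidth_gbs=1_000)
light_bound = attainable_gflops(light, peak_gflops=10_000, bandwidth_gbs=1_000)
```

Under these assumed numbers the heavy kernel (12.5 FLOP/byte) is compute-bound and can approach peak throughput, while the light kernel (1.25 FLOP/byte) is capped by memory bandwidth at an eighth of peak.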

Regular Memory Access Patterns: Efficient GPU execution requires that parallel threads access memory in a coalesced or contiguous manner. This allows the memory subsystem to combine multiple accesses into a single transaction. Irregular, data-dependent memory access patterns can drastically reduce effective memory bandwidth and thus overall performance [10].

Limited Control Flow: Algorithms with minimal branching (e.g., if-else statements) and predictable execution paths are more efficient on GPUs. Complex control flow (e.g., the internal tree management in the NUTS algorithm) can cause thread divergence, where threads within the same warp (a group of threads executed in SIMD) must execute different code paths serially, wasting compute cycles [10].

Support for Lower Precision: Many GPU applications, particularly in deep learning, can leverage single-precision (FP32) or even half-precision (FP16) arithmetic for significant speedups without sacrificing necessary accuracy. However, double-precision (FP64) remains crucial for certain scientific applications to ensure numerical stability and accuracy [27].
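The precision trade-off is easy to observe in a long running sum, such as accumulating many particle weights. The sketch below emulates FP32 on the CPU by rounding through the `struct` module after every addition; it is an illustration of rounding behavior only, and the helper names `to_f32` and `accumulate` are made up for this example.

```python
import struct


def to_f32(x):
    """Round a Python float (FP64) to the nearest FP32 value."""
    return struct.unpack("f", struct.pack("f", x))[0]


def accumulate(value, n, single):
    """Add `value` to a running total n times, optionally rounding to FP32."""
    v = to_f32(value) if single else value
    total = 0.0
    for _ in range(n):
        total = total + v
        if single:
            total = to_f32(total)  # emulate an FP32 accumulator
    return total


err32 = abs(accumulate(0.1, 100_000, single=True) - 10_000.0)
err64 = abs(accumulate(0.1, 100_000, single=False) - 10_000.0)
```

The FP32 error is many orders of magnitude larger than the FP64 error for the same naive loop. Tree-shaped parallel reductions or compensated summation reduce this effect, which is one reason a mixed-precision design can still be viable.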

Quantitative Performance Data

Empirical benchmarks demonstrate the significant performance gains achievable across various scientific domains when applications are well-suited to GPU architecture.

Table 1: GPU vs. CPU Speedup in Scientific Applications

| Application Domain | Example Model/Software | Reported Speedup (GPU vs. CPU) | Key Factor for Suitability |
|---|---|---|---|
| Fluid/Particle Simulation | Particleworks (FP32) [27] | 11.3x (RTX 4090) | Massive parallelism in particle-particle interactions |
| Fluid/Particle Simulation | Particleworks (FP64) [27] | 7.3x (H100) | High double-precision performance for accuracy |
| Particle Coagulation | Monte Carlo Simulation [28] | ~100x (GTX 285) | Independent computation for each simulation particle |
| Tomography Simulation | MC for CT/PET [13] | 100–1000x | Parallel photon transport in voxelized geometry |
| Drug Discovery | Molecular Docking/Virtual Screening [23] [31] | Orders of magnitude | High-throughput, independent docking calculations |

Table 2: Impact of GPU Selection on Performance

| GPU Type | Precision Strength | Typical Use Case | Example Performance |
|---|---|---|---|
| Consumer (e.g., RTX 4090) | High FP32, Low FP64 | Cost-effective for single-precision workloads | 11.3x FP32 speedup [27] |
| Professional (e.g., RTX 6000 Ada) | High FP32, Large Memory | Scalable multi-GPU workstations | 9.3x FP32 speedup [27] |
| Data Center (e.g., H100, A100) | High FP64, Large HBM | Memory-intensive, high-precision scientific computing | 7.3x FP64 speedup [27] |

Experimental Protocols for GPU Implementation

Protocol: Suitability Assessment for Particle MCMC

This protocol provides a step-by-step methodology to analyze a particle MCMC algorithm for potential GPU acceleration.

Problem Decomposition Analysis

- Objective: Identify the primary source of parallelism.

- Procedure:

  - Map the algorithm's data flow and task dependencies.

  - Determine if parallelism exists across chains, particles, data points, or model parameters [8].

  - For particle MCMC, assess if the evolution and weighting of particles can be performed independently.

- Success Criterion: Identification of a dominant, fine-grained parallel dimension with thousands of independent tasks.

Control Flow and Synchronization Audit

- Objective: Identify algorithmic elements that may hinder GPU efficiency.

- Procedure:

  - Analyze the MCMC sampler (e.g., NUTS vs. non-adaptive HMC) for per-iteration variability in computational cost [10].

  - Check for frequent global synchronization points or complex branching logic dependent on sample values.

- Success Criterion: The algorithm exhibits predictable, uniform computation per iteration with minimal global synchronization.

Arithmetic Intensity and Memory Footprint Estimation

- Objective: Gauge the compute- vs. memory-bound nature of the problem.

- Procedure:

  - Profile the target log-density and gradient computations.

  - Estimate the memory required per thread and for the entire dataset.

- Success Criterion: The core computations are computationally expensive (high arithmetic intensity) and the memory footprint fits within available GPU global memory (e.g., 24–80 GB for modern GPUs) [28] [27].
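The memory-footprint check can be done with simple arithmetic before any GPU code is written. The sketch below counts only the buffers listed in the earlier particle filter protocol (current and previous states, weights, and resampled indices); the function name, the 4-byte index size, and the example sizes are assumptions, and a real implementation would also need room for observations, random number generator state, and scratch space.

```python
def particle_filter_bytes(n_particles, state_dim, n_chains=1, bytes_per_value=4):
    """Rough global-memory estimate for particle buffers, in bytes."""
    per_particle = (
        2 * state_dim * bytes_per_value  # current and previous state
        + bytes_per_value                # one importance weight
        + 4                              # one int32 ancestor index (assumed)
    )
    return n_chains * n_particles * per_particle


def fits_on_gpu(n_bytes, gpu_memory_gb):
    """True if the estimate fits in the given global memory (GB = 1e9 bytes)."""
    return n_bytes <= gpu_memory_gb * 1e9


# Example: one million particles with a 10-dimensional state in FP32.
estimate = particle_filter_bytes(n_particles=1_000_000, state_dim=10)
```

For this example the estimate is 88 MB, far below the 24 GB lower end quoted above, so memory capacity would not be the limiting factor; switching `bytes_per_value` to 8 shows the cost of moving to FP64.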

Protocol: GPU-Accelerated Monte Carlo Simulation for Materials Science

This protocol details the implementation of a scalable Monte Carlo algorithm for GPUs (SMC-GPU; here "SMC" stands for scalable Monte Carlo, not Sequential Monte Carlo) for large-scale atomistic systems, as demonstrated in the study of high-entropy alloys [30].

Algorithm Selection and System Preparation

- Reagents & Tools:

  - SMC-GPU Algorithm: A generalized checkerboard algorithm designed for GPUs [30].

  - Machine Learning Potential (MLP): A surrogate energy model trained on DFT data (e.g., using local short-range order parameters as features) [30].

  - Initial Atomic Configuration: A supercell of the material (e.g., FeCoNiAlTi HEA) with initial atomic positions and species.

- Procedure:

  - System Partitioning: Divide the atomic system into a checkerboard-style grid of "link-cells." The size of each link-cell must be large enough that MC trial moves within non-adjacent cells are independent [30].

  - Local-Interaction Zone (LIZ) Definition: For each atom being updated, define a local zone encompassing all atoms within the cutoff radius of its machine learning potential. This confines energy change calculations to a small, local neighborhood.

GPU Kernel Implementation and Execution

- Reagents & Tools:

  - CUDA or OpenCL: Programming frameworks for GPU kernel development.

  - GPU Hardware: Such as an NVIDIA A100 or H100.

- Procedure:

  - Parallel Kernel Launch: Assign one GPU thread block to process multiple independent link-cells concurrently. Within a block, individual threads handle the atoms within a single link-cell [30].

  - Concurrent MC Trials: Perform Monte Carlo trial moves (e.g., atom swaps) on all atoms sharing the same relative index across different link-cells simultaneously. This is the core of the parallelism.

  - Energy Evaluation: For each trial move, use the MLP to compute the energy change by querying only the atoms within the pre-defined LIZ.

  - Metropolis Acceptance: Let each thread independently accept or reject its trial move based on the Metropolis criterion.
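The checkerboard idea can be illustrated with a deliberately simplified stand-in. The sketch below uses a 2D Ising lattice with nearest-neighbor coupling instead of a machine learning potential, single sites instead of link-cells, and spin flips instead of atom swaps; all of these substitutions are assumptions made to keep the example short. What carries over is the structure: sites of one colour have no neighbors of the same colour, so every trial within a colour could be evaluated by a separate GPU thread.

```python
import math
import random


def checkerboard_sweep(spins, beta, rng):
    """One Metropolis sweep over an L x L periodic lattice (L even).

    Returns the number of accepted flips. Energy model: E = -sum of s_i * s_j
    over nearest-neighbor pairs.
    """
    size = len(spins)
    accepted = 0
    for colour in (0, 1):
        # All sites of this colour are mutually independent given the other
        # colour, so this double loop is what a GPU would run concurrently.
        for i in range(size):
            for j in range(size):
                if (i + j) % 2 != colour:
                    continue
                neighbours = (spins[(i + 1) % size][j] + spins[(i - 1) % size][j]
                              + spins[i][(j + 1) % size] + spins[i][(j - 1) % size])
                d_e = 2.0 * spins[i][j] * neighbours  # energy change of a flip
                # Metropolis criterion, evaluated locally per site.
                if d_e <= 0.0 or rng.random() < math.exp(-beta * d_e):
                    spins[i][j] = -spins[i][j]
                    accepted += 1
    return accepted
```

The local energy change plays the role of the LIZ-restricted energy evaluation: each decision depends only on a small fixed neighborhood, never on the whole system.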

Validation and Performance Analysis

- Procedure:

  - Validate the GPU results against a small-scale, trusted CPU simulation.

  - Measure performance metrics, including simulation time per MC step and how scaling efficiency changes as the system size increases.

- Expected Outcome: The implementation should enable the simulation of systems exceeding one billion atoms on a single GPU, revealing nanostructure evolution phenomena that are intractable with serial algorithms [30].

The following diagram illustrates the core logical workflow of the SMC-GPU protocol.

Diagram 1: The SMC-GPU simulation workflow, highlighting the parallel evaluation of independent Monte Carlo trials on the GPU.

The Scientist's Toolkit: Essential Research Reagents and Solutions

Successful implementation of GPU-accelerated models requires both specialized software frameworks and an understanding of hardware capabilities.

Table 3: Essential Software and Hardware Tools for GPU-Accelerated Research

| Tool Category | Specific Examples | Function & Application |
|---|---|---|
| GPU Programming Models | CUDA [28] [29], OpenCL, ROCm [23] | Provide low-level APIs and toolkits for writing code that executes directly on GPUs. |
| High-Level Frameworks | JAX [8], PyTorch [8] [23] | Simplify GPU programming via automatic differentiation and vectorization; enable seamless execution of NumPy-like code on GPUs/TPUs. |
| Specialized MC Software | SMC-GPU [30], BINDSURF [29] | Domain-specific, GPU-optimized packages for materials science (atomistic MC) and drug discovery (molecular docking). |
| Consumer-Grade GPUs | NVIDIA GeForce RTX 4090 [27] | Cost-effective for single-precision (FP32) heavy workloads; ideal for algorithm development and testing. |
| Professional/Data Center GPUs | NVIDIA RTX 6000 Ada, H100, A100 [27] | Feature large memory capacities and high double-precision (FP64) performance, essential for production-scale scientific simulations. |

Determining whether a model is a good candidate for GPU acceleration is a systematic process. The key is to assess the problem's inherent parallelism, memory access patterns, control flow, and precision requirements against the strengths and constraints of GPU architecture. For particle MCMC and other Monte Carlo methods in drug discovery and materials science, the potential is tremendous. By leveraging the assessment criteria, performance data, and implementation protocols outlined in this document, researchers can make informed decisions, strategically invest development resources, and harness the transformative power of GPU computing to tackle problems at a scale and speed previously thought impossible.

Implementation and Applications: Building and Deploying GPU-Accelerated pMCMC

Particle Markov Chain Monte Carlo (pMCMC) is a class of algorithms for sampling from complex probability distributions, particularly in the Bayesian analysis of State-Space Models (SSMs). These methods are essential when the posterior distribution does not admit a closed-form expression, a common scenario in fields such as genetics, pharmacokinetics, and drug discovery [2]. The core computational challenge is generating a sequence of samples that approximates the target posterior distribution. Because every iteration evaluates many particles that can be processed independently, pMCMC algorithms are well suited to acceleration on highly parallel hardware such as Graphics Processing Units (GPUs) [2].

The fundamental goal of MCMC is to draw samples from a probability distribution, π(x), often a Bayesian posterior. pMCMC enhances this by using a particle filter to efficiently estimate the intractable likelihoods required for the acceptance probability in the Metropolis-Hastings algorithm [1]. This allows pMCMC to handle complex, high-dimensional models that are analytically intractable. The performance of these algorithms is critical, as they are often applied to large datasets, making computational efficiency a primary concern for researchers and scientists in drug development [2].
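For reference, the role of the particle filter in the acceptance probability can be written out explicitly. The notation here is introduced for illustration and is not taken from the cited sources: θ denotes the model parameters, y_{1:T} the observations, N the number of particles, w_t^{(i)} the unnormalized weight of particle i at time t (assuming resampling at every step), p(θ) the prior, and q the proposal density.

```latex
\hat{p}(y_{1:T} \mid \theta) = \prod_{t=1}^{T} \left( \frac{1}{N} \sum_{i=1}^{N} w_t^{(i)} \right),
\qquad
\alpha(\theta, \theta') = \min\left\{ 1,\;
  \frac{\hat{p}(y_{1:T} \mid \theta')\, p(\theta')\, q(\theta \mid \theta')}
       {\hat{p}(y_{1:T} \mid \theta)\, p(\theta)\, q(\theta' \mid \theta)} \right\}
```

The inner sum over particles is the part that parallelizes naturally, while the product over time steps remains sequential.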

CUDA and OpenCL: A Comparative Analysis for pMCMC

Selecting the appropriate GPU programming framework is a critical strategic decision that influences performance, development time, and the portability of pMCMC applications. The two predominant frameworks are NVIDIA's CUDA and the open standard OpenCL.

The table below summarizes the core differences between these two frameworks, which are pivotal for making an informed choice in a research setting.

Table 1: Key Characteristics of CUDA and OpenCL

| Feature | CUDA | OpenCL |
|---|---|---|
| Vendor & Type | Proprietary framework from NVIDIA [32] | Open, royalty-free standard from the Khronos Group [32] |
| Hardware Support | Exclusive to NVIDIA GPUs [32] | Portable across NVIDIA, AMD, Intel GPUs, and multi-core CPUs [32] [33] |
| Programming Language | C/C++ with CUDA keywords [32] | C99-based language with extensions for parallelism [32] |
| Performance | Can better match NVIDIA hardware characteristics; highly optimized [32] [33] | Competitive on NVIDIA hardware, but performance can vary more across vendors [33] |
| Libraries & Ecosystem | Rich, high-performance libraries (cuBLAS, cuRAND, cuSPARSE, etc.) [32] | Fewer native libraries; relies more on vendor implementations and cross-platform efforts [32] |
| Community & Support | Large, well-established community and extensive documentation [32] | Growing community, but smaller than CUDA's [32] |

For pMCMC, several factors from this table are particularly salient. The cuRAND library in CUDA provides high-performance random number generation, which is the bedrock of any Monte Carlo method [32]. Furthermore, linear algebra operations, accelerated by cuBLAS, are frequently used in particle weighting and propagation steps within the particle filter. While OpenCL can achieve strong performance, reaching the peak efficiency of a well-tuned CUDA implementation on NVIDIA hardware often requires significant extra effort, including vendor-specific optimizations [33]. OpenCL's primary advantage is code portability: a single codebase can be deployed on heterogeneous computing environments, which is valuable in research groups with diverse hardware, even though the performance it reaches may differ from vendor to vendor [32] [33].

Implementation Protocols and Workflow

Implementing a pMCMC algorithm on a GPU involves a structured methodology that leverages parallelism at multiple levels. The following workflow diagram outlines the key stages of this process.

Protocol 1: Algorithm and Framework Selection

- Algorithm Specification: Begin by formally defining the State-Space Model and the target posterior distribution. Choose a specific pMCMC variant, such as Particle Gibbs with Ancestor Sampling (PGAS), which can improve mixing times for multi-modal distributions [2].

- Hardware Inventory: Assess the available GPU hardware within the research team. If the environment is exclusively NVIDIA, CUDA is a strong candidate. For mixed hardware (e.g., both NVIDIA and AMD GPUs), OpenCL provides essential portability [32].

- Framework Decision: Make a final selection based on the trade-off between maximum performance on specific hardware (favoring CUDA) and code portability across different systems (favoring OpenCL) [32].

Protocol 2: Kernel Design and Parallelization Strategy

The core of GPU acceleration lies in designing computational "kernels"—the functions that run on the GPU.

- Identify Parallelizable Components: In pMCMC, the most significant targets for parallelization are:

  - Particle Evaluation: The forward propagation and likelihood calculation for each particle in a set are independent and can be executed in parallel [2].

  - Multiple Markov Chains: For improved exploration of multi-modal posteriors, multiple independent Markov chains can be run simultaneously on the GPU, a strategy exploited by the ppMCMC algorithm [2].

- Kernel Implementation:

  - For CUDA: Write kernels in CUDA C/C++. Use one thread block to process one particle or one chain. Leverage shared memory within a block for efficient data sharing during resampling steps.

  - For OpenCL: Write kernels in OpenCL C. The mapping is similar, with work-items and work-groups corresponding to CUDA's threads and thread blocks.

- Critical Operations: Implement efficient parallel versions of key operations like the resampling step, which can be challenging due to its inherently sequential nature. Algorithms like parallel prefix-sum (inclusive scan) are often used here.
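The scan-based resampling mentioned in the last item can be sketched on the CPU as follows. This is a minimal plain-Python illustration, not a GPU kernel: the function names `inclusive_scan` and `systematic_resample` are hypothetical, the choice of systematic resampling is an assumption (the text does not name a specific scheme), and the list comprehensions stand in for work that would be spread across GPU threads.

```python
import bisect


def inclusive_scan(values):
    """Hillis-Steele inclusive prefix sum.

    Each pass of the while loop corresponds to one synchronized parallel step
    on a GPU; the list comprehension stands in for the per-thread work.
    """
    out = list(values)
    offset = 1
    while offset < len(out):
        out = [out[i] + out[i - offset] if i >= offset else out[i]
               for i in range(len(out))]
        offset *= 2
    return out


def systematic_resample(weights, u0):
    """Return ancestor indices for normalized weights and one uniform u0 in [0, 1)."""
    n = len(weights)
    cdf = inclusive_scan(weights)
    cdf[-1] = 1.0  # guard against floating-point round-off in the last entry
    # Every output slot is independent: one binary search per "thread".
    return [bisect.bisect_right(cdf, (i + u0) / n) for i in range(n)]


ancestors = systematic_resample([0.1, 0.2, 0.3, 0.4], u0=0.5)
```

Once the cumulative weights are available, each ancestor lookup is independent, so the only step that needs cross-thread cooperation is the scan itself. In a CUDA implementation the scan would more likely come from a library routine (for example in Thrust or CUB) than be hand-written.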

Protocol 3: Host Code and Memory Management

The host code, running on the CPU, manages the GPU execution and data movement.

- Environment Setup: Initialize the GPU device and context. This setup overhead, as noted in profiling results, should be minimized, for instance, by compiling OpenCL kernels once and reusing them [33].

- Memory Allocation: Allocate memory buffers on the GPU for particles, weights, random states, and chain states. The goal is to minimize costly data transfers between the host and device memory.

- Execution Loop: The host code controls the main MCMC loop. For each iteration, it launches the particle filter kernel, followed by a kernel for the MCMC acceptance step, and finally schedules any necessary data transfers.
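The control flow of the execution loop can be illustrated with a CPU-only sketch of particle marginal Metropolis-Hastings. Everything specific here is an assumption made for illustration: the toy linear-Gaussian model, the Uniform(-1, 1) prior on `phi`, the random-walk proposal, and the function names. On a GPU, the per-particle list comprehensions inside `particle_loglik` would become kernel launches, while the outer loop in `pmmh` would stay in host code.

```python
import math
import random


def log_mean_exp(log_w):
    """Numerically stable log of the mean of exp(log_w)."""
    m = max(log_w)
    return m + math.log(sum(math.exp(v - m) for v in log_w) / len(log_w))


def particle_loglik(phi, ys, n_particles, rng, sigma_x=1.0, sigma_y=1.0):
    """Bootstrap particle filter estimate of log p(y_{1:T} | phi).

    Toy model (assumed): x_t = phi * x_{t-1} + N(0, sigma_x^2),
                         y_t = x_t + N(0, sigma_y^2), first state ~ N(0, sigma_x^2).
    """
    xs = [rng.gauss(0.0, sigma_x) for _ in range(n_particles)]
    log_norm = math.log(sigma_y * math.sqrt(2.0 * math.pi))
    total = 0.0
    for t, y in enumerate(ys):
        if t > 0:
            xs = [phi * x + rng.gauss(0.0, sigma_x) for x in xs]   # propagate
        log_w = [-0.5 * ((y - x) / sigma_y) ** 2 - log_norm for x in xs]  # weight
        total += log_mean_exp(log_w)                               # likelihood factor
        m = max(log_w)
        xs = rng.choices(xs, weights=[math.exp(v - m) for v in log_w],
                         k=n_particles)                            # multinomial resample
    return total


def pmmh(ys, n_iters, n_particles, step=0.1, seed=0):
    """Particle marginal Metropolis-Hastings for phi with a Uniform(-1, 1) prior."""
    rng = random.Random(seed)
    phi = 0.0
    ll = particle_loglik(phi, ys, n_particles, rng)
    chain = []
    for _ in range(n_iters):
        prop = phi + rng.gauss(0.0, step)        # symmetric random-walk proposal
        if -1.0 < prop < 1.0:                    # prior density is zero elsewhere
            ll_prop = particle_loglik(prop, ys, n_particles, rng)
            if rng.random() < math.exp(min(0.0, ll_prop - ll)):
                phi, ll = prop, ll_prop          # keep the accepted estimate as-is
        chain.append(phi)
    return chain
```

Note that the likelihood estimate of the current state is stored and reused rather than recomputed at each iteration, which is what keeps the chain targeting the correct posterior. Only the scalar log-likelihood and the accept/reject decision need to reach the host per iteration, consistent with the goal of minimizing host-device transfers.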

The Scientist's Toolkit: Essential Research Reagents and Materials

Successfully implementing a high-performance pMCMC solution requires both software and hardware components. The table below details the essential "research reagents" for this computational task.

Table 2: Key Research Reagents and Materials for GPU-Accelerated pMCMC

| Item Name | Type | Function / Purpose |
|---|---|---|
| NVIDIA GPU (Tesla/GeForce) | Hardware | Provides the parallel processing cores for executing CUDA or OpenCL kernels. High memory bandwidth is critical for performance [32]. |
| CUDA Toolkit | Software | SDK including compiler (nvcc), debugger, profiler (Nsight), and core libraries like cuBLAS and cuRAND essential for numerical computing [32]. |
| OpenCL SDK | Software | Development environment from a vendor (NVIDIA, AMD, Intel) providing the compiler and API headers for writing portable, cross-platform GPU code [32]. |
| MAGMA Library | Software | A dense linear algebra library optimized for GPUs and multicore CPUs, useful for matrix operations within models [33]. |
| AdvancedPS.jl | Software | A Julia package providing efficient implementations of particle-based Monte Carlo samplers, serving as a valuable reference or base for customization [34]. |
| FPGA Accelerator | Hardware | An alternative to GPUs, offering massive parallelism and custom data paths for specific algorithms, demonstrated to achieve significant speedups and energy efficiency for pMCMC [2]. |

Case Study and Performance Benchmarking

To illustrate the potential of hardware acceleration, we examine a case study from genetics in which a novel pMCMC algorithm (ppMCMC) and custom hardware architectures were evaluated [2]. The ppMCMC algorithm, which uses multiple Markov chains to better handle multi-modal posteriors, showed 1.96x higher sampling efficiency than standard pMCMC when implemented on CPUs.

The performance gains from specialized hardware implementation are quantitatively summarized in the table below.

Table 3: Performance Benchmark of pMCMC/ppMCMC Hardware Architectures (vs. CPU/GPU)

| Metric | pMCMC on FPGA | ppMCMC on FPGA | Baseline (CPU/GPU) |
|---|---|---|---|
| Speedup vs. CPU | 12.1x | 34.9x | 1.0x (Baseline) |
| Speedup vs. GPU | 10.1x | 41.8x | 1.0x (Baseline) |
| Power Efficiency vs. CPU | Up to 53x | 173x | 1.0x (Baseline) |

Data adapted from [2]

These results demonstrate that coupling advanced algorithms like ppMCMC with specialized parallel hardware can lead to order-of-magnitude improvements, bringing previously intractable data analyses within reach. The following diagram visualizes the relative performance and efficiency reported in this study.

The implementation of pMCMC algorithms on GPUs represents a powerful synergy between advanced statistical methodology and high-performance computing. The choice between CUDA and OpenCL is not merely technical but strategic, balancing raw performance against hardware flexibility. For research teams operating in a homogeneous NVIDIA environment, CUDA offers a mature ecosystem and highly tuned libraries that can significantly accelerate development and execution. Conversely, OpenCL provides a vital path for teams requiring cross-platform compatibility.

The speedups and energy efficiencies demonstrated by custom FPGA implementations show how much headroom specialized parallel hardware can offer for pMCMC. As computational demands in Bayesian statistics, drug development, and scientific machine learning continue to grow, parallel frameworks such as CUDA and OpenCL will be increasingly important for timely analysis of complex data.

Implementing Particle Markov Chain Monte Carlo (pMCMC) methods on GPU architectures is a transformative approach for computationally intensive applications, including pharmaceutical research and drug development. These methods combine the flexibility of particle filters for state-space models with the rigorous statistical foundation of Markov Chain Monte Carlo (MCMC) sampling. The sequential nature of traditional particle filters and MCMC methods has historically limited their scalability, but recent advances in parallel algorithm design and GPU hardware capabilities have enabled significant performance breakthroughs. By leveraging massive parallelism, researchers can now achieve speedup factors of 100x to 10,000x compared to conventional CPU implementations, making previously intractable problems in Bayesian inference and real-time tracking computationally feasible [35] [14].

This shift enables researchers to tackle complex problems in pharmacological modeling, including personalized drug dosing regimens, clinical trial simulation, and molecular dynamics at unprecedented scales. GPU-accelerated pMCMC methods allow for more sophisticated hierarchical models that can account for patient variability, disease progression, and drug interactions while providing the uncertainty quantification essential for regulatory decision-making. This document provides detailed application notes and experimental protocols for implementing these computational strategies within biomedical research contexts.

Parallel Particle Filter Implementations

Algorithmic Foundations and GPU Adaptation

Particle filters (PFs), also known as Sequential Monte Carlo (SMC) methods, provide a powerful framework for state estimation in non-linear, non-Gaussian dynamic systems common in pharmacological modeling. The core algorithm consists of four iterative steps: particle propagation, weight calculation, weight normalization, and resampling. The computational challenge arises from the sequential dependencies between iterations and the resampling step's global communication requirements [36].
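The four steps can be made concrete with a minimal CPU sketch of a single filter iteration. The function name `particle_filter_step`, the callables `propagate` and `log_lik`, and the toy transition and likelihood in the usage lines are all hypothetical. The effective sample size returned at the end is an extra diagnostic added here, not one of the four steps.

```python
import math
import random


def particle_filter_step(particles, y, propagate, log_lik, rng):
    """One bootstrap-filter iteration: propagate, weight, normalize, resample."""
    # 1. Propagation: each particle moves independently (one GPU thread each).
    moved = [propagate(x, rng) for x in particles]
    # 2. Weight calculation, kept in log space to avoid underflow.
    log_w = [log_lik(y, x) for x in moved]
    # 3. Normalization: needs global reductions (max and sum) over all particles.
    m = max(log_w)
    unnorm = [math.exp(v - m) for v in log_w]
    total = sum(unnorm)
    weights = [u / total for u in unnorm]
    # Effective sample size, a standard degeneracy diagnostic.
    ess = 1.0 / sum(w * w for w in weights)
    # 4. Resampling: multinomial draw of ancestors, the global-communication step.
    resampled = rng.choices(moved, weights=weights, k=len(moved))
    return resampled, weights, ess


rng = random.Random(0)
particles = [rng.gauss(0.0, 1.0) for _ in range(1000)]
step = lambda x, r: 0.9 * x + r.gauss(0.0, 0.5)   # hypothetical transition model
loglik = lambda y, x: -0.5 * (y - x) ** 2         # Gaussian, up to a constant
particles, weights, ess = particle_filter_step(particles, 1.2, step, loglik, rng)
```

Steps 1 and 2 touch each particle in isolation, whereas steps 3 and 4 require information from the whole particle set, which is where the global communication cost noted above arises.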

Recent research has demonstrated that strategic modifications to canonical particle filter algorithms enable efficient GPU implementation while maintaining statistical integrity:

Half-Precision Particle Filter: Schieffer et al. (2023) implemented a half-precision particle filter on CUDA cores that achieved a 1.5-2x performance improvement over single-precision and 2.5-4.6x improvement over double-precision baselines on NVIDIA V100, A100, A40, and T4 GPUs. This approach mitigates numerical instability through algorithmic adjustments rather than simple precision reduction [37].

Cellular Particle Filter (CPF): This adaptation reorganizes particles into a two-dimensional locally connected grid inspired by cellular neural network architecture. Each grid element connects to its eight neighbors, enabling rapid local information flow. The critical resampling step is performed on subsets within a radius r neighborhood rather than the complete particle set, reducing global communication overhead [36].
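The idea of neighborhood-restricted resampling can be sketched as follows. This is a simplified illustration rather than the algorithm from [36]: the function name `local_resample` is hypothetical, the neighborhood is taken to include the cell itself, and grid edges wrap around, all of which are assumptions made here for brevity.

```python
import random


def local_resample(grid, weights, r, rng):
    """Resample each cell from its radius-r neighborhood (Chebyshev distance).

    grid and weights are equally sized 2-D lists. Edges wrap around (a torus),
    an assumption that keeps every neighborhood the same size. Each neighborhood
    must contain at least one positive weight.
    """
    rows, cols = len(grid), len(grid[0])
    new_grid = [[None] * cols for _ in range(rows)]
    for i in range(rows):          # on a GPU, each (i, j) cell maps to one thread
        for j in range(cols):
            cand, cand_w = [], []
            for di in range(-r, r + 1):
                for dj in range(-r, r + 1):
                    ni, nj = (i + di) % rows, (j + dj) % cols
                    cand.append(grid[ni][nj])
                    cand_w.append(weights[ni][nj])
            # Draw one survivor for this cell using only local weights.
            new_grid[i][j] = rng.choices(cand, weights=cand_w, k=1)[0]
    return new_grid


rng = random.Random(0)
grid = [[(i, j) for j in range(5)] for i in range(5)]
uniform = [[1.0] * 5 for _ in range(5)]
resampled = local_resample(grid, uniform, r=1, rng=rng)
```

With r = 1 each cell reads from itself and its eight neighbors, so no thread needs the full cumulative weight array, and the work per cell is fixed at (2r + 1)^2 reads regardless of the total particle count.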